The following objectives for Exam CX-310-200 are covered in this chapter:

Explain disk architecture including the UFS file system capabilities and naming conventions for devices for SPARC, x64, and x86-based systems.

![]() Device drivers control every device connected to your system, and some devices use multiple device drivers. This chapter explains device drivers so that you can recognize and verify all devices connected to your system. In addition, the Solaris operating system accesses devices, such as disks and tape drives, through device and path names. The system administrator must be familiar with the various path names that point to each piece of hardware connected to the system.

Device drivers control every device connected to your system, and some devices use multiple device drivers. This chapter explains device drivers so that you can recognize and verify all devices connected to your system. In addition, the Solaris operating system accesses devices, such as disks and tape drives, through device and path names. The system administrator must be familiar with the various path names that point to each piece of hardware connected to the system.

Explain when and how to list devices, reconfigure devices, perform disk partitioning, and relabel a disk in a Solaris operating environment using the appropriate files, commands, options, menus, and/or tables.

![]() The system administrator is responsible for adding and configuring new hardware on the system. This chapter describes how new devices are configured into the Solaris operating environment. You’ll need to describe disk architecture and understand naming conventions for disk devices as used in the Solaris operating environment.

The system administrator is responsible for adding and configuring new hardware on the system. This chapter describes how new devices are configured into the Solaris operating environment. You’ll need to describe disk architecture and understand naming conventions for disk devices as used in the Solaris operating environment.

![]() You’ll need to know how to set up the disks and disk partitions when installing the Solaris operating environment. However, to properly set up a disk, you first need to understand the concepts behind disk storage and partitioning. You then need to determine how you want data stored on your system’s disks.

You’ll need to know how to set up the disks and disk partitions when installing the Solaris operating environment. However, to properly set up a disk, you first need to understand the concepts behind disk storage and partitioning. You then need to determine how you want data stored on your system’s disks.

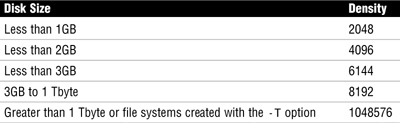

Explain the Solaris 10 OS file system, including disk-based, distributed, devfs, and memory file systems related to SMF, and create a new UFS file system using options for <1Tbyte and> 1Tbyte file systems.

![]() You’ll need to understand all of the file systems that are available in the Solaris operating environment. In addition, you’ll need to know when to use each type of file system.

You’ll need to understand all of the file systems that are available in the Solaris operating environment. In addition, you’ll need to know when to use each type of file system.

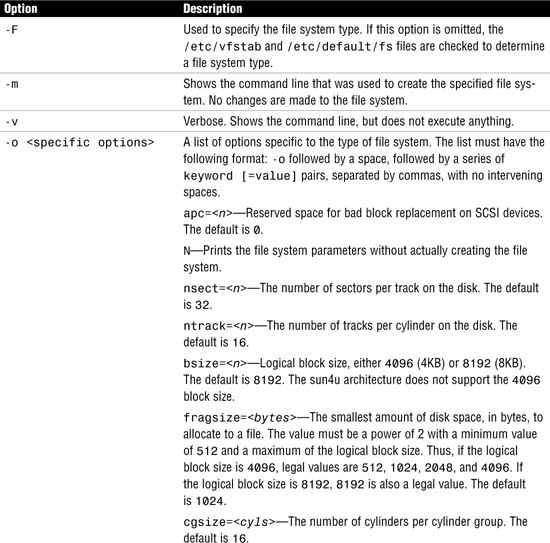

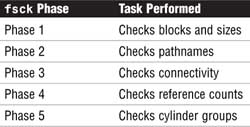

Explain when and how to create a new UFS using the newfs command, check the file system using fsck, resolve file system inconsistencies, and monitor file system usage using associated commands.

![]() You’ll need to be familiar with all of the commands used to create, check, and repair file systems. The system administrator needs to know how to use these tools and understand the effect that the various command options will have on performance and functionality.

You’ll need to be familiar with all of the commands used to create, check, and repair file systems. The system administrator needs to know how to use these tools and understand the effect that the various command options will have on performance and functionality.

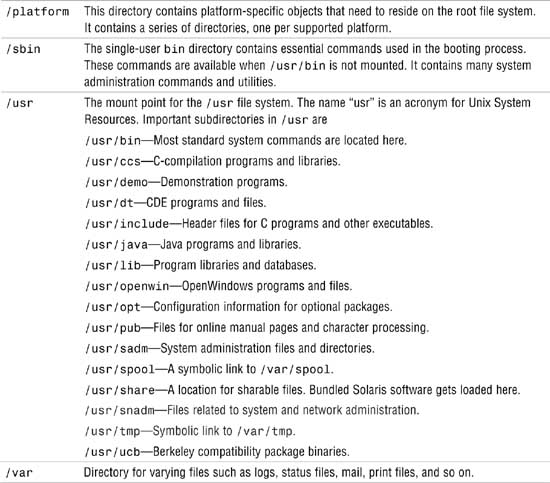

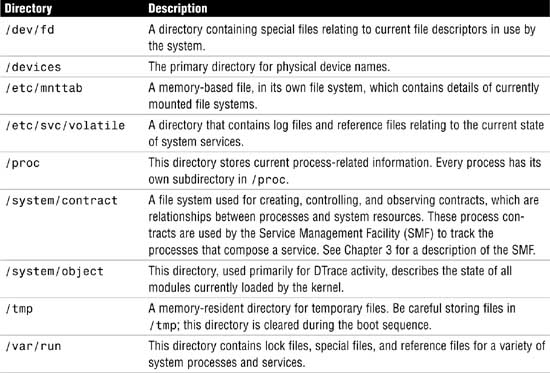

Describe the purpose, features, and functions of root subdirectories, file components, file types, and hard links in the Solaris directory hierarchy.

Explain how to create and remove hard links in a Solaris directory.

![]() You’ll need to know how to create, remove, and identify a hard link and understand why they are used in the Solaris operating environment. You’ll need to be able to identify and describe all of the file types available in the Solaris operating environment. You’ll need to understand the purpose of each subdirectory located in the root file system and the type of information that is stored in these subdirectories.

You’ll need to know how to create, remove, and identify a hard link and understand why they are used in the Solaris operating environment. You’ll need to be able to identify and describe all of the file types available in the Solaris operating environment. You’ll need to understand the purpose of each subdirectory located in the root file system and the type of information that is stored in these subdirectories.

Explain the purpose and function of the vfstab file in mounting UFS file systems, and the function of the mnttab file system in tracking current mounts.

![]() You’ll need to maintain the table of file system defaults as you configure file systems to mount automatically at bootup. You’ll also need to understand the function of the mounted file system table (mnttab) and the entries made in this file.

You’ll need to maintain the table of file system defaults as you configure file systems to mount automatically at bootup. You’ll also need to understand the function of the mounted file system table (mnttab) and the entries made in this file.

Explain how to perform mounts and unmounts, and either access or restrict access to mounted diskettes and CD-ROMs.

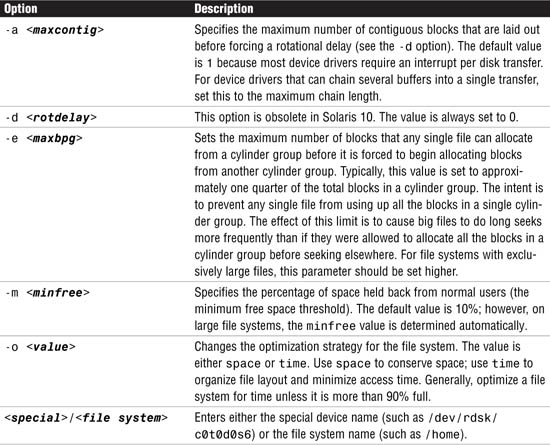

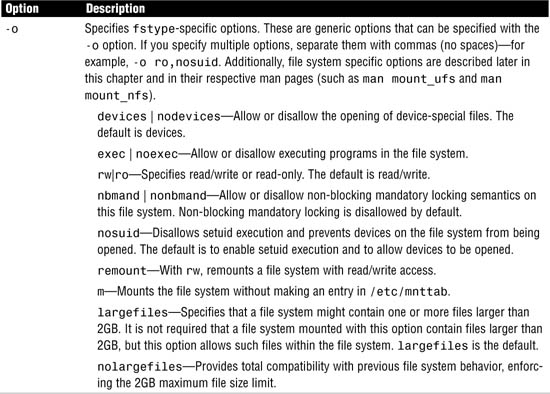

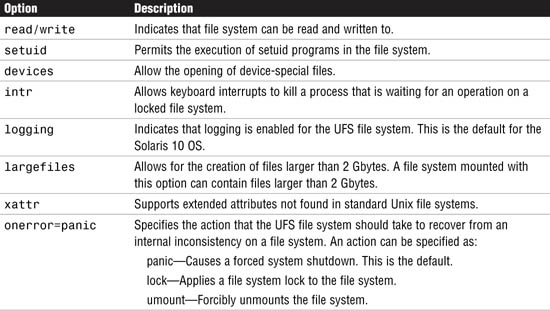

![]() Each file system type supports options that control how the file system will function and perform. You’ll need to understand all of these custom file system parameters. The system administrator needs to be familiar with mounting and unmounting file systems and all of the options associated with the process.

Each file system type supports options that control how the file system will function and perform. You’ll need to understand all of these custom file system parameters. The system administrator needs to be familiar with mounting and unmounting file systems and all of the options associated with the process.

The following study strategies will help you prepare for the exam:

![]() This chapter introduces many new terms that you must know well enough to match to a description if they were to appear on the exam. Know the terms I’ve provided in the “Key Terms” section at the end of this chapter.

This chapter introduces many new terms that you must know well enough to match to a description if they were to appear on the exam. Know the terms I’ve provided in the “Key Terms” section at the end of this chapter.

![]() Understand what a device driver is and the various device driver names. They are rather difficult to remember, but keep going over them until you can describe them from memory. Many questions on the exam refer to the various types of device names.

Understand what a device driver is and the various device driver names. They are rather difficult to remember, but keep going over them until you can describe them from memory. Many questions on the exam refer to the various types of device names.

![]() Practice all the commands and step by steps until you can describe and perform them from memory. The best way to memorize them is to practice them repeatedly on a Solaris system.

Practice all the commands and step by steps until you can describe and perform them from memory. The best way to memorize them is to practice them repeatedly on a Solaris system.

![]() As with every chapter of this book, you’ll need a Solaris 10 system on which to practice. Practice every step-by-step example that is presented until you can perform the steps from memory. Also, as you practice creating file systems, you’ll need some unused disk space with which to practice. I recommend an empty, secondary disk drive for this purpose.

As with every chapter of this book, you’ll need a Solaris 10 system on which to practice. Practice every step-by-step example that is presented until you can perform the steps from memory. Also, as you practice creating file systems, you’ll need some unused disk space with which to practice. I recommend an empty, secondary disk drive for this purpose.

![]() Familiarize yourself with the various types of file systems described in this chapter, but specifically, pay close attention to the UFS type and UFS parameters. Most questions on the exam revolve around the UFS. In addition, make sure you understand the Solaris Volume Manager. You don’t need to know how to use it—just understand what it does and why you would use it.

Familiarize yourself with the various types of file systems described in this chapter, but specifically, pay close attention to the UFS type and UFS parameters. Most questions on the exam revolve around the UFS. In addition, make sure you understand the Solaris Volume Manager. You don’t need to know how to use it—just understand what it does and why you would use it.

![]() Make sure that you practice disk slicing. Understand how to create and delete disk slices and pay close attention to the limitations inherent with standard disk slices. Practice partitioning a disk using the

Make sure that you practice disk slicing. Understand how to create and delete disk slices and pay close attention to the limitations inherent with standard disk slices. Practice partitioning a disk using the format utility and SMC GUI tools until you have the process memorized.

![]() Finally, understand how to mount and unmount a file system as well as how to configure the /etc/vfstab file. Make sure that you understand all of the commands described in this chapter that are used to manage and display information about file systems, such as

Finally, understand how to mount and unmount a file system as well as how to configure the /etc/vfstab file. Make sure that you understand all of the commands described in this chapter that are used to manage and display information about file systems, such as df, fsck, and prtvtoc.

Before we can describe file systems, it’s important that you understand how Solaris views the disk drives and various other hardware components on your system. In particular, you need to understand how these devices are configured and named before you can create a file system on them or install the Solaris operating environment.

Device management in the Solaris 10 environment includes adding and removing peripheral devices from a system, such as tape drives, disk drives, printers, and modems. Device management also involves adding a third-party device driver to support a device if the device driver is not available in Sun’s distribution of the Solaris operating environment. System administrators need to know how to specify device names if using commands to manage disks, file systems, and other devices.

This chapter describes disk device management in detail. It also describes disk device naming conventions as well as adding, configuring, and displaying information about disk devices attached to your system.

Objective:

Describe the basic architecture of a local disk and the naming conventions for disk devices as used in the Solaris operating environment.

Explain when and how to list and reconfigure devices.

A computer typically uses a wide range of peripheral and mass-storage devices such as a small computer system interface (SCSI) disk drive, a keyboard, a mouse, and some kind of magnetic backup medium. Other commonly used devices include CD-ROM drives, printers, and various Universal Serial Bus (USB) devices. Solaris communicates with peripheral devices through device files or drivers. A device driver is a low-level program that allows the kernel to communicate with a specific piece of hardware. The driver serves as the operating system’s “interpreter” for that piece of hardware. Before Solaris can communicate with a device, the device must have a device driver.

When a system is started for the first time, the kernel creates a device hierarchy to represent all the devices connected to the system. This is the autoconfiguration process, which is described later in this chapter. If a driver is not loaded for a particular peripheral, that device is not functional. In Solaris, each disk device is described in three ways, using three distinct naming conventions:

![]() Physical device name—Represents the full device pathname in the device information hierarchy.

Physical device name—Represents the full device pathname in the device information hierarchy.

![]() Instance name—Represents the kernel’s abbreviation name for every possible device on the system.

Instance name—Represents the kernel’s abbreviation name for every possible device on the system.

![]() Logical device name—Used by system administrators with most file system commands to refer to devices.

Logical device name—Used by system administrators with most file system commands to refer to devices.

System administrators need to understand these device names when using commands to manage disks and file systems. We discuss these device names throughout this chapter.

Exam Alert

Memorize these device names. You’ll encounter them in several questions and it’s important that you understand when and where each name is used. Make sure you can identify a particular device driver name when it is presented as a filename.

Before the operating system is loaded, the system locates a particular device through the device tree, also called the full device pathname. Full device pathnames are described in Chapter 3, “Perform System Boot and Shutdown Procedures.” After the kernel is loaded, however, a device is located by its physical device pathname. Physical device names represent the full device pathname for a device. Note that the two names have the same structure. For example, the full device pathname for a SCSI disk at target 0 on a Sun Ultra 450 system is as follows:

/pci@1f,4000/scsi@3/disk@0,0

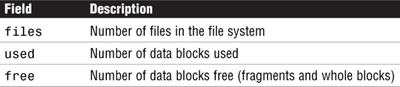

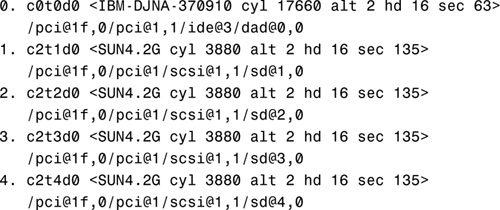

Now let’s look at the corresponding physical device name from the operating system level. Use the dmesg command, described later in this section, to obtain information about devices connected to your system. By typing dmesg at the command prompt, you’ll receive the following information about SCSI disks 3 and 4:

This same information is also available in the /var/adm/messages file.

The physical device pathnames for disks 3 and 4 are as follows:

As you can see, the physical device name and the full device name are the same. The difference is that the full device pathname is simply a path to a particular device. The physical device is the actual driver used by Solaris to access that device from the operating system.

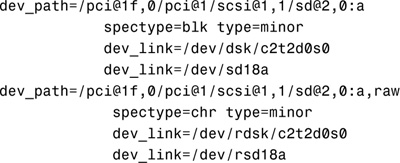

Physical device files are found in the /devices directory. The content of the /devices directory is controlled by the devfs file system. The entries in the /devices directory dynamically represent the current state of accessible devices in the kernel and require no administration. New device entries are added when the devices are detected and added to the kernel. The physical device files for SCSI disks 3 and 4 would be

/devices/pci@1f,0/pci@1/scsi@1,1/sd@3,0:<#>

/devices/pci@1f,0/pci@1/scsi@1,1/sd@4,0:<#>

for the block device and

/devices/pci@1f,0/pci@1/scsi@1,1/sd@3,0:<#>,raw

/devices/pci@1f,0/pci@1/scsi@1,1/sd@4,0:<#>,raw

for the character (raw) device, where <#> is a letter representing the disk slice. Block and character devices are described later in this chapter in the section titled “Block and Raw Devices.”

The system commands used to provide information about physical devices are described in Table 1.1.

Note

prtconf Output The output produced by the prtconf command can vary depending on the version of the system’s PROM.

prtconf

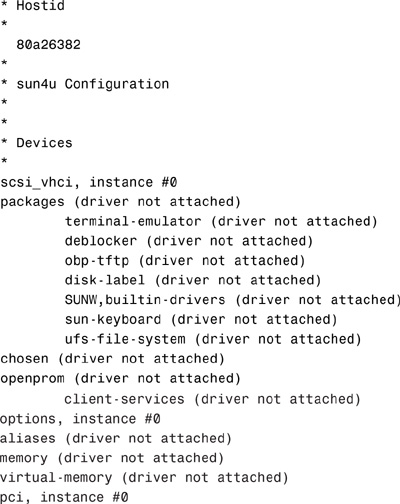

The following output is displayed:

Use the -v option to display detailed information about devices such as information about the attached SCSI disks:

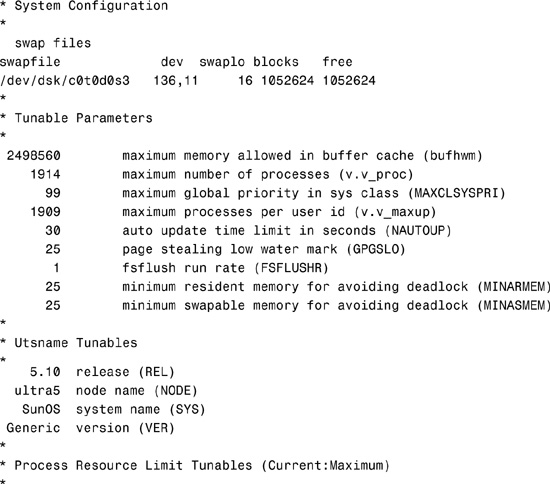

Next is an example of the output displayed by the sysdef command. Type the sysdef command:

sysdef

The following output is displayed:

0x0000000000000100:0x0000000000010000 file descriptors

*

* Streams Tunables

*

9 maximum number of pushes allowed (NSTRPUSH)

65536 maximum stream message size (STRMSGSZ)

1024 max size of ctl part of message (STRCTLSZ)

*

* IPC Messages module is not loaded

*

*

* IPC Semaphores module is not loaded

*

*

* IPC Shared Memory module is not loaded

*

*

* Time Sharing Scheduler Tunables

*

60 maximum time sharing user priority (TSMAXUPRI)

SYS system class name (SYS_NAME)

*Output has been truncated.

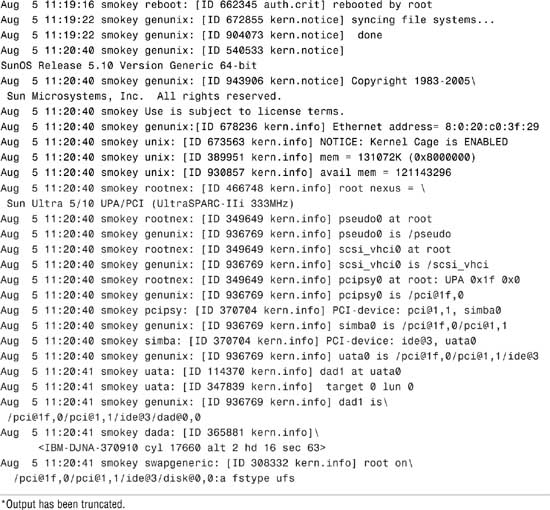

Finally, here’s an example of the device information for an Ultra system displayed using the dmesg command:

dmesg

The following output is displayed:

Use the output of the prtconf command to identify which disk, tape, and CD-ROM devices are connected to the system. As shown in the preceding prtconf and sysdef example, some devices display the driver not attached message next to the device instance. This message does not always mean that a driver is unavailable for this device. It means that no driver is currently attached to the device instance because there is no device at this node or the device is not in use. The operating system automatically loads drivers when the device is accessed, and it unloads them when it is not in use.

The system determines what devices are attached to it at startup. This is why it is important to have all peripherals powered on at startup, even if they are not currently being used. During startup, the kernel configures itself dynamically, loading needed modules into memory. Device drivers are loaded when devices, such as disk and tape devices, are accessed for the first time. This process is called autoconfiguration because all kernel modules are loaded automatically if needed. As described in Chapter 3, the system administrator can customize the way in which kernel modules are loaded by modifying the /etc/system file.

Autoconfiguration offers many advantages over the manual configuration method used in earlier versions of Unix, in which device drivers were manually added to the kernel, the kernel was recompiled, and the system had to be restarted. Now, with autoconfiguration, the administrator simply connects the new device to the system and performs a reconfiguration startup. To perform a reconfiguration startup, follow the steps in Step by Step 1.1.

STEP BY STEP

1.1 Performing a Reconfiguration Startup

1. Create the /reconfigure file with the following command:

touch /reconfigure

The /reconfigure file causes the Solaris software to check for the presence of any newly installed devices the next time you turn on or start up your system.

2. Shut down the system using the shutdown procedure described in Chapter 3.

If you need to connect the device, turn off power to the system and all peripherals after Solaris has been properly shut down.

After the new device is connected, restore power to the peripherals first and then to the system. Verify that the peripheral device has been added by attempting to access it.

Note

Automatic Removal of /reconfigure The file named /reconfigure automatically gets removed during the bootup process.

An optional method of performing a reconfiguration startup is to type boot -r at the OpenBoot prompt.

Note

Specify a Reconfiguration Reboot As root, you can also issue the reboot -- -r command from the Unix shell. The -- -r passes the -r to the boot command.

During a reconfiguration restart, a device hierarchy is created in the /devices file system to represent the devices connected to the system. The kernel uses this to associate drivers with their appropriate devices.

Autoconfiguration offers the following benefits:

![]() Main memory is used more efficiently because modules are loaded as needed.

Main memory is used more efficiently because modules are loaded as needed.

![]() There is no need to reconfigure the kernel if new devices are added to the system. When you add devices such as disks or tape drives other than USB and hot-pluggable devices, the system needs to be shut down before you connect the hardware so that no damage is done to the electrical components.

There is no need to reconfigure the kernel if new devices are added to the system. When you add devices such as disks or tape drives other than USB and hot-pluggable devices, the system needs to be shut down before you connect the hardware so that no damage is done to the electrical components.

![]() Drivers can be loaded and tested without having to rebuild the kernel and restart the system.

Drivers can be loaded and tested without having to rebuild the kernel and restart the system.

Note

devfsadm Another option used to automatically configure devices on systems that must remain running 24×7, and one that does not require a reboot, is the devfsadm command.

Occasionally, you might install a new device for which Solaris does not have a supporting device driver. Always check with the manufacturer to make sure any device you plan to add to your system has a supported device driver. If a driver is not included with the standard Solaris release, the manufacturer should provide the software needed for the device to be properly installed, maintained, and administered.

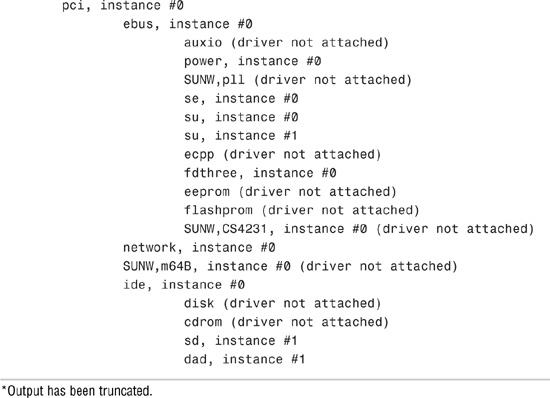

Third-party device drivers are installed as software packages using the pkgadd command. At a minimum, this software includes a device driver and its associated configuration (.conf) file. The .conf file resides in the /kernel/drv directory. Table 1.2 describes the contents of the module subdirectories located in the /kernel directory.

Universal Serial Bus (USB) devices were developed to provide a method to attach peripheral devices such as keyboards, printers, cameras, and disk drives using a common connector and interface. Furthermore, USB devices are hot-pluggable, which means they can be connected or disconnected while the system is running. The operating system automatically detects when a USB device has been connected and automatically configures the operating environment to make it available.

The Solaris 10 operating environment supports USB devices on Sun Blade, Netra, Sunfire, and x86/x64-based system. In addition, a USB interface can be added to Sun systems that may not already have one.

When hot-plugging a USB device, the device is immediately displayed in the device hierarchy. For example, a full device pathname for a USB Zip drive connected to an Ultra system would appear as follows:

/pci@1f,4000/usb@5/storage@1

A printer would look like this:

/pci@1f,4000/usb@5/hub@3/printer@1

Be careful when removing USB devices, however. If the device is being used when it is disconnected, you will get I/O errors and possible data errors. When this happens, you’ll need to plug the device back in, stop the application that is using the device, and then unplug the device.

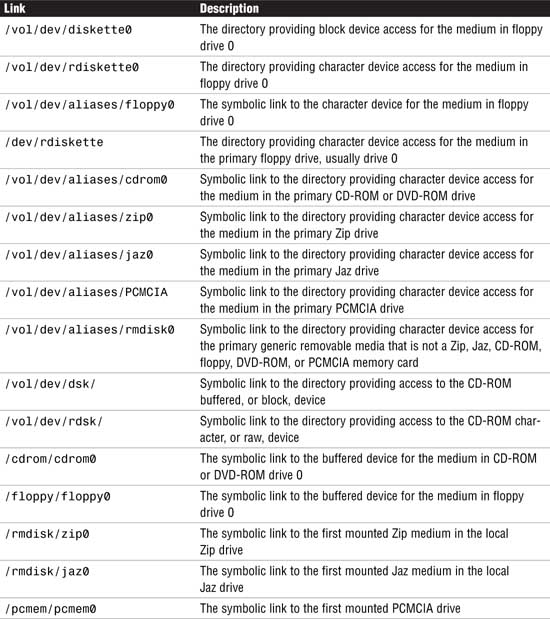

As stated in the “Volume Management” section later in this chapter, removable media such as floppy diskettes and CD-ROMs can be inserted and automatically mounted. When attaching a hot-pluggable device, it’s best to restart vold after attaching the USB device as follows: pkill -HUP vold

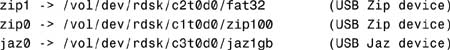

Once vold identifies that the device has been connected, you’ll see device names set up as follows:

When disconnecting a USB device such as a Zip drive, unmount the device, stop vold, disconnect the device, and then restart vold as follows:

1. Stop any application that is using the device.

2. Unmount the USB device using the volrmmount command as follows:

volrmmount -e zip0

or the eject command as follows:

eject zip0

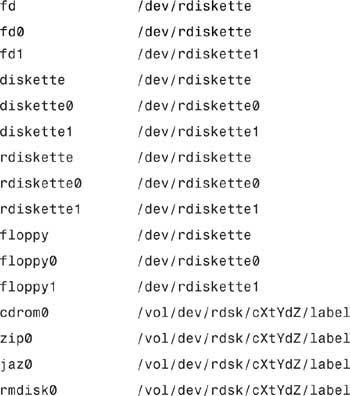

zip0 is a nickname for the Zip device. The following nicknames are recognized:

The -e option simulates the ejection of the media. For a more up-to-date listing of nicknames that might have been added since this writing, consult the volrmmount man page.

3. As root, stop vold:

/etc/init.d/volmgt stop

4. Disconnect the USB device.

5. Start vold:

/etc/init.d/volmgt start

For more information on vold and USB devices, see the section titled “Volume Management” later in this chapter.

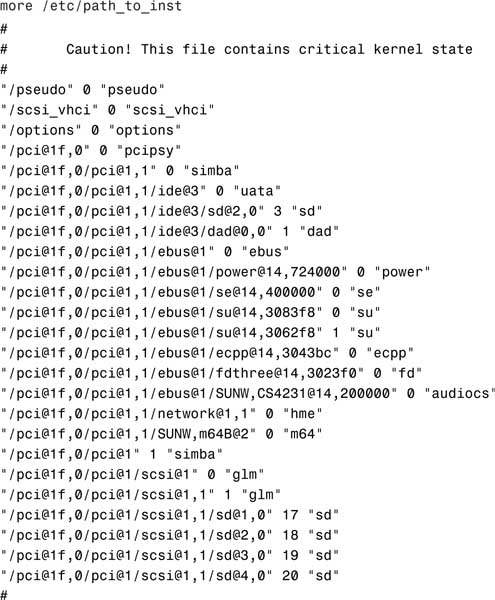

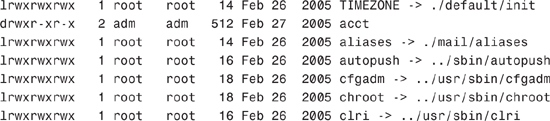

The instance name represents the kernel’s abbreviated name for every possible device on the system. For example, on an Ultra system, dad0 represents the instance name of the IDE disk drive, sd0 represents a SCSI disk, and hme0 is the instance name for the network interface. Instance names are mapped to a physical device name in the /etc/path_to_inst file. The following shows the contents of a path_to_inst file:

Although instance names can be displayed using the commands dmesg, sysdef, and prtconf, the only command that shows the mapping of the instance name to the physical device name is the dmesg command. For example, you can determine the mapping of an instance name to a physical device name by looking at the dmesg output, as shown in the following example from an Ultra system:

sd19 is /pci@1f,0/pci@1/scsi@1,1/sd@3,0

dad1 is /pci@1f,0/pci@1,1/ide@3/dad@0,0

In the first example, sd19 is the instance name and /pci@1f,0/pci@1/scsi@1,1/sd@3,0 is the physical device name. In the second example, dad1 is the instance name and /pci@1f,0/pci@1,1/ide@3/dad@0,0 is the physical device name. After the instance name has been assigned to a device, it remains mapped to that device. To keep instance numbers consistent across restarts, the system records them in the /etc/path_to_inst file. This file is only read at startup, and it is updated by the devfsadmd daemon described later in this section.

Devices already existing on a system are not rearranged when new devices are added, even if new devices are added to pci slots that are numerically lower than those occupied by existing devices. In other words, the /etc/path_to_inst file is appended to, not rewritten, when new devices are added.

It is generally not necessary for the system administrator to change the path_to_inst file because the system maintains it. The system administrator can, however, change the assignment of instance numbers by editing this file and doing a reconfiguration startup. However, any changes made in this file are lost if the devfsadm command is run before the system is restarted.

Note

Resolving Problems with /etc/path_to_inst If you can’t start up from the startup disk because of a problem with the /etc/path_to_inst file, you should start up from the CD-ROM (boot cdrom -s) and remove the /etc/path_to_inst file from the startup disk. To do this, start up from the CD-ROM using boot cdrom -s at the OpenBoot prompt. Use the rm command to remove the file named /a/etc/path_to_inst. The path_to_inst file will automatically be created the next time the system boots.

You can add new devices to a system without requiring a reboot. It’s all handled by the devfsadmd daemon that transparently builds the necessary configuration entries for those devices capable of notifying the kernel when the device is added (such as USB, FC-AL, disks, and so on). Before Solaris 7, you needed to run several devfs administration tools such as drvconfig, disks, tapes, ports, and devlinks to add in the new device and create the /dev and /devices entries necessary for the Solaris operating environment to access the new device. These tools are still available but only for compatibility purposes; drvconfig and the other link generators are symbolic links to the devfsadm utility. Furthermore, these older commands are not aware of hot-pluggable devices, nor are they flexible enough for devices with multiple instances. The devfsadm command should now be used in place of all these commands; however, devfsadmd, the devfsadm daemon, automatically detects device configuration changes, so there should be no need to run this command interactively unless the device is unable to notify the kernel that it has been added to the system.

An example of when to use the devfsadm command would be if the system had been started but the power to the CD-ROM or tape drive was not turned on. During startup, the system did not detect the device; therefore, its drivers were not installed. This can be verified by issuing the sysdef command and examining the output for sd6, the SCSI target ID normally used for the external CD-ROM:

sd, instance #6 (driver not attached)

To gain access to the CD-ROM, you could halt the system, turn on power to the CD-ROM, and start the system backup, or you could simply turn on power to the CD-ROM and issue the following command at the command prompt:

devfsadm

When used without any options, devfsadm will attempt to load every driver in the system and attach each driver to its respective device instances. You can restrict devfsadm to only look at specific devices using the -c option as follows:

devfsadm -c disk -c tape

This restricts the devfsadm command to devices of class disk and tape. As shown, the -c option can be used more than once to specify more than one device class.

Now, if you issue the sysdef command, you’ll see the following output for the CD-ROM:

sd, instance #6

You can also use the devfsadm command to configure only the devices for a specific driver such as “st” by using the -i option as follows:

devfsadm -i st

The devfsadm command will only configure the devices for the driver named “st.”

Each device has a major and minor device number assigned to it. These numbers identify the proper device location and device driver to the kernel. This number is used by the operating system to key into the proper device driver whenever a physical device file corresponding to one of the devices it manages is opened. The major device number maps to a device driver such as sd, st, or hme. The minor device number indicates the specific member within that class of devices. All devices managed by a given device driver contain a unique minor number. Some drivers of pseudo devices (software entities set up to look like devices) create new minor devices on demand. Together, the major and minor numbers uniquely define a device and its device driver.

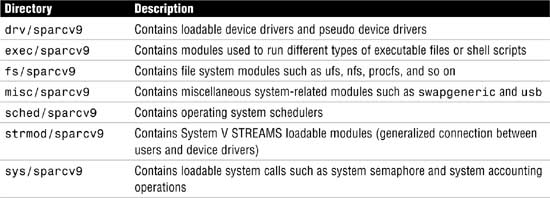

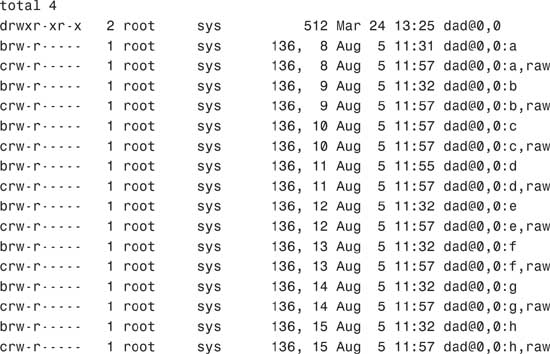

Physical device files have a unique output when listed with the ls -l command, as shown in the following example:

The system responds with this:

This long listing includes columns showing major and minor numbers for each device. The dad driver manages all the devices listed in the previous example, which have a major number of 136. Minor numbers are listed after the comma.

During the process of building the /devices directory, major numbers are assigned based on the kernel module attached to the device. Each device is assigned a major device number by using the name-to-number mappings held in the /etc/name_to_major file. This file is maintained by the system and is undocumented. The following is a sample of the /etc/name_to_major file:

more /etc/name_to_major

cn 0

rootnex 1

pseudo 2

ip 3

logindmux 4

icmp 5

fas 6

hme 7

p9000 8

p9100 9

sp 10

clone 11

sad 12

mm 13

iwscn 14

wc 15

conskbd 16

consms 17

ipdcm 18

dump 19

se 20

log 21

sy 22

ptm 23

pts 24

ptc 25

ptsl 26

bwtwo 27

audio 28

zs 29

cgthree 30

cgtwo 31

sd 32

st 33

...

...

envctrl 131

cvc 132

cvcredir 133

eide 134

hd 135

tadbat 136

ts102 137

simba 138

uata 139

dad 140

atapicd 141

To create the minor device entries, the devfsadmd daemon uses the information placed in the dev_info node by the device driver. Permissions and ownership information are kept in the /etc/minor_perm file.

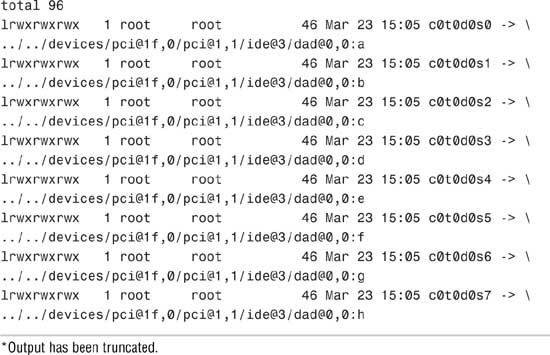

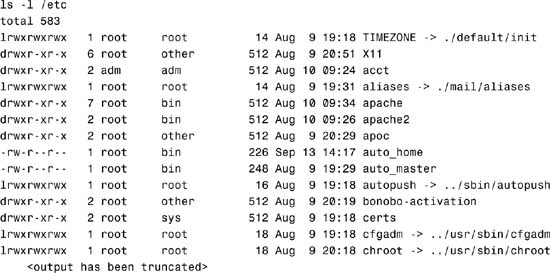

The final stage of the autoconfiguration process involves the creation of the logical device name to reflect the new set of devices on the system. To see a list of logical device names for the disks connected to a SPARC system, execute a long listing on the /dev/dsk directory, as follows:

On the second line of output from the ls -l command, notice that the logical device name c0t0d0s0 is linked to the physical device name, as shown in the following:

../../devices/pci@1f,0/pci@1,1/ide@3/dad@0,0:a

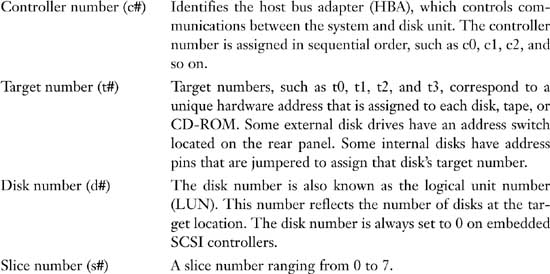

On Sun SPARC systems, you’ll see an eight string logical device name for each disk slice that contains the controller number, the target number, the disk number, and the slice number (c#t#d#s#).

X86-based Solaris systems have a different disk naming convention, but before describing the logical device name for a disk on an x86-based system, it’s worth pointing out a fundamental difference between disk slicing on a SPARC system and disk slicing on an x86-based system. Disk partitioning on the Solaris for the x86 platform has one more level than that of Solaris for SPARC. On Solaris for SPARC, slices and partitions are one and the same; on Solaris for x86, slices are “subpartitions” of a PC partition. This was done to allow Solaris to coexist with other PC operating systems, such as for dual boot configurations.

This difference in slicing brings some differences in the naming of disk devices on a Solaris x86-based PC. Slices are created in the first Solaris partition on a drive and, for SCSI disks, are named the same as on the Solaris for SPARC (c#t#d0s#). However, because slices are within a PC partition, the PC partitions have their own device names. The entire drive is named c#t#d0p0, and the PC partitions (maximum of 4) are c#t#d0p1 through c#t#d0p4. To support the x86 environment, the format utility also has an added command called fdisk to deal with the PC partitions.

Solaris x86-based systems have 16 slices versus 8 for SPARC. On the x86 PC, slice 8 is used to hold boot code and slice 9 is used for alternate sectors on some types of disks. Higher slices are available for use, but not supported by format at this time.

The major differences between the logical device names used on SPARC-based systems versus x86-based systems are as follows:

![]() c is the controller number.

c is the controller number.

![]() t is the SCSI target number.

t is the SCSI target number.

![]() s is the slice number.

s is the slice number.

![]() p represents the

p represents the fdisk partition (not slice partition).

![]() d is the LUN number or IDE Drive Number.

d is the LUN number or IDE Drive Number.

If an IDE drive is used, d is used to determine MASTER or SLAVE and the t is not used for IDE drives. For example, two controllers are installed on an x86 PC:

![]() c0 is an IDE controller.

c0 is an IDE controller.

![]() c1 is a SCSI controller.

c1 is a SCSI controller.

On an x86-based Solaris system, the following devices are listed in the /dev/dsk directory:

It’s easy to see which devices are IDE disks because they do not have a “t” in the logical device name, while the SCSI disks with “c1” have a target number listed. This system has one IDE drive and five SCSI drives listed, targets 0, 1, 2, 5, and 6 (t6 is typically the CD-ROM).

Note

In this text and in the examples, unless otherwise noted, I will be using SPARC-based logical device names.

On both SPARC-based and x86-based systems, the logical device name is the name that the system administrator uses to refer to a particular device when running various Solaris file system commands. For example, if running the mount command, use the logical device name /dev/dsk/c0t0d0s7 to mount the file system /home:

mount /dev/dsk/c0t0d0s7 /home

Logical device files in the /dev directory are symbolically linked to physical device files in the /devices directory. Logical device names are used to access disk devices if you do any of the following:

![]() Add a new disk to the system.

Add a new disk to the system.

![]() Move a disk from one system to another.

Move a disk from one system to another.

![]() Access (or mount) a file system residing on a local disk.

Access (or mount) a file system residing on a local disk.

![]() Back up a local file system.

Back up a local file system.

![]() Repair a file system.

Repair a file system.

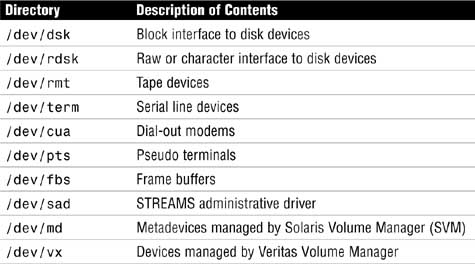

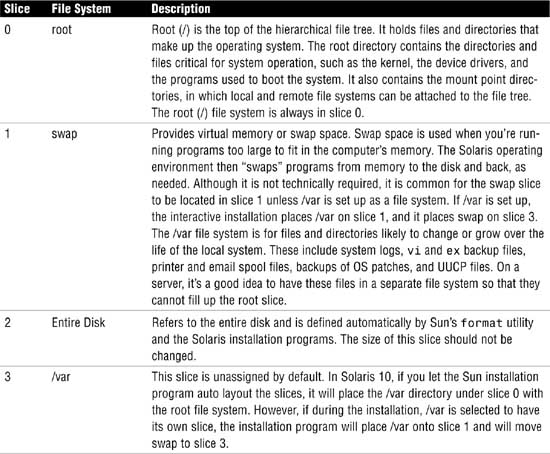

Logical devices are organized in subdirectories under the /dev directory by their device types, as shown in Table 1.3.

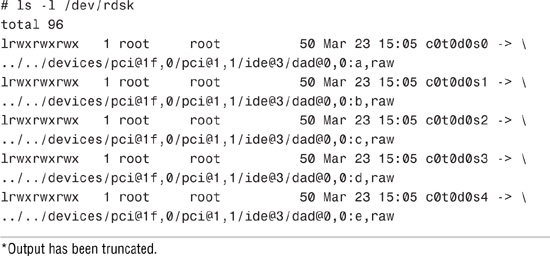

Disk drives have an entry under both the /dev/dsk and /dev/rdsk directories. The /dsk directory refers to the block or buffered device file, and the /rdsk directory refers to the character or raw device file. The “r” in rdsk stands for “raw.” You may even hear these devices referred to as “cooked” and “uncooked” devices. If you are not familiar with these devices, refer to Chapter 2, “Installing the Solaris 10 Operating Environment,” where block and character devices are described.

The /dev/dsk directory contains the disk entries for the block device nodes in /devices, as shown in the following command output:

The /dev/rdsk directory contains the disk entries for the character device nodes in /devices, as shown in the following command:

*Output has been truncated.

You should now have an understanding of how Solaris identifies disk drives connected to the system. The remainder of this chapter describes how to create file systems on these devices. It will also describe how to manage file systems and monitor disk space usage, some of the fundamental concepts you’ll need for the first exam.

Exam Alert

Make sure you understand when to use a raw device and when to use a buffered device. You’ll encounter several questions on the exam where you will need to select either the raw or buffered device for a particular command.

A file system is a collection of files and directories stored on disk in a standard Unix file system (UFS) format. All disk-based computer systems have a file system. In Unix, file systems have two basic components: files and directories. A file is the actual information as it is stored on the disk, and a directory is a list of the filenames. In addition to keeping track of filenames, the file system must keep track of files’ access dates, permissions, and ownership. Managing file systems is one of the system administrator’s most important tasks. Administration of the file system involves the following:

![]() Ensuring that users have access to data. This means that systems are up and operational, file permissions are set up properly, and data is accessible.

Ensuring that users have access to data. This means that systems are up and operational, file permissions are set up properly, and data is accessible.

![]() Protecting file systems against file corruption and hardware failures. This is accomplished by checking the file system regularly and maintaining proper system backups.

Protecting file systems against file corruption and hardware failures. This is accomplished by checking the file system regularly and maintaining proper system backups.

![]() Securing file systems against unauthorized access. Only authorized users should have access to files.

Securing file systems against unauthorized access. Only authorized users should have access to files.

![]() Providing users with adequate space for their files.

Providing users with adequate space for their files.

![]() Keeping the file system clean. In other words, data in the file system must be relevant and not wasteful of disk space. Procedures are needed to make sure that users follow proper naming conventions and that data is stored in an organized manner.

Keeping the file system clean. In other words, data in the file system must be relevant and not wasteful of disk space. Procedures are needed to make sure that users follow proper naming conventions and that data is stored in an organized manner.

You’ll see the term file system used in several ways. Usually, file system describes a particular type of file system (disk-based, network based, or virtual). It might also describe the entire file tree from the root directory downward. In another context, the term file system might be used to describe the structure of a disk slice, described later in this chapter.

The Solaris system software uses the virtual file system (VFS) architecture, which provides a standard interface for different file system types. The VFS architecture lets the kernel handle basic operations, such as reading, writing, and listing files, without requiring the user or program to know about the underlying file system type. Furthermore, Solaris provides file system administrative commands that enable you to maintain file systems.

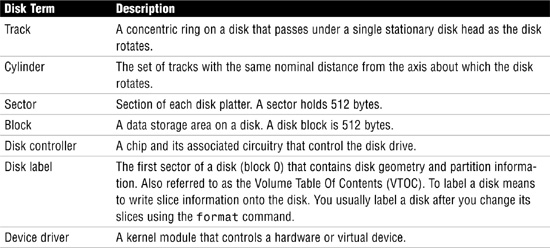

Before creating a file system on a disk, you need to understand the basic geometry of a disk drive. Disks come in many shapes and sizes. The number of heads, tracks, and sectors and the disk capacity vary from one model to another. Basic disk terminology is described in Table 1.4.

A hard disk consists of several separate disk platters mounted on a common spindle. Data stored on each platter surface is written and read by disk heads. The circular path that a disk head traces over a spinning disk platter is called a track.

Each track is made up of a number of sectors laid end to end. A sector consists of a header, a trailer, and 512 bytes of data. The header and trailer contain error-checking information to help ensure the accuracy of the data. Taken together, the set of tracks traced across all the individual disk platter surfaces for a single position of the heads is called a cylinder.

Associated with every disk is a controller, an intelligent device responsible for organizing data on the disk. Some disk controllers are located on a separate circuit board, such as SCSI. Other controller types are integrated with the disk drive, such as Integrated Device Electronics (IDE) and Enhanced IDE (EIDE).

Disks might contain areas where data cannot be written and retrieved reliably. These areas are called defects. The controller uses the error-checking information in each disk block’s trailer to determine whether a defect is present in that block. When a block is found to be defective, the controller can be instructed to add it to a defect list and avoid using that block in the future. The last two cylinders of a disk are set aside for diagnostic use and for storing the disk defect list.

A special area of every disk is set aside for storing information about the disk’s controller, geometry, and slices. This information is called the disk’s label or volume table of contents (VTOC).

To label a disk means to write slice information onto the disk. You usually label a disk after defining its slices. If you fail to label a disk after creating slices, the slices will be unavailable because the operating system has no way of knowing about them.

Solaris supports two types of disk labels, the VTOC disk label and the EFI disk label. Solaris 10 (and later versions of Solaris 9) provides support for disks that are larger than 1 terabyte on systems that run a 64-bit Solaris kernel. The acronym EFI stands for Extensible Firmware Interface and this new label format is REQUIRED for all devices over 1TB in size, and cannot be converted back to VTOC.

The EFI label provides support for physical disks and virtual disk volumes. Solaris 10 also includes updated disk utilities for managing disks greater than 1 terabyte. The UFS file system is compatible with the EFI disk label, and you can create a UFS file system greater than 1 terabyte.

The traditional VTOC label is still available for disks less than 1 terabyte in size. If you are only using disks smaller than 1 terabyte on your systems, managing disks will be the same as in previous Solaris releases. In addition, you can use the format-e command to label a disk less than 1TB with an EFI label.

The advantages of the EFI disk label over the VTOC disk label are as follows:

![]() Provides support for disks greater than 1 terabyte in size.

Provides support for disks greater than 1 terabyte in size.

![]() Provides usable slices 0–6, where slice 2 is just another slice.

Provides usable slices 0–6, where slice 2 is just another slice.

![]() Partitions (or slices) cannot overlap with the primary or backup label, nor with any other partitions. The size of the EFI label is usually 34 sectors, so partitions start at sector 34. This feature means that no partition can start at sector zero (0).

Partitions (or slices) cannot overlap with the primary or backup label, nor with any other partitions. The size of the EFI label is usually 34 sectors, so partitions start at sector 34. This feature means that no partition can start at sector zero (0).

![]() No cylinder, head, or sector information is stored in the EFI label. Sizes are reported in blocks.

No cylinder, head, or sector information is stored in the EFI label. Sizes are reported in blocks.

![]() Information that was stored in the alternate cylinders area, the last two cylinders of the disk, is now stored in slice 8.

Information that was stored in the alternate cylinders area, the last two cylinders of the disk, is now stored in slice 8.

![]() If you use the

If you use the format utility to change partition sizes, the unassigned partition tag is assigned to partitions with sizes equal to zero. By default, the format utility assigns the usr partition tag to any partition with a size greater than zero. You can use the partition change menu to reassign partition tags after the partitions are changed.

![]() Solaris ZFS (zettabyte file system) uses EFI labels by default. As of this writing, ZFS file systems are not implemented but are expected in a future Solaris 10 update.

Solaris ZFS (zettabyte file system) uses EFI labels by default. As of this writing, ZFS file systems are not implemented but are expected in a future Solaris 10 update.

The following lists restrictions of the EFI disk label:

![]() The SCSI driver, ssd or sd, currently supports only up to 2 terabytes. If you need greater disk capacity than 2 terabytes, use a disk and storage management product such as Solaris Volume Manager to create a larger device.

The SCSI driver, ssd or sd, currently supports only up to 2 terabytes. If you need greater disk capacity than 2 terabytes, use a disk and storage management product such as Solaris Volume Manager to create a larger device.

![]() Layered software products intended for systems with EFI-labeled disks might be incapable of accessing a disk without an EFI disk label.

Layered software products intended for systems with EFI-labeled disks might be incapable of accessing a disk without an EFI disk label.

![]() You cannot use the

You cannot use the fdisk command on a disk with an EFI label that is greater than 1 terabyte in size.

![]() A disk with an EFI label is not recognized on systems running previous Solaris releases.

A disk with an EFI label is not recognized on systems running previous Solaris releases.

![]() The EFI disk label is not supported on IDE disks.

The EFI disk label is not supported on IDE disks.

![]() You cannot boot from a disk with an EFI disk label.

You cannot boot from a disk with an EFI disk label.

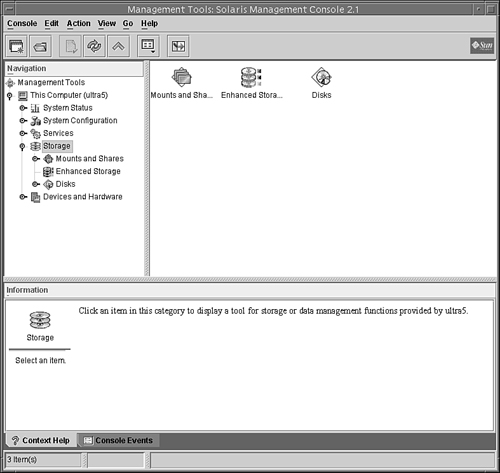

![]() You cannot use the Solaris Management Console’s Disk Manager tool to manage disks with EFI labels. Use the format utility to partition disks with EFI labels. Then, you can use the Solaris Management Console’s Enhanced Storage Tool to manage volumes and disk sets with EFI-labeled disks.

You cannot use the Solaris Management Console’s Disk Manager tool to manage disks with EFI labels. Use the format utility to partition disks with EFI labels. Then, you can use the Solaris Management Console’s Enhanced Storage Tool to manage volumes and disk sets with EFI-labeled disks.

![]() The EFI specification prohibits overlapping slices. The entire disk is represented by c#t#d#.

The EFI specification prohibits overlapping slices. The entire disk is represented by c#t#d#.

![]() The EFI disk label provides information about disk or partition sizes in sectors and blocks, but not in cylinders and heads.

The EFI disk label provides information about disk or partition sizes in sectors and blocks, but not in cylinders and heads.

![]() The following

The following format options are either not supported or are not applicable on disks with EFI labels:

![]() The

The save option is not supported because disks with EFI labels do not need an entry in the format.dat file.

![]() The

The backup option is not applicable because the disk driver finds the primary label and writes it back to the disk.

An important part of the disk label is the partition table, which identifies a disk’s slices, the slice boundaries (in cylinders), and the total size of the slices. A disk’s partition table can be displayed by using the format utility described in the “Disk Slices” section later in this chapter.

Objective:

Describe the purpose, features, and functions of disk-based, networked, and pseudo file systems in a Solaris operating environment, and explain the differences among these file system types.

Solaris file systems can be put into three categories: disk-based, network-based, and virtual.

Disk-based file systems reside on the system’s local disk. As of this writing, the following are four types of disk-based file systems found in Solaris 10:

![]() UFS (Unix File System)—The Unix file system, which is based on the BSD FFS Fast file system (the traditional Unix file system). The UFS is the default disk-based file system used in Solaris.

UFS (Unix File System)—The Unix file system, which is based on the BSD FFS Fast file system (the traditional Unix file system). The UFS is the default disk-based file system used in Solaris.

![]() HSFS (High Sierra File System)—The High Sierra and ISO 9660 file system, which supports the Rock Ridge extensions. The HSFS file system is used on CD-ROMs and is a read-only file system.

HSFS (High Sierra File System)—The High Sierra and ISO 9660 file system, which supports the Rock Ridge extensions. The HSFS file system is used on CD-ROMs and is a read-only file system.

![]() PCFS (PC File System)—The PC file system, which allows read/write access to data and programs on DOS-formatted disks written for DOS-based personal computers.

PCFS (PC File System)—The PC file system, which allows read/write access to data and programs on DOS-formatted disks written for DOS-based personal computers.

![]() UDF (Universal Disk Format)—The Universal Disk Format file system. UDF is the new industry-standard format for storing information on optical media technology called DVD (digital versatile disc).

UDF (Universal Disk Format)—The Universal Disk Format file system. UDF is the new industry-standard format for storing information on optical media technology called DVD (digital versatile disc).

![]() Not in Solaris 10 as of this writing, but worth noting is the zettabyte file system (ZFS), scheduled for a future Solaris 10 update, incorporating advanced data security and protection features, eliminating the need for

Not in Solaris 10 as of this writing, but worth noting is the zettabyte file system (ZFS), scheduled for a future Solaris 10 update, incorporating advanced data security and protection features, eliminating the need for fsck or other recovery mechanisms. By redefining file systems as virtualized storage, Solaris ZFS will enable virtually unlimited scalability.

Network-based file systems are file systems accessed over the network. Typically, they reside on one system and are accessed by other systems across the network.

The network file system (NFS) or remote file systems are file systems made available from remote systems. NFS is the only available network-based file system bundled with the Solaris operating environment. NFS is discussed in detail in Chapter 9, “Virtual File Systems, Swap Space, and Core Dumps.”

Virtual file systems, previously called pseudo file systems, are virtual or memory-based file systems that create duplicate paths to other disk-based file systems or provide access to special kernel information and facilities. Most virtual file systems do not use file system disk space, although a few exceptions exist. Cache file systems, for example, use a disk-based file system to contain the cache.

Some virtual file systems, such as the temporary file system, might use the swap space on a physical disk. The following is a list of some of the more common types of virtual file systems:

![]() SWAPFS (Swap File System)—A file system used by the kernel for swapping. Swap space is used as a virtual memory storage area when the system does not have enough physical memory to handle current processes.

SWAPFS (Swap File System)—A file system used by the kernel for swapping. Swap space is used as a virtual memory storage area when the system does not have enough physical memory to handle current processes.

![]() PROCFS (Process File System)—The Process File System resides in memory. It contains a list of active processes, by process number, in the

PROCFS (Process File System)—The Process File System resides in memory. It contains a list of active processes, by process number, in the /proc directory. Commands such as ps use information in the /proc directory. Debuggers and other development tools can also access the processes’ address space by using file system calls.

![]() LOFS (Loopback File System)—The Loopback File System lets you create a new virtual file system, which can provide access to existing files using alternate pathnames. Once the virtual file system is created, other file systems can be mounted within it, without affecting the original file system.

LOFS (Loopback File System)—The Loopback File System lets you create a new virtual file system, which can provide access to existing files using alternate pathnames. Once the virtual file system is created, other file systems can be mounted within it, without affecting the original file system.

![]() CacheFS (Cache File System)—The Cache File System lets you use disk drives on local workstations to store frequently used data from a remote file system or CD-ROM. The data stored on the local disk is the cache.

CacheFS (Cache File System)—The Cache File System lets you use disk drives on local workstations to store frequently used data from a remote file system or CD-ROM. The data stored on the local disk is the cache.

![]() TMPFS (Temporary File System)—The Temporary File System uses local memory for file system reads and writes. Because TMPFS uses physical memory and not the disk, access to files in a TMPFS is typically much faster than to files in a UFS. Files in the temporary file system are not permanent; they are deleted when the file system is unmounted and when the system is shut down or rebooted. TMPFS is the default file system type for the

TMPFS (Temporary File System)—The Temporary File System uses local memory for file system reads and writes. Because TMPFS uses physical memory and not the disk, access to files in a TMPFS is typically much faster than to files in a UFS. Files in the temporary file system are not permanent; they are deleted when the file system is unmounted and when the system is shut down or rebooted. TMPFS is the default file system type for the /tmp directory in the SunOS system software. You can copy or move files into or out of the /tmp directory just as you would in a UFS /tmp. When memory is insufficient to hold everything in the temporary file system, the TMPFS uses swap space as a temporary backing store, as long as adequate swap space is present.

![]() MNTFS—The MNTFS type maintains information about currently mounted file systems. MNTFS is described later in this chapter.

MNTFS—The MNTFS type maintains information about currently mounted file systems. MNTFS is described later in this chapter.

![]() CTFS (Contract File System)—The CTFS is associated with the

CTFS (Contract File System)—The CTFS is associated with the /system/contract directory and is the interface for creating, controlling, and observing contracts. The service management facility (SMF) uses process contracts (a type of contract) to track the processes which compose a service.

![]() DEVFS (Device file System)—The DEVFS is used to manage the namespace of all devices on the system. This file system is used for the

DEVFS (Device file System)—The DEVFS is used to manage the namespace of all devices on the system. This file system is used for the /devices directory. The devfs file system is new in Solaris 10 and increases system boot performance because only device entries that are needed to boot the system are attached. New device entries are added as the devices are accessed.

![]() FDFS (File Descriptor File System)—The FDFS provides explicit names for opening files by using file descriptors.

FDFS (File Descriptor File System)—The FDFS provides explicit names for opening files by using file descriptors.

![]() OBJFS (Object File System)—The OBJFS (object) file system describes the state of all modules currently loaded by the kernel. This file system is used by debuggers to access information about kernel symbols without having to access the kernel directly.

OBJFS (Object File System)—The OBJFS (object) file system describes the state of all modules currently loaded by the kernel. This file system is used by debuggers to access information about kernel symbols without having to access the kernel directly.

Objective:

Perform disk partitioning and relabel a disk in a Solaris operating environment using the appropriate files, commands, options, menus, and/or tables.

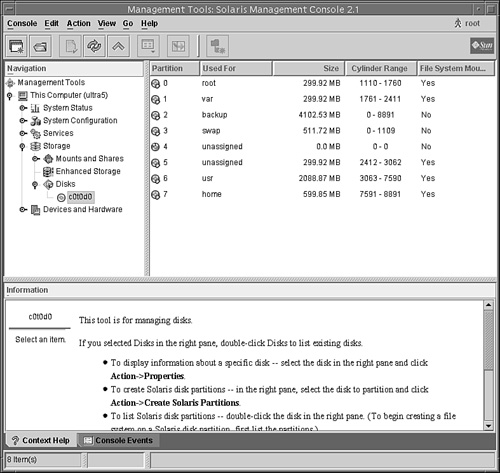

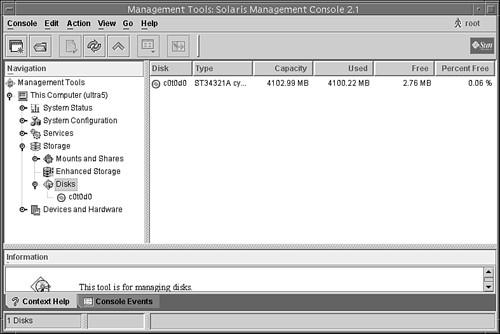

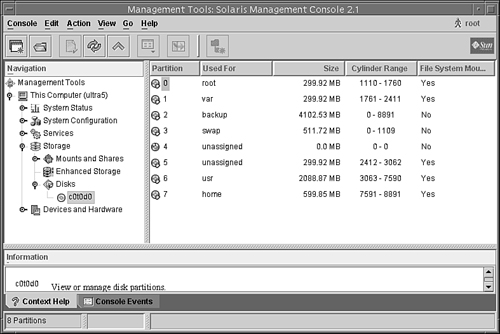

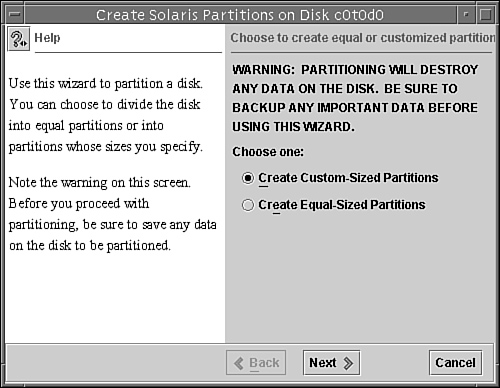

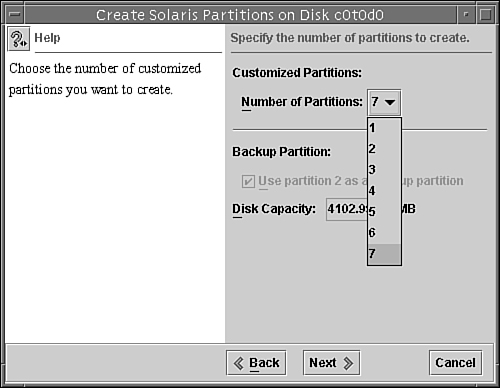

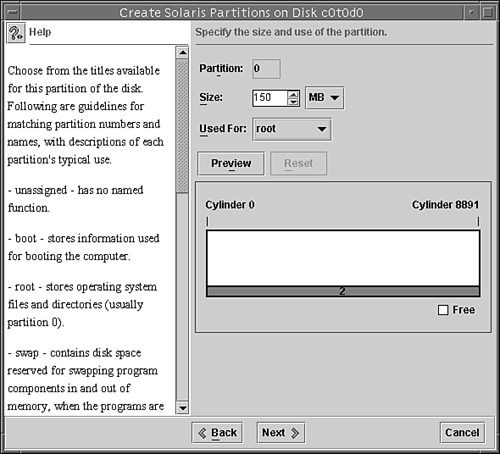

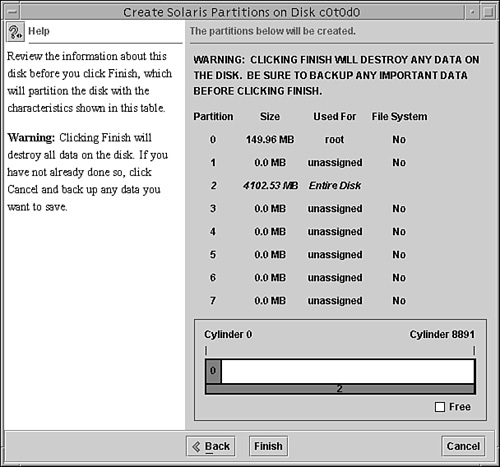

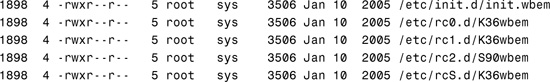

Disks are divided into regions called disk slices or disk partitions. A slice is composed of a single range of contiguous blocks. It is a physical subset of the disk (except for slice 2, which represents the entire disk). A Unix file system or the swap area is built within these disk slices. The boundaries of a disk slice are defined when a disk is partitioned using the Solaris format utility or the Solaris Management Console Disks Tool, and the slice information for a particular disk can be viewed by using the prtvtoc command. Each disk slice appears to the operating system (and to the system administrator) as though it were a separate disk drive.

Disk slicing differs between the SPARC and the x86 platforms. On the SPARC platform, the entire disk is devoted to the Solaris OS; the disk can be divided into 8 slices, numbered 0–7. On the x86 platform, the disk is divided into fdisk partitions using the fdisk command. The Solaris fdisk partition is divided into 10 slices, numbered 0–9.

Note

Slices Versus Partitions Solaris device names use the term slice (and the letter s in the device name) to refer to the slice number. Slices were called partitions in SunOS 4.x. This book attempts to use the term slice whenever possible; however, certain interfaces, such as the format and prtvtoc commands, refer to slices as partitions.

A physical disk consists of a stack of circular platters, as shown in Figure 1.1. Data is stored on these platters in a cylindrical pattern. Cylinders can be grouped and isolated from one another. A group of cylinders is referred to as a slice. A slice is defined with start and end points, defined from the center of the stack of platters, which is called the spindle.

Disk slices are defined by an offset and a size in cylinders. The offset is the distance from cylinder 0. To define a slice, the administrator provides a starting cylinder and an ending cylinder. A disk can have up to eight slices, named 0 to 7, but it is uncommon to use partition 2 as a file system. (See Chapter 2 for a discussion of disk-storage systems and sizing partitions.)

Note

Using Slice 2 As a Partition Sometimes a relational database uses an entire disk and requires one single raw partition. It’s convenient in this circumstance to use slice 2, as it represents the entire disk, but is not recommended because you would be using cylinder 0. You should start your database partition on cylinder 1 so that you don’t risk overwriting the disk’s VTOC. UFS file systems are smart enough not to touch the VTOC, but some databases have proven to be not so friendly.

When setting up slices, remember these rules:

![]() Each disk slice holds only one file system.

Each disk slice holds only one file system.

![]() No file system can span multiple slices.

No file system can span multiple slices.

![]() After a file system is created, its size cannot be increased or decreased without repartitioning and destroying the partition directly before or after it.

After a file system is created, its size cannot be increased or decreased without repartitioning and destroying the partition directly before or after it.

![]() Slices cannot span multiple disks; however, multiple swap slices on separate disks are allowed.

Slices cannot span multiple disks; however, multiple swap slices on separate disks are allowed.

When we discuss logical volumes later in this chapter, you’ll learn how to get around some of these limitations in file systems.

Also follow these guidelines when planning the layout of file systems:

![]() Distribute the workload as evenly as possible among different I/O systems and disk drives. Distribute

Distribute the workload as evenly as possible among different I/O systems and disk drives. Distribute /home and swap directories evenly across disks. A single disk has limitations on how quickly data can be transferred. By spreading this load across more than one disk, you can improve performance exponentially. This concept is described in Chapter 10, “Managing Storage Volumes,” where I describe striping using the Solaris Volume Manager.

![]() Keep projects or groups within their own file system. This enables you to keep better track of data for backups, recovery, and security reasons. Some disks might have better performance than others. For multiple projects, you could create multiple file systems and distribute the I/O workload by putting high-volume projects on separate physical disks.

Keep projects or groups within their own file system. This enables you to keep better track of data for backups, recovery, and security reasons. Some disks might have better performance than others. For multiple projects, you could create multiple file systems and distribute the I/O workload by putting high-volume projects on separate physical disks.

![]() Use the faster drives for file systems that need quick access and the slower drives for data that might not need to be retrieved as quickly. Some systems have drives that were installed as original hardware along with newer, better performing, drives that were added on some time later. Maybe you have a database dedicated to a high-volume project. This would be a perfect candidate to put on the newer, faster disk while a less accessed project could go on the slower disk drive.

Use the faster drives for file systems that need quick access and the slower drives for data that might not need to be retrieved as quickly. Some systems have drives that were installed as original hardware along with newer, better performing, drives that were added on some time later. Maybe you have a database dedicated to a high-volume project. This would be a perfect candidate to put on the newer, faster disk while a less accessed project could go on the slower disk drive.

![]() It is not important for most sites to be concerned about keeping similar types of user files in the same file system.

It is not important for most sites to be concerned about keeping similar types of user files in the same file system.

![]() Occasionally, you might have some users who consistently create small or large files. You might consider creating a separate file system with more inodes for users who consistently create small files. (See the section titled “The inode” later in this chapter for more information on inodes.)

Occasionally, you might have some users who consistently create small or large files. You might consider creating a separate file system with more inodes for users who consistently create small files. (See the section titled “The inode” later in this chapter for more information on inodes.)

We discuss disk slices again in Chapter 2, where we describe setting up disk storage and planning the layout of your disk slices.

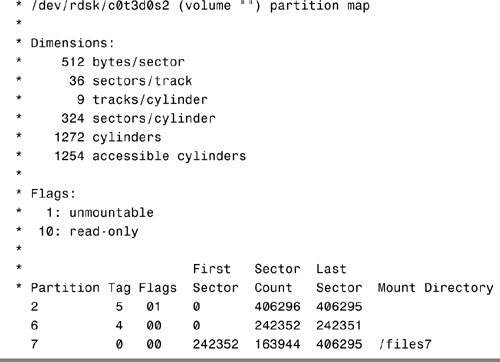

As described earlier, disk configuration information is stored in the disk label. If you know the disk and slice number, you can display information for a disk by using the print volume table of contents (prtvtoc) command. You can specify the volume by specifying any slice defined on the disk (for example, /dev/rdsk/c0t3d0s2 or /dev/rdsk/c0t3d0s*). Regardless of which slice you specify, all slices defined on the disk will be displayed. If you know the target number of the disk but do not know how it is divided into slices, you can show information for the entire disk by specifying either slice 2 or s*. Step by Step 1.2 shows how you can examine information stored on a disk’s label by using the prtvtoc command.

STEP BY STEP

1.2 Examining a Disk’s Label Using the prtvtoc Command

The prtvtoc command shows the number of cylinders and heads, as well as how the disk’s slices are arranged.

The following is an example of running the prtvtoc command on a SCSI disk with an EFI label:

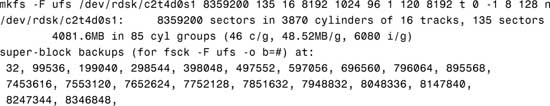

Before you can create a file system on a disk, the disk must be formatted, and you must divide it into slices by using the Solaris format utility. Formatting involves two separate processes:

![]() Writing format information to the disk

Writing format information to the disk

![]() Completing a surface analysis, which compiles an up-to-date list of disk defects

Completing a surface analysis, which compiles an up-to-date list of disk defects

When a disk is formatted, header and trailer information is superimposed on the disk. When the format utility runs a surface analysis, the controller scans the disk for defects. It should be noted that defects and formatting information reduce the total disk space available for data. This is why a new disk usually holds only 90–95% of its capacity after formatting. This percentage varies according to disk geometry and decreases as the disk ages and develops more defects.

The need to perform a surface analysis on a disk drive has dropped as more manufacturers ship their disk drives formatted and partitioned. You should not need to perform a surface analysis within the format utility when adding a disk drive to an existing system unless you think disk defects are causing problems. The primary reason that you would use format is if you want to view or change the partitioning scheme on a disk.

Caution

Always Back Up Your Data Formatting and creating slices is a destructive process, so make sure user data is backed up before you start.

The format utility searches your system for all attached disk drives and reports the following information about the disk drives it finds:

In addition, the format utility is used in disk repair operations to do the following:

![]() Retrieve disk labels

Retrieve disk labels

![]() Repair defective sectors

Repair defective sectors

![]() Format and analyze disks

Format and analyze disks

![]() Partition disks

Partition disks

![]() Label disks (write the disk name and configuration information to the disk for future retrieval)

Label disks (write the disk name and configuration information to the disk for future retrieval)

The Solaris installation program partitions and labels disk drives as part of installing the Solaris release. However, you might need to use the format utility when doing the following:

![]() Displaying slice information

Displaying slice information

![]() Dividing a disk into slices

Dividing a disk into slices

![]() Formatting a disk drive when you think disk defects are causing problems

Formatting a disk drive when you think disk defects are causing problems

![]() Repairing a disk drive

Repairing a disk drive

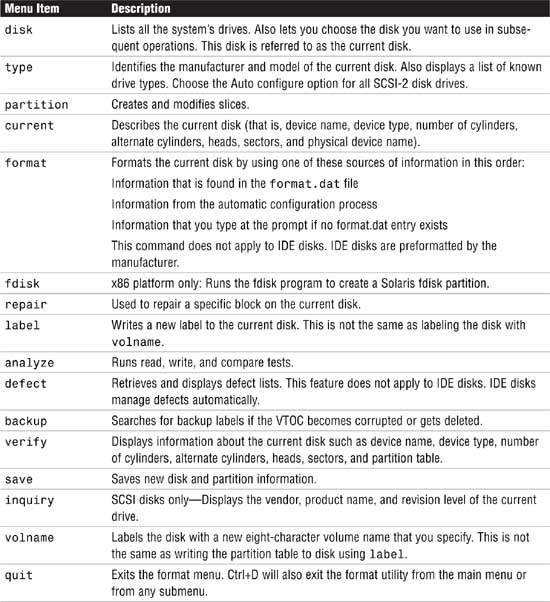

The main reason a system administrator uses the format utility is to divide a disk into disk slices.

Exam Alert

format Utility Pay close attention to each menu item in the format utility and understand what task each performs. Expect to see several questions pertaining to the format utility menus on Exam CX-310-200. Along with adding slices, make sure you know how to remove or resize slices. You may see a scenario where a production system is running out of swap and you need to go into the format utility and add another swap slice.

The process of creating slices is outlined in Step by Step 1.3.

Note

If you are using Solaris on an x86 or x64-based PC system, refer to the next Step by Step to create an FDISK partition before creating the slices.

STEP BY STEP

1.3 Formatting a Disk

1. Become superuser.

2. Type format.

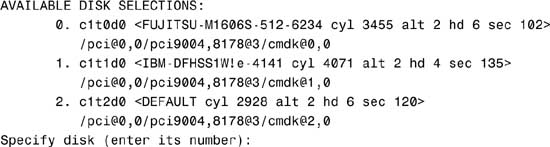

The system responds with this:

Searching for disks...done

AVAILABLE DISK SELECTIONS:

3. Specify the disk (enter its number).

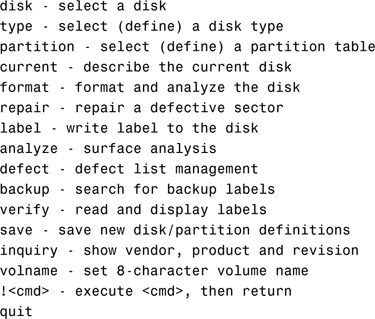

The system responds with the format main menu:

FORMAT MENU:

Table 1.5. describes the format main menu items.

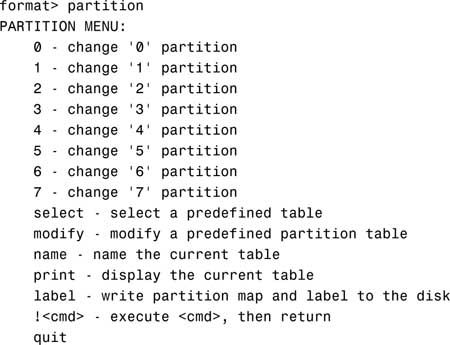

4. Type partition at the format prompt. The partition menu is displayed.

Note

Using Shortcuts in the format Utility It is unnecessary to type the entire command. After you type the first two characters of a command, the format utility recognizes the entire command.

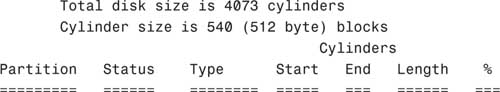

5. Type print to display the current partition map.

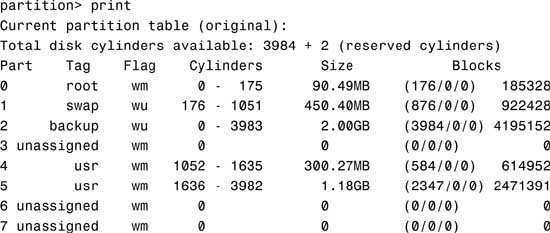

The system responds with this:

The columns displayed with the partition table are

![]() Part—The slice number (0–7).

Part—The slice number (0–7).

![]() Tag—This is an optional value that indicates how the slice is being used. The value can be any of the following names that best fits the function of the file system you are creating:

Tag—This is an optional value that indicates how the slice is being used. The value can be any of the following names that best fits the function of the file system you are creating:

unassigned, boot, root, swap, usr, backup, stand, var, home, alternates, reserved

You may also see tags labeled public or private, which represent Sun StorEdge Volume Manager tags:

![]() Flag—Values in this column can be

Flag—Values in this column can be

![]() wm—The disk slice is writable and mountable.

wm—The disk slice is writable and mountable.

![]() wu—The disk slice is writable and unmountable (such as a swap slice).

wu—The disk slice is writable and unmountable (such as a swap slice).

![]() rm—The disk slice is read-only and mountable.

rm—The disk slice is read-only and mountable.

![]() ru—The disk slice is read-only and unmountable.

ru—The disk slice is read-only and unmountable.

![]() Cylinders—The starting and ending cylinder number for the disk slice.

Cylinders—The starting and ending cylinder number for the disk slice.

![]() Size—The slice size specified as

Size—The slice size specified as

![]() mb—megabytes

mb—megabytes

![]() gb—gigabytes

gb—gigabytes

![]() b—blocks

b—blocks

![]() c—cylinders

c—cylinders

![]() Blocks—The total number of cylinders and the total number of sectors per slice.

Blocks—The total number of cylinders and the total number of sectors per slice.

Note

Wasted Disk Space Wasted disk space occurs during partitioning when one or more cylinders have not been allocated to a disk slice. This may happen intentionally or accidentally. If there are unallocated slices available, then wasted space can possibly be assigned to a slice later on.

You can use the name and save commands in the partition menu to name and save a newly created partition table to a file that can be referenced by name later, when you want to use this same partition scheme on another disk. When issuing the name command, you’ll provide a unique name for this partition scheme and then issue the save command to save the information to the ./format.dat file. Normally this file is located in the /etc directory, so provide the full pathname for /etc/format.dat to update the master file.

6. After you partition the disk, you must label it by typing label at the partition prompt:

partition> label

You are asked for confirmation on labeling the disk as follows:

Ready to label disk, continue?

Enter Y to continue.

Note

Label Your Drive To label a disk means to write slice information onto the disk. If you don’t label the drive when exiting the format utility, your partition changes will not be retained. Get into the habit of labeling at the partition submenu, but you can also label at the format utility main menu as well—you get two chances to remember before exiting the utility.

7. After labeling the disk, type quit to exit the partition menu:

partition> quit

8. Type quit again to exit the format utility:

format> quit

It’s important to point out a few undesirable things that can happen when defining disk partitions with the format utility if you’re not careful. First, be careful not to waste disk space. Wasted disk space can occur when you decrease the size of one slice and do not adjust the starting cylinder number of the adjoining disk slice.

Second, don’t overlap disk slices. Overlapping occurs when one or more cylinders are allocated to more than one disk slice. For example, increasing the size of one slice without decreasing the size of the adjoining slice will create overlapping partitions. The format utility will not warn you of wasted disk space or overlapping partitions.

As described earlier in this chapter, Solaris on x86-based PCs treats disk drives slightly differently than on the SPARC-based systems. On an x86 system, once a disk drive has been physically installed and verified as working, you’ll use the format command to slice the disk, but first an fdisk partition must be created on the new drive. Use the format command as follows:

STEP BY STEP

1.4 Using the format Command

1. As root, type format to get into the format utility.

format

The following menu appears:

2. Enter the number corresponding to the new drive and the following menu will be displayed:

3. Select the fdisk option and the following menu appears:

The recommended default partitioning for your disk is:

a 100% "SOLARIS System" partition.

To select this, please type "y". To partition your disk

differently, type "n" and the "fdisk" program will let you

select other partitions.

4. If you wish to use the entire drive for Solaris enter y. This will return to the format menu. If n is chosen, the fdisk menu will be displayed.

THERE ARE NO PARTITIONS CURRENTLY DEFINED

SELECT ONE OF THE FOLLOWING:

1. Create a partition

2. Change Active (Boot from) partition

3. Delete a partition

4. Exit (Update disk configuration and exit)

5. Cancel (Exit without updating disk configuration)

Enter Selection:

5. Choose 1 to create a Solaris FDISK partition. This is not the same as a Solaris slice.

6. After creating the partition, choose 4 to exit and save. The format menu will return.

7. Choose partition and follow the Step by Step 1.3 procedure described earlier for formatting a disk, beginning at step number 4.

One more item of note: On standard UFS file systems, don’t change the size of disk slices that are currently in use. When a disk with existing slices is repartitioned and relabeled, any existing data will be lost. Before repartitioning a disk, first copy all the data to tape or to another disk.

When using the format utility to change the size of disk slices, a temporary slice is automatically designated that expands and shrinks to accommodate the slice resizing operations. This temporary slice is referred to as the free hog, and it represents the unused disk space on a disk drive. If a slice is decreased, the free hog expands. The free hog is then used to allocate space to slices that have been increased. It does not, however, prevent the overlapping of disk slices as described in the previous section.

The free hog slice exists only when you run the format utility. It is not saved as a permanent slice.

If you need to change the size of slices on a particular disk, you can either re-create the disk slices as outlined in the previous section or use the modify option of the format utility.

Caution

Copy Your Data to Avoid Loss You will lose all data when changing partition sizes. Make sure that you copy your data either to another disk or to tape before continuing.

The modify option allows the root to create slices by specifying the size of each slice without having to keep track of starting cylinder boundaries. It also keeps track of any excess disk space in the temporary free hog slice. To modify a disk slice, follow the process outlined in Step by Step 1.5.

STEP BY STEP

1.5 Modifying a Disk Slice

1. Make a backup of your data. This process destroys the data on this disk slice.

2. As root, enter the partition menu of the format utility, as described in Step by Step 1.3.

3. After printing the existing partition scheme, type modify and press Enter.

Note

Mounted Partitions If you try to modify mounted partitions, you receive an error that states “Cannot modify disk partitions while it has mounted partitions.”

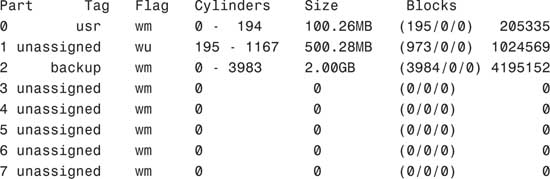

When typing modify, you’ll see the following output on a disk that does not have mounted partitions:

Select partitioning base:

0. Current partition table (original)

1. All Free Hog

Choose base (enter number) [0]?

4. Press Enter to select the default selection. The following is displayed:

Do you wish to continue creating a new partition

table based on above table[yes]?