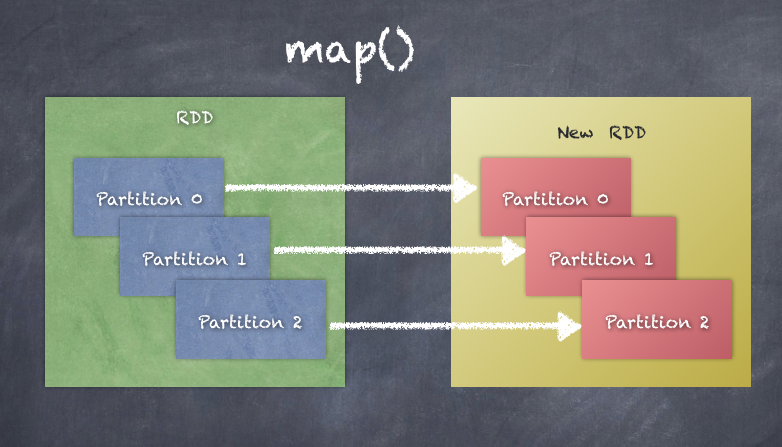

map applies transformation function to input partitions to generate output partitions in the output RDD.

As shown in the following snippet, this is how we can map an RDD of a text file to an RDD with lengths of the lines of text:

scala> val rdd_two = sc.textFile("wiki1.txt")

rdd_two: org.apache.spark.rdd.RDD[String] = wiki1.txt MapPartitionsRDD[8] at textFile at <console>:24

scala> rdd_two.count

res6: Long = 9

scala> rdd_two.first

res7: String = Apache Spark provides programmers with an application programming interface centered on a data structure called the resilient distributed dataset (RDD), a read-only multiset of data items distributed over a cluster of machines, that is maintained in a fault-tolerant way.

scala> val rdd_three = rdd_two.map(line => line.length)

res12: org.apache.spark.rdd.RDD[Int] = MapPartitionsRDD[11] at map at <console>:2

scala> rdd_three.take(10)

res13: Array[Int] = Array(271, 165, 146, 138, 231, 159, 159, 410, 281)

The following diagram explains of how map() works. You can see that each partition of the RDD results in a new partition in a new RDD essentially applying the transformation to all elements of the RDD: