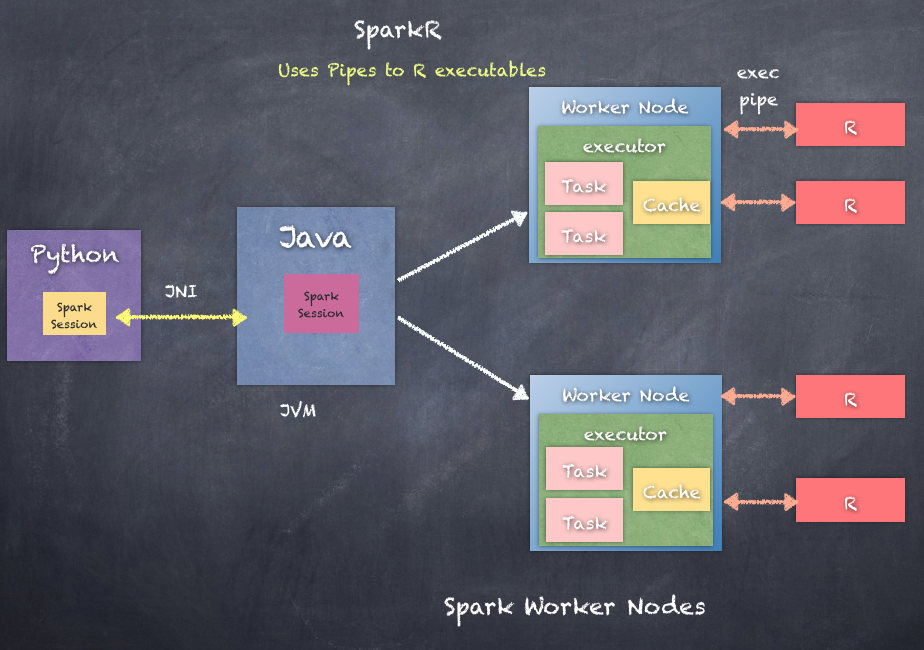

SparkR is an R package that provides a lightweight frontend for using Apache Spark from R. It provides a distributed data frame implementation that supports operations such as selection, filtering, and aggregation, and it supports distributed machine learning through MLlib. SparkR uses an R-based SparkContext and runs R scripts as tasks; it then uses JNI and pipes between processes to communicate between the Java-based Spark cluster and the R scripts.
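As a minimal sketch of the data frame operations mentioned above, the following uses R's built-in `faithful` data set. The local master URL, the application name, and the variable names are assumptions made for illustration; a real deployment would point at its own cluster.

```r
library(SparkR)

# Start (or attach to) a Spark session. Local mode is an assumption for this sketch.
sparkR.session(master = "local[*]", appName = "sparkr-sketch")

# Convert the local R data.frame `faithful` into a distributed SparkDataFrame.
df <- as.DataFrame(faithful)

# Selection: keep only the `eruptions` column.
eruptions <- select(df, "eruptions")

# Filtering: keep rows with a waiting time under 50 minutes.
short_waits <- filter(df, df$waiting < 50)

# Aggregation: count the rows for each distinct waiting time.
counts <- summarize(groupBy(df, df$waiting), count = n(df$waiting))

# head() collects the first few rows back into a local R data.frame.
head(counts)
```

Each operation returns a new SparkDataFrame and is evaluated lazily; the work only runs when an action such as `head()` is called. Call `sparkR.session.stop()` when the session is no longer needed.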

R must be installed on all worker nodes running the Spark executors.

The following illustrates how SparkR works by communicating between Java processes and R scripts: