Editing within a Shot: The Art of Pre-Compositing

Before dividing the visual effects editing process into various procedures, it is important to first explain what visual effects editing is exactly and how it began.

VFX Editors actually do edit, just not in the traditional sense. They edit shots, not sequences. A traditional editor, called a picture editor, cuts together scenes by editing together different camera angles, called shots, to tell a story. For example, a scene may begin with a close-up of a general’s face looking through a telescope followed by a wide shot of a group of soldiers marching toward him on a battlefield and finally ending with a medium shot of the general riding away on his horse. By contrast, a VFX Editor edits within an individual shot by compositing together different elements and assembling them one on top of the other. Pacing and composition within a shot need to be experimented with and locked down before they can blend seamlessly into the larger context of an edited sequence. This process is called pre-compositing.

To illustrate pre-compositing, let’s say the wide shot of the battlefield sequence described before is a visual effects shot containing multiple elements requiring compositing: a BG (background)36 plate of the battlefield, several small groups of soldiers marching on different areas of the field (that when composited together give the impression that hundreds of people were filmed marching at the same time), and pyrotechnic (pyro) elements of shells exploding in the distance. The VFX Editor works with the VFX Supervisor, the Director, and the picture editor to decide which takes of the BG plate, soldier, and pyro elements work best in the shot and on what frames the different pyro elements should enter the shot. The selected elements are layered on top of each other in a digital editing program starting with the BG plate, then the shells, and concluding with the soldiers. To make the shot work, some, or all, of the elements may require a combination of cropping, keying, keyframe animation, resizing, repositioning (repoing), scaling, or retiming.

In essence, the VFX Editor’s job is to help determine what elements are needed for a shot and how they are to interact with each other. The information describing how elements interact with each other in a shot, as referenced in a precomposite (precomp), is called line-up information. Once the Director approves precomps, the line-up information must be delivered to the visual effects facility.

To help facilitate precomping, the VFX Editor should carefully track and organize elements as they are shot so they can be easily located when needed. This is especially important when the Director or VFX Supervisor needs to quickly see alternate takes for a given element. Knowing where all of the elements are ahead of time speeds up the process greatly.

Multiple tools are available to the VFX Editor, including nonlinear editing software such as Avid’s Media Composer and Apple’s Final Cut Pro, and compositing software such as Adobe’s After Effects. All or some of these tools may be used on a show, so the more platform-neutral an editor is, the better.

How It Came to Be

In the beginning (circa 1910s), visual effects editing was accomplished in-camera. The Director, actors, and cinematographer would film an action and then literally backwind (rewind) the footage in the movie camera to expose another piece of action over the first. The Director and crew would have to roughly estimate when the second piece of action should start in relation to the first before the camera began rolling again for a second pass. As time went on, this practice of in-camera double exposure soon established itself as an accepted form of trick photography from which more elaborate techniques and hardware would be built. The process was originally crude and imprecise, but it did acknowledge that pacing within a visual effects shot was important to its overall impact.

From the mid-1930s on, visual effects editing was accomplished using the optical printer. The optical printer allowed for individual elements to be composited together photochemically, using a precise projector-to-camera photography setup. The results were exposed onto a new piece of film stock, which then had to be processed at a laboratory in order to be seen. Because visual effects no longer had to be done in-camera, actions intended for a visual effects shot could now be filmed separately to be combined later via optical printing. The VFX Editor could examine the projected pieces of film individually on a viewing device, such as a moviola,37 to get a feel for each element’s timing. The elements could then be placed side by side on a light table to determine exactly how each piece of action should line up, frame for frame, in relation to one another.

Once a locked-sync between elements was determined, the VFX Editor ran test composites on the optical printer, or literally overlaid one piece of film over another on a moviola (a process called bi-packing), while keeping track of how the elements lined up. The routine was time consuming and contained errors that could not be detected until the film was processed overnight and screened the next day, but it was still a huge leap forward from the early days of in-camera compositing.

The digital revolution of the 1990s, with computer graphic animation and digital compositing, not only opened the floodgates on visual effects in general, but also changed the way visual effects editing would be performed from that point forward. Just as most traditional hand-drawn and stop-motion animation would be replaced with computer graphic animation, visual effects editing would no longer be hand tooled using moviolas, light tables, and optical printers. Instead of actual footage to hold up to a light source, photographed imagery could now be digitized into a nonlinear file format to be used with a computer.

As a result, precomposites could now be rendered electronically in minutes as opposed to overnight. Nonlinear editing software allowed the VFX Editor, VFX Supervisor, and Director the ability to closely collaborate in formulating the pacing of a visual effects shot and determine what elements would be required in a fraction of the time it took using the optical printer.

Visual effects editing at this time did not come without its own set of unexpected challenges. For example, Avid’s Media Composer was originally introduced via commercial television as opposed to feature filmmaking, so feature picture editors and VFX Editors both had to adapt to the new digital technology. The VFX Editor was given the challenge of performing multiple checks simply to keep the flow of visual effects editorial information running as smoothly as possible throughout the production pipeline: from visual effects facility to client cutting room (and vice versa), from one visual effects department to another, and from one format to another.

Today, visual effects editors are at a point in which the responsibility of tracking numbers equals or even supersedes the editing process itself. Modern technology allows for the making of visual effects shots to be shared across all visual effects disciplines in order to yield the best results possible for the project at hand. Out of production necessity, the role of the VFX Editor has evolved. The VFX Editor is still responsible for adapting to new techniques and collaborating with all key contributors in order to decipher the most precise visual effects shot count information.

Modern Day Tracking and Disseminating of Information

The Cutting Room’s VFX Editor

As mentioned before, dissemination of information is one of the most important tasks of a VFX Editor. Gathering this information begins when a sequence is far enough along in the editing process, as determined by the Director, VFX Supervisor, or in some cases, the Producers and/or the studio, to be turned over38 to a visual effects facility so that artists may begin working on the shot(s). While the type of work required for some shots may be obvious, for other shots it may not be. Therefore, the VFX Editor must carefully look through the sequence, examining all shots for any potential work, and then check with the VFX Supervisor or picture editors to notify them of anything found that they may not have been aware of.

Before an artist can begin working on a shot, the required elements must be scanned (if they were filmed as opposed to digitally captured) and delivered to the visual effects facility. Some shots may only have a BG plate, while others may have many elements. In addition to the elements visible in the shot, there may also be reference spheres,39 clean plates,40 and various other references that need to be scanned. These may be selected by either the production’s VFX Editor or the visual effects facility’s VFX Editor. The two editors should discuss who should select the pertinent reference elements before the first turnover. Regardless of which editor selects these elements, it is the VFX Editor in the cutting room who will request the scans from the scanning facility.

The scanning facility usually requires key numbers, lab roll numbers, and possibly timecode numbers, which can be exported from the VFX Editor’s editing system into a database, such as FileMaker Pro. How the scanning facility wants the information delivered and in what format can vary. Therefore, the VFX Editor should establish a procedure with the facility ahead of time. The naming convention of scanned files is crucial. As mentioned before, some shots may have multiple elements; therefore, the VFX Editor must distinguish between the different BG, BS (bluescreen) elements, and reference scans to ensure that it is clear to the visual effects facility what each scanned element is for. A typical filename is as follows:

BF010.bg1.1-125.cin

where

BF010 = the shot name41 (i.e., BF = Battle Field and 010 = the tenth shot in the sequence),

bg1 = the element (i.e., background plate #1),

1-125 = the frame range (i.e., 125 frames, starting on frame 1 and ending on frame 125), and

cin = the format extension (i.e., the element was scanned in Cineon format).
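The naming convention above lends itself to a mechanical check. The following is a minimal Python sketch, assuming exactly the four dot-separated fields shown in the example (shot, element, frame range, extension). The names `ScanName` and `parse_scan_name` are hypothetical, and a given facility's convention may well differ.

```python
import re
from typing import NamedTuple


class ScanName(NamedTuple):
    """Fields of a scan filename such as BF010.bg1.1-125.cin (assumed layout)."""
    shot: str
    element: str
    first_frame: int
    last_frame: int
    extension: str

    @property
    def frame_count(self) -> int:
        # The frame range is inclusive at both ends.
        return self.last_frame - self.first_frame + 1


# Assumed pattern: <letters+digits>.<letters+optional digits>.<first>-<last>.<ext>
_PATTERN = re.compile(
    r"^(?P<shot>[A-Za-z]+\d+)\."
    r"(?P<element>[A-Za-z]+\d*)\."
    r"(?P<first>\d+)-(?P<last>\d+)\."
    r"(?P<ext>[A-Za-z0-9]+)$"
)


def parse_scan_name(name: str) -> ScanName:
    """Split a scan filename into its fields, or raise ValueError."""
    match = _PATTERN.match(name)
    if match is None:
        raise ValueError(f"unrecognized scan name: {name!r}")
    first, last = int(match["first"]), int(match["last"])
    if last < first:
        raise ValueError(f"frame range runs backward in {name!r}")
    return ScanName(match["shot"], match["element"], first, last, match["ext"])


scan = parse_scan_name("BF010.bg1.1-125.cin")
print(scan.shot, scan.element, scan.frame_count)
```

A check of this kind might be run on every delivered scan so that a misnamed element is caught before it reaches an artist.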

Some visual effects facilities and cutting rooms require specific naming conventions. Therefore, how elements are named should be discussed with the facility’s VFX Editor prior to scanning. Some sequences may also have shots going to different visual effects facilities. It is the responsibility of the VFX Editor to ensure that the scanning facility knows exactly where to send the scans.

In addition, not all shots within a sequence are ready to be turned over at the same time. When this happens, the situation is referred to as a partial turnover. The VFX Editor must keep track of which shots have been turned over versus which shots are still being edited or undergoing the bidding process.

Figure 6.44 Sample count sheet. (Image courtesy of Tom Barrett and Greg Hyman.)

Once scanning is complete, it is time to deliver all relevant information to the VFX Editor at the visual effects facility. This information comes in two forms: count sheets and media consolidation. A count sheet is a document that contains all relevant information about a visual effects shot including the total length, handle lengths, key number range scanned, scene and take information, camera report, and lab roll number. It is usually generated from the VFX Editor’s database.

Some shots will be built entirely in the computer (called virtual shots or all-CG shots) and, therefore, won’t have any elements or plates to be scanned. In those cases, the only information on the count sheet might be the length of the shot and any specific notes from the Director or VFX Supervisor. In cases where it may not be clear what needs to be done with a shot, it is essential to highlight that information on the count sheet. Count sheets may be delivered either as part of a database file, as printed documents, or both. The VFX Editor should check with the visual effects facility on what they prefer.

The second requirement for a visual effects facility is an exact copy of the sequence being turned over. This is achieved with a “consolidation” from the picture editor’s editing system that copies all media files associated with the sequence, including picture, sound, and visual effects renders. With these files, the visual effects facility can look at the cut exactly as it is seen by the picture editor. This allows the facility’s visual effects editor access to all metadata (numbers) found on the count sheets that is needed when requesting scans from the cutting room. The consolidation also allows the visual effects facility’s VFX Editor to deconstruct the precomps created by the cutting room’s VFX Editor, thereby, in turn, allowing him or her to pass the line-up information to the artists. If the visual effects facility is small and does not have a VFX Editor or an editing system, then a consolidation is not useful. In those cases, the precomp line-up information must be included in the count sheets.

The Visual Effects Facility’s VFX Editor

Once a sequence has been turned over, the job of the facility’s VFX Editor really begins. After receiving the count sheets and consolidation from the client, the VFX Editor must distribute all pertinent information about the shots to the show’s producers and artists. This usually begins by entering shot information from the count sheets into the facility’s database system. (How much information a production needs is company specific.)

If the visual effects shots have not already been titled by the production’s VFX Editor, the facility’s VFX Editor will need to do that so crew members know what shots in the sequence correspond to which count sheets. QuickTime files of the sequences are usually put online so the artists working on the show may reference them. If the show is heavy with animation requiring lip sync, audio files for each shot will also need to be created and put online for animators to reference for lip sync.

Once scans start arriving from the scanning facility, the VFX Editor must import each element into the editing system and check it against the cut to ensure that the correct number of frames was scanned and delivered. If a problem is discovered with any of the scans, the production’s VFX Editor should be notified so that the problem can be corrected as soon as possible.
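The frame-count check described here can be sketched in a few lines of Python. This is an illustration only: the function name `check_scan` is hypothetical, and it assumes the delivered frame numbers have already been read from the scanned files by some other means.

```python
def check_scan(expected_first, expected_last, delivered_frames):
    """Compare delivered frame numbers against the inclusive range requested.

    Returns (missing, extra): frames that were requested but not delivered,
    and frames that were delivered but not requested.
    """
    expected = set(range(expected_first, expected_last + 1))
    delivered = set(delivered_frames)
    missing = sorted(expected - delivered)
    extra = sorted(delivered - expected)
    return missing, extra


# Hypothetical delivery: frames 1-125 requested, frame 60 never arrived.
delivered = [f for f in range(1, 126) if f != 60]
missing, extra = check_scan(1, 125, delivered)
print("missing:", missing, "extra:", extra)
```

An empty result on both sides only confirms the count. The scan still has to be viewed against the cut to confirm it is the right piece of action.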

Line-up information from the precomps may be the most complicated to pass on to the visual effects crew. This is because the metadata found in the timeline contains numbers that mean nothing to most other crew members: timecode, key numbers, and scene and take numbers. It is the job of the VFX Editor to translate this information into numbers that the visual effects artists and production personnel can use. The best way to do this is to re-create the precomp using the scanned files brought into the project. Basically, layer the elements as they appear in the cut, and reapply all of the effects from the precomp to the scanned elements. Once done, it becomes much easier to tell the artists where each element appears in the shot and when it enters and exits the frame, because the scanned elements are a common point of reference for all involved (i.e., they contain the same set of numbers). This is done in terms of frames, not time. For example, let’s return to the battlefield shot described earlier: Frame 7 of a pyro element explodes on frame 25 of the shot. The line-up note for this element may look like this:

BF010.fg1.1-52.cin

Element frames 7–52 sync up with comp frames 25–70.

(Please scale down to 70% and repo screen right to match reference movie.)
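The arithmetic behind a line-up note like this one is a constant frame offset between the element and the comp. A minimal Python sketch follows. The function names are hypothetical, and it assumes the element is not retimed, since a retimed element has no single offset.

```python
def comp_frame(element_frame, element_sync, comp_sync):
    """Map an element frame to a comp frame, given one known sync pair."""
    return comp_sync + (element_frame - element_sync)


def lineup_note(element_first, element_last, comp_first):
    """Build the sync sentence for an element that runs without retiming."""
    comp_last = comp_frame(element_last, element_first, comp_first)
    return (
        f"Element frames {element_first}-{element_last} "
        f"sync up with comp frames {comp_first}-{comp_last}."
    )


# Frame 7 of the pyro element lands on frame 25 of the shot,
# and the element runs through its last frame, 52.
print(lineup_note(7, 52, 25))
```

With the numbers from the battlefield example, the offset is 18 frames, so element frame 52 lands on comp frame 70.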

Matters can get complicated when elements need retiming using curve ramps, so using precise language in the instructions is essential. Talking with artists directly about how they prefer to have instructions worded will also help avoid miscommunication. How line-up information is made available to the artists differs with each visual effects facility. It is best to have a database track this information, or at least a spreadsheet that the artist can view easily.

Once all of the information has been distributed to the appropriate people on a show, work on the shots can begin. As different departments (i.e., animation or compositing) run takes,42 the VFX Editor imports them (from digital files) into their editing system so they may be cut into the appropriate sequence(s) and, if need be, exported as an updated QuickTime file for review. This is the dailies process for the VFX Editor and allows artists and supervisors to see their work in context with the rest of the sequence. It is important to make sure that all of the shots received are the correct length and that specific line-up info has been followed correctly. If something is wrong, the artist needs to be informed as soon as possible.

Sometimes the facility’s VFX Editor needs to precomp shots as well. A visual effects facility is often responsible for shooting elements on a stage (miniature elements like ships or planes, debris and smoke), which need to be incorporated into a shot. The VFX Editor will go through the same process as the production’s VFX Editor in determining, with the VFX Supervisor, which takes should be used and how they will interact with the other elements in the shot. For scanning, the VFX Editor may have a counterpart in the production’s cutting room order the scans, or the VFX Editor may communicate with the scanning facility directly. As always, all appropriate line-up information must be communicated with the artists.

Figure 6.45 4-perf 35mm versus 8-perf 35mm film formats. (Image courtesy of David Tanaka.)

When preparing scan requests the VFX Editor must make sure that all key numbers are being tracked correctly in the editing program in case a nonstandard film format was used to shoot the elements. For example, the standard film format used today is referred to as 4-perf 35mm. This means that there are four perforations per frame of film (16 frames per foot) that run vertically (top to bottom) through a projector. However, some films shoot VistaVision, which is 35mm film but with eight perforations per frame (8 frames per foot) that run horizontally (left to right) through a projector. As a result, if the VFX Editor orders a scan from an 8-perf element using 4-perf key numbers, the scan will be wrong.
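The frames-per-foot figures above both follow from 35mm film carrying 64 perforations per foot (4 × 16 = 8 × 8 = 64). The Python sketch below shows why the same frame count corresponds to different footage in the two formats. The function names are hypothetical, and the sketch handles only formats whose perforation count divides 64 evenly.

```python
PERFS_PER_FOOT = 64  # 35mm film: 4 perfs x 16 frames, or 8 perfs x 8 frames


def frames_per_foot(perfs_per_frame):
    """Whole frames per foot for a format with the given perfs per frame."""
    if perfs_per_frame <= 0 or PERFS_PER_FOOT % perfs_per_frame != 0:
        raise ValueError("this sketch only handles formats that divide 64 evenly")
    return PERFS_PER_FOOT // perfs_per_frame


def to_feet_and_frames(frame_count, perfs_per_frame):
    """Convert a frame count to (feet, leftover frames) for the format."""
    return divmod(frame_count, frames_per_foot(perfs_per_frame))


for perfs in (4, 8):
    feet, frames = to_feet_and_frames(100, perfs)
    print(f"{perfs}-perf: 100 frames = {feet} ft + {frames} frames")
```

One hundred frames occupy 6 feet 4 frames of 4-perf film but 12 feet 4 frames of 8-perf film, so a footage-based count computed with the wrong format points at the wrong piece of negative.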

Periodically, shots need to be sent to the cutting room for the production’s VFX Editor to cut into the movie for the Director to review and give feedback. These files are usually delivered as QuickTime movies. It is the VFX Supervisor’s responsibility to determine what files should be delivered, and it is the facility’s VFX Editor who must keep track of what versions, or takes, of shots are sent and when. If there is a problem with a shot, such as an incorrect length, that information must be communicated to the production’s VFX Editor.

While working with film is becoming rarer because of the dominance of digital editing and compositing, it is still necessary. Some directors prefer to view visual effects shots in a theater on film rather than from HD tape. This is because film is the medium on which most people will watch a movie theatrically, making some directors feel that it is the best context in which to evaluate the quality of the work. Therefore, instead of sending down digital files, the VFX Editor may send film rolls that need to be prepared for projection. As such, it is important for all VFX Editors (whether working in the production’s cutting room or visual effects facility) to understand how to use a traditional flatbed,43 rewinds, and a sync block.44

As the Shot Changes

Even though a sequence may be far enough along in the editing process for delivery to a visual effects facility, the cut is by no means locked.45 The Director and picture editor continue cutting the film even as visual effects shots are being worked on and delivered. Because the cut is always fluid, many shots will change in some way, requiring that updated information be sent to the visual effects facility. Sometimes a change can be as simple as a shot being shortened by several frames at the head or tail. Other times a shot may be extended or new elements added, requiring new scans. Shots could also be omitted and new ones added. In all of these cases, new count sheets, change notes (paperwork describing the changes), and new consolidated media need to be sent to the visual effects facility. It is essentially the same process as a turnover, only more focused on the changes.

Depending on the Director’s editing style, changes may come weekly or even daily. As a result the VFX Editor must stay on top of cut changes and ensure that the visual effects facilities are updated with the latest information. While picture editors try to inform the VFX Editor of any changes, they are often too busy editing the film to keep track of everything. Therefore, the only way to know for sure what has been changed in a sequence is for the production’s VFX Editor to go through the updated sequence, shot by shot, and compare it against the previous cut. As a double check, the facility’s VFX Editor should do the same when an updated cut is received just to make sure that no changes have inadvertently slipped through the cracks.
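The shot-by-shot comparison can be partly automated. The Python sketch below assumes each cut has been reduced to a mapping from shot name to length in frames. That representation and the name `compare_cuts` are assumptions for illustration, and the shot names and lengths are made up.

```python
def compare_cuts(previous, updated):
    """Compare two cuts given as {shot_name: length_in_frames} mappings.

    Returns (added, omitted, changed), where changed maps a shot name to
    its (old_length, new_length) pair.
    """
    added = sorted(updated.keys() - previous.keys())
    omitted = sorted(previous.keys() - updated.keys())
    changed = {
        shot: (previous[shot], updated[shot])
        for shot in sorted(previous.keys() & updated.keys())
        if previous[shot] != updated[shot]
    }
    return added, omitted, changed


# Hypothetical cuts of the battlefield sequence.
previous_cut = {"BF010": 125, "BF020": 48, "BF030": 90}
updated_cut = {"BF010": 119, "BF030": 90, "BF040": 64}

added, omitted, changed = compare_cuts(previous_cut, updated_cut)
print("added:", added)
print("omitted:", omitted)
print("changed:", changed)
```

A comparison on length alone will not catch a shot that was slipped without changing duration or that gained a new element, so it supplements the manual pass rather than replacing it.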

It is not only the production’s VFX Editor who has to communicate changes. Oftentimes the visual effects facility is also making changes that need to be communicated back to the cutting room. If a sequence requires a lot of animation or has many all-CG shots, the VFX Supervisor may suggest length changes to better accommodate the wishes of the Director. For example, suppose a 50-frame shot was turned over to the visual effects facility with an animatic46 of a CG character reacting to something off screen. Once the actual animated character is added to the shot, the animation supervisor realizes that 50 frames are not enough time for the reaction the Director requested. In this case the shot would have to be extended to accommodate the action. In cases like these, the facility’s VFX Editor must inform the production’s VFX Editor of the change needed and why. The production’s VFX Editor then must inform the Director and/or the picture editor to get approval.

Wrapping It Up

As the show approaches completion, much of the production’s VFX Editor’s job is dealing with final renders (called finals47) from the visual effects facility. This can be made more challenging if the Director is still making cut changes. On large shows the facility’s VFX Editor may send dozens of shots to the cutting room every day in order to finish the show on time. The VFX Editor in the cutting room cuts these shots into the movie so the Director and the picture editor can evaluate them in context. However, the Director will not be finaling shots in this context because the files are compressed and lack detail. Instead, the Director will look at the shots in a theater, projected from film or an HD format.

Oftentimes the Director sends the shots back to the visual effects facility for additional fixes or changes. It is usually the VFX Coordinators, at both the visual effects facility and the cutting room, who track which shots are finaled and which still require work. But the facility’s VFX Editor still needs to keep track of everything sent to the cutting room and when.

All during this process the production’s VFX Editor is communicating with the digital intermediate (DI) facility to ensure that they have accurate edit decision lists (EDLs) and corresponding film negative from which to conform the movie. As the cut changes, or new shots are delivered, new EDLs may be required.

The frantic and harried nature of the show’s final weeks can lead to mistakes and confusion. Both VFX Editors, with help from the VFX Producer(s), VFX Coordinator(s), and VFX Supervisor(s), need to make sure the DI facility is working with the correct finals to avoid problems during the conform process. Shot lengths are especially important to check during the final weeks of a show. If a short shot is sent to the DI facility, an uncomfortable and costly delay may result.

Conclusion

Over the years a VFX Editor’s job, like many other jobs in the visual effects film industry, has changed, evolving from handling film on flatbeds to punching pixels on a computer. Yet through all the changes, the core responsibility of disseminating vital editorial information regarding visual effects shots to all necessary parties has remained the same.

Not long ago a movie with 200 shots was considered large. Now a large movie exceeds 1000 shots, which in turn translates into a great many precomposites, scans, count sheets, consolidations, and change notes. In other words, a lot of numbers! It is the commitment to keeping these numbers accurate and understood by all involved that has remained a constant in the VFX Editor’s contribution to the art of visual effects. As visual effects movies continue to grow in size, the role of the VFX Editor will continue to grow with them and become all the more essential to the filmmaking process.

COMMUNICATION WITH ARTISTS

Eric Durst

Starting

Visual effects in motion pictures are often the combined work of hundreds of people. The responsibility of the VFX Supervisor is to guide and lead the communication between all of these artists, focusing on a single goal: the visual effects blending seamlessly into the final film.

Because visual effects is a subjective art form, combining aesthetics with large doses of science and mathematics, there is no exact formula. There are, however, certain principles that one can look to for guidance in order to succeed in this process. Regardless of the number of artists involved, whether it is one individual or a massive team, these methods of navigation are similar.

People trying to describe how they want visual effects to look often use words and phrases like “magical,” “ethereal,” and “something that we’ve never seen before.” But in truth, these words and phrases do not represent how things look; they represent how one feels once one has seen them. When guiding artists, it is essential to define the difference between what it takes to manufacture the image and the feeling the image emotionally projects.

A visual effect, regardless of how fanciful or “otherworldly” it may appear, is always made up of specific, definable, and tangible elements from the real world. Color, light, and movement are what the film displays and this is the palette the artist has to work with. So the building blocks are specific descriptions that show the range of colors, the properties of light and how it reacts within the image, and the way these elements move through the frame.

How the viewer responds to this color, light, and movement is an emotional response to the shot and this is independent from the building blocks used to construct the images. This response is what gives the shot meaning and understanding, and the impact these elements produce drives one’s decisions and judgments about what does and does not work.

For every shot and sequence that has visual effects, one must have answers to three basic questions: What? Why? and How? These answers give a context for the visual effects, and a supervisor uses this context to guide all the artists working on the project.

What?: What is the effect being created? What does it look like? What are the attributes that make it up, and what are all the specific details about the visuals that are being constructed? What are the emotional beats of the shot? What is the feeling that one has when one sees it?

Why?: Why does this visual effect exist? Why is it important in the story? Is it a transition, punctuation, or a seamless shot that goes by without calling any attention to itself?

How?: How can it be achieved? This involves all of the technical and logistical aspects of visual effects pre-production, production, and post-production. This includes how to design the shots and sequences, the principal and visual effects photography, and any elements and source materials that are needed. This also includes all camera equipment, software, workstations, personnel, pipelines, and finances that are required to produce the visual effects shots.

Based on the complexity of the visual effect shots, answers to these questions range from very simple decisions to something short of a Ph.D. thesis.

Working with Teams

Communicating with a team over a period of time can be a little like the Telephone Game, where a message is passed around a circle of people, one by one, only to arrive at the end as a distorted version of the original. When passing information through a group of people, it is important to do so in a way that is clear and understandable, so the message remains intact. However, communication is often much harder than duplicating a sentence correctly because visual effects must carry both dramatic impact and visual perfection.

Consider this example: A statement, “THERE’S A FIRE IN THE BACKYARD!!!!,” is passed around a circle of people, delivered with a sense of passion and urgency. This travels around the group and the last person is told, “There’s a fire in the backyard,” delivered in a complete monotone. This is mechanically accurate, but because the delivery is flat and nonemotional, it is not a completely acceptable interpretation of the original statement. In visual effects terms, the shot is technically perfect, but does not work because it lacks the intended impact.

The game is played again, but this time the message at the end of the circle is “THE BACK OF THE HOUSE IS ABLAZE! WE MUST GET OUT IMMEDIATELY!,” delivered with the original sense of passion and urgency. Even though the words are different, the message is more thoroughly understood. In visual effects terms, the shot may not look exactly like the original design, but it feels right so it is successful.

The point is that it is essential to communicate both the visual and emotional parts to get a result that truly works. The supervisor needs to understand how to read between the lines and have great insight into what is needed and expected from the shots being delivered.

Reference and Perspective

To give the clearest possible direction to the artists constructing the shots, many resources need to be brought to the table:

1. Gather and review specific images and reference material that visually describe the shot(s).

2. Gather and review specific motion reference material that describes the kinetic feel of the shot(s).

3. Review all production artwork, pre-production templates, and animatics.

4. Make sure the artists understand the context of their shot(s) by reviewing edited sequences, especially showing the shots surrounding the ones they are working on.

5. Review the expectations of each shot and the degree of detail that is required.

6. Describe the properties of the objects in the frame:

• Are they transparent, translucent, or opaque?

• How visible—do they stand out or do they recede?

• How does light interact—do they emit, absorb, or reflect light?

• What materials are they similar to (plastic, metal, glass, crystal, gas, etc.)?

7. Describe the emotional feeling of the shot in terms of volume. Use phrases that everyone understands, outside of technical or artistic language, to express the visual effect’s impact.

• How hot or cold is this shot?

• Does the visual effect whisper, speak normally, shout, or scream?

• How bright or dark is it, going from total blackness (0) to the sun (10)?

8. Describe the intent of the visual effects in the sequence.

• Are the visual effects used to punctuate a sequence?

• Are the visual effects a transition?

• Are the visual effects introducing a new idea or concept?

9. Separate technical and artistic considerations to gain an understanding from each perspective.

• Describe the shot from the artistic viewpoint (without any technical considerations).

• Become the disciplined technician and figure out how to accomplish what the artist needs for the shot to work.

• Become the problem solver and create the best result knowing all sides.

10. See the work with fresh eyes, as if one were a member of the audience.

• View shots from how they look and feel, not from how much money or effort was required to create them.

11. Understand all of the visual firepower that can be utilized:

• Software—what software tools are available?

• Machine power—what hardware resources are needed to complete the shot(s)?

• Team—what individual artists are ideal for each shot/sequence?

• Experience—how much artist experience is needed for each shot?

• Time—what is the time needed and allocated for each shot?

• Finances—what are the financial resources for the project?

Shot Production

Filmmaking is evolutionary, and changes and adjustments often occur throughout the post-production process that alter the original plan. The artists follow the lead of the VFX Supervisor, so during this phase it is essential to maintain a clear perspective at all times to keep everyone focused in the right direction.

The VFX Supervisor heads the shot review process, where each shot is analyzed and critiqued on an incremental basis as it progresses to its final state. During this process, his or her thoughts are communicated, as well as those of the director and others, in the clearest manner possible. This is most often accomplished with a phone/video conference call or a face-to-face meeting with the artists.

These reviews also include written, audio, or visual notes to further specify and clarify particular points, ensuring that everyone fully understands one another. A dated record of all comments for each shot should be maintained throughout the production. Because shots can take weeks or months to produce, this history of information can be enormously helpful to everyone in the production pipeline.

Communicating with Artists in Other Departments

Visual effects often join with other departments to help expand their work into the digital realm. Communication between the VFX Supervisor and the artists in these departments is important, so there is a direct connection between the visual effects and live-action areas.

Digital Sets, Environments, and Extensions

When new environments are needed, whether they are sets or locations, visual effects are often used to create them digitally. In live-action production this is the domain of the Production Designer, so good communication between the art department and visual effects is essential to bring the physical and digital worlds together successfully.

Figure 6.46 Visual notes from the film Knowing (2009) with review comments. (Image courtesy of Buf and Summit Entertainment, LLC.)

Digital Actors

Actors and stunt actors are often enhanced or created digitally through the visual effects process, whether with full figures or partial figures (face replacement, etc.). The use of digital techniques is frequently underestimated during live-action photography. The VFX Supervisor should make sure that all needed resources are available, so if digital replacements are required later in the post-production process, the visual effects team is prepared. To ensure this, make certain that extensive photographic reference of the actors, their costumes, and any relevant performance material has been collected. Reference of extras for crowd enhancement is also extremely useful. Communication and coordination with the assistant directors, stunt coordinators, costume designers, and actors are essential for this to go smoothly.

Digital Cinematography

Visual effects shots that require digital lighting and camera moves are extensions of the roles of the Director of Photography and camera operator. Maintaining consistency and visual style in digital shots is enhanced greatly by communication with the DP and camera department. Understanding the look and feel of the original live-action photography goes a long way toward helping the visual effects blend in with the live-action footage.

Digital Sequences

Visual effects is often called on to perform the role of a digital 2nd unit. This is parallel to the work performed by a live-action 2nd unit. By having the ability to generate total environments as well as digital actors, visual effects often creates complete sequences for the film, along with digital shots that cut within the live action. These shots also extend the special effects department’s role by expanding, or digitally manipulating and enhancing, practical effects.

Completion

The visual effects in a film represent the efforts of many artists and technicians who have worked together to create images that form a unified vision. This is a challenging task. To succeed requires great communication skills, the ability to motivate and guide a wide variety of personalities, and a high degree of patience and persistence among all parties. The end result, the reward of seeing the final visual effects shots cut into the completed film, is a spectacular thrill that continues to inspire visual effects artists worldwide.

THE HISTORY OF COMPOSITING

Jon Alexander

The History of Optical Technique

In the 1920s simple optical printers were made to duplicate exposed movie film. They consisted of a camera with raw film stock and a projector with previously exposed film that needed to be duplicated. Using machine tool technology, a camera, projector, and lamp house were placed on a lathe bed with mounting platforms that allowed for precision alignment. The camera was focused on the movement of the projector that held the exposed film.

In the 1930s at RKO Studios, Linwood Dunn used traveling mattes to create some of the earliest motion picture special effects. Examples of those effects were basic wipes used as transitions from one scene to another. Using a second projector on the printer to hold traveling mattes, he could expose part of the raw stock film frame, roll it back to a starting frame, and then put the inverse of the matte in the second projector and expose the raw stock a second time without double exposing the originally exposed portion of the film. Although the black-and-white matte film was not totally opaque, it held back enough light that the raw stock would not get any exposure.

In 1940 Larry Butler expanded on this basic premise of holding out one part of a film frame from exposure with a second opaque piece of film. He won an Academy Award for inventing the blue-screen technique to make traveling mattes of moving objects photochemically. This technique would pretty much be the only way to efficiently composite moving objects into film scenes for the next 50 years. It was not until the early 1990s that it made economic sense to move away from film compositing to digital compositing on computers.

Traveling Matte Technique and the Digital Age

In the simplest film composite, one photographed object is cut out so as not to double expose the film and is put over a different background. To cut out an object on film, the object needs to be photographed in such a way that it can be separated from its original background. To do this, the foreground action phase is photographed against a plain backing of which there are various types and colors and methods of illumination. The goal is to create a duplicate negative with a composite of the foreground object over another piece of film of the background. This requires a traveling matte that is a silhouette hold-out that can change from frame to frame with the action of the foreground object. Then the foreground object can be put over the new background without double exposing that area.
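The two exposures described above can be sketched per pixel in a few lines of Python. This is only an illustration under simplifying assumptions: the function name `composite_pixel` is hypothetical, color values are idealized linear numbers between 0 and 1, and the matte is treated as perfectly registered, which a real optical printer never quite achieved.

```python
def composite_pixel(fg, bg, matte):
    """Combine one foreground and one background pixel through a matte.

    fg, bg: (r, g, b) tuples with values from 0.0 to 1.0.
    matte:  0.0 to 1.0, where 1.0 means the foreground fully covers the pixel.
    """
    # First exposure: the foreground, with everything outside it held back.
    first_pass = tuple(c * matte for c in fg)
    # Second exposure: the background through the inverse (hold-out) matte,
    # so the foreground area is not exposed a second time.
    second_pass = tuple(c * (1.0 - matte) for c in bg)
    # The duplicate negative accumulates both exposures.
    return tuple(a + b for a, b in zip(first_pass, second_pass))
```

Where the matte is 1.0 only the foreground reaches the new negative, where it is 0.0 only the background does, and intermediate values along a soft edge blend the two.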

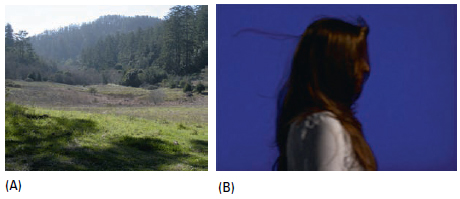

Figures 6.47, 6.48, and 6.49 show images used in making a composite. This is not a very good composite. The foreground character does not integrate well with the background. The matte is a bit dense and the beauty lighting of the model does not match the background. One doesn’t have to be an expert to recognize a bad composite. A perfect composite is one that gives no clue to its separate origins.

Figure 6.47 (A) Background. (B) Blue screen. (Image courtesy of Jon Alexander.)

Figure 6.48 (A) Extraction. (B) Matte. (Image courtesy of Jon Alexander.)

Figure 6.49 Composite. (Image courtesy of Jon Alexander.)

The basic technique for shooting images with the objective of making traveling mattes from the negative for computer graphics compositing is the same as it was for making mattes for film compositing. The nature of computer graphics, however, is such that there is much more latitude in what is acceptable exposure of the original negative.

Making precise mattes with film requires a very specific and narrow range of the color spectrum. If one looks at a perfect blue screen through a corresponding red glass, no color will be seen. The eye (or the camera) just sees black. If that blue does not exist anywhere else in front of the camera, then the rest of the film will get some bit of exposure from which a matte can be made.

It is pretty easy to test for the perfect blue photochemically. A series of exposure wedges is run, and the processed negative is read with a densitometer. Kodak published AIMs48 for their films. Kodak’s AIMs were suggested values read from the densitometer that, depending on the film stock, would give the maximum separation of the blue screen in order to make hold-out mattes. Through trial and error one can come up with the best negative film exposure AIMs to get the cleanest, most precise mattes for the filters. The ideal negative AIMs for digital compositing are still the same as they were with film. The closer to the AIMs, the easier it is to get a perfect matte result. Deviations to a certain extent will make pulling the matte more difficult, but not necessarily impossible as it was in film compositing. Optical compositing techniques might be able to get a reasonable matte even if the exposure is one-half stop off on film, but values beyond that just can’t get a clean edge. However, the complex algorithms of digital compositing allow for much more latitude.

Once the mattes are extracted, the second and perhaps more important step is replacing the color of the matte screen. Better blending is possible in computer graphics compositing than optical compositing because of the ability to replace the color regionally and to choose the hue of the replacement color. With good mattes and replacement color, making the actual composite is a simple final step.

The advent of computer graphics compositing has also made it possible to use colors other than blue for the matte screen. In older types of films the emulsion layer that was sensitive to blue light always had the biggest grain because silver halide reacts the least to those wavelengths. The larger film grain allowed for a balanced response to the emulsion layers that were sensitive to red and green light. Skin tones also have little blue in them, so if a blue replacement is performed to get rid of the screen those tones will be affected to a lesser extent than a green replacement will. In addition, the blue replacement will have less apparent film grain. Since it is easier now to isolate edges in digital compositing there is more latitude in choosing a background screen color. However, the color replacement of the edge still needs to be dealt with.

Historical Notes on Blue Screen

A short review of some of the requirements for shooting with optical compositing in mind should be of value since these are basically using the same techniques as digital compositing. This will also give an opportunity to comment on requirements that used to be mandatory and are now just suggested. Most of the current confusion in shooting stems from misunderstandings about what is possible today versus what was possible yesterday.

The original traveling-matte system was based on consistency, quality control, and the limits of the optical-photochemical process and dictated by the limitations of three little square pieces of glass in the filter set of the optical printer. These red, green, and blue filters were used to make the bluescreen extractions, mattes, and separations from the original negatives. The resulting elements were used to make the composites.

The biggest problem with the optical-photochemical compositing process was that one fit for the whole matte was necessary. There was really no practical way to make different fits for different parts of the object being matted (although this is something that is done on nearly every digital extraction shot today). Because the matte needed to fit as one, that meant that the colored screen had to have a very consistent exposure over the width of the screen. In addition to the requirement of being evenly lit, a very narrow range of exposure was allowable on the original negative for successfully pulling a matte. Variations in the exposure would mean variations in the density of the mattes and thus dark or light fringing in the composite.

Not only was a good original negative needed, but there also had to be incredible care taken while making the elements, so that all wedge picks49 would match into the final composite. Both wedge and element obviously have to go through chemical development, often several days apart. If the processing were not in control during developing, the elements would have to be made again from the start. Black-and-white film processing has greater tolerance to mixing and temperature variations than color film in general. The tricky part was the close tolerances needed to develop the various gammas of the black-and-white elements. Lack of control in developing could come from any number of reasons. The chemical mixing needs to be extremely consistent. Care needs to be taken not to use the chemicals too long before refreshing. Controlled bath temperature can be tough if the room temperature fluctuates too much. The mattes could not be easily tweaked with additional rotoscoped elements like they can today.

In the years before compositing on computers, most composites were done on optical printers. The exception at ILM would be shots done completely in camera in the matte painting department. In the optical department at ILM, the vast majority of the original elements for the composites were made on the Anderson Printer in a VistaVision format.50

The majority of the final composites were delivered as normal 4-perf 35mm images. Working in the larger VistaVision format helped to compensate for the natural loss of quality in film as it is duplicated. Manipulation of the images was completely photochemical and optical as opposed to mathematical as it is in the computer graphic world. (The math of course is based on the same physical laws of light that dictated the optical printing process.)

The goal in optical compositing was for one matte to work overall to isolate the object in front of the blue screen. This way the optical camera operator could make one fit of the mattes. This matte fit was a visual adjustment and its repetition over a series of takes was based on the skill of the operator to exactly repeat the same placement with the same elements. If the element was damaged it would have to be remade and a different fit would be necessary. This refitting each time the comp is done no longer happens. In computer graphics, once the matte is blended successfully, a compositing script exists that will exactly repeat the line-up. That precise refitting on the optical printer was up to the camera operator.

The other aspect of compositing an element shot in front of a blue screen is replacing the blue of the screen. Ultramarine blue is a natural pigment historically used to give the richest blues to paintings. Because it can eliminate the yellow of white light, it is ideally suited for bluescreen work. The pigment choice has been refined somewhat over the years depending on the medium with which it is to be used. More common terms today are chroma or digital blue. Ultramarine blue was a good choice for pre-Vision films. A considerable amount of light could be thrown on an actor or model without washing out the rich blue hue.

In optical compositing replacing the blue was accomplished by rephotographing the image through a red filter, which would, in a perfect world, make all the blue disappear into black and thus not be carried to the new duplicate negative. Assuming the elements were made successfully, the composite was then in the hands of the optical camera operator. The composite would have to run at least several times. Each time the operator had to line up the camera and elements exactly the same way, and then hope the lab processing was consistent. Each composite was created on film and projected because there was no other way to preview the composite. Today, composites are previewed on computers or servers.

Ideally, a compositor would like to have a bluescreen negative with as much information in it as possible for the sake of pulling a matte with as many details as available. Using the color-difference matting technique means the optical printer compositor will be pulling the mattes from a protection interpositive51 of the original negative. Blue light is the hardest part of the visible spectrum to capture on film and as such the blue record52 silver halide crystals53 are proportionally bigger than those found in the green or red records. The blue resolving power of the film is never as good as the green or red records. Thus, there will not be as much detail in the blue record as in the red or green. In any color-difference matting system, the underlying premise is that the color of the screen that is being used is thrown out. With the exception of rich blues or violet little is lost. Furthermore, little is lost chromatically by throwing out the blue channel and a greater detail of the red and green channels is retained.

The perfect blue color for the screen lies at the center of the blue region of the film spectrum. (The blue region runs between 400 and 500 millimicrons, green 500 to 600, and red 600 to 700.) The peak transmission of a blue screen lies at 450 millimicrons. For practical purposes this color is referred to as ultramarine blue. Because ultraviolet light, which contaminates the blue record, has a shorter wavelength than visible light, the photographed matte image will be slightly smaller than the color action image. Thus an enlarging compensation must be made in the printing steps unless corrected lenses are used on the camera to offset image reduction. This aberration often shows up in older lenses whose coatings have deteriorated. But, unlike in the old optical compositing days, this chromatic aberration is easy to fix in computer graphics compositing. A histogram of an image with ultramarine blue shows almost no red or green. In the mathematical world of computer graphics, it is thus easy to isolate and eliminate this color. If there are no transparent objects in the scene and no great amount of motion blur, the threshold of recognition of the color-difference matte can be raised to reproduce medium blues and violets while still maintaining a major discrimination against the blue backing. This means pure blue is not necessary to pull a satisfactory matte digitally. That latitude is very narrow when extracting blue photochemically.
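The effect of raising the threshold can be illustrated with a small Python sketch. It assumes a common digital formulation of the color-difference matte (blue minus the larger of red and green). The function name `backing_matte` and its `threshold` parameter are hypothetical and do not come from any particular compositing package.

```python
def backing_matte(r, g, b, threshold=0.0):
    """Estimate how much of a pixel is blue backing (1.0) versus subject (0.0).

    r, g, b:   channel values from 0.0 to 1.0.
    threshold: blue excess that is still treated as subject; must be below 1.0.
    """
    # Color difference: how far blue exceeds the other two channels.
    difference = b - max(r, g)
    # At or below the threshold, the pixel is kept entirely as subject.
    if difference <= threshold:
        return 0.0
    # Above it, rescale so that a pure blue backing still reads as 1.0.
    return min(1.0, (difference - threshold) / (1.0 - threshold))
```

With the default threshold a medium blue such as `(0.3, 0.3, 0.6)` is partly treated as backing. With `threshold=0.4` it is kept as subject, while a pure blue backing still returns 1.0. The cost is that genuinely soft values, such as glass or motion blur, are clipped as well, which is why the text limits the trick to scenes without them.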

Green screen works the same way as far as color-difference matting (with the obvious subtraction of green rather than blue). The problem with green screen, especially with anything other than fine-grained film, is that by subtracting the green record an incredible amount of detail around the edges is lost. There is also the problem that the green record lies physically below the blue layer and the yellow filter layer. Because of this it is very common to see halation in the optical composite from a greenscreen extraction.

A densitometer can measure how far off the blue/greenscreen AIM54 on the negative is. Following are the neg AIMs with the less than sign (<) indicating that the goal is to get that AIM below the number following it. For example, at ILM’s optical department the bluescreen AIM was “Red 20, Green less than 100, and Blue 235.” The AIMs can vary per film stock and with the red, green, and blue filters used to pull the separations. These ILM values have been adapted from Kodak’s suggestions. They worked with ILM’s particular filters and processing techniques. They would be a good place to start but should certainly be adapted to give the best results for specific red, green, and blue filters, as well as the lab where the film will be processed. Following the AIMs is a little chart that shows how far off in stops the blue or green screen would be based on the densitometer readings.

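Bluescreen Neg AIM (red, green, blue): 20, <100, 235

Greenscreen Neg AIM (red, green, blue): 20, 180, <145

| Green screen: red | Green screen: green | Green screen: blue | Exposure | Blue screen: red | Blue screen: green | Blue screen: blue |
| --- | --- | --- | --- | --- | --- | --- |
| 20 | 120 | 85 | –3 stops | 20 | 40 | 175 |
| 40 | 140 | 105 | –2 stops | 20 | 60 | 195 |
| 60 | 160 | 125 | –1 stop | 20 | 80 | 215 |
| 80 | 180 | <145 | AIM | 20 | <100 | 235 |
| 100 | 200 | 165 | +1 stop | 40 | 120 | 255 |
| 120 | 220 | 185 | +2 stops | 60 | 140 | 275 |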
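The chart can also be read as simple arithmetic. In the sketch below, the 20-unit step per stop is inferred from the spacing between adjacent rows of the chart and is an assumption, as are the names `SCREEN_AIM`, `UNITS_PER_STOP`, and `stops_off`. Only the screen's own color channel is considered (blue for blue screen, green for green screen).

```python
# AIM reading on the screen's own color channel, taken from the chart.
SCREEN_AIM = {"blue": 235, "green": 180}

# Assumption: adjacent rows of the chart differ by 20 densitometer units.
UNITS_PER_STOP = 20


def stops_off(screen, reading):
    """Return how many stops a densitometer reading is from the AIM.

    screen:  "blue" or "green".
    reading: densitometer value for that screen's own color channel.
    Negative results mean underexposure, positive results overexposure.
    """
    return (reading - SCREEN_AIM[screen]) / UNITS_PER_STOP
```

For example, `stops_off("blue", 195)` gives -2.0 and `stops_off("green", 200)` gives 1.0, matching the chart rows. As the text notes, the AIMs vary with film stock, filters, and lab, so these constants are a starting point and should be adapted.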

The old rule of thumb for shooting in front of a self-illuminated blue screen55 was that the subject should be shot about 25 feet in front of it to prevent, or at least reduce to a minimum, the blue spill that occurs if the subject is closer. Self-illuminated blue screens are wonderful for the compositor because they tend to be the most even across the field. Of course, this implies availability and by the nature of how they are built, the field of view can be fairly limited. The blue spill is not nearly the problem today that it was in the photochemical compositing world because now a large inner matte can be used to isolate just the edge of the object for the mattes. The color difference of the blue spill to the screen color tends to be severe enough to allow a successful edge blend matte between the spill area and the edge.

In motion control work where there is the luxury of repeating the action, it is always helpful to the compositor to use strong yellow light on the models during the matte pass in order to reduce the amount of blue spill. Since this pass is only shot to make the matte and not to color or shade the model, a strong yellow light will not hurt anything.

It has become more popular to shoot with a frontlit screen (blue or green) during the past few years. As far as compositing goes, there is no advantage to this other than the reduction of potential blue spill. The most important thing about the frontlit screens is that they be consistent side to side. If they are not, problems could occur. For example, assume a screen is uneven; that is, it has seams or is fluttering in the wind. Depending on the object being matted, some allowances are possible. If it is impossible (or not economical) to have a flat consistent screen, then a bit of common sense should rule. For objects with no solid shape (smoke or dust, for example), a screen with a wavy blue would be better than one with seams because it is nearly impossible to separate the seam without leaving some remnant. Conversely, a seam behind a model is easier to deal with because the roto work can be done the same way connection points are matted on bluescreen models. If the perfect chroma AIM can’t be met, then it is better for the screen to be lighter if the foreground object is darker; conversely, the screen should be darker if the foreground object is light. These suggestions apply today just as they did in the past, when the shots were going to be optically composited. In optical compositing, unlike digital compositing, straying too far from the ideal exposure was certain to make it impossible to create a good, clean photochemical separation of the blue screen.

Another large change from days past is the ability of rotoscoping to fix matte problems fairly interactively. In the past it could take days, if not longer, from the time mattes were ordered to when they were drawn and processed. Nowadays if the mattes have problems, it is easy to go back and tweak the specific sections that have problems rather than having to start all over again. Medium to close-up full-body articulation is still very labor intensive, but many other types of roto help are relatively cheap compared to the cost of the time a crew may take on the set or on location. Today, there are a number of good edge-detection algorithms, and short of that there are different ways to extract elements digitally that just were not possible photochemically. Keep in mind that the farther off target the AIMs are, the less point there is in even setting up a screen. A lot of money could be wasted lighting a screen that never gets used.

Probably the biggest improvement in compositing in computer graphics over the old optical compositing is in matte color replacement. In optical compositing the technique was to attempt to turn the blue screen to black so there would be no exposure added to the final comp due to the blue of the screen. The problem with this approach is that light naturally wraps itself around any edge it encounters. The edges on any image contain contamination of the edge foreground object by whatever color is directly behind it. Obviously as the object travels in front of lighter or darker objects, the light wrap changes. Also all blue cannot be completely eliminated from the foreground objects or their colors won’t look correct. The midtones especially will look processed and have too much contrast.

In optical compositing, an attempt was made to solve the foreground blue replacement problem by using a color-difference matte. The color-difference matte is produced by bi-packing a black-and-white green color separation56 positive with the original negative. This matte registers as density only in those areas of the scene where the blue content is less than the green content. This matte, together with the green positive, represents a faithful duplication of the blue color content within the scene (except of course where the blue content does not exceed that of green). All colors except blue and violet will therefore reproduce in normal values. Desaturated blues (like blue jeans) reproduce acceptably. The blue backing reproduces as black and makes possible normal reproduction of transparent objects in the scene such as smoke, glass, etc., without fringing. But that system is based on the necessity of tying the composite to a single blue replacement.
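A commonly used digital analogue of this behavior limits the blue channel so that it never exceeds green. The sketch below assumes that formulation. It is not a model of the bi-pack printing steps themselves, and the function name `suppress_blue` is hypothetical.

```python
def suppress_blue(r, g, b):
    """Limit blue to the green level, leaving red and green untouched.

    r, g, b: channel values from 0.0 to 1.0.
    Returns the adjusted (r, g, b) tuple.
    """
    # Blue survives only up to the amount of green present in the pixel.
    return (r, g, min(b, g))
```

A pure blue backing `(0.0, 0.0, 1.0)` becomes black, a desaturated blue such as `(0.3, 0.4, 0.6)` becomes `(0.3, 0.4, 0.4)`, and a color with less blue than green passes through unchanged. Because one rule is applied to every pixel, the sketch shares the limitation the text describes: the whole frame is tied to a single blue replacement.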

Due to the interactive nature of matte manipulation within CGI composite scripts, different areas can be tweaked with different screen color replacements. Generally the screen color is only digitally replaced with a dark or a light value run through a luminance matte. In theory, the whole edge could be queried, detecting the color of all regions and replacing the screen color appropriately. It is this ability to manipulate the screen color replacement that allows computer graphics to accept less than perfectly chromatic screen colors. Matte lines do still exist because the extractions are not perfect. But now the screen color is replaced with more appropriate colors behind the objects, so what used to appear as matte lines now appears as nearly correct color edge wrap.
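One way to picture screen color replacement at an edge is with a simple linear mixing model, in which an edge pixel is assumed to be part subject and part screen. The sketch below rests on that assumption and is not a description of any specific compositing script. The function name `replace_screen_color` and its parameters are hypothetical.

```python
def replace_screen_color(observed, alpha, screen, replacement):
    """Swap the screen's contribution in an edge pixel for another color.

    Assumed model: observed = alpha * subject + (1 - alpha) * screen.
    observed, screen, replacement: (r, g, b) tuples from 0.0 to 1.0.
    alpha: subject coverage of the pixel, 0.0 to 1.0, taken from the matte.
    """
    screen_weight = 1.0 - alpha
    return tuple(
        # Remove the screen's share, then add the same share of the new color.
        o - screen_weight * s + screen_weight * n
        for o, s, n in zip(observed, screen, replacement)
    )
```

Because `replacement` is supplied per pixel, it can differ from region to region, for instance a dark value where the subject will sit over shadow and a light value where it will sit over sky. That regional choice is what a single optical blue replacement could not offer.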

Film versus Digital

One historical advantage of film over digital images is tonal range, in particular detail at the low end. As file size transfer becomes less of an issue with better technology, this difference is disappearing. For the longest time nothing has looked better than a new print in a large format projected to SMPTE57 standards. And nothing has been more disappointing than viewing that same film print after it has been run several hundred times.

Optical compositing obviously was all about manipulating a collection of original negatives. The first step was always to make an interpositive to work from, so the original (read “irreplaceable”) was touched as little as possible. Over the years Kodak, in a nod to the marketing advantage of being involved in the Hollywood film industry, developed and changed film emulsions to suit the needs of visual effects. No one has pressed the limits of film manipulation like the visual effects industry. The old standard film emulsions had huge blue grain compared to the modern Kodak Vision films. And certainly the bottom line for shooting is that no matter how much performance is desired of a piece of film, it is still a photochemical reaction that has absolute limits in science.

In optical compositing the bluescreen negative is re-created using fine-grain black-and-white films. Red, green, and blue records were made and recombined on a new piece of color negative. Mattes were also made on black-and-white acetate or Estar-based film.58 It was a laborious process that required a very precise choreography by the printer operator. Something as simple as a change in the order of placement of color correction filters from one take to another could make the composite unusable.

For years the standards were Panchromatic Separation Film 5235 and SO202 (Estar base). These are no longer being produced by Kodak (2238 is the replacement). The first color negative Vision films greatly reduced grain if properly exposed. They did help, but reacted just like any other film if under- or overexposed. Kodak’s 5277 film59 in particular exhibited very ugly blues if underexposed. The blues were so bad that the blue channel needed to be reprocessed with a mix of the green in order to get any sort of definition. It also got very milky in the blacks when underexposed. The SO214 film was developed to get rid of halation,60 especially when using green screens (but it is not yet in wide use). The 5245 film got contrasty outside but could look great with the use of some scrims. The 5274 film was punchier but tended to be a bit contrasty, whereas 5293 film was pretty predictable for a midspeed film. The 5298 film probably shouldn’t have been used for visual effects, unless the visual goal is that 16mm look (i.e., very grainy).

Optically speaking, some of these adverse film characteristics were impossible to overcome since the frame needed to be dealt with as one image. Area mattes could be made but the process of hand drawing and photographing them could take days, so it was not economically feasible. Artists were quite particular in optical compositing about the AIMs and requirements for how things were shot because there truly were physical limits as to what could be fixed. These requirements would translate back to greater expense on the set because of care needed in shooting. That is probably the ultimate reason for the death of optical compositing because even though it is not necessarily any quicker, digital compositing doesn’t have the photochemical restrictions. And one poor digital compositor laboring away to fix a less-than-ideal blue screen is far less expensive than holding up a complete first unit while the backing gets perfectly lit.

Optical Underexposure Generalizations

• More apparent grain,

• less saturated colors,

• smoky blacks,

• lower contrast, and

• less sharpness.

Optical Overexposure Generalizations

• Less apparent grain,

• more saturated colors,

• richer (blacker) blacks, and

• increased contrast.

Vision films replaced the old standard emulsions of the 1980s and 1990s. Currently Kodak has pushed out Vision 3 emulsions. Sadly, in some respects, the end of shooting on film is in the not too distant future. But just as digital compositing is a vastly superior technique to optical compositing, one would hope HD cameras will eventually have the same latitude as film, with manageable file sizes.

As romantic as it may appear to have been compositing on film, the physical restrictions were a huge detriment to the artistry of making a movie. A printer breakdown in midshot or a mistake at the photo lab could mean hours or days wasted and no quick way to recover. Because of deadline restrictions, that meant a limited number of effects shots per movie. A huge show in the 1980s might have had 300 to 400 shots, nothing like the several thousand of today’s big shows.

COMPOSITING OF LIVE-ACTION ELEMENTS

Marshall Krasser

Modern Digital Compositing

With the release of Star Wars in 1977, the magical world of compositing was introduced to the masses on a scale not seen before. In fact, most of the digital artists practicing the trade today were influenced and inspired by this movie. A great deal of the current bluescreen/greenscreen (BS/GS) methodology and technology stemmed from the groundbreaking optical processes that were developed and refined in the 1970s. As just discussed, the photochemical process remained the leading form of screen compositing for feature films until the early 1990s, when more affordable desktop computers became available. The software engineers utilized the knowledge of the photochemical process and developed methods that would allow the same type of work to be done digitally. This opened the door to unlimited possibilities and development.

Prior to this adoption by the film industry, a great deal of groundwork had been developed in the commercial production facilities and broadcast video networks. A simple example was the real-time keying of the local weather forecaster over the weather map. Since film required more resolution than video, it took the development of high-resolution, pin-registered scanning equipment to finally allow the migration from film stock to pixels.

Regardless of the medium, the processes in use today are very similar, but the final techniques vary depending on the specifications of the final image (e.g., film, PAL, NTSC, HD, or IMAX).

Capturing the Image to Composite

Chapter 3 of this book focuses on how to properly acquire/shoot an image for visual effects work. The section titled Bluescreen and Greenscreen Technology specifically delves into great detail on BS/GS methodologies and technologies. Therefore, the topic is only touched upon here in a general way.

Thanks to computer and digital technology, many problems with badly lit or shot elements can be corrected. However, a properly shot element saves time and resources, which translates directly into lower cost and higher quality. Having the proper color screen, evenly exposed, with the subject the correct distance away from the screen, can only help deliver a better composite, on time and on budget.

Emerging Capture Technology Issues

Be aware that most, if not all, video cameras utilize an edge enhancement feature to artificially sharpen the image. This sharpening adds edge ringing artifacts that cause serious problems when keying. Make sure this feature can be disabled on any HD system that is being used. If there is a sharpness issue with the image, it can be corrected in the composite later. Other cameras, such as DVCAM, have very low sampling in chrominance and tend to heavily compress the video data. This adds even more artifacts (noise) to the image that cannot be corrected and makes screen extraction difficult, if not impossible. (Please refer to the section Digital Cinematography in Chapter 3 for more detailed information.)

Emerging Approaches

An emerging technique that adds to the BS/GS set of tools is the retroreflective curtain approach. It uses a retroreflective curtain in the background and a ring of bright LEDs mounted around the camera lens, which removes the need for any additional lights to illuminate the background. The advantage is that an extremely small amount of power is used and the LEDs require little or no rigging. This approach stems from the invention of blue LEDs in the 1990s, which also allow for green LEDs. The process is still being developed but shows excellent potential for small-scale productions and has already been used on a few major projects.

Another emerging technology is color keying that uses a part of the light spectrum that is invisible to the human eye. Called Thermo-Key, it uses infrared as the key color. It isolates the living subject from the background and allows the artist to create a matte of the subject without the need for a screen. This is still in an early stage of development but could hold vast potential in specific situations.

As the capture side of the technology continues to advance, so will the compositing side of technology. But regardless of where the state of the art is at present, one thing remains constant: the need to create a believable composite that is of high quality and is on time and on budget.

After the Shoot

After the files are scanned and loaded online, a first pass at primary color correction to achieve a consistent and standard color basis for compositing is highly recommended.

One approach is to load all of the screen shots into a color correction application that allows images to be viewed sequentially in a thumbnail proof sheet mode. By conforming and setting a base color for the screens, a set of default extraction values can be set for the sequence, which can save time. However, the final subject color conform will need to be handled later in the final composites.

Another approach is to conform all of the subjects in the first pass and let the screens fall as they may. This allows the timer to set the primary color of the subject(s) and removes the need for individual artists to attempt color conforming on their own.

Extractions and the Magic Bullet

The general consensus is that there is no magic bullet when it comes to extractions. All of the techniques and tools available today have strengths and weaknesses. Ultimately, a combination of several techniques is usually most successful. Granted, at times quick and easy extractions can be made on perfectly lit and stationary subjects. However, those are the exception to the norm when budgets and time constraints are factored in. Ultimatte and Primatte are two well-known and powerful extraction tools and, when used alone or combined, can provide excellent results. The Image Based Keyer (IBK) is another tool that is quickly gaining popularity.

One widely used method for keying BS/GS photography involves pulling three primary keys:

1. Inner key: for the inside of the subject, which involves getting a solid interior matte that does not bleed out to the edge. This is a core matte in essence and should be solid enough to kill any small internal errors in the extraction. A good trick to eliminate any stray holes: Max filter the matte +11 and then Min filter it by −11 (values are an example only).

2. Outer key: for the exterior of the image that removes the exterior of the subject and does not bleed into the subject. This is a looser version of the next key below, the edge key, and is there to provide a good edge base on which to build.

3. Edge key: consists of a finer detailed key for the edge material, including hair or clothing fibers. These mattes might be built from a combination of luminance mattes isolated from the individual RGB channels.

These keys should then be used in combination to preserve fine edge detail while maintaining a clean, solid core matte.
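The hole-filling trick and the combination of the three keys can be sketched in a few lines of plain Python. This is a minimal illustration only, assuming each matte is a 2D list of floats between 0 and 1. The function names (`max_filter`, `min_filter`, `fill_holes`, `combine_keys`) and the combination recipe (the core and edge mattes merged with a maximum, then limited by the outer matte) are assumptions made for this example, not the behavior of any particular keyer.

```python
def _filter(matte, radius, pick):
    """Apply pick (max or min) over a square window around every pixel."""
    height, width = len(matte), len(matte[0])
    result = []
    for y in range(height):
        row = []
        for x in range(width):
            # Windows are simply truncated at the frame border.
            window = [
                matte[j][i]
                for j in range(max(0, y - radius), min(height, y + radius + 1))
                for i in range(max(0, x - radius), min(width, x + radius + 1))
            ]
            row.append(pick(window))
        result.append(row)
    return result


def max_filter(matte, radius):
    """Grow the matte: each pixel takes the brightest value in its window."""
    return _filter(matte, radius, max)


def min_filter(matte, radius):
    """Shrink the matte: each pixel takes the darkest value in its window."""
    return _filter(matte, radius, min)


def fill_holes(matte, radius):
    """Max filter, then min filter by the same amount, to close small holes."""
    return min_filter(max_filter(matte, radius), radius)


def combine_keys(inner, outer, edge):
    """Assumed recipe: final = min(outer, max(inner, edge)) for every pixel."""
    return [
        [min(o, max(i, e)) for i, o, e in zip(row_i, row_o, row_e)]
        for row_i, row_o, row_e in zip(inner, outer, edge)
    ]
```

In this sketch the inner key guarantees a solid interior, the edge key contributes the soft detail, and the outer key keeps anything outside the subject from leaking into the result. The filter radius plays the role of the example value of 11 mentioned above and would be tuned per shot.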

Other Factors

There has been a recent trend to shoot “dirty,” which is the introduction of elements such as rain, smoke, and dust into screen shoots. This can result in an interaction that cannot be achieved in a sterile screen shot and is increasingly becoming the norm. Shallow focus is another factor that can require special handling of the extractions. These and other factors can force an artist to isolate different areas in a frame (with a garbage matte) and pull separate extractions that are later combined into a single composite extraction matte.

In the case of smoke being shot in the background plate, the ideal situation is to have the smoke contained spatially behind where the subject needs to be composited. Later in post, additional smoke can be added in front of the screened element. But this cannot always be controlled, so in situations where there is foreground smoke, this smoke should be isolated if possible. Difference matting can work if there is a locked-off camera and action and a clean, smoke-free frame.

Difference matting can only really work in limited situations but is a good first approach. Other options include simply placing the element over the background and then layering additional or “matching” smoke to blend it into the scene. This will cause some modification to the existing look of the plate but is necessary. There have been situations, in extreme conditions, where the entire background scene has been digitally re-created and then the smoke was reintroduced in the composite using CGI or practically shot elements.
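As a rough illustration of the difference matting idea described above, the following sketch compares each frame against a clean, smoke-free plate and turns the per-pixel difference into a matte. It assumes a locked-off camera, images stored as 2D lists of (r, g, b) tuples with values between 0 and 1, and hypothetical `threshold` and `softness` parameters chosen only for this example.

```python
def difference_matte(frame, clean_plate, threshold=0.1, softness=0.1):
    """Return a 0..1 matte marking where frame differs from clean_plate.

    threshold: differences below this are treated as noise (matte = 0).
    softness:  width of the ramp from 0 to 1 above the threshold (must be > 0).
    """
    matte = []
    for frame_row, clean_row in zip(frame, clean_plate):
        row = []
        for (r1, g1, b1), (r2, g2, b2) in zip(frame_row, clean_row):
            # Use the largest difference found in any of the three channels.
            diff = max(abs(r1 - r2), abs(g1 - g2), abs(b1 - b2))
            value = (diff - threshold) / softness
            row.append(min(1.0, max(0.0, value)))
        matte.append(row)
    return matte
```

Grain, small lighting changes, and any camera movement all show up as differences too, which is one reason the technique only holds up in limited situations.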

Motion Blur

Motion blur can be difficult in any composite. For the best looking composite, one needs to retain as much of this motion blur as possible to avoid strobing. As with most screen extractions, first extract a good core matte and mix it with a softer edge matte extraction. This method will allow the edges to be processed and treated differently and will provide the most flexibility in the end. It is this control and flexibility that discourages some artists from using tools that prebake the “composite” inside of the plug-in. For many reasons, the safest route is to never “composite” in the plug-in.

If motion blur is still an issue, evaluate all of the channels separately to see if the motion blur can be isolated with a luminance extraction. Once it has been isolated and restricted to the desired areas, create a color card that best represents the original color of the edges of the subject(s). Composite the color card into the matte, place this result over the background, and composite the keyed subject over this. In special cases, and if the action allows, the element that is being keyed can be translated to fill the motion blur matte. This will give a more accurate and complex color than can be achieved with a single color card. This trick can save the artist from having to resort to the final option of manually re-creating motion blur by painting on the final composite. The same method can also be used to capture extremely fine edge detail that the keyer cannot isolate.
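The layering order of the color card trick can be sketched per pixel as follows. This is only an illustration under stated assumptions: colors are (r, g, b) tuples with values between 0 and 1, the standard premultiplied "over" operation is used for each layer, and the function and parameter names are hypothetical.

```python
def over(fg_premult, alpha, bg):
    """Premultiplied over: result = fg + bg * (1 - alpha), per channel."""
    return tuple(f + b * (1.0 - alpha) for f, b in zip(fg_premult, bg))


def composite_with_color_card(subject_rgb, subject_alpha,
                              blur_alpha, card_rgb, bg_rgb):
    """Layer order: background, then color card in the blur matte, then subject."""
    # 1. Put the color card into the isolated motion blur matte.
    card_premult = tuple(c * blur_alpha for c in card_rgb)
    # 2. Place that result over the background.
    under = over(card_premult, blur_alpha, bg_rgb)
    # 3. Composite the keyed subject over the top.
    subject_premult = tuple(c * subject_alpha for c in subject_rgb)
    return over(subject_premult, subject_alpha, under)
```

Where the keyed subject is fully opaque, the card has no effect. Where the keyer lost the blur, the card color fills in at whatever density the blur matte provides.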

Incorrect Exposure

If a subject or the blue or green screen is improperly exposed, there will more than likely be some noise or chatter along the extraction edges. The solution in the past was to expose the screen one stop under the foreground subject to get the greatest saturation and minimize screen spill. More recently, the trend has been to expose the screen at the same exposure as the foreground subject. This will help eliminate grain issues unless the subject is being lit for nighttime or a darkened environment. Ideally it is better to shoot these dark scenes a stop or two brighter than the target and later reduce the exposure in the composite. The key to making this work is good communication with the Director of Photography prior to the shoot. (Please refer to Digital Cinematography in Chapter 3 for more information on this topic.)
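The arithmetic behind reducing exposure in the composite is simple, since one stop corresponds to a factor of two in light. The sketch below assumes pixel values are scene-linear (not log or gamma encoded) and uses a hypothetical function name.

```python
def adjust_exposure(pixel, stops):
    """Scale a linear (r, g, b) pixel by a number of stops.

    Positive stops brighten, negative stops darken; one stop is a factor of 2.
    """
    gain = 2.0 ** stops
    return tuple(channel * gain for channel in pixel)
```

For example, an element shot one stop brighter than the target would be brought down with `adjust_exposure(pixel, -1)`, which halves every channel.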

Spill Suppression

With the exception of a black screen, all screens will have a certain amount of color pollution (spill bounce) affecting the subject. Even if the distance from subject to screen is set correctly, some amount will always be there. Most software packages have spill suppression options built in, and these are fairly effective in eliminating the spill. Do not suppress too much of the target color when utilizing this option. This overuse of suppression is one of the big giveaways when it comes to being able to visually detect screen composites. If the plate has been color timed, work should be done in the core color-corrected image. Spill suppression should only be performed on the edges if necessary.

In extreme cases of spill where the image is compromised beyond a process’s reach, a skilled artist can synthetically re-create the bad channel by blending the other channels together and then reintroducing this result into the original RGB file. It is not easy, but it can be done.
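To make the two ideas above concrete, here is a minimal sketch for a green screen. The first function uses one widely used generic rule (limit green so it does not exceed the average of red and blue), with an `amount` control so the suppression is not overdone. The second rebuilds the green channel from a blend of the other two. The function names, the limiting rule, and the blend weights are assumptions for illustration and do not describe any specific software package.

```python
def suppress_green_spill(pixel, amount=1.0):
    """Pull green down toward the average of red and blue where it exceeds it.

    amount = 0.0 leaves the pixel untouched; 1.0 applies the full limit.
    """
    r, g, b = pixel
    limit = (r + b) / 2.0
    if g > limit:
        g = g - (g - limit) * amount
    return (r, g, b)


def rebuild_green(pixel, red_weight=0.5, blue_weight=0.5):
    """Replace a compromised green channel with a blend of red and blue."""
    r, _, b = pixel
    return (r, r * red_weight + b * blue_weight, b)
```

In practice the weights would be chosen by eye against reference images, and the result would typically be restricted to the affected areas with a matte rather than applied to the whole frame.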

Several packages are available that handle edge spill quite well. Most of them use 3D color algorithms to isolate and replace the spill with the color that is appearing behind it. This will not work if further post-processing is required, since it will not allow the use of the process’s composite option.

Degraining Running Footage/Still Images

Adding grain to CG images was discussed in CG Compositing in Chapter 7. When dealing with shot images, it never hurts to run a low-level degraining or blurring pass on an image prior to extracting. The extraction might lose a little detail but the result could be worth the loss. However, the original image should be used for the subject in the composite and not the processed one. In extreme cases, re-creation of the image in areas of fine detail (e.g., a sailing ship’s rigging) may be necessary by tracking in a still image. This tracked piece can be a big time-saver and should be on the list of possible solutions to try. A clean degrained image can be created if there are several frames to work with. By aligning them, adding them together, and dividing by the number of frames, a very clean and degrained image can be obtained (e.g., for 10 added images, simply multiply the sum by 0.1). Remember to add grain back into the final composite to match the surrounding images.
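The frame-averaging arithmetic can be sketched as follows. The example assumes the frames have already been aligned and that each one is a single-channel 2D list of floats of the same size. The function name is hypothetical.

```python
def average_frames(frames):
    """Add aligned frames together and scale by 1 / number of frames."""
    count = len(frames)
    if count == 0:
        raise ValueError("at least one frame is required")
    scale = 1.0 / count  # e.g., 0.1 for 10 frames
    height, width = len(frames[0]), len(frames[0][0])
    total = [[0.0] * width for _ in range(height)]
    for frame in frames:
        for y in range(height):
            for x in range(width):
                total[y][x] += frame[y][x]
    return [[value * scale for value in row] for row in total]
```

Because grain is different on every frame while the underlying image stays the same, the grain tends to cancel out as more frames are added, while static detail is preserved.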

Starting the Composite

It is always beneficial to have access to the individual layers that go into a composite. Current tools may make it difficult to get in and really alter the pieces that go into the composite, but this is an individual decision that needs to be made at the artist’s level.

Generally speaking, today’s screen composites are rarely simple “A over B” processes, and sometimes it appears that the entire alphabet is now involved. This alphabet includes such things as relighting the foreground (FG) element in CG (to simulate outdoors), adding interactive light modulation (sun/shadows), plate flashing, edge warp/spill, edge blending, and chromatic edge work, to name a few. It is also critical that any surrounding live-action shots, or reference images shot at the time of filming, be closely analyzed. Since the ultimate goal of the shot is to cut seamlessly into the movie, all of the tricks and techniques at hand, unorthodox or otherwise, might be needed to achieve this result.

Relighting