Let's first take a look at how John tries to request cloud services. He logs into the Horizon portal using his credentials that we created.

When he logs in, he will see only the TestingCloud tenant, as this is the only one he is associated with. He will also see only the Project section, as he is only a member of the project. He is presented with the dashboard showing the limits of his project and what has already been consumed:

Now, in order to request services, he needs to go into the corresponding panel and request them.

Before this is done, let's understand some base constructs of access and security that the user will be using in order to access his services. If you are familiar with AWS, the same constructs apply here.

We can see the different components from the access and security panel in the project dashboard.

Security groups are, at a high level, a basic layer 3/4 firewall wrapped around every compute instance launched inside the group. Every instance must be launched inside a security group, which contains inbound and outbound rules.

Note that servers in the same security group still need rules permitting them to talk to each other if they are meant to do so. A common good practice is to create one security group per tier or per application, whichever meets the security needs of the enterprise. A default security group, which comes with a preconfigured Allow rule, is created automatically. It is recommended that you do not use this security group.

Key pairs are, in essence, public/private RSA key pairs (asymmetric encryption) that let you log in without a password to the instances you create. You can choose to create a key outside the OpenStack environment and then import it, so that you can continue using your key pairs both inside and outside the OpenStack cloud.

In order to generate a key pair on any Linux machine with ssh-keygen, type the following command:

ssh-keygen -t rsa -f <filename>.key

After this, import only the public part of your key pair (ssh-keygen writes it to <filename>.key.pub). Keep the private key somewhere safe.
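Before importing, it can be worth checking that the file really holds the public half of the pair and not the private key. The following is a minimal sketch, assuming an OpenSSH-format RSA public key line; the function name is hypothetical and is not part of any OpenStack tool.

```python
import base64
import struct


def looks_like_rsa_public_key(line: str) -> bool:
    """Return True if `line` has the shape of an OpenSSH RSA public key."""
    fields = line.strip().split()
    # Expected layout: "ssh-rsa <base64 blob> [comment]"
    if len(fields) < 2 or fields[0] != "ssh-rsa":
        return False
    try:
        blob = base64.b64decode(fields[1], validate=True)
    except ValueError:
        return False
    # The decoded blob starts with a 4-byte big-endian length followed by
    # the algorithm name, which must match the first field.
    if len(blob) < 4:
        return False
    (name_len,) = struct.unpack(">I", blob[:4])
    return blob[4:4 + name_len] == b"ssh-rsa"
```

A private key file starts with a BEGIN header line rather than ssh-rsa, so it fails this check. The check only looks at the shape of the line; it does not verify the key itself.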

If you are using Windows, download the PuTTYgen executable from the Internet (for example, from www.putty.org) and generate the key pair by choosing SSH2-RSA and clicking on the Generate button.

OpenStack also lets you create a key pair by clicking on the Create Keypair button. It will download the private key to your desktop. Please ensure you keep this safe, because it is not backed up anywhere else and without it, you would lose access to any instances that are protected by the key.

Now that we have understood the access and security mechanism, John is ready to request his first VM using the portal. As followers of best practices, we will create a security group for our VM and a key pair to go with it.

Creating a security group is easy; what actually requires a little thought is the rules that need to go in it. So before we create our security group, let's for a moment think about the rules we would put in.

There are two types of rules, ingress and egress (in and out), defined from the perspective of the VM. Traffic that we permit to the VM is ingress, and traffic that we permit from the VM is egress.

Say we are creating a Linux web server. Do we need just one port to be permitted in, port 80? Maybe, but remember that the security group filters all traffic, so if we permit only port 80 in, we won't be able to SSH into the machine. The rules should therefore include every type of traffic you want to allow to the machine: application traffic, management, monitoring, everything.

In our case, we will allow ports 22, 80, and 443 for management and application traffic, and port 161 for monitoring with SNMP. We will allow all ports from the machine outwards. (This might not be allowed in a secure environment, but for now this is alright.) The rules are summarized in the following table:

| Direction | Protocol | Port | Remote |
|---|---|---|---|
| In | TCP | 22 | 0.0.0.0/0 |
| In | TCP | 80 | 0.0.0.0/0 |
| In | TCP | 443 | 0.0.0.0/0 |
| In | UDP | 161 | 0.0.0.0/0 |
| Out | ANY | ANY | 0.0.0.0/0 |

As you can see, we have set the remote (the source for inbound rules, the destination for outbound rules) to any IP range. We could restrict this further depending on the application. It is also worth knowing that the remote can be either an IP CIDR or another security group. So if we want to permit the servers in the web security group to talk to the servers in the app security group, we can write the rules in terms of those groups.
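To make the effect of these rules concrete, here is a small sketch that models the planned rule set and checks whether a given packet would be permitted. It is a toy model for reasoning about the plan, not how OpenStack implements security groups; the Rule class and is_allowed function are hypothetical names, and only CIDR remotes are handled (not security-group remotes).

```python
import ipaddress
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rule:
    direction: str        # "in" or "out", from the VM's point of view
    protocol: str         # "tcp", "udp", or "any"
    port: Optional[int]   # None means any port
    remote: str           # CIDR such as "0.0.0.0/0"


# The rule set planned in the table above.
WEBSERVER_RULES = [
    Rule("in", "tcp", 22, "0.0.0.0/0"),
    Rule("in", "tcp", 80, "0.0.0.0/0"),
    Rule("in", "tcp", 443, "0.0.0.0/0"),
    Rule("in", "udp", 161, "0.0.0.0/0"),
    Rule("out", "any", None, "0.0.0.0/0"),
]


def is_allowed(rules, direction, protocol, port, peer_ip):
    """Default deny: traffic passes only if some rule matches it."""
    peer = ipaddress.ip_address(peer_ip)
    for rule in rules:
        if rule.direction != direction:
            continue
        if rule.protocol not in ("any", protocol):
            continue
        if rule.port is not None and rule.port != port:
            continue
        if peer in ipaddress.ip_network(rule.remote):
            return True
    return False
```

For example, is_allowed(WEBSERVER_RULES, "in", "tcp", 3306, "203.0.113.10") is False, because no inbound rule mentions port 3306. This is the same reasoning that tells us SSH would be blocked if we had permitted only port 80.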

Now that we know our security group rules, let's create them. Click on Access & Security, choose the Security Groups tab, and click on Create Security Group.

It will ask for a name and description. Fill these in and click on Create Security Group; the new security group then appears in the list.

By default, this will block all incoming traffic and permit outgoing. We will need to set the rules as we have planned, so let's go ahead and click on Manage Rules:

We will then add the rules that we have planned by clicking on the Add Rule button. There are some predefined rules for common ports; we can use these or create custom ones.

After adding the rules, our configuration should look like the following:

Once our security group is created, we proceed to the next step.

We can choose to create a key pair in the OpenStack system, or create it outside and then just import the public key. The import option is useful when we want to reuse an existing key that we use elsewhere, or when an external system handles enterprise key management. For our purposes, we will just create a key pair.

Click on Access & Security, then on the Key Pairs tab, and then on Create Key Pair:

Give the key pair a name, and it will be created. Please remember to download the private key and store it in a safe place.

Now that we are ready, head over to the instances panel, which displays the currently running instances for the project. Click on Launch Instance.

We will have to fill in some information in all the tabs:

Since we only have one AZ created (by default), we will just use that. We will have to fill in the following details to launch an instance:

- Instance Name: The instance name as it will be shown in the console.

- Flavor: This is the size of the instance that will be launched. There are some predefined values that we can see using the nova flavor-list command. We can also create a new flavor using the nova flavor-create command.

- Instance Boot Source: The instance boot source specifies where to boot the instance from. Since we are going to use the cirros test image that we created, we will choose that in this case.

- Key Pair: Choose a key pair to use. (We choose the one we created.)

- Security Groups: Choose one or more security groups to use. (We choose Webserver.)

- Networking: We choose the tenant network to use. We can add more than one NIC if there are multiple networks. However, in our case, we, as the administrator, created only a single network, so the instance gets a single NIC:

These are the mandatory fields. We can also inject a post-creation customization script and even set partitioning options in the next tabs, but for our purposes, let's click on the Launch button.

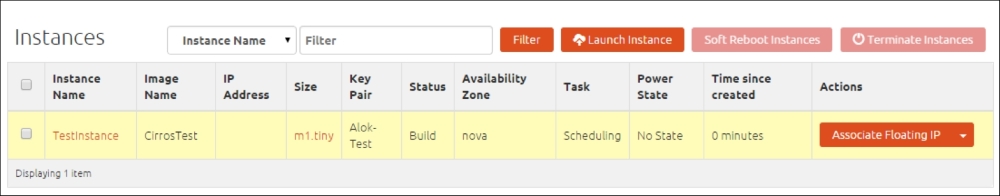

Once the request is submitted, we will see the instance in the list and see the status as scheduled, shown in the following screenshot:

Recall from the Nova installation that the scheduler service is responsible for choosing the appropriate compute node on which the instance should reside. The instance powers on after a while, and you can SSH into it using the private key that you created.
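The idea behind the scheduler's choice can be illustrated with a toy filter-then-weigh sketch: discard the hosts that cannot fit the requested flavor, then prefer the one with the most free RAM. This is a simplified illustration and not Nova's actual scheduler code; the Host class, the host names, and the capacity figures are all made up for the example.

```python
from dataclasses import dataclass


@dataclass
class Host:
    name: str
    free_ram_mb: int
    free_vcpus: int
    free_disk_gb: int


def pick_host(hosts, ram_mb, vcpus, disk_gb):
    """Filter out hosts that cannot fit the flavor, then pick the one
    with the most free RAM. Returns None if no host qualifies."""
    candidates = [
        h for h in hosts
        if h.free_ram_mb >= ram_mb
        and h.free_vcpus >= vcpus
        and h.free_disk_gb >= disk_gb
    ]
    if not candidates:
        return None
    return max(candidates, key=lambda h: h.free_ram_mb)


# Hypothetical compute nodes; m1.tiny asks for 512 MB RAM, 1 vCPU, 1 GB disk.
hosts = [
    Host("compute1", free_ram_mb=256, free_vcpus=4, free_disk_gb=100),
    Host("compute2", free_ram_mb=2048, free_vcpus=2, free_disk_gb=50),
    Host("compute3", free_ram_mb=8192, free_vcpus=8, free_disk_gb=500),
]
chosen = pick_host(hosts, ram_mb=512, vcpus=1, disk_gb=1)
```

When no host passes the filter, the function returns None, which loosely corresponds to an instance that cannot be placed.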

John can similarly request other services such as a block volume, by going to the volumes panel and clicking on Create Volume. So he requests a 5 GB volume so that he can store the images that he will work on, and this will be available even after the instance is terminated.

Clicking on Create Volume creates the volume. It can then be attached to the instance that we just created. However, after the volume is attached, operating system tools (such as fdisk or the disk manager) need to be used to partition and format the volume and mount it.

Jane, unlike John, is fond of command line (CLI) utilities and wants to perform the same tasks using either CLI tools or a scriptable method. She can choose to either use the APIs directly or install command line tools.

So, say Jane has a Linux machine that she uses as a development machine (running CentOS). She can install the command line tools on her machine using either pip, the Python package installer, or the yum package manager.

The package name format is python-<project>client, where <project> is replaced by keystone, glance, nova, cinder, and so on. For example, Jane will execute the following commands for the Nova, Cinder, and Glance command line tools:

yum install python-novaclient

yum install python-cinderclient

yum install python-glanceclient
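The naming convention is regular enough to generate the package names for a list of projects. A tiny sketch follows; the helper name is hypothetical, and it only builds names, it does not install anything.

```python
def client_package(project: str) -> str:
    """Build the client package name for an OpenStack project,
    following the python-<project>client convention."""
    return "python-{}client".format(project.lower())


packages = [client_package(p) for p in ("Nova", "Cinder", "Glance")]
```

The resulting list can be passed to a single yum install invocation instead of running the command once per package.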

Once the tools are installed, Jane logs in to the GUI once, navigates to the Access & Security panel, clicks on the API Access tab, and clicks on Download OpenStack RC File:

This downloads a shell script that sets the environment variables the command line tools need. Jane should source the script rather than execute it, so that the variables are set in her current shell. The script prompts for Jane's password and stores it in an environment variable as well. She can then run commands that perform the same functions as John, but using the command line tools instead.
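If a client command later fails with an authentication error, a quick first check is whether the variables are actually present in the shell. The sketch below assumes the variable names used by the RC file for the Identity v2.0 API (OS_AUTH_URL, OS_TENANT_NAME, OS_USERNAME, OS_PASSWORD); newer RC files use different names, so adjust the tuple to match your file. The function name is hypothetical.

```python
import os

# Assumed variable names, as set by an Identity v2.0 era RC file.
REQUIRED_VARS = ("OS_AUTH_URL", "OS_TENANT_NAME", "OS_USERNAME", "OS_PASSWORD")


def missing_openstack_vars(env=None):
    """Return the required variables that are unset or empty.

    `env` defaults to the current process environment; passing a dict
    makes the function easy to try out without touching the shell.
    """
    env = os.environ if env is None else env
    return [name for name in REQUIRED_VARS if not env.get(name)]
```

An empty result means the RC file was sourced in the shell that started the Python process. A non-empty result usually means the script was executed in a subshell instead of sourced.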

In order to create a key pair using the CLI tools, Jane should execute the following command:

nova keypair-add jane-key

This command will create a key called jane-key and display the private key on screen, which Jane has to copy and store in a secure location.
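Storing the key securely includes restricting its file permissions, since SSH clients typically refuse a private key that other users can read. As a minimal sketch, assuming a POSIX system, the following writes the key text to a file that only its owner can read and write; the function name and file path are hypothetical.

```python
import os


def save_private_key(path: str, key_text: str) -> None:
    """Write key_text to path, readable and writable by the owner only."""
    # O_EXCL refuses to overwrite an existing file; 0o600 means rw-------.
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_EXCL, 0o600)
    with os.fdopen(fd, "w") as handle:
        handle.write(key_text)
```

Creating the file with the restrictive mode from the start avoids a window in which the key is briefly readable by others, which can happen when a file is written first and its permissions are tightened afterwards.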

In order to request a server using the command line, Jane needs some information. She needs the flavor ID, image ID, and key name.

She can execute the following command:

nova flavor-list

This returns a list of flavors with their IDs. She can then list the available images using the following command:

nova image-list

Jane would now see the following:

So if she wants to spin up a machine, which is m1.tiny, with the cirros test image and with the machine name of janesbox, she can use the following command:

nova boot --flavor 1 --key-name jane-key --image 34b205dc-63aa-4b66-a347-2ab98451252d janesbox

Replace the image ID and the key name in the preceding command with your own values.
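Since Jane prefers a scriptable method, the boot command can also be assembled from variables instead of being typed by hand. The sketch below only builds the argument list and does not run anything; the function name is hypothetical, it uses the hyphenated --key-name spelling of the key option, and the optional --security-groups flag takes a comma-separated list of group names.

```python
import shlex


def nova_boot_command(name, flavor, image, key_name, security_groups=()):
    """Build the argument list for a `nova boot` invocation."""
    argv = [
        "nova", "boot",
        "--flavor", str(flavor),
        "--image", image,
        "--key-name", key_name,
    ]
    if security_groups:
        argv += ["--security-groups", ",".join(security_groups)]
    argv.append(name)
    return argv


cmd = nova_boot_command(
    "janesbox", 1, "34b205dc-63aa-4b66-a347-2ab98451252d", "jane-key"
)
# Quote each part so the printed line can be pasted into a shell safely.
print(" ".join(shlex.quote(part) for part in cmd))
```

Keeping the command as a list rather than a single string means it can be handed to subprocess.run without going through a shell, so unusual characters in the instance name cannot be misinterpreted.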

You can see that the machine starts to build like the one we built with the GUI:

So, with the CLI we can perform the same functions as from the portal. More tech-savvy users can call the APIs offered by the system directly, using a programming language of their choice.
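As a rough illustration of the direct API route, the following sketch builds (but does not send) the HTTP request that asks the Compute API to create a server. It assumes that a token has already been obtained from the identity service and that the compute endpoint URL is known; the URL and token values in the usage lines are placeholders, and the function name is hypothetical.

```python
import json
import urllib.request


def build_create_server_request(compute_url, token, name, image_ref,
                                flavor_ref, key_name):
    """Prepare a POST to <compute_url>/servers; nothing is sent here."""
    body = {
        "server": {
            "name": name,
            "imageRef": image_ref,
            "flavorRef": str(flavor_ref),
            "key_name": key_name,
        }
    }
    return urllib.request.Request(
        url=compute_url.rstrip("/") + "/servers",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "X-Auth-Token": token,
        },
        method="POST",
    )


# Placeholder endpoint and token, for illustration only.
request = build_create_server_request(
    "http://controller:8774/v2/TENANT_ID", "dummy-token",
    "janesbox", "34b205dc-63aa-4b66-a347-2ab98451252d", 1, "jane-key",
)
```

Passing the prepared request to urllib.request.urlopen would submit it. The body carries the same three pieces of information Jane gathered for the CLI: the flavor, the image, and the key name.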