6

Unbiased FIR State Estimation

The arithmetic mean is the most probable value.

Carl F. Gauss [53], p. 244

It is usually taken for granted that the right method for determining the constants is the method of least squares.

Karl Pearson [146], p. 266

6.1 Introduction

Optimal state estimation requires information about noise and initial values, which is not always available. The requirement of initial values is canceled in the optimal unbiased or ML estimators, which still require accurate noise information. Obviously, these estimators can produce large errors if the noise statistics are inaccurate or the noise is far from Gaussian. In another extreme method of state estimation that gives UFIR estimates corresponding to the LS, the observation mean is tracked only under the zero mean noise assumption. Designed to satisfy only the unbiasedness constraint, such an estimator discards all other requirements and in many cases justifies suboptimality by being more robust. The great thing about the UFIR estimator is that, unlike OFIR, OUFIR, and ML FIR, it only needs an optimal horizon of ![]() points to minimize MSE. It is worth noting that determining

points to minimize MSE. It is worth noting that determining ![]() requires much less effort than noise statistics. Given

requires much less effort than noise statistics. Given ![]() , the UFIR state estimator, which has no other tuning factors, appears to be the most robust in the family of linear state estimators.

, the UFIR state estimator, which has no other tuning factors, appears to be the most robust in the family of linear state estimators.

In this chapter, we discuss different kinds of UFIR state estimators, mainly filters and smoothers and, to a lesser extent, predictors.

6.2 The a posteriori UFIR Filter

Let us consider an LTV system represented in discrete‐time state space with the following state and observation equations, respectively,

These equations can be extended on ![]() as

as

using the definitions of vectors and matrices given for (4.7) and (4.14).

6.2.1 Batch Form

The unbiased filtering problem can be solved if our goal is to satisfy only the unbiasedness condition

and then minimize errors by choosing the optimal horizon of ![]() points.

points.

For the FIR estimate defined as

and the state model represented with the ![]() th row vector in (6.3),

th row vector in (6.3),

the condition (6.5) gives two unbiasedness constraints,

By multiplying both sides of (6.8) from the right‐hand side by the matrix identity ![]() and discarding the nonzero

and discarding the nonzero ![]() on both sides, we find the fundamental gain

on both sides, we find the fundamental gain ![]() of the UFIR filter

of the UFIR filter

where ![]() . Then, referring to the forced gain

. Then, referring to the forced gain ![]() given by (6.9), the a posteriori UFIR filtering estimate can be written as

given by (6.9), the a posteriori UFIR filtering estimate can be written as

As can be seen, the gain ![]() (6.10a) does not contain any information about noise and initial values, which means that the UFIR filter does not have the inherent disadvantages of the KF and OFIR filter.

(6.10a) does not contain any information about noise and initial values, which means that the UFIR filter does not have the inherent disadvantages of the KF and OFIR filter.

The error covariance for the a posteriori UFIR filter (6.11) can be written similarly to the OFIR filter (4.31) as

where the error residual matrices are given by

It now follows that (6.12) has the same structure as (4.55) of the a posteriori OUFIR filter with, however, another gain ![]() in (6.13) and (6.14).

in (6.13) and (6.14).

Because ![]() given by (6.10a) does not require noise covariances, the UFIR filter is not optimal and therefore generally gives larger errors than the OUFIR filter. In practice, however, this flaw can be ignored if a higher robustness is required, as in many applications, especially industrial.

given by (6.10a) does not require noise covariances, the UFIR filter is not optimal and therefore generally gives larger errors than the OUFIR filter. In practice, however, this flaw can be ignored if a higher robustness is required, as in many applications, especially industrial.

Generalized Noise Power Gain

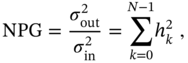

An important indicator of the effectiveness of FIR filtering is the NPG introduced by Trench in [198]. The NPG is the ratio of the output noise variance ![]() to the input noise variance

to the input noise variance ![]() , which is akin to the noise figure in wireless communications. For white Gaussian noise, the NPG is equal to the sum of the squared coefficients of the FIR filter impulse response

, which is akin to the noise figure in wireless communications. For white Gaussian noise, the NPG is equal to the sum of the squared coefficients of the FIR filter impulse response ![]() ,

,

which is the squared norm of ![]() .

.

In state space, the homogeneous gain ![]() represents the coefficients of the FIR filter impulse response. Therefore, the product

represents the coefficients of the FIR filter impulse response. Therefore, the product ![]() plays the role of a generalized NPG (GNPG) [179]. Referring to (6.10a) and (6.10b), GNPG can be written in the following equivalent forms:

plays the role of a generalized NPG (GNPG) [179]. Referring to (6.10a) and (6.10b), GNPG can be written in the following equivalent forms:

It follows that GNPG is a symmetric square matrix ![]() , where the main diagonal components represent the NPGs for the system states, and the remaining components the cross NPGs. The main property of

, where the main diagonal components represent the NPGs for the system states, and the remaining components the cross NPGs. The main property of ![]() is that its trace decreases with increasing horizon length, which provides effective noise reduction. On the other hand, an increase in

is that its trace decreases with increasing horizon length, which provides effective noise reduction. On the other hand, an increase in ![]() causes an increase in bias errors, and therefore

causes an increase in bias errors, and therefore ![]() must be optimally set by choosing an optimal horizon length.

must be optimally set by choosing an optimal horizon length.

6.2.2 Iterative Algorithm Using Recursions

Recursions for the batch a posteriori UFIR filtering estimate (6.11) can be found by decomposing (6.11) as ![]() , where

, where

![]() is the homogeneous estimate and

is the homogeneous estimate and ![]() is the forced estimate.

is the forced estimate.

Using (6.16b), the estimate ![]() can be transformed to

can be transformed to

and, by applying the decomposition

the recursion for the GNPG (6.16b) can be obtained if we transform ![]() as

as

This gives the following forward and backward recursive forms:

Using (6.20) and (6.22) and extracting ![]() from (6.10a), we next transform the product

from (6.10a), we next transform the product ![]() as

as

By combining (6.21) and (6.23), we finally obtain the following recursion for the homogeneous estimate

where ![]() is the bias correction gain of the UFIR filter.

is the bias correction gain of the UFIR filter.

To find the recursion for the forced estimate (6.18), we first show that

Then, by extracting ![]() from (6.19),

from (6.19), ![]() can be transformed as

can be transformed as

Now, by combining (6.25) and (6.26) and substituting (6.22), the forced estimate (6.18) can be represented with the recursion

which, together with (6.24), gives the recursions for the a priori and a posteriori UFIR filtering estimates, respectively,

The recursive computation of the a posteriori UFIR filtering estimate (6.11) can now be summarized with the following theorem.

Proof The derivation of the recursive forms for (6.11) is provided by (6.17)–(6.29).

Note that the need to compute the initial ![]() and

and ![]() in short batch forms arises from the fact that the inverse in (6.16c) does not exist on shorter horizons.

in short batch forms arises from the fact that the inverse in (6.16c) does not exist on shorter horizons.

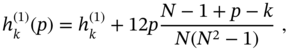

Modified GNPG

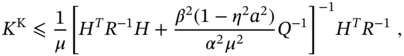

It was shown in [50,195] that the robustness of the UFIR filter can be improved by scaling the GNPG (6.21) with the weight ![]() as

as

The weight ![]() is defined by

is defined by

where ![]() ,

, ![]() is the integer part of

is the integer part of ![]() , and

, and ![]() is the number of the states. The RMS deviation

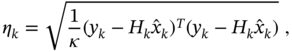

is the number of the states. The RMS deviation ![]() of the estimate is computed using the innovation residual as

of the estimate is computed using the innovation residual as

where ![]() means the dimension of the target motion. With this adaptation of the GNPG to the operation conditions, the UFIR filter can be approximately twice as accurate as the KF when tracking maneuvering targets [50].

means the dimension of the target motion. With this adaptation of the GNPG to the operation conditions, the UFIR filter can be approximately twice as accurate as the KF when tracking maneuvering targets [50].

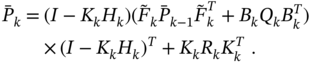

6.2.3 Recursive Error Covariance

Although the UFIR filter does not require error covariance ![]() to obtain an estimate,

to obtain an estimate, ![]() may be required to evaluate the quality of the estimate on a given horizon. Since the batch form (6.12) is not always suitable for fast estimation, next we present the recursive forms obtained in [226].

may be required to evaluate the quality of the estimate on a given horizon. Since the batch form (6.12) is not always suitable for fast estimation, next we present the recursive forms obtained in [226].

Consider ![]() given by (6.12) and represent with

given by (6.12) and represent with

where the components are defined as

Using the decomposition ![]() , we represent matrix

, we represent matrix ![]() recursively as

recursively as

Then, referring to (6.20), (6.22), (6.31), and

and substituting ![]() with

with ![]() , we represent matrices

, we represent matrices ![]() and

and ![]() recursively with

recursively with

Similarly, the recursions for ![]() and

and ![]() can be obtained as

can be obtained as

By combining (6.31), (6.34), (6.35), and (6.36) with (6.30), we finally arrive at the recursive form for the error covariance ![]() of the a posteriori UFIR filter

of the a posteriori UFIR filter

It is worth noting that (6.37) is equivalent to the error covariance of the a posteriori KF (3.77), in which the Kalman gain ![]() must be replaced with the bias correction gain

must be replaced with the bias correction gain ![]() of the UFIR filter. The notable difference is that the Kalman gain minimizes the MSE and is thus optimal, whereas the

of the UFIR filter. The notable difference is that the Kalman gain minimizes the MSE and is thus optimal, whereas the ![]() derived from the unbiased constraint (6.17) makes the UFIR estimate truly unbiased, but noisier, since no effort has been made so far to minimize error covariance. The minimum MSE can be reached in the UFIR filter output at an optimal horizon of

derived from the unbiased constraint (6.17) makes the UFIR estimate truly unbiased, but noisier, since no effort has been made so far to minimize error covariance. The minimum MSE can be reached in the UFIR filter output at an optimal horizon of ![]() points, which will be discussed next.

points, which will be discussed next.

6.2.4 Optimal Averaging Horizon

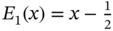

Unlike KF, which has IIR, the UFIR filter operates with data on the horizon ![]() of

of ![]() points, which is sometimes called the FIR filter memory. To minimize MSE,

points, which is sometimes called the FIR filter memory. To minimize MSE, ![]() should be optimally chosen as

should be optimally chosen as ![]() . Otherwise, if

. Otherwise, if ![]() , noise reduction will be ineffective, and, for

, noise reduction will be ineffective, and, for ![]() , bias errors will prevail as shown in Fig. 6.1.

, bias errors will prevail as shown in Fig. 6.1.

Figure 6.1 The RMSE produced by the UFIR filter as a function of  . An optimal balance is achieved when

. An optimal balance is achieved when  , where the UFIR estimate is still less accurate than the KF estimate.

, where the UFIR estimate is still less accurate than the KF estimate.

Since the UFIR filter is not selective, there is no exact relationship between filtering order and memory. Optimizing the memory of a UFIR filter in state space requires finding the derivative of the trace ![]() of the error covariance with respect to

of the error covariance with respect to ![]() , which is problematic, especially for LTV systems. In some cases,

, which is problematic, especially for LTV systems. In some cases, ![]() can be found heuristically for real data. If a reference model or the ground truth is available at the test stage, then

can be found heuristically for real data. If a reference model or the ground truth is available at the test stage, then ![]() can be found by minimizing

can be found by minimizing ![]() . For polynomial models,

. For polynomial models, ![]() was found in [169] analytically through higher order states, which has explicit limitations. If the ground truth is not available, as in many applications, then

was found in [169] analytically through higher order states, which has explicit limitations. If the ground truth is not available, as in many applications, then ![]() can be measured via the derivative of the trace of the measurement residual covariance, as shown in [153]. Next we will discuss several such cases based on the time‐invariant state‐space model

can be measured via the derivative of the trace of the measurement residual covariance, as shown in [153]. Next we will discuss several such cases based on the time‐invariant state‐space model

All methods will be separated into two classes depending on the operation conditions: with and without the ground truth.

Available Ground Truth

When state ![]() is available through the ground truth measurement, which means that

is available through the ground truth measurement, which means that ![]() is also available,

is also available, ![]() can be found by referring to Fig. 6.1 and solving on

can be found by referring to Fig. 6.1 and solving on ![]() the following optimization problem

the following optimization problem

There are several approaches to finding ![]() using (6.40).

using (6.40).

- When

in (6.40) reaches a minimum with

in (6.40) reaches a minimum with  , we can assume that

, we can assume that  and represent the error covariance (6.37) by the discrete Lyapunov equation (A.34)

where

and represent the error covariance (6.37) by the discrete Lyapunov equation (A.34)

where  is required to be stable and matrix

is required to be stable and matrix  is symmetric and positive definite. Note that the bias correction gain

is symmetric and positive definite. Note that the bias correction gain  in (6.41) is related to GNPG

in (6.41) is related to GNPG  , which decreases as the reciprocal of

, which decreases as the reciprocal of  . The solution to (6.41) is given by the infinite sum [83]

whose convergence depends on the model. The trace of (6.42) can be plotted, and then

. The solution to (6.41) is given by the infinite sum [83]

whose convergence depends on the model. The trace of (6.42) can be plotted, and then  can be determined at the point of minimum. For some models,

can be determined at the point of minimum. For some models,  can be found analytically using (6.42) [169].

can be found analytically using (6.42) [169]. - For

given by (6.37), the optimization problem (6.40) can also be solved numerically with respect to

given by (6.37), the optimization problem (6.40) can also be solved numerically with respect to  by increasing

by increasing  until

until  reaches a minimum. However, for some models, there may be ambiguities associated with multiple minima. Therefore, to make the batch estimator as fast as possible,

reaches a minimum. However, for some models, there may be ambiguities associated with multiple minima. Therefore, to make the batch estimator as fast as possible,  should be determined by the first minimum [153]. It is worth noting that the solution (6.42) agrees with the fact that when the model is deterministic,

should be determined by the first minimum [153]. It is worth noting that the solution (6.42) agrees with the fact that when the model is deterministic,  and

and  , and the filter is a perfect match, then we have

, and the filter is a perfect match, then we have  and

and  .

. - From Fig. 6.1 and many other investigations, it can be concluded that the difference between the KF and UFIR estimates vanishes on larger horizons,

[179]. Furthermore, the UFIR filter is low sensitive to

[179]. Furthermore, the UFIR filter is low sensitive to  ; that is, this filter produces acceptable errors when

; that is, this filter produces acceptable errors when  changes up to 30% around

changes up to 30% around  [179]. Thus, we can roughly characterize errors in the UFIR estimate at

[179]. Thus, we can roughly characterize errors in the UFIR estimate at  in terms of the error covariance of the KF. If the product

in terms of the error covariance of the KF. If the product  is invertible, then replacing the Kalman gain

is invertible, then replacing the Kalman gain  with the bias correction gain

with the bias correction gain  in the error covariance of the KF as

in the error covariance of the KF as  and then providing simple transformations give

and then providing simple transformations give

where

is prior error covariance of the KF. For the given (6.43), the backward recursion (6.22) can be used to compute the GNPG back in time as(6.44)

is prior error covariance of the KF. For the given (6.43), the backward recursion (6.22) can be used to compute the GNPG back in time as(6.44)

Because an increase in

always results in a decrease in the trace of

always results in a decrease in the trace of  [179], then it follows that

[179], then it follows that  can be estimated by calculating the number of steps backward until

can be estimated by calculating the number of steps backward until  finally becomes singular. Since

finally becomes singular. Since  is singular when

is singular when  , where

, where  is the number of the states, the optimal horizon can be measured as

is the number of the states, the optimal horizon can be measured as  . This approach can serve when

. This approach can serve when  or when there are enough data points to find

or when there are enough data points to find  . Its advantage is that

. Its advantage is that  can be found even for LTV systems.

can be found even for LTV systems.

The previously considered methods make it possible to obtain ![]() by minimizing

by minimizing ![]() . However, if the ground truth is unavailable and the noise covariances are not well known, then

. However, if the ground truth is unavailable and the noise covariances are not well known, then ![]() measured in this ways may be incorrect. If this is the case, then another approach based on the measurement residual rather than the error covariance may yield better results.

measured in this ways may be incorrect. If this is the case, then another approach based on the measurement residual rather than the error covariance may yield better results.

Unavailable Ground Truth

In cases when the ground truth is not available, ![]() can be estimated using the available measurement residual covariance

can be estimated using the available measurement residual covariance ![]() [153]. To justify the approach, we represent

[153]. To justify the approach, we represent ![]() as

as

where the two last terms strongly depend on ![]() . Now, three limiting cases can be considered:

. Now, three limiting cases can be considered:

- When

, the UFIR filter degree is equal to the number of the data points, noise reduction is not provided, and the error

, the UFIR filter degree is equal to the number of the data points, noise reduction is not provided, and the error  with the sign reversed becomes almost equal to the measurement noise

with the sign reversed becomes almost equal to the measurement noise  . Replacing

. Replacing  with

with  makes (6.45) close to zero

makes (6.45) close to zero

- At the optimal point

, the last two terms in (6.45) vanish by the orthogonality condition, the measurement noise prevails over the estimation error,

, the last two terms in (6.45) vanish by the orthogonality condition, the measurement noise prevails over the estimation error,  , and the residual becomes

, and the residual becomes

- When

, the estimation error is mainly associated with increasing bias errors. Accordingly, the first term in (6.45) becomes dominant, and

, the estimation error is mainly associated with increasing bias errors. Accordingly, the first term in (6.45) becomes dominant, and  approaches it,

approaches it,

The transition between the ![]() values can be learned if we take into account (6.46), (6.47), and (6.48). Indeed, when

values can be learned if we take into account (6.46), (6.47), and (6.48). Indeed, when ![]() , the bias errors are practically insignificant. They grow with increasing

, the bias errors are practically insignificant. They grow with increasing ![]() and approach the standard deviation in the estimate at

and approach the standard deviation in the estimate at ![]() when the filter becomes slightly inconsistent with the system due to process noise.

when the filter becomes slightly inconsistent with the system due to process noise.

Since ![]() decreases monotonously with increasing

decreases monotonously with increasing ![]() due to noise reduction,

due to noise reduction, ![]() is also a monotonic function when

is also a monotonic function when ![]() . It grows monotonously from

. It grows monotonously from ![]() given by (6.46) to

given by (6.46) to ![]() given by (6.47) and passes through

given by (6.47) and passes through ![]() with a minimum rate when

with a minimum rate when ![]() is minimum. It matters that the rate of

is minimum. It matters that the rate of ![]() around

around ![]() cannot be zero due to increasing bias errors. And this is irrespective of the model, as the UFIR filter reduces white noise variance as the reciprocal of

cannot be zero due to increasing bias errors. And this is irrespective of the model, as the UFIR filter reduces white noise variance as the reciprocal of ![]() , and the effect of bias is still small when

, and the effect of bias is still small when ![]() .

.

Another behavior of ![]() can be observed for

can be observed for ![]() , when the bias errors grow not equally in different models. Although

, when the bias errors grow not equally in different models. Although ![]() is always convex on

is always convex on ![]() in stable filters, bias errors affecting its ascending right side can grow either monotonously in polynomial models or eventually oscillating in harmonic models. The latter means that

in stable filters, bias errors affecting its ascending right side can grow either monotonously in polynomial models or eventually oscillating in harmonic models. The latter means that ![]() can reach its value (6.48) with oscillations, even having a constant average rate.

can reach its value (6.48) with oscillations, even having a constant average rate.

It follows from the previous that the derivative of ![]() with respect to

with respect to ![]() can pass through multiple minima and that the first minimum gives the required value of

can pass through multiple minima and that the first minimum gives the required value of ![]() . The approach developed in [153] suggests that

. The approach developed in [153] suggests that ![]() can be determined in the absence of the ground truth by solving the following minimization problem

can be determined in the absence of the ground truth by solving the following minimization problem

where minimization should be ensured by increasing ![]() , starting from

, starting from ![]() , until the first minimum is reached. To avoid ambiguity when solving the problem (6.49), the number of points should be large enough. Moreover,

, until the first minimum is reached. To avoid ambiguity when solving the problem (6.49), the number of points should be large enough. Moreover, ![]() may require smoothing before applying the derivative.

may require smoothing before applying the derivative.

Let us now look at the ![]() properties in more detail and find a stronger justification for (6.49). Since the difference between the a priori and a posteriori UFIR estimates does not affect the dependence of

properties in more detail and find a stronger justification for (6.49). Since the difference between the a priori and a posteriori UFIR estimates does not affect the dependence of ![]() on

on ![]() , we can replace

, we can replace ![]() with

with ![]() ,

, ![]() with

with ![]() , and

, and ![]() with

with ![]() . We also observe that, since

. We also observe that, since ![]() ,

, ![]() ,

, ![]() , and

, and ![]() can be replaced by the UFIR estimate (6.29), the last two terms in (6.45) can be represented as

can be replaced by the UFIR estimate (6.29), the last two terms in (6.45) can be represented as

This transforms (6.45) to

Again we see the same picture. By ![]() , the bias correction gain in the UFIR filter is close to unity,

, the bias correction gain in the UFIR filter is close to unity, ![]() , and (6.50) gives a value close to zero, as in (6.46). When

, and (6.50) gives a value close to zero, as in (6.46). When ![]() ,

, ![]() and

and ![]() are small enough and (6.50) transforms into (6.47). Finally, for

are small enough and (6.50) transforms into (6.47). Finally, for ![]() ,

, ![]() becomes large and

becomes large and ![]() small, which leads to (6.48).

small, which leads to (6.48).

Although we have already discussed many details, it is still necessary to prove that the slope of ![]() is always positive up to and around

is always positive up to and around ![]() and that this function is convex on

and that this function is convex on ![]() . To show this, represent the derivative of

. To show this, represent the derivative of ![]() as

as ![]() , assuming a unit time‐step for simplicity. For (6.50) at the point

, assuming a unit time‐step for simplicity. For (6.50) at the point ![]() we have

we have ![]() and, therefore,

and, therefore, ![]() can be written as

can be written as

Since the GNPG ![]() decreases with increasing

decreases with increasing ![]() , then we have

, then we have ![]() , and it follows that

, and it follows that ![]() and so is the derivative of

and so is the derivative of ![]() ,

,

Thus, ![]() passes through

passes through ![]() with minimum positive slope. Moreover, since bias errors force

with minimum positive slope. Moreover, since bias errors force ![]() to approach

to approach ![]() as

as ![]() increases, function

increases, function ![]() is reminiscent of

is reminiscent of ![]() . Finally, further minimizing

. Finally, further minimizing ![]() with

with ![]() yields

yields ![]() , which can be called the key property of

, which can be called the key property of ![]() .

.

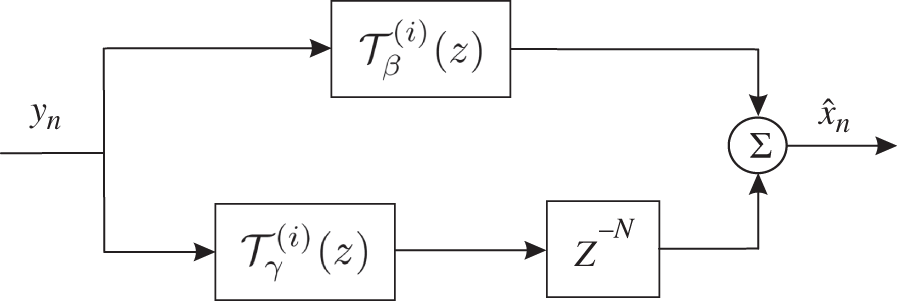

Example 6.1 Optimal horizon for two‐state polynomial model [153]. Consider a two‐state polynomial model (6.38) and (6.39) with  ,

, ![]() ,

, ![]() ,

, ![]() ,

, ![]() ,

, ![]() ,

, ![]() ,

, ![]() ,

, ![]() , and

, and ![]() .

.

Functions ![]() ,

, ![]() , and

, and ![]() computed analytically and numerically are shown in Fig. 6.2. As can be seen,

computed analytically and numerically are shown in Fig. 6.2. As can be seen, ![]() is measured either at the minimum of

is measured either at the minimum of ![]() or at the minimum of

or at the minimum of ![]() . For this model,

. For this model, ![]() can be calculated using the empirical relationship found in [153] using the noise variances. It also gives

can be calculated using the empirical relationship found in [153] using the noise variances. It also gives

Figure 6.2 Determining  for a UFIR filter applied to two‐state polynomial model using functions

for a UFIR filter applied to two‐state polynomial model using functions  ,

,  , and

, and  . Solid lines are theoretical, and dotted lines are experimental.

. Solid lines are theoretical, and dotted lines are experimental.

A example of measuring ![]() for a two‐state harmonic model can be found in [153].

for a two‐state harmonic model can be found in [153].

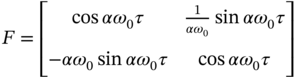

Example 6.2 Filtering a harmonic model [177]. Given a continuous‐time two‐state harmonic model ![]() of a conservative oscillator having the system matrix

of a conservative oscillator having the system matrix  , where

, where ![]() is the fundamental angular frequency,

is the fundamental angular frequency, ![]() , and

, and ![]() is an uncertain factor. An oscillator is observed in discrete time as

is an uncertain factor. An oscillator is observed in discrete time as ![]() with

with ![]() . The stationary noise

. The stationary noise ![]() is zero mean white Gaussian with the covariance

is zero mean white Gaussian with the covariance ![]() , where

, where  and

and ![]() is the uniform double‐sided PSD of

is the uniform double‐sided PSD of ![]() . The measurement noise is

. The measurement noise is ![]() .

.

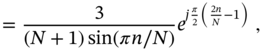

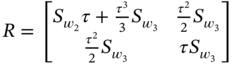

The continuous‐time state model is converted to ![]() , where the system matrix is

, where the system matrix is

and zero mean noise ![]() has the covariance

has the covariance

The KF and UFIR filter are run for ![]() ,

, ![]() ,

, ![]() ,

, ![]() , and

, and ![]() when

when ![]() and

and ![]() otherwise. Filtering errors are shown in Fig. 6.3, where the typical effects inherent to harmonic models are observed. Influenced by the uncertainty

otherwise. Filtering errors are shown in Fig. 6.3, where the typical effects inherent to harmonic models are observed. Influenced by the uncertainty ![]() acting over a short period of time

acting over a short period of time ![]() , KF produces long and oscillating transients, while the UFIR filter returns monotonously to normal filtering after

, KF produces long and oscillating transients, while the UFIR filter returns monotonously to normal filtering after ![]() points.

points.

![Schematic illustration of typical filtering errors produced by the UFIR filter and KF for a harmonic model with a temporary uncertainty in the time span [100120]: (a) first state and (b) second state.](https://imgdetail.ebookreading.net/2023/10/9781119863076/9781119863076__9781119863076__files__images__c06f003.png)

Figure 6.3 Typical filtering errors produced by the UFIR filter and KF for a harmonic model with a temporary uncertainty in the time span  : (a) first state and (b) second state.

: (a) first state and (b) second state.

6.3 Backward a posteriori UFIR Filter

The backward UFIR filter can be derived similarly to the forward UFIR filter if we refer to (5.23) and (5.26) and write the extended model as

for which the definitions of all vectors and matrices can be found after (5.23) and (5.26). Batch and recursive forms for this filter can be obtained as shown next.

6.3.1 Batch Form

The batch backward UFIR estimate ![]() can be defined as

can be defined as

where ![]() is given by (6.53). The homogenous gain

is given by (6.53). The homogenous gain ![]() and the forced gain

and the forced gain ![]() can be determined by satisfying the unbiasedness condition

can be determined by satisfying the unbiasedness condition ![]() applied to (6.54) and the model

applied to (6.54) and the model

which is the last ![]() th row vector in (6.52). This gives two unbiasedness constraints

th row vector in (6.52). This gives two unbiasedness constraints

and the first constraint (6.56) yields the fundamental gain

For ![]() obtained by (6.58), the forced gain is defined by (6.57), and we notice that the same result appears when the noise is neglected in the backward OFIR estimate (5.28). The backward a posteriori UFIR filtering estimate is thus given in a batch form by (6.54) with the gains (6.58) and (6.57).

obtained by (6.58), the forced gain is defined by (6.57), and we notice that the same result appears when the noise is neglected in the backward OFIR estimate (5.28). The backward a posteriori UFIR filtering estimate is thus given in a batch form by (6.54) with the gains (6.58) and (6.57).

The error covariance ![]() for the backward UFIR filter can be written as

for the backward UFIR filter can be written as

where the error residual matrices are specified by

Note that, as in the forward UFIR filter, matrices (6.60) and (6.61) provide an optimal balance between bias and random errors in the backward UFIR filtering estimate ![]() if we optimally set the averaging horizon of

if we optimally set the averaging horizon of ![]() points, as shown in Fig. 6.1 and Fig. 6.2.

points, as shown in Fig. 6.1 and Fig. 6.2.

6.3.2 Recursions and Iterative Algorithm

The batch estimate (6.54) can be computed iteratively on ![]() if we find recursions for

if we find recursions for ![]() and

and ![]() .

.

By introducing the backward GNPG

we write the homogeneous estimate as

and represent recursively the inverse of the GNPG (6.62) by

This gives two recursive forms

Referring to ![]() taken from (6.63a) and (6.63b), we next represent the product

taken from (6.63a) and (6.63b), we next represent the product ![]() as

as

By combining (6.65), (6.66), and (6.67), a recursion for ![]() can now be written as

can now be written as

where ![]() is the bias correction gain of the UFIR filter.

is the bias correction gain of the UFIR filter.

To derive a recursion for the forced gain ![]() , we use the decompositions

, we use the decompositions

follow the derivation of the recursive forms for the backward OFIR filter, provide routine transformations, and arrive at

A simple combination of (6.68) and (6.69) finally gives

where the prior estimate is specified by

The backward iterative a posteriori UFIR filtering algorithm can now be generalized with the pseudocode listed as Algorithm 12. The algorithm starts with the initial ![]() and

and ![]() computed at

computed at ![]() in short batch forms (6.62) and (6.54). The iterative computation is performed back in time on the horizon of

in short batch forms (6.62) and (6.54). The iterative computation is performed back in time on the horizon of ![]() points so that the filter gives estimates from zero to

points so that the filter gives estimates from zero to ![]() .

.

Example 6.3 Forward and backward filtering estimates. Consider a moving vehicle whose trajectory, measured each second, ![]() , by a GPS navigation unit, is used as ground truth. To estimate the vehicle state, use the two‐state polynomial model (6.38) and (6.39) with matrices specified in Example 6.1. The scalar noise components

, by a GPS navigation unit, is used as ground truth. To estimate the vehicle state, use the two‐state polynomial model (6.38) and (6.39) with matrices specified in Example 6.1. The scalar noise components ![]() and

and ![]() are specified with

are specified with ![]() , and

, and ![]() , and the optimal horizon for the UFIR filter is measured as

, and the optimal horizon for the UFIR filter is measured as ![]() .

.

Estimates of the vehicle coordinate ![]() obtained with the forward and backward KF and UFIR filter in the presence of a temporary uncertainty are shown in Fig. 6.4. As can be seen, beyond the uncertainty, all filters give consistent and hardly distinguishable estimates. The uncertainty that occurs as a step function at

obtained with the forward and backward KF and UFIR filter in the presence of a temporary uncertainty are shown in Fig. 6.4. As can be seen, beyond the uncertainty, all filters give consistent and hardly distinguishable estimates. The uncertainty that occurs as a step function at ![]() affects the estimates in such a way that UFIR filters produce larger excursions and shorter transients, while KFs produce smaller excursions and longer transients.

affects the estimates in such a way that UFIR filters produce larger excursions and shorter transients, while KFs produce smaller excursions and longer transients.

Figure 6.4 Estimates of the coordinate  of a moving vehicle obtained using forward and backward KFs and UFIR filters. The GPS‐based ground truth is dashed.

of a moving vehicle obtained using forward and backward KFs and UFIR filters. The GPS‐based ground truth is dashed.

It is worth noting that the typical differences between KFs and UFIR filters illustrated in Fig. 6.4 are recognized as fundamental [179]. Another thing to mention is that forward and backward filters act in opposite directions, so their responses appear antisymmetric.

6.3.3 Recursive Error Covariance

The recursive form for the error covariance (6.59) of the backward a posteriori UFIR filter can be found similarly to the forward UFIR filter. To this end, we first represent (6.59) using (6.60) and (6.61) as

We then find recursions for each of the matrix products in the previous relationship, combine the recursive forms obtained, and finally come up with

It can now be shown that there is no significant difference between the error covariances of the forward and backward UFIR filters. Since these filters process the same data, but in opposite directions, it follows that the errors on the given horizon ![]() are statistically equal.

are statistically equal.

6.4 The  ‐lag UFIR Smoother

‐lag UFIR Smoother

In postprocessing and when filtering stationary and quasi stationary signals, smoothing may be the best choice because it provides better noise reduction. Various types of smoothers can be designed using the UFIR approach, although many of them appear to be equivalent as opposed to OFIR smoothing. Here we will first derive the ![]() ‐lag FFFM UFIR smoother and then show that all other UFIR smoothing structures of this type are equivalent due to their ability to ignore noise.

‐lag FFFM UFIR smoother and then show that all other UFIR smoothing structures of this type are equivalent due to their ability to ignore noise.

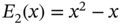

Let us look again at the ![]() ‐lag FFFM FIR smoothing strategy illustrated in Fig. 5.2. The corresponding UFIR smoother can be designed to satisfy the unbiasedness condition

‐lag FFFM FIR smoothing strategy illustrated in Fig. 5.2. The corresponding UFIR smoother can be designed to satisfy the unbiasedness condition

where the ![]() ‐lag estimate can be defined as

‐lag estimate can be defined as

and the state model represented by the ![]() th row vector in (6.3) as

th row vector in (6.3) as

where ![]() is the

is the ![]() th row vector in (4.9) and so is

th row vector in (4.9) and so is ![]() in

in ![]() .

.

6.4.1 Batch and Recursive Forms

Condition (6.73) applied to (6.74) and (6.75) gives two unbiasedness constraints,

and after simple manipulations the first one in (6.76) gives a fundamental UFIR smoother gain ![]() ,

,

Referring to ![]() , we next transform (6.78) to

, we next transform (6.78) to

where ![]() is the homogeneous gain (6.10a) of the UFIR filter, and express the

is the homogeneous gain (6.10a) of the UFIR filter, and express the ![]() ‐lag homogeneous UFIR smoothing estimate in terms of the filtering estimate

‐lag homogeneous UFIR smoothing estimate in terms of the filtering estimate ![]() as

as

that does not require recursion; that is, the recursively computed filtering estimate ![]() is projected into

is projected into ![]() in one step by the matrix

in one step by the matrix ![]() .

.

For the forced estimate, the recursive form

appears if we first represent the last row vector ![]() of the matrix

of the matrix ![]() given by (4.9) as

given by (4.9) as

where matrix ![]() becomes matrix

becomes matrix ![]() if we replace

if we replace ![]() by

by ![]() . Also

. Also ![]() can be written as

can be written as

and then the subsequent modification of (6.81) gives

Next, using the decomposition ![]() , we transform the forced estimate to the form

, we transform the forced estimate to the form

where the ![]() ‐varying product correction term

‐varying product correction term ![]() is computed recursively as

is computed recursively as

By combining (6.80) and (6.82), we finally arrive at the recursion

using which, the recursive ![]() ‐lag FFFM a posteriori UFIR smoothing algorithm can be designed as follows. Reorganize (6.83) as

‐lag FFFM a posteriori UFIR smoothing algorithm can be designed as follows. Reorganize (6.83) as

set ![]() , assign

, assign ![]() , and compute for

, and compute for ![]() until this recursion gives

until this recursion gives ![]() . Given

. Given ![]() and

and ![]() , the smoothing estimate is obtained by (6.84). It is worth noting that in the particular case of an autonomous system,

, the smoothing estimate is obtained by (6.84). It is worth noting that in the particular case of an autonomous system, ![]() , the

, the ![]() ‐lag a posteriori UFIR smoothing estimate is computed using a simple projection (6.80).

‐lag a posteriori UFIR smoothing estimate is computed using a simple projection (6.80).

6.4.2 Error Covariance

In batch form, the ![]() ‐varying error covariance

‐varying error covariance ![]() of the FFFM UFIR smoother is determined by (6.12), although with the renewed matrices,

of the FFFM UFIR smoother is determined by (6.12), although with the renewed matrices,

where the error residual matrices are given by

To find a recursive form for (6.85), we write it in the form

where ![]() is the error covariance (6.37) of the UFIR filter, and represent the

is the error covariance (6.37) of the UFIR filter, and represent the ![]() ‐varying amendment

‐varying amendment ![]() as

as

It can be seen that ![]() naturally becomes zero at

naturally becomes zero at ![]() due to

due to ![]() . Furthermore, the structure of the matrix

. Furthermore, the structure of the matrix ![]() suggests that

suggests that ![]() and also

and also ![]() .

.

By considering several cases of ![]() for

for ![]() ,

,

and reasoning deductively, we obtain the following recursion for ![]() ,

,

where the matrix ![]() is still in batch form as

is still in batch form as

Similarly, we represent ![]() in special cases as

in special cases as

and then replace the sum in the parentheses with

which gives the following recursion for ![]() ,

,

By combining (6.90) and (6.93) with (6.89), we finally obtain the recursive form for the error covariance as

which should be computed starting with ![]() for the initial value

for the initial value

using the matrix ![]() given by (6.91) and

given by (6.91) and ![]() by (6.92). Noticing that the recursive form for the batch matrix

by (6.92). Noticing that the recursive form for the batch matrix ![]() given by (6.92) is not available in this procedure, we postpone to “Problems” the alternative derivation of recursive forms for (6.85).

given by (6.92) is not available in this procedure, we postpone to “Problems” the alternative derivation of recursive forms for (6.85).

Time‐Invariant Case

For LTI systems, the matrix ![]() , given in batch form as (6.92), can easily be represented recursively as

, given in batch form as (6.92), can easily be represented recursively as

and the ![]() ‐lag FFFM UFIR smoothing algorithm can be modified accordingly. Computing the initial

‐lag FFFM UFIR smoothing algorithm can be modified accordingly. Computing the initial ![]() by (6.95) and knowing the matrix

by (6.95) and knowing the matrix ![]() , one can update the estimates for

, one can update the estimates for ![]() as

as

It should be noted that the computation of the error covariance ![]() using (6.99) does not have to be necessarily included in the iterative cycle and can be performed only once after

using (6.99) does not have to be necessarily included in the iterative cycle and can be performed only once after ![]() reaches the required lag‐value.

reaches the required lag‐value.

6.4.3 Equivalence of UFIR Smoothers

Other types of ![]() ‐lag UFIR smoothers can be obtained if we follow the FFBM, BFFM, and BFBM strategies discussed in Chapter . To show the equivalence of these smoothers, we will first look at the FFBM UFIR smoother and then draw an important conclusion.

‐lag UFIR smoothers can be obtained if we follow the FFBM, BFFM, and BFBM strategies discussed in Chapter . To show the equivalence of these smoothers, we will first look at the FFBM UFIR smoother and then draw an important conclusion.

The ![]() ‐lag FFBM UFIR smoother can be obtained similarly to the FFFM UFIR smoother in several steps. Referring to the backward state‐space model in (5.23) and (5.26), we define the state at

‐lag FFBM UFIR smoother can be obtained similarly to the FFFM UFIR smoother in several steps. Referring to the backward state‐space model in (5.23) and (5.26), we define the state at ![]() ) as

) as

and the forward UFIR smoothing estimate as

From (6.100) at the point ![]() , we can also obtain

, we can also obtain

where ![]() is the last row vector in (5.41) and so is

is the last row vector in (5.41) and so is ![]() in

in ![]() .

.

The unbiasedness condition ![]() applied to (6.100) and (6.101) gives

applied to (6.100) and (6.101) gives

Taking into account that ![]() and replacing

and replacing ![]() extracted from (6.102) with

extracted from (6.102) with ![]() , the previous relationship can be split into two unbiasedness constraints

, the previous relationship can be split into two unbiasedness constraints

What now follows is that the first constraint (6.103) is exactly the constraint (6.76) for the FFFM UFIR smoother. We then observe that for ![]() we have

we have ![]() and thus

and thus ![]() can be transformed as

can be transformed as

Finally, we transform the second constraint (6.104) to

and conclude that this is constraint (6.77) for FFFM UFIR smoothing.

Now the following important conclusion can be drawn. Because the FFBM and FFFM UFIR smoothers obey the same unbiasedness constraints in (6.76) and (6.77), then it follows that these smoothers are equivalent. And this is not surprising, since all UFIR structures obeying only the unbiasedness constraint ignore noise. By virtue of that, the forward and backward models become identical at ![]() , and thus the FFFM and FMBM UFIR smoothers are equivalent. In addition, since the forward and backward UFIR filters are equivalent for the same reason, then it follows that the BFFM and BFBM UFIR smoothers are also equivalent, and an important finding follows.

, and thus the FFFM and FMBM UFIR smoothers are equivalent. In addition, since the forward and backward UFIR filters are equivalent for the same reason, then it follows that the BFFM and BFBM UFIR smoothers are also equivalent, and an important finding follows.

Equivalence of UFIR smoothers: Satisfied only the unbiasedness condition,

‐lag FFFM, FFBM, BFFM, and BFBM UFIR smoothers are equivalent.

In view of the previous definition, the ![]() ‐lag FFFM UFIR smoother can be used universally as a UFIR smoother in both batch and iterative forms. Other UFIR smoothing structures such as FFBM, BFFM, and BFBM have rather theoretical meaning.

‐lag FFFM UFIR smoother can be used universally as a UFIR smoother in both batch and iterative forms. Other UFIR smoothing structures such as FFBM, BFFM, and BFBM have rather theoretical meaning.

6.5 State Estimation Using Polynomial Models

There is a wide class of systems and processes whose states change slowly over time and, thus, can be represented by degree polynomials. Examples can be found in target tracking, networking, and biomedical applications. Signal envelopes in narrowband wireless communication channels, remote wireless control, and remote sensing are also slowly changing.

The theory of UFIR state estimation developed for discrete time‐invariant polynomial models [172] implies that the process can be represented on ![]() using the following state‐space equations

using the following state‐space equations

where ![]() and

and ![]() are zero mean noise vectors. The

are zero mean noise vectors. The ![]() power of the system matrix

power of the system matrix ![]() has a specific structure

has a specific structure

which means that, by ![]() , each row in

, each row in ![]() is represented with the descending

is represented with the descending ![]() th degree,

th degree, ![]() , Taylor or Maclaurin series, where

, Taylor or Maclaurin series, where ![]() is the number of the states.

is the number of the states.

6.5.1 Problems Solved with UFIR Structures

The UFIR approach applied to the model in (6.106) and (6.107) to satisfy the unbiasedness condition gives a unique ![]() th degree polynomial impulse response function

th degree polynomial impulse response function ![]() for each of the states separately, where

for each of the states separately, where ![]() is a discrete time shift relative to the current point

is a discrete time shift relative to the current point ![]() . Function

. Function ![]() has many useful properties, which make the UFIR estimate near optimal. Depending on

has many useful properties, which make the UFIR estimate near optimal. Depending on ![]() , the following types of

, the following types of ![]() can be recognized. When

can be recognized. When ![]() , function

, function ![]() is used to obtain UFIR filtering. When

is used to obtain UFIR filtering. When ![]() , function

, function ![]() serves to obtain

serves to obtain ![]() ‐lag UFIR smoothing filtering. Finally, when

‐lag UFIR smoothing filtering. Finally, when ![]() , function

, function ![]() is used to obtain

is used to obtain ![]() ‐step UFIR predictive filtering.

‐step UFIR predictive filtering.

Accordingly, the following problems can be solved by applying UFIR structures to a polynomial signal ![]() measured as

measured as ![]() in the presence of zero mean additive noise:

in the presence of zero mean additive noise:

- Filtering provides an estimate at

based on data taken from

based on data taken from  ,

,

- Smoothing filtering provides a

‐lag,

‐lag,  , smoothing estimate at

, smoothing estimate at  based on data taken from

based on data taken from  ,

,

- Predictive filtering provides a

‐step,

‐step,  , predictive estimate at

, predictive estimate at  based on data taken from

based on data taken from  ,

,

Note that one‐step predictive UFIR filtering,

, was originally developed for polynomial models in [71]. In state space, it is known as RH FIR filtering [106] used in state‐feedback control and MPC.

, was originally developed for polynomial models in [71]. In state space, it is known as RH FIR filtering [106] used in state‐feedback control and MPC. - Smoothing provides a

‐lag smoothing estimate at

‐lag smoothing estimate at  ,

,  , based on data taken from

, based on data taken from  ,

,

- Prediction provides a

‐step,

‐step,  , prediction at

, prediction at  based on data taken from

based on data taken from  ,

,

In the previous definitions of the state estimation problems, the functions ![]() and

and ![]() are not equal for

are not equal for ![]() , but can be transformed into each other. More detail can be found in [181].

, but can be transformed into each other. More detail can be found in [181].

6.5.2 The  ‐shift UFIR Filter

‐shift UFIR Filter

Looking at the details of the UFIR strategy, we notice that the approach that ignores zero mean noise allows us to solve universally the filtering problem (6.109), the smoothing filtering problem (6.110), and the predictive filtering problem (6.111) by obtaining a ![]() ‐shift estimate [176]. The UFIR approach also assumes that a shift to the past can be achieved at point

‐shift estimate [176]. The UFIR approach also assumes that a shift to the past can be achieved at point ![]() using data taken from

using data taken from ![]() with a positive smoother lag

with a positive smoother lag ![]() , a shift to the future at point

, a shift to the future at point ![]() using data taken from

using data taken from ![]() with a positive prediction step

with a positive prediction step ![]() , and that

, and that ![]() means filtering.

means filtering.

Thus, the ![]() ‐shift UFIR filtering estimate can be defined as

‐shift UFIR filtering estimate can be defined as

where the components of the gain ![]() are diagonal matrices specified by the matrix

are diagonal matrices specified by the matrix

whose components, in turn, are the values of the function ![]() . The unbiasedness condition applied to (6.114) gives the unbiasedness constraint

. The unbiasedness condition applied to (6.114) gives the unbiasedness constraint

where ![]() .

.

For the ![]() th system state, the

th system state, the ![]() ‐shift UFIR filtering estimate is defined as

‐shift UFIR filtering estimate is defined as

and the constraint (6.115) is transformed to

where ![]() means the

means the ![]() th row in

th row in ![]() and the remaining

and the remaining ![]() th rows are given by

th rows are given by

It is worth noting that the linear matrix equation 6.117 can be solved analytically for the ![]() th degree polynomial impulse response

th degree polynomial impulse response ![]() . This gives the

. This gives the ![]() ‐varying function

‐varying function

where ![]() ,

, ![]() , and the coefficient

, and the coefficient ![]() is defined by

is defined by

where the determinant ![]() of the

of the ![]() ‐varying Hankel matrix

‐varying Hankel matrix ![]() is specified via the Vandermonde matrix

is specified via the Vandermonde matrix ![]() as

as

and ![]() is the minor of

is the minor of ![]() . The

. The ![]() th component

th component ![]() ,

, ![]() , of matrix (6.122) is the power series

, of matrix (6.122) is the power series

where ![]() is the Bernoulli polynomial.

is the Bernoulli polynomial.

The coefficients of several low‐degree polynomials ![]() , which are most widely used in practice, are given in Table 6.1. Using this table, or (6.121) for higher‐degree systems, one can obtain an analytic form for

, which are most widely used in practice, are given in Table 6.1. Using this table, or (6.121) for higher‐degree systems, one can obtain an analytic form for ![]() as a function of

as a function of ![]() , where

, where ![]() is for UFIR filtering,

is for UFIR filtering, ![]() for UFIR smoothing filtering, and

for UFIR smoothing filtering, and ![]() for UFIR predictive filtering.

for UFIR predictive filtering.

Table 6.1 Coefficients ![]() of Low‐Degree Functions

of Low‐Degree Functions ![]() .

.

| Coefficients | |

|---|---|

| Uniform: | |

| Ramp: | |

| Quadratic: |  |

| |

| |

|

Properties of  ‐shift UFIR Filters

‐shift UFIR Filters

The most important properties of the impulse response function ![]() are listed in Table 6.2, which also summarizes some of the critical findings [181]. If we set

are listed in Table 6.2, which also summarizes some of the critical findings [181]. If we set ![]() , we can use this table to examine the properties of the UFIR filter. We can also characterize the UFIR filter in terms of system theory. Indeed, since the transfer function

, we can use this table to examine the properties of the UFIR filter. We can also characterize the UFIR filter in terms of system theory. Indeed, since the transfer function ![]() of the

of the ![]() th degree UFIR filter is equal to unity at zero frequency,

th degree UFIR filter is equal to unity at zero frequency, ![]() , then it follows that this filter is essentially a low‐pass (LP) filter. Moreover, if we analyze other types of FIR structures in a similar way, we can come to the following general conclusion.

, then it follows that this filter is essentially a low‐pass (LP) filter. Moreover, if we analyze other types of FIR structures in a similar way, we can come to the following general conclusion.

Table 6.2 Main Properties of ![]() .

.

| Property | |

|---|---|

| Region of existence: | |

| Unit area: | |

| Energy (NPG): | |

| Value at zero: | |

| Zero moments: | |

| Orthogonality: |  , , |

, , | |

| Unbiasedness: | |

|

State estimator in the transform domain: All optimal, optimal unbiased, and unbiased state estimators are essentially LP structures.

Since the sum of the values of ![]() is equal to unity, it follows that the UFIR filter is strictly stable. More specifically, it is a BIBO stable filter due to the FIR. The sum of the squared values of

is equal to unity, it follows that the UFIR filter is strictly stable. More specifically, it is a BIBO stable filter due to the FIR. The sum of the squared values of ![]() represents the filter NPG, which is equal to the energy of the function

represents the filter NPG, which is equal to the energy of the function ![]() . The important thing is that NPG is equal to the function

. The important thing is that NPG is equal to the function ![]() at zero, which, in turn, is equal to the zero‐degree coefficient

at zero, which, in turn, is equal to the zero‐degree coefficient ![]() . This means that the denoising properties of the UFIR filter can be fully explored using the value

. This means that the denoising properties of the UFIR filter can be fully explored using the value ![]() . It is also worth noting that the family of functions

. It is also worth noting that the family of functions ![]() and

and ![]() ,

, ![]() , establish an orthogonal basis, and thus high‐degree impulse responses can be computed through low‐degree impulse responses using a recurrence relation.

, establish an orthogonal basis, and thus high‐degree impulse responses can be computed through low‐degree impulse responses using a recurrence relation.

The fact that all moments of the function ![]() are equal to zero means nothing more and nothing less than the UFIR filter is strictly unbiased by design. The unbiasedness of FIR filters can also be checked by equating the area of the transfer function and the area of the squared magnitude frequency response. The same test for unbiasedness in the discrete Fourier transform (DSP) domain is ensured by equating the sum of the DSP values and the sum of the squared magnitude values. At the end of this chapter, when we will consider practical implementations, we will take a closer look at the properties of UFIR filters in the transform domain.

are equal to zero means nothing more and nothing less than the UFIR filter is strictly unbiased by design. The unbiasedness of FIR filters can also be checked by equating the area of the transfer function and the area of the squared magnitude frequency response. The same test for unbiasedness in the discrete Fourier transform (DSP) domain is ensured by equating the sum of the DSP values and the sum of the squared magnitude values. At the end of this chapter, when we will consider practical implementations, we will take a closer look at the properties of UFIR filters in the transform domain.

6.5.3 Filtering of Polynomial Models

The UFIR filter can be viewed as a special case of the ![]() ‐shift UFIR filter when

‐shift UFIR filter when ![]() . This transforms the impulse response function (6.120) to

. This transforms the impulse response function (6.120) to

where the coefficient ![]() is defined by (6.121) as

is defined by (6.121) as

The constant (zero‐degree, ![]() ) FIR function

) FIR function ![]() is used for simple averaging. The ramp (first‐degree,

is used for simple averaging. The ramp (first‐degree, ![]() ) FIR function

) FIR function

is applicable for linear signals. The quadratic (second‐degree, ![]() ) FIR function

) FIR function

is applicable for quadratically changing signals, etc.

There is also a recurrence relation [131]

that can be used when ![]() and

and ![]() to compute

to compute ![]() of any degree in terms of the lower‐degree functions. Several low‐degree functions

of any degree in terms of the lower‐degree functions. Several low‐degree functions ![]() computed using (6.128) are shown in Fig. 6.5.

computed using (6.128) are shown in Fig. 6.5.

Figure 6.5 Low‐degree polynomial FIR functions  .

.

The NPG of a UFIR filter, which is defined as ![]() , suggests that the best noise reduction associated with the lowest NPG is obtained by simple averaging (case

, suggests that the best noise reduction associated with the lowest NPG is obtained by simple averaging (case ![]() in Fig. 6.5). An increase in the filter degree leads to an increase in the filter output noise, and the following statements can be made.

in Fig. 6.5). An increase in the filter degree leads to an increase in the filter output noise, and the following statements can be made.

Noise reduction with polynomial filters: 1) A zero‐degree filter (simple averaging) is optimal in the sense of minimum produced noise; 2) An increase in the filter degree or, which is the same, the number of states leads to an increase in random errors.

Therefore, because of the better noise reduction, the low‐degree UFIR state estimators are most widely used. In Fig. 6.5, we see an increase in ![]() caused by an increase in the degree

caused by an increase in the degree ![]() at

at ![]() .

.

6.5.4 Discrete Shmaliy Moments

In [131], the class of the ![]() th‐degree polynomial FIR functions

th‐degree polynomial FIR functions ![]() , defined by (6.124) and having the previously listed fundamental properties, was tested using the orthogonality condition

, defined by (6.124) and having the previously listed fundamental properties, was tested using the orthogonality condition

where ![]() is the Kronecker symbol and

is the Kronecker symbol and ![]() . It was found that the set of functions

. It was found that the set of functions ![]() for

for ![]() and

and ![]() is orthogonal on

is orthogonal on ![]() with the square of the weighted norm

with the square of the weighted norm ![]() of

of ![]() given by

given by

where ![]() and

and ![]() for

for ![]() is the Pochhammer symbol. The non‐negative weight

is the Pochhammer symbol. The non‐negative weight ![]() in (6.129) is the ramp probability density function

in (6.129) is the ramp probability density function

An applied significance of this property for signal analysis is that, due to the orthogonality, higher‐degree functions ![]() can be computed in terms of lower‐degree functions using a recurrence relation (6.128).

can be computed in terms of lower‐degree functions using a recurrence relation (6.128).

Since the functions ![]() have the properties of discrete orthogonal polynomials (DOP), they were named in [61,162] discrete Shmaliy moments (DSM) and investigated in detail. It should be noted that, due to the embedded unbiasedness, DSM belong to the one‐parameter family of DOP, while the classical Meixner and Krawtchouk polynomials belong to the two‐parameter family, and the most general Hahn polynomials belong to the three‐parameter family of DOP [131]. This makes the DSM more suitable for unbiased analysis and reconstruction if signals than the classical DOP and Tchebyshev polynomials. Note that DSM are also generalized by Hanh's polynomials along with the classical DOP.

have the properties of discrete orthogonal polynomials (DOP), they were named in [61,162] discrete Shmaliy moments (DSM) and investigated in detail. It should be noted that, due to the embedded unbiasedness, DSM belong to the one‐parameter family of DOP, while the classical Meixner and Krawtchouk polynomials belong to the two‐parameter family, and the most general Hahn polynomials belong to the three‐parameter family of DOP [131]. This makes the DSM more suitable for unbiased analysis and reconstruction if signals than the classical DOP and Tchebyshev polynomials. Note that DSM are also generalized by Hanh's polynomials along with the classical DOP.

6.5.5 Smoothing Filtering and Smoothing

Both the ![]() ‐lag UFIR smoothing filtering problem (6.110) and the UFIR smoothing problem (6.112) can be solved universally using (6.120) and (6.121) if we assign

‐lag UFIR smoothing filtering problem (6.110) and the UFIR smoothing problem (6.112) can be solved universally using (6.120) and (6.121) if we assign ![]() ,

, ![]() .

.

Smoothing Filtering

A feature of UFIR smoothing filtering is that the function ![]() exists on the horizon

exists on the horizon ![]() , and otherwise it is equal to zero, while the lag

, and otherwise it is equal to zero, while the lag ![]() is limited to

is limited to ![]() . Zero‐degree UFIR smoothing filtering is still provided by simple averaging. First‐degree UFIR smoothing filtering can be obtained using the

. Zero‐degree UFIR smoothing filtering is still provided by simple averaging. First‐degree UFIR smoothing filtering can be obtained using the ![]() ‐varying ramp response function

‐varying ramp response function

where ![]() is the UFIR filter ramp impulse response (6.126). What can be observed is that better noise reduction is accompanied in (6.132) by loss of stability. Indeed, when

is the UFIR filter ramp impulse response (6.126). What can be observed is that better noise reduction is accompanied in (6.132) by loss of stability. Indeed, when ![]() approaches unity, the second term in (6.132) grows indefinitely, which also follows from NPG of the first‐degree given by

approaches unity, the second term in (6.132) grows indefinitely, which also follows from NPG of the first‐degree given by

Approximation (6.134) valid for large ![]() and

and ![]() clearly shows that increasing the horizon length

clearly shows that increasing the horizon length ![]() leads to a decrease in NPG, which improves noise reduction. Likewise, increasing the lag

leads to a decrease in NPG, which improves noise reduction. Likewise, increasing the lag ![]() results in better noise reduction.

results in better noise reduction.

The features discussed earlier are inherent to UFIR smoothing filters of any degree, although with the important specifics illustrated in Fig. 6.6. This figure shows that all smoothing filters of degree ![]() provide better noise reduction as

provide better noise reduction as ![]() increases, starting from zero. However, NPG can have multiple minima, and therefore the optimal lag

increases, starting from zero. However, NPG can have multiple minima, and therefore the optimal lag ![]() does not necessarily correspond to the middle of the averaging horizon

does not necessarily correspond to the middle of the averaging horizon ![]() . For odd degrees,

. For odd degrees, ![]() can be found exactly in the middle of

can be found exactly in the middle of ![]() , while for even degrees at other points. For more information see [174].

, while for even degrees at other points. For more information see [174].

Figure 6.6 The  ‐varying NPG of a UFIR smoothing filter for several low‐degrees.

‐varying NPG of a UFIR smoothing filter for several low‐degrees.

Smoothing

The smoothing problem defined by (6.112) and discussed in state space in Chapter can be solved if we introduce a gain ![]() as

as

which exists on ![]() with the same major properties as

with the same major properties as ![]() . Indeed, the ramp UFIR smoother can be designed using the FIR function

. Indeed, the ramp UFIR smoother can be designed using the FIR function

The NPG for this smoother is defined by

As expected, NPG (6.138) is exactly the same as (6.133) of the UFIR smoothing filter, since noise reduction is provided by both structures with equal efficiency. Note that similar conclusions can be drawn for other UFIR smoothing structures applied to polynomial models.

6.5.6 Generalized Savitzky‐Golay Filter

A special case of the UFIR smoothing filter was originally shown in [158] and is now called the Savitzky‐Golay (SG) filter. The convolution‐based smoothed estimate appears at the output of the SG filter with a lag ![]() in the middle of the averaging horizon as

in the middle of the averaging horizon as

where the set of ![]() convolution coefficients

convolution coefficients ![]() is determined by the linear LS method to fit with typically low‐degree polynomial processes. Since the coefficients

is determined by the linear LS method to fit with typically low‐degree polynomial processes. Since the coefficients ![]() can be taken directly from the FIR function

can be taken directly from the FIR function ![]() , the SG filter is a special case of (6.110) with the following restrictions:

, the SG filter is a special case of (6.110) with the following restrictions:

- The horizon length

must be odd; otherwise, a fractional number appears in the sum limits.

must be odd; otherwise, a fractional number appears in the sum limits. - The fixed‐lag is set as

, while some applications require different lags and the optimal lag may not be equal to this value.

, while some applications require different lags and the optimal lag may not be equal to this value.

It then follows that the UFIR smoothing filter (6.110), developed for arbitrary ![]() and lags

and lags ![]() , generalizes the SG filter (6.139) in the particular case of odd

, generalizes the SG filter (6.139) in the particular case of odd ![]() and

and ![]() . Also note that the lag in (6.110) can be optimized for even‐degree polynomials as shown in [174].

. Also note that the lag in (6.110) can be optimized for even‐degree polynomials as shown in [174].

6.5.7 Predictive Filtering and Prediction

The predictive filtering problem (6.111) can be solved directly using the ![]() ‐shift FIR function (6.120) if we set

‐shift FIR function (6.120) if we set ![]() . Like filtering and smoothing, zero‐degree UFIR predictive filtering is provided by simple averaging. The first‐degree predictive filter can be designed using a

. Like filtering and smoothing, zero‐degree UFIR predictive filtering is provided by simple averaging. The first‐degree predictive filter can be designed using a ![]() ‐varying ramp function

‐varying ramp function

which makes a difference with smoothing filtering. The NPG of the predictive filter is determined by

and we note an important feature: increasing the prediction step ![]() leads to an increase in NPG, and denoising becomes less efficient. Looking at the region around

leads to an increase in NPG, and denoising becomes less efficient. Looking at the region around ![]() in Fig. 6.5 and referring to (6.138) and (6.142), we come to the obvious conclusion that prediction is less precise than filtering and filtering is less precise than smoothing.

in Fig. 6.5 and referring to (6.138) and (6.142), we come to the obvious conclusion that prediction is less precise than filtering and filtering is less precise than smoothing.

The prediction problem (6.113) can be solved similarly to the smoothing problem by introducing the gain

which exists on ![]() with the same main properties as

with the same main properties as ![]() . The ramp UFIR predictor can be designed using the FIR function

. The ramp UFIR predictor can be designed using the FIR function

and its efficiency can be estimated using the NPG (6.141). Note that similar conclusions can be drawn for other UFIR predictors corresponding to polynomial models.

Example 6.4 Smoothing, filtering, and prediction errors [177]. Consider the dynamics of an object described by the following continuous‐time state model ![]() with

with  . Convert this model in discrete time to

. Convert this model in discrete time to ![]() , where

, where  and

and ![]() . The noise

. The noise ![]() has the covariance

has the covariance  , where

, where ![]() and

and ![]() are the uniform double‐sides PSDs of the second and third state noise, respectively. The object is observed as

are the uniform double‐sides PSDs of the second and third state noise, respectively. The object is observed as ![]() , where

, where ![]() and

and ![]() .

.

To compare ![]() ‐varying estimation errors, we set

‐varying estimation errors, we set ![]() ,

, ![]() , and

, and ![]() and run the

and run the ![]() ‐shift OFIR and UFIR filters. The estimation errors are shown in Fig. 6.7 for

‐shift OFIR and UFIR filters. The estimation errors are shown in Fig. 6.7 for ![]() and

and ![]() . It can be seen that the minimum errors correspond to the lag

. It can be seen that the minimum errors correspond to the lag ![]() and grow at any deviation from this point.

and grow at any deviation from this point.

Figure 6.7 Typical smoothing, filtering, and prediction errors produced by OFIR and UFIR filters for a two‐state tracking model: (a)  and (b)

and (b)  .

.

6.6 UFIR State Estimation Under Colored Noise

Like KF, the UFIR filter can also be generalized for Gauss‐Markov colored noise, if we take into account that unbiased averaging ignores zero mean noise. Accordingly, if we convert a model with colored noise to another with white noise and then ignore the white noise sources, the UFIR filter can be used directly. In Chapter, we generalized KF for CMN and CPN. In what follows, we will look at the appropriate modifications to the UFIR filter and focus on what makes it better in the first place: the ability to filter out more realistic nonwhite noise. Although such modifications require tuning factors, and therefore the filter may be more vulnerable and less robust, the main effect is usually positive.

6.6.1 Colored Measurement Noise

We consider the following state‐space model with Gauss‐Markov CMN, which was used in Chapter to design the GKF,

where ![]() and

and ![]() have the covariances

have the covariances ![]() and

and ![]() . The coloredness factor

. The coloredness factor ![]() is chosen such that the Gauss‐Markov noise

is chosen such that the Gauss‐Markov noise ![]() is always stationary, as required. Using measurement differencing as

is always stationary, as required. Using measurement differencing as ![]() , we write a new observation as

, we write a new observation as

where ![]() is the new observation matrix, the auxiliary matrices are defined as

is the new observation matrix, the auxiliary matrices are defined as ![]() and

and ![]() , and the noise

, and the noise

is zero mean white Gaussian with the properties ![]() ,

, ![]() , and

, and ![]() , where

, where ![]() .

.

It can be seen that the modified state‐space model in (6.145) and (6.148) contains white and time‐correlated noise sources ![]() and

and ![]() . Unlike KF, the UFIR filter does not require any information about noise, except for the zero mean assumption. Therefore, both

. Unlike KF, the UFIR filter does not require any information about noise, except for the zero mean assumption. Therefore, both ![]() and

and ![]() can be ignored, and thus the UFIR filter is unique for both correlated and de‐correlated

can be ignored, and thus the UFIR filter is unique for both correlated and de‐correlated ![]() and

and ![]() . However, the UFIR filter cannot ignore CMN

. However, the UFIR filter cannot ignore CMN ![]() , which is biased on a finite horizon

, which is biased on a finite horizon ![]() .

.