17

Time-Critical Fog Computing for Vehicular Networks

Ahmed Chebaane1, Abdelmajid Khelil1, and Neeraj Suri2

1Department of Software Engineering, Landshut University of Applied Sciences, Landshut, Germany

2Department of Computer Science, Lancaster University, UK

17.1 Introduction

Over the past few years, the Internet of Things (IoT) has become an integral part of our daily lives, connecting objects such as vehicles, machines, and products with various users through the Internet.

Cloud computing has been proposed as a promising approach for IoT applications/services to virtualize things and to deal with analytics, storage, and computation of data generated from IoT devices. This approach is viable in many cases, such as executing an application in the cloud to save battery lifetime or offering on-demand data storage to end users. In the automotive field, cloud computing and IoT support building smart vehicles by facilitating communication across vehicles, infrastructures, and other connected devices, which may improve road safety as well as traffic efficiency [1].

Dew computing, mobile cloud computing (MCC), and vehicular cloud computing (VCC) are variations of cloud computing. When cloud applications assume an active participation of the end devices along with the cloud in the execution of services and applications, cloud computing is also referred to as dew computing [2]. MCC [3] has been introduced to combine the mobile computing and cloud computing paradigms in order to overcome the constraints of mobile devices. VCC [1] brings the MCC paradigm to vehicular networks by providing public services such as parking systems and traffic information.

However, cloud data centers are geographically highly centralized, which results in a large (network hop) distance between the end device/vehicle and the cloud/data center and usually leads to unpredictable communication delays [4]. It is therefore complicated, if not impossible, to enable time-critical applications with short-lived data across vehicles and clouds. Constraints, failures, and attacks in the heterogeneous computing environment are the typical perturbations of timeliness in VCC. Therefore, there is a need to manage data very close to the point of use, i.e. the end devices, in order to enable low-latency applications.

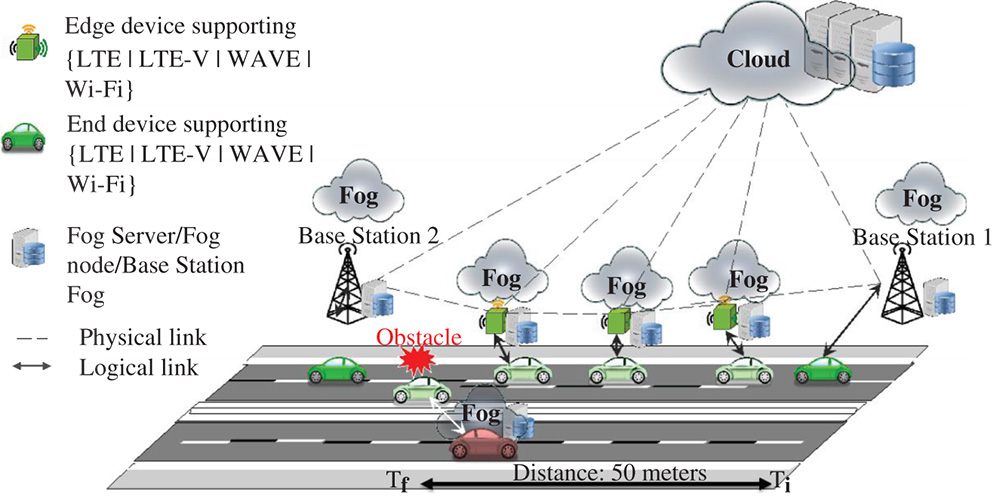

Figure 17.1 Fog computing for vehicular applications.

Fog computing was introduced in 2012 by both industry and academia [5, 6] to address the challenge of delay-sensitive IoT applications (Figure 17.1). Fog computing brings cloud computing to the edge of the network. Instead of centrally running analysis, processing, and storage functions in the cloud, these functions are decentralized and run on gateways/fogs very close to the end-user devices, thus decreasing latency, rendering it more predictable, and preserving data locality and privacy. Accordingly, this network architecture is more suitable for time-critical applications such as vehicle-to-vehicle (V2V), vehicle-to-device (V2D) [7], and vehicle-to-infrastructure (V2I) communication or autonomous driving, whose data processing must happen in a delay-sensitive manner. Provided that perturbations are carefully handled, fog computing is indeed a highly promising architecture to finally make networked vehicular applications a reality after long and intensive research over the last two decades.

Edge computing [8] pushes computation/intelligence even closer to the things, i.e. things may play the role of gateways/fogs [5].

We categorize fog computing as either delay-tolerant or delay-critical. Delay-tolerant fog computing addresses distributed applications that may tolerate either high or fluctuating delays. Compared to cloud computing, delay-tolerant fog computing still reduces network traffic and gives data owners more localized control over their own data. The main category of delay-tolerant vehicular applications comprises those that provide entertainment and infotainment for drivers and passengers. Usually, they are not safety-critical and accordingly not delay-critical. In this chapter, we focus on delay-critical vehicular applications such as detection of immediate obstacles on the road, cooperative driving, or platoon driving. We detail the target scenarios and their requirements on fog computing in Section 17.2.

Vehicular fog computing (VFC) [9, 10] has gained significant attention in the last few years. VFC plays a significant role in supporting high mobility, raising computational capability, and decreasing communication latency, which suits delay-sensitive applications well. Static fogs for vehicular applications may be implemented on top of available infrastructure such as traffic lights, traffic signs, street lighting, cellular base stations, toll collection infrastructure, or bridges. New architectures, known as mobile fog computing, aim to model vehicles as fog nodes for communication and computation, thus integrating fog computing, edge computing, and Vehicular Ad-hoc NETworks (VANETs) into a homogeneous architecture (Figure 17.1).

VANET computing [11] restricts communication to vehicles using short-range communication (such as dedicated short-range communication [DSRC], IEEE 802.11p, D2D) to enable vehicles to analyze and share information with each other. This information could be safety-relevant, e.g. accident prevention or traffic jams, or general, e.g. position or weather, in order to enhance safety on the road. Compared to fog computing, VANET computing encompasses only vehicles for computation and for sharing information with neighboring vehicles, which is referred to as V2V [12]; fog computing, in contrast, includes V2I [12] for the purpose of increasing the computation capability and the exchange of information for vehicles via infrastructure elements, i.e. fog nodes.

The interworking of cloud, fog, and VANET computing is illustrated in Figure 17.1. This interworking will be the common architecture to address all vehicular applications. However, delay-critical applications will rely on VANET computing, on fog computing, or on a combination of both. In the latter case, one may consider a vehicle as either an end device or a mobile fog. Accordingly, mobile fog computing is an emerging architecture, where fogs may be mobile.

Though there are surveys on fog computing [4, 13, 14] and several recent papers presenting the VFC architectures, algorithms, etc. for enabling delay-sensitive applications, there is no survey of this emerging field. This chapter aims at filling this gap and presenting a comprehensive survey.

In this chapter, we comprehensively survey the literature on delay-critical fog-based vehicular applications. Nonetheless, we also briefly survey the fog computing support for other delay-critical application domains such as smart grid, industry 4.0 and IoT, while pointing to the potentials of the adoption of the available techniques to the field of vehicular networks.

In Section 17.2, we present the targeted applications and their timeliness requirements. In addition, we survey the key perturbations that hinder fulfilling these timeliness requirements. In Section 17.3, we introduce the existing research works that cope with these perturbations in order to meet the timeliness requirements. In Section 17.4, we address the research gaps identified in this survey and provide future research directions. In Section 17.5, we conclude the chapter.

17.2 Applications and Timeliness Guarantees and Perturbations

In this section, we present various scenarios of time-critical applications and their timeliness requirements. Next, we survey the perturbations that may complicate meeting the desired deadline.

17.2.1 Application Scenarios

We first detail a representative application scenario, i.e. obstacle detection, that clearly shows the need for delay-critical fog computing in vehicular networks. Next, we survey a broad class of application scenarios that emphasize these needs.

Consider an (autonomous) vehicle driving at 200 km h−1 on the highway when an obstacle suddenly appears on the road. The obstacle detection application, which determines the type of obstacle and its velocity, is a prerequisite for suitable decision making. For example, depending on whether the obstacle is a human being, a large object, or something harmless, and depending on the surrounding vehicles, the vehicle should ignore, avoid, or run over it. This application could execute directly on the on-board computers of premium and modern vehicles. However, most vehicles will not be able to do the processing on-board while meeting the deadline due to limited resources. For this purpose, the application (and its data) should be partitioned and selected parts of it should be transmitted with minimal delay and highest reliability to the surrounding fog infrastructure. A cloud solution is not suitable because the latency of the data transfer is not deterministic and may become intolerable (Figure 17.2).

Usually, fogs from the same provider are (directly) interconnected and can exchange data, balance loads among each other, and execute similar measures for reliable and delay-aware computing. When data is processed, the result should be transmitted back to the initiator to take appropriate decisions. For instance, if the obstacle is a human being, an immediate collision avoidance maneuver must be initiated. If it is just a harmless object, such as a tire part, it can be run over. Thus, nobody is endangered by an unnecessary lane change maneuver. This gained knowledge may be then communicated to other affected vehicles, such as those immediately following the considered vehicle.

Figure 17.2 Obstacle detection as an example of delay-critical application scenarios.

In order to make this scenario possible, data processing and distribution should not violate the tolerable delay. At a speed of 200 km h−1 (about 55.6 m s−1), a vehicle covers a distance of about 50 m in 900 ms, i.e. about 5 m in 90 ms. Therefore, the decision must be taken in at most 90 ms, which is the time available to execute the application. We refer to such applications as short-lived ones.
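The arithmetic behind this delay budget can be checked with a few lines of Python. This is only an illustrative sketch: the function names are hypothetical, and a constant speed is assumed.

```python
def distance_covered_m(speed_kmh: float, time_ms: float) -> float:
    """Distance in metres travelled at a constant speed during time_ms."""
    speed_m_per_s = speed_kmh * 1000.0 / 3600.0  # km/h -> m/s
    return speed_m_per_s * (time_ms / 1000.0)


def time_to_cover_ms(speed_kmh: float, distance_m: float) -> float:
    """Time in milliseconds needed to travel distance_m at a constant speed."""
    speed_m_per_s = speed_kmh * 1000.0 / 3600.0
    return distance_m / speed_m_per_s * 1000.0


if __name__ == "__main__":
    print(round(distance_covered_m(200, 900), 1))  # metres covered in 900 ms
    print(round(distance_covered_m(200, 90), 1))   # metres covered in 90 ms
    print(round(time_to_cover_ms(200, 50), 1))     # ms needed for 50 m
```

At 200 km h−1 the vehicle moves roughly 5 m during a 90 ms processing window, which shows how little slack remains for off-loading and computation.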

The aforementioned representative application scenario clearly motivates the necessity of fog computing support to provide for such crucial applications. In the following, we illustrate further delay-critical application classes that require fog computing support.

A cooperative perception class is based on exchanging/fusing data from different sensor sources, vehicles, and/or infrastructures over wireless networks in order to cooperatively perceive an important context. This information fusion can be treated as a map-merging problem. See-through, lifted-seat, and satellite view are some use cases of the cooperative perception class [15].

A cooperative driving class enables maneuvers to review, share, plan, coordinate, and apply information concerning driving trajectories among vehicles in a safe way including negotiation and optimization of trajectories. The possible cases in this class are lane change warning, lane merge, etc. [15].

The cooperative safety class addresses the presence of vulnerable road users (VRUs), where affected vehicles and/or infrastructure entities should exchange VRU information to improve safety on the road. The acquired VRU information is processed and analyzed by the on-board unit of the vehicles or by an external system. The generated alert message is transmitted to the drivers or to the autonomous driving system, which take appropriate corrective decisions in order to provide safety. Obstacle detection, collision warning, network-assisted vulnerable pedestrian protection, and bike driver protection are possible scenarios in this class [15].

Autonomous navigation classes target the building of self-governing real-time intelligent high definition maps of the surrounding area. Precisely, the information comes from the cooperative perception and a well-defined map that provides accurate and optimum performance in achieving autonomous navigation, e.g. high-definition local map acquisition [15].

Autonomous driving classes enable self-driving vehicles through wireless communication that allows the control of the major vehicle component from outside the vehicles to facilitate remote driving, which requires information about the perception layer and infrastructure. An example of the use case is self-driving in the city [15].

17.2.2 Application Model

We now follow an application model that is commonly used in fog computing as well as in other distributed embedded systems communities. An application is represented as a directed acyclic flow graph of tasks [16]. The edges specify data dependencies between tasks. We differentiate two kinds of tasks: execution tasks and communication tasks. An execution task is a composite of code and data. A communication task is the transmission of an execution task from one node to another on a certain communication path.

An application has a certain priority that applies to all its tasks. Each task has specified execution times on selected computing nodes. An application has a timeliness requirement, which is usually based on executing the entire application while meeting a certain deadline. An application deadline is the maximum tolerable delay. The root task is executed on the application initiator (the vehicle that starts the application). The rest of the tasks can be either executed locally or on surrounding fogs. A task is usually represented by an application container along with its dependencies, the task execution time, and priority.
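The application model above can be sketched as a small data structure. The following is a minimal illustration, not the model of any cited work: the class names, the task names, and all execution times are hypothetical, and communication tasks are left out for brevity.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter  # standard library, Python 3.9+


@dataclass
class Task:
    name: str
    # Hypothetical execution times in ms, keyed by the kind of node that can run the task.
    exec_time_ms: dict
    depends_on: list = field(default_factory=list)


@dataclass
class Application:
    priority: int        # applies to all tasks of the application
    deadline_ms: float   # maximum tolerable delay
    tasks: dict          # task name -> Task

    def execution_order(self):
        """Return the tasks in an order that respects the data dependencies."""
        graph = {t.name: set(t.depends_on) for t in self.tasks.values()}
        return list(TopologicalSorter(graph).static_order())

    def best_case_latency_ms(self):
        """Longest dependency path, taking the fastest node for every task."""
        finish = {}
        for name in self.execution_order():
            task = self.tasks[name]
            start = max((finish[d] for d in task.depends_on), default=0.0)
            finish[name] = start + min(task.exec_time_ms.values())
        return max(finish.values())


# Illustrative obstacle detection application; the root task runs on the vehicle.
app = Application(
    priority=1,
    deadline_ms=90.0,
    tasks={t.name: t for t in [
        Task("capture", {"vehicle": 5.0}),
        Task("detect", {"vehicle": 60.0, "fog": 20.0}, ["capture"]),
        Task("classify", {"vehicle": 40.0, "fog": 15.0}, ["detect"]),
        Task("track", {"vehicle": 30.0, "fog": 10.0}, ["detect"]),
        Task("decide", {"vehicle": 5.0}, ["classify", "track"]),
    ]},
)
```

The best-case latency is only a lower bound, since it ignores communication delays and contention on the nodes; an application whose lower bound already exceeds the deadline cannot be scheduled at all.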

In order to efficiently deploy an application while meeting its timeliness requirements, we usually need a set of middleware building blocks. The goal of these building blocks is to find an assignment of tasks to nodes, and communication tasks to communication links. The key building blocks are resource monitoring and task scheduling (Section 17.2.5).

17.2.3 Timeliness Guarantees

A timeliness guarantee is a fundamental quality level for providing a service delivery that satisfies the application quality of service (QoS) requirements. We identify three main timeliness guarantee levels in the literature: hard real-time (RT), soft RT, and firm RT [17] requirement classes. We survey explicitly the existing efforts to address these requirements.

A hard RT application is defined as follows: any failure to complete the application execution within the deadline means system failure, which can lead to catastrophic damage on the road and a violation of security requirements. Hard RT requirements use a preemptive version to prioritize tasks for scheduling.

A soft RT application tolerates deadline misses, where the tolerance is expressed through three types of requirements: the number of deadline misses in an interval of time, tardiness, and probabilistic bounds [18]. Soft RT allows the system to miss the deadline, even many times, as long as the tasks are performed correctly. In this case, the result is still useful for the end user, but its utility degrades after the desired deadline has passed. Soft RT requirements use a nonpreemptive version to prioritize tasks for scheduling.

A firm RT application [19] (also known as weakly hard RT) tolerates skipping some tasks while still meeting the deadline [20]. Unlike in hard RT, a firm RT application is not considered to have failed when the system misses the deadline; unlike in soft RT, however, the result of the request is then useless.

In summary, soft RT is lenient with respect to the deadline, and the result is still useful after missing the deadline. In contrast, for hard RT applications, missing the deadline may lead to catastrophic damage. Firm RT lies between soft RT and hard RT: it is strict with the deadline, so the result is useless after a deadline miss, but no harm happens. It is worth mentioning that RT systems require clock synchronization across multiple networked entities. In vehicular networks, we assume that vehicles and fogs are equipped with GPS receivers and that all clocks are therefore synchronized with the GPS global clock.
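The difference between the three classes can be illustrated by the value of a result as a function of its completion time. The sketch below is a simplification under stated assumptions: the linear decay for soft RT and the `grace_ms` parameter are illustrative choices, and negative infinity is used merely as a marker for system failure.

```python
from enum import Enum


class RTClass(Enum):
    HARD = "hard"
    FIRM = "firm"
    SOFT = "soft"


def result_utility(rt_class, completion_ms, deadline_ms, grace_ms=100.0):
    """Value of a result in [0, 1]; -inf marks a system failure (hard RT miss)."""
    if completion_ms <= deadline_ms:
        return 1.0  # deadline met: full utility in every class
    if rt_class is RTClass.HARD:
        return float("-inf")  # a miss counts as system failure
    if rt_class is RTClass.FIRM:
        return 0.0  # result is useless, but no harm is done
    # Soft RT: utility degrades with tardiness (assumed linear decay over grace_ms).
    tardiness = completion_ms - deadline_ms
    return max(0.0, 1.0 - tardiness / grace_ms)
```

For a deadline of 100 ms and a completion time of 150 ms, this sketch rates the soft RT result at half its value, the firm RT result at zero, and the hard RT case as a failure.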

17.2.4 Benchmarking Vehicular Applications Concerning Timeliness Guarantees

We now benchmark the application classes with respect to their timeliness guarantee requirements. As illustrated in Table 17.1, most of the applications require RT communication and computation, but depending on the concrete application scenario and context, various RT classes may be required. For example, autonomous navigation and cooperative perception tolerate passing the deadline, so they belong to the soft RT application class. Cooperative safety and autonomous driving require meeting the deadline. Because of the critical nature of the situation, a deadline of a few tens of milliseconds needs to be met in order to avoid fatal damage. Consequently, they belong to the hard RT class. Cooperative driving mostly has firm RT requirements: the result is needed within the deadline in order to enhance safety on the road, but nothing critical happens when the execution time exceeds the deadline.

Table 17.1 Benchmarking of application classes.

| App class | Possible scenarios | Need for fog computing | RT class | Timeliness guarantees (deadline) | Fog architecture |
|---|---|---|---|---|---|
| Cooperative driving | Lane change warning; lane merge | Timely communication; online and offline analysis; privacy preserving, authenticity, and integrity; information sharing among V2V and V2I to enhance the QoS and enable the integration of legacy vehicles for calculation | Firm RT requirements | Few 100–1000 ms | VANET; fog computing |
| Cooperative safety | Neighbor collision warning; obstacle detection; network-assisted vulnerable pedestrian protection | High computation to process the presence of VRU in ultra-low latency; real-time communication; security and reliability; delay critical for deadline; information sharing among V2V and V2I | Hard RT requirements | Few 10 ms | VANET; fog computing; locally (on-board) |
| Cooperative perception | See-through; lifted-seat; satellite view | Capacity (to analyze and localize the detected object); heterogeneity among vehicles (computing and communication); sharing information about localization and relative position needs communication through the infrastructure in addition to V2V communication | Soft RT requirements | Few seconds | VANET; fog computing; cloud computing |
| Autonomous driving | Self-driving in the city | Very low latency in communication and computation; almost 100% reliability required; efficient security; information sharing among V2V and V2I | Hard RT requirements | Few 10 ms | VANET; fog computing; locally (on-board) |
| Autonomous navigation | High-definition local map acquisition | Centralized and decentralized computation in real time; real-time distribution of map information | Soft RT requirements | Few seconds | Fog computing; cloud computing |

To guarantee safety and satisfy the service delivery of all these application scenario classes, fog computing is a suitable candidate computing architecture that enables very low communication and computation delays among vehicles and infrastructures. If needed and possible, fog computing can be seamlessly integrated with cloud computing, which can support delay-tolerant applications such as smart parking with higher computational capability.

Fog computing plays a fundamental role in the cooperative driving class: it can improve cooperation among drivers and enhance safety on the road by letting the VANET coordinate with the infrastructure in order to analyze and exchange information close to the vehicles.

17.2.5 Building Blocks to Reach Timeliness Guarantees

To ensure real-time computation in a distributed, mobile, and heterogeneous infrastructure, fog computing is considered a viable solution for vehicular applications and services [21]. Resource monitoring, scheduling, RT computation, and RT communication are the key functional blocks to meet the service delivery deadline while respecting the QoS of the system [22].

Resource monitoring creates awareness of currently available and future network and computation resources. Accordingly, it is fundamental for task scheduling. Network resource monitoring collects important network indicators such as the available channels and their bandwidth at the current or a future vehicle location. Computational resource monitoring maintains indicators concerning the processing and storage resources available over time. An integral part of the monitoring is to consider the impact of failures and the availability of the resources.

The resource monitoring function usually is distributed across multiple entities. It is hard to have one central entity that monitors all available resources in a multitenant vehicle environment.

Task scheduling targets an effective planning of the application tasks depending on the available resources and the application requirements. The requirements specify the task dependencies, the task priority, and the deadline. The resulting schedule is given as an assignment of starting times to every task and communication activity. Automatic rescheduling is also adopted to handle fog nodes entering and leaving the vicinity of the vehicle. The scheduler should guarantee the deadline given the existing resources.

Similar to resource monitoring, the scheduler functionality is usually distributed across multiple nodes.
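A schedule in the sense described above, i.e. an assignment of nodes and starting times to tasks, can be produced by a simple greedy list scheduler. The following sketch is illustrative only and does not reproduce any surveyed algorithm: it is centralized, ignores communication delays and priorities, and uses hypothetical task names and execution times.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+


def list_schedule(tasks, deps):
    """Greedy earliest-finish-time scheduling.

    tasks: task name -> {node name: execution time in ms}
    deps:  task name -> set of predecessor task names
    Returns (plan, makespan_ms) with plan[name] = (node, start_ms, finish_ms).
    """
    graph = {name: set(deps.get(name, ())) for name in tasks}
    node_free = {}  # node name -> time at which the node becomes idle
    plan = {}
    for name in TopologicalSorter(graph).static_order():
        # A task may start only after all its predecessors have finished.
        ready = max((plan[d][2] for d in graph[name]), default=0.0)
        best = None
        for node, cost in tasks[name].items():
            start = max(ready, node_free.get(node, 0.0))
            finish = start + cost
            if best is None or finish < best[2]:
                best = (node, start, finish)
        plan[name] = best
        node_free[best[0]] = best[2]
    makespan = max(finish for _, _, finish in plan.values())
    return plan, makespan


# Hypothetical obstacle detection tasks with execution times per node (ms).
example_tasks = {
    "capture": {"vehicle": 5.0},
    "detect": {"vehicle": 60.0, "fog": 20.0},
    "classify": {"vehicle": 40.0, "fog": 15.0},
    "track": {"vehicle": 30.0, "fog": 10.0},
    "decide": {"vehicle": 5.0},
}
example_deps = {
    "detect": {"capture"},
    "classify": {"detect"},
    "track": {"detect"},
    "decide": {"classify", "track"},
}
example_plan, example_makespan = list_schedule(example_tasks, example_deps)
```

Comparing the makespan with the application deadline indicates whether the schedule is feasible; rescheduling amounts to calling the function again with an updated set of nodes.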

The RT task computation requires careful resource management on the node executing a task (Section 17.3.1.2), in order to ensure the overall RT computation of the complete application across vehicles and fog nodes. On the node level, this is a well-investigated topic in the literature. On the application level, an RT scheduler is indispensable.

RT communication plays an essential role in assuring application-level RT computation in fog computing. It selects a suitable fog link for each communication task, i.e. for off-loading/migrating execution tasks for processing within acceptable networking delays, which enables RT communication from sensors/fog nodes to fog nodes in vehicular networks. Ge et al. [23] implement the 5G SDN vehicular network paradigm to reduce communication latency. Accordingly, a good management of the network resources (Section 17.3.1.1) can enhance RT communication in VFC.
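Selecting a suitable link for a communication task can be sketched as picking the link with the smallest estimated transfer time that still fits the delay budget. This is an illustrative sketch only: the link names and figures are hypothetical, and the transfer time is approximated as latency plus payload size divided by bandwidth.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Link:
    name: str
    latency_ms: float      # one-way latency of the link
    bandwidth_mbps: float  # usable bandwidth in Mbit/s


def transfer_time_ms(link, payload_kb):
    """Latency plus serialization time; 1 Mbit/s equals 1 kbit/ms."""
    payload_kbit = payload_kb * 8.0
    return link.latency_ms + payload_kbit / link.bandwidth_mbps


def select_link(links, payload_kb, budget_ms):
    """Return the fastest link that meets the budget, or None if none does."""
    best = min(links, key=lambda link: transfer_time_ms(link, payload_kb), default=None)
    if best is None or transfer_time_ms(best, payload_kb) > budget_ms:
        return None
    return best


# Hypothetical links: low latency with low bandwidth vs. higher latency with high bandwidth.
example_links = [
    Link("short_range", latency_ms=2.0, bandwidth_mbps=6.0),
    Link("cellular", latency_ms=20.0, bandwidth_mbps=50.0),
]
```

With these assumed figures, a small 5 kB task is best sent over the short-range link, whereas a 30 kB container favors the cellular link; if no link fits the budget, the task has to run locally or be rescheduled.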

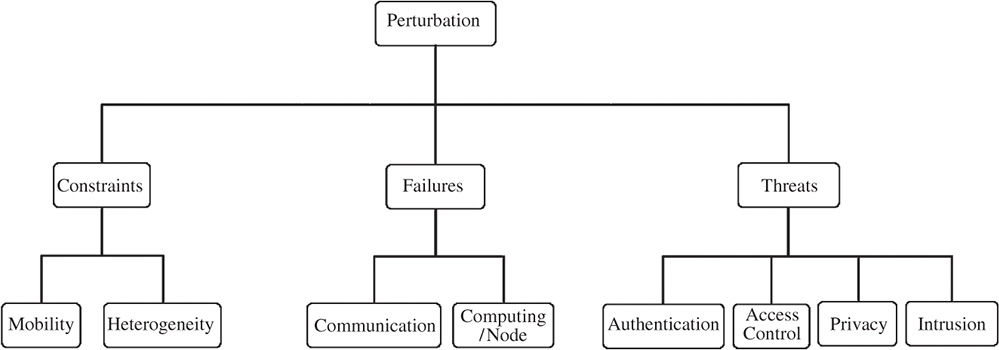

Figure 17.3 Timeliness perturbations.

A communication task usually consists of migrating a task/container such as Docker [24] from the initiator vehicle to the selected fog nodes such as vehicles and roadside units (RSU), while keeping a high fidelity of the applications.

Docker enables application virtualization through containers. These containers combine individual application parts (tasks) together with all necessary auxiliaries. Therefore, containers are often referred to as lightweight virtualization [25].

On the other hand, perturbations can break communication or computation between vehicles and/or infrastructures, which is a serious problem in scenarios that need ultralow latency in communication and computation. We elaborate more on timeliness perturbations in the subsequent section.

17.2.6 Timeliness Perturbations

After defining the applications, their latency requirements, and the building blocks that allow fulfilling these requirements, we now survey the perturbations, i.e. constraints, failures, and threats, that complicate the design of delay-critical VFC (Figure 17.3).

17.2.6.1 Constraints

- Mobility. Due to mobility, wireless network characteristics change frequently. For example, the effective available bandwidth is highly dynamic. It depends on the wireless technology (DSRC, 802.11p, WLAN, satellite, LTE-V, D2D, etc.), the access coverage, and the number of vehicles that have to share the wireless medium. Other key characteristics of the wireless links are latency and communication costs. These characteristics lead to considerably varying reliability/availability and connectivity of vehicles.

- Heterogeneity. In the vehicular fog environment, we usually observe a strong heterogeneity of nodes and links. Nodes strongly vary in computational resources. Premium-class vehicles may have sufficient resources to run complex applications/tasks on-board. Older or lower-class vehicles may have highly limited resources. Fog nodes may also vary significantly in their computational resources.

High mobility and strong heterogeneity in nodes and links obviously complicate monitoring and scheduling, and thus the fulfillment of the timeliness requirements.

17.2.6.2 Failures

- Communication failures. These constitute the majority of failures in the vehicular environment. We distinguish between two types of communication failures:

- Message loss. Messages exchanged between the vehicle and the fog are highly vulnerable to loss due to the high bit error rate of wireless links, network congestion, and collisions. Message loss is likely to occur in vehicular environments and needs to be explicitly taken into consideration.

- Network disconnection (or link disruption). Given its mobile nature, a vehicle can enter a geographical area out of coverage of any fog node so that it loses its connection to the network. The vehicle is said to be disconnected from the rest of the network. While disconnected from the network, the vehicle is not able to send or receive messages. As network disconnection is a common occurrence in mobile scenarios, it needs to be explicitly considered.

Communication failures usually lead to delays in the distributed application execution and subsequently to the violation of timeliness requirements.

- Computing/node failures. The failure of computing resources may lead to delays and subsequently to the violation of timeliness requirements. Examples of computing failures: node failures, storage overflow, processor overload, etc.
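The effect of message loss on timeliness can be made concrete with a back-of-the-envelope retransmission model. The sketch below assumes independent losses with a fixed probability per attempt and a fixed time per attempt, which is a strong simplification of real vehicular channels; all names and values are hypothetical.

```python
def delivery_probability(loss_prob, attempts):
    """Probability that at least one of `attempts` transmissions arrives."""
    return 1.0 - loss_prob ** attempts


def attempts_needed(loss_prob, target):
    """Smallest number of attempts reaching the target delivery probability."""
    if not 0.0 <= loss_prob < 1.0:
        raise ValueError("loss_prob must be in [0, 1)")
    if not 0.0 < target < 1.0:
        raise ValueError("target must be in (0, 1)")
    attempts = 1
    while delivery_probability(loss_prob, attempts) < target:
        attempts += 1
    return attempts


def worst_case_delay_ms(attempts, per_attempt_ms):
    """Delay if every attempt but the last one is lost."""
    return attempts * per_attempt_ms
```

For example, with an assumed loss probability of 0.2 per attempt, three attempts are needed to reach a delivery probability of 0.99; at 10 ms per attempt this already consumes 30 ms of the delay budget in the worst case.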

17.2.6.3 Threats

- Authentication threats. Vehicles continuously join and leave different fog nodes, which often interrupts the service continuity of the initiator. In addition, due to the limited resources of the connected vehicle, the initiator may fail to authenticate to some fog layers using traditional authentication (certificates and public-key infrastructure [PKI]).

- Access control threats. In such a scenario, access permissions between the fog and the cloud can no longer be trusted in the vehicular network. Moreover, if the access control system fails to control the capacity utilization of the resource-constrained nodes, their power or resources become exhausted.

- Privacy threats. Usually, the vehicle continuously interacts with multiple fog servers. Therefore, most of the fog servers know information about the vehicle, the driver, the position, etc., and there is a risk that this sensitive data is shared with other fog nodes. Furthermore, fog platforms face many threats; as an example, Man-In-The-Middle (MITM) attacks can easily exploit an unmanned aerial vehicle (UAV)-based integrative IoT fog platform [26] to discover sensitive data (e.g. location, fog node identity) [27].

- Intrusion threats. Intruders may harm computation and communication and therefore represent a high risk of violating the timeliness requirements of vehicular applications.

17.3 Coping with Perturbation to Meet Timeliness Guarantees

We now survey the available research efforts to cope with the perturbations and still efficiently meet the timeliness requirements despite the perturbations.

17.3.1 Coping with Constraints

17.3.1.1 Network Resource Management

In VFC, network management includes vertical federation, which depends on the physical partitioning (e.g. 5G, LTE, Wi-Fi, 802.11p) [15, 28–35], and horizontal federation, which depends on time partitioning and bandwidth (e.g. slicing, software defined networking [SDN] to manage vehicular neighbor groups [VNGs], network function virtualization [NFV]) [30, 35, 36].

Mobility of vehicles and fogs as well as the heterogeneity of network nodes and links result in continuously changing network resources. Accordingly, an efficient network resource management is indispensable for VFC. In order to enable seamless handover among different fog nodes, Bao et al. [31] develop a follow-me fog (FMF) framework to reduce the latency of the handover scheme in fog computing. In addition, Palattella et al. [37] describe the connectivity and security gaps of handover in vehicle-to-everything (V2X) communication and propose a proactive cross-layer, cross-terrestrial-technology, and cross-slice handover approach based on fog computing, including 5G, to achieve zero-latency handover in the vehicular network. For security, they aim to enable quick authentication and re-authentication during handover based on SDN and fog. A detailed overview of this research field as well as a proposal for proactive handover can be found in [38].

17.3.1.2 Computational Resource and Data Management

From the point of view of an application initiator (root task), mobility and heterogeneity lead to a permanently changing pool of available and useful computational resources. Accordingly, an efficient resource management is crucial for VFC. The selected resource management technique has a strong impact on the organization and optimization of resource allocation among fog nodes, ensuring the QoS and minimizing the execution time and cost. Scalability in fog computing enables both horizontal and vertical extensibility of fog resources [39] to regularly cover the high resource demands of vehicular networks. Resource management involves computation management and data management, as shown in Figure 17.4. In the following, based on the requirements defined in Section 17.2, we survey the existing literature that we judge useful/applicable for VFC.

- Computation management aims to provide proper resources to the application tasks and usually includes resource provisioning, resource consumption, and resource monitoring.

- Resource provisioning allows preparing resources for computation to guarantee the application requirements. Resource provisioning includes resource estimation, fog node selection, and workload allocation.

- Resource estimation allows estimating the necessary resources to allocate in the involved nodes. The estimation should consider the heterogeneity and availability of fog nodes. Aazam et al. [40] propose a flexible resource estimation mechanism based on user characteristics by estimating suitable resources to be allocated. They provide a mathematical model relying on the resource relinquish probability to save resources and minimize costs, taking into account the application quality. In [41], the authors implement a dynamic resource estimation that reduces the underutilization of resources and enhances the QoS based on the characteristics of the services reaching devices. In [42], El Kafhali et al. develop a mathematical model that analyzes fog resources, estimates the right number of fog nodes under any offered workload, and supports dynamic scaling following the incoming workload. Malandrino et al. [43] focus on estimating the server utilization and latency in relation to the extent of deployed mobile edge computing (MEC). These efforts could support the realization of the considered time-critical scenarios for VFC, as they allow for proactive decision making.

- Fog node selection. After estimating the required resources, fog node selection assures the selection of suitable fog nodes for the considered tasks. Li et al. [44] propose two schemes: (1) fog resource reservation, which selects and reserves some fog resources for the respective vehicle based on traffic flow prediction methods, and (2) fog resource reallocation, which reallocates resources depending on the application/task priority. Gedeon et al. [45] implement a brokering mechanism that helps the client select the relevant accessible surrogate to off-load computation tasks. The broker is responsible for updating the information of all surrogates.
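To give a flavor of the resource estimation step described above, the following sketch sizes a pool of fog nodes for an offered workload. It is not the model of any of the cited works: it assumes identical nodes, an even split of the workload, and an M/M/1 queue per node, and all names and default values are hypothetical.

```python
import math


def nodes_needed(arrival_rate, service_rate, max_utilization=0.8):
    """Number of identical fog nodes that keeps each node below max_utilization.

    arrival_rate: offered workload in tasks per second
    service_rate: tasks per second that a single node can process
    """
    return max(1, math.ceil(arrival_rate / (service_rate * max_utilization)))


def mean_response_time_s(arrival_rate, service_rate, nodes):
    """Mean M/M/1 response time per node when the load is split evenly."""
    per_node_rate = arrival_rate / nodes
    if per_node_rate >= service_rate:
        raise ValueError("nodes are overloaded; the queue grows without bound")
    return 1.0 / (service_rate - per_node_rate)
```

For an assumed workload of 400 tasks per second and nodes serving 100 tasks per second each, the sketch yields five nodes and a mean response time of 50 ms; re-running the estimate as the workload changes corresponds to dynamic scaling.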

Figure 17.4 Coping with perturbation in DCVF.

- Resource allocation. Resource allocation is the assignment of the needed resources to the concrete application on the selected nodes. Load balancing across the different fog nodes is crucial to assure the availability of required resources, e.g. by delaying or deactivating lower priority tasks where needed. Sutagundar et al. [46] propose a game theory approach in fog-enhanced vehicular services that performs resource allocation based on resource estimation, i.e. by predicting the required amount of resources at the time of workload allocation. Deng et al. [47] develop a workload allocation framework that balances power consumption and delay in fog–cloud computing environments. The works in [44, 48, 49] address workload allocation taking into account the QoS.
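The interplay of fog node selection and priority-aware allocation described above can be illustrated with a minimal sketch. It is not the method of any cited work: the `FogNode` and `Task` fields, the CPU unit, and the greedy "most critical first, lowest latency wins" rule are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class FogNode:
    name: str
    free_cpu: float    # available CPU units (hypothetical unit)
    latency_ms: float  # estimated latency to the requesting vehicle


@dataclass
class Task:
    name: str
    cpu: float             # estimated demand, e.g. from resource estimation
    max_latency_ms: float  # latency bound the task tolerates
    priority: int          # larger value = more critical


def select_node(task, nodes):
    """Return the lowest-latency node that can host the task, or None."""
    candidates = [n for n in nodes
                  if n.free_cpu >= task.cpu
                  and n.latency_ms <= task.max_latency_ms]
    return min(candidates, key=lambda n: n.latency_ms, default=None)


def allocate(tasks, nodes):
    """Greedy allocation: place critical tasks first, defer what does not fit."""
    placement, deferred = {}, []
    for task in sorted(tasks, key=lambda t: t.priority, reverse=True):
        node = select_node(task, nodes)
        if node is None:
            deferred.append(task.name)   # lower-priority work is delayed
        else:
            node.free_cpu -= task.cpu    # reserve capacity on the chosen node
            placement[task.name] = node.name
    return placement, deferred


# Hypothetical example: one roadside unit and one neighboring vehicle.
nodes = [FogNode("rsu-1", 4.0, 5.0), FogNode("vehicle-7", 2.0, 2.0)]
tasks = [Task("infotainment", 3.0, 50.0, 1),
         Task("collision-warning", 2.0, 10.0, 9),
         Task("map-update", 2.0, 100.0, 0)]
placement, deferred = allocate(tasks, nodes)
# placement == {"collision-warning": "vehicle-7", "infotainment": "rsu-1"}
# deferred == ["map-update"]
```

A greedy rule like this is easy to reason about but is not optimal in general; the optimization-based and game-theoretic approaches cited above target exactly that gap.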

- Resource consumption covers how to schedule a set of tasks and optimize resources, how to off-load a task from the fog node of the requester to the workload of the selected nodes, and how to scale resources by extending them across vehicular nodes, fogs, and clouds.

- Scheduling and optimization aim to control and optimize resources first and then arrange a set of jobs in the workload while respecting the QoS and the critical nature of the tasks. Park et al. [50] address delay-critical services for connected vehicles by providing an optimal scheduling algorithm based on Reinforcement Learning Data Scheduling. They formulate the problem as a Markov decision process and apply reinforcement learning to increase the number of services transmitted within their deadlines. Zhu et al. [46] design the Folo model for VFC to optimize task allocation. The task allocation process is treated as a joint optimization problem, and a dynamic task allocation approach based on Linear Programming-based Optimization and Binary Particle Swarm-based Optimization is proposed to solve it. Other related contributions in this field include [22, 48, 51].

- Task migration/offloading is a crucial technique for moving a virtual machine (VM) or container from one fog node to another. Yao et al. [52] introduce a roadside cloudlet (RSC) that enables VM migration in VCC to improve the response time and reduce the network and VM migration costs during vehicle movement. Machen et al. [53] compare the performance of containers and VMs in their proposed layered migration framework for mobile edge clouds. Additional research on service migration focuses on containers in mobile fog computing [54–56]. Farris et al. [57] aim to enhance the proactive migration of latency-aware applications in MEC by providing two Integer Linear Problem optimization schemes that guarantee the desired Quality of Experience (QoE) and decrease the cost of proactive replication. Wang et al. [58] provide a relevant survey on service migration in MEC.

- Off-loading sends tasks from the application initiator to other fog nodes in order to reduce energy consumption and service delays. This technique is typically used in MCC, e.g. on smartphones. Wu et al. [59] develop a task off-loading strategy for VFC based on their Direction-based Vehicular Network Model (DVNM) to off-load tasks among vehicles and RSUs. Zhang et al. [60] develop an efficient code partition algorithm for MCC based on depth-first search and a linear-time searching scheme that finds suitable points in a sequence of calls for off-loading and integration. This contribution could be adapted to VFC. Zhou et al. [61] provide a survey that explains data off-loading techniques for V2V, V2I, and V2X through VANET.

- Scalability. Due to the unlimited resources among cloud and fog, this technique must be able to adapt to all kinds of events, predictable or unpredictable, while maintaining the quality of service. Yan et al. [62] propose a user access mode selection mechanism to scale applications in F-RAN (fog computing radio access network), taking into account the different node locations and the QoS requirements of the service-accessing entities. Tseng et al. [63] extend the oneM2M platform from cloud to fog computing by packaging the oneM2M middle node as a Docker container, which makes the system highly scalable and resolves latency issues for some critical applications.
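The basic trade-off behind task off-loading, as discussed above, can be made concrete with a small sketch. It uses a common textbook-style delay model and does not reproduce any of the cited strategies: the parameter names are hypothetical, and the model deliberately ignores queueing delay, the time to return results, and energy consumption.

```python
from dataclasses import dataclass


@dataclass
class OffloadEstimate:
    local_s: float        # estimated completion time on the vehicle
    remote_s: float       # estimated upload time plus fog execution time
    offload: bool         # True if off-loading is estimated to be faster
    meets_deadline: bool  # True if the faster option finishes in time


def decide_offload(cycles, input_bits, local_hz, fog_hz, uplink_bps, deadline_s):
    """Compare local execution with off-loading under a simple delay model."""
    local_s = cycles / local_hz
    remote_s = input_bits / uplink_bps + cycles / fog_hz
    best = min(local_s, remote_s)
    return OffloadEstimate(local_s, remote_s,
                           offload=remote_s < local_s,
                           meets_deadline=best <= deadline_s)


# Hypothetical task: 2e9 CPU cycles, 8 Mbit of input, 1 s deadline.
estimate = decide_offload(cycles=2e9, input_bits=8e6,
                          local_hz=1e9, fog_hz=4e9,
                          uplink_bps=20e6, deadline_s=1.0)
# Local execution takes about 2.0 s, off-loading about 0.9 s,
# so estimate.offload and estimate.meets_deadline are both True.
```

In a vehicular setting the uplink rate and the reachable fog nodes change quickly, so such an estimate would have to be recomputed as connectivity changes.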

- Resource monitoring controls and monitors the events and the performance of each service and then reports the results to the services concerned. Monitoring includes resource discovery, which aims to discover the available fog resources and to provide the application initiator with the necessary information about the discovered fog nodes, such as location, availability, and usable capacity. In [45, 64, 65] the authors focus on discovering resources in fog computing. Lai et al. [66], in turn, provide an efficient scheme called two-phase event-monitoring and data-gathering (TPEG) that collects data and frequently monitors the events of the fog nodes in VANET. Based on a two-level threshold adjustment (2LTA) algorithm, it selects a suitable amount of data for decision making and avoids useless message transmissions.

- Data management is very challenging because of the limited capacity of the vehicle. It needs an optimal separation between effective and ineffective data. Effective data is sensitive, safety-related data that must be stored on the onboard computer. Ineffective data is soft data that should be moved to the cloud.

After the application has been processed correctly, the application initiator decides where to store the results/data. Most researchers address the optimization of the data for storage and then select the data that should be stored on the vehicle or in the cloud data centers [67, 68].
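The separation between effective and ineffective data described above can be sketched as a simple placement rule. This is only an illustration under stated assumptions: the `DataItem` type, the `safety_related` flag, and the choice to raise an error when safety-related data does not fit onboard are hypothetical and not taken from [67, 68].

```python
from dataclasses import dataclass


@dataclass
class DataItem:
    name: str
    size_mb: float
    safety_related: bool  # hypothetical flag set by the application


def place_data(items, onboard_capacity_mb):
    """Split items into (onboard, cloud) lists of item names.

    Safety-related ("effective") data stays on the vehicle; everything
    else ("ineffective" data) is marked for the cloud. A ValueError is
    raised if the safety-related data alone exceeds the onboard capacity.
    """
    onboard = [i for i in items if i.safety_related]
    cloud = [i for i in items if not i.safety_related]
    needed = sum(i.size_mb for i in onboard)
    if needed > onboard_capacity_mb:
        raise ValueError(
            f"safety-related data needs {needed} MB, "
            f"only {onboard_capacity_mb} MB available onboard")
    return [i.name for i in onboard], [i.name for i in cloud]


# Hypothetical example with 100 MB of onboard storage.
items = [DataItem("brake-events", 20.0, True),
         DataItem("dashcam-archive", 900.0, False),
         DataItem("lane-departure-log", 5.0, True)]
onboard, cloud = place_data(items, onboard_capacity_mb=100.0)
# onboard == ["brake-events", "lane-departure-log"]
# cloud == ["dashcam-archive"]
```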

17.3.2 Coping with Failures

Fault tolerance is a fundamental solution to cope with failures. Some related research [69–72] addresses fault tolerance in fog computing to meet timeliness guarantees. Kopetz et al. [73] implement a fault-tolerance technique in VFC for real-time applications that improves the allocation of time-triggered virtual machines (TTVMs) on different fog node systems.

In addition, fault tolerance is also highlighted in connected vehicles to overcome the constraints of the message delivery infrastructure. Du et al. [74] design a distributed message delivery system by developing a prototype infrastructure using Kafka [75]. The results show that the proposed prototype, applied to connected vehicle applications, is highly scalable and fault tolerant and can deliver a large number of messages in parallel in a short time.

17.3.3 Coping with Threats

- Coping with authentication threats. Fog node authentication, service migration authentication, etc. are indispensable for VFC. Dsouza et al. [76] implement a policy-driven security management framework in fog computing, which makes data, device, instance, and data migration authentication more efficient and enhances the protection of the system for real-time services. Applying physical contact for preauthentication in local ad-hoc wireless networks [77] and cloudlet authentication in near-field communication (NFC)-based mobile computing [78] to VFC can resolve the authentication problem and enhance security.

- Coping with access control threats. In general, access control restricts the access to some services by defining specific rules for each node. Aazam et al. [79] implement a security layer in fog nodes acting as a smart gateway in order to control the access to sensitive data that will be uploaded to the cloud. Salonikias et al. [80] propose a preliminary access control approach based on attribute-based access control (ABAC), i.e. on attributes and their associated security policy, to resolve the problem of access control in intelligent transportation system architectures that use fog computing for sensitive applications.

- Coping with privacy threats. Data generated from the vehicles may be very sensitive and require the protection of user privacy. It is necessary to ensure the confidentiality of vehicular fog in a distributed environment, but the subject still is a challenge for future research [9].

- Data privacy. In order to ensure data privacy, the authors of [81] provide privacy-preserving vehicular road surface condition monitoring using fog computing based on certificateless aggregate signcryption. Lu et al. [82] design an efficient privacy-preserving aggregation scheme based on homomorphic encryption to improve security and privacy preservation in smart grid communications.

- Location privacy. Location privacy issues on the vehicular fog client deserve proper investigation, taking into account the high mobility of vehicles acting as fog nodes.

- Usage privacy. The utilization of fog node services requires a usage policy for the customer. For example, [83, 84] propose privacy-preserving mechanisms for smart metering in the smart grid domain. However, usage privacy has not yet been tackled efficiently in VFC.

- Network security. The authors of [85–87] address SDN-based network security in terms of network monitoring and intrusion detection, network resource access control, and network sharing.

- Coping with intrusion threats. The principal role of intrusion detection is to control and monitor all fog nodes to protect them against any kind of attack, such as denial of service (DoS) attacks, insider attacks, and attacks on the VM and hypervisor [88]. For example, in VANET, Malla et al. [89] provide an efficient solution based on several lines of defense to counter DoS attacks. However, mitigating attacks in vehicular networks using fog computing requires further investigation.
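The attribute-based access control idea mentioned under access control threats can be illustrated with a minimal, default-deny policy check. The sketch below is not the scheme of [80]: the policy layout, the attribute names (`role`, `type`), and the example actions are assumptions chosen for illustration.

```python
def is_permitted(policy, subject, resource, action):
    """Return True if some rule matches all of its attribute conditions.

    Each rule lists required subject attributes, required resource
    attributes, and the set of actions it permits. Anything that no
    rule matches is denied (default deny).
    """
    for rule in policy:
        if (action in rule["actions"]
                and all(subject.get(k) == v
                        for k, v in rule["subject"].items())
                and all(resource.get(k) == v
                        for k, v in rule["resource"].items())):
            return True
    return False


# Hypothetical policy for a fog node that fronts a traffic signal.
policy = [
    {"subject": {"role": "emergency_vehicle"},
     "resource": {"type": "traffic_signal"},
     "actions": {"read", "preempt"}},
    {"subject": {"role": "passenger_vehicle"},
     "resource": {"type": "traffic_signal"},
     "actions": {"read"}},
]

ambulance = {"role": "emergency_vehicle", "id": "amb-12"}
car = {"role": "passenger_vehicle", "id": "car-77"}
signal = {"type": "traffic_signal", "zone": "A"}

is_permitted(policy, ambulance, signal, "preempt")  # True
is_permitted(policy, car, signal, "preempt")        # False
```

Because decisions depend on attributes rather than on fixed identities, rules of this kind do not need to be rewritten when vehicles join or leave the fog, which is one reason the approach is attractive for highly mobile environments.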

17.4 Research Gaps and Future Research Directions

Our literature survey has shown that the following aspects are insufficiently addressed in the literature.

17.4.1 Mobile Fog Computing

Fog mobility is considered among the most crucial challenges in fog computing. Existing contributions usually consider static fog nodes and mobile or fixed user devices. Supporting mobility of fog nodes largely remains an open challenge due to the complication of resource and network management. In particular, in vehicular fog environments, the mobility is very high and even further hardens the application and system design. Accordingly, designing an efficient paradigm that can provide a wide range of scenarios and ensure mobility-aware management and coordination among mobile fog nodes is urgently needed.

- Resource management. Due to the mobility of fog nodes, the provisioning, virtualization, selection, and scheduling of resources need to be revised and optimized to cope with the continuously changing network topology and the fluctuating resource availability.

- Resource provisioning. Discovering and selecting the relevant fog node in a high-mobility environment is challenging, and predictive resource discovery in mobile fog may be a suitable solution. In addition, migrating VMs/containers to other nodes and estimating the execution time to meet the deadline must take the heterogeneity of the computing systems into account. Continuously changing workloads on fogs and nonstatic fog servers make this even more challenging.

- Resource consumption. Given its heterogeneous, dynamic, and distributed nature, mobile fog computing requires deep attention to scalability issues in the fog layer. In addition, efficient scheduling is needed to limit the difficulty of task off-loading. Task classification and optimization can help resolve these issues. Like resource provisioning, resource consumption requires careful investigation.

- Virtualization. Inspired by the cloud data center approach, virtualization of fog computing is a big challenge that targets transforming a fog node into a small-scale data center at the network edge. Addressing this challenge can provide a suitable solution for VM/container migration or task off-loading. The benefits are an improved QoS, reduced costs, and real-time communication and computation functions.

- Decision. Taking a decision with respect to QoS and service delivery requirement is another research gap to be addressed.

- Resource monitoring. The literature does not meet the needs for more proactiveness in monitoring computational and network resources in VFC. Accordingly, there is an urgent need for techniques that accurately estimate and forecast resource availability and needs in highly dynamic environments.

- Network management. Mobile fog nodes frequently join and leave fog networks, which further complicates the network resource management. In particular, ensuring a seamless zero-delay handover is challenging. Discovering network resources in mobile fog networks is also an interesting and challenging research direction.
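As a point of reference for the proactive resource monitoring called for above, the following sketch forecasts a fog node's free capacity with simple exponential smoothing. This is a generic baseline chosen for illustration, not a technique proposed in the surveyed literature; the function name, the smoothing factor `alpha`, and the sample values are assumptions.

```python
def forecast_free_capacity(samples, alpha=0.5):
    """One-step-ahead forecast using simple exponential smoothing.

    samples: past observations of free capacity, oldest first.
    alpha:   weight of the newest observation, with 0 < alpha <= 1.
    """
    if not samples:
        raise ValueError("at least one sample is required")
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    level = samples[0]
    for observed in samples[1:]:
        # Blend the newest observation with the running estimate.
        level = alpha * observed + (1 - alpha) * level
    return level


# Hypothetical history of free CPU units on a fog node that is filling up.
history = [8.0, 4.0, 2.0]
predicted = forecast_free_capacity(history, alpha=0.5)  # 4.0
task_demand = 5.0
should_look_elsewhere = predicted < task_demand          # True
```

A baseline like this reacts slowly to abrupt changes such as a fog node leaving the network, which is precisely where more accurate forecasting for highly dynamic environments is needed.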

17.4.2 Fog Service Level Agreement (SLA)

Service level agreements (SLAs) are widely investigated for cloud computing. However, research efforts are still needed to adapt them to mobile fog computing with strict timeliness and bandwidth guarantees. This challenge should be addressed by defining and designing metrics and SLA enforcement techniques that are suitable for mobile fog computing. In addition to SLA, security LA (SecLA) represents a real challenge for VFC, due to the high mobility and geographical distribution of vehicles, which require privacy preservation, critical-data protection, and fast authentication.

- Authentication. Due to the resource constraints in the connected vehicle, authentication with the traditional method using certificates and PKI to the fog layers is insufficient. In addition, ensuring service continuity despite mobile fog churns (node joins and leaves) is an open challenge.

- Privacy preserving. Preserving privacy in fog computing is still challenging because a fog node shares its resources with other nodes, which risks exposing sensitive data.

- Access control. Designing an efficient optimistic access control scheme along with a user permission policy that covers vehicle, fog, and cloud is another potential direction.

- Intrusion detection. Intrusion detection should be carefully designed and implemented across vehicles, fogs, and clouds while ensuring efficient coordination between different levels. These challenges should be addressed taking into account the high mobility of vehicles and the distribution of the fog nodes.

17.5 Conclusion

In this chapter, we illustrate the different application scenario classes and their timeliness requirements. Next, we present the different perturbations that complicate the design of delay-critical VFC. Then, we survey literature on network management, resource management, security, and fault tolerance to cope with perturbation and to guarantee the timeliness requirements. For further investigation in delay-critical VFC, we point out key research gaps and challenges that require deep research attention.

References

- 1 Whaiduzzaman, M., Sookhak, M., Gani, A., and Buyya, R. (2014). A survey on vehicular cloud computing. Journal of Network and Computer Applications 40: 325–344.

- 2 Skala, K., Davidovic, D., Afgan, E. et al. (2015). Scalable distributed computing hierarchy: cloud, fog and dew computing. Open Journal of Cloud Computing (OJCC) 2 (1): 16–24.

- 3 Dinh, H.T., Lee, C., Niyato, D., and Wang, P. (2013). A survey of mobile cloud computing: architecture, applications, and approaches. Wireless Communications and Mobile Computing.

- 4 Naha, R.K., Garg, S., Georgakopoulos, D. et al. (2018). Fog computing: survey of trends, architectures, requirements, and research directions. IEEE Access 6: 47980–48009.

- 5 OpenFogConsortium, Openfog reference architecture for fog computing 2017, [Online]. Available: https://www.openfogconsortium.org/ra, 2017.

- 6 Bonomi, F., Milito, R., Zhu, J., and Addepalli, S. (2012). Fog computing and its role in the internet of things. In: Proceedings of the First Edition of the MCC Workshop on Mobile Cloud Computing, 13–16. ACM.

- 7 A. Khelil and D. Soldani, On the suitability of device-to-device communications for road traffic safety. 2014 IEEE World Forum on Internet of Things (WF-IoT), 2014.

- 8 Shi, W., Cao, J., Zhang, Q. et al. (2016). Edge computing: vision and challenges. IEEE Internet of Things Journal 3 (5): 637–646.

- 9 Hou, X., Li, Y., Chen, M. et al. (2016). Vehicular fog computing: a viewpoint of vehicles as the infrastructures. IEEE Transactions on Vehicular Technology 65 (6): 3860–3873.

- 10 Huang, C., Lu, R., and Choo, K. (2017). Vehicular fog computing: architecture use case and security and forensic challenges. IEEE Communications Magazine 55 (11): 105–111.

- 11 Al-Sultan, S., Al-Doori, M.M., Al-Bayatti, A.H., and Zedan, H. (2014). A comprehensive survey on vehicular ad hoc network. Journal of Network and Computer Applications 37: 380–392.

- 12 Hu, F. (2018). Vehicle-to-Vehicle and Vehicle-to-Infrastructure Communications. Boca Raton: CRC Press.

- 13 Mukherjee, M., Shu, L., and Wang, D. (2018). Survey of fog computing: fundamental network applications and research challenges. IEEE Communications Surveys and Tutorials.

- 14 Mouradian, C., Naboulsi, D., Yangui, S. et al. (2017). A comprehensive survey on fog computing: state-of-the-art and research challenges. IEEE Communications Surveys and Tutorials.

- 15 A. E. Fernandez, A. Servel, J. Tiphene et al., 5GCAR scenarios, use cases, requirements and KPIs, Fifth Generation Communication Automotive Research and Innovation, https://5gcar.eu/wp-content/uploads/2017/05/5GCAR_D2.1_v1.0.pdf, 2017.

- 16 Roig, C., Ripoll, A., and Guirado, F. (2007). A new task graph model for mapping message passing applications. IEEE Transactions on Parallel and Distributed Systems.

- 17 Gezer, V., Um, J., and Ruskowski, M. (2018). An introduction to edge computing and a real-time capable server architecture. International Journal on Advances in Intelligent Systems 11 (1 and 2): 105–114.

- 18 G. Lipari and L. Palopoli, Real-time scheduling: from hard to soft real-time systems. https://arxiv.org/abs/1512.01978, 2015.

- 19 Bernat, G., Burns, A., and Llamosi, A. (2001). Weakly hard real-time systems. IEEE Transactions on Computers 50 (4): 308–321.

- 20 T. Kaldewey, C. Lin, and S. Brandt, Firm real-time processing in an integrated real-time system, University of York, Department of Computer Science – Report, Vol. 398, p. 5, 2006.

- 21 Grover, J., Jain, A., Singhal, S., and Yadav, A. (2018). Real-time VANET applications using fog computing. In: Proceedings of First International Conference on Smart System, Innovations and Computing, 683–691. New York: Springer.

- 22 Mahmud, R., Ramamohanarao, K., and Buyya, R. (2017). Latency-aware application module management for fog computing environments. ACM Transactions on Internet Technology (TOIT).

- 23 Ge, X., Li, Z., and Li, S. (2017). 5G software defined vehicular networks. IEEE Communications Magazine 55 (7): 87–93.

- 24 Docker, www.docker.com.

- 25 Merkel, D. (2014). Docker: lightweight Linux containers for consistent development and deployment. Linux Journal 239.

- 26 Motlagh, N.H., Bagaa, M., and Taleb, T. (2017). UAV-based IoT platform: a crowd surveillance use case. IEEE Communications Magazine 55 (2): 128–134.

- 27 Mukherjee, M., Matam, R., Shu, L. et al. (2017). Security and privacy in fog computing: challenges. IEEE Access.

- 28 Virdis, A., Vallati, C., Nardini, G. et al. (2018). D2D communications for large-scale fog platforms: enabling direct M2M interactions. IEEE Vehicular Technology Magazine.

- 29 Froiz-Míguez, I., Fernández-Caramés, T.M., Fraga-Lamas, P., and Castedo, L. (2018). Design, implementation and practical evaluation of an IoT home automation system for fog computing applications based on MQTT and ZigBee-WiFi sensor nodes. Sensors 18: 2660.

- 30 Vinel, A., Breu, J., Luan, T.H., and Hu, H. (2017). Emerging technology for 5G-enabled vehicular networks. IEEE Wireless Communications 24 (6): 12.

- 31 Bao, W., Yuan, D., Yang, Z. et al. (2017). Follow me fog: toward seamless handover timing schemes in a fog computing environment. IEEE Communications Magazine 55 (11): 72–78.

- 32 Xiang, C., Rongqing, Z., and Liuqing, Y. (2019). Introduction to 5G-Enabled VCN. New York: Springer.

- 33 A. Soua and S. Tohme, Multi-level SDN with vehicles as fog computing infrastructures: a new integrated architecture for 5G-VANETs, 21st Conference on Innovation in Clouds, Internet and Networks and Workshops (ICIN), 2018.

- 34 A. A. Khan, M. Abolhasan, and W. Ni, 5G next generation VANETs using SDN and fog computing framework, 15th IEEE Annual Consumer Communications Networking Conference (CCNC), 2018.

- 35 Huang, X., Yu, R., Kang, J. et al. (2017). Exploring mobile edge computing for 5G-enabled software defined vehicular networks. IEEE Wireless Communications 24 (6): 55–63.

- 36 Truong, N.B., Lee, G.M., and Ghamri-Doudane, Y. (2015). Software defined networking-based vehicular Adhoc network with fog computing. In: 2015 IFIP/IEEE International Symposium on Integrated Network Management (IM), 1202–1207. IEEE.

- 37 M. R. Palattella, R. Soua, A. Khelil, and T. Engel, Fog computing as the key for seamless connectivity handover in future vehicular networks, in The Proceedings of the 34th ACM/SIGAPP Symposium on Applied Computing (SAC), 2019.

- 38 A. Khelil, M. R. Palattella, R. Soua, and T. Engel, Fog computing as the key for seamless connectivity handover in future vehicular networks, in The Proceedings of the 34th ACM/SIGAPP Symposium On Applied Computing (SAC), 2019.

- 39 Baccarelli, E., Naranjo, P.G.V., Scarpiniti, M. et al. (2017). Fog of everything: energy-efficient networked computing architectures, research challenges, and a case study. IEEE Access 5: 9882–9910.

- 40 M. Aazam and E. Huh, Dynamic resource provisioning through fog micro datacenter, IEEE International Conference on Pervasive Computing and Communication Workshops (PerCom Workshops), 2015.

- 41 M. Aazam, M. St-Hilaire, C. Lung, and I. Lambadaris, MeFoRE: QoE based resource estimation at fog to enhance QoS in IoT, 23rd International Conference on Telecommunications (ICT), 2016.

- 42 El Kafhali, S. and Salah, K. (2017). Efficient and dynamic scaling of fog nodes for IoT devices. Journal of Supercomputing 73 (12): 5261–5284.

- 43 Malandrino, F., Kirkpatrick, S., and Chiasserini, C.-F. (2016). How close to the edge? Delay/utilization trends in MEC. In: Proceedings of the 2016 ACM Workshop on Cloud-Assisted Networking, 37–42. ACM.

- 44 J. Li, C. Natalino, D. P. Van, L. Wosinska, and J. Chen, Resource management in fog-enhanced radio access network to support real-time vehicular services, IEEE 1st International Conference on Fog and Edge Computing (ICFEC), 2017.

- 45 J. Gedeon, C. Meurisch, D. Bhat et al., Router-based brokering for surrogate discovery in edge computing, IEEE 37th International Conference on Distributed Computing Systems Workshops (ICDCSW), 2017.

- 46 Zhu, C., Tao, J., Pastor, G. et al. (2018). Folo: latency and quality optimized task allocation in vehicular fog computing. IEEE Internet of Things Journal.

- 47 Deng, R., Lu, R., Lai, C. et al. (2016). Optimal workload allocation in fog-cloud computing towards balanced delay and power consumption. IEEE Internet of Things Journal 3: 1171–1181.

- 48 Zeng, D., Gu, L., Guo, S. et al. (2016). Joint optimization of task scheduling and image placement in fog computing supported software-defined embedded system. IEEE Transactions on Computers 65: 3702–3712.

- 49 Y. Chen, J. P. Walters, and S. P. Crago, Load balancing for minimizing deadline misses and total runtime for connected car systems in fog computing, IEEE International Symposium on Parallel and Distributed Processing with Applications and 2017 IEEE International Conference on Ubiquitous Computing and Communications (ISPA/IUCC), 2017.

- 50 Park, S. and Yoo, Y. (2018). Real-time scheduling using reinforcement learning technique for the connected vehicles. In: IEEE 87th Vehicular Technology Conference (VTC Spring), 1–5. IEEE.

- 51 Lin, F., Zhou, Y., Pau, G., and Collotta, M. (2018). Optimization-oriented resource allocation management for vehicular fog computing. IEEE Access 6: 69294–69303.

- 52 Yao, H., Bai, C., Zeng, D. et al. (2015). Migrate or not? Exploring virtual machine migration in roadside cloudlet-based vehicular cloud. Concurrency and Computation: Practice and Experience 27 (18): 5780–5792.

- 53 Machen, A., Wang, S., Leung, K.K. et al. (2016). Migrating running applications across mobile edge clouds: poster. In: Proceedings of the 22nd Annual International Conference on Mobile Computing and Networking (MobiCom), 435–436. ACM.

- 54 Montero, D. and Serral-Gracia, R. (2016). Offloading personal security applications to the network edge: a mobile user case scenario. In: Proceedings of the International Wireless Communications and Mobile Computing Conference (IWCMC), 96–101. IEEE.

- 55 Saurez, E., Hong, K., Lillethun, D. et al. (2016). Incremental deployment and migration of geodistributed situation awareness applications in the fog. In: Proceedings of the 10th ACM International Conference on Distributed and Event-Based Systems (DEBS), 258–269. ACM.

- 56 Wang, S., Urgaonkar, R., He, T. et al. (2017). Dynamic service placement for mobile micro-clouds with predicted future costs. IEEE Transactions on Parallel and Distributed Systems 28 (4): 1002–1016.

- 57 Farris, I., Taleb, T., Bagaa, M., and Flick, H. (2017). Optimizing service replication for mobile delay-sensitive applications in 5G edge network. IEEE International Conference on Communications (ICC): 1–6.

- 58 Wang, S., Xu, J., Zhang, N., and Liu, Y. (2018). A survey on service migration in mobile edge computing. IEEE Access 6: 23511–23528.

- 59 Y. Wu, J. Wu, G. Zhou, and L. Chen, A direction-based vehicular network model in vehicular fog computing, IEEE SmartWorld, Ubiquitous Intelligence Computing, Advanced Trusted Computing, Scalable Computing Communications, Cloud Big Data Computing, Internet of People and Smart City Innovation (SmartWorld/SCALCOM/UIC/ATC/CBDCom/IOP/SCI), 2018.

- 60 Zhang, Y., Liu, H., Jiao, L., and Fu, X. (2012). To offload or not to offload: an efficient code partition algorithm for mobile cloud computing. In: IEEE 1st International Conference on Cloud Networking (CLOUDNET), 80–86. IEEE.

- 61 Zhou, H., Wang, H., Chen, X. et al. (2018). Data offloading techniques through vehicular ad hoc networks: a survey. IEEE Access 6: 65250–65259.

- 62 Yan, S., Peng, M., and Wang, W. (2016). User access mode selection in fog computing based radio access networks. IEEE International Conference on Communications (ICC): 1–6.

- 63 Tseng, C. and Lin, F.J. (2018). Extending scalability of IoT/M2M platforms with fog computing. In: IEEE 4th World Forum on Internet of Things (WF-IoT), 825–830. IEEE.

- 64 Cho, J., Sundaresan, K., Mahindra, R. et al. (2016). Acacia: context-aware edge computing for continuous interactive applications over mobile networks. In: Proceedings of the 12th International on Conference on Emerging Networking Experiments and Technologies, 375–389. ACM.

- 65 Tanganelli, G., Vallati, C., and Mingozzi, E. (2018). Edge-centric distributed discovery and access in the Internet of Things. IEEE Internet of Things Journal 5 (1): 425–438.

- 66 Lai, Y., Yang, F., Su, J. et al. (2017). Fog-based two-phase event monitoring and data gathering in vehicular sensor networks. Sensors, http://www.mdpi.com/1424-8220/18/1/82.

- 67 Shi, H., Chen, N., and Deters, R. (2015). Combining mobile & fog computing using CoAP to link mobile device clouds with fog computing. In: IEEE International Conference on Data Science and Data Intensive Systems, 564–571. IEEE.

- 68 Hassan, M.A., Xiao, M., Wei, Q., and Chen, S. (2015). Help your mobile applications with fog computing. In: 12th Annual IEEE International Conference on Sensing, Communication, and Networking – Workshops (SECON Workshops), 1–6. IEEE.

- 69 Wang, K., Shao, Y., Xie, L. et al. (2018). Adaptive and fault-tolerant data processing in healthcare IoT based on fog computing. IEEE Transactions on Network Science and Engineering.

- 70 Zhang, J., Zhou, A., Sun, Q. et al. (2018). Overview on fault tolerance strategies of composite service in service computing. Wireless Communications and Mobile Computing 2018, Article ID 9787503, 8 pp.

- 71 J. P. Araujo Neto, D. M. Pianto, and C. G. Ralha, An agent-based fog computing architecture for resilience on amazon EC2 spot instances, 7th Brazilian Conference on Intelligent Systems (BRACIS), 2018.

- 72 Xu, J.W., Ota, K., Dong, M.X. et al. (2018). SIoTFog: byzantine-resilient IoT fog networking. Frontiers of Information Technology & Electronic Engineering.

- 73 Kopetz, H. and Poledna, S. (2016). In-vehicle real-time fog computing. In: Proceedings of the 2016 46th Annual IEEE/IFIP International Conference Dependable Systems and Networks Workshop (DSN-W), 162–167. IEEE.

- 74 Du, Y., Chowdhury, M., Rahman, M., and Dey, K. (2017). A distributed message delivery infrastructure for connected vehicle technology applications. IEEE Transactions on Intelligent Transportation Systems.

- 75 Kreps, J., Narkhede, N., and Rao, J. (2011). Kafka: a distributed messaging system for log processing. In: Proc. NetDB, 1–7. ACM.

- 76 Dsouza, C., Ahn, G.J., and Taguinod, M. (2014). Policy-driven security management for fog computing: preliminary framework and a case study. In: Proceedings of the 2014 IEEE 15th International Conference on Information Reuse and Integration, 16–23. IEEE.

- 77 Balfanz, D., Smetters, D., Stewart, P., and Wong, H.C. (2002). Talking to strangers: authentication in ad-hoc wireless networks. In: Proceedings of the Symposium on Network and Distributed System Security, 23–35.

- 78 Bouzefrane, S., Mostefa, A.F.B., Houacine, F., and Cagnon, H. (2014). Cloudlets authentication in NFC-based mobile computing. In: Proceedings of the 2nd IEEE International Conference on Mobile Cloud Computing, Services, and Engineering (MobileCloud), 267–272. Oxford, UK. IEEE.

- 79 Aazam, M. and Huh, E.N. (2014). Fog computing and smart gateway based communication for cloud of things. In: Proceedings of the International Conference on Future Internet of Things and Cloud (FiCloud), 464–470. IEEE.

- 80 Salonikias, S., Mavridis, I., and Gritzalis, D. (2016). Access control issues in utilizing fog computing for transport infrastructure. In: Proceedings of CRITIS, 15–26. Springer.

- 81 Basudan, S., Lin, X., and Sankaranarayanan, K. (2017). A privacy-preserving vehicular crowdsensing-based road surface condition monitoring system using fog computing. IEEE Internet of Things Journal 4 (3): 772–782.

- 82 Lu, R., Liang, X., Li, X. et al. (2012). EPPA: an efficient and privacy-preserving aggregation scheme for secure smart grid communications. IEEE Transactions on Parallel and Distributed Systems 23 (9): 1621–1631.

- 83 A. Rial and G. Danezis, Privacy-preserving smart metering, in Proceedings of the ACM WPES, 2011.

- 84 McLaughlin, S., McDaniel, P., and Aiello, W. (2011). Protecting consumer privacy from electric load monitoring. In: Proceedings of the 18th ACM Conference on Computer and Communications Security, 87–98. ACM.

- 85 S. Shin and G. Gu, CloudWatcher: Network security monitoring using OpenFlow in dynamic cloud networks (or: How to provide security monitoring as a service in clouds), in Proceedings of the 20th IEEE International Conference on Network Protocols, 2012.

- 86 Klaedtke, F., Karame, G.O., Bifulco, R., and Cui, H. (2014). Access control for SDN controllers. In: Proceedings of the Third Workshop on Hot Topics Software Defined Network, 219–220. ACM.

- 87 K.-K. Yap, Y. Yiakoumis, M. Kobayashi et al., Separating authentication, access and accounting: a case study with OpenWiFi, OpenFlow Technical Report 2011-1, 2011.

- 88 Modi, C., Patel, D., Borisaniya, B. et al. (2013). A survey of intrusion detection techniques in cloud. Journal of Network and Computer Applications 36 (1): 42–57.

- 89 Malla, A.M. and Sahu, R.K. (2013). Security attacks with an effective solution for DoS attacks in VANET. International Journal of Computers and Applications 66 (22): 45.