18

A Reliable and Efficient Fog-Based Architecture for Autonomous Vehicular Networks

Shuja Mughal1, Kamran Sattar Awaisi1, Assad Abbas1, Inayat ur Rehman1, Muhammad Usman Shahid Khan2, and Mazhar Ali2

1Department of Computer Science, COMSATS University Islamabad, Islamabad Campus, Pakistan

2Department of Computer Science, COMSATS University Islamabad, Abbottabad Campus, Pakistan

18.1 Introduction

In recent years, technological developments have revolutionized the automobile industry. Cars being manufactured today include features like auto-drive, cruise control, and parking assistance systems. These features allow the car to steer itself on the road or park itself. Major companies today have made a significant leap by creating autonomous vehicles (AVs) [1, 2]. These AVs are also called automated or self-driving vehicles. The AVs have the capability to navigate roadways and environmental contexts by themselves without human input. The AVs have the potential to change the transportation system by preventing deadly crashes, providing help to the elderly and disabled, and saving fuel as described in [2–5].

Cloud computing is a new technology in information technology that moves computing and data away from user devices into large data centers. It delivers software, storage services, data access, and computation from a fixed physical location [6]. The term "cloud" comes from telecommunications systems, where users access virtual private networks (VPNs) for data communications. With the Internet now used around the world in almost every field, cloud computing services are also being provided through various methods. The aim of cloud computing is to make efficient and effective use of distributed resources to achieve higher output and to solve complex computation problems. However, limitations of cloud computing have become evident for latency-sensitive applications that require nodes to meet their delay requirements [6–10].

Cloud computing is a centralized computing model where most of the computations are performed in the cloud [6]. Although data-processing speed has increased with time, network bandwidth has not kept pace, which may result in higher latency. Some applications require low latency and mobility support. Examples include AVs, traffic light systems, emergency response systems, smart health care, and several other latency-sensitive applications. The delay caused by transferring data is not acceptable, as it could be life-threatening. Because the safety of human life is the top priority, no risks can be tolerated in this matter [11–16].

It is also worth mentioning that the aforementioned challenges are caused by the sudden growth of the Internet of Things (IoT) and are hard to address in the cloud model. Therefore, a new platform called fog computing has exhibited tremendous potential to overcome the challenges of low network bandwidth and latency [17]. A key benefit of fog computing is that it allows computations to be performed near the network edge, which resolves the latency issues, particularly for delay-sensitive applications such as the AVs. In a fog environment, some application decisions can be made locally without being transmitted to the cloud, which greatly decreases the latency within the applications [14, 18–29].

This chapter proposes a fog-based architecture to increase the reliability of Autonomous Vehicular Networks (AVNs). To evaluate the effectiveness of the proposed architecture, we created a scenario for both the fog-based architecture and cloud-based architecture on iFogSim. We compared the latency, bandwidth, and scalability of both the fog-based and cloud-based architectures. Experimental results demonstrate that the proposed fog-based architecture not only minimizes the latency but also consumes less bandwidth as compared to the cloud environment.

To address the shortcomings in the existing literature, we take into consideration the following research questions:

- RQ 1: Does the proposed fog-based architecture minimize the latency as compared to cloud-based architecture?

- RQ 2: Does the proposed architecture minimize bandwidth consumption as compared to cloud-based architecture?

- RQ 3: Does the proposed architecture maximize the scalability as compared to cloud-based architecture?

To answer these research questions, we implemented the proposed fog-based architecture and compared its execution with that of the cloud-based architecture. We used iFogSim to implement both architectures and ran multiple simulations of the same scenario on each. The simulation results showed that the proposed fog-based architecture not only minimizes the latency but also consumes less bandwidth and is more scalable than the cloud-based network.

Several research works have been carried out in the context of fog computing and the AVNs. Hajibaba et al. [6] reviewed modern distributed computing paradigms, such as cloud computing, jungle computing, and fog computing. Madsen et al. [23] worked on the reliability aspects of fog computing in the utility computing era. Truong et al. [25] studied software-defined networking-based vehicular ad hoc networks (VANETs), combining software-defined networks with fog computing. Hou et al. [28] offered a viewpoint of vehicles as the infrastructure by using vehicular fog computing and described different infrastructures for it. Moreover, various techniques, applications, and issues pertaining to fog computing in AVNs have been described in [14, 18, 21–28, 30].

The rest of the chapter is organized as follows: Section 18.2 presents the proposed methodology, whereas Section 18.3 presents the hypothesis formulation. Section 18.4 presents the simulation design and experimental results, and Section 18.5 concludes the chapter.

18.2 Proposed Methodology

This chapter proposes a fog-based architecture to increase the reliability of AVNs.

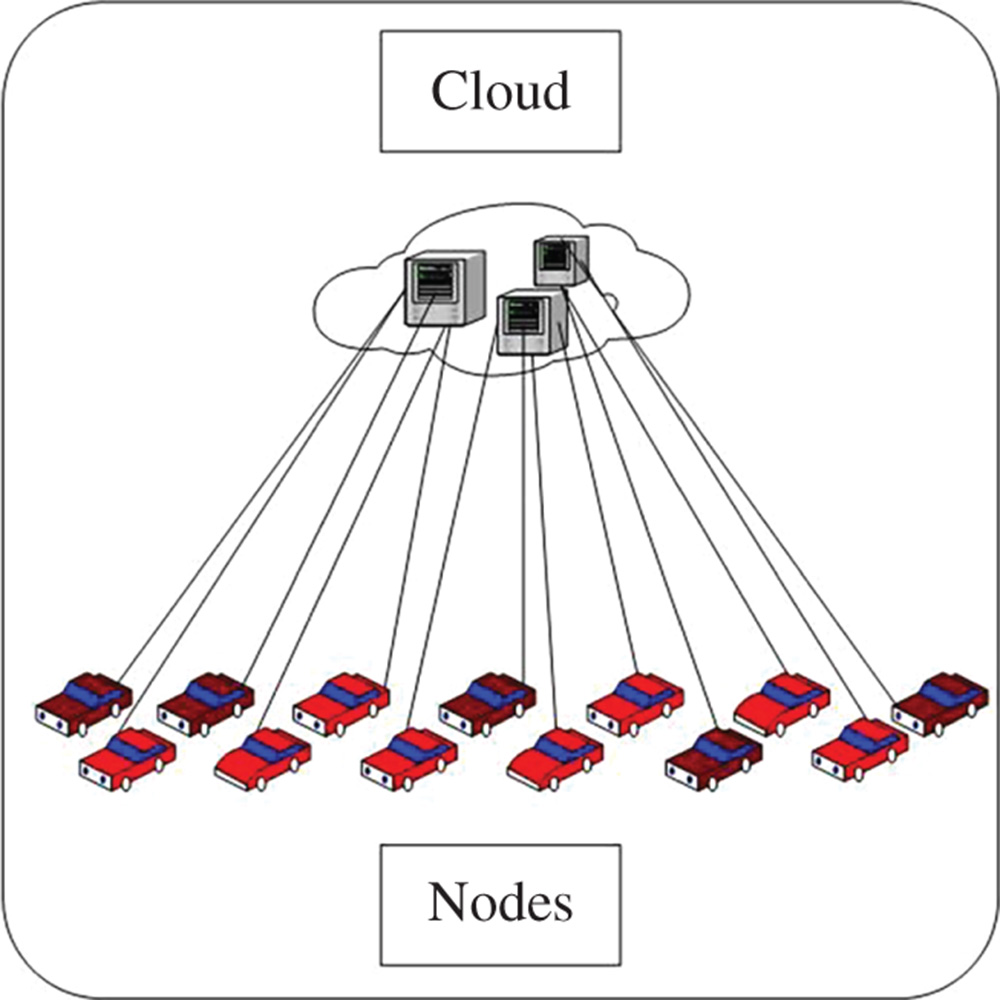

Figure 18.1 shows the cloud-based architecture for communication in the AVN. All the nodes, which are AVs in our case, communicate directly with the cloud. Although some application decisions could be made locally, in this architecture every decision is made at the cloud level, which significantly increases the latency within the AVN.

Figure 18.1 Cloud-based architecture for the communication between autonomous vehicles.

Figure 18.2 Proposed fog-based architecture for the communication among autonomous vehicles.

Figure 18.2 shows the proposed fog-based architecture for communication in the AVN. Here, all the nodes, which are AVs in this case, communicate with the fog instead of directly with the cloud. When AVs communicate with each other, certain decisions need to be made instantly; therefore, such processing is performed at the fog nodes in the proposed architecture. If the data cannot be handled by the fog node, it is transmitted to the cloud. This greatly decreases the latency within the AVN. It is important to mention that the fog environment is not a replacement for the cloud. Instead, the fog computing environment extends the capability of the cloud by pushing the processing close to the network's edges.
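The routing rule described above (process at the fog node when possible, otherwise forward to the cloud) can be illustrated with a minimal Python sketch. The class names, the `cpu_demand` and `capacity` fields, and the numeric values are hypothetical and chosen only for illustration; they are not taken from the chapter or from iFogSim.

```python
from dataclasses import dataclass


@dataclass
class Request:
    vehicle_id: str
    cpu_demand: float  # hypothetical processing demand of one request


@dataclass
class FogNode:
    capacity: float    # hypothetical processing capacity of the fog node
    load: float = 0.0  # demand currently accepted by the node

    def can_handle(self, req: Request) -> bool:
        # The node accepts a request only if it still fits in its capacity.
        return self.load + req.cpu_demand <= self.capacity


def route(req: Request, fog: FogNode) -> str:
    """Return 'fog' if the fog node can process the request, else 'cloud'."""
    if fog.can_handle(req):
        fog.load += req.cpu_demand
        return "fog"
    return "cloud"


fog = FogNode(capacity=100.0)
decisions = [route(Request(f"av-{i}", 40.0), fog) for i in range(3)]
print(decisions)  # ['fog', 'fog', 'cloud']
```

The third request exceeds the remaining capacity and is therefore forwarded to the cloud, which mirrors the fallback behavior of the proposed architecture.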

Figure 18.3 shows the realistic and practical view of the proposed fog-based architecture for communication in the AVN. In the figure, we can see that all the nodes that are AVs in our case are communicating with the towers that are the fog nodes. The fog nodes subsequently communicate with the data center, i.e. the cloud.

We implemented the proposed fog-based architecture and compared its execution with that of the cloud-based architecture. To test our proposed architecture, we used iFogSim to implement both the fog-based and the cloud-based architectures. We ran multiple simulations of the scenarios created on both architectures. The simulations revealed that the proposed architecture not only minimized the latency of the network, but also consumed less bandwidth than the cloud-based network. The results also showed that the fog-based architecture enhances the reliability of the AVN, as the AVs can communicate with each other in significantly less time than in the cloud environment. Communication delays among AVs cannot be tolerated, as such delays may result in loss of human life.

Figure 18.3 Realistic and practical view of the proposed fog-based architecture for the communication among autonomous vehicles.

18.3 Hypothesis Formulation

We formulated the following hypotheses:

- H1: μ(Latency of fog-based architecture) < μ(Latency of cloud-based architecture)

- H10: μ(Latency of fog-based architecture) = μ(Latency of cloud-based architecture)

Here, H10 is the null hypothesis on which testing will be done, while H1 is the alternate hypothesis.

- H2: μ(Network usage of fog-based architecture) < μ(Network usage of cloud-based architecture)

- H20: μ(Network usage of fog-based architecture) = μ(Network usage of cloud-based architecture)

Here, H20 is the null hypothesis on which testing will be done, while H2 is the alternate hypothesis.

- H3: μ(Scalability of fog-based architecture) > μ(Scalability of cloud-based architecture)

- H30: μ(Scalability of fog-based architecture) = μ(Scalability of cloud-based architecture)

Here, H30 is the null hypothesis on which testing will be done, while H3 is the alternate hypothesis.

18.4 Simulation Design

We used iFogSim to implement both the fog-based and the cloud-based architectures and designed a simulation to run on both of them. iFogSim is a simulator that models IoT, cloud, and fog environments and measures the impact of resource management techniques in terms of latency, network congestion, energy consumption, and cost [29]. During the simulations, we varied the number of vehicles for both architectures to observe variations in the latency and the bandwidth. For both architectures, the experiments were conducted with 10, 20, 30, 40, 50, 60, 70, 80, 90, and 100 vehicles.

Different results were seen with the different numbers of vehicles. The details of the results are explained in the next section.

18.4.1 Results and Discussions

This section presents the results for the proposed fog-based architecture and compares the results with the cloud-based implementation. We created a scenario in iFogSim and evaluated the performance of the fog-based and cloud-based implementation for latency and network usage.

Table 18.1 depicts the latency in milliseconds (ms) for both the proposed fog-based and the cloud-based architectures. Even with 10 vehicles, the latency of the fog-based architecture (0.457 ms) is far lower than that of the cloud-based architecture (7.03 ms). The latency of the cloud-based architecture also increases severely at 40 vehicles, jumping from 11.48 ms to 1967 ms. Such delays in vehicular area networks, where autonomous cars need to communicate with each other frequently, are not tolerable and can result in fatal accidents. Therefore, these results demonstrate the efficacy of the proposed fog-based architecture for the AVs and indicate that it enhances the reliability of smart vehicular environments.

Table 18.2 depicts the log-transformed results of the latency for both the proposed fog-based and the cloud-based architectures. The data are log-transformed because the latency values for the cloud are several orders of magnitude higher than those for the fog, which makes the raw values impractical to plot on a common axis.

Figure 18.4 shows that the latency of the cloud-based architecture is severely higher than that of the fog-based architecture. The y-axis shows the log-transformed latency, while the x-axis shows the two box plots, one per architecture. Each box, with its whiskers, summarizes the simulations with up to 100 vehicles. Even the whiskers of the two architectures do not overlap, which shows that the fog-based architecture is far more reliable in terms of latency than the cloud-based architecture in the context of autonomous car environments.
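The non-overlap of the two box plots can be checked numerically from the latency values in Table 18.1. The following Python sketch is an illustration only and does not reproduce the plotting procedure used for Figure 18.4; it compares the full ranges of the log-transformed data, which is a stricter condition than non-overlapping whiskers because whiskers never extend beyond the minimum and maximum.

```python
import math
import statistics

# Latency values in ms, copied from Table 18.1 (10 to 100 vehicles).
fog_ms = [0.457, 0.81, 1.171, 1.528, 1.88, 2.243, 2.600, 2.95, 3.31, 3.671]
cloud_ms = [7.03, 8.76, 11.48, 1967, 3049.5, 3641.559,
            4000.1, 4233.75, 4394.35, 4509.8]

fog_log = [math.log10(v) for v in fog_ms]
cloud_log = [math.log10(v) for v in cloud_ms]

# Medians give the center line of each box.
fog_median = statistics.median(fog_log)
cloud_median = statistics.median(cloud_log)

# If the largest fog value is below the smallest cloud value,
# the two box plots (including whiskers) cannot overlap.
separated = max(fog_log) < min(cloud_log)
print(separated)  # True: the full ranges are disjoint
```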

Table 18.1 Results of the latency (ms) in both architectures.

| No. of vehicles | Latency in fog network (ms) | Latency in cloud network (ms) |
| --- | --- | --- |
| 10 | 0.457 | 7.03 |
| 20 | 0.81 | 8.76 |
| 30 | 1.171 | 11.48 |
| 40 | 1.528 | 1967 |
| 50 | 1.88 | 3049.5 |
| 60 | 2.243 | 3641.559 |
| 70 | 2.600 | 4000.1 |
| 80 | 2.95 | 4233.75 |
| 90 | 3.31 | 4394.35 |
| 100 | 3.671 | 4509.8 |

Table 18.2 Log-transformed data for latency.

| No. of vehicles | Latency in fog network | Latency in cloud network |
| --- | --- | --- |
| 10 | −0.34008 | 0.846955 |
| 20 | −0.09151 | 0.942504 |
| 30 | 0.068557 | 1.059942 |
| 40 | 0.184123 | 3.293804 |
| 50 | 0.274158 | 3.484229 |
| 60 | 0.350829 | 3.561287 |
| 70 | 0.414973 | 3.602071 |
| 80 | 0.469822 | 3.626725 |
| 90 | 0.519828 | 3.642895 |
| 100 | 0.564784 | 3.654157 |
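The chapter does not state the base of the logarithm, but the values in Table 18.2 are consistent with a base-10 logarithm of the latencies in Table 18.1. The short Python check below illustrates this for three of the rows; the dictionary names are chosen here for illustration.

```python
import math

# (fog, cloud) latency in ms from Table 18.1, keyed by number of vehicles.
latency_ms = {10: (0.457, 7.03), 40: (1.528, 1967), 100: (3.671, 4509.8)}

# Corresponding log-transformed values as printed in Table 18.2.
published = {10: (-0.34008, 0.846955),
             40: (0.184123, 3.293804),
             100: (0.564784, 3.654157)}

max_error = 0.0
for n, (fog, cloud) in latency_ms.items():
    fog_log, cloud_log = math.log10(fog), math.log10(cloud)
    max_error = max(max_error,
                    abs(fog_log - published[n][0]),
                    abs(cloud_log - published[n][1]))
    print(n, round(fog_log, 6), round(cloud_log, 6))

# The recomputed values agree with the table up to rounding.
print(max_error < 1e-4)
```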

Table 18.3 depicts the results of network usage for both the proposed fog-based architecture and the cloud-based architecture. As the results show, at every vehicle count up to 100, network usage is lower in the fog-based architecture than in the cloud-based architecture. Moreover, network usage in the cloud-based architecture increases severely as vehicles are added, already exceeding 78 000 at 30 vehicles, which reduces the efficacy of the cloud-based architecture.

Figure 18.4 Box plot for the latency for both fog-based architecture and cloud-based architecture.

Table 18.3 Results of the network usage in both architectures.

| No. of vehicles | Network usage in fog network | Network usage in cloud network |
| --- | --- | --- |
| 10 | 509.49 | 22096.8 |
| 20 | 1018.98 | 48090.6 |
| 30 | 1528.47 | 78057.76 |
| 40 | 2037.96 | 83795.16 |
| 50 | 2547.45 | 89105.22 |
| 60 | 3056.94 | 95948.98 |
| 70 | 3566.43 | 103678.64 |
| 80 | 4075.92 | 111966.92 |
| 90 | 4585.41 | 120628.58 |
| 100 | 5094.9 | 129553.84 |
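A quick way to read Table 18.3 is to normalize the fog values by the number of vehicles and to compare the two columns row by row. The Python sketch below does this with the tabulated values; the variable names are illustrative, and the per-vehicle normalization is an analysis added here, not one reported in the chapter.

```python
vehicles = [10, 20, 30, 40, 50, 60, 70, 80, 90, 100]

# Network usage values copied from Table 18.3.
fog_usage = [509.49, 1018.98, 1528.47, 2037.96, 2547.45,
             3056.94, 3566.43, 4075.92, 4585.41, 5094.9]
cloud_usage = [22096.8, 48090.6, 78057.76, 83795.16, 89105.22,
               95948.98, 103678.64, 111966.92, 120628.58, 129553.84]

# Fog usage divided by vehicle count: constant if growth is linear.
fog_per_vehicle = [u / n for n, u in zip(vehicles, fog_usage)]

# How many times larger the cloud usage is at each vehicle count.
cloud_to_fog = [c / f for c, f in zip(cloud_usage, fog_usage)]

for n, p, r in zip(vehicles, fog_per_vehicle, cloud_to_fog):
    print(f"{n:>3} vehicles: fog/vehicle = {p:.3f}, cloud/fog = {r:.1f}")
```

In the tabulated data, fog network usage grows linearly with the number of vehicles (about 50.949 per vehicle), while cloud usage stays more than 25 times higher at every vehicle count.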

Table 18.4 Log-transformed data for network usage.

| No. of vehicles | Network usage in fog network | Network usage in cloud network |
| --- | --- | --- |
| 10 | 2.707136 | 4.344329 |
| 20 | 3.008166 | 4.68206 |
| 30 | 3.184257 | 4.892416 |
| 40 | 3.309196 | 4.923219 |
| 50 | 3.406106 | 4.949903 |
| 60 | 3.485287 | 4.98204 |
| 70 | 3.552234 | 5.015689 |
| 80 | 3.610226 | 5.04909 |
| 90 | 3.661378 | 5.08145 |
| 100 | 3.707136 | 5.11245 |

Table 18.4 depicts the log-transformed results of network usage for both the proposed fog-based and the cloud-based architectures.

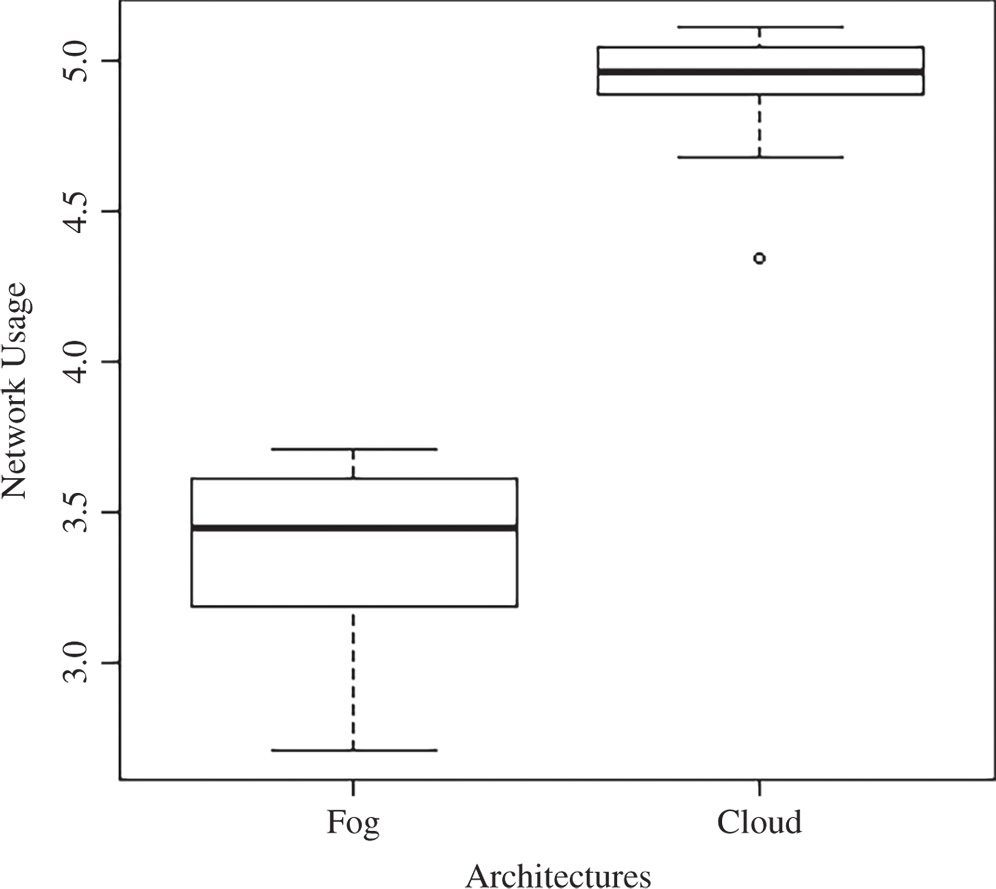

Figure 18.5 shows that network usage of the cloud-based architecture is severely higher than that of the fog-based architecture. The y-axis shows the log-transformed network usage, while the x-axis shows the two box plots, one per architecture. Each box, with its whiskers and outliers, summarizes the simulations with up to 100 vehicles. Again, as in Figure 18.4, the whiskers in the box plots of the two architectures do not overlap, which shows that the fog-based architecture is significantly more reliable in terms of network usage than the cloud-based architecture in the given scenario.

18.4.2 Hypothesis Testing

For hypothesis testing, we will start from the first hypothesis, i.e. null hypothesis H10 and alternate hypothesis H1.

18.4.2.1 First Hypothesis

The null hypothesis H10 states:

- H10: μ(Latency of fog-based architecture) = μ(Latency of cloud-based architecture)

We can see in Table 18.1 that, even with 100 vehicles, the latency of the fog-based architecture is far lower than the latency of the cloud-based architecture. Also, a significant increase in the latency of the cloud-based architecture is observed at 40 vehicles. From these results, we can say that the fog-based architecture for the AVs has lower latency than the cloud-based architecture.

Figure 18.5 Box plot for network usage for both fog-based architecture and cloud-based architecture.

We can reject the null hypothesis H10, which stated that μ(Latency of fog-based architecture) = μ(Latency of cloud-based architecture).

As the null hypothesis H10 is rejected, the alternate hypothesis H1, which states that μ(Latency of fog-based architecture) < μ(Latency of cloud-based architecture), is accepted.
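The chapter rejects H10 by inspecting Table 18.1 and Figure 18.4 and does not report a test statistic. As one possible way to attach a p-value to that decision, the Python sketch below applies an exact one-sided sign test to the ten paired latency values. The choice of the sign test and the treatment of each vehicle count as one paired observation are assumptions made here for illustration.

```python
from math import comb

# Paired latency values in ms from Table 18.1 (10 to 100 vehicles).
fog_ms = [0.457, 0.81, 1.171, 1.528, 1.88, 2.243, 2.600, 2.95, 3.31, 3.671]
cloud_ms = [7.03, 8.76, 11.48, 1967, 3049.5, 3641.559,
            4000.1, 4233.75, 4394.35, 4509.8]


def sign_test_p_less(x, y):
    """Exact one-sided sign test p-value for the alternative 'x < y'."""
    diffs = [a - b for a, b in zip(x, y) if a != b]  # ties are dropped
    n = len(diffs)
    k = sum(d < 0 for d in diffs)  # pairs in which x is smaller
    # P(K >= k) when K ~ Binomial(n, 0.5) under the null hypothesis.
    return sum(comb(n, i) for i in range(k, n + 1)) / 2 ** n


p_value = sign_test_p_less(fog_ms, cloud_ms)
print(p_value)  # 0.0009765625, i.e. 1/1024
```

Because the fog latency is lower in all ten pairs, the p-value is 1/1024, which is below the conventional 0.05 threshold and agrees with the rejection of H10.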

18.4.2.2 Second Hypothesis

The null hypothesis H20 states:

- H20: μ(Network usage of fog-based architecture) = μ(Network usage of cloud-based architecture)

We can see in Table 18.3 that, even with 10 vehicles, network usage of fog-based architecture is far lower than network usage of the cloud-based architecture. Also, we can see a radical increase in network usage of cloud-based architecture with 30 vehicles. It can be seen that the fog-based architecture for the AVs has lower network usage when compared to the cloud-based architecture.

Figure 18.5 also shows us that network usage of cloud-based architecture when compared to the fog-based architecture is significantly higher. Even the whiskers in the box plots of both architectures don't overlap, which shows that fog-based architecture is far more reliable in terms of network usage when compared to cloud-based architecture.

We can reject the null hypothesis H20, which states that μ(Network usage of fog-based architecture) = μ(Network usage of cloud-based architecture).

Therefore, the alternate hypothesis H2, which states μ(Network usage of fog-based architecture) < μ(Network usage of cloud-based architecture), is accepted.
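The decision on H20 is likewise made by inspection in the chapter. A hedged alternative is a paired sign-flip permutation test on the log-transformed network usage, sketched below in Python. The test choice and the treatment of each vehicle count as one paired observation are assumptions introduced here; the data are taken from Table 18.3.

```python
import math
from itertools import product

# Network usage values copied from Table 18.3 (10 to 100 vehicles).
fog_usage = [509.49, 1018.98, 1528.47, 2037.96, 2547.45,
             3056.94, 3566.43, 4075.92, 4585.41, 5094.9]
cloud_usage = [22096.8, 48090.6, 78057.76, 83795.16, 89105.22,
               95948.98, 103678.64, 111966.92, 120628.58, 129553.84]

# Paired differences on the log10 scale (fog minus cloud).
diffs = [math.log10(f) - math.log10(c)
         for f, c in zip(fog_usage, cloud_usage)]
observed = sum(diffs) / len(diffs)

# Under the null hypothesis the sign of each difference is arbitrary,
# so enumerate all 2**10 sign assignments and count those whose mean
# is at least as small as the observed mean.
count = 0
total = 0
for signs in product((1, -1), repeat=len(diffs)):
    mean = sum(s * d for s, d in zip(signs, diffs)) / len(diffs)
    total += 1
    if mean <= observed + 1e-12:
        count += 1

p_value = count / total
print(total, count, p_value)
```

Only the unflipped assignment reaches the observed mean, because every difference is negative, so the one-sided p-value is 1/1024.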

18.4.2.3 Third Hypothesis

The null hypothesis H30 states:

- H30: μ(Scalability of fog-based architecture) = μ(Scalability of cloud-based architecture)

We can see in Tables 18.1 and 18.3 that, from 10 vehicles to 100 vehicles, the latency and network usage of the fog-based architecture are far lower than those of the cloud-based architecture. Also, we can see a radical increase in the latency of the cloud-based architecture when the number of vehicles increases from 30 to 40 (from 11.48 ms to 1967 ms), while its network usage continues to grow. From these results, we can say that the fog-based architecture for the AVs is more scalable than the cloud-based architecture.

From Figures 18.4 and 18.5, it can be observed that the latency and network usage of the cloud-based architecture are significantly higher than those of the fog-based architecture. Also, the whiskers in the box plots of the two architectures do not intersect, which shows that the fog-based architecture is far more scalable than the cloud-based architecture.

We can reject the null hypothesis H30, which states that μ(Scalability of fog-based architecture) = μ(Scalability of cloud-based architecture).

Therefore, the alternate hypothesis H3, which states that μ(Scalability of fog-based architecture) > μ(Scalability of cloud-based architecture), is accepted.
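The chapter does not define a numeric scalability metric. One simple proxy, assumed here purely for illustration, is the average increase in latency or network usage per additional vehicle between 10 and 100 vehicles: the smaller the increase, the better the architecture absorbs added load. The Python sketch below computes this proxy from the first and last rows of Tables 18.1 and 18.3.

```python
def growth_per_vehicle(first, last, n_first=10, n_last=100):
    """Average increase of a metric per added vehicle (hypothetical proxy)."""
    return (last - first) / (n_last - n_first)


# First (10 vehicles) and last (100 vehicles) rows of Table 18.1, in ms.
fog_latency_growth = growth_per_vehicle(0.457, 3.671)
cloud_latency_growth = growth_per_vehicle(7.03, 4509.8)

# First and last rows of Table 18.3.
fog_usage_growth = growth_per_vehicle(509.49, 5094.9)
cloud_usage_growth = growth_per_vehicle(22096.8, 129553.84)

print(f"latency growth per vehicle: fog {fog_latency_growth:.4f} ms, "
      f"cloud {cloud_latency_growth:.2f} ms")
print(f"usage growth per vehicle:   fog {fog_usage_growth:.3f}, "
      f"cloud {cloud_usage_growth:.2f}")
```

Under this proxy, each added vehicle costs the fog-based architecture far less latency and network usage than the cloud-based architecture, which is in line with the acceptance of H3.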

18.5 Conclusions

In the past few years, technological advancements in artificial intelligence, robotics, sensor technologies, and self-driving vehicles have made it possible to sense the surroundings of vehicles in real time. The arrival of AVs has caused a radical increase in data traffic over networks. However, the AVs demand low latency and high bandwidth to communicate efficiently with other vehicles. Connecting the AVs only to the cloud may result in long delays, which might cause accidents in smart vehicular environments. In this chapter, we proposed a fog-based architecture that not only minimizes the latency and bandwidth consumption, but also increases the scalability. To validate the proposed architecture, we used the iFogSim simulator to implement both the fog-based and cloud-based architectures and performed multiple simulations of the same scenario on each. The simulation results demonstrated that the proposed fog-based architecture not only minimizes the latency and network usage, but also increases the scalability when compared to the cloud-based network. The results also provide evidence of the reliability and effectiveness of fog-based applications for AVNs.

References

- 1 Fagnant, D.J. and Kockelman, K. (2015). Preparing a nation for autonomous vehicles: opportunities, barriers and policy recommendations. Transportation Research Part A: Policy and Practice 77: 167–181.

- 2 Campbell, M., Egerstedt, M., How, J.P., and Murray, R.M. (2010). Autonomous driving in urban environments: approaches, lessons and challenges. Philosophical Transactions of the Royal Society A: Mathematical, Physical and Engineering Sciences 368 (1928): 4649–4672.

- 3 Bullis, K. (2011). How vehicle automation will cut fuel consumption. MIT's Technology Review (24 October 2011).

- 4 Chengalva, M.K., Bletsis, R., and Moss, B.P. (2008). Low-cost autonomous vehicles for urban environments. SAE International Journal of Commercial Vehicles 1, 2008-01-2717: 516–526.

- 5 Clements, L.M. and Kockelman, K.M. (2017). Economic effects of automated vehicles. Transportation Research Record 2606 (1): 106–114.

- 6 Hajibaba, M. and Gorgin, S. (2014). A review on modern distributed computing paradigms: cloud computing, jungle computing and fog computing. Journal of Computing and Information Technology 22 (2): 69–84.

- 7 Harauz, J., Kaufman, L.M., and Potter, B. (2009). Data security in the world of cloud computing. In: IEEE Security & Privacy. Copublished by the IEEE Computer and Reliability Societies.

- 8 Dikaiakos, M.D., Katsaros, D., Mehra, P. et al. (2009). Cloud computing: distributed Internet computing for IT and scientific research. IEEE Internet Computing 13 (5): 10–13.

- 9 Stantchev, V., Barnawi, A., Ghulam, S. et al. (2015). Smart items, fog and cloud computing as enablers of servitization in healthcare. Sensors & Transducers 185 (2): –121.

- 10 Yannuzzi, M., Milito, R., Serral-Gracià, R. et al. (2014). Key ingredients in an IoT recipe: fog computing, cloud computing, and more fog computing. In: 2014 IEEE 19th International Workshop on Computer Aided Modeling and Design of Communication Links and Networks (CAMAD), 325–329. IEEE.

- 11 Laberteaux, K. (2014). How might automated driving impact US land use. In: 2014 Automated Vehicle Symposium.

- 12 Lin, P. (2013). The ethics of saving lives with autonomous cars is far murkier than you think. WIRED (30 July 2013).

- 13 Vaquero, L.M. and Rodero-Merino, L. (2014). Finding your way in the fog: towards a comprehensive definition of fog computing. ACM SIGCOMM Computer Communication Review 44 (5): 27–32.

- 14 Bonomi, F. (2011). Connected vehicles, the Internet of things, and fog computing. In: The Eighth ACM International Workshop on Vehicular Inter-networking (VANET). Las Vegas, USA.

- 15 Cao, Y., Chen, S., Hou, P., and Brown, D. (2015). FAST: A fog computing assisted distributed analytics system to monitor fall for stroke mitigation. In: 2015 IEEE International Conference on Networking, Architecture and Storage (NAS), 2–11. IEEE.

- 16 Arkian, H.R., Diyanat, A., and Pourkhalili, A. (2017). MIST: fog-based data analytics scheme with cost-efficient resource provisioning for IoT crowdsensing applications. Journal of Network and Computer Applications 82: 152–165.

- 17 Dastjerdi, A.V. and Buyya, R. (2016). Fog computing: helping the Internet of Things realize its potential. Computer 49 (8): 112–116.

- 18 Yi, S., Hao, Z., Qin, Z., and Li, Q. (2015). Fog computing: Platform and applications. In: 2015 Third IEEE Workshop on Hot Topics in Web Systems and Technologies (HotWeb), 73–78. IEEE.

- 19 Datta, S.K., Bonnet, C., and Haerri, J. (2015). Fog computing architecture to enable consumer centric internet of things services. In: 2015 International Symposium on Consumer Electronics (ISCE), IEEE.

- 20 Aazam, M. and Huh, E.-N. (2016). Fog computing: the cloud-IoT/IoE middleware paradigm. IEEE Potentials 35 (3): 40–44.

- 21 Sarkar, S. and Misra, S. (2016). Theoretical modelling of fog computing: a green computing paradigm to support IoT applications. IET Networks 5 (2): 23–29.

- 22 Natraj, A. (2016). Fog computing focusing on users at the edge of Internet of things. International Journal of Engineering Research 5 (5): 1004–1008.

- 23 Madsen, H., Burtschy, B., Albeanu, G., and Popentiu-Vladicescu, F.L. (2013). Reliability in the utility computing era: towards reliable fog computing. In: 2013 20th International Conference on Systems, Signals and Image Processing (IWSSIP), 43–46. IEEE.

- 24 Intharawijitr, K., Iida, K., and Koga, H. (2016). Analysis of fog model considering computing and communication latency in 5G cellular networks. In: IEEE International Conference on Pervasive Computing and Communication Workshops (PerCom Workshops). IEEE.

- 25 Truong, N.B., Lee, G.M., and Ghamri-Doudane, Y. (2015). Software defined networking-based vehicular adhoc network with fog computing. In: 2015 IFIP/IEEE International Symposium on Integrated Network Management (IM). IEEE.

- 26 Amendola, D., Cordeschi, N., and Baccarelli, E. (2016). Bandwidth management VMs live migration in wireless fog computing for 5G networks. In: 2016 5th IEEE International Conference on Cloud Networking (Cloudnet). IEEE.

- 27 Li, J., Jin, J., Yuan, D. et al. (2015). EHOPES: data-centered Fog platform for smart living. In: 2015 International Telecommunication Networks and Applications Conference (ITNAC), 308–313. IEEE.

- 28 Hou, X., Li, Y., Chen, M. et al. (2016). Vehicular fog computing: a viewpoint of vehicles as the infrastructures. IEEE Transactions on Vehicular Technology 65 (6): 3860–3873.

- 29 Gupta, H., Vahid Dastjerdi, A., Ghosh, S.K., and Buyya, R. (2017). iFogSim: A toolkit for modeling and simulation of resource management techniques in the Internet of Things, Edge and Fog computing environments. Software: Practice and Experience 47 (9): 1275–1296.

- 30 Yi, S., Li, C., and Li, Q. (2015). A survey of fog computing: concepts, applications and issues. In: Proceedings of the 2015 Workshop on Mobile Big Data. ACM.