Chapter 1: Introduction to the Data-Driven Edge with Machine Learning

The purpose of this book is to share prescriptive patterns for the end-to-end (E2E) development of solutions that run at the edge, the space in the computing topology nearest to where the analog interfaces the digital and vice versa. Specifically, the book focuses on those edge use cases where machine learning (ML) technologies bring the most value and teaches you how to develop these solutions with contemporary tools provided by Amazon Web Services (AWS).

In this chapter, you will learn about the foundations for cyber-physical outcomes and the challenges, personas, and tools common to delivering these outcomes. This chapter briefly introduces the smart home and industrial internet of things (IoT) settings and sets the scene that will steer the hands-on project built throughout the book. It will describe how ML is transforming our ability to accelerate decision-making beyond the cloud. You will learn about the scope of the E2E project that you will build using AWS services such as AWS IoT Greengrass and Amazon SageMaker. You will also learn what kinds of technical requirements are needed before moving on to the first hands-on chapter, Chapter 2, Foundations of Edge Workloads.

The following topics will be covered in this chapter:

- Living on the edge

- Bringing ML to the edge

- Tools to get the job done

- Demand for smart home and industrial IoT

- Setting the scene: A modern smart home solution

- Hands-on prerequisites

Living on the edge

The edge is the space of computing topology nearest to where the analog interfaces the digital and vice versa. The edge of the first computing systems, such as 1945's Electronic Numerical Integrator and Computer (ENIAC) general-purpose computer, was simply the interfaces used to input instructions and receive printed output. You couldn't access these interfaces without being directly in front of them. With the advent of remote access mainframe computing in the 1970s, the edge of computing moved further out to public terminals that fit on a desk and connected to mainframes via coaxial cable. Users could access the common resources of the local mainframe from the convenience of a lab or workstation to complete their work with advanced capabilities such as word processors or spreadsheets.

The evolution of humans using the edge for computing continued with increases in compute power and decreases in size and cost. The devices we use every day, such as personal computers and smartphones, deliver myriad outcomes for us. Some outcomes are delivered entirely at the edge (on the device), but many work only when connected to the internet and consume remote services. Edge workloads for humans tend to be diverse, multipurpose, and handle a range of dynamisms. We could not possibly enumerate everything we could do with a smartphone and its web browser! These examples of the edge all have in common that humans are both the operator and recipient of a computing task. However, the edge is more than the interface between humans and silicon.

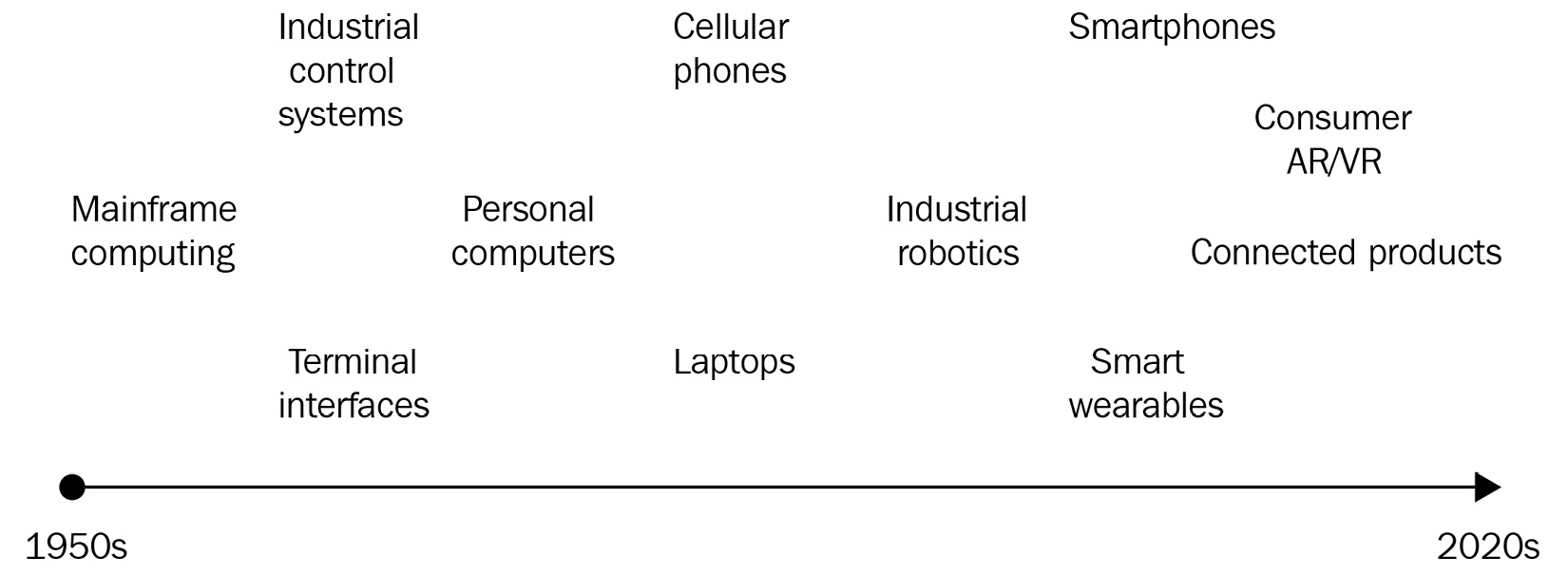

Another important historical trend of the edge is autonomous functionality. We design computing machines to sense and act, then deploy them in environments where there may be no human interaction at all. Examples of the autonomous edge include robotics used in manufacturing assembly, satellites, and weather stations. These edge workloads are distinct from human-driven workloads in that they tend to be highly specialized, single-purpose, and handle little dynamism. They perform a specific set of functions, perform them consistently, and repeat them until obsolescence. The following figure provides a simplistic history of both human-driven interfaces and autonomous machines at the edge over time:

Figure 1.1 – A timeline of cyber-physical interfaces at the edge from 1950 to 2020

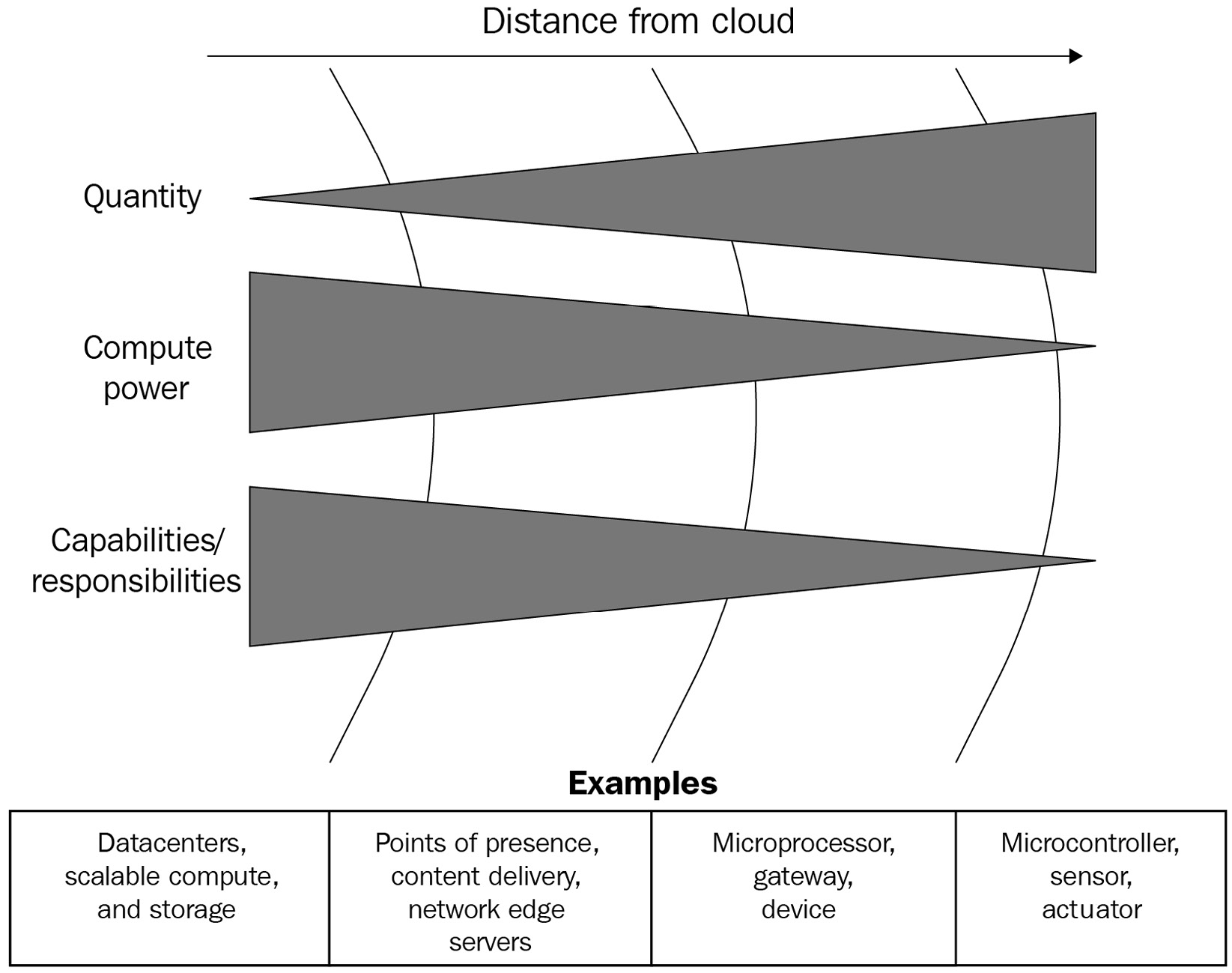

With today's advances in wireless communications, microcontrollers and microprocessors, electrical efficiency, and durability, the edge can be anywhere and everywhere. Some of you will be reading this book on an e-reader, a kind of edge device, at 10 km altitude, cruising at 900 kph. The Voyager 1 spacecraft is the most distant manmade edge solution, continuing to operate at the time of this writing 152 AU from Earth! The trend here is that over time, the spectrum of capabilities along the path to and at the edge will continue to grow, as will the length of that path (and the number of points on it!) and the remoteness of where those capabilities can be deployed. The following diagram illustrates the scaling of entities, compute power, and capabilities across the topology of computing:

Figure 1.2 – Illustration of computing scale from the cloud to a sensor

Our world is full of sensors and actuators; over time, more of these devices are joining the IoT. A sensor is any component that takes a measurement from our analog world and converts it to digital data. An actuator is any component that accepts some digital command and applies some force or change out into the analog world. There's so much information out there to collect, reason about, and act upon. Developing edge solutions is an exciting frontier for the following reasons:

- There is a vast set of possibilities and problems to solve in our world today. We need more innovation and solutions to address global outcomes, such as the 17 sustainable development goals defined by the United Nations (UN).

- The shrinking cost factor to develop edge solutions lowers the barrier to experiment.

- Tools that put solution development in the reach of anyone with a desire to learn are maturing and becoming simpler to use.

This book will teach you how to develop the software of edge solutions using modern edge-to-cloud technologies, including how to write software that interacts with physical sensors and actuators, how to process and exchange data with other local devices and the cloud, and how to get value from advanced ML technologies at the edge. More important than the how is the why—in other words: why do we build the solutions this way? This book will also explain the architectural patterns and tips for building well-architected solutions that will last beyond the time of particular technologies and tools.

Implementation details such as programming languages and frameworks come and go with popularity, necessity, and technological breakthroughs. The patterns of what we build and why we build them in particular ways stand the test of time and will serve you for many of your future projects. For example, the 1995 Design Patterns: Elements of Reusable Object-Oriented Software by Gamma, Helm, Johnson, and Vlissides is still guiding software developers today despite the evolution of tools that the authors used at the time. We, the authors, cannot liken ourselves to these great thinkers or their excellent book, but we refer to it as an example of how we approached writing this book.

Common concepts for edge solutions

For the purposes of this book, we will expand the definition of the edge to include any component of a cyber-physical solution operating outside of the cloud and its data centers and away from the internet backbone. Examples of the edge include a radio switch controlling a smart light bulb in a household, sensors recording duty cycles and engine telemetry of a tractor-trailer at a mining site, a turnstile granting access to subway commuters, a weather buoy drifting in the Atlantic, a smartphone using a camera in a new augmented reality (AR) game, and of course, Voyager 1. The environmental control system running in a data center to keep servers cool is still an edge solution; our definition intends to highlight those components that are distant from the gravity of the worldwide computing topology. The following diagram shows examples of computing happening at various distances from the gravity of data centers in this computing topology:

Figure 1.3 – Examples of the edge at various distances

A cyber-physical solution is one that combines hardware and software for interoperating the digital world with the analog world. If the analog world is a set of properties we can measure about reality and enact changes back upon it, the digital world is the information we capture about reality that we can store, transmit, and reason about. A cyber-physical solution can be self-contained or a closed loop such as a programmable logic controller (PLC) in an industrial manufacturing shop or a digital thermostat in the home. It may perform some task autonomously or at the direction of a local actor such as a person or switch.

An edge solution is, then, a specific extension of a cyber-physical solution in that it implies communication or exchange of information with some other entity at a point in time. It can also operate autonomously or at the direction of a local actor or a remote actor such as a web server. Sensors deliver autonomous functionality either by providing data to a person who needs it or by supplying input that drives a decision through a PLC or code running on a server. Those decisions, whether derived by computers or people, are then enacted through the use of actuators. The analog, human story of reacting to the cold is "I feel cold, so I will start a fire to get warm," while the digital story might look like the following pseudocode running on a local controller to switch on a furnace:

// Switch the furnace on when the measured temperature drops below the threshold.
if (io.read_value(THERMOMETER_PIN) < LOWER_HEAT_THRESHOLD) {
    io.write_value(FURNACE_PIN, HIGH);
}
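To make the sense, decide, and act steps of that pseudocode concrete, here is a minimal runnable sketch in Python. The sensor and actuator functions are simulated stand-ins, and the names `read_thermometer`, `decide_furnace_state`, `write_furnace`, and the 18-degree setpoint are illustrative assumptions rather than part of any real I/O library.

```python
import random

LOWER_HEAT_THRESHOLD_C = 18.0  # hypothetical setpoint in degrees Celsius


def read_thermometer() -> float:
    """Stand-in for a real sensor read; returns a simulated temperature."""
    return random.uniform(15.0, 22.0)


def decide_furnace_state(temperature_c: float) -> bool:
    """Return True when the furnace should be switched on."""
    return temperature_c < LOWER_HEAT_THRESHOLD_C


def write_furnace(on: bool) -> str:
    """Stand-in for driving an actuator pin; returns the commanded state."""
    return "HIGH" if on else "LOW"


# One pass of the sense -> decide -> act loop.
reading = read_thermometer()
command = write_furnace(decide_furnace_state(reading))
print(f"{reading:.1f} C -> furnace pin {command}")
```

Keeping the decision in its own function, separate from the I/O calls, is one way to make the logic testable without hardware attached.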

We will further define edge solutions to have some compute capability such as a microcontroller or microprocessor to execute instructions. They also have at least one sensor or actuator to interface with the physical world. Edge solutions will at some point in time interact with another entity on a network or in the physical world, such as a person or another machine.

Based on this definition, what is the edge solution that is nearest to you right now?

Sensors, actuators, and compute capability are the most basic building blocks for an edge solution. The kinds of solutions that we are interested in developing have far more complexity to them. Your responsibility as an IoT architect is to ensure that edge solutions are secure, reliable, performant, and cost-effective. The high bar of a well-architected solution means you'll need to build proficiency in fundamentals such as networking, cryptography, electrical engineering, and operating systems. Today's practical production edge solutions incorporate capabilities such as processing real-time signals, communicating between systems over multiple transmission media, writing firmware updates with redundant failure recovery, and self-diagnosing device health.

This can all feel overwhelming, and the reality is that building solutions for the edge is both complex and complicated. In practice, we use purpose-built tools and durable patterns to focus invested efforts on innovation and problem solving instead of bootstrapping and reinventing the wheel. The goal of this book is to start small with functional outcomes while building up to the big picture of an E2E solution. The learnings along the way will serve you on your journey of building your next solution. While this book doesn't cover every topic in the field, we will take every opportunity to highlight further educational resources to help you build proficiency beyond the included focus areas. That covers the foundations of building solutions for the edge. Next, let's review how ML fits in.

Bringing ML to the edge

ML is an incredible technology making headway in solving today's problems. The ability to train computers to process great quantities of information in service of classifying new inputs and predicting results rivals, and in some applications exceeds, what the human brain can accomplish. For this reason, ML is one of the primary mechanisms for developing artificial intelligence (AI).

The vast computing power made available by the cloud has significantly reduced the amount of time it takes to train ML models. Data scientists and data engineers can train production models in hours instead of days. Advances in ML algorithms have made the models themselves ever more portable, meaning that running the models can work on computers with smaller compute and memory profiles. The implications of delivering portable ML models cannot be overstated.

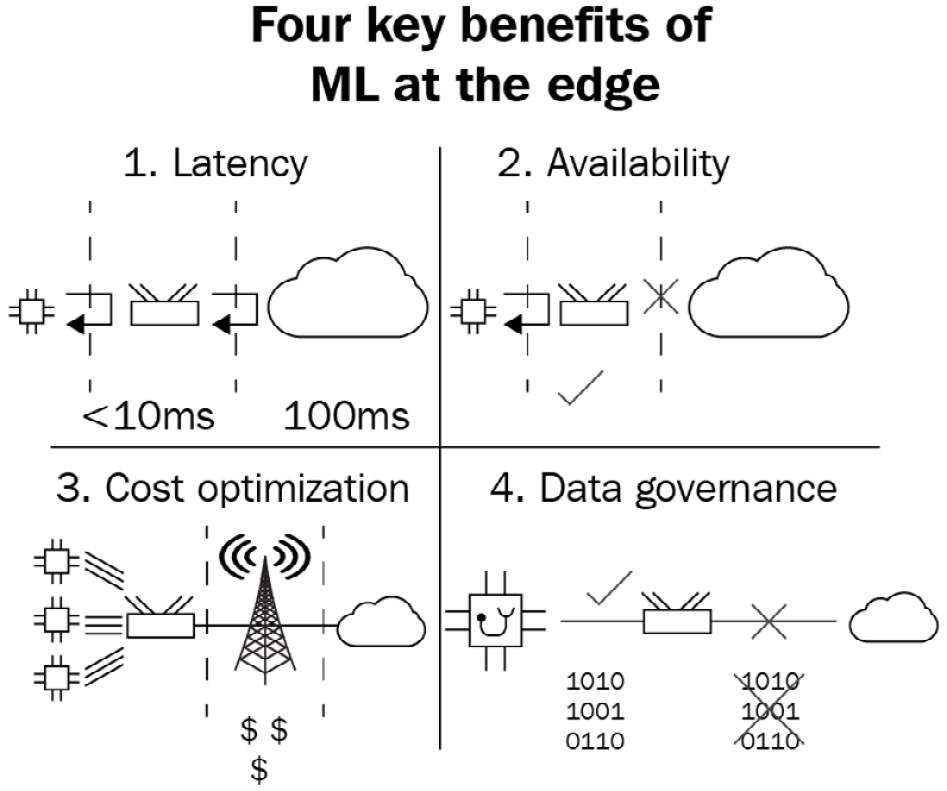

Operating ML models at the edge helps us as architects deliver optimal edge solution design principles. By hosting a portable model at the edge, the proximity to the rest of our solution leads to four key benefits, outlined as follows:

- First, this means the solution can maximize responsiveness for capabilities depending on the results of ML inferences by not waiting for the round-trip latency of a call to a remote server. An inference that interprets myriad signals from an engine about to fail can complete in 10 milliseconds (ms) instead of 100 ms. This difference in latency can make the difference between a safe operation and a catastrophic failure.

- Second, it means the functionality of the solution will not be interrupted by network congestion and can run in a state where the edge solution is disconnected from the public internet. This opens up possibilities for ML solutions to run untethered from cloud services. That imminent engine failure can be detected and prevented regardless of connection availability.

- Third, anytime we can process data locally with an ML model and reduce the quantity of data that ultimately needs to be stored in the cloud, we also get the cost-saving benefits on transmission. Think of an expensive satellite internet provider contract; across that kind of transmission medium, IoT architects only want to transmit data that is absolutely necessary to keep costs down.

- Fourth, another benefit of local data processing is that it enables use cases that must conform to regulation where data must reside in the local country or observe privacy concerns such as healthcare data. Hospital equipment used to save lives arguably needs as much intelligent monitoring as it can get, but the runtime data may not legally be permitted to leave the premises.

These four key benefits are illustrated in the following diagram:

Figure 1.4 – The four key benefits of ML at the edge

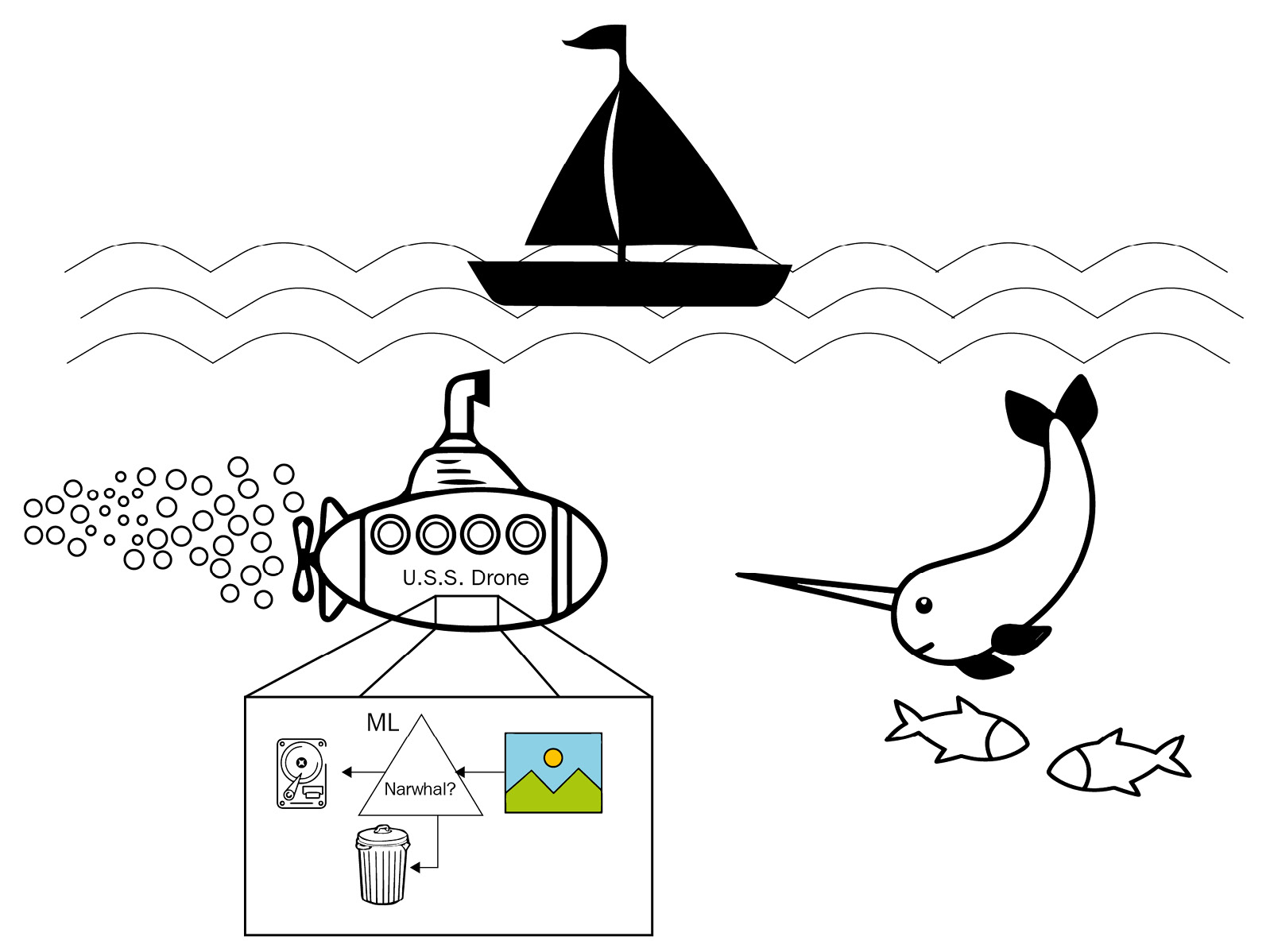

Imagine a submersible drone that can bring with it an ML model that can classify images coming from a video feed. The drone can operate and make inferences on images away from any network connection and can discard any images that don't have any value. For example, if the drone's mission is to bring back only images of narwhals, then the drone doesn't need extensive quantities of storage to save every video clip for later analysis. The drone can use ML to classify images of narwhals and only preserve those for the trip back home. The cost of storage continues to drop over time, but in the precious bill of materials and space considerations of edge solutions such as this one, bringing a portable ML model can ultimately lead to significant cost savings.

The following diagram illustrates this concept:

Figure 1.5 – Illustration of a submersible drone concept processing photographs and storing only those where a local ML model identifies a narwhal in the subject
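The drone's keep-or-discard decision can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a real computer vision pipeline: `Frame`, `keep_narwhals`, and `fake_classifier` are hypothetical names, and the classifier is a stand-in that returns a confidence score where a trained model would normally run.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass
class Frame:
    frame_id: int
    pixels: bytes  # placeholder for image data


def keep_narwhals(
    frames: Iterable[Frame],
    classify: Callable[[Frame], float],
    min_confidence: float = 0.8,
) -> List[Frame]:
    """Keep only frames whose narwhal confidence meets the threshold."""
    return [f for f in frames if classify(f) >= min_confidence]


def fake_classifier(frame: Frame) -> float:
    """Hypothetical stand-in for a local model: even frame IDs 'contain' a narwhal."""
    return 0.95 if frame.frame_id % 2 == 0 else 0.10


captured = [Frame(i, b"") for i in range(6)]
stored = keep_narwhals(captured, fake_classifier)
print([f.frame_id for f in stored])  # [0, 2, 4]
```

Because the filter runs on the device, only half of the captured frames in this toy example would need storage, and no network connection is involved in the decision.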

This book will teach you the basics of training an ML model from the kinds of machine data common to edge solutions, as well as how to deploy such models to the edge to take advantage of combining ML capabilities with the value proposition of running at the edge. We will also teach you about operating ML models at the edge, which means analyzing the performance of models, and how to set up infrastructure for deploying updates to models retrained in the cloud.

Outside the scope of this book's lessons are comprehensive deep dives on the data science driving the field of ML and AI. You do not need proficiency in that field to understand the patterns of ML-powered edge solutions. An understanding of how to work with input/output (I/O) buffers to read and write data in software is sufficient to work through the ML tools used in this book.

Next, let's review the kinds of tools we need to build and the specific tools we will use to build our solution.

Tools to get the job done

This book focuses on tools offered by AWS to deliver ML-based solutions at the edge. Leading with the 2015 launch of the AWS IoT Core service, AWS has built out a suite of IoT services to help developers build cyber-physical solutions that benefit from the power of the cloud. These services range from edge software, such as the FreeRTOS real-time operating system for microcontrollers, to command and control (C2) of device fleets with IoT Core and IoT Device Management, and analytical capabilities for yielding actionable insights from data with services such as IoT SiteWise and IoT Events. The IoT services interplay nicely with Amazon's suite of ML services, enabling developers to ingest massive quantities of data for use in training ML models with services such as Amazon SageMaker. AWS also makes it easy to host trained models as endpoints for making inferences against real-time data or deploying these models to the edge for local inferencing.

There are three kinds of software tools you will need to create and operate a purposeful, intelligent workload at the edge. Next, we will define each tool by its general capabilities and also the specific implementation of the tool we are using, provided by AWS, to build the project in this book. There is always more complexity to any information technology (IT), but for our purposes, these are the three main kinds of tools this book will focus on in order to deliver intelligence to the edge.

Edge runtime

The first tool is a runtime for orchestrating your edge software. The runtime will execute your code and process local events to and from your code. Ideally, this runtime is self-healing, meaning that if any service fails, it should automatically recover by using failovers or restarting the service. These local events can be hardware interrupts that trigger some code to be run, timed events to read inputs from an analog sensor, or translating digital commands to change the state of a connected actuator such as a switch.
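The self-healing idea can be illustrated with a small supervisor that restarts a failed service. This is only a sketch of the general restart pattern; it does not represent how any particular edge runtime implements recovery, and `supervise` and `flaky_sensor_reader` are hypothetical names.

```python
from typing import Callable


def supervise(service: Callable[[], None], max_restarts: int = 3) -> int:
    """Run a service, restarting it after a failure.

    Returns the number of restarts that were needed. Re-raises the last
    error if the service still fails after max_restarts restarts.
    """
    restarts = 0
    while True:
        try:
            service()
            return restarts
        except Exception:
            if restarts >= max_restarts:
                raise
            restarts += 1


# Hypothetical service that fails twice before succeeding.
attempts = {"count": 0}


def flaky_sensor_reader() -> None:
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise RuntimeError("sensor bus not ready")


restart_count = supervise(flaky_sensor_reader)
print(restart_count)  # 2
```

A production runtime would typically add backoff delays between restarts and report repeated failures, which this sketch leaves out for brevity.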

The AWS service that is the star of this book is IoT Greengrass. This is the service that we will use for the first kind of tool: the runtime for orchestrating edge software. IoT Greengrass defines both a packaged runtime for orchestrating edge software solutions and a web service for managing fleets of edge deployments running on devices such as gateways. In 2020, AWS released a new major version of IoT Greengrass, version 2, that rearchitected the edge software package as an open source Java project under the Apache 2.0 license. With this version, developers got a new software development model for authoring and deploying linked components that lets them focus on building business applications instead of worrying about the infrastructure of a complex edge-to-cloud solution. We will dive into more details of IoT Greengrass and start building our first application with it in the next chapter.

The following diagram illustrates how IoT Greengrass plays a role both at the edge and in the cloud:

Figure 1.6 – Illustration of how IoT Greengrass plays a role both at the edge and in the cloud

ML

The second tool is an ML library and model. The library dictates how to read and consume the model and how local code can invoke the model, also called making an inference. The model is the output of a training job that packages up the intelligence into a simpler framework for translating new inputs into inferences. We will need a tool to train a new model from a set of data called the training set. Then, that trained model will be packaged up and deployed to our edge runtime tool. The edge solution will need the corresponding library and code that knows how to process new data against the model to yield an inference.
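The train, package, deploy, and infer flow described above can be sketched with a deliberately trivial "model" built from the Python standard library. This is an assumption-laden illustration: the model is just a mean and standard deviation, and `train`, `package`, and `infer` are hypothetical helpers, not the API of any ML framework.

```python
import json
import statistics
from typing import Dict, List


def train(training_set: List[float]) -> Dict[str, float]:
    """'Train' a trivial model: learn the mean and spread of normal readings."""
    return {
        "mean": statistics.mean(training_set),
        "stdev": statistics.stdev(training_set),
    }


def package(model: Dict[str, float]) -> str:
    """Serialize the model to an artifact that can be shipped to the edge."""
    return json.dumps(model)


def infer(artifact: str, reading: float, max_sigma: float = 3.0) -> bool:
    """Edge side: load the artifact and flag readings far from the learned mean."""
    model = json.loads(artifact)
    return abs(reading - model["mean"]) > max_sigma * model["stdev"]


artifact = package(train([20.0, 21.0, 19.0, 20.5, 19.5]))
print(infer(artifact, 20.2))  # False
print(infer(artifact, 35.0))  # True
```

The point of the sketch is the separation of roles: training produces an artifact, and the edge only needs the artifact plus code that knows how to read it.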

The implementation of our second tool, the ML library and model, is delivered by Amazon SageMaker. SageMaker is Amazon's suite of services for the ML developer. Included are services for preparing data for use in training, building models with built-in or custom algorithms, tuning models, and managing models as application programming interface (API) endpoints or deploying them wherever they need to run. You can even train a model without any prior experience, and SageMaker will analyze your dataset, select an algorithm, build a set of tuned models, and tell you which one is the best fit against your data.

For some AI use cases, such as forecasting numerical series and interpreting human handwriting as text, Amazon offers purpose-built services that have already solved the heavy lifting of training ML models. Please note that the teaching of data science is beyond the scope of this book. We will use popular ML frameworks and algorithms common for delivering outcomes in IoT solutions. We will also provide justification for frameworks and algorithms when we use them to give you some insight into how we arrived at choosing them. We will introduce the concept of deploying ML resources to the edge in Chapter 4, Extending the Cloud to the Edge, and dive deeper into ML workloads in Chapter 7, Machine Learning Workloads at the Edge, and Chapter 9, Fleet Management at Scale.

Communicating with the edge

The third tool is the methodology for communicating with the edge solution. This includes deploying the software and updates to the edge hardware and the mechanism for exchanging data bidirectionally. Since the kinds of edge solutions that this book covers are those that interoperate with the cloud, a means of transmitting data and commands between the edge and the cloud is needed. This could be any number of Open Systems Interconnection (OSI) model Layer 7 protocols, with common examples in IoT being HyperText Transfer Protocol (HTTP), Message Queuing Telemetry Transport (MQTT), and Constrained Application Protocol (CoAP).
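Whichever protocol carries the traffic, both sides need to agree on where messages go and what they contain. The following sketch shows one possible shape for that agreement using only the Python standard library. The topic scheme, field names, and the `build_telemetry_message` and `parse_command` helpers are assumptions made for illustration, and no network client is involved.

```python
import json
from typing import Any, Dict, Tuple


def build_telemetry_message(
    device_id: str, sensor: str, value: float, timestamp: int
) -> Tuple[str, str]:
    """Return a (topic, payload) pair for an edge-to-cloud telemetry message."""
    topic = f"devices/{device_id}/telemetry/{sensor}"  # hypothetical topic scheme
    payload = json.dumps(
        {"deviceId": device_id, "sensor": sensor, "value": value, "ts": timestamp},
        sort_keys=True,
    )
    return topic, payload


def parse_command(payload: str) -> Dict[str, Any]:
    """Decode a cloud-to-edge command and check that it names an action."""
    command = json.loads(payload)
    if "action" not in command:
        raise ValueError("command is missing an 'action' field")
    return command


topic, payload = build_telemetry_message("hub-001", "temperature", 21.5, 1700000000)
print(topic)  # devices/hub-001/telemetry/temperature
print(parse_command('{"action": "furnace_on"}')["action"])  # furnace_on
```

In a publish/subscribe protocol such as MQTT, a hierarchical topic like this one lets subscribers select messages by device or by sensor type.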

The AWS IoT suite of services fits the needs here and acts as a bridge between the IoT Greengrass solution running at the edge and the ML capabilities we will use in Amazon SageMaker. IoT Core is a service that provides a scalable device gateway and message broker for both HTTP and MQTT protocols. It will handle the cloud connectivity, authentication and authorization, and routing of messages between the edge and the cloud. IoT Device Management is a service for operating fleets of devices at scale. It will help us define logical groupings of edge devices that will run the same software solutions and deploy updates to our fleet. Most chapters in this book will rely on tools such as these, and there is a focus on the scale of fleet management in Chapter 8, DevOps and MLOps for the Edge, and Chapter 9, Fleet Management at Scale.

With these tools in mind, we will next explore the markets, personas, and use cases where edge-to-cloud solutions with ML capabilities are driving the most demand.

Demand for smart home and industrial IoT

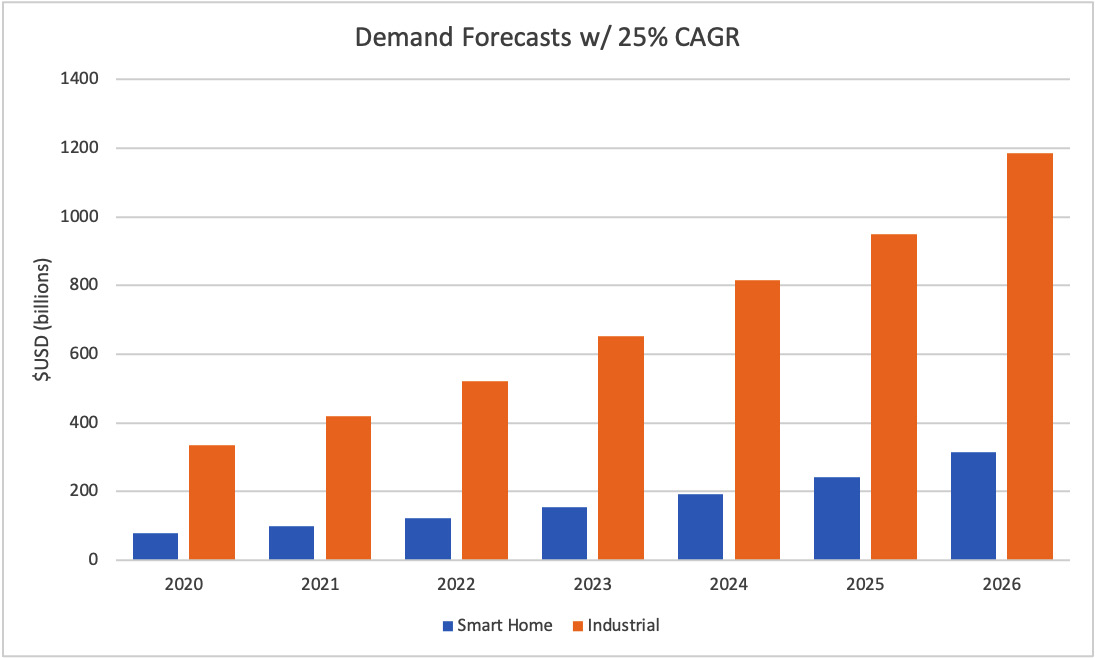

Market trends and analysis point to steep growth in the IoT industry, particularly in the industrial IoT segment. The 2020 Mordor Intelligence report Smart Homes Market – Growth, Trends, COVID-19 Impact, and Forecasts (2021-2026) projects the smart home market to grow from 79 billion US dollars (USD) in 2020 to reach $313 billion by 2026. Similarly, the 2019 Grand View Research report Industrial Internet Of Things Market Size, Share & Trends Analysis Report By Component (Solution, Services, Platform) By End Use (Manufacturing, Logistics & Transport), By Region, And Segment Forecasts, 2019-2025 projects the industrial IoT market to grow from $214 billion in 2018 to reach $949 billion by 2025. In both studies, the estimated compound annual growth rate (CAGR) is approximately 25-30%. That means there are big opportunities for new products, solutions, and services to find success with businesses and end consumers.

You can see a depiction of market forecasts for smart home and industrial IoT here:

Figure 1.7 – Market forecasts for smart home and industrial IoT
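As a sanity check on growth figures like the ones quoted above, the CAGR implied by two endpoints can be computed directly. The sketch below uses the market sizes from the text; the year spans (6 and 7 years) are our assumption based on the quoted start and end years, and the reports themselves may compute CAGR over a different base period.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1 / years) - 1


# Endpoints as quoted in the text (USD billions); year spans are assumptions.
smart_home = cagr(79, 313, years=6)       # 2020 -> 2026
industrial_iot = cagr(214, 949, years=7)  # 2018 -> 2025

print(f"Smart home: {smart_home:.1%}")
print(f"Industrial IoT: {industrial_iot:.1%}")
```

With these endpoints, the implied rates come out at roughly 26% and 24% per year. Small differences from a published CAGR are expected when the report uses a different base year or forecast window.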

It's important to keep in mind that forecasts are just that: forecasts. The only way those forecasts become reality is if inventors and problem solvers such as you and I get excited and make stuff! The key to understanding the future of smart home and industrial IoT solutions is how they are influenced by the value propositions of complete edge-to-cloud patterns and local ML inferencing. We can reflect on the key benefits of bringing ML to the edge to see how solutions in these markets are ripe for innovation.

Smart home use cases

In smart home solutions, the standard for functionality is oriented around environmental monitoring (temperature/electrical consumption/illuminance), automating state changes (turn this on in the morning and off at night), and introducing convenience where it was not previously possible (turn on the air conditioning when you are on your way home).

The primary persona using the product is the end consumer who lives in the residence where the solution is deployed. Secondary personas are guests of the owner, pets, public utilities, and home security service providers. At the product design level, the chief stakeholders are the IoT architect, security engineer, device manufacturer, and data scientist. Smart home products have been exploring and enjoying critical success when tapping into the power of AI and ML hosted in the cloud.

Here are three ways that deploying ML capabilities to the edge can benefit smart home use cases:

- Voice-assisted interfaces: Smart voice assistants such as Amazon's Alexa rely on the cloud to perform speech-to-text routines in order to process commands and generate audio responses. Running speech recognition models at the edge can help keep some common commands available for consumers even when the network is unavailable. Training models for recognition of who is speaking and incorporating that in responses increases the personalization factor and could make these voice assistants feel even more believable.

- Home security: Recognizing a breach of security has traditionally relied on binary sensors such as passive infrared for motion or magnetic proximity to detect open doors and windows. This simple mechanism can lead to false positives and undetected real security events. The next level of smart security will require complex event detection that analyzes multivariate inputs and confidence scores from trained models. Local models can evaluate whether the consumer is home or away automatically, and the solution can use that to calibrate sensitivity to events and escalate notifications of events. Video camera feeds are a classic example of a high data rate use case that becomes significantly cheaper to use with local processing for determining which clips to upload to the cloud for storage and further processing.

- Sustainability and convenience: Simple thermostats that maintain a temperature threshold are limited to recognizing when the threshold is breached and reacting by engaging a furnace or air conditioning system. Conventional smart home automation improves on this by reading weather forecasts, building a schedule profile of who is present in the home, and obeying rules for economical operation. ML can take us even further by analyzing a wider variety of inputs to determine via a recommendation engine how to achieve personal comfort targets most sustainably. For example, an ML model might identify and tell us that for your specific home, the most sustainable way to cool off in the evenings is to run the air conditioning in 5-minute bursts over 2 hours instead of frontloading for 30 minutes.
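To illustrate the complex event detection described in the home security use case, here is a minimal sketch that combines several confidence scores and adjusts sensitivity based on whether anyone is home. The weights, thresholds, and signal names are hypothetical values chosen for the example; a real product would learn or tune them.

```python
from typing import Dict

# Hypothetical per-signal weights; a real system would learn or tune these.
WEIGHTS = {"motion": 0.3, "door_open": 0.3, "person_detected": 0.4}


def intrusion_score(confidences: Dict[str, float]) -> float:
    """Weighted combination of per-signal confidence scores in [0, 1]."""
    return sum(WEIGHTS[name] * confidences.get(name, 0.0) for name in WEIGHTS)


def should_alert(confidences: Dict[str, float], occupants_home: bool) -> bool:
    """Use a stricter threshold when someone is home to reduce false positives."""
    threshold = 0.8 if occupants_home else 0.5
    return intrusion_score(confidences) >= threshold


event = {"motion": 1.0, "door_open": 1.0, "person_detected": 0.2}
print(should_alert(event, occupants_home=True))   # False
print(should_alert(event, occupants_home=False))  # True
```

The same motion and door events produce different outcomes depending on occupancy, which is the kind of calibration a single binary sensor cannot provide.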

Industrial use cases

In industrial verticals such as manufacturing, power and utilities, and supply chain logistics, the common threads to innovating are creating profitable new business models and reducing the costs of existing business models. When innovating with IT, businesses can achieve these goals through a better understanding of customer needs and of the operational data the business generates, which they can use to test new hypotheses. That understanding comes from using more of the existing data already collected and acquiring new streams of data needed to resolve hypotheses that lead to valuable new opportunities.

As per the 2015 McKinsey Global Institute report Unlocking the potential of the Internet of Things, only 1% of data collected by a business's IoT sensors is examined. The challenge to using the data is making it accessible to the systems and people that can get value from it. Data has little value when it is ingested at the edge but stored in an on-premises silo that can't afford to ship it to the cloud for analysis. This is where today's edge solutions can turn data into actionable insights with local compute and ML.

Here are three use cases for ML at the edge in industrial IoT settings:

- Predictive maintenance: Industrial businesses invest in and deploy expensive machinery to perform work. This machinery, such as a sheet metal press, computer numerical control (CNC) router, or an excavator, only performs optimally for so many duty cycles before a maintenance operation is needed or, worse, before it experiences a failure while on the job. The need to keep machinery in top condition while minimizing downtime and expenses on unnecessary maintenance is a leading use case for industrial IoT and edge solutions. Training models and deploying them at the edge for predictive maintenance detection not only saves businesses from expensive downtime events but builds on the benefits of local ML by ensuring smooth operations in remote environments without high-speed or consistent network access.

- Safety and security: The physical safety and security of employees should be the top concern for any business. Safety first, as it goes. ML-powered edge solutions raise the bar on workplace safety with applications such as computer vision (CV) models to detect when an employee is about to enter a hazardous environment without the required safety equipment, such as a hard hat or safety vest. Similar solutions can also be used to detect when unauthorized personnel are entering (or trying to enter) a restricted area. When it comes to human safety, latency and availability are paramount, so running a fully functional solution at the edge means bringing the ML capabilities with it.

- Quality assurance: When a human operator is inspecting a component or finished product on a manufacturing line, they know which aspects of quality to inspect based on a trained reference (every batch of cookies should taste like this cookie), comparison to a specification (thickness, sheen, strength of aluminum foil), or human perception (do these two blocks of wood have reasonably similar wood grain to be used together?). ML innovates how manufacturers, for example, can capture the intuition of human quality inspectors to increase the scale and precision of their operations. With sensors such as cameras and CV models deployed to the manufacturing environment, it is feasible to inspect every component or final product (instead of an arbitrary sample) with a statistically consistent evaluation applied every time. This also brings a benefit to quality assurance (QA) teams by shifting the focus to inspecting solution performance instead of working on highly repetitive tasks dependent upon rapid subjective analysis. In other words, I'd rather QA a sample of the 10,000 items passing inspection from an ML solution than every one of those 10,000 items. Running such a solution at the edge delivers on the key benefits of reducing overall data sent to the cloud and minimizing latency for the solution to produce results.

These use cases across smart homes and industry highlight the benefits that can be achieved with ML-powered edge solutions. Lofty forecasts on market growth in IoT are more likely to become reality if there are more developers out there bringing innovative new edge solutions to life! Let's review the smart home solution (and gratis product idea for someone out there to build) that will drive the hands-on material throughout this book.

Setting the scene: A modern smart home solution

The solution you will construct over the chapters of this book is one that models a gateway device for a modern smart home solution. That means we will use the context of a smart home hub for gathering sensor data, analyzing and processing that data, and controlling local devices as functions of detected events, schedules, and user commands. We selected the smart home context as the basis of our solution throughout the book because it is simple to understand and we anticipate many of our readers have read about or personally interacted with smart home controllers. That enables us to use the context of the smart home as a trope to rapidly move through the hands-on chapters and get to the good stuff. If your goals for applying the skills learned in this book reach into other domains, such as industrial IoT, worry not; the technologies and patterns used in this book are applicable beyond the smart home context.

Now, it's time to put on your imagination hat while we dive deeper into the scenario driving our new smart home product! Imagine you are an employee of Home Base Solutions, a company that specializes in bringing new smart home devices to market. Home Base Solutions delivers best-in-class features for customers outfitting their home with smart products for the first time or for experienced customers looking for better service by replacing an older smart home system.

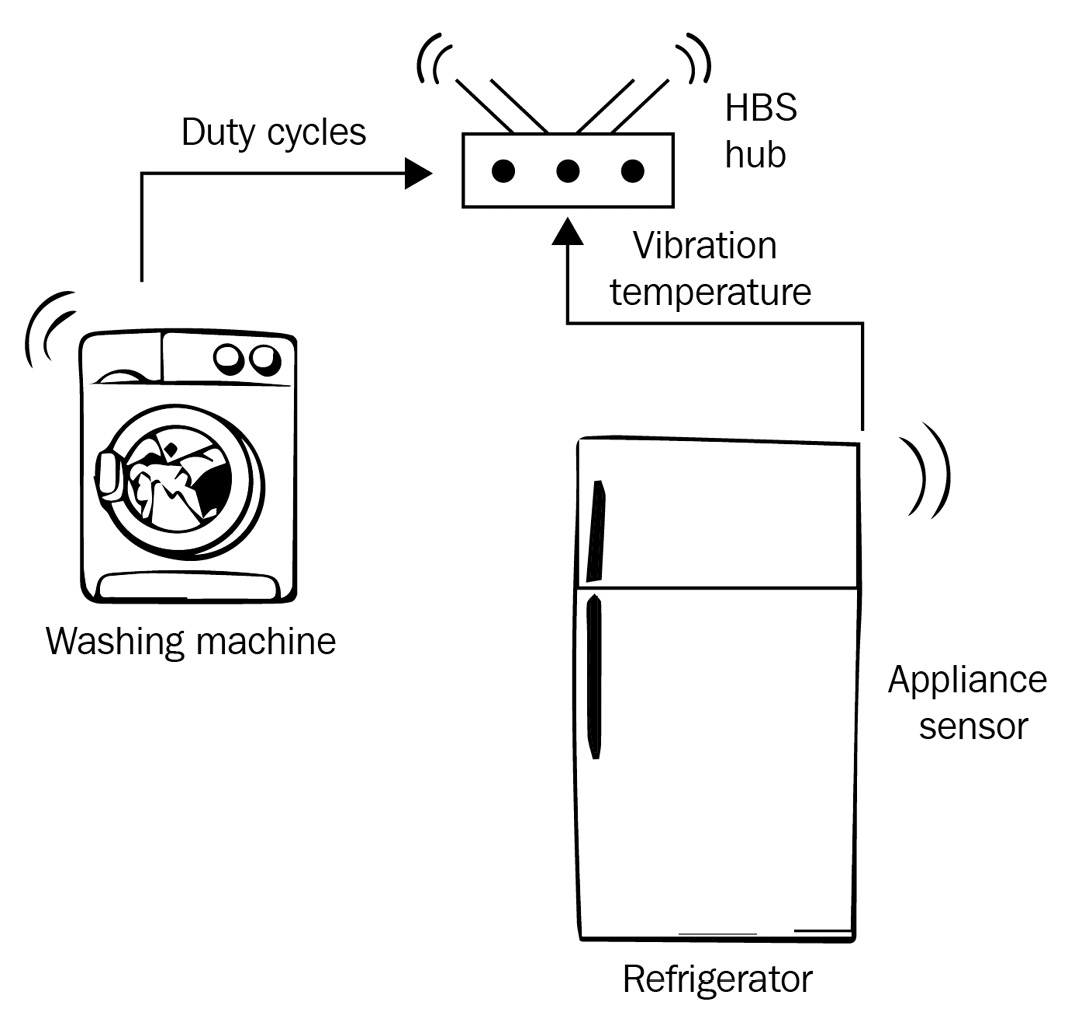

For the next holiday season, Home Base Solutions wants to release a new smart home hub that offers customers something they haven't seen before: a product that includes sensors for monitoring the health of their existing large appliances (such as a furnace or dishwasher) and uses ML to recommend to owners when maintenance is needed. A maintenance recommendation is served when the ML model detects an anomaly in the data from the attached appliance monitoring kit. This functionality must continue to work even when the public internet connection is down or congested, so the ML component cannot operate exclusively on a server in the cloud.

You can see an illustration of the Home Base Solutions appliance monitoring kit here:

Figure 1.8 – Whiteboard sketch of the Home Base Solutions appliance monitoring kit
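To make the idea of an anomaly-driven maintenance recommendation more concrete, here is a minimal sketch in Python. It is not the model built later in this book; it swaps in a simple rolling z-score check as a stand-in for a trained ML model, and the class name, window size, threshold, and sample readings are all assumptions chosen for illustration. What it does show is that the check needs only data already held on the device, so it keeps working without an internet connection.

```python
from collections import deque
from statistics import mean, pstdev


class RollingAnomalyDetector:
    """Flags readings that deviate sharply from a recent window of values.

    Hypothetical stand-in for a trained ML model; runs entirely on-device.
    """

    def __init__(self, window_size=20, threshold=3.0):
        self.window = deque(maxlen=window_size)  # keeps only the latest readings
        self.threshold = threshold               # z-score cutoff (assumed value)

    def is_anomaly(self, reading):
        """Return True if `reading` is far from the mean of the recent window."""
        anomalous = False
        # Only judge readings once the window holds a full baseline.
        if len(self.window) == self.window.maxlen:
            mu = mean(self.window)
            sigma = pstdev(self.window)
            if sigma > 0:
                anomalous = abs(reading - mu) / sigma > self.threshold
        # Learn only from normal readings so a fault does not skew the baseline.
        if not anomalous:
            self.window.append(reading)
        return anomalous


# Illustrative (made-up) temperature readings from an appliance monitoring kit.
detector = RollingAnomalyDetector(window_size=5, threshold=3.0)
readings = [20.0, 20.5, 19.5, 20.2, 19.8, 20.1, 45.0, 20.3]
flags = [detector.is_anomaly(r) for r in readings]
```

In this sketch, only the spike to 45.0 is flagged. A production model would be trained on real appliance data rather than relying on a fixed threshold.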

Your role in the company is the IoT architect, meaning you are responsible for designing the software solution that describes the E2E, edge-to-cloud model of data acquisition, ingestion, storage, analysis, and insight. It is up to you to design how to deliver upon the company's vision to incorporate ML technologies locally in the hub product such that there is no hard dependency on any remote service for continuous operation.

Being the architect also means you are responsible for selecting tools and designing how to operate a production fleet of these devices so that the customer service and fleet operations teams can manage customer devices at scale. You are not required to be a subject-matter expert (SME) on ML—that's where your team's data scientist will step in—but you should design an architecture that is compatible with feeding data to power ML training jobs and running built models on the hub device.

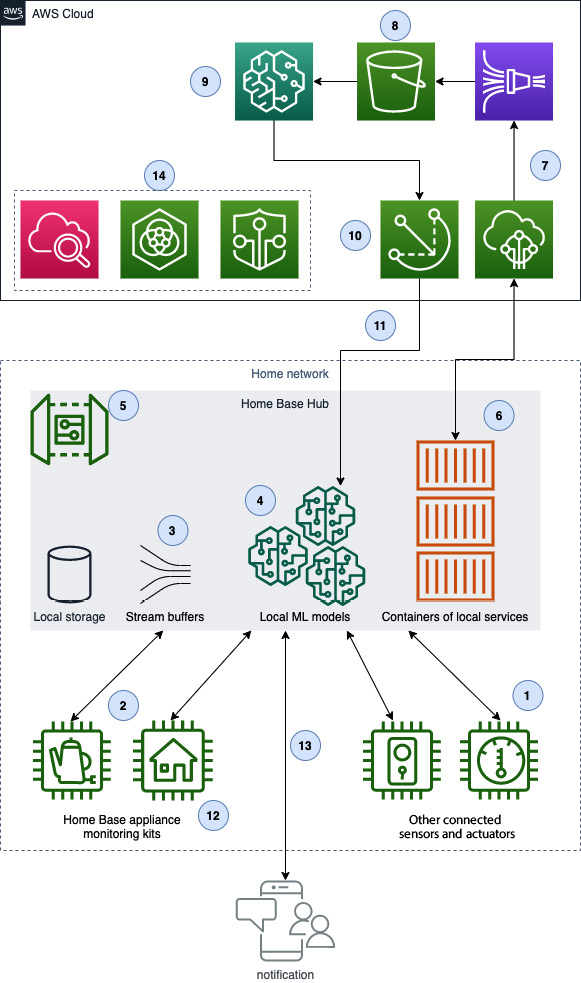

After spending a few weeks researching available software technologies, tools, solution vendors, and cloud services vendors, you decide to try out the following architecture using AWS:

Figure 1.9 – Solution architecture diagram for appliance monitoring kit

It's just a little bit complicated, right? Don't worry if none of this makes sense yet. We will spend the rest of the book's chapters going into depth on these tools: introducing them at the beginner level, showing the patterns to use in production, and explaining how to combine them to deliver outcomes. Each chapter will focus on one component or sub-section, and over time, we will work toward this total concept. The following is a breakdown of the individual components and their relationships:

- Sensors and actuators controlled by the smart home hub, such as lights and their on/off switches or dimmers. These will be emulated with the Raspberry Pi Sense HAT, your own hardware modules compatible with Raspberry Pi, or via software components.

- Home Base Solutions innovative home appliance monitoring kits. The streaming data from these kits will be implemented in this book as software components.

- Stream buffer running on the smart home hub used to process home appliance runtime data.

- ML models (one per home appliance) stored on the hub to invoke against incoming telemetry streamed from the home appliance monitoring kits.

- All of this runs within an edge software solution on the Home Base Solutions hub device, whose components are deployed and run by the IoT Greengrass Core software.

- Components running inside the IoT Greengrass edge solution exchange messages with the AWS cloud via MQTT and the AWS IoT Core service.

- The rules engine of AWS IoT Core enables light extract-transform-load (ETL) operations and forwarding of messages throughout the cloud side of the solution.

- Home appliance monitoring data is stored in Amazon Simple Storage Service (S3).

- Amazon SageMaker uses appliance monitoring data as inputs for training and retraining ML models.

- The cloud service of IoT Greengrass, using native features of IoT Device Management such as groups and jobs, deploys code and resources down to the fleet of smart home hubs.

- Trained ML models are deployed as resources back to the edge for local inferences.

- A local feedback mechanism, such as a light-emitting diode (LED) or speaker, signals to customers that an anomaly has been detected and that a maintenance activity is suggested.

- A network feedback mechanism, such as a push notification to a mobile application, signals to customers that a maintenance activity is suggested and can provide further context about the anomalous event.

- Customer support and fleet operations teams use a suite of tools such as Fleet Hub, Device Defender, and CloudWatch for monitoring the health of customer devices.
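The edge-side portion of this breakdown (stream buffer, one model per appliance, local feedback, and messages bound for the cloud) can be sketched in plain Python as follows. This is an illustration of the data flow only, under stated assumptions: it does not use the IoT Greengrass or AWS IoT Core APIs, and the class name, topic format, threshold model, and `publish` callback are all hypothetical.

```python
import json
from collections import deque


class HubPipeline:
    """Buffers telemetry, runs one model per appliance, queues cloud messages."""

    def __init__(self, models, buffer_size=100):
        # models: appliance name -> callable(list_of_readings) -> bool
        self.models = models
        self.buffers = {name: deque(maxlen=buffer_size) for name in models}
        self.outbox = deque()  # messages waiting for a cloud connection

    def ingest(self, appliance, reading):
        """Buffer a reading, run local inference, and return True on anomaly."""
        buffer = self.buffers[appliance]
        buffer.append(reading)
        anomaly = self.models[appliance](list(buffer))
        if anomaly:
            self.signal_locally(appliance)
        # Queue the message whether or not the hub is currently online.
        topic = f"homebase/{appliance}/telemetry"  # hypothetical topic name
        payload = json.dumps({"reading": reading, "anomaly": anomaly})
        self.outbox.append((topic, payload))
        return anomaly

    def signal_locally(self, appliance):
        """Stand-in for local feedback such as an LED or speaker."""
        print(f"Maintenance suggested for {appliance}")

    def flush(self, publish):
        """Send queued messages through `publish(topic, payload)`."""
        sent = 0
        while self.outbox:
            topic, payload = self.outbox[0]
            publish(topic, payload)   # if this raises, the message stays queued
            self.outbox.popleft()
            sent += 1
        return sent


def furnace_model(readings):
    """Toy model: flag the latest reading if it exceeds an assumed limit."""
    return readings[-1] > 90.0


pipeline = HubPipeline({"furnace": furnace_model})
results = [pipeline.ingest("furnace", r) for r in (70.0, 72.5, 95.0)]

published = []
sent = pipeline.flush(lambda topic, payload: published.append((topic, payload)))
```

Inference and local feedback happen in `ingest` regardless of connectivity. Only `flush` depends on a connection, which mirrors the requirement that the hub has no hard dependency on a remote service.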

By the end of this book, you will have built this entire solution and have the skills needed to apply a similar solution to your own business needs. The patterns and overall shape of the architecture stay consistent. The implementation details of specific devices, networks, and outcomes needed are what vary from project to project.

Hands-on prerequisites

In order to follow along with the hands-on portions of this book, you will need access to two computer systems.

Note

At the time of authoring, AWS IoT Greengrass v2 did not support Windows installation. The hands-on portions related to the edge solution are specific to Linux and do not run on Windows.

System 1: The edge device

The first system will be your edge device, also known as a gateway, since it will act as the proxy between one or more devices and the cloud component of the solution. In IoT Greengrass terminology, this is called a Greengrass core. This system must be a computer running a Linux operating system, or a virtual machine (VM) of a Linux system. The runtime software for AWS IoT Greengrass version 2 (v2) has a dependency on Linux at the time of this writing. The recommendation for this book is to use a Raspberry Pi (hardware version 3B or later) running the latest version of Raspberry Pi OS. Suitable alternatives include a Linux laptop/desktop, a virtualization product such as VirtualBox running a Linux image, or a cloud-hosted Linux instance such as Amazon Elastic Compute Cloud (EC2), Azure Virtual Machines, or DigitalOcean Droplets.

The Raspberry Pi is preferred because it provides the easiest way to interoperate with physical sensors and actuators. After all, we are building an IoT project! That being said, we will provide code samples to emulate the functionality of sensors and actuators for our readers who are using virtual environments to complete the hands-on sections. The recommended expansion kit to cover use cases for sensors and actuators is the Raspberry Pi Sense HAT. There are many kits out there of expansion boards and modules compatible with the Raspberry Pi. The use cases in this book could be accomplished or modified as necessary to fit what you have, though we will not cover alternatives beyond the software samples provided.

You can see a visual representation of the Raspberry Pi 3B with a Sense HAT expansion board here:

Figure 1.10 – Raspberry Pi 3B with Sense HAT expansion board

In order to keep the bill of materials (BOM) low for the solution, we are setting the border of edge communications at the gateway device itself. This means that there are no devices wirelessly communicating with the gateway in this book's solution, although a real-world implementation for the smart home product would likely use some kind of wireless communication.

If you are using the recommended components outlined in this section, you will have access to an array of sensors, buttons, and feedback mechanisms that emulate interoperation between the smart home gateway device and the connected devices installed around the home. In that sense, the communication between devices and the smart home hub becomes an implementation detail that is orthogonal to the software design patterns showcased here.

System 2: Command and control (C2)

The second system will be your C2 environment. This system can be a Windows-, Mac-, or Unix-based operating system from which you will install and use the AWS Command Line Interface (AWS CLI) to configure, update, and manage your fleet of edge solutions. IoT Greengrass supports a local development life cycle, so we will use the edge device directly (or via Secure Shell (SSH) from the second system) to get started, and then in later chapters move exclusively to the C2 system for remote operation.

Here is a simple list of requirements:

- An AWS account

- A user in the AWS account with administrator permissions

- First system (edge device):

  - Linux-based operating system such as Raspberry Pi OS or Ubuntu 18.x

  - Recommended: Raspberry Pi (hardware revision 3B or later)

  - Must be of architecture Armv7l, Armv8 (AArch64), or x86_64

  - 1 gigahertz (GHz) central processing unit (CPU)

  - 512 megabytes (MB) disk space

  - 128 MB random-access memory (RAM)

  - Keyboard and display (or SSH access to this system)

  - A network connection that can reach the public internet on Transmission Control Protocol (TCP) ports 80, 443, and 8883

  - sudo access for installing and upgrading packages via package manager

  - (optional) Raspberry Pi Sense HAT or equivalent expansion modules for sensors and actuators

- Second system (C2 system):

  - Windows-, Mac-, or Unix-based operating system

  - Keyboard and display

  - Python 3.7+ installed

  - AWS CLI v2.2+ installed

  - A network connection that can reach the public internet on TCP ports 80 and 443
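If you would like to verify some of the edge device requirements programmatically, the following sketch shows one possible approach using only the Python standard library. The function names are hypothetical, and the architecture strings assume that Python's `platform.machine()` reports `armv7l`, `aarch64`, or `x86_64` on the supported systems. The `port_reachable` helper is defined but not called here, because the host to test against (for example, your own account's endpoint for port 8883) is specific to your setup.

```python
import platform
import shutil
import socket

# Values taken from the requirements list; the names are illustrative.
SUPPORTED_ARCHITECTURES = {"armv7l", "aarch64", "x86_64"}
MIN_FREE_DISK_BYTES = 512 * 1024 * 1024  # 512 MB


def architecture_supported(machine):
    """Return True if the reported machine type is one of the listed ones."""
    return machine.lower() in SUPPORTED_ARCHITECTURES


def disk_space_sufficient(free_bytes):
    """Return True if at least 512 MB of disk space is free."""
    return free_bytes >= MIN_FREE_DISK_BYTES


def port_reachable(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def check_edge_device(path="/"):
    """Collect pass/fail results for the checks that need no network access."""
    return {
        "linux": platform.system() == "Linux",
        "architecture": architecture_supported(platform.machine()),
        "disk": disk_space_sufficient(shutil.disk_usage(path).free),
    }


report = check_edge_device()
for name, passed in report.items():
    print(f"{name}: {'ok' if passed else 'check manually'}")
```

A failed check here is a prompt to look closer, not a definitive verdict. CPU speed, RAM, and sudo access are not covered by this sketch.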

Note

If you are creating a new AWS account for this project, you will also need a credit card to complete the signup process. It is recommended to use a new developer account or sandbox account if provisioned by your company's AWS administrator. It is not recommended to experiment with new projects in any account running production services.

All of this is an exhaustive way of saying: if you have a laptop and a Raspberry Pi, you are likely ready to proceed! If you just have a laptop, you can still complete all of the hands-on exercises with a local VM at no additional cost.

Note

Installation instructions for Python and the AWS CLI vary per operating system. Setup for these tools is not covered in this book. See https://www.python.org and https://aws.amazon.com/cli/ for installation and configuration.
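Once both tools are installed on your C2 system, a short script like the following can confirm that the versions meet the requirements listed earlier. This is a sketch under assumptions: the function names are hypothetical, and the parsing assumes that `aws --version` prints a string beginning with `aws-cli/<major>.<minor>`, as in the sample strings used here.

```python
import re
import shutil
import subprocess
import sys


def parse_aws_cli_version(version_output):
    """Extract (major, minor) from text such as 'aws-cli/2.2.5 Python/3.8.8'."""
    match = re.search(r"aws-cli/(\d+)\.(\d+)", version_output)
    return (int(match.group(1)), int(match.group(2))) if match else None


def python_ok(version_info=sys.version_info):
    """Return True for Python 3.7 or later."""
    return tuple(version_info[:2]) >= (3, 7)


def aws_cli_ok(version_output):
    """Return True for AWS CLI v2.2 or later."""
    version = parse_aws_cli_version(version_output)
    return version is not None and version >= (2, 2)


def installed_aws_cli_version_output():
    """Return the text printed by `aws --version`, or '' if the CLI is missing."""
    if shutil.which("aws") is None:
        return ""
    result = subprocess.run(["aws", "--version"], capture_output=True, text=True)
    # Check both streams in case the version is written to stderr.
    return result.stdout or result.stderr


print("Python 3.7+:", python_ok())
print("AWS CLI 2.2+:", aws_cli_ok(installed_aws_cli_version_output()))
```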

Summary

You should now have a working definition of the edge of computing topology and the components of edge solutions such as sensors, actuators, and compute capability. You should be able to identify the value proposition of ML technology for smart home and industrial use cases running at the edge. We created an imaginary company, scoped a new product launch, and described the overall architecture of the solution you will deliver throughout the rest of this book.

In the next chapter, you will take your first steps toward developing the edge solution by learning how to orchestrate code on your edge device with AWS IoT Greengrass. If your two hands-on systems meet the prerequisites and your AWS account is set up, you are ready to go!

Knowledge check

Before moving on to the next chapter, test your knowledge by answering these questions. The answers can be found at the end of the book:

- What's the difference between a cyber-physical solution and an edge solution?

- At the time it was invented, the automobile was a self-contained mechanical entity, not a cyber-physical solution or an edge solution. At some point in the evolution of the automobile, it started meeting the definition of a cyber-physical solution, and then again meeting the definition of an edge solution. What are the characteristics of automobiles we can find today that meet our definition of an edge solution?

- Has the telephone always been a cyber-physical solution? Why or why not?

- What are the common components of an edge solution?

- What are the three primary types of tools needed to deliver intelligence workloads at the edge?

- What are the four key benefits in edge-to-cloud workloads that can be achieved with ML models running at the edge?

- Who is the primary persona at the heart of any smart home solution?

- Can you identify one more use case for the smart home vertical that ties in with one or more of the key benefits of ML-powered edge solutions?

- Who is the primary persona at the heart of any industrial solution?

- Can you identify one more use case for any industrial vertical that ties in with one or more of the key benefits of ML-powered edge solutions?

- Is the IoT architect of an ML-powered edge solution typically responsible for the performance accuracy (for example, confidence scores for a prediction) of the models deployed? Why or why not?

References

Take a look at the following resources for additional information on the concepts discussed in this chapter:

- Erich Gamma, Richard Helm, Ralph Johnson, and John Vlissides. 1995. Design Patterns: Elements of Reusable Object-Oriented Software. Addison-Wesley Longman Publishing Co., Inc., USA.

- Smart Homes Market – Growth, Trends, COVID-19 Impact, and Forecasts (2021-2026):

https://www.mordorintelligence.com/industry-reports/global-smart-homes-market-industry

- Industrial Internet Of Things Market Size, Share & Trends Analysis Report By Component, (Solution, Services, Platform), By End Use (Manufacturing, Logistics and Transport), By Region, And Segment Forecasts, 2019-2025:

https://www.grandviewresearch.com/industry-analysis/industrial-internet-of-things-iiot-market

- Unlocking the potential of the Internet of Things:

- The 17 Goals, UN website: