3

Multirate Elastic Adaptive Loss Models

We consider multirate loss models of random arriving calls with fixed bandwidth requirements and elastic bandwidth allocation during service. We consider two types of calls, elastic and adaptive. Elastic calls that can reduce their bandwidth, while simultaneously increasing their service time, compose the so‐called elastic traffic (e.g., file transfer). Adaptive calls that can tolerate bandwidth compression, but their service time cannot be altered, compose the so‐called adaptive traffic (e.g., adaptive video).

3.1 The Elastic Erlang Multirate Loss Model

3.1.1 The Service System

In the elastic EMLM (E‐EMLM), we consider a link of capacity $C$ b.u. that accommodates elastic calls of $K$ different service classes. Calls of service class $k$ ($k = 1, \dots, K$) arrive in the link according to a Poisson process with an arrival rate $\lambda_k$ and request $b_k$ b.u. (peak‐bandwidth requirement). To introduce bandwidth compression, we permit the occupied link bandwidth $j$ to virtually exceed $C$ up to a limit of $T$ b.u. Suppose that a new call of service class $k$ arrives in the link while the link is in (macro‐) state $j$. Then, for call admission, we consider three cases [1]:

- (i) If $j + b_k \le C$, no bandwidth compression takes place and the new call is accepted in the system with its peak‐bandwidth requirement for an exponentially distributed service time with mean $\mu_k^{-1}$. In that case, all in‐service calls continue to have their peak‐bandwidth requirement.
- (ii) If $j + b_k > T$, the call is blocked and lost without further affecting the system.
- (iii) If $T \ge j + b_k > C$, the call is accepted in the system by compressing its peak‐bandwidth requirement, as well as the assigned bandwidth of all in‐service calls (of all service classes). After compression, all calls (both in‐service and new) share the capacity $C$ in proportion to their peak‐bandwidth requirement, while the link operates at its full capacity $C$. This is in fact the so‐called processor sharing discipline [2].
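The three admission cases can be sketched as a small classifier. This is a minimal illustration only: the function name `admit` and its return labels are hypothetical, and the arguments stand for the occupied bandwidth, the peak requirement of the arriving call, the link capacity, and the virtual capacity limit.

```python
def admit(j: int, b_k: int, C: int, T: int) -> str:
    """Classify an arriving call that requests b_k b.u. while j b.u. are occupied.

    C is the real link capacity and T >= C the virtual limit (assumed inputs).
    """
    if not (0 < C <= T):
        raise ValueError("expected 0 < C <= T")
    j_new = j + b_k                      # occupancy if the call were accepted
    if j_new <= C:
        return "accept"                  # case (i): peak bandwidth, no compression
    if j_new > T:
        return "block"                   # case (ii): call is lost
    return "accept_compressed"           # case (iii): C < j + b_k <= T
```

For instance, with `C = 3` and `T = 5`, a call with `b_k = 1` arriving in macro‐state `j = 3` falls under case (iii).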

When $T \ge j + b_k > C$, the compressed bandwidth $b'_k$ of the newly accepted call of service‐class $k$ is given by [1]:

$$b'_k = r b_k = \frac{C}{j'} b_k \qquad (3.1)$$

where $j' = j + b_k$ and $r \equiv r(\mathbf{n})$ denotes the compression factor.

Since $j = \sum_{k=1}^{K} n_k b_k = \mathbf{nb}$, where $n_k$ is the number of in‐service calls of service‐class $k$ (in the steady state), $\mathbf{n} = (n_1, n_2, \dots, n_K)$ and $\mathbf{b} = (b_1, b_2, \dots, b_K)^T$, the values of $r$ can be expressed by:

$$r \equiv r(\mathbf{n}) = \min\left(1, \frac{C}{j}\right) \qquad (3.2)$$

To keep the product of service time and bandwidth per call constant (when bandwidth compression occurs), the mean service time of the new service‐class $k$ call becomes ${\mu'_k}^{-1}$:

$${\mu'_k}^{-1} = \frac{b_k}{b'_k}\,\mu_k^{-1} = \frac{\mu_k^{-1}}{r} \qquad (3.3)$$

The compressed bandwidth of all in‐service calls changes to $b'_i = r b_i$ for $i = 1, \dots, K$ and $T \ge j + b_k > C$. Similarly, their remaining service time increases by a factor of $1/r$. The minimum bandwidth given to a service‐class $k$ call is:

$$b'_{k,\min} = r_{\min} b_k = \frac{C}{T} b_k \qquad (3.4)$$

where $r_{\min} = C/T$ and is common for all service‐classes.

Note that increasing $T$ decreases $r_{\min}$ and increases the delay (service time) of service‐class $k$ calls (compared to the initial service time $\mu_k^{-1}$). Thus, $T$ should be chosen so that this delay remains within acceptable levels.
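The effect of compression on bandwidth and service time can be illustrated numerically. The sketch below assumes the common compression factor is the ratio of the capacity to the occupied bandwidth after acceptance (capped at 1) and that service time stretches by its inverse; `compress` and `min_bandwidth` are hypothetical helper names, and exact fractions are used to avoid rounding.

```python
from fractions import Fraction

def compress(j_new, peak, C, T):
    """Return (r, compressed bandwidths, service-time stretch) for occupancy j_new.

    j_new is the virtually occupied bandwidth after the new call is accepted.
    """
    if not (0 < j_new <= T):
        raise ValueError("j_new must satisfy 0 < j_new <= T")
    r = min(Fraction(1), Fraction(C, j_new))   # common compression factor
    return r, [r * bk for bk in peak], 1 / r   # bandwidth shrinks, time stretches

def min_bandwidth(peak, C, T):
    """Smallest bandwidth a call can ever receive, reached when j_new = T."""
    return [Fraction(C, T) * bk for bk in peak]
```

With `C = 3`, `T = 5` and peak requirements `[1, 2]`, an occupancy of 4 b.u. gives a factor of 3/4, so the product of bandwidth and service time per call stays constant. Raising `T` lowers the floor returned by `min_bandwidth` and lengthens the worst‐case delay.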

When an in‐service call, with compressed bandwidth $b'_k$, completes its service and departs from the system, the remaining in‐service calls expand their bandwidth to $b'_i$ ($i = 1, \dots, K$) in proportion to their peak‐bandwidth $b_i$, as follows:

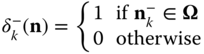

In terms of the system's state‐space $\mathbf{\Omega} = \{\mathbf{n} : 0 \le \mathbf{nb} \le T\}$, the CAC is expressed as follows. A new call of service‐class $k$ is accepted in the system if the system is in state $\mathbf{n} \in \mathbf{\Omega}_{\{k\}}$ upon a new call arrival, where $\mathbf{\Omega}_{\{k\}} = \{\mathbf{n} \in \mathbf{\Omega} : \mathbf{nb} \le T - b_k\}$. Hence, the CBP of service‐class $k$ is determined by the state space $\mathbf{\Omega} - \mathbf{\Omega}_{\{k\}}$:

$$B_{Tk} = \sum_{\mathbf{n} \in (\mathbf{\Omega} - \mathbf{\Omega}_{\{k\}})} P(\mathbf{n}) \qquad (3.6)$$

where $P(\mathbf{n})$ is the steady state probability of state $\mathbf{n}$.
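As an illustration of the state‐space view of call admission, the following sketch enumerates the permissible states and the blocking states of a service‐class, then sums a given distribution over the blocking states. The helper names are hypothetical. The parameters used in the usage note (a limit of 5 b.u. and peak requirements of 1 and 2 b.u.) are assumptions chosen to be consistent with the 12 states mentioned for Example 3.3.

```python
from itertools import product

def occupied(n, b):
    """Virtually occupied bandwidth nb of state n."""
    return sum(nk * bk for nk, bk in zip(n, b))

def state_space(b, T):
    """All states n with 0 <= nb <= T."""
    ranges = (range(T // bk + 1) for bk in b)
    return [n for n in product(*ranges) if occupied(n, b) <= T]

def blocking_states(b, T, k):
    """States in which an arriving class-k call does not fit below T."""
    return [n for n in state_space(b, T) if occupied(n, b) > T - b[k]]

def cbp(P, b, T, k):
    """Call blocking probability of class k for a distribution P (dict: state -> prob)."""
    return sum(P[n] for n in blocking_states(b, T, k))
```

Calling `state_space((1, 2), 5)` returns 12 states; three of them block the first service‐class and six block the second. The cost of this direct approach grows quickly with the number of states, which is why a recursion over the occupied bandwidth is preferable.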

Unfortunately, the compression/expansion of bandwidth destroys the LB between adjacent states in the E‐EMLM, or, equivalently, it destroys the reversibility of the system's Markov chain, and therefore no PFS exists for the values of $P(\mathbf{n})$ (a fact that makes (3.6) inefficient). An efficient way to show that the Markov chain of the E‐EMLM is not reversible is to apply the so‐called Kolmogorov's criterion [3,4]: a Markov chain is reversible if and only if the product of transition probabilities along any loop of adjacent states is the same as that for the reversed loop.¹

To circumvent the non‐reversibility problem in the E‐EMLM, the compression factors $r(\mathbf{n})$ are replaced by the state‐dependent compression factors per service‐class $k$, $\varphi_k(\mathbf{n})$, which not only have a similar role to $r(\mathbf{n})$ but also lead to a reversible Markov chain [5]. Thus, (3.1) becomes:

$$b'_k = \varphi_k(\mathbf{n})\, b_k \qquad (3.7)$$

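Reversibility facilitates the recursive calculation of the link occupancy distribution (see Section 3.1.2). To ensure reversibility, $\varphi_k(\mathbf{n})$ must have the form [5]:

$$\varphi_k(\mathbf{n}) = \begin{cases} 1, & \text{for } \mathbf{nb} \le C,\ \mathbf{n} \in \mathbf{\Omega} \\ \dfrac{x(\mathbf{n}_k^-)}{x(\mathbf{n})}, & \text{for } C < \mathbf{nb} \le T,\ \mathbf{n} \in \mathbf{\Omega} \\ 0, & \text{otherwise} \end{cases} \qquad (3.8)$$

where $\mathbf{n} = (n_1, n_2, \dots, n_k, \dots, n_K)$ and $\mathbf{n}_k^- = (n_1, n_2, \dots, n_k - 1, \dots, n_K)$.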

In (3.8), $x(\mathbf{n})$ is a state multiplier associated with state $\mathbf{n}$, whose values are chosen so that $\sum_{k=1}^{K} n_k b_k \varphi_k(\mathbf{n}) = C$ holds whenever $C < \mathbf{nb} \le T$, that is, $x(\mathbf{n})$ is given by [5]:

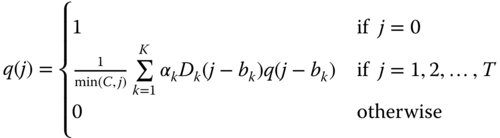
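The state multipliers and the state‐dependent compression factors can be computed recursively. The sketch below is one possible reading. It assumes that the multiplier equals 1 while no compression is needed and otherwise equals the sum over service‐classes of (calls × peak bandwidth × multiplier of the state with one call fewer), divided by the capacity. The factory name `make_factors` is hypothetical. The values `C = 3`, `T = 5` and peak requirements `(1, 2)` used in the usage note are inferred from Table 3.2 and should be treated as assumptions.

```python
from functools import lru_cache

def make_factors(b, C, T):
    """Return (x, phi): state multiplier and state-dependent compression factor."""
    def occ(n):
        return sum(nk * bk for nk, bk in zip(n, b))

    def minus(n, k):                       # state n with one class-k call removed
        return tuple(v - (i == k) for i, v in enumerate(n))

    @lru_cache(maxsize=None)
    def x(n):
        if min(n) < 0 or occ(n) > T:
            return 0.0                     # outside the state space
        if occ(n) <= C:
            return 1.0                     # no compression needed
        return sum(n[k] * b[k] * x(minus(n, k)) for k in range(len(b))) / C

    def phi(k, n):
        if min(n) < 0 or occ(n) > T:
            return 0.0
        if occ(n) <= C:
            return 1.0
        return x(minus(n, k)) / x(n)       # zero when n has no class-k call

    return x, phi
```

With `x, phi = make_factors((1, 2), 3, 5)`, the call `x((1, 2))` evaluates to about 1.7778 and `phi(1, (1, 2))` to 0.5625, in line with Table 3.2. The multiplier of state (3, 1) evaluates to 2, which the table prints as 1.9999 because of intermediate rounding.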

Example 3.2

In order to show that (3.8) ensures reversibility in the E‐EMLM, consider a system with two service‐classes and the following four adjacent states (depicted in Figure 3.2): ![](), ![](), and ![](). If we apply Kolmogorov's criterion (flow clockwise = flow counter‐clockwise) to the four adjacent states, we have ![](). By substituting the values of $\varphi_k(\mathbf{n})$ according to (3.8), we see that the previous equation holds, i.e., ![](), and therefore the Markov chain is reversible.
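Kolmogorov's criterion can also be checked numerically over every unit square of a two‐class state space. The sketch below compares the state‐dependent factors with the common compression factor. The capacity, limit and peak requirements are inferred from Table 3.2, the arrival and service rates are arbitrary placeholders (they cancel in the comparison), and all function names are hypothetical.

```python
from functools import lru_cache
from itertools import product
from math import isclose

C, T, b = 3, 5, (1, 2)                 # assumed: inferred from Table 3.2
lam, mu = (1.0, 1.0), (1.0, 1.0)       # assumed rates; they cancel in the check

def occ(n):
    return sum(nk * bk for nk, bk in zip(n, b))

def shift(n, k, d):
    return tuple(v + d * (i == k) for i, v in enumerate(n))

@lru_cache(maxsize=None)
def x(n):                              # state multiplier
    if min(n) < 0 or occ(n) > T:
        return 0.0
    if occ(n) <= C:
        return 1.0
    return sum(n[k] * b[k] * x(shift(n, k, -1)) for k in range(2)) / C

def phi(k, n):                         # state-dependent factor per service-class
    return 1.0 if occ(n) <= C else x(shift(n, k, -1)) / x(n)

def r(k, n):                           # common factor, same for every class
    return 1.0 if occ(n) <= C else C / occ(n)

def down(k, n, factor):                # class-k departure rate out of state n
    return n[k] * mu[k] * factor(k, n)

def kolmogorov_holds(factor):
    for n in product(range(T + 1), repeat=2):
        top = (n[0] + 1, n[1] + 1)
        if occ(top) > T:
            continue                   # square not fully inside the state space
        right, up = shift(n, 0, 1), shift(n, 1, 1)
        loop = lam[1] * lam[0] * down(1, top, factor) * down(0, right, factor)
        back = lam[0] * lam[1] * down(0, top, factor) * down(1, up, factor)
        if not isclose(loop, back, rel_tol=1e-12):
            return False
    return True
```

Under these assumptions `kolmogorov_holds(phi)` is true while `kolmogorov_holds(r)` is false: the square with corners (2, 0), (3, 0), (3, 1), (2, 1) already breaks the criterion for the common factor.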

Figure 3.2 State transition diagram of four adjacent states (Example 3.2).

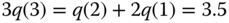

Example 3.3

Consider again Example 3.1 (![]()).

- (a) Draw the modified state transition diagram based on $\varphi_k(\mathbf{n})$ and determine the values of $\varphi_k(\mathbf{n})$ for each state $\mathbf{n} = (n_1, n_2)$.
- (b) Investigate if reversibility holds in the modified system.
- (c) Write the GB equations of the modified state transition diagram, and determine the values of $P(\mathbf{n})$ and the CBP of both service‐classes.

- (a) Figure 3.3 shows the modified state transition diagram of the system, while Table 3.2 presents the 12 states together with the corresponding values of $\varphi_k(\mathbf{n})$, which are calculated through the $x(\mathbf{n})$. (Comparing Figures 3.3 and 3.1, the compression factors $r(\mathbf{n})$ have been replaced by $\varphi_k(\mathbf{n})$, which are different per service‐class.)
- (b) If we apply Kolmogorov's criterion (flow clockwise = flow counter‐clockwise) between the four adjacent states (2, 1), (3, 1), (3, 0), and (2, 0), which form a square, then this criterion now holds (i.e., the Markov chain is reversible) since, after cancelling the common arrival and service rates, it reduces to $\varphi_2(3,1)\,\varphi_1(3,0) = \varphi_1(3,1)\,\varphi_2(2,1)$, which holds according to Table 3.2 ($0.50 \times 1.00 = 0.6667 \times 0.75$).
- (c) Based on Figure 3.3, we obtain the following 12 GB equations:

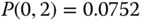

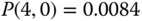

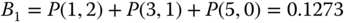

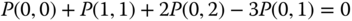

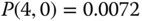

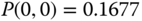

The solution of this linear system is:

Then, based on $P(\mathbf{n})$ and (3.6), we obtain the approximate values of CBP: ![]() for service‐class 1 (compare with the exact 0.1748) and ![]() for service‐class 2 (compare with the exact 0.3574).

Figure 3.3 The state space $\mathbf{\Omega}$ and the modified state transition diagram (Example 3.3).

Table 3.2 The values of the state dependent compression factors $\varphi_k(\mathbf{n})$ (Example 3.3).

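| $n_1$ | $n_2$ | $x(\mathbf{n})$ | $\varphi_1(\mathbf{n})$ | $\varphi_2(\mathbf{n})$ | $n_1$ | $n_2$ | $x(\mathbf{n})$ | $\varphi_1(\mathbf{n})$ | $\varphi_2(\mathbf{n})$ |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| 0 | 0 | 1.0 | 1.00 | 1.00 | 2 | 0 | 1.0 | 1.00 | 1.00 |
| 0 | 1 | 1.0 | 1.00 | 1.00 | 2 | 1 | 1.3333 | 0.75 | 0.75 |
| 0 | 2 | 1.3333 | 0.00 | 0.75 | 3 | 0 | 1.0 | 1.00 | 1.00 |
| 1 | 0 | 1.0 | 1.00 | 1.00 | 3 | 1 | 1.9999 | 0.6667 | 0.50 |
| 1 | 1 | 1.0 | 1.00 | 1.00 | 4 | 0 | 1.3333 | 0.75 | 0.00 |
| 1 | 2 | 1.7778 | 0.75 | 0.5625 | 5 | 0 | 2.2222 | 0.60 | 0.00 |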

3.1.2 The Analytical Model

3.1.2.1 Steady State Probabilities

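The steady state transition rates of the E‐EMLM are shown in Figure 3.4. According to this, the GB equation (rate in = rate out) for state $\mathbf{n} = (n_1, n_2, \dots, n_K)$ is given by:

$$\sum_{k=1}^{K} \lambda_k \delta_k^-(\mathbf{n}) P(\mathbf{n}_k^-) + \sum_{k=1}^{K} (n_k + 1)\mu_k \varphi_k(\mathbf{n}_k^+) \delta_k^+(\mathbf{n}) P(\mathbf{n}_k^+) = \sum_{k=1}^{K} \lambda_k \delta_k^+(\mathbf{n}) P(\mathbf{n}) + \sum_{k=1}^{K} n_k \mu_k \varphi_k(\mathbf{n}) \delta_k^-(\mathbf{n}) P(\mathbf{n}) \qquad (3.10)$$

where $\mathbf{n}_k^+ = (n_1, \dots, n_k + 1, \dots, n_K)$, $\delta_k^+(\mathbf{n})$ equals 1 if $\mathbf{n}_k^+ \in \mathbf{\Omega}$ and 0 otherwise, $\delta_k^-(\mathbf{n})$ equals 1 if $\mathbf{n}_k^- \in \mathbf{\Omega}$ and 0 otherwise, and $P(\mathbf{n})$, $P(\mathbf{n}_k^-)$, and $P(\mathbf{n}_k^+)$ are the probability distributions of the corresponding states $\mathbf{n}$, $\mathbf{n}_k^-$, and $\mathbf{n}_k^+$, respectively.

Figure 3.4 State transition diagram of the E‐EMLM.

Assume now the existence of LB between adjacent states. Equations (3.11) and (3.12) are the detailed LB equations which exist because the Markov chain of the modified model is reversible. Then, based on Figure 3.4, the following LB equations are extracted:

$$\lambda_k \delta_k^-(\mathbf{n}) P(\mathbf{n}_k^-) = n_k \mu_k \varphi_k(\mathbf{n}) \delta_k^-(\mathbf{n}) P(\mathbf{n}) \qquad (3.11)$$

$$\lambda_k \delta_k^+(\mathbf{n}) P(\mathbf{n}) = (n_k + 1)\mu_k \varphi_k(\mathbf{n}_k^+) \delta_k^+(\mathbf{n}) P(\mathbf{n}_k^+) \qquad (3.12)$$

for $k = 1, \dots, K$ and $\mathbf{n} \in \mathbf{\Omega}$.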

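Based on the LB assumption, the probability distribution $P(\mathbf{n})$ has the solution:²

$$P(\mathbf{n}) = \frac{x(\mathbf{n}) \prod_{k=1}^{K} \dfrac{\alpha_k^{n_k}}{n_k!}}{G} \qquad (3.13)$$

where $\alpha_k = \lambda_k / \mu_k$ is the offered traffic‐load in erl and $G$ is the normalization constant given by:

$$G \equiv G(\mathbf{\Omega}) = \sum_{\mathbf{n} \in \mathbf{\Omega}} \left( x(\mathbf{n}) \prod_{k=1}^{K} \frac{\alpha_k^{n_k}}{n_k!} \right) \qquad (3.14)$$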
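The state probabilities can be evaluated directly from the state multipliers. The following sketch assumes that each state's unnormalized weight is its multiplier times the usual product of load powers over factorials, and that the multiplier follows the recursion described for the state‐dependent compression factors. The function name `steady_state` is hypothetical. The offered loads of 1 erl per service‐class in the usage note are an illustrative assumption, not values taken from the text.

```python
from functools import lru_cache
from itertools import product
from math import factorial, prod

def steady_state(alpha, b, C, T):
    """Return {state: probability} for the modified (reversible) chain."""
    K = len(b)

    def occ(n):
        return sum(nk * bk for nk, bk in zip(n, b))

    @lru_cache(maxsize=None)
    def x(n):                                   # state multiplier
        if min(n) < 0 or occ(n) > T:
            return 0.0
        if occ(n) <= C:
            return 1.0
        prev = lambda k: tuple(v - (i == k) for i, v in enumerate(n))
        return sum(n[k] * b[k] * x(prev(k)) for k in range(K)) / C

    states = [n for n in product(*(range(T // bk + 1) for bk in b)) if occ(n) <= T]
    weight = {n: x(n) * prod(alpha[k] ** n[k] / factorial(n[k]) for k in range(K))
              for n in states}
    G = sum(weight.values())                    # normalization constant
    return {n: w / G for n, w in weight.items()}
```

For `steady_state((1.0, 1.0), (1, 2), 3, 5)`, summing the probabilities of the states with 5 occupied b.u. gives about 0.1701, and summing those with 4 or 5 occupied b.u. gives about 0.3604. These are the blocking probabilities of the two service‐classes under the assumed loads.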

Note that although the Markov chain has become reversible, the probability distribution $P(\mathbf{n})$ of (3.13) is not a PFS due to the summation of (3.9) needed for the determination of $x(\mathbf{n})$. We proceed by defining the link occupancy distribution, $q(j)$, as:

$$q(j) = \sum_{\mathbf{n} \in \mathbf{\Omega}_j} P(\mathbf{n}) \qquad (3.15)$$

where $\mathbf{\Omega}_j$ is the set of states in which exactly $j$ b.u. are occupied by all in‐service calls, i.e., $\mathbf{\Omega}_j = \{\mathbf{n} \in \mathbf{\Omega} : \mathbf{nb} = j\}$.

Consider now two different sets of macro‐states: (i) $0 \le j \le C$ and (ii) $C < j \le T$. For the first set, no bandwidth compression takes place and the values of $q(j)$ are determined by the classical Kaufman–Roberts recursion (1.39). For the second set, aiming at deriving a similar recursion, we first substitute (3.8) in (3.11) to obtain:

$$\alpha_k x(\mathbf{n}) P(\mathbf{n}_k^-) = n_k x(\mathbf{n}_k^-) P(\mathbf{n}), \quad C < \mathbf{nb} \le T \qquad (3.16)$$

Multiplying both sides of (3.16) by $b_k$ and summing over $k$, we have:

$$x(\mathbf{n}) \sum_{k=1}^{K} \alpha_k b_k P(\mathbf{n}_k^-) = P(\mathbf{n}) \sum_{k=1}^{K} n_k b_k x(\mathbf{n}_k^-), \quad C < \mathbf{nb} \le T \qquad (3.17)$$

Equation (3.17), due to (3.9), is written as:

$$\sum_{k=1}^{K} \alpha_k b_k P(\mathbf{n}_k^-) = C P(\mathbf{n}), \quad C < \mathbf{nb} \le T \qquad (3.18)$$

Summing both sides of (3.18) over $\mathbf{\Omega}_j$ and based on (3.15), we have:

$$\sum_{k=1}^{K} \alpha_k b_k \sum_{\mathbf{n} \in \mathbf{\Omega}_j} P(\mathbf{n}_k^-) = C \sum_{\mathbf{n} \in \mathbf{\Omega}_j} P(\mathbf{n}), \quad C < j \le T \qquad (3.19)$$

or

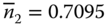

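The combination of (1.39) and (3.20) results in the recursive formula of the E‐EMLM [5]:

$$q(j) = \begin{cases} 1, & \text{if } j = 0 \\ \dfrac{1}{\min(j, C)} \displaystyle\sum_{k=1}^{K} \alpha_k b_k q(j - b_k), & j = 1, \dots, T \\ 0, & \text{otherwise} \end{cases} \qquad (3.21)$$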
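The recursion for the link occupancy distribution is straightforward to program. The sketch below assumes that each unnormalized value is a load‐weighted sum of earlier values divided by the smaller of the occupancy and the capacity, which combines the Kaufman–Roberts step below the capacity with the compressed region above it. The function name `q_eemlm` and the example loads are illustrative assumptions.

```python
def q_eemlm(alpha, b, C, T):
    """Unnormalized link occupancy distribution q(0..T) of the E-EMLM.

    alpha: offered loads (erl), b: peak bandwidths (b.u.),
    C: link capacity, T: virtual capacity limit (T >= C).
    """
    q = [0.0] * (T + 1)
    q[0] = 1.0
    for j in range(1, T + 1):
        total = sum(a * bk * q[j - bk] for a, bk in zip(alpha, b) if j - bk >= 0)
        q[j] = total / min(j, C)       # divide by j up to C, by C beyond it
    return q
```

With `alpha = (1.0, 1.0)`, `b = (1, 2)`, `C = 3` and `T = 5`, the result is approximately `[1, 1, 1.5, 1.1667, 1.3889, 1.2407]`. Setting `T = C` leaves only the Kaufman–Roberts part of the recursion.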

3.1.2.2 CBP, Utilization, and Mean Number of In‐service Calls

The following performance measures can be determined based on (3.21):

- The CBP of service‐class $k$, $B_{Tk}$, via:

  $$B_{Tk} = \sum_{j = T - b_k + 1}^{T} G^{-1} q(j) \qquad (3.22)$$

  where $G = \sum_{j=0}^{T} q(j)$ is the normalization constant.
- The link utilization, $U$, via (3.23):

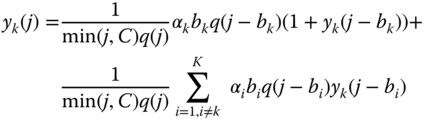

- The average number of service‐class $k$ calls in the system, $\bar{n}_k$, via:

  $$\bar{n}_k = \sum_{j=1}^{T} G^{-1} y_k(j)\, q(j) \qquad (3.24)$$

  where $y_k(j)$ is the average number of service‐class $k$ calls given that the system (macro‐)state is $j$, and is determined by [6]:

  $$y_k(j) = \frac{1}{\min(j, C)\, q(j)} \left[ \alpha_k b_k q(j - b_k)\left(y_k(j - b_k) + 1\right) + \sum_{i=1,\, i \ne k}^{K} \alpha_i b_i q(j - b_i)\, y_k(j - b_i) \right] \qquad (3.25)$$

  where $j = 1, \dots, T$, while $y_k(x) = 0$ for $x \le 0$ and $k = 1, \dots, K$.
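The performance measures listed above can be computed together from the occupancy recursion. The sketch below makes three assumptions: blocking is the normalized tail of the occupancy distribution over the last peak‐bandwidth's worth of b.u., the utilization counts the occupied bandwidth capped at the capacity, and the conditional mean number of calls follows a recursion over the occupancy that adds one call when the last accepted call belongs to the class of interest. The function name `measures` and the example loads are illustrative.

```python
def measures(alpha, b, C, T):
    """Return (CBP per class, link utilization in b.u., mean calls per class)."""
    K = len(b)
    q = [0.0] * (T + 1)
    q[0] = 1.0
    for j in range(1, T + 1):          # occupancy recursion
        q[j] = sum(alpha[i] * b[i] * q[j - b[i]]
                   for i in range(K) if j - b[i] >= 0) / min(j, C)
    G = sum(q)

    cbp = [sum(q[T - bk + 1:]) / G for bk in b]
    util = sum(min(j, C) * q[j] for j in range(1, T + 1)) / G

    # y[k][j]: mean number of class-k calls given that j b.u. are occupied
    y = [[0.0] * (T + 1) for _ in range(K)]
    for j in range(1, T + 1):
        if q[j] == 0.0:
            continue
        for k in range(K):
            acc = 0.0
            for i in range(K):
                m = j - b[i]
                if m >= 0:
                    acc += alpha[i] * b[i] * q[m] * (y[k][m] + (1.0 if i == k else 0.0))
            y[k][j] = acc / (min(j, C) * q[j])
    mean_calls = [sum(y[k][j] * q[j] for j in range(1, T + 1)) / G for k in range(K)]
    return cbp, util, mean_calls
```

A useful sanity check on the conditional means is that, for every occupancy `j`, the peak bandwidths weighted by the conditional mean number of calls must add up to `j`. For `alpha = (1.0, 1.0)`, `b = (1, 2)`, `C = 3`, `T = 5` the blocking probabilities come out near 0.1701 and 0.3604 and the utilization near 2.109 b.u.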

Example 3.4

Consider again Example 3.1 (![]()).

- (a) Calculate the (normalized) values of $q(j)$.
- (b) Calculate the CBP of both service‐classes.
- (c) Calculate the link utilization.
- (d) Calculate the mean number of in‐service calls of the two service‐classes in the system.

- (a) State probabilities through the recursion ( 3.21):

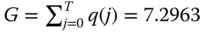

The normalization constant is

. The state probabilities are:

. The state probabilities are:

- (b) The CBP results of the E‐EMLM based on (3.22) are:

(For comparison, the CBP results of the EMLM are

and

and  , for

, for  b.u.)

b.u.) - (c) The link utilization based on (3.23) is:

b.u.

b.u. - (d) We firstly determine the values of

for

for  and

and  based on (3.25):

based on (3.25):

Then, we determine the mean number of in‐service‐calls based on (3.24):

Example 3.5

Consider a link of capacity ![]() b.u. that accommodates calls of $K = 2$ service‐classes with the following traffic characteristics: service‐class 1 ![]() erl, ![]() b.u., service‐class 2 ![]() erl, ![]() b.u. Assuming four different values of ![](), and 56 b.u., calculate the CBP $B_{T1}$ and $B_{T2}$ of the two service‐classes and the link utilization when ![]() and ![]() increase in steps of 2 erl (up to ![]() erl).

Figure 3.5 presents the CBP of both service‐classes while Figure 3.6 presents the link utilization for the various values of $T$. Note that when $T = C$ b.u., the system behaves as in the EMLM. On the $x$‐axis of both figures, point 1 refers to ![]() while point 8 refers to ![](). According to Figure 3.5, the CBP of both service‐classes decreases as the value of $T$ increases. This is expected, since the bandwidth compression mechanism lets calls enter the system with reduced (compressed) bandwidth. Similarly, the CBP reduction leads to an increase of the link utilization as a result of accepting more calls in the system.

Figure 3.5 CBP of both service‐classes in the E‐EMLM (Example 3.5).

Figure 3.6 Link utilization in the E‐EMLM (Example 3.5).

3.1.2.3 Relationship between the E‐EMLM and the Balanced Fair Share Model

In the literature, a similar model exists called the balanced fair share model [7,8], which aims to share the capacity of a single link among elastic calls already accepted for service according to a balanced fairness criterion, whereas the E‐EMLM focuses on call admission. In what follows, we show that the bandwidth compression mechanism of the E‐EMLM and the model of [7,8] provide the same bandwidth compression.

In [8], a link accommodates Poisson arriving calls of $K$ elastic service‐classes. The link capacity $C$ is shared among calls according to the balanced fairness criterion: when $\mathbf{nb} \le C$, all calls use their peak‐bandwidth requirement, while when $\mathbf{nb} > C$, all calls share the capacity $C$ in proportion to their peak‐bandwidth requirement and the link operates at its full capacity $C$. The main difference between the E‐EMLM and the model of [8] lies in the fact that in the E‐EMLM, the notion of $T$ allows for admission control, whereas there is no such parameter in [8]. The application of balanced fairness in multirate networks and its comparison with other classical bandwidth allocation policies, such as max–min fairness and proportional fairness, can be found in [9,10]. See Example 3.11 for a comparison between the approach of [9] and the compression mechanism of the E‐EMLM under a threshold policy.

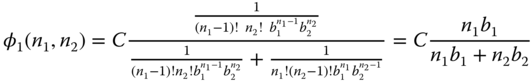

According to [8], the balanced fair sharing ![]() of all $n_k$ calls of service‐class $k$ in state $\mathbf{n}$ is given by:

where ![]() is a balance function defined by:

and ![]() is the unit line vector with 1 in the $k$th element and 0 elsewhere. According to (3.27), when ![](), (3.26) is written as [8]:

The balanced fair share model determines (through (3.28)) the total bandwidth which will be allocated to each service‐class, whereas the E‐EMLM determines (through (3.8)) the percentage of the peak‐bandwidth requirement which will be assigned to each call of each service‐class. This percentage has a limit which is defined through the $T$ parameter, while the absence of $T$ in the balanced fair share model means that there is no limitation in bandwidth compression. The relationship between the $\varphi_k(\mathbf{n})$ of the E‐EMLM and the ![]() of the balanced fair share model is ![](). To show this, let $K = 2$ service‐classes (for presentation purposes). Assuming that in state ![](), i.e., ![]() or ![](), calls of service‐class ![]() use their peak‐bandwidth requirements, the balanced fairness allocation gives:

Similarly,

So, in state $\mathbf{n}$, where $C < \mathbf{nb}$, the bandwidth allocated to a service‐class $k$ call is:

Based on (3.8) and (3.9), the E‐EMLM gives:

assuming that ![]() (i.e., no bandwidth compression takes place in state ![]()). Combining (3.31) with (3.32), we obtain ![]().

3.2 The Elastic Erlang Multirate Loss Model under the BR Policy

3.2.1 The Service System

We now consider the multiservice system of the E‐EMLM under the BR policy (E‐EMLM/BR): A new service‐class $k$ call is accepted in the link if, after its acceptance, the occupied link bandwidth $j \le T - t_k$, where $t_k$ refers to the BR parameter used to benefit (in CBP) calls of other service‐classes apart from $k$ (see also the EMLM/BR in Section 1.3.2).

In terms of the system state‐space $\mathbf{\Omega}$, the CAC is expressed as follows. A new call of service‐class $k$ is accepted in the system if the system is in state $\mathbf{n} \in \mathbf{\Omega}_{\{k\}}$ upon a new call arrival, where $\mathbf{\Omega}_{\{k\}} = \{\mathbf{n} \in \mathbf{\Omega} : \mathbf{nb} \le T - b_k - t_k\}$. Hence, the CBP of service‐class $k$ is determined by the state space $\mathbf{\Omega} - \mathbf{\Omega}_{\{k\}}$:

$$B_{Tk} = \sum_{\mathbf{n} \in (\mathbf{\Omega} - \mathbf{\Omega}_{\{k\}})} P(\mathbf{n}) \qquad (3.33)$$

As far as the compression factors and the state dependent compression factors per service‐class are concerned, they are determined by (3.2) and (3.8), respectively.

Example 3.6

Consider again Example 3.1 (![]()). Assume that ![]() and ![]() b.u., so that $b_1 + t_1 = b_2 + t_2$.

- (a) Draw the complete state transition diagram of the system and determine the values of ![]() and $r(\mathbf{n})$ for each state $\mathbf{n} = (n_1, n_2)$.
- (b) Write the GB equations, and determine the values of $P(\mathbf{n})$ and the exact CBP of both service‐classes.
- (c) Draw the modified state transition diagram based on $\varphi_k(\mathbf{n})$ and determine the values of $\varphi_k(\mathbf{n})$ for each state $\mathbf{n}$.
- (d) Write the GB equations of the modified state transition diagram, and determine the values of $P(\mathbf{n})$ and the CBP of both service‐classes.

- (a) Figure 3.7 shows the state space $\mathbf{\Omega}$ that consists of 11 permissible states $\mathbf{n} = (n_1, n_2)$ together with the complete state transition diagram of the system. The corresponding values of ![]() and $r(\mathbf{n})$ are exactly the same as those presented in Table 3.1 (ignore the last row); for the same state $\mathbf{n}$, obviously the same $r(\mathbf{n})$ is determined because it only depends on state $\mathbf{n}$.
- (b) Based on Figure 3.7, we obtain the following 11 GB equations:

The solution of this linear system is:

Then, based on the values of $P(\mathbf{n})$ and (3.33), we obtain the exact value of equalized CBP: $B_{T1} = B_{T2} = 0.3234$.

- (c) Figure 3.8 shows the modified state transition diagram. Similarly to the $r(\mathbf{n})$, the corresponding values of $\varphi_k(\mathbf{n})$ are exactly the same as those presented in Table 3.2 (ignore the last row) for the E‐EMLM. Although the E‐EMLM/BR differs from the E‐EMLM in respect of the state transition diagram (and the state space), they both have the same bandwidth compression parameters.
- (d) Based on Figure 3.8, we obtain the following 11 GB equations:

The solution of this linear system is:

Then, based on the values of $P(\mathbf{n})$ and (3.33), we obtain the value of equalized CBP: ![](), which is quite close to the exact value of 0.3234.

Figure 3.7 The state space $\mathbf{\Omega}$ and the state transition diagram (Example 3.6).

Figure 3.8 The state space $\mathbf{\Omega}$ and the modified state transition diagram (Example 3.6).

3.2.2 The Analytical Model

3.2.2.1 Link Occupancy Distribution

In the E‐EMLM/BR, the link occupancy distribution, $q(j)$, can be calculated in an approximate way, according to the Roberts method (see Section 1.3.2.2), which leads to the following recursive formula [11]:

$$q(j) = \begin{cases} 1, & \text{if } j = 0 \\ \dfrac{1}{\min(j, C)} \displaystyle\sum_{k=1}^{K} \alpha_k D_k(j - b_k)\, q(j - b_k), & j = 1, \dots, T \\ 0, & \text{otherwise} \end{cases} \qquad (3.34)$$

where $D_k(j - b_k) = b_k$ for $j \le T - t_k$ and $D_k(j - b_k) = 0$ for $j > T - t_k$.

This formula is similar to (1.64) of the EMLM/BR. If $t_k = 0$ for all $k$ ($k = 1, \dots, K$), then the E‐EMLM results. In addition, if $T = C$, then we have the classical EMLM.
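The approximate recursion under the BR policy differs from the plain E‐EMLM recursion only in that a service‐class stops contributing to occupancies inside its reserved region. The sketch below assumes that the contribution of a class is dropped whenever the target occupancy exceeds the limit minus that class's reservation. The function name `q_eemlm_br` and the example parameters (loads of 1 erl, reservations of 1 and 0 b.u.) are illustrative assumptions.

```python
def q_eemlm_br(alpha, b, t, C, T):
    """Unnormalized occupancy distribution of the E-EMLM/BR (Roberts-style approximation).

    t[k] is the bandwidth reserved against class k (its BR parameter).
    """
    q = [0.0] * (T + 1)
    q[0] = 1.0
    for j in range(1, T + 1):
        total = 0.0
        for a, bk, tk in zip(alpha, b, t):
            # a class-k call may only lead into occupancies j <= T - t_k
            if j - bk >= 0 and j <= T - tk:
                total += a * bk * q[j - bk]
        q[j] = total / min(j, C)
    return q
```

Passing all‐zero reservations reproduces the E‐EMLM values, and additionally setting `T = C` gives the Kaufman–Roberts values of the classical EMLM, matching the two reductions noted above.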

3.2.2.2 CBP, Utilization, and Mean Number of In‐service Calls

The following performance measures can be determined based on (3.34):

- The CBP of service‐class $k$, $B_{Tk}$, via:

  $$B_{Tk} = \sum_{j = T - b_k - t_k + 1}^{T} G^{-1} q(j) \qquad (3.35)$$

  where $G = \sum_{j=0}^{T} q(j)$ is the normalization constant.
- The link utilization, $U$, via (3.23).
- The average number of service‐class $k$ calls in the system, $\bar{n}_k$, via (3.24), where $y_k(j)$ are determined by (3.25) under the following two assumptions: (i) ![]() when ![](), and (ii) ![]() when ![]().
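CBP equalization under the BR policy can be illustrated by computing the blocking probabilities from the approximate occupancy distribution. The sketch assumes that a service‐class is blocked in every occupancy above the limit minus its peak bandwidth minus its reservation. The function name `cbp_eemlm_br` is hypothetical, and the loads of 1 erl and reservations of 1 and 0 b.u. are illustrative assumptions chosen so that peak bandwidth plus reservation is the same for both classes.

```python
def cbp_eemlm_br(alpha, b, t, C, T):
    """Approximate CBP per service-class in the E-EMLM/BR."""
    q = [0.0] * (T + 1)
    q[0] = 1.0
    for j in range(1, T + 1):
        total = sum(a * bk * q[j - bk]
                    for a, bk, tk in zip(alpha, b, t)
                    if j - bk >= 0 and j <= T - tk)
        q[j] = total / min(j, C)
    G = sum(q)                                   # normalization constant
    # class k is blocked in occupancies T - b_k - t_k + 1, ..., T
    return [sum(q[max(0, T - bk - tk + 1):]) / G for bk, tk in zip(b, t)]
```

With `b = (1, 2)` and `t = (1, 0)` both classes share the same set of blocking occupancies, so the two returned values coincide (about 0.3171 for `C = 3`, `T = 5` and unit loads).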

Example 3.7

Consider again Example 3.6 (![]()).

- (a) Calculate the (normalized) values of $q(j)$.
- (b) Calculate the CBP of both service‐classes.
- (c) Calculate the link utilization.
- (d) Calculate the mean number of in‐service calls of the two service‐classes in the system.

- (a) State probabilities through the recursion (3.34):

The normalization constant is:

The state probabilities are:

- (b) The CBP based on (3.35) are: ![]() (compare with the exact value of 0.3234)
- (c) The link utilization based on (3.23) is: ![]() b.u.
- (d) We first determine the values of $y_k(j)$ for ![]() and ![](), based on (3.25):

From (3.24), we obtain the mean values of in‐service calls: ![]().

Example 3.8

Consider a link of capacity ![]() b.u. that accommodates calls of $K = 2$ service‐classes with the following traffic characteristics: service‐class 1 ![]() erl, ![]() b.u., service‐class 2 ![]() erl, ![]() b.u. The maximum allowed bandwidth compression ratio to all in‐service calls is ![](). The BR policy is applied in order to achieve CBP equalization between the two service‐classes. Compare the CBP $B_{T1}$ and $B_{T2}$ of the two service‐classes and the link utilization, obtained by the E‐EMLM/BR, the E‐EMLM, the EMLM/BR, and the EMLM, when ![]() increases in steps of 1 erl (up to ![]() erl). Also provide simulation results for the E‐EMLM/BR.

For bandwidth compression, we choose ![]() because the maximum bandwidth compression is achieved for ![](). For CBP equalization under the BR policy, we choose ![]() and ![]() since $b_1 + t_1 = b_2 + t_2$ (in this way we have an equal number of blocking states for the two service classes). Figures 3.9 and 3.10 present the analytical and the simulation CBP results of service‐classes 1 and 2, respectively, in the case of the E‐EMLM. For comparison, we give the corresponding analytical CBP results of the EMLM. In Figure 3.11, we present the analytical and simulation CBP results (equalized CBP) in the case of the E‐EMLM/BR. Simulation results are based on SIMSCRIPT III and are mean values of 12 runs (no reliability ranges are shown because they are very small). For comparison, we give the corresponding analytical results for the EMLM/BR. In Figure 3.12, we present the link utilization (analytical results only) for all models. On the $x$‐axis of all figures, point 1 refers to ![](), while point 8 refers to ![](). From the figures, we observe the following:

- (i) Analytical and simulation CBP results are very close.
- (ii) The compression/expansion mechanism of the E‐EMLM and E‐EMLM/BR reduces the CBP compared to those obtained by the EMLM and EMLM/BR, respectively.
- (iii) The compression/expansion mechanism increases the link utilization since it decreases CBP.

Figure 3.9 CBP of service‐class 1 (EMLM, E‐EMLM) (Example 3.8).

Figure 3.10 CBP of service‐class 2 (EMLM, E‐EMLM) (Example 3.8).

Figure 3.11 Equalized CBP (EMLM/BR, E‐EMLM/BR) (Example 3.8).

Figure 3.12 Link utilization for all models (Example 3.8).

3.3 The Elastic Erlang Multirate Loss Model under the Threshold Policy

3.3.1 The Service System

We now consider the multi‐service system of the E‐EMLM under the TH policy (E‐EMLM/TH), as follows. A new call of service‐class $k$ is accepted in the system, of $C$ b.u., if [12]:

- (i) The number of in‐service calls of service‐class $k$, together with the new call, does not exceed a threshold $n_{k,\max}$, i.e., $n_k + 1 \le n_{k,\max}$. Otherwise the call is blocked. This constraint expresses the TH policy.

- (ii) If constraint (i) is met, then: (a) If $j + b_k \le C$, the call is accepted in the system with $b_k$ b.u. and remains in the system for an exponentially distributed service time with mean $\mu_k^{-1}$. (b) If $C < j + b_k \le T$, the call is accepted by compressing its $b_k$ together with the bandwidth of all in‐service calls of all service‐classes. The bandwidth compression/expansion mechanism is identical to the one described in Section 3.1.

In terms of the system state‐space $\Omega$, the CAC is expressed as follows. A new call of service‐class $k$ is accepted in the system if the system is in state $\mathbf{n} \in \Omega_{\{k\}}$ upon a new call arrival, where $\Omega_{\{k\}} = \{\mathbf{n} \in \Omega : \mathbf{n}\mathbf{b} \le T - b_k,\ n_k + 1 \le n_{k,\max}\}$. Hence, the CBP of service‐class $k$ is determined by the state space $\Omega - \Omega_{\{k\}}$:

$$B_k = \sum_{\mathbf{n} \in (\Omega - \Omega_{\{k\}})} P(\mathbf{n}) \qquad (3.36)$$

Example 3.9

This example clarifies the bandwidth compression‐expansion mechanism in conjunction with the TH policy. Consider a link of $C = 4$ b.u. and let $T = 8$ b.u. The link accommodates calls of $K = 2$ service‐classes whose calls require $b_1 = 2$ and $b_2 = 4$ b.u., respectively. The TH policy is applied to calls of both service‐classes by assuming that $n_{1,\max} = 3$ and $n_{2,\max} = 1$. For simplicity, assume that ![]() .

- (a) Draw the complete state transition diagram of the system and determine the values of $j$ and $r(\mathbf{n})$, based on (3.2), for each state $\mathbf{n} = (n_1, n_2)$.

- (b) Write the GB equations, determine the values of $P(\mathbf{n})$ and the exact CBP of both service‐classes.

- (c) Draw the modified state transition diagram based on $\varphi_k(\mathbf{n})$ and determine the values of $\varphi_k(\mathbf{n})$, based on (3.8) and (3.9), for each state $\mathbf{n} = (n_1, n_2)$.

- (d) Write the GB equations of the modified state transition diagram, determine the values of $P(\mathbf{n})$ and the CBP of both service‐classes.

- (a) Figure 3.13 shows the state space $\Omega$, which consists of seven permissible states $\mathbf{n} = (n_1, n_2)$, together with the complete state transition diagram of the system. The corresponding values of $r(\mathbf{n})$ and $j$ are presented in Table 3.3.

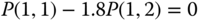

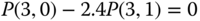

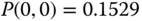

- (b) Based on Figure 3.13, we obtain the following seven GB equations:

The solution of this linear system is:

Then, based on the values of $P(\mathbf{n})$ and (3.36), we determine the exact CBP: $B_1 = 0.2436$ and $B_2 = 0.6076$.

- (c) Figure 3.14 shows the modified state transition diagram. The corresponding values of $\varphi_k(\mathbf{n})$ (which are calculated through the $x(\mathbf{n})$) are presented in Table 3.4. (Although the state transition diagrams depend on the applied bandwidth sharing policy, e.g., CS, BR or TH, the bandwidth compression parameters, $r(\mathbf{n})$ and $\varphi_k(\mathbf{n})$, depend only on the number of in‐service calls of each service‐class.)

- (d) Based on Figure 3.14, we obtain the following seven GB equations:

The solution of this linear system is:

Then, based on the values of $P(\mathbf{n})$ and (3.36), we determine the CBP for each service‐class, which are close to the exact values of 0.2436 and 0.6076, respectively.
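The approximate CBP of part (d) can also be checked numerically without writing out the GB equations. The sketch below is an illustration only: it assumes the product form $P(\mathbf{n}) \propto x(\mathbf{n}) \prod_k \alpha_k^{n_k}/n_k!$ (which follows from local balance with service rates $n_k \mu_k \varphi_k(\mathbf{n})$), and it assumes offered traffic‐loads $\alpha_1 = \alpha_2 = 1$ erl, since the traffic parameters of Example 3.9 are not legible in this section. The function names are hypothetical.

```python
from itertools import product
from math import factorial, prod


def x_values(C, T, b, n_max):
    """State multipliers x(n) on the thresholded state space (recursion of type (3.9))."""
    x = {}
    # product() yields states in lexicographic order, so n - e_k is always visited before n.
    for n in product(*(range(m + 1) for m in n_max)):
        j = sum(nk * bk for nk, bk in zip(n, b))
        if j > T:                       # not a permissible state
            continue
        if j <= C:                      # no compression
            x[n] = 1.0
            continue
        total = 0.0
        for k, (nk, bk) in enumerate(zip(n, b)):
            if nk > 0:
                prev = n[:k] + (nk - 1,) + n[k + 1:]
                total += nk * bk * x[prev]
        x[n] = total / C
    return x


def cbp_th(C, T, b, n_max, alpha):
    """CBP per service-class, summing P(n) over the blocking states as in (3.36)."""
    x = x_values(C, T, b, n_max)
    # Unnormalized product form (assumed): x(n) * prod_k alpha_k^n_k / n_k!
    w = {n: x[n] * prod(a ** nk / factorial(nk) for a, nk in zip(alpha, n)) for n in x}
    G = sum(w.values())
    cbp = []
    for k, bk in enumerate(b):
        blocked = sum(
            wn for n, wn in w.items()
            if n[k] + 1 > n_max[k]                                   # TH policy
            or sum(ni * bi for ni, bi in zip(n, b)) + bk > T         # virtual capacity
        )
        cbp.append(blocked / G)
    return cbp


# alpha = (1.0, 1.0) is an assumption, not a value taken from the text.
B1, B2 = cbp_th(C=4, T=8, b=(2, 4), n_max=(3, 1), alpha=(1.0, 1.0))
print(round(B1, 4), round(B2, 4))
```

Under these assumptions the sketch gives roughly 0.231 and 0.615, which is in line with the statement that the approximate CBP are close to the exact values of 0.2436 and 0.6076.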

Figure 3.13 The state space $\Omega$ and the state transition diagram (Example 3.9).

Figure 3.14 The state space $\Omega$ and the modified state transition diagram (Example 3.9).

Table 3.3 The state space and the occupied link bandwidth (Example 3.9).

| $n_1$ | $n_2$ | $r(\mathbf{n})$ | $j$ (before compression) | $j$ (after compression) |
|---|---|---|---|---|
| 0 | 0 | 1.00 | 0 | 0 |
| 0 | 1 | 1.00 | 4 | 4 |
| 1 | 0 | 1.00 | 2 | 2 |
| 1 | 1 | 0.6667 | 6 | 4 |
| 2 | 0 | 1.00 | 4 | 4 |
| 2 | 1 | 0.5 | 8 | 4 |
| 3 | 0 | 0.6667 | 6 | 4 |
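The rows of Table 3.3 can be reproduced with a few lines of code. The following is a minimal sketch, assuming that the common compression factor is $r(\mathbf{n}) = \min(1, C/j)$ and using the parameters that the table itself implies ($C = 4$, $T = 8$, $b_1 = 2$, $b_2 = 4$, thresholds 3 and 1); the function name is hypothetical.

```python
from itertools import product


def compression_table(C, T, b, n_max):
    """Return (state, r, j_before, j_after) for every permissible state."""
    rows = []
    for n in product(*(range(m + 1) for m in n_max)):
        j = sum(nk * bk for nk, bk in zip(n, b))   # requested (uncompressed) bandwidth
        if j > T:                                  # beyond the virtual capacity
            continue
        r = min(1.0, C / j) if j > 0 else 1.0      # common compression factor
        rows.append((n, r, j, r * j))
    return rows


rows = compression_table(C=4, T=8, b=(2, 4), n_max=(3, 1))
for n, r, j_before, j_after in rows:
    print(n, round(r, 4), j_before, round(j_after, 4))
```

State (3,1) is dropped because its requested bandwidth of 10 b.u. exceeds $T$, which leaves the seven states of the table.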

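Table 3.4 The values of the state dependent compression factors $\varphi_k(\mathbf{n})$ (Example 3.9).

| $n_1$ | $n_2$ | $x(\mathbf{n})$ | $\varphi_1(\mathbf{n})$ | $\varphi_2(\mathbf{n})$ | $n_1$ | $n_2$ | $x(\mathbf{n})$ | $\varphi_1(\mathbf{n})$ | $\varphi_2(\mathbf{n})$ |
|---|---|---|---|---|---|---|---|---|---|
| 0 | 0 | 1.0 | 1.00 | 1.00 | 2 | 0 | 1.0 | 1.00 | 1.00 |
| 0 | 1 | 1.0 | 1.00 | 1.00 | 2 | 1 | 2.5 | 0.6 | 0.4 |
| 1 | 0 | 1.0 | 1.00 | 1.00 | 3 | 0 | 1.5 | 0.6667 | 0.0 |
| 1 | 1 | 1.5 | 0.6667 | 0.6667 | | | | | |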
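The entries of Table 3.4 follow from a short recursion. The sketch below assumes that $x(\mathbf{n}) = 1$ when $\mathbf{n}\mathbf{b} \le C$, that $x(\mathbf{n}) = \frac{1}{C}\sum_k n_k b_k\, x(\mathbf{n} - \mathbf{e}_k)$ when $C < \mathbf{n}\mathbf{b} \le T$, and that $\varphi_k(\mathbf{n}) = x(\mathbf{n} - \mathbf{e}_k)/x(\mathbf{n})$ in the compressed region; these forms are consistent with every entry of the table. Helper names are hypothetical.

```python
from functools import lru_cache

C, T = 4, 8          # real and virtual link capacity (b.u.)
b = (2, 4)           # peak-bandwidth requirement per service-class
n_max = (3, 1)       # TH-policy thresholds


def occupied(n):
    return sum(nk * bk for nk, bk in zip(n, b))


def permissible(n):
    return all(0 <= nk <= m for nk, m in zip(n, n_max)) and occupied(n) <= T


def minus(n, k):
    """State n with one call of service-class k removed."""
    return n[:k] + (n[k] - 1,) + n[k + 1:]


@lru_cache(maxsize=None)
def x(n):
    if not permissible(n):
        return 0.0
    if occupied(n) <= C:
        return 1.0
    return sum(nk * bk * x(minus(n, k)) for k, (nk, bk) in enumerate(zip(n, b))) / C


def phi(n, k):
    if not permissible(n):
        return 0.0
    if occupied(n) <= C:
        return 1.0
    return x(minus(n, k)) / x(n)


for state in [(1, 1), (2, 1), (3, 0)]:
    print(state, round(x(state), 4), round(phi(state, 0), 4), round(phi(state, 1), 4))
```

Only the three states with $\mathbf{n}\mathbf{b} > C$ are printed, since all other states have $x(\mathbf{n}) = \varphi_k(\mathbf{n}) = 1$.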

3.3.2 The Analytical Model

3.3.2.1 Steady State Probabilities

The steady state transition rates of the E‐EMLM/TH are shown in Figure 3.4. According to this, the GB equation for state $\mathbf{n}$ is given by (3.10), while the LB equations are given by (3.11) and (3.12) where: ![]() .

Based on the LB assumption, the probability distribution $P(\mathbf{n})$ has the solution of (3.13) where the normalization constant is given by (3.14). Since $j$ is the occupied link bandwidth, we consider two different sets of macro‐states: (i) $0 \le j \le C$ and (ii) $C < j \le T$. For set (i), no bandwidth compression takes place and $q(j)$ are determined via (1.73). For set (ii), we follow the analysis of the E‐EMLM up to (3.19). Since ![]() , we have:

Thus, (3.19) can be written as:

where ![]() is the probability that ![]() b.u. are occupied when the number of service‐class $k$ in‐service calls is $n_{k,\max}$, and is determined by (1.74). The combination of (1.73) and (3.38) results in the recursive formula of the E‐EMLM/TH [12]:

3.3.2.2 CBP, Utilization, and Mean Number of In‐service Calls

The following performance measures can be determined based on (3.39):

- The CBP of service‐class $k$, $B_k$, via (3.40), where $G$ is the normalization constant.

- The link utilization, $U$, according to (3.23).

- The average number of service‐class $k$ calls in the system, $\bar{n}_k$, via (3.41), where $y_k(j)$ is the average number of service‐class $k$ calls given that the system state is $j$, and is given by (3.42), where $j = 1, \ldots, T$, while $y_k(x) = 0$ for $x \le 0$ and $k = 1, \ldots, K$.

In (3.39), knowledge of ![]() is required. Since ![]() when ![]() ![]() , we distinguish two regions of ![]() : (i) ![]() and (ii) ![]() .

For the first region (where no bandwidth compression occurs), consider a system of capacity ![]() that accommodates all service‐classes but service‐class $k$. For this system, we define ![]() as follows:

Based on ![]() , we compute the unnormalized ![]() , recursively, via the following formula:

For the second region (where bandwidth compression occurs), the values of ![]() can be determined (taking into account (3.9)) by:

where ![]() .

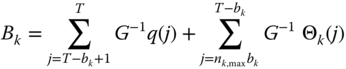

Example 3.10

Consider again Example 3.9 (![]() ).

- (a) Calculate the (normalized) values of $Q(j)$ in the E‐EMLM/TH based on (3.39).

- (b) Calculate the CBP of both service‐classes based on (3.40).

- (a) State probabilities through the recursion (3.39): For ![]() (where ![]() ), given that ![]() , we have:

where ![]() calculated via (3.45) while ![]() and ![]() calculated via (3.44) while ![]() .

The normalization constant is ![]() . The state probabilities are:

- (b) The CBP, $B_1$ and $B_2$, are:

Example 3.11

Consider again Example 3.9. We show the relationship between the E‐EMLM/TH and the balanced fair share model of [9]. In [9], the balanced fair sharing is applied to access tree networks. The loss system of Example 3.9 can be represented as the simple access tree network of Figure 3.15. According to [9], the balanced fair sharing $\varphi_k(\mathbf{n})$ of all calls of service‐class $k$ in state $\mathbf{n}$ is given by (3.26), where $\Phi(\mathbf{n})$ is a balance function for a tree network, defined by:

where link $l$ has capacity $C_l$, $K_l$ is the set of service‐classes going through link $l$, and $\mathbf{e}_k$ is the unit line vector with 1 in component $k$ and 0 elsewhere.

Calculate the values of $\varphi_k(\mathbf{n})$ for states (1,1), (2,1), and (3,0) of Example 3.9, where bandwidth compression occurs, through (3.26) and (3.46) (of the balanced fair share model), find the allocated bandwidth per service‐class call and compare it with that of the E‐EMLM/TH. Then, repeat for state (3,1) and comment.

State (1,1):

- ![]() where: ![]() Thus ![]() . This means that in state (1,1) the call of service‐class 1 has an allocated bandwidth of 4/3 b.u. according to [9]. This is the same as the allocated bandwidth provided according to the E‐EMLM/TH (see Table 3.4 for the value of $\varphi_1(\mathbf{n})$): $\varphi_1(\mathbf{n})\, b_1 = 0.6667 \times 2 = 4/3$ b.u.

- ![]() . This means that in state (1,1) the call of service‐class 2 has an allocated bandwidth of 8/3 b.u. This is the same as the allocated bandwidth provided according to the E‐EMLM/TH (see Table 3.4 for the value of $\varphi_2(\mathbf{n})$): $\varphi_2(\mathbf{n})\, b_2 = 0.6667 \times 4 = 8/3$ b.u.

State (2,1):

- ![]() where: ![]() Thus ![]() . This means that in state (2,1) a call of service‐class 1 has an allocated bandwidth of 1.2 b.u. This is the same as the allocated bandwidth provided according to the E‐EMLM/TH: $\varphi_1(\mathbf{n})\, b_1 = 0.6 \times 2 = 1.2$ b.u.

- ![]() . This means that in state (2,1) the call of service‐class 2 has an allocated bandwidth of 1.6 b.u. This is the same as the allocated bandwidth provided according to the E‐EMLM/TH: $\varphi_2(\mathbf{n})\, b_2 = 0.4 \times 4 = 1.6$ b.u.

State (3,0):

- ![]() where: ![]() Thus ![]() . This means that in state (3,0) a call of service‐class 1 has an allocated bandwidth of 4/3 b.u. This is the same as the allocated bandwidth provided according to the E‐EMLM/TH (see Table 3.4): $\varphi_1(\mathbf{n})\, b_1 = 0.6667 \times 2 = 4/3$ b.u.

- $\varphi_2(\mathbf{n}) = 0$ (obviously).

Based on the above, we see that the E‐EMLM/TH results in the same bandwidth compression values as those provided by the balanced fair sharing of [9].

State (3,1): In the case of the E‐EMLM, the network (Figure 3.15) cannot be in state (3,1) due to the $T$ parameter. The total requested bandwidth (before compression, $j = 10$ b.u.) would exceed the virtual capacity of $T = 8$ b.u. and therefore the call should be blocked and lost. On the contrary, in the case of the balanced fair share model which does not have the parameter $T$, (3,1) is a permissible state and the CAC should allocate the following bandwidth per service‐class call:

- ![]() where: ![]() Thus ![]() . This means that in state (3,1) a call of service‐class 1 has an allocated bandwidth of ![]() b.u. Compare this value with the corresponding maximum compressed value of the E‐EMLM, which is $(C/T)\, b_1 = 1$ b.u.

- ![]() . This means that in state (3,1) a call of service‐class 2 has an allocated bandwidth of ![]() b.u. Compare this value with the corresponding maximum compressed value of the E‐EMLM, which is $(C/T)\, b_2 = 2$ b.u.

Figure 3.15 The loss system of Example 3.9 as an access tree network (Example 3.11).

Example 3.12

Consider a link of capacity ![]() b.u. and three values of $T$: (i) ![]() b.u., (ii) ![]() b.u., and (iii) ![]() b.u. The link accommodates calls of $K = 3$ service‐classes with the following traffic characteristics:

| Service‐class $k$ | Offered traffic‐load $\alpha_k$ | Bandwidth $b_k$ | Threshold $n_{k,\max}$ |
|---|---|---|---|
| 1 | 5.0 erl | 2 b.u. | 25 calls |
| 2 | 1.5 erl | 5 b.u. | 11 calls |
| 3 | 1.0 erl | 9 b.u. | 6 calls |

- (a) Compare the CBP $B_1$, $B_2$, and $B_3$ of the service‐classes and the link utilization obtained by the E‐EMLM/TH and the EMLM/TH when the offered traffic‐loads $\alpha_1$, $\alpha_2$, and $\alpha_3$ increase in steps of 1.0, 0.5, and 0.25 erl, respectively, up to $(\alpha_1, \alpha_2, \alpha_3) = (11.0, 4.5, 2.5)$. Also provide simulation results for the E‐EMLM/TH.

- (b) Consider the E‐EMLM/TH and the E‐EMLM and present the analytical CBP of all service‐classes for ![]() b.u. and $n_{3,\max} = 3$, 4, and 5 calls.

- (a) Figures 3.16–3.18 present the analytical and the simulation CBP results of all service‐classes, respectively, in the case of the E‐EMLM/TH. For comparison, we give the corresponding analytical CBP results of the EMLM/TH. In the $x$‐axis of all figures, point 1 refers to $(\alpha_1, \alpha_2, \alpha_3) = (5.0, 1.5, 1.0)$, while point 7 refers to $(\alpha_1, \alpha_2, \alpha_3) = (11.0, 4.5, 2.5)$. Simulation results are based on SIMSCRIPT III and are mean values of seven runs (with very small reliability ranges). In Figure 3.19 we present the link utilization for both models. The figures show the following:

- The results obtained by the E‐EMLM/TH formulas are close to the simulation results.

- The bandwidth compression mechanism reduces the CBP of all service‐classes.

- The analytical results of the EMLM/TH fail to approximate the simulation results of the E‐EMLM/TH.

- The link utilization is higher when ![]() b.u., a result that is expected since this value of $T$ achieves the highest CBP reduction (highest bandwidth compression, compared to ![]() or 75 b.u.).

- (b) In Figures 3.20–3.22 we consider the E‐EMLM/TH together with the E‐EMLM and present the analytical CBP results of all service‐classes for ![]() b.u. and $n_{3,\max} = 3$, 4, and 5 calls. The E‐EMLM fails to approximate the CBP results obtained by the E‐EMLM/TH in the cases of $n_{3,\max} = 3$ and 4 (this proves the necessity of the E‐EMLM/TH). The fact that the two models give quite close CBP results for $n_{3,\max} = 5$ is explained as follows. Assuming that only calls of service‐class 3 exist in the link, the theoretical maximum number of calls of service‐class 3 is ![]() (each of which occupies ![]() b.u.). Approaching this value makes the E‐EMLM/TH behave as the E‐EMLM. We also see that the increase of $n_{3,\max}$ results in the CBP increase for service‐classes 1 and 2 (Figures 3.20 and 3.21, respectively) and the CBP decrease for service‐class 3 (Figure 3.22).

Figure 3.16 CBP of service‐class 1, when ![]() (Example 3.12).

Figure 3.17 CBP of service‐class 2, when ![]() (Example 3.12).

Figure 3.18 CBP of service‐class 3, when ![]() (Example 3.12).

Figure 3.19 Link utilization (Example 3.12).

Figure 3.20 CBP of the first service‐class, when ![]() and ![]() (Example 3.12).

Figure 3.21 CBP of the second service‐class, when ![]() and ![]() (Example 3.12).

Figure 3.22 CBP of the third service‐class, when ![]() and ![]() (Example 3.12).

3.4 The Elastic Adaptive Erlang Multirate Loss Model

3.4.1 The Service System

In the elastic adaptive EMLM (EA‐EMLM), we consider a link of capacity $C$ b.u. that accommodates $K$ service‐classes which are distinguished into $K_e$ elastic service‐classes and $K_a$ adaptive service‐classes, $K = K_e + K_a$. The call arrival process remains Poisson. As already mentioned, adaptive traffic is considered a variant of elastic traffic in the sense that adaptive calls can tolerate bandwidth compression without altering their service time.

The bandwidth compression/expansion mechanism and the CAC of the EA‐EMLM are the same as those of the E‐EMLM (Section 3.1.1). The only difference is in (3.3), which is applied only to elastic calls. Similar to the E‐EMLM, the corresponding Markov chain in the EA‐EMLM does not meet Kolmogorov's criterion (between four adjacent states), which is necessary and sufficient for reversibility.

Example 3.13

Consider Example 3.1 (![]() ) and assume that calls of service‐class 2 are adaptive. This example clarifies the differences between the E‐EMLM and the EA‐EMLM.

- (a) Draw the complete state transition diagram of the system and determine the values of $j$ and $r(\mathbf{n})$ for each state $\mathbf{n} = (n_1, n_2)$.

- (b) Consider state ![]() and explain the bandwidth compression mechanism when an adaptive call of service‐class 2 arrives in the link.

- (c) Now, consider state ![]() and explain the bandwidth expansion that takes place when an adaptive call of service‐class 2 departs from the system.

- (d) Write the GB equations, and determine the values of $P(\mathbf{n})$ and the exact CBP of both service‐classes.

- (a) Figure 3.23 shows the state space $\Omega$ that consists of 12 permissible states $\mathbf{n} = (n_1, n_2)$ together with the complete state transition diagram of the system. The values of $r(\mathbf{n})$ and $j$, before and after bandwidth compression has been applied, are the same as those presented in Table 3.1.

- (b) When a new adaptive call of service‐class 2 arrives in the system while the system is in state ![]() where ![]() b.u. then, since in the new state it would be ![]() b.u., the new call is accepted in the system after bandwidth compression to all calls (new and in‐service calls). In the new state of the system, ![]() , based on (3.4), calls of both service‐classes compress their bandwidth to ![]() b.u., ![]() b.u. so that ![]() . As far as service‐time is concerned, the value of $\mu_1^{-1}$ becomes ![]() , while the value of $\mu_2^{-1}$ does not alter.

- (c) If an adaptive call of service‐class 2 departs from the system then its assigned bandwidth ![]() b.u. is released to be immediately shared among the remaining calls in proportion to their peak‐bandwidth requirement. The system passes to state ![]() , the elastic call of service‐class 1 expands its bandwidth to ![]() b.u., and the adaptive call of service‐class 2 to ![]() b.u. Thus, ![]() b.u. As far as the service time of the elastic call is concerned, it decreases to its initial value ![]() time unit.

- (d) Based on Figure 3.23, we obtain the following 12 GB equations:

The solution of this linear system is:

Then, based on the values of $P(\mathbf{n})$ and (3.6), we determine the exact CBP: $B_1 = 0.128$ (compare with 0.1748 of the E‐EMLM in Example 3.1) and $B_2 = 0.2907$ (compare with 0.3574 of the E‐EMLM in Example 3.1).

The comparison between the CBP obtained in the EA‐EMLM and the E‐EMLM reveals that the E‐EMLM does not approximate the EA‐EMLM. In addition, the CBP of the EA‐EMLM are lower, which is intuitively expected since, in this example, adaptive calls remain in the system for less time than the corresponding elastic calls.

Figure 3.23 The state space $\Omega$ and the state transition diagram (Example 3.13).

To circumvent the non‐reversibility problem in the EA‐EMLM, similar to the E‐EMLM, $r(\mathbf{n})$ are replaced by the state‐dependent factors per service‐class $k$, $\varphi_k(\mathbf{n})$, which individualize the role of $r(\mathbf{n})$ per service‐class in order to lead to a reversible Markov chain [6]. Thus the compressed bandwidth of service‐class $k$ calls is determined by (3.7), while the values of $\varphi_k(\mathbf{n})$ are given by (3.8). However, due to the adaptive service‐classes, in the EA‐EMLM the values of the state multipliers $x(\mathbf{n})$ are determined by [6]:

$$x(\mathbf{n}) = \begin{cases} 1, & \mathbf{n}\mathbf{b} \le C,\ \mathbf{n} \in \Omega \\ \dfrac{1}{C}\left[\displaystyle\sum_{k \in K_e} n_k b_k\, x(\mathbf{n}_k^{-1}) + r(\mathbf{n}) \sum_{k \in K_a} n_k b_k\, x(\mathbf{n}_k^{-1})\right], & C < \mathbf{n}\mathbf{b} \le T,\ \mathbf{n} \in \Omega \\ 0, & \text{otherwise} \end{cases} \qquad (3.47)$$

where $\mathbf{n}_k^{-1} = (n_1, \ldots, n_k - 1, \ldots, n_K)$ and $r(\mathbf{n}) = C/(\mathbf{n}\mathbf{b})$.

The derivation of (3.47) is based on the following assumptions:

- (i) The bandwidth of all in‐service calls of service‐class $k$ (elastic or adaptive) is compressed by a factor $\varphi_k(\mathbf{n})$ to a new value ![]() in state $\mathbf{n}$, where ![]() , so that (3.48) holds.

- (ii) The product of service time and bandwidth per call of service‐class $k$, ![]() , remains the same in state $\mathbf{n}$ regardless of the reversibility of the Markov chain. In other words, (3.49) and (3.50) hold.

Now, we can derive (3.47) by substituting (3.49), (3.50), and (3.8) into (3.48).

Example 3.14

Consider again Example 3.13 ![]() , ![]() .

- (a) Draw the modified state transition diagram based on $\varphi_k(\mathbf{n})$ and determine the values of $\varphi_k(\mathbf{n})$ for each state $\mathbf{n} = (n_1, n_2)$.

- (b) Write the GB equations of the modified state transition diagram, and determine the values of $P(\mathbf{n})$ and the CBP of both service‐classes.

- (a) The graphical representation of the modified state transition diagram is identical to that of Figure 3.3. Table 3.5 presents the 12 states together with the corresponding values of $\varphi_k(\mathbf{n})$ (calculated through $x(\mathbf{n})$, see (3.47)). Compared to Table 3.2, we see that the existence of adaptive traffic modifies the values of $x(\mathbf{n})$ and $\varphi_k(\mathbf{n})$.

- (b) Based on Figure 3.3 and Table 3.5, we obtain the following 12 GB equations:

The solution of this linear system is:

Then, based on the values of $P(\mathbf{n})$ and (3.6), we determine the CBP:

$B_1 =$ ![]() (compare with the exact 0.128 in Example 3.13)

$B_2 =$ ![]() (compare with the exact 0.2907 in Example 3.13)

Table 3.5 The values of the state dependent factors $\varphi_k(\mathbf{n})$ (Example 3.14).

| $n_1$ | $n_2$ | $x(\mathbf{n})$ | $\varphi_1(\mathbf{n})$ | $\varphi_2(\mathbf{n})$ | $n_1$ | $n_2$ | $x(\mathbf{n})$ | $\varphi_1(\mathbf{n})$ | $\varphi_2(\mathbf{n})$ |
|---|---|---|---|---|---|---|---|---|---|
| 0 | 0 | 1.0 | 1.00 | 1.00 | 2 | 0 | 1.0 | 1.00 | 1.00 |
| 0 | 1 | 1.0 | 1.00 | 1.00 | 2 | 1 | 1.1667 | 0.8571 | 0.8571 |
| 0 | 2 | 1.0 | 0.00 | 1.00 | 3 | 0 | 1.0 | 1.00 | 1.00 |
| 1 | 0 | 1.0 | 1.00 | 1.00 | 3 | 1 | 1.5667 | 0.7447 | 0.6383 |
| 1 | 1 | 1.0 | 1.00 | 1.00 | 4 | 0 | 1.3333 | 0.75 | 0.00 |
| 1 | 2 | 1.1333 | 0.8824 | 0.8824 | 5 | 0 | 2.2222 | 0.60 | 0.00 |
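The values of Table 3.5 can be reproduced from the state multipliers of (3.47). The sketch below assumes the parameters implied by the table ($C = 3$, $T = 5$, $b_1 = 1$, $b_2 = 2$, service‐class 1 elastic, service‐class 2 adaptive) and $r(\mathbf{n}) = C/(\mathbf{n}\mathbf{b})$ in the compressed region; function names are hypothetical.

```python
from itertools import product


def ea_multipliers(C, T, b, adaptive):
    """x(n) for the EA-EMLM; `adaptive` holds the 0-based indices of adaptive classes."""
    x = {}
    # Lexicographic order guarantees that n - e_k is computed before n.
    for n in product(*(range(T // bk + 1) for bk in b)):
        j = sum(nk * bk for nk, bk in zip(n, b))
        if j > T:
            continue
        if j <= C:
            x[n] = 1.0
            continue
        r = C / j                                   # common compression factor r(n)
        total = 0.0
        for k, (nk, bk) in enumerate(zip(n, b)):
            if nk == 0:
                continue
            prev = n[:k] + (nk - 1,) + n[k + 1:]
            term = nk * bk * x[prev]
            total += r * term if k in adaptive else term
        x[n] = total / C
    return x


def phi(x, n, k, C, b):
    """State-dependent factor of service-class k in state n."""
    if sum(nk * bk for nk, bk in zip(n, b)) <= C:
        return 1.0
    if n[k] == 0:
        return 0.0
    prev = n[:k] + (n[k] - 1,) + n[k + 1:]
    return x[prev] / x[n]


C, T, b = 3, 5, (1, 2)
x = ea_multipliers(C, T, b, adaptive={1})
for n in sorted(x):
    print(n, round(x[n], 4), round(phi(x, n, 0, C, b), 4), round(phi(x, n, 1, C, b), 4))
```

Passing an empty `adaptive` set turns the second sum of (3.47) off, so the same function then returns the multipliers of the purely elastic model.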

3.4.2 The Analytical Model

3.4.2.1 Steady State Probabilities

The steady state transition rates of the EA‐EMLM are shown in Figure 3.4. According to this, the GB equation for state $\mathbf{n}$ is given by (3.10) and the LB equations by (3.11) and (3.12).

Similar to the E‐EMLM, we consider two different sets of macro‐states: (i) $0 \le j \le C$ and (ii) $C < j \le T$. For set (i), no bandwidth compression takes place and $q(j)$ are determined by the classical Kaufman–Roberts recursion (1.39). For set (ii), we substitute (3.8) in (3.11) to have:

Multiplying both sides of (3.51a) by $b_k$ and summing over $k \in K_e$, we obtain:

Similarly, multiplying both sides of (3.51b) by $b_k$ and $r(\mathbf{n})$, and summing over $k \in K_a$, we obtain:

By adding (3.52) and (3.53), we have:

Based on (3.47), (3.54) is written as:

Summing both sides of (3.55) over $\Omega_j$ and based on the fact that $\sum_{\mathbf{n} \in \Omega_j} P(\mathbf{n}) = q(j)$, we have:

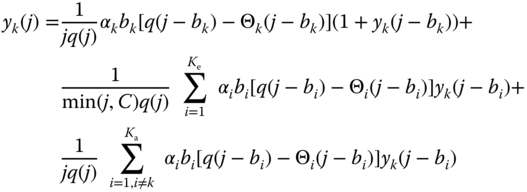

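The combination of (1.39) and (3.56) leads to the recursive formula of the EA‐EMLM [6]:

$$q(j) = \begin{cases} 1, & j = 0 \\ \dfrac{1}{\min(j, C)} \displaystyle\sum_{k \in K_e} \alpha_k b_k\, q(j - b_k) + \dfrac{1}{j} \sum_{k \in K_a} \alpha_k b_k\, q(j - b_k), & j = 1, \ldots, T \\ 0, & \text{otherwise} \end{cases} \qquad (3.57)$$

where $\alpha_k = \lambda_k \mu_k^{-1}$ is the offered traffic‐load of service‐class $k$.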
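The intermediate equations (3.51)–(3.56) are not legible in this section. The following block is a hedged reconstruction of the chain of steps described above, written for $C < \mathbf{n}\mathbf{b} \le T$. It assumes that the LB equations take the form $\lambda_k P(\mathbf{n}_k^{-1}) = n_k \mu_k \varphi_k(\mathbf{n}) P(\mathbf{n})$ with $\varphi_k(\mathbf{n}) = x(\mathbf{n}_k^{-1})/x(\mathbf{n})$, that $r(\mathbf{n}) = C/j$, and that $\Omega_j$ denotes the set of states with $\mathbf{n}\mathbf{b} = j$; the equation labels in the comments are a best guess at the correspondence.

```latex
% (3.51a), (3.51b): LB equations after substituting \varphi_k(n) = x(n_k^{-1}) / x(n)
\alpha_k\, x(\mathbf{n})\, P(\mathbf{n}_k^{-1}) = n_k\, x(\mathbf{n}_k^{-1})\, P(\mathbf{n}),
  \qquad k \in K_e \ \text{(a)}, \quad k \in K_a \ \text{(b)}

% (3.52): multiply (a) by b_k and sum over the elastic service-classes
x(\mathbf{n}) \sum_{k \in K_e} \alpha_k b_k\, P(\mathbf{n}_k^{-1})
  = P(\mathbf{n}) \sum_{k \in K_e} n_k b_k\, x(\mathbf{n}_k^{-1})

% (3.53): multiply (b) by b_k and r(n) and sum over the adaptive service-classes
r(\mathbf{n})\, x(\mathbf{n}) \sum_{k \in K_a} \alpha_k b_k\, P(\mathbf{n}_k^{-1})
  = r(\mathbf{n})\, P(\mathbf{n}) \sum_{k \in K_a} n_k b_k\, x(\mathbf{n}_k^{-1})

% (3.54)-(3.55): add the two; by (3.47) the right-hand side equals C x(n) P(n)
\sum_{k \in K_e} \alpha_k b_k\, P(\mathbf{n}_k^{-1})
  + r(\mathbf{n}) \sum_{k \in K_a} \alpha_k b_k\, P(\mathbf{n}_k^{-1})
  = C\, P(\mathbf{n})

% (3.56): sum over \Omega_j, using r(n) = C/j and \sum_{n \in \Omega_j} P(n) = q(j)
\sum_{k \in K_e} \alpha_k b_k\, q(j - b_k)
  + \frac{C}{j} \sum_{k \in K_a} \alpha_k b_k\, q(j - b_k)
  = C\, q(j), \qquad C < j \le T
```

Dividing the last line by $C$ gives a $1/C$ weight on the elastic sum and a $1/j$ weight on the adaptive sum, which is the form that the recursion (3.57) takes in the compressed region.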

3.4.2.2 CBP, Utilization, and Mean Number of In‐service Calls

The following performance measures can be determined based on (3.57):

- The CBP of service‐class $k$, $B_k$, based on (3.22).

- The link utilization, $U$, based on (3.23).

- The average number of service‐class $k$ calls in the system, $\bar{n}_k$, based on (3.24) where the values of $y_k(j)$ are given by (3.58) for elastic traffic and (3.59) for adaptive traffic [6], where $j = 1, \ldots, T$, while $y_k(x) = 0$ for $x \le 0$ and $k = 1, \ldots, K$.
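The recursion and the first two measures above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the recursion weights the elastic terms by $1/\min(j, C)$ and the adaptive terms by $1/j$; the CBP is taken as $B_k = G^{-1}\sum_{j=T-b_k+1}^{T} q(j)$ and the utilization as $U = G^{-1}\big[\sum_{j=1}^{C} j\,q(j) + C\sum_{j=C+1}^{T} q(j)\big]$, which is how (3.22) and (3.23) are understood here. The offered traffic‐loads $\alpha_1 = \alpha_2 = 1$ erl are an assumption, since the values of Example 3.1 are not legible in this section; the function name is hypothetical.

```python
def ea_emlm(C, T, alpha, b, adaptive):
    """Unnormalized q(j), normalization constant G, CBP list and link utilization.

    `adaptive` is the set of 0-based indices of the adaptive service-classes.
    """
    q = [0.0] * (T + 1)
    q[0] = 1.0
    for j in range(1, T + 1):
        elastic = sum(a * bk * q[j - bk]
                      for k, (a, bk) in enumerate(zip(alpha, b))
                      if k not in adaptive and j >= bk)
        adapt = sum(a * bk * q[j - bk]
                    for k, (a, bk) in enumerate(zip(alpha, b))
                    if k in adaptive and j >= bk)
        q[j] = elastic / min(j, C) + adapt / j
    G = sum(q)
    cbp = [sum(q[T - bk + 1:]) / G for bk in b]           # blocking states: j > T - b_k
    util = (sum(j * q[j] for j in range(1, C + 1)) + C * sum(q[C + 1:])) / G
    return q, G, cbp, util


# C = 3, T = 5, b = (1, 2), class 2 adaptive; alpha = (1, 1) is an assumption.
q, G, cbp, util = ea_emlm(C=3, T=5, alpha=(1.0, 1.0), b=(1, 2), adaptive={1})
print([round(v, 4) for v in q], round(G, 4))
print([round(v, 4) for v in cbp], round(util, 4))
```

With these inputs the sketch returns CBP of about 0.127 and 0.298, close to the exact values 0.128 and 0.2907 quoted in Example 3.14. Setting `T = C` with an empty `adaptive` set reduces the loop to the Kaufman–Roberts recursion.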

Example 3.15

Consider Example 3.14 (![]() ).

- (a) Calculate the (normalized) values of $Q(j)$ based on (3.57).

- (b) Calculate the CBP of both service‐classes based on (3.22).

- (c) Calculate the link utilization based on (3.23).

- (d) Calculate the mean number of in‐service calls of the two service‐classes in the system based on (3.24), (3.58), and (3.59).

- (a) State probabilities through the recursion (3.57):

The normalization constant is ![]() .

The state probabilities are:

- (b) The CBP are:

- (c) The link utilization is: ![]() b.u.

- (d) For ![]() , we initially determine the values of $y_k(j)$ for ![]() and ![]() :

Then, based on (3.24), we obtain ![]() and ![]() .

Example 3.16

Consider Example 3.5 (![]()) and let calls of service‐class 1 be adaptive. Assuming four values of ![](), and 56 b.u., calculate the CBP ![]() and ![]() of the two service‐classes and the link utilization when ![]() and ![]() increase in steps of 2 erl (up to ![]() erl). Compare the CBP and the link utilization results with those obtained by the E‐EMLM in Example 3.5.

Figure 3.24 presents the CBP of both service‐classes, while Figure 3.25 presents the link utilization for the three values of ![](). Observe that when ![]() b.u., the system behaves as in the EMLM. In the ![]()‐axis of both figures, point 1 refers to ![](), while point 8 refers to ![](). According to Figure 3.24, the CBP of both service‐classes decrease as the value of ![]() increases. Compared to Figure 3.5, we see that (i) the CBP of the EA‐EMLM are much lower than the corresponding CBP of the E‐EMLM and (ii) the impact of the increase of ![]() on the CBP is higher in the EA‐EMLM. Both (i) and (ii) can be explained by the fact that in‐service calls of service‐class 1 stay in the system for less time when they are adaptive (their service time is not extended under bandwidth compression), thus allowing more calls to be accepted in the system. As far as the link utilization is concerned, the values of ![]() in the EA‐EMLM are slightly lower (worse) than those of the E‐EMLM because of the adaptive service‐class 1 calls.

Figure 3.24 CBP of both service‐classes in the EA‐EMLM (Example 3.16).

Figure 3.25 Link utilization in the EA‐EMLM (Example 3.16).

3.5 The Elastic Adaptive Erlang Multirate Loss Model under the BR Policy

3.5.1 The Service System

We now consider the multiservice system of the EA‐EMLM under the BR policy (EA‐EMLM/BR) [13]. A new service‐class ![]() call is accepted in the link if, after its acceptance, the occupied link bandwidth ![](), where ![]() refers to the BR parameter used to benefit (in CBP) calls of other service‐classes apart from ![](). In terms of the system state‐space ![](), the CBP is expressed according to (3.33).
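The admission rule above can be sketched as a one-line test. The sketch below is illustrative only: it assumes that the rule reads `j + b_k <= T - t_k`, where `j` is the occupied link bandwidth on arrival, `b_k` the peak‐bandwidth requirement, `t_k` the BR parameter, and `T` the virtual capacity limit. The function names and the numerical values are hypothetical.

```python
def admits_under_br(j, b_k, t_k, T):
    """True if a call with peak bandwidth b_k is admitted when the link is in state j.

    Assumption: after acceptance the occupied bandwidth must not exceed T - t_k.
    """
    return j + b_k <= T - t_k


def blocking_states(b_k, t_k, T):
    """Occupancy states j in which an arriving call of this class is blocked."""
    return [j for j in range(T + 1) if not admits_under_br(j, b_k, t_k, T)]


# Hypothetical two-class link with T = 5 b.u.
print(blocking_states(b_k=1, t_k=0, T=5))  # [5]: no reservation against class 1
print(blocking_states(b_k=1, t_k=1, T=5))  # [4, 5]: one b.u. reserved against class 1
print(blocking_states(b_k=2, t_k=0, T=5))  # [4, 5]: class 2 needs 2 b.u.
```

With `t_k = 1` for the first class, both classes are blocked in exactly the same states, which is how the BR policy can equalize the CBP of service‐classes with different bandwidth requirements.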

Example 3.17

Consider again Example 3.13 ![](), ![](). Assume that ![]() and ![]() b.u., so that ![]().

- (a) Draw the complete state transition diagram of the system and determine the values of ![]() and ![]() for each state ![]().

- (b) Write the GB equations, and determine the values of ![]() and the exact CBP of both service‐classes.

- (c) Draw the modified state transition diagram based on ![]() and determine the values of ![]() for each state ![]().

- (d) Write the GB equations of the modified state transition diagram, and determine the values of ![]() and the CBP of both service‐classes.

- (a) Figure 3.26 shows the state space ![]() that consists of 11 permissible states ![]() together with the complete state transition diagram of the system. The corresponding values of ![]() and ![]() are exactly the same as those presented in Table 3.1.

- (b) Based on Figure 3.26, we obtain the following 11 GB equations:

The solution of this linear system is:

Then, based on the ![]() and (3.32), we obtain the exact value of the equalized CBP:

(compare with 0.3234 of the E‐EMLM/BR in Example 3.6).

- (c) The graphical representation of the modified state transition diagram is identical to that of Figure 3.8. The corresponding values of ![]() are exactly the same as those presented in Table 3.5.

- (d) Based on Figure 3.8 and Table 3.5, we obtain the following 11 GB equations:

The solution of this linear system is:

Then, based on ![]() and (3.32), we obtain the value of the equalized CBP:

which is quite close to the exact value of 0.2674.

Figure 3.26 The state space ![]() and the state transition diagram (Example 3.17).

3.5.2 The Analytical Model

3.5.2.1 Link Occupancy Distribution

In the EA‐EMLM/BR, the link occupancy distribution, ![](), is calculated in an approximate way, according to the following recursive formula (Roberts method) [13]:

(3.60)

This formula is similar to (3.34) of the E‐EMLM/BR. If ![]() for all ![](), then the EA‐EMLM results. In addition, if ![](), then we have the classical EMLM.
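The structure of such a recursion, and the two special cases just mentioned, can be illustrated with a short Python sketch. It is a sketch under stated assumptions rather than a transcription of the formula: we assume that, under the Roberts method, a service‐class with BR parameter `t_k` contributes to state `j` only when `j <= T - t_k`, that elastic classes are divided by `min(j, C)` and adaptive ones by `j`, and that the CBP sums the normalized occupancy over the last `b_k + t_k` states. All names and input values are hypothetical.

```python
def ea_emlm_br_q(C, T, alpha, b, t, elastic):
    """Unnormalized occupancy values q(0..T) of the EA-EMLM/BR (assumed Roberts form)."""
    q = [0.0] * (T + 1)
    q[0] = 1.0
    for j in range(1, T + 1):
        for a_k, b_k, t_k, is_elastic in zip(alpha, b, t, elastic):
            # Assumption: class k cannot bring the link into a state above T - t_k.
            if j >= b_k and j <= T - t_k:
                divisor = min(j, C) if is_elastic else j
                q[j] += a_k * b_k * q[j - b_k] / divisor
    return q


def cbp_br(q, T, b_k, t_k):
    """CBP under BR: normalized mass of the last b_k + t_k states (assumed form)."""
    return sum(q[max(0, T - b_k - t_k + 1):]) / sum(q)


# Hypothetical link: C = 3, T = 5; class 1 elastic (1 b.u.), class 2 adaptive (2 b.u.).
alpha, b, elastic = [1.0, 0.5], [1, 2], [True, False]
q_br = ea_emlm_br_q(3, 5, alpha, b, t=[1, 0], elastic=elastic)
print(cbp_br(q_br, 5, b_k=1, t_k=1), cbp_br(q_br, 5, b_k=2, t_k=0))
```

Setting every entry of `t` to zero removes the reservation test and leaves the EA‐EMLM recursion. Choosing `T` equal to `C` in addition makes `min(j, C)` equal to `j`, so both traffic types follow the classical EMLM recursion.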

3.5.2.2 CBP, Utilization, and Mean Number of In‐service Calls

The following performance measures can be determined via (3.60):

- The CBP of service‐class ![](), ![](), via (3.35).

- The link utilization, ![](), via (3.23).

- The average number of service‐class ![]() calls in the system, ![](), is determined by (3.24), where ![]() are given by (3.58) and (3.59) for elastic and adaptive service‐classes, respectively, under the assumptions that (i) ![]() when ![]() and (ii) ![]() when ![]().

Example 3.18

Consider again Example 3.17 (![]()).

- (a) Calculate the (normalized) values of ![]() based on (3.60).

- (b) Calculate the CBP of both service‐classes based on (3.35).

- (c) Calculate the link utilization based on (3.23).

- (d) Calculate the mean number of in‐service calls of the two service‐classes in the system based on (3.24), (3.58), and (3.59).

- (a) State probabilities through the recursion (3.60):

The normalization constant is ![]().

The state probabilities are:

- (b) The CBP are:

(compare with the value of 0.3171 obtained in the E‐EMLM/BR of Example 3.7)

- (c) The link utilization is: ![]() b.u.

- (d) For ![](), we initially determine the values of ![]() for ![]() and ![]():

Then, based on (3.24), we have ![]() and ![]().

Example 3.19

Consider a link of capacity ![]() b.u. that accommodates calls of four service‐classes, with the following traffic characteristics:

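| Service‐class | Offered traffic‐load | Bandwidth per call | BR parameter |
|---|---|---|---|
| 1 | 12 erl | 1 b.u. | 9 b.u. |
| 2 | 6 erl | 2 b.u. | 8 b.u. |
| 3 | 3 erl | 4 b.u. | 6 b.u. |
| 4 | 2 erl | 10 b.u. | 0 b.u. |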

Calls of service‐classes 1 and 2 are elastic, while calls of service‐classes 3 and 4 are adaptive. Compare the CBP of all service‐classes obtained by the EA‐EMLM/BR and the EA‐EMLM when the offered traffic‐loads increase in steps of 2, 1, 0.5, and 0.25 erl up to ![]() erl. Also provide simulation results for the EA‐EMLM/BR.

Figure 3.27 presents the analytical and the simulation results for the equalized CBP of all service‐classes in the case of the EA‐EMLM/BR. For comparison, we include the corresponding analytical CBP results of the EA‐EMLM. In the ![]()‐axis of Figure 3.27, point 1 refers to ![](), while point 7 refers to ![](). Simulation results are based on SIMSCRIPT III and are mean values of 12 runs (no reliability ranges are shown). From Figure 3.27, we observe that (i) analytical and simulation CBP results are very close and (ii) the EA‐EMLM fails to capture the behavior of the EA‐EMLM/BR (hence, both models are necessary).
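A short check shows why a single equalized CBP curve suffices for the EA‐EMLM/BR in this example. The sketch reads the traffic characteristics of Example 3.19 as four bandwidth requirements (1, 2, 4, and 10 b.u.) followed by four BR parameters (9, 8, 6, and 0 b.u.). This grouping of the listed values is our assumption, as is the rule that a call is blocked once fewer than `b_k + t_k` b.u. remain below the virtual capacity limit.

```python
# Assumed reading of the Example 3.19 characteristics (per service-class 1..4).
b = [1, 2, 4, 10]  # bandwidth per call, in b.u.
t = [9, 8, 6, 0]   # BR parameters, in b.u.

# Bandwidth that must still be free for a call of each class to be admitted.
required_free = [b_k + t_k for b_k, t_k in zip(b, t)]
print(required_free)  # [10, 10, 10, 10]
```

Since the sum is 10 b.u. for every service‐class, all four classes are blocked in the same set of link occupancy states, and their CBP coincide.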

Figure 3.27 Equalized CBP of the EA‐EMLM/BR and CBP per service‐class of the EA‐EMLM (Example 3.19).

3.6 The Elastic Adaptive Erlang Multirate Loss Model under the Threshold Policy

3.6.1 The Service System

We now consider the EA‐EMLM under the TH policy (EA‐EMLM/TH), as in the case of the E‐EMLM/TH (Section 3.3). That is, the number of in‐service calls of each service‐class must not exceed a per‐class threshold. The bandwidth compression/expansion mechanism and the CAC in the EA‐EMLM/TH are the same as those of the E‐EMLM/TH, but (3.3) is applied only to elastic calls, to satisfy their service time requirement.
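The admission decision under the TH policy can be sketched as follows. This is a hedged illustration, not the CAC of Section 3.3 verbatim: it assumes that a call is blocked when its class threshold would be exceeded or when the occupied bandwidth (counted with peak requirements) would exceed the virtual limit `T`, and that compression applies whenever the occupied bandwidth would exceed `C`. The function name, the argument names, and the numerical values are hypothetical.

```python
def th_admission(n, k, b, n_max, C, T):
    """Admission outcome for a new class-k call in state n = (n_1, ..., n_K).

    Assumptions: n_max[k] is the per-class threshold; bandwidth is counted
    with peak requirements, so j may virtually exceed C up to T.
    """
    j = sum(n_i * b_i for n_i, b_i in zip(n, b))
    if n[k] + 1 > n_max[k] or j + b[k] > T:
        return "blocked"
    if j + b[k] <= C:
        return "accepted"
    return "accepted with compression"


# Hypothetical link: C = 3, T = 5, two classes with 1 and 2 b.u. per call.
b, n_max = [1, 2], [2, 1]
print(th_admission((0, 0), 1, b, n_max, C=3, T=5))  # accepted
print(th_admission((1, 1), 0, b, n_max, C=3, T=5))  # accepted with compression
print(th_admission((2, 0), 0, b, n_max, C=3, T=5))  # blocked
```

In the last call the link still has free bandwidth, so the blocking is due to the threshold alone. Whether a compressed call also has its service time extended depends on its type: only elastic calls are affected.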

Example 3.20

Consider Example 3.9 (![]()) and assume that calls of service‐class 2 are adaptive. This example clarifies the differences between the E‐EMLM/TH and the EA‐EMLM/TH.

- (a) Draw the complete state transition diagram of the system and determine the values of ![]() and ![](), based on (3.2), for each state ![]().

- (b) Write the GB equations, and determine the values of ![]() and the exact CBP of both service‐classes.

- (c) Draw the modified state transition diagram based on ![]() and determine the values of ![](), based on (3.8) and (3.47), for each state ![]().

- (d) Write the GB equations of the modified state transition diagram, and determine the values of ![]() and the CBP of both service‐classes.

- (a) Figure 3.28 shows the state space ![]() that consists of seven permissible states ![]() together with the complete state transition diagram of the system. Compared to Figure 3.13, the differences are in ![](), whose values do not alter in states ![]() and ![](). The corresponding values of ![]() and ![]() remain the same as in the case of the E‐EMLM/TH (Table 3.3).

- (b) According to Figure 3.28, we write the following seven GB equations: