The HTML5 Herald is really becoming quite dynamic for an “ol’ timey” newspaper! We’ve added a video with the new video element, made our site available offline, added support to remember the user’s name and email address, and used geolocation to detect the user’s location.

But there’s still more we can do to make it even more fun. First, the video is a little at odds with the rest of the paper, since it’s in color. Second, the geolocation feature, while fairly speedy, could use a progress indicator that lets the user know we haven’t left them stranded. And finally, it would be nice to add just one more dynamic piece to our page. We’ll take care of all three of these using the APIs we’ll discuss in this chapter: Canvas, SVG, and Drag and Drop.

With HTML5’s Canvas API, we’re no longer limited to drawing rectangles on our sites. We can draw anything we can imagine, all through JavaScript. This can improve the performance of our websites by avoiding the need to download images off the network. With canvas, we can draw shapes and lines, arcs and text, gradients and patterns. In addition, canvas gives us the power to manipulate pixels in images and even video. We’ll start by introducing some of the basic drawing features of canvas, but then move on to using its power to transform our video—taking our modern-looking color video and converting it into conventional black and white to match the overall look and feel of The HTML5 Herald.

The Canvas 2D Context spec is supported in:

- Safari 2.0+
- Chrome 3.0+
- Firefox 3.0+
- Internet Explorer 9.0+
- Opera 10.0+
- iOS (Mobile Safari) 1.0+
- Android 1.0+

Canvas was first developed by Apple. Since they already had a framework—Quartz 2D—for drawing in two-dimensional space, they went ahead and based many of the concepts of HTML5’s canvas on that framework. It was then adopted by Mozilla and Opera, and then standardized by the WHATWG (and subsequently picked up by the W3C, along with the rest of HTML5).

There’s some good news here. If you aspire to do development for the iPhone or iPad (referred to jointly as iOS), or for the Mac, what you learn about canvas should help you understand some of the basic concepts of Quartz 2D. If you already develop for the Mac or iOS and have worked with Quartz 2D, many canvas concepts will look very familiar to you.

The first step to using canvas is to add a canvas element to the page:

The text in between the canvas tags will only be shown if the canvas element is not supported by the visitor’s browser.

Since drawing on the canvas is done using JavaScript, we’ll need a way to grab the element from the DOM. We’ll do so by giving our canvas an id:

<canvas id="myCanvas"> Sorry! Your browser doesn’t support Canvas. </canvas>

All drawing on the canvas happens via JavaScript, so let’s make sure we’re calling a JavaScript function when our page is ready. We’ll add our jQuery document ready check to a script element at the bottom of the page:

The canvas element takes both a width and height attribute, which should also be set:

<canvas id="myCanvas" width="200" height="200">

Sorry! Your browser doesn’t support Canvas.

</canvas>

Finally, let’s add a border to our canvas to visually distinguish it on the page, using some CSS. Canvas has no default styling, so it’s difficult to see where it is on the page unless you give it some kind of border:

Now that we’ve styled it, we can actually see the canvas container on our page; Figure 11.1 shows what it looks like.

All drawing on the canvas happens via the Canvas JavaScript API. We call a function named draw() when our page is ready, so let’s go ahead and create that function. We’ll add the function to our script element. The first step is to grab hold of the canvas element on our page:

<script>

…

function draw() {

var canvas = document.getElementById("myCanvas");

}

</script>

Once we’ve stored our canvas

element in a variable, we need to set up the canvas’s

context. The context is the place where your

drawing is rendered. Currently, there’s only wide support for drawing to

a two-dimensional context. The W3C Canvas spec defines the context in

the CanvasRenderingContext2D object. Most methods

we’ll be using to draw onto the canvas are methods of this

object.

We obtain our drawing context by calling the

getContext method and passing it

the string "2d", since we’ll be drawing in two

dimensions:

function draw() {

var canvas = document.getElementById("myCanvas");

var context = canvas.getContext("2d");

}

The object that’s returned by getContext

is a CanvasRenderingContext2D object. In this

chapter, we’ll refer to it as simply “the context object” for brevity.

Note: WebGL

WebGL is a new API for 3D graphics being managed by the Khronos Group, with a WebGL working group that includes Apple, Mozilla, Google, and Opera.

By combining WebGL with HTML5 Canvas, you can draw in three dimensions. WebGL is currently supported in Firefox 4+, Chrome 8+, and Safari 6. To learn more, see http://www.khronos.org/webgl/.

On a regular painting canvas, before you can begin, you must first

saturate your brush with paint. In the HTML5 Canvas, you must do the

same, and we do so with the

strokeStyle or

fillStyle properties. Both

strokeStyle and fillStyle are

set on a context object. And both take one of three values: a string

representing a color, a

CanvasGradient, or a

CanvasPattern.

Let’s start by using a color string to style the stroke. You can think of the stroke as the border of the shape you’re going to draw. To draw a rectangle with a red border, we first define the stroke color:

function draw() {

var canvas = document.getElementById("myCanvas");

var context = canvas.getContext("2d");

context.strokeStyle = "red";

}

To draw a rectangle with a red border and blue fill, we must also define the fill color:
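As a minimal sketch of this step, the fill color is set the same way as the stroke color, through the fillStyle property. The helper name setColors is hypothetical, and the function accepts any object that behaves like a 2D context, so it can also be tried outside the browser:

```javascript
// Hypothetical helper: sets a red stroke and a blue fill on a 2D context.
function setColors(context) {
  context.strokeStyle = "red";  // color of the shape's border
  context.fillStyle = "blue";   // color of the shape's interior
  return context;
}

// In the browser, pass the real context obtained from the canvas element.
if (typeof document !== "undefined" && document.getElementById("myCanvas")) {
  setColors(document.getElementById("myCanvas").getContext("2d"));
}
```

Nothing is drawn yet: these two properties only decide which colors the next stroke and fill calls will use.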

We can use any CSS color value to set the stroke or fill color, as

long as we specify it as a string: a hexadecimal value like

#00FFFF, a color name like red or

blue, or an RGB value like rgb(0, 0,

255). We can even use

RGBA to set a semitransparent stroke or fill color. Let’s

change our blue fill to blue with a 50% opacity:
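A minimal sketch of a semitransparent fill, assuming the same context object as before. The rgba and setSemitransparentFill helpers are hypothetical names introduced only for illustration; the color string they produce is an ordinary CSS rgba() value:

```javascript
// Hypothetical helper: builds a CSS rgba() color string.
// red, green, and blue run from 0 to 255; alpha runs from 0 (transparent) to 1 (opaque).
function rgba(red, green, blue, alpha) {
  return "rgba(" + red + ", " + green + ", " + blue + ", " + alpha + ")";
}

// Sets a blue fill at 50% opacity on any context-like object.
function setSemitransparentFill(context) {
  context.fillStyle = rgba(0, 0, 255, 0.5);
  return context;
}
```

Writing the string by hand, as in context.fillStyle = "rgba(0, 0, 255, 0.5)", has exactly the same effect.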

Once we’ve defined the color of the stroke and the fill, we’re

ready to actually start drawing! Let’s begin by drawing a rectangle. We

can do this by calling the

fillRect and

strokeRect methods. Both of these

methods take the X and Y coordinates where you want to begin drawing the

fill or the stroke, and the width and the height of the rectangle. We’ll

add the stroke and fill 10 pixels from the top and 10 pixels from the

left of the canvas’s top left corner:

function draw() {

…

context.fillRect(10,10,100,100);

context.strokeRect(10,10,100,100);

}

This will create a semitransparent blue rectangle with a red border, like the one in Figure 11.2.

As you may have gathered, the coordinate system in the canvas element is different from the Cartesian coordinate system you learned in math class. In the canvas coordinate system, the top-left corner is (0,0), and Y values increase as you move down the canvas rather than up. If the canvas is 200 pixels by 200 pixels, then the bottom-right corner is (200, 200), as Figure 11.3 illustrates.

Instead of a color as our fillStyle, we could

have also used a CanvasGradient or a

CanvasPattern.

We create a CanvasPattern by calling the

createPattern method.

createPattern takes two parameters: the image

to create the pattern with, and how that image should be repeated. The

repeat value is a string, and the valid values are the same as those in

CSS: repeat, repeat-x,

repeat-y, and no-repeat.

Instead of using a semitransparent blue

fillStyle, let’s create a pattern using our bicycle

image. First, we must create an Image object, and

set its src property to our image:

function draw() {

…

var img = new Image();

img.src = "../images/bg-bike.png";

}

Setting the src property tells the browser to start downloading the image, but if we try to use it right away to create our pattern, we’ll run into some problems, because the image will still be loading. So we’ll use the image’s onload property to create our pattern once the image has been fully loaded by the browser:

function draw() {

…

var img = new Image();

img.src = "../images/bg-bike.png";

img.onload = function() {

};

}

In our onload event

handler, we call createPattern, passing it the

Image object and the string

repeat, so that our image repeats along both the X

and Y axes. We store the results of

createPattern in the variable

pattern, and set the fillStyle

to that variable:

function draw() {

…

var img = new Image();

img.src = "../images/bg-bike.png";

img.onload = function() {

var pattern = context.createPattern(img, "repeat");

context.fillStyle = pattern;

context.fillRect(10,10,100,100);

context.strokeRect(10,10,100,100);

};

}

Note: Anonymous Functions

You may be asking yourself, “what is that function expression that comes right after img.onload =?” It’s an anonymous function. Anonymous functions are much like regular functions except, as you might guess, they don’t have names.

When you see an anonymous function assigned to an event handler property or passed to an event listener, it means that the anonymous function is being bound to that event. In other words, the code inside that anonymous function will be run when the load event is fired.

Now, our rectangle’s fill is a pattern made up of our bicycle image, as Figure 11.4 shows.

We can also create a

CanvasGradient to use as our

fillStyle. To create a

CanvasGradient, we call one of two methods:

createLinearGradient(x0, y0, x1,

y1) or

createRadialGradient(x0, y0, r0, x1, y1,

r1); then we add one or more color stops to the

gradient.

createLinearGradient’s

x0 and y0 represent the

starting location of the gradient. x1 and

y1 represent the ending location.

To create a gradient that begins at the top of the canvas and blends the color down to the bottom, we’d define our starting point at the origin (0,0), and our ending point 200 pixels down from there (0,200):

function draw() {

…

var gradient = context.createLinearGradient(0, 0, 0, 200);

}

Next, we specify our color stops. The color stop method is

simply addColorStop(offset, color).

The offset is a value between 0 and 1. An

offset of 0 is at the starting end of the gradient,

and an offset of 1 is at the other end. The

color is a string value that, as with the

fillStyle, can be a color name, a hexadecimal color

value, an rgb() value, or an

rgba() value.
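To make the offset concrete: for a linear gradient, an offset picks the point that lies that fraction of the way along the line from (x0, y0) to (x1, y1). The helper below is a hypothetical illustration of that arithmetic and is not part of the Canvas API:

```javascript
// Hypothetical helper: where on the canvas a color stop with the given
// offset (0 to 1) falls for a linear gradient from (x0, y0) to (x1, y1).
function colorStopPosition(x0, y0, x1, y1, offset) {
  return {
    x: x0 + (x1 - x0) * offset,
    y: y0 + (y1 - y0) * offset
  };
}

// For a gradient running from (0, 0) to (0, 200), an offset of 0.5
// sits halfway down the line.
var halfway = colorStopPosition(0, 0, 0, 200, 0.5);
```

A stop added at offset 0.5 would therefore take full effect 100 pixels below the gradient’s starting point.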

To make a gradient that starts as blue at the top and blends into white at the bottom, we can specify a blue color stop with an offset of 0 and a white color stop with an offset of 1:

function draw() {

…

var gradient = context.createLinearGradient(0, 0, 0, 200);

gradient.addColorStop(0,"blue");

gradient.addColorStop(1,"white");

context.fillStyle = gradient;

context.fillRect(10,10,100,100);

context.strokeRect(10,10,100,100);

}

Figure 11.5 is the result of setting our

CanvasGradient to be the

fillStyle of our rectangle.

We’re not limited to drawing rectangles—we can draw any shape we can imagine! Unlike rectangles and squares, however, there’s no built-in method for drawing circles, or other shapes. To draw more interesting shapes, we must first lay out the path of the shape.

Paths create a blueprint for your lines, arcs, and shapes, but paths are invisible until you give them a stroke or a fill! When we drew rectangles, we first set the strokeStyle and fillStyle and then called fillRect and strokeRect. With more complex shapes, we need to take three steps: lay out the path, stroke the path, and fill the path. As with drawing rectangles, we can just stroke the path, or fill the path, or we can do both.

Let’s start with a simple circle. We begin a new path by calling the context’s beginPath method:

function draw() {

var canvas = document.getElementById("myCanvas");

var context = canvas.getContext("2d");

context.beginPath();

}

Now we need to create an arc. An arc is a

segment of a circle; there’s no method for creating a circle, but we can

simply draw a 360° arc. We create it using the

arc method:

function draw() {

var canvas = document.getElementById("myCanvas");

var context = canvas.getContext("2d");

context.beginPath();

context.arc(100, 100, 50, 0, Math.PI*2, true);

}

The arguments for the arc method are as

follows: arc(x, y, radius, startAngle, endAngle,

anticlockwise).

x and y are the coordinates of the arc’s center. Imagine this as the center of the circle that you’ll be drawing.

radius is the distance from the center to the

edge of the circle.

startAngle and

endAngle represent the start and end angles along

the circle’s circumference that you want to draw. The units for the

angles are in radians, and a circle is 2π radians. We want to draw a

complete circle, so we will use 2π for the

endAngle. In JavaScript, we can get this value by

multiplying Math.PI by 2.

anticlockwise is an optional argument. If you want the arc to be drawn counterclockwise instead of clockwise, set this value to true. Since we are drawing a full circle, it doesn’t matter which direction we draw it in, so the true we pass in our example has no visible effect.
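Because arc works in radians, a small conversion helper can make partial arcs easier to read. The sketch below assumes nothing beyond the arc and beginPath methods described above; toRadians and quarterArcPath are hypothetical names:

```javascript
// Hypothetical helper: convert degrees to the radians that arc() expects.
function toRadians(degrees) {
  return degrees * Math.PI / 180;
}

// Lays out a quarter-circle path on any context-like object.
// Angle 0 points along the positive X axis (3 o'clock); because Y grows
// downward on the canvas, a clockwise sweep to 90 degrees ends at 6 o'clock.
function quarterArcPath(context, x, y, radius) {
  context.beginPath();
  context.arc(x, y, radius, toRadians(0), toRadians(90), false);
  return context;
}
```

As with the full circle, the path stays invisible until it is stroked or filled.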

Our next step is to close the path, since we’ve now finished

drawing our circle. We do that with the

closePath method:

function draw() {

var canvas = document.getElementById("myCanvas");

var context = canvas.getContext("2d");

context.beginPath();

context.arc(100, 100, 50, 0, Math.PI*2, true);

context.closePath();

}

Now we have a path! But unless we stroke it or fill it, we’ll be

unable to see it. Thus, we must set a strokeStyle

if we would like to give it a border, and we must set a

fillStyle if we’d like our circle to have a fill

color. By default, the width of the stroke is 1 pixel—this is stored in

the lineWidth property of the

context object. Let’s make our border a bit bigger by

setting the lineWidth to 3:

function draw() {

var canvas = document.getElementById("myCanvas");

var context = canvas.getContext("2d");

context.beginPath();

context.arc(100, 100, 50, 0, Math.PI*2, true);

context.closePath();

context.strokeStyle = "red";

context.fillStyle = "blue";

context.lineWidth = 3;

}

Lastly, we fill and stroke the path. Note that this time, the

method names are different than those we used with our rectangle. To

fill a path, you simply call fill, and to

stroke it you call stroke:

function draw() {

var canvas = document.getElementById("myCanvas");

var context = canvas.getContext("2d");

context.beginPath();

context.arc(100, 100, 50, 0, Math.PI*2, true);

context.closePath();

context.strokeStyle = "red";

context.fillStyle = "blue";

context.lineWidth = 3;

context.fill();

context.stroke();

}

Figure 11.6 shows the finished circle.

To learn more about drawing shapes, the Mozilla Developer Network has an excellent tutorial.

If we create an image programmatically using the Canvas API, but

decide we’d like to have a local copy of our drawing, we can use the

API’s

toDataURL method to save our

drawing as a PNG or JPEG file.

To preserve the circle we just drew, we could add a new button to our HTML, and open the canvas drawing as an image in a new window once the button is clicked. To do that, let’s define a new JavaScript function:

function saveDrawing() {

var canvas = document.getElementById("myCanvas");

window.open(canvas.toDataURL("image/png"));

}

Next, we’ll add a button to our HTML and call our function when it’s clicked:

<canvas id="myCanvas" width="200" height="200">

Sorry! Your browser doesn’t support Canvas.

</canvas>

<form>

<input type="button" name="saveButton" id="saveButton" value="Save Drawing">

</form>

…

<script>

$(document).ready(function(){

draw();

$('#saveButton').click(saveDrawing);

});

…

When the button is clicked, a new window or tab opens up with a PNG file loaded into it, as shown in Figure 11.7.
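toDataURL also accepts "image/jpeg", along with an optional quality value between 0 and 1. A browser that cannot encode the requested type returns a PNG instead, so it can be worth checking what actually came back. A minimal sketch, in which both function names are hypothetical:

```javascript
// Hypothetical helper: read the MIME type out of a data URL,
// for example "data:image/png;base64,..." gives "image/png".
function dataUrlMimeType(dataUrl) {
  var match = /^data:([^;,]+)/.exec(dataUrl);
  return match ? match[1] : null;
}

// Asks the canvas for a JPEG and reports which format was really produced.
function exportAsJpeg(canvas) {
  var url = canvas.toDataURL("image/jpeg", 0.8);
  return { url: url, type: dataUrlMimeType(url) };
}
```

In the browser, exportAsJpeg(document.getElementById("myCanvas")).type could then be compared against "image/jpeg" before the file is offered to the user.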

To learn more about saving our canvas drawings as files, see the W3C Canvas spec and the “Saving a canvas image to file” section of Mozilla’s Canvas code snippets.

We can also draw images into the canvas element. In this example, we’ll be

redrawing into the canvas an image that already exists on the

page.

For the sake of illustration, we’ll be using the HTML5 logo as our image for

the next few examples. Let’s start by adding it to our page in an

img element:

<canvas id="myCanvas" width="200" height="200"> Your browser does not support canvas. </canvas> <img src="../images/html5-logo.png" id="myImageElem">

Next, after grabbing the canvas

element and setting up the canvas’s context, we can grab an image from

our page via document.getElementById:

function draw() {

var canvas = document.getElementById("myCanvas");

var context = canvas.getContext("2d");

var image = document.getElementById("myImageElem");

}

We’ll use the same CSS we used before to make the canvas element visible:

Let’s modify it slightly to space out our canvas and our image:

Figure 11.8 shows our empty canvas next to our image.

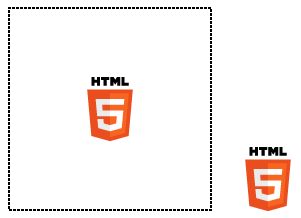

We can use canvas’s

drawImage method to redraw the

image from our page into the canvas:

function draw() {

var canvas = document.getElementById("myCanvas");

var context = canvas.getContext("2d");

var image = document.getElementById("myImageElem");

context.drawImage(image, 0, 0);

}

Because we’ve drawn the image to the (0,0) coordinate, the image appears in the top-left of the canvas, as you can see in Figure 11.9.

We could instead draw the image at the center of the canvas, by changing the X and Y coordinates that we pass to drawImage. Since the image is 64 by 64 pixels, and the canvas is 200 by 200 pixels, if we draw the image to (68, 68), which is half the canvas size minus half the image size, or (200 / 2) - (64 / 2) = 68, the image will be in the center of the canvas, as in Figure 11.10.
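The centering arithmetic generalizes to any canvas and image size. The sketch below is one way to express it; centerOffset and drawCentered are hypothetical helper names, and drawCentered only relies on the drawImage method already introduced:

```javascript
// Hypothetical helper: the coordinate at which an item of imageSize must
// start so that it sits in the middle of a span of canvasSize.
function centerOffset(canvasSize, imageSize) {
  return (canvasSize - imageSize) / 2;
}

// Draws an image in the middle of a canvas and returns the coordinates used.
function drawCentered(context, image, canvas) {
  var x = centerOffset(canvas.width, image.width);
  var y = centerOffset(canvas.height, image.height);
  context.drawImage(image, x, y);
  return { x: x, y: y };
}
```

For our 200-pixel canvas and 64-pixel logo, centerOffset(200, 64) gives 68 on both axes.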

Redrawing an image element from the page onto a canvas is fairly unexciting. It’s really no different from using an img element! Where it does become interesting is in how we can manipulate an image after we’ve drawn it into the canvas.

Once we’ve drawn an image on the canvas, we can use the getImageData method from the Canvas API to manipulate the pixels of that image. For example, if we want to convert our logo from color to black and white, we can do so using methods in the Canvas API.

getImageData will return an

ImageData object, which contains three

properties: width, height, and

data. The first two are self-explanatory, but it’s

the last one, data, that interests us.

data contains information about the pixels in

the ImageData object, in the form of an array.

Each pixel on the canvas will have four values in the

data array—these correspond to that pixel’s R, G,

B, and A values.
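Pixels are stored in the data array row by row, from left to right and top to bottom, so the position of any pixel’s four values can be computed from its coordinates. The following sketch shows that calculation; pixelIndex and readPixel are hypothetical helpers, and the imageData argument only needs width and data properties:

```javascript
// Hypothetical helper: index of the red value for the pixel at (x, y)
// in an image that is `width` pixels wide.
function pixelIndex(x, y, width) {
  return (y * width + x) * 4;
}

// Reads the four channel values of one pixel from an ImageData-like object.
function readPixel(imageData, x, y) {
  var i = pixelIndex(x, y, imageData.width);
  var d = imageData.data;
  return { red: d[i], green: d[i + 1], blue: d[i + 2], alpha: d[i + 3] };
}
```

Each channel value is a number from 0 to 255, including alpha, where 255 means fully opaque.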

The getImageData method allows us to examine a small section of a canvas, so let’s use this feature to become more familiar with the data array. getImageData takes four parameters: the X and Y coordinates of the top-left corner of the rectangular piece of the canvas we’d like to inspect, followed by that rectangle’s width and height. If we call getImageData on a very small section of the canvas, say context.getImageData(0, 0, 1, 1), we’d be examining just one pixel (the 1-by-1 rectangle whose top-left corner is at 0,0). The array that’s returned is four items long, as it contains a red, green, blue, and alpha value for this lone pixel:

function draw() {

var canvas = document.getElementById("myCanvas");

var context = canvas.getContext("2d");

var image = document.getElementById("myImageElem");

// draw the image at x=68 and y=68 on the canvas

context.drawImage(image, 68, 68);

var imageData = context.getImageData(0, 0, 1, 1);

var pixelData = imageData.data;

alert(pixelData.length);

}

The alert prompt confirms that the data array for a one-pixel section of the canvas will have four values, as Figure 11.11 demonstrates.

Let’s look at how we’d go about using

getImageData to convert a full color image into

black and white on a canvas. Assuming we’ve already placed an image onto

the canvas, as we did above, we can use a for loop to

iterate through each pixel in the image, and change it to

grayscale.

First, we’ll call getImageData(0,0,200,200) to

retrieve the entire canvas. Then, we need to grab the red, green, and

blue values of each pixel, which appear in the array in that

order:

function draw() {

var canvas = document.getElementById("myCanvas");

var context = canvas.getContext("2d");

var image = document.getElementById("myImageElem");

context.drawImage(image, 68, 68);

var imageData = context.getImageData(0, 0, 200, 200);

var pixelData = imageData.data;

for (var i = 0; i < pixelData.length; i += 4) {

var red = pixelData[i];

var green = pixelData[i + 1];

var blue = pixelData[i + 2];

}

}

Notice that our for loop is incrementing i by 4 instead of the usual 1. This is because each pixel takes up four values in the pixelData array: one number each for the R, G, B, and A values.

Next, we must determine the grayscale value for the current pixel. It turns out that there’s a mathematical formula for converting RGB to grayscale: you simply need to multiply each of the red, green, and blue values by some specific numbers, seen in the code block below:

…

for (var i = 0; i < pixelData.length; i += 4) {

var red = pixelData[i];

var green = pixelData[i + 1];

var blue = pixelData[i + 2];

var grayscale = red * 0.3 + green * 0.59 + blue * 0.11;

}

…

Now that we have the proper grayscale value, we’re going to store

it back into the red, green, and blue values in the

data array:

…

for (var i = 0; i < pixelData.length; i += 4) {

var red = pixelData[i];

var green = pixelData[i + 1];

var blue = pixelData[i + 2];

var grayscale = red * 0.3 + green * 0.59 + blue * 0.11;

pixelData[i] = grayscale;

pixelData[i + 1] = grayscale;

pixelData[i + 2] = grayscale;

}

…
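The weights 0.3, 0.59, and 0.11 are close to the commonly used luma weights (0.299, 0.587, 0.114), which favor green because the eye is most sensitive to it. As a sketch, the loop body above can be factored into two small functions that work on any RGBA array, which makes the conversion easy to try without a canvas; toGrayscale and grayscalePixels are hypothetical names:

```javascript
// Hypothetical helper: weighted sum of the three color channels.
function toGrayscale(red, green, blue) {
  return red * 0.3 + green * 0.59 + blue * 0.11;
}

// Converts every pixel of an RGBA array to gray in place.
// The alpha value at position i + 3 is left untouched.
function grayscalePixels(pixelData) {
  for (var i = 0; i < pixelData.length; i += 4) {
    var gray = toGrayscale(pixelData[i], pixelData[i + 1], pixelData[i + 2]);
    pixelData[i] = gray;
    pixelData[i + 1] = gray;
    pixelData[i + 2] = gray;
  }
  return pixelData;
}
```

When pixelData is the array returned by getImageData, fractional results are converted to whole numbers in the 0 to 255 range automatically as they are stored.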

So now we’ve modified our pixel data by individually converting

each pixel to grayscale. The final step? Putting the image data we’ve

modified back into the canvas via a method called

putImageData. This method does

exactly what you’d expect: it takes image data and writes it onto the

canvas. Here’s the method in action:

function draw() {

var canvas = document.getElementById("myCanvas");

var context = canvas.getContext("2d");

var image = document.getElementById("myImageElem");

context.drawImage(image, 68, 68);

var imageData = context.getImageData(0, 0, 200, 200);

var pixelData = imageData.data;

for (var i = 0; i < pixelData.length; i += 4) {

var red = pixelData[i];

var green = pixelData[i + 1];

var blue = pixelData[i + 2];

var grayscale = red * 0.3 + green * 0.59 + blue * 0.11;

pixelData[i] = grayscale;

pixelData[i + 1] = grayscale;

pixelData[i + 2] = grayscale;

}

context.putImageData(imageData, 0, 0);

}

With that, we’ve drawn a black-and-white version of the HTML5 logo into the canvas.

If you tried out this code in Chrome or Firefox, you may have noticed that it failed to work: the image on the canvas is in color. That’s because in these two browsers, if you try to manipulate an image stored on your computer from an HTML file that’s also stored on your computer, an error will occur in getImageData. The error is a security error, though in our case it’s an unnecessary one.

The true security issue that Chrome and Firefox are attempting to prevent is a script on one domain reading the pixels of an image from another domain: for example, stopping me from loading an official logo from http://google.com/ and then manipulating the pixel data.

The W3C Canvas spec describes it this way:

Information leakage can occur if scripts from one origin can access information (e.g. read pixels) from images from another origin (one that isn’t the same). To mitigate this, canvas elements are defined to have a flag indicating whether they are origin-clean.

This origin-clean flag will be set to false if

the image you want to manipulate is on a different domain from the

JavaScript doing the manipulating. Unfortunately, in Chrome and Firefox,

this origin-clean flag is also set to false while

you’re testing from files on your hard drive—they’re seen as files

living on different domains.

If you want to test pixel manipulation using canvas in Firefox or Chrome, you’ll need to either test it on a web server running on your computer (http://localhost/), or test it online on a real web server.

We can take the code we’ve already written to convert a color image to black and white, and enhance it to make our color video black and white, to match the old-timey feel of The HTML5 Herald page. We’ll do this in a new, separate JavaScript file called videoToBW.js, so that we can include it on the site’s home page.

The file begins, as always, by setting up the canvas and the context:

function makeVideoOldTimey ()

{

var video = document.getElementById("video");

var canvas = document.getElementById("canvasOverlay");

var context = canvas.getContext("2d");

}

Next, we want to call a draw function when the video begins playing. To do so, we’ll add an event listener to our video element that responds to the play event:

function makeVideoOldTimey ()

{

var video = document.getElementById("video");

var canvas = document.getElementById("canvasOverlay");

var context = canvas.getContext("2d");

video.addEventListener("play", function(){

draw(video,context,canvas);

},false);

}

The draw function will be called when the play event fires, and it will be passed the video, context, and canvas objects. We’re using an anonymous function here instead of a normal named function because addEventListener expects a function it can call later; if we wrote draw(video, context, canvas) directly as the handler, draw would run immediately and only its return value would be registered.

Since we want to pass several parameters to the draw function (video, context, and canvas), we must call it from inside an anonymous function.
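For comparison, here is a sketch of two ways to hand extra arguments to an event handler: the anonymous wrapper used in this chapter, and Function.prototype.bind, which is an alternative in browsers that support it. Both function names are hypothetical, and target can be any object with an addEventListener method:

```javascript
// The approach used in this chapter: wrap the call in an anonymous function.
function listenWithClosure(target, eventName, fn, a, b, c) {
  target.addEventListener(eventName, function () {
    fn(a, b, c);
  }, false);
}

// An alternative: bind returns a new function with its first arguments
// already filled in. The event object arrives as an extra, fourth argument.
function listenWithBind(target, eventName, fn, a, b, c) {
  target.addEventListener(eventName, fn.bind(null, a, b, c), false);
}
```

With the second form, the call in makeVideoOldTimey could be written as listenWithBind(video, "play", draw, video, context, canvas); draw would simply ignore the event object it receives as a fourth argument.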

Let’s look at the draw function:

function draw(video, context, canvas)

{

if (video.paused || video.ended)

{

return false;

}

drawOneFrame(video, context, canvas);

}

Before doing anything else, we check to see if the video is paused

or has ended, in which case we’ll just cut the function short by

returning false. Otherwise, we continue onto the

drawOneFrame function. The

drawOneFrame function is nearly identical to

the code we had above for converting an image from color to black and

white, except that we’re drawing the video element onto the canvas instead of a

static image:

function drawOneFrame(video, context, canvas){

// draw the video onto the canvas

context.drawImage(video, 0, 0, canvas.width, canvas.height);

var imageData = context.getImageData(0, 0, canvas.width, canvas.height);

var pixelData = imageData.data;

// Loop through the red, green and blue pixels,

// turning them grayscale

for (var i = 0; i < pixelData.length; i += 4) {

var red = pixelData[i];

var green = pixelData[i + 1];

var blue = pixelData[i + 2];

//we'll ignore the alpha value, which is in position i+3

var grayscale = red * 0.3 + green * 0.59 + blue * 0.11;

pixelData[i] = grayscale;

pixelData[i + 1] = grayscale;

pixelData[i + 2] = grayscale;

}

// pixelData refers to the same array as imageData.data, so no copy-back is needed

context.putImageData(imageData, 0, 0);

}

After we’ve drawn one frame, what’s the next step? We need to draw another frame! The setTimeout method allows us to keep calling the draw function over and over again: its final parameter is the delay, or how long, in milliseconds, the browser should wait before calling the function. Because it’s set to 0, we are essentially running draw as often as the browser allows. This goes on until the video has either ended, or been paused:

function draw(video, context, canvas) {

if (video.paused || video.ended)

{

return false;

}

var status = drawOneFrame(video, context, canvas);

// Start over!

setTimeout(function(){ draw(video, context, canvas); }, 0);

}
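A delay of 0 asks for far more calls than the screen can display. Where the browser provides requestAnimationFrame, it is a common alternative that runs the callback once per repaint. The sketch below is an optional variation rather than the approach used in videoToBW.js; scheduleNextFrame is a hypothetical helper, and host stands for the window object:

```javascript
// Hypothetical helper: schedule `callback` for the next repaint when the
// host supports requestAnimationFrame, and fall back to setTimeout otherwise.
function scheduleNextFrame(host, callback) {
  if (typeof host.requestAnimationFrame === "function") {
    return host.requestAnimationFrame(callback);
  }
  return host.setTimeout(callback, 0);
}
```

Inside draw, the setTimeout line could then become scheduleNextFrame(window, function(){ draw(video, context, canvas); });.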

The net result? Our color video of a plane taking off now plays in black and white!

If we were to view The HTML5 Herald from a file on our computer, we’d encounter security errors in Firefox and Chrome when trying to manipulate an entire video, just as we did with a simple image.

We can add a bit of error-checking in order to make our video work anyway, even if we view it from our local machine in Chrome or Firefox.

The first step is to add a try/catch block to catch the error:

function drawOneFrame(video, context, canvas){

context.drawImage(video, 0, 0, canvas.width, canvas.height);

try {

var imageData = context.getImageData(0, 0, canvas.width, canvas.height);

var pixelData = imageData.data;

for (var i = 0; i < pixelData.length; i += 4) {

var red = pixelData[i];

var green = pixelData[i + 1];

var blue = pixelData[i + 2];

var grayscale = red * 0.3 + green * 0.59 + blue * 0.11;

pixelData[i] = grayscale;

pixelData[i + 1] = grayscale;

pixelData[i + 2] = grayscale;

}

// pixelData refers to the same array as imageData.data, so no copy-back is needed

context.putImageData(imageData, 0, 0);

}

catch (err) {

// error handling code will go here

}

}

When an error occurs in trying to call

getImageData, it would be nice to give some

sort of message to the user in order to give them a hint about what may

be going wrong. We’ll do just that, using the

fillText method of the Canvas API.

Before we write any text to the canvas, we should clear what’s

already drawn to it. We’ve already drawn the first frame of the video

into the canvas using the call to drawImage.

How can we clear that out?

It turns out that if we reset the width or height on the canvas, the canvas will be cleared. So, let’s reset the width:

function drawOneFrame(video, context, canvas){

context.drawImage(video, 0, 0, canvas.width, canvas.height);

try {

…

}

catch (err) {

canvas.width = canvas.width;

}

}

Next, let’s change the background color from black to

transparent, since the canvas element is positioned on top of the

video:

function drawOneFrame(video, context, canvas){

context.drawImage(video, 0, 0, canvas.width, canvas.height);

try {

…

}

catch (err) {

canvas.width = canvas.width;

canvas.style.backgroundColor = "transparent";

}

}

Before we can draw any text to the now transparent canvas, we first must set up the style of our text, similar to what we did with paths earlier. We do that with the fillStyle and textAlign properties:

videoToBW.js (excerpt)

function drawOneFrame(video, context, canvas){

context.drawImage(video, 0, 0, canvas.width, canvas.height);

try {

…

}

catch (err) {

canvas.width = canvas.width;

canvas.style.backgroundColor = "transparent";

context.fillStyle = "white";

context.textAlign = "left";

}

}

We must also set the font we’d like to use. The

font property of the context object works the same

way the CSS font property does. We’ll specify a

font size of 18px and a comma-separated list of font families:

function drawOneFrame(video, context, canvas){

context.drawImage(video, 0, 0, canvas.width, canvas.height);

try {

…

}

catch (err) {

canvas.width = canvas.width;

canvas.style.backgroundColor = "transparent";

context.fillStyle = "white";

context.textAlign = "left";

context.font = "18px LeagueGothic, Tahoma, Geneva, sans-serif";

}

}

Notice that we’re using League Gothic; any fonts you’ve included

with @font-face are also available for you to use on

your canvas. Finally, we draw the text. We use a method of the context

object called

fillText, which takes the text to

be drawn and the (x,y) coordinates where it should be placed. Since we

want to write out a fairly long message, we’ll split it up into several

sections, placing each one on the canvas separately:

function drawOneFrame(video, context, canvas){

context.drawImage(video, 0, 0, canvas.width, canvas.height);

try {

…

}

catch (err) {

canvas.width = canvas.width;

canvas.style.backgroundColor = "transparent";

context.fillStyle = "white";

context.textAlign = "left";

context.font = "18px LeagueGothic, Tahoma, Geneva, sans-serif";

context.fillText("There was an error rendering ", 10, 20);

context.fillText("the video to the canvas.", 10, 40);

context.fillText("Perhaps you are viewing this page from", 10, 70);

context.fillText("a file on your computer?", 10, 90);

context.fillText("Try viewing this page online instead.", 10, 130);

return false;

}

}

As a last step, we return false. This lets us

check in the draw function whether an exception

was thrown. If it was, we want to stop calling

drawOneFrame for each video frame, so we exit

the draw function:

function draw(video, context, canvas) {

if (video.paused || video.ended)

{

return false;

}

var status = drawOneFrame(video, context, canvas);

if (status == false)

{

return false;

}

// Start over!

setTimeout(function(){ draw(video, context, canvas); }, 0);

}
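Calling setTimeout with a delay of 0 asks the browser to run draw again as soon as it can. In browsers that provide requestAnimationFrame, the same loop could be tied to screen repaints instead. That is a different scheduling technique from the one used in this chapter, so treat the following as a sketch only: drawLoop and its schedule parameter are hypothetical names, and drawFrame stands in for a call to drawOneFrame.

```javascript
// Hypothetical variant of draw(). `schedule` is whatever queues the next
// call: setTimeout in the chapter's version, or requestAnimationFrame.
function drawLoop(video, drawFrame, schedule) {
  if (video.paused || video.ended) {
    return false;
  }
  if (drawFrame(video) === false) {
    return false; // drawFrame reported an error, so stop looping
  }
  schedule(function () {
    drawLoop(video, drawFrame, schedule);
  });
  return true;
}

// Possible use in a browser (context and canvas as set up earlier):
// drawLoop(
//   video,
//   function (v) { return drawOneFrame(v, context, canvas); },
//   function (fn) { window.requestAnimationFrame(fn); }
// );
```

Passing the scheduler in as a parameter keeps the stop conditions in one place, whichever timing mechanism is used.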

A major downside of canvas in its current form is its lack of accessibility. What you draw on a canvas doesn’t create DOM nodes and isn’t a text-based format, so it is essentially invisible to tools like screen readers. For example, even though we wrote text to the canvas in our last example, that text is no more than a bunch of pixels, and is therefore inaccessible.

The HTML5 community is aware of these failings, and while no solution has been finalized, debates on how canvas could be changed to make it accessible are underway. You can read a compilation of the arguments and currently proposed solutions on the W3C’s wiki page.

We already learned a bit about SVG back in Chapter 7, when we used SVG files as a fallback for gradients in IE9 and older versions of Opera. In this chapter, we’ll dive into SVG in more detail and learn how to use it in other ways.

First, a quick refresher: SVG stands for Scalable Vector Graphics. SVG is a specific file format that allows you to describe vector graphics using XML. A major selling point of vector graphics in general is that, unlike bitmap images (such as GIF, JPEG, PNG, and TIFF), vector images preserve their shape even as you blow them up or shrink them down. We can use SVG to do many of the same tasks we can do with canvas, including drawing paths, shapes, text, gradients, and patterns. There are also some very useful open source tools relevant to SVG, some of which we will leverage in order to add a spinning progress indicator to The HTML5 Herald’s geolocation widget.

Basic SVG, including using SVG in an HTML img element, is supported in:

-

Safari 3.2+

-

Chrome 6.0+

-

Firefox 4.0+

-

Internet Explorer 9.0+

-

Opera 10.5+

There is currently no support for SVG in Android’s browser.

Note: XML

XML stands for eXtensible Markup Language. Like HTML, it’s a markup language, which means it’s a system meant to annotate text. Just as we can use HTML tags to wrap our content and give it meaning, so can XML tags be used to describe the content of files.

Unlike canvas, images created with SVG are available via the DOM. This allows technologies like screen readers to see what’s present in an SVG object through its DOM node—and it also allows you to inspect SVG using your browser’s developer tools. Since SVG is an XML file format, it’s also more accessible to search engines than canvas.

Drawing a circle in SVG is arguably easier than drawing a circle with canvas. Here’s how we do it:

<svg xmlns="http://www.w3.org/2000/svg" viewBox="0 0 400 400"> <circle cx="50" cy="50" r="25" fill="red"/> </svg>

The viewBox attribute defines

the starting location, width, and height of the SVG image.

The

circle element defines

a circle, with cx and cy the X and Y coordinates of the center of

the circle. The radius is represented by r, while fill is for the fill style.
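Because SVG elements are available via the DOM, the same circle could also be built from script. The following is a minimal sketch that assumes a browser document object; createSvgCircle is a hypothetical helper name, while createElementNS, setAttribute, and appendChild are standard DOM methods.

```javascript
var SVG_NS = "http://www.w3.org/2000/svg";

// Hypothetical helper: builds the svg element shown above, containing
// a single circle, using the document object passed in as `doc`.
function createSvgCircle(doc, cx, cy, r, fill) {
  var svg = doc.createElementNS(SVG_NS, "svg");
  svg.setAttribute("viewBox", "0 0 400 400"); // attribute names are case-sensitive
  var circle = doc.createElementNS(SVG_NS, "circle");
  circle.setAttribute("cx", cx);
  circle.setAttribute("cy", cy);
  circle.setAttribute("r", r);
  circle.setAttribute("fill", fill);
  svg.appendChild(circle);
  return svg;
}

// Possible use in a browser:
// document.body.appendChild(createSvgCircle(document, 50, 50, 25, "red"));
```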

To view an SVG file, you simply open it via the menu in any browser that supports SVG. Figure 11.12 shows what our circle looks like.

We can also draw rectangles in SVG, and add a stroke to them, as we did with canvas.

This time, let’s take advantage of SVG being an XML—and thus

text-based—file format, and utilize the desc tag, which allows us to provide a

description for the image we’re going to draw:

<svg xmlns="http://www.w3.org/2000/svg" viewBox="0 0 400 400"> <desc>Drawing a rectangle</desc> </svg>

Next, we populate the rect tag

with a number of attributes that describe the rectangle. This includes

the X and Y coordinate where the rectangle should be drawn, the width

and height of the rectangle, the fill, the stroke, and the width of the

stroke:

<svg xmlns="http://www.w3.org/2000/svg" viewBox="0 0 400 400">

<desc>Drawing a rectangle</desc>

<rect x="10" y="10" width="100" height="100"

fill="blue" stroke="red" stroke-width="3" />

</svg>

Figure 11.13 shows what our rectangle looks like.

Unfortunately, it’s not always this easy. If you want to create complex shapes, the code begins to look a little scary. Figure 11.14 shows a fairly simple-looking star image from http://openclipart.org/:

And here are just the first few lines of SVG for this image:

<svg xmlns="http://www.w3.org/2000/svg"

width="122.88545" height="114.88568">

<g

inkscape:label="Calque 1"

inkscape:groupmode="layer"

id="layer1"

transform="translate(-242.42282,-449.03699)">

<g

transform="matrix(0.72428496,0,0,0.72428496,119.87078,183.8127)"

id="g7153">

<path

style="fill:#ffffff;fill-opacity:1;stroke:#000000;stroke-width

↵:2.761343;stroke-linecap:round;stroke-linejoin:round;stroke-miterl

↵imit:4;stroke-opacity:1;stroke-dasharray:none;stroke-dashoffset:0"

d="m 249.28667,389.00422 -9.7738,30.15957 -31.91999,7.5995 c -

↵2.74681,1.46591 -5.51239,2.92436 -1.69852,6.99979 l 30.15935,12.57

↵796 -11.80876,32.07362 c -1.56949,4.62283 -0.21957,6.36158 4.24212

↵,3.35419 l 26.59198,-24.55691 30.9576,17.75909 c 3.83318,2.65893 6

↵.12086,0.80055 5.36349,-3.57143 l -12.10702,-34.11764 22.72561,-13

↵.7066 c 2.32805,-1.03398 5.8555,-6.16054 -0.46651,-6.46042 l -33.5

↵0135,-0.66887 -11.69597,-27.26175 c -2.04282,-3.50583 -4.06602,-7.

↵22748 -7.06823,-0.1801 z"

id="path7155"

inkscape:connector-curvature="0"

sodipodi:nodetypes="cccccccccccccccc" />

…

Eek!

To save ourselves some work (and sanity), instead of creating SVG images by hand, we can use an image editor to help. One such tool is Inkscape, an open source vector graphics editor that outputs SVG. Inkscape is available for download at http://inkscape.org/.

For our progress-indicating spinner, instead of starting from scratch, we’ve searched the public domain to find a good image from which to begin. A good resource to know about for public domain images is http://openclipart.org/, where you can find images that are copyright-free and free to use. The images have been donated by the creators for use in the public domain, even for commercial purposes, without the need to ask for permission.

We will be using an image of three arrows as the basis of our progress spinner, shown in Figure 11.15. The original can be found at openclipart.org.

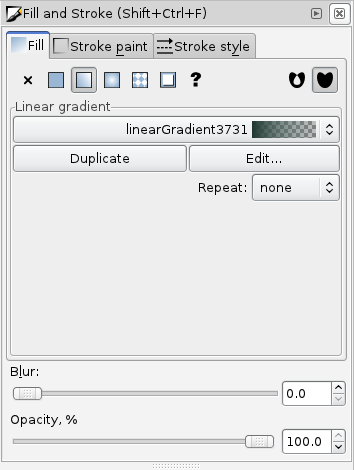

To make our progress spinner match our page a bit better, we can use a filter in Inkscape to make it black and white. Start by opening the file in Inkscape, then choose > > .

You may notice if you test out The HTML5 Herald in Safari that our black-and-white spinner is still ... in color. That’s because SVG filters are a feature of SVG that has yet to be implemented in Safari 5, though they will be part of Safari 6. SVG filters are supported in Firefox, Chrome, and Opera. They’re currently unsupported in all versions of Safari, Internet Explorer, the Android browser, and iOS.

A safer approach would be to avoid using filters, and instead simply modify the color of the original image.

We can do this in Inkscape by selecting the three arrows in the spinner.svg image, and then selecting > . The Fill and Stroke menu will appear on the right-hand side of the screen, as seen in Figure 11.16.

From this menu, we can choose to edit the existing linear gradient by clicking the button. We can then change the Red, Green, and Blue values all to 0 to make our image black and white.

Raphaël is an open source JavaScript library that wraps around SVG. It makes drawing and animating with SVG much easier than with SVG alone.

Much as with canvas, you can also draw images into a container you create using Raphaël.

Let’s add a div to our main

index file, which we’ll use as the container for the SVG elements

we’ll create using Raphaël. We’ve named this div spinner:

<article id="ad4">

<div id="mapDiv">

<h1 id="geoHeading">Where in the world are you?</h1>

<form id="geoForm">

<input type="button" id="geobutton" value="Tell us!">

</form>

<div id="spinner"></div>

</div>

</article>

We have styled this div to be

placed in the center of the parent mapDiv using the

following CSS:

Now, in our geolocation JavaScript, let’s put the spinner in

place while we’re fetching the map. The first step is to turn our

div into a Raphaël container. This

is as simple as calling the

Raphael method, and passing in

the element we’d like to use, along with a width and height:

function determineLocation(){

if (navigator.onLine) {

if (Modernizr.geolocation) {

navigator.geolocation.getCurrentPosition(displayOnMap);

var container = Raphael(document.getElementById("spinner"), 125, 125);

Next, we draw the spinner SVG image into the newly created

container with the Raphaël method

image, which is called on a

Raphaël container object. This method takes the path to the image, the

starting coordinates where the image should be drawn, and the width

and height of the image:

var container = Raphael(document.getElementById("spinner"),125,125);

var spinner = container.image("images/spinnerBW.svg",0,0,125,125);

With this, our spinner image will appear when we click on the button in the geolocation widget.

Now that we have our container and the spinner SVG image drawn

into it, we want to animate the image to make it spin. Raphaël has

animation features built in with the

animate method. Before we can

use this method, though, we first need to tell it which attribute to

animate. Since we want to rotate our image, we’ll create an object

that specifies how many degrees of rotation we want.

We create a new object attrsToAnimate,

specifying that we want to animate the rotation, and we want to rotate

by 720 degrees (two full turns):

var container = Raphael(document.getElementById("spinner"),125,125);

var spinner = container.image("images/spinnerBW.svg",0,0,125,125);

var attrsToAnimate = { rotation: "720" };

The final step is to call the animate

method, and specify how long the animation should last. In our case,

we will let it run for a maximum of sixty seconds. Since

animate takes its values in milliseconds,

we’ll pass it 60000:

var container = Raphael(document.getElementById("spinner"),125,125);

var spinner = container.image("images/spinnerBW.svg",0,0,125,125);

var attrsToAnimate = { rotation: "720" };

spinner.animate(attrsToAnimate, 60000);
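The rotation attribute used here belongs to the Raphaël 1.x releases. In Raphaël 2.x, to the best of our knowledge, rotation is expressed through the transform attribute instead, using a transform string such as "r720". The following sketch assumes Raphaël 2.x; startSpinner is a hypothetical helper that receives the library’s Raphael function as a parameter.

```javascript
// Hypothetical helper. `Raphael` is the library's global function,
// `element` is the container div, `imagePath` is the spinner image.
function startSpinner(Raphael, element, imagePath) {
  var container = Raphael(element, 125, 125);
  var spinner = container.image(imagePath, 0, 0, 125, 125);
  // Assumption: Raphaël 2.x transform string, where "r720" means
  // "rotate by 720 degrees" around the center of the image.
  spinner.animate({ transform: "r720" }, 60000);
  return spinner;
}

// Possible use in a browser:
// startSpinner(Raphael, document.getElementById("spinner"), "images/spinnerBW.svg");
```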

That’s great! We now have a spinning progress indicator to keep

our visitors in the know while our map is loading. There’s still one

problem though: it remains after the map has loaded. We can fix this

by adding one line to the beginning of the existing

displayOnMap function:

function displayOnMap(position){

document.getElementById("spinner").style.visibility = "hidden";

This line sets the visibility property of

the spinner element to hidden, effectively hiding

the spinner div and the SVG image

we’ve loaded into it.
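Since the spinner is only hidden when displayOnMap runs, it would keep spinning if the position lookup failed. The getCurrentPosition method accepts an error callback as its second argument, so one option is to hide the spinner there as well. This is a sketch under that assumption; hideSpinner and locate are hypothetical names that are not part of the existing geolocation script.

```javascript
// Hypothetical helper: hides the spinner div, as displayOnMap does.
function hideSpinner(doc) {
  doc.getElementById("spinner").style.visibility = "hidden";
}

// Hypothetical wrapper around getCurrentPosition: displayOnMap handles
// success as before, and the error callback hides the spinner on failure.
function locate(geolocation, doc, displayOnMap) {
  geolocation.getCurrentPosition(displayOnMap, function () {
    hideSpinner(doc);
  });
}

// Possible use in a browser:
// locate(navigator.geolocation, document, displayOnMap);
```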

Now that we’ve learned about canvas and SVG, you may be asking yourself, which is the right one to use? The answer is: it depends on what you’re doing.

Both canvas and SVG allow you to draw custom shapes, paths, and fonts. But what’s unique about each?

Canvas allows for pixel manipulation, as we saw when we turned our video from color to black and white. One downside of canvas is that it operates in what’s known as immediate mode. This means the canvas keeps no record of the shapes you draw: once something is painted, it’s just pixels. You can paint more on top, but to move or alter a shape that’s already there, you have to clear the affected area and redraw it. There’s also no access to what’s drawn on the canvas via the DOM. However, canvas does allow you to save the images you create to a PNG or JPEG file.
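Saving relies on the canvas element’s toDataURL method, which returns the current image as a data URL. Here is a minimal sketch; canvasToDataUrl is a hypothetical helper name. PNG is the default format, and browsers may also accept "image/jpeg".

```javascript
// Hypothetical helper: returns the canvas contents as a data URL.
// Falls back to PNG when no type is given.
function canvasToDataUrl(canvas, type) {
  return canvas.toDataURL(type || "image/png");
}

// Possible use in a browser:
// var url = canvasToDataUrl(document.getElementById("canvas"));
```

Note that toDataURL throws a security error if the canvas contains content the browser considers to be from another origin, which is the same restriction that triggered our error message when drawing the video from a local file.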

By contrast, what you draw to SVG is accessible via the DOM, because SVG works in retained mode: the structure of the image is preserved in the XML document that describes it. SVG also has, at this time, a more complete set of tools to help you work with it, like the Raphaël library and Inkscape. However, since SVG is a file format (rather than a set of methods that allows you to dynamically draw on a surface), you can’t manipulate SVG images the way you can manipulate pixels on canvas. It would have been impossible, for example, to use SVG to convert our color video to black and white as we did with canvas.
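Retained mode means each shape remains an element that can be looked up and changed later, without redrawing anything else. The sketch below assumes the SVG is reachable through a document object (for example, inline in the page) and that the shape has an id; recolorShape and the id in the usage comment are hypothetical.

```javascript
// Hypothetical helper: changes the fill of one existing SVG shape.
// Returns false when no element with that id exists.
function recolorShape(doc, shapeId, color) {
  var shape = doc.getElementById(shapeId);
  if (shape === null) {
    return false;
  }
  shape.setAttribute("fill", color);
  return true;
}

// Possible use in a browser, with a hypothetical id:
// recolorShape(document, "myRect", "green");
```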

In summary, if you need to paint pixels to the screen, and have no concerns about the ability to retrieve and modify your shapes, canvas is probably the better choice. If, on the other hand, you need to be able to access and change specific aspects of your graphics, SVG might be more appropriate.

It’s also worth noting that neither technology is appropriate for static images—at least not while browser support remains a stumbling block. In this chapter, we’ve made use of canvas and SVG for a number of such static examples, which is fine for the purpose of demonstrating what they can do. But in the real world, they’re only really appropriate for cases where user interaction defines what’s going to be drawn.

In order to add one final dynamic effect to our site, we’re going to examine the new Drag and Drop API. This API allows us to specify that certain elements are draggable, and then specify what should happen when these draggable elements are dragged over or dropped onto other elements on the page.

Drag and Drop is supported in:

-

Safari 3.2+

-

Chrome 6.0+

-

Firefox 3.5+ (there is an older API that was supported in Firefox 3.0)

-

Internet Explorer 7.0+

-

Android 2.1+

There is currently no support for Drag and Drop in Opera. The API is unsupported by design in iOS, as Apple directs you to use the DOM Touch API instead.

There are two major kinds of functionality you can implement with Drag and Drop: dragging files from your computer into a web page—in combination with the File API—or dragging elements into other elements on the same page. In this chapter, we’ll focus on the latter.

Note: Drag and Drop and the File API

If you’d like to learn more about how to combine Drag and Drop with the File API, in order to let users drag files from their desktop onto your websites, an excellent guide can be found at the Mozilla Developer Network.

The File API is currently only supported in Firefox 3.6+ and Chrome.

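There are several steps to adding Drag and Drop to your page: set the draggable attribute on each element you want to be draggable, add a listener for the dragstart event on those elements, and add listeners for the dragover and drop events on each element that should accept dropped items.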

In order to add a bit of fun and frivolity to our page, let’s add a few images of mice, so that we can then drag them onto our cat image and watch the cat react and devour them. Before you start worrying (or call the SPCA), rest assured that we mean, of course, computer mice. We’ll use another image from OpenClipArt for our mice.

The first step is to add these new images to our

index.html file. We’ll give each mouse image an

id as well:

<article id="ac3">

<hgroup>

<h1>Wai-Aria? HAHA!</h1>

<h2>Form Accessibility</h2>

</hgroup>

<img src="images/cat.png" alt="WAI-ARIA Cat">

<div class="content">

<p id="mouseContainer">

<img src="images/computer-mouse-pic.svg" width="30" alt="mouse treat" id="mouse1">

<img src="images/computer-mouse-pic.svg" width="30" alt="mouse treat" id="mouse2">

<img src="images/computer-mouse-pic.svg" width="30" alt="mouse treat" id="mouse3">

</p>

…

Figure 11.17 shows our images in their initial state.

The next step is to make our images draggable. In order to do

that, we add the draggable

attribute to them, and set the value to true:

<img src="images/computer-mouse-pic.svg" width="30" alt="mouse treat" id="mouse1" draggable="true">

<img src="images/computer-mouse-pic.svg" width="30" alt="mouse treat" id="mouse2" draggable="true">

<img src="images/computer-mouse-pic.svg" width="30" alt="mouse treat" id="mouse3" draggable="true">

Important: draggable Must Be Set

Note that draggable is

not a Boolean attribute; you have to explicitly

set it to true.
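The same rule applies when the attribute is set from script rather than in the markup: the value has to be spelled out. A minimal sketch, where makeDraggable is a hypothetical helper name:

```javascript
// Hypothetical helper: marks every element in `elements` as draggable.
function makeDraggable(elements) {
  for (var i = 0; i < elements.length; i++) {
    // The value "true" must be given explicitly.
    elements[i].setAttribute("draggable", "true");
  }
}

// Possible use in a browser:
// makeDraggable(document.querySelectorAll("#mouseContainer img"));
```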

Now that we have set draggable to true, we

have to set an event listener for the dragstart event on each image. We’ll use

jQuery’s bind method to attach the event

listener:

$(document).ready(function() {

$('#mouseContainer img').bind('dragstart', function(event) {

// handle the dragstart event

});

});

DataTransfer objects are one of the new

objects outlined in the Drag and Drop API. These objects allow us to set

and get data about the objects that are being dragged. Specifically,

DataTransfer lets us define two pieces of

information:

-

the type of data we’re saving about the draggable element

-

the value of the data itself

In the case of our draggable mouse images, we want to be able to

store the id of these images, so we

know which image is being dragged around.

To do this, we first need to tell

DataTransfer that we want to save some plain text

by passing in the string text/plain. Then we give it

the id of our mouse image:

$('#mouseContainer img').bind('dragstart', function(event) {

event.originalEvent.dataTransfer.setData("text/plain", event.target.getAttribute('id'));

});

When an element is dragged, we save the id of the element in the

DataTransfer object, to be used again once the

element is dropped. The target property of a dragstart event will be the element that’s

being dragged.

Important: dataTransfer and jQuery

The jQuery library’s Event object only gives you access to properties it knows about. This causes problems when you’re using new native event properties like dataTransfer; trying to use the dataTransfer property of a jQuery event will result in an error. However, you can always retrieve the original DOM event through the originalEvent property of your jQuery event, as we did above. This will give you access to any properties your browser supports, which in this case includes the new DataTransfer object.

Of course, this isn’t an issue if you’re rolling your own JavaScript from scratch!
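For comparison, here is a sketch of the same dragstart handling without jQuery. The native event exposes dataTransfer directly, so originalEvent isn’t needed. The function names onDragStart and bindMice are hypothetical.

```javascript
// Hypothetical native handler: stores the dragged image's id as plain text.
function onDragStart(event) {
  event.dataTransfer.setData("text/plain", event.target.getAttribute("id"));
}

// Hypothetical wiring: attaches the handler to each mouse image.
function bindMice(doc) {
  var mice = doc.querySelectorAll("#mouseContainer img");
  for (var i = 0; i < mice.length; i++) {
    mice[i].addEventListener("dragstart", onDragStart, false);
  }
}

// Possible use in a browser:
// bindMice(document);
```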

Now our mouse images are set up to be dragged. Yet, when we try to drag them around, we’re unable to drop them anywhere, which is no fun.

The reason is that by default, elements on the page aren’t set up to receive dragged items. In order to override the default behavior on a specific element, we must stop it from happening. We can do that by creating two more event listeners.

The two events we need to monitor for are

dragover and

drop. As you’d

expect, dragover fires when you

drag something over an element, and drop fires when you drop something on

it.

We’ll need to prevent the default behavior for both these events—since the default prohibits you from dropping an element.

Let’s start by adding an id

to our cat image so that we can bind event handlers to it:

<article id="ac3">

<hgroup>

<h1>Wai-Aria? HAHA!</h1>

<h2 id="catHeading">Form Accessibility</h2>

</hgroup>

<img src="images/cat.png" id="cat" alt="WAI-ARIA Cat">

You may have noticed that we also gave an id to the h2 element. This is so we can change this text

once we’ve dropped a mouse onto the cat.

Now, let’s handle the dragover event:

That was easy! In this case, we merely ensured that the mouse

picture can actually be dragged over the cat picture. We simply need to

prevent the default behavior —and jQuery’s

preventDefault method serves this

purpose exactly.
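As a sketch of the handler just described, assuming the same jQuery bind pattern we used for dragstart and the cat id added above (allowDrop is a hypothetical function name):

```javascript
// Hypothetical handler: cancelling the default behavior of dragover
// marks the element as a place where items may be dropped.
function allowDrop(event) {
  event.preventDefault();
}

// Wiring, run only where jQuery is loaded.
if (typeof jQuery !== "undefined") {
  jQuery('#cat').bind('dragover', allowDrop);
}
```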

The code for the drop

handler is a bit more complex, so let us review it piece by piece. Our

first task is to figure out what the cat should say when a mouse is

dropped on it. In order to demonstrate that we can retrieve the id of the dropped mouse from the

DataTransfer object, we’ll use a different phrase

for each mouse, regardless of the order they’re dropped in. We’ve given

three cat-appropriate options: “MEOW!”, “Purr ...”, and

“NOMNOMNOM.”

We’ll store these options inside an object called

mouseHash. The first step is to declare our

object:

Next, we’ll take advantage of the fact that JavaScript objects can store key/value pairs, storing each response in the mouseHash object under the id of one of the mouse images:

$('#cat').bind('drop', function(event) {

var mouseHash = {};

mouseHash['mouse1'] = "NOMNOMNOM";

mouseHash['mouse2'] = "MEOW!";

mouseHash['mouse3'] = "Purr...";

Our next step is to grab the h2

element that we’ll change to reflect the cat’s response:

Remember when we saved the id

of the dragged element to the DataTransfer object

using setData? Well, now we want to retrieve

that id. If you guessed that we

will need a method called getData for this, you

guessed right:

Note that we’ve stored the mouse’s id in a variable called

item. Now that we know which mouse was dropped, and

we have our heading, we just need to change the text to the appropriate

response:

We use the information stored in the item

variable (the dragged mouse’s id)

to retrieve the correct message for the h2 element. For example, if the dragged mouse

is mouse1, calling mouseHash[item]

will retrieve “NOMNOMNOM” and set that as the h2 element’s text.

Given that the mouse has now been “eaten,” it makes sense to remove it from the page:

var mousey = document.getElementById(item); mousey.parentNode.removeChild(mousey);

Last but not least, we must also prevent the default behavior of not allowing elements to be dropped on our cat image, as before:
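Putting the steps of this section together, the whole drop handler might be sketched as follows. This is an assembled illustration rather than the exact listing: handleDrop and its doc parameter are hypothetical, and the check for an unknown id is an added assumption so that dropping something other than a mouse leaves the heading alone.

```javascript
// Responses keyed by the id of each mouse image.
var mouseHash = {
  mouse1: "NOMNOMNOM",
  mouse2: "MEOW!",
  mouse3: "Purr..."
};

// Hypothetical drop handler; `doc` is the page's document object.
function handleDrop(event, doc) {
  // Cancel the default behavior so the drop is accepted.
  event.preventDefault();
  // Retrieve the id saved by the dragstart handler.
  var item = event.originalEvent.dataTransfer.getData("text/plain");
  if (!Object.prototype.hasOwnProperty.call(mouseHash, item)) {
    return; // assumption: ignore anything that isn't one of our mice
  }
  // Change the heading to the cat's response.
  var catHeading = doc.getElementById("catHeading");
  catHeading.innerHTML = mouseHash[item];
  // The mouse has been "eaten", so remove it from the page.
  var mousey = doc.getElementById(item);
  mousey.parentNode.removeChild(mousey);
}

// Wiring, run only where jQuery is loaded.
if (typeof jQuery !== "undefined") {
  jQuery('#cat').bind('drop', function (event) {
    handleDrop(event, document);
  });
}
```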

Figure 11.18 shows our happy cat, with one mouse to go.

We’ve only touched on the basics of the Drag and Drop API, to give

you a taste of what’s available. We’ve shown you how you can use

DataTransfer to pass data from your dragged items

to their drop targets. What you do with this power is up to you!

To learn more about the Drag and Drop API, here are a couple of good resources:

With these final bits of interactivity, our work on The HTML5 Herald has come to an end, and your journey into the world of HTML5 and CSS3 is well on its way! We’ve tried to provide a solid foundation of knowledge about as many of the cool new features available in today’s browsers as possible—but how you build on that is up to you.

We hope we’ve given you a clear picture of how most of these features can be used today on real projects. Many are already well-supported, and browser development is once again progressing at a rapid clip. And when it comes to those elements for which support is still lacking, you have the aid of an online army of ingenious developers. These community-minded individuals are constantly working at coming up with fallbacks and polyfills to help us push forward and build the next generation of websites and applications.

Get to it!