Chapter 6

Equalizers

U. Zölzer

Spectral sound equalization is one of the most important methods for processing audio signals. Equalizers are found in various forms in the transmission of audio signals from a sound studio to the listener. The more complex filter functions are used in sound studios. However, in almost every consumer product like car radios, hi‐fi amplifiers etc., simple filter functions are used for sound equalization. We first discuss basic filter types followed by the design and implementation of recursive audio filters. In the third and fourth sections, linear‐phase non‐recursive filter structures and their implementation are introduced.

6.1 Basics

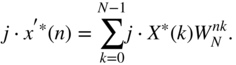

For filtering of audio signals, the following filter types are used.

- Lowpass and highpass filters with cutoff frequency $f_c$ (3 dB cutoff frequency) are shown with their magnitude response in Fig. 6.1. They have a passband in the lower and higher frequency range, respectively.

- Bandpass and bandstop filters (magnitude responses in Fig. 6.1) have a center frequency $f_c$ and a lower and upper cutoff frequency, $f_l$ and $f_u$. They have a pass‐ and stopband in the middle of the frequency range. For the bandwidth of a bandpass or a bandstop filter, we have

$$f_b = f_u - f_l. \tag{6.1}$$

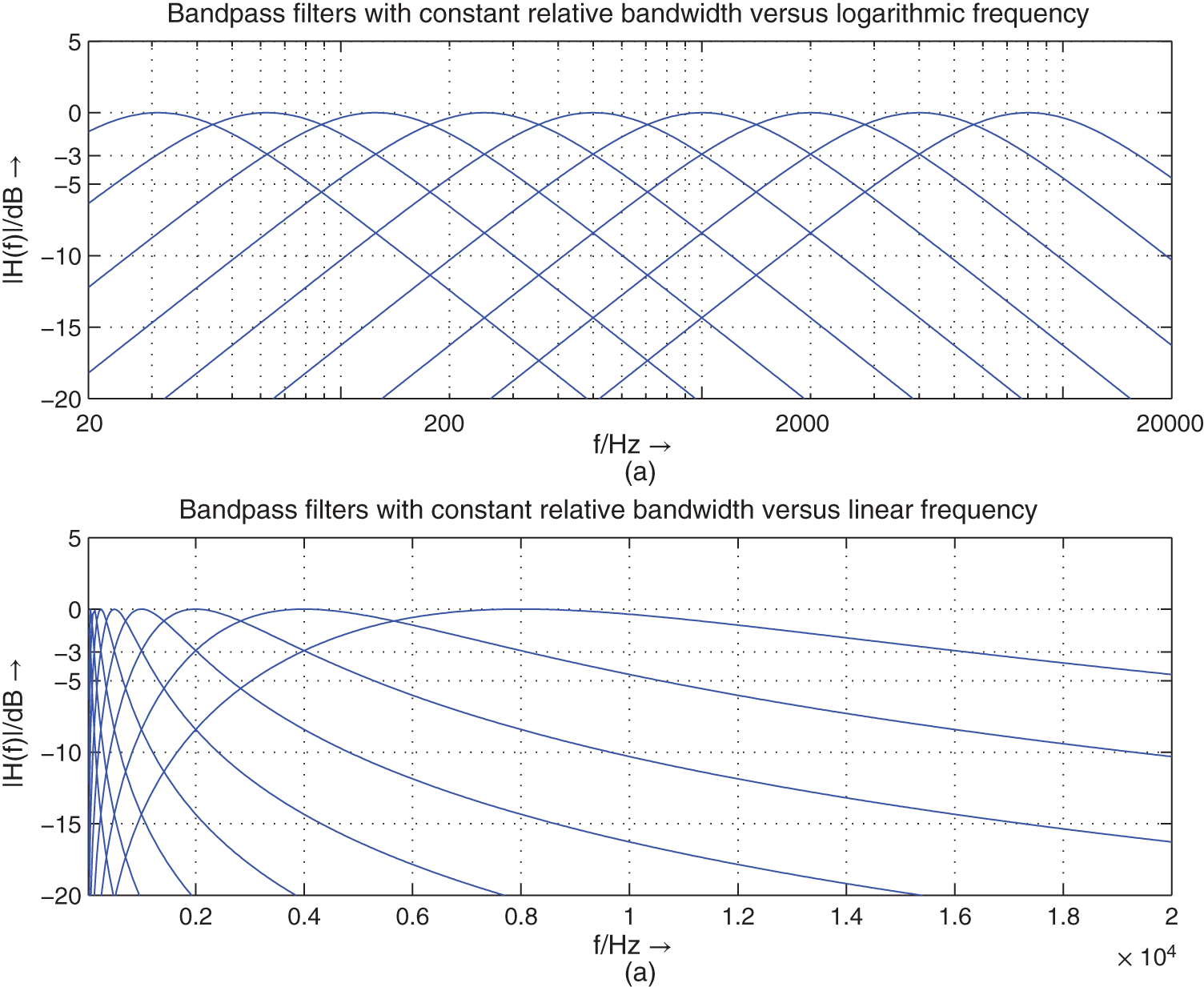

Bandpass filters with a constant relative bandwidth $f_b/f_c$ are very important for audio applications [Cre03]. The bandwidth is proportional to the center frequency, which is given by $f_c = \sqrt{f_l \cdot f_u}$ (see Fig. 6.2).

Figure 6.1 Linear magnitude responses of lowpass, highpass, bandpass, and bandstop filters.

Figure 6.2 Logarithmic magnitude responses of bandpass filters with constant relative bandwidth.

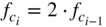

- Octave filters are bandpass filters with special cutoff frequencies given by

$$f_u = 2 \cdot f_l, \tag{6.2}$$

$$f_c = \sqrt{f_l \cdot f_u} = \sqrt{2} \cdot f_l. \tag{6.3}$$

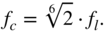

A spectral decomposition of the audio frequency range with octave filters is shown in Fig. 6.3. At the lower and upper cutoff frequency, an attenuation of $-3$ dB occurs. The upper octave band is represented as a highpass. A parallel connection of octave filters can be used for a spectral analysis of the audio signal in octave frequency bands. This decomposition is used for the signal power distribution across the octave bands. For the center frequencies of octave bands, we get $f_{c_i} = 2 \cdot f_{c_{i-1}}$. The weighting of octave bands with gain factors $A_i$ and summation of the weighted octave bands represents an octave equalizer for sound processing (see Fig. 6.4). For this application, the lower and upper cutoff frequencies need an attenuation of $-6$ dB, such that a sinusoid at the crossover frequency has a gain of 0 dB. The attenuation of $-6$ dB is achieved through a series connection of two octave filters with $-3$ dB attenuation.

Figure 6.3 Linear magnitude responses of octave filters and decomposition of an octave band by three one‐third octave filters.

- One‐third octave filters are bandpass filters (see Fig. 6.3) with cutoff frequencies given by

$$f_u = \sqrt[3]{2} \cdot f_l, \tag{6.4}$$

$$f_c = \sqrt[6]{2} \cdot f_l. \tag{6.5}$$

The attenuation at the lower and upper cutoff frequency is $-3$ dB. One‐third octave filters split an octave into three frequency bands (see Fig. 6.3).

Figure 6.4 Parallel connection of bandpass filters (BP) for octave/one‐third octave equalizers with gain factors ($A_i$ for octave or one‐third octave band).

- Shelving filters and peak filters are special weighting filters, which are based on lowpass/highpass/bandpass filters and a direct path (see Section 6.2.2). Unlike lowpass/highpass/bandpass filters, they have no stopband. They are used in a series connection of shelving and peak filters, as shown in Fig. 6.5. The lower frequency range is equalized by lowpass shelving filters and the higher frequencies are modified by highpass shelving filters. Both filter types allow the adjustment of the cutoff frequency and gain factor. For the mid‐frequency range, a series connection of peak filters with variable center frequency, bandwidth, and gain factor is used. These shelving and peak filters can also be applied for octave and one‐third octave equalizers in a series connection.

Figure 6.5 Series connection of shelving and peak filters (low‐frequency LF, high‐frequency HF).

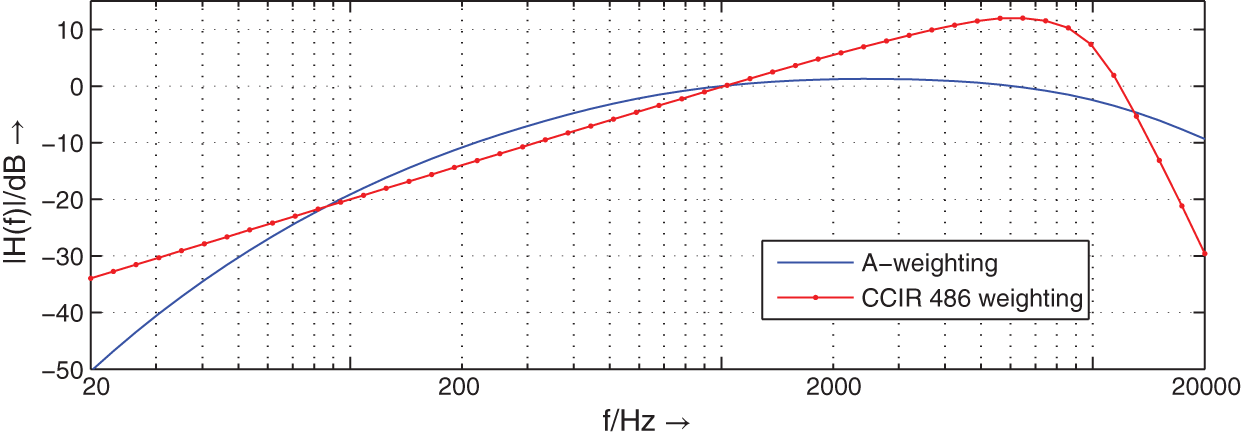

- Weighting filters are used for signal level and noise measurement applications. The signal from a device under test is first passed through the weighting filter and then a root‐mean‐square or peak value measurement is performed. The two most commonly used filters are the A‐weighting filter and the CCIR‐468 weighting filter (see Fig. 6.6). Both weighting filters take the increased sensitivity of human perception in the 1–6 kHz frequency range into account. The magnitude responses of both filters cross 0 dB at 1 kHz. The CCIR‐468 weighting filter has a gain of 12 dB at 6 kHz. A variant of the CCIR‐468 filter is the ITU‐ARM 2‐kHz weighting filter, which is a 5.6‐dB down‐tilted version of the CCIR‐468 filter and passes through 0 dB at 2 kHz.

Figure 6.6 Magnitude responses of weighting filters for root‐mean‐square and peak value measurements.
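The octave and one‐third octave relations of Eqs. (6.2)–(6.5) can be sketched in a few lines of Python. This is only an illustration: the function names and the starting frequency of 20 Hz are assumptions and not part of the text. Each band has an upper cutoff frequency of $2^{1/N} f_l$ and a center frequency equal to the geometric mean of its cutoff frequencies.

```python
import math

def fractional_octave_band(f_lower, fraction=1):
    """Return (f_l, f_c, f_u) for a 1/fraction-octave band starting at f_lower."""
    ratio = 2.0 ** (1.0 / fraction)           # f_u / f_l: 2 for octave, 2^(1/3) for one-third octave
    f_upper = ratio * f_lower
    f_center = math.sqrt(f_lower * f_upper)   # geometric mean of the cutoff frequencies
    return f_lower, f_center, f_upper

def band_edges(f_start, n_bands, fraction=1):
    """Chain n_bands adjacent bands, each starting at the previous upper cutoff."""
    bands = []
    f_l = f_start
    for _ in range(n_bands):
        band = fractional_octave_band(f_l, fraction)
        bands.append(band)
        f_l = band[2]
    return bands

# Hypothetical starting frequency of 20 Hz, chosen for illustration only.
for f_l, f_c, f_u in band_edges(20.0, 3, fraction=3):
    print(f"f_l = {f_l:7.2f} Hz, f_c = {f_c:7.2f} Hz, f_u = {f_u:7.2f} Hz")
```

Three adjacent one‐third octave bands produced this way cover exactly one octave, which matches the decomposition shown in Fig. 6.3.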

6.2 Recursive Audio Filters

6.2.1 Design

A certain filter response can be approximated by two kinds of transfer function. On the one hand, the combination of poles and zeros leads to a very low‐order transfer function $H(z)$ in fractional form, which solves the given approximation problem. The digital implementation of this transfer function needs recursive procedures owing to its poles. On the other hand, the approximation problem can be solved by placing only zeros in the $z$‐plane. This transfer function $H(z)$ has, in addition to its zeros, a corresponding number of poles at the origin of the $z$‐plane. The order of this transfer function, for the same approximation conditions, is substantially higher than for transfer functions consisting of poles and zeros. In view of an economical implementation of a filter algorithm in terms of complexity, recursive filters achieve shorter computing time owing to their lower order. For a sampling rate of 48 kHz, the algorithm has 20.83 μs of processing time available per sample. With the digital signal processors (DSPs) presently available, it is easily possible to implement recursive digital filters for audio applications within this sampling period using only one DSP. To design the typical audio equalizers, we will start with filter designs in the S‐domain. These filters will then be mapped to the Z‐domain by the bilinear transformation.

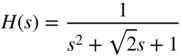

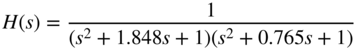

Lowpass/Highpass Filters. To limit the audio spectrum, lowpass and highpass filters with Butterworth response are used in analog mixers. They offer a monotonic passband and a monotonically decreasing stopband attenuation per octave ($n \cdot 6$ dB/oct.) that is determined by the filter order $n$. Lowpass filters of the second and fourth order are commonly used. The normalized and denormalized second‐order lowpass transfer functions are given by

$$H_{LP}(s) = \frac{1}{s^2 + \frac{1}{Q_\infty}s + 1} \quad\text{and}\quad H_{LP}(s) = \frac{\omega_c^2}{s^2 + \frac{\omega_c}{Q_\infty}s + \omega_c^2}, \tag{6.6}$$

where $\omega_c = 2\pi f_c$ is the cutoff frequency and $Q_\infty$ is the pole quality factor. The $Q$‐factor $Q_\infty$ of a Butterworth approximation is equal to $1/\sqrt{2}$. The denormalization of a transfer function is obtained by replacing the Laplace variable $s$ by $s/\omega_c$ in the normalized transfer function.

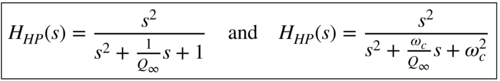

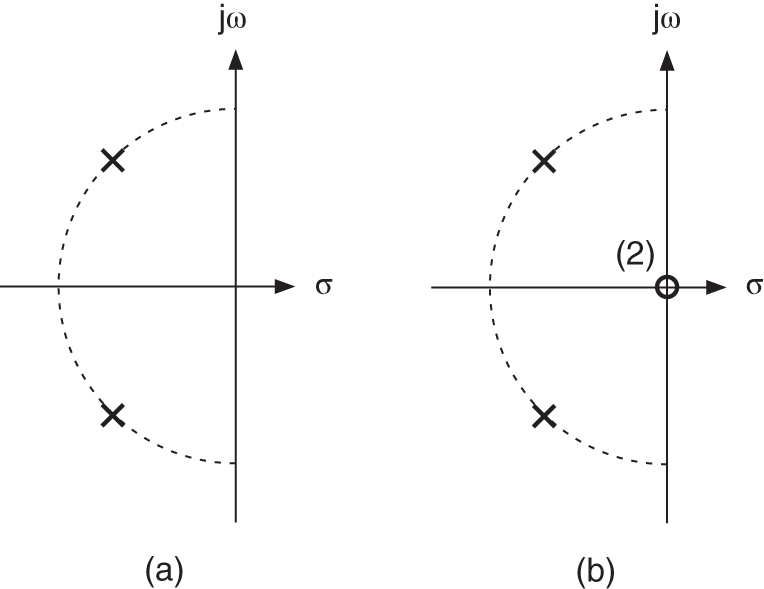

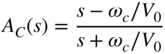

The corresponding second‐order highpass transfer functions

$$H_{HP}(s) = \frac{s^2}{s^2 + \frac{1}{Q_\infty}s + 1} \quad\text{and}\quad H_{HP}(s) = \frac{s^2}{s^2 + \frac{\omega_c}{Q_\infty}s + \omega_c^2} \tag{6.7}$$

are obtained by a lowpass to highpass transformation. Figure 6.7 shows the pole‐zero locations in the s‐plane. The amplitude frequency responses of a highpass filter with a 3‐dB cutoff frequency of 50 Hz and a lowpass filter with a 3‐dB cutoff frequency of 5000 Hz are shown in Fig. 6.8 for second‐ and fourth‐order filters.

Figure 6.7 Pole‐zero location for (a) second‐order lowpass and (b) second‐order highpass.

Figure 6.8 Frequency response of lowpass and highpass filters – highpass $f_c$ = 50 Hz (second/fourth order), lowpass $f_c$ = 5000 Hz (second/fourth order).

Table 6.1 summarizes the transfer functions of lowpass and highpass filters with Butterworth response.

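Table 6.1 Transfer functions of lowpass and highpass filters.

| Filter | Transfer function | Order |
|---|---|---|
| Lowpass | $H(s) = \dfrac{1}{s^2 + 1.414s + 1}$ | second order |
| Lowpass | $H(s) = \dfrac{1}{(s^2 + 1.848s + 1)(s^2 + 0.765s + 1)}$ | fourth order |
| Highpass | $H(s) = \dfrac{s^2}{s^2 + 1.414s + 1}$ | second order |
| Highpass | $H(s) = \dfrac{s^4}{(s^2 + 1.848s + 1)(s^2 + 0.765s + 1)}$ | fourth order |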

Bandpass and bandstop filters. The normalized and denormalized bandpass transfer functions of second order are

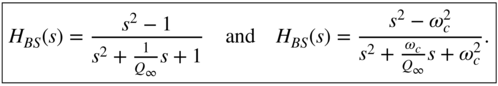

and the bandstop transfer functions are given by

The relative bandwidth can be expressed by the ![]() ‐factor

‐factor

which is the ratio of center frequency ![]() and the 3‐dB bandwidth given by

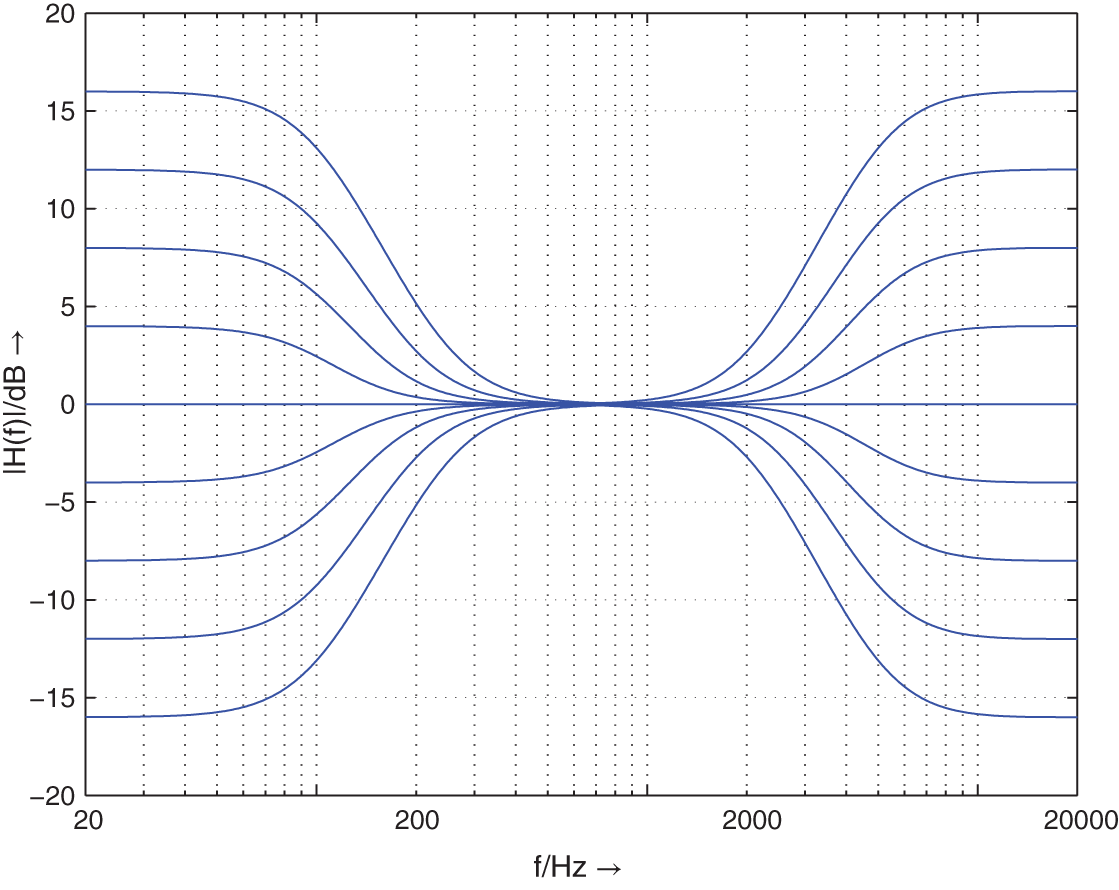

and the 3‐dB bandwidth given by ![]() . The magnitude responses of bandpass filters with constant relative bandwidth are shown in Fig. 6.2. Such kinds of filters are also called constant‐Q filters. The geometric symmetric behavior of the frequency response regarding the center frequency

. The magnitude responses of bandpass filters with constant relative bandwidth are shown in Fig. 6.2. Such kinds of filters are also called constant‐Q filters. The geometric symmetric behavior of the frequency response regarding the center frequency ![]() is clearly noticeable (symmetry regarding the center frequency using a logarithmic frequency axis).

is clearly noticeable (symmetry regarding the center frequency using a logarithmic frequency axis).
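The relation between the $Q$‐factor, the 3‐dB bandwidth, and the geometric symmetry can be checked numerically. The sketch below assumes the standard denormalized second‐order bandpass $H_{BP}(s) = \frac{(\omega_c/Q_\infty)s}{s^2 + (\omega_c/Q_\infty)s + \omega_c^2}$; the function names and the example values ($f_c$ = 1 kHz, $Q_\infty$ = 2) are illustrative assumptions.

```python
import math

def bandpass_response(f, fc, q):
    """Evaluate the denormalized second-order bandpass at s = j*2*pi*f."""
    s = 1j * 2.0 * math.pi * f
    wc = 2.0 * math.pi * fc
    return (wc / q) * s / (s * s + (wc / q) * s + wc * wc)

def band_edge_frequencies(fc, q):
    """Lower and upper 3-dB frequencies; they solve Q*(f/fc - fc/f) = -1 and +1."""
    half = 1.0 / (2.0 * q)
    root = math.sqrt(1.0 + half * half)
    return fc * (root - half), fc * (root + half)

fc, q = 1000.0, 2.0                      # illustrative values
fl, fu = band_edge_frequencies(fc, q)
print("f_l =", fl, "f_u =", fu)
print("bandwidth f_u - f_l =", fu - fl, " fc/Q =", fc / q)
print("geometric mean =", math.sqrt(fl * fu))
print("gain at f_l in dB =", 20.0 * math.log10(abs(bandpass_response(fl, fc, q))))
```

The bandwidth $f_u - f_l$ equals $f_c/Q_\infty$, and the center frequency is the geometric mean of the two edges, which is why the response looks symmetric on a logarithmic frequency axis.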

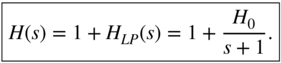

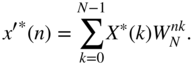

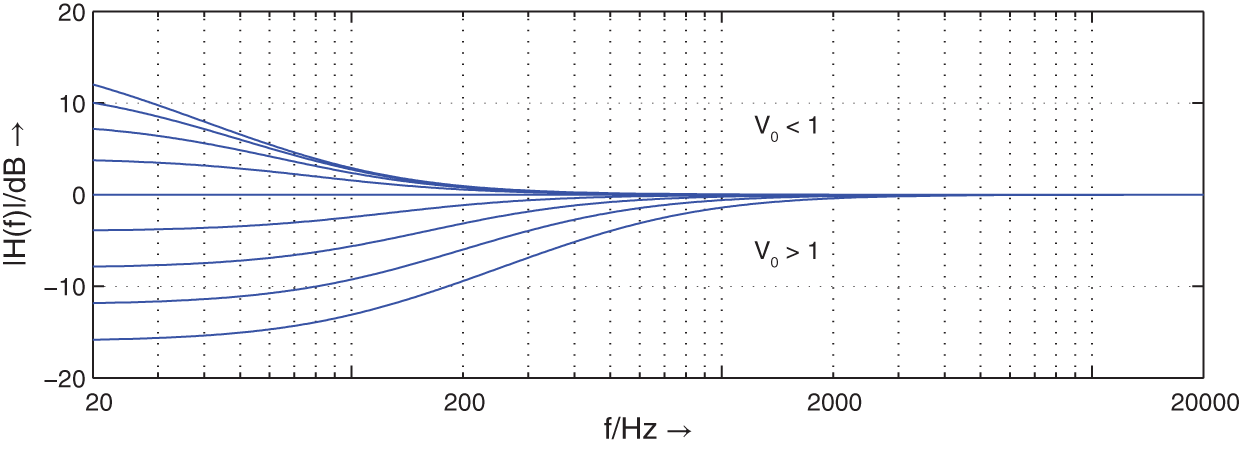

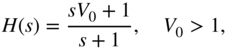

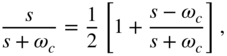

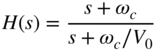

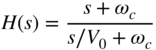

Shelving Filters. In addition to the purely band‐limiting filters like lowpass and highpass filters, shelving filters are used to perform weighting of certain frequencies. A simple approach for a first‐order lowpass shelving filter is given by

$$H(s) = 1 + H_{LP}(s) = 1 + \frac{H_0}{s+1}. \tag{6.11}$$

It consists of a first‐order lowpass filter with dc amplification of $H_0$ connected in parallel with an allpass system of transfer function equal to 1. Equation (6.11) can be written as

$$H(s) = \frac{s + (1 + H_0)}{s+1} = \frac{s+V_0}{s+1}, \tag{6.12}$$

where $V_0$ determines the amplification at $\omega = 0$. By changing the parameter $V_0$, any desired boost ($V_0 > 1$) and cut ($V_0 < 1$) level can be adjusted. Figure 6.9 shows the frequency responses for a fixed cutoff frequency $f_c$. For $V_0 < 1$, the cutoff frequency is dependent on $V_0$ and is moved toward lower frequencies.

Figure 6.9 Frequency response of transfer function (6.12) with varying $V_0$ and fixed cutoff frequency $f_c$.

To obtain a symmetrical frequency response with respect to the zero‐decibel line without changing the cutoff frequency, it is necessary to invert the transfer function (6.12) in the case of cut ($V_0 < 1$). This has the effect of swapping poles with zeros and leads to the transfer function

$$H(s) = \frac{s+1}{s+V_0} \tag{6.13}$$

for the cut case. Figure 6.10 shows the corresponding frequency responses for varying $V_0$.

Figure 6.10 Frequency responses of transfer function (6.13) with varying $V_0$ and fixed cutoff frequency $f_c$.

Finally, Figure 6.11 shows the locations of poles and zeros for both the boost and the cut cases. By moving zeros and poles on the negative $\sigma$‐axis, boost and cut can be adjusted.

Figure 6.11 Pole‐zero locations of a first‐order low‐frequency shelving filter.
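The symmetry obtained by inverting the boost transfer function can be illustrated numerically. The following sketch assumes the denormalized first‐order form $(s + V_0\omega_c)/(s + \omega_c)$ for boost and its inverse for cut, with $V_0 > 1$ denoting the amount of boost or cut; the function names and parameter values are hypothetical.

```python
import math

def lf_shelving_boost(f, fc, v0):
    """Denormalized first-order low-frequency shelving filter (boost) at s = j*2*pi*f."""
    s = 1j * 2.0 * math.pi * f
    wc = 2.0 * math.pi * fc
    return (s + v0 * wc) / (s + wc)

def lf_shelving_cut(f, fc, v0):
    """Cut filter obtained by inversion: poles and zeros of the boost filter are swapped."""
    return 1.0 / lf_shelving_boost(f, fc, v0)

def to_db(h):
    """Magnitude of a complex response in dB."""
    return 20.0 * math.log10(abs(h))

fc, v0 = 100.0, 4.0   # illustrative values: 100 Hz cutoff, about 12 dB of boost/cut
for f in (10.0, 100.0, 1000.0):
    boost_db = to_db(lf_shelving_boost(f, fc, v0))
    cut_db = to_db(lf_shelving_cut(f, fc, v0))
    print(f"{f:7.1f} Hz: boost {boost_db:+6.2f} dB, cut {cut_db:+6.2f} dB")
```

At every frequency the two curves are mirror images about the 0 dB line, which is the symmetry the inversion is meant to provide.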

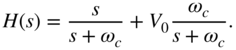

The equivalent shelving filter for high frequencies can be obtained by

which is a parallel connection of a first‐order highpass with gain ![]() and a system with transfer function equal to 1. In the case of boost, the transfer function can be written with

and a system with transfer function equal to 1. In the case of boost, the transfer function can be written with ![]() as

as

and for cut, we get

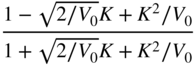

The parameter ![]() determines the value of the transfer function

determines the value of the transfer function ![]() at

at ![]() for high‐frequency shelving filters.

for high‐frequency shelving filters.

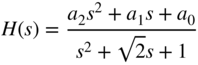

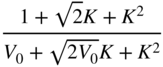

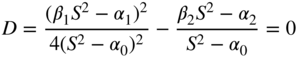

To increase the slope of the filter response in the transition band, a general second‐order transfer function

$$H(s) = \frac{a_2 s^2 + a_1 s + a_0}{s^2 + \sqrt{2}s + 1} \tag{6.17}$$

is considered, in which complex zeros are added to the complex poles. The calculation of poles leads to

$$s_{\infty 1/2} = \sqrt{\tfrac{1}{2}}\,(-1 \pm j). \tag{6.18}$$

If the complex zeros

$$s_{\circ 1/2} = \sqrt{\tfrac{V_0}{2}}\,(-1 \pm j) \tag{6.19}$$

are moved on a straight line with the help of the parameter $V_0$ (see Fig. 6.12), the transfer function

$$H(s) = \frac{s^2 + \sqrt{2V_0}\,s + V_0}{s^2 + \sqrt{2}s + 1} \tag{6.20}$$

of a second‐order low‐frequency shelving filter is obtained. The parameter $V_0$ determines the boost for low frequencies. The cut case can be achieved by inversion of Eq. (6.20).

Figure 6.12 Pole‐zero locations of a second‐order low‐frequency shelving filter.

A lowpass to highpass transformation of Eq. (6.20) provides the transfer function

$$H(s) = \frac{V_0 s^2 + \sqrt{2V_0}\,s + 1}{s^2 + \sqrt{2}s + 1} \tag{6.21}$$

of a second‐order high‐frequency shelving filter. The zeros

$$s_{\circ 1/2} = \sqrt{\tfrac{1}{2V_0}}\,(-1 \pm j) \tag{6.22}$$

are moved on a straight line toward the origin with increasing $V_0$ (see Fig. 6.13). The cut case is obtained by inverting the transfer function (6.21). Figure 6.14 shows the amplitude frequency response of a second‐order low‐frequency shelving filter with cutoff frequency 100 Hz and a second‐order high‐frequency shelving filter with cutoff frequency 5000 Hz (parameter $V_0$).

Figure 6.13 Pole‐zero locations of second‐order high‐frequency shelving filter.

Figure 6.14 Frequency responses of second‐order low‐/high‐frequency shelving filters – low‐frequency shelving filter $f_c$ = 100 Hz (parameter $V_0$), high‐frequency shelving filter $f_c$ = 5000 Hz (parameter $V_0$).

Peak Filter. Another equalizer used for boosting or cutting any desired frequency is the peak filter. A peak filter can be obtained by a parallel connection of a direct path and a bandpass according to

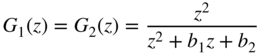

With the help of a second‐order bandpass transfer function

the transfer function

of a peak filter can be derived. It can be shown that the maximum of the amplitude frequency response at the center frequency is determined by the parameter ![]() . The relative bandwidth is fixed by the

. The relative bandwidth is fixed by the ![]() ‐factor. The geometrical symmetry of the frequency response relative to the center frequency remains constant for the transfer function of a peak filter given by Eq. (6.25). The poles and zeros lie on the unit circle. By adjusting the parameter

‐factor. The geometrical symmetry of the frequency response relative to the center frequency remains constant for the transfer function of a peak filter given by Eq. (6.25). The poles and zeros lie on the unit circle. By adjusting the parameter ![]() , the complex zeros are moved with respect to the complex poles. Figure 6.15 shows this for the boost and cut cases. With increasing

, the complex zeros are moved with respect to the complex poles. Figure 6.15 shows this for the boost and cut cases. With increasing ![]() ‐factor, the complex poles move toward the

‐factor, the complex poles move toward the ![]() ‐axis on the unit circle.

‐axis on the unit circle.

Figure 6.15 Pole‐zero locations of a second‐order peak filter.
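Two properties stated above, the gain $V_0$ at the center frequency and the geometric symmetry about it, can be verified with a short numerical sketch. It assumes the normalized peak filter form $H(s) = \frac{s^2 + (V_0/Q_\infty)s + 1}{s^2 + (1/Q_\infty)s + 1}$ with the center frequency normalized to $\omega = 1$; the function name and the values $V_0 = 4$, $Q_\infty = 1.25$ are illustrative.

```python
def peak_response(omega, v0, q):
    """Normalized second-order peak filter evaluated at s = j*omega (center at omega = 1)."""
    s = 1j * omega
    return (s * s + (v0 / q) * s + 1.0) / (s * s + s / q + 1.0)

v0, q = 4.0, 1.25   # illustrative boost factor and Q-factor
print("gain at center frequency:", abs(peak_response(1.0, v0, q)))
print("gain one octave above   :", abs(peak_response(2.0, v0, q)))
print("gain one octave below   :", abs(peak_response(0.5, v0, q)))
print("gain at dc              :", abs(peak_response(0.0, v0, q)))
```

The gains one octave above and one octave below the center frequency coincide, and the response returns to unity far away from the center frequency.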

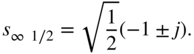

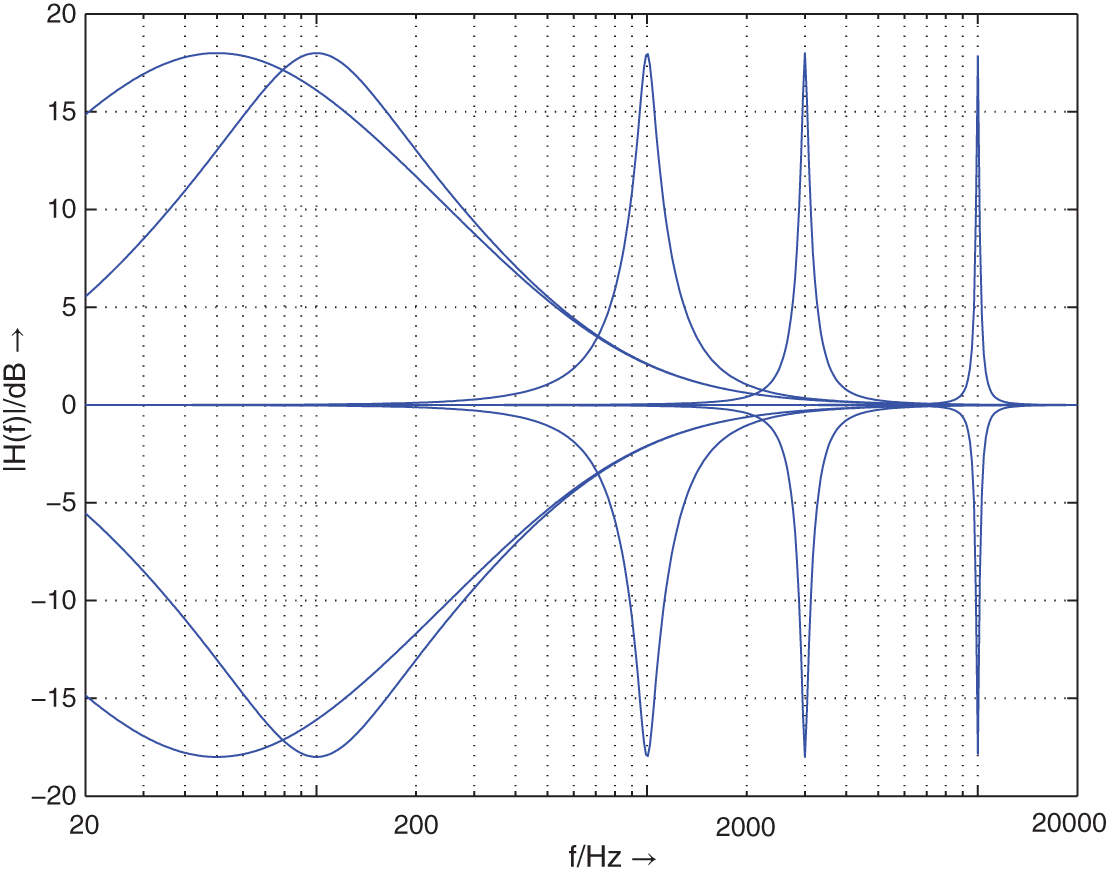

Figure 6.16 shows the amplitude frequency response of a peak filter by changing the parameter $V_0$ at a center frequency of 500 Hz and a $Q$‐factor of 1.25. Figure 6.17 shows the variation of the $Q$‐factor $Q_\infty$ at a center frequency of 500 Hz and a fixed boost/cut level. Finally, the variation of the center frequency with a fixed boost/cut level and a $Q$‐factor of 1.25 is shown in Fig. 6.18.

Figure 6.16 Frequency response of a peak filter – $f_c$ = 500 Hz, $Q_\infty$ = 1.25, cut parameter $V_0$.

Figure 6.17 Frequency responses of peak filters – $f_c$ = 500 Hz, fixed boost/cut level in dB, $Q_\infty$ = 0.707, 1.25, 2.5, 3, 5.

Figure 6.18 Frequency responses of peak filters – fixed boost/cut level in dB, $Q_\infty$ = 1.25, $f_c$ = 50, 200, 1000, 4000 Hz.

Mapping to Z‐domain. To implement a digital filter, the filter designed in the S‐domain with transfer function $H(s)$ is converted to the Z‐domain with the help of a suitable transformation to obtain the transfer function $H(z)$. The impulse‐invariant transformation is not suitable as it leads to overlapping effects if the transfer function $H(s)$ is not band limited to half the sampling rate. An independent mapping of poles and zeros from the S‐domain into poles and zeros in the Z‐domain is possible with the help of the bilinear transformation given by

$$s = \frac{2}{T}\,\frac{z-1}{z+1}. \tag{6.26}$$

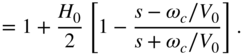

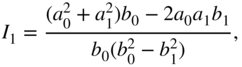

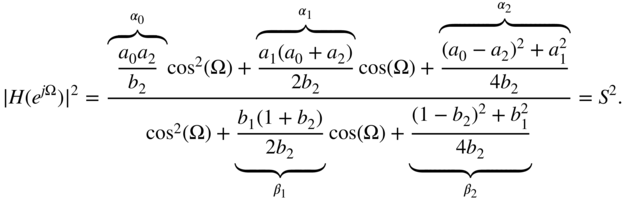

Tables 6.2, 6.3, 6.4, and 6.5 contain the coefficients of the second‐order transfer function

$$H(z) = \frac{a_0 + a_1 z^{-1} + a_2 z^{-2}}{1 + b_1 z^{-1} + b_2 z^{-2}}, \tag{6.27}$$

which are determined by the bilinear transformation and the auxiliary variable $K = \tan(\omega_c T/2)$ for all discussed audio filter types. Further filter designs of peak and shelving filters are discussed in [Moo83, Whi86, Sha92, Bri94, Orf96a, Dat97, Cla00, Väl16]. A method for reducing the warping effect of the bilinear transform is proposed in [Orf96b]. Strategies for time‐variant switching of audio filters can be found in [Rab88, Mou90, Zöl93, Din95, Väl98].
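As an illustration of the mapping, the sketch below applies the bilinear transformation to the normalized second‐order lowpass $1/(s^2 + s/Q_\infty + 1)$, substituting $s/\omega_c \rightarrow \frac{1}{K}\frac{1 - z^{-1}}{1 + z^{-1}}$ with $K = \tan(\omega_c T/2)$. The coefficient expressions were derived by hand for this example and are not copied from the tables; the function names are hypothetical.

```python
import cmath
import math

def lowpass_biquad(fc, fs, q=1.0 / math.sqrt(2.0)):
    """Coefficients (a0, a1, a2), (b1, b2) of a bilinear-transformed second-order lowpass."""
    k = math.tan(math.pi * fc / fs)          # K = tan(wc * T / 2)
    norm = k * k * q + k + q                 # common denominator after the substitution
    a = (k * k * q / norm, 2.0 * k * k * q / norm, k * k * q / norm)
    b = (2.0 * q * (k * k - 1.0) / norm, (k * k * q - k + q) / norm)
    return a, b

def biquad_response(a, b, f, fs):
    """Evaluate H(z) = (a0 + a1 z^-1 + a2 z^-2) / (1 + b1 z^-1 + b2 z^-2) on the unit circle."""
    z1 = cmath.exp(-2j * math.pi * f / fs)   # z^-1 at frequency f
    return (a[0] + a[1] * z1 + a[2] * z1 * z1) / (1.0 + b[0] * z1 + b[1] * z1 * z1)

fs, fc = 48000.0, 1000.0                     # illustrative values
a, b = lowpass_biquad(fc, fs)
for f in (0.0, fc, fs / 2.0):
    print(f"|H| at {f:8.1f} Hz = {abs(biquad_response(a, b, f, fs)):.6f}")
```

Because $K$ is computed with the tangent, the digital filter has exactly the analog gain $Q_\infty$ at the cutoff frequency (the 3‐dB point for the Butterworth value), while the analog response at infinite frequency is mapped to half the sampling rate.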

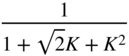

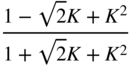

Table 6.2 Lowpass/highpass/bandpass filter design.

| Lowpass (second‐order) | ||||

|  |  |  |  |

| Highpass (second‐order) | ||||

|  |  |  |  |

| Bandpass (second‐order) | ||||

|  |  |  | |

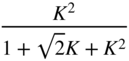

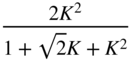

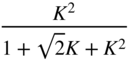

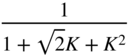

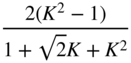

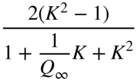

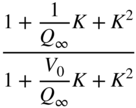

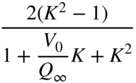

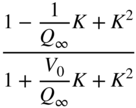

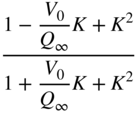

Table 6.3 Peak filter design with gain $G$ in dB.

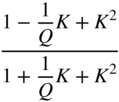

| Peak (boost $V_0 = 10^{G/20}$) | ||||

|  |  |  |  |

| Peak (cut $V_0 = 10^{-G/20}$) | ||||

|  |  |  |  |

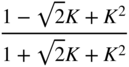

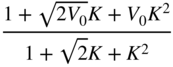

Table 6.4 Low‐frequency shelving filter design with gain $G$ in dB.

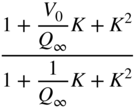

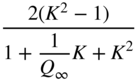

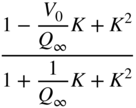

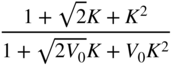

| Low‐frequency shelving (Boost $V_0 = 10^{G/20}$) | ||||

| | | | | |

|  |  |  |  |

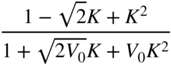

| Low‐frequency shelving (Cut $V_0 = 10^{-G/20}$) | ||||

| | | | | |

|  |  |  |  |

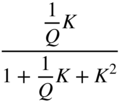

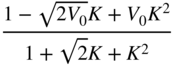

Table 6.5 High‐frequency shelving filter design with gain $G$ in dB.

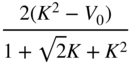

| High‐frequency shelving (Boost $V_0 = 10^{G/20}$) | ||||

| | | | | |

|  |  |  |  |

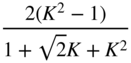

| High‐frequency shelving (Cut $V_0 = 10^{-G/20}$) | ||||

| | | | | |

|  |  |  |  |

6.2.2 Parametric Filter Structures

Parametric filter structures allow direct access to the parameters of the transfer function, like center/cutoff frequency, bandwidth, and gain, through the control of associated coefficients. To modify one of these parameters, it is therefore not necessary to compute a complete set of coefficients for a second‐order transfer function, but instead only one coefficient in the filter structure is calculated.

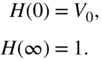

An independent control of gain, cutoff/center frequency, and bandwidth for shelving and peak filters is achieved by a feed‐forward (FF) structure for boost and a feed‐backward (FB) structure for cut, as shown in Fig. 6.19. The corresponding transfer functions are

$$G(z) = 1 + H_0 H(z) \quad \text{(FF, boost)}, \tag{6.28}$$

$$G(z) = \frac{1}{1 + H_0 H(z)} \quad \text{(FB, cut)}. \tag{6.29}$$

The boost/cut factor is $V_0 = 1 + H_0$. For digital filter implementations, it is necessary for the FB case that the inner transfer function be of the form $H(z) = z^{-1} H_1(z)$ to ensure causality. A parametric filter structure proposed by Harris [Har93] is based on the FF/FB technique, but the frequency response shows slight deviations near $z = 1$ and $z = -1$ from the desired one. This arises from the $z^{-1}$ in the FF/FB branch. Delay‐free loops inside filter computations can be solved by the methods presented in [Här98, Fon01, Fon03]. Higher‐order parametric filter designs have been introduced in [Kei04, Orf05, Hol06a, Hol06b, Hol06c, Hol06d]. It is possible to implement typical audio filters with only an FF structure. The complete decoupling of the control parameters is possible for the boost case, but there remains a coupling between bandwidth and gain factor for the cut case. In the following, two approaches for parametric audio filter structures based on an allpass decomposition of the transfer function will be discussed.

Figure 6.19 Filter structure for implementing boost and cut filters.

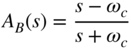

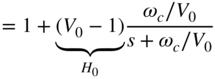

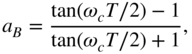

Regalia filter [Reg87]. The denormalized transfer function of a first‐order shelving filter is given by

with

A decomposition of Eq. (6.30) leads to

The lowpass and highpass transfer functions in Eq. (6.31) can be expressed by an allpass decomposition of the form

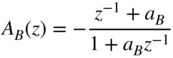

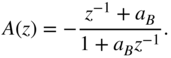

With the allpass transfer function

for boost, Eq. (6.30) can be rewritten as

The bilinear transformation ![]() leads to

leads to

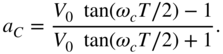

with

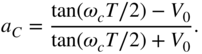

and the frequency parameter

A filter structure for direct implementation of Eq. (6.36) is presented in Fig. 6.20 a. Other possible structures can be seen in Fig. 6.20 b,c. For the cut case ![]() , the cutoff frequency of the filter moves towards lower frequencies [Reg87].

, the cutoff frequency of the filter moves towards lower frequencies [Reg87].

Figure 6.20 Filter structures by Regalia.

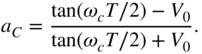

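To retain the cutoff frequency for the cut case [Zöl95], the denormalized transfer function of a first‐order shelving filter (cut)

$$H(s) = \frac{s + \omega_c}{s + \omega_c/V_0} \tag{6.39}$$

with the boundary conditions

$$H(0) = V_0, \qquad H(\infty) = 1$$

can be decomposed as follows:

$$H(s) = \frac{s}{s + \omega_c/V_0} + \frac{\omega_c}{s + \omega_c/V_0}. \tag{6.40}$$

With the allpass decompositions

$$\frac{s}{s + \omega_c/V_0} = \frac{1}{2}\left[1 + \frac{s - \omega_c/V_0}{s + \omega_c/V_0}\right], \tag{6.41}$$

$$\frac{\omega_c}{s + \omega_c/V_0} = \frac{V_0}{2}\left[1 - \frac{s - \omega_c/V_0}{s + \omega_c/V_0}\right] \tag{6.42}$$

and the allpass transfer function

$$A_C(s) = \frac{s - \omega_c/V_0}{s + \omega_c/V_0} \tag{6.43}$$

for cut, Eq. (6.39) can be rewritten as

$$H(s) = \frac{1}{2}\left[1 + A_C(s)\right] + \frac{V_0}{2}\left[1 - A_C(s)\right]. \tag{6.44}$$

The bilinear transformation leads to

$$H(z) = \frac{1}{2}\left[1 + A_C(z)\right] + \frac{V_0}{2}\left[1 - A_C(z)\right] \tag{6.45}$$

with

$$A_C(z) = -\frac{z^{-1} + a_C}{1 + a_C z^{-1}} \tag{6.46}$$

and the frequency parameter

$$a_C = \frac{\tan(\omega_c T/2) - V_0}{\tan(\omega_c T/2) + V_0}. \tag{6.47}$$

Owing to Eqs. (6.45) and (6.36), boost and cut can be implemented with the same filter structure (see Fig. 6.20). However, it has to be noted that the frequency parameter $a_C$, as in Eq. (6.47) for cut, depends on the cutoff frequency and gain.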
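The effect of the two frequency parameters can be checked with a small frequency‐domain sketch. It assumes the structure $H(z) = \frac{1}{2}[1 + A(z)] + \frac{V_0}{2}[1 - A(z)]$ with $A(z) = -\frac{z^{-1} + a}{1 + a z^{-1}}$, the boost parameter $a_B = \frac{K - 1}{K + 1}$, and the cut parameter $a_C = \frac{K - V_0}{K + V_0}$, where $K = \tan(\omega_c T/2)$. The function names and the flag `keep_cutoff_for_cut` are hypothetical and serve only this illustration.

```python
import cmath
import math

def allpass1(z_inv, a):
    """First-order allpass A(z) = -(z^-1 + a) / (1 + a z^-1)."""
    return -(z_inv + a) / (1.0 + a * z_inv)

def regalia_shelving(f, fc, fs, v0, keep_cutoff_for_cut=True):
    """Frequency response of the allpass-based first-order low-frequency shelving filter."""
    k = math.tan(math.pi * fc / fs)
    if v0 < 1.0 and keep_cutoff_for_cut:
        a = (k - v0) / (k + v0)       # a_C: gain-dependent parameter for cut
    else:
        a = (k - 1.0) / (k + 1.0)     # a_B: parameter for boost (and uncorrected cut)
    z_inv = cmath.exp(-2j * math.pi * f / fs)
    ap = allpass1(z_inv, a)
    return 0.5 * (1.0 + ap) + 0.5 * v0 * (1.0 - ap)

fs, fc = 48000.0, 1000.0              # illustrative values
boost = abs(regalia_shelving(fc, fc, fs, 4.0))
cut_corrected = abs(regalia_shelving(fc, fc, fs, 0.25))
cut_uncorrected = abs(regalia_shelving(fc, fc, fs, 0.25, keep_cutoff_for_cut=False))
print("boost * corrected cut   =", boost * cut_corrected)
print("boost * uncorrected cut =", boost * cut_uncorrected)
```

With $a_C$, the cut response is the exact mirror image of the boost response, so the product of the two magnitudes is one at every frequency. With $a_B$ used for cut, the product deviates from one around the cutoff frequency, which reflects the shifted cutoff described above.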

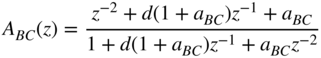

A second‐order peak filter is obtained by a lowpass to bandpass transformation according to

For an allpass, as given in Eqs. (6.37) and (6.46), the second‐order allpass is given by

with parameters (cut as in [Zöl95])

The center frequency $f_c$ is fixed by the parameter $d$, the bandwidth $f_b$ by the parameters $a_B$ and $a_C$, and gain $G$ by the parameter $V_0$.

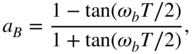

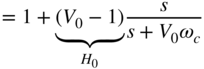

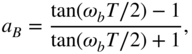

Simplified Allpass Decomposition [Zöl95]. The transfer function of a first‐order low‐frequency shelving filter can be a decomposed as

with

The transfer function (6.55) is composed of a direct branch and a lowpass filter. The first‐order lowpass filter is again implemented by an allpass decomposition. Applying the bilinear transformation to Eq. (6.55) leads to

with

For cut, the following decomposition can be derived:

The bilinear transformation applied to Eq. (6.63) again gives Eq. (6.59). The filter structure is identical for boost and cut. The frequency parameter ![]() for boost and

for boost and ![]() for cut can be calculated as

for cut can be calculated as

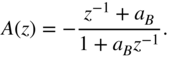

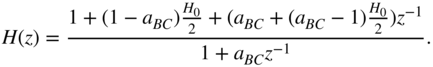

The transfer function of a first‐order low‐frequency shelving filter can be calculated as

With ![]() , the signal flow chart in Fig. 6.21 shows a first‐order lowpass filter and a first‐order low‐frequency shelving filter.

, the signal flow chart in Fig. 6.21 shows a first‐order lowpass filter and a first‐order low‐frequency shelving filter.

Figure 6.21 Low‐frequency shelving filter and first‐order lowpass filter.
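A sample‐by‐sample sketch of such an allpass‐based low‐frequency shelving filter is given below. It assumes the form $H(z) = 1 + \frac{H_0}{2}[1 + A_1(z)]$ with $A_1(z) = \frac{z^{-1} + a}{1 + a z^{-1}}$, $H_0 = V_0 - 1$, $V_0 = 10^{G/20}$, and the frequency parameter $a = \frac{K - 1}{K + 1}$ for boost or $a = \frac{K - V_0}{K + V_0}$ for cut, with $K = \tan(\omega_c T/2)$. The function name and test signal are hypothetical; this is not a transcription of the signal flow chart in Fig. 6.21.

```python
import math

def low_shelving(x, fc, fs, gain_db):
    """First-order low-frequency shelving filter built around one first-order allpass."""
    v0 = 10.0 ** (gain_db / 20.0)
    h0 = v0 - 1.0
    k = math.tan(math.pi * fc / fs)
    # Frequency parameter: gain-independent for boost, gain-dependent for cut.
    a = (k - 1.0) / (k + 1.0) if gain_db >= 0.0 else (k - v0) / (k + v0)
    state = 0.0                      # internal allpass state xh(n-1)
    y = []
    for sample in x:
        xh = sample - a * state      # recursive part of the allpass
        ap = a * xh + state          # allpass output, A1(z) = (z^-1 + a) / (1 + a z^-1)
        state = xh
        y.append(sample + 0.5 * h0 * (sample + ap))
    return y

# A constant (dc) input settles to the low-frequency gain V0.
out = low_shelving([1.0] * 4000, fc=1000.0, fs=48000.0, gain_db=12.0)
print("dc gain:", out[-1], " expected V0:", 10.0 ** (12.0 / 20.0))
```

Only one coefficient (`a`) depends on the cutoff frequency and only one (`h0`) on the gain, which is the practical appeal of the allpass decomposition.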

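The decomposition of a denormalized transfer function of a first‐order high‐frequency shelving filter can be given in the form of

$$H(s) = 1 + H_{HP}(s) = 1 + H_0\,\frac{s}{s + \omega_c}, \tag{6.68}$$

where

$$V_0 = H(s = \infty), \qquad H_0 = V_0 - 1.$$

The transfer function results by adding a highpass filter to a constant. Applying the bilinear transformation to Eq. (6.68) gives

$$H(z) = 1 + \frac{H_0}{2}\left[1 + A(z)\right] \tag{6.71}$$

with

$$A(z) = -\frac{z^{-1} + a_B}{1 + a_B z^{-1}}.$$

For cut, the decomposition can be given by

$$H(s) = \frac{s + \omega_c}{s/V_0 + \omega_c} = 1 + \frac{H_0}{2}\left[1 + \frac{s - V_0\,\omega_c}{s + V_0\,\omega_c}\right],$$

which in return results in Eq. (6.71) after a bilinear transformation. The boost and cut parameters can be calculated as

$$a_B = \frac{\tan(\omega_c T/2) - 1}{\tan(\omega_c T/2) + 1}, \qquad a_C = \frac{V_0\tan(\omega_c T/2) - 1}{V_0\tan(\omega_c T/2) + 1}.$$

The transfer function of a first‐order high‐frequency shelving filter can then be written as

$$H(z) = \frac{1 + (1 - a_{BC})\frac{H_0}{2} + \left(a_{BC} + (a_{BC} - 1)\frac{H_0}{2}\right) z^{-1}}{1 + a_{BC}\,z^{-1}}.$$

With $A_1(z) = -A(z)$, the signal flow chart in Fig. 6.22 shows a first‐order highpass filter and a high‐frequency shelving filter.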

Figure 6.22 First‐order high‐frequency shelving and highpass filters.

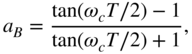

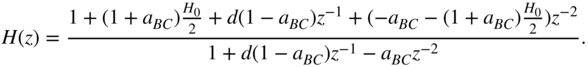

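The implementation of a second‐order peak filter can be carried out with a lowpass to bandpass transformation of a first‐order shelving filter. However, the addition of a second‐order bandpass filter to a constant branch also results in a peak filter. With the help of an allpass implementation of a bandpass filter, as given by

$$H(z) = \frac{1}{2}\left[1 - A_2(z)\right]$$

and

$$A_2(z) = \frac{-a_B + (d - d\,a_B)\,z^{-1} + z^{-2}}{1 + (d - d\,a_B)\,z^{-1} - a_B\,z^{-2}},$$

a second‐order peak filter can be expressed as

$$H(z) = 1 + \frac{H_0}{2}\left[1 - A_2(z)\right].$$

The bandwidth parameters $a_B$ and $a_C$ for boost and cut are given by

$$a_B = \frac{\tan(\omega_b T/2) - 1}{\tan(\omega_b T/2) + 1}, \qquad a_C = \frac{\tan(\omega_b T/2) - V_0}{\tan(\omega_b T/2) + V_0}.$$

The center frequency parameter $d$ and the coefficient $H_0$ are given by

$$d = -\cos(\Omega_c), \qquad H_0 = V_0 - 1,$$

with $\Omega_c = \omega_c T$. The transfer function of a second‐order peak filter results in

$$H(z) = \frac{1 + (1 + a_{BC})\frac{H_0}{2} + d(1 - a_{BC})\,z^{-1} + \left(-a_{BC} - (1 + a_{BC})\frac{H_0}{2}\right) z^{-2}}{1 + d(1 - a_{BC})\,z^{-1} - a_{BC}\,z^{-2}}.$$

The signal flow charts for a second‐order peak filter and a second‐order bandpass filter are shown in Fig. 6.23.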

Figure 6.23 Second‐order peak filter and bandpass filter.
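The allpass‐based peak filter can be sketched in the time domain as follows. The code assumes a second‐order allpass $A_2(z) = \frac{-a + d(1 - a)z^{-1} + z^{-2}}{1 + d(1 - a)z^{-1} - a z^{-2}}$ with $d = -\cos(2\pi f_c/f_S)$, a bandwidth parameter $a$ computed from $\tan(\pi f_b/f_S)$, and the output $y(n) = x(n) + \frac{H_0}{2}[x(n) - y_{AP}(n)]$. The function name and the test signal are hypothetical and only serve the illustration.

```python
import math

def peak_filter(x, fc, fb, fs, gain_db):
    """Second-order peak filter built around one second-order allpass."""
    v0 = 10.0 ** (gain_db / 20.0)
    h0 = v0 - 1.0
    kb = math.tan(math.pi * fb / fs)
    # Bandwidth parameter: gain-independent for boost, gain-dependent for cut.
    a = (kb - 1.0) / (kb + 1.0) if gain_db >= 0.0 else (kb - v0) / (kb + v0)
    d = -math.cos(2.0 * math.pi * fc / fs)   # center frequency parameter
    xh1 = xh2 = 0.0                          # allpass states xh(n-1), xh(n-2)
    y = []
    for sample in x:
        xh = sample - d * (1.0 - a) * xh1 + a * xh2    # recursive part
        ap = -a * xh + d * (1.0 - a) * xh1 + xh2       # allpass output
        xh2, xh1 = xh1, xh
        y.append(sample + 0.5 * h0 * (sample - ap))
    return y

fs, fc, fb = 48000.0, 1000.0, 100.0          # illustrative values
sine = [math.sin(2.0 * math.pi * fc * n / fs) for n in range(9600)]
boosted = peak_filter(sine, fc, fb, fs, gain_db=6.0)
print("steady-state amplitude:", max(abs(v) for v in boosted[-480:]))
print("expected V0           :", 10.0 ** (6.0 / 20.0))
```

The center frequency enters only through `d`, the bandwidth only through `a`, and the gain through `h0` (and, for cut, through `a`), which mirrors the parameter assignment described in the text.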

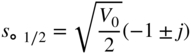

Figure 6.24 Low‐frequency first‐order shelving filter (gain $G$ in dB; $f_c$ = 20, 50, 100, 1000 Hz).

Figure 6.25 First‐order high‐frequency shelving filter (gain $G$ in dB; $f_c$ = 1, 3, 5, 10, 16 kHz).

Figure 6.26 Second‐order peak filter (gain $G$ in dB; $f_c$ = 50, 100, 1000, 3000, 10000 Hz; $f_b$ = 100 Hz).

The frequency responses for low‐frequency shelving, high‐frequency shelving, and peak filters are shown in Figs. 6.24, 6.25, and 6.26, respectively.

6.2.3 Quantization Effects

The limited word length of digital recursive filters leads to two different types of quantization error. The quantization of the coefficients of a digital filter results in linear distortion, which can be observed as a deviation from the ideal frequency response. The quantization of the signal inside a filter structure limits the maximum dynamic range and determines the noise behavior of the filter. Owing to rounding operations in a filter structure, round‐off noise is produced. Another effect of signal quantization is limit cycles. They can be classified as overflow limit cycles, small‐scale limit cycles, and limit cycles correlated with the input signal. Limit cycles are very disturbing owing to their narrow‐band (sinusoidal) nature. Overflow limit cycles can be avoided by suitable scaling of the input signal. The effects of the other errors mentioned above can be reduced by increasing the word lengths of the coefficients and the state variables of the filter structure.

The noise behavior and coefficient sensitivity of a filter structure depend on the topology and the cutoff frequency (position of the poles in the $z$‐domain) of the filter. Because common audio filters operate between 20 Hz and 20 kHz at a sampling rate of 48 kHz, the filter structures are subject to especially strict criteria with respect to error behavior. The frequency range for equalizers is between 20 Hz and 4–6 kHz because the human voice and many musical instruments have their formants in that frequency region. For given coefficient and signal word lengths (as in a digital signal processor), a filter structure with low round‐off noise can provide a suitable solution for audio applications. For this, the following second‐order filter structures are compared.

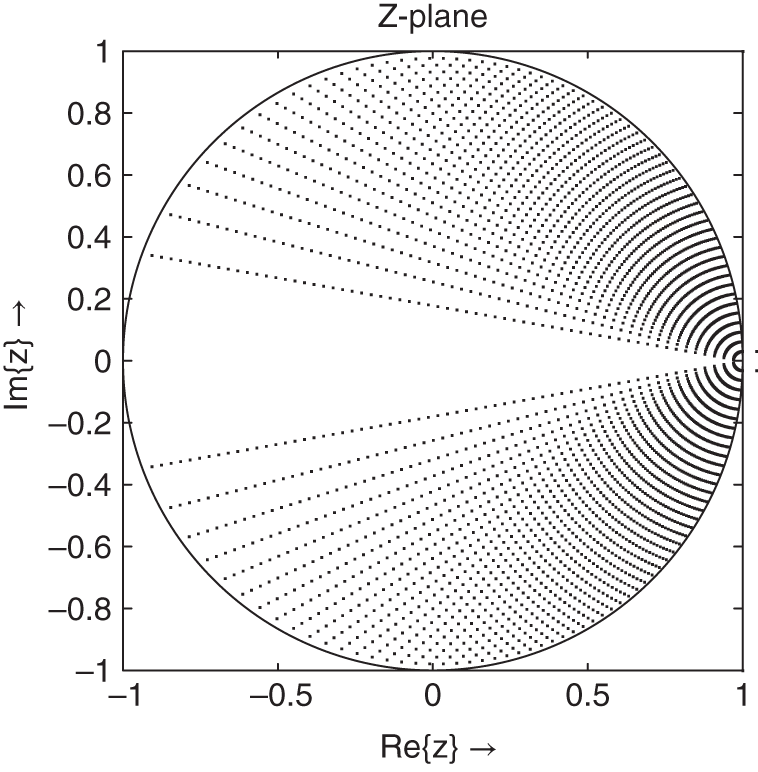

The basis of the following considerations is the relationship between the coefficient sensitivity and round‐off noise. This was first stated by Fettweis [Fet72]. By increasing the pole density in a certain region of the $z$‐plane, the coefficient sensitivity and the round‐off noise of the filter structure are reduced. Owing to these improvements, the coefficient word length as well as the signal word length can be reduced. Work in designing digital filters with minimum word length for coefficients and state variables was first carried out by Avenhaus [Ave71].

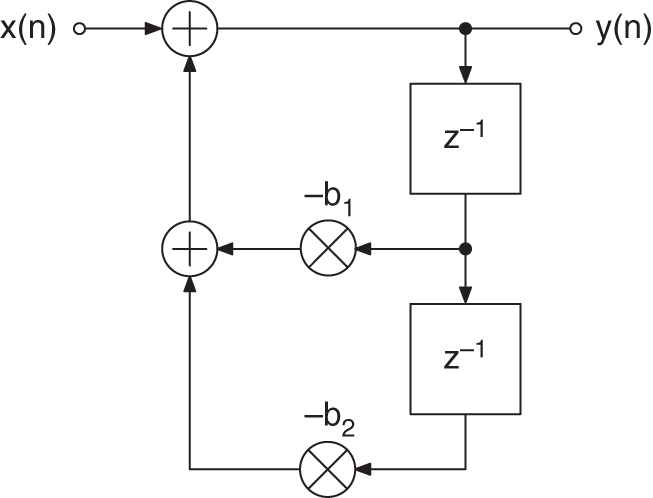

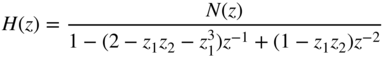

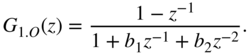

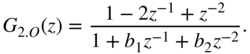

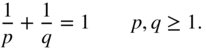

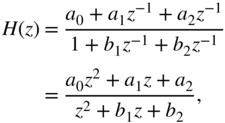

Typical audio filters like highpass/lowpass, peak/shelving filters can be described by the second‐order transfer function

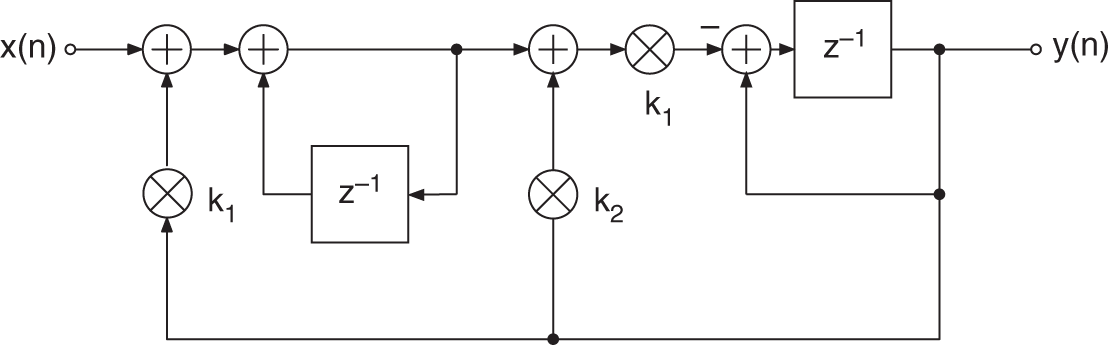

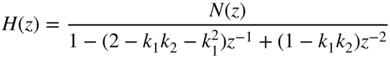

The recursive part of the difference equation, which can be derived from the transfer function (6.88), is considered more closely, because it plays a major role in affecting the error behavior. Owing to the quantization of the coefficients in the denominator in Eq. (6.88), the distribution of poles in the $z$‐plane is restricted (see Fig. 6.27 for 6‐bit quantization of coefficients). The pole distribution in the second quadrant of the $z$‐plane is the mirror image of the first quadrant. Figure 6.28 shows a block diagram of the recursive part. Another equivalent representation of the denominator is given by

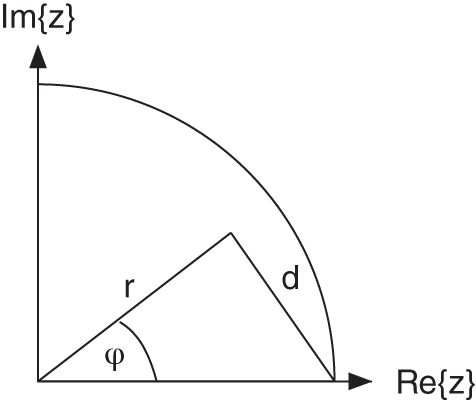

Here $r$ is the radius and $\varphi$ the corresponding phase of the complex poles. By quantizing these parameters, the pole distribution is altered in contrast to the case where $b_1$ and $b_2$ are quantized, as in Eq. (6.88).

Figure 6.27 Direct‐form structure – pole distribution (6‐bit quantization).

Figure 6.28 Direct‐form structure – block diagram of recursive part.

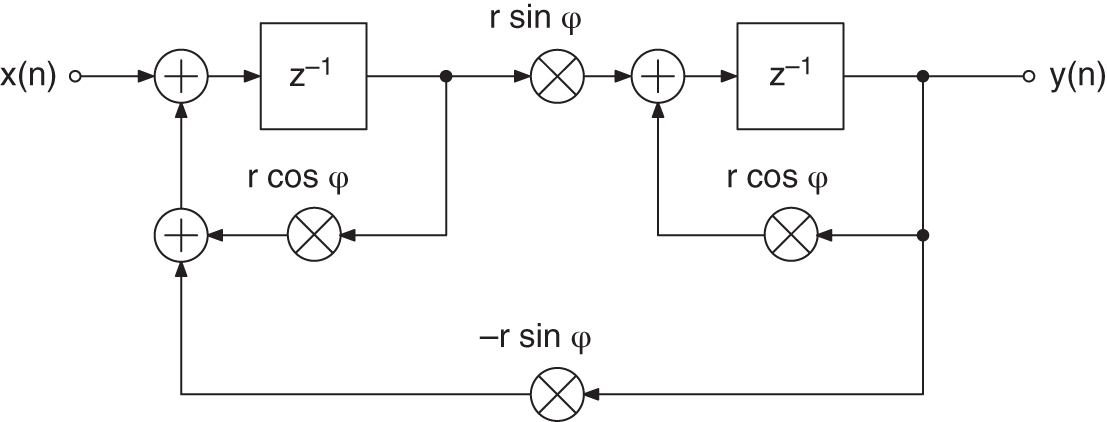

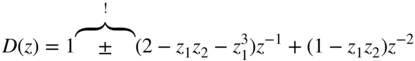

The state variable structure [Mul76, Bom85] is based on the approach by Gold and Rader [Gol67], which is given by

The possible pole locations are shown in Fig. 6.29 for 6‐bit quantization (the block diagram of the recursive part is shown in Fig. 6.30). Owing to the quantization of real and imaginary parts, a uniform grid of different pole locations results. In contrast to direct quantization of the coefficients $b_1$ and $b_2$ in the denominator, the quantization of the real and imaginary parts leads to an increase in the pole density at $z = 1$. The possible pole locations in the second quadrant in the $z$‐plane are the mirror images of those in the first quadrant.
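The difference in pole density near $z = 1$ can be checked numerically. The following is a minimal sketch, not the procedure used for the figures: it assumes a denominator of the form $1 + b_1 z^{-1} + b_2 z^{-2}$, a 6‐bit two's‐complement grid with $b_1 \in [-2, 2)$ and $b_2 \in [-1, 1)$ for the direct form, and a 6‐bit grid on $[-1, 1)$ for the real and imaginary parts in the Gold and Rader case. The function names and the neighborhood radius of 0.2 are illustrative choices.

```python
import numpy as np

BITS = 6  # word length including sign (grid layout below is an assumption)


def direct_form_poles(bits=BITS):
    """Upper-half-plane complex poles reachable when b1 and b2 are quantized."""
    k = np.arange(-2 ** (bits - 1), 2 ** (bits - 1))
    b1_grid = k * 2.0 ** -(bits - 2)   # assumed range [-2, 2)
    b2_grid = k * 2.0 ** -(bits - 1)   # assumed range [-1, 1)
    b1, b2 = np.meshgrid(b1_grid, b2_grid)
    imag_sq = b2 - b1 ** 2 / 4.0       # squared imaginary part of the pole
    is_complex = imag_sq > 0.0         # keep complex-conjugate pairs only
    # |pole|^2 = b2 < 1 on this grid, so all of these poles are stable.
    return -b1[is_complex] / 2.0 + 1j * np.sqrt(imag_sq[is_complex])


def gold_rader_poles(bits=BITS):
    """Upper-half-plane poles when real and imaginary parts are quantized."""
    k = np.arange(-2 ** (bits - 1), 2 ** (bits - 1))
    grid = k * 2.0 ** -(bits - 1)
    re, im = np.meshgrid(grid, grid)
    poles = re + 1j * im
    return poles[(im > 0.0) & (np.abs(poles) < 1.0)]


def count_near_one(poles, radius=0.2):
    """Number of realizable poles within `radius` of z = 1."""
    return int(np.sum(np.abs(poles - 1.0) < radius))


n_direct = count_near_one(direct_form_poles())
n_gold_rader = count_near_one(gold_rader_poles())
print("direct form:", n_direct, " Gold and Rader:", n_gold_rader)
```

Under these assumptions the uniform grid offers noticeably more realizable poles close to $z = 1$ than direct quantization of $b_1$ and $b_2$, which is the region that matters for low cutoff frequencies.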

Figure 6.29 Gold and Rader – pole distribution (6‐bit quantization).

Figure 6.30 Gold and Rader – block diagram of recursive part.

In [Kin72], a filter structure is suggested which has a pole distribution as shown in Fig. 6.31 (for the block diagram of the recursive part, see Fig. 6.32).

Figure 6.31 Kingsbury – pole distribution (6‐bit quantization).

Figure 6.32 Kingsbury – block diagram of recursive part.

The corresponding transfer function

shows that in this case, the coefficients $b_1$ and $b_2$ can be obtained by a linear combination of the quantized coefficients $k_1$ and $k_2$. The distance $d$ of the pole from the point $z = 1$ determines the coefficients

as illustrated in Fig. 6.33.

Figure 6.33 Geometric interpretation.

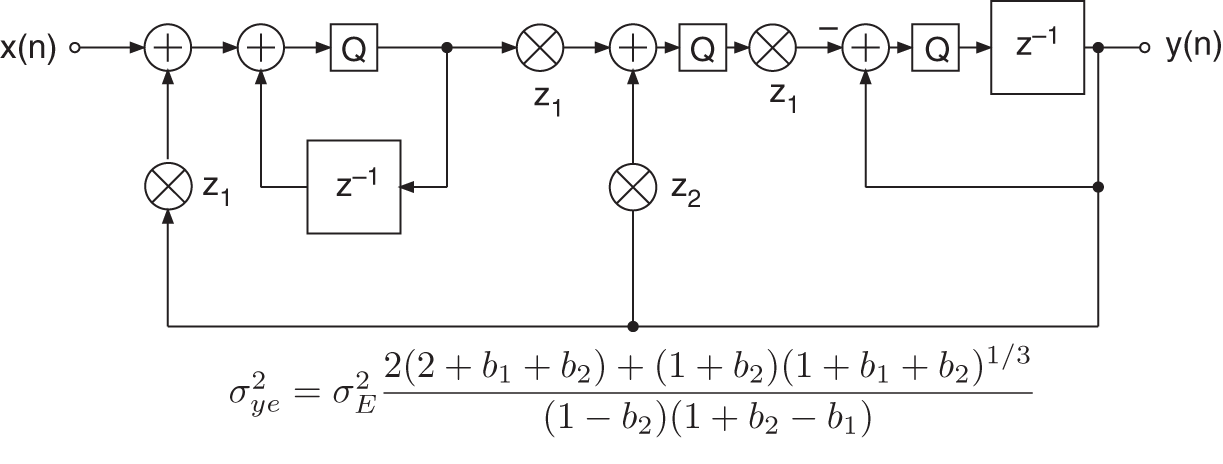

The filter structures under consideration show that by a suitable linear combination of quantized coefficients, any desired pole distribution can be obtained. An increase of the pole density at ![]() can be achieved by influencing the linear relationship between the coefficient

can be achieved by influencing the linear relationship between the coefficient ![]() and the distance

and the distance ![]() from

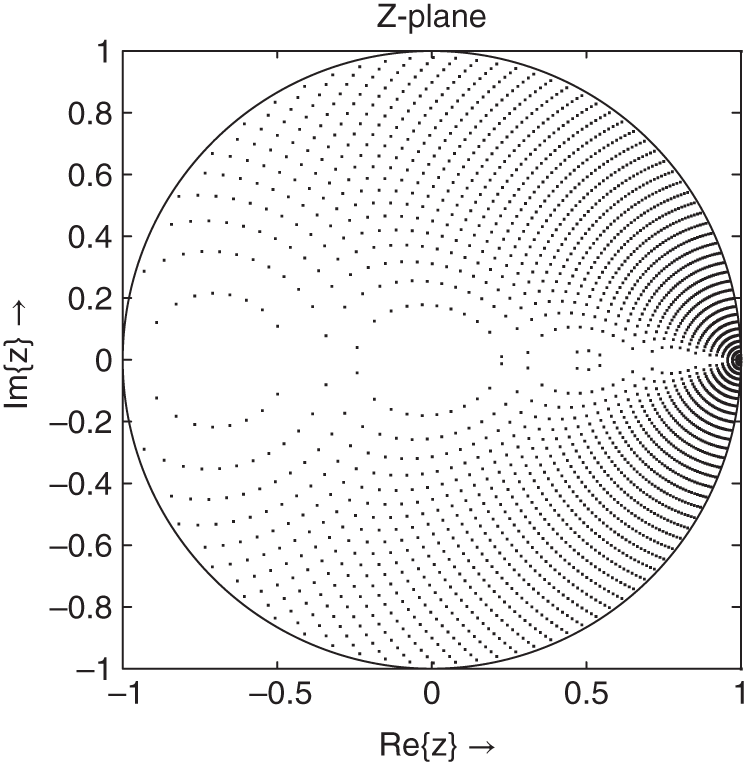

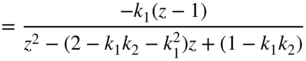

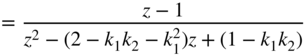

from ![]() [Zöl89, Zöl90]. The nonlinear relationship of the new coefficients gives the following structure with the transfer function

[Zöl89, Zöl90]. The nonlinear relationship of the new coefficients gives the following structure with the transfer function

and coefficients

with

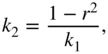

The pole distribution of this structure is shown in Fig. 6.34. The block diagram of the recursive part is illustrated in Fig. 6.35. An increase in the pole density at $z = 1$ in contrast to previous pole distributions is noticed. The pole distributions of the Kingsbury and Zölzer structures show a decrease in the pole density for higher frequencies. For the pole density, a symmetry with respect to the imaginary axis, as in the case of the direct‐form structure and the Gold and Rader structure, is not possible. However, changing the sign in the recursive part of the difference equation results in a mirror image of the pole density. The mirror image can be achieved through a change of sign in the denominator polynomial. The denominator polynomial

shows that the real part depends on the coefficient of $z^{-1}$.

Figure 6.34 Zölzer – pole distribution (6‐bit quantization).

Figure 6.35 Zölzer – block diagram of recursive part.

Analytical Comparison of Noise Behavior of Different Filter Structures

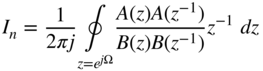

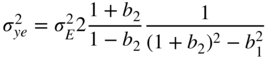

In this section, recursive filter structures are analyzed in terms of their noise behavior in fixed‐point arithmetic [Zöl89, Zöl90, Zöl94]. The block diagrams provide the basis of an analytical calculation of noise power owing to the quantization of state variables. First of all, the general case is considered in which quantization is performed after multiplication. For this purpose, the transfer function $G_i(z)$ of every multiplier output to the output of the filter structure is determined.

For this error analysis, it is assumed that the signal within the filter structure covers the whole dynamic range so that the quantization error $e_i(n)$ is not correlated with the signal. Consecutive quantization error samples are not correlated with each other so that a uniform power density spectrum results [Sri77]. It can also be assumed that different quantization errors $e_i(n)$ are uncorrelated within the filter structure. Owing to the uniform distribution of the quantization error, the variance can be given by

The quantization error is added at every point of quantization and is filtered by the corresponding transfer function $G_i(z)$ to the output of the filter. The variance of the output quantization noise (owing to the noise source $e_i(n)$) is given by

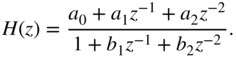

Exact solutions for the ring integral (6.100) can be found in [Jur64] for transfer functions up to the fourth order. With the $L_2$ norm of a periodic function

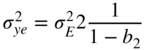

the superposition of the noise variances leads with Eq. (6.101) to the total output noise variance

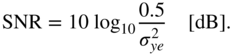

The signal‐to‐noise ratio (SNR) for a full‐range sinusoid can be written as

The ring integral

is given in [Jur64] for first‐order systems by

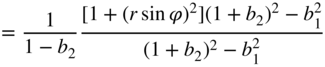

and for second‐order systems by

In the following, an analysis of the noise behavior for different recursive filter structures will be made. The noise transfer functions of individual recursive parts are responsible for noise shaping.
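The white‐noise model used in this analysis can be illustrated with a short simulation. The sketch below assumes rounding with step size $Q = 2^{-(w-1)}$ for a word length of $w = 16$ bits and the standard result that a uniformly distributed rounding error has variance $Q^2/12$; the helper `output_noise_variance` is a hypothetical name that multiplies this variance by the squared $L_2$ norm of a noise transfer function given by its impulse response.

```python
import numpy as np

rng = np.random.default_rng(0)
word_length = 16
q = 2.0 ** -(word_length - 1)          # quantization step for the range [-1, 1)

# Full-range test signal and its rounding error e(n).
x = rng.uniform(-1.0, 1.0, 200_000)
e = q * np.round(x / q) - x

var_measured = float(np.var(e))
var_model = q ** 2 / 12.0              # variance of a uniform error in [-Q/2, Q/2]


def output_noise_variance(g_impulse_response, var_e=var_model):
    """Noise variance at the filter output for one noise source.

    Uses sigma_E^2 times the sum of squared samples of the noise impulse
    response, which equals the squared L2 norm of the noise transfer function.
    """
    g = np.asarray(g_impulse_response, dtype=float)
    return var_e * float(np.sum(g ** 2))


print(var_measured / var_model)
```

For several uncorrelated noise sources, the individual output variances obtained in this way are simply added.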

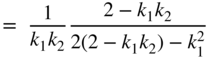

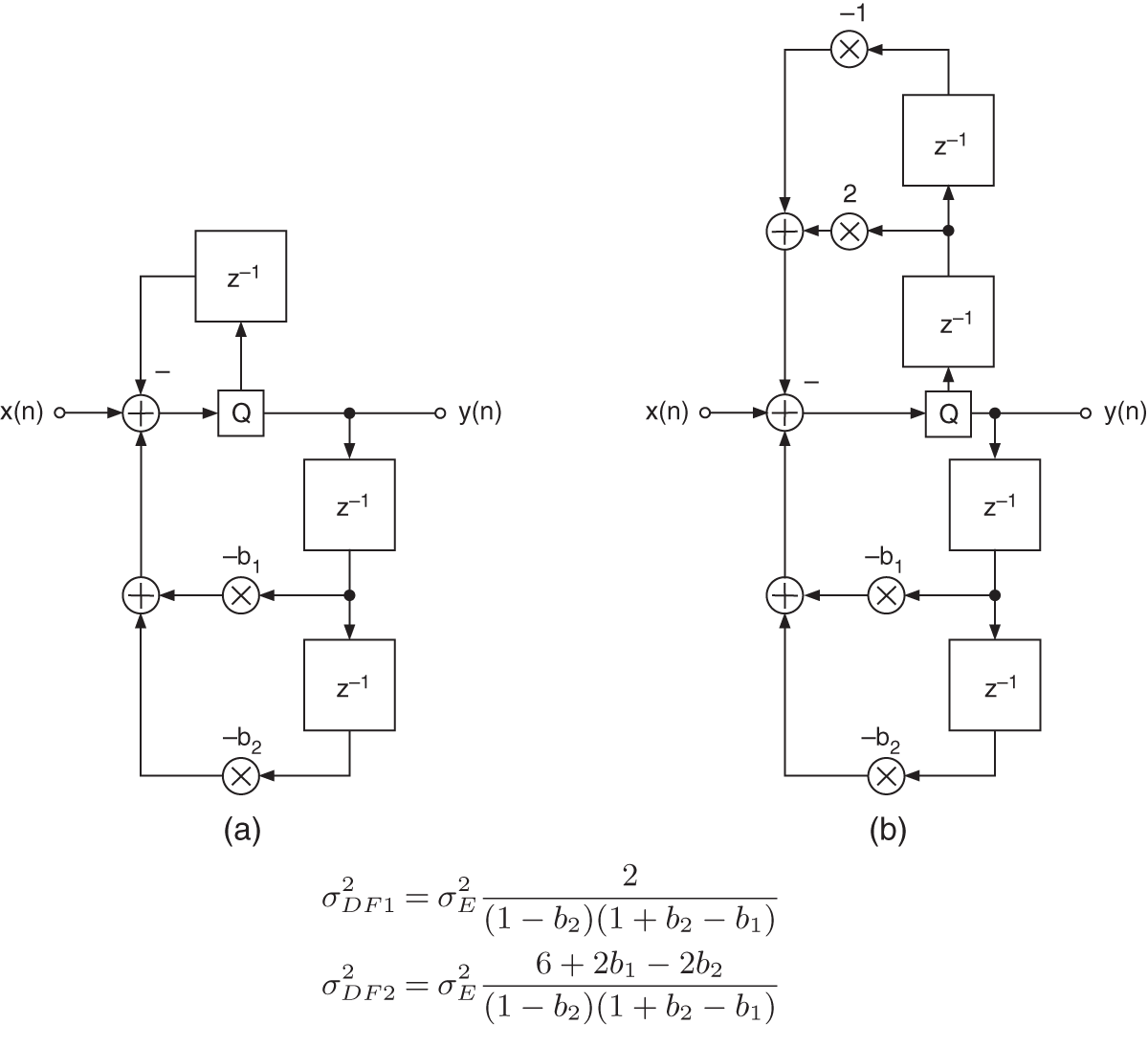

The error transfer function of a second‐order direct‐form structure (see Fig. 6.36) has only complex poles (see Table 6.6).

Figure 6.36 Direct form with additive error signal.

Table 6.6 Direct form – a) noise transfer function, b) quadratic $L_2$ norm, and c) output noise variance in the case of quantization after every multiplication.

| a) |  |

| b) |  |

| c) |  |

The implementation of poles near the unit circle leads to high amplification of the quantization error. The effect of the pole radius on the noise variance can be observed in the equation for output noise variance. As the pole radius $r$ approaches 1, the coefficient $b_2 = r^2$ also approaches 1, which leads to a huge increase in the output noise variance.
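The growth of the noise gain for poles near the unit circle can be reproduced numerically. The sketch below is an illustration under stated assumptions: the recursive part is taken as $1/(1 + b_1 z^{-1} + b_2 z^{-2})$, the coefficients come from a bilinear‐transform second‐order lowpass with $Q = 1/\sqrt{2}$ at a 48 kHz sampling rate, and the closed form used for comparison is the standard result for the energy of a second‐order all‐pole impulse response. All function names are hypothetical.

```python
import math


def lowpass_denominator(fc, fs=48000.0, q_factor=1.0 / math.sqrt(2.0)):
    """Denominator coefficients b1, b2 of a bilinear second-order lowpass."""
    k = math.tan(math.pi * fc / fs)
    norm = 1.0 + k / q_factor + k * k
    b1 = 2.0 * (k * k - 1.0) / norm
    b2 = (1.0 - k / q_factor + k * k) / norm
    return b1, b2


def l2_norm_sq_numeric(b1, b2, n_samples=50_000):
    """Sum of squared impulse-response samples of 1/(1 + b1 z^-1 + b2 z^-2)."""
    y1 = y2 = 0.0
    total = 0.0
    for n in range(n_samples):
        x = 1.0 if n == 0 else 0.0
        y = x - b1 * y1 - b2 * y2
        total += y * y
        y2, y1 = y1, y
    return total


def l2_norm_sq_closed_form(b1, b2):
    """Closed-form energy of the same impulse response (stable poles assumed)."""
    return (1.0 + b2) / ((1.0 - b2) * ((1.0 + b2) ** 2 - b1 ** 2))


for fc in (20.0, 100.0, 1000.0):
    b1, b2 = lowpass_denominator(fc)
    print(fc, b2, l2_norm_sq_closed_form(b1, b2))
```

The factor $1 - b_2$ in the denominator makes the dependence on the pole radius explicit: the closer $b_2$ is to 1, the larger the amplification of each quantization noise source.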

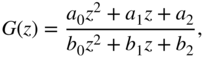

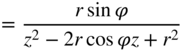

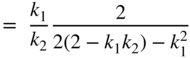

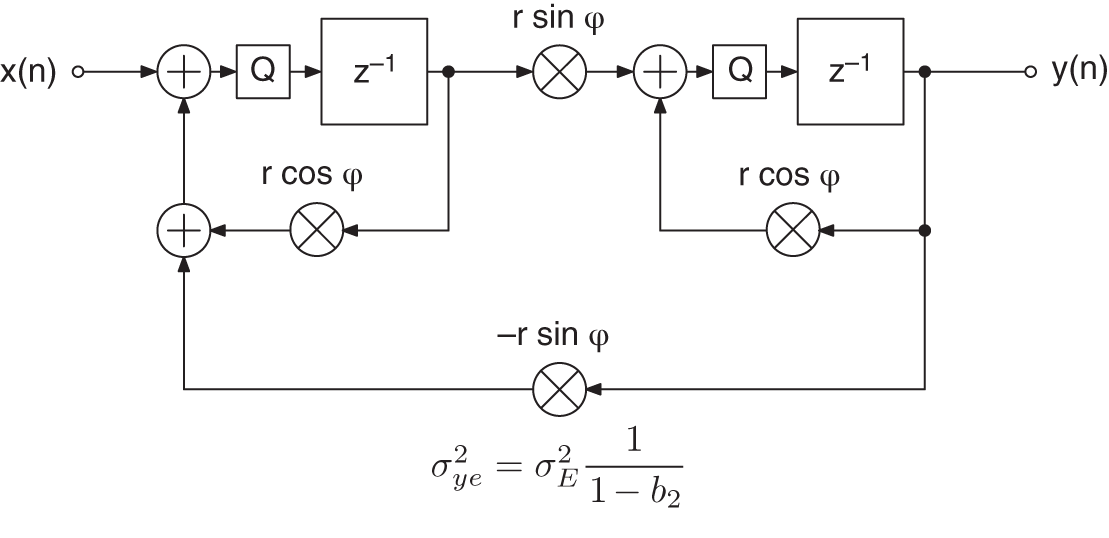

The Gold and Rader filter structure (Fig. 6.37) has an output noise variance that depends on the pole radius (see Table 6.7) and is independent of the pole phase. The latter fact is because of the uniform grid of the pole distribution. An additional zero on the real axis ($z = r\cos\varphi$) directly beneath the poles reduces the effect of the complex poles.

Figure 6.37 Gold and Rader structure with additive error signals.

Table 6.7 Gold and Rader – a) noise transfer function, b) quadratic $L_2$ norm, and c) output noise variance in the case of quantization after every multiplication.

| a) |  | |

| b) |  | |

| ||

| c) |  |

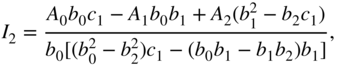

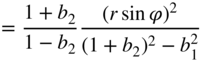

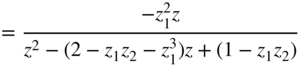

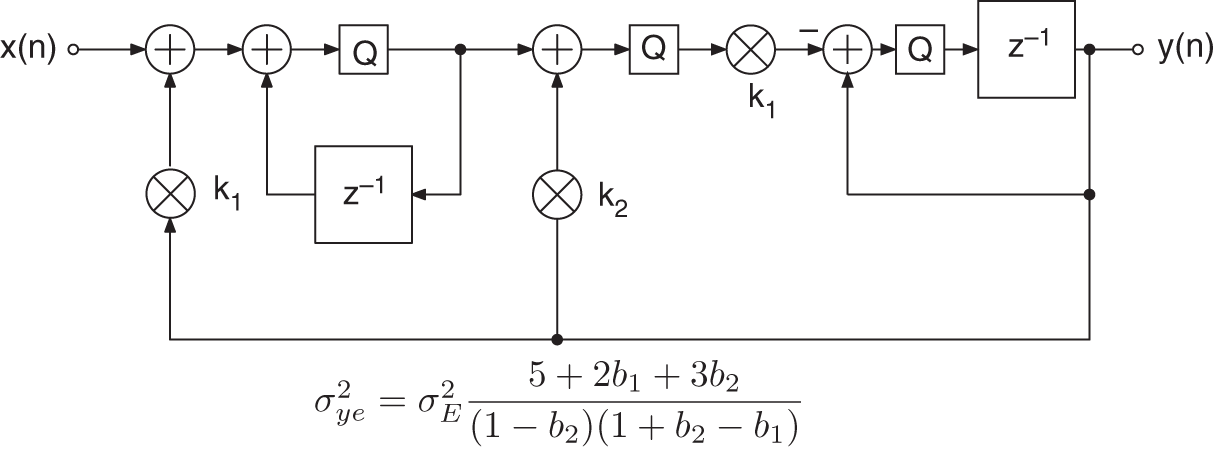

The Kingsbury filter (Fig. 6.38 and Table 6.8) and the Zölzer filter (Fig. 6.39 and Table 6.9), which is derived from it, show that the noise variance depends on the pole radius. The noise transfer functions have a zero at $z = 1$ in addition to the complex poles. This zero reduces the amplifying effect of the pole near the unit circle at $z = 1$.

Figure 6.38 Kingsbury structure with additive error signals.

Table 6.8 Kingsbury – a) noise transfer function, b) quadratic $L_2$ norm, and c) output noise variance in the case of quantization after every multiplication.

| a) |  | |

| ||

| ||

| b) |  | |

| ||

| ||

| c) |  | |

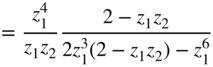

Figure 6.39 Zölzer structure with additive error signals.

Table 6.9 Zölzer – a) noise transfer function, b) quadratic $L_2$ norm, and c) output noise variance in the case of quantization after every multiplication.

| a) |  | |

| ||

| ||

| b) |  | |

| ||

| ||

| c) |  | |

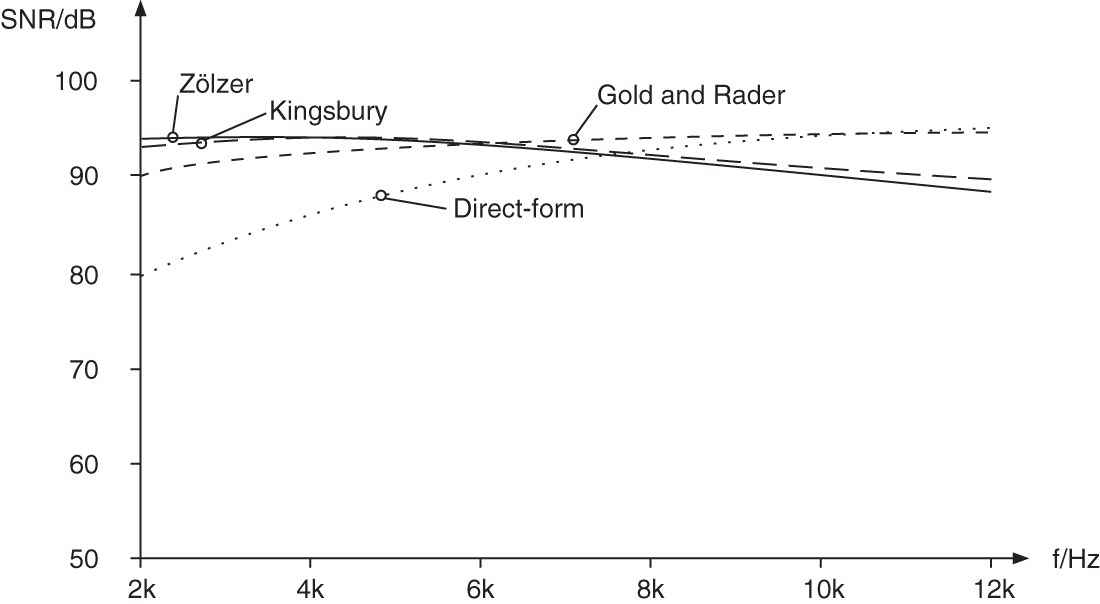

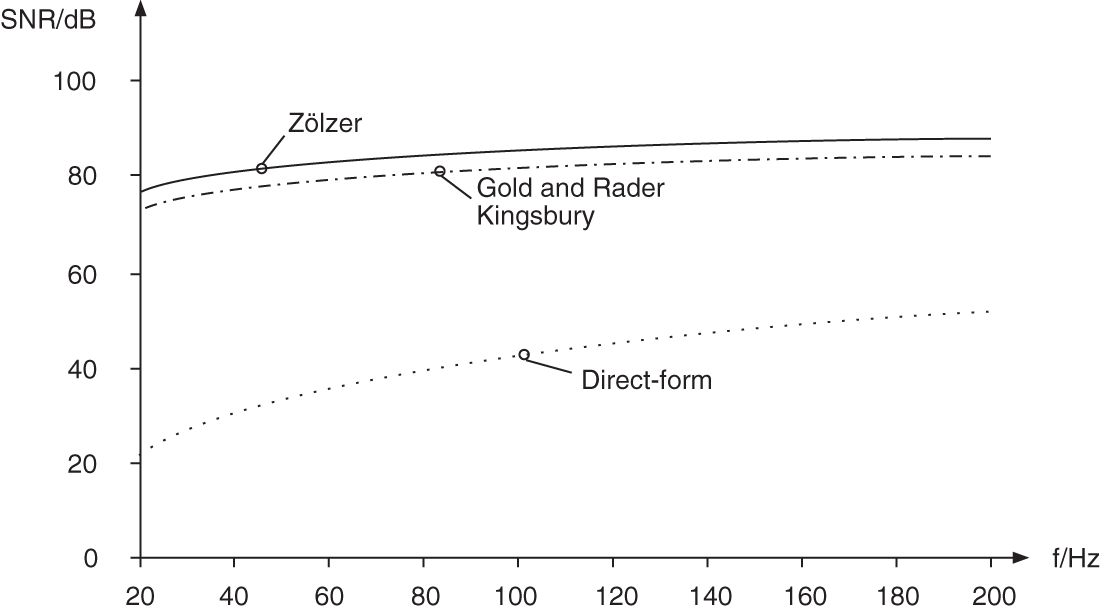

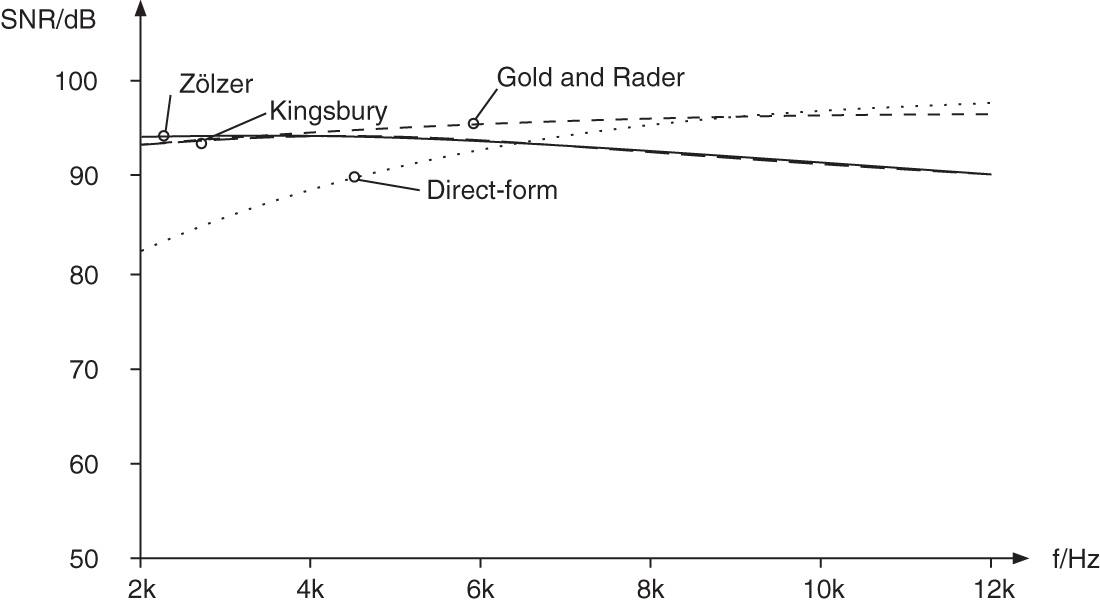

Figure 6.40 shows the SNR versus the cutoff frequency for the four filter structures presented above. The signals are quantized to 16 bit. Here, the poles move with increasing cutoff frequency on the curve characterized by the $Q$‐factor ![]() in the $z$‐plane. For very small cutoff frequencies, the Zölzer filter shows an improvement of 3 dB in terms of SNR compared with the Kingsbury filter and an improvement of 6 dB compared with the Gold and Rader filter. Up to 5 kHz, the Zölzer filter yields better results (see Fig. 6.41). From 6 kHz onwards, the reduction of pole density in this filter leads to a decrease in the SNR (see Fig. 6.41).

With regard to the implementation of these filters with digital signal processors, a quantization after every multiplication is not necessary. Quantization takes place when the accumulator has to be stored to memory. This can be seen in Figs. 6.42, 6.43, 6.44, and 6.45 by introducing quantizers where they really occur. The resulting output noise variances are also shown. The SNR is plotted versus the cutoff frequency in Figs. 6.46 and 6.47. In the case of the direct‐form and Gold and Rader filters, the SNR increases by 3 dB, whereas the output noise variance for the Kingsbury filter remains unchanged. The Kingsbury filter and the Gold and Rader filter exhibit similar results up to a frequency of 200 Hz (see Fig. 6.46). The Zölzer filter demonstrates an improvement of 3 dB compared with these structures. For frequencies of up to 2 kHz (see Fig. 6.47), it is seen that the increased pole density leads to an improvement of the SNR as well as a reduced effect owing to coefficient quantization.

Figure 6.40 SNR versus cutoff frequency – quantization of products ( Hz).

Figure 6.41 SNR versus cutoff frequency – quantization of products ( kHz).

Figure 6.42 Direct‐form filter – quantization after accumulator.

Figure 6.43 Gold and Rader filter – quantization after accumulator.

Figure 6.44 Kingsbury filter – quantization after accumulator.

Figure 6.45 Zölzer filter – quantization after accumulator.

Figure 6.46 SNR versus cutoff frequency – quantization after accumulator ( Hz).

Figure 6.47 SNR versus cutoff frequency – quantization after accumulator ( kHz).

Noise Shaping in Recursive Filters

The analysis of the noise transfer function of different structures shows that for three structures with low round‐off noise, a zero at $z = 1$ occurs in the transfer functions $G_i(z)$ of the error signals in addition to the complex poles. This zero near the poles reduces the amplifying effect of the pole. If it is now possible to introduce another zero into the noise transfer function, then the effect of the poles is compensated for to a larger extent. The procedure of feeding back the quantization error, as shown in Chapter 2, produces an additional zero in the noise transfer function [Tra77, Cha78, Abu79, Bar82, Zöl89]. The feedback of the quantization error is first demonstrated with the help of the direct‐form structure, as shown in Fig. 6.48. This generates a zero at $z = 1$ in the noise transfer function given by

Figure 6.48 Direct‐form with noise shaping.

The resulting variance $\sigma^2$ of the quantization error at the output of the filter is presented in Fig. 6.48. To produce two zeros at $z = 1$, the quantization error is fed back over two delays weighted with 2 and $-1$ (see Fig. 6.48b). The noise transfer function is, hence, given by

The SNR of the direct form is plotted versus the cutoff frequency in Fig. 6.49. Even a single zero significantly improves the SNR in the direct form. The coefficients $b_1$ and $b_2$ approach $-2$ and 1, respectively, with the decrease of the cutoff frequency. With this, the error is filtered with a second‐order highpass. The introduction of the additional zeros in the noise transfer function only affects the noise signal of the filter. The input signal is only affected by the transfer function $H(z)$. If the feedback coefficients are chosen equal to the coefficients $b_1$ and $b_2$ in the denominator polynomial, complex zeros are produced that are identical with the complex poles. The noise transfer function $G(z)$ is then reduced to unity. The choice of complex zeros directly at the location of the complex poles corresponds to double‐precision arithmetic.
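The effect of error feedback on the output noise can be tried out with a small simulation. This is a sketch under several assumptions, not the structure of Fig. 6.48 itself: only the recursive part $1/(1 + b_1 z^{-1} + b_2 z^{-2})$ is simulated, the accumulator is modeled in double precision, the quantizer rounds to a 16‐bit step, and the error is defined as quantizer output minus quantizer input so that feedback weights of $(1, 0)$ and $(2, -1)$ give the noise numerators $1 - z^{-1}$ and $(1 - z^{-1})^2$. The test signal and all names are illustrative.

```python
import math
import numpy as np


def lowpass_denominator(fc, fs=48000.0, q_factor=1.0 / math.sqrt(2.0)):
    """Denominator coefficients b1, b2 of a bilinear second-order lowpass."""
    k = math.tan(math.pi * fc / fs)
    norm = 1.0 + k / q_factor + k * k
    return 2.0 * (k * k - 1.0) / norm, (1.0 - k / q_factor + k * k) / norm


def recursive_part(x, b1, b2, step=None, feedback=(0.0, 0.0)):
    """Direct-form recursion with optional quantizer and error feedback.

    v(n) = x(n) - b1*y(n-1) - b2*y(n-2) - c1*e(n-1) - c2*e(n-2)
    y(n) = Q[v(n)],  e(n) = y(n) - v(n)
    With step=None no quantization takes place (reference output).
    """
    c1, c2 = feedback
    y1 = y2 = e1 = e2 = 0.0
    y = np.empty(len(x))
    for n, xn in enumerate(x):
        v = xn - b1 * y1 - b2 * y2 - c1 * e1 - c2 * e2
        yq = v if step is None else step * math.floor(v / step + 0.5)
        e = yq - v
        y[n] = yq
        y2, y1 = y1, yq
        e2, e1 = e1, e
    return y


fs = 48000.0
b1, b2 = lowpass_denominator(100.0, fs)            # low cutoff: poles near z = 1
n = np.arange(20_000)
x = 1e-3 * np.sin(2.0 * np.pi * 997.0 * n / fs)    # arbitrary test tone
step = 2.0 ** -15                                   # 16-bit quantization step
y_ref = recursive_part(x, b1, b2)


def noise_power(feedback):
    y = recursive_part(x, b1, b2, step, feedback)
    return float(np.mean((y - y_ref) ** 2))


p_none = noise_power((0.0, 0.0))    # no noise shaping
p_one = noise_power((1.0, 0.0))     # one zero at z = 1
p_two = noise_power((2.0, -1.0))    # two zeros at z = 1
print(p_none, p_one, p_two)
```

In this setup each additional zero at $z = 1$ lowers the measured noise power, in line with the behavior described for low cutoff frequencies.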

Figure 6.49 SNR – Noise shaping in direct‐form filter structures.

Figure 6.50 Gold and Rader filter with noise shaping.

In [Abu79], an improvement of the noise behavior for the direct form in any desired location of the $z$‐plane is achieved by placing additional simple‐to‐implement complex zeros near the poles. For implementing filter algorithms with digital signal processors, these kinds of suboptimal zeros are easily realized. Because the Gold and Rader, Kingsbury, and Zölzer filter structures already have zeros in their respective noise transfer functions, it is sufficient to use a simple feedback for the quantization error. By virtue of this extension, the block diagrams in Figs. 6.50, 6.51, and 6.52 are obtained.

Figure 6.51 Kingsbury filter with noise shaping.

Figure 6.52 Zölzer filter with noise shaping.

The effect of noise shaping on SNR is shown in Figs. 6.53 and 6.54. The almost ideal noise behavior of all filter structures for 16‐bit quantization and very small cutoff frequencies can be observed. The effect of this noise shaping for increasing cutoff frequencies is shown in Fig. 6.54. The compensating effect of the two zeros at $z = 1$ is reduced.

Figure 6.53 SNR – noise shaping (20–200 Hz).

Figure 6.54 SNR – noise shaping (200 Hz–12 kHz).

Scaling

In a fixed‐point implementation of a digital filter, a transfer function from the input of the filter to a junction within the filter has to be determined, as well as the transfer function from the input to the output. By scaling the input signal, it has to be guaranteed that the signals remain within the number range at each junction and at the output.

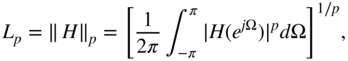

To calculate scaling coefficients, different criteria can be used. The $L_p$ norm is defined as

and an expression for the $L_\infty$ norm follows for $p \to \infty$:

The $L_\infty$ norm represents the maximum of the amplitude frequency response. In general, the modulus of the output is

with

For the $L_1$, $L_2$, and $L_\infty$ norms, the explanations in Table 6.10 can be used.

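Table 6.10 Commonly used scaling.

| p | q | Given quantity and resulting scaling |
|---|---|---|
| 1 | $\infty$ | given max. value of input spectrum; scaling w.r.t. the $L_1$ norm of $H(e^{j\Omega})$ |
| $\infty$ | 1 | given $L_1$ norm of the input spectrum; scaling w.r.t. the $L_\infty$ norm of $H(e^{j\Omega})$ |
| 2 | 2 | given $L_2$ norm of the input spectrum; scaling w.r.t. the $L_2$ norm of $H(e^{j\Omega})$ |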
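The norms used for scaling can be approximated numerically on a frequency grid. The sketch below assumes the common definition $\|H\|_p = \left[\frac{1}{2\pi}\int_{-\pi}^{\pi} |H(e^{j\Omega})|^p \, d\Omega\right]^{1/p}$, replaces the integral by a mean over a uniform grid, and takes the grid maximum for $p = \infty$. The transfer function is written with numerator coefficients `a` and denominator coefficients `b`; the function names and the example filter are hypothetical.

```python
import numpy as np


def frequency_response(a, b, n_points=4096):
    """H(e^{jW}) on a uniform grid over [0, 2*pi); a = numerator, b = denominator."""
    omega = 2.0 * np.pi * np.arange(n_points) / n_points

    def poly(coeffs):
        c = np.asarray(coeffs, dtype=float)
        return np.exp(-1j * np.outer(omega, np.arange(len(c)))) @ c

    return poly(a) / poly(b)


def lp_norm(h, p):
    """Grid approximation of the L_p norm of a frequency response."""
    mag = np.abs(h)
    if np.isinf(p):
        return float(np.max(mag))
    return float(np.mean(mag ** p) ** (1.0 / p))


# Example: first-order recursive system H(z) = 1 / (1 - 0.5 z^-1).
h = frequency_response([1.0], [1.0, -0.5])
l1 = lp_norm(h, 1)
l2 = lp_norm(h, 2)
linf = lp_norm(h, np.inf)

# Scaling with respect to the L_inf norm keeps a unit-amplitude sinusoid
# within the number range at this node.
scale = 1.0 / linf
print(l1, l2, linf, scale)
```

For a filter with several internal junctions, the same computation would be repeated for each transfer function from the input to a junction, and the most restrictive result would determine the input scaling.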

With

the $L_\infty$ norm is given by

For a sinusoidal input signal of amplitude one, we get ![]() . For ![]() to be valid, the scaling factor must be chosen to be

The scaling of the input signal is carried out with the maximum of the amplitude frequency response with the goal that for ![]() , ![]() . As a scaling coefficient for the input signal, the highest scaling factor ![]() is chosen. For determining the maximum of the transfer function

of a second‐order system

the maximum value can be calculated as

With ![]() , it follows that

The solution of Eq. (6.124) leads to ![]() , which must be real (![]() ) for the maximum/minimum to occur at a real frequency. For a single solution (repeated roots) of the above quadratic equation, the discriminant must be ![]() (![]() ). It follows that

and

The solution of Eq. (6.126) gives two solutions for ![]() . The solution with the larger value is chosen. If the discriminant ![]() is not greater than zero, the maximum lies at ![]() (![]() ) or ![]() (![]() ), as given by

or

Limit Cycles

Limit cycles are periodic processes in a filter which can be measured as sinusoidal signals. They arise owing to the quantization of state variables. The different types of limit cycle and the methods necessary to prevent them are briefly listed below:

- overflow limit cycles
  - saturation curve
  - scaling
- limit cycles for vanishing input
  - noise shaping
  - dithering
- limit cycles correlated with the input signal
  - noise shaping
  - dithering

6.3 Non‐recursive Audio Filters

For implementing linear phase audio filters, non‐recursive filters are used. The basis of an efficient implementation is the fast convolution

where the convolution in the time domain is performed by transforming the signal and the impulse response into the frequency domain, multiplication of the corresponding Fourier transforms, and inverse Fourier transform of the product into the time domain signal (see Fig. 6.55). The transform is carried out by a discrete Fourier transform of length $N$, such that $N \ge N_1 + N_2 - 1$ is valid and time‐domain aliasing is avoided.
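A minimal sketch of fast convolution with NumPy's FFT routines is given below. It assumes real input sequences and rounds the transform length up to the next power of two, which is a common implementation choice rather than a requirement; the function name `fast_convolve` is hypothetical.

```python
import numpy as np


def fast_convolve(x, h):
    """Linear convolution of two real sequences via the frequency domain."""
    n_out = len(x) + len(h) - 1                 # length of the linear convolution
    n_fft = 1 << (n_out - 1).bit_length()       # power of two with n_fft >= n_out
    # rfft zero-pads both sequences to n_fft, so the circular convolution
    # computed by the DFT product contains no time-domain aliasing.
    spectrum = np.fft.rfft(x, n_fft) * np.fft.rfft(h, n_fft)
    return np.fft.irfft(spectrum, n_fft)[:n_out]


rng = np.random.default_rng(1)
x = rng.standard_normal(50)
h = rng.standard_normal(17)
y = fast_convolve(x, h)
print(np.max(np.abs(y - np.convolve(x, h))))
```

If the transform length were chosen shorter than the length of the linear convolution, the tail of the result would wrap around and add to its beginning.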

Figure 6.55 Fast convolution of signal $x(n)$ of length $N_1$ and impulse response $h(n)$ of length $N_2$ delivers the convolution result $y(n)$ of length $N_1 + N_2 - 1$.

First we will discuss the basics. We then introduce the convolution of long sequences followed by a filter design for linear phase filters.

6.3.1 Basics of Fast Convolution

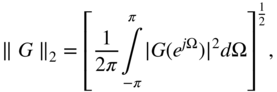

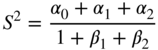

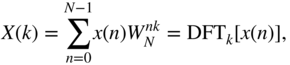

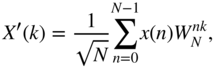

IDFT Implementation with DFT Algorithm. The discrete Fourier transformation (DFT) is described by

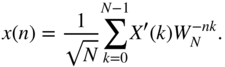

and the inverse discrete Fourier transformation (IDFT) by

Without scaling factor $1/N$, we write

so that the following symmetrical transformation algorithms hold:

The IDFT differs from the DFT only by its sign in the exponential term.

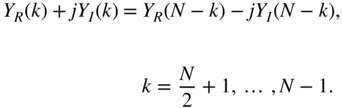

An alternative approach for calculating the IDFT with the help of a DFT is described as follows [Cad87, Duh88]. We will make use of the relationships

Conjugating Eq. (6.133) gives

The multiplication of Eq. (6.138) by $j$ leads to

Conjugating and multiplying Eq. (6.139) by $j$ results in

An interpretation of Eqs. (6.137) and (6.140) suggests the following way of performing the IDFT with the DFT algorithm:

- exchange the real with the imaginary part of the spectral sequence

- transformation with DFT algorithm

- exchange the real with the imaginary part of the time sequence

For implementation on a digital signal processor, reusing the DFT algorithm for the IDFT saves memory, because no separate IDFT routine has to be stored.
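The three steps listed above can be sketched as follows. The example uses NumPy's `np.fft.fft` as the forward DFT and applies the scaling factor $1/N$ at the end; the helper names are hypothetical.

```python
import numpy as np


def swap_real_imag(v):
    """Exchange real and imaginary parts: a + jb -> b + ja."""
    return v.imag + 1j * v.real


def idft_via_dft(spectrum):
    """Inverse DFT computed with a forward DFT and two real/imaginary swaps."""
    spectrum = np.asarray(spectrum, dtype=complex)
    n = len(spectrum)
    # swap -> forward DFT -> swap, followed by the 1/N scaling of the IDFT
    return swap_real_imag(np.fft.fft(swap_real_imag(spectrum))) / n


rng = np.random.default_rng(2)
X = rng.standard_normal(16) + 1j * rng.standard_normal(16)
x = idft_via_dft(X)
print(np.max(np.abs(x - np.fft.ifft(X))))
```

Exchanging real and imaginary parts is equivalent to conjugating and multiplying by $j$, which is why two such exchanges around a forward transform reproduce the inverse transform.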

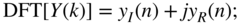

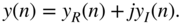

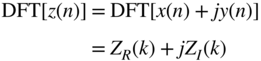

Discrete Fourier Transformation of Two Real Sequences. In many applications, stereo signals that consist of a left and right channel are processed. With the help of the DFT, both channels can be transformed simultaneously into the frequency domain [Sor87, Ell82].

For a real sequence $x(n)$,

For a discrete Fourier transformation of two real sequences $x(n)$ and $y(n)$, a complex sequence is first formed according to

The Fourier transformation gives

where

Because $x(n)$ and $y(n)$ are real sequences, it follows from Eq. (6.142) that

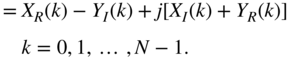

Considering the real part of $Z(k)$, adding Eqs. (6.148) and (6.151) gives

and subtraction of Eq. (6.151) from Eq. (6.148) results in

Considering the imaginary part of $Z(k)$, adding Eqs. (6.148) and (6.151) gives

and subtraction of Eq. (6.151) from Eq. (6.148) results in

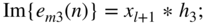

Hence the spectral functions are given by

and

Fast Convolution if Spectral Functions are Known. The spectral functions $X(k)$, $Y(k)$, and $H(k)$ are known. With the help of Eq. (6.148), the spectral sequence can be formed by

Filtering is done by multiplication in the frequency domain:

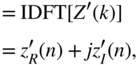

The inverse transformation gives

so that the filtered output sequence is given by

The filtering of a stereo signal can hence be done by transformation into the frequency domain, multiplication of the spectral functions, and inverse transformation of left and right channels.
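The simultaneous transformation of two real sequences with a single complex DFT, as derived earlier in this subsection, can be sketched as follows. The code assumes the packing $z(n) = x(n) + j\,y(n)$ and uses the conjugate symmetry of the spectra of real sequences to separate them again; the function name and the index handling via `np.roll` are illustrative choices.

```python
import numpy as np


def dft_two_real(x, y):
    """DFTs of two real sequences of equal length from one complex DFT."""
    z = np.asarray(x, dtype=float) + 1j * np.asarray(y, dtype=float)
    Z = np.fft.fft(z)
    # Z*(N - k) for k = 0..N-1, where index N is identified with index 0.
    Z_mirror = np.conj(np.roll(Z[::-1], 1))
    X = 0.5 * (Z + Z_mirror)        # conjugate-symmetric part
    Y = -0.5j * (Z - Z_mirror)      # conjugate-antisymmetric part divided by j
    return X, Y


rng = np.random.default_rng(3)
left = rng.standard_normal(32)
right = rng.standard_normal(32)
X, Y = dft_two_real(left, right)
print(np.max(np.abs(X - np.fft.fft(left))), np.max(np.abs(Y - np.fft.fft(right))))
```

For filtering both channels with the same real impulse response, the separation step can be skipped: the product of the packed spectrum with the filter spectrum is transformed back, and the real and imaginary parts of the result are the two filtered channels.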

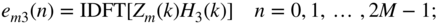

6.3.2 Fast Convolution of Long Sequences

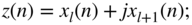

The fast convolution of two real input sequences ![]() and ![]() of length $N_1$ with the impulse response $h(n)$ of length $N_2$ leads to the output sequences

of length $N_1 + N_2 - 1$. The implementation of a non‐recursive filter with fast convolution becomes more efficient than the direct implementation of an FIR filter for filter lengths ![]() . Therefore, the following procedure will be performed:

- formation of a complex sequence (6.175)

- Fourier transformation of the impulse response $h(n)$ that is padded with zeros to a length $N \ge N_1 + N_2 - 1$ (6.176)

- Fourier transformation of the sequence $z(n)$ that is padded with zeros to a length $N \ge N_1 + N_2 - 1$ (6.177)

- formation of a complex output sequence (6.178) (6.179) (6.180)

- formation of a real output sequence (6.181) (6.182)
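The steps above can be sketched in a few lines. The example assumes two real sequences of equal length (named `x_left` and `x_right` here purely for illustration), a real impulse response, and a DFT length equal to the length of the linear convolution; the function name is hypothetical.

```python
import numpy as np


def stereo_fast_convolve(x_left, x_right, h):
    """Filter two real sequences with one real impulse response using one DFT pair."""
    n = len(x_left) + len(h) - 1                       # DFT length, no aliasing
    # Step 1: complex sequence from the two real inputs.
    z = np.asarray(x_left, dtype=float) + 1j * np.asarray(x_right, dtype=float)
    # Steps 2 and 3: transforms of the zero-padded impulse response and sequence.
    H = np.fft.fft(h, n)
    Z = np.fft.fft(z, n)
    # Step 4: complex output sequence from the product of the spectra.
    e = np.fft.ifft(Z * H)
    # Step 5: real output sequences are the real and imaginary parts.
    return e.real, e.imag


rng = np.random.default_rng(4)
x_left = rng.standard_normal(40)
x_right = rng.standard_normal(40)
h = rng.standard_normal(9)
y_left, y_right = stereo_fast_convolve(x_left, x_right, h)
print(np.max(np.abs(y_left - np.convolve(x_left, h))))
```

The separation into real and imaginary parts works because the impulse response is real, so the convolution acts on the two channels independently.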

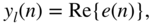

Figure 6.56 Fast convolution with partitioning of the input signal $x(n)$ into blocks of length $L$.

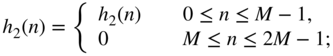

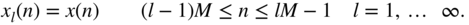

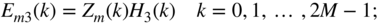

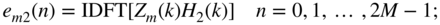

For the convolution of an infinite‐length input sequence (see Fig. 6.56) with an impulse response $h(n)$, the input sequence is partitioned into sequences $x_m(n)$ of length $L$:

The input sequence is given by superposition of finite‐length sequences according to

The convolution of the input sequence with the impulse response ![]() of length

of length ![]() gives

gives

The term in brackets corresponds to the convolution of a finite‐length sequence ![]() of length

of length ![]() with the impulse response of length

with the impulse response of length ![]() . The output signal can be given as a superposition of convolution products of length

. The output signal can be given as a superposition of convolution products of length ![]() . With these partial convolution products,

. With these partial convolution products,

the output signal can be written as
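The superposition of partial convolution products is the overlap‐add method. The following sketch shows it under stated assumptions: the function name `overlap_add`, the use of real‐input FFTs (`rfft`/`irfft`), and the power‐of‐two FFT length are choices made for this example and do not come from the text.

```python
import numpy as np

def overlap_add(x, h, L):
    """Convolve x with h by processing input blocks of length L with FFTs."""
    x = np.asarray(x, dtype=float)
    h = np.asarray(h, dtype=float)
    M = len(h)
    n_fft = 1 << (L + M - 2).bit_length()    # power of two >= L + M - 1
    H = np.fft.rfft(h, n_fft)                # spectrum of h, computed once
    y = np.zeros(len(x) + M - 1)
    for start in range(0, len(x), L):
        x_m = x[start:start + L]             # block x_m(n); the last one may be shorter
        y_m = np.fft.irfft(np.fft.rfft(x_m, n_fft) * H, n_fft)
        n_valid = len(x_m) + M - 1           # length of this partial convolution
        y[start:start + n_valid] += y_m[:n_valid]   # overlapping tails are added
    return y

# Hypothetical test data.
rng = np.random.default_rng(1)
x = rng.standard_normal(1000)
h = rng.standard_normal(50)
y = overlap_add(x, h, L=128)
```

Each partial product is $M-1$ samples longer than its input block, so consecutive products overlap by $M-1$ samples, and the sum over $m$ is implemented by the accumulation into `y`.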

Figure 6.57 Partitioning of the impulse response $h(n)$.

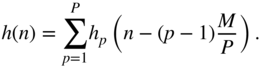

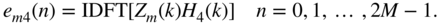

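If the length $M$ of the impulse response is very long, it can be similarly partitioned into $P$ parts each of length $M/P$ (see Fig. 6.57). With

$$h_p\!\left(n-(p-1)\frac{M}{P}\right) = \begin{cases} h(n), & (p-1)\frac{M}{P} \le n \le p\frac{M}{P}-1, \\ 0, & \text{otherwise}, \end{cases}$$

it follows that

$$h(n) = \sum_{p=1}^{P} h_p\!\left(n-(p-1)\frac{M}{P}\right).$$

With $M_p = p\,M/P$ and Eq. (6.189), the following partitioning can be done:

$$y(n) = \sum_{k=0}^{M-1} h(k)\,x(n-k) = \sum_{k=0}^{M_1-1} h(k)\,x(n-k) + \sum_{k=M_1}^{M_2-1} h(k)\,x(n-k) + \cdots + \sum_{k=M_{P-1}}^{M-1} h(k)\,x(n-k).$$

This can be rewritten as

$$y(n) = \sum_{k=0}^{M_1-1} h_1(k)\,x(n-k) + \sum_{k=0}^{M_1-1} h_2(k)\,x(n-M_1-k) + \cdots + \sum_{k=0}^{M_1-1} h_P(k)\,x(n-M_{P-1}-k).$$

An example of partitioning the impulse response into $P=4$ parts is graphically shown in Fig. 6.58. This leads to

$$y(n) = \sum_{k=0}^{M_1-1} h_1(k)\,x(n-k) + \sum_{k=0}^{M_1-1} h_2(k)\,x(n-M_1-k) + \sum_{k=0}^{M_1-1} h_3(k)\,x(n-2M_1-k) + \sum_{k=0}^{M_1-1} h_4(k)\,x(n-3M_1-k).$$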

Figure 6.58 Scheme for a fast convolution with $P=4$.

The procedure of a fast convolution by partitioning the input sequence $x(n)$, as well as the impulse response $h(n)$, is given in the following for the example in Fig. 6.58.

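1. Decomposition of the impulse response $h(n)$ of length $4M$:

$$h_1(n) = h(n), \quad 0 \le n \le M-1, \tag{6.196}$$

$$h_2(n-M) = h(n), \quad M \le n \le 2M-1, \tag{6.197}$$

$$h_3(n-2M) = h(n), \quad 2M \le n \le 3M-1, \tag{6.198}$$

$$h_4(n-3M) = h(n), \quad 3M \le n \le 4M-1. \tag{6.199}$$

2. Zero‐padding of partial impulse responses up to a length $2M$:

$$h_1(n) = \begin{cases} h_1(n), & 0 \le n \le M-1, \\ 0, & M \le n \le 2M-1, \end{cases} \tag{6.200}$$

$$h_2(n) = \begin{cases} h_2(n), & 0 \le n \le M-1, \\ 0, & M \le n \le 2M-1, \end{cases} \tag{6.201}$$

$$h_3(n) = \begin{cases} h_3(n), & 0 \le n \le M-1, \\ 0, & M \le n \le 2M-1, \end{cases} \tag{6.202}$$

$$h_4(n) = \begin{cases} h_4(n), & 0 \le n \le M-1, \\ 0, & M \le n \le 2M-1. \end{cases} \tag{6.203}$$

3. Calculating and storing

$$H_i(k) = \mathrm{FFT}[h_i(n)], \quad i = 1, \ldots, 4 \quad (\text{FFT length } 2M). \tag{6.204}$$

4. Decomposition of the input sequence $x(n)$ into partial sequences $x_l(n)$ of length $M$:

$$x_l(n) = x(n), \quad (l-1)M \le n \le lM-1, \quad l = 1, 2, \ldots \tag{6.205}$$

5. Nesting partial sequences:

$$z_m(n) = x_l(n) + j\,x_{l+1}(n), \quad m, l = 1, 2, \ldots \tag{6.206}$$

6. Zero‐padding of complex sequence $z_m(n)$ up to a length $2M$:

$$z_m(n) = \begin{cases} z_m(n), & (l-1)M \le n \le lM-1, \\ 0, & lM \le n \le (l+1)M-1. \end{cases} \tag{6.207}$$

7. Fourier transformation of the complex sequences $z_m(n)$:

$$Z_m(k) = \mathrm{FFT}[z_m(n)] = Z_{Rm}(k) + j\,Z_{Im}(k) \quad (\text{FFT length } 2M). \tag{6.208}$$

8. Multiplication in the frequency domain:

$$E_m(k) = Z_m(k)H(k) = [Z_{Rm}(k) + j\,Z_{Im}(k)]\,H(k), \tag{6.209}$$

$$E_{m1}(k) = Z_m(k)H_1(k), \quad k = 0, 1, \ldots, 2M-1, \tag{6.210}$$

$$E_{m2}(k) = Z_m(k)H_2(k), \quad k = 0, 1, \ldots, 2M-1, \tag{6.211}$$

$$E_{m3}(k) = Z_m(k)H_3(k), \quad k = 0, 1, \ldots, 2M-1, \tag{6.212}$$

$$E_{m4}(k) = Z_m(k)H_4(k), \quad k = 0, 1, \ldots, 2M-1. \tag{6.213}$$

9. Inverse transformation:

$$e_{m1}(n) = \mathrm{IFFT}[Z_m(k)H_1(k)], \quad n = 0, 1, \ldots, 2M-1, \tag{6.214}$$

$$e_{m2}(n) = \mathrm{IFFT}[Z_m(k)H_2(k)], \quad n = 0, 1, \ldots, 2M-1, \tag{6.215}$$

$$e_{m3}(n) = \mathrm{IFFT}[Z_m(k)H_3(k)], \quad n = 0, 1, \ldots, 2M-1, \tag{6.216}$$

$$e_{m4}(n) = \mathrm{IFFT}[Z_m(k)H_4(k)], \quad n = 0, 1, \ldots, 2M-1. \tag{6.217}$$

10. Determination of partial convolutions:

$$\mathrm{Re}\{e_{m1}(n)\} = x_l * h_1, \tag{6.218}$$

$$\mathrm{Im}\{e_{m1}(n)\} = x_{l+1} * h_1, \tag{6.219}$$

$$\mathrm{Re}\{e_{m2}(n)\} = x_l * h_2, \tag{6.220}$$

$$\mathrm{Im}\{e_{m2}(n)\} = x_{l+1} * h_2, \tag{6.221}$$

$$\mathrm{Re}\{e_{m3}(n)\} = x_l * h_3, \tag{6.222}$$

$$\mathrm{Im}\{e_{m3}(n)\} = x_{l+1} * h_3, \tag{6.223}$$

$$\mathrm{Re}\{e_{m4}(n)\} = x_l * h_4, \tag{6.224}$$

$$\mathrm{Im}\{e_{m4}(n)\} = x_{l+1} * h_4. \tag{6.225}$$

11. Overlap‐add of partial sequences, increments $l = l+2$ and $m = m+1$, and back to step 5.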
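The procedure above can be condensed into a short NumPy sketch. It is an illustration under assumptions, not the book's code: the function name `partitioned_fast_convolution`, zero‐based block indices, and a general number of partitions (instead of the fixed four of the example) are choices made here.

```python
import numpy as np

def partitioned_fast_convolution(x, h, M):
    """Partition both x and h into blocks of length M and convolve with 2M-point FFTs."""
    x = np.asarray(x, dtype=float)
    h = np.asarray(h, dtype=float)
    P = -(-len(h) // M)                                   # number of partitions of h
    h_pad = np.concatenate([h, np.zeros(P * M - len(h))])
    # Split h into parts of length M, zero-pad each to 2M, transform and store.
    H = np.fft.fft(h_pad.reshape(P, M), n=2 * M, axis=1)
    n_blocks = -(-len(x) // M)
    n_blocks += n_blocks % 2                              # even count so blocks pair up
    x_pad = np.concatenate([x, np.zeros(n_blocks * M - len(x))])
    blocks = x_pad.reshape(n_blocks, M)                   # partial sequences x_l(n)
    y = np.zeros((n_blocks + P + 1) * M)
    for l in range(0, n_blocks, 2):                       # increment l by two per pass
        z = blocks[l] + 1j * blocks[l + 1]                # nest two real blocks
        Z = np.fft.fft(z, n=2 * M)                        # zero-pad to 2M and transform
        e = np.fft.ifft(Z[None, :] * H, axis=1)           # products and inverse FFTs
        for p in range(P):                                # overlap-add of all parts
            pos = (l + p) * M
            y[pos:pos + 2 * M] += e[p].real               # x_l * h_p
            y[pos + M:pos + 3 * M] += e[p].imag           # x_{l+1} * h_p, one block later
    return y[:len(x) + len(h) - 1]

# Hypothetical test data.
rng = np.random.default_rng(2)
x = rng.standard_normal(500)
h = rng.standard_normal(100)
y = partitioned_fast_convolution(x, h, M=32)
```

A partial convolution of two length‐$M$ sequences has $2M-1$ samples, so the FFT length $2M$ avoids circular wrap‐around; the offset `(l + p) * M` accounts for the position of the input block and the delay of the impulse response part.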

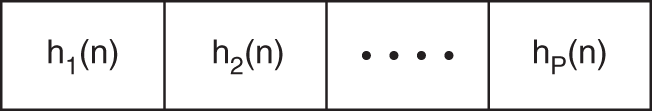

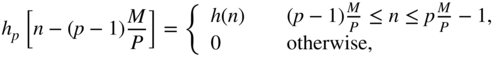

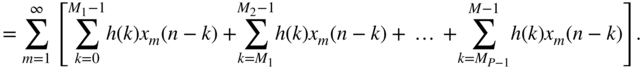

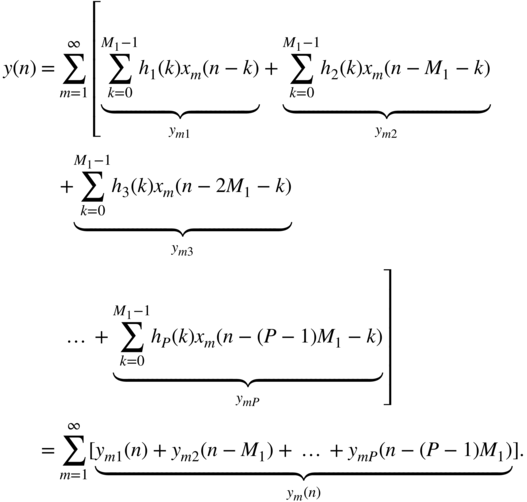

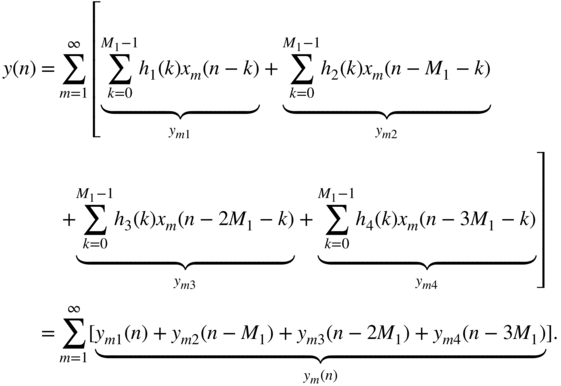

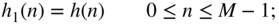

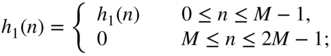

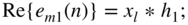

Because the input signal and the impulse response are partitioned and then Fourier transformed, the result of each single convolution is only available after a delay of one block of samples. Different methods to reduce computational complexity or overcome the block delay have been proposed [Soo90, Gar95, Ege96, Mül99, Mül01, Garc02]. These methods make use of a hybrid approach where the first part of the impulse response is used for time‐domain convolution and the other parts are used for fast convolution in the frequency domain. Figure 6.59a,b demonstrates a simple derivation of the hybrid convolution scheme, which can be described by the decomposition of the transfer function according to

$$H(z) = \sum_{i=0}^{P-1} z^{-iN} H_i(z), \tag{6.226}$$

where the impulse response has length $M = P \cdot N$ and $P$ is the number of smaller partitions of length $N$. Figure 6.59c,d shows two different signal flow graphs for the decomposition given by Eq. (6.226) of the entire transfer function. In particular, Fig. 6.59d highlights (with gray background) that in each branch $i = 1, \ldots, P-1$, a delay of $i \cdot N$ occurs and each filter $H_i(z)$ has the same length and makes use of the same state variables. This means that they can be computed in parallel in the frequency domain with $2N$‐FFTs/IFFTs and the outputs have to be delayed according to $(i-1) \cdot N$, as shown in Fig. 6.59e. A further simplification shown in Fig. 6.59f leads to one input $2N$‐FFT and block delays $z^{-1}$ for the frequency vectors. Then, parallel multiplications with $H_i(k)$ of length $2N$ and the summation of all intermediate products are performed before one output $2N$‐IFFT for the overlap and add operation in the time domain is used. The first part of the impulse response, represented by $H_0(z)$, is performed by direct convolution in the time domain. The frequency‐ and time‐domain parts are then overlapped and added. An alternative realization for fast convolution is based on the overlap and save operation.
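The structure with one input FFT, block‐delayed frequency vectors, and one output IFFT can be sketched as follows. This is an offline illustration under assumptions, not a real‐time implementation and not the author's code: the function name `hybrid_partitioned_convolution`, the array `fdl` holding the delayed spectra, and the use of `rfft`/`irfft` for real signals are all choices made for this example.

```python
import numpy as np

def hybrid_partitioned_convolution(x, h, N):
    """First partition by direct convolution, remaining partitions in the frequency domain."""
    x = np.asarray(x, dtype=float)
    h = np.asarray(h, dtype=float)
    P = -(-len(h) // N)                            # number of partitions of length N
    if P < 2:                                      # nothing left for the FFT branch
        return np.convolve(x, h)
    parts = np.concatenate([h, np.zeros(P * N - len(h))]).reshape(P, N)
    H = np.fft.rfft(parts[1:], n=2 * N, axis=1)    # spectra of partitions 1 ... P-1
    n_blocks = -(-len(x) // N) + P                 # extra zero blocks flush the tail
    x_pad = np.concatenate([x, np.zeros(n_blocks * N - len(x))])
    y = np.zeros((n_blocks + 2) * N)
    y[:len(x) + N - 1] += np.convolve(x, parts[0])  # partition 0: time domain
    fdl = np.zeros((P - 1, N + 1), dtype=complex)   # delay line of input spectra
    for b in range(n_blocks):
        fdl = np.roll(fdl, 1, axis=0)              # block delay of the frequency vectors
        fdl[0] = np.fft.rfft(x_pad[b * N:(b + 1) * N], n=2 * N)   # one input FFT
        acc = np.sum(fdl * H, axis=0)              # parallel products, then summation
        y[(b + 1) * N:(b + 3) * N] += np.fft.irfft(acc, n=2 * N)  # one IFFT, overlap-add
    return y[:len(x) + len(h) - 1]

# Hypothetical test data.
rng = np.random.default_rng(3)
x = rng.standard_normal(300)
h = rng.standard_normal(70)
y = hybrid_partitioned_convolution(x, h, N=16)
```

The result of the frequency‐domain branch for input block `b` is added at position `(b + 1) * N`: the block processing itself contributes one block of delay, and the delay line supplies the remaining $(i-1) \cdot N$ for partition $i$.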

Figure 6.59 Hybrid fast convolution.

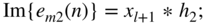

6.3.3 Filter Design by Frequency Sampling

Audio filter design for non‐recursive filter realizations by fast convolution can be carried out by the frequency sampling method. For linear phase systems,

where ![]() is a real valued amplitude response and

is a real valued amplitude response and ![]() is the length of the impulse response. The magnitude

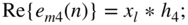

is the length of the impulse response. The magnitude ![]() is calculated by sampling in the frequency domain at equidistant places

is calculated by sampling in the frequency domain at equidistant places

according to

Hence, a filter can be designed by fulfilling conditions in the frequency domain. The linear phase is determined as

Owing to the real transfer function ![]() for an even filter length, we have to fulfill

for an even filter length, we have to fulfill

This has to be taken into consideration when designing filters of even length ![]() . The impulse response

. The impulse response ![]() is obtained through an

is obtained through an ![]() ‐point IDFT of the spectral sequence

‐point IDFT of the spectral sequence ![]() . This impulse response is extended with zero‐padding to the length

. This impulse response is extended with zero‐padding to the length ![]() and then transformed by an

and then transformed by an ![]() ‐point DFT resulting in the spectral sequence

‐point DFT resulting in the spectral sequence ![]() of the filter.

of the filter.
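A frequency sampling design for an even filter length can be sketched as follows. The sketch assumes that the desired amplitude response is given as samples $A(k)$ for $k = 0, \ldots, N_F/2 - 1$; the function name `frequency_sampling_design` and the lowpass example values are hypothetical.

```python
import numpy as np

def frequency_sampling_design(amplitude, n_fft):
    """Linear-phase FIR design of even length N_F from N_F/2 amplitude samples."""
    A = np.asarray(amplitude, dtype=float)
    N_F = 2 * len(A)                                   # even filter length
    k = np.arange(N_F // 2)
    H = np.zeros(N_F, dtype=complex)
    H[:N_F // 2] = A * np.exp(-1j * np.pi * k * (N_F - 1) / N_F)   # amplitude and linear phase
    H[N_F // 2] = 0.0                                  # required zero at k = N_F/2
    H[N_F // 2 + 1:] = np.conj(H[1:N_F // 2][::-1])    # H(k) = H*(N_F - k)
    h = np.fft.ifft(H).real                            # N_F-point IDFT, real by symmetry
    return h, np.fft.fft(h, n_fft)                     # zero-padded n_fft-point DFT

# Hypothetical lowpass specification: N_F = 64, passband for k < 8.
A_des = np.concatenate([np.ones(8), np.zeros(24)])
h, H_filt = frequency_sampling_design(A_des, n_fft=256)
```

The resulting impulse response is symmetric, which confirms the linear phase, and its $N_F$‐point DFT reproduces the prescribed samples exactly; between the sampling points the response is not controlled, which is a known limitation of the method.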

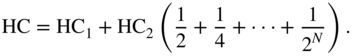

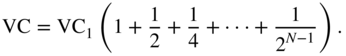

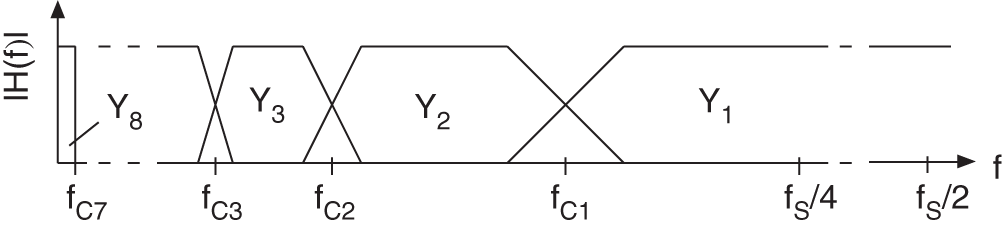

6.4 Multi‐complementary Filter Bank

The sub‐band processing of audio signals is mainly used in source coding applications for efficient transmission and storage. Critically sampled filter banks form the basis of the sub‐band decomposition [Vai93, Fli00]. These filter banks allow a perfect reconstruction of the input provided there is no processing within the sub‐bands. They consist of an analysis filter bank for decomposing the signal into critically sampled sub‐bands and a synthesis filter bank for reconstructing the broadband output. The aliasing in the sub‐bands is eliminated by the synthesis filter bank. Nonlinear methods are used for coding the sub‐band signals. The reconstruction error of the filter bank is negligible compared with the errors arising from the coding/decoding process. If a critically sampled filter bank is used as a multiband equalizer, for multiband dynamic range control, or for multiband room simulation, the processing in the sub‐bands leads to aliasing at the output. To avoid aliasing, a multi‐complementary filter bank [Fli92, Zöl92, Fli00] is presented which enables an aliasing‐free processing in the sub‐bands and leads to a perfect reconstruction of the output. It allows a decomposition into octave frequency bands which are matched to the human ear.

6.4.1 Principles

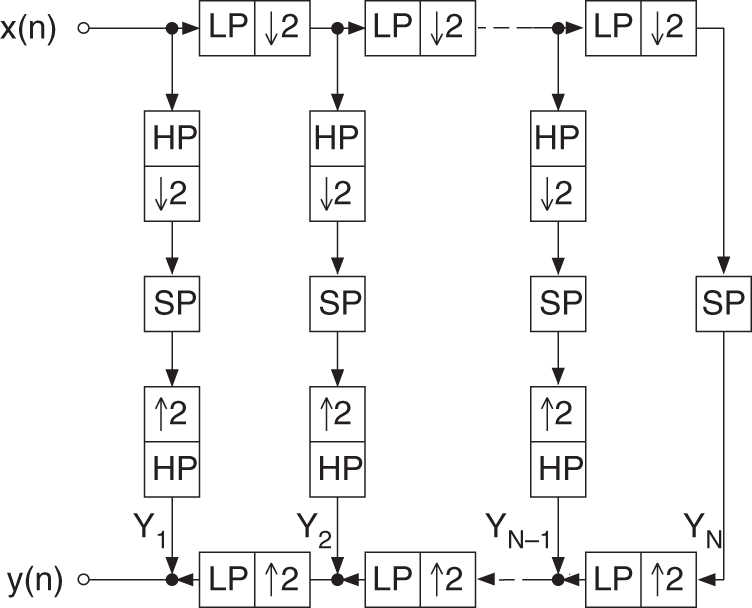

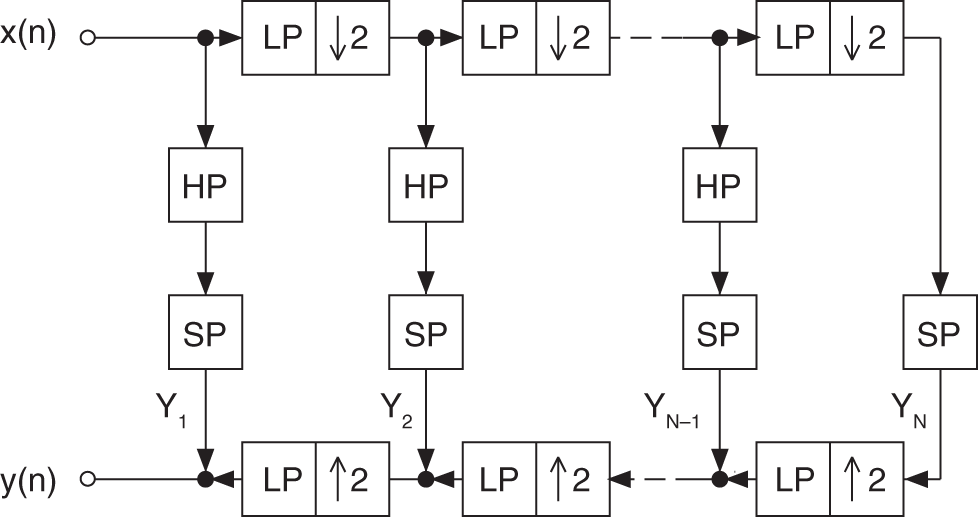

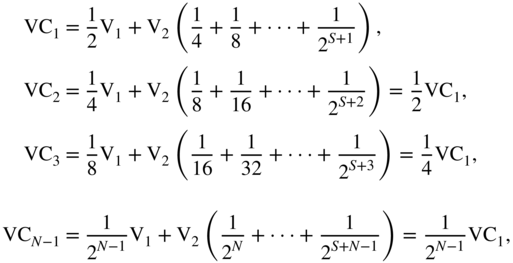

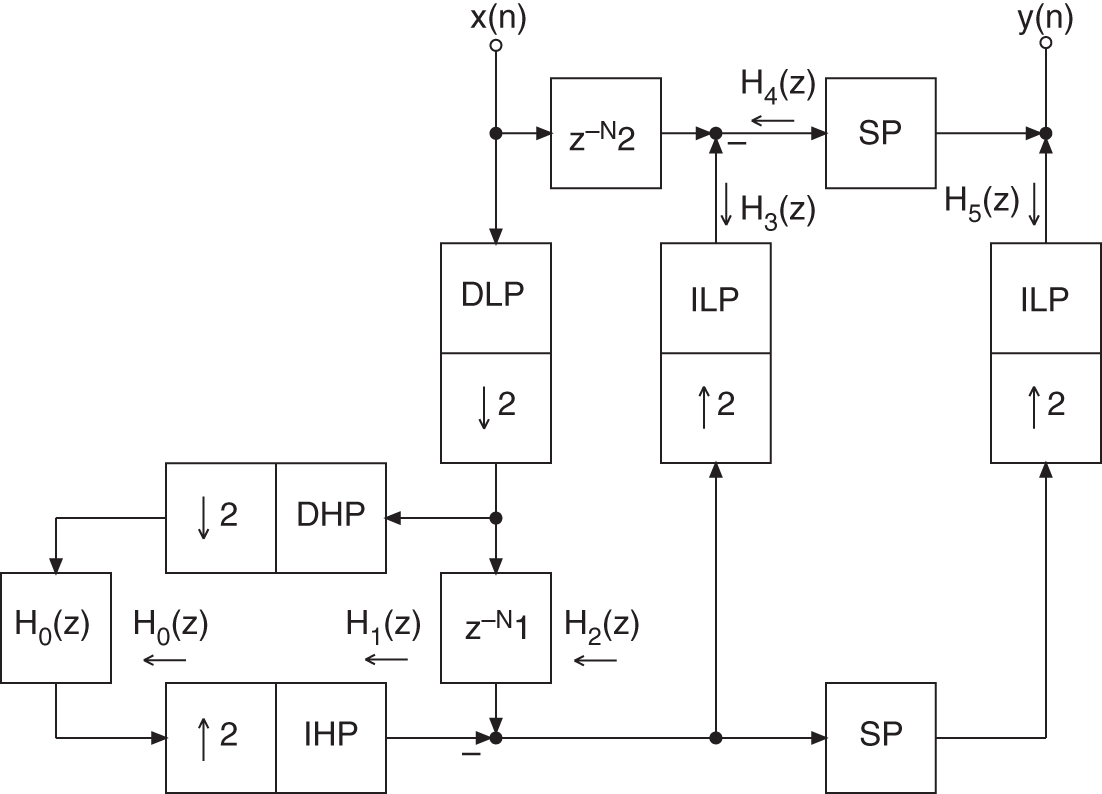

Figure 6.61 shows an octave‐band filter bank with critical sampling. It performs a successive lowpass/highpass decomposition into half‐bands followed by downsampling by a factor of two. The decomposition leads to the sub‐bands ![]() to

to ![]() (see Fig. 6.62 ). The transition frequencies of this decomposition are given by

(see Fig. 6.62 ). The transition frequencies of this decomposition are given by

To avoid aliasing in sub‐bands, a modified octave‐band filter bank is considered, which is shown in Fig. 6.63 for a two‐band decomposition. The cutoff frequency of the modified filter bank is moved from ![]() to a lower frequency. This means that in downsampling the lowpass branch, no aliasing occurs in the transition band (e.g. cutoff frequency

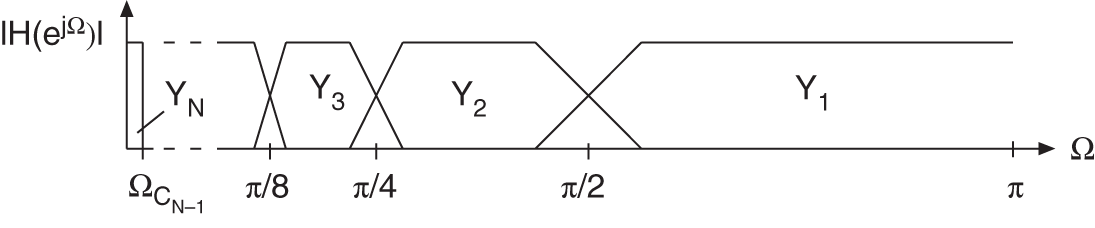

to a lower frequency. This means that in downsampling the lowpass branch, no aliasing occurs in the transition band (e.g. cutoff frequency ![]() ). The broader highpass branch cannot be downsampled. A continuation of the described two‐band decomposition leads to the modified octave‐band filter bank shown in Fig. 6.64 . The frequency bands are depicted in Fig. 6.65 showing that besides the cutoff frequencies

). The broader highpass branch cannot be downsampled. A continuation of the described two‐band decomposition leads to the modified octave‐band filter bank shown in Fig. 6.64 . The frequency bands are depicted in Fig. 6.65 showing that besides the cutoff frequencies

the bandwidth of the sub‐bands is reduced by a factor of two. The highpass sub‐band ![]() is an exception.

is an exception.
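As a numerical illustration, the transition frequencies in Hz can be tabulated for both decompositions. The sketch assumes that the first transition of the critically sampled bank lies at $\Omega = \pi/2$ (that is, $f_S/4$) and that of the modified bank at $\Omega = \pi/3$ (that is, $f_S/6$), each halving from band to band; the function name, the sampling rate of 44.1 kHz, and the choice of eight bands are hypothetical.

```python
def transition_frequencies(f_s, n_bands, divisor):
    """Transition frequencies in Hz: f_s / divisor for k = 1, halved for each further k."""
    return [f_s / (divisor * 2 ** (k - 1)) for k in range(1, n_bands)]

f_s = 44100.0                                        # assumed sampling rate in Hz
critical = transition_frequencies(f_s, 8, divisor=4)   # first transition at pi/2
modified = transition_frequencies(f_s, 8, divisor=6)   # first transition at pi/3

for k, (f_crit, f_mod) in enumerate(zip(critical, modified), start=1):
    print(f"k = {k}: critical {f_crit:9.2f} Hz, modified {f_mod:9.2f} Hz")
```

Under these assumptions, the first transition frequency moves from 11025 Hz to 7350 Hz, and every further transition frequency is lowered by the same ratio of 2/3.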

Figure 6.61 Octave‐band QMF bank (SP = signal processing, LP = lowpass, HP = highpass).

Figure 6.62 Octave‐frequency bands.

Figure 6.63 Two‐band decomposition.

Figure 6.64 Modified octave‐band filter bank.

Figure 6.65 Modified octave decomposition.

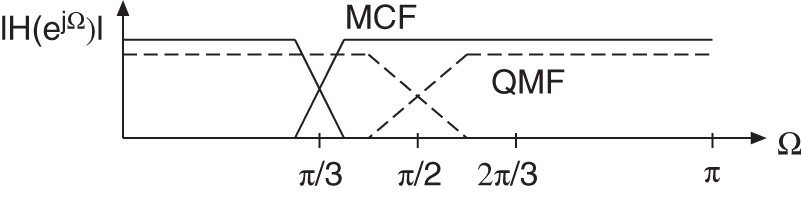

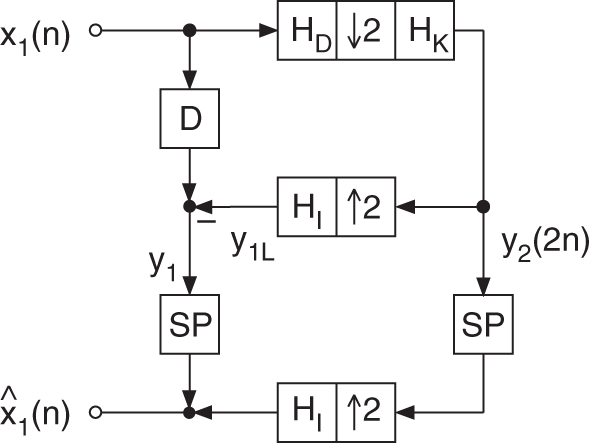

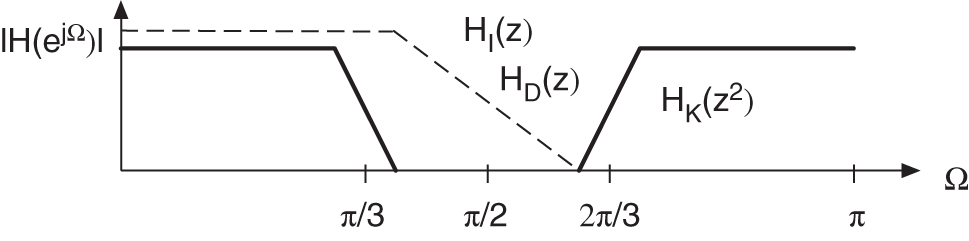

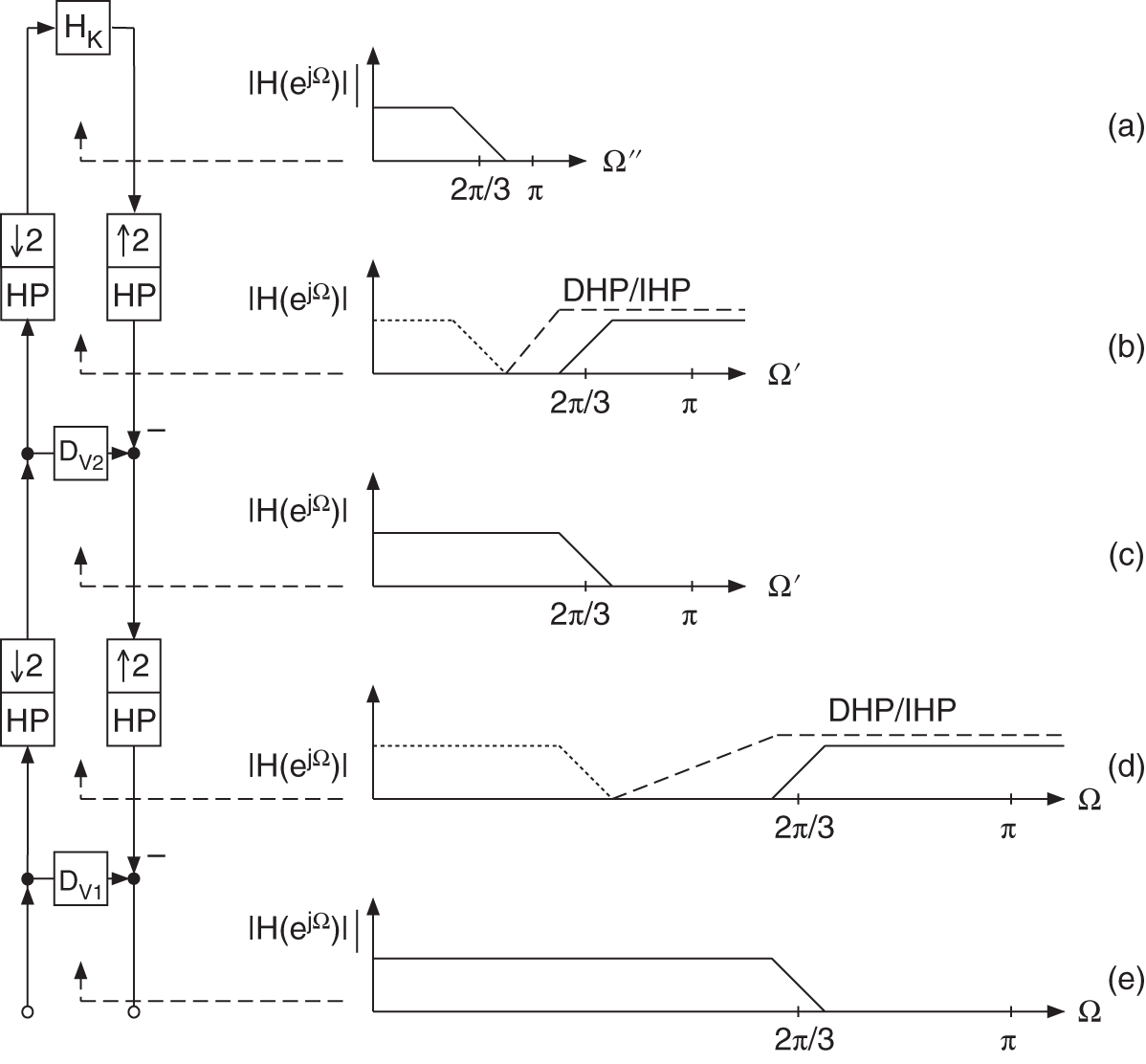

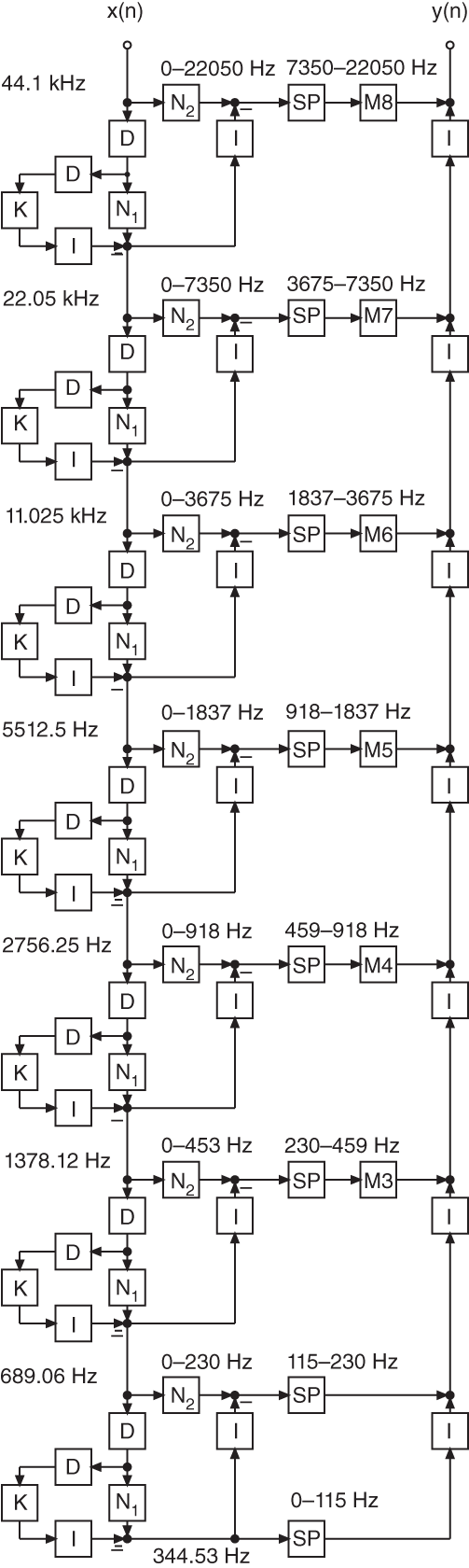

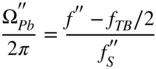

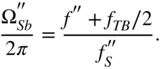

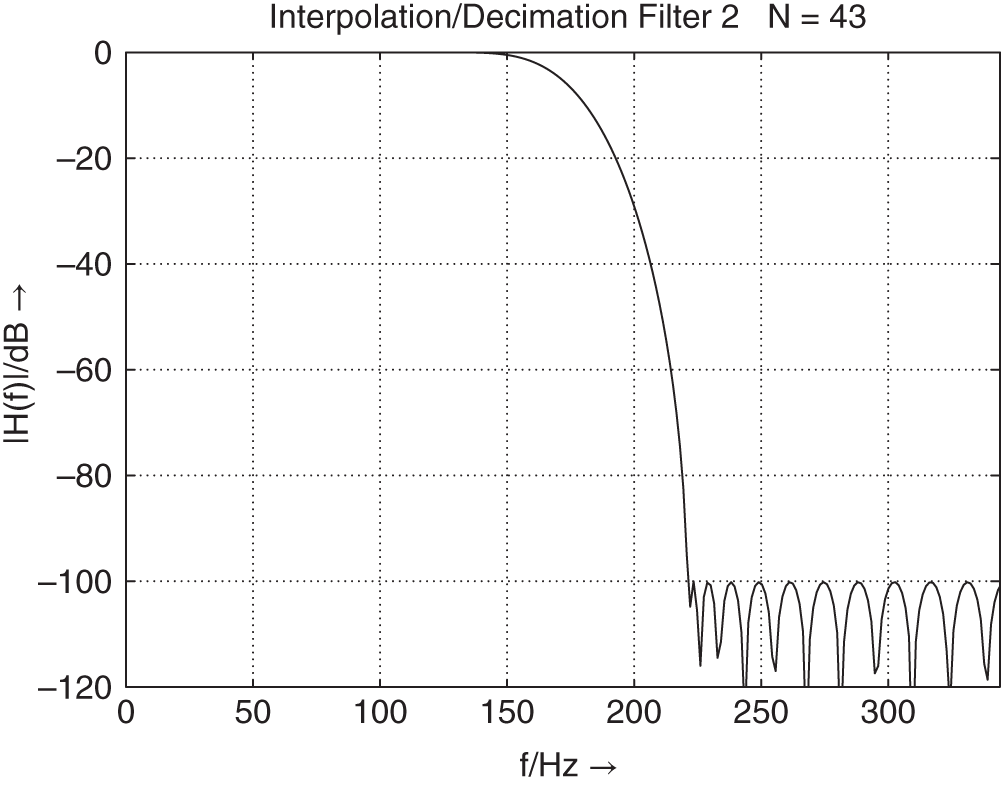

The special lowpass/highpass decomposition is carried out by a two‐band complementary filter bank, as shown in Fig. 6.66. The frequency responses of a decimation filter $H_D(z)$, interpolation filter $H_I(z)$, and kernel filter $H_K(z)$ are shown in Fig. 6.67.

Figure 6.66 Two‐band complementary filter bank.

Figure 6.67 Design of $H_D(z)$, $H_I(z)$, and $H_K(z)$.

The lowpass filtering of a signal ![]() is done with the help of a decimation filter

is done with the help of a decimation filter ![]() , the downsampler of factor two, and the kernel filter

, the downsampler of factor two, and the kernel filter ![]() , and leads to

, and leads to ![]() . The Z‐transform of

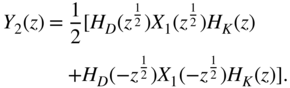

. The Z‐transform of ![]() is given by

is given by

The interpolated lowpass signal ![]() is generated by upsampling by a factor of two and filtering with the interpolation filter

is generated by upsampling by a factor of two and filtering with the interpolation filter ![]() . The Z‐transform of

. The Z‐transform of ![]() is given by

is given by

The highpass signal ![]() is obtained by subtracting the interpolated lowpass signal

is obtained by subtracting the interpolated lowpass signal ![]() from the delayed input signal

from the delayed input signal ![]() . The Z‐transform of the highpass signal is given by

. The Z‐transform of the highpass signal is given by

The lowpass and highpass signals are processed individually. The output signal ![]() is formed by adding the highpass signal to the upsampled and filtered lowpass signal. With (Eqs. 6.237) and (6.239), the Z‐transform of

is formed by adding the highpass signal to the upsampled and filtered lowpass signal. With (Eqs. 6.237) and (6.239), the Z‐transform of ![]() can be written as

can be written as

Equation (6.240) shows the perfect reconstruction of the input signal which is delayed by ![]() sampling units.

sampling units.
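The perfect reconstruction property can be checked numerically with a small sketch of the two‐band complementary structure. Everything specific here is an assumption made for illustration: the function names, the Hamming‐windowed sinc filters standing in for the decimation, interpolation, and kernel filters, the odd filter lengths of 31 taps, the cutoff values, and the gain of two in the interpolation step.

```python
import numpy as np

def lowpass_fir(num_taps, cutoff):
    """Hamming-windowed sinc lowpass; cutoff is normalized to the sampling rate."""
    n = np.arange(num_taps) - (num_taps - 1) / 2
    h = 2 * cutoff * np.sinc(2 * cutoff * n) * np.hamming(num_taps)
    return h / h.sum()                               # unity gain at DC

def two_band_complementary(x, h_d, h_k, h_i):
    """Split x into a complementary highpass band and an interpolated lowpass band."""
    # Group delay of the lowpass path in full-rate samples (odd filter lengths assumed).
    D = (len(h_d) - 1) // 2 + (len(h_k) - 1) + (len(h_i) - 1) // 2
    v = np.convolve(x, h_d)[::2]                     # decimation filter, downsampling by 2
    y_low_rate = np.convolve(v, h_k)                 # kernel filter at half the rate
    up = np.zeros(2 * len(y_low_rate))
    up[::2] = y_low_rate                             # upsampling by 2 (zero stuffing)
    y_lp = 2 * np.convolve(up, h_i)[:len(x) + D]     # interpolation filter, gain 2
    x_delayed = np.concatenate([np.zeros(D), x])     # delayed input
    y_hp = x_delayed - y_lp                          # complementary highpass band
    return y_hp, y_lp, D

# Hypothetical test: a low-frequency cosine should end up in the lowpass band.
x = np.cos(2 * np.pi * 0.01 * np.arange(1000))
h_d = lowpass_fir(31, 0.25)
h_i = lowpass_fir(31, 0.25)
h_k = lowpass_fir(31, 1 / 3)
y_hp, y_lp, D = two_band_complementary(x, h_d, h_k, h_i)
x_hat = y_hp + y_lp                                  # no sub-band processing applied
```

Since the highpass band is formed as the difference between the delayed input and the lowpass band, their sum returns the delayed input regardless of the filter quality; the filters only determine how well the two bands are separated.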

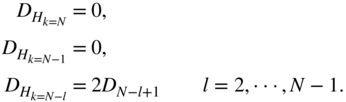

The extension to $N$ sub‐bands and implementing the kernel filter using complementary techniques [Ram88, Ram90] leads to the multi‐complementary filter bank, as shown in Fig. 6.68. Delays are integrated in the highpass ($Y_1$) and bandpass sub‐bands ($Y_2$ to $Y_{N-1}$) to compensate for the group delay. The filter structure consists of $N$ horizontal stages. The kernel filter is implemented as a complementary filter in $S$ vertical stages. The design of the latter shall be discussed later. The vertical delays in the extended kernel filters ($\mathrm{EKF}_1$ to $\mathrm{EKF}_{N-1}$) compensate group delays caused by forming the complementary component. At the end of each of these vertical stages is the kernel filter $H_K$. With

the signals ![]() can be written as a function of the signals ![]() as

with

and with ![](), the delays are given by

Perfect reconstruction of the input signal can be achieved if the horizontal delays ![]() are given by

Figure 6.68 Multi‐complementary filter bank.

The extended vertical kernel filters are implemented by calculating complementary components, as shown in Fig. 6.69. After upsampling, interpolating with a highpass (Fig. 6.69b), and forming the complementary component, the kernel filter $H_K$ with the frequency response in Fig. 6.69a becomes a lowpass with the frequency response illustrated in Fig. 6.69c. The slope of the filter characteristic remains constant whereas the cutoff frequency is doubled. A subsequent upsampling with an interpolation highpass (Fig. 6.69d) and complement filtering leads to the frequency response in Fig. 6.69e. With the help of this technique, the kernel filter is implemented at a reduced sampling rate. The cutoff frequency is moved to a desired cutoff frequency by using decimation/interpolation stages with complement filtering.