Chapter 31. Advanced Class Topics

This chapter concludes our look at OOP in Python by presenting a few more advanced class-related topics: we will survey subclassing built-in types, “new-style” class changes and extensions, static and class methods, function decorators, and more.

As we’ve seen, Python’s OOP model is, at its core, very simple, and some of the topics presented in this chapter are so advanced and optional that you may not encounter them very often in your Python applications-programming career. In the interest of completeness, though, we’ll round out our discussion of classes with a brief look at these advanced tools for OOP work.

As usual, because this is the last chapter in this part of the book, it ends with a section on class-related “gotchas,” and the set of lab exercises for this part. I encourage you to work through the exercises to help cement the ideas we’ve studied here. I also suggest working on or studying larger OOP Python projects as a supplement to this book. As with much in computing, the benefits of OOP tend to become more apparent with practice.

Note

Content note: This chapter collects advanced class topics, but some are even too advanced for this chapter to cover well. Topics such as properties, descriptors, decorators, and metaclasses are only briefly mentioned here, and are covered more fully in the final part of this book. Be sure to look ahead for more complete examples and extended coverage of some of the subjects that fall into this chapter’s category.

Extending Built-in Types

Besides implementing new kinds of objects, classes are sometimes used to extend the functionality of Python’s built-in types to support more exotic data structures. For instance, to add queue insert and delete methods to lists, you can code classes that wrap (embed) a list object and export insert and delete methods that process the list specially, like the delegation technique we studied in Chapter 30. As of Python 2.2, you can also use inheritance to specialize built-in types. The next two sections show both techniques in action.

Extending Types by Embedding

Remember those set functions we wrote in Chapters 16 and 18? Here’s what they look like brought back to life as a Python class. The following example (the file setwrapper.py) implements a new set object type by moving some of the set functions to methods and adding some basic operator overloading. For the most part, this class just wraps a Python list with extra set operations. But because it’s a class, it also supports multiple instances and customization by inheritance in subclasses. Unlike our earlier functions, using classes here allows us to make multiple self-contained set objects with preset data and behavior, rather than passing lists into functions manually:

class Set:
    def __init__(self, value = []):    # Constructor
        self.data = []                 # Manages a list
        self.concat(value)

    def intersect(self, other):        # other is any sequence
        res = []                       # self is the subject
        for x in self.data:
            if x in other:             # Pick common items
                res.append(x)
        return Set(res)                # Return a new Set

    def union(self, other):            # other is any sequence
        res = self.data[:]             # Copy of my list
        for x in other:                # Add items in other
            if not x in res:
                res.append(x)
        return Set(res)

    def concat(self, value):           # value: list, Set...
        for x in value:                # Removes duplicates
            if not x in self.data:
                self.data.append(x)

    def __len__(self):          return len(self.data)            # len(self)
    def __getitem__(self, key): return self.data[key]            # self[i]
    def __and__(self, other):   return self.intersect(other)     # self & other
    def __or__(self, other):    return self.union(other)         # self | other
    def __repr__(self):         return 'Set:' + repr(self.data)  # print()

To use this class, we make instances, call methods, and run defined operators as usual:

x = Set([1, 3, 5, 7])
print(x.union(Set([1, 4, 7])))       # prints Set:[1, 3, 5, 7, 4]
print(x | Set([1, 4, 6]))            # prints Set:[1, 3, 5, 7, 4, 6]

Overloading operations such as indexing enables instances of our Set class to masquerade as real lists. Because you will interact with and extend this class in an exercise at the end of this chapter, I won’t say much more about this code until Appendix B.
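As a brief illustration of this masquerading, here is a minimal sketch that assumes Python 3.X. The Wrapper class is a hypothetical, stripped-down stand-in for the Set class above that keeps only its __len__ and __getitem__ methods. It suggests how much list-like behavior indexing alone provides:

```python
class Wrapper:
    """Hypothetical stand-in for Set: embeds a list, overloads indexing only."""
    def __init__(self, items):
        self.data = list(items)          # Embedded list object

    def __len__(self):
        return len(self.data)            # len(self)

    def __getitem__(self, key):
        return self.data[key]            # self[i] and self[i:j]

w = Wrapper([1, 3, 5, 7])
first = w[0]                             # Plain indexing
tail = w[1:]                             # Slice object is passed through to the list
found = 5 in w                           # Membership falls back on __getitem__
doubled = [x * 2 for x in w]             # So does iteration

print(first, tail, found, doubled)       # 1 [3, 5, 7] True [2, 6, 10, 14]
```

Because no __iter__ or __contains__ is defined here, Python falls back on repeated indexing for both the membership test and the comprehension.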

Extending Types by Subclassing

Beginning with Python 2.2, all the built-in types in the language can be subclassed directly. Type-conversion functions such as list, str, dict, and tuple have become built-in type names: although transparent to your script, a type-conversion call (e.g., list('spam')) is now really an invocation of a type’s object constructor.

This change allows you to customize or extend the behavior of built-in types with user-defined class statements: simply subclass the new type names to customize them. Instances of your type subclasses can be used anywhere that the original built-in type can appear. For example, suppose you have trouble getting used to the fact that Python list offsets begin at 0 instead of 1. Not to worry: you can always code your own subclass that customizes this core behavior of lists. The file typesubclass.py shows how:

# Subclass built-in list type/class
# Map 1..N to 0..N-1; call back to built-in version.

class MyList(list):
    def __getitem__(self, offset):
        print('(indexing %s at %s)' % (self, offset))
        return list.__getitem__(self, offset - 1)

if __name__ == '__main__':
    print(list('abc'))
    x = MyList('abc')               # __init__ inherited from list
    print(x)                        # __repr__ inherited from list

    print(x[1])                     # MyList.__getitem__
    print(x[3])                     # Customizes list superclass method

    x.append('spam'); print(x)      # Attributes from list superclass
    x.reverse();      print(x)

In this file, the MyList subclass extends the built-in list’s __getitem__ indexing method only to map indexes 1 to N back to the required 0 to N−1. All it really does is decrement the submitted index and call back to the superclass’s version of indexing, but it’s enough to do the trick:

% python typesubclass.py
['a', 'b', 'c']
['a', 'b', 'c']
(indexing ['a', 'b', 'c'] at 1)
a
(indexing ['a', 'b', 'c'] at 3)
c
['a', 'b', 'c', 'spam']
['spam', 'c', 'b', 'a']

This output also includes tracing text the class prints on indexing. Of course, whether changing indexing this way is a good idea in general is another issue: users of your MyList class may very well be confused by such a core departure from Python sequence behavior. The ability to customize built-in types this way can be a powerful asset, though.
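To make the potential for confusion concrete, the following sketch (assuming CPython 3.X, with the tracing print omitted for brevity) repeats the MyList subclass and probes a few cases its users might not anticipate. Only explicit indexing is routed through the redefined __getitem__; the inherited list iterator does not call it:

```python
class MyList(list):
    def __getitem__(self, offset):
        return list.__getitem__(self, offset - 1)   # Map 1..N to 0..N-1

x = MyList('abc')

print(x[1])                   # a: offset 1 is the first item
print(x[0])                   # c: offset 0 becomes -1, the last item
print(list(x))                # ['a', 'b', 'c']: iteration bypasses __getitem__
print(isinstance(x, list))    # True: usable wherever a list is expected
```

In other words, code that indexes the object sees 1-based offsets, while code that iterates over it sees an ordinary list, which is one reason such a customization may surprise callers.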

For instance, this coding pattern gives rise to an alternative way to code a set—as a subclass of the built-in list type, rather than a standalone class that manages an embedded list object, as shown earlier in this section. As we learned in Chapter 5, Python today comes with a powerful built-in set object, along with literal and comprehension syntax for making new sets. Coding one yourself, though, is still a great way to learn about type subclassing in general.

The following class, coded in the file setsubclass.py, customizes lists to add just methods and operators related to set processing. Because all other behavior is inherited from the built-in list superclass, this makes for a shorter and simpler alternative:

class Set(list):
    def __init__(self, value = []):      # Constructor
        list.__init__(self)              # Customizes list: initialize self
        self.concat(value)               # Copies mutable defaults

    def intersect(self, other):          # other is any sequence
        res = []                         # self is the subject
        for x in self:
            if x in other:               # Pick common items
                res.append(x)
        return Set(res)                  # Return a new Set

    def union(self, other):              # other is any sequence
        res = Set(self)                  # Copy me and my list
        res.concat(other)
        return res

    def concat(self, value):             # value: list, Set . . .
        for x in value:                  # Removes duplicates
            if not x in self:
                self.append(x)

    def __and__(self, other): return self.intersect(other)
    def __or__(self, other):  return self.union(other)
    def __repr__(self):       return 'Set:' + list.__repr__(self)

if __name__ == '__main__':
    x = Set([1,3,5,7])
    y = Set([2,1,4,5,6])
    print(x, y, len(x))
    print(x.intersect(y), y.union(x))
    print(x & y, x | y)
    x.reverse(); print(x)

Here is the output of the self-test code at the end of this file. Because subclassing core types is an advanced feature, I’ll omit further details here, but I invite you to trace through these results in the code to study its behavior:

% python setsubclass.py
Set:[1, 3, 5, 7] Set:[2, 1, 4, 5, 6] 4
Set:[1, 5] Set:[2, 1, 4, 5, 6, 3, 7]
Set:[1, 5] Set:[1, 3, 5, 7, 2, 4, 6]
Set:[7, 5, 3, 1]

There are more efficient ways to implement sets with dictionaries in Python, which replace the linear scans in the set implementations shown here with dictionary index operations (hashing) and so run much quicker. (For more details, see Programming Python.) If you’re interested in sets, also take another look at the set object type we explored in Chapter 5; this type provides extensive set operations as built-in tools. Set implementations are fun to experiment with, but they are no longer strictly required in Python today.
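As a rough sketch of the dictionary-based idea, consider the following. It is not the Programming Python implementation: the DictSet name and its method bodies are hypothetical, it assumes Python 3.X, and it works only for hashable items. Items are stored as dictionary keys, so duplicate removal and membership tests become hashing operations instead of linear scans:

```python
class DictSet:
    """Hypothetical dictionary-backed set; items must be hashable."""
    def __init__(self, value=()):
        self.data = {}                       # Items are stored as keys
        self.concat(value)

    def concat(self, value):
        for x in value:
            self.data[x] = None              # Keys are unique: duplicates vanish

    def intersect(self, other):
        return DictSet(x for x in self.data if x in other)

    def union(self, other):
        res = DictSet(self.data)             # Copy my keys
        res.concat(other)
        return res

    def __contains__(self, x): return x in self.data    # Hashed lookup
    def __iter__(self):        return iter(self.data)
    def __len__(self):         return len(self.data)
    def __and__(self, other):  return self.intersect(other)
    def __or__(self, other):   return self.union(other)
    def __repr__(self):        return 'DictSet:' + repr(list(self.data))

x = DictSet([1, 3, 5, 7])
y = DictSet([2, 1, 4, 5, 6])
print(sorted(x & y))                         # [1, 5]
print(sorted(x | y))                         # [1, 2, 3, 4, 5, 6, 7]
```

The results are sorted here only to make the display independent of dictionary key order. When the right operand is itself a DictSet, each "x in other" test is a hashed lookup rather than a scan.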

For another type subclassing example, see the implementation of the bool type in Python 2.3 and later. As mentioned earlier in the book, bool is a subclass of int with two instances (True and False) that behave like the integers 1 and 0 but inherit custom string-representation methods that display their names.
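These claims about bool are easy to check interactively. The following short sketch assumes Python 3.X and uses only built-in names:

```python
print(issubclass(bool, int))       # True: bool is a subclass of int
print(isinstance(True, int))       # True: so True is also an integer
print(True + True)                 # 2: True behaves like the integer 1
print(True == 1, False == 0)       # True True
print(repr(True))                  # True: bool customizes the display
print(int.__repr__(True))          # 1: the superclass's display, for contrast
```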

The “New-Style” Class Model

In Release 2.2, Python introduced a new flavor of classes, known as “new-style” classes; classes following the original model became known as “classic classes” when compared to the new kind. In 3.0 the class story has merged, but it remains split for Python 2.X users:

- As of Python 3.0, all classes are automatically what we used to call “new-style,” whether they explicitly inherit from object or not. All classes inherit from object, whether implicitly or explicitly, and all objects are instances of object.

- In Python 2.6 and earlier, classes must explicitly inherit from object (or another built-in type) to be considered “new-style” and obtain all new-style features.

Because all classes are automatically new-style in 3.0, the features of new-style classes are simply normal class features. I’ve opted to keep their descriptions in this section separate, however, in deference to users of Python 2.X code: classes in such code acquire new-style features only when they are derived from object.

In other words, when Python 3.0 users see descriptions of “new-style” features in this section, they should take them to be descriptions of existing features of their classes. For 2.6 readers, these are a set of optional extensions.

In Python 2.6 and earlier, the only syntactic difference for new-style classes is that they are derived from either a built-in type, such as list, or a special built-in class known as object. The built-in name object is provided to serve as a superclass for new-style classes if no other built-in type is appropriate to use:

class newstyle(object):
    ...normal code...

Any class derived from object, or any other built-in type, is automatically treated as a new-style class. As long as a built-in type is somewhere in the superclass tree, the new class is treated as a new-style class. Classes not derived from built-ins such as object are considered classic.
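For Python 3.X readers, the following sketch shows that the object superclass is present whether it is coded or not. The class names Implicit, Explicit, and FromList are hypothetical; in 2.6, only the last two forms would produce new-style classes:

```python
class Implicit:           pass       # No superclass listed
class Explicit(object):   pass       # object listed explicitly
class FromList(list):     pass       # Derived from another built-in type

print(Implicit.__bases__)            # (<class 'object'>,)
print(Explicit.__bases__)            # (<class 'object'>,)
print(FromList.__mro__)              # FromList, then list, then object
```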

New-style classes are only slightly different from classic classes, and the ways in which they differ are irrelevant to the vast majority of Python users. Moreover, the classic class model still available in 2.6 works exactly as it has for almost two decades.

In fact, new-style classes are almost completely backward compatible with classic classes in syntax and behavior; they mostly just add a few advanced new features. However, because they modify a handful of class behaviors, they had to be introduced as a distinct tool so as to avoid impacting any existing code that depends on the prior behaviors. For example, some subtle differences, such as diamond pattern inheritance search and the behavior of built-in operations with managed attribute methods such as __getattr__, can cause some legacy code to fail if left unchanged.

The next two sections provide overviews of the ways the new-style classes differ and the new tools they provide. Again, because all classes are new-style today, these topics represent changes to Python 2.X readers but simply additional advanced class topics to Python 3.0 readers.

New-Style Class Changes

New-style classes differ from classic classes in a number of ways, some of which are subtle but can impact existing 2.X code and coding styles. Here are some of the most prominent ways they differ:

- Classes and types merged

Classes are now types, and types are now classes. In fact, the two are essentially synonyms. The type(I) built-in returns the class an instance is made from, instead of a generic instance type, and is normally the same as I.__class__. Moreover, classes are instances of the type class, type may be subclassed to customize class creation, and all classes (and hence types) inherit from object, which comes with a small set of default operator overloading methods.

- Inheritance search order

Diamond patterns of multiple inheritance have a slightly different search order: roughly, they are searched across before up, and more breadth-first than depth-first.

- Attribute fetch for built-ins

The __getattr__ and __getattribute__ methods are no longer run for attributes implicitly fetched by built-in operations. This means that they are not called for __X__ operator overloading method names; the search for such names begins at classes, not instances.

- New advanced tools

New-style classes have a set of new class tools, including slots, properties, descriptors, and the __getattribute__ method. Most of these have very specific tool-building purposes.

We discussed the third of these changes briefly in a sidebar in Chapter 27, and we’ll revisit it in depth in the contexts of attribute management in Chapter 37 and privacy decorators in Chapter 38. Because the first and second of the changes just listed can break existing 2.X code, though, let’s explore these in more detail before moving on to new-style additions.

Type Model Changes

In new-style classes, the distinction between type and class has vanished entirely. Classes themselves are types: the type object generates classes as its instances, and classes generate instances of their type. In fact, there is no real difference between built-in types like lists and strings and user-defined types coded as classes. This is why we can subclass built-in types, as shown earlier in this chapter: because subclassing a built-in type such as list qualifies a class as new-style, it becomes a user-defined type.

Besides allowing us to subclass built-in types, one of the contexts where this becomes most obvious is when we do explicit type testing. With Python 2.6’s classic classes, the type of a class instance is a generic “instance,” but the types of built-in objects are more specific:

C:\misc> c:\python26\python
>>> class C: pass                     # Classic classes in 2.6
...
>>> I = C()
>>> type(I)                           # Instances are made from classes
<type 'instance'>
>>> I.__class__
<class __main__.C at 0x025085A0>

>>> type(C)                           # But classes are not the same as types
<type 'classobj'>
>>> C.__class__
AttributeError: class C has no attribute '__class__'

>>> type([1, 2, 3])
<type 'list'>
>>> type(list)
<type 'type'>
>>> list.__class__
<type 'type'>

But with new-style classes in 2.6, the type of a class instance is the class it’s created from, since classes are simply user-defined types: the type of an instance is its class, and the type of a user-defined class is the same as the type of a built-in object type. Classes have a __class__ attribute now, too, because they are instances of type:

C:\misc> c:\python26\python
>>> class C(object): pass             # New-style classes in 2.6
...
>>> I = C()
>>> type(I)                           # Type of instance is class it's made from
<class '__main__.C'>
>>> I.__class__
<class '__main__.C'>

>>> type(C)                           # Classes are user-defined types
<type 'type'>
>>> C.__class__
<type 'type'>

>>> type([1, 2, 3])                   # Built-in types work the same way
<type 'list'>
>>> type(list)
<type 'type'>
>>> list.__class__
<type 'type'>

The same is true for all classes in Python 3.0, since all classes are automatically new-style, even if they have no explicit superclasses. In fact, the distinction between built-in types and user-defined class types melts away altogether in 3.0:

C:\misc> c:\python30\python
>>> class C: pass                     # All classes are new-style in 3.0
...
>>> I = C()
>>> type(I)                           # Type of instance is class it's made from
<class '__main__.C'>
>>> I.__class__
<class '__main__.C'>

>>> type(C)                           # Class is a type, and type is a class
<class 'type'>
>>> C.__class__
<class 'type'>

>>> type([1, 2, 3])                   # Classes and built-in types work the same
<class 'list'>
>>> type(list)
<class 'type'>
>>> list.__class__
<class 'type'>

As you can see, in 3.0 classes are types, but types are also classes. Technically, each class is generated by a metaclass: a class that is normally either type itself, or a subclass of it customized to augment or manage generated classes. Besides impacting code that does type testing, this turns out to be an important hook for tool developers. We’ll talk more about metaclasses later in this chapter, and again in more detail in Chapter 39.
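As a small preview of what “generated by a metaclass” means, here is a minimal sketch that assumes Python 3.X syntax. The names Dynamic, Meta, Managed, and made_by are hypothetical and serve only as illustration; the fuller treatment is in the later chapters. It first calls type directly to build a class, and then codes a type subclass that augments the classes it creates:

```python
# Calling type(name, bases, namespace) makes a class, just like a class statement
Dynamic = type('Dynamic', (object,), {'attr': 1})

class Meta(type):                                    # A metaclass subclasses type
    def __new__(meta, name, bases, namespace):
        namespace['made_by'] = meta.__name__         # Augment the class being made
        return super().__new__(meta, name, bases, namespace)

class Managed(metaclass=Meta):                       # 3.X metaclass declaration
    pass

print(type(Dynamic) is type, Dynamic.attr)           # True 1
print(type(Managed) is Meta)                         # True: made by the metaclass
print(isinstance(Managed, type))                     # True: still a type
print(Managed.made_by)                               # Meta
```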

Implications for type testing

Besides providing for built-in type customization and metaclass hooks, the merging of classes and types in the new-style class model can impact code that does type testing. In Python 3.0, for example, the types of class instances compare directly and meaningfully, and in the same way as built-in type objects. This follows from the fact that classes are now types, and an instance’s type is the instance’s class:

C:\misc> c:\python30\python
>>> class C: pass
...
>>> class D: pass
...
>>> c = C()
>>> d = D()
>>> type(c) == type(d)                # 3.0: compares the instances' classes
False

>>> type(c), type(d)
(<class '__main__.C'>, <class '__main__.D'>)
>>> c.__class__, d.__class__
(<class '__main__.C'>, <class '__main__.D'>)

>>> c1, c2 = C(), C()
>>> type(c1) == type(c2)
True

With classic classes in 2.6 and earlier, though, comparing instance types is almost useless, because all instances have the same “instance” type. To truly compare types, the instance __class__ attributes must be compared (if you care about portability, this works in 3.0, too, but it’s not required there):

C:\misc> c:\python26\python
>>> class C: pass
...
>>> class D: pass
...
>>> c = C()
>>> d = D()
>>> type(c) == type(d)                # 2.6: all instances are same type
True
>>> c.__class__ == d.__class__        # Must compare classes explicitly
False

>>> type(c), type(d)
(<type 'instance'>, <type 'instance'>)
>>> c.__class__, d.__class__
(<class __main__.C at 0x024585A0>, <class __main__.D at 0x024588D0>)

And as you should expect by now, new-style classes in 2.6 work the same as all classes in 3.0 in this regard—comparing instance types compares the instances’ classes automatically:

C:\misc> c:\python26\python
>>> class C(object): pass
...
>>> class D(object): pass
...
>>> c = C()
>>> d = D()
>>> type(c) == type(d)                # 2.6 new-style: same as all in 3.0
False

>>> type(c), type(d)
(<class '__main__.C'>, <class '__main__.D'>)
>>> c.__class__, d.__class__
(<class '__main__.C'>, <class '__main__.D'>)

Of course, as I’ve pointed out numerous times in this book, type checking is usually the wrong thing to do in Python programs (we code to object interfaces, not object types), and the more general isinstance built-in is more likely what you’ll want to use in the rare cases where instance class types must be queried. However, knowledge of Python’s type model can help demystify the class model in general.
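To see why isinstance is the more general tool, consider the following sketch, which assumes Python 3.X (or new-style classes in 2.6); the class names are arbitrary. An exact type comparison fails for instances of subclasses, while isinstance also accepts them:

```python
class C: pass
class D(C): pass                      # D is a customization of C

d = D()
print(type(d) == C)                   # False: exact match only
print(type(d) == D)                   # True
print(isinstance(d, C))               # True: instances of subclasses qualify
print(isinstance(d, (int, C)))        # True: a tuple means "any of these"
print(issubclass(D, C))               # True: the class-level equivalent
```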

All objects derive from “object”

One other ramification of the type change in the new-style class model is that because all classes derive (inherit) from the class object either implicitly or explicitly, and because all types are now classes, every object derives from the object built-in class, whether directly or through a superclass. Consider the following interaction in Python 3.0 (code an explicit object superclass in 2.6 to make this work equivalently):

>>> class C: pass
...
>>> X = C()
>>> type(X)                           # Type is now class instance was created from
<class '__main__.C'>
>>> type(C)
<class 'type'>

As before, the type of a class instance is the class it was made from, and the type of a class is the type class because classes and types have merged. It is also true, though, that the instance and class are both derived from the built-in object class, since this is an implicit or explicit superclass of every class:

>>> isinstance(X, object)
True
>>> isinstance(C, object)             # Classes always inherit from object
True

The same holds true for built-in types like lists and strings, because types are classes in the new-style model: built-in types are now classes, and their instances derive from object, too:

>>> type('spam')
<class 'str'>
>>> type(str)
<class 'type'>
>>> isinstance('spam', object)        # Same for built-in types (classes)
True
>>> isinstance(str, object)
True

In fact, type itself derives from object, and object is in turn an instance of type, even though the two are different objects. This circular relationship caps the object model and stems from the fact that types are classes that generate classes:

>>> type(type)                        # All classes are types, and vice versa
<class 'type'>
>>> type(object)
<class 'type'>

>>> isinstance(type, object)          # All classes derive from object, even type
True
>>> isinstance(object, type)          # Types make classes, and type is a class
True
>>> type is object
False

In practical terms, this model makes for fewer special cases than the prior type/class distinction of classic classes, and it allows us to write code that assumes and uses an object superclass. We’ll see examples of the latter later in the book; for now, let’s move on to explore other new-style changes.
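The relationship between type and object can be separated into its two parts, inheritance and instance creation, with a few more checks. This is a sketch for Python 3.X that uses only built-in names:

```python
print(issubclass(type, object))       # True: type inherits from object
print(issubclass(object, type))       # False: object does not inherit from type
print(type.__bases__)                 # (<class 'object'>,)
print(object.__bases__)               # (): object is the root of the tree

print(type(object) is type)           # True: object is an instance of type
print(type(type) is type)             # True: and type is an instance of itself
```

That is, inheritance links run up to object, while instance links run to type; the circularity arises only because each of the two plays a role in the other’s definition.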

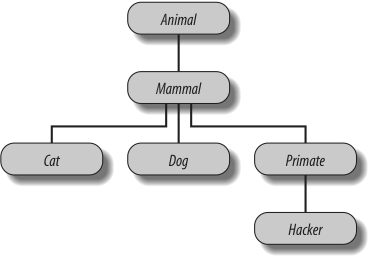

Diamond Inheritance Change

One of the most visible changes in new-style classes is their slightly different inheritance search procedures for the so-called diamond pattern of multiple inheritance trees, where more than one superclass leads to the same higher superclass further above. The diamond pattern is an advanced design concept, is coded only rarely in Python practice, and has not been discussed in this book, so we won’t dwell on this topic in depth.

In short, though, with classic classes, the inheritance search procedure is strictly depth first, and then left to right—Python climbs all the way to the top, hugging the left side of the tree, before it backs up and begins to look further to the right. In new-style classes, the search is more breadth-first in such cases—Python first looks in any superclasses to the right of the first one searched before ascending all the way to the common superclass at the top. In other words, the search proceeds across by levels before moving up. The search algorithm is a bit more complex than this, but this is as much as most programmers need to know.

Because of this change, lower superclasses can overload attributes of higher superclasses, regardless of the sort of multiple inheritance trees they are mixed into. Moreover, the new-style search rule avoids visiting the same superclass more than once when it is accessible from multiple subclasses.

Diamond inheritance example

To illustrate, consider this simplistic incarnation of the diamond multiple inheritance pattern for classic classes. Here, D’s superclasses B and C both lead to the same common ancestor, A:

>>> class A:
        attr = 1              # Classic (Python 2.6)

>>> class B(A):               # B and C both lead to A
        pass

>>> class C(A):
        attr = 2

>>> class D(B, C):
        pass                  # Tries A before C

>>> x = D()
>>> x.attr                    # Searches x, D, B, A
1

The attribute here is found in superclass A, because with classic classes, the inheritance search climbs as high as it can before backing up and moving right: Python will search D, B, A, and then C, but will stop when attr is found in A, above B.

However, with new-style classes derived from a built-in like object, and all classes in 3.0, the search order is different: Python looks in C (to the right of B) before A (above B). That is, it searches D, B, C, and then A, and in this case, stops in C:

>>> class A(object):
        attr = 1              # New-style ("object" not required in 3.0)

>>> class B(A):
        pass

>>> class C(A):
        attr = 2

>>> class D(B, C):
        pass                  # Tries C before A

>>> x = D()
>>> x.attr                    # Searches x, D, B, C
2

This change in the inheritance search procedure is based upon the assumption that if you mix in C lower in the tree, you probably intend to grab its attributes in preference to A’s. It also assumes that C is always intended to override A’s attributes in all contexts, which is probably true when it’s used standalone but may not be when it’s mixed into a diamond with classic classes; you might not even know that C may be mixed in like this when you code it.

Since it is most likely that the programmer meant that C should override A in this case, though, new-style classes visit C first. Otherwise, C could be essentially pointless in a diamond context: it could not customize A and would be used only for names unique to C.
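The new-style search order need not be taken on faith: each new-style class records it in its __mro__ attribute. The following sketch assumes Python 3.X, where all classes are new-style, and rebuilds the diamond from this section to display the order:

```python
class A:       attr = 1
class B(A):    pass
class C(A):    attr = 2
class D(B, C): pass

# __mro__ lists classes in the order that inheritance searches them
order = [cls.__name__ for cls in D.__mro__]
print(order)               # ['D', 'B', 'C', 'A', 'object']
print(D().attr)            # 2: found in C, which precedes A in the order
```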

Explicit conflict resolution

Of course, the problem with assumptions is that they assume things. If this search order deviation seems too subtle to remember, or if you want more control over the search process, you can always force the selection of an attribute from anywhere in the tree by assigning or otherwise naming the one you want at the place where the classes are mixed together:

>>> class A:
        attr = 1              # Classic

>>> class B(A):
        pass

>>> class C(A):
        attr = 2

>>> class D(B, C):
        attr = C.attr         # Choose C, to the right

>>> x = D()
>>> x.attr                    # Works like new-style (all 3.0)
2

Here, a tree of classic classes is emulating the search order of new-style classes: the assignment to the attribute in D picks the version in C, thereby subverting the normal inheritance search path (D.attr will be lowest in the tree). New-style classes can similarly emulate classic classes by choosing the attribute above at the place where the classes are mixed together:

>>> class A(object):
        attr = 1              # New-style

>>> class B(A):
        pass

>>> class C(A):
        attr = 2

>>> class D(B, C):
        attr = B.attr         # Choose A.attr, above

>>> x = D()
>>> x.attr                    # Works like classic (default 2.6)
1

If you are willing to always resolve conflicts like this, you can largely ignore the search order difference and not rely on assumptions about what you meant when you coded your classes.

Naturally, attributes picked this way can also be method functions—methods are normal, assignable objects:

>>> class A:
        def meth(s): print('A.meth')

>>> class C(A):
        def meth(s): print('C.meth')

>>> class B(A):
        pass

>>> class D(B, C): pass                 # Use default search order
>>> x = D()                             # Will vary per class type
>>> x.meth()                            # Defaults to classic order in 2.6
A.meth

>>> class D(B, C): meth = C.meth        # Pick C's method: new-style (and 3.0)
>>> x = D()
>>> x.meth()
C.meth

>>> class D(B, C): meth = B.meth        # Pick B's method: classic
>>> x = D()
>>> x.meth()
A.meth

Here, we select methods by explicitly assigning to names lower in the tree. We might also simply call the desired class explicitly; in practice, this pattern might be more common, especially for things like constructors:

class D(B, C):
    def meth(self):                     # Redefine lower
        ...
        C.meth(self)                    # Pick C's method by calling

Such selections by assignment or call at mix-in points can effectively insulate your code from this difference in class flavors. Explicitly resolving the conflicts this way ensures that your code won’t vary per Python version in the future (apart from perhaps needing to derive classes from object or a built-in type for the new-style tools in 2.6). To trace how new-style inheritance works by default, see also the class.mro() method mentioned in the preceding chapter’s class lister examples.

Note

Even without the classic/new-style class divergence, the explicit method resolution technique shown here may come in handy in multiple inheritance scenarios in general. For instance, if you want part of a superclass on the left and part of a superclass on the right, you might need to tell Python which same-named attributes to choose by using explicit assignments in subclasses. We’ll revisit this notion in a “gotcha” at the end of this chapter.

Also note that diamond inheritance patterns might be more problematic in some cases than I’ve implied here (e.g., what if B and C both have required constructors that call to the constructor in A?). Since such contexts are rare in real-world Python, we’ll leave this topic outside this book’s scope (but see the super built-in function for hints; besides providing generic access to superclasses in single inheritance trees, super supports a cooperative mode for resolving some conflicts in multiple inheritance trees).
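As a hint of what that cooperative mode looks like, here is a minimal sketch that assumes Python 3.X’s zero-argument super() form. The log attribute is a hypothetical device added here purely to record the order of constructor calls. Each constructor passes control along the new-style search order rather than naming its superclass directly, so A’s constructor runs exactly once even though both B and C lead to it:

```python
class A:
    def __init__(self):
        self.log = ['A']                 # Top of the diamond: runs once

class B(A):
    def __init__(self):
        super().__init__()               # Next class in the instance's MRO
        self.log.append('B')

class C(A):
    def __init__(self):
        super().__init__()
        self.log.append('C')

class D(B, C):
    def __init__(self):
        super().__init__()
        self.log.append('D')

print(D().log)                           # ['A', 'C', 'B', 'D']
```

Note that within B, super() dispatches to C rather than A when the instance is a D, because the call follows the search order of the instance’s class. This works only if every class in the tree cooperates by calling super() with compatible arguments.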

Scope of search order change

In sum, by default, the diamond pattern is searched differently for classic and new-style classes, and this is a nonbackward-compatible change. Keep in mind, though, that this change primarily affects diamond pattern cases of multiple inheritance; new-style class inheritance works unchanged for most other inheritance tree structures. Further, it’s not impossible that this entire issue may be of more theoretical than practical importance—because the new-style search wasn’t significant enough to address until Python 2.2 and didn’t become standard until 3.0, it seems unlikely to impact much Python code.

Having said that, I should also note that even though you might not code diamond patterns in classes you write yourself, because the implied object superclass is above every class in 3.0, every case of multiple inheritance exhibits the diamond pattern today. That is, in new-style classes, object automatically plays the role that the class A does in the example we just considered. Hence the new-style search rule not only modifies logical semantics, but also optimizes performance by avoiding visiting the same class more than once.

Just as important, the implied object superclass in the new-style model provides default methods for a variety of built-in operations, including the __str__ and __repr__ display format methods. Run a dir(object) to see which methods are provided. Without the new-style search order, in multiple inheritance cases the defaults in object would always override redefinitions in user-coded classes, unless they were always made in the leftmost superclass. In other words, the new-style class model itself makes using the new-style search order more critical!
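The following sketch, which assumes Python 3.X and uses the hypothetical class names Plain, Display, and Both, shows this effect. The __str__ redefinition lives in the rightmost superclass, yet it is still found before the default in object, because object is searched only after all the classes that lead to it:

```python
class Plain:
    pass                                   # Inherits __str__ from object only

class Display:
    def __str__(self):                     # Redefines object's default
        return 'Display.__str__'

class Both(Plain, Display):                # Redefinition is NOT leftmost
    pass

print([cls.__name__ for cls in Both.__mro__])   # ['Both', 'Plain', 'Display', 'object']
print(str(Both()))                              # Display.__str__
```

Under a strict depth-first search, the climb from Plain would reach object first and select its default __str__ instead.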

For a more visual example of the implied object superclass in 3.0, and other examples of diamond patterns created by it, see the ListTree class’s output in the lister.py example in the preceding chapter, as well as the classtree.py tree walker example in Chapter 28.

New-Style Class Extensions

Beyond the changes described in the prior section (which, frankly, may be too academic and obscure to matter to many readers of this book), new-style classes provide a handful of more advanced class tools that have more direct and practical application. The following sections provide an overview of each of these additional features, available for new-style classes in Python 2.6 and all classes in Python 3.0.

Instance Slots

By assigning a sequence of string attribute names to a special __slots__ class attribute, it is possible for a new-style class to both limit the set of legal attributes that instances of the class will have and optimize memory and speed performance.

This special attribute is typically set by assigning a sequence of string names to the variable __slots__ at the top level of a class statement: only those names in the __slots__ list can be assigned as instance attributes. However, like all names in Python, instance attribute names must still be assigned before they can be referenced, even if they’re listed in __slots__. For example:

>>> class limiter(object):
...     __slots__ = ['age', 'name', 'job']
...
>>> x = limiter()
>>> x.age                               # Must assign before use
AttributeError: age

>>> x.age = 40
>>> x.age
40
>>> x.ape = 1000                        # Illegal: not in __slots__
AttributeError: 'limiter' object has no attribute 'ape'

Slots are something of a break with Python’s dynamic nature,

which dictates that any name may be created by assignment. However,

this feature is envisioned as both a way to catch “typo” errors like

this (assignments to illegal attribute names not in __slots__ are detected), as well as an

optimization mechanism. Allocating a namespace dictionary for every

instance object can become expensive in terms of memory if many

instances are created and only a few attributes are required. To

save space and speed execution (to a degree that can vary per

program), instead of allocating a dictionary for each instance, slot

attributes are stored sequentially for quicker lookup.
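As a rough illustration of the trade-off just described, the following minimal sketch contrasts a normal class with a slotted one. The class names WithDict and WithSlots and the misspelled attribute nmae are hypothetical, and the code assumes a Python 3.X interpreter:

```python
class WithDict:                          # Hypothetical class: per-instance __dict__
    def __init__(self):
        self.age, self.name = 40, 'Bob'

class WithSlots:                         # Hypothetical class: slot storage only
    __slots__ = ['age', 'name']
    def __init__(self):
        self.age, self.name = 40, 'Bob'

plain, slotted = WithDict(), WithSlots()
print(hasattr(plain, '__dict__'))        # True: namespace dictionary allocated
print(hasattr(slotted, '__dict__'))      # False: no dictionary per instance

plain.nmae = 'Sue'                       # Typo silently creates a new attribute
try:
    slotted.nmae = 'Sue'                 # Same typo is rejected by __slots__
except AttributeError as exc:
    print('rejected:', exc)
```

How much memory or time this saves depends on the program and the Python in use, so it is worth measuring before adopting slots purely as an optimization.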

Slots and generic code

In fact, some instances with slots may not have a __dict__ attribute dictionary at all,

which can make some metaprograms more complex (including some

coded in this book). Tools that generically list attributes or

access attributes by string name, for example, must be careful to

use more storage-neutral tools than __dict__, such as the getattr, setattr, and dir built-in functions, which apply to

attributes based on either __dict__ or __slots__ storage. In some cases, both

attribute sources may need to be queried for completeness.

For example, when slots are used, instances do not normally

have an attribute dictionary—Python uses the class

descriptors feature covered in Chapter 37 to allocate space for slot

attributes in the instance instead. Only names in the slots list

can be assigned to instances, but slot-based attributes can still

be fetched and set by name using generic tools. In Python 3.0 (and

in 2.6 for classes derived from object):

>>> class C:
...     __slots__ = ['a', 'b']         # __slots__ means no __dict__ by default
...
>>> X = C()
>>> X.a = 1
>>> X.a
1
>>> X.__dict__
AttributeError: 'C' object has no attribute '__dict__'
>>> getattr(X, 'a')
1
>>> setattr(X, 'b', 2)                 # But getattr() and setattr() still work
>>> X.b
2
>>> 'a' in dir(X)                      # And dir() finds slot attributes too
True
>>> 'b' in dir(X)
True

Without an attribute namespace dictionary, it’s not possible to assign new names to instances that are not names in the slots list:

>>> class D:
...     __slots__ = ['a', 'b']
...     def __init__(self): self.d = 4     # Cannot add new names if no __dict__
...
>>> X = D()
AttributeError: 'D' object has no attribute 'd'

However, extra attributes can still be accommodated by

including __dict__ in __slots__, in order to allow for an

attribute namespace dictionary. In this case,

both storage mechanisms are used, but generic

tools such as getattr allow us

to treat them as a single set of attributes:

>>> class D:
...     __slots__ = ['a', 'b', '__dict__']     # List __dict__ to include one too
...     c = 3                                  # Class attrs work normally
...     def __init__(self): self.d = 4         # d put in __dict__, a in __slots__
...
>>> X = D()
>>> X.d
4
>>> X.__dict__                         # Some objects have both __dict__ and __slots__
{'d': 4}                               # getattr() can fetch either type of attr
>>> X.__slots__
['a', 'b', '__dict__']
>>> X.c
3
>>> X.a                                # All instance attrs undefined until assigned
AttributeError: a
>>> X.a = 1
>>> getattr(X, 'a',), getattr(X, 'c'), getattr(X, 'd')
(1, 3, 4)

Code that wishes to list only instance attributes generically, though, may still need to allow for both storage forms, since dir also returns inherited attributes (this relies on dictionary iterators to collect keys; b is assigned first here, because fetching an unassigned slot raises AttributeError):

>>> X.b = 2
>>> for attr in list(X.__dict__) + X.__slots__:
...     print(attr, '=>', getattr(X, attr))
...
d => 4
a => 1
b => 2
__dict__ => {'d': 4}

Since either can be omitted, this is more correctly coded as

follows (getattr allows for

defaults):

>>> for attr in list(getattr(X, '__dict__', [])) + getattr(X, '__slots__', []):
...     print(attr, '=>', getattr(X, attr))
...
d => 4
a => 1
b => 2
__dict__ => {'d': 4}

Multiple __slots__ lists in superclasses

Note, however, that this code addresses only slot names in

the lowest __slots__ attribute inherited by an instance. If

multiple classes in a class tree have their own __slots__ attributes, generic programs

must develop other policies for listing attributes (e.g.,

classifying slot names as attributes of classes, not

instances).

Slot declarations can appear in multiple classes in a class tree, but they are subject to a number of constraints that are somewhat difficult to rationalize unless you understand the implementation of slots as class-level descriptors (a tool we’ll study in detail in the last part of this book):

If a subclass inherits from a superclass without a __slots__, the __dict__ attribute of the superclass will always be accessible, making a __slots__ in the subclass meaningless.

If a class defines the same slot name as a superclass, the version of the name defined by the superclass slot will be accessible only by fetching its descriptor directly from the superclass.

Because the meaning of a __slots__ declaration is limited to the class in which it appears, subclasses will have a __dict__ unless they also define a __slots__.
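The first and last of these constraints can be checked with a short sketch. All of the class names here (NoSlots, SlotsBelow, Base, PlainSub, SlotSub) are hypothetical, and the code assumes Python 3.X:

```python
class NoSlots:                           # Hypothetical superclass without __slots__
    pass

class SlotsBelow(NoSlots):               # Slots here cannot remove the inherited __dict__
    __slots__ = ['a']

class Base:                              # Hypothetical superclass with __slots__
    __slots__ = ['a']

class PlainSub(Base):                    # No __slots__ of its own: gets a __dict__
    pass

class SlotSub(Base):                     # Declares (empty) slots: stays dictionary-free
    __slots__ = []

x = SlotsBelow()
x.a, x.other = 1, 2                      # 'other' lands in the inherited __dict__
print(x.__dict__)                        # {'other': 2}

print(hasattr(PlainSub(), '__dict__'))   # True
print(hasattr(SlotSub(), '__dict__'))    # False
```

In other words, a tree is free of instance dictionaries only if every class in it declares __slots__, even an empty one.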

In terms of listing instance attributes generically, slots

in multiple classes might require manual class tree climbs,

dir usage, or a policy that

treats slot names as a different category of names

altogether:

>>> class E:
...     __slots__ = ['c', 'd']             # Superclass has slots
...
>>> class D(E):
...     __slots__ = ['a', '__dict__']      # So does its subclass
...
>>> X = D()
>>> X.a = 1; X.b = 2; X.c = 3              # The instance is the union
>>> X.a, X.c
(1, 3)
>>> E.__slots__                            # But slots are not concatenated
['c', 'd']
>>> D.__slots__
['a', '__dict__']
>>> X.__slots__                            # Instance inherits *lowest* __slots__
['a', '__dict__']
>>> X.__dict__                             # And has its own attr dict
{'b': 2}
>>> for attr in list(getattr(X, '__dict__', [])) + getattr(X, '__slots__', []):
...     print(attr, '=>', getattr(X, attr))
...
b => 2                                     # Superclass slots missed!
a => 1
__dict__ => {'b': 2}
>>> dir(X)                                 # dir() includes all slot names
[...many names omitted... 'a', 'b', 'c', 'd']

When such generality is possible, slots are probably best

treated as class attributes, rather than trying to mold them to

appear the same as normal instance attributes. For more on slots

in general, see the Python standard manual set. Also watch for an

example that allows for attributes based on both __slots__ and __dict__ storage in the Private decorator discussion of

Chapter 38.
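One possible form of the "manual class tree climb" mentioned above is sketched here. The helper name instance_attrs is hypothetical, the policy it implements (slot names from every class in the MRO, followed by __dict__ keys) is just one of several reasonable choices, and it ignores details such as name mangling of private slot names:

```python
def instance_attrs(obj):
    """Collect names stored in slots of any class in the tree, plus __dict__ keys."""
    names = []
    for klass in type(obj).__mro__:
        slots = klass.__dict__.get('__slots__', ())   # This class's own slots only
        if isinstance(slots, str):                    # __slots__ may be a single string
            slots = (slots,)
        names.extend(n for n in slots if n not in ('__dict__', '__weakref__'))
    names.extend(getattr(obj, '__dict__', {}))        # Dictionary keys, if any
    return names

class E:
    __slots__ = ['c', 'd']

class D(E):
    __slots__ = ['a', '__dict__']

X = D()
X.a, X.b, X.c = 1, 2, 3
for name in instance_attrs(X):
    if hasattr(X, name):                              # Skip unassigned slots such as d
        print(name, '=>', getattr(X, name))
# a => 1
# c => 3
# b => 2
```

Unlike the X.__slots__ fetch in the session above, this version also finds the superclass slot c, at the cost of extra code.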

For a prime example of why generic programs may need to care about slots, see the lister.py display mix-in classes example in the multiple inheritance section of the prior chapter; a note there describes the example’s slot concerns. In such a tool that attempts to list attributes generically, slot usage requires either extra code or the implementation of policies regarding the handling of slot-based attributes in general.

Class Properties

A mechanism known as properties

provides another way for new-style classes to define automatically

called methods for access or assignment to instance attributes. At

least for specific attributes, this feature is an alternative to

many current uses of the __getattr__ and __setattr__ overloading methods we studied

in Chapter 29. Properties have a

similar effect to these two methods, but they incur an extra method

call only for accesses to names that require dynamic computation.

Properties (and slots) are based on a new notion of attribute

descriptors, which is too advanced for us to cover here.

In short, a property is a type of object assigned to a

class attribute name. A property is generated by calling the

property built-in with three

methods (handlers for get, set, and delete operations), as well as a

docstring; if any argument is passed as None or omitted, that operation is not

supported. Properties are typically assigned at the top level of a

class statement [e.g., name = property(...)]. When thus assigned,

accesses to the class attribute itself (e.g., obj.name) are automatically routed to one

of the accessor methods passed into the property. For example, the

__getattr__ method allows classes

to intercept undefined attribute references:

>>> class classic:
...     def __getattr__(self, name):
...         if name == 'age':
...             return 40
...         else:
...             raise AttributeError
...
>>> x = classic()
>>> x.age                                  # Runs __getattr__
40
>>> x.name                                 # Runs __getattr__
AttributeError

Here is the same example, coded with properties instead (note

that properties are available for all classes but require the

new-style object derivation in

2.6 to work properly for intercepting attribute assignments):

>>> class newprops(object):
...     def getage(self):
...         return 40
...     age = property(getage, None, None, None)    # get, set, del, docs
...
>>> x = newprops()
>>> x.age                                  # Runs getage
40
>>> x.name                                 # Normal fetch
AttributeError: newprops instance has no attribute 'name'

For some coding tasks, properties can be less complex and quicker to run than the traditional techniques. For example, when we add attribute assignment support, properties become more attractive—there’s less code to type, and no extra method calls are incurred for assignments to attributes we don’t wish to compute dynamically:

>>> class newprops(object):
...     def getage(self):
...         return 40
...     def setage(self, value):
...         print('set age:', value)
...         self._age = value
...     age = property(getage, setage, None, None)
...
>>> x = newprops()
>>> x.age                                  # Runs getage
40
>>> x.age = 42                             # Runs setage
set age: 42
>>> x._age                                 # Normal fetch; no getage call
42
>>> x.job = 'trainer'                      # Normal assign; no setage call
>>> x.job                                  # Normal fetch; no getage call
'trainer'

The equivalent classic class incurs extra method calls for

assignments to attributes not being managed and needs to route

attribute assignments through the attribute dictionary (or, for

new-style classes, to the object

superclass’s __setattr__) to

avoid loops:

>>> class classic:
...     def __getattr__(self, name):               # On undefined reference
...         if name == 'age':
...             return 40
...         else:
...             raise AttributeError
...     def __setattr__(self, name, value):        # On all assignments
...         print('set:', name, value)
...         if name == 'age':
...             self.__dict__['_age'] = value
...         else:
...             self.__dict__[name] = value
...
>>> x = classic()
>>> x.age                                  # Runs __getattr__
40
>>> x.age = 41                             # Runs __setattr__
set: age 41
>>> x._age                                 # Defined: no __getattr__ call
41
>>> x.job = 'trainer'                      # Runs __setattr__ again
set: job trainer
>>> x.job                                  # Defined: no __getattr__ call
'trainer'

Properties seem like a win for this

simple example. However, some applications of __getattr__ and __setattr__ may still require more dynamic

or generic interfaces than properties directly provide. For example,

in many cases, the set of attributes to be supported cannot be

determined when the class is coded, and may not even exist in any

tangible form (e.g., when delegating arbitrary

method references to a wrapped/embedded object generically). In such

cases, a generic __getattr__ or a

__setattr__ attribute handler

with a passed-in attribute name may be preferable. Because such

generic handlers can also handle simpler cases, properties are often

an optional extension.

For more details on both options, stay tuned for Chapter 37 in the final part of this book. As we’ll see there, it’s also possible to code properties using function decorator syntax, a topic introduced later in this chapter.
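As a brief preview of that decorator-based form, here is a minimal sketch. The class name Person is hypothetical, and the @age.setter form assumes Python 2.6 or later (with object derivation in 2.6):

```python
class Person(object):                    # Hypothetical class name
    def __init__(self):
        self._age = 40

    @property
    def age(self):                       # Same effect as: age = property(age)
        return self._age

    @age.setter
    def age(self, value):                # Registers the set handler on the property
        print('set age:', value)
        self._age = value

x = Person()
print(x.age)                             # 40
x.age = 42                               # set age: 42
print(x.age)                             # 42
```

The getter and setter share the attribute's name here, so no separate getage and setage names are left behind in the class.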

__getattribute__ and Descriptors

The __getattribute__

method, available for new-style classes only, allows a class

to intercept all attribute references, not just

undefined references, like __getattr__. It is also somewhat trickier

to use than __getattr__: it is

prone to loops, much like __setattr__, but in different ways.
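To make the looping risk concrete, the following minimal sketch traces every attribute fetch. The class name Traced is hypothetical; the key assumption is that the handler delegates to the object superclass rather than touching self directly:

```python
class Traced(object):                            # Hypothetical class name
    def __init__(self):
        self.age = 40                            # Assignment: not intercepted here

    def __getattribute__(self, name):            # Runs for every attribute fetch
        print('fetch:', name)
        # Fetching self.__dict__[name] here would itself run __getattribute__
        # and recurse; route the fetch to the superclass instead
        return object.__getattribute__(self, name)

x = Traced()
print(x.age)                                     # fetch: age, then 40
```

Undefined names still raise AttributeError, because the superclass fetch fails in the normal way.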

In addition to properties and operator overloading methods,

Python supports the notion of attribute

descriptors—classes with __get__ and __set__ methods, assigned to class

attributes and inherited by instances, that intercept read and write

accesses to specific attributes. Descriptors are in a sense a more

general form of properties; in fact, properties are a simplified way

to define a specific type of descriptor, one that runs functions on

access. Descriptors are also used to implement the slots feature we

met earlier.
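For a first impression of what such a descriptor can look like, here is a minimal sketch. The names Age and Person are hypothetical, and storing the managed value in an _age instance attribute is just one possible design:

```python
class Age(object):                               # Hypothetical descriptor class
    def __get__(self, instance, owner):          # Runs on fetches of the attribute
        if instance is None:                     # Fetched through the class itself
            return self
        print('get age')
        return instance._age

    def __set__(self, instance, value):          # Runs on assignments
        print('set age:', value)
        instance._age = value

class Person(object):                            # Hypothetical client class
    age = Age()                                  # Descriptor assigned to class attribute
    def __init__(self):
        self._age = 40

x = Person()
print(x.age)                                     # get age, then 40
x.age = 42                                       # set age: 42
print(x._age)                                    # 42
```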

Because properties, __getattribute__, and descriptors are

somewhat advanced topics, we’ll defer the rest of their coverage, as

well as more on properties, to Chapter 37

in the final part of this book.

Metaclasses

Most of the changes and feature additions of new-style classes integrate with the notion of subclassable types mentioned earlier in this chapter, because subclassable types and new-style classes were introduced in conjunction with a merging of the type/class dichotomy in Python 2.2 and beyond. As we’ve seen, in 3.0, this merging is complete: classes are now types, and types are classes.

Along with these changes, Python also grew a more coherent

protocol for coding metaclasses,

which are classes that subclass the type object and intercept class creation

calls. As such, they provide a well-defined hook for management and

augmentation of class objects. They are also an advanced topic that

is optional for most Python programmers, so we’ll postpone further

details here. We’ll meet metaclasses briefly later in this chapter

in conjunction with class decorators, and we’ll explore them in full

detail in Chapter 39, in the final part of

this book.
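Purely as a preview, the general shape of a metaclass can be sketched as follows. The names Meta and created_by are hypothetical, and the class header uses Python 3.X syntax; 2.6 assigns a __metaclass__ class attribute instead:

```python
class Meta(type):                                # Hypothetical metaclass
    def __new__(meta, classname, supers, classdict):
        print('creating class:', classname)      # Runs at the end of a class statement
        classdict['created_by'] = meta.__name__  # Augment the new class
        return type.__new__(meta, classname, supers, classdict)

class Spam(metaclass=Meta):                      # 3.X syntax for naming the metaclass
    pass

print(Spam.created_by)                           # Meta
print(type(Spam) is Meta)                        # True
```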

Static and Class Methods

As of Python 2.2, it is possible to define two kinds of methods within a class that can be called without an instance: static methods work roughly like simple instance-less functions inside a class, and class methods are passed a class instead of an instance. Although this feature was added in conjunction with the new-style classes discussed in the prior sections, static and class methods work for classic classes too.

To enable these method modes, special built-in functions called

staticmethod and classmethod must be called within the class,

or invoked with the decoration syntax we’ll meet later in this

chapter. In Python 3.0, instance-less methods called only through a

class name do not require a staticmethod declaration, but such methods

called through instances do.

Why the Special Methods?

As we’ve learned, a method of a class is normally passed an instance object in its first argument, to serve as the implied subject of the method call. Today, though, there are two ways to modify this model. Before I explain what they are, I should explain why this might matter to you.

Sometimes, programs need to process data associated with classes instead of instances. Consider keeping track of the number of instances created from a class, or maintaining a list of all of a class’s instances that are currently in memory. This type of information and its processing are associated with the class rather than its instances. That is, the information is usually stored on the class itself and processed in the absence of any instance.

For such tasks, simple functions coded outside a class can

often suffice—because they can access class attributes through the

class name, they have access to class data and never require access

to an instance. However, to better associate such code with a class,

and to allow such processing to be customized with inheritance as

usual, it would be better to code these types of functions inside

the class itself. To make this work, we need methods in a class that

are not passed, and do not expect, a self instance argument.

Python supports such goals with the notion of static

methods—simple functions with no self argument that are nested in a class

and are designed to work on class attributes instead of instance

attributes. Static methods never receive an automatic self argument, whether called through a

class or an instance. They usually keep track of information that

spans all instances, rather than providing behavior for

instances.

Although less commonly used, Python also supports the notion

of class methods—methods of a class that are

passed a class object in their first argument instead of an

instance, regardless of whether they are called through an instance

or a class. Such methods can access class data through their class argument (the counterpart of self, conventionally named cls) even if called through an instance. Normal methods (now known in formal

circles as instance methods) still receive a

subject instance when called; static and class methods do

not.

Static Methods in 2.6 and 3.0

The concept of static methods is the same in both Python 2.6 and 3.0, but its implementation requirements have evolved somewhat in Python 3.0. Since this book covers both versions, I need to explain the differences in the two underlying models before we get to the code.

Really, we already began this story in the preceding chapter, when we explored the notion of unbound methods. Recall that both Python 2.6 and 3.0 always pass an instance to a method that is called through an instance. However, Python 3.0 treats methods fetched directly from a class differently than 2.6: it returns a simple function, rather than an unbound method that demands an instance.

In other words, methods of a class in Python 2.6 always require an

instance to be passed in, whether they are called through an

instance or a class. By contrast, in Python 3.0 we are required to

pass an instance to a method only if the method expects one—methods

without a self instance argument

can be called through the class without passing an instance. That

is, 3.0 allows simple functions in a class, as long as they do not

expect and are not passed an instance argument. The net effect is

that:

In Python 2.6, we must always declare a method as static in order to call it without an instance, whether it is called through a class or an instance.

In Python 3.0, we need not declare such methods as static if they will be called through a class only, but we must do so in order to call them through an instance.

To illustrate, suppose we want to use class attributes to count how many instances are generated from a class. The following file, spam.py, makes a first attempt—its class has a counter stored as a class attribute, a constructor that bumps up the counter by one each time a new instance is created, and a method that displays the counter’s value. Remember, class attributes are shared by all instances. Therefore, storing the counter in the class object itself ensures that it effectively spans all instances:

class Spam:
    numInstances = 0
    def __init__(self):
        Spam.numInstances = Spam.numInstances + 1
    def printNumInstances():
        print("Number of instances created: ", Spam.numInstances)

The printNumInstances method is designed to process class data, not instance data: it’s about all the instances, not any one in particular. Because of that, we want to be able to call it without having to pass an instance. Indeed, we don’t want to make an instance to fetch the number of instances, because this would change the number of instances we’re trying to fetch! In other words, we want a self-less “static” method.

Whether this code works or not, though, depends on which Python you use, and which way you call the method—through the class or through an instance. In 2.6 (and 2.X in general), calls to a self-less method function through both the class and instances fail (I’ve omitted some error text here for space):

C:\misc> c:\python26\python
>>> from spam import Spam
>>> a = Spam()                     # Cannot call unbound class methods in 2.6
>>> b = Spam()                     # Methods expect a self object by default
>>> c = Spam()

>>> Spam.printNumInstances()
TypeError: unbound method printNumInstances() must be called with Spam instance as first argument (got nothing instead)
>>> a.printNumInstances()
TypeError: printNumInstances() takes no arguments (1 given)

The problem here is that unbound instance methods aren’t

exactly the same as simple functions in 2.6. Even though there are

no arguments in the def header,

the method still expects an instance to be passed in when it’s

called, because the function is associated with a class. In Python

3.0 (and later 3.X releases), calls to self-less methods made

through classes work, but calls from instances fail:

C:\misc> c:\python30\python
>>> from spam import Spam
>>> a = Spam()                     # Can call functions in class in 3.0
>>> b = Spam()                     # Calls through instances still pass a self
>>> c = Spam()

>>> Spam.printNumInstances()       # Differs in 3.0
Number of instances created: 3
>>> a.printNumInstances()
TypeError: printNumInstances() takes no arguments (1 given)

That is, calls to instance-less methods like printNumInstances made through the

class fail in Python 2.6 but work in Python

3.0. On the other hand, calls made through an

instance fail in both Pythons, because an

instance is automatically passed to a method that does not have an

argument to receive it:

Spam.printNumInstances()            # Fails in 2.6, works in 3.0
instance.printNumInstances()        # Fails in both 2.6 and 3.0

If you’re able to use 3.0 and stick with calling self-less methods through classes only, you already have a static method feature. However, to allow self-less methods to be called through classes in 2.6 and through instances in both 2.6 and 3.0, you need to either adopt other designs or be able to somehow mark such methods as special. Let’s look at both options in turn.

Static Method Alternatives

Short of marking a self-less method as special, there are a few

different coding structures that can be tried. If you want to call

functions that access class members without an instance, perhaps the

simplest idea is to just make them simple functions outside the

class, not class methods. This way, an instance isn’t expected in

the call. For example, the following mutation of spam.py works the same in Python 3.0 and

2.6 (albeit displaying extra parentheses in 2.6 for its print statement):

def printNumInstances():
    print("Number of instances created: ", Spam.numInstances)

class Spam:
    numInstances = 0
    def __init__(self):
        Spam.numInstances = Spam.numInstances + 1

>>> import spam
>>> a = spam.Spam()
>>> b = spam.Spam()
>>> c = spam.Spam()
>>> spam.printNumInstances()           # But function may be too far removed
Number of instances created: 3         # And cannot be changed via inheritance
>>> spam.Spam.numInstances
3

Because the class name is accessible to the simple function as a global variable, this works fine. Also, note that the name of the function becomes global, but only to this single module; it will not clash with names in other files of the program.

Prior to static methods in Python, this structure was the general prescription. Because Python already provides modules as a namespace-partitioning tool, one could argue that there’s not typically any need to package functions in classes unless they implement object behavior. Simple functions within modules like the one here do much of what instance-less class methods could, and are already associated with the class because they live in the same module.

Unfortunately, this approach is still less than ideal. For one thing, it adds to this file’s scope an extra name that is used only for processing a single class. For another, the function is much less directly associated with the class; in fact, its definition could be hundreds of lines away. Perhaps worse, simple functions like this cannot be customized by inheritance, since they live outside a class’s namespace: subclasses cannot directly replace or extend such a function by redefining it.

We might try to make this example work in a version-neutral way by using a normal method and always calling it through (or with) an instance, as usual:

class Spam:
    numInstances = 0
    def __init__(self):
        Spam.numInstances = Spam.numInstances + 1
    def printNumInstances(self):
        print("Number of instances created: ", Spam.numInstances)

>>> from spam import Spam
>>> a, b, c = Spam(), Spam(), Spam()
>>> a.printNumInstances()
Number of instances created: 3
>>> Spam.printNumInstances(a)
Number of instances created: 3
>>> Spam().printNumInstances()         # But fetching counter changes counter!
Number of instances created: 4

Unfortunately, as mentioned earlier, such an approach is completely unworkable if we don’t have an instance available, and making an instance changes the class data, as illustrated in the last line here. A better solution would be to somehow mark a method inside a class as never requiring an instance. The next section shows how.

Using Static and Class Methods

Today, there is another option for coding simple functions

associated with a class that may be called through either the class

or its instances. As of Python 2.2, we can code classes with static

and class methods, neither of which requires an instance argument to

be passed in when invoked. To designate such methods, classes call

the built-in functions staticmethod and

classmethod, as hinted in the earlier discussion of new-style

classes. Both mark a function object as special—i.e., as requiring

no instance if static and requiring a class argument if a class

method. For example:

class Methods:
    def imeth(self, x):                # Normal instance method: passed a self
        print(self, x)
    def smeth(x):                      # Static: no instance passed
        print(x)
    def cmeth(cls, x):                 # Class: gets class, not instance
        print(cls, x)
    smeth = staticmethod(smeth)        # Make smeth a static method
    cmeth = classmethod(cmeth)         # Make cmeth a class method

Notice how the last two assignments in this code simply reassign the method names smeth and cmeth. Attributes are created and changed by any assignment in a class statement, so these final assignments simply overwrite the assignments made earlier by the defs.

Technically, Python now supports three kinds of class-related methods: instance, static, and class. Moreover, Python 3.0 extends this model by also allowing simple functions in a class to serve the role of static methods without extra protocol, when called through a class.

Instance methods are the normal (and default) case that we’ve seen in this book. An instance method must always be called with an instance object. When you call it through an instance, Python passes the instance to the first (leftmost) argument automatically; when you call it through a class, you must pass along the instance manually (for simplicity, I’ve omitted some class imports in interactive sessions like this one):

>>> obj = Methods()                    # Make an instance
>>> obj.imeth(1)                       # Normal method, call through instance
<__main__.Methods object...> 1         # Becomes imeth(obj, 1)
>>> Methods.imeth(obj, 2)              # Normal method, call through class
<__main__.Methods object...> 2         # Instance passed explicitly

By contrast, static methods are called

without an instance argument. Unlike simple functions outside a

class, their names are local to the scopes of the classes in which

they are defined, and they may be looked up by inheritance.

Instance-less functions can be called through a class normally in

Python 3.0, but never by default in 2.6. Using the staticmethod built-in allows such methods

to also be called through an instance in 3.0 and through both a

class and an instance in Python 2.6 (the first of these works in 3.0

without staticmethod, but the

second does not):

>>> Methods.smeth(3)                   # Static method, call through class
3                                      # No instance passed or expected
>>> obj.smeth(4)                       # Static method, call through instance
4                                      # Instance not passed

Class methods are similar, but Python automatically passes the class (not an instance) in to a class method’s first (leftmost) argument, whether it is called through a class or an instance:

>>> Methods.cmeth(5)                   # Class method, call through class
<class '__main__.Methods'> 5           # Becomes cmeth(Methods, 5)
>>> obj.cmeth(6)                       # Class method, call through instance
<class '__main__.Methods'> 6           # Becomes cmeth(Methods, 6)
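The decoration syntax mentioned earlier gives the same results as the explicit staticmethod and classmethod calls. The following sketch is a variation on the Methods class, with the methods changed to return their arguments instead of printing them so that the results are easy to compare; that change is an assumption of this sketch, not part of the original example:

```python
class Methods(object):
    def imeth(self, x):                # Normal instance method: passed a self
        return (self, x)

    @staticmethod                      # Same as: smeth = staticmethod(smeth)
    def smeth(x):
        return x

    @classmethod                       # Same as: cmeth = classmethod(cmeth)
    def cmeth(cls, x):
        return (cls, x)

obj = Methods()
print(Methods.smeth(3), obj.smeth(4))                 # 3 4
print(Methods.cmeth(5)[0] is Methods)                 # True: class passed in
print(obj.cmeth(6)[0] is Methods)                     # True: class, not instance
```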

Counting Instances with Static Methods

Now, given these built-ins, here is the static method equivalent of this section’s instance-counting example—it marks the method as special, so it will never be passed an instance automatically:

class Spam:
    numInstances = 0                   # Use static method for class data
    def __init__(self):
        Spam.numInstances += 1
    def printNumInstances():
        print("Number of instances:", Spam.numInstances)
    printNumInstances = staticmethod(printNumInstances)

Using the static method built-in, our code now allows the self-less method to be called through the class or any instance of it, in both Python 2.6 and 3.0:

>>> a = Spam()
>>> b = Spam()
>>> c = Spam()
>>> Spam.printNumInstances()           # Call as simple function
Number of instances: 3
>>> a.printNumInstances()              # Instance argument not passed
Number of instances: 3

Compared to simply moving printNumInstances outside the class, as

prescribed earlier, this version requires an extra staticmethod call; however, it localizes

the function name in the class scope (so it won’t clash with other

names in the module), moves the function code closer to where it is

used (inside the class

statement), and allows subclasses to customize

the static method with inheritance—a more convenient approach than

importing functions from the files in which superclasses are coded.

The following subclass and new testing session illustrate:

class Sub(Spam):
    def printNumInstances():           # Override a static method
        print("Extra stuff...")        # But call back to original
        Spam.printNumInstances()
    printNumInstances = staticmethod(printNumInstances)

>>> a = Sub()
>>> b = Sub()
>>> a.printNumInstances()              # Call from subclass instance
Extra stuff...
Number of instances: 2
>>> Sub.printNumInstances()            # Call from subclass itself
Extra stuff...
Number of instances: 2
>>> Spam.printNumInstances()
Number of instances: 2

Moreover, classes can inherit the static method without redefining it: it is run without an instance, regardless of where it is defined in a class tree:

>>> class Other(Spam): pass            # Inherit static method verbatim

>>> c = Other()
>>> c.printNumInstances()
Number of instances: 3

Counting Instances with Class Methods

Interestingly, a class method can do similar work here—the following has the same behavior as the static method version listed earlier, but it uses a class method that receives the instance’s class in its first argument. Rather than hardcoding the class name, the class method uses the automatically passed class object generically:

class Spam:
    numInstances = 0                   # Use class method instead of static
    def __init__(self):
        Spam.numInstances += 1
    def printNumInstances(cls):
        print("Number of instances:", cls.numInstances)
    printNumInstances = classmethod(printNumInstances)

This class is used in the same way as the prior versions, but its printNumInstances method receives the class, not the instance, when called from both the class and an instance:

>>> a, b = Spam(), Spam()
>>> a.printNumInstances()              # Passes class to first argument
Number of instances: 2
>>> Spam.printNumInstances()           # Also passes class to first argument
Number of instances: 2

When using class methods, though, keep in mind that they

receive the most specific (i.e., lowest) class

of the call’s subject. This has some subtle implications when trying

to update class data through the passed-in class. For example, if in

module test.py we subclass to

customize as before, augment Spam.printNumInstances to also display its

cls argument, and start a new

testing session:

class Spam:
    numInstances = 0                   # Trace class passed in
    def __init__(self):
        Spam.numInstances += 1
    def printNumInstances(cls):
        print("Number of instances:", cls.numInstances, cls)
    printNumInstances = classmethod(printNumInstances)

class Sub(Spam):
    def printNumInstances(cls):        # Override a class method
        print("Extra stuff...", cls)   # But call back to original
        Spam.printNumInstances()
    printNumInstances = classmethod(printNumInstances)

class Other(Spam): pass                # Inherit class method verbatim

the lowest class is passed in whenever a class method is run, even for subclasses that have no class methods of their own:

>>> x, y = Sub(), Spam()
>>> x.printNumInstances()              # Call from subclass instance
Extra stuff... <class 'test.Sub'>
Number of instances: 2 <class 'test.Spam'>
>>> Sub.printNumInstances()            # Call from subclass itself
Extra stuff... <class 'test.Sub'>
Number of instances: 2 <class 'test.Spam'>
>>> y.printNumInstances()
Number of instances: 2 <class 'test.Spam'>

In the first call here, a class method call is made through an instance of the Sub subclass, and Python passes the lowest class, Sub, to the class method. All is well in this case: since Sub’s redefinition of the method calls the Spam superclass’s version explicitly, the superclass method in Spam receives itself in its first argument. But watch what happens for an object that simply inherits the class method:

>>> z = Other()
>>> z.printNumInstances()
Number of instances: 3 <class 'test.Other'>

This last call here passes Other to Spam’s class method. This works in this example because fetching the counter finds it in Spam by inheritance. If this method tried to assign to the passed class’s data, though, it would update Other, not Spam! In this specific case, Spam is probably better off hardcoding its own class name to update its data, rather than relying on the passed-in class argument.
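To make the assignment pitfall concrete, here is a minimal sketch. The resetViaCls method is a hypothetical addition for illustration only; it is not part of the example above. It assigns to the counter through its cls argument, so calling it through Other creates a new attribute on Other and leaves Spam's counter untouched:

```python
class Spam:
    numInstances = 0
    def __init__(self):
        Spam.numInstances += 1               # Hardcoded name: always updates Spam
    def resetViaCls(cls):                    # Hypothetical method, for illustration
        cls.numInstances = 0                 # Assigns on whatever class is passed in
    resetViaCls = classmethod(resetViaCls)

class Other(Spam): pass                      # Inherits the class method verbatim

a, b, c = Spam(), Spam(), Other()            # Three instances counted in Spam
Other.resetViaCls()                          # cls is Other here, not Spam

print(Spam.numInstances)                     # Spam's counter is unchanged
print(Other.numInstances)                    # Other now has its own attribute
print('numInstances' in Other.__dict__)      # Stored on Other, not inherited
```

After the reset call, fetching numInstances from Other no longer reaches Spam's counter at all, because the new attribute on Other hides the inherited one.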

Counting instances per class with class methods

In fact, because class methods always receive the lowest class in an instance’s tree:

Static methods and explicit class names may be a better solution for processing data local to a class.

Class methods may be better suited to processing data that may differ for each class in a hierarchy.

Code that needs to manage per-class instance counters, for example, might be best off leveraging class methods. In the following, the top-level superclass uses a class method to manage state information that varies for and is stored on each class in the tree—similar in spirit to the way instance methods manage state information in class instances:

class Spam:
    numInstances = 0
    def count(cls):                          # Per-class instance counters
        cls.numInstances += 1                # cls is lowest class above instance
    def __init__(self):
        self.count()                         # Passes self.__class__ to count
    count = classmethod(count)

class Sub(Spam):
    numInstances = 0
    def __init__(self):                      # Redefines __init__
        Spam.__init__(self)

class Other(Spam):                           # Inherits __init__
    numInstances = 0

>>> x = Spam()

>>> y1, y2 = Sub(), Sub()

>>> z1, z2, z3 = Other(), Other(), Other()

>>> x.numInstances, y1.numInstances, z1.numInstances

(1, 2, 3)

>>> Spam.numInstances, Sub.numInstances, Other.numInstances

(1, 2, 3)

Static and class methods have additional advanced roles, which we will finesse here; see other resources for more use cases. In recent Python versions, though, the static and class method designations have become even simpler with the advent of function decoration syntax, a way to apply one function to another that has roles well beyond the static method use case that was its motivation. This syntax also allows us to augment classes in Python 2.6 and 3.0 (to initialize data like the numInstances counter in the last example, for instance). The next section explains how.

Decorators and Metaclasses: Part 1

Because the staticmethod call technique described in the prior section initially seemed obscure to some users, a feature was eventually added to make the operation simpler. Function decorators provide a way to specify special operation modes for functions, by wrapping them in an extra layer of logic implemented as another function.

Function decorators turn out to be general tools: they are useful for adding many types of logic to functions besides the static method use case. For instance, they may be used to augment functions with code that logs calls made to them, checks the types of passed arguments during debugging, and so on. In some ways, function decorators are similar to the delegation design pattern we explored in Chapter 30, but they are designed to augment a specific function or method call, not an entire object interface.

Python provides some built-in function decorators for operations such as marking static methods, but programmers can also code arbitrary decorators of their own. Although they are not strictly tied to classes, user-defined function decorators often are coded as classes to save the original functions, along with other data, as state information. There’s also a more recent related extension available in Python 2.6 and 3.0: class decorators are directly tied to the class model, and their roles overlap with metaclasses.

Function Decorator Basics

Syntactically, a function decorator is a sort of runtime declaration about the function that follows. A function decorator is coded on a line by itself just before the def statement that defines a function or method. It consists of the @ symbol, followed by what we call a metafunction: a function (or other callable object) that manages another function. Static methods today, for example, may be coded with decorator syntax like this:

class C:
    @staticmethod                            # Decoration syntax
    def meth():
        ...

Internally, this syntax has the same effect as the following (passing the function through the decorator and assigning the result back to the original name):

class C:
    def meth():
        ...
    meth = staticmethod(meth)                # Rebind name

Decoration rebinds the method name to the decorator’s result. The net effect is that calling the method function’s name later actually triggers the result of its staticmethod decorator first. Because a decorator can return any sort of object, this allows the decorator to insert a layer of logic to be run on every call. The decorator function is free to return either the original function itself, or a new object that saves the original function passed to the decorator to be invoked indirectly after the extra logic layer runs.
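The two options just described can be sketched with a pair of simple decorators. All of the names here (registry, register, logged, square, cube) are hypothetical and chosen only for illustration: register returns the original function unchanged, while logged returns a new function that saves the original and invokes it indirectly after its own logic runs:

```python
registry = []                                # Hypothetical record of decorated names

def register(func):
    registry.append(func.__name__)           # Manage the function at decoration time
    return func                              # Return the original function itself

def logged(func):
    def wrapper(*args):                      # New object that retains func
        wrapper.calls += 1                   # Extra logic layer, run on every call
        return func(*args)                   # Then invoke the original indirectly
    wrapper.calls = 0
    return wrapper

@register                                    # Same as square = register(square)
def square(x):
    return x ** 2

@logged                                      # Same as cube = logged(cube)
def cube(x):
    return x ** 3

print(square(3), registry)                   # square is still the original function
print(cube(2), cube.calls)                   # cube is really the wrapper
```

In the first case the name square still refers to the function we coded; in the second, the name cube has been rebound to the wrapper, so every call passes through the added layer.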

With this addition, here’s a better way to code our static method example from the prior section in either Python 2.6 or 3.0 (the classmethod decorator is used the same way):

class Spam:
    numInstances = 0
    def __init__(self):
        Spam.numInstances = Spam.numInstances + 1

    @staticmethod
    def printNumInstances():
        print("Number of instances created: ", Spam.numInstances)

a = Spam()
b = Spam()
c = Spam()
Spam.printNumInstances()                     # Calls from both classes and instances work now!
a.printNumInstances()                        # Both print "Number of instances created: 3"

Keep in mind that staticmethod is still a built-in function; it may be used in decoration syntax, just because it takes a function as argument and returns a callable. In fact, any such function can be used in this way, even user-defined functions we code ourselves, as the next section explains.
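Because the classmethod decorator is used the same way, the per-class counter idea from the prior section might be recoded with decoration syntax along the following lines. This is a sketch rather than a listing from the earlier example; it simply swaps the explicit classmethod call for the @ form:

```python
class Spam:
    numInstances = 0
    def __init__(self):
        self.count()                         # Passes self.__class__ to count

    @classmethod                             # Same as count = classmethod(count)
    def count(cls):
        cls.numInstances += 1                # cls is lowest class above instance

class Sub(Spam):
    numInstances = 0                         # Sub keeps its own counter

x = Spam()
y1, y2 = Sub(), Sub()
print(Spam.numInstances, Sub.numInstances)   # Counters are tracked per class
```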

A First Function Decorator Example

Although Python provides a handful of built-in functions that can be used as decorators, we can also write custom decorators of our own. Because of their wide utility, we’re going to devote an entire chapter to coding decorators in the final part of this book. As a quick example, though, let’s look at a simple user-defined decorator at work.

Recall from Chapter 29 that the __call__ operator overloading method implements a function-call interface for class instances. The following code uses this to define a class that saves the decorated function in the instance and catches calls to the original name. Because this is a class, it also has state information (a counter of calls made):

class tracer:
    def __init__(self, func):
        self.calls = 0
        self.func = func
    def __call__(self, *args):
        self.calls += 1
        print('call %s to %s' % (self.calls, self.func.__name__))
        self.func(*args)

@tracer                                      # Same as spam = tracer(spam)
def spam(a, b, c):                           # Wrap spam in a decorator object
    print(a, b, c)

spam(1, 2, 3)                                # Really calls the tracer wrapper object
spam('a', 'b', 'c')                          # Invokes __call__ in class
spam(4, 5, 6)                                # __call__ adds logic and runs original object

Because the spam function is run through the tracer decorator, when the original spam name is called it actually triggers the __call__ method in the class. This method counts and logs the call, and then dispatches it to the original wrapped function. Note how the *name argument syntax is used to pack and unpack the passed-in arguments; because of this, this decorator can be used to wrap any function with any number of positional arguments.

The net effect, again, is to add a layer of logic to the original spam function. Here is the script’s output; for each call, the first line comes from the tracer class, and the second comes from the spam function:

call 1 to spam
1 2 3
call 2 to spam
a b c
call 3 to spam
4 5 6

Trace through this example’s code for more insight. As it is, this decorator works for any function that takes positional arguments, but it does not return the decorated function’s result, doesn’t handle keyword arguments, and cannot decorate class method functions (in short, for methods its __call__ would be passed a tracer instance only). As we’ll see in Part VIII, there are a variety of ways to code function decorators, including nested def statements; some of the alternatives are better suited to methods than the version shown here.
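As a preview, the limitations just listed might be addressed along the following lines. This is only a sketch under our own assumptions, not the coding developed later in the book: the first variation adds **kwargs and a return statement to the class, and the second (the hypothetical functracer) uses a nested def, which also works for methods because a plain function wrapper receives the subject instance in self as usual:

```python
class tracer:                                # Variation on the class above
    def __init__(self, func):
        self.calls = 0
        self.func = func
    def __call__(self, *args, **kwargs):     # Accept keyword arguments too
        self.calls += 1
        print('call %s to %s' % (self.calls, self.func.__name__))
        return self.func(*args, **kwargs)    # Pass back the original's result

def functracer(func):                        # Hypothetical nested-def alternative
    def wrapper(*args, **kwargs):            # For methods, args[0] is the instance
        wrapper.calls += 1
        print('call %s to %s' % (wrapper.calls, func.__name__))
        return func(*args, **kwargs)
    wrapper.calls = 0
    return wrapper

@tracer
def add(a, b=0):
    return a + b

class Person:                                # Hypothetical class for the demo
    def __init__(self, name):
        self.name = name
    @functracer                              # Decorates a method, not a simple function
    def greet(self, greeting='Hello'):
        return '%s, %s' % (greeting, self.name)

print(add(1, b=2))                           # Result is returned through the wrapper
print(Person('Bob').greet(greeting='Hi'))    # self is passed on to greet normally
```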

Class Decorators and Metaclasses

Function decorators turned out to be so useful that Python 2.6 and 3.0 expanded the model, allowing decorators to be applied to classes as well as functions. In short, class decorators are similar to function decorators, but they are run at the end of a class statement to rebind a class name to a callable. As such, they can be used to either manage classes just after they are created, or insert a layer of wrapper logic to manage instances when they are later created. Symbolically, the code structure:

def decorator(aClass): ...

@decorator
class C: ...

is mapped to the following equivalent:

def decorator(aClass): ...

class C: ...
C = decorator(C)

The class decorator is free to augment the class itself, or return an object that intercepts later instance construction calls. For instance, in the example in the section Counting instances per class with class methods, we could use this hook to automatically augment the classes with instance counters and any other data required:

def count(aClass):
    aClass.numInstances = 0
    return aClass                            # Return class itself, instead of a wrapper

@count
class Spam: ...                              # Same as Spam = count(Spam)

@count
class Sub(Spam): ...                         # numInstances = 0 not needed here

@count
class Other(Spam): ...

Metaclasses are a similarly advanced class-based tool whose roles often intersect with those of class decorators. They provide an alternate model, which routes the creation of a class object to a subclass of the top-level type class, at the conclusion of a class statement:

class Meta(type):
    def __new__(meta, classname, supers, classdict): ...

class C(metaclass=Meta): ...

In Python 2.6, the effect is the same, but the coding differs: use a class attribute instead of a keyword argument in the class header:

class C:
    __metaclass__ = Meta
    ...

The metaclass generally redefines the __new__ or __init__ method of the type class, in order to assume control of the creation or initialization of a new class object. The net effect, as with class decorators, is to define code to be run automatically at class creation time. Both schemes are free to augment a class or return an arbitrary object to replace it, a protocol with almost limitless class-based possibilities.
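To see how the two schemes overlap, the following sketch initializes a per-class counter both ways. It assumes Python 3.0 metaclass syntax, and the names CounterMeta, Ham, and SubHam are hypothetical; the full story of both tools is deferred to the final part of this book:

```python
def count(aClass):                           # Class decorator, as shown earlier
    aClass.numInstances = 0
    return aClass

class CounterMeta(type):                     # Hypothetical metaclass doing the same job
    def __new__(meta, classname, supers, classdict):
        classdict['numInstances'] = 0        # Augment the class before it is made
        return type.__new__(meta, classname, supers, classdict)

@count
class Spam:
    def __init__(self):
        self.__class__.numInstances += 1     # Update the lowest class's counter

@count                                       # Each class must be decorated itself
class Sub(Spam): pass

class Ham(metaclass=CounterMeta):
    def __init__(self):
        self.__class__.numInstances += 1

class SubHam(Ham): pass                      # Metaclass is inherited: runs here too

a, b, c = Spam(), Sub(), Sub()
d, e, f = Ham(), SubHam(), SubHam()
print(Spam.numInstances, Sub.numInstances)
print(Ham.numInstances, SubHam.numInstances)
```

One design difference shows up even in this small case: the decorator must be applied to every class that needs a counter, whereas the metaclass is inherited by subclasses and so runs for SubHam automatically.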

For More Details