12

Lighting Using the Universal Render Pipeline

Lighting is a complex topic and there are several possible ways to handle it, with each one having its pros and cons. In order to get the best possible quality and performance, you need to know exactly how your renderer handles lighting, and that is exactly what we are going to do in this chapter. We will discuss how lighting is handled in Unity’s Universal Render Pipeline (URP), as well as how to properly configure it to adapt our scene’s mood with proper lighting effects.

In this chapter, we will examine the following lighting concepts:

- Applying lighting

- Applying shadows

- Optimizing lighting

At the end of the chapter, we will have properly used the different Unity Illumination systems like Direct Lights and Lightmapping to reflect a cloudy and rainy night.

Applying lighting

When discussing ways to process lighting in a game, there are two main ways we can do so, known as Forward Rendering and Deferred Rendering. Both handle lighting in a different order, with different techniques, requirements, pros, and cons. Forward Rendering is usually recommended for performance, while Deferred Rendering is usually recommended for quality. The latter is used by the High Definition Render Pipeline of Unity, the renderer used for high-quality graphics in high-end devices.

At the time of writing this book, Unity is developing a performant version of Deferred Rendering for URP. Also, in Unity, the Forward Renderer comes in two modes: Multi-Pass Forward, which is used in the Built-In Renderer (the old Unity Renderer), and Single Pass Forward, which is used in URP. Again, both have their pros and cons.

Choosing between them depends on the kind of game you are creating and the platform you need to run the game on. Your choice will greatly change the way you apply lighting to your scene, so it’s crucial you understand which system you are dealing with.

In the next section, we will discuss the following real-time lighting concepts:

- Discussing lighting methods

- Configuring ambient lighting with skyboxes

- Configuring lighting in URP

Let’s start by comparing the previously mentioned lighting methods.

Discussing lighting methods

To recap, we mentioned three main ways of processing lighting at the beginning of this chapter:

- Forward Rendering (Single Pass)

- Forward Rendering (Multi-Pass)

- Deferred Rendering

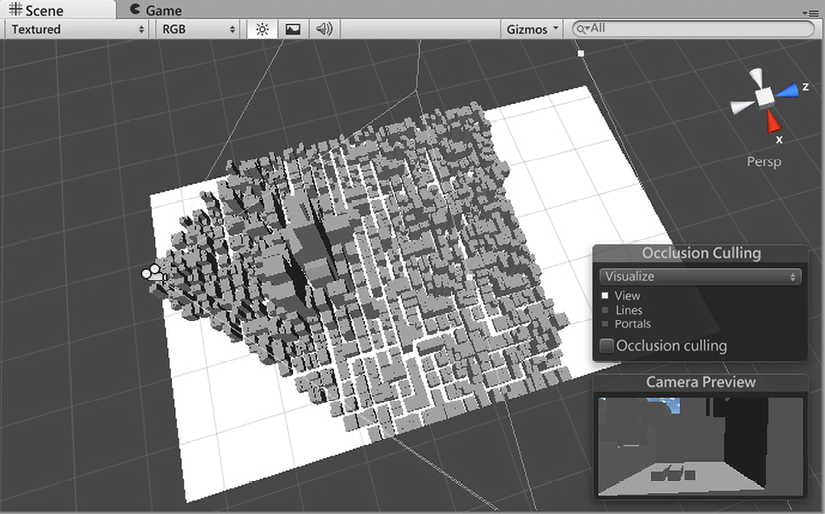

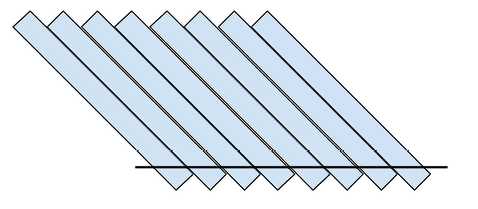

Before we look at the differences between each, let’s talk about the things they have in common. All three renderers start drawing the scene by determining which objects can be seen by the camera, that is, the ones that fall inside the camera’s frustum, a giant pyramid that can be seen when you select the camera:

Figure 12.1: Camera’s frustum showing only the objects that can be seen by it

After that, Unity will order them from the nearest to the camera to the farthest (transparent objects are handled a little bit differently, but let’s ignore that for now). It’s done like this because it’s more probable that objects nearer to the camera will cover most of the camera, so they will occlude others (will block other objects from being seen), preventing us from wasting resources calculating pixels for the occluded ones.
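The two shared steps described above (discarding objects outside the frustum, then ordering the rest from nearest to farthest) can be sketched in a few lines. This is only an illustration under simplifying assumptions, not Unity's actual culling code: objects are reduced to single points in camera space, the camera sits at the origin looking down +z, and the function names and default values are hypothetical.

```python
import math

def in_frustum(point, fov_deg=60.0, aspect=16 / 9, near=0.3, far=100.0):
    """Return True if a camera-space point lies inside a symmetric frustum."""
    x, y, z = point
    if not (near <= z <= far):
        return False
    half_height = z * math.tan(math.radians(fov_deg) / 2)  # frustum half height at depth z
    half_width = half_height * aspect
    return abs(x) <= half_width and abs(y) <= half_height

def visible_front_to_back(objects):
    """Cull objects outside the frustum, then sort the rest nearest first."""
    visible = [(name, pos) for name, pos in objects if in_frustum(pos)]
    return sorted(visible, key=lambda item: math.dist((0.0, 0.0, 0.0), item[1]))

objects = [
    ("tree", (0.0, 0.0, 20.0)),
    ("rock", (1.0, 0.0, 5.0)),
    ("behind_camera", (0.0, 0.0, -10.0)),
    ("far_left", (-500.0, 0.0, 10.0)),
]
order = [name for name, _ in visible_front_to_back(objects)]
print(order)
```

Only the rock and the tree survive the culling step, and the rock is drawn first because it is closer to the camera.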

Finally, Unity will try to render the objects in that order. This is where differences start to arise between lighting methods, so let’s start comparing the two Forward Rendering variants. For each object, Single Pass Forward Rendering will calculate the object’s appearance, including all the lights that are affecting the object, in one shot, or what we call a draw call.

A draw call is the exact moment when Unity asks the video card to actually render the specified object. All the previous work was just preparation for this moment. In the case of the Multi-Pass Forward Renderer, by simplifying a little bit of the actual logic, Unity will render the object once per light that affects the object; so, if the object is being lit by three lights, Unity will render the object three times, meaning that three draw calls will be issued, and three calls to the GPU will be made to execute the rendering process:

Figure 12.2: Left image, first draw call of a sphere affected by two lights in Multi-Pass; middle image, second draw call of the sphere; and right image, the combination of both draw calls
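To make the cost difference concrete, the following sketch counts draw calls under the simplified logic described above. The scene data is made up, and the rule that an unlit object still costs one draw call in Multi-Pass is an assumption of this sketch.

```python
def draw_calls_single_pass(lights_per_object):
    """Single Pass: one draw call per object, whatever the number of lights."""
    return len(lights_per_object)

def draw_calls_multi_pass(lights_per_object):
    """Multi-Pass (simplified): one draw call per light affecting each object.

    Assumption: an object with no lights still needs one draw call.
    """
    return sum(max(1, count) for count in lights_per_object.values())

# Hypothetical scene: object name -> number of lights affecting it.
lights_per_object = {"sphere": 3, "floor": 2, "crate": 0}

single = draw_calls_single_pass(lights_per_object)
multi = draw_calls_multi_pass(lights_per_object)
print(single, multi)
```

With these numbers, Single Pass issues three draw calls while Multi-Pass issues six.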

Now is when you are probably thinking, “Why should I use Multi-Pass? Single Pass is more performant!” And yes, you are right! Single Pass is much more performant than Multi-Pass, meaning our game will run at higher frames per second. But here comes the big “but”: a draw call in a GPU has a limited number of operations that can be executed, so there is a limit to the complexity of the draw call. Calculating the appearance of an object and all the lights that affect it is very complex, and in order to make it fit in just one draw call, Single Pass executes simplified versions of the lighting calculations, meaning less lighting quality and fewer features. It also has a limit on how many lights can be handled in one shot, which, at the time of writing this book, is eight per object. You can configure fewer if you want, but the default value is good for us. This sounds like a small number, but it’s usually just enough.

On the other side, Multi-Pass can apply any number of lights you want and can execute different logic for each light. Let’s say our object has four lights that are affecting it, but there are two lights that are affecting it drastically because they are nearer or have higher intensity, while the remaining ones affecting the object are just enough to be noticeable. In this scenario, we can render the first two lights with higher quality and the remaining ones with cheap calculations—no one will be able to tell the difference.

In this case, Multi-Pass can calculate the first two lights using Pixel Lighting and the remaining ones using Vertex Lighting. The difference is in their names: Pixel Lighting calculates lighting for each pixel of the object, while Vertex Lighting calculates lighting only at each vertex of the object and fills the pixels in between by interpolating the results of the surrounding vertices. You can clearly see the difference in the following images:

Figure 12.3: Left image, a sphere being rendered with Vertex Lighting; right image, a sphere being rendered with Pixel Lighting
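The difference between the two approaches can be reproduced with a tiny calculation. The sketch below assumes a simple diffuse (Lambert) term and a single edge between two vertices; the normals and light direction are made-up values, and real shaders do considerably more work.

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def lambert(normal, light_dir):
    """Diffuse term: how directly the surface faces the light (0 to 1)."""
    return max(0.0, sum(n * l for n, l in zip(normal, light_dir)))

def lerp(a, b, t):
    return a + (b - a) * t

# Two vertices of a curved surface, tilted away from each other.
n0 = normalize((-1.0, 1.0, 0.0))
n1 = normalize((1.0, 1.0, 0.0))
light_dir = (0.0, 1.0, 0.0)  # unit vector pointing towards the light

t = 0.5  # a pixel halfway between both vertices

# Vertex Lighting: light each vertex, then interpolate the lit results.
vertex_lit = lerp(lambert(n0, light_dir), lambert(n1, light_dir), t)

# Pixel Lighting: interpolate the normal, then light the pixel itself.
pixel_normal = normalize(tuple(lerp(a, b, t) for a, b in zip(n0, n1)))
pixel_lit = lambert(pixel_normal, light_dir)

print(round(vertex_lit, 3), round(pixel_lit, 3))
```

The pixel in the middle faces the light directly, so Pixel Lighting gives it full brightness, while Vertex Lighting only blends the dimmer values computed at the two vertices and misses the highlight.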

In Single Pass, calculating everything in a single draw call forces you to use Vertex Lighting or Pixel Lighting; you cannot combine them.

So, to summarize the differences between Single and Multi-Pass: in Single Pass, you have better performance because each object is drawn just once, but you are limited in the number of lights that can be applied, while in Multi-Pass, you need to render the object several times, but with no limit on the number of lights, and you can specify the exact quality you want for each light. There are other things to consider, such as the actual cost of a draw call (one complex draw call can be more expensive than two simple ones), and special lighting effects such as toon shading, but let’s keep things simple.

Finally, let’s briefly discuss Deferred. Even though we are not going to use it, it’s interesting to know why we are not doing that. After determining which objects fall inside the frustum and ordering them, Deferred will render the objects without any lighting, generating what is called a G-Buffer. A G-Buffer is a set of several images that contain different information about the objects of the scene, such as the colors of their pixels (without lighting), the direction of each pixel (known as Normals), and how far from the camera the pixels are.

You can see a typical example of a G-Buffer in the following image:

Figure 12.4: Left image, plain colors of the object; middle image, depths of each pixel; and right image, normals of the pixels

Normals are directions, and the x, y, and z components of the directions are encoded in the RGB components of the colors.
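As an illustration of how a direction can be stored as a color, the sketch below uses a common convention that remaps each component from the range [-1, 1] to [0, 255]. The exact encoding used by a given renderer may differ, so treat the formula as an assumption of this example.

```python
def encode_normal(normal):
    """Map each component from [-1, 1] to an 8-bit color channel."""
    return tuple(round((c * 0.5 + 0.5) * 255) for c in normal)

def decode_normal(rgb):
    """Map each 8-bit color channel back to approximately [-1, 1]."""
    return tuple(c / 255 * 2 - 1 for c in rgb)

facing_camera = (0.0, 0.0, 1.0)
color = encode_normal(facing_camera)
restored = decode_normal(color)
print(color, restored)
```

Under this convention, a normal pointing along z becomes a bluish color, which is why normal buffers and normal maps often look mostly blue. The round trip is only approximate because of the 8-bit precision.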

After rendering all the objects in the scene, Unity will iterate over all lights that can be seen in the camera, thus applying a layer of lighting over the G-Buffer, taking information from it to calculate that specific light. After all the lights have been processed, you will get the following result:

Figure 12.5: Combination of the three lights that were applied to the G-Buffer shown in the previous image
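The lighting stage described above can be sketched as a loop over the lights, with each light adding its contribution on top of the data already stored in the G-Buffer. This is a heavily simplified model and not Unity's implementation: the G-Buffer is a plain list of pixels, each pixel stores a world position directly (a real G-Buffer stores depth and reconstructs the position), colors are single brightness values, and all names are hypothetical.

```python
import math

def light_pixel(pixel, light):
    """Diffuse contribution of one light to one G-Buffer pixel."""
    to_light = tuple(l - p for l, p in zip(light["position"], pixel["position"]))
    distance = math.sqrt(sum(c * c for c in to_light))
    if distance == 0.0 or distance > light["range"]:
        return 0.0  # the light cannot reach this pixel, so nothing is added
    direction = tuple(c / distance for c in to_light)
    n_dot_l = max(0.0, sum(n * d for n, d in zip(pixel["normal"], direction)))
    return pixel["albedo"] * light["intensity"] * n_dot_l / (distance * distance)

def deferred_lighting(gbuffer, lights):
    """Accumulate every light over the unlit G-Buffer data."""
    result = [0.0] * len(gbuffer)
    for light in lights:
        for index, pixel in enumerate(gbuffer):
            result[index] += light_pixel(pixel, light)
    return result

gbuffer = [
    {"albedo": 0.8, "normal": (0.0, 1.0, 0.0), "position": (0.0, 0.0, 0.0)},
    {"albedo": 0.8, "normal": (0.0, 1.0, 0.0), "position": (10.0, 0.0, 0.0)},
]
lights = [{"position": (0.0, 2.0, 0.0), "intensity": 4.0, "range": 5.0}]

lit = deferred_lighting(gbuffer, lights)
print(lit)
```

The second pixel is outside the light's range, so it receives no lighting at all. Skipping pixels the light cannot reach is the kind of saving the Deferred approach is built around.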

As you can see, the Deferred part of this method comes from the idea of calculating lighting as the last stage of the rendering process. This is better because you won’t waste resources calculating lighting for objects that could end up being occluded. If the floor of the image is rendered first in Forward mode, the lighting of the floor pixels that the rest of the objects are going to occlude was calculated in vain. Also, there’s the pro that Deferred just calculates lighting in the exact pixels that the light can reach. As an example, if you are using a flashlight, Unity will calculate lighting only in the pixels that fall inside the cone of the flashlight. The con here is that Deferred is not supported by some relatively old video cards and that you can’t calculate lighting with Vertex Lighting quality, so you will need to pay the price of Pixel Lighting, which is not recommended on low-end devices (and not even necessary in games with simple graphics).

So, why are we using URP with Single Pass Forward? Because it offers the best balance between performance, quality, and simplicity. In this game, we won’t be using too many lights, so we won’t worry about the light number limitations of Single Pass. If you need more lights, you can use Deferred, but consider the extra hardware requirements and the performance cost of not having per-vertex lighting options. Now that we have a very basic notion of how URP handles lighting, let’s start using it!

Configuring ambient lighting with skyboxes

There are different light sources that can affect a scene, such as the sun, flashlights, light bulbs, and more. Those are known as Direct Lights—that is, objects that emit light rays. Then, we have Indirect Light, which represents how the Direct Light bounces on other objects, like walls. However, calculating all the bounces of all the rays emitted by all the lights is extremely costly in terms of performance and requires special hardware that supports ray tracing. The problem is that not having Indirect Light will generate unrealistic results, where you can observe places where the sunlight doesn’t reach being completely dark because no light is bouncing from other places where light hits.

In the next image you can see an example of how this could look in a wrongly configured scene:

Figure 12.6: Shadows projected on a mountain without ambient lighting

If you ever experience this problem, the way to solve it performantly is using approximations of those bounces. These are what we call Ambient Light. This represents a base layer of lighting that usually applies a little bit of light based on the color of the sky, but you can choose whatever color you want. As an example, on a clear night, we can pick a dark blue color to represent the tint from the moonlight.
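A rough way to picture Ambient Light is as a constant term added to whatever Direct Light a pixel receives. The sketch below assumes this simple additive model with RGB values between 0 and 1; the colors are made up for illustration.

```python
def shade(albedo, direct, ambient=(0.0, 0.0, 0.0)):
    """Final color = surface color * (direct light + ambient light), per channel."""
    return tuple(a * (d + amb) for a, d, amb in zip(albedo, direct, ambient))

rock = (0.8, 0.8, 0.8)        # surface color
no_direct = (0.0, 0.0, 0.0)   # the sunlight does not reach this pixel
night_sky = (0.05, 0.05, 0.15)  # dark blue ambient tint

without_ambient = shade(rock, no_direct)
with_ambient = shade(rock, no_direct, night_sky)
print(without_ambient, with_ambient)
```

Without the ambient term, the unlit pixel is completely black. With it, the pixel keeps a faint bluish tint, which approximates the bounced light we are not calculating.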

If you create a new scene in Unity 2022, usually this is done automatically, but in cases where it isn’t, or the scene was created through other methods, it is convenient to know how to manually trigger this process by doing the following:

- Click on Window | Rendering | Lighting. This will open the Scene Lighting Settings window:

Figure 12.7: Lighting Settings location

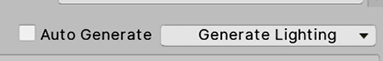

- Click the Generate Lighting button at the bottom of the window. If you haven’t saved the scene so far, a prompt will ask you to save it, which is necessary:

Figure 12.8: Generate Lighting button

- Look at the bottom-right part of the Unity window, where a progress bar shows when the process has finished:

Figure 12.9: Lighting generation progress bar

- You can now see how completely dark areas are now lit by the light being emitted by the sky:

Figure 12.10: Shadows with ambient lighting

Now, by doing this, we have better lighting, but it still looks like a sunny day. Remember, we want to have rainy weather. In order to do that, we need to change the default sky too so that it’s cloudy. You can do that by downloading a skybox. The current sky you can see around the scene is just a big cube containing textures on each side, and those textures have a special projection to prevent us from detecting the edges of the cube. We can download six images, one for each side of the cube, and apply them to have whatever sky we want, so let’s do that:

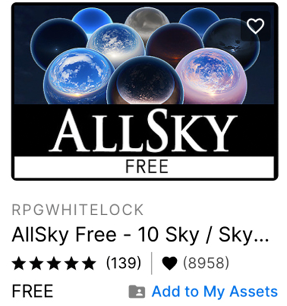

- You can download skybox textures from wherever you want, but here, I will choose the Asset Store. Open it by going to Window | Asset Store and going to the Asset Store website.

- Look for Categories | 2D | Textures & Materials | Sky in the category list on the right. Remember that you need to make that window wider if you can’t see the category list:

Figure 12.11: Textures & Materials

- Remember to check the Free Assets checkbox in the Price options.

- Pick any skybox you like for a rainy day. Take into account that there are different formats for skyboxes. We are using the six-image format, so check that before downloading one. There’s another format called Cubemap, which is essentially the same, but we will stick with the six-image format as it is the simplest one to use and modify. In my case, I have chosen the skybox pack shown in Figure 12.12. Download and import it, as we did in Chapter 5, Introduction to C# and Visual Scripting:

Figure 12.12: Selected skybox set for this book

- Create a new material by using the + icon in the Project window and selecting Material.

- Set the Shader option of that material to Skybox/6 sided. Remember that the skybox is just a cube, so we can apply a material to change how it looks. The skybox shader is prepared to apply the six textures.

- Drag the six textures to the Front, Back, Left, Right, Up, and Down properties of the material. The six downloaded textures will have descriptive names so that you know which textures go where:

Figure 12.13: Skybox material settings

- Drag the material directly into the sky in the Scene view. Be sure you don’t drag the material into an object because the material will be applied to it.

- Repeat steps 1 to 4 of the ambient light calculation steps (Lighting Settings | Generate Lighting) to recalculate it based on the new skybox. In the following image, you can see the result of my project so far:

Figure 12.14: Applied skybox
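Since the skybox is a cube with one texture per side, drawing the sky boils down to deciding which of the six textures a given view direction hits. A minimal sketch of that decision picks the axis with the largest absolute component. The axis labels below are generic, and how they map to the Front, Back, Left, Right, Up, and Down slots of the material is not assumed here.

```python
def cube_face(direction):
    """Return the cube side hit by a non-zero view direction (x, y, z)."""
    x, y, z = direction
    ax, ay, az = abs(x), abs(y), abs(z)
    if ax >= ay and ax >= az:
        return "+X" if x > 0 else "-X"
    if ay >= az:
        return "+Y" if y > 0 else "-Y"
    return "+Z" if z > 0 else "-Z"

looking_up = cube_face((0.0, 1.0, 0.2))
looking_back = cube_face((0.1, -0.2, -1.0))
print(looking_up, looking_back)
```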

Now that we have a good base layer of lighting, we can start adding light objects.

Configuring lighting in URP

We have three main types of Direct Lights we can add to our scene:

- Directional Light: This is a light that represents the sun. This object emits light rays in the direction it is facing, regardless of its position; the sun moving 100 meters to the right won’t make a big difference. As an example, if you slowly rotate this object, you can generate a day/night cycle:

Figure 12.15: Directional Light results

- Point Light: This light represents a light bulb, which emits rays in an omnidirectional way. The difference it has compared to Directional Lights is that its position matters because it’s closer to our objects. Also, because it’s a weaker light, the intensity of this light varies according to the distance, so its effect has a range—the further the object from the light, the weaker the received intensity:

Figure 12.16: Point Light results

- Spotlight: This kind of light represents a light cone, such as the one emitted by a flashlight. It behaves similarly to point lights in that its position matters and the light intensity decays over a certain distance. But here the direction it points to (hence its rotation) is also important, given it will specify where to project the light:

Figure 12.17: Spotlight results
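The three light types differ mainly in which inputs matter: only the direction for a Directional Light, the position and range for a Point Light, and position, range, and cone for a Spotlight. The sketch below captures that idea using an inverse-square falloff with a hard cut at the range. This falloff is an assumption chosen for simplicity and is not the exact attenuation Unity applies; all function names are hypothetical.

```python
import math

def directional(intensity):
    """Same intensity everywhere: the position of the light is irrelevant."""
    return intensity

def point(intensity, light_pos, target, light_range):
    """Intensity decays with distance and is zero beyond the range."""
    distance = math.dist(light_pos, target)
    if distance > light_range:
        return 0.0
    return intensity / max(distance * distance, 1e-6)

def spot(intensity, light_pos, forward, target, light_range, outer_angle_deg):
    """Like a point light, but only inside the cone around `forward` (unit vector)."""
    to_target = tuple(t - p for t, p in zip(target, light_pos))
    distance = math.sqrt(sum(c * c for c in to_target))
    if distance == 0.0:
        return intensity
    cos_angle = sum(f * c for f, c in zip(forward, to_target)) / distance
    if cos_angle < math.cos(math.radians(outer_angle_deg / 2)):
        return 0.0  # outside the cone
    return point(intensity, light_pos, target, light_range)

origin = (0.0, 0.0, 0.0)
forward = (0.0, 0.0, 1.0)

in_range = point(1000.0, origin, (0.0, 0.0, 10.0), 50.0)
out_of_range = point(1000.0, origin, (0.0, 0.0, 60.0), 50.0)
in_cone = spot(1000.0, origin, forward, (0.0, 0.0, 10.0), 50.0, 120.0)
out_of_cone = spot(1000.0, origin, forward, (10.0, 0.0, 0.0), 50.0, 120.0)
print(in_range, out_of_range, in_cone, out_of_cone)
```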

So far, we have nice, rainy, ambient lighting, but the only Direct Light we have in the scene, the Directional Light, doesn’t match that mood, so let’s change that:

- Select the Directional Light object in the Hierarchy window and then look at the Inspector window.

- Click the Color property in the Emission section to open the Color Picker.

- Select a dark gray color to achieve sun rays partially occluded by clouds.

- Set Shadow Type to No Shadows. Now that we have a cloudy day, the sun does not project clear shadows, but we will talk more about shadows in a moment:

Figure 12.18: Soft directional light with no shadows

Now that the scene is darker, we can add some lights to light up the scene, as follows:

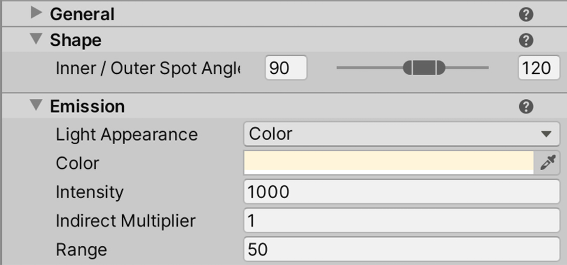

- Create a Spotlight by going to GameObject | Light | Spotlight.

- Select it. Then, in the Inspector window, set Inner/Outer Spot Angle of the Shape section to 90 and 120, which will increase the angle of the cone.

- Set Range in the Emission section to 50, meaning that the light can reach up to 50 meters, decaying along the way.

- Set Intensity in the Emission section to 1000:

Figure 12.19: Spotlight settings

Figure 12.20: Spotlight placement

- Duplicate that light by selecting it and pressing Ctrl+D (Command+D on a Mac).

- Put it in the opposite corner of the base:

Figure 12.21: Two Spotlight results

You can keep adding lights to the scene, but take care that you don’t go too far; remember the limit on how many lights can affect each object. Also, you can download some light posts to put where the lights are located to visually justify the origin of the light. Now that we have achieved proper lighting, we can talk about shadows.

Applying shadows

Maybe you are thinking that we already have shadows in the scene, but actually, we don’t. The darker areas of the object, the ones that are not facing the lights, don’t have shadows—they are not being lit, and that’s quite different from a shadow. In this case, we are referring to the shadows that are projected from one object to another—for example, the shadow of the player being projected on the floor, or from the mountains to other objects. Shadows can increase the quality of our scene, but they also cost a lot to calculate, so we have two options: not using shadows (recommended for low-end devices such as mobiles) or finding a balance between performance and quality according to our game and the target device.

In this section, we are going to discuss the following topics about shadows:

- Understanding shadow calculations

- Configuring performant shadows

Let’s start by discussing how Unity calculates shadows.

Understanding shadow calculations

In game development, it is well-known that shadows are costly in terms of performance, but why? An object has a shadow when a light ray hits another object before reaching it. In that case, no lighting is applied to that pixel from that light. The problem here is the same problem we have with the light that ambient lighting simulates: it would be too costly to calculate all possible rays and their collisions. So, again, we need an approximation, and here is where Shadow Maps kick in.

A Shadow Map is an image that’s rendered from the point of view of the light, but instead of drawing the full scene with all the color and lighting calculations, it will render all the objects in grayscale, where black means that the pixel is very far from the light and whiter means that the pixel is nearer to the light. If you think about it, each pixel contains information about where a ray of light hits. By knowing the position and orientation of the light, you can calculate the position where each “ray” hits using the Shadow Map.

In the following image, you can see the shadow map of our Directional Light:

Figure 12.22: Shadow Map generated by the Directional Light of our scene

Each type of light calculates shadow maps slightly differently, especially the Point Light. Since it’s omnidirectional, it needs to render the scene several times in all its directions (Front, Back, Left, Right, Up, and Down) in order to gather information about all the rays it emits. We won’t talk about this in detail here, though, as we could talk about it all day.

Now, something important to highlight here is that shadow maps are textures, and as such, they have a resolution. The higher the resolution, the more “rays” our shadow map calculates. You are probably wondering what a low-resolution shadow map looks like when it has only a few rays in it. Take a look at the following image to see one:

Figure 12.23: Hard Shadows rendered with a low-resolution Shadow Map

The problem here is that having fewer rays generates bigger shadow pixels, resulting in a pixelated shadow. Here, we have our first configuration to consider: what is the ideal resolution for our shadows? You will be tempted to just increase it until the shadows look smooth, but of course, that will increase how long it will take to calculate it, so it will impact the performance considerably unless your target platform can handle it (mobiles definitely can’t). Here, we can use the Soft Shadows trick, where we can apply a blurring effect over the shadows to hide the pixelated edges, as shown in the following image:

Figure 12.24: Soft Shadows rendered with a low-resolution Shadow Map

Of course, the blurry effect is not free, but combining it with low-resolution shadow maps, if you accept its blurry result, can generate a nice balance between quality and performance.

Now, low-resolution shadow maps have another problem, which is called Shadow Acne. This is the lighting error you can see in the following image:

Figure 12.25: Shadow Acne from a low-resolution Shadow Map

A low-resolution shadow map generates false positives because it has fewer “rays” calculated. The pixels to be shaded between the rays need to interpolate information from the nearest ones. The lower the Shadow Map’s resolution, the larger the gap between the rays, which means less precision and more false positives. One solution would be to increase the resolution, but again, there will be performance issues (as always). We have some clever solutions to this, such as using depth bias. An example of such a false positive can be seen in the following image:

Figure 12.26: A false positive between two far “rays.” The highlighted area thinks the ray hit an object before reaching it.

The concept of depth bias is simple—so simple that it seems like a big cheat, and actually, it is, but game development is full of them! To prevent false positives, we “push” the rays a little bit further, just enough to make the interpolated rays reach the surface being lit:

Figure 12.27: Rays with a depth bias to eliminate false positives
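Both Shadow Acne and the depth bias fix can be reproduced with a one-dimensional toy model. In the sketch below, a light looks straight down at a slanted floor with nothing above it, so every pixel reported as shadowed is a false positive. The floor shape, the sizes, and the function names are assumptions made for illustration only.

```python
def floor_depth(x, light_height=10.0, slope=0.5):
    """Distance from a light looking straight down to a slanted floor at x."""
    return light_height - slope * x

def build_shadow_map(resolution, width=8.0):
    """Store one depth per texel, sampled at the texel center (one 'ray' each)."""
    texel = width / resolution
    return [floor_depth((i + 0.5) * texel) for i in range(resolution)]

def is_shadowed(x, shadow_map, bias=0.0, width=8.0):
    """A pixel is shadowed if it is farther from the light than the stored depth."""
    texel = width / len(shadow_map)
    index = min(int(x / texel), len(shadow_map) - 1)
    return floor_depth(x) > shadow_map[index] + bias

def count_acne(resolution, bias=0.0, samples=400, width=8.0):
    """Count floor pixels wrongly reported as shadowed (nothing occludes the floor)."""
    shadow_map = build_shadow_map(resolution, width)
    xs = [(k + 0.5) * width / samples for k in range(samples)]
    return sum(is_shadowed(x, shadow_map, bias, width) for x in xs)

for resolution in (4, 64):
    for bias in (0.0, 0.05, 0.6):
        print(resolution, bias, count_acne(resolution, bias))
```

With a 4-texel map, a small bias of 0.05 still leaves many false positives, and only a large bias of 0.6 removes them. With a 64-texel map, the gaps between “rays” are much smaller, so the small bias is already enough.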

Of course, as you are probably expecting, this trick doesn’t solve the problem without a caveat. Pushing depth generates false negatives in other areas, as shown in the following image. It looks like the cube is floating, but actually, it is touching the ground; the false negatives generate the illusion that it is floating:

Figure 12.28: False negatives due to a high depth bias
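The false negative comes directly from the comparison used for the shadow test. In the sketch below, the depth values are made up: the ground right next to the cube is only slightly farther from the light than the cube's lower edge, so a large bias swallows that small difference.

```python
def in_shadow(receiver_depth, occluder_depth, bias):
    """Shadow test with depth bias: the receiver must be clearly behind the occluder."""
    return receiver_depth > occluder_depth + bias

cube_edge_depth = 9.9   # depth stored in the shadow map (lower edge of the cube)
ground_depth = 10.0     # ground pixel right next to the cube

small_bias = in_shadow(ground_depth, cube_edge_depth, bias=0.05)
large_bias = in_shadow(ground_depth, cube_edge_depth, bias=0.25)
print(small_bias, large_bias)
```

With the small bias, the ground next to the cube is correctly shadowed. With the large bias, the shadow is lost there, which produces the gap that makes the cube look like it is floating.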

Of course, we have a counter trick for this situation, known as normal bias. Instead of pushing the rays, it pushes the surface of the object’s mesh along the direction it is facing (its normals). This one is a little bit tricky, so we won’t go into too much detail here, but the idea is that combining a little bit of depth bias and another bit of normal bias will reduce the false positives, but not completely eliminate them. Therefore, we need to learn how to live with that and hide these shadow discrepancies by cleverly positioning objects:

Figure 12.29: Reduced false positives, which is the result of combining depth and normal bias

There are several other aspects that affect how shadow maps work, with one of them being the light range. The smaller the light range, the less area the shadows will cover. The same shadow map resolution can add more detail to that area, so try to reduce the light ranges as much as you can, as we will do in the next section.
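A back-of-the-envelope estimate shows why a shorter range helps. The sketch below assumes that a spotlight's shadow map has to cover the full width of its cone at maximum range, and it ignores details such as how the shadow atlas is shared between lights, so the numbers are only indicative.

```python
import math

def texel_size(light_range, outer_angle_deg, resolution):
    """Approximate world-space size (in meters) covered by one shadow map texel."""
    covered_width = 2 * light_range * math.tan(math.radians(outer_angle_deg) / 2)
    return covered_width / resolution

range_50 = texel_size(50.0, 120.0, 1024)
range_40 = texel_size(40.0, 120.0, 1024)
print(round(range_50, 3), round(range_40, 3))
```

With the same 1024 resolution, reducing the range from 50 to 40 meters shrinks the area each texel has to cover by the same proportion, so the shadows get finer without increasing the cost of the shadow map.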

I can imagine your face right now, and yes, lighting is complicated, and we’ve only just scratched the surface! But keep your spirits up! After a little trial and error fiddling with the settings, you will understand it better. We’ll do that in the next section.

If you are really interested in learning more about the internals of the shadow system, I recommend that you look at the concept of Shadow Cascades, an advanced topic about Directional Lights and shadow map generation.

Configuring performant shadows

Because we are targeting mid-end devices, we will try to achieve a good balance of quality and performance here, so let’s start by enabling shadows just for the spotlights. The Directional Light shadow won’t be that noticeable, and actually, a rainy sky doesn’t generate clear shadows, so we will use that as an excuse to not calculate those shadows. In order to do this, do the following:

- Select both spotlights by clicking them in the Hierarchy while pressing Ctrl (Command on Mac). This will ensure that any changes made in the Inspector window will be applied to both:

Figure 12.30: Selecting multiple objects

- In the Inspector window, set Shadow Type in the Shadows section to Soft Shadows. We will be using low-resolution shadow maps here and the soft mode can help to hide the pixelated resolution:

Figure 12.31: Soft Shadows setting

- Select the Directional Light and set Shadow Type to No Shadows to prevent it from casting shadows:

Figure 12.32: No Shadows setting

- Create a cube (GameObject | 3D Object | Cube) and place it near one of the lights, just to have an object that we can cast shadows on for testing purposes.

Now that we have a base test scenario, let’s fiddle with the shadow maps resolution settings, preventing shadow acne in the process:

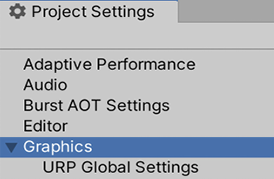

- Go to Edit | Project Settings.

- In the left-hand side list, look for Graphics and click it:

Figure 12.33: Graphics settings

- In the properties that appear after selecting this option, click in the box below Scriptable Render Pipeline Settings—the one that contains a name. In my case, this is URP-HighFidelity, but yours may be different if you have a different version of Unity:

Figure 12.34: Current Render Pipeline setting

- Doing that will highlight an asset in the Project window, so be sure that the window is visible before selecting it. Select the highlighted asset:

Figure 12.35: Current pipeline highlighted

- This asset has several graphics settings related to how URP will handle its rendering, including lighting and shadows. Expand the Lighting section to reveal its settings:

Figure 12.36: Pipeline lighting settings

- The Shadow Resolution setting under the Additional Lights subsection represents the shadow map resolution for all the lights that aren’t the Directional Light (since it’s the Main Light). Set it to 1024 if it’s not already at that value.

- Under the Shadows section, you can see the Depth and Normal Bias settings, but those will affect all lights. Even though our Directional Light doesn’t have shadows right now, we only want to affect the bias values of the Additional Lights, as they have a different Atlas Resolution compared to the Main one (Directional Light). So instead, select our spotlights and set Bias to Custom and Depth and Normal Bias to 0.25 in order to keep them as low as we can while still removing the shadow acne:

Figure 12.37: Bias settings

- This isn’t entirely related to shadows, but in the Universal RP settings asset, you can change the Per Object Light limit to increase or reduce the number of lights that can affect the object (no more than eight). For now, the default is good as is.

- If you followed the Shadow Cascades tip presented earlier, you can enable shadows for the Directional Light and play with the Cascades value a little bit to see its effect. Remember that those shadow settings only work for the Directional Light.

- We don’t have shadows in Directional Light, but in any other case, consider reducing the Max Distance value in the Shadows section, which will affect the Directional Light shadows range.

- Select both lights in the Hierarchy and set them so that they have a 40-meter Range. See how the shadows improve in quality before and after this change.

Remember that those values only work in my case, so try to fiddle with the values a little bit to see how that changes the result—you may find a better setup for your scene if it was designed differently from mine. Also, remember that not having shadows is always an option, so consider that if your game is running low on frames per second, also known as FPS (and there isn’t another performance problem lurking).

You probably think that that is all we can do about performance in terms of lighting, but luckily, that’s not the case! We have another resource we can use to improve it further known as static lighting.

Optimizing lighting

We mentioned previously that not calculating lighting is good for performance, but what about not calculating lights, but still having them? Yes, it sounds too good to be true, but it is actually possible (and, of course, tricky). We can use a technique called static lighting or baking, which allows us to calculate lighting once and use the cached result.

In this section, we will cover the following concepts related to static lighting:

- Understanding static lighting

- Baking lightmaps

- Applying static lighting to dynamic objects

Understanding static lighting

The idea is pretty simple: just do the lighting calculations once, save the results, and then use those instead of calculating lighting all the time.

You may be wondering why this isn’t the default technique to use. This is because it has some limitations, with the big one being dynamic objects. Precalculating shadows means that they can’t change once they’ve been calculated, but if an object that is casting a shadow is moved, the shadow will still be there, so the main thing to take into account here is that you can’t use this technique with moving objects. Instead, you will need to mix static or baked lighting for static objects and real-time lighting for dynamic (moving) objects. Also, consider that aside from this technique being only valid for static objects, it is also only valid for static lights. Again, if a light moves, the precalculated data becomes invalid.
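The limitation with moving lights can be seen in a small sketch that stores lighting results once and then reuses them. It assumes a simple inverse-square lighting model and made-up positions; the point is only that the stored values no longer match once the light moves.

```python
import math

def compute_lighting(surface, light, intensity=100.0):
    """Inverse-square lighting received at a surface point (illustrative model)."""
    distance = math.dist(surface, light)
    return intensity / max(distance * distance, 1e-6)

static_points = [(0.0, 0.0, 0.0), (5.0, 0.0, 0.0)]
light = (0.0, 10.0, 0.0)

# "Bake": compute the lighting once and keep the results.
baked = {p: compute_lighting(p, light) for p in static_points}

# The light moves at runtime; the baked values are not recalculated.
moved_light = (5.0, 10.0, 0.0)
realtime = {p: compute_lighting(p, moved_light) for p in static_points}

for p in static_points:
    print(p, round(baked[p], 2), round(realtime[p], 2))
```

After the light moves, the baked data still says the first point is the brightest one, while a real-time calculation shows the opposite. This is why baked lighting is only valid for lights and objects that stay still.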

Another limitation you need to take into account is that precalculated data can have a huge impact on memory. That data occupies space in RAM, maybe hundreds of MB, so you need to consider if your target platform has enough space. Of course, you can reduce the precalculated lighting quality to reduce the size of that data, but you need to consider if the loss of quality deteriorates the look and feel of your game too much. As with all options regarding optimization, you need to balance two factors: performance and quality.
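To get a feeling for the memory numbers involved, the following estimate assumes uncompressed textures at 4 bytes per texel. Real projects usually use compressed formats, so actual sizes will be smaller; the resolutions and counts are hypothetical.

```python
def lightmap_memory_mib(resolution, count, bytes_per_texel=4):
    """Approximate memory (in MiB) used by `count` square lightmaps."""
    total_bytes = resolution * resolution * bytes_per_texel * count
    return total_bytes / (1024 * 1024)

high_quality = lightmap_memory_mib(2048, 4)
reduced_quality = lightmap_memory_mib(1024, 4)
print(high_quality, reduced_quality)
```

Halving the resolution divides the memory by four, which illustrates how strongly the lightmap quality setting affects the size of the precalculated data.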

We have several kinds of precalculated data in our process, but the most important one is what we call lightmaps. A lightmap is a texture that contains all the shadows and lighting for all the objects in the scene, so when Unity applies the precalculated or baked data, it will look at this texture to know which parts of the static objects are lit and which aren’t.

You can see an example of a lightmap in the following image:

Figure 12.38: Left, a scene with no lighting; middle, a lightmap holding precalculated data from that scene; and right, the lightmap being applied to the scene

Having lightmaps has its own benefits. The baking process is executed in Unity, before the game is shipped to users, so you can spend plenty of time calculating things that you can’t calculate at runtime, such as improved accuracy, light bounces, light occlusion in corners, and light from emissive objects. However, that can also be a problem. Remember, dynamic objects still need to rely on real-time lighting, and that lighting will look very different compared to static lighting, so we need to tweak them a lot for the user to not notice the difference.

Now that we have a basic notion of what static lighting is, let’s dive into how to use it.

Baking lightmaps

To use lightmaps, we need to make some preparations regarding the 3D models. Remember that meshes have UVs, which contain information about which part of the texture needs to be applied to each part of the model. Sometimes, to save texture memory you can apply the same piece of texture to different parts. For example, in a car’s texture, you wouldn’t have four wheels; you’d just have one, and you can apply that same piece of texture to all the wheels. The problem here is that static lighting uses textures the same way, but here, it will apply the lightmaps to light the object. In the wheel scenario, the problem would be that if one wheel receives shadows, all of them will have it, because all the wheels are sharing the same texture space. The usual solution is to have a second set of UVs in the model with no texture space being shared, just for use with lightmapping.
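The wheel problem can be reproduced with a toy model. The following Python sketch is only an illustration: the part names, the single-texel UVs, and the `bake` function are all made up, and a real lightmap covers whole UV islands rather than one texel per part.

```python
# Each wheel maps to a spot in texture space, simplified to one (u, v) texel.
# In the shared layout both wheels reuse the same texel; in the unique layout
# each wheel has its own.
shared_uvs = {"wheel_front_left": (0, 0), "wheel_front_right": (0, 0)}
unique_uvs = {"wheel_front_left": (0, 0), "wheel_front_right": (1, 0)}


def bake(uvs, shadowed_part):
    """Write 1.0 (lit) for every part except shadowed_part, which gets 0.0,
    then return the value each part reads back from the lightmap."""
    lightmap = {}
    for part, texel in uvs.items():
        if part != shadowed_part:
            lightmap[texel] = 1.0
    # The shadow is written last, so it overwrites any texel it shares.
    lightmap[uvs[shadowed_part]] = 0.0
    return {part: lightmap[texel] for part, texel in uvs.items()}


if __name__ == "__main__":
    print("shared UVs:", bake(shared_uvs, "wheel_front_left"))
    print("unique UVs:", bake(unique_uvs, "wheel_front_left"))
```

With the shared layout, shadowing one wheel darkens both, because they read the same texel. With the unique layout, only the shadowed wheel is dark, which is what the second UV set is for.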

Sometimes, downloaded models are already prepared for lightmapping, and sometimes, they aren’t, but luckily, Unity has us covered in those scenarios. To be sure a model will calculate lightmapping properly, let’s make Unity automatically generate the Lightmapping UVs by doing the following:

- Select the mesh asset (FBX) in the Project window.

- In the Model tab, look for the Generate Lightmap UVs checkbox at the bottom and check it.

- Click the Apply button at the bottom:

Figure 12.39: Generate Lightmap setting

- Repeat this process for every model. Technically, you only need to do this for the models where you get artifacts and weird results after baking lightmaps, but for now, let’s do this for all the models just in case.

After preparing the models for being lightmapped, the next step is to tell Unity which objects are not going to move. To do so, do the following:

- Select the object that won’t move.

- Check the Static checkbox in the top-right of the Inspector window:

Figure 12.40: Static checkbox

- Repeat this for every static object (this isn’t necessary for lights; we will deal with those later).

- You can also select a container of several objects, check the Static checkbox, and click the Yes, All Children button in the prompt to apply the checkbox to all child objects.

Consider that you may not want every object, even if it’s static, to be lightmapped, because the more objects you lightmap, the more lightmap texture space you will require. As an example, the terrain could be too large and would consume most of the lightmap space. Usually, this is necessary, but in our case, the spotlights are barely touching the terrain. Here, we have two options: leave the terrain as dynamic, or better, directly tell the spotlights not to affect the terrain, since it is only lit by ambient lighting and the Directional Light (which is not casting shadows). Remember that this is something we can do because of our type of scene; you may need to use other settings in other scenarios. You can exclude an object from both real-time and static lighting calculations by doing the following:

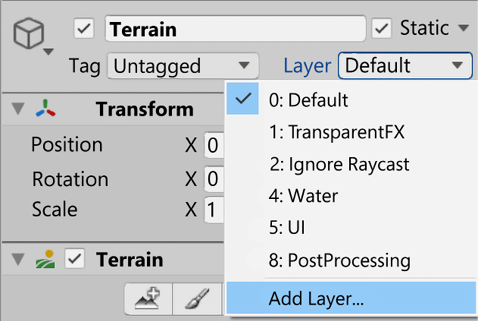

- Select the object to exclude.

- In the Inspector window, click the Layer dropdown and click on Add Layer…:

Figure 12.41: Layer creation button

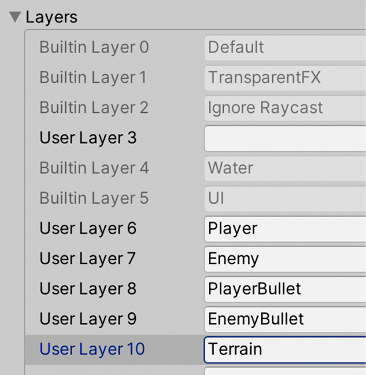

- Here, you can create a layer, which is a named group of objects. We will use this one to identify the objects that are not going to be affected by lighting. In the Layers list, look for an empty slot and type in any name for that group. In my case, I will only exclude the terrain, so I have just named it Terrain:

Figure 12.42: Layers list

- Once again, select the terrain, go to the Layer dropdown, and select the layer you created in the previous step. This way, you can specify that this object belongs to that group of objects:

Figure 12.43: Changing a GameObject’s layer

- Select all the spotlights, look for the Culling Mask in the Rendering section in the Inspector window, click it, and uncheck the layer you created previously. This way, you can specify that those lights won’t affect that group of objects:

Figure 12.44: Light Culling Mask

- Now, you can see how those selected lights are not illuminating or applying shadows to the terrain.
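Behind the checkboxes, a Culling Mask is a bit mask in which each layer occupies one bit. The following Python sketch shows that idea in isolation; it is not Unity's API, and the layer index and helper names are hypothetical.

```python
TERRAIN_LAYER = 8  # hypothetical index of the Terrain layer created above

EVERYTHING = 0xFFFFFFFF  # all 32 layer bits set, so every layer is affected


def exclude_layer(mask, layer):
    """Return the mask with the bit for `layer` cleared."""
    return mask & ~(1 << layer) & 0xFFFFFFFF


def light_affects(mask, layer):
    """Return True if the bit for `layer` is still set in the mask."""
    return (mask & (1 << layer)) != 0


if __name__ == "__main__":
    spotlight_mask = exclude_layer(EVERYTHING, TERRAIN_LAYER)
    print(light_affects(spotlight_mask, TERRAIN_LAYER))  # the terrain is skipped
    print(light_affects(spotlight_mask, 0))              # other layers are still lit
```

Unchecking a layer in the Culling Mask dropdown corresponds to clearing one bit, which is why a light can ignore a whole group of objects at once.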

Now, it’s time for the lights since the Static checkbox won’t work for them. For them, we have the following three modes:

- Realtime: A light in Realtime mode will affect all objects, both static and dynamic, using real-time lighting, meaning there’s no pre-calculation. This is useful for lights that are not static, such as the player’s flashlight, a lamp that is moving due to the wind, and so on.

- Baked: The opposite of Realtime, this kind of light will only affect static objects with lightmaps. This means that if the player (dynamic) moves under a baked light on the street (static), the street will look lit, but the player will still be dark and won’t cast any shadows on the street. The idea is to use this on lights that won’t affect any dynamic object, or on lights that are barely noticeable on them, so that we can increase performance by not calculating them.

- Mixed: This is the preferred mode in case you are not sure which one to use. This kind of light will calculate lightmaps for static objects, but will also affect dynamic objects, combining its Realtime lighting with the baked one (like Realtime lights also do).
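The three modes can be summarized as a small lookup table. This Python sketch only restates the descriptions above, before Light Probes come into play; the labels and the function name are made up for illustration and do not correspond to any Unity API.

```python
# How each Light Mode treats static and dynamic objects, as described above.
LIGHT_MODES = {
    "Realtime": {"static": "realtime", "dynamic": "realtime"},
    "Baked": {"static": "lightmap", "dynamic": "none"},
    "Mixed": {"static": "lightmap", "dynamic": "realtime"},
}


def lighting_for(mode, is_static):
    """Return how a light in `mode` lights a static or dynamic object."""
    key = "static" if is_static else "dynamic"
    return LIGHT_MODES[mode][key]


if __name__ == "__main__":
    # The player (dynamic) under a baked street light receives nothing from it.
    print(lighting_for("Baked", is_static=False))
    # The same player under a mixed light is lit in real time.
    print(lighting_for("Mixed", is_static=False))
```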

In our case, our Directional Light will only affect the terrain, and because we don’t have shadows, applying lighting to it is relatively cheap in URP, so we can leave the Directional Light in Realtime so that it won’t take up any lightmap texture area.

Our spotlights are affecting the base, but they are only applying lighting to it; we have no shadows because our base is empty. In this case, it is preferable to not calculate lightmapping whatsoever, but for learning purposes, I will add a few objects as obstacles to the base to cast some shadows and justify the use of lightmapping, as shown in the following image:

Figure 12.45: Adding objects to project light

Here, you can see how the original design of our level changes constantly during the development of the game, and that’s something you can’t avoid—bigger parts of the game will change over time. Now, we are ready to set up the Light Modes and execute the baking process, as follows:

- Select the Directional Light in the Hierarchy.

- Set the Mode property of the General section in the Inspector window to Realtime (if it’s not already in that mode).

- Select both Spotlights.

- Set their Mode property to Mixed:

Figure 12.46: Mixed lighting setting for Spotlights, the mode will be Realtime for the Directional Light

- Open the Lighting Settings window (Window | Rendering | Lighting).

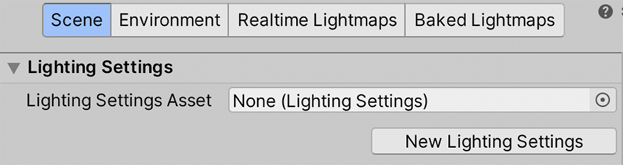

- We want to change some of the settings of the baking process. In order to enable the controls for this, click the New Lighting Settings button. This will create an asset with lightmapping settings that can be applied to several scenes in case we want to share the same settings multiple times:

Figure 12.47: Creating lighting settings

- Reduce the quality of lightmapping to make the process go faster. While iterating, the bake quality can easily be lowered through settings such as Lightmap Resolution, Direct Samples, Indirect Samples, and Environment Samples, all of them located under the Lightmapping Settings category. In my case, I have those settings applied, as shown in the following image. Note that even with those reduced, the bake will take time; we have too many objects in the scene due to the modular level design:

Figure 12.48: Scene lighting settings

- Click Generate Lighting, which is the same button we used previously to generate ambient lighting.

- Wait for the process to complete. You can follow it by checking the progress bar at the bottom-right of the Unity editor. Note that this process could even take hours in large scenes, so be patient:

Figure 12.49: Baking progress bar

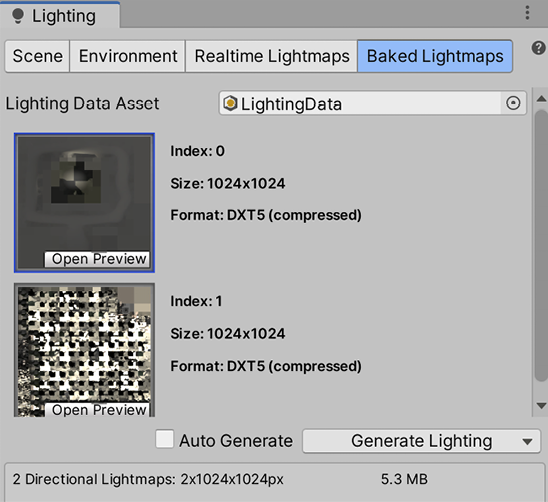

- After the process has completed, you can check the bottom part of the Lighting window, where you can see how many lightmaps were generated. There is a maximum lightmap resolution, so we will probably need several of them to cover the entire scene. The window also shows their size so that we can consider their impact in terms of RAM. Finally, you can check out the Baked Lightmaps section to see them:

Figure 12.50: Generated lightmaps

- Now, based on the results, you can move objects, modify light intensities, or make whatever correction you would need in order to make the scene look the way you want and recalculate the lighting every time you need to. In my case, those settings gave me good enough results, which you can see in the following image:

Figure 12.51: Lightmap result
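To see why the Lightmap Resolution setting has such a strong effect on the number of lightmaps, recall that it is expressed in texels per world unit, so the texel count grows with the square of that value. The following Python sketch is a rough estimate under simplifying assumptions: the function name and the example surface area are made up, and a real bake also needs padding between UV islands and never packs them perfectly.

```python
import math


def lightmaps_needed(surface_area, texels_per_unit, max_lightmap_size=1024):
    """Estimate how many square lightmaps are needed for a lightmapped area.

    surface_area is in square world units. The estimate ignores padding and
    packing waste, so treat the result as a lower bound.
    """
    texels = surface_area * texels_per_unit ** 2
    capacity = max_lightmap_size ** 2
    return math.ceil(texels / capacity)


if __name__ == "__main__":
    for resolution in (10, 20, 40):
        count = lightmaps_needed(2000, resolution)
        print(f"{resolution} texels per unit: at least {count} lightmap(s)")
```

Doubling the resolution quadruples the texels to bake, which affects both baking time and the memory taken by the resulting lightmaps.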

We still have plenty of small settings to touch on, but I will leave you to discover those through trial and error or by reading the Unity documentation about lightmapping at https://docs.unity3d.com/Manual/Lightmappers.html. The Unity manual is a good source of knowledge and I recommend that you start using it; any good developer, no matter how experienced, should read the manual.

Applying static lighting to dynamic objects

When marking objects as static in your scene, you probably figured out that none of the objects in the scene will move, so you probably checked the Static checkbox for all of them. That’s OK, but you should always put a dynamic object into the scene to be sure that everything works; no game has a totally static scene. Try adding a capsule and moving it around to simulate our player, as shown in the following image. If you pay attention to it, you will notice something odd: the shadows being generated by the lightmapping process are not being applied to our dynamic object:

Figure 12.52: Dynamic object under a lightmap’s precalculated shadow

You may be thinking that Mixed Light Mode was supposed to affect both dynamic and static objects, and that is exactly what it’s doing. The problem here is that everything related to static objects is pre-calculated into those lightmap textures, including the shadows they cast, and because our capsule is dynamic, it wasn’t there when the pre-calculation process was executed. So, in this case, because the object that cast the shadow was static, its shadow won’t affect any dynamic object.

Here, we have several solutions. The first would be to change the Static and Realtime mixing algorithm to make everything near the camera use Realtime lighting and prevent this problem (at least near the focus of attention of the player), which will have a big impact on performance. The alternative is to use Light Probes. When we baked information, we only did that on lightmaps, meaning that we have information on lighting just over surfaces, not in empty spaces. Because our player is traversing the empty spaces between those surfaces, we don’t know exactly how the lighting would look in those spaces, such as the middle of a corridor. Light Probes are a set of points in those empty spaces where Unity also pre-calculates information, so when some dynamic object passes through the Light Probes, it will sample information from them. In the following image, you can see some Light Probes that have been applied to our scene. You will notice that the ones that are inside shadows are going to be dark, while the ones exposed to light will have a greater intensity.

This effect will be applied to our dynamic objects:

Figure 12.53: Spheres representing Light Probes

If you move your object through the scene now, it will react to the shadows, as shown in the following two images, where you can see a dynamic object being lit outside a baked shadow and being dark inside:

Figure 12.54: Dynamic object receiving baked lighting from Light Probes
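The idea of sampling lighting from nearby probes can be sketched in a few lines of Python. This is a deliberately simplified model and not how Unity does it: Unity's own interpolation between probes is more sophisticated and stores more than a single brightness value. Here, each hypothetical probe holds one number and the object blends them by inverse distance.

```python
import math


def sample_probes(position, probes):
    """Blend probe brightness values by inverse distance to `position`.

    probes is a list of ((x, y, z), brightness) pairs. Closer probes
    contribute more to the result.
    """
    weighted_sum = 0.0
    weight_total = 0.0
    for probe_position, brightness in probes:
        distance = math.dist(position, probe_position)
        if distance == 0.0:
            # Standing exactly on a probe: use its value directly.
            return brightness
        weight = 1.0 / distance
        weighted_sum += weight * brightness
        weight_total += weight
    return weighted_sum / weight_total


if __name__ == "__main__":
    # One probe inside a baked shadow (0.0) and one in the light (1.0).
    probes = [((0.0, 0.0, 0.0), 0.0), ((4.0, 0.0, 0.0), 1.0)]
    for x in (0.0, 1.0, 2.0, 3.0, 4.0):
        print(x, round(sample_probes((x, 0.0, 0.0), probes), 2))
```

As the object moves from the shadowed probe toward the lit one, the sampled value rises smoothly, which is the behavior we see on the capsule in the images above.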

In order to create Light Probes, do the following:

- Create a group of Light Probes by going to GameObject | Light | Light Probe Group.

- Fortunately, we have some guidelines on where to place them. Ideally, you place them where the lighting changes, such as inside and outside shadow borders. However, that is complicated. The simplest approach is to just drop a grid of Light Probes all over your playable area. To do that, you can simply copy and paste the Light Probe Group several times to cover the entire base:

Figure 12.55: Light Probe grid

- Another approach would be to select one group and click the Edit Light Probes button to enter Light Probe edit mode:

Figure 12.56: Light Probe Group edit button

- Click the Select All button and then Duplicate Selected to duplicate all the previously existing probes.

- Using the translate gizmo, move them next to the previous ones, extending the grid in the process. Consider that the closer together the probes are, the more of them you will need to cover the area, which will generate more data. However, Light Probes data is relatively cheap in terms of performance, so you can have lots of them, as seen in Figure 12.55.

- Repeat the previous two steps until you’ve covered the entire area.

- Regenerate lighting with the Generate Lighting button in Lighting Settings.
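To get an idea of how probe spacing affects the amount of data, the grid layout can be sketched outside Unity. The following Python function is a hypothetical helper that only computes positions; it does not create a Light Probe Group, and the area size, spacing, and height values are made up.

```python
def probe_grid(width, depth, spacing, height=1.0):
    """Return (x, y, z) positions for a grid covering a width x depth area.

    Probes sit `spacing` units apart at a fixed `height` above the floor.
    """
    columns = int(width // spacing) + 1
    rows = int(depth // spacing) + 1
    return [
        (column * spacing, height, row * spacing)
        for row in range(rows)
        for column in range(columns)
    ]


if __name__ == "__main__":
    for spacing in (5.0, 2.5):
        positions = probe_grid(20.0, 10.0, spacing)
        print(f"spacing {spacing}: {len(positions)} probes")
```

In this example, halving the spacing raises the count from 15 to 45 probes for a single layer, so a denser grid captures lighting changes better at the cost of more baked data.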

With that, you have pre-calculated lighting on the Light Probes affecting our dynamic objects, combining both worlds to get cohesive lighting.

Summary

In this chapter, we discussed several lighting topics, such as how Unity calculates lights and shadows, how to deal with different light sources such as direct and indirect lighting, how to configure shadows, how to bake lighting to optimize performance, and how to combine dynamic and static lighting so that the lights aren’t disconnected from the world they affect. This was a long chapter, but lighting deserves that. It is a complex subject that can improve the look and feel of your scene drastically, as well as reduce your performance dramatically. It requires a lot of practice and here, we tried to summarize all the important knowledge you will need to start experimenting with it. Be patient with this topic; it is easy to get incorrect results, but you are probably just one checkbox away from solving it.

Now that we have improved all we can in the scene settings, in the next chapter, we will apply a final layer of graphic effects using the Unity Post-Processing Stack, which will apply full-screen image effects—the ones that will give us that cinematic look and feel that all games have nowadays.
