Overview of HDR Video

1 Introduction

Welcome to the book “HDR video: Concepts, Technologies and Applications.” This book contains chapters from experts in high dynamic range (HDR) video covering a wide collection of their latest research and development across all aspects of the HDR pipeline, from Capture, through Manipulate, to Display. You should read this book because many people, the editors included, think HDR is going to be the biggest change in TV quality since color. To anyone who has seen high quality HDR content on state-of-the-art HDR displays, the step change from existing display technology is clear.

But what is HDR? It is imagery that is as subtle, bright, and vibrant as the real world. There were analog HDR technologies in the past (e.g., a good 35-mm slide with a very bright projector), but now that almost all content is digital, we can make a more precise definition: HDR is more brightness than we are used to with traditional imaging. This means “enough” bits for brightness (not the 8 bits per channel of previous technologies), and for the highest brightness of the displayed imagery to be “bright enough.” What is “enough” is one of the main debates of HDR, and a source of disagreement between academia and industry. The first research papers on HDR appeared in the early 1990s (e.g., [1, 2]), so why has it taken more than 20 years for HDR to appear in consumer televisions?

First, remember that HD TV showed the same slow progress: it also took decades to deploy, for the good reason that adapting an entire industry of capture, transmission, and display is a very hard process. Second, advances in LED technology were needed to make the displays commercially attractive; we have finally reached that point. Finally, the much easier transition in resolution had to be played out to its limit. We seem to have reached that in 4K, except for specialized applications. As televisions make the move toward HDR, this book also includes a chapter from an economist, Christopher Moir, that examines factors that may influence the widespread uptake of HDR (Fig. 1).

HDR is coming, and it is going to impact every aspect of imaging.

This book is a major outcome of the EU Cost Action IC1005 that ran from May 2011 to May 2015 [3]. The key goals of IC1005 were to coordinate research and development in the field of High Dynamic Range (HDR) imaging across Europe, develop a vibrant community, together propose a new set of standards for the complete HDR pipeline, and establish Europe firmly as the world leader in HDR. Back in 2011, HDR was relatively unknown and the term was easily confused with HD = High Definition, that is, a screen resolution of 1920×1080 pixels. In fact, at that time, IC1005 considered supporting the term “True Brightness” to replace HDR to help minimize the confusion.

In 2012 the specification for UHDTV, ITU-R Recommendation BT.2020, was announced [4]. This contained five components to move televisions a significant step forward: higher screen resolution, both 4K (3840 × 2160 pixels) and 8K (7680 × 4320 pixels); higher dynamic range; wider color gamut; and higher frame rate. Consumer 4K televisions were quick to appear, but despite the significant increase in screen resolution, they failed to gain substantial market penetration. A key problem is that, unless one is very close to the screen, it is quite difficult for a viewer to see a difference between an HD and a 4K image. Of all the components of BT.2020, many user studies, for example, those by the 4Ever project [5], have clearly shown that dynamic range is the feature that users most notice, especially in a dark viewing environment. In 2016, the term HDR was thus seized upon by TV manufacturers as a “marketing tool” to sell more televisions.

This chapter documents the rise of HDR video and shows how IC1005 has tried to guide the process.

2 COST Action IC1005

COST Actions are European Union funded initiatives to bring together researchers, engineers, and scholars from EU and some other countries to develop new ideas and joint projects [6]. COST does not fund research itself, but rather networking, including short term scientific missions (STSMs) to enable young researchers to spend time in other labs across Europe.

Built around the three guiding principles of “coordination, collaboration, and acceleration,” COST Action IC1005 “HDRi: The digital capture, storage, transmission, and display of real-world lighting,” involved the key people and institutions undertaking or interested in HDR research and development across Europe (Fig. 2). At the end of the Action there were attendees from 44 institutions across 26 countries. Members of IC1005 played a leading role in substantially raising public awareness of HDR through their dissemination activities, including presenting the world’s first end-to-end real-time HDR systems at events such as IBC (Europe’s largest broadcast event) and NAB (which annually attracts over 92,000 participants), providing their vision of the future of television at the European Broadcasting Union’s annual conference, and hosting a dedicated event to showcase the benefits of HDR technology to national broadcasters. From the beginning, IC1005 engaged the services of professional cartoonists Lance Bell and Danny Burgess to graphic record all the meetings. As the cartoons in this chapter show, this resulted in highly valuable material for dissemination activities and other publicity material.

A first task of the COST Action was to define (Fig. 3) the terminology used within the HDR community to ensure seamless communication between academic and industrial members. The dynamic range of a scene is the ratio of the maximum light intensity to the minimum light intensity. However, what constituted high dynamic range was less clear. IC1005 agreed in September 2013 to use the term f-stop (or stop) to refer to the following contrast ratios:

$X$ f-stops = difference of $2^X$ = $2^X : 1$

So

16 f-stops = difference of $2^{16}$ = 65,536 : 1

This is normally noted as 100,000:1; approximately what the eye can see in a scene with no adaptation.

20 f-stops = difference of $2^{20}$ = 1,048,576 : 1

This is normally noted as 1,000,000:1; approximately what the eye can see in a scene with minimal (no noticeable) adaptation.

From this the following were defined:

Standard dynamic range (SDR) is ≤ 10 f-stops (aka low dynamic range (LDR));

Enhanced dynamic range (EDR) is > 10 f-stops and ≤ 16 f-stops;

High dynamic range (HDR) is > 16 f-stops.
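The relation between f-stops and contrast ratio, and the three ranges defined above, can be sketched in a few lines of Python. This is an illustrative sketch only; the function names are hypothetical and are not part of any IC1005 or MPEG document.

```python
import math


def stops_to_contrast(stops: float) -> float:
    """Contrast ratio N (as in N:1) spanned by the given number of f-stops."""
    return 2.0 ** stops


def contrast_to_stops(contrast: float) -> float:
    """Number of f-stops spanned by a contrast ratio of contrast:1."""
    return math.log2(contrast)


def classify(stops: float) -> str:
    """Label a dynamic range using the thresholds listed above."""
    if stops <= 10:
        return "SDR"
    if stops <= 16:
        return "EDR"
    return "HDR"


if __name__ == "__main__":
    for s in (10, 16, 20):
        print(f"{s} f-stops = {stops_to_contrast(s):,.0f}:1 -> {classify(s)}")
```

Note that exactly 16 f-stops still falls in the EDR band, because HDR is defined as strictly more than 16 f-stops.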

This definition of SDR, EDR, and HDR was subsequently adopted by the MPEG ad hoc committee on HDR in their Call for Evidence document in February 2015 [7] and has largely been accepted by the scientific community. However, it is important to point out that the media industry does not make any distinction between EDR and HDR. Therefore many current commercial HDR claims are actually EDR, according to the IC1005 and MPEG scales of dynamic range. There are a number of other ways of defining dynamic range. Table 2 provides a useful means for converting from one definition to another.

Table 1

Objective measure of camera dynamic range [16]

| Camera | Stops @ 0.5 RMS noise | Vendor spec. (stops) |
| --- | --- | --- |
| RED DRAGON - HDR x6, Log Film, Total | 11.8 + ∼3 | 16.5+ |
| ARRI Alexa - Log | 13.9 | 14.0 |
| Canon 5DM3 - Magic Lantern H.264 (ISO 400/1600) | 11.4 | 10.0 |
| Black Magic Cinema 4K - Film | 9.0 | 12.0 |

Table 2

Dynamic Range Conversion Chart

| Density, $\log_{10}(CR)$ | Decades | Contrast ratio (CR), $10^{\text{Density}}$ | DR (dB), $20\log_{10}(CR)$ | DR (stops), $\log_{2}(CR)$ | Bits required, linear (1% RS) | Bits required, log (1% RS) | Bits required, LogLuv (0.27% RS) | Terms | HVS |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| 0 | $10^{0}$ | 1.0 | 0 | 0.0 | 0.0 | 0.0 | 0.0 | LDR | HVS with no adaptation |
| 0.15 | | 1.4 | 3 | 0.5 | 7.1 | 5.1 | 7.0 | | |
| 0.2 | | 1.6 | 4 | 0.7 | 7.3 | 5.5 | 7.4 | | |
| 0.3 | | 2.0 | 6 | 1.0 | 7.6 | 6.1 | 8.0 | | |
| 0.4 | | 2.5 | 8 | 1.3 | 8.0 | 6.5 | 8.4 | | |
| 0.6 | | 4.0 | 12 | 2.0 | 8.6 | 7.1 | 9.0 | | |
| 0.8 | | 6.3 | 16 | 2.7 | 9.3 | 7.5 | 9.4 | | |
| 1 | $10^{1}$ | 10 | 20 | 3.3 | 10.0 | 7.9 | 9.7 | | |
| 1.2 | | 16 | 24 | 4.0 | 10.6 | 8.1 | 10.0 | | |
| 1.4 | | 25 | 28 | 4.7 | 11.3 | 8.3 | 10.2 | | |
| 1.6 | | 40 | 32 | 5.3 | 12.0 | 8.5 | 10.4 | | |
| 1.8 | | 63 | 36 | 6.0 | 12.6 | 8.7 | 10.6 | | |
| 2 | $10^{2}$ | 100 | 40 | 6.6 | 13.3 | 8.9 | 10.7 | | |
| 2.2 | | 158 | 44 | 7.3 | 14.0 | 9.0 | 10.9 | | |
| 2.4 | | 251 | 48 | 8.0 | 14.6 | 9.1 | 11.0 | | |
| 2.6 | | 398 | 52 | 8.6 | 15.3 | 9.2 | 11.1 | | |
| 2.8 | | 631 | 56 | 9.3 | 15.9 | 9.3 | 11.2 | | |
| 3 | $10^{3}$ | 1000 | 60 | 10.0 | 16.6 | 9.4 | 11.3 | | |
| 3.2 | | 1585 | 64 | 10.6 | 17.3 | 9.5 | 11.4 | EDR | |
| 3.4 | | 2512 | 68 | 11.3 | 17.9 | 9.6 | 11.5 | | |
| 3.6 | | 3981 | 72 | 12.0 | 18.6 | 9.7 | 11.6 | | |
| 3.8 | | 6310 | 76 | 12.6 | 19.3 | 9.8 | 11.7 | | HVS with minimal adaptation |
| 4 | $10^{4}$ | 10,000 | 80 | 13.3 | 19.9 | 9.9 | 11.7 | | |
| 4.2 | | 15,849 | 84 | 14.0 | 20.6 | 9.9 | 11.8 | | |
| 4.4 | | 25,119 | 88 | 14.6 | 21.3 | 10.0 | 11.9 | | |
| 4.6 | | 39,811 | 92 | 15.3 | 21.9 | 10.1 | 11.9 | | |
| 4.8 | | 63,096 | 96 | 15.9 | 22.6 | 10.1 | 12.0 | | |
| 5 | $10^{5}$ | 100,000 | 100 | 16.6 | 23.3 | 10.2 | 12.1 | HDR | |
| 5.2 | | 158,489 | 104 | 17.3 | 23.9 | 10.2 | 12.1 | | |
| 5.4 | | 251,189 | 108 | 17.9 | 24.6 | 10.3 | 12.2 | | |
| 5.6 | | 398,107 | 112 | 18.6 | 25.2 | 10.3 | 12.2 | | |
| 5.8 | | 630,957 | 116 | 19.3 | 25.9 | 10.4 | 12.3 | | |
| 6 | $10^{6}$ | $10^{6}$ | 120 | 19.9 | 26.6 | 10.4 | 12.3 | | |
| 6.2 | | $10^{6.2}$ | 124 | 20.6 | 27.2 | 10.5 | 12.4 | | HVS with full adaptation |
| 6.4 | | $10^{6.4}$ | 128 | 21.3 | 27.9 | 10.5 | 12.4 | | |
| 6.6 | | $10^{6.6}$ | 132 | 21.9 | 28.6 | 10.6 | 12.5 | | |
| 6.8 | | $10^{6.8}$ | 136 | 22.6 | 29.2 | 10.6 | 12.5 | | |
| 7 | $10^{7}$ | $10^{7}$ | 140 | 23.3 | 29.9 | 10.7 | 12.5 | | |
| 7.2 | | $10^{7.2}$ | 144 | 23.9 | 30.6 | 10.7 | 12.6 | | |
| 7.4 | | $10^{7.4}$ | 148 | 24.6 | 31.2 | 10.7 | 12.6 | | |
| 7.6 | | $10^{7.6}$ | 152 | 25.2 | 31.9 | 10.8 | 12.7 | | |
| 7.8 | | $10^{7.8}$ | 156 | 25.9 | 32.6 | 10.8 | 12.7 | | |
| 8 | $10^{8}$ | $10^{8}$ | 160 | 26.6 | 33.2 | 10.9 | 12.7 | | |
| 9 | $10^{9}$ | $10^{9}$ | 180 | 29.9 | 36.5 | 11.0 | 12.9 | | |
| 10 | $10^{10}$ | $10^{10}$ | 200 | 33.2 | 39.9 | 11.2 | 13.1 | | |
| 11 | $10^{11}$ | $10^{11}$ | 220 | 36.5 | 43.2 | 11.3 | 13.2 | | |
| 12 | $10^{12}$ | $10^{12}$ | 240 | 39.9 | 46.5 | 11.4 | 13.3 | | |
| 13 | $10^{13}$ | $10^{13}$ | 260 | 43.2 | 49.8 | 11.6 | 13.4 | | |
| 14 | $10^{14}$ | $10^{14}$ | 280 | 46.5 | 53.2 | 11.7 | 13.5 | | |
| 15 | $10^{15}$ | $10^{15}$ | 300 | 49.8 | 56.5 | 11.8 | 13.6 | | |
| 16 | $10^{16}$ | $10^{16}$ | 320 | 53.2 | 59.8 | 11.9 | 13.7 | | |
| 17 | $10^{17}$ | $10^{17}$ | 340 | 56.5 | 63.1 | 11.9 | 13.8 | | |
| 18 | $10^{18}$ | $10^{18}$ | 360 | 59.8 | 66.4 | 12.0 | 13.9 | | |

Source: Courtesy of Brian Karr.
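The conversions in Table 2 between density, contrast ratio, decibels, and stops follow directly from the column headings, and can be sketched as below. The two bit-depth helpers are an assumption: their formulas are inferred here from the tabulated values (they reproduce the printed numbers for the rows checked) and are not stated in the chart itself. All function names are hypothetical.

```python
import math


def convert(density: float) -> dict:
    """Convert a density (log10 of contrast ratio) to the other representations."""
    contrast = 10.0 ** density
    return {
        "contrast_ratio": contrast,
        "db": 20.0 * math.log10(contrast),
        "stops": math.log2(contrast),
    }


def bits_linear(contrast: float, rel_step: float = 0.01) -> float:
    """ASSUMPTION (inferred from the table): a linear code needs contrast/rel_step levels."""
    return math.log2(contrast / rel_step)


def bits_log(contrast: float, rel_step: float = 0.01) -> float:
    """ASSUMPTION (inferred from the table): a log code needs one level per relative step.

    Only meaningful for contrast > 1.
    """
    return math.log2(math.log(contrast) / math.log(1.0 + rel_step))


if __name__ == "__main__":
    row = convert(3.0)  # the 1000:1 row of the chart
    print(row, bits_linear(1000.0), bits_log(1000.0), bits_log(1000.0, 0.0027))
```

Under these assumptions, the gap between the linear and log columns shows why a logarithmic or perceptual encoding needs far fewer bits than a linear one for the same relative step.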

One of the key impacts of IC1005 was the push for standards (Fig. 4) that can bring about the seamless connection of the three components of the end-to-end HDR pipeline: Capture, Manipulate, and Display. Only through such standardization will the uptake of HDR be widespread and sustainable. In this endeavor, IC1005 offered its own solution and made significant input to MPEG’s goal of introducing HDR into its standards. However, MPEG chose not to accept IC1005’s solution, preferring SMPTE ST2084 [8] and ARIB STD-B67 [9]. Details of IC1005’s proposal, together with source code, etc., are available from Ref. [10].

In addition to proposing a new standard for the entire HDR pipeline, IC1005 also delivered, via four Working Groups, the following:

• HDR file formats for individual frames used in high fidelity applications, and for complete sequences of HDR footage for distribution and delivery.

• HDR quality metrics for three different purposes: the first assesses the quality of the captured and stored image compared to the real-world scene; the second enables the quality of the compressed footage to be judged; and the third allows a detailed comparison of the image finally displayed to the viewer with the real scene that was captured.

• An HDR compression benchmark: a straightforward compression benchmark, including challenging test footage, against which future commercial compression algorithms can be compared.

• An uptake plan that served to highlight at all stages of the project where the partners can make major contributions in support of the widespread adoption of HDR and generate substantial interest in the Action and HDR.

The Action also resulted in significant dissemination of information and contributed to advancing the scientific/technological state-of-the-art in the area of HDR imaging. This was achieved via scientific publications, numerous meetings, training schools, STSMs, conferences, workshops, newsletters, etc. In total, members of IC1005 chaired 4 special sessions on HDR at major international conferences; edited 3 special issues on HDR for three leading journals, and edited 2 books on HDR video, including this one.

2.1 Success Stories

In the four years of operation, COST Action IC1005 achieved significant success in three key areas:

• closing the gap in expertise,

• transfer of knowledge, and

• raising awareness of HDR.

While a number of participants in IC1005 were already world-leading authorities on HDR, many others only had an interest in the field. Access to the experts and also novel HDR equipment played an important role in allowing these initial non-experts to become authorities in their own right and thus help to significantly raise the profile of HDR in their respective countries. The vibrant HDR community that IC1005 fostered allowed knowledge to be transferred from the academic partners to industry and the industrial needs to be transferred to the academics, to the mutual benefit of both. Now HDR is perhaps the leading topic within the television industry and amongst related standardization bodies. Below are a few examples of the successes that IC1005 has helped to bring about (Fig. 5).

2.1.1 HDR in Dental X-ray Imaging

Zeljen Trpovski, Faculty of Technical Sciences, Novi Sad, Serbia

Although Zeljen Trpovski had an interest in HDR imaging for 10 years, IC1005 spurred him on to undertake some new challenging applications. The application he chose was the use of HDR in dental X-ray images (Fig. 6). Dental radiologic images contain many details, and distinguishing and accurately locating them is of significant interest in several medical fields. IC1005 provided him with the opportunity to meet with experts and gain new and interesting ideas for the continuation of his research. Particularly useful was the provision of the HDR MATLAB toolbox by Dr Banterle (IT MC substitute). Preliminary results were presented at the first International Conference and SME Workshop on HDR imaging in 2013 [11].

2.1.2 HDR Tone Mapping in Game Development

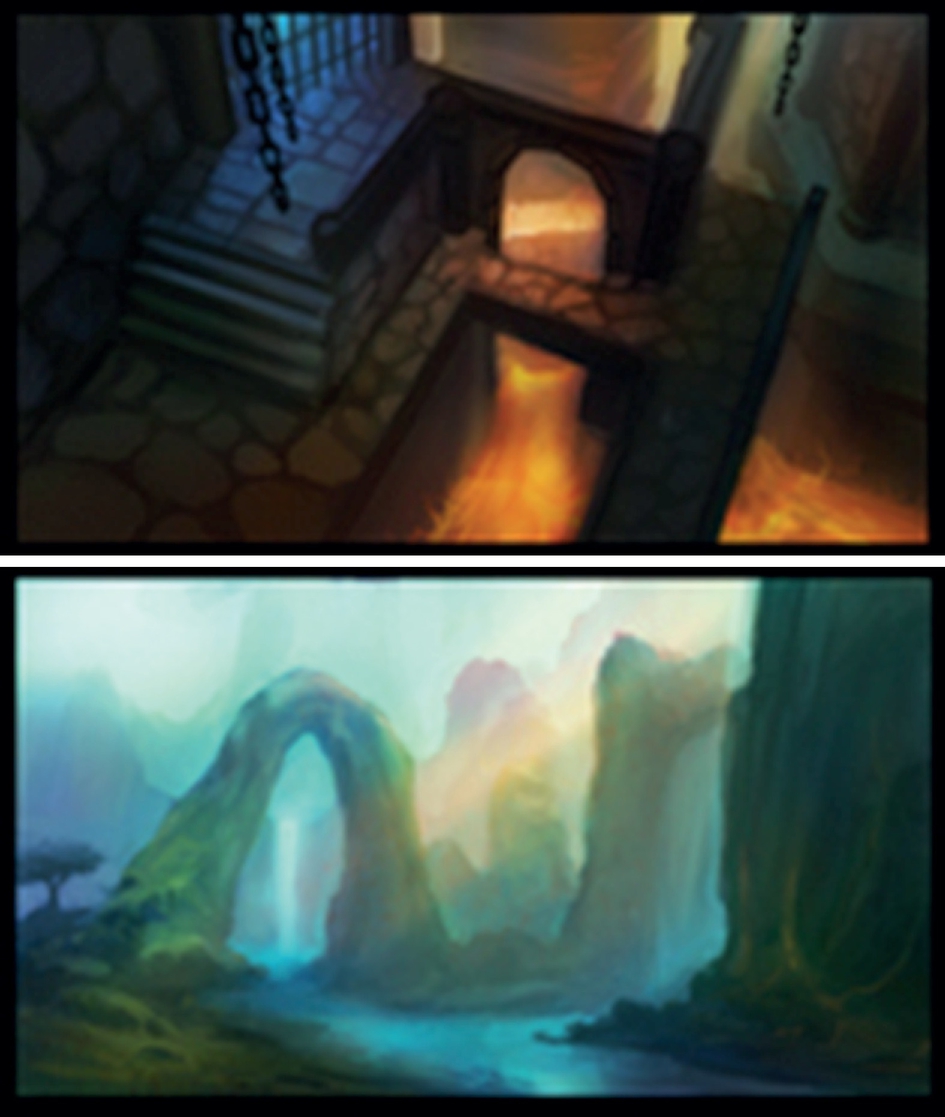

Igor Nedelkovski, University “St. Kliment Ohridski,” Bitola, FYR Macedonia

Prior to COST Action IC1005, researchers from the Faculty of Technical Sciences, University “St. Kliment Ohridski,” Bitola (FTS) and Motion Universe (MU), a small company owned by ex-students of the FTS, were very experienced with computer graphics, but had little or no knowledge about HDR. As a result of their active participation in the COST Action, including attending the working group and management committee meetings and the Training Schools, substantial knowledge was gained; so much so that they decided to apply this knowledge to the development of a computer game for mobile platforms (Android, iOS, Blackberry). The game, Escape Medieval, was thus rendered almost entirely using HDR tone mapping (Fig. 7). As the images show, the quality of the resultant game was significantly higher than similar, competitive mobile games available on the market today. As a result of this success, HDR will be introduced into computer graphics courses at FTS and the knowledge disseminated to other universities and businesses in the Western Balkans.

2.1.3 New PhD Topic at Vienna University of Technology

Margrit Gelautz, TU Vienna

The COST Action motivated TU Vienna to combine their previous experience on stereo analysis with HDR topics (Fig. 8). A new Ph.D. topic on “HDR Stereo Matching” was formulated and financially supported by the Vienna Ph.D. School of Informatics (http://www.informatik.tuwien.ac.at/teaching/phdschool). TU Vienna published papers at the two HDRi Workshops and the Special Session on HDRi at Eusipco 2014. The knowledgeable and very detailed feedback on the papers by the reviewers (chosen amongst the experts of the COST Action) gave TU Vienna valuable input to improve the papers and stimulate future work.

2.1.4 The Growth of HDR in France

Kadi Bouatouch and Rémi Cozot, University of Rennes, Ronan Boitard, Technicolor

Inspired by the vision of HDR that IC1005 was putting forward, the following companies and institutions in France put together the Nevex proposal (Fig. 9): Technicolor, Telecom ParisTech, University of Nantes, Dxo Lab, Dxo Sig, Binocle, Transvideo, Thomson Video Networks, AcceptTV, TF1, and Polymorph. This nationally funded project considered the design of a complete HDR chain: acquisition, compression, transmission, and display. Members of Nevex were very active in IC1005. A key output of this project was the groundbreaking Ph.D. by R. Boitard on HDR video tone mapping.

2.1.5 HDR Imaging of Rocket Launches

Brian Karr, Kennedy Space Center, Alan Chalmers, University of Warwick

Contact was made between the head of the Advanced Imaging Lab at Kennedy Space Center, Brian Karr, and the Chair of IC1005, Alan Chalmers, as a direct result of IC1005’s dissemination activity at NAB in April 2013. The outcome of this meeting was that a team from goHDR/University of Warwick went to the Kennedy Space Center in July 2013 to film a rocket launch in HDR (Fig. 10).

2.1.6 World’s First Complete Real-Time HDR Broadcast

Alan Chalmers, University of Warwick, Igor Olaizola, Vicomtech, Domenico Toffoli, SIM2

Assisted by earlier STSM visits between the University of Warwick, UK and Vicomtech, ES, a world-first end-to-end live HDR pipeline was demonstrated at NAB 2014 in April 2014 (Fig. 11). This annual event attracts over 92,000 participants and thus it was deemed a highly appropriate venue to show this system for the first time. The system was shown on a stand at the Futures Park section of NAB as part of an IC1005 dissemination activity. The system attracted significant interest. The work for NAB 2014 led directly to the world’s first professional end-to-end HDR pipeline when the prestigious German camera manufacturer ARRI joined the project. This professional system was showcased at NAB 2015, again as part of an IC1005 dissemination activity.

2.1.7 Evaluation of HDR Usage for Performance Art Projects

Emmanouela Vogiatzaki, RFSAT Ltd and University of Peloponnesus, Artur Krukowski, Intracom S. A. Telecom Solutions, Alan Chalmers, University of Warwick

Following a visit by Vogiatzaki to the University of Warwick in July 2014, a range of trials of HDR cameras has been undertaken to capture scenographies of performance projects in theatrical environments (Fig. 12). The challenge was to achieve sufficient dynamic range to capture subjects in deep shadow at the same time as brightly lit main subjects. The results have shown some clear advantages of using HDR technology in the performing arts; however, more work is needed before such technology can be used in live performances.

3 The HDR Video Pipeline

With HDR video, the full range of lighting in the scene can be captured and delivered to the viewer. To achieve such a “scene referred” ability, 32-bit IEEE floating point values are needed to represent each color channel. At 96 bits per pixel (bpp), compared with just 24 bpp for SDR, a single uncompressed HDR frame at 4K UHD resolution (3840×2160 pixels, 4:4:4) requires approximately 94.92 MB of storage, and a minute of footage at 30 fps needs about 167 GB. Such a large amount of data cannot be handled on existing ICT infrastructure, and thus efficient compression is one of the keys necessary for the success of HDR video.
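The storage figures above can be checked with a short calculation. The sketch below assumes three color channels per pixel with no chroma subsampling (4:4:4) and reports sizes in binary units (1 MB taken as 1024² bytes), which is the convention that reproduces the per-frame figure quoted in the text; the function name is hypothetical.

```python
def frame_bytes(width: int, height: int, channels: int = 3,
                bits_per_channel: int = 32) -> int:
    """Size in bytes of one uncompressed 4:4:4 frame."""
    return width * height * channels * bits_per_channel // 8


MIB = 1024 ** 2
GIB = 1024 ** 3

hdr_frame = frame_bytes(3840, 2160)                      # 32-bit float per channel
sdr_frame = frame_bytes(3840, 2160, bits_per_channel=8)  # 8 bits per channel
hdr_minute = hdr_frame * 30 * 60                         # one minute at 30 fps

if __name__ == "__main__":
    print(f"HDR frame:  {hdr_frame / MIB:.2f} MiB")
    print(f"SDR frame:  {sdr_frame / MIB:.2f} MiB")
    print(f"HDR minute: {hdr_minute / GIB:.1f} GiB")
```

The HDR frame is exactly four times the size of the SDR frame, which is simply the ratio of 96 bpp to 24 bpp.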

3.1 Capture

Until single sensors are capable of capturing the full range of light in a scene, a number of other approaches are necessary. One of the most popular methods of HDR capture is to use multiple exposures with different exposure times to create a single HDR frame [12]. However, if anything in the scene, or the camera itself, moves while the exposures are being taken, undesirable ghosting artefacts can occur. In this case, sophisticated deghosting algorithms, such as those described in Chapter 1, are required. This is even more of a challenge if live broadcast of HDR video is desired [13]. Another approach is to use multiple sensors with the same integration time through a single lens. A number of prototypes of such systems have been built, for example, [14] (which was able to achieve 20 f-stops at 30 fps), and [15], but as yet, these are not widely available. A survey of 18 commercial cameras was undertaken in [16] to objectively measure the dynamic range they were capable of capturing. The goal was to determine their suitability for capturing all the detail during a rocket launch. Table 1 shows some results from that survey. Details of how the dynamic range of the cameras was measured are given in Chapter 4.
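The multiple-exposure idea can be illustrated for a single pixel. The sketch below is a simplified weighted average, not the specific method of [12]: it assumes a perfectly linear sensor response, a static scene (so no deghosting is needed), and pixel values already normalized to [0, 1]. The function names and the "hat" weighting are choices made for this illustration.

```python
def hat_weight(value: float) -> float:
    """Trust mid-range values most; clipped (0 or 1) values get zero weight."""
    return value if value <= 0.5 else 1.0 - value


def merge_exposures(samples) -> float:
    """Estimate relative scene radiance for one pixel.

    samples: iterable of (linear pixel value in [0, 1], exposure time in seconds).
    """
    samples = list(samples)
    numerator = 0.0
    denominator = 0.0
    for value, exposure_time in samples:
        weight = hat_weight(value)
        # For a linear sensor, value / exposure_time is proportional to radiance.
        numerator += weight * value / exposure_time
        denominator += weight
    if denominator == 0.0:
        # Every sample is clipped: fall back to the shortest exposure.
        value, exposure_time = min(samples, key=lambda s: s[1])
        return value / exposure_time
    return numerator / denominator


if __name__ == "__main__":
    radiance = 2.0
    times = (1.0, 0.25, 0.0625)
    # Simulate a sensor that saturates at 1.0.
    samples = [(min(1.0, radiance * t), t) for t in times]
    print(merge_exposures(samples))
```

In the usage example, the longest exposure saturates and is ignored, while the two shorter exposures agree on the underlying radiance.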

3.2 Compress

As mentioned above, compression is one of the keys to the successful uptake of HDR video on existing ICT infrastructure. A number of HDR video compression methods have been proposed in the last decade. Initially the evaluation of these was restricted by the lack of available HDR video footage. This is no longer a problem, with a number of sources of HDR video now available, including from the University of Stuttgart [17] and for the MPEG community.

As discussed further in Chapter 8, HDR video compression may be classified as one-stream or two-stream [18]. The one-stream approach utilizes a single-layer transfer function to map the HDR content to a fixed number of bits, typically 10 or 12. Examples include [19–24]. One problem with one-stream methods is that they do not work for 8-bit devices, which include a large number of legacy displays and mobile devices. With mobile devices, this is a major limitation as more and more video is being consumed on them; more than 51% of traffic on mobile devices is video viewing [25].

In two-stream compression methods, there is one input HDR video stream to the encoder, which produces two bit streams as output. Two-stream methods can be used for 8-bit and higher infrastructures. These streams can consist of (1) a standard-compliant bit stream, for example, HEVC Main 10, H.264, etc., and (2) another stream corresponding to the additional data needed to reconstruct the HDR video. At the decoder, these two streams are recombined to produce the HDR video stream. Examples of two-stream compression for HDR video include [26–29].

3.3 Display

In January 2016 a consortium of TV manufacturers, broadcasters, and content producers was formed. Known as the UHD Alliance [30], their goal is to promote the criteria by which they would judge future platforms to be suitable for delivering a “premium 4K experience.” Despite IC1005’s definition of HDR being adopted by MPEG [7], UHD Alliance proposed two of their own definitions of HDR, both of which are “display referred”:

1. 1000 nits peak brightness and <0.05 nits black level (contrast ratio 20,000:1, 14.3 f-stops),

2. >540 nits brightness and <0.0005 nits black level (assuming it is possible to measure 0.0005 nits, this gives a contrast ratio of 1,080,000:1, ≈20 f-stops).

These two definitions for HDR were chosen to satisfy manufacturers of different television technologies. Option 1 is for those with LED based TVs which have higher brightness, but inferior black levels, while Option 2 is for OLED based TVs which have deep blacks, but much lower peak brightness levels [31].
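The contrast ratios and f-stop figures quoted for the two options can be reproduced from the peak and black levels. The sketch below uses the limit values of each option (the definitions are inequalities, so real displays may differ) and compares the result with the 10 and 16 f-stop thresholds from Section 2; the function and variable names are hypothetical.

```python
import math


def display_stops(peak_nits: float, black_nits: float) -> float:
    """Dynamic range of a display in f-stops, from its peak and black levels."""
    return math.log2(peak_nits / black_nits)


# Limit values of the two UHD Alliance options quoted above.
OPTIONS = {
    "Option 1 (LED)": (1000.0, 0.05),
    "Option 2 (OLED)": (540.0, 0.0005),
}

if __name__ == "__main__":
    for name, (peak, black) in OPTIONS.items():
        stops = display_stops(peak, black)
        label = "HDR" if stops > 16 else "EDR" if stops > 10 else "SDR"
        print(f"{name}: {peak / black:,.0f}:1, {stops:.1f} f-stops -> {label}")
```

On the IC1005 scale, the limit values of Option 1 fall in the EDR band, while those of Option 2 exceed 16 f-stops.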

The UHD Alliance’s “display referred” definition of HDR has already led to a number of TV manufacturers marketing their televisions as “HDR displays.” Such “consumer HDR” displays, however, have a major limitation. They do not provide an overall brighter image. Instead the average picture level (APL) of the frames is maintained and an HDR effect is achieved by (a) providing more details in the darker regions of the scene and (b) having (a few) brighter highlights [32]. Because of the HDR metadata, the TV has to be driven at its maximum backlight capacity in order to provide the required highlights. However, this means that the overall brightness of the screen cannot simply be increased as you can with an SDR display. HDR content on these displays should thus not be viewed in ambient lighting levels exceeding 5 nits [33]. The situation is even worse for HDR displays based on OLED technology. For such displays, viewing conditions darker than 5 nits, and even the absence of white walls, are recommended [33]. A key reason for this limitation is that every value of the ST.2084 transfer function is directly mapped to the same specific output luminance level on all HDR displays. This absolute mapping is not flexible and is unable to adapt to different ambient lighting levels.

The 1000-nit peak luminance of consumer HDR displays is significantly lower than the 4000-nit HDR displays that have been available from the innovative Italian company SIM2 since 2009. Derived from the pioneering HDR displays developed by Brightside [34], SIM2’s latest HDR47 display has a peak luminance of 6000 nits (Fig. 13). This is achieved via a backlight of 2202 LEDs, each of which has 12 bits of brightness resolution. A key feature of the SIM2 display is the attention to power management and cooling. This allows the 6000-nit display to have a 1.5-kW power supply limit with a standard 110-V plug. A 10,000-nit display is due to be showcased at IBC in September 2016.

4 Discussion

Public awareness of HDR has come a long way in the last 5 years. Most of the major television manufacturers are marketing HDR-TVs, while camera manufacturers are providing more f-stops from their cameras. For compression, MPEG are also close to proposing a standard which is likely to include both the Perceptual Quantization (PQ) and Hybrid Log-Gamma (HLG) methods. In July 2016 the European Broadcasting Union (EBU) announced ITU-R BT.2100-0, their recommendations for “Image parameter values for high dynamic range television for use in production and international programme exchange” [35]. The recommendation also covers PQ and HLG. Interestingly, BT.2100 acknowledges: “The Administrations of France and the Netherlands have expressed concerns regarding the characteristics and performance of HDR-TV. Further studies are needed and may lead to a revision of this Recommendation, as appropriate, under the terms of Resolution ITU-R 1-7” [35].

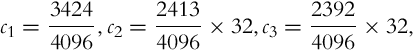

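There is growing evidence that the methods being considered and adopted by the manufacturers and standards bodies are not the best. PQ and HLG are computationally expensive. The PQ encoding of SMPTE ST 2084 [8] is

$$E' = \left(\frac{c_1 + c_2 Y^{m_1}}{1 + c_3 Y^{m_1}}\right)^{m_2}$$

where $Y$ is the linear luminance normalized to $[0, 1]$, $m_1 = 2610/16384$, $m_2 = 2523/4096 \times 128$, $c_1 = 3424/4096$, $c_2 = 2413/4096 \times 32$, and $c_3 = 2392/4096 \times 32$.

Furthermore, HLG includes a branch instruction, which limits its efficient implementation in hardware. The HLG encoding of ARIB STD-B67 [9] is

$$E' = \begin{cases} r\sqrt{E}, & 0 \le E \le 1 \\ a\ln(E - b) + c, & E > 1 \end{cases}$$

where $E$ is the linear signal normalized by the reference white level, $r = 0.5$, $a = 0.17883277$, $b = 0.28466892$, and $c = 0.55991073$.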

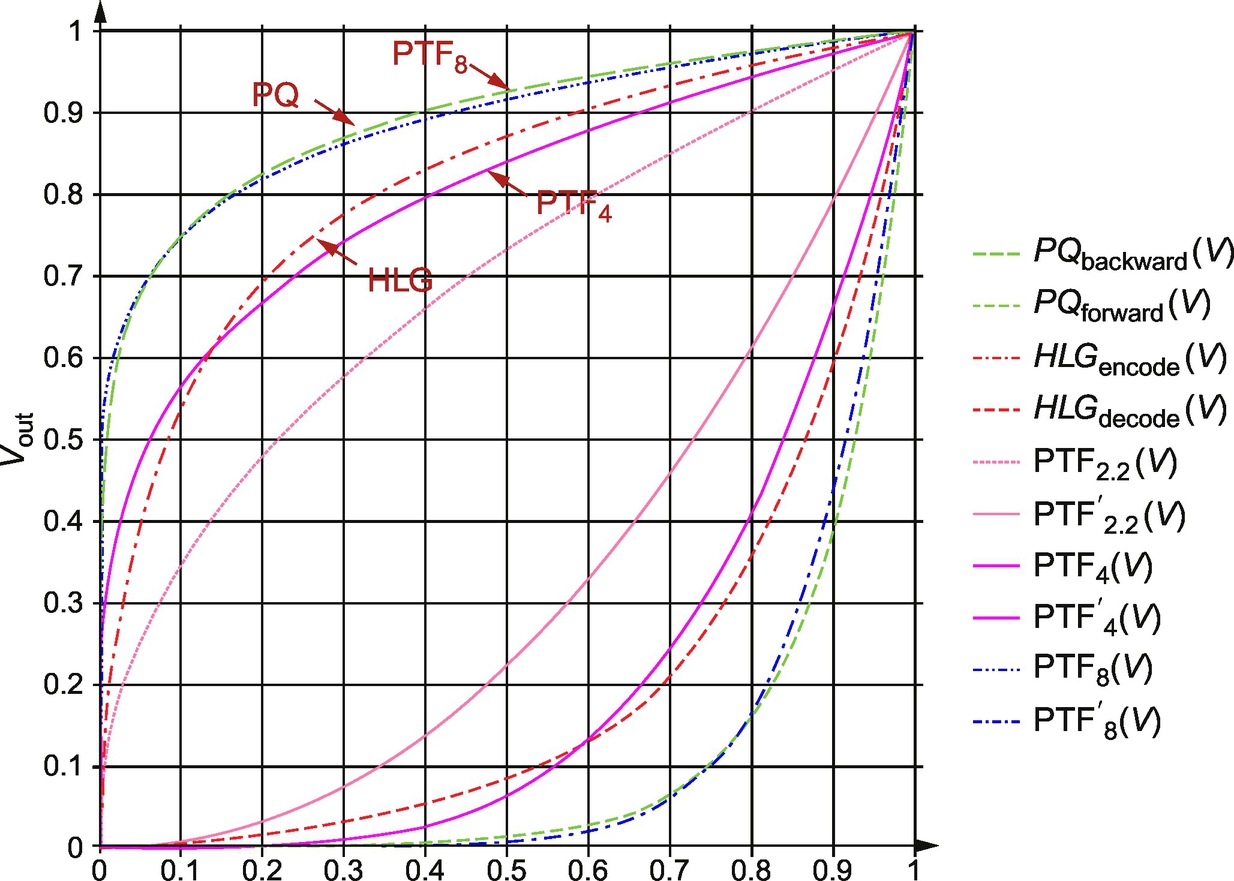

The recently proposed power transfer function (PTF) [36], on the other hand, is highly efficient (29 times more efficient than PQ, and 1.5 times more efficient than even an LUT implementation of PQ or HLG, without the memory overheads) and has been shown to deliver better quality than either PQ or HLG [36]:

$$\mathrm{PTF}_{\gamma}(V) = V^{1/\gamma}$$

where $\gamma$ is a variable, for example, 4 or 8, and $V$ is the normalized HDR value.

As Fig. 14 shows, PTF with γ = 8 is very similar to the PQ curve, while γ = 4 closely approximates HLG. When compressing HDR video with PTF, the input HDR frames first have to be normalized to the range [0, 1] with a normalization factor ℵ using the relation L = S/ℵ, where S is the full-range HDR data. Unlike 10-bit PQ, which is limited by a peak luminance of 4000 nits (12-bit PQ has a limit of 10,000 nits), PTF is not limited by a specific peak luminance.
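The difference in complexity between the three transfer functions discussed above is easy to see when they are written out. The sketch below is illustrative only. The PQ constants are those of SMPTE ST 2084 and are not quoted in this chapter; the HLG constants are the ones given in the text; the PTF is written as a plain power function with exponent 1/γ, which is an assumption about its form. The function names, the `normalize` helper, and the 10-bit quantizer are hypothetical.

```python
import math

# PQ constants (SMPTE ST 2084); not quoted in the chapter text.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32

# HLG constants as given in the text.
R, A, B, C = 0.5, 0.17883277, 0.28466892, 0.55991073


def pq_encode(y: float) -> float:
    """PQ: y is linear luminance in [0, 1], where 1 corresponds to 10,000 nits."""
    p = y ** M1
    return ((C1 + C2 * p) / (1.0 + C3 * p)) ** M2


def hlg_encode(e: float) -> float:
    """HLG: e is the linear signal relative to reference white (range 0 to 12)."""
    if e <= 1.0:  # the branch mentioned in the text
        return R * math.sqrt(e)
    return A * math.log(e - B) + C


def normalize(s: float, norm_factor: float) -> float:
    """Normalize full-range HDR data S by the normalization factor: L = S / factor."""
    return s / norm_factor


def ptf_encode(v: float, gamma: float = 4.0) -> float:
    """ASSUMED form of PTF: a single power function of normalized HDR in [0, 1]."""
    return v ** (1.0 / gamma)


def ptf_decode(code: float, gamma: float = 4.0) -> float:
    """Inverse of ptf_encode."""
    return code ** gamma


def quantize(code: float, bits: int = 10) -> int:
    """Map an encoded value in [0, 1] to an integer code of the given bit depth."""
    return round(code * (2 ** bits - 1))


if __name__ == "__main__":
    v = normalize(400.0, 4000.0)  # e.g. 400 nits with a 4000-nit normalization factor
    print(quantize(ptf_encode(v, gamma=8.0)), quantize(pq_encode(400.0 / 10000.0)))
```

PQ needs two power evaluations and a division per sample and HLG needs a branch plus a square root or logarithm, whereas the power function needs a single exponentiation, which for γ = 4 or 8 reduces to repeated square roots.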

HDR first wave—consumer HDR: The current trend of HDR, such as espoused by the UHD Alliance can be considered a “first wave” of HDR products. The HDR pipeline is very much “display referred”; constrained by the peak luminance that consumer HDR displays are capable of showing, typically 1000 nits. One positive outcome of this “first wave” is far wider user recognition of the term HDR (albeit not the definition proposed by IC1005 and adopted by MPEG). Although HDR TVs will be sold in increasing numbers in the coming months, especially as more HDR content becomes available, at some point viewers will no longer want to watch HDR in a dark room; this will give rise to a “second wave” of HDR technology.

HDR second wave—future-proof HDR: The second wave of HDR technology will be “scene referred,” with the HDR pipeline no longer constrained by what a display is capable of showing. Rather, the full range of lighting captured in a scene will be transmitted along the pipeline and the best image possible delivered for a given display, the ambient lighting conditions, and any creative intent. Known as true-HDR, this approach enables HDR content to be displayed directly on an HDR display while, on an SDR display, a tone mapper can be chosen, dynamically, to best suit the current scene, creative intent, and ambient light conditions [18]. True-HDR enables every pixel of each HDR frame to be modulated if necessary. This allows objects to be tracked, for example, a golf ball up against a sky, or something coming off a vehicle during a rocket launch, by having their pixels at one exposure, while the rest of the scene could be at another. The ability to modulate individual pixels can be taken further. “Personalized pixels” can provide content creators additional creativity by enabling them to deliberately hide detail, such as clues, in areas of the scene which can only be discovered by the user interacting with the content by exploring the exposures of those pixels (Fig. 15).

This is an exciting time for HDR video: The limitations of the “first wave” will begin to be appreciated and we will move to a much more flexible, compelling, future-proof “second wave.” This book contains many valuable contributions that will provide the foundation for this “second wave.”

Appendix

Table 2 shows the dynamic range conversion chart.