2

Benefits of Hyper-Converged Infrastructure

The previous chapter provided an overview of the VxRail Appliance 7.x system. You learned about the architecture, features, management, and documentation resources for VxRail, such as automated deployment, one-click upgrade, and storage policy-based management. You also learned how VxRail simplifies and offloads operation and configuration tasks compared with a traditional server and storage architecture. The VxRail 7.x platform runs on the latest generation of Dell PowerEdge hardware, the 15th generation, which improves performance and configuration options over the previous generation.

VxRail Appliance is a Hyper-Converged Infrastructure (HCI) system, that is, a self-contained infrastructure platform. You can select different hardware components (such as CPU processors, memory, network connectivity, a network daughter card, GPUs, NVMe, and all-flash disk devices) to build your environment based on your hardware and software requirements. If your requirements are not yet defined in the initial phase, you can build your VxRail cluster with the minimum configuration (that is, three VxRail nodes with the same model and configuration). You can then scale up or scale out the cluster when you need to upgrade or expand the resources on the VxRail platform. This scaling capability is one key benefit of VxRail Appliance. VxRail 15th generation hardware enhances some features and cluster deployment options, and you will learn about the benefits of this latest generation of HCI in this chapter.

This chapter includes the following main topics:

- What’s new in VxRail 15th generation?

- VxRail nodes with vSAN

- VxRail dynamic nodes

- VxRail satellite nodes

What’s new in VxRail 15th generation?

VxRail supports various use cases, such as big data analytics, virtual desktop infrastructure (VDI), and remote office and branch office deployments. In VxRail 7.x, you can either use your own VMware vSphere and vSAN licenses or obtain VMware licenses from Dell Technologies. VxRail provides the following benefits:

- It can deliver a hybrid cloud with VMware Cloud Foundation on VxRail, including Dell Technologies Cloud Platform, VMware Cloud on Dell EMC, and VMware Validated Designs (VVD).

- It can deliver cloud-based management and analytics services for the VxRail platform.

- It supports the services of active-active data centers and active-passive solutions.

- It supports end-to-end lifecycle management and support.

The following table shows a summary of improvements on four new platforms:

| | CPU | Memory | Connectivity | Storage |
| --- | --- | --- | --- | --- |
| E660 | New CPU processors up to 40 cores. | Next-generation Intel Optane persistent memory. | Supports PCIe Generation 4. | Supports PCIe Generation 4 NVMe cache devices. |
| E660F | N/A | Supports up to 8 TB of memory. | SAS HBA with x16 SAS lanes. | Supports NVMe cache drives on VxRail V and S Series. |
| E670F | N/A | Supports up to 8 TB of 2nd generation Intel Optane persistent memory. | Supports quad-port 25 Gb OCP 3.0 networking. | Supports 12 TB NL-SAS capacity drives on VxRail S Series. |
| V670F | N/A | N/A | Supports hot-pluggable Boot Optimized Storage Solution (BOSS). | Supports the additional four capacity disk slots on VxRail P Series for up to 184 TB of storage. |

Table 2.1 – A summary of improvements on each node in VxRail 15th generation

VxRail 14th generation (prior to VxRail 7.0.240) only supported one type of VxRail cluster deployment (Standard Cluster), configured with physical compute and storage resources on each node. Compute and storage resources increase together when you add one or more nodes to an existing VxRail cluster. VxRail v4.7.100 supports the vSAN two-node cluster with a direct-connect configuration, but this configuration does not support scaling. We will discuss the details of the vSAN two-node cluster in Chapter 6, Design of vSAN 2-Node Cluster on VxRail. VxRail also supports vSAN Stretched Clusters; we will discuss the details in Chapter 7, Design of Stretched Cluster on VxRail. VxRail 15th generation (VxRail 7.0.240 or later) supports another type of VxRail cluster deployment, Dynamic Node Cluster. From VxRail 7.0.320, VxRail also supports satellite nodes. When initializing a VxRail 7.x system, you can select the Dynamic Node Cluster in the VxRail Deployment Wizard.

Figure 2.1 – Dell EMC VxRail Deployment Wizard

The following sections will provide an overview of and discuss the fundamental differences between each VxRail node, that is, VxRail nodes with vSAN, VxRail dynamic nodes, and VxRail satellite nodes.

VxRail nodes with vSAN

The VxRail Standard Cluster is one type of VxRail cluster deployment; it requires a minimum of three nodes of the same model, and all VxRail hardware models support it. For this cluster type, each VxRail node includes cache disks (Flash or NVMe) and capacity disks. In Figure 2.2, there are four nodes in the VxRail cluster, and each node consists of a Disk Group with one cache disk and two capacity disks. The deployment automatically builds the VxRail cluster and creates a local vSAN datastore as primary storage.

Figure 2.2 – The diagram of VxRail nodes with vSAN

VxRail standard clusters also support connectivity with external storage, such as Dell EMC storage, HCI Mesh, or third-party storage. All VxRail nodes support adding one Fibre Channel HBA except the VxRail D and G Series. You can connect the VxRail cluster to secondary storage over Fibre Channel or iSCSI.

VxRail standard cluster with external storage

In Figure 2.3, there is a VxRail standard cluster with four nodes connected to a Dell storage array over Fibre Channel. In this configuration, you can move virtual machines between the vSAN datastore and the SAN datastore with vSphere Storage vMotion, so virtual machines can use both the primary and secondary storage resources.

Figure 2.3 – VxRail standard cluster with external storage

Figure 2.3 gives an overview of a VxRail standard cluster with external storage.

Important Note

VxRail Lifecycle Management (one-click upgrade) does not include the firmware upgrade of an external storage array.

We will discuss the VxRail standard cluster with vSAN HCI Mesh in the next section.

VxRail standard cluster with vSAN HCI Mesh

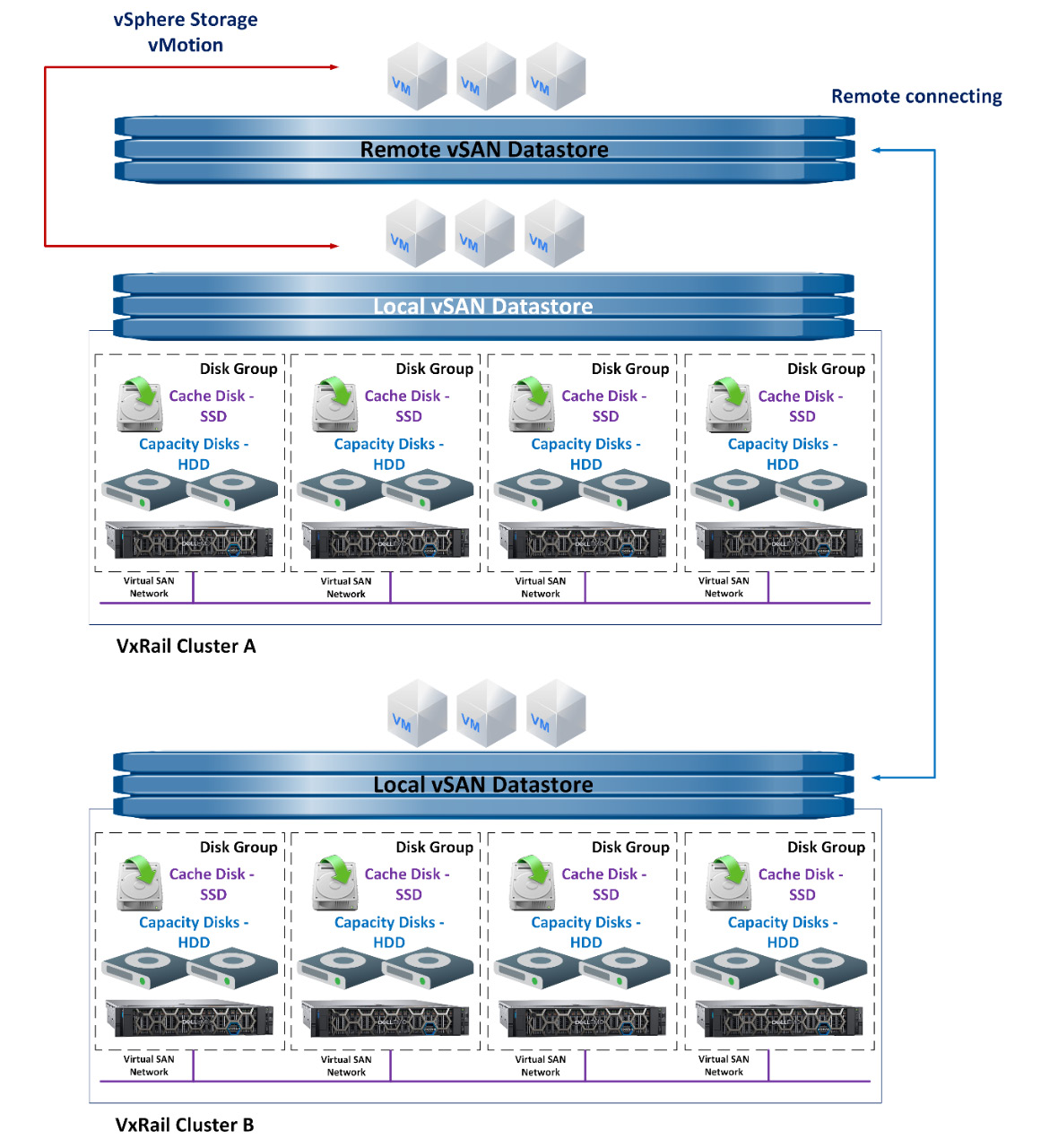

From VxRail 7.0.100, VxRail supports vSAN HCI Mesh. With this feature, the local vSAN datastore on one VxRail cluster can be shared with other VxRail clusters. In Figure 2.4, there are two VxRail standard clusters (VxRail Cluster A and Cluster B), each with four nodes. The local vSAN datastore on VxRail Cluster B can be shared with VxRail Cluster A.

Figure 2.4 – VxRail standard clusters with vSAN HCI Mesh

This configuration lets you move virtual machines between the local vSAN datastore and the remote vSAN datastore with vSphere Storage vMotion, so virtual machines can use both the local and remote storage resources.

If you want to enable a VxRail standard cluster with vSAN HCI Mesh, please prepare the following:

- VxRail software 7.0.100 or later.

- A single vCenter instance to manage each VxRail cluster that enables vSAN HCI Mesh.

- A VMware vSAN Enterprise or Enterprise Plus license is required for the VxRail cluster to share the vSAN datastore.

- A round-trip time of no more than 1 millisecond between all VxRail nodes in the cluster.
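The version, vCenter, and license prerequisites above can be sketched as a small planning check. This is only an illustration: `ClusterPlan`, `parse_version`, and `hci_mesh_issues` are hypothetical names, not part of any VxRail or VMware API, and the check does not cover the latency requirement.

```python
from dataclasses import dataclass

# Values taken from the prerequisite list above; every name in this
# sketch is hypothetical and not part of any VxRail or VMware API.
MIN_HCI_MESH_VERSION = (7, 0, 100)
SHARING_LICENSES = {"enterprise", "enterprise plus"}


def parse_version(text: str) -> tuple:
    """Turn a version string such as '7.0.240' into (7, 0, 240)."""
    return tuple(int(part) for part in text.split("."))


@dataclass
class ClusterPlan:
    name: str
    vxrail_version: str
    vsan_license: str  # edition name, for example "Enterprise"
    vcenter: str       # identifier of the managing vCenter instance


def hci_mesh_issues(sharing: ClusterPlan, consuming: ClusterPlan) -> list:
    """Return the prerequisites that the planned pair of clusters does not meet."""
    issues = []
    for cluster in (sharing, consuming):
        if parse_version(cluster.vxrail_version) < MIN_HCI_MESH_VERSION:
            issues.append(f"{cluster.name}: VxRail 7.0.100 or later is required")
    # Only the cluster that shares its datastore needs the higher edition.
    if sharing.vsan_license.lower() not in SHARING_LICENSES:
        issues.append(f"{sharing.name}: vSAN Enterprise or Enterprise Plus is required")
    if sharing.vcenter != consuming.vcenter:
        issues.append("both clusters must be managed by the same vCenter instance")
    return issues
```

An empty list means the plan satisfies the checks modelled here; anything else names the prerequisite to revisit before you enable vSAN HCI Mesh.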

VxRail standard cluster deployment is suitable for most common scenarios; this cluster type has the following features:

- It can deliver a single management dashboard for compute and storage resources, which makes lifecycle management of the HCI platform easy to handle.

- It can deliver a simple deployment and user-friendly operation.

- The storage resources are not dependent on secondary storage.

- For system scale-out, the compute and storage resources can be increased simultaneously. It can meet scalability and high-availability (HA) requirements.

- It supports configuring external storage resources on the VxRail cluster as a secondary storage array.

In some scenarios, VxRail standard cluster deployment may not be suitable, as follows:

- The storage requirements are very large.

- The environment is a remote office or branch office.

- The service-level agreement and HA requirements of the applications are very high; for example, a VxRail Stretched Cluster is needed to protect all application virtual machines.

- The customer wants to scale out the storage resources only, and additional compute resources are not required.

Thanks to the examples in Figure 2.3 and Figure 2.4, you understand the benefits of VxRail nodes with vSAN and which scenarios are suitable for this type of cluster deployment.

In VxRail 14th generation, you can only use the VxRail standard cluster deployment. We will discuss another type of VxRail cluster in the next section, VxRail dynamic nodes.

VxRail dynamic nodes

The VxRail dynamic node cluster is a type of VxRail cluster deployment that requires a minimum of two nodes with the same model in the VxRail cluster, supported by three VxRail hardware models, E660F, P670F, and V670F. These nodes are built on Dell PowerEdge 15th-generation servers. VxRail dynamic nodes do not include any storage resources and only consist of the compute resources. However, these nodes can also deliver all the benefits of the VxRail Appliance system, except the storage resources. If you choose this type of cluster deployment, you need to connect to an external storage array. The external storage supports various options, including Dell PowerStore, Dell PowerMax, and Dell Unity XT. In Figure 2.5, there is a VxRail dynamic node cluster with four nodes connected to an external storage array. In this type of cluster deployment, the connectivity of the VxRail dynamic node and external storage is in Fibre Channel (FC in the diagram).

Figure 2.5 – VxRail dynamic node cluster

If you choose to use a VxRail dynamic node cluster deployment, you need to consider the following:

- If you want to scale out the system and you need to increase the compute resources but storage resources are not required, a VxRail dynamic node is a good option.

- A VxRail dynamic node is also a good option if you plan to reuse existing storage resources as the primary storage.

- Confirm that Dell-certified storage is available in your environment to act as the primary storage for the VxRail dynamic node cluster.

- When you choose the supported storage for the VxRail dynamic node, please check the VxRail Support Matrix for compatibility.

- For HA and redundancy, I suggest installing two dual-port Fibre Channel adapters in each VxRail dynamic node. Emulex and QLogic Fibre Channel adapters are supported.

- Lifecycle management covers the compute resources in a single management dashboard, but it does not include the primary storage resources.

- Make sure there are two Fibre Channel switches for the connections of each VxRail dynamic node.
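The redundancy advice in this list (two Fibre Channel adapters per node and two Fibre Channel switches) can be expressed as a simple design check. The following is a minimal sketch under stated assumptions: `FcPort` and `survives_single_failure` are hypothetical names, and the labels such as `hba0` or `fc-sw-a` are placeholders for your own inventory.

```python
from typing import NamedTuple


class FcPort(NamedTuple):
    adapter: str  # hypothetical label for the HBA, for example "hba0"
    switch: str   # hypothetical label for the FC switch, for example "fc-sw-a"


def survives_single_failure(ports: list) -> bool:
    """True if a path remains after losing any one adapter or any one switch."""
    adapters = {port.adapter for port in ports}
    switches = {port.switch for port in ports}
    # With at least two distinct adapters and two distinct switches in use,
    # removing any single one of them still leaves a cabled port.
    return len(adapters) >= 2 and len(switches) >= 2
```

For example, four ports spread across `hba0` and `hba1` and cabled to two switches pass the check, while two ports on the same adapter do not.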

Important Note

The VMware vSAN license is not required on each VxRail dynamic node if you plan to deploy the VxRail dynamic node cluster.

We will discuss the VxRail dynamic node cluster with vSAN HCI Mesh in the next section.

VxRail dynamic node cluster with vSAN HCI Mesh

VxRail dynamic node clusters also support vSAN HCI Mesh. With this feature, the local vSAN datastore on a VxRail cluster can be shared with VxRail dynamic node clusters. Now we will discuss two vSAN HCI Mesh scenarios.

Scenario 1

In Figure 2.6, VxRail Cluster A has four nodes and a local vSAN datastore that spans the cluster. This local vSAN datastore is shared with the VxRail dynamic node cluster. In this configuration, the virtual machines on the VxRail dynamic node cluster can use the storage resources on VxRail Cluster A. You can move the virtual machines on the VxRail dynamic node cluster into either VxRail Cluster A or the remote vSAN datastore using vSphere Storage vMotion.

Figure 2.6 – A VxRail dynamic node cluster with vSAN HCI Mesh

If you choose to use a VxRail dynamic node cluster with vSAN HCI Mesh, you need to consider the following prerequisites:

- VxRail 7.0.100 or later.

- A VMware vSAN Enterprise or Enterprise Plus license is required for the VxRail cluster that shares its vSAN datastore.

- When you choose the supported storage for the VxRail dynamic node, please check the VxRail Support Matrix for compatibility.

- If the vSAN datastore is already shared with other clusters, make sure that no more than five clusters are connected to it, because that is the supported maximum.

- Both the VxRail dynamic node cluster and the VxRail cluster sharing its vSAN datastore need to be managed by the same vCenter instance.

- The round-trip time latency between the dynamic nodes and the VxRail cluster sharing its vSAN datastore must be less than 5 milliseconds.

Scenario 2

In Figure 2.7, the VxRail cluster has four nodes and a local vSAN datastore that spans the cluster. This local vSAN datastore is shared with VxRail Dynamic Node Cluster A and Dynamic Node Cluster B.

Figure 2.7 – The two VxRail dynamic clusters with vSAN HCI Mesh

If you choose two VxRail dynamic node clusters with vSAN HCI Mesh, you need to consider the following requirements:

- VxRail 7.0.240 or later.

- A VMware vSAN Enterprise or Enterprise Plus license is only required for the VxRail cluster that shares its vSAN datastore. The vSAN license is not required on each VxRail dynamic node cluster.

- When you choose the supported storage for the VxRail dynamic node, please check the VxRail Support Matrix for compatibility.

- If the vSAN datastore is already shared with other clusters, make sure that no more than five clusters are connected to it, because that is the supported maximum.

- Both the VxRail dynamic node cluster and the VxRail cluster sharing its vSAN datastore need to be managed by the same vCenter instance.

- The round-trip time latency between the dynamic nodes and the VxRail cluster sharing its vSAN datastore must be less than 5 milliseconds.
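The two numeric limits in these lists, the five-cluster maximum and the 5 millisecond round-trip time, lend themselves to a quick check before you connect another dynamic node cluster. This is a hedged sketch: `can_mount_remote_datastore` is a hypothetical helper, and the latency samples are assumed to come from your own measurements.

```python
MAX_CONNECTED_CLUSTERS = 5  # "maximum of five clusters" from the list above
MAX_RTT_MS = 5.0            # round-trip time must stay below this value


def can_mount_remote_datastore(connected_clusters: int, rtt_samples_ms: list) -> bool:
    """Check whether one more cluster may connect to a shared vSAN datastore."""
    if not rtt_samples_ms:
        raise ValueError("at least one round-trip time sample is required")
    has_free_slot = connected_clusters < MAX_CONNECTED_CLUSTERS
    # Use the worst sample so that a single slow measurement fails the check.
    latency_ok = max(rtt_samples_ms) < MAX_RTT_MS
    return has_free_slot and latency_ok
```

Taking the worst sample rather than the average is a deliberately conservative choice for this illustration; you may prefer a percentile if your measurements are noisy.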

Important Note

In VxRail 7.0.240 and above, the vSAN license is not required on each VxRail dynamic node cluster or VxRail standard cluster if vSAN HCI Mesh is enabled on the VxRail cluster.

Thanks to the preceding two scenarios in Figure 2.6 and Figure 2.7, you now understand the benefits of VxRail dynamic nodes and which scenarios are suitable for this type of cluster deployment.

VxRail satellite nodes

In the preceding sections, we learned the difference between VxRail nodes with vSAN and VxRail dynamic nodes. From VxRail 7.0.320, the VxRail satellite node is available. It is a type of VxRail node that supports lifecycle management through VxRail Manager, and it only requires a single IP address to connect to the VxRail cluster in an HQ data center.

In Figure 2.8, there is a VxRail cluster with four nodes in the HQ data center and one VxRail satellite node in each remote site (A, B, and C). The VxRail cluster and every VxRail satellite node are managed by a single vCenter instance in the HQ data center over a Wide Area Network (WAN). If you deploy these nodes in your environment, the virtual machines can run on local RAID storage (PERC H755 controller) or secondary storage.

Figure 2.8 – VxRail satellite nodes

If you plan to deploy VxRail satellite nodes in your environment, you need to consider the following prerequisites:

- VxRail 7.0.320 or later is required to support VxRail satellite nodes.

- VxRail satellite nodes do not support vSAN HCI Mesh.

- Each VxRail satellite node is deployed as a single instance, and node expansion is not supported.

- VxRail satellite nodes are used in remote sites or edge locations.

- If a VxRail cluster with a vSAN datastore is already deployed in your environment, then you can add the satellite nodes into the VxRail cluster for the management of each satellite node.

- Data and applications are not protected from node failure.

- The PERC H755 controller provides local RAID protection, including RAID 0, 1, 5, 6, 10, 50, and 60.

- VxRail satellite nodes support the VMware Standard edition or Enterprise Plus edition.

- VxRail satellite nodes only support customer-managed vCenter Server; embedded vCenter Server is not supported.
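Because a satellite node relies on local RAID storage, it helps to estimate usable capacity for the RAID levels listed above when you size a node. The following is a minimal sketch using generic RAID arithmetic, not controller-specific figures: `usable_capacity_tb` is a hypothetical helper, it assumes equal-sized disks, treats RAID 1 as a single mirrored pair, and ignores formatting overhead and hot spares.

```python
def usable_capacity_tb(level: int, disks: int, disk_tb: float, spans: int = 2) -> float:
    """Estimate usable capacity in TB; 'spans' only applies to RAID 50 and 60."""
    if level == 0:
        data_disks = disks              # striping only, no redundancy
    elif level == 1:
        data_disks = 1                  # a mirrored pair stores one disk of data
    elif level == 5:
        data_disks = disks - 1          # one disk's worth of parity
    elif level == 6:
        data_disks = disks - 2          # two disks' worth of parity
    elif level == 10:
        data_disks = disks // 2         # striped mirrors
    elif level == 50:
        data_disks = disks - spans      # one parity disk per RAID 5 span
    elif level == 60:
        data_disks = disks - 2 * spans  # two parity disks per RAID 6 span
    else:
        raise ValueError(f"unsupported RAID level: {level}")
    if data_disks < 1:
        raise ValueError("not enough disks for this RAID level")
    return data_disks * disk_tb
```

For instance, four 2 TB disks give an estimated 6 TB in RAID 5 and 4 TB in RAID 10, which illustrates the trade-off between capacity and protection on a node that has no cluster-level redundancy.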

Important Note

The VMware vSAN license is not required on each VxRail satellite node if you plan to deploy VxRail satellite nodes.

Thanks to Figure 2.8, you have had an overview of VxRail satellite nodes. Now we will discuss an example of VxRail satellite nodes in the next section.

Scenario

In Figure 2.9, there is a VxRail management cluster in the primary data center. The VxRail management cluster is a VxRail cluster with vSAN that is managed by a customer-managed vCenter. A single VxRail management cluster can manage many VxRail satellite nodes in different locations. The lifecycle management of VxRail satellite nodes is supported in this scenario.

Figure 2.9 – An example configuration of VxRail satellite nodes

You now understand the benefits of VxRail satellite nodes and which scenarios are suitable for this type of cluster deployment. This table shows a summary of each kind of VxRail deployment:

| | VxRail Node with vSAN | VxRail Dynamic Node | VxRail Satellite Node |
| --- | --- | --- | --- |
| Hardware model | It supports all VxRail models | It supports VxRail E660F, P670F, and V670F | It supports VxRail E660, E660F, and V670F |
| Boot device | BOSS with RAID-1 | BOSS with RAID-1 | BOSS with RAID-1 |
| Primary storage | VMware vSAN | VMware vSAN HCI Mesh or Dell EMC storage | Local RAID storage PERC H755 |
| Secondary storage | VMware vSAN HCI Mesh, Dell EMC storage, or third-party SAN | VMware vSAN HCI Mesh, Dell EMC storage, or third-party SAN | Dell EMC storage or third-party SAN |
| Scaling | Scale from 2 to 64 nodes | Scale from 2 to 96 nodes | It is not supported |
| VxRail Lifecycle Management | It is supported | It is supported but not included in the storage | It is supported |
| VMware vSAN license | It supports all VMware vSAN editions | No VMware vSAN license required | No VMware vSAN license required |
| Used scenario | All common cases | Independent scaling of computing resources | Edge |

Table 2.2 – A summary of each kind of VxRail deployment

In this section, you got an overview of VxRail satellite nodes and their advantages.

Important Note

In a VxRail node with vSAN, the boot device is a single drive only in G Series. In the VxRail dynamic node, the vSAN license is not required in VxRail software 7.0.240 or later.
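As a recap of the comparison in Table 2.2, the "Used scenario" row can be read as a simple decision rule. The following sketch is only an illustration of that reading: the `Deployment` enum, the `PRIMARY_STORAGE` mapping, and `suggest_deployment` are hypothetical names, and a real design would weigh many more requirements than these two yes/no inputs.

```python
from enum import Enum


class Deployment(Enum):
    VSAN_NODES = "VxRail nodes with vSAN"
    DYNAMIC_NODES = "VxRail dynamic nodes"
    SATELLITE_NODES = "VxRail satellite nodes"


# Primary storage per deployment type, copied from Table 2.2.
PRIMARY_STORAGE = {
    Deployment.VSAN_NODES: "VMware vSAN",
    Deployment.DYNAMIC_NODES: "VMware vSAN HCI Mesh or Dell EMC storage",
    Deployment.SATELLITE_NODES: "Local RAID storage PERC H755",
}


def suggest_deployment(edge_site: bool, compute_only_scaling: bool) -> Deployment:
    """Map two simplified requirements to the 'Used scenario' row of Table 2.2."""
    if edge_site:
        return Deployment.SATELLITE_NODES
    if compute_only_scaling:
        return Deployment.DYNAMIC_NODES
    # "All common cases" fall back to the standard cluster with vSAN.
    return Deployment.VSAN_NODES
```

Calling `suggest_deployment(edge_site=False, compute_only_scaling=True)` returns the dynamic node option, and `PRIMARY_STORAGE` then tells you which storage that deployment type expects.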

Summary

In this chapter, you learned about the new features and hardware in VxRail 15th generation, including VxRail cluster types, VxRail dynamic nodes, and VxRail satellite nodes. You also explored the benefits of each kind of VxRail deployment and the scenarios in which each deployment is suitable.

In the next chapter, you will learn how to design a vCenter server for the VxRail 7 system, including an internal vCenter server with external DNS and internal DNS, an external vCenter server with external DNS, and an internal vCenter server with a customer-supplied virtual distributed switch.

Questions

The following is a short list of review questions to help reinforce your learning and help you identify areas that require some improvement:

- Which VxRail cluster types can be configured in the VxRail Deployment Wizard?
  - VxRail standard cluster
  - VxRail stretched cluster
  - VxRail dynamic node cluster
  - VxRail satellite node cluster
  - VxRail two-node cluster

- Which storage types can be configured in the VxRail Deployment Wizard?
  - Standard vSAN
  - iSCSI array
  - vSAN HCI Mesh
  - Dell EMC storage array
  - Fibre Channel array
  - All of the above

- Which VxRail software version can vSAN HCI Mesh support?
  - VxRail 4.5.xxx
  - VxRail 4.7.xxx
  - VxRail 7.0.xxx
  - VxRail 4.7.100
  - VxRail 7.0.100
  - None of the above

- Which VMware vSAN licenses are required for the VxRail cluster to share the vSAN datastore when vSAN HCI Mesh is enabled?
  - VMware vSAN Standard edition
  - VMware vSAN Advanced edition
  - VMware vSAN Enterprise edition
  - VMware vSAN Enterprise Plus edition
  - All of the above

- Which VxRail feature is not supported on the VxRail dynamic node cluster?
  - VMware vSphere vMotion
  - VMware vSphere HA
  - VMware vSphere DRS
  - Define a vSAN datastore
  - Connect to a remote vSAN datastore

- In which scenarios is deploying a VxRail standard cluster not recommended?
  - The storage resource requirements are very large.
  - When you scale out the system, the compute and storage resources can be increased simultaneously.
  - The environment is a remote office or branch office.
  - The environment is a primary data center.
  - The external storage resources on the VxRail cluster need to be configured as a secondary storage array.

- Which VxRail cluster types can be supported with vSAN HCI Mesh?
  - VxRail two-node cluster
  - VxRail three-node cluster
  - VxRail dynamic node cluster
  - VxRail satellite node cluster
  - VxRail stretched cluster
  - All of the above

- Which VxRail software version can support VxRail satellite nodes?
  - VxRail 4.5.xxx
  - VxRail 4.7.xxx
  - VxRail 7.0.xxx
  - VxRail 4.7.100
  - VxRail 7.0.300
  - All of the above

- Which configuration is only supported with an external vCenter Server?
  - VxRail standard cluster
  - VxRail dynamic node cluster
  - VxRail satellite node
  - VxRail two-node cluster
  - VxRail stretched cluster
  - None of the above

- Which VxRail node can support local RAID storage PERC H755?
  - VxRail node with vSAN
  - VxRail satellite node
  - VxRail dynamic node
  - VxRail D560
  - VxRail G560
  - All of the above

- Which use cases are recommended for VxRail satellite nodes?
  - Active-active data centers
  - Disaster recovery solutions
  - Remote office
  - Edge
  - Independent scaling of computing resources
  - None of the above

- A customer plans to deploy the new VxRail Appliance version in their environment; the requirements are listed here:
  - Reuse the existing storage array.
  - Independent scaling of computing resources.
  - The life cycle management of storage is not required.
  - The Fibre Channel connection is supported.

  Which diagram shows the recommended configuration for this use case?

  - VxRail standard cluster

    Figure 2.10 – VxRail standard cluster environment

  - VxRail dynamic node cluster

    Figure 2.11 – VxRail dynamic node cluster environment

  - VxRail management cluster

    Figure 2.12 – VxRail management cluster environment

  - VxRail standard cluster with external storage array

    Figure 2.13 – VxRail standard cluster with external storage array environment

  - VxRail dynamic node cluster with vSAN HCI Mesh

    Figure 2.14 – VxRail dynamic node cluster with vSAN HCI Mesh environment