Chapter 16

The Failure of Invariance

All of us think of ourselves as rational beings even in times of crisis, applying the laws of probability in cool and calculated fashion to the choices that confront us. We like to believe we are above-average in skills, intelligence, farsightedness, experience, refinement, and leadership. Who admits to being an incompetent driver, a feckless debater, a stupid investor, or a person with inferior taste in clothes?

Yet how realistic are such images? Not everyone can be above average. Furthermore, the most important decisions we make usually occur under complex, confusing, indistinct, or frightening conditions. Not much time to consult the laws of probability. Life is not a game of balla. It often comes trailing Kenneth Arrow’s clouds of vagueness.

And yet most humans are not utterly irrational beings who take risks without forethought or who hide in a closet when anxiety strikes. As we shall see, the evidence suggests that we reach decisions in accord with an underlying structure that enables us to function predictably and, in most instances, systematically. The issue, rather, is the degree to which the reality in which we make our decisions deviates from the rational decision models of the Bernoullis, Jevons, and von Neumann. Psychologists have spawned a cottage industry to explore the nature and causes of these deviations.

The classical model of rationality, the model on which game theory and most of Markowitz’s concepts are based, specifies how people should make decisions in the face of risk and what the world would be like if people did in fact behave as specified. Extensive research and experimentation, however, reveal that departures from that model occur more frequently than most of us admit. You will discover yourself in many of the examples that follow.


The most influential research into how people manage risk and uncertainty has been conducted by two Israeli psychologists, Daniel Kahneman and Amos Tversky. Although they now live in the United States—one at Princeton and the other at Stanford—both served in the Israeli armed forces during the 1950s. Kahneman developed a psychological screening system for evaluating Israeli army recruits that is still in use. Tversky served as a paratroop captain and earned a citation for bravery. The two have been collaborating for nearly thirty years and now command an enthusiastic following among both scholars and practitioners in the field of finance and investing, where uncertainty influences every decision.1

Kahneman and Tversky call their concept Prospect Theory. After reading about Prospect Theory and discussing it in person with both Kahneman and Tversky, I began to wonder why its name bore no resemblance to its subject matter. I asked Kahneman where the name had come from. “We just wanted a name that people would notice and remember,” he said.

Their association began in the mid-1960s when both were junior professors at Hebrew University in Jerusalem. At one of their first meetings, Kahneman told Tversky about an experience he had had while instructing flight instructors on the psychology of training. Referring to studies of pigeon behavior, he was trying to make the point that reward is a more effective teaching tool than punishment. Suddenly one of his students shouted, “With respect, Sir, what you’re saying is literally for the birds. . . . My experience contradicts it.”2 The student explained that the trainees he praised for excellent performance almost always did worse on their next flight, while the ones he criticized for poor performance almost always improved.

Kahneman realized that this pattern was exactly what Francis Galton would have predicted. Just as large sweetpeas give birth to smaller sweetpeas, and vice versa, performance in any area is unlikely to go on improving or growing worse indefinitely. We swing back and forth in everything we do, continuously regressing toward what will turn out to be our average performance. The chances are that the quality of a student’s next landing will have nothing to do with whether or not someone has told him that his last landing was good or bad.

“Once you become sensitized to it, you see regression everywhere,” Kahneman pointed out to Tversky.3 Whether your children do what they are told to do, whether a basketball player has a hot hand in tonight’s game, or whether an investment manager’s performance slips during this calendar quarter, their future performance is most likely to reflect regression to the mean regardless of whether they will be punished or rewarded for past performance.
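A short simulation can make Galton’s point concrete. In this sketch, a hypothetical illustration rather than anything from Kahneman’s flight school, each landing score is a trainee’s fixed skill plus independent luck, both drawn from normal distributions, and praise or criticism has no effect whatever. The function name `simulate_landings`, the 10,000 trainees, and the 10% cutoffs are all assumptions. Even so, the trainees with the best first landings do worse on average the second time, and those with the worst do better.

```python
import random
import statistics

def simulate_landings(n_trainees=10_000, seed=1):
    """Score two landings per trainee as fixed skill plus independent luck.

    Feedback plays no role in this hypothetical model."""
    rng = random.Random(seed)
    skill = [rng.gauss(0, 1) for _ in range(n_trainees)]
    first = [s + rng.gauss(0, 1) for s in skill]
    second = [s + rng.gauss(0, 1) for s in skill]
    return first, second

first, second = simulate_landings()
# Rank trainees by their first landing and pick the extremes
order = sorted(range(len(first)), key=lambda i: first[i])
cutoff = len(order) // 10
groups = {"praised (top 10%)": order[-cutoff:], "criticized (bottom 10%)": order[:cutoff]}

for label, group in groups.items():
    before = statistics.mean(first[i] for i in group)
    after = statistics.mean(second[i] for i in group)
    print(f"{label}: first landing {before:+.2f}, second landing {after:+.2f}")
```

Nothing in the model rewards or punishes anyone; the apparent effect of praise and criticism comes entirely from selecting trainees on a noisy first score.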

Soon the two men were speculating on the possibility that ignoring regression to the mean was not the only way that people err in forecasting future performance from the facts of the past. A fruitful collaboration developed between them as they proceeded to conduct a series of clever experiments designed to reveal how people make choices when faced with uncertain outcomes.

Prospect Theory discovered behavior patterns that had never been recognized by proponents of rational decision-making. Kahneman and Tversky ascribe these patterns to two human shortcomings. First, emotion often destroys the self-control that is essential to rational decision-making. Second, people are often unable to understand fully what they are dealing with. They experience what psychologists call cognitive difficulties.

The heart of our difficulty is in sampling. As Leibniz reminded Jacob Bernoulli, nature is so varied and so complex that we have a hard time drawing valid generalizations from what we observe. We use shortcuts that lead us to erroneous perceptions, or we interpret small samples as representative of what larger samples would show.

Consequently, we tend to resort to more subjective kinds of measurement: Keynes’s “degrees of belief” figure more often in our decision-making than Pascal’s Triangle, and gut rules even when we think we are using measurement. Seven million people and one elephant!

We display risk-aversion when we are offered a choice in one setting and then turn into risk-seekers when we are offered the same choice in a different setting. We tend to ignore the common components of a problem and concentrate on each part in isolation—one reason why Markowitz’s prescription for portfolio-building was so slow to find acceptance. We have trouble recognizing how much information is enough and how much is too much. We pay excessive attention to low-probability events accompanied by high drama and overlook events that happen in routine fashion. We treat costs and uncompensated losses differently, even though their impact on wealth is identical. We start out with a purely rational decision about how to manage our risks and then extrapolate from what may be only a run of good luck. As a result, we forget about regression to the mean, overstay our positions, and end up in trouble.

Here is a question that Kahneman and Tversky use to show how intuitive perceptions mislead us. Ask yourself whether the letter K appears more often as the first or as the third letter of English words. You will probably answer that it appears more often as the first letter. Actually, K appears as the third letter twice as often. Why the error? We find it easier to recall words with a certain letter at the beginning than words with that same letter somewhere else.


The asymmetry between the way we make decisions involving gains and decisions involving losses is one of the most striking findings of Prospect Theory. It is also one of the most useful.

Where significant sums are involved, most people will reject a fair gamble in favor of a certain gain—$100,000 certain is preferable to a 50–50 possibility of $200,000 or nothing. We are risk-averse, in other words.

But what about losses? Kahneman and Tversky’s first paper on Prospect Theory, which appeared in 1979, describes an experiment showing that our choices between negative outcomes are mirror images of our choices between positive outcomes.4 In one of their experiments they first asked the subjects to choose between a gamble offering an 80% chance of winning $4,000 and a 20% chance of winning nothing, and a 100% chance of receiving $3,000. Even though the risky choice has a higher mathematical expectation ($3,200), 80% of the subjects chose the certain $3,000. These people were risk-averse, just as Bernoulli would have predicted.

Then Kahneman and Tversky offered a choice between a gamble with an 80% chance of losing $4,000 and a 20% chance of breaking even, and a 100% chance of losing $3,000. Now 92% of the respondents chose the gamble, even though its mathematical expectation of a loss of $3,200 was once again larger than the certain loss of $3,000. When the choice involves losses, we are risk-seekers, not risk-averse.
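The arithmetic behind both choices is easy to check. This minimal sketch (the helper name `expected_value` is illustrative, not Kahneman and Tversky’s) computes the mathematical expectation of each option as a sum of probability times payoff. It shows that the gamble has the larger expected gain in the first problem and the larger expected loss in the second, so expectation alone cannot explain why the majority switches sides.

```python
from fractions import Fraction

def expected_value(prospect):
    """Mathematical expectation of a prospect given as (probability, payoff) pairs."""
    if sum(p for p, _ in prospect) != 1:
        raise ValueError("probabilities must sum to 1")
    return sum(p * x for p, x in prospect)

# First problem: 80% chance of $4,000 (20% chance of nothing) vs. $3,000 for certain
risky_gain = [(Fraction(8, 10), 4000), (Fraction(2, 10), 0)]
sure_gain = [(Fraction(1), 3000)]

# Second problem: the mirror image, with every payoff turned into a loss
risky_loss = [(p, -x) for p, x in risky_gain]
sure_loss = [(p, -x) for p, x in sure_gain]

print(expected_value(risky_gain), expected_value(sure_gain))  # 3200 3000
print(expected_value(risky_loss), expected_value(sure_loss))  # -3200 -3000
```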

Kahneman and Tversky and many of their colleagues have found that this asymmetrical pattern appears consistently in a wide variety of experiments. On a later occasion, for example, Kahneman and Tversky proposed the following problem.5 Imagine that a rare disease is breaking out in some community and is expected to kill 600 people. Two different programs are available to deal with the threat. If Program A is adopted, 200 people will be saved; if Program B is adopted, there is a 33% probability that everyone will be saved and a 67% probability that no one will be saved.

Which program would you choose? If we are risk-averse, we will prefer Program A’s certainty of saving 200 lives over Program B’s gamble, which has the same mathematical expectation but involves a 67% chance that everyone will die. In the experiment, 72% of the subjects chose the risk-averse response represented by Program A.

Now consider the identical problem posed differently. If Program C is adopted, 400 of the 600 people will die, while Program D entails a 33% probability that nobody will die and a 67% probability that 600 people will die. Note that the first of the two choices is now expressed in terms of 400 deaths rather than 200 survivors, while the second program offers a 33% chance that no one will die. Kahneman and Tversky report that 78% of their subjects were risk-seekers and opted for the gamble: they could not tolerate the prospect of the sure loss of 400 lives.
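The text rounds the odds to 33% and 67%; Kahneman and Tversky’s problem states them as one-third and two-thirds, which is what makes the expectations come out exactly equal. The sketch below restates all four programs as (probability, lives saved) pairs, converting the “deaths” wording of C and D into survivors out of 600. The dictionary layout and helper name are illustrative assumptions. Once the wording is stripped away, A and C are the same prospect, as are B and D, and every program saves 200 lives on average.

```python
from fractions import Fraction

POPULATION = 600
ONE_THIRD = Fraction(1, 3)

# Each program as (probability, survivors) pairs. C and D are stated in deaths,
# so survivors = POPULATION - deaths.
programs = {
    "A": [(Fraction(1), 200)],                        # 200 saved for certain
    "B": [(ONE_THIRD, 600), (1 - ONE_THIRD, 0)],      # all saved or none saved
    "C": [(Fraction(1), POPULATION - 400)],           # 400 die for certain
    "D": [(ONE_THIRD, POPULATION - 0), (1 - ONE_THIRD, POPULATION - 600)],  # nobody dies or all die
}

def expected_survivors(prospect):
    """Expected number of lives saved under a program."""
    return sum(p * survivors for p, survivors in prospect)

for name, prospect in programs.items():
    print(name, expected_survivors(prospect))  # 200 for every program

# The "lives lost" programs are the "lives saved" programs in different words
print(programs["A"] == programs["C"], programs["B"] == programs["D"])  # True True
```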

This behavior, although understandable, is inconsistent with the assumptions of rational behavior. The answer to a question should be the same regardless of the setting in which it is posed. Kahneman and Tversky interpret the evidence produced by these experiments as a demonstration that people are not risk-averse: they are perfectly willing to choose a gamble when they consider it appropriate. But if they are not risk-averse, what are they? “The major driving force is loss aversion,” writes Tversky (italics added). “It is not so much that people hate uncertainty—but rather, they hate losing.”6 Losses will always loom larger than gains. Indeed, losses that go unresolved—such as the loss of a child or a large insurance claim that never gets settled—are likely to provoke intense, irrational, and abiding risk-aversion.7

Tversky offers an interesting speculation on this curious behavior:

Probably the most significant and pervasive characteristic of the human pleasure machine is that people are much more sensitive to negative than to positive stimuli. . . . [T]hink about how well you feel today, and then try to imagine how much better you could feel. . . . [T]here are a few things that would make you feel better, but the number of things that would make you feel worse is unbounded.8

One of the insights to emerge from this research is that Bernoulli had it wrong when he declared, “[The] utility resulting from any small increase in wealth will be inversely proportionate to the quantity of goods previously possessed.” Bernoulli believed that it is the pre-existing level of wealth that determines the value of a risky opportunity to become richer. Kahneman and Tversky found that the valuation of a risky opportunity appears to depend far more on the reference point from which the possible gain or loss will occur than on the final value of the assets that would result. It is not how rich you are that motivates your decision, but whether that decision will make you richer or poorer. As a consequence, Tversky warns, “our preferences . . . can be manipulated by changes in the reference points.”9

He cites a survey in which respondents were asked to choose between a policy of high employment and high inflation and a policy of lower employment and lower inflation. When the issue was framed in terms of an unemployment rate of 10% or 5%, the vote was heavily in favor of accepting more inflation to get the unemployment rate down. When the respondents were asked to choose between a labor force that was 90% employed and a labor force that was 95% employed, low inflation appeared to be more important than raising the percentage employed by five points.

Richard Thaler has described an experiment that uses starting wealth to illustrate Tversky’s warning.10 Thaler asked one class of students to suppose that they had just won $30 and were now offered a choice between no coin flip and a coin flip in which they would win $9 on heads and lose $9 on tails. Seventy percent of the subjects selected the coin flip. Thaler offered his next class a starting wealth of zero and a choice between $30 for certain and a coin flip in which they would win $39 on heads and $21 on tails. Only 43 percent selected the coin flip.

Thaler describes this result as the “house money” effect. Although the choice of payoffs offered to both classes is identical (regardless of the starting wealth, the individual will end up with either $39 or $21 versus $30 for sure), people who start out with money in their pockets will choose the gamble, while people who start out with empty pockets will reject it. Bernoulli would have predicted that the decision would be determined by the amounts $39, $30, or $21, whereas the students based their decisions on the reference point, which was $30 in the first case and zero in the second.
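One way to see Thaler’s point is to list the final amounts each class could end up with. In this sketch the helper names are hypothetical, and Bernoulli’s logarithmic utility is applied to these dollar amounts alone, ignoring the students’ other wealth, purely as a simplifying assumption. The two menus turn out to be identical in final wealth, so any rule that looks only at final wealth must give both classes the same answer; the different choices have to come from the reference point.

```python
import math

def final_wealth(start, changes):
    """Sorted final wealth for equally likely changes from a starting amount."""
    return sorted(start + change for change in changes)

# First class: already holding $30; flip to win or lose $9, or decline
class1_flip = final_wealth(30, [+9, -9])
class1_decline = final_wealth(30, [0, 0])

# Second class: starting from zero; flip paying $39 or $21, or $30 for certain
class2_flip = final_wealth(0, [39, 21])
class2_decline = final_wealth(0, [30, 30])

print(class1_flip, class2_flip)        # [21, 39] [21, 39]
print(class1_decline, class2_decline)  # [30, 30] [30, 30]

def expected_log_utility(outcomes):
    """Bernoulli-style expected log utility of equally likely final amounts."""
    return sum(math.log(w) for w in outcomes) / len(outcomes)

# Judged on final amounts, both classes face one decision, so one verdict
print(expected_log_utility(class1_flip) < expected_log_utility(class1_decline))  # True
```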

Edward Miller, an economics professor with an interest in behavioral matters, reports a variation on these themes. Although Bernoulli uses the expression “any small increase in wealth,” he implies that what he has to say is independent of the size of the increase.11 Miller cites various psychological studies that show significant differences in response, depending on whether the gain is a big one or a small one. Occasional large gains seem to sustain the interest of investors and gamblers for longer periods of time than consistent small winnings. That response is typical of investors who look on investing as a game and who fail to diversify; diversification is boring. Well-informed investors diversify because they do not believe that investing is a form of entertainment.


Kahneman and Tversky use the expression “failure of invariance” to describe inconsistent (not necessarily incorrect) choices when the same problem appears in different frames. Invariance means that a preference between options should not change when the same options are described in different ways. It is an essential, if often unstated, requirement of rational choice, alongside the transitivity at the core of von Neumann and Morgenstern’s approach to utility: if A is preferred to B and B is preferred to C, then rational people will prefer A to C. In the case above, if 200 lives saved for certain is the rational decision in the first set, saving 200 lives for certain should be the rational decision in the second set as well.

But research suggests otherwise:

The failure of invariance is both pervasive and robust. It is as common among sophisticated respondents as among naive ones. . . . Respondents confronted with their conflicting answers are typically puzzled. Even after rereading the problems, they still wish to be risk averse in the “lives saved” version; they will be risk seeking in the “lives lost” version; and they also wish to obey invariance and give consistent answers to the two versions. . . .

The moral of these results is disturbing. Invariance is normatively essential [what we should do], intuitively compelling, and psychologically unfeasible.12

The failure of invariance is far more prevalent than most of us realize. The manner in which questions are framed in advertising may persuade people to buy something despite negative consequences that, in a different frame, might persuade them to refrain from buying. Public opinion polls often produce contradictory results when the same question is given different twists.

Kahneman and Tversky describe a situation in which doctors were concerned that they might be influencing patients who had to choose between the life-or-death risks of different forms of treatment.13 The choice was between radiation and surgery in the treatment of lung cancer. Medical data at this hospital showed that no patients died during radiation therapy but that those patients had a shorter life expectancy than patients who survived the risk of surgery; the overall difference in life expectancy was not great enough to provide a clear choice between the two forms of treatment. When the question was put in terms of risk of death during treatment, more than 40% of the choices favored radiation. When the question was put in terms of life expectancy, only about 20% favored radiation.

One of the most familiar manifestations of the failure of invariance is in the old Wall Street saw, “You never get poor by taking a profit.” It would follow that cutting your losses is also a good idea, but investors hate to take losses, because, tax considerations aside, a loss taken is an acknowledgment of error. Loss-aversion combined with ego leads investors to gamble by clinging to their mistakes in the fond hope that some day the market will vindicate their judgment and make them whole. Von Neumann would not approve.

The failure of invariance frequently takes the form of what is known as “mental accounting,” a process in which we separate the components of the total picture. In so doing we fail to recognize that a decision affecting each component will have an effect on the shape of the whole. Mental accounting is like focusing on the hole instead of the doughnut. It leads to conflicting answers to the same question.

Kahneman and Tversky ask you to imagine that you are on your way to see a Broadway play for which you have bought a $40 ticket.14 When you arrive at the theater, you discover you have lost your ticket. Would you lay out $40 for another one?

Now suppose instead that you plan to buy the ticket when you arrive at the theater. As you step up to the box office, you find that you have $40 less in your pocket than you thought you had when you left home. Would you still buy the ticket?

In both cases, whether you lost the ticket or lost the $40, you would be out a total of $80 if you decided to see the show. You would be out only $40 if you abandoned the show and went home. Kahneman and Tversky found that most people would be reluctant to spend $40 to replace the lost ticket, while about the same number would be perfectly willing to lay out a second $40 to buy the ticket even though they had lost the original $40.

This is a clear case of the failure of invariance. If $80 is more than you want to spend on the theater, you should neither replace the ticket in the first instance nor buy the ticket in the second. If, on the other hand, you are willing to spend $80 on going to the theater, you should be just as willing to replace the lost ticket as you are to spend $40 on the ticket despite the disappearance of the original $40. There is no difference other than in accounting conventions between a cost and a loss.

Prospect Theory suggests that the inconsistent responses to these choices result from two separate mental accounts, one for going to the theater, and one for putting the $40 to other uses—next month’s lunch money, for example. The theater account was charged $40 when the ticket was purchased, depleting that account. The lost $40 was charged to next month’s lunch money, which has nothing to do with the theater account and is off in the future anyway. Consequently, the theater account is still awaiting its $40 charge.
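The two-account explanation can be mimicked in a few lines. In this hypothetical sketch, `evening` charges each purchase or loss to a named account (the account names echo the theater and lunch-money example above). The total outlay is the same in both scenarios, but the theater account carries $80 only when the ticket itself was lost, which is the charge people balk at.

```python
from collections import defaultdict

TICKET = 40

def evening(scenario: str, see_show: bool) -> dict:
    """Charge each purchase or loss to a named mental account; return the totals."""
    accounts = defaultdict(int)
    if scenario == "lost_ticket":
        accounts["theater"] += TICKET       # the advance ticket that went missing
    elif scenario == "lost_cash":
        accounts["lunch_money"] += TICKET   # the missing $40, booked elsewhere
    else:
        raise ValueError(f"unknown scenario: {scenario}")
    if see_show:
        accounts["theater"] += TICKET       # ticket bought at the box office
    return dict(accounts)

for scenario in ("lost_ticket", "lost_cash"):
    accounts = evening(scenario, see_show=True)
    print(scenario, "total:", sum(accounts.values()), "theater:", accounts.get("theater", 0))
# lost_ticket total: 80 theater: 80
# lost_cash total: 80 theater: 40
```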

Thaler recounts an amusing real-life example of mental accounting.15 A professor of finance he knows has a clever strategy to help him deal with minor misfortunes. At the beginning of the year, the professor plans for a generous donation to his favorite charity. Anything untoward that happens in the course of the year—a speeding ticket, replacing a lost possession, an unwanted touch by an impecunious relative—is then charged to the charity account. The system makes the losses painless, because the charity does the paying. The charity receives whatever is left over in the account. Thaler has nominated his friend as the world’s first Certified Mental Accountant.

In an interview with a magazine reporter, Kahneman himself confessed that he had succumbed to mental accounting. In his research with Tversky he had found that a loss is less painful when it is just an addition to a larger loss than when it is a free-standing loss: losing a second $100 after having already lost $100 is less painful than losing $100 on each of two separate occasions. Keeping this concept in mind when moving into a new home, Kahneman and his wife bought all their furniture within a week after buying the house. If they had looked at the furniture as a separate account, they might have balked at the cost and ended up buying fewer pieces than they needed.16
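Kahneman’s furniture reasoning follows from the shape of the value function in Prospect Theory, which flattens out as losses grow. The sketch assumes the power form of that function with the median parameters Tversky and Kahneman estimated in their 1992 paper (curvature 0.88, loss-aversion coefficient 2.25); the numbers are illustrative, and any curvature below 1 gives the same ordering. The name `value` is ours.

```python
ALPHA = 0.88   # curvature of the value function (assumed, from Tversky and Kahneman, 1992)
LAMBDA = 2.25  # loss-aversion coefficient (assumed, same source)

def value(x):
    """Subjective value of a gain or loss x measured from the reference point."""
    if x >= 0:
        return x ** ALPHA
    return -LAMBDA * (-x) ** ALPHA

combined = value(-200)         # a second $100 lost on top of the first
separate = 2 * value(-100)     # $100 lost on each of two separate occasions
print(combined > separate)     # True: the combined loss feels less painful
print(-value(-100) > value(100))  # True: a loss looms larger than an equal gain
```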


We tend to believe that information is a necessary ingredient to rational decision-making and that the more information we have, the better we can manage the risks we face. Yet psychologists report circumstances in which additional information gets in the way and distorts decisions, leading to failures of invariance and offering opportunities for people in authority to manipulate the kinds of risk that people are willing to take.

Two medical researchers, David Redelmeier and Eldar Shafir, reported in the Journal of the American Medical Association on a study designed to reveal how doctors respond as the number of possible options for treatment is increased.17 Any medical decision is risky: no one can know for certain what the consequences will be. In each of Redelmeier and Shafir’s experiments, the introduction of additional options raised the probability that the physicians would either choose the original option or decide to do nothing.

In one experiment, several hundred physicians were asked to prescribe treatment for a 67-year-old man with chronic pain in his right hip. The doctors were given two choices: to prescribe a named medication or to “refer to orthopedics and do not start any new medication”; just about half voted against any medication. When the number of choices was raised from two to three by adding a second medication option, along with “refer to orthopedics,” three-quarters of the doctors voted against medication and for “refer to orthopedics.”

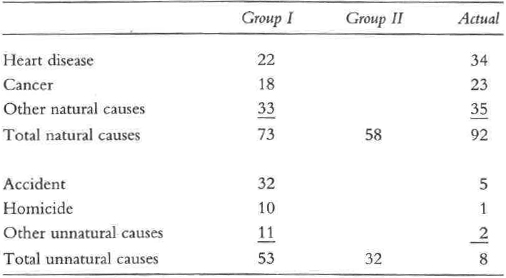

Tversky believes that “probability judgments are attached not to events but to descriptions of events . . . the judged probability of an event depends upon the explicitness of its description.”18 As a case in point, he describes an experiment in which 120 Stanford graduates were asked to assess the likelihood of various possible causes of death. Each student evaluated one of two different lists of causes; the first listed specific causes of death and the second grouped the causes under a generic heading like “natural causes.”

The following table shows some of the estimated probabilities of death developed in this experiment:

These students vastly overestimated the probabilities of violent deaths and underestimated deaths from natural causes. But the striking revelation in the table is that the estimated probability of dying under either set of circumstances was higher when the individual causes were listed explicitly than when the students were asked to estimate only the total from natural or unnatural causes.

In another medical study described by Redelmeier and Tversky, two groups of physicians at Stanford University were surveyed for their diagnosis of a woman experiencing severe abdominal pain.19 After receiving a detailed description of the symptoms, the first group was asked to decide on the probability that this woman was suffering from ectopic pregnancy, a gastroenteritis problem, or “none of the above.” The second group was offered three additional possible diagnoses along with the choices of pregnancy, gastroenteritis, and “none of the above” that had been offered to the first group.

The interesting feature of this experiment was the handling of the “none of the above” option by the second group of doctors. Assuming that the average competence of the doctors in each group was essentially equal, one would expect the “none of the above” option presented to the first group to have implicitly included the three additional diagnoses offered to the second group. In that case, the second group would be expected to assign a probability to the three additional diagnoses plus “none of the above” that was approximately equal to the 50% probability assigned to “none of the above” by the first group.

That is not what happened. The second group of doctors assigned a 69% probability to “none of the above” plus the three additional diagnoses and only 31% to the possibility of pregnancy or gastroenteritis—to which the first group had assigned a 50% probability. Apparently, the greater the number of possibilities, the higher the probabilities assigned to them.


Daniel Ellsberg (the same Ellsberg who later released the Pentagon Papers) published a paper back in 1961 in which he defined a phenomenon he called “ambiguity aversion.”20 Ambiguity aversion means that people prefer to take risks on the basis of known rather than unknown probabilities. Information matters, in other words. For example, Ellsberg offered several groups of people a chance to bet on drawing either a red ball or a black ball from two different urns, each holding 100 balls. Urn 1 held 50 balls of each color; the breakdown in Urn 2 was unknown. Probability theory would suggest that Urn 2 was also split 50–50, for there was no basis for any other distribution. Yet the overwhelming preponderance of the respondents chose to bet on the draw from Urn 1.
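The 50–50 judgment about Urn 2 can be checked under one explicit assumption: that every possible number of red balls, from 0 to 100, is equally likely. Averaging the chance of red over those 101 compositions gives exactly the same answer as Urn 1; indeed, any assumption that treats red and black symmetrically does. The variable names are illustrative.

```python
from fractions import Fraction

BALLS = 100

# Urn 1: 50 red and 50 black, so the chance of red is known exactly
p_red_urn1 = Fraction(50, BALLS)

# Urn 2: composition unknown. Assume every count of red balls from 0 to 100
# is equally likely, then average the chance of drawing red over those counts.
compositions = range(BALLS + 1)
p_red_urn2 = sum(Fraction(k, BALLS) for k in compositions) / len(compositions)

print(p_red_urn1, p_red_urn2)  # 1/2 1/2
```

The point of Ellsberg’s result is that the two urns are equivalent by this reckoning, yet people still strongly prefer the one whose odds they can see.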

Tversky and another colleague, Craig Fox, explored ambiguity aversion more deeply and discovered that matters are more complicated than Ellsberg suggested.21 They designed a series of experiments to discover whether people’s preference for clear over vague probabilities appears in all instances or only in games of chance.

The answer came back loud and clear: people will bet on vague beliefs in situations where they feel especially competent or knowledgeable, but they prefer to bet on chance when they do not. Tversky and Fox concluded that ambiguity aversion “is driven by the feeling of incompetence . . . [and] will be present when subjects evaluate clear and vague prospects jointly, but it will greatly diminish or disappear when they evaluate each prospect in isolation.”22

People who play dart games, for example, would rather play darts than games of chance, although the probability of success at darts is vague while the probability of success at games of chance is mathematically predetermined. People knowledgeable about politics and ignorant about football prefer betting on political events to betting on games of chance set at the same odds, but they will choose games of chance over sports events under the same conditions.


In a 1992 paper that summarized advances in Prospect Theory, Kahneman and Tversky made the following observation: “Theories of choice are at best approximate and incomplete . . . Choice is a constructive and contingent process. When faced with a complex problem, people . . . use computational shortcuts and editing operations.”23 The evidence in this chapter, which summarizes only a tiny sample of a huge body of literature, reveals repeated patterns of irrationality, inconsistency, and incompetence in the ways human beings arrive at decisions and choices when faced with uncertainty.

Must we then abandon the theories of Bernoulli, Bentham, Jevons, and von Neumann? No. There is no reason to conclude that the frequent absence of rationality, as originally defined, must yield the point to Macbeth that life is a story told by an idiot.

The judgment of humanity implicit in Prospect Theory is not necessarily a pessimistic one. Kahneman and Tversky take issue with the assumption that “only rational behavior can survive in a competitive environment, and the fear that any treatment that abandons rationality will be chaotic and intractable.” Instead, they report that most people can survive in a competitive environment even while succumbing to the quirks that make their behavior less than rational by Bernoulli’s standards. “[P]erhaps more important,” Tversky and Kahneman suggest, “the evidence indicates that human choices are orderly, although not always rational in the traditional sense of the word.”24 Thaler adds: “Quasi-rationality is neither fatal nor immediately self-defeating.”25 Since orderly decisions are predictable, there is no basis for the argument that behavior is going to be random and erratic merely because it fails to provide a perfect match with rigid theoretical assumptions.

Thaler makes the same point in another context. If we were always rational in making decisions, we would not need the elaborate mechanisms we employ to bolster our self-control, ranging all the way from dieting resorts, to having our income taxes withheld, to betting a few bucks on the horses but not to the point where we need to take out a second mortgage. We accept the certain loss we incur when buying insurance, which is an explicit recognition of uncertainty. We employ those mechanisms, and they work. Few people end up in either the poorhouse or the nuthouse as a result of their own decision-making.

Still, the true believers in rational behavior raise another question. With so much of this damaging evidence generated in psychology laboratories, in experiments with young students, in hypothetical situations where the penalties for error are minimal, how can we have any confidence that the findings are realistic, reliable, or relevant to the way people behave when they have to make decisions?

The question is an important one. There is a sharp contrast between generalizations based on theory and generalizations based on experiments. De Moivre first conceived of the bell curve by writing equations on a piece of paper, not, like Quetelet, by measuring the dimensions of soldiers. But Galton conceived of regression to the mean—a powerful concept that makes the bell curve operational in many instances—by studying sweetpeas and generational change in human beings; he came up with the theory after looking at the facts.

Alvin Roth, an expert on experimental economics, has observed that Nicholas Bernoulli conducted the first known psychological experiment more than 250 years ago: he proposed the coin-tossing game between Peter and Paul that guided his cousin Daniel to the discovery of utility.26 Experiments conducted by von Neumann and Morgenstern led them to conclude that the results “are not so good as might be hoped, but their general direction is correct.”27 The progression from experiment to theory has a distinguished and respectable history.

It is not easy to design experiments that overcome the artificiality of the classroom and the tendency of respondents to lie or to harbor disruptive biases—especially when they have little at stake. But we must be impressed by the remarkable consistency evident in the wide variety of experiments that tested the hypothesis of rational choice. Experimental research has developed into a high art.a

Studies of investor behavior in the capital markets reveal that most of what Kahneman and Tversky and their associates hypothesized in the laboratory is played out by the behavior of investors who produce the avalanche of numbers that fill the financial pages of the daily paper. Far from the laboratory of the classroom, this empirical research confirms a great deal of what experimental methods have suggested about decision-making, not just among investors, but among human beings in general.

As we shall see, the analysis will raise another question, a tantalizing one. If people are so dumb, how come more of us smart people don’t get rich?

a. Kahneman has described his introduction to experimentation when one of his professors told the story of a child being offered the choice between a small lollipop today and a larger lollipop tomorrow. The child’s response to this simple question correlated with critical aspects of the child’s life, such as family income, one or two parents present, and degree of trust.

Notes

1. A large literature is available on the theories and backgrounds of Kahneman and Tversky, but McKean, 1985, is the most illuminating for lay readers.

2. McKean, 1985, p. 24.

3. Ibid., p. 25.

4. Kahneman and Tversky, 1979, p. 268.

5. McKean, 1985, p. 22; see also Kahneman and Tversky, 1984.

6. Tversky, 1990, p. 75. At greater length on this subject, see Kahneman and Tversky, 1979.

7. I am grateful to Dr. Richard Geist of Harvard Medical School for bringing this point to my attention.

8. Tversky, 1990, p. 75.

9. Ibid., p. 75.

10. Ibid., pp. 58–60.

11. Miller, 1995.

12. Kahneman and Tversky, 1984.

13. McKean, 1985, p. 30.

14. Ibid., p. 29.

15. This anecdote appears in an unpublished Thaler paper titled “Mental Accounting Matters.”

16. McKean, 1985, p. 31.

17. Redelmeier and Shafir, 1995, pp. 302–305.

18. Tversky and Koehler, 1994, p. 548.

19. Redelmeier, Koehler, Liberman, and Tversky, 1995.

20. Ellsberg, 1961.

21. Fox and Tversky, 1995.

22. Ibid., pp. 587–588.

23. Tversky and Kahneman, 1992.

24. Kahneman and Tversky, 1973.

25. Thaler, 1995.

26. Kagel and Roth, 1995, p. 4.

27. Von Neumann and Morgenstern, 1953, p. 5.