Building Entity Graphs for the Web of Things Management

Lina Yao⁎; Quan Z. Sheng†; Anne H.H. Ngu‡; Xue Li§; Boualem Benatallah⁎; Xianzhi Wang⁎ ⁎School of Computer Science and Engineering, University of New South Wales, Sydney, NSW, Australia

†Department of Computing, Macquarie University, Sydney, NSW, Australia

‡Department of Computer Science, Texas State University, San Marcos, TX, United States

§School of Information Technology and Electrical Engineering, University of Queensland, Brisbane, QLD, Australia

Abstract

With recent advances in radio-frequency identification (RFID), wireless sensor networks, and Web services, physical things are becoming an integral part of the emerging ubiquitous Web. Finding correlations among ubiquitous things is a crucial prerequisite for many important applications such as things search, discovery, classification, recommendation, and composition. This article building an entity graph for Web of Things, whereas we propose a novel graph-based approach for discovering underlying connections of things via mining the rich content embodied in the human-thing interactions in terms of user, temporal and spatial information. We model this various information using two graphs, namely a spatiotemporal graph and a social graph. Then, random walk with restart (RWR) is applied to find proximities among things, a relational graph of things, entity graph, indicating implicit correlations of things is learned. The correlation analysis lays a solid foundation contributing to improved effectiveness in things management and analytics. To demonstrate the utility of the proposed approach, we present two typical applications and a systematic case study in regards to a flexible feature-based classification framework and a unified probabilistic factor based framework, respectively. Our evaluation exhibits the strength and feasibility of approach.

Keywords

Internet of Things; Correlation discovery; Recommendation; Classification; Matrix factorization; Random walk with restart

10.1 Introduction

Since its birth in the early 1990s, the World Wide Web has been the heart of the research, development, and innovation in the world. Indeed, it has changed our world and society so quickly and profoundly over the last two decades by sharing knowledge and connecting people. Very recently, the World Wide Web is beginning to connect ordinary things in the physical world, also called the “Web of Things” (WoT) [5,9,17,3,36]. As indicated by the inventor of the World Wide Web, Tim Berners-Lee, “it isn't the documents which are actually interesting; it is the things they are about!”.1 WoT aims to connect everyday objects, such as coats, shoes, watches, ovens, washing machines, bikes, cars, and even humans, plants, animals, and changing environments, to the Internet to enable communication/interactions between these objects. Being widely regarded as one of the most important technologies that are going to change our world in the coming decade, the ultimate goal of WoT is to enable computers to see, hear, and sense the real world.

While such a ubiquitous WoT environment offers the capability of integrating information from both the physical and virtual worlds, which leads to tremendous business and social opportunities (e.g., efficient supply chains, independent living of elderly persons, and improved environmental monitoring), it also presents significant challenges [8,2,30,26,22]. With many things connected and interact over the Web, there is an urgent need to efficiently index, organize, and manage these things for object search, recommendation, mash-up, and effectively revealing interesting patterns from things.

Before effectively and efficiently classifying, managing, and recommending ubiquitous things, a fundamental task is to discover relations among things. Indeed, finding implicit correlations among things is a much more challenging task than finding relations for documents, web pages, and people, due to the following unique characteristics of things on the Web.

• Lack of uniform features. Things are diverse and heterogeneous in terms of functionality, access methods, or descriptions. Some things have meaningful descriptions while many others do not [6,40]. As a result, it is quite challenging to discover the implicit correlations among heterogeneous things. Things cannot be easily represented in a meaningful feature space. They usually only have very short textual descriptions and lack a uniform way of describing the properties and the services they offer [11].

• Lack of structural interconnections. Correlations among things are not obvious and are difficult to discover. Unlike social networks of people, where users have observable links and connections, things often exist in isolated settings and the explicit interconnections between them are typically limited. Such high-level structural interconnection information (e.g., a water tap and a cutting board are likely to be used together when cooking) are implicit in general [38].

• Contextual uncertainty. Things are tightly bound to contextual information (e.g., location, time, status), as well as user behaviors (e.g., activities involving things), as things usually provide functionality-oriented services (e.g., washing vegetables for a water tap). Unfortunately, contextual information associated with things is highly dynamic (e.g., the location of a moving person changes all the time) and has no obvious, easily indexable properties, which is unlike those static, human-readable texts in the case of documents [9,39]. Capturing discriminative contextual information carried by things, therefore, is of paramount importance in effective things management.

To illustrate the conception of Internet of Things and implication of multiple relationships, we firstly take the Tony's One Day as an illustrative story to demonstrate the typical heterogeneous interactions in Internet of Things (see Fig. 10.1).

Tony's home is a typical smart home (➀ in Fig. 10.1), where all the home appliances are already connected and enabled to the Internet and can be acknowledged and controlled by the Web interface. Tony goes to work around 7:00 am before he leaves for work, he usually sets the rules for the air conditioner, when the temperature reaches to 30 °C, air conditioner in the house will be automatically turned on to cool down his house. He also sets the cleaner and washing machine to begin to work around 10:00 am.

When he arrives near to his workplace, he needs to check the parking availability through his mobile phone. It is hard to find an available one efficiently, usually people have to drive around to find it. Thanks to the Smart Parking system (➁ in Fig. 10.1), Tony only needs to subscribe this smart parking system, he can retrieve the latest status of parking lots in real-time, in the meanwhile, Tony's location can also be collected by the parking system through his GPS. The parking planner can guide and recommend him to the most appropriate parking space.

After settling down in his office, Tony is a hydraulician and needs to monitor and track the information every day from the reservoir about the water and other related information. Thanks to Internet of Things, even though the reservoir is in a remote suburb, Tony can easily obtain desirable information, which is harvested by the sensors installed around the reservoir and in the water, and the sensor values are transmitted to Tony's office. Then tony can use these data for analyzing and modeling about the research (➃ in Fig. 10.1). Around 11:00 am, Tony received a Tweet notifying from his washing machine and vacuum that cleaning and washing are already finished.

After work, Tony goes to the Gym for his routine exercise, he wears the monitoring devices which generate his personal health data during the working out, and the data can be sent to an online medical analyzer in real-time so that his coach can adjust Tony's exercise plan instantly based on the outcome of the analyzer. Tony also likes to share his working-out record with his friends on Facebook, where he authorizes his friends to access his working out records so as to share the information amongst friends (➂ in Fig. 10.1). When Tony drives back home, he turns the automatic warming program to let the warm water fill in his bath tub, so that he can have a comfortable bath right after he gets home.

From Tony's One Day, we can identify two different levels of Thing-to-Thing relationships existing in Internet of Things. The most basic one is direct and explicit connections between things. For example, Tony specifies a series of rules in his smart home. Taking the simplest example, when the thermometer detects the corresponding temperature (i.e., 30 °C), it will trigger air conditioner to power on and begin to cool down the house. Another one is the latent and implicit connection between things, which can not be observed directly. For example, what is the connection between Tony's vacuum and his microwave?

Things may exhibit similar properties to humans when interacting in a social environment. Smart objects that communicate in co-operation with context-aware applications, may affect changes in their real-world environment which may have a significant impact on underlying networking structures. We argue there are two kinds of relationships: one is the explicit connection which can be direct and observed (i.e., thermometer and air conditioner in Tony's home). Another one is the implicit connection, recall the relationship between Tony's vacuum and his microwave. This kind of relationship is our main target since they can not be observed and need to be learned and derived.

The regularities of users' interactions can be recorded as things usage events in our work. Things usage events are growing data repository: every time people has interaction with an object, it will generate an event item. With the development of ubiquitous computing techniques, including wireless sensor network, RFID, and Web Services etc., monitoring and tracking the objects usage events are becoming handier. The things usage events logs have been becoming popular and significant like the click-through data in web mining or check-in records in location-based research. The ever growing objects use events can contribute to many research areas including mining the relationships of objects and human behavior analysis by mining the people's objects use records. In this chapter, we will use the things usage events as the only source to exploit the implicit relationships among ubiquitous things.

Therefore, the problem targeted in this chapter can be formulated as discovering the latent correlations among things by exploiting observable things usage events with the goal of automatically distinguishing strong correlations of things from the weak ones. A thing usage event happens when a person interacts with a particular thing (see solid lines in Fig. 10.1). View from thing in the usage events, it carries three-dimensional information: location, time, and user. These usage events can be captured explicitly (Section V-B), e.g., via RFID and sensor readings), and provide rich information to discover implicit connections (i.e., correlations) among things (dotted lines in Fig. 10.2(B)).

Some research efforts have proposed to explore things similarity and relations from semantic Web perspective [18,5]. In such cases, explicit relations of things can be characterized by using keyword-based, textual-level calculations. However, physical things also hold implicit relations due to their more distinctive structures and connections in terms of functionalities (i.e., usefulness), as well as non-functional attributes (i.e., availability). Different things provide different functionalities (e.g., microwave and printer), and might be of interest to different groups of people. With the recent development of technologies such as radio frequency identification (RFID), wireless sensors, and Web services, human-thing interactions can be easily recorded and obtained (e.g., RFID readings). These interactions are not completely random. They carry rich information that can be harnessed and utilized to uncover the implicit relations. Although correlations between things are implicit, we argue that they can be captured by exploring regularities of user interactions with similar things.

This work targets mining useful information for unveiling implicit correlations of things from the contextual information of human-thing interactions. Our proposed method, DisCor-T (discovering correlations of things), should be effective in capturing and reflecting the hidden structure of things from things usage events in the modeling stage and efficient in inferring the related things in the inferring stage. Specifically, we present a novel approach that converts the things usage events into a relational graph of things (RGT) by extracting three-dimensional contextual information contained in event history. The RGT graph underpins many important applications. We particularly present an application scenario to show its benefits in serving things clustering and annotation. To the best of our knowledge, no previous work has systematically studied mining the relationships of ubiquitous objects in WoT. The main contributions of our work can be summarized as follows:

• We study the problem of managing ubiquitous things in the emerging Web of Things environment, which have unique characteristics (e.g., short descriptions, diverse, dynamic and noisy). We propose to investigate human-thing interactions from three contextual aspects: user, temporal, and spatial. Accordingly, we develop two graph presentations that approximate corresponding relationships from user-thing interactions. These graphs lay the foundation for uncovering latent correlations among things.

• We develop an algorithm for discovering the latent correlations among things by applying Random Walk with Restart over the two contextual graphs. The learned correlations are used to construct the relational graph of things (RGT), which can help in a number of important applications on things management. In particular, we focus on two fundamental use cases of entity graph on things annotation and recommendation to showcase the effectiveness of our approach.

• We establish a testbed environment where things are tagged by RFID and sensors and things usage events are collected in real-time. Using this real-world data with ∼20,000 records collected from the testing environment over a period of four months, we conduct extensive experimental studies to demonstrate the feasibility of our proposed approach.

10.2 Background

In this section, we first describe several application scenarios underpinned by the techniques discussed. We then formally formulate the research problems target by our work.

10.2.1 Motivating Applications

Discovering underlying similarities except keyword-based similarity allows for more meaningful and accurate things recommendation, classification and even context-aware activity recognition. We briefly discuss some of the areas where things contextual similarity can be applicable.

• Recommendation. Things recommendation is a crucial step for promoting and taking full advantage of the Web of Things (WoT), where it benefits the individuals, businesses, and society on a daily basis in terms of two main aspects. On the one hand, it can deliver relevant things to users based on users' preferences and interests. On the other hand, it serves to optimize the time and cost of using WoT in a particular situation [39]. The underlying correlations of things can enhance the performance of the generalized recommendation systems in the Web of Things in terms of two main points. Firstly, due to the sparsity of thing-user interactions, widely used collaborative filtering recommendation systems fail to find similar users or things, since these methods assume that two users have invoked at least some things in common. Moreover, users who have never used any things can not be fed good results in the first place (i.e., the cold start problem). Secondly, physical things have more distinctive structures and connections in terms of functionalities in real life (i.e., usefulness), as well as non-functionalities (i.e., availability), which are saliently highlighted in the contextual information of human-thing interactions.

• Searching. Developing efficient searching approaches is a crucial challenge with the rapid increase of a vast amount of things connected to the Web. Our approach adds one additional dimension to assist and reinforce current search techniques. For instance, existing semantic-based solutions do not make full use of the rich information contained in users' historical interactions with things (e.g., implicit relations of different things). Our approach can effectively capture such information, which can be integrated into existing search solutions, whereas the strength of latent connections between things/objects can be leveraged to predict which things will possibly co-occur. In this way, it would be complimentary to many semantic-based solutions for better performance, which usually require preparation of prior knowledge such as defining the descriptions of things and their corresponding characteristics and concepts. Our primary work on searching with entity graph for the Web of Things can be found at [38].

• Context-aware Activity Recognition. Recognizing human activities from sensor readings has recently attracted much research interest in pervasive computing due to its potential in many applications, such as assisted living of older people and healthcare. This task is particularly challenging because human activities are often performed in a not only simple (i.e., sequential) but also complex (i.e., interleaved or concurrent) manner in real life [34]. Our proposed approach provides a new useful means to infer human activities by taking advantages of reasoning relationships of globally unique object instances. For example, dense sensing-based activity monitoring learns human activities by detecting and analyzing human-object interactions. By discovering correlations of objects, we can cluster and organize things into differently structured groups based on their underlying relationships. In many cases, an activity could involve multiple relevant things including not only the things with similar functionalities but also things with complementary functionalities, which can be effectively uncovered by our proposed approach. Pairwise things with strong correlations indicate either they have similar functionalities (i.e., microwave and toaster) or them have a higher likelihood to be used together. For instance, a water tap and a chopping board are both in use when we prepare meals since most of the time we need to wash cooking ingredients (e.g., vegetables) before cutting them.

10.2.2 Problem Statement and Definitions

The only data source used in our work is human-thing interactions, namely things usage events. Each event happens when a person interacts with a particular thing, which carries three kinds of information: location, timestamp, and user. Each usage event record can be defined as a quadruplet ThingID, UserID, Timestamp, Location described as follows.

The problem targeted in this article can be therefore formulated as discovering the latent correlations among things by exploiting observable human-thing interactions with the goal of automatically distinguishing strong correlations of things from the weak ones. As illustrated in Fig. 10.2, each node denotes a thing (represented as a ball) in a three-dimensional space of identity, spatiality, and temporality. Things are discrete without distinctive and explicit correlations (Fig. 10.2(A)). However, our proposed approach can derive latent connections among these things and form a relational graph of things, where their implicit relatedness can be revealed (Fig. 10.2(B)). Therefore, our goal can be formulated as follows in Problem 10.1. The derived latent relations are a set of real-valued numbers between 0 and 1 in this work.

To complete this goal, there are two sequential subproblems we need to solve, which are defined as Subproblem 10.1.1 and Subproblem 10.1.2, respectively.

10.3 Proposed Methodology

Our approach for correlation discovery of things involves two main stages corresponding to two subproblems defined in Section 10.2.2. First, we extract two types of graphs, namely the location-time-thing graph (Fig. 10.3(A)) and the user-thing graph (Fig. 10.3(B)). The graphs are deduced from thing usage events, which reflect object and its three related information in terms of spatiotemporal and social aspects. Then we perform a random walk on these two graphs respectively to inference relationships of pairwise things and sum them up as the overall pairwise correlations of things.

The first stage centers around building two graphs from things usage events. As illustrated in Fig. 10.3, the spatio-temporal graph in Fig. 10.3(A) captures the relations between things and their temporal and geographical influence, while the social graph in Fig. 10.3(B) captures the social influence among users on interacting things. The technical details on how to construct these graphs will be described in Section 10.3.1 and Section 10.3.2 respectively. In the second stage, our goal is to derive the pairwise relevance scores for things. To achieve this, a random walk with restart (RWR) [33] is performed on the two constructed graphs. A relevance score is produced for any given node to any other node in the graph, presented as a converged probability. The value of the relevance score reflects the correlation strength between a pair of things. Based on the relevance scores, a top-k correlation graph of things can be constructed, upon which many advanced things management problems such as annotation and clustering can be solved by tapping the wealth of literature in graph algorithms. The technical details on this part can be found in Section 10.3.3.

10.3.1 Spatio-Temporal Graph Construction

A spatiotemporal graph such as the one shown in Fig. 10.3(A) reflects the temporal pattern and spatial information hidden in the things usage events. In our approach, the spatial and temporal information of things usage events is treated as inseparable since they are mutually influential on detecting the correlations among things. Unlike virtual resources such as web pages, music or images, physical things such as restaurants and cookware usually provide more distinguished functionalists, and are more connected with people's daily lives. Some such distinctive features of physical things are their physical locations and functioning times. For example, kitchenware is more frequently used during dining times and they have higher likelihood to stay in a kitchen or similar locations (e.g., a dining room). We specifically explore the integrity of spatial and temporal information in the ubiquitous things environment via finding the periodical pattern between time and locations.

Generally, the timing of access to similar things may be similar. For example, restaurants are likely to be visited by people during lunch or dinner times. For the spatial information, we also argue in this paper that geographical influence to user activities cannot be ignored, i.e., a user tends to interact with the nearby things rather than the distant ones [41]. For example, if a user is at her office, she has a higher probability of using office facilities such as telephone, desktop computer, printer, and seminar rooms.

A spatiotemporal graph has three sets of nodes, namely locations, things, and timestamps. It contains one type of intra-relation (i.e., representing similarities between locations) and three types of inter-relations between locations, things, and timestamps. Edges between times and things can be obtained from usage events, say, the weight of edge ![]() and

and ![]() is proportional to the number of times objects o is used in a location loc and at timestamp ts. The inter-relation between location and time

is proportional to the number of times objects o is used in a location loc and at timestamp ts. The inter-relation between location and time ![]() , indicates the periodical patterns. Formally, we define the spatio-temporal graph

, indicates the periodical patterns. Formally, we define the spatio-temporal graph ![]() as the following:

as the following:

The corresponding weight matrix ![]() of graph

of graph ![]() can be formulated as:

can be formulated as:

where each of the entries in Eq. (10.1) can be obtained as the following. ![]() indicates the similarity of each pair of locations. Given two locations, we measure their similarity using the Jaccard coefficient between the sets of things used at each location:

indicates the similarity of each pair of locations. Given two locations, we measure their similarity using the Jaccard coefficient between the sets of things used at each location:

where ![]() and

and ![]() denote the set of used things at location i and location j respectively.

denote the set of used things at location i and location j respectively. ![]() and

and ![]() should be 0 since we do not consider the relationships between timestamps and the ones between things.

should be 0 since we do not consider the relationships between timestamps and the ones between things. ![]() and its transpose

and its transpose ![]() are integers, indicating how often a thing is accessed at a location. Similarly,

are integers, indicating how often a thing is accessed at a location. Similarly, ![]() and its transpose

and its transpose ![]() are integers, which indicate how often a thing is accessed at a particular time.

are integers, which indicate how often a thing is accessed at a particular time.

For defining relationship between time stamps and locations and their corresponding weight ![]() of graph

of graph ![]() , we propose periodic patterns between locations and timestamps. Given a sequence of locations

, we propose periodic patterns between locations and timestamps. Given a sequence of locations ![]() , our aim is to find their corresponding time period. To obtain relationship between time and location, we analyze the potential periods for each location and find the periodical pattern between locations and timestamps. A periodic pattern represents the repeat of certain usage event at a specific location with the certain time interval(s).

, our aim is to find their corresponding time period. To obtain relationship between time and location, we analyze the potential periods for each location and find the periodical pattern between locations and timestamps. A periodic pattern represents the repeat of certain usage event at a specific location with the certain time interval(s).

Periodic patterns can be extracted by analyzing things usage events. In particular, we build a time series dataset for each location where the elements of the time series data are the number of time slots (e.g., 0 for the period of 0:00–1:00; 1 for 1:00–2:00 and so on) that a thing at a location is invoked. We can clearly observe such periodic pattern from the example relating to a kettle in the kitchen from Fig. 10.4(A) and its periodogram in Fig. 10.4(B).

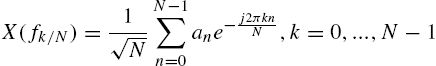

Given a sequence of locations, we adopt the Discrete Fourier Transform (DFT) method to detect the time periods in this discrete time-series sequence [31]. For each location, we define an integer sequence ![]() , where

, where ![]() if the thing is used at this location at time i, and 0 otherwise. Essentially, this sequence can be transformed into a sequence of n complex numbers

if the thing is used at this location at time i, and 0 otherwise. Essentially, this sequence can be transformed into a sequence of n complex numbers ![]() from the time domain to the frequency domain:

from the time domain to the frequency domain:

where ![]() denotes the frequency that each coefficient captures. As a result, DFT transforms the original sequences as a linear combination of the complex sinusoids

denotes the frequency that each coefficient captures. As a result, DFT transforms the original sequences as a linear combination of the complex sinusoids ![]() . The Fourier coefficients represent the amplitude of each of these sinusoids after sequences S is projected on them.

. The Fourier coefficients represent the amplitude of each of these sinusoids after sequences S is projected on them.

We aim at capturing the general shape of time-series data (e.g., thing usage over time) as “economically” as possible. To do so, we propose to use a spartan representation2 from which one could reconstruct the signal using just its dominant frequencies (i.e., the ones that carry most of the signal energy). A popular way to identify the power content of each frequency is by calculating the power spectral density (PSD) of the sequence which indicates the signal power at each frequency in the spectrum. A well-known estimator of the PSD is the periodogram. The periodogram P is a vector comprised of the squared magnitude of the Fourier coefficients ![]() :

:

The k dominant frequencies appear as peaks in the periodogram (and correspond to the coefficients with the highest magnitude). In order to specify which frequencies are important, we need to set a threshold and identify those frequencies higher than this threshold. Each element of the periodogram provides the power at frequency ![]() or, equivalently, at period

or, equivalently, at period ![]() . That is, coefficient

. That is, coefficient ![]() corresponds to periods

corresponds to periods ![]() . Interested readers are referred to [31].

. Interested readers are referred to [31].

When obtaining the periodogram of each location, we can decide their corresponding peak points based on preset threshold. From the periodogram, we can find the location and its corresponding time range. One benefit of using the periodogram is that we can visually identify the peaks as the k most dominant periods (period = 1/frequency). For automatically returning the important periods for a set of location sequences, we can simply set a threshold in the power spectrum to distinguish the dominant periods.

10.3.2 Social Graph Construction

Users' relations (e.g., friendships) can have a significant impact on things usage patterns. Personal tastes are correlated. Research in [10] shows that friendships and relations between users play a substantial role in human decision making in social networks. For instance, people usually turn to a friend's advice about a commodity (e.g., hair straighter) or a restaurant before they go for them. For exploring the impact social links between users on things' correlation discovery, we also construct a social graph, which is an augmented bipartite graph representing user interactions with things based on things usage events. As shown in Fig. 10.3(B), such a graph contains two sets of entities, users U and things O. There is one type of intra-relation between users (also called social connections) and one type of inter-relations: edges between users and things that can be obtained from usage events. Formally, the social graph is defined as the following:

The corresponding weight matrix ![]() of graph

of graph ![]() can be formulated as:

can be formulated as:

The entries in Eq. (10.5) can be obtained as follows: ![]() and its transpose

and its transpose ![]() should be proportional to the number of times of a thing being used by the users.

should be proportional to the number of times of a thing being used by the users. ![]() should be zero since we do not consider relationships between things. The weight

should be zero since we do not consider relationships between things. The weight ![]() of edges

of edges ![]() indicates the user similarity influenced by the social links between users, reflecting the homophily meaning that similar users may have similar interests. We use the cosine similarity to calculate

indicates the user similarity influenced by the social links between users, reflecting the homophily meaning that similar users may have similar interests. We use the cosine similarity to calculate ![]() as follows:

as follows:

where ![]() ,

, ![]() is the set of the user i's friends (i.e.,

is the set of the user i's friends (i.e., ![]() ),

), ![]() is the binary vector of things used by user i,

is the binary vector of things used by user i, ![]() is the L-2 norm of vector

is the L-2 norm of vector ![]() , and α is a parameter that reflects the preference for transitioning to a user who interacted with the same things.

, and α is a parameter that reflects the preference for transitioning to a user who interacted with the same things.

10.3.3 Correlation Inference

After the two graphs ![]() and

and ![]() are constructed, we can perform the random walk with restart (RWR) [33] to derive the correlation between each pair of things. RWR provides a good relevance score between two nodes in a graph and has been successfully used in many applications such as automatic image captioning, recommendation systems, and link prediction. The goal of using RWR in our work is to find other things that have top-k highest relevance scores for a given thing. The values of the relevance scores imply the strength of the correlations among things. In the following, we focus on using RWR on the spatiotemporal graph

are constructed, we can perform the random walk with restart (RWR) [33] to derive the correlation between each pair of things. RWR provides a good relevance score between two nodes in a graph and has been successfully used in many applications such as automatic image captioning, recommendation systems, and link prediction. The goal of using RWR in our work is to find other things that have top-k highest relevance scores for a given thing. The values of the relevance scores imply the strength of the correlations among things. In the following, we focus on using RWR on the spatiotemporal graph ![]() for discovering correlations between things.

for discovering correlations between things.

We assume the random walker starts from a thing node ![]() on

on ![]() . The random walker iteratively transits to other nodes which have edges with

. The random walker iteratively transits to other nodes which have edges with ![]() , with the probability proportional to the edge weight between them. At each step,

, with the probability proportional to the edge weight between them. At each step, ![]() also has a restart probability c to return to itself. We can obtain the steady-state probability of

also has a restart probability c to return to itself. We can obtain the steady-state probability of ![]() visiting another vertex

visiting another vertex ![]() when the RWR process is converged. The RWR process can be formulated as

when the RWR process is converged. The RWR process can be formulated as

where ![]() , and weight matrix from graph

, and weight matrix from graph ![]() is

is ![]() (Section 10.3.1),

(Section 10.3.1), ![]() with i-th entry is 1, all other entries are 0. Eq. (10.7) can be further formulated as:

with i-th entry is 1, all other entries are 0. Eq. (10.7) can be further formulated as:

where I is an identity matrix and ![]() is the transition matrix, which can be obtained based on weight matrix

is the transition matrix, which can be obtained based on weight matrix ![]() of

of ![]() by row normalization:

by row normalization:

where ![]() is a diagonal matrix with

is a diagonal matrix with ![]() . The random walker on thing

. The random walker on thing ![]() traverses randomly along its edges to the neighboring nodes based on the transition probability

traverses randomly along its edges to the neighboring nodes based on the transition probability ![]() , and the probability of taking a particular edge

, and the probability of taking a particular edge ![]() ,

,![]() is proportional to the edge weight over all the outgoing edges from

is proportional to the edge weight over all the outgoing edges from ![]() based on Eq. (10.9).

based on Eq. (10.9).

In Eq. (10.8), ![]() defines all the steady-state probabilities of random walk with restart.

defines all the steady-state probabilities of random walk with restart. ![]() is the t-th order transition matrix, whose elements

is the t-th order transition matrix, whose elements ![]() can be interpreted as the total probability for a random walker that begins at node i and ends at node j after t iterations, considering all possible paths between i and j. Since in our case we only consider relevance score between two things, if we vary the value of t, we can explicitly explore the relationship between two things at different scales. The steady-state probabilities for each pair of nodes can be obtained by recursively processing Random Walk and Restart until convergence. The converged probabilities give us the long-term visiting rates from any given node to any other node. This way, we can obtain the relevance scores of all pairs of thing nodes, denoted by

can be interpreted as the total probability for a random walker that begins at node i and ends at node j after t iterations, considering all possible paths between i and j. Since in our case we only consider relevance score between two things, if we vary the value of t, we can explicitly explore the relationship between two things at different scales. The steady-state probabilities for each pair of nodes can be obtained by recursively processing Random Walk and Restart until convergence. The converged probabilities give us the long-term visiting rates from any given node to any other node. This way, we can obtain the relevance scores of all pairs of thing nodes, denoted by ![]() . It should be noted that the results can be calculated more efficiently by using the Fast Random Walk with Restart implementation [29] via low-rank approximation and graph partition.

. It should be noted that the results can be calculated more efficiently by using the Fast Random Walk with Restart implementation [29] via low-rank approximation and graph partition.

Similarly, the transition probability matrix ![]() for the social graph

for the social graph ![]() can be obtained using:

can be obtained using:

where ![]() is a diagonal matrix with

is a diagonal matrix with ![]() . Accordingly, we can obtain the relevance scores of things on this graph

. Accordingly, we can obtain the relevance scores of things on this graph ![]() .

.

The overall relevance score (i.e., the correlation value) of any pair of things can be calculated using

where ![]() and

and ![]() , which are regulatory factors affecting the weight on the social influence and the spatio-temporal influence.

, which are regulatory factors affecting the weight on the social influence and the spatio-temporal influence.

With obtained correlation values, we could construct a top-k correlation graph of things by connecting each thing with the things that have top-k overall correlation values ![]() . Formally, the graph is defined as the following:

. Formally, the graph is defined as the following:

10.4 Applicability of DisCor-T: Things Classification

The top-k correlation graph G is essentially a graph representing the relationships among things. For instance, from our experiment, we found that the top four things most close to a three-seated sofa are a modular sofa, leather chair, high chair, and wooden chair. Using the constructed G, many problems centered around things management (e.g., things discovery, search, and recommendation) can be solved and explored further by exploiting existing graph algorithms. In this section, we will showcase the feasibility and effectiveness of our proposed DisCor-T by detailing one important research problem, automatic things annotation, which will be used later to evaluate the performance of our proposed approach to correlation discovery.

Automatically predicting appropriate tags (i.e., category labels) for unlabeled things can save manual labeling workload and has important research significance. Although some things have been labeled with useful tags (e.g., cooking, office), which are crucial for assisting users in searching and exploring new things, as well as recommending them, some other things may not have any meaningful labels at all. Furthermore, a thing might be associated with multiple categories. For instance, a microwave oven can be categorized in Cooking and also Home Appliance.

The aim of things annotation is that when given a new thing, the classifier automatically decides whether this thing belongs to the category of the corresponding labels. The algorithm can be divided into two stages: i) extracting features from the top-k correlation graph G and things, and ii) performing multi-label classification of things. We extract three kinds of features ![]() ,

, ![]() and

and ![]() from RGT in terms of label property, link structures and node attributes respectively.

from RGT in terms of label property, link structures and node attributes respectively.

Extracting Feature

This feature represents the label probabilities for unknown things, which can be computed using generative Bayesian rules from G, where each unknown thing ![]() is to be assigned one or multiple labels

is to be assigned one or multiple labels ![]() . We propose to formulate our solution as posterior probability

. We propose to formulate our solution as posterior probability ![]() . Once we know these probabilities, it is straightforward to assign

. Once we know these probabilities, it is straightforward to assign ![]() the label having the top-K largest probabilities,

the label having the top-K largest probabilities,

where the prior distribution probability ![]() can be easily calculated from the training dataset. Let

can be easily calculated from the training dataset. Let ![]() be the training dataset, having

be the training dataset, having ![]() things with label k. Then

things with label k. Then ![]() can be calculated using:

can be calculated using:

where Z is a normalizing constant and the conditional probability ![]() indicates the relevance score between testing thing

indicates the relevance score between testing thing ![]() and things in the training dataset

and things in the training dataset ![]() .

. ![]() denotes the steady state probability between

denotes the steady state probability between ![]() and

and ![]() , which can be obtained from Eq. (10.8) in our RWR process. The distribution

, which can be obtained from Eq. (10.8) in our RWR process. The distribution ![]() is set as a uniform distribution

is set as a uniform distribution ![]() . The probability

. The probability ![]() can be predicted in Eq. (10.13), and the labels with different posterior probabilities can be assigned to the testing thing. As a result, we can get the label probabilities for each testing object.

can be predicted in Eq. (10.13), and the labels with different posterior probabilities can be assigned to the testing thing. As a result, we can get the label probabilities for each testing object.

Extracting Latent Feature

With RGT, we can easily extract features of things that indicate the things relationship with different communities on G. In reality, things usually hold multiple relations. For instance, a thing might be shared among its owner, owner's friends, co-workers, or family members. It might also be connected to other things based on functionality or non-functionality attributes. Detecting such relations from RGT, which can be used as a structural feature for things annotation, is naturally related to the task of modularity-based community detection [15]. Modularity is to evaluate the goodness of a partition of undirected graphs. The reason that we choose this method is that modularity has been shown to be an effective quantity to measure community structure in many complex networks [28].

Modularity ![]() is like a statistical test that the null model is a uniform random graph model, where one vertex connects to others with uniform probability. It is a measure of how far the interaction deviates from a uniform random graph with the same degree distribution. Modularity is defined as:

is like a statistical test that the null model is a uniform random graph model, where one vertex connects to others with uniform probability. It is a measure of how far the interaction deviates from a uniform random graph with the same degree distribution. Modularity is defined as:

where ![]() is the adjacent matrix on the graph RGT, m is the number of edges of the matrix,

is the adjacent matrix on the graph RGT, m is the number of edges of the matrix, ![]() and

and ![]() denote the in-degree of vertex i and out-degree of vertex j, and

denote the in-degree of vertex i and out-degree of vertex j, and ![]() are the Kronecker delta function that takes the value 1 if node

are the Kronecker delta function that takes the value 1 if node ![]() and

and ![]() belong to the same community, 0 otherwise. A larger modularity

belong to the same community, 0 otherwise. A larger modularity ![]() indicates denser within-group interaction. So that, the modularity-based algorithm aims to find a community structure such that

indicates denser within-group interaction. So that, the modularity-based algorithm aims to find a community structure such that ![]() is maximized. In [20], Newman proposes an efficient solution by reformulating

is maximized. In [20], Newman proposes an efficient solution by reformulating ![]() as:

as:

where ![]() is the binary matrix indicating which community each node belongs to. B is the modularity function, is defined as the following:

is the binary matrix indicating which community each node belongs to. B is the modularity function, is defined as the following:

Since our relational graph of things (RGT) is a weighted and directed graph, we need to make some modifications on ![]() to solve the equation. This involves two steps.

to solve the equation. This involves two steps.

In the first step, we extend ![]() to directed graphs. Based on [15], we rewrite the modularity matrix

to directed graphs. Based on [15], we rewrite the modularity matrix ![]() as the following:

as the following:

where ![]() are the in-degrees and out-degrees of all the nodes on the RGT graph. In the second step, we extend

are the in-degrees and out-degrees of all the nodes on the RGT graph. In the second step, we extend ![]() to weighted graphs. To do so, we conduct further modification based on Eq. (10.17). It can be rewritten further as below:

to weighted graphs. To do so, we conduct further modification based on Eq. (10.17). It can be rewritten further as below:

where ![]() is the sum of weights of all edges in the RGT graph replacing the adjacency matrix

is the sum of weights of all edges in the RGT graph replacing the adjacency matrix ![]() ,

, ![]() and

and ![]() are the sum of the weights of incoming edges adjacent to vertex

are the sum of the weights of incoming edges adjacent to vertex ![]() and the outgoing edges adjacent to vertex

and the outgoing edges adjacent to vertex ![]() on the RGT graph respectively. After these two steps, it should be noted that different from undirected situation,

on the RGT graph respectively. After these two steps, it should be noted that different from undirected situation, ![]() is not symmetric. To use the spectral optimization method proposed by Newman in [20], we restore symmetry by adding

is not symmetric. To use the spectral optimization method proposed by Newman in [20], we restore symmetry by adding ![]() to its own transpose [15], thereby the new

to its own transpose [15], thereby the new ![]() is:

is:

We are then able to calculate all the eigenvectors corresponding to the top-k positive eigenvalue of ![]() and assign communities based on the elements of the eigenvector [21]. We take the obtained modularity vectors as the latent features, which indicate things relationships to communities (i.e., a larger value means a closer relationship with a community).

and assign communities based on the elements of the eigenvector [21]. We take the obtained modularity vectors as the latent features, which indicate things relationships to communities (i.e., a larger value means a closer relationship with a community).

Extracting Feature

It is the set of content-based features extracted from thing descriptions. We convert the keywords vectors into tf-idf format, which assigns each term x a weight in a thing's description d. ![]() , where

, where ![]() , the number of times word x occurs in the corresponding thing's description d, and idf is the inverse text frequency which is defined as :

, the number of times word x occurs in the corresponding thing's description d, and idf is the inverse text frequency which is defined as : ![]() , where

, where ![]() is the number of texts in our dataset, and

is the number of texts in our dataset, and ![]() is the number of texts where the word x occurs at least once.

is the number of texts where the word x occurs at least once.

Based on our experience in ontology bootstrapping for Web services [25], we exploit Term Frequency/Inverse Document Frequency (TF/IDF)—a common method in IR for generating a robust set of representative keywords from a corpus of documents—to analyze things' descriptions. It should be noted that the common implementation of TF/IDF gives equal weights to the term frequency and inverse document frequency (i.e., ![]() ). We choose to give higher weight to the idf value (i.e.,

). We choose to give higher weight to the idf value (i.e., ![]() ). The reason behind this modification is to normalize the inherent bias of the tf measure in short documents.

). The reason behind this modification is to normalize the inherent bias of the tf measure in short documents.

Finally, the set of feature vectors for the N things in the dataset ![]() where

where ![]() is the feature vector for each thing, m is the size of vocabulary we produced. For better performance, we perform a cosine normalization for tf-idf vectors:

is the feature vector for each thing, m is the size of vocabulary we produced. For better performance, we perform a cosine normalization for tf-idf vectors: ![]() [24].

[24].

Building a Discriminative Classifier

After obtaining the features based on attributes of G and things, we combine the features (![]() ) together and feed them into a discriminative classifier.

) together and feed them into a discriminative classifier.

Our method is a very flexible feature-based method, where the structural features can be put into any discriminative classifier for classification. In this paper, we evaluate our method based on SVM and Logistic regression. Specifically, we adopt LibSVM [4] for one-vs-rest classification.

Discussion

The high and real-time streams of interactions between human and ubiquitous things call for online processing techniques that are suitable to large-scale datasets and can be rapidly updated, adapted to reflect the constantly evolving the contextual similarities due to changing contextual information of things (e.g., social networks, status, locations etc). Our proposed model can be easily extended to deal with large-scale IoT data streams with online processing and incremental techniques due to the following characteristics of the model:

• We characterize each thing as a discriminative feature descriptor including static features (e.g., content-based features) and easily integrated dynamic features (e.g., locations, and instantaneous status of things). The feature vectors can be continuously updated with users' interactions with things in a real-time manner. It is noted that we only focus on training an offline model with the mixture of static and dynamic features, which provides the possibility of online learning, such as partaking in an incremental activity learning a framework that is able to continuously update and learn newly seen data.

• Our proposed model does not require any explicit input from users. All the contextual information is automatically obtained from users' social networks, localization techniques and sensor technologies in a non-obtrusive way.

• The process of contextual similarity calculation works based on the random walk techniques, which has been successfully used in large-scale online search engines. This type of techniques can be easily parallelized (e.g., using Hadoop framework for improving performance) and processed in real time.

10.5 Applicability of DisCor-T: Things Recommendation

Things recommendation is a crucial step for promoting and taking full advantage of Internet of Things (IoT), where it benefits the individuals, businesses, and society on a daily basis in terms of two main aspects. On the one hand, it can deliver relevant things to users based on users' preferences and interests. On the other hand, it can also serve to optimize the time and cost of using IoT in a particular situation. Physical things, in reality, have multiple unique attributes. For example, they have stated (e.g., in use or not in use; expired or not expired). When a certain thing is in use, it can not be used simultaneously by another user. Under this circumstance, a recommender system can refer the user to a list of things which have same or similar functionalities. For example, if microwave 1 is in use, microwave 2 will be recommended to a user who would like to warm her food.

In this section, we briefly introduce a probabilistic model for effective Thing-of-Interest recommendation over the entity graph, RGT. More details can refer to [39]. Fig. 10.5 shows our model that fuses social network, things correlations and user-thing interactions, and incorporates three relationships: user-user connections ![]() , thing-thing correlations

, thing-thing correlations ![]() (obtained from RGT) and user-thing interactions (thing usage)

(obtained from RGT) and user-thing interactions (thing usage) ![]() with shared factors

with shared factors ![]() and

and ![]() . We describe how to encode these two relationship matrices.

. We describe how to encode these two relationship matrices.

and

and  denote users friendship and things correlations, while yij denote interactions between users and things; ui,

denote users friendship and things correlations, while yij denote interactions between users and things; ui,  , vj,

, vj,  are the latent factors induced from the three relationships

are the latent factors induced from the three relationshipsEncoding User Friendships

User affinity can directly adopt the method in Section 10.3.2. We construct a directed weighted graph ![]() , whose vertex set

, whose vertex set ![]() corresponds to users set

corresponds to users set ![]() , edges set

, edges set ![]() represents the friendships between users and the range of their associated weight are in

represents the friendships between users and the range of their associated weight are in ![]() . Bigger weights represent stronger ties between users.

. Bigger weights represent stronger ties between users. ![]() indicates the user similarity influenced by the social links between users, reflecting the homophily (i.e., similar users may have similar interests). We use the cosine similarity to calculated

indicates the user similarity influenced by the social links between users, reflecting the homophily (i.e., similar users may have similar interests). We use the cosine similarity to calculated ![]() as follows:

as follows:

where ![]() ,

, ![]() is the set of the user i's friends (i.e.,

is the set of the user i's friends (i.e., ![]() ),

), ![]() is the binary vector of things used by user i,

is the binary vector of things used by user i, ![]() is the L-2 norm of vector

is the L-2 norm of vector ![]() , and α is a parameter that reflects the preference for transitioning to a user who interacts with the same things.

, and α is a parameter that reflects the preference for transitioning to a user who interacts with the same things.

After we obtain the users friendship matrix from ![]() , we factorize users' friendship matrix to derive a high-quality, low dimensional feature representation to user-based latent features vectors

, we factorize users' friendship matrix to derive a high-quality, low dimensional feature representation to user-based latent features vectors ![]() and factor-based latent feature vectors

and factor-based latent feature vectors ![]() on analyzing the social network graph

on analyzing the social network graph ![]() . The conditional probability of

. The conditional probability of ![]() over the observed social network is determined by:

over the observed social network is determined by:

Similar to the Web link adjacency [43], if a user i has lots of links to other users, the trust value of ![]() should be decreased. Whereas if a user i is trusted by many other users, the trust value of

should be decreased. Whereas if a user i is trusted by many other users, the trust value of ![]() should be increased, since the user can be considered as local authority.

should be increased, since the user can be considered as local authority. ![]() should be adjusted as:

should be adjusted as:

where ![]() represents the outdegree of node i, while

represents the outdegree of node i, while ![]() indicates the indegree of

indicates the indegree of ![]() . Eq. (10.21) can be reformulated as:

. Eq. (10.21) can be reformulated as:

Encoding User-Thing Interactions

User-thing interactions ![]() are embodied by the usage frequency of thing i by user j in a certain time frame. We can map the usage frequency to interval

are embodied by the usage frequency of thing i by user j in a certain time frame. We can map the usage frequency to interval ![]() by using function

by using function ![]() without loss of generality, where

without loss of generality, where ![]() and

and ![]() are the maximum and minimum usage values respectively. The dyadic relationship between a user and a thing does not only depend on their latent factor

are the maximum and minimum usage values respectively. The dyadic relationship between a user and a thing does not only depend on their latent factor ![]() , whose vulnerability is that it makes use of past interactions and can not handle brand new things well, i.e., cold start problem. To tackle this issue, we use the explicit features directly by profiling users observable features

, whose vulnerability is that it makes use of past interactions and can not handle brand new things well, i.e., cold start problem. To tackle this issue, we use the explicit features directly by profiling users observable features ![]() (i.e., age, gender, location etc.) and things observable features

(i.e., age, gender, location etc.) and things observable features ![]() (i.e., textual description of things functionalities). Here, c and d are the dimensionalities of users observable features and things observable features respectively. The dyadic relationship (thing usage value) depends on not only the inner product of latent factors of users and things, but also their observable features. Things usage value

(i.e., textual description of things functionalities). Here, c and d are the dimensionalities of users observable features and things observable features respectively. The dyadic relationship (thing usage value) depends on not only the inner product of latent factors of users and things, but also their observable features. Things usage value ![]() can be defined as the following conditional probability:

can be defined as the following conditional probability:

We adopt the bilinear product to specify the similarity between user observable features and thing observable features [7]. The pairwise similarity between ![]() and

and ![]() can be denoted as:

can be denoted as:

where w is a column vector of entries ![]() ,

, ![]() denotes the Kronecker product of

denotes the Kronecker product of ![]() and

and ![]() . Eq. (10.25) can be rewritten as:

. Eq. (10.25) can be rewritten as:

where matrix ![]() is a weight coefficients capturing pairwise associations between user i's explicit feature vector and thing j's explicit feature vector. The thing usage value depends on both the inner product of user and thing latent factors and the bilinear product of user observable features and thing observable features. Eq. (10.24) can be reformulated as:

is a weight coefficients capturing pairwise associations between user i's explicit feature vector and thing j's explicit feature vector. The thing usage value depends on both the inner product of user and thing latent factors and the bilinear product of user observable features and thing observable features. Eq. (10.24) can be reformulated as:

Model Learning

Given a training dataset for ![]() , the joint posterior probability of model parameters Σ can be obtained through Bayes' theorem:

, the joint posterior probability of model parameters Σ can be obtained through Bayes' theorem:

Maximizing Eq. (10.28) can be converted to minimizing the negative logarithm of ![]() via:

via:

where ℓ⋅ is a loss function (we adopt the most widely used ![]() loss), and

loss), and ![]() are trade-off parameters. A gradient descent process can be implemented to solve the parameters. Given a training dataset

are trade-off parameters. A gradient descent process can be implemented to solve the parameters. Given a training dataset ![]() , the objective function in Eq. (10.29) can be found by performing gradient descent in

, the objective function in Eq. (10.29) can be found by performing gradient descent in ![]() ,

, ![]() and

and ![]() .

.

where δ is the learning rate. After we obtain the optimal parameters ![]() , we can use them to predict the given testing data

, we can use them to predict the given testing data ![]()

10.6 Experiments

10.6.1 Experiment Setup

Due to the lack of experimental public data sets, we set up a testbed that consists of several different places in the first author's home (e.g., bedroom, bathroom, garage, kitchen etc), where approximate 127 physical things (e.g., couch, laptop, microwave, fridge etc.) are monitored by attaching RFID and sensors. Table 10.1 presents the statistics of things used in this paper. The details in regards to this testbed can refer to [37]. To collect the records of things usage events, we need to figure out i) how to detect a usage event when it is happening; and ii) how to retrieve this thing's corresponding three contextual information. There are two ways to detect usage events of things with two identification technologies used, namely senor-based state changes and RFID-based mobility detection.

Table 10.1

Dataset

| No. | Category | # Things | # Labels |

| 1 | Entertainment | 28 | 118 |

| 2 | Office | 20 | 51 |

| 3 | Cooking | 25 | 103 |

| 4 | Transportation | 11 | 24 |

| 5 | Medicine/Medical | 10 | 18 |

| 6 | Home Appliances | 33 | 83 |

To conduct experimental studies, we manually labeled 127 things with 397 different labels. It should be noted that some things belong to multiple categories, therefore having multiple labels. For example, a Wii device belongs to category label Entertainment as well as Home Appliance. This dataset serves as the ground-truth dataset in our experiments for performance evaluation. Ten volunteers participated in the data collection phase by interacting with RFID tagged things for a period of four months, generating 20,179 records on the interactions of the things tagged in the experiments.

10.6.2 Evaluation on Thing Annotation

We randomly removed the category tags of a certain percentage, ranging from 10% to 50%, of things from each category of the ground-truth dataset. These things were used to test our approach while the rest were used as the training set. We iterated five times for each training percentage and took the averaged value as the final result. Our algorithm produces a vector of probabilities, representing the assignment probabilities of all labels for an unknown object. In our experiments, we ranked these probabilities and chose the top k labels to compare with the ground truth labels. The k value was set to the number of ground truth labels for each unknown object and it varies from object to object. The parameters α and β were set as 0.5 each.

We particularly compared the annotation performance by using i) the features obtained from G, ii) the features obtained from thing descriptions (i.e., content features ![]() ), and iii) the combination of the both. Each process was repeated 10 times and the average results were recorded. Similar observations were obtained for different testing percentages. Fig. 10.6 shows the result when we removed 30% of things from each category of the ground-truth dataset. Descriptions of things are normally short and noisy, it is therefore not surprising that the performance based on content features only is worse than the one based on implicit structural features (i.e.,

), and iii) the combination of the both. Each process was repeated 10 times and the average results were recorded. Similar observations were obtained for different testing percentages. Fig. 10.6 shows the result when we removed 30% of things from each category of the ground-truth dataset. Descriptions of things are normally short and noisy, it is therefore not surprising that the performance based on content features only is worse than the one based on implicit structural features (i.e., ![]() ) in most categories. The consistent good performance from the latent features also indicates that our top-k correlation graphs G is able to capture the correlations among things well. From the figure, we can see that by combining the two together, the performance of all six categories is increased and is the best consistently among the three.

) in most categories. The consistent good performance from the latent features also indicates that our top-k correlation graphs G is able to capture the correlations among things well. From the figure, we can see that by combining the two together, the performance of all six categories is increased and is the best consistently among the three.

10.6.3 Evaluation on Recommendation

We compare the prediction accuracy of our approach based on fusing social networks and things correlations (FST) with some state-of-the-art approaches based on probabilistic factor analysis: Probabilistic Matrix Factorization (PMF) [23], SoRec [16] and SVD++ [12]. This experiment evaluated our approach, in particular, its capability in handling the cold start problem, which refers to providing accurate prediction when some users only use few things or even have no usage historical records at all. In order to verify the capability of our approach to predicting the usage value of things that have not been used, we randomly selected and marked off ![]() of data (

of data (![]() , 20 and 50) from our dataset as training data and different number of latent factors (5 and 10) to test all the methods. The experimental results are shown in Table 10.2.

, 20 and 50) from our dataset as training data and different number of latent factors (5 and 10) to test all the methods. The experimental results are shown in Table 10.2.

Table 10.2

MAE Comparison with Other Approaches

| Training Data | 10% | 20% | 50% | |||

| # of Factors | 5 | 10 | 5 | 10 | 5 | 10 |

| PMF | 0.8635 | 0.8544 | 0.8245 | 0.8153 | 0.7662 | 0.7495 |

| SVD++ | 0.8425 | 0.8311 | 0.8004 | 0.7982 | 0.7410 | 0.7273 |

| SoRec | 0.8103 | 0.7978 | 0.7872 | 0.7723 | 0.7317 | 0.7168 |

| FST | 0.7303 | 0.7246 | 0.7112 | 0.6948 | 0.6631 | 0.6406 |

From the table, it is clear that our approach outperforms other methods on different training ratios and different number of factors, especially when the training data is small. PMF is a pure probabilistic factor model. Relying heavily on user-thing usage matrix, it can not deal with the circumstance where little interactions information is available. SoRec works better than PMF and SVD++ because of its aggregation of user-user internal information (social links). Our approach not only incorporates users and things internal information, but also defines the explicit features (i.e., content) for users (e.g., users profile) and things (e.g., description of things functionalities), which makes our approach performing better when there is a cold start problem. The experimental result further demonstrates the effectiveness on improving the recommendation accuracy by incorporating things correlations.

10.7 Related Work

Finding related and similar things is a key service and the most straightforward method of finding related things is the traditional keyword-based search, where user querying keyword is matched with the extracted description of things including textual descriptions on thing's functionalities and non-functional properties. For example, in Microsearch [27] and Snoogle [32], each sensor is attached to a connected object, which carries a keyword-based description of each object. Following an ad hoc query consisting of a list of keywords, the system returns a ranked list of the top k entities matching this query. As we pointed out, this method can not work well for ubiquitous things due to unique characteristics, e.g., insufficient description of things, the inconsistency of the meaning of the textual information, more importantly, this solution does not make use of implicit inter-correlations between things and their rich contextual information.

Another mainstream solution is via semantic Web-related techniques. Such solutions typically use the metadata annotation (e.g., details related to a sensor such as sensor type, manufacturer, capability and contextual information), then use a query language to search related available things [18,5]. Online sensors such as Pachube,3 GSN [1], Microsoft SensorMap [19] and linked sensor middleware [13] support search for sensors based on textual metadata that describes the sensors (e.g., type and location of a sensor, functional and non-functional attributes, object to which the sensor is attached), which is manually entered by the person who deploys the sensor. Other users can then search for sensors with certain metadata by entering appropriate keywords. Unfortunately, these ontology and their use are rather complex and it is uncertain whether end users can provide correct descriptions of sensors and their deployment context without the help from experts. In other words, such methods require extensive prior knowledge. There are efforts to provide a standardized vocabulary to describe sensors and their properties such as SensorML4 and the Semantic Sensor Network Ontology (SSN),5 but not widely adopted.

The above solutions are time-consuming and require expert knowledge. For example, the descriptions of things and their corresponding characteristics and ontology need to be predefined under a uniform format such as Resource Description Framework (RDF) or Schema.org.6 In addition, the methods do not make full use of the rich information on users' historical interactions with things, which may imply containing implicit relations of different entities. For example, if some users have the similar usage pattern on certain things, it may indicate some close connections among these things. Existing solutions can not capture such information well. We propose to extract the underlying connections between things by exploiting the human-thing interactions in the ubiquitous environment. Our method not only takes rich contextual information of human-thing interactions into account but also utilizes the historical pattern by analyzing the past human-thing interactions.

10.8 Conclusion

Recent advances in radio-frequency identification (RFID), wireless sensor networks and Web services have made it possible to bridge the physical and digital worlds together, where ubiquitous things are becoming an integral part of our daily lives. Despite the exciting potential of this prosperous era, there are many challenges that persist. In this paper, we propose a novel model that derives latent correlations among things by exploiting user, temporal, and spatial information captured from things usage events. This correlation analysis can help solve many challenging issues in managing things such as things search, recommendation, annotation, classification, and clustering. The experimental results demonstrate the utility of our approach.

We view the work presented in this paper as a first step towards effective management of things in the emerging Web of Things (WoT) era.

There are a few interesting directions that we plan to work in the future:

• Real-time things status update. In the real situation, physical things are more dynamic compared to traditional Web resources. Examples of such dynamic features include availability, and changing attributes (e.g., geographical information, status). We plan to improve our model so that it can adaptively propagate up-to-date information from things correlations network and make more accurate recommendations.

• Scalability. We plan to improve the scalability of our approach by adopting constraints in searching a local area. This can be realized by applying generalized clustering algorithms on hypergraphs. The search space can be significantly pruned in this way. We also plan to evaluate the improved approach using real-world large-scale WoT datasets. We notice that some parameters (e.g., α and β) in our approach need to be tuned in a category specific way. This might be a burden to apply the algorithm to other WoT datasets. We will investigate ways to reduce the workload of parameter tuning e.g., by some meta-feature based methods or multi-task learning [14,42].

• Thing-to-Thing communications. Our current model works based on human-thing interactions to extract the latent connections between things. The communications between things are getting more prevalent with the development of communication technologies, which represent a rich source to exploit for making our current model more robust. Extending our model by analyzing and exploring the thing-to-thing communications is another future work.