This chapter will continue walking through the framework implementation by focusing on how CSLA .NET provides support for mobile objects. Chapter 1 introduced the concept of mobile objects, including the idea that in an ideal world, business logic would be available both on the client workstation (or web server) and on the application server. In this chapter, the implementation of data access is designed specifically to leverage the concept of mobile objects by enabling objects to move between client and server. When on the client, all the data binding and UI support from Chapters 7 to 13 is available to a UI developer; and when on the server, the objects can be persisted to a database or other data store.

Chapter 2 discussed the idea of a data portal. The data portal combines the channel adapter and message router design patterns to provide a simple, clearly defined point of entry to the server for all data access operations. In fact, the data portal entirely hides whether an application server is involved, allowing an application to switch between 2-tier and 3-tier physical deployments without changing any code.

A UI developer is entirely unaware of the use of a data portal. Instead, the UI developer will interact only with the business objects created by the business developer.

The business developer will make use of the DataPortal class from the Csla namespace to create, retrieve, update, and delete all business object data. This DataPortal class is the single entry point to the entire data portal infrastructure, which enables mobile objects and provides access to server-side resources such as distributed transaction support. The key features enabled by the data portal infrastructure include the following:

- Enabling mobile objects
- Providing a consistent coding model for root and child objects
- Hiding the network transport (channel adapter)
- Exposing a single point of entry to the server (message router)
- Exposing server-side resources (database engine, distributed transactions, etc.)
- Allowing objects to persist themselves or to use an external persistence model
- Unifying context (passing context data to/from client and server)
- Using Windows integrated (AD) security
- Using CSLA .NET custom authentication (including impersonation)

Meeting all those needs makes the data portal a complex entity. To a business developer it appears to consist only of the simple DataPortal class, but there's actually a lot going on behind that class.

I've already discussed much of the authentication and authorization support in Chapter 12, but I will discuss some aspects of authentication in this chapter as well. Because the data portal is so complex, I'll spend some time discussing its design before walking through the implementation details.

One of the primary goals of object-oriented programming is to encapsulate all the functionality (data and implementation) for a domain object into a single class. This means, for instance, that all the business logic responsible for editing customer information should be in a CustomerEdit class.

In many cases, the business logic in an object directly supports a rich, interactive user experience. This is especially true for WPF or Windows Forms applications, in which the business object implements validation and calculation logic that should be run as the user enters values into each field on a form. To achieve this, the objects should be running on the client workstation or web server to be as close to the user as possible.

At the same time, most applications have back-end processing that is not interactive. In an n-tier deployment, this non-interactive business logic should run on an application server. Yet good object-oriented design dictates that all business logic should be encapsulated within objects rather than spread across the application. This can be challenging when an object needs to both interact with the user and perform back-end processing. Effectively, the object needs to be on the client sometimes and on the application server other times.

The idea of mobile objects solves this problem by allowing an object to physically move from one machine to another. This means it really is possible to have an object run on the client to interact with the user, then move to the application server to do back-end work like interacting with the database.

A key goal of the data portal is to enable the concept of mobile objects. In the end, not only will objects be able to go to the application server to persist their data to a database, but they will also be able to handle any other non-interactive back-end business behaviors that should run on the application server.

At the same time, as discussed in Chapter 1, it is important to maintain clear logical layers in the application architecture. This means that the business logic and data access should be logically separated.

The term logically separated can mean many things. You might consider logical separation to include putting all your data access code into a limited set of predefined methods, and I think that is perfectly valid. Or you might consider logical separation to mean putting the data access code into a separate class or a separate assembly. To me, those are valid as well.

The data portal enables several techniques for logical separation:

- Data access code goes into a limited set of predefined methods.
- Data access code goes into a separate data access class (and optionally separate assembly), invoked by the business object.
- Data access code goes into a separate object factory class (and optionally separate assembly), invoked by the data portal.

All of these techniques provide logical separation of layers, though the latter two offer the psychological benefit of putting the code into a separate class for clarity.

In Chapters 4 and 5, I walked through the coding model for the various stereotypes directly supported by CSLA .NET. As you can see from the code templates in Chapter 5, the data portal is used to create, retrieve, update, and delete both root and child objects, using a relatively consistent coding pattern for both.

A root object typically implements a set of DataPortal_XYZ methods such as DataPortal_Create() and DataPortal_Fetch(). These methods are invoked by the data portal in response to the business object's factory method calling DataPortal.Create() and DataPortal.Fetch().

Similarly, a child object typically implements a set of Child_XYZ methods such as Child_Create() and Child_Fetch(). These methods are invoked by the data portal in response to the child object's factory method calling DataPortal.CreateChild() and DataPortal.FetchChild().

Additionally, the field manager discussed in Chapter 7 plays a role in the process. In a parent business object's DataPortal_Insert() or DataPortal_Update() method, the object must update its child objects as well. This can be done in a single line of code:

```csharp
FieldManager.UpdateChildren();
```

The field manager loops through all child references and has the data portal update each one by calling the appropriate Child_XYZ method based on the state of the child object.
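The per-child decision the field manager makes can be shown with a small state-based dispatcher. This is a minimal sketch, not CSLA's code. IChildState, SampleChild, and ChildUpdateDispatcher are hypothetical. The method names it selects (Child_Insert, Child_Update, Child_DeleteSelf) are assumed here as typical Child_XYZ names.

```csharp
using System.Collections.Generic;

// Hypothetical view of the state flags a child object exposes.
public interface IChildState
{
    bool IsNew { get; }
    bool IsDeleted { get; }
    bool IsDirty { get; }
}

// Simple concrete implementation used to exercise the dispatcher.
public sealed class SampleChild : IChildState
{
    public SampleChild(bool isNew, bool isDeleted, bool isDirty)
    {
        IsNew = isNew;
        IsDeleted = isDeleted;
        IsDirty = isDirty;
    }

    public bool IsNew { get; }
    public bool IsDeleted { get; }
    public bool IsDirty { get; }
}

public static class ChildUpdateDispatcher
{
    // Chooses which Child_XYZ method (by assumed name) should run,
    // or returns null when the child needs no database work.
    public static string SelectMethod(IChildState child)
    {
        if (child.IsDeleted)
            // A new object that was deleted was never saved, so there is nothing to remove.
            return child.IsNew ? null : "Child_DeleteSelf";
        if (!child.IsDirty)
            return null;
        return child.IsNew ? "Child_Insert" : "Child_Update";
    }

    // Mirrors the field manager's loop: one decision per child reference.
    public static List<string> UpdateChildren(IEnumerable<IChildState> children)
    {
        var calls = new List<string>();
        foreach (IChildState child in children)
        {
            string method = SelectMethod(child);
            if (method != null)
                calls.Add(method);
        }
        return calls;
    }
}
```

The business developer writes only the individual Child_XYZ methods. The choice of which one to call stays in one shared place.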

BusinessListBase also participates by providing a prebuilt Child_Update() implementation that updates the collection's list of deleted items and active items. In fact, this method is useful even for a root collection, because the collection's DataPortal_Update() can look like this:

```csharp
protected override void DataPortal_Update()
{
  // open database connection
  Child_Update();
  // close database connection
}
```

But what's even nicer is that for a child collection, the business developer typically has to write no code at all in the collection. The data portal handles updating a child collection automatically as long as the child objects implement Child_XYZ methods.
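The prebuilt Child_Update() processes the deleted items first and then the active items. That behavior can be sketched with a hypothetical TrackedList<T>. It moves removed items to a separate deleted list, so their deletes can still be sent to the database later. This illustrates the idea under those assumptions; it is not BusinessListBase itself.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical, simplified list that keeps removed items for a later delete.
public sealed class TrackedList<T>
{
    private readonly List<T> _items = new List<T>();
    private readonly List<T> _deleted = new List<T>();

    public int DeletedCount => _deleted.Count;

    public void Add(T item) => _items.Add(item);

    // Removing an item moves it to the deleted list instead of discarding it.
    public bool Remove(T item)
    {
        if (!_items.Remove(item))
            return false;
        _deleted.Add(item);
        return true;
    }

    // Sends the deletes first, forgets the deleted items, then saves the active items.
    // A real implementation would also skip active items that have no changes.
    public void ChildUpdate(Action<T> deleteChild, Action<T> saveChild)
    {
        foreach (T item in _deleted)
            deleteChild(item);
        _deleted.Clear();
        foreach (T item in _items)
            saveChild(item);
    }
}
```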

The data portal combines two common design patterns: channel adapter and message router.

The channel adapter pattern provides a great deal of flexibility for n-tier applications by allowing the application to switch between 2-tier and 3-tier models, as well as between various network protocols.

The message router pattern helps to decouple the client and server by providing a clearly defined, single point of entry for all server interaction. Each call to the server is routed to an appropriate server-side object.

Channel Adapter

Chapter 1 discussed the costs and benefits of physical n-tier deployments. Ideally, an application will use as few physical tiers as possible. At the same time, it is good to have the flexibility to switch from a 2-tier to a 3-tier model, if needed, to meet future scalability or security requirements.

Switching to a 3-tier model means that there's now a network connection between the client (or web server) and the application server. WCF is the primary (and recommended) .NET technology for such communication, but it is only one of several options:

- WCF
- Remoting
- ASP.NET Web Services (ASMX)
- Enterprise Services (DCOM)

To avoid being locked into a single network communication technology, the data portal applies the channel adapter design pattern.

The channel adapter pattern allows the specific network technology to be changed through configuration rather than through code. A side effect of the implementation shown in this chapter is that running with no network at all is also an option. Thus, the data portal supports both 2-tier and 3-tier deployment, and in the 3-tier case it supports various network technologies, all of which are configurable without changing any code.

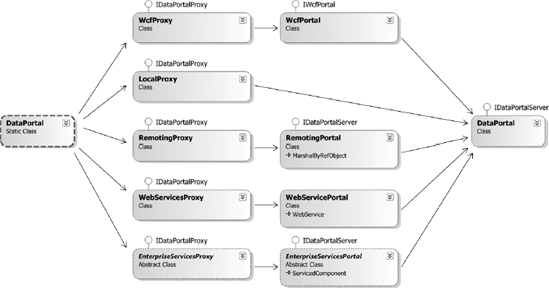

Figure 15-1 illustrates how a client call flows through the data portal.

Switching from one channel to another is done by changing a configuration file, not by changing code. Notice that the LocalProxy channel communicates directly with the DataPortal object (from the Csla.Server namespace) on the right. This is because it bypasses the network entirely, interacting with the object in memory on the client. All the other channel proxies use network communication to interact with the server-side object.
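Here is a minimal sketch of the channel adapter idea. The calling code asks a factory for a proxy, and a configuration value alone decides which channel it gets. It assumes a hypothetical IPortalProxy interface, two illustrative proxies, and a configuration key named PortalProxy; none of these is the real CSLA setting or type.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical proxy contract: every channel exposes the same operation.
public interface IPortalProxy
{
    string Fetch(string criteria);
}

// Stand-in for the server-side entry point.
public static class ServerRouter
{
    public static string Fetch(string criteria) => "data for " + criteria;
}

// Like LocalProxy: calls the "server" code directly, in the client process.
public sealed class InProcessProxy : IPortalProxy
{
    public string Fetch(string criteria) => ServerRouter.Fetch(criteria);
}

// Stand-in for a network channel; a real one would serialize the request and send it.
public sealed class LoopbackNetworkProxy : IPortalProxy
{
    public string Fetch(string criteria) => "via network: " + ServerRouter.Fetch(criteria);
}

public static class ProxyFactory
{
    // The channel comes from configuration; the calling code never changes.
    public static IPortalProxy Create(IReadOnlyDictionary<string, string> config)
    {
        string typeName = config.TryGetValue("PortalProxy", out var name) ? name : null;
        if (string.IsNullOrEmpty(typeName))
            return new InProcessProxy(); // no setting: run "server" code locally
        Type type = Type.GetType(typeName, throwOnError: true);
        return (IPortalProxy)Activator.CreateInstance(type);
    }
}
```

With no setting, the factory falls back to the in-process proxy. This mirrors the LocalProxy case described above.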

Tip

The data portal also allows you to create your own proxy/host combination so you can support network channels other than those implemented in this chapter.

Table 15-1 lists the types required to implement the channel adapter portion of the data portal.

Table 15.1. Types Required for the Channel Adapter Pattern

| Type | Namespace | Description |
|---|---|---|
| | | Utility class that encapsulates the use of reflection and dynamic method invocation to find method information and invoke methods |
| | | Exception thrown by the data portal when an exception occurs while calling a data access method |
| | | Attribute applied to a business object's data access methods to force the data portal to always run that method on the client, bypassing the configuration settings |
| DataPortal | Csla | Primary entry point to the data portal infrastructure; used by business developers |
| DataPortal<T> | | Primary entry point to the data portal for asynchronous behaviors; used by business developers |
| DataPortal | Csla.Server | Portal to the message router functionality on the server; acts as a single point of entry for all server communication |
| | | Interface defining the methods required for data portal host objects |
| | | Interface defining the methods required for client-side data portal proxy objects |
| | | Uses WCF to communicate with a WCF server running in IIS, WAS, or a custom host (typically a Windows service) |
| | | Exposed on the server by IIS, WAS, or a custom host; called by the WCF proxy |
| LocalProxy | | Loads the server-side data portal components directly into memory on the client and runs all "server-side" operations in the client process |
| | | Uses .NET Remoting to communicate with a remoting server running in IIS or within a custom host (typically a Windows service) |
| | | Exposed on the server by IIS or a custom host; called by the Remoting proxy |
| | | Uses Enterprise Services (DCOM) to communicate with a server running in COM+ |
| | | Exposed on the server by Enterprise Services; called by the Enterprise Services proxy |
| | | Uses Web Services to communicate with a service hosted in IIS |
| | | Exposed on the server as a web service by IIS; called by the Web Services proxy |

The .NET Remoting, Web Services, and Enterprise Services technologies are supported primarily for backward compatibility with older versions of CSLA .NET. I recommend using the WCF technology, and that is the technology I'll focus on in this chapter.

The point of the channel adapter is to allow a client to use the data portal without having to worry about how that call will be relayed to the Csla.Server.DataPortal object. Once the call makes it to the server-side DataPortal object, the message router pattern becomes important.

Message Router

One important lesson to be learned from the days of COM and MTS/COM+ is that it isn't wise to expose large numbers of classes and methods from a server. When a server exposes dozens or even hundreds of objects, the client must be aware of all of them in order to function.

Note

Sadly, this lesson doesn't seem to have informed the designs of many service-oriented systems. Fortunately, the representational state transfer (REST) movement has picked up on the idea of limiting the entry points to a server and is helping to shape the industry in this direction.

If the client is aware of every server-side object, we get tight coupling and fragility. Any change to the server objects typically changes the server's public API, thus breaking all of the clients, often including those clients who aren't even using the object that was changed.

One way to avoid this fragility is to add a layer of abstraction. Specifically, you can implement the server to have a single point of entry that exposes a limited number of methods. This keeps the server's API clear and concise, minimizing the need for a server API change. The data portal will expose only the five methods listed in Table 15-2.

Table 15.2. Methods Exposed by the Data Portal

| Method | Purpose |
|---|---|
| Create | Creates a new object, loading it with default values from the database |
| Fetch | Retrieves an existing object, first loading it with data from the database |
| Update | Inserts, updates, or deletes data in the database corresponding to an existing object |
| Delete | Deletes data in the database corresponding to an existing object |
| Execute | Executes a command stereotype object on the server |

Of course, the next question is, with a single point of entry, how do your clients get at the dozens or hundreds of objects on the server? It isn't like they aren't needed! That is the purpose of the message router.

The single point of entry to the server routes all client calls to the appropriate server-side object. If you think of each client call as a message, then this component routes messages to your server-side objects. In CSLA .NET, the message router is Csla.Server.DataPortal. Notice that it is also the endpoint for the channel adapter pattern discussed earlier; the data portal knits the two patterns together into a useful whole.

For Csla.Server.DataPortal to do its work, all server-side objects must conform to a standard design so the message router knows how to invoke them. Remember, the message router merely routes messages to objects—it is the object that actually does useful work in response to the message.
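The "standard design" is largely a naming convention that the router can discover through reflection. The sketch below assumes a simplified router that handles only a fetch message. It creates the requested type, even when the constructor is private, and then looks for a DataPortal_Fetch method whose parameter type matches the criteria. MessageRouter and CustomerEdit are hypothetical illustrations, not the Csla.Server implementation.

```csharp
using System;
using System.Reflection;

public static class MessageRouter
{
    // Routes a "fetch" message to the business object that can handle it.
    public static object Fetch(Type objectType, object criteria)
    {
        // Business classes usually hide their constructors, so allow non-public ones.
        object target = Activator.CreateInstance(objectType, true);
        MethodInfo method = objectType.GetMethod(
            "DataPortal_Fetch",
            BindingFlags.Instance | BindingFlags.Public | BindingFlags.NonPublic,
            null,
            new[] { criteria.GetType() },
            null);
        if (method == null)
            throw new NotSupportedException(
                $"{objectType.Name} does not implement DataPortal_Fetch({criteria.GetType().Name}).");
        method.Invoke(target, new[] { criteria });
        return target;
    }
}

// Hypothetical business class following the convention the router relies on.
public class CustomerEdit
{
    private CustomerEdit() { } // force use of a factory method

    public int Id { get; private set; }
    public string Name { get; private set; }

    private void DataPortal_Fetch(int id)
    {
        // Real code would query the database; fixed values keep the sketch self-contained.
        Id = id;
        Name = "Customer " + id;
    }
}
```

The router knows nothing about customers. It only knows the convention, which is why adding a business class never changes the server's public API.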

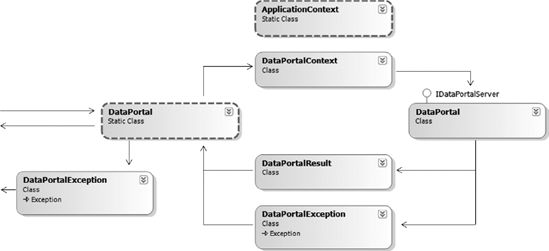

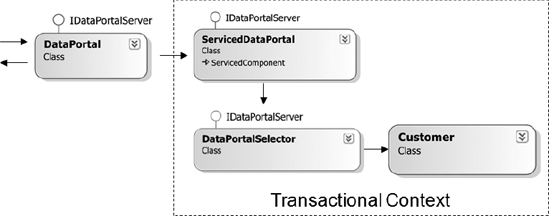

Figure 15-2 illustrates the flow of a call through the message router implementation. The DataPortal class (on the left of Figure 15-2) represents the Csla.Server.DataPortal—which was the rightmost entity in Figure 15-1. It relies on a SimpleDataPortal object to do the actual message routing—a fact that will become important shortly for support of distributed transactions.

The SimpleDataPortal object routes each client call (message) to the actual business object that can handle the message. These are the same business classes and objects that make up the application's business logic layer.

In other words, the same exact objects used by the UI on the client are also called by the data portal on the server. This allows the objects to run on the client to interact with the user, and to run on the server to do back-end processing as needed.

The FactoryDataPortal object routes each client call (message) to an object factory that can handle the message. If a business developer chooses to use this object factory approach, he'll need to create an object factory for each root business object, so that factory object can create, retrieve, update, and delete the business object and its data.

Table 15-3 lists the classes needed, in addition to Csla.DataPortal and Csla.Server.DataPortal, to implement the message router behavior.

Table 15.3. Types Required for the Message Router

| Type | Namespace | Description |
|---|---|---|
| TransactionalDataPortal | | Creates a System.Transactions TransactionScope and then delegates the call onward for routing |
| ServicedDataPortal | | Creates a COM+ distributed transaction and then delegates the call onward for routing |
| | | Determines whether to invoke the managed data portal (SimpleDataPortal) or the object factory data portal (FactoryDataPortal) |
| SimpleDataPortal | | Entry point to the server, implementing the message router behavior and routing client calls to the appropriate business object on the server |
| FactoryDataPortal | | Entry point to the server, implementing the message router behavior and routing client calls to the appropriate object factory on the server |
| ICriteria | | Interface that defines the required behavior for a non-nested criteria class |
| CriteriaBase | | Optional base class for use when building criteria objects; criteria objects contain the criteria or key data needed to create, retrieve, or delete an object's data |
| SingleCriteria | | Optional prebuilt criteria class that passes a single identifying value through the data portal for use in identifying the object to create, retrieve, or delete |
| | | Utility class that encapsulates the use of reflection and dynamic method invocation to find method information and to invoke methods |

Notice that neither the channel adapter nor the message router explicitly deals with moving objects between the client and server. This is because the .NET runtime typically handles object movement automatically, as long as the objects are marked with the Serializable or DataContract attribute.
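In practice, "moving" an object means serializing it and rebuilding it elsewhere. The sketch below serializes a [DataContract] object to a buffer and deserializes it into a new instance, which is roughly what a network channel does on each hop. OrderInfo and MobileCopy are hypothetical. DataContractSerializer is used only for illustration; the sketch does not show which serializer the data portal itself uses.

```csharp
using System.IO;
using System.Runtime.Serialization;

// Hypothetical business data marked for serialization.
[DataContract]
public class OrderInfo
{
    [DataMember] public int Id { get; set; }
    [DataMember] public string Status { get; set; }
}

public static class MobileCopy
{
    // Serializes an object graph to bytes and rebuilds it as a new, independent graph.
    public static T RoundTrip<T>(T graph)
    {
        var serializer = new DataContractSerializer(typeof(T));
        using (var buffer = new MemoryStream())
        {
            serializer.WriteObject(buffer, graph);
            buffer.Position = 0; // rewind before reading the bytes back
            return (T)serializer.ReadObject(buffer);
        }
    }
}
```

The copy carries the same data but is a different instance. After a server-side operation, the client therefore works with the object returned by the data portal, not the one it sent.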

The ICriteria interface listed in Table 15-3 can be implemented by any criteria class, and must be implemented by any criteria class that is not nested inside its business class. For example, CriteriaBase and SingleCriteria implement this interface. During the implementation of SimpleDataPortal later in the chapter, you'll see how this interface is used.

Several different technologies support database transactions, including transactions in the database itself, ADO.NET, Enterprise Services, and System.Transactions. When updating a single database (even multiple tables), any of them will work fine, and your decision will often be based on which is fastest or easiest to implement.

If your application needs to update multiple databases, however, the options are a bit more restrictive. Transactions that protect updates across multiple databases are referred to as distributed transactions. In SQL Server, you can implement distributed transactions within stored procedures. Outside the database, you can use Enterprise Services or System.Transactions.

Distributed transaction technologies use the Microsoft Distributed Transaction Coordinator (DTC) to manage the transaction across multiple databases. There is a substantial performance cost to enlisting the DTC in a transaction. Your application, the DTC, and the database engine(s) all need to interact throughout the transactional process to ensure that a consistent commit or rollback occurs, and this interaction takes time.

Historically, you had to pick one transactional approach for your application. This often meant using distributed transactions even when they weren't required—and paying that performance cost.

The System.Transactions namespace offers a compromise through the TransactionScope object. It starts out using nondistributed transactions (like those used in ADO.NET), and thus offers high performance for most applications. However, as soon as your code uses a second database within a transaction, TransactionScope automatically enlists the DTC to protect the transaction. This means you get the benefits of distributed transactions when you need them, but you don't pay the price for them when they aren't needed.
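The following sketch shows that a TransactionScope starts as a local, nondistributed transaction. It uses only System.Transactions and opens no database; a comment marks where data access code would run. The TransactionScopeDemo class is hypothetical.

```csharp
using System;
using System.Transactions;

public static class TransactionScopeDemo
{
    // Returns true if code inside the scope saw an ambient transaction
    // that has not been promoted to a distributed (DTC) transaction.
    public static bool RunsAsLocalTransaction()
    {
        using (var scope = new TransactionScope())
        {
            Transaction ambient = Transaction.Current;
            bool isLocal = ambient != null &&
                ambient.TransactionInformation.DistributedIdentifier == Guid.Empty;

            // Data access code would open its single connection here; ADO.NET
            // providers enlist in the ambient transaction automatically.

            scope.Complete(); // vote to commit; Dispose performs the commit
            return isLocal;
        }
    }

    // Outside any scope there is no ambient transaction.
    public static bool HasAmbientTransaction() => Transaction.Current != null;
}
```

DistributedIdentifier stays Guid.Empty until the transaction is promoted. A second connection opened inside the scope is what would trigger that promotion.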

Warning

The TransactionScope object is a little tricky, because it will enlist the DTC if more than one database connection is opened, even to the same database. Later in the chapter, I'll discuss the ConnectionManager, ObjectContextManager, and ContextManager classes, which are designed to help you avoid this issue.
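The idea behind such helper classes can be sketched as reference counting. Nested data access methods request a resource by name: the first request opens it, later requests reuse it, and only the final release closes it. SharedResource<T> is a hypothetical name, and this is not the actual ConnectionManager code. The sketch also assumes that all requests and releases for a key happen on the same thread.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical ref-counted holder: one open resource per key, per thread.
public sealed class SharedResource<T> : IDisposable where T : class, IDisposable
{
    [ThreadStatic] private static Dictionary<string, SharedResource<T>> _active;

    private readonly string _key;
    private int _refCount;

    private SharedResource(string key, T resource)
    {
        _key = key;
        Resource = resource;
    }

    public T Resource { get; }

    // Returns the existing holder for the key, or opens a new resource.
    public static SharedResource<T> Get(string key, Func<T> open)
    {
        if (_active == null)
            _active = new Dictionary<string, SharedResource<T>>();
        if (!_active.TryGetValue(key, out var holder))
        {
            holder = new SharedResource<T>(key, open());
            _active[key] = holder;
        }
        holder._refCount++;
        return holder;
    }

    // Only the last release actually closes the underlying resource.
    public void Dispose()
    {
        if (--_refCount == 0)
        {
            _active.Remove(_key);
            Resource.Dispose();
        }
    }
}
```

Because the nested method reuses the outer method's connection, only one connection takes part in the TransactionScope. In the scenario described in the warning, that avoids promotion to the DTC.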

The data portal allows the developer to specify which transactional technology to use for each of a business object's data access methods. The TransactionalDataPortal and ServicedDataPortal classes in Figure 15-2 wrap the client's call in a TransactionScope or COM+ transactional context as requested.

The Csla.Server.DataPortal object uses the Transactional attribute to determine what type of transactional approach should be used for each call by the client. Ultimately, all calls end up being handled by SimpleDataPortal or FactoryDataPortal, which route the call to an appropriate object. The real question is whether that object will run within a preexisting transactional context or not.

The Transactional attribute is applied to the data access methods on the business object or factory object. The code in Csla.Server.DataPortal looks at the object's data access method that will ultimately be invoked by SimpleDataPortal or FactoryDataPortal, and it finds the value of the Transactional attribute (if any). Table 15-4 lists the options for this attribute.

Table 15.4. Transactional Options Supported by the Data Portal

| Attribute | Result |
|---|---|
| None | The business object does not run within a preexisting transactional context and so must implement its own transactions using stored procedures or ADO.NET. |
| | Same as "None" in the previous entry. |
| | The business object runs within a COM+ distributed transactional context. |
| | The business object runs within a System.Transactions TransactionScope transactional context. |
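The attribute lookup can be sketched with reflection. The sketch shows how a router might read the attribute from the data access method it is about to call, defaulting to manual transactions when no attribute is present. TxMode, TxAttribute, TransactionSelector, and InvoiceEdit are hypothetical stand-ins for the real attribute and its options.

```csharp
using System;
using System.Reflection;

// Hypothetical mirror of the options in Table 15-4.
public enum TxMode { Manual, EnterpriseServices, TransactionScope }

[AttributeUsage(AttributeTargets.Method)]
public sealed class TxAttribute : Attribute
{
    public TxAttribute(TxMode mode) { Mode = mode; }
    public TxMode Mode { get; }
}

public static class TransactionSelector
{
    // Inspects the data access method that will eventually run and decides
    // which wrapper to use; no attribute means Manual (no preexisting transaction).
    public static TxMode ModeFor(Type objectType, string methodName)
    {
        MethodInfo method = objectType.GetMethod(methodName,
            BindingFlags.Instance | BindingFlags.Public | BindingFlags.NonPublic);
        if (method == null)
            throw new MissingMethodException(objectType.Name, methodName);
        TxAttribute attribute = method.GetCustomAttribute<TxAttribute>();
        return attribute?.Mode ?? TxMode.Manual;
    }
}

// Hypothetical business class with one transactional method.
public class InvoiceEdit
{
    [Tx(TxMode.TransactionScope)]
    private void DataPortal_Update() { }

    private void DataPortal_Fetch() { }
}
```

A router would then wrap the call in a TransactionScope, a COM+ context, or nothing at all, based on the returned value.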

By extending the message router concept to add transactional support, the data portal makes it easy for a business developer to leverage either Enterprise Services or System.Transactions as needed. At the same time, the complexity of both technologies is reduced by abstracting them within the data portal. If neither technology is appropriate for your needs, you can always choose to not use the Transactional attribute, and then you can manage the transactions yourself in your data access code.

A key goal for the data portal is to provide a consistent environment for the business objects. At a minimum, this means that both client and server should run under the same user identity (impersonation) and the same culture (localization). The business developer should be able to pass other arbitrary information between client and server as well.

In addition to context information, exception data from the server should flow back to the client with full fidelity. This is important both for debugging and at runtime. The UI often needs to know the specifics about any server-side exceptions in order to properly notify the user about what happened and then to take appropriate steps.

Figure 15-3 shows the objects used to flow data from the client to the server and back again to the client.

The arrows pointing off the left side of the diagram indicate communication with the calling code—typically the business object's factory methods. A business object calls Csla.DataPortal to invoke one of the data portal operations. Csla.DataPortal calls Csla.Server.DataPortal (using the channel adapter classes not shown here), passing a DataPortalContext object along with the actual client request.

The DataPortalContext object contains several types of context data, as listed in Table 15-5.

Table 15.5. Context Data Contained Within DataPortalContext

| Context Data | Description |
|---|---|
| GlobalContext | Collection of context data that flows from client to server and then from server back to client; changes on either end are carried across the network |
| ClientContext | Collection of context data that flows from client to server; changes on the server are not carried back to the client |
| | Client's IPrincipal object, which flows from the client to the server when using custom authentication |
| | A flag indicating whether … |
| | Client thread's culture, which flows from the client to the server |
| | Client thread's UI culture, which flows from the client to the server |

The GlobalContext and ClientContext collections are exposed to both client and server code through static properties on the Csla.ApplicationContext class. All business object and UI code will use these ApplicationContext properties to access any context data. The LocalContext property of ApplicationContext is not transported through the data portal, because it is local to each individual machine.

When a call is made from the client to the server, the client's context data must flow to the server; the data portal does this by using the DataPortalContext object.

The Csla.Server.DataPortal object accepts the DataPortalContext object and uses its data to ensure that the server's context is set up properly before invoking the actual business object code. This means that by the time the business developer's code is running on the server, the server's IPrincipal, culture, and ApplicationContext are set to match those on the client.

Warning

The exception to this is when using Windows integrated (AD) security. In that case, you must configure the server technology (such as IIS) to use Windows impersonation, or the server will not impersonate the user identity from the client.

There are two possible outcomes of the server-side processing: either it succeeds or it throws an exception.

If the call to the business object succeeds, Csla.Server.DataPortal returns a DataPortalResult object back to Csla.DataPortal on the client. The DataPortalResult object contains the information listed in Table 15-6.

Csla.DataPortal puts the GlobalContext data from DataPortalResult into the client's Csla.ApplicationContext, thus ensuring that any changes to that collection on the server are reflected on the client. It then returns the ReturnObject value as the result of the call itself.

Table 15.6. Context Data Contained Within DataPortalResult

| Context Data | Description |
|---|---|
| GlobalContext | Collection of context data that flows from client to server and then from server back to client; changes on either end are carried across the network |
| ReturnObject | The business object being returned from the server to the client as a result of the data portal operation |
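The different directions of GlobalContext and ClientContext in Tables 15-5 and 15-6 can be modeled in a few lines. The types below (ClientSideContext, OutboundContext, InboundResult, and ContextFlow) are hypothetical simplifications in which dictionary copies stand in for serialization. The captured culture name marks where a real server would restore the client's culture.

```csharp
using System;
using System.Collections.Generic;
using System.Globalization;

// Hypothetical client-side context stores.
public sealed class ClientSideContext
{
    public Dictionary<string, object> ClientContext { get; } = new Dictionary<string, object>();
    public Dictionary<string, object> GlobalContext { get; } = new Dictionary<string, object>();
}

// What travels to the server (compare Table 15-5).
public sealed class OutboundContext
{
    public Dictionary<string, object> ClientContext { get; set; }
    public Dictionary<string, object> GlobalContext { get; set; }
    public string CultureName { get; set; } // a server would set its culture from this
}

// What travels back after a successful call (compare Table 15-6).
public sealed class InboundResult
{
    public Dictionary<string, object> GlobalContext { get; set; }
    public object ReturnObject { get; set; }
}

public static class ContextFlow
{
    public static object Call(ClientSideContext client, Func<OutboundContext, object> serverWork)
    {
        // Copies simulate serialization: the server never touches the client's dictionaries.
        var outbound = new OutboundContext
        {
            ClientContext = new Dictionary<string, object>(client.ClientContext),
            GlobalContext = new Dictionary<string, object>(client.GlobalContext),
            CultureName = CultureInfo.CurrentCulture.Name
        };

        // "Server" side: run the business code, then send back only GlobalContext.
        object result = serverWork(outbound);
        var inbound = new InboundResult
        {
            GlobalContext = new Dictionary<string, object>(outbound.GlobalContext),
            ReturnObject = result
        };

        // Client side: GlobalContext changes flow back; ClientContext changes do not.
        client.GlobalContext.Clear();
        foreach (var pair in inbound.GlobalContext)
            client.GlobalContext[pair.Key] = pair.Value;
        return inbound.ReturnObject;
    }
}
```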

You may use the bidirectional transfer of GlobalContext data to build a consolidated list of debugging or logging information as a call travels from the client to the server and back again to the client.

If an exception occurs on the server, either within the data portal itself or, more likely, within the business object's code, that exception must be returned to the client. Either the business object or the UI on the client can then use the exception information to deal with the exception in an appropriate manner.

In some cases, it can be useful to know the exact state of the business object graph on the server when the exception occurred. To this end, the object graph is also returned in the case of an exception. Keep in mind that it is returned as it was at the time of the exception, so the objects are often in an indeterminate state.

If an exception occurs on the server, Csla.Server.DataPortal catches the exception and wraps it as an InnerException within a Csla.Server.DataPortalException object. This DataPortalException object contains the information listed in Table 15-7.

Table 15.7. Context Data Contained Within Csla.Server.DataPortalException

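| Context Data | Description |
|---|---|
| InnerException | The actual server-side exception (which may itself have inner exceptions) |
| | The stack trace information for the server-side exception |
| | An object carrying the GlobalContext and the business object graph as they were at the time of the exception |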

Again, Csla.DataPortal uses the information in the exception object to restore the ApplicationContext object's GlobalContext. Then it throws a Csla.DataPortalException, which is initialized with the data from the server.

The Csla.DataPortalException object is designed for use by business object or UI code. It provides access to the business object as it was on the server at the time of the exception. It also overrides the StackTrace property to append the server-side stack trace to the client-side stack trace, so the result shows the entire stack trace from where the exception occurred on the server all the way back to the client code.

Note

Csla.DataPortal always throws a Csla.DataPortalException in case of failure. You must use either its InnerException or BusinessException properties, or the GetBaseException() method to retrieve the original exception that occurred.
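The wrapping behavior can be sketched with a hypothetical PortalCallException and PortalClient; these are not the real Csla.DataPortalException. Every failure surfaces as one exception type that carries the object graph and prepends the server stack trace to the client's. GetBaseException(), a standard System.Exception method, still reaches the original error.

```csharp
using System;

// Hypothetical stand-in for the client-side exception the data portal throws.
public sealed class PortalCallException : Exception
{
    private readonly string _serverStackTrace;

    public PortalCallException(string message, Exception serverException, object businessObject)
        : base(message, serverException)
    {
        BusinessObject = businessObject;
        _serverStackTrace = serverException.StackTrace;
    }

    // The object graph as it was when the failure happened (often in an indeterminate state).
    public object BusinessObject { get; }

    // Server-side frames first, then the client-side frames.
    public override string StackTrace =>
        _serverStackTrace + Environment.NewLine + base.StackTrace;
}

public static class PortalClient
{
    public static T Invoke<T>(Func<T> serverCall, object graph)
    {
        try
        {
            return serverCall();
        }
        catch (Exception ex)
        {
            // Every failure surfaces as the same type; the original is kept as InnerException.
            throw new PortalCallException("Data portal operation failed: " + ex.Message, ex, graph);
        }
    }
}
```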

In addition to Csla.DataPortal and Csla.Server.DataPortal, the types in Table 15-8 are required to implement the context behaviors discussed previously.

Table 15.8. Types Required to Implement Context Passing and Location Transparency

| Type | Namespace | Description |
|---|---|---|
| ApplicationContext | Csla | Provides access to the GlobalContext, ClientContext, and LocalContext collections |
| DataPortalException | Csla | Exception thrown by the data portal in case of any server-side exceptions; the server-side exception is available as its InnerException |
| DataPortalContext | | Transfers context data from the client to the server on every data portal operation |
| DataPortalResult | | Transfers context and result data from the server to the client on every successful data portal operation |
| DataPortalException | Csla.Server | Transfers context and exception data from the server to the client on every unsuccessful data portal operation |

This infrastructure ensures that business code running on the server will share the same key context data as the client. It also ensures that the client's IPrincipal object is transferred to the server when the application is using custom authentication. This is important information, not only for basic impersonation, but also for enabling authorization on the server.

The data portal ensures that the application server will use the same principal object as the client when using custom authentication. And when using Windows AD authentication, you can configure your application server to use impersonation, so it runs under the same Windows identity as the client code.

Either way, because the client principal is available on the server, all the authorization features described in Chapter 12 are available to the business developer on both the client and the server. This means the per-property and object-level authorization rules associated with business objects are enforced whether the code is running on the client or server.

Note

You should be aware that Windows integrated security has limits on how far it can impersonate a user. Normally, impersonation can only occur across one network hop, which would be from a client workstation to the application server. Using advanced Windows network configuration options, it may be possible to extend impersonation beyond one hop. Advanced Windows network configuration is outside the scope of this book.

However, some applications may require a higher-level authorization check to decide whether to allow a client request to be processed on the server at all. This check would occur before any attempt is made to invoke the data access methods in the business or factory object.

The data portal supports this concept by allowing a business developer to create an object that implements the IAuthorizeDataPortal interface from the Csla.Server namespace. If you want to use this feature, the application server's config file needs to include an entry in the appSettings element, specifying the assembly-qualified name of this class, for example:

```xml
<add key="CslaAuthorizationProvider"
     value="NamespaceName.TypeName, AssemblyName" />
```

The IAuthorizeDataPortal interface requires that the class implement a single method: Authorize(). If the client call should not be allowed, this method should throw an exception; otherwise, the client call will be processed normally.

Note

The data portal creates exactly one instance of the specified type. Because most application servers are multithreaded, you must ensure that the code you write in the Authorize() method is thread-safe.

This Authorize() method is invoked after the data portal has restored the client's principal (if using custom authentication), LocalContext, and GlobalContext onto the server's thread. The method is passed a request object containing the values listed in Table 15-9.

Table 15.9. Values Provided to the Authorize Method

| Property | Description |
|---|---|
| | Type of business object to be affected by the client request |
| | Criteria object or business object provided by the client |
| | Data portal operation requested by the client; member of the |

Your authorization class would look like this:

```csharp
public class CustomAuthorizer : Csla.Server.IAuthorizeDataPortal
{
  public void Authorize(AuthorizationRequest clientRequest)
  {
    // perform authorization here
    // throw exception to stop processing
  }
}
```

This technique allows high-level control over client requests. If a request is allowed to continue processing, all the normal authorization behaviors described in Chapter 12 continue to apply. This is an optional feature, and by default, all data portal requests are allowed.
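To make the thread-safety requirement concrete, here is a minimal sketch of an authorizer that rejects update and delete requests for a configured set of types. It does not use the real CSLA types: IAuthorizeSketch, AuthorizationRequestSketch, and PortalOperationSketch are hypothetical stand-ins so the example compiles on its own, and the "read-only types" rule is only an illustration. Because a single instance serves every request, the sketch keeps nothing but read-only state built in the constructor.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Security;

// Hypothetical stand-ins for the CSLA request types (not the real API).
public enum PortalOperationSketch { Create, Fetch, Update, Delete, Execute }

public class AuthorizationRequestSketch
{
  public AuthorizationRequestSketch(Type objectType, object requestObject,
                                    PortalOperationSketch operation)
  {
    ObjectType = objectType;
    RequestObject = requestObject;
    Operation = operation;
  }

  public Type ObjectType { get; }
  public object RequestObject { get; }
  public PortalOperationSketch Operation { get; }
}

public interface IAuthorizeSketch
{
  void Authorize(AuthorizationRequestSketch clientRequest);
}

// One instance handles every request, so it holds no per-request state.
public class ReadOnlyTypesAuthorizer : IAuthorizeSketch
{
  // Filled once in the constructor and never modified afterward,
  // so concurrent reads from many server threads are safe.
  private readonly Type[] _readOnlyTypes;

  public ReadOnlyTypesAuthorizer(IEnumerable<Type> readOnlyTypes)
  {
    _readOnlyTypes = readOnlyTypes.ToArray();
  }

  public void Authorize(AuthorizationRequestSketch clientRequest)
  {
    bool isWrite = clientRequest.Operation == PortalOperationSketch.Update
                || clientRequest.Operation == PortalOperationSketch.Delete;

    // Throwing stops the request before any data access method runs.
    if (isWrite && Array.IndexOf(_readOnlyTypes, clientRequest.ObjectType) >= 0)
      throw new SecurityException(
        "Changes are not allowed for " + clientRequest.ObjectType.Name);

    // Returning normally lets the request continue.
  }
}
```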

Thus far, I've been discussing the data portal in terms of synchronous behaviors. Each call to a static method of the Csla.DataPortal class is a synchronous operation.

However, the DataPortal<T> class provides asynchronous versions of the static methods on the non-generic DataPortal class. When performing asynchronous operations, it is necessary to have a consistent object through which the completion callback can arrive. This means that you must create an instance of DataPortal<T> to make asynchronous calls, and each instance can only have one outstanding asynchronous call running at a time.

Using DataPortal<T> is relatively straightforward.

```csharp
var dp = new DataPortal<CustomerEdit>();
dp.BeginFetch(new SingleCriteria<CustomerEdit, int>(123),
  (s, e) =>
  {
    // process result here
  });
```

You can use a lambda (as shown), an anonymous delegate, or a delegate reference to another method to implement the callback. Or you can set up an event handler for the FetchCompleted event on the DataPortal<T> object before starting the asynchronous call. The important thing is that the developer remembers that the callback will occur when the asynchronous operation is complete, and that BeginFetch() is a nonblocking call.

The DataPortal<T> class is really just a wrapper around the Csla.DataPortal class, designed to make normal synchronous data portal calls but from a background thread. To create the background threads, the DataPortal<T> methods use the standard .NET BackgroundWorker component.

By using the BackgroundWorker, the data portal gains some important benefits. All the background operations are executed on threads from the .NET thread pool, and all that work is abstracted by the BackgroundWorker component. More importantly, the BackgroundWorker component automatically marshals its asynchronous callback events onto the UI thread when running in WPF or Windows Forms.

This means that the event indicating that the background task is complete is running automatically on the UI thread in those environments, which dramatically simplifies related UI code. Even better, this reduces bugs that are commonly encountered where the UI developer forgets to marshal to the UI thread before interacting with visual controls.
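The wrapper idea can be sketched with a plain BackgroundWorker. This is not the DataPortal<T> source: AsyncPortalSketch and FetchCompletedSketchArgs are hypothetical names, and the synchronous "fetch" is any function passed in. The sketch shows the synchronous call running in DoWork, the completion event being raised through the SynchronizationContext captured by RunWorkerAsync (the UI thread in WPF or Windows Forms), and BackgroundWorker's own busy check enforcing one outstanding call per instance.

```csharp
using System;
using System.ComponentModel;

// Result of a hypothetical asynchronous fetch (not the CSLA event args type).
public class FetchCompletedSketchArgs<T> : EventArgs
{
  public FetchCompletedSketchArgs(T obj, Exception error) { Object = obj; Error = error; }
  public T Object { get; }
  public Exception Error { get; }
}

// Hypothetical sketch: runs a synchronous fetch on a BackgroundWorker thread.
public class AsyncPortalSketch<T>
{
  private readonly BackgroundWorker _worker = new BackgroundWorker();

  public event EventHandler<FetchCompletedSketchArgs<T>> FetchCompleted;

  public AsyncPortalSketch(Func<object, T> synchronousFetch)
  {
    // DoWork runs on a thread-pool thread.
    _worker.DoWork += (s, e) => e.Result = synchronousFetch(e.Argument);

    // RunWorkerCompleted is raised through the SynchronizationContext captured
    // when RunWorkerAsync was called (the UI thread in WPF or Windows Forms).
    _worker.RunWorkerCompleted += (s, e) =>
    {
      // Reading e.Result after a failure throws, so check e.Error first.
      var args = e.Error != null
        ? new FetchCompletedSketchArgs<T>(default(T), e.Error)
        : new FetchCompletedSketchArgs<T>((T)e.Result, null);
      FetchCompleted?.Invoke(this, args);
    };
  }

  // Nonblocking. BackgroundWorker throws InvalidOperationException if it is
  // still busy, so each instance allows only one outstanding call.
  public void BeginFetch(object criteria) => _worker.RunWorkerAsync(criteria);
}
```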

Prior to CSLA .NET 3.6, all data portal calls were routed to methods implemented in the business class itself. These methods are called the DataPortal_XYZ methods—for example, DataPortal_Create().

In CSLA .NET 3.6, the concept of an object factory has been introduced as an option a business developer can use instead of the traditional data portal behavior. In this case, the developer puts an ObjectFactory attribute on the business class, which instructs the data portal to use the specified object factory to handle all persistence operations related to the business object type—for instance:

```csharp
[ObjectFactory("MyProject.CustomerFactory, MyProject")]
public class CustomerEdit : BusinessBase<CustomerEdit>
{
  // business class members
}
```

This instructs the data portal to create an instance of a CustomerFactory class, using the string parameter as an assembly-qualified type name for the factory. The CustomerFactory object must implement methods to create, fetch, update, and delete CustomerEdit business objects. By default, these methods are named Create(), Fetch(), Update(), and Delete().

The factory object may choose to inherit from ObjectFactory in the Csla.Server namespace. The ObjectFactory class includes protected methods that make it easier to implement typical object factory behaviors. Here's the shell of an object factory:

```csharp
using Csla;
using Csla.Server;

public class CustomerFactory : ObjectFactory
{
  public object Create()
  {
    // create object (result) and load with defaults
    MarkNew(result);
    return result;
  }

  public object Fetch(SingleCriteria<CustomerEdit, int> criteria)
  {
    // create object (result) and load with data
    MarkOld(result);
    return result;
  }

  public object Update(object obj)
  {
    // insert/update/delete object and its child objects
    MarkOld(obj); // make sure to mark all child objects as old too
    return obj;
  }

  public void Delete(SingleCriteria<CustomerEdit, int> criteria)
  {
    // delete object data based on criteria
  }
}
```

The MarkNew() and MarkOld() methods manipulate the status of the business object, as discussed in Chapter 8. When using the object factory model, the object factory assumes all responsibility for creating and managing the business object and its state. This includes not only the root object, but all child objects as well.

While factory objects require more work to implement than the traditional data portal technique, they enable the use of some external data access technologies that can't be invoked from inside an already existing business object instance. For example, you may use a data access tool that creates business object instances directly. Obviously, you can't use such a technology from inside an already existing business object, if that technology will be creating the business object instance!
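As a rough illustration of the factory owning object state for the whole graph, the sketch below uses hypothetical TrackedObject, OrderSketch, LineItemSketch, and ObjectFactorySketch types rather than CSLA's ObjectFactory, plus a recursive MarkOldGraph() helper (also hypothetical) to show why marking only the root object is not enough.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical stand-ins; not CSLA types.
public abstract class TrackedObject
{
  public bool IsNew { get; internal set; } = true;
  public bool IsDirty { get; internal set; } = true;
  public List<TrackedObject> Children { get; } = new List<TrackedObject>();
}

public class OrderSketch : TrackedObject { public int Id { get; set; } }
public class LineItemSketch : TrackedObject { public string Sku { get; set; } }

public abstract class ObjectFactorySketch
{
  protected void MarkNew(TrackedObject obj)
  {
    obj.IsNew = true;
    obj.IsDirty = true;
  }

  // Marks the object and, recursively, every child as old and clean.
  protected void MarkOldGraph(TrackedObject obj)
  {
    obj.IsNew = false;
    obj.IsDirty = false;
    foreach (var child in obj.Children)
      MarkOldGraph(child);
  }
}

public class OrderFactorySketch : ObjectFactorySketch
{
  public OrderSketch Create()
  {
    var order = new OrderSketch();   // default values would be loaded here
    MarkNew(order);
    return order;
  }

  public OrderSketch Fetch(int id)
  {
    // The factory creates the instances itself, which is what allows a data
    // access tool that materializes objects directly to be used here.
    var order = new OrderSketch { Id = id };
    order.Children.Add(new LineItemSketch { Sku = "A-100" });
    MarkOldGraph(order);
    return order;
  }
}
```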

At this point, you should have a good understanding of the various areas of functionality provided by the data portal, and the various classes and types used to implement that functionality. The rest of the chapter will walk through those classes. As with the previous chapters, not all code is shown in this chapter, so you'll want to get the code download for the book to follow along. You can download the code from the Source Code/Download area of the Apress website (www.apress.com/book/view/1430210192) or from www.lhotka.net/cslanet/download.aspx.

In order to support persistence—the ability to save and restore from the database—objects need to implement methods that the UI can call. They also need to implement methods that can be called by the data portal on the server.

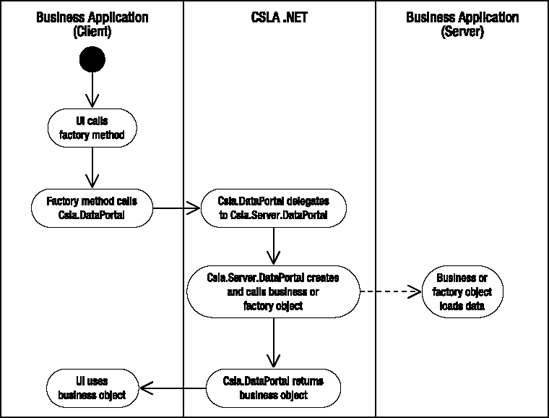

Figure 15-4 shows the basic process flow when the UI code wants to get a new business object or load a business object from the database.

Following the class-in-charge model from Chapter 2, you can see that the UI code calls a factory method on the business class. The factory method then calls the appropriate method on the Csla.DataPortal class to create or retrieve the business object. The Csla.Server.DataPortal object then creates the object and invokes the appropriate data access method (DataPortal_Create() or DataPortal_Fetch()). The populated business object is returned to the UI, which the application can then use as needed.

Immediate deletion follows the same basic process, with the exception that no business object is returned to the UI as a result.

The BusinessBase and BusinessListBase classes implement a Save() method to make the update process work, as illustrated by Figure 15-5. The process is almost identical to creating or loading an object, except that the UI starts off by calling the Save() method on the object to be saved, rather than invoking a factory method on the business class.

Chapter 2 discussed the class-in-charge model and factory methods. When the UI needs to create or retrieve a business object, it will call a factory method that abstracts that behavior. You can implement factory methods in any class you choose as either instance or static methods. I prefer to implement them as static methods in the business class for the object they create or retrieve, as I think it makes them easier to find. Some people prefer to create a separate factory class with instance methods.

This means a CustomerEdit class will include static factory methods such as GetCustomer() and NewCustomer(), both of which return a CustomerEdit object as a result. It may also implement a DeleteCustomer() method, which would have no return value. The implementation of these methods would typically look like this:

```csharp
public static CustomerEdit NewCustomer()
{
  return DataPortal.Create<CustomerEdit>();
}

public static CustomerEdit GetCustomer(int id)
{
  return DataPortal.Fetch<CustomerEdit>(
    new SingleCriteria<CustomerEdit, int>(id));
}

public static void DeleteCustomer(int id)
{
  DataPortal.Delete(new SingleCriteria<CustomerEdit, int>(id));
}
```

These are typical examples of factory methods implemented for most root objects.

Although I won't use the following technique in the rest of the book, you can create a factory class with instance methods if you prefer:

```csharp
public class CustomerFactory
{
  public virtual CustomerEdit NewCustomer()
  {
    return DataPortal.Create<CustomerEdit>();
  }

  public virtual CustomerEdit GetCustomer(int id)
  {
    return DataPortal.Fetch<CustomerEdit>(
      new SingleCriteria<CustomerEdit, int>(id));
  }

  public virtual void DeleteCustomer(int id)
  {
    DataPortal.Delete(new SingleCriteria<CustomerEdit, int>(id));
  }
}
```

The methods are virtual, because the primary motivation for using a factory class like this is to allow subclassing of the factory to customize the behavior.
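One customization that virtual factory methods allow is substituting canned data in tests. The sketch below is hypothetical throughout: CustomerRecord stands in for CustomerEdit, the base factory fakes the data portal call, and CustomerScreenSketch represents calling code that depends only on the base factory type.

```csharp
using System;

// Hypothetical stand-in for CustomerEdit.
public class CustomerRecord
{
  public int Id { get; set; }
  public string Source { get; set; }
}

// Base factory with virtual methods; a real one would call DataPortal.
public class CustomerFactorySketch
{
  public virtual CustomerRecord GetCustomer(int id) =>
    new CustomerRecord { Id = id, Source = "data portal" };
}

// A subclass customizes one operation, here returning canned data.
public class StubCustomerFactory : CustomerFactorySketch
{
  public override CustomerRecord GetCustomer(int id) =>
    new CustomerRecord { Id = id, Source = "stub" };
}

// Code written against the base type uses whichever subclass it is given.
public class CustomerScreenSketch
{
  private readonly CustomerFactorySketch _factory;
  public CustomerScreenSketch(CustomerFactorySketch factory) { _factory = factory; }
  public string Describe(int id) => $"{id} from {_factory.GetCustomer(id).Source}";
}
```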

I'll be using static factory methods throughout the rest of the book.

The factory methods cover creating, retrieving, and deleting objects. This leaves inserting and updating (and deferred deletion). In both of these cases, the object already exists in memory, so the Save() and BeginSave() methods are instance methods on any editable object.

The Save() method allows synchronous save operations and is the simplest to use.

```csharp
_customer = _customer.Save();
```

The BeginSave() method allows asynchronous save operations and is harder to use, because your code must work in an asynchronous manner, including providing a callback handler that is invoked when the operation is complete.

```csharp
// disable UI elements that can't be used during save
_customer.BeginSave(SaveComplete);

// ...

private void SaveComplete(object sender, SavedEventArgs e)
{
  if (e.Error != null)
  {
    // handle exception here
  }
  else
  {
    // NewObject is typed as object, so cast it to the business type
    _customer = (CustomerEdit)e.NewObject;
    // update the UI or other code to use the result
    // re-enable any disabled UI elements
  }
}
```

Notice that a reference to the SaveComplete() method is passed as a parameter to BeginSave(), so SaveComplete() is invoked when the asynchronous operation completes. In WPF and Windows Forms, this callback occurs on the UI thread.

Both Save() and BeginSave() ultimately do the same thing in that they insert, update, or delete the editable root business object.

A single Save() method can handle both inserting and updating an object's data because all editable objects have an IsNew property. Recall that a "new" object is one whose primary key value doesn't exist in the database. So if IsNew is true, Save() causes an insert operation; otherwise, Save() causes an update operation.

BusinessBase and BusinessListBase are the base classes for all editable business objects, and both of these base classes implement the Save() and BeginSave() methods.

Synchronous Save Methods

Here are the two overloads for Save() in BusinessBase:

```csharp
public virtual T Save()
{
  T result;
  if (this.IsChild)
    throw new NotSupportedException(Resources.NoSaveChildException);
  if (EditLevel > 0)
    throw new Validation.ValidationException(Resources.NoSaveEditingException);
  if (!IsValid && !IsDeleted)
    throw new Validation.ValidationException(Resources.NoSaveInvalidException);
  if (IsDirty)
    result = (T)DataPortal.Update(this);
  else
    result = (T)this;
  OnSaved(result, null);
  return result;
}

public T Save(bool forceUpdate)
{
  if (forceUpdate && IsNew)
  {
    // mark the object as old - which makes it
    // not dirty
    MarkOld();
    // now mark the object as dirty so it can save
    MarkDirty(true);
  }
  return this.Save();
}
```

The first Save() method is the primary one that does the real work. It implements a set of common rules that make sense for most objects. Specifically, it does the following:

Ensures that the object is not a child (since child objects must be saved as part of their parent)

Makes sure that the object isn't currently being edited (a check primarily intended to assist with debugging)

Checks to see if the object is valid; invalid objects can't be saved

Checks to make sure the object is dirty; there's no sense saving unchanged data into the database

Notice that the method is virtual, so if a business developer needs a different set of rules for an object, it is possible to override this method and implement something else.

The second Save() method exists to support stateless web applications and the scenario where business objects are used to implement XML services. It allows a service author to create a new instance of the object, load it with data, and then force the object to do an update (rather than an insert) operation. The reason for this is that when creating a stateless web page or service that updates data, the web page or application calling the server typically passes all the data needed to update the database; there's no need to retrieve the existing data just to overwrite it. This optional overload of Save() enables those scenarios.

This is done by first calling MarkOld() to set IsNew to false, and then calling MarkDirty() to set IsDirty to true.
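The decision logic in the two overloads can be traced with a small, self-contained sketch. EditableSketch is a hypothetical class, not BusinessBase: it omits the child, edit-level, and deleted-object checks, and it records "insert" or "update" in a LastOperation property instead of calling the data portal.

```csharp
using System;

// Hypothetical sketch of the IsNew/IsDirty decisions; not BusinessBase itself.
public class EditableSketch
{
  public bool IsNew { get; private set; } = true;
  public bool IsDirty { get; private set; } = true;
  public bool IsValid { get; set; } = true;
  public string LastOperation { get; private set; } = "none";

  public void MarkOld() { IsNew = false; IsDirty = false; }
  public void MarkDirty() { IsDirty = true; }

  public EditableSketch Save()
  {
    if (!IsValid)
      throw new InvalidOperationException("Invalid objects can't be saved");
    if (!IsDirty)
      return this;                            // unchanged: nothing to persist

    // IsNew decides between insert and update
    LastOperation = IsNew ? "insert" : "update";
    MarkOld();                                // saved data is now old and clean
    return this;
  }

  public EditableSketch Save(bool forceUpdate)
  {
    if (forceUpdate && IsNew)
    {
      MarkOld();     // IsNew = false (this also clears IsDirty)
      MarkDirty();   // IsDirty = true, so Save() performs an update
    }
    return Save();
  }
}
```

A freshly created object saved with Save() records an insert, while the same object saved with Save(true) records an update, which is the stateless-service scenario described above.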

In either case, it is the DataPortal.Update() call that ultimately triggers the data portal infrastructure to move the object to the application server so it can interact with the database.

It is important to notice that the Save() method returns an instance of the business object. Recall that .NET doesn't actually move objects across the network; rather, it makes copies of the objects. The DataPortal.Update() call causes .NET to copy this object to the server so the copy can update itself into the database. That process could change the state of the object (especially if you are using primary keys assigned by the database or timestamps for concurrency). The resulting object is then copied back to the client and returned as a result of the Save() method.

Note

It is critical that the UI updates all its references to use the new object returned by Save(). Failure to do this means that the UI will be displaying and editing old data from the old version of the object. Do not call Save() like this:

```csharp
_customer.Save();
```

Do call Save() like this:

```csharp
_customer = _customer.Save();
```

The same basic code can be found in BusinessListBase as well.
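Why the returned object matters can be shown by simulating the copy that happens when an object crosses the network. In this sketch, System.Text.Json serialization stands in for whatever serializer the real channel uses, and CustomerCopySketch and CopyingPortalSketch are hypothetical names; the "server" assigns an identity key to its copy, and only the returned copy sees it.

```csharp
using System;
using System.Text.Json;

public class CustomerCopySketch
{
  public int Id { get; set; }        // assigned by the "database" on insert
  public string Name { get; set; }
}

// Hypothetical stand-in for DataPortal.Update(): the object is serialized,
// the copy is changed on the "server", and a further copy is returned.
public static class CopyingPortalSketch
{
  private static int _nextId = 100;

  public static CustomerCopySketch Update(CustomerCopySketch obj)
  {
    string toServer = JsonSerializer.Serialize(obj);
    var serverCopy = JsonSerializer.Deserialize<CustomerCopySketch>(toServer);
    serverCopy.Id = _nextId++;       // e.g. an identity key generated on insert

    string toClient = JsonSerializer.Serialize(serverCopy);
    return JsonSerializer.Deserialize<CustomerCopySketch>(toClient);
  }
}
```

After the call, the original reference still has an Id of 0 while the returned object carries the new key, which is exactly the stale-reference problem the note above warns about.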

Asynchronous BeginSave Methods

There are four overloads of BeginSave(), because it can optionally accept the forceUpdate parameter like Save(), and it can also optionally accept a delegate reference to a method that will be invoked when the asynchronous operation is complete.

All the overloads invoke the following method:

```csharp
public virtual void BeginSave(EventHandler<SavedEventArgs> handler)
{
  if (this.IsChild)
  {
    NotSupportedException error =
      new NotSupportedException(Resources.NoSaveChildException);
    OnSaved(null, error);
    if (handler != null)
      handler(this, new SavedEventArgs(null, error));
  }
  else if (EditLevel > 0)
  {
    Validation.ValidationException error =
      new Validation.ValidationException(Resources.NoSaveEditingException);
    OnSaved(null, error);
    if (handler != null)
      handler(this, new SavedEventArgs(null, error));
  }
  else if (!IsValid && !IsDeleted)
  {
    // invalid object: report the same message Save() uses
    Validation.ValidationException error =
      new Validation.ValidationException(Resources.NoSaveInvalidException);
    OnSaved(null, error);
    if (handler != null)
      handler(this, new SavedEventArgs(null, error));
  }
  else
  {
    if (IsDirty)
    {
      DataPortal.BeginUpdate<T>(this, (o, e) =>
      {
        T result = e.Object;
        OnSaved(result, e.Error);
        if (handler != null)
          handler(result, new SavedEventArgs(result, e.Error));
      });
    }
    else
    {
      OnSaved((T)this, null);
      if (handler != null)
        handler(this, new SavedEventArgs(this, null));
    }
  }
}
```

Like the Save() method, BeginSave() performs a series of checks to see if the object should really be saved. However, rather than throwing exceptions immediately, the exceptions are routed to the callback handler and to any code that handles the business object's Saved event.

I chose to do this because it allows the business developer to put all her exception-handling code into the callback handler. If BeginSave() actually threw exceptions, the business or UI developer would need to handle exceptions when calling BeginSave() and also in the callback handler, because any asynchronous exceptions would always be handled in the callback handler. The approach taken by this implementation allows the business or UI developer to do all work in one location.
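The pattern of reporting precondition failures through the callback, rather than throwing them to the caller, can be reduced to a few lines. AsyncSaveSketch and SaveResultSketchArgs are hypothetical names, only the validity check is modeled, and the "asynchronous" path completes immediately so the example stays self-contained.

```csharp
using System;

public class SaveResultSketchArgs : EventArgs
{
  public SaveResultSketchArgs(object newObject, Exception error)
  {
    NewObject = newObject;
    Error = error;
  }
  public object NewObject { get; }
  public Exception Error { get; }
}

// Hypothetical sketch: precondition failures go to the callback, not the caller.
public class AsyncSaveSketch
{
  public bool IsValid { get; set; } = true;

  public void BeginSave(EventHandler<SaveResultSketchArgs> handler)
  {
    if (!IsValid)
    {
      // Report instead of throw, so the caller handles every failure
      // in the same place it handles asynchronous failures.
      handler?.Invoke(this, new SaveResultSketchArgs(null,
        new InvalidOperationException("Invalid objects can't be saved")));
      return;
    }

    // A real implementation would start the asynchronous update here;
    // this sketch completes immediately with the same object.
    handler?.Invoke(this, new SaveResultSketchArgs(this, null));
  }
}
```

With this shape, the caller never needs a try/catch around BeginSave(); checking e.Error in the handler covers both cases.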

The overall structure and process of BeginSave() is the same as for Save(), except that the BeginUpdate() method is called on the data portal instead of Update(). A lambda expression is used to handle the asynchronous callback from the data portal method.

```csharp
DataPortal.BeginUpdate<T>(this, (o, e) =>
{
  T result = e.Object;
  OnSaved(result, e.Error);
  if (handler != null)
    handler(result, new SavedEventArgs(result, e.Error));
});
```

When the asynchronous data portal method completes, the OnSaved() method is called to raise the Saved event on the business object, and the callback handler is also invoked if it isn't null.

Editable objects may contain child objects—either editable child objects or editable child collections (which in turn contain editable child objects). If managed backing fields are used (see Chapter 7) to maintain the child object references, then the field manager can be used to simplify the process of saving the child objects.

The FieldDataManager class implements an UpdateChildren() method that can be called by an editable object's insert or update data access method. The UpdateChildren() method loops through all the managed backing fields and uses the data portal to update all child objects. Here's the code:

```csharp
public void UpdateChildren(params object[] parameters)
{
  foreach (var item in _fieldData)
  {
    if (item != null)
    {
      object obj = item.Value;
      if (obj is IEditableBusinessObject || obj is IEditableCollection)
        Csla.DataPortal.UpdateChild(obj, parameters);
    }
  }
}
```

The data portal's UpdateChild() method is invoked on each child object, which causes the data portal to invoke the appropriate Child_XYZ method on each child object. I'll discuss this feature of the data portal later in this chapter.

Editable collections contain editable child objects. Updating child objects in an editable collection must be done in a specific manner, because the collection contains a list of deleted items as well as a list of active (nondeleted) items.

The deleted item list must be updated first, so those items are deleted before any active items can be updated. This is because it is quite possible that an active item will replace one of the deleted items, so updating the active list first could result in primary key collisions in the database.

Rather than having the business developer write the code to update all the child objects in every collection, BusinessListBase implements a Child_Update() method that does the work. Here's that method:

```csharp
[EditorBrowsable(EditorBrowsableState.Advanced)]
protected virtual void Child_Update(params object[] parameters)
{
  var oldRLCE = this.RaiseListChangedEvents;
  this.RaiseListChangedEvents = false;
  try
  {
    foreach (var child in DeletedList)
      DataPortal.UpdateChild(child, parameters);
    DeletedList.Clear();

    foreach (var child in this)
      DataPortal.UpdateChild(child, parameters);
  }
  finally
  {
    this.RaiseListChangedEvents = oldRLCE;
  }
}
```

The RaiseListChangedEvents property is set to false to prevent the collection from raising events during the update process. This helps avoid both performance issues and UI "flickering" as the update occurs (otherwise, these events may cause data binding to refresh the UI as each item is updated).

Then the items in DeletedList are updated, which really means they are all deleted. This is handled by the data portal's UpdateChild() method, which invokes each child object's Child_DeleteSelf() method. Because they've been deleted, DeletedList is then cleared so it reflects the state of the underlying data store.

Now the active items in the collection are updated, which means they are inserted or updated based on the state of each child object. The data portal's UpdateChild() method handles those details, calling the appropriate Child_Insert() or Child_Update() method.

Finally, the RaiseListChangedEvents property is restored to its previous value, so the collection can continue to be used normally.
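The ordering argument and the try/finally restore can be checked with an in-memory model. Everything here is hypothetical: FakeTableSketch enforces a unique key in place of a database, ItemListSketch derives from the standard BindingList<T> to get RaiseListChangedEvents, and updates of existing items are omitted so only deletes and inserts are shown.

```csharp
using System;
using System.Collections.Generic;
using System.ComponentModel;

public class ItemSketch
{
  public int Key { get; set; }
  public bool IsNew { get; set; } = true;
}

// Hypothetical in-memory table that enforces a unique primary key.
public class FakeTableSketch
{
  private readonly HashSet<int> _keys = new HashSet<int>();

  public void Insert(int key)
  {
    if (!_keys.Add(key))
      throw new InvalidOperationException("Duplicate primary key " + key);
  }

  public void Delete(int key) => _keys.Remove(key);
}

public class ItemListSketch : BindingList<ItemSketch>
{
  public List<ItemSketch> DeletedList { get; } = new List<ItemSketch>();

  // Removed items are remembered so their rows can be deleted later.
  protected override void RemoveItem(int index)
  {
    DeletedList.Add(this[index]);
    base.RemoveItem(index);
  }

  public void UpdateAll(FakeTableSketch table)
  {
    bool oldRaise = RaiseListChangedEvents;
    RaiseListChangedEvents = false;        // no data binding refreshes mid-update
    try
    {
      foreach (var child in DeletedList)   // deletes first: frees their keys
        table.Delete(child.Key);
      DeletedList.Clear();

      foreach (var child in this)          // then inserts (updates omitted)
      {
        if (child.IsNew)
        {
          table.Insert(child.Key);
          child.IsNew = false;
        }
      }
    }
    finally
    {
      RaiseListChangedEvents = oldRaise;   // restored even if an insert throws
    }
  }
}
```

If the two loops were swapped, the replacement item with key 1 would be inserted while the old row still existed, and Insert() would throw.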

A business developer may explicitly call Child_Update() in an editable root collection as I discussed earlier in this chapter. Normally, a business developer needs to write no code for an editable child collection, because the data portal will automatically call the Child_Update() method when the parent object calls FieldManager.UpdateChildren() to update its children (including the editable child collection).

This completes the features provided by the business object base classes that are required for the data portal to function. Before covering the data portal implementation in detail, I need to discuss how the data portal uses (and avoids) reflection.

The data portal dynamically invokes methods on business and factory objects. Invoking methods dynamically requires the use of reflection, and that can cause performance issues when used frequently. Since the data portal can be used to create, retrieve, update, and delete child objects, it is quite possible for hundreds of methods to be invoked to save just one object graph.

The .NET Framework supports the concept of dynamic method invocation, where reflection is used just once to create a dynamic delegate reference to a method. That delegate is used to actually invoke the method, resulting in performance nearly as good as a strongly typed call to the method.

Of course, that dynamic delegate must be stored somewhere, so we need to cache the delegates and retrieve them from the cache. The end result is that using dynamic delegates is still slower than strongly typed method calls, but it's faster than using reflection on each call.
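The "reflect once, cache a delegate, call many times" idea can be sketched with compiled expression trees. MethodCallerSketch is a hypothetical name and this is not the Csla.Reflection code, which may build its delegates differently. The cache key of type, method name, and argument count is a simplification: it picks the first overload with a matching parameter count.

```csharp
using System;
using System.Collections.Concurrent;
using System.Linq;
using System.Linq.Expressions;
using System.Reflection;

// Hypothetical sketch of delegate caching; not Csla.Reflection.MethodCaller.
public static class MethodCallerSketch
{
  // Lives as long as the AppDomain, so each delegate is built once.
  private static readonly ConcurrentDictionary<(Type, string, int), Func<object, object[], object>>
    _cache = new ConcurrentDictionary<(Type, string, int), Func<object, object[], object>>();

  public static object CallMethod(object target, string methodName, params object[] args)
  {
    var key = (target.GetType(), methodName, args.Length);
    var invoker = _cache.GetOrAdd(key, k => BuildInvoker(k.Item1, k.Item2, k.Item3));
    return invoker(target, args);
  }

  // Reflection runs only here; the compiled delegate is reused afterward.
  private static Func<object, object[], object> BuildInvoker(Type type, string name, int argCount)
  {
    MethodInfo method = type
      .GetMethods(BindingFlags.Instance | BindingFlags.Public | BindingFlags.NonPublic)
      .FirstOrDefault(m => m.Name == name && m.GetParameters().Length == argCount);
    if (method == null)
      throw new MissingMethodException(type.FullName, name);

    var targetParam = Expression.Parameter(typeof(object), "target");
    var argsParam = Expression.Parameter(typeof(object[]), "args");

    // Convert (or unbox) each array element to the declared parameter type.
    var callArgs = method.GetParameters().Select((p, i) =>
      Expression.Convert(Expression.ArrayIndex(argsParam, Expression.Constant(i)),
                         p.ParameterType));

    var call = Expression.Call(Expression.Convert(targetParam, type), method, callArgs);

    // Box the return value, or return null for void methods.
    Expression body = method.ReturnType == typeof(void)
      ? (Expression)Expression.Block(call, Expression.Constant(null))
      : Expression.Convert(call, typeof(object));

    return Expression.Lambda<Func<object, object[], object>>(body, targetParam, argsParam)
      .Compile();
  }
}
```

A wrapper in the spirit of LateBoundObject would simply hold the target and forward to CallMethod(), which is why such a class mainly improves readability.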

The Csla.Reflection namespace includes classes used by the data portal (and other parts of CSLA .NET) to dynamically invoke methods using dynamic delegates. Table 15-10 lists the types in that namespace.

Table 15.10. Types in the Csla.Reflection Namespace

| Type | Description |
|---|---|
| | Thrown when a method can't be invoked dynamically |
| | Maintains a reference to a dynamic method delegate, along with related metadata |
| | Creates a dynamic method delegate |
| | Provides a wrapper around any object to simplify the invocation of dynamic methods on that object |
| | Defines the key information for a dynamic method, so the DynamicMethodHandle can be stored and retrieved as necessary |
| | Provides an abstract API for use when dynamically calling methods on objects |

Creating and using dynamic method delegates is complex and is outside the scope of this book. You should realize, however, that the MethodCaller and LateBoundObject classes are designed as the public entry points to this functionality, and they are used by the data portal implementation.

The heart of the subsystem is the MethodCaller class. This class exposes the methods listed in Table 15-11; these methods enable the dynamic invocation of methods.

Table 15.11. Public Methods on MethodCaller

| Method | Description |
|---|---|
| | Creates an instance of an object |
| | Invokes a method by name, if that method exists on the target object |
| | Invokes a method by name, throwing an exception if that method doesn't exist |
| | Gets a |
| | Gets a |
| | Gets a list of |
| | Returns a business object type based on a supplied criteria object, taking into account the |

The MethodCaller class also includes the code to cache dynamic method delegates, and that technique is used automatically when CallMethod() and CallMethodIfImplemented() are used. In other words, MethodCaller uses reflection only to create dynamic method delegates, and it uses those delegates to make all method calls to the target objects. The method delegates are cached for the lifetime of the AppDomain, so they're typically created only once each time an application is run.

The GetObjectType Method

I do want to explore one method in a little more detail. The GetObjectType() method is designed specifically to support the data portal, and it's important to understand how it uses criteria objects to identify the business object type. Both Csla.DataPortal and Csla.Server.DataPortal use this method to determine the type of business object involved in the data portal request. This method uses the criteria object supplied by the factory method in the business class to find the type of the business object itself.

This method supports the two options discussed in Chapter 5: where the criteria class is nested within the business class, and where the criteria object inherits from Csla.CriteriaBase (and thus implements ICriteria).

```csharp
public static Type GetObjectType(object criteria)
{
  var strong = criteria as ICriteria;
  if (strong != null)
  {
    // get the type of the actual business object
    // from the ICriteria
    return strong.ObjectType;
  }
  else if (criteria != null)
  {
    // get the type of the actual business object
    // based on the nested class scheme in the book
    return criteria.GetType().DeclaringType;
  }
  else
    return null;
}
```

If the criteria object implements ICriteria, then the code will simply cast the object to type ICriteria and retrieve the business object type by calling the ObjectType property.

With a nested criteria class, the code gets the type of the criteria object and then returns the DeclaringType value from the Type object. The DeclaringType property returns the type of the class within which the criteria class is nested.
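Both resolution paths can be exercised outside the framework. In this sketch, ICriteriaSketch, SingleCriteriaSketch, CustomerEditSketch, and TypeResolverSketch are hypothetical stand-ins for the CSLA types; the logic mirrors the method above.

```csharp
using System;

// Hypothetical stand-in for the ICriteria interface.
public interface ICriteriaSketch
{
  Type ObjectType { get; }
}

// A criteria class that reports its business type explicitly.
public class SingleCriteriaSketch<TBusiness, TValue> : ICriteriaSketch
{
  public SingleCriteriaSketch(TValue value) { Value = value; }
  public TValue Value { get; }
  public Type ObjectType => typeof(TBusiness);
}

public class CustomerEditSketch
{
  // Nested criteria class: its DeclaringType is CustomerEditSketch.
  public class Criteria
  {
    public int Id { get; set; }
  }
}

public static class TypeResolverSketch
{
  public static Type GetObjectType(object criteria)
  {
    if (criteria is ICriteriaSketch strong)
      return strong.ObjectType;                  // explicit type
    if (criteria != null)
      return criteria.GetType().DeclaringType;   // nested-class convention
    return null;
  }
}
```

A criteria object that is neither nested nor implements the interface (a plain string, for example) resolves to null, which is why a criteria class must follow one of the two conventions.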

The LateBoundObject class is designed to act as a wrapper around any .NET object, making it easy to dynamically invoke methods on that object. It is used like this:

```csharp
var lateBound = new LateBoundObject<CustomerEdit>(_customer);
lateBound.CallMethod("SomeMethod", 123, "abc");
```

Behind the scenes, the wrapper object simply delegates all calls to MethodCaller, and you could choose to use MethodCaller directly. The reason for using LateBoundObject is to write code that is easier to read.

Now let's move on and implement the data portal itself, feature by feature. The data portal is designed to provide a set of core features, including

Implementing a channel adapter

Supporting distributed transactional technologies

Implementing a message router

Transferring context and providing location transparency

The remainder of the chapter will walk through each functional area in turn, discussing the implementation of the classes supporting the concept.

The data portal is exposed to the business developer through the Csla.DataPortal class. This class implements a set of static methods to make it as easy as possible for the business developer to create, retrieve, update, or delete objects. All the channel adapter behaviors are hidden behind the Csla.DataPortal class.

The data portal routes client calls to the server based on the client application's configuration settings in its config file. If the configuration is set to use an actual application server, the client call is sent across the network using the channel adapter pattern. However, there are cases in which the business developer knows that there's no need to send the call across the network—even if the application is configured that way.

The most common example of this is in the creation of new business objects. The DataPortal.Create() method is called to create a new object, and it in turn triggers a call to the business object's DataPortal_Create() method or a factory object's Create() method. Either way, the target method loads the business object with default values from the database.

But what if an object doesn't need to load defaults from the database? In that case, there would be no reason to go across the network at all, and it would be nice to short-circuit the call so that particular object's create method would run on the client.

This is the purpose behind the RunLocal attribute. A business developer can mark a data access method with this attribute to tell the data portal to force the call to run on the client, regardless of how the application is configured in general. Such a business method would look like this:

```csharp
[RunLocal]
private void DataPortal_Create(Criteria criteria)
{
  // set default values here
}
```

The data portal always invokes this DataPortal_Create() method without first crossing a network boundary. So if DataPortal.Create() were called on the client, this method would run on the client, and if DataPortal.Create() were called on the application server, the DataPortal_Create() method would run on the server.
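The routing decision behind an attribute like RunLocal comes down to a reflection check on the target method. In this sketch, RunLocalSketchAttribute, ProductSketch, and PortalRouterSketch are hypothetical names, and the real data portal does considerably more before choosing a channel.

```csharp
using System;
using System.Reflection;

// Hypothetical marker attribute playing the role of RunLocal.
[AttributeUsage(AttributeTargets.Method)]
public sealed class RunLocalSketchAttribute : Attribute { }

public class ProductSketch
{
  [RunLocalSketch]
  private void DataPortal_Create() { /* set default values without a database */ }

  private void DataPortal_Fetch(int id) { /* would load data from the database */ }
}

public static class PortalRouterSketch
{
  // True when the target method is marked to run locally, meaning the
  // client can skip the network hop for this call.
  public static bool ShouldRunLocal(Type businessType, string methodName)
  {
    MethodInfo method = businessType.GetMethod(
      methodName,
      BindingFlags.Instance | BindingFlags.Public | BindingFlags.NonPublic);
    return method != null
      && method.IsDefined(typeof(RunLocalSketchAttribute), false);
  }
}
```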

When using a factory object, the attribute is applied to the factory method.

```csharp
public class CustomerFactory : ObjectFactory
{
  [RunLocal]
  public void Create()
  {
    // create object and
    // set default values here
  }
}
```

If the assembly containing the factory has been deployed to the client, the data portal will find this attribute and will invoke the Create() method on the client. If you don't deploy your factory assembly to the client, obviously the code must run on the server, so the data portal would ignore the RunLocal attribute.

The primary entry point for the entire data portal infrastructure is the Csla.DataPortal class. Business developers use the methods on this class to trigger all the data portal behaviors. This class is involved in both the channel adapter implementation and in handling context information. This section will focus on the channel adapter code in the class, while I'll discuss the context-handling code later in the chapter.

The Csla.DataPortal class exposes five primary methods, described in Table 15-12, that can be called by business logic to create, retrieve, update, or delete root objects, or to execute command objects.

Table 15.12. Methods Exposed by the Data Portal for Root Objects

| Method | Description |
|---|---|
| | Calls |
| | Calls |
| | Calls |
| | Calls |
| | Calls |

The data portal also includes methods used to create, retrieve, update, or delete child objects. Table 15-13 lists these methods.

Table 15.13. Methods Exposed by the Data Portal for Child Objects

| Method | Description |
|---|---|
| | Calls |
| | Calls |
| | Calls ChildDataPortal, which then invokes the |

The class also raises two static events that the business developer or UI developer can handle. The DataPortalInvoke event is raised before the server is called, and the DataPortalInvokeComplete event is raised after the server call has returned.

Behind the scenes, each DataPortal method determines the network protocol to be used when contacting the server in order to delegate the call to Csla.Server.DataPortal. Of course, Csla.Server.DataPortal ultimately delegates the call to Csla.Server.SimpleDataPortal and then to the business object on the server.

The Csla.DataPortal class is designed to expose static methods. As such, it is a static class.

```csharp
public static class DataPortal
{
}
```

This ensures that instances of the class won't be created.

Each of the five data portal methods works in a similar manner. I'm not going to walk through all five; instead, I'll discuss the Fetch() method in some detail, and I'll briefly cover the Update() method (because it is somewhat unique). First, though, you should be aware of the events raised by the DataPortal class on each call, and how the data portal connects with the server.

DataPortal Events

The DataPortal class defines two events: DataPortalInvoke and DataPortalInvokeComplete.

public static event Action<DataPortalEventArgs> DataPortalInvoke;
public static event Action<DataPortalEventArgs> DataPortalInvokeComplete;

private static void OnDataPortalInvoke(DataPortalEventArgs e)
{
  Action<DataPortalEventArgs> action = DataPortalInvoke;
  if (action != null)
    action(e);
}

private static void OnDataPortalInvokeComplete(DataPortalEventArgs e)
{
  Action<DataPortalEventArgs> action = DataPortalInvokeComplete;
  if (action != null)
    action(e);
}

These follow the standard approach of providing a helper method to raise each event.

Also notice the use of the Action<T> generic delegate. The .NET Framework defines Action<T> as a delegate for any method that takes a single parameter of type T and returns void. That makes it a convenient way to declare events whose only parameter is a custom EventArgs subclass. The corresponding EventHandler<TEventArgs> generic delegate supports the standard pattern in which an event handler receives both a sender and an EventArgs object.
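To make the difference between the two delegate types concrete, here is a small, self-contained sketch. It declares one event of each kind and raises both with the same copy-to-local pattern used by OnDataPortalInvoke(). PortalEventArgsSketch and EventStylesSketch are hypothetical names used only for illustration.

```csharp
using System;

// Hypothetical event-args type standing in for DataPortalEventArgs.
public class PortalEventArgsSketch : EventArgs
{
    public PortalEventArgsSketch(string operation) { Operation = operation; }
    public string Operation { get; private set; }
}

public static class EventStylesSketch
{
    // Action<T> style, as used by Csla.DataPortal: a single parameter, no sender.
    public static event Action<PortalEventArgsSketch> Invoke;

    // EventHandler<TEventArgs> style: the conventional (sender, e) signature.
    public static event EventHandler<PortalEventArgsSketch> InvokeWithSender;

    public static void Raise(string operation)
    {
        var e = new PortalEventArgsSketch(operation);

        // Copy each delegate to a local before the null check, so a handler
        // removed on another thread between the check and the call can't
        // cause a NullReferenceException.
        Action<PortalEventArgsSketch> action = Invoke;
        if (action != null)
            action(e);

        EventHandler<PortalEventArgsSketch> handler = InvokeWithSender;
        if (handler != null)
            handler(null, e); // static class: there is no instance to pass as sender
    }
}
```

The Action<T> form is shorter to declare and handle. The EventHandler<TEventArgs> form is what most .NET tooling and conventions expect.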

A DataPortalEventArgs object is passed as the parameter to these events. As described in Table 15-14, it includes information that is useful when handling the event.

Table 15.14. Properties of the DataPortalEventArgs Class

| Property | Description |
|---|---|
| DataPortalContext | The data portal context passed to the server |
| Operation | The data portal operation requested by the caller |
| Exception | Any exception that occurred during processing |
| ObjectType | The type of business object |

Code that handles these events can use this information to understand what is being passed to the server as part of the client message.
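As an illustration of how a client might use this information, the following sketch logs each data portal call and how long it took. The types are hypothetical stand-ins shaped like DataPortalEventArgs (ObjectType, Operation, Exception), so the example compiles on its own. In an application using CSLA .NET, the two handler methods would be attached to DataPortal.DataPortalInvoke and DataPortal.DataPortalInvokeComplete, whose Action<DataPortalEventArgs> signatures are shown earlier in this section.

```csharp
using System;
using System.Collections.Generic;
using System.Diagnostics;

// Hypothetical stand-ins mirroring the properties in Table 15-14.
public enum PortalOperationSketch { Create, Fetch, Update, Delete, Execute }

public class PortalEventArgsInfo
{
    public PortalEventArgsInfo(Type objectType, PortalOperationSketch operation,
                               Exception exception = null)
    {
        ObjectType = objectType;
        Operation = operation;
        Exception = exception;
    }
    public Type ObjectType { get; private set; }
    public PortalOperationSketch Operation { get; private set; }
    public Exception Exception { get; private set; }
}

// A client-side monitor that records each data portal round trip.
public class PortalCallLoggerSketch
{
    private readonly Stopwatch _timer = new Stopwatch();
    public List<string> Log { get; } = new List<string>();

    public void OnInvoke(PortalEventArgsInfo e)
    {
        _timer.Restart();
        Log.Add("Starting " + e.Operation + " for " + e.ObjectType.Name);
    }

    public void OnInvokeComplete(PortalEventArgsInfo e)
    {
        _timer.Stop();
        // A non-null Exception means the call failed somewhere along the way.
        string outcome = e.Exception == null
            ? "succeeded"
            : "failed: " + e.Exception.Message;
        Log.Add(e.Operation + " for " + e.ObjectType.Name + " " + outcome
                + " in " + _timer.ElapsedMilliseconds + " ms");
    }
}
```

A single Stopwatch assumes that calls don't overlap. Because the data portal can be used asynchronously, a production logger would need to track timing per call.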

Creating the Proxy Object

One of the most important functions of Csla.DataPortal is to determine the appropriate network protocol (if any) to be used when interacting with Csla.Server.DataPortal. Each protocol, or data portal channel, is managed by a proxy object that implements the IDataPortalProxy interface from the Csla.DataPortalClient namespace. This interface ensures that all proxy classes implement the methods required by Csla.DataPortal.

The proxy object to be used is defined in the application's configuration file. That's the web.config file for ASP.NET applications, and myprogram.exe.config for Windows applications (where myprogram is the name of your program). Within Visual Studio, a Windows configuration file is named app.config, so I'll refer to them as app.config files from here forward.

Config files can include an <appSettings> section to store application settings, and it is in this section that the CSLA .NET configuration settings are located. The following shows how this section would look for an application set to use WCF:

<appSettings>
  <add key="CslaDataPortalProxy"
       value="Csla.DataPortalClient.WcfProxy, Csla"/>
</appSettings>

The CslaDataPortalProxy key defines the proxy class that the data portal should use. Different proxy objects may require or support other configuration data. In this example, you must also configure WCF itself by including a top-level <system.serviceModel> element in your app.config file, for example:

<system.serviceModel>
  <client>
    <endpoint name="WcfDataPortal"
              address="http://serverName/virtualRoot/WcfPortal.svc"
              binding="wsHttpBinding"
              contract="Csla.Server.Hosts.IWcfPortal" />
  </client>
</system.serviceModel>

Normally only the address attribute needs to be changed, so that it specifies the URL of the application server.

The GetDataPortalProxy() method uses this information to create an instance of the correct proxy object.

private static Type _proxyType;

private static DataPortalClient.IDataPortalProxy GetDataPortalProxy(bool forceLocal)
{
  if (forceLocal)
  {
    return new DataPortalClient.LocalProxy();
  }
  else
  {
    Csla.DataPortalClient.IDataPortalProxy portal;
    string proxyTypeName = ApplicationContext.DataPortalProxy;
    if (proxyTypeName == "Local")
      portal = new DataPortalClient.LocalProxy();
    else
    {
      if (_proxyType == null)
      {
        _proxyType = Type.GetType(proxyTypeName, true, true);
      }
      portal = (DataPortalClient.IDataPortalProxy)
        Activator.CreateInstance(_proxyType);
    }
    return portal;
  }
}

The proxy object is created on each call to avoid possible threading issues. Because the data portal can be used asynchronously, any state held in static fields would be shared across multiple threads. Keeping that state in the instance fields of a single shared proxy would require locking code, and locking can lead to performance issues. Creating a new proxy for each call avoids both problems.

Notice that the _proxyType field is static, so it's shared across all threads. The data portal configuration comes from the config file and is the same for all threads in the application, so this value can be safely shared.
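The caching strategy can be sketched in isolation: the resolved Type lives in a static field, while a fresh proxy instance is created on every call. IProxySketch, RemoteProxySketch, and ProxyFactorySketch are hypothetical names, not CSLA .NET types.

```csharp
using System;

// Hypothetical proxy contract standing in for IDataPortalProxy.
public interface IProxySketch
{
    bool IsServerRemote { get; }
}

// Hypothetical proxy class that would be named in configuration.
public class RemoteProxySketch : IProxySketch
{
    public bool IsServerRemote { get { return true; } }
}

public static class ProxyFactorySketch
{
    // The Type depends only on configuration, which is the same for every
    // thread, so a static cache is safe. If two threads race here, both
    // resolve and store the same Type, which is harmless.
    private static Type _proxyType;

    public static IProxySketch Create(string proxyTypeName)
    {
        if (_proxyType == null)
            _proxyType = Type.GetType(proxyTypeName, true, true);

        // A new proxy per call, so no proxy instance is shared across threads.
        return (IProxySketch)Activator.CreateInstance(_proxyType);
    }
}
```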

If the forceLocal parameter is true, then only a local proxy is returned. The LocalProxy object is a special proxy that doesn't use any network protocols at all, but rather runs the "server-side" data portal components directly within the client process. I'll cover this class later in the chapter.

When forceLocal is false, the real work begins. First, the proxy string is retrieved from the CslaDataPortalProxy key in the config file by reading the ApplicationContext.DataPortalProxy property. The property reads the config file and returns the value associated with the CslaDataPortalProxy key.

If that key value is "Local", then again an instance of the LocalProxy class is created and returned. The ApplicationContext.DataPortalProxy property also returns "Local" if the key is not found in the config file, which makes LocalProxy the default proxy.
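The configuration lookup and the local-versus-remote decision can be sketched as follows. This version parses an <appSettings> fragment with LINQ to XML purely so that it runs on its own. That choice, and the helper names ReadProxySetting() and UseLocalProxy(), are assumptions for illustration. The sketch also treats an empty value the same as a missing key.

```csharp
using System.Linq;
using System.Xml.Linq;

public static class ProxySettingSketch
{
    // Mimics the behavior described for ApplicationContext.DataPortalProxy:
    // return the CslaDataPortalProxy value from <appSettings>, or "Local"
    // when the key is absent (or empty). The real property reads the
    // application's config file rather than an XML string.
    public static string ReadProxySetting(string configXml)
    {
        XDocument doc = XDocument.Parse(configXml);
        string value = doc.Descendants("appSettings")
            .Elements("add")
            .Where(e => (string)e.Attribute("key") == "CslaDataPortalProxy")
            .Select(e => (string)e.Attribute("value"))
            .FirstOrDefault();
        return string.IsNullOrEmpty(value) ? "Local" : value;
    }

    // Mirrors the branching in GetDataPortalProxy(): forceLocal (RunLocal)
    // or a "Local" setting both select the in-process proxy.
    public static bool UseLocalProxy(bool forceLocal, string proxySetting)
    {
        return forceLocal || proxySetting == "Local";
    }
}
```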

If some other config value is returned, then it is parsed and used to create an instance of the appropriate proxy class.

if (_proxyType == null)
{
  string typeName =
    proxyTypeName.Substring(0, proxyTypeName.IndexOf(",")).Trim();
  string assemblyName =
    proxyTypeName.Substring(proxyTypeName.IndexOf(",") + 1).Trim();
  _proxyType = Type.GetType(typeName + "," + assemblyName, true, true);
}
portal = (DataPortalClient.IDataPortalProxy)
  Activator.CreateInstance(_proxyType);

In the preceding <appSettings> example, notice that the value is a comma-separated string with the full type name on the left and the assembly name on the right. This follows the .NET convention for identifying types that are to be loaded dynamically.

The config value is parsed to pull out the full type name and assembly name, which are then passed to Type.GetType() to obtain the Type object. Next, Activator.CreateInstance() is called to create an instance of the proxy. The .NET runtime automatically loads the assembly if needed.
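Here is a minimal sketch of the split, with one addition. When the value has no comma, IndexOf() returns -1, so the Substring(0, IndexOf(",")) call shown above throws an ArgumentOutOfRangeException. This hypothetical helper checks for that case and reports a clearer FormatException instead.

```csharp
using System;

public static class TypeNameParserSketch
{
    // Splits "Full.Type.Name, AssemblyName" at the first comma, as the data
    // portal code does. Everything after the first comma, including any
    // Version=... or Culture=... parts, is treated as the assembly name.
    public static void Split(string setting, out string typeName, out string assemblyName)
    {
        int comma = setting.IndexOf(',');
        if (comma < 0)
        {
            // Substring(0, -1) would throw ArgumentOutOfRangeException here.
            throw new FormatException(
                "Expected 'TypeName, AssemblyName' but got '" + setting + "'.");
        }
        typeName = setting.Substring(0, comma).Trim();
        assemblyName = setting.Substring(comma + 1).Trim();
    }
}
```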

The result is that the appropriate proxy object is returned for use by the data portal in communicating with the server-side components.

Root Object Data Access Methods

The five data portal methods listed in Table 15-12 all follow the same basic process, so I'll walk through the Fetch() method in some detail to show how it works. All five methods follow this basic flow:

Ensure the user is authorized to perform the action.

Get metadata for the business method to be ultimately invoked.

Get the data portal proxy object.

Create a DataPortalContext object.

Raise the DataPortalInvoke event.

Delegate the call to the proxy object (and thus to the server).

Handle and throw any exceptions.

Restore the GlobalContext returned from the server.

Raise the DataPortalInvokeComplete event.

Return the resulting business object (if appropriate).
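The flow above can be condensed into a small template method. This sketch omits the metadata, context, and GlobalContext steps. It also uses delegates (isAuthorized, callServer, onInvoke, onInvokeComplete) in place of the real authorization check, proxy, and events, and all of these names are hypothetical. What it preserves is the ordering of the steps and the guarantee that the completion callback runs whether or not the call succeeds.

```csharp
using System;

public static class PortalFlowSketch
{
    public static object Run(
        string operation,
        Func<bool> isAuthorized,
        Func<object> callServer,
        Action<string> onInvoke,
        Action<string, Exception> onInvokeComplete)
    {
        try
        {
            // Step 1: authorization happens before anything is sent.
            if (!isAuthorized())
                throw new UnauthorizedAccessException(
                    "User not authorized to " + operation);

            onInvoke(operation);

            object result;
            try
            {
                result = callServer();
            }
            catch (Exception ex)
            {
                // Wrap server-side failures with the operation name.
                throw new InvalidOperationException(
                    "DataPortal." + operation + " failed (" + ex.Message + ")", ex);
            }

            onInvokeComplete(operation, null);
            return result;
        }
        catch (Exception ex)
        {
            // The completion callback also runs on failure, then the error propagates.
            onInvokeComplete(operation, ex);
            throw;
        }
    }
}
```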

Let's look at the Fetch() method in detail.

The Fetch Method

There are several Fetch() method overloads, all of which ultimately delegate to the actual implementation.

public static object Fetch(Type objectType, object criteria)
{
  Server.DataPortalResult result = null;
  Server.DataPortalContext dpContext = null;
  try
  {
    OnDataPortalInitInvoke(null);

    if (!Csla.Security.AuthorizationRules.CanGetObject(objectType))
      throw new System.Security.SecurityException(
        string.Format(Resources.UserNotAuthorizedException,
          "get",
          objectType.Name));

    var method =
      Server.DataPortalMethodCache.GetFetchMethod(objectType, criteria);

    DataPortalClient.IDataPortalProxy proxy;
    proxy = GetDataPortalProxy(method.RunLocal);

    dpContext =
      new Server.DataPortalContext(GetPrincipal(),
        proxy.IsServerRemote);

    OnDataPortalInvoke(new DataPortalEventArgs(
      dpContext, objectType, DataPortalOperations.Fetch));

    try
    {
      result = proxy.Fetch(objectType, criteria, dpContext);
    }
    catch (Server.DataPortalException ex)
    {
      result = ex.Result;
      if (proxy.IsServerRemote)
        ApplicationContext.SetGlobalContext(result.GlobalContext);
      string innerMessage = string.Empty;
      if (ex.InnerException is Csla.Reflection.CallMethodException)
      {
        if (ex.InnerException.InnerException != null)
          innerMessage = ex.InnerException.InnerException.Message;
      }
      else
      {
        innerMessage = ex.InnerException.Message;
      }
      throw new DataPortalException(
        String.Format("DataPortal.Fetch {0} ({1})", Resources.Failed, innerMessage),
        ex.InnerException, result.ReturnObject);
    }

    if (proxy.IsServerRemote)
      ApplicationContext.SetGlobalContext(result.GlobalContext);

    OnDataPortalInvokeComplete(new DataPortalEventArgs(
      dpContext, objectType, DataPortalOperations.Fetch));
  }
  catch (Exception ex)
  {
    OnDataPortalInvokeComplete(new DataPortalEventArgs(
      dpContext, objectType, DataPortalOperations.Fetch, ex));
    throw;
  }
  return result.ReturnObject;
}

The generic overloads simply cast the result so the calling code doesn't have to.

public static T Fetch<T>()
{
  return (T)Fetch(typeof(T), EmptyCriteria);
}

Remember that the data portal can return virtually any type of object, so the actual Fetch() method implementation must deal with results of type object.
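The pattern of a non-generic core that returns object, plus a thin generic wrapper that casts, can be sketched on its own. FetchOverloadSketch and CustomerSketch are hypothetical, and Activator.CreateInstance() stands in for the real server round trip.

```csharp
using System;

public static class FetchOverloadSketch
{
    // Non-generic core: like the data portal, it can return any type, so
    // its result is typed as object. Activator.CreateInstance() is a
    // placeholder for the real server call.
    public static object Fetch(Type objectType, object criteria)
    {
        return Activator.CreateInstance(objectType);
    }

    // Generic overload: callers get a strongly typed result without casting.
    public static T Fetch<T>(object criteria)
    {
        return (T)Fetch(typeof(T), criteria);
    }
}

// Hypothetical business class used to exercise the overloads.
public class CustomerSketch { }
```

The cast lives in exactly one place, so every caller of the generic overload benefits from it.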

Looking at the Fetch() implementation, you should see all the steps from the preceding list. The first step is to ensure that the user is authorized.

if (!Csla.Security.AuthorizationRules.CanGetObject(objectType))
  throw new System.Security.SecurityException(
    string.Format(Resources.UserNotAuthorizedException,
      "get",
      objectType.Name));

Then the DataPortalMethodCache is used to retrieve (or create) the metadata for the business method that will ultimately be invoked on the server.

var method =
  Server.DataPortalMethodCache.GetFetchMethod(objectType, criteria);

The result is a DataPortalMethodInfo object that contains metadata for the business method. At this point, the most important bit of information is whether the RunLocal attribute has been applied to the method on the business class. This Boolean value is used as a parameter to the GetDataPortalProxy() method, which returns the appropriate proxy object for server communication.

DataPortalClient.IDataPortalProxy proxy;
proxy = GetDataPortalProxy(method.RunLocal);

Next, a DataPortalContext object is created and initialized. The details of this object and the means of dealing with context information are discussed later in the chapter.

dpContext =
  new Server.DataPortalContext(GetPrincipal(),
    proxy.IsServerRemote);

Then the DataPortalInvoke event is raised, notifying client-side business or UI logic that a data portal call is about to take place.

OnDataPortalInvoke(new DataPortalEventArgs(
  dpContext, objectType, DataPortalOperations.Fetch));

Finally, the Fetch() call itself is delegated to the proxy object.

result = proxy.Fetch(objectType, criteria, dpContext);

All a proxy object does is relay the method call across the network to Csla.Server.DataPortal, so you can almost think of this as delegating the call directly to Csla.Server.DataPortal, which in turn delegates to either FactoryDataPortal or SimpleDataPortal. The ultimate result is that a factory object's Fetch() method or the business object's DataPortal_Fetch() method is invoked on the server.
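The relay role of a proxy can be sketched with a hypothetical in-process proxy that calls the "server-side" entry point directly, which is roughly what the chapter says LocalProxy does. IPortalProxySketch, InProcessProxySketch, ServerPortalSketch, and the IFetchable interface are assumptions that keep the example self-contained. The real data portal locates DataPortal_Fetch() or a factory method through reflection rather than through an interface.

```csharp
using System;

// Hypothetical contract for a fetchable business object.
public interface IFetchable { void Fetch(object criteria); }

// Hypothetical server-side entry point, playing the role of
// Csla.Server.DataPortal: create the object, then let it load itself.
public static class ServerPortalSketch
{
    public static object Fetch(Type objectType, object criteria)
    {
        var obj = (IFetchable)Activator.CreateInstance(objectType);
        obj.Fetch(criteria);
        return obj;
    }
}

// Every proxy exposes the same contract, so the client-side code
// doesn't care which channel sits behind it.
public interface IPortalProxySketch
{
    bool IsServerRemote { get; }
    object Fetch(Type objectType, object criteria);
}

// The in-process proxy relays the call without any network hop.
public sealed class InProcessProxySketch : IPortalProxySketch
{
    public bool IsServerRemote { get { return false; } }

    public object Fetch(Type objectType, object criteria)
    {
        return ServerPortalSketch.Fetch(objectType, criteria);
    }
}

// Hypothetical business class used to exercise the proxy.
public class ProductSketch : IFetchable
{
    public int Id { get; private set; }
    public void Fetch(object criteria) { Id = (int)criteria; }
}
```

A remote proxy would implement the same interface but serialize the request and send it over a channel such as WCF. That shared contract is what lets Csla.DataPortal treat every proxy the same way.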

Note

Remember that, by default, the "server-side" code actually runs in the client process on the client workstation (or web server). Even so, the full sequence of events described here occurs, just much faster than if network communication were involved.

An exception could occur while calling the server. Most often the exception originates in the business logic running on the server, though exceptions can also occur due to network issues or similar problems. When an exception does occur in business code on the server, it surfaces here as a Csla.Server.DataPortalException, which is caught and handled.

result = ex.Result;
if (proxy.IsServerRemote)
  ApplicationContext.SetGlobalContext(result.GlobalContext);
string innerMessage = string.Empty;
if (ex.InnerException is Csla.Reflection.CallMethodException)
{
  if (ex.InnerException.InnerException != null)
    innerMessage = ex.InnerException.InnerException.Message;
}
else
{
  innerMessage = ex.InnerException.Message;
}
throw new DataPortalException(
  String.Format("DataPortal.Fetch {0} ({1})", Resources.Failed, innerMessage),