3

MODERN CONTROL-SYSTEM DESIGN USING STATE-SPACE, POLE PLACEMENT, ACKERMANN'S FORMULA, ESTIMATION, ROBUST CONTROL, AND H∞ TECHNIQUES

3.1. INTRODUCTION

State-space analysis was introduced in Chapter 1 and has been used in parallel with the classical frequency-domain analysis techniques presented in Chapter 2. The state-space approach is applicable to a wider class of problems, such as multiple-input/multiple-output (MIMO) control systems. Chapter 2 applied frequency-domain approaches, such as the Bode diagram and the root locus, to linear control-system design.

In the design of a control system, the question arises as to where to place the closed-loop roots. In Section 2.9, which presented the root-locus method, we could specify where to place the dominant pair of complex-conjugate roots in order to obtain a desired transient response. However, we could not do so with great certainty because we were never sure what effect the higher-order poles would have on the second-order approximation.

The control-system design engineer desires design methods that allow all of the closed-loop poles of higher-order control systems to be specified. Unfortunately, the frequency-domain design methods presented in Chapter 2 do not permit the control-system engineer to specify all of the poles in control systems of order higher than two, because they do not provide a sufficient number of unknowns for solving uniquely for the specified closed-loop poles. This problem is overcome using state-space methods, which provide additional adjustable parameters and methods for determining them.

This chapter presents a modern control-system design method using state-space techniques known as pole placement or pole assignment. This design technique is similar to what we did in Section 2.9, where we placed two dominant complex-conjugate poles of the closed-loop transfer function in desired locations in order to obtain desirable transient responses. In this chapter, however, we will show how pole placement allows the control-system engineer to place all of the poles of the closed-loop transfer function in desirable locations. Ackermann's formula is also presented for pole placement designs in control systems that require feedback from state variables which are not phase variables (phase variables being state variables where each subsequent state variable is defined as the derivative of the previous one). A practical problem with the pole placement method is the cost and feasibility of measuring all of the state variables needed to obtain a solution. In many practical control systems, not all of the state variables are available because of cost considerations, environmental considerations (e.g., nuclear power plant control systems), or the lack of transducers to measure certain states. In these cases, the control-system engineer must estimate the state variables that cannot be measured from those that can be. Therefore, in addition to pole placement, this chapter also presents the very important subjects of controllability, observability, and estimation.

This chapter on modern control-system design also presents the design of robust control systems. Robust control is concerned with determining a stabilizing controller that meets the stability and accuracy requirements while remaining robust (insensitive) to plant uncertainty, parameter variation, and external disturbances. The design of two-degrees-of-freedom compensation control systems exhibiting desirable robustness to plant uncertainty, parameter variation, and external disturbances is presented.

This chapter concludes with an introduction to H∞ control concepts, a technique that emerged in the 1980s and combines the frequency- and time-domain approaches to provide a unified design approach. The H∞ approach dominated the trend of control-system development in the 1980s and 1990s. The H∞ control-system design approach expands on the concept of robustness presented in this chapter and on sensitivity (presented in Chapter 1), together with the frequency- and state-variable-domain techniques presented in this book. The H∞ approach is applied to determine the optimum sensitivity for control systems.

3.2. POLE-PLACEMENT DESIGN USING LINEAR-STATE-VARIABLE FEEDBACK

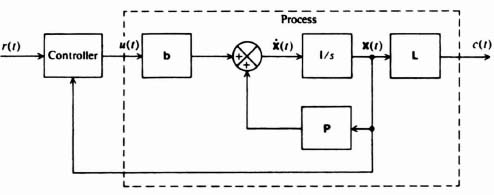

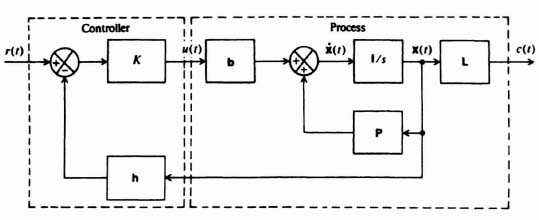

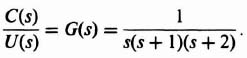

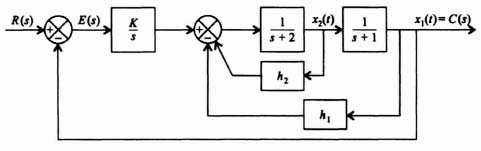

Having presented methods for designing linear control systems using classical techniques, let us now look at the problem of specifying pole placement from the viewpoint of state-variable feedback [1]. In order to do this, let us first look at the basic feedback problem illustrated in Figure 3.1. This figure illustrates the concept of feeding back the states of the process in addition to that of the output. Because a linear process can be characterized by the phase-variable canonical equations

Figure 3.1 General feedback system problem illustrating feedback of the output state and the states of the process.

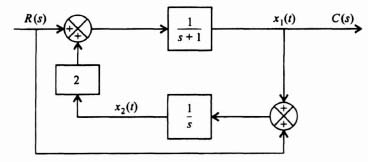

let us consider the configuration of Figure 3.2. It is important to observe from this figure that the control signal is generated from a knowledge of the reference input r(t) and the state variables x(t). Note that r(t), u(t), and c(t) represent scalars.

In general, the control input u can be represented as

$$u(t) = f[\,r(t),\, x(t)\,] \tag{3.3}$$

Rather than considering the controller in such a broad sense, let us consider the specific condition of linear state-variable feedback where the controller weights the sum of the state variables in a linear manner. In addition, it is assumed that the controller provides a linear gain K which multiplies the difference between the reference input and the linear weighted sum of state variables fed back. Therefore, u(t) can be represented as

$$u(t) = K\{\,r(t) - [\,h_1 x_1(t) + h_2 x_2(t) + \cdots + h_m x_m(t)\,]\,\} \tag{3.4}$$

where $h_i$ is defined as the ith feedback coefficient. In matrix form, u(t) can be represented as

$$u(t) = K[\,r(t) - hx(t)\,] \tag{3.5}$$

where

$$h = [\,h_1 \;\; h_2 \;\; \cdots \;\; h_m\,] \tag{3.6}$$

Figure 3.2 General feedback system with state-variable feedback.

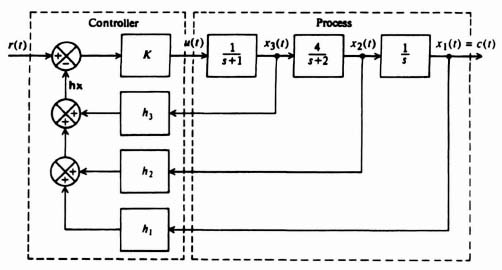

Figure 3.3 presents a matrix representation of the concept of linear-state-variable feedback, and Figure 3.4 is an example of a physical representation of a typical system as implied by Figure 3.3. In the following discussion, it is assumed that all state variables are directly available for measurement and control. In practice, this is not always possible, and techniques for modifying and extending the design procedure presented, to the case where all the state variables are not available, are also discussed in Sections 3.6 and 3.7.

How does linear feedback of the state variables affect the behavior of the process given by Eqs. (3.1) and (3.2)? This can easily be determined by substituting Eq. (3.4) into Eq. (3.1):

Figure 3.3 Linear-state-variable feedback representation.

Figure 3.4 Example of pole placement design using linear-state-variable feedback.

Simplifying Eq. (3.7), and incorporating Eq. (3.2), we obtain the closed-loop equation

where

is the closed-loop-system matrix. Comparing Eq. (3.1) and (3.2) with (3.8) and (3.9), we observe that they are identical except that the P matrix has been replaced by Ph and u(t) becomes Kr(t).

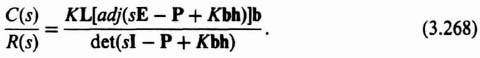

How can we relate the closed-loop-system matrix Ph to the closed-loop transfer function, C(s)/R(s)? This can be accomplished by taking the Laplace transform of Eqs. (3.8) and (3.9). Because the results will be used to find a transfer function, all initial conditions are assumed to be zero:

Solving for X(s) from Eq. (3.11), we get

The inverse matrix [sI – Ph]−1 is defined as the closed-loop resolvent matrix, Φh(s), where

Therefore, Eq. (3.13) may be rewritten as

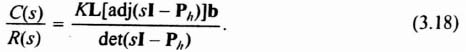

Substituting Eq. (3.15) into Eq. (3.12), we obtain a relation between C(s) and R(s):

Therefore, the closed-loop transfer function in terms of the closed-loop resolvent matrix is given by

In addition, the characteristic equation in terms of the closed-loop-system matrix can also easily be determined, simply by substituting the numerator and denominator portions of the inverse matrix, Φh(s):

The corresponding characteristic equation of the closed-loop system in terms of the closed-loop system matrix is given by

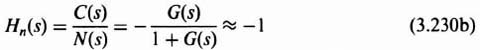

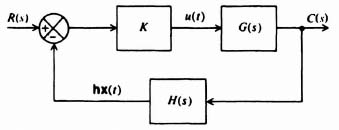

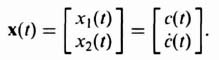
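For readers working from the text alone, the chain of results referred to above as Eqs. (3.7) through (3.19) can be sketched as follows. This is a reconstruction from the prose, assuming the process equations have the form $\dot{x} = Px + Bu$, $c = Lx$ and the control law is $u = K[r - hx]$. The equation numbers are inferred from the cross-references in the text and should be checked against the original equations.

```latex
\begin{align*}
\dot{x}(t) &= Px(t) + BK\,[\,r(t) - hx(t)\,] && (3.7)\\
\dot{x}(t) &= P_h x(t) + KB\,r(t) && (3.8)\\
c(t) &= Lx(t) && (3.9)\\
P_h &= P - KBh && (3.10)\\
sX(s) &= P_h X(s) + KB\,R(s) && (3.11)\\
C(s) &= LX(s) && (3.12)\\
X(s) &= [sI - P_h]^{-1} KB\,R(s) && (3.13)\\
\Phi_h(s) &= [sI - P_h]^{-1} && (3.14)\\
X(s) &= \Phi_h(s) KB\,R(s) && (3.15)\\
C(s) &= L\Phi_h(s) KB\,R(s) && (3.16)\\
\frac{C(s)}{R(s)} &= K L \Phi_h(s) B && (3.17)\\
\frac{C(s)}{R(s)} &= \frac{K L \operatorname{adj}[sI - P_h]\, B}{\det[sI - P_h]} && (3.18)\\
\det[sI - P_h] &= 0 && (3.19)
\end{align*}
```

Because K is a scalar, it commutes with the matrices, which is why $BK$ in Eq. (3.7) can be written as $KB$ in the lines that follow.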

Because we are concerned with synthesizing control systems in terms of pole placement design using linear-state-variable feedback concepts, we would like to force the system illustrated in Figure 3.3 into the generalized form illustrated in Figure 3.5, and study its properties. Let us first consider the derivation of H(s). From Figure 3.5 we observe that

Substituting Eq. (3.12) into Eq. (3.20), we obtain

After substitution of Eq. (3.15) for X(s), Eq. (3.21) becomes

The term G(s) can also be derived in terms of Φh(s). The closed-loop transfer function of the system illustrated in Figure 3.5 is given by

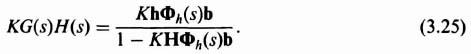

Substituting Eqs. (3.17) and (3.22) into Eq. (3.23), we obtain the expression

Combining Eqs. (3.22) and (3.24), the open-loop transfer function is found to be given by

Figure 3.5 An equivalent model of Figure 3.3.

Let us compare Eqs. (3.17) and (3.22)–(3.25) in order to draw conclusions regarding G(s), H(s), the open-loop transfer function KG(s)H(s), and the closed-loop transfer function C(s)/R(s). These characteristics will be important for designing systems using pole placement with linear-state-variable feedback in the following section. Based on these five equations, we can state the following properties:

- The poles of KG(s)H(s) are the poles of G(s).

- The zeros of C(s)/R(s) are the zeros of G(s).

- The pole-zero excess of C(s)/R(s) must be equal to the pole-zero excess of G(s).
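The relations behind these properties can be sketched as follows. This is a reconstruction under two assumptions: that Figure 3.5 is the usual single-loop model with forward path KG(s) and feedback path H(s), and that H(s) is defined as the ratio of the fed-back signal hX(s) to the output C(s). The equation numbers are inferred from the cross-references in the text.

```latex
\begin{align*}
H(s) &= \frac{hX(s)}{C(s)} && (3.20)\\
H(s) &= \frac{hX(s)}{LX(s)} && (3.21)\\
H(s) &= \frac{h\Phi_h(s)B}{L\Phi_h(s)B} && (3.22)\\
\frac{C(s)}{R(s)} &= \frac{KG(s)}{1 + KG(s)H(s)} && (3.23)\\
G(s) &= \frac{L\Phi_h(s)B}{1 - Kh\Phi_h(s)B} && (3.24)\\
KG(s)H(s) &= \frac{Kh\Phi_h(s)B}{1 - Kh\Phi_h(s)B} && (3.25)
\end{align*}
```

In this form, G(s) and KG(s)H(s) share the denominator $1 - Kh\Phi_h(s)B$, which corresponds to the first property. Writing $\Phi_h(s)$ as $\operatorname{adj}[sI - P_h]/\det[sI - P_h]$ shows that C(s)/R(s) and G(s) share the numerator $L\operatorname{adj}[sI - P_h]B$, which corresponds to the second.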

With these properties as a basis, we consider in the following section the design of control systems from the viewpoint of linear-state-variable feedback.

3.3. CONTROLLER DESIGN USING POLE PLACEMENT AND LINEAR-STATE-VARIABLE FEEDBACK TECHNIQUES

The preceding section has indicated several important relationships between open-loop and closed-loop transfer functions. This is very important in the design of control systems for the case where the closed-loop transfer function is specified and it is desired to determine the open-loop transfer function. A typical problem might specify the desired velocity constant; then use is made of Eq. (C.29) in Appendix C which gave the velocity constant in terms of the closed-loop poles and zeros. The problem is to determine the resulting linear-state-variable feedback system.

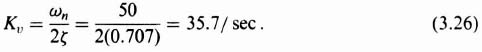

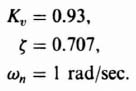

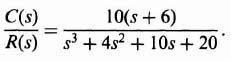

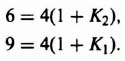

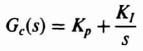

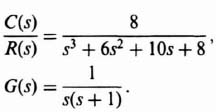

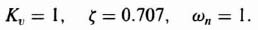

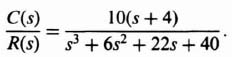

Let us illustrate the procedure by considering the following problem. It is desired that the closed-loop characteristics of a unity-feedback control system be given by the following parameters:

![]()

What form of closed-loop transfer function will satisfy these requirements? Let us first try a simple quadratic control system having a pair of complex-conjugate poles. From Eq. (C.31) of Appendix C, such a system has a velocity constant given by

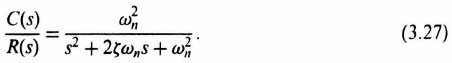

Therefore, a simple quadratic control system having a pair of complex-conjugate poles will satisfy these specifications. From Eq. (B.18) of Appendix B,

![]()

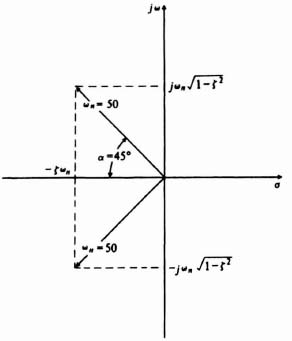

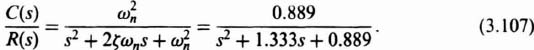

For a damping ratio of 0.707, α = 45° and the relations among the complex-conjugate poles, ωn and ζ are illustrated in Figure 3.6. Therefore, the closed-loop control system is given by

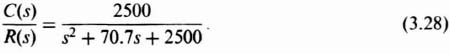

By substituting ζ = 0.707 and ωn = 50 into Eq. (3.27), we obtain the following desired closed-loop transfer function:

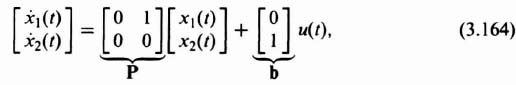

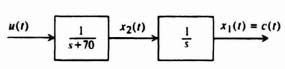

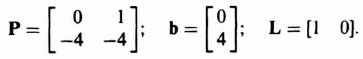

Let us assume that the open-loop process that is being controlled is illustrated in Figure 3.7. The corresponding state-variable representation is readily found to be

where

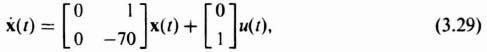

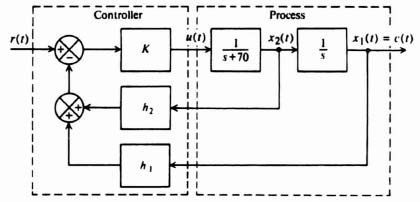

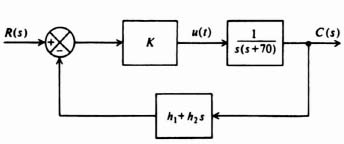

The resulting linear-state-variable feedback representation is illustrated in Figure 3.8. This feedback represenation can be simplified by the configuration illustrated

Figure 3.7 Open-loop process to be controlled.

Figure 3.8 State-variable feedback representation of system.

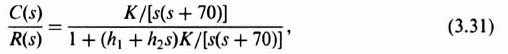

in Figure 3.9. The resulting closed-loop transfer function is given by

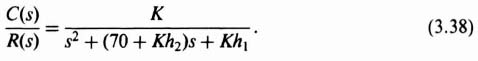

which can be reduced to the following expression:

The values of K, h1, and h2 can be found from Eqs. (3.28) and (3.32). The following set of simultaneous equations results:

We have three equations and three unknowns. Solving, we find that h1 = 1, K = 2500, and h2 = 2.8 × 10−4. The final step is to draw the root locus and examine the relative stability, and the sensitivity as a function of slight gain variations. For this simple system, the final step is not necessary.

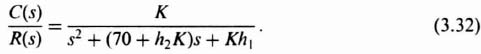

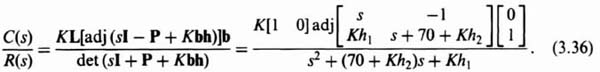

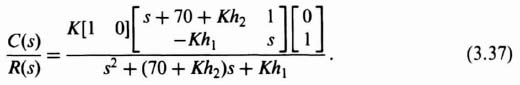

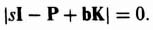

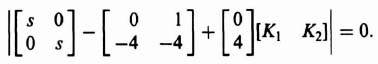

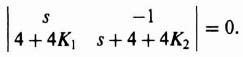

Figure 3.9 Equivalent configuration of Figure 3.8.

Although this simple example has been solved using block diagrams and transfer functions, it could also have been solved using the matrix-algebra approach. To illustrate this, let us pick up this problem from Eq. (3.28), which is the desired closed-loop transfer function. We want to determine the closed-loop transfer function for the linear-state-variable-feedback control system using Eq. (3.18) and knowledge of the P and B matrices from Eq. (3.29), and the matrix L from Eq. (3.30) as follows:

Simplifying Eq. (3.36), we obtain the following:

Simplifying Eq. (3.37) results in the following expression for the closed-loop transfer function of the system:

Equation (3.38) is identical to the closed-loop transfer function of Eq. (3.32), which was obtained from the block diagram shown in Figure 3.8. Therefore, we repeat the process of equating like terms in Eqs. (3.38) and (3.28). The resulting three simultaneous equations are identical to Eqs. (3.33) through (3.35), and the resulting parameters of K = 2500, h1 = 1, and h2 = 2.8 × 10−4 are the same as found before.

With this fundamental example as a basis, the general pole placement design procedure can be formulated as follows:

1. Determine the desired closed-loop transfer function based on the discussion of Appendix C.

2. Determine the representation of the process to be controlled.

3. Represent the closed-loop system in terms of an equivalent linear-state-variable-feedback configuration.

4. Determine the closed-loop transfer function C(s)/R(s) from the equivalent model in terms of K and h.

5. Equate the C(s)/R(s) expressions from Steps 1 and 4 and determine K and h.*

6. Plot the resulting root locus of KG(s)H(s) and evaluate the relative stability and sensitivity as a function of gain variations.
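The coefficient-matching part of this procedure can be sketched numerically. The Python example below is a minimal illustration, not the book's worked solution. The process G(s) = 1/[s(s + 70)] is a hypothetical choice (the process of Figure 3.7 is not reproduced here), and the variable names are assumptions. The desired closed-loop form uses ζ = 0.707 and ωn = 50 as in the first example.

```python
import numpy as np

# Hypothetical process (an assumption, not taken from Figure 3.7):
# G(s) = 1 / (s (s + 70)) in phase-variable form, x1 = c, x2 = dc/dt.
P = np.array([[0.0, 1.0],
              [0.0, -70.0]])
B = np.array([[0.0],
              [1.0]])
L = np.array([[1.0, 0.0]])

# Step 1: desired closed-loop transfer function
#   C(s)/R(s) = wn^2 / (s^2 + 2 zeta wn s + wn^2)
zeta, wn = 0.707, 50.0
desired_den = np.array([1.0, 2.0 * zeta * wn, wn**2])

# Steps 4 and 5: for this process and u = K (r - h1 x1 - h2 x2),
#   C(s)/R(s) = K / (s^2 + (70 + K h2) s + K h1),
# so equating coefficients gives three equations in K, h1, h2.
K = desired_den[2]                  # numerator:   K = wn^2
h1 = desired_den[2] / K             # s^0 term:    K h1 = wn^2
h2 = (desired_den[1] - 70.0) / K    # s^1 term:    70 + K h2 = 2 zeta wn
h = np.array([[h1, h2]])

# Check with the closed-loop system matrix P_h = P - K B h.
P_h = P - K * (B @ h)
closed_loop_den = np.poly(P_h)              # coefficients of det(sI - P_h)
closed_loop_poles = np.linalg.eigvals(P_h)

# DC gain K L (sI - P_h)^-1 B evaluated at s = 0.
dc_gain = (K * L @ np.linalg.inv(-P_h) @ B).item()

print("K =", K, " h1 =", h1, " h2 =", h2)
print("closed-loop denominator:", closed_loop_den)
print("closed-loop poles:", closed_loop_poles)
print("dc gain:", dc_gain)
```

For this assumed process the gains come out as K = 2500, h1 = 1, and h2 of about 2.8 × 10−4. Step 6 (the root locus of KG(s)H(s)) is not covered by this sketch.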

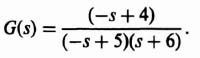

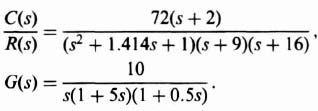

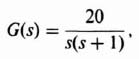

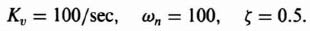

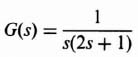

Let us apply this pole placement procedure next to the following more complex example. The problem concerns the control of a process in a unity-feedback closed-loop system whose transfer function is given by

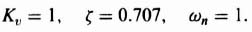

It is assumed that the transient response of the system is governed by a pair of dominant complex-conjugate poles, and that the following parameters are desired:

What should the closed-loop transfer function be? From Eq. (3.26), a pair of complex-conjugate poles in the denominator would only have a velocity constant given by

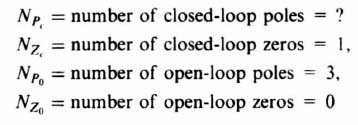

Therefore, a simple pair of complex-conjugate poles is inadequate to meet the velocity constant requirement of 0.93. By examining Eq. (C.29), we conclude that a zero Z must be added to the closed-loop transfer function. How many poles should the closed-loop system have? Because

where

therefore,

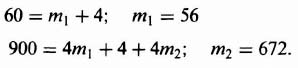

and

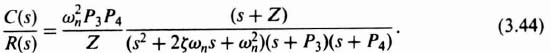

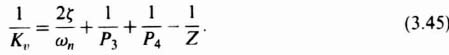

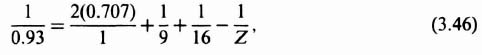

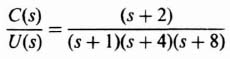

Since the resulting unity-feedback, closed-loop transfer function has to have one zero and four poles, it has the following general form:

The value of the zero Z can be found from Eq. (5.35) as follows:

Due to external overall system factors in which this feedback system is to operate, it is assumed that the poles at P3 and P4 are specified to occur at 9 and 16, respectively. Therefore,

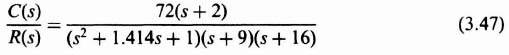

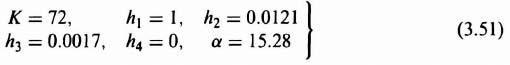

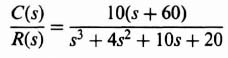

so that Z = 2, and the desired closed-loop transfer function is given by

or

Because the zeros of G(s) must be the same as those of C(s)/R(s), we must also add the factor (s + 2) to the numerator of G(s). Then, to satisfy Eq. (3.41), we must add a pole factor (s + α) to the denominator of G(s). The resulting compensating network to be added to G(s) is given by

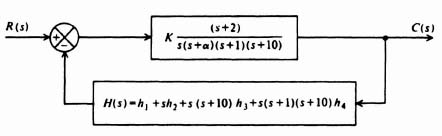

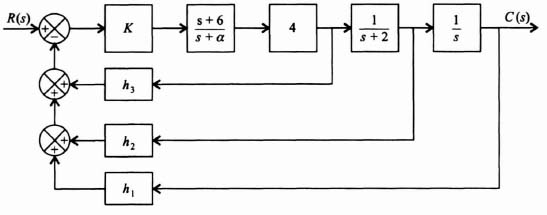

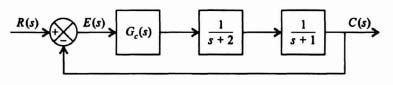

where α is a pole of the open-loop transfer function which is to be determined. The resulting linear-state-variable feedback system is illustrated in Figure 3.10. The problem remaining is to select the values of K, α, and h.
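The choice of the zero can be checked numerically. The sketch below assumes that the relation between the velocity constant and the closed-loop poles and zeros (the role played by Eq. (C.29) in the text) is 1/Kv = Σ(1/Pj) − Σ(1/Zj) for poles at s = −Pj and zeros at s = −Zj, which holds for a unity-feedback system with zero steady-state position error. The values ζ = 0.7 and ωn = 1 are assumptions, chosen because they reproduce the quoted Kv of 0.93, Z = 2, and the gain K = 72 mentioned later for the root locus. The poles P3 = 9 and P4 = 16 are interpreted here as lying at s = −9 and s = −16.

```python
import numpy as np

# Assumed specification values (inferred, not quoted from the text).
zeta, wn = 0.7, 1.0      # dominant complex-conjugate pair
P3, P4 = 9.0, 16.0       # additional poles at s = -9 and s = -16
Z = 2.0                  # closed-loop zero at s = -2

# 1/Kv = sum(1/P_j) - sum(1/Z_j); for the complex pair the two
# reciprocals add up to 2*zeta/wn.
inv_Kv = 2.0 * zeta / wn + 1.0 / P3 + 1.0 / P4 - 1.0 / Z
Kv_formula = 1.0 / inv_Kv

# Cross-check from the transfer function itself.
# Closed loop: T(s) = K (s + Z) / ((s^2 + 2 zeta wn s + wn^2)(s + P3)(s + P4)),
# with K chosen so that T(0) = 1.
den = np.polymul(np.polymul([1.0, 2.0 * zeta * wn, wn**2], [1.0, P3]), [1.0, P4])
K = den[-1] / Z
num = K * np.array([1.0, Z])

# Equivalent unity-feedback open loop: G(s) = T / (1 - T) = num / (den - num).
open_den = np.polysub(den, num)
# open_den has a zero constant term (one pole at s = 0), so
# Kv = lim_{s->0} s G(s) = num(0) / (coefficient of s in open_den).
Kv_direct = num[-1] / open_den[-2]

print("K =", K)
print("Kv from pole-zero formula:", Kv_formula)
print("Kv from open-loop limit:  ", Kv_direct)
```

Without the zero, the same formula gives Kv = ωn/(2ζ), about 0.71 for these assumed values, which is why a complex-conjugate pair alone cannot meet the requirement.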

Figure 3.10 State-variable feedback representation of system.

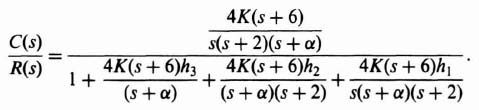

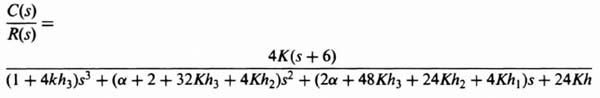

An equivalent block diagram of this system is illustrated in Figure 3.11. The resulting closed-loop transfer function from this equivalent model is given by

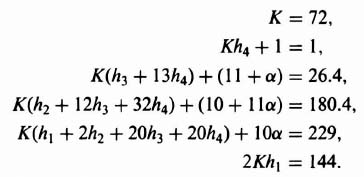

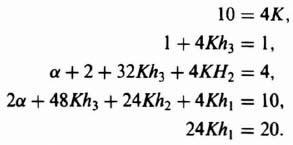

Equating the two forms of C(s)/R(s) given by Eqs. (3.48) and (3.50), the following set of equations is obtained:

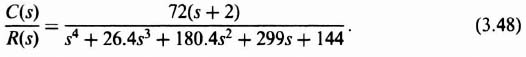

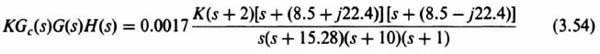

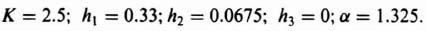

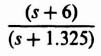

Notice that we have six simultaneous equations with six unknowns (K, h1, h2, h3, h4, and α). Solving these equations, we obtain the following expressions:

From Eq. (3.49), the resulting compensation network, Gc(s), is given by

which is a phase-lead network.

It is important to emphasize that α could have turned out to be negative for a different set of specifications. This would be undesirable, because it would result in a zero in the right-half plane; this system would be unstable. In other cases, the system might be conditionally stable.

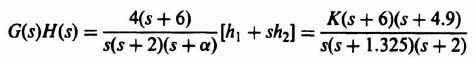

Figure 3.11 Equivalent block diagram for system illustrated in Figure 3.10.

The results of this pole placement example can be evaluated most conveniently on a root-locus plot. To obtain the root locus of the compensated system, the open-loop transfer function will be obtained. For the values of the parameters found in Eq. (3.51), H(s) results in the following:

Combining Eqs. (3.39), (3.52), and (3.53) we obtain the following transfer function for the open-loop system:

The resulting root locus is plotted in Figure 3.12. Observe that the resulting root locus is stable for all values of K from zero to infinity. The locations of the dominant complex-conjugate roots for K = 72 are indicated.

It is important to emphasize again that the discussion of linear-state-variable feedback in this and the preceding section has assumed that all of the state variables are accessible. This is not always the case. This is analyzed further in Sections 3.6 and 3.7.

3.4. CONTROLLABILITY

The presentation of linear-state-variable feedback in Sections 3.2 and 3.3 assumed that all of the states are observable and measurable, and available to accept control signals (controllable). The concepts of controllability [2–4] and observability also play a very important role in optimal control theory (presented in the accompanying volume). Before designing a control system, we must determine whether it is controllable and whether its states are observable, since the conditions on controllability and observability often govern the existence of a solution to an optimal control system. Kalman [2,3] first introduced the concepts of controllability and observability in 1960. These concepts are basic in modern optimal control theory. This section develops mathematical tests to determine controllability, and the following section presents mathematical tests for determining observability.

Figure 3.12 Root locus for the system of Figure 3.11 with the parameters of Eq. (3.51)

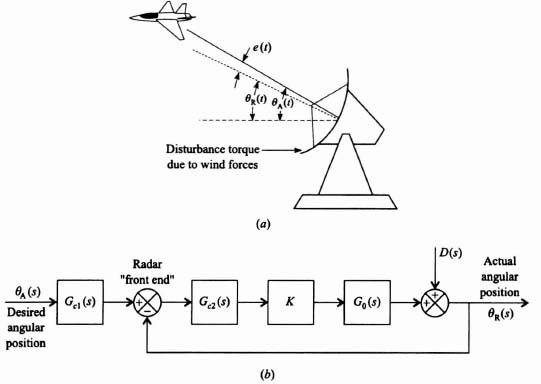

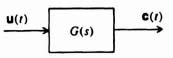

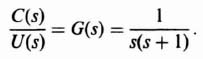

In order to introduce the concept of controllability, let us consider the simple open-loop system illustrated in Figure 3.13. A system is completely controllable if there exists a control which transfers every initial state at t = t0 to any final state at t = T for all t0, and T. Qualitatively, this means that the system G(s) is controllable if every state variable of G can be affected by the input signal u(t). However, if one (or several) of the state variables is (or are) not affected by u(t), then this (or these) state variable(s) cannot be controlled in a finite amount of time by u(t) and the system is not completely controllable.

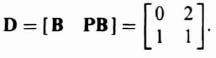

A. Controllability by Inspection

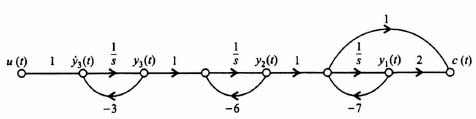

As an example of a system which is not completely controllable, let us consider the signal-flow diagram illustrated in Figure 3.14. This system contains four states, only two of which are affected by u(t). This input only affects the states x1(t) and x2(t). It has no effect on x3(t) and x4(t). Therefore, x3(t) and x4(t) are uncontrollable. This means that it is impossible for u(t) to change x3(t) from initial state x3(0) to final state x3(T) in a finite time interval T and the system is not completely controllable.

B. The Controllability Matrix

Let us now consider this problem more precisely and establish a mathematical criterion for determining whether a system is controllable. We limit our discussion to linear constant systems. Assume that the system is described by

Figure 3.13 Open-loop system containing several inputs and outputs.

Figure 3.14 Signal-flow graph of a system that is not completely controllable.

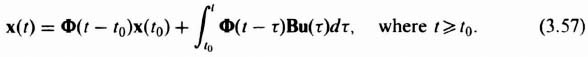

The solution of Eq. (3.55) can be expressed as Eq. (2.265)‡:

Let us assume that the desired final state of our system at t = tf is zero:

Using Eqs. (2.259)‡ and (2.261)‡, we can write Eq. (3.57) as

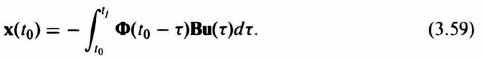

The state-transition matrix can be expressed from Eq. (2.322)‡ as

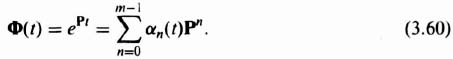

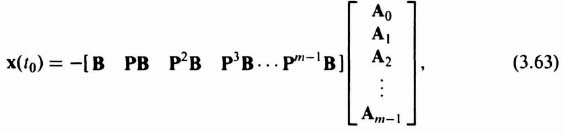

Here, x is an m × 1 vector, P is an m × m matrix, u is an r × 1 vector, B is an m × r matrix, and αn(t) is a scalar function of t. (This form results from application of the Cayley–Hamilton theorem.) Substituting Eq. (3.60) into Eq. (3.59), we obtain the following expression for x(t0):

Because the matrices P and B are not functions of τ, we can rewrite Eq. (3.61) as

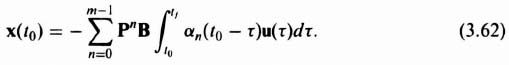

Equation (3.62) can be rewritten as

where

If we define

where D, the controllability matrix, is an m × mr matrix and A is an mr × 1 vector, then Eq. (3.63) becomes

For a given initial state x(t0), the input u can be found to drive the state to x(tf) = 0 for a finite time interval tf − t0 if Eq. (3.67) has a solution. A unique solution occurs only if there is a set of m linearly independent column vectors in the matrix D. If u is a scalar, then D is an m × m square matrix, and Eq. (3.67) represents a set of m linearly independent equations which have a solution if D is nonsingular or the determinant of D is not zero. The controllability criterion thus states that the system of Eqs. (3.55) and (3.56) is completely controllable if D [see Eq. (3.65)] contains m linearly independent column vectors or, if u is a scalar, D is nonsingular.
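The derivation leading to this criterion can be sketched as follows. It is a reconstruction from the prose, assuming the standard solution of the linear state equation and the Cayley–Hamilton expansion of the state-transition matrix. The equation numbers are inferred from the cross-references in the text and should be checked against the original equations.

```latex
\begin{align*}
\dot{x}(t) &= Px(t) + Bu(t) && (3.55)\\
c(t) &= Lx(t) && (3.56)\\
x(t) &= \Phi(t - t_0)\,x(t_0) + \int_{t_0}^{t} \Phi(t - \tau)\,B\,u(\tau)\,d\tau && (3.57)\\
x(t_f) &= 0 && (3.58)\\
x(t_0) &= -\int_{t_0}^{t_f} \Phi(t_0 - \tau)\,B\,u(\tau)\,d\tau && (3.59)\\
\Phi(t) &= e^{Pt} = \sum_{n=0}^{m-1} \alpha_n(t)\,P^n && (3.60)\\
x(t_0) &= -\int_{t_0}^{t_f} \sum_{n=0}^{m-1} \alpha_n(t_0 - \tau)\,P^n B\,u(\tau)\,d\tau && (3.61)\\
x(t_0) &= -\sum_{n=0}^{m-1} P^n B \int_{t_0}^{t_f} \alpha_n(t_0 - \tau)\,u(\tau)\,d\tau && (3.62)\\
x(t_0) &= -\sum_{n=0}^{m-1} P^n B\,A_n && (3.63)\\
A_n &= \int_{t_0}^{t_f} \alpha_n(t_0 - \tau)\,u(\tau)\,d\tau && (3.64)\\
D &= [\,B \;\; PB \;\; P^2B \;\; \cdots \;\; P^{m-1}B\,] && (3.65)\\
A &= [\,A_0^T \;\; A_1^T \;\; \cdots \;\; A_{m-1}^T\,]^T && (3.66)\\
x(t_0) &= -DA && (3.67)
\end{align*}
```

Equation (3.59) follows from Eq. (3.57) evaluated at $t = t_f$ with $x(t_f) = 0$, using $\Phi^{-1}(t) = \Phi(-t)$ and $\Phi(t_1)\Phi(t_2) = \Phi(t_1 + t_2)$.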

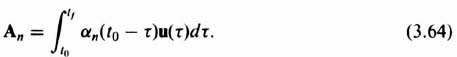

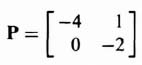

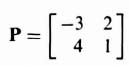

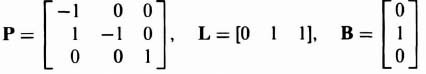

In order to illustrate this mathematical controllability concept, consider a second-order system where

and

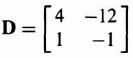

Then, from Eq. (3.65),

The resulting matrix D is singular (its determinant is zero) and the system is therefore not completely controllable.

As a second example, consider a second-order system where

and

Then, from Eq. (3.65)

The resulting matrix D is nonsingular and the system, therefore, is completely controllable.
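The controllability test lends itself to a short numerical check. The following Python sketch builds D = [B PB ⋯ P^(m−1)B] and tests whether it contains m linearly independent columns. The function names and the two second-order systems are assumptions made for illustration; they are not the matrices of the two examples above.

```python
import numpy as np

def controllability_matrix(P, B):
    """Build D = [B, PB, ..., P^(m-1) B] for an m-state linear constant system."""
    m = P.shape[0]
    blocks = [B]
    for _ in range(m - 1):
        blocks.append(P @ blocks[-1])   # next block is P times the previous one
    return np.hstack(blocks)

def is_completely_controllable(P, B):
    """True when D contains m linearly independent column vectors."""
    D = controllability_matrix(P, B)
    return np.linalg.matrix_rank(D) == P.shape[0]

# Illustrative systems (assumed, not taken from the text).
# System 1: two decoupled modes; the input drives only the first one.
P1 = np.array([[-1.0, 0.0],
               [0.0, -2.0]])
B1 = np.array([[1.0],
               [0.0]])

# System 2: phase-variable form; the input enters the last state equation.
P2 = np.array([[0.0, 1.0],
               [-2.0, -3.0]])
B2 = np.array([[0.0],
               [1.0]])

for name, P, B in [("system 1", P1, B1), ("system 2", P2, B2)]:
    D = controllability_matrix(P, B)
    print(name, "D =", D.tolist(),
          "det =", np.linalg.det(D),
          "completely controllable:", is_completely_controllable(P, B))
```

A rank test is used rather than a determinant so that the same function also covers the multiple-input case, where D is m × mr and not square.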

3.5. OBSERVABILITY

In order to introduce the concept of observability [2–4], let us again consider the simple open-loop system illustrated in Figure 3.13. A system is completely observable if, given the control and the output over the interval t0 ≤ t ≤ T, one can determine the initial state x(t0). Qualitatively, the system G is observable if every state variable of G affects some of the outputs in c. It is very often desirable to determine information regarding the system states based on measurements of c. However, if we cannot observe one or more of the states from the measurements of c, then the system is not completely observable. We had assumed the systems were observable in our discussion of pole placement using linear-state-variable feedback in Sections 3.2 and 3.3.

A. Observability by Inspection

As an example of a system which is not completely observable, let us consider the signal-flow diagram illustrated in Figure 3.15. This system contains four states, only two of which are observable. The states x3(t) and x4(t) are not connected to the output c(t) in any manner. Therefore, x3(t) and x4(t) are not observable and the system is not completely observable.

B. The Observability Matrix

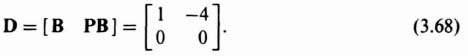

Let us now consider this problem more precisely and establish a mathematical criterion for determining whether a system is completely observable. Again, we limit our discussion to linear constant systems of the form

where x is an m × 1 vector, P is an m × m matrix, u is an r × 1 vector, B is an m × r matrix, c is a p × 1 vector, and L is a p × m matrix. The solution of Eq. (3.69) is given by [see Eq. (3.57)]

Figure 3.15 Signal-flow graph of a system that is not completely observable.

It will now be shown that observability depends on the matrices P and L. Substituting Eq. (3.71) into Eq. (3.70), we obtain

From the definition of observability we can see that the observability of x(t0) depends on the term LΦ(t − t0)x(t0). Therefore, the output c(t) when u = 0 is given by

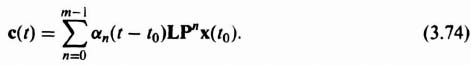

Substituting Eq. (3.60) into Eq. (3.73), we obtain the following expression for the output c(t):

Equation (3.74) indicates that if the output c(t) is known over the time interval t0 ≤ t ≤ T, then x(t0) is uniquely determined from this equation if x(t0) is a linear combination of $(L_j P^n)^T$ for n = 0, 1, 2, …, m − 1, and j = 1, 2, 3, …, p. The matrix $L_j$ is the 1 × m matrix formed by the elements of the jth row of L. Because $(L_j P^n)^T = (P^T)^n L_j^T$, we let U be the m × mp matrix defined by

The observability criterion states that the system is completely observable if there is a set of m linearly independent column vectors in the observability matrix U.

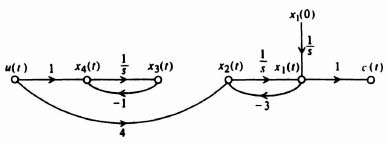

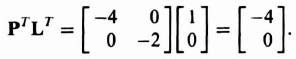

In order to illustrate the observability concept mathematically, consider a second-order system where

and

![]()

Therefore,

and

Substituting these values into Eq. (3.75), we obtain

Because U is singular, the system is not completely observable.

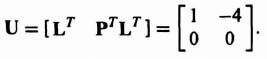

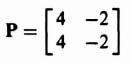

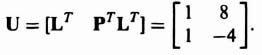

As a second example, consider the second-order system where

and

![]()

Therefore,

and

Substituting these values into Eq. (3.75), we obtain

Because U has two independent columns, the system is completely observable.
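The observability test can be checked numerically in the same way. The Python sketch below assumes that the observability matrix of Eq. (3.75) has the form U = [Lᵀ PᵀLᵀ ⋯ (Pᵀ)^(m−1)Lᵀ], which is consistent with the column description given above. The function names and the two second-order systems are assumptions made for illustration; they are not the matrices of the two examples.

```python
import numpy as np

def observability_matrix(P, L):
    """Build U = [L^T, P^T L^T, ..., (P^T)^(m-1) L^T] for an m-state system."""
    m = P.shape[0]
    blocks = [L.T]
    for _ in range(m - 1):
        blocks.append(P.T @ blocks[-1])   # next block is P^T times the previous one
    return np.hstack(blocks)

def is_completely_observable(P, L):
    """True when U contains m linearly independent column vectors."""
    U = observability_matrix(P, L)
    return np.linalg.matrix_rank(U) == P.shape[0]

# Illustrative systems (assumed, not taken from the text).
# System 1: two decoupled modes; the output sees only the first one.
P1 = np.array([[-1.0, 0.0],
               [0.0, -2.0]])
L1 = np.array([[1.0, 0.0]])

# System 2: phase-variable form; the output is the first state.
P2 = np.array([[0.0, 1.0],
               [-2.0, -3.0]])
L2 = np.array([[1.0, 0.0]])

for name, P, L in [("system 1", P1, L1), ("system 2", P2, L2)]:
    U = observability_matrix(P, L)
    print(name, "U =", U.tolist(),
          "completely observable:", is_completely_observable(P, L))
```

The structure mirrors the controllability test with P replaced by Pᵀ and B replaced by Lᵀ.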

3.6. ACKERMANN'S FORMULA FOR DESIGN USING POLE PLACEMENT [5–7]

In addition to the method of matching the coefficients of the desired characteristic equation with the coefficients of det (sI − Ph) as given by Eq. (3.19), Ackermann has developed a competing method. The pole placement method using the matching of coefficients of the desired characteristic equation with the coefficients of Eq. (3.19) is very useful for control systems which are represented in phase-variable form, where phase variable refers to systems where each subsequent state variable is defined as the derivative of the previous state variable. Some control systems require feedback from state variables which are not phase variables. Such high-order control systems can lead to very complex calculations for the feedback gains. Ackermann's method simplifies this problem by transforming the control system to phase variables, determining the feedback gains, and transforming the designed control system back to its original state-variable representation.

Let us represent a control system which is not represented in phase-variable form by the following:

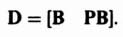

We will assume that the controllability matrix [see Eq. (3.65)] can be represented by

Subscript y is used to designate the original, non-phase-variable, controllability matrix. We will next assume that the control system can be transformed into the phase-variable representation using the following transformation:

Substituting Eq. (3.79) into Eq. (3.76) and Eq. (3.77), we obtain:

Using the transformation of Eq. (3.79), the controllability matrix for the transformed system defined by Eqs. (3.80) and (3.81) is:

The subscript x is used to designate the phase-variable form of the transformed control system. Equation (3.82) can be simplified to

Therefore, substituting Eq. (3.78) into Eq. (3.83), we find that

The transformation matrix A can be found from

Therefore, the transformation matrix A can be determined from the controllability matrices defined by Eqs. (3.65) and (3.83). Once the control system is transformed to the phase-variable form, the feedback gains h can be determined as described in Section 3.2.
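The transformation steps above can be sketched as follows. This is a reconstruction from the prose. The symbol y for the original state vector and the use of unsubscripted P, B, and L for the original system matrices are assumed notation, and the equation numbers are inferred from the cross-references in the text.

```latex
\begin{align*}
\dot{y}(t) &= Py(t) + Bu(t) && (3.76)\\
c(t) &= Ly(t) && (3.77)\\
D_y &= [\,B \;\; PB \;\; P^2B \;\; \cdots\,] && (3.78)\\
y(t) &= Ax(t) && (3.79)\\
\dot{x}(t) &= A^{-1}PA\,x(t) + A^{-1}B\,u(t) && (3.80)\\
c(t) &= LA\,x(t) && (3.81)\\
D_x &= [\,A^{-1}B \;\; (A^{-1}PA)(A^{-1}B) \;\; (A^{-1}PA)^2(A^{-1}B) \;\; \cdots\,] && (3.82)\\
D_x &= A^{-1}[\,B \;\; PB \;\; P^2B \;\; \cdots\,] && (3.83)\\
D_x &= A^{-1}D_y && (3.84)\\
A &= D_y D_x^{-1} && (3.85)
\end{align*}
```

The simplification from Eq. (3.82) to Eq. (3.83) uses $(A^{-1}PA)^n A^{-1} = A^{-1}P^n$, so every block of $D_x$ carries a common left factor $A^{-1}$.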

Returning to Eq. (3.4) to represent u(t) for the control system in phase-variable form,

the following is obtained by substituting Eq. (3.86) into Eq. (3.80):

Equation (3.87) can be simplified to the following phase-variable state equation:

The output equation remains as shown in Eq. (3.81):

Because Eqs. (3.88) and (3.89) are in phase-variable form, the rules for pole placement developed in Section 3.2 for phase-variable systems are valid for this representation. We now have to transform Eqs. (3.88) and (3.89) back to the original state and output equation representation by using the transformation provided by Eq. (3.79):

Substituting Eq. (3.90) into Eq. (3.88), we obtain the following:

Therefore, Eq. (3.91) reduces to the following state equation:

The output equation is found by substituting Eq. (3.79) in Eq. (3.89):

By comparing Eq. (3.92) with Eqs. (3.8) and (3.10), we find that the state-variable feedback constants for the original system are

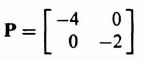

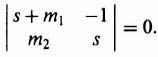

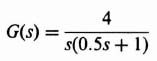
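As a compact summary of the derivation above, the chain of relations can be written out explicitly. This is a sketch under one stated assumption: that the transformation of Eq. (3.79) has the form y = Ax, where y is the original state vector and x is the phase-variable state vector. The symbols P_y, b_y, P_x, b_x, h_y, and h_x are labels introduced here for illustration and may differ from the notation of the numbered equations.

```latex
\begin{aligned}
\mathbf{y} = \mathbf{A}\mathbf{x}
  \quad &\Rightarrow\quad
  \mathbf{P}_x = \mathbf{A}^{-1}\mathbf{P}_y\mathbf{A},
  \qquad \mathbf{b}_x = \mathbf{A}^{-1}\mathbf{b}_y \\[4pt]
\mathbf{D}_x
  = \begin{bmatrix}\mathbf{b}_x & \mathbf{P}_x\mathbf{b}_x & \cdots & \mathbf{P}_x^{\,n-1}\mathbf{b}_x\end{bmatrix}
  = \mathbf{A}^{-1}\mathbf{D}_y
  \quad &\Rightarrow\quad
  \mathbf{A} = \mathbf{D}_y\,\mathbf{D}_x^{-1} \\[4pt]
\mathbf{h}_x\mathbf{x} = \mathbf{h}_x\mathbf{A}^{-1}\mathbf{y}
  \quad &\Rightarrow\quad
  \mathbf{h}_y = \mathbf{h}_x\mathbf{A}^{-1}
\end{aligned}
```

Under this assumption, the gains designed in phase-variable form are carried back to the original states by a single multiplication by the inverse of A, which is why both controllability matrices must be nonsingular.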

A. Example Applying Ackermann's Formula for Design using Pole Placement

We wish to apply the pole placement concepts using Ackermann's design formula to a system that uses linear-state-variable feedback. The system specifications require an overshoot of 4.33% and a settling time of 6 sec. The process transfer function is

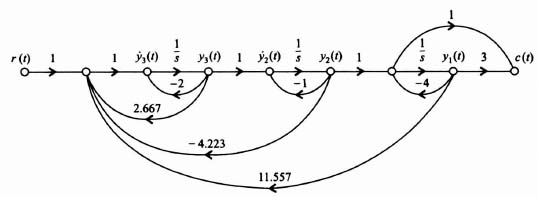

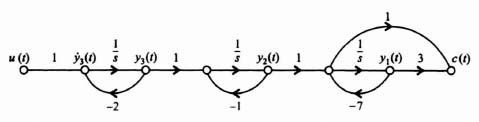

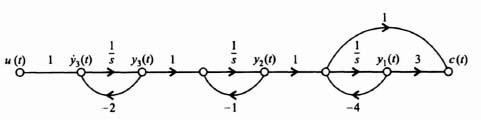

and the control system's signal-flow graph is given in Figure 3.16.

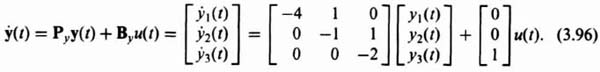

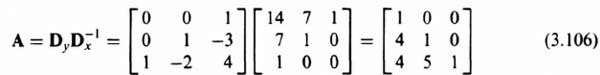

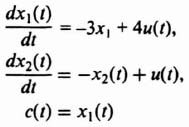

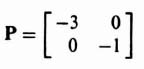

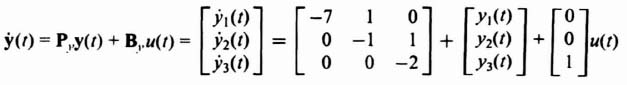

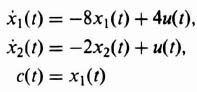

The state equation for the process illustrated in Figure 3.16 is:

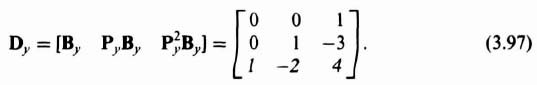

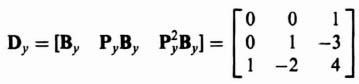

Since we are going to need the controllability matrix Dy to convert this original system to phase-variable form [see Eq. (3.85)], let us compute this controllability matrix for this original system:

The determinant of Dy is −1. Because Dy is nonsingular, the original control system is controllable, as expected from inspection of Figure 3.16.

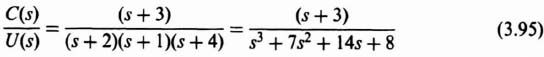

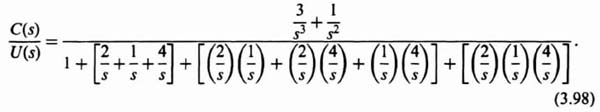

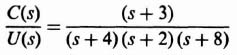

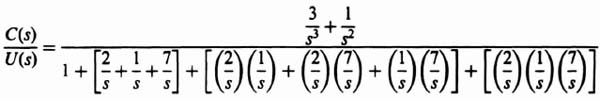

The next step is to transform the original system to the phase-variable form. This can easily be obtained from the transfer function C(s)/U(s), which can be determined from Figure 3.16:

Eq. (3.98) can be reduced to the following [as stated in Eq. (3.95)]:

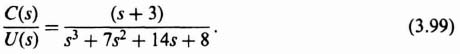

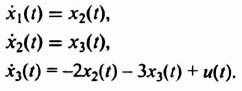

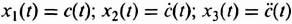

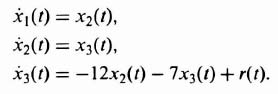

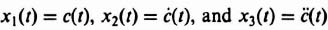

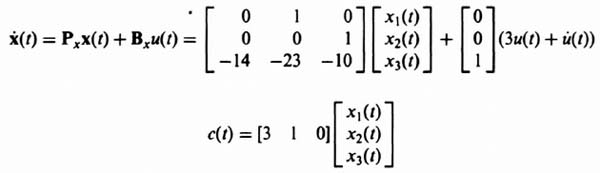

We can determine the phase-variable state equations which represent the transfer function given by Eq. (3.99). Defining x1(t) = c(t), x2(t) = ċ(t), and x3(t) = c̈(t), we obtain the following:

Figure 3.16 Signal-flow graph representation of process whose transfer function is defined in Eq. (3.95).

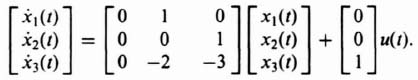

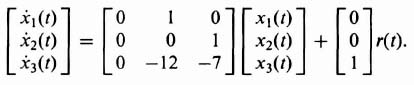

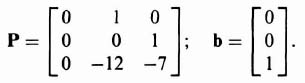

Therefore, the state and output equations in matrix vector form of the phase-variable control system are given by:

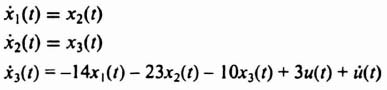

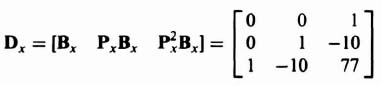

The resulting controllability matrix for the phase-variable form can be obtained from Eqs. (3.65) and (3.103) and is given by:

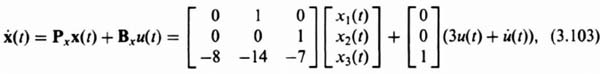

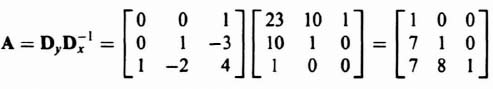

Note that the determinant of Dx is −1, indicating that Dx is nonsingular and that the phase-variable form is also controllable, as expected. The transformation matrix, defined in Eq. (3.85), can be determined from Eqs. (3.97) and (3.105) as follows:

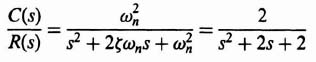

The next step in the procedure is to design the controller (see Figure 3.3) using the phase-variable representation, after which we will transform the design back to the original system by using Eq. (3.106). The 4.33 percent overshoot specified can be obtained from a second-order control system having a damping ratio of 0.707 [see Eq. (B.33)]. The settling time of 6 sec can be obtained from a second-order control system having a damping ratio of 0.707 and ωn = 0.943 [see Eq. (5.41)‡]. Therefore, the following second-order control system can meet the design specifications:

Since it was shown in Section 3.2 that the zeros of the closed-loop system are the zeros of the open-loop system, we can select the third pole at s = −3, which will cancel the zero at s = −3. Therefore, the characteristic equation of the desired closed-loop system is

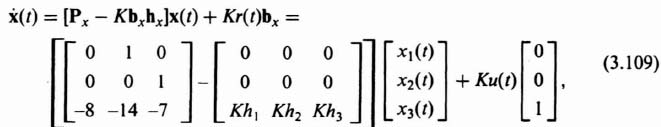
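The arithmetic behind these design values can be checked with a short script. This is a minimal sketch, assuming the standard second-order overshoot relation and the common 2% settling-time rule ts = 4/(ζωn); the referenced Eqs. (B.33) and (5.41) are not reproduced here, so those two formulas are assumptions, and all function names are hypothetical.

```python
import math

def damping_ratio_from_overshoot(overshoot_percent):
    """Invert Mp = exp(-pi*zeta/sqrt(1 - zeta^2)) for an underdamped second-order system."""
    log_mp = math.log(overshoot_percent / 100.0)
    return -log_mp / math.sqrt(math.pi ** 2 + log_mp ** 2)

def natural_frequency_from_settling_time(zeta, settling_time):
    """Assumed 2% settling-time rule: ts = 4 / (zeta * wn)."""
    return 4.0 / (zeta * settling_time)

def poly_multiply(a, b):
    """Multiply two polynomials given as coefficient lists, highest power first."""
    product = [0.0] * (len(a) + len(b) - 1)
    for i, a_i in enumerate(a):
        for j, b_j in enumerate(b):
            product[i + j] += a_i * b_j
    return product

# Specifications quoted in the example: 4.33% overshoot, 6 sec settling time.
zeta = damping_ratio_from_overshoot(4.33)
wn = natural_frequency_from_settling_time(zeta, 6.0)

# Dominant pair s^2 + 2*zeta*wn*s + wn^2 combined with the third pole at s = -3.
dominant_pair = [1.0, 2.0 * zeta * wn, wn ** 2]
third_pole = [1.0, 3.0]
desired_characteristic = poly_multiply(dominant_pair, third_pole)

print("zeta =", round(zeta, 3), " wn =", round(wn, 3))
print("desired characteristic coefficients:", [round(c, 3) for c in desired_characteristic])
```

The coefficient list is ordered from s³ down to the constant term, so it can be compared term by term with the characteristic equation of the state-variable-feedback system.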

The state and output equations for the phase-variable form with linear-state-variable-feedback can be obtained from Eqs. (3.8), (3.9), and (3.10) as follows:

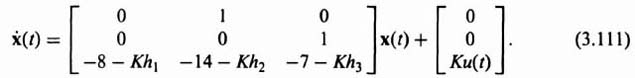

Eq. (3.109) reduces to the following:

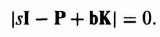

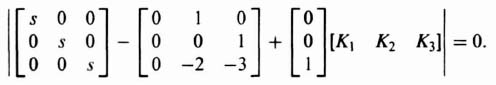

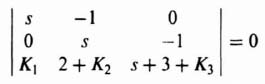

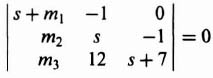

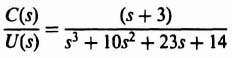

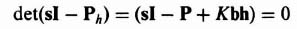

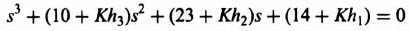

The resulting characteristic equation can be obtained from Eqs. (3.10) and (3.19):

Substituting −(Px − Kbxhx) from Eq. (3.111) into Eq. (3.112), we obtain the following:

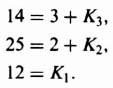

The resulting characteristic equation is given by:

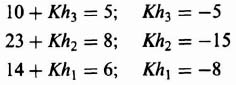

Comparing Eq. (3.114) and Eq. (3.108), we obtain the following three equations:

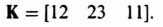

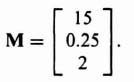

Therefore,

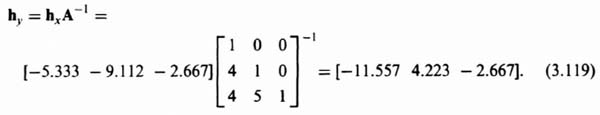

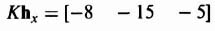

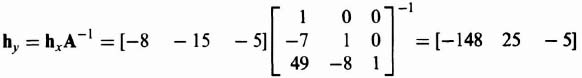

We will now transform Khx as shown in Eq. (3.118) back to the original system using Eq. (3.94) and Eq. (3.106) as follows:

Figure 3.17 Resulting closed-loop control system with linear-state-variable-feedback designed using Ackermann's Formula.

The resulting closed-loop control system with linear-state-variable-feedback is shown in Figure 3.17.

3.7. ESTIMATOR DESIGN IN CONJUNCTION WITH THE POLE PLACEMENT APPROACH USING LINEAR-STATE-VARIABLE FEEDBACK

In the discussion of Sections 3.2 and 3.3 on linear-state-variable feedback, it was assumed that all of the states are observable and measurable, and available to accept control signals (controllable). As Sections 3.4 and 3.5 on controllability and observability have shown, some states of a feedback control system may not always be controllable and/or observable. In some systems, the system may be observable, but all of the states may not be measured due to physical limitations (e.g., chemical process control systems), or it may be due to cost restrictions that limit the use of costly sensors needed to measure all of the states. It is assumed in this section that the system is observable (no part of the system is disconnected physically from the output), but measurements are being made only on some of the states, and we wish to estimate all of the states.

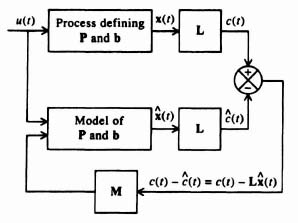

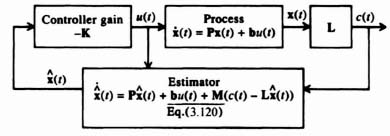

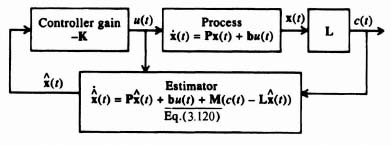

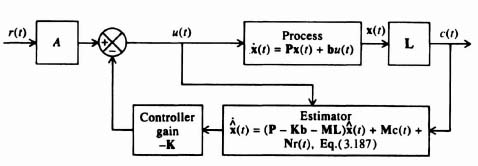

Let us focus attention on the process portion of the system illustrated in Figure 3.3. We wish to use the closed-loop estimator system shown in Figure 3.18 for determining an estimate of the state vector x(t) and output c(t) [8]. This estimator feeds back the difference between the measured output c(t) and the estimated output ĉ(t), which is obtained from a model of the process. Therefore,

where M defines the gain factors mi, which are selected to obtain desirable error characteristics of the state vector x, and x̂(t) represents the estimate of the state x(t):

The error in the state estimate can be derived from

Figure 3.18 An estimator system.

The derivative of the estimation error can be obtained by subtracting the derivative of the state estimate [given by Eq. (3.120)] from the derivative of the state given by the system dynamics:

Subtracting Eq. (3.120) from Eq. (3.123), we obtain the following:

Substituting Eq. (3.2),

into Eq. (3.126), we obtain

Using the definition of error in the state estimate as given by (3.122), Eq. (3.128) reduces to

![]()

or

It is shown in Section 6.2‡ on the state-variable determination of the characteristic equation that a state equation, as given by Eq. (6.15)‡,

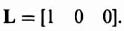

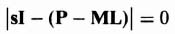

has a characteristic equation given by Eq. (6.22)‡. Similarly, the characteristic equation of Eq. (3.129) is given by

The objective of the control-system engineer is to select M so that P − ML has stable roots, in order for the estimation error to decay to zero. It is also desirable to have the root locations produce a fast transient response so that the estimation error decays to zero very quickly. Notice that the estimation error will converge to zero independent of the forcing function u(t). Therefore, stability is determined from the homogeneous solution to the system (with u(t) = 0), as opposed to its particular solution (with u(t) finite).
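The claim that the estimation error decays regardless of u(t) can be illustrated numerically. The sketch below is not taken from the text: it assumes a hypothetical double-integrator process (P, b, L chosen for illustration), hypothetical estimator gains M that place both estimator roots 20 rad/sec into the left-half plane, the usual estimator form in which the output difference is fed back through M, and a simple forward-Euler integration.

```python
def simulate_estimation_error(input_signal, t_end=2.0, dt=1e-3):
    """Integrate process and estimator with forward Euler; return final x - x_hat."""
    # Hypothetical process (double integrator) and estimator gains.
    P = [[0.0, 1.0], [0.0, 0.0]]
    b = [0.0, 1.0]
    L = [1.0, 0.0]
    M = [40.0, 400.0]          # gives det(sI - P + ML) = s^2 + 40s + 400

    x = [1.0, -0.5]            # true initial state (unknown to the estimator)
    x_hat = [0.0, 0.0]         # estimator starts from a wrong guess

    steps = int(round(t_end / dt))
    for k in range(steps):
        u = input_signal(k * dt)
        c = L[0] * x[0] + L[1] * x[1]              # measured output
        c_hat = L[0] * x_hat[0] + L[1] * x_hat[1]  # estimated output
        output_error = c - c_hat

        x_dot = [P[i][0] * x[0] + P[i][1] * x[1] + b[i] * u for i in range(2)]
        x_hat_dot = [
            P[i][0] * x_hat[0] + P[i][1] * x_hat[1] + b[i] * u + M[i] * output_error
            for i in range(2)
        ]
        x = [x[i] + dt * x_dot[i] for i in range(2)]
        x_hat = [x_hat[i] + dt * x_hat_dot[i] for i in range(2)]

    return [x[i] - x_hat[i] for i in range(2)]

# Same estimator, two different forcing functions.
error_with_zero_input = simulate_estimation_error(lambda t: 0.0)
error_with_step_input = simulate_estimation_error(lambda t: 5.0)
print(error_with_zero_input, error_with_step_input)
```

Because the same u(t) drives both the process and its model, the input cancels in the difference, and only the homogeneous error dynamics remain.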

The design procedure for determining M is to specify the desired location of the estimator roots (e.g., α1, α2,…, αn) from which the desired estimator characteristic equation can be specified:

We can then solve for M by comparing the coefficients in Eqs. (3.131) and (3.132).

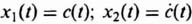

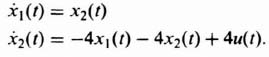

Let us consider the design of M for a simple second-order system whose differential equation is given by

![]()

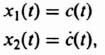

Defining its two states as

we obtain its state equations as

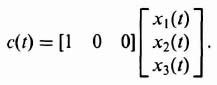

and its output equation as

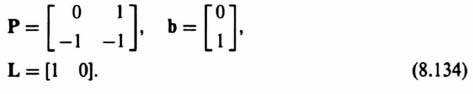

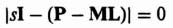

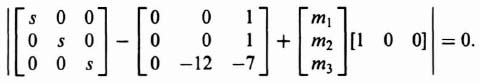

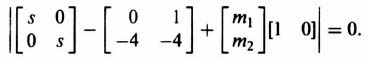

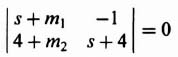

Therefore, for this system, we obtain P, b, and L to be as follows:

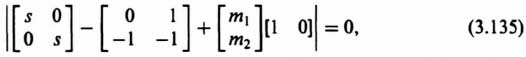

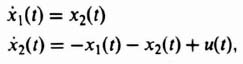

Substituting Eq. (3.134) into Eq. (3.131), we obtain the following:

The resulting characteristic equation is given by

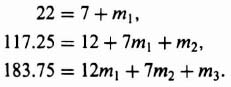

Where do we desire to place the two roots of this second-order estimator? The primary goal is to design the estimator to be very fast compared to the controller. Therefore, let us assume that the controller is critically damped and that the controller's second-order characteristic equation has a pair of real roots located at s = β1 = β2 = −2. Let us assume that we wish the estimator to be critically damped as well, with its two roots located at s = α1 = α2 = −20 in Eq. (3.132), which will ensure a very fast response compared to that of the controller. Therefore, Eq. (3.132) for this example becomes

Comparing the coefficients of Eqs. (3.138) and (3.139), we obtain the following two simultaneous equations to solve:

Solving Eqs. (3.140) and (3.141), we obtain:

Designing a combined compensator of a controller and estimator is illustrated in Section 3.8.
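The coefficient-matching procedure of this section can be sketched in code for the two-state case. This is an illustration under stated assumptions rather than the worked example itself: it assumes the output matrix is L = [1 0], uses a hypothetical double-integrator P as the demonstration process, and takes the desired estimator polynomial to be s² + 40s + 400 (a repeated root 20 rad/sec into the left-half plane). The function names are hypothetical.

```python
def estimator_gains(P, desired):
    """Solve det(sI - P + M L) = s^2 + a1*s + a0 for M = [m1, m2], assuming L = [1, 0]."""
    a1, a0 = desired
    trace = P[0][0] + P[1][1]
    determinant = P[0][0] * P[1][1] - P[0][1] * P[1][0]
    if P[0][1] == 0:
        # With L = [1, 0] the observability matrix [[1, 0], [p11, p12]] is singular.
        raise ValueError("the pair (P, L) is not observable for L = [1, 0]")
    m1 = a1 + trace
    m2 = (a0 - determinant + P[1][1] * m1) / P[0][1]
    return [m1, m2]

def estimator_polynomial(P, M):
    """Return [a1, a0] of det(sI - (P - M L)) with L = [1, 0], as a check."""
    A = [[P[0][0] - M[0], P[0][1]], [P[1][0] - M[1], P[1][1]]]
    return [-(A[0][0] + A[1][1]), A[0][0] * A[1][1] - A[0][1] * A[1][0]]

# Hypothetical process: double integrator, position measured.
P_demo = [[0.0, 1.0], [0.0, 0.0]]
M_demo = estimator_gains(P_demo, (40.0, 400.0))
print("M =", M_demo, " check:", estimator_polynomial(P_demo, M_demo))
```

Substituting the computed gains back into the characteristic polynomial, as the last line does, is a quick way to confirm that the two simultaneous equations were solved correctly.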

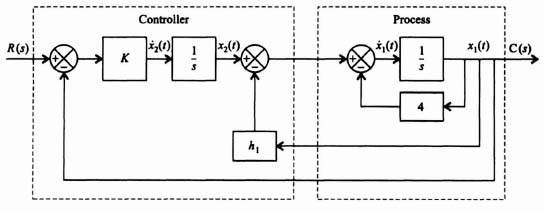

3.8. COMBINED COMPENSATOR DESIGN INCLUDING A CONTROLLER AND AN ESTIMATOR FOR A REGULATOR SYSTEM

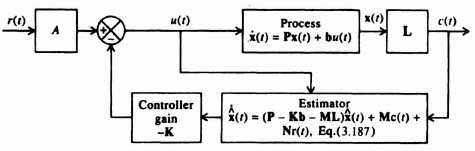

This section considers the combined compensator design of a controller and estimator for a regulator in which the reference input equals zero, and for the case where the reference input is finite. The block diagram of this regulator is shown in Figure 3.19, which combines the concepts illustrated in Figure 3.3 for the controller and Figure 3.18 for the estimator. We wish to determine in this system the effect of using the estimated state vector x̂(t) instead of x(t) on the system dynamics [8].

Figure 3.19 Regulator system (where the reference input r = 0) containing combined controller and estimator.

Let us consider the effect of driving the controller with x̂(t) instead of x(t). From the process dynamics shown in Figure 3.3,

From Figure 3.19, we also know that

Therefore, substituting Eq. (3.143) into Eq. (3.142), we obtain

In terms of the state estimation error defined in Eq. (3.122), Eq. (3.144) becomes

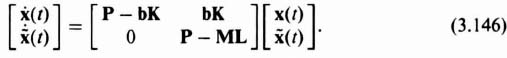

Combining Eqs. (3.145) and (3.129), we obtain an overall equation for the state vector x and its estimation error as follows:

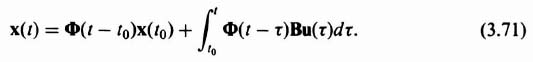

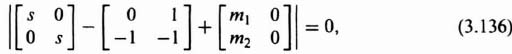

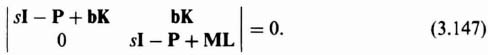

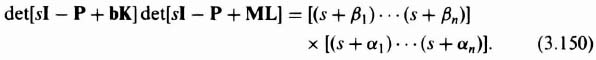

The characteristic equation of this closed-loop combined controller and estimator system can be obtained in a manner similar to that obtained for the estimator alone [see Eq. (3.131)]:

We can write this equation as

The first determinant specifies the characteristic equation of the controller, and the second determinant specifies the characteristic equation of the estimator [which is identical to Eq. (3.131)]. As we did for the case of the estimator alone in Section 3.7, Eq. (3.132), we can now define the combined desirable location of estimator roots

![]()

and controller roots

![]()

and specify the combined estimator and controller's characteristic equation as

We can now simultaneously determine the controller gain K and estimator coefficients M by setting Eqs. (3.148) and (3.149) equal to each other:

Therefore, we can observe from Eq. (3.150) that the roots of the combined controller and estimator are simply the controller roots together with the estimator roots, each found independently [8]. The primary concept to recognize in the combined compensator design is to make the estimator respond much faster than the controller, as we do not want the system's transient response to be limited by that of the estimator (which we can control by proper design).

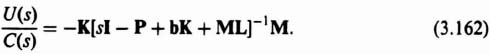

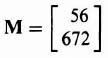

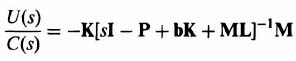

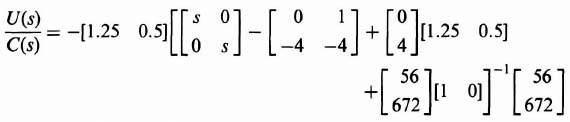

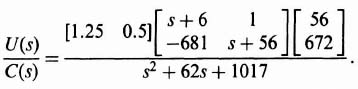

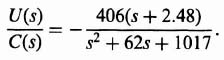

It is useful to compare the modern pole placement method using the state-variable feedback method with the conventional transfer-function method for the design of the compensator as we have done in Section 3.2, when we found the open-loop transfer function KG(s)H(s) in terms of h, Φh(s) and b in Eq. (3.25). We wish to find the transfer function of the combined controller and estimator, U(s)/C(s). Let us reconsider the estimator equation, Eq. (3.120), and incorporate it in the control-law equation, Eq. (3.143), because the controller is part of the compensator:

Simplifying, we obtain

Let us compare Eq. (3.152) with the state equation of the process:

whose characteristic equation we know is given by

using the same reasoning we used in obtaining Eqs. (6.22)‡, (3.19), and (3.131). By comparing Eqs. (3.152) and (3.153), we obtain the characteristic equation of the compensator as follows:

The resulting compensator, considered by itself, is not guaranteed to be stable because the roots of Eq. (3.155) have not been specified in advance. This is similar to our results in Section 3.3, where we found that design using linear-state-variable feedback did not ensure a stable system.

Before finding the transfer function representing the compensator, U(s)/C(s), we first find the transfer function of the process from Eq. (3.153):

Simplifying,

Solving for X(s), we obtain

Since

![]()

and its Laplace transform is

we can combine Eqs. (3.158) and (3.159) to relate the output C(s) and input U(s) of the process.

In order to find the transfer function of the process C(s)/U(s), we assume that the initial condition, x(0), equals zero. Therefore,

By analogy, we compare Eqs. (3.153) [and its resulting transfer function given by Eq. (3.161)] with Eq. (3.152), and conclude that the transfer function of the compensator defined by Eq. (3.152) is given by

When we determine the transfer function U(s)/C(s) using this procedure, we will find that the resulting transfer function will result in a phase-lead network, a phase-lag network, or a phase-lag-lead network.

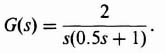

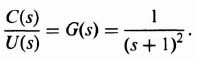

To illustrate this approach for obtaining the transfer function of the compensator, consider a process whose transfer function is given by

Therefore,

![]()

Defining the state variables of this second-order system as

we obtain the state equation to be

and the output equation is

Let us assume that the design specification for the controller is

Therefore, the complex-conjugate roots of the controller are located at −1 ± j1.414 and ac(s) for the controller is given by

We can determine the controller gain K by equating like powers of s from Eq. (3.166) and that part of Eq. (3.148) concerned with the controller:

Substituting for P and b from Eq. (3.164) into Eq. (3.167), we obtain the following:

This simplifies to

from which we obtain the characteristic equation of the controller:

Comparing like coefficients in Eqs. (3.166) and (3.170), we find the controller gains to be K1 = 3 and K2 = 2:

As discussed previously, we desire the estimator to have a much faster response than the controller. Therefore, let us assume the design specification of the estimator to be

Therefore, the complex-conjugate roots of the estimator are located at −8.5 ± j14.7, and αe(s) for the estimator is given by

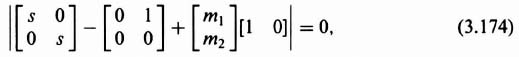

The resulting estimator feedback gain matrix is found from Eq. (3.131) [for the estimator portion of Eq. (3.148)] as follows:

Substituting the matrix values into Eq. (3.173), we obtain

which reduces to:

The resulting characteristic equation in terms of m1 and m2 is given by

Setting like coefficients in Eqs. (3.172) and (3.175) equal to each other, we obtain m1 = 17 and m2 = 288.3:

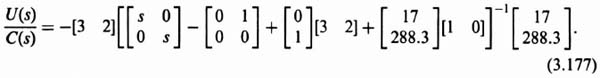

The resulting compensator transfer function is obtained by substituting parameters obtained from Eqs. (3.164), (3.165), (3.171), and (3.176) into Eq. (3.162) as follows:

Upon further simplification, we obtain the following:

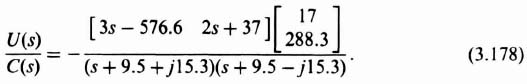

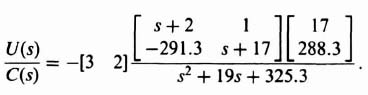

The resulting transfer function of the compensator is given by the following equation:

Analysis of Eq. (3.179) shows that the compensator has the form of a phase-lead network with a zero at −1.38 and with two complex-conjugate poles as opposed to a simple pole as defined by the conventional phase-lead network of Eq. (2.6).
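The numbers in this example can be reproduced with a few lines of arithmetic. The sketch below assumes the process is the double integrator G(s) = 1/s² with P = [[0, 1], [0, 0]], b = [0, 1]ᵀ, and L = [1 0] (inferred from the gains K1 = 3, K2 = 2, m1 = 17, m2 = 288.3 and from the caption of Figure 3.20), and that the compensator has the form U(s)/C(s) = −K(sI − P + bK + ML)⁻¹M. The variable and function names are hypothetical.

```python
def poly_multiply(a, b):
    """Multiply two polynomials given as coefficient lists, highest power first."""
    product = [0.0] * (len(a) + len(b) - 1)
    for i, a_i in enumerate(a):
        for j, b_j in enumerate(b):
            product[i + j] += a_i * b_j
    return product

# Assumed process matrices (double integrator) and the gains quoted in the text.
P = [[0.0, 1.0], [0.0, 0.0]]
b = [0.0, 1.0]
L = [1.0, 0.0]
K = [3.0, 2.0]
M = [17.0, 288.3]

# Compensator system matrix A_c = P - bK - ML.
A_c = [[P[i][j] - b[i] * K[j] - M[i] * L[j] for j in range(2)] for i in range(2)]

# Denominator det(sI - A_c) = s^2 - trace*s + det.
compensator_den = [
    1.0,
    -(A_c[0][0] + A_c[1][1]),
    A_c[0][0] * A_c[1][1] - A_c[0][1] * A_c[1][0],
]

# Numerator K * adj(sI - A_c) * M, a first-order polynomial in s.
num_s1 = K[0] * M[0] + K[1] * M[1]
num_s0 = (K[0] * (-A_c[1][1] * M[0] + A_c[0][1] * M[1])
          + K[1] * (A_c[1][0] * M[0] - A_c[0][0] * M[1]))
compensator_gain = -num_s1              # sign from u = -K x_hat
compensator_zero = -num_s0 / num_s1

# Closed-loop characteristic polynomial: s^2 * den(s) + (num_s1*s + num_s0).
closed_loop = poly_multiply([1.0, 0.0, 0.0], compensator_den)
closed_loop[3] += num_s1
closed_loop[4] += num_s0

# Separation check: product of controller and estimator polynomials.
alpha_c = [1.0, 2.0, 3.0]        # roots at -1 +/- j1.414
alpha_e = [1.0, 17.0, 288.3]     # roots at about -8.5 +/- j14.7
separated = poly_multiply(alpha_c, alpha_e)

print("compensator denominator:", compensator_den)
print("compensator gain and zero:", compensator_gain, compensator_zero)
print("closed loop:", closed_loop)
print("alpha_c * alpha_e:", separated)
```

The last two printed lists agree, which illustrates the separation of controller and estimator roots for this example.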

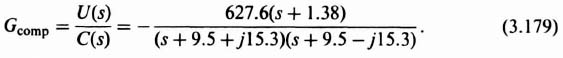

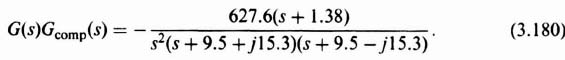

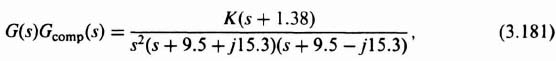

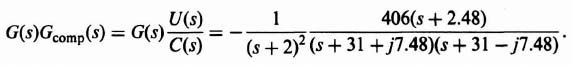

We can analyze the resulting system using the conventional root-locus and Bode-diagram methods. To obtain the open-loop transfer function for analyses, we combine Eqs. (3.163) and (3.179):

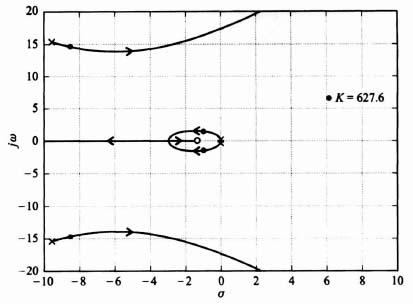

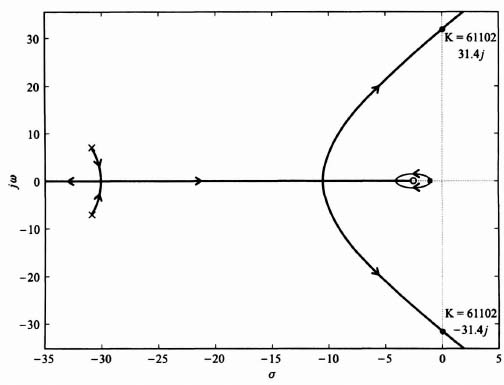

Replacing the specific gain of −627.6 with the variable gain K, the root locus can be evaluated from

which is shown in Figure 3.20. Observe that the root locus goes through the roots chosen in Eqs. (3.166) and (3.172) when K = 627.6. These roots are shown in Figure 3.20 by solid dots. This figure was obtained using MATLAB, and is contained in the M-file that is part of my AMCSTD Toolbox which can be retrieved from The MathWorks anonymous FTP server at ftp://ftp.mathworks.com/pub/books/advshinners.

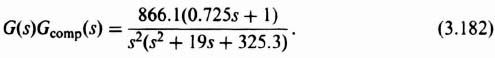

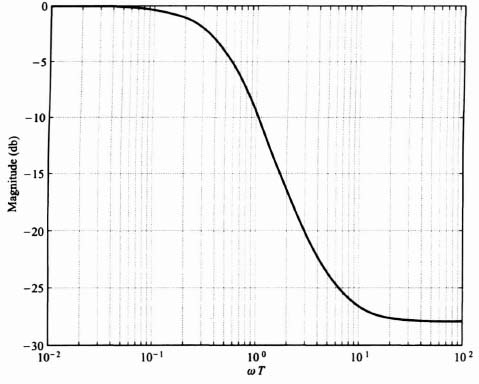

To draw the Bode diagram, we consider the modified form of Eq. (3.180):

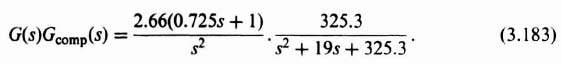

The resulting Bode diagram is drawn from the following simplification to Eq. (3.182):

Figure 3.20 Root locus for compensated system of Figure 3.19 where G(s)Gcomp(s) = K(s + 1.38)/[s²(s + 9.5 + j15.3)(s + 9.5 − j15.3)].

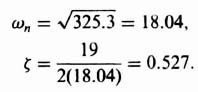

The quadratic poles in the denominator have an undamped natural frequency ωn and a damping ratio ζ given by

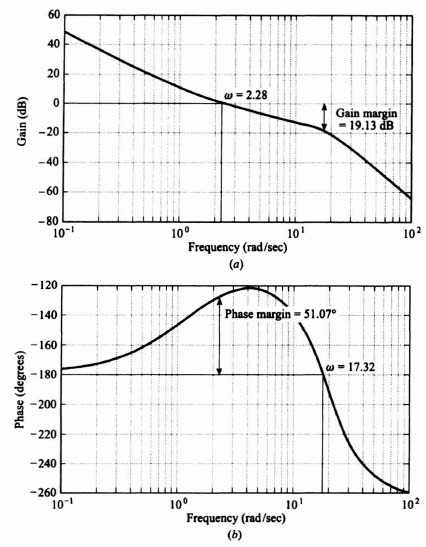

The resulting Bode diagram is shown in Figure 3.21. This figure was also obtained using MATLAB and is contained in the M-file that is part of my AMCSTD Toolbox.

We conclude that the uncompensated transfer function G(s) = 1/s² has its phase margin increased from 0 to 51.07 degrees at its gain crossover frequency of 2.28 rad/sec, and its gain margin is increased from minus infinity to 19.13 dB (the phase crossover frequency occurs at ω = 17.32 rad/sec) when we use the compensator of Eq. (3.179). Notice that the crossover frequency of 2.28 rad/sec is approximately consistent with the controller's closed-loop roots at −1 ± j1.414, for which ωn = √3 ≈ 1.73 rad/sec and ζ ≈ 0.58. This is a reasonable result, as the slower roots of the controller are more dominant than the faster estimator roots in the system response.
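The quoted stability margins can be cross-checked numerically without a plotting package. The sketch below evaluates the open-loop frequency response in the simplified form given in the caption of Figure 3.21 and locates the two crossover frequencies by bisection. The search intervals and function names are choices made here for illustration, and small differences from the quoted values are to be expected because of rounding in the coefficients.

```python
import cmath
import math

def loop_gain(omega):
    """Open-loop response [2.66(0.725s + 1)/s^2][325.3/(s^2 + 19s + 325.3)] at s = j*omega."""
    s = 1j * omega
    return 2.66 * (0.725 * s + 1.0) / s ** 2 * 325.3 / (s ** 2 + 19.0 * s + 325.3)

def bisect(func, low, high, iterations=100):
    """Find a sign change of func between low and high by repeated halving."""
    f_low = func(low)
    for _ in range(iterations):
        mid = 0.5 * (low + high)
        f_mid = func(mid)
        if (f_mid > 0) == (f_low > 0):
            low, f_low = mid, f_mid
        else:
            high = mid
    return 0.5 * (low + high)

# Gain crossover: magnitude equals one.
w_gc = bisect(lambda w: abs(loop_gain(w)) - 1.0, 0.1, 10.0)
phase_margin_deg = 180.0 + math.degrees(cmath.phase(loop_gain(w_gc)))

# Phase crossover: the response crosses the negative real axis (imaginary part zero).
w_pc = bisect(lambda w: loop_gain(w).imag, 5.0, 40.0)
gain_margin_db = -20.0 * math.log10(abs(loop_gain(w_pc)))

print("gain crossover (rad/sec):", w_gc, " phase margin (deg):", phase_margin_deg)
print("phase crossover (rad/sec):", w_pc, " gain margin (dB):", gain_margin_db)
```

Locating the phase crossover through the sign of the imaginary part avoids the wrap-around of the phase angle at ±180 degrees.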

A complete case study for the design of a combined controller and estimator for a regulator, using the techniques presented in Section 3.7 and 3.8, is presented in Chapter 7.

Figure 3.21 Bode diagram for compensated system of Figure 3.19 where G(s)Gcomp(s) = [2.66(0.725s + 1)/s²][325.3/(s² + 19s + 325.3)].

3.9. EXTENSION OF COMBINED COMPENSATOR DESIGN INCLUDING A CONTROLLER AND AN ESTIMATOR FOR SYSTEMS CONTAINING A REFERENCE INPUT

How can we extend the concepts developed in the previous section for regulator design, shown in Figure 3.19 (where the reference input r(t) = 0), to the more general problem where the reference input exists? Several methods exist which can be used to design such a system [8,9]. This section will consider the configuration illustrated in Figure 3.22. The design goal of the approach to be presented is to have the state-estimation error be independent of the reference input r(t) (i.e., the estimation error should be uncontrollable from r(t)). This is a very important consideration, as we do not want the state-estimation error to be dependent on the type and level of the input.

Let us reconsider the controller equation (3.143),

Figure 3.22 Addition of the reference input to the system shown in Figure 3.19 containing a combined controller and estimator.

and the estimator equation (3.152)

The reference input r(t) will be introduced to these equations by adding a term Ar(t) to the controller equation (3.184), and a term Nr(t) to the estimator equation (3.185) (where N is a vector). Therefore, Eqs. (3.184) and (3.185) become

What kind of system do Eqs. (3.186) and (3.187) imply? Do they result in the configuration of Figure 3.22? To answer this question, let us first substitute

into Eq. (3.187) and, thereby, eliminate the output c(t) from Eq. (3.187):

To find the estimation error (which we want to be independent of r(t)), let us take the difference between the estimator equation [Eq. (3.189)] and the state equation

We will first substitute Eq. (3.186) into Eq. (3.190):

Subtracting Eq. (3.189) from Eq. (3.191), we obtain the following for the derivative of the estimation error:

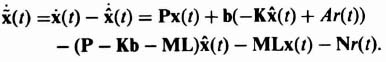

Simplifying, we obtain the following equation:

In order to eliminate r(t) from Eq. (3.192), it is necessary that

Therefore, the design criterion of the control-system engineer is to invoke Eq. (3.193). Substituting Eqs. (3.186) and (3.193) into Eq. (3.187), we obtain the following:

Simplifying, we find that

or

Notice that Eq. (3.196) is the same estimator equation defined in Eq. (3.120). It is important to emphasize that this occurs only when bA = N as defined in Eq. (3.193). Therefore we conclude that the introduction of the reference input by adding the term Ar(t) in the controller equation (3.186) and a term Nr(t) to Eq. (3.187) results in the configuration shown in Figure 3.22.
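The reasoning of this section can be summarized in a few lines. The following is a sketch that assumes the control law has the form u = −Kx̂ + Ar and that the estimator, after the control law is substituted, has the form shown in the second line. The symbol x̃ for the estimation error x − x̂ is introduced here for convenience and may differ from the notation of the numbered equations.

```latex
\begin{aligned}
u(t) &= -\mathbf{K}\hat{\mathbf{x}}(t) + A\,r(t) \\
\dot{\hat{\mathbf{x}}}(t) &= (\mathbf{P} - \mathbf{b}\mathbf{K} - \mathbf{M}\mathbf{L})\,\hat{\mathbf{x}}(t)
   + \mathbf{M}\,c(t) + \mathbf{N}\,r(t), \qquad c(t) = \mathbf{L}\mathbf{x}(t) \\
\dot{\mathbf{x}}(t) &= \mathbf{P}\mathbf{x}(t) + \mathbf{b}\,u(t)
   = \mathbf{P}\mathbf{x}(t) - \mathbf{b}\mathbf{K}\hat{\mathbf{x}}(t) + \mathbf{b}A\,r(t) \\
\dot{\tilde{\mathbf{x}}}(t) &= \dot{\mathbf{x}}(t) - \dot{\hat{\mathbf{x}}}(t)
   = (\mathbf{P} - \mathbf{M}\mathbf{L})\,\tilde{\mathbf{x}}(t) + (\mathbf{b}A - \mathbf{N})\,r(t)
\end{aligned}
```

The reference input drops out of the error dynamics exactly when bA = N, which is the design condition stated in Eq. (3.193).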

Complete design examples for the design of the controller, estimator, and compensator, with their associated root-locus and Bode-diagram analyses of the resulting design are found in Chapter 7. In Section 7.5, the state-variable design for the controller and full-order estimator for a space vehicle is presented. In Problem 7.6, the state-variable design for the controller and full-order estimator of a chemical process control system is analyzed.

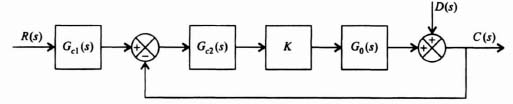

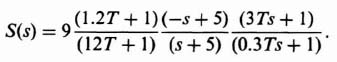

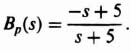

3.10. ROBUST CONTROL SYSTEMS [10–14]

Robust control is concerned with determining a stabilizing controller that meets the feedback performance requirements for stability and accuracy, and that does so in a manner that is robust (insensitive) to plant uncertainty, parameter variation, and external disturbances. We know from the previous discussion in this book that feedback reduces the effects of external disturbances and parameter variations (Section 1.7). However, this is only achieved with relatively high loop gain, which limits stability. Robust control is basically the same problem that was addressed in the 1930s by Black, Bode, and Nyquist. Modern robust control revolves around the feedback configurations illustrated in Figures 2.5, 2.6, and 2.7, which illustrate two-degrees-of-freedom compensation systems.

Figure 3.23 Control system with a two-degrees-of-freedom series controller Gc1(s) and a forward-loop-controller Gc2(s).

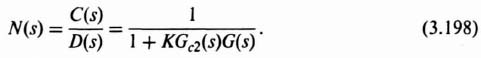

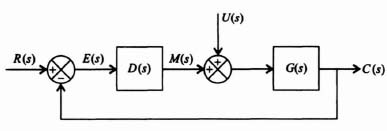

Let us consider the control system illustrated in Figure 3.23, which contains a disturbance D(s), a two-degrees-of-freedom series controller Gc1(s), and a forward-loop controller Gc2(s). In this control system's operation, the amplifier gain, K, can vary.

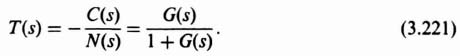

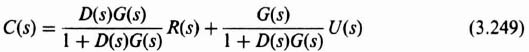

For this control system, the overall transfer function, C(s)/R(s), is given by

The transfer function relating the disturbance D(s) to the output C(s) is given by

The design approach in robust control systems is to choose the controller Gc1(s) so that the desired closed-loop transfer function H(s) is obtained, and to choose the controller Gc2(s) so that the output, C(s), is insensitive to the disturbance D(s) over the frequency range in which D(s) is dominant.

The sensitivity of H(s) due to variations of K is given by

For the control system of Figure 3.23,

Substituting Eqs. (3.197) and (3.200) into Eq. (3.199), we obtain the following:

It is important to recognize that for this control system, C(s)/D(s) given by Eq. (3.198) and the sensitivity of H(s) with respect to K given by Eq. (3.201) are identical. This is a very important result, which implies that we can use the same control-system techniques both to suppress the effect of the disturbance D(s) and to achieve robustness (insensitivity) with respect to variations of K.
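The equality of the two expressions can be verified in a few steps. The sketch below assumes a unity-feedback loop in which Gc1(s) acts in series ahead of the loop, the forward path is KGc2(s)G0(s), and the disturbance enters at the output. These structural assumptions are inferred from the description of Figure 3.23, and the symbol S for the sensitivity is introduced here for illustration.

```latex
\begin{aligned}
H(s) &= \frac{C(s)}{R(s)} = \frac{G_{c1}(s)\,K\,G_{c2}(s)\,G_0(s)}{1 + K\,G_{c2}(s)\,G_0(s)},
\qquad
\frac{C(s)}{D(s)} = \frac{1}{1 + K\,G_{c2}(s)\,G_0(s)} \\[6pt]
\frac{\partial H(s)}{\partial K} &= \frac{G_{c1}(s)\,G_{c2}(s)\,G_0(s)}{\bigl[1 + K\,G_{c2}(s)\,G_0(s)\bigr]^{2}} \\[6pt]
S^{H}_{K}(s) &= \frac{\partial H(s)}{\partial K}\,\frac{K}{H(s)}
 = \frac{G_{c1}\,G_{c2}\,G_0}{\bigl[1 + K\,G_{c2}\,G_0\bigr]^{2}}
   \cdot \frac{K\,\bigl[1 + K\,G_{c2}\,G_0\bigr]}{G_{c1}\,K\,G_{c2}\,G_0}
 = \frac{1}{1 + K\,G_{c2}(s)\,G_0(s)}
\end{aligned}
```

Note that Gc1(s) cancels out of the sensitivity, so under these assumptions only Gc2(s) is available for shaping both the disturbance response and the sensitivity to K.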

A. Design Example Illustrating Robustness and Disturbance Rejection

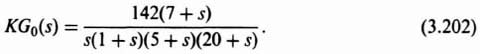

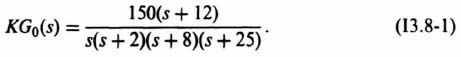

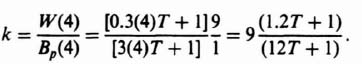

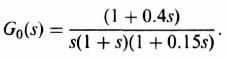

We will next analyze how the two-degrees-of-freedom control system illustrated in Figure 3.23 can achieve a high gain which will satisfy the robustness and performance requirements while, at the same time, minimizing the effects of the disturbance. We will analyze the system of Figure 3.23 with the following transfer function which represents a fourth-order process KG0(s):

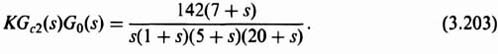

We will assume at this point that Gc1(s) = Gc2(s) = 1. Our problem is to investigate the effect of the variation of K. The transfer function of KGc2(s)G0(s) is given by:

We want to consider the variation of the gain from its nominal value of 142 up to 248 and down to one-half the nominal value (i.e., 71).

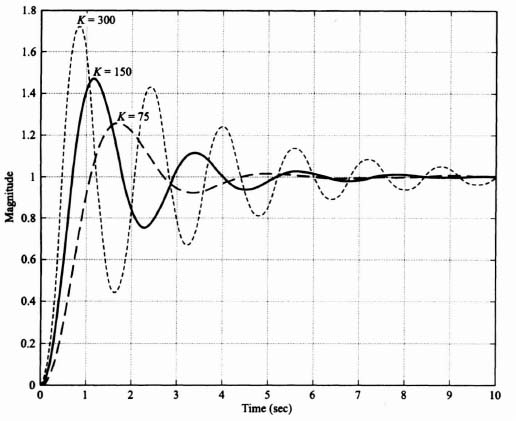

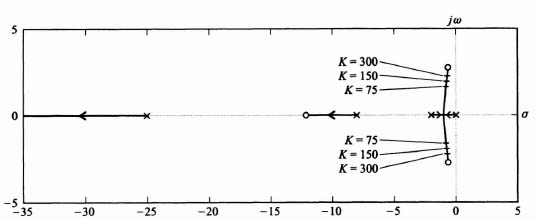

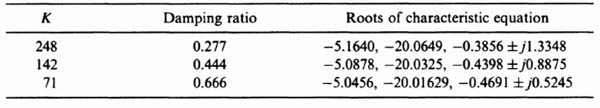

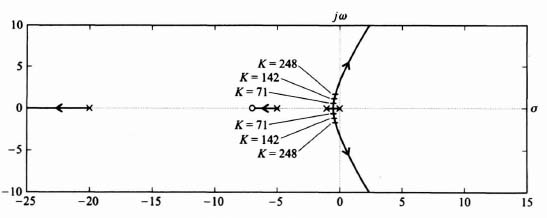

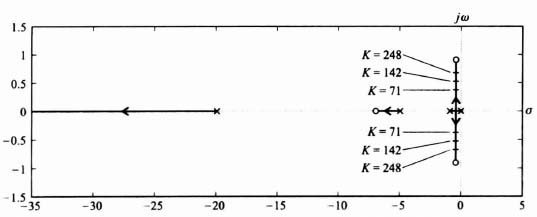

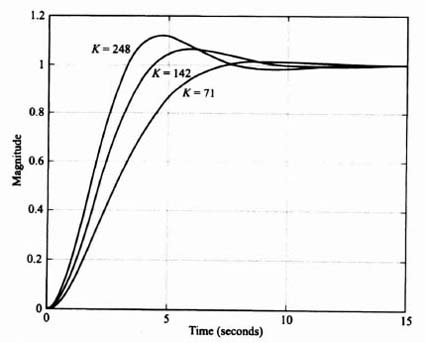

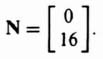

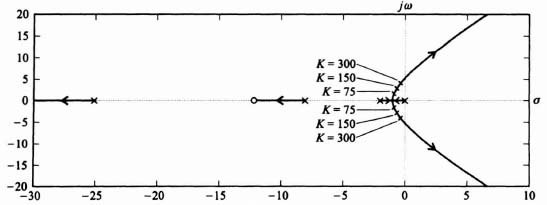

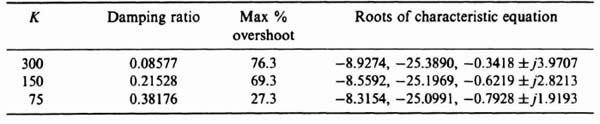

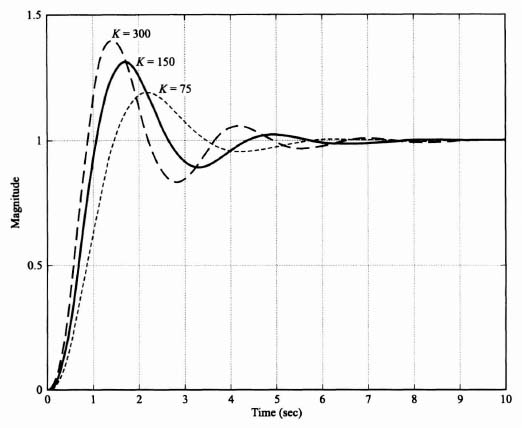

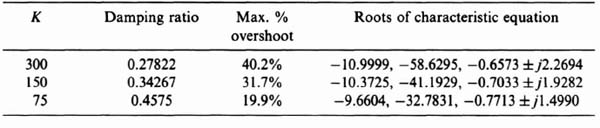

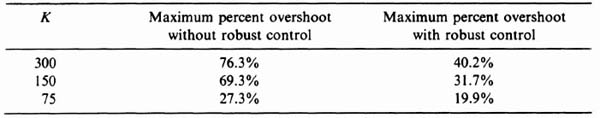

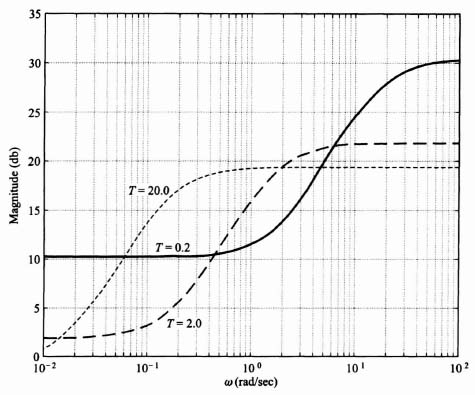

Because this control system acts as a low-pass filter, the sensitivity of H(s) with respect to K is poor. The bandwidth of this control system with K = 142 is only 3.2 rad/sec, and the sensitivity of H(s) with respect to K is expected to be greater than one at frequencies greater than 3.2 rad/sec. Figure 3.24 illustrates the unit step response of the system when K = 142 (the nominal value), K = 248, and K = 71. Table 3.1 lists the characteristics of the unit step transient responses and the characteristic equation roots of this control system which were obtained using MATLAB. Observe that variations of K from its nominal value of 142 result in considerable variation in the damping ratio and the transient responses of this control system. Figure 3.25 illustrates the root loci and the location of the closed-loop, complex-conjugate roots for the three cases being analyzed.

Figure 3.24 Unit step response for system of Figure 3.23 with KGc2(s)G0(s) given by Eq. (3.203) and Gc1(s) = 1.

Table 3.1. Characteristics of the Control System Illustrated in Figure 3.23 where KGc2(s)G0(s) is given by Eq. (3.203), and Gc1(s) = 1

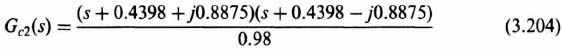

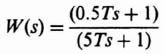

The design approach for this robust controller, Gc2(s), is to place two zeros at (or near) the desired complex-conjugate closed-loop poles at −0.4398 ± j0.8875 for the nominal gain case of K = 142. Therefore,

or

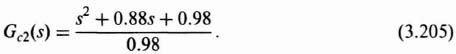

We will approximate Gc2(s) as follows:

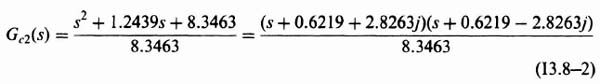

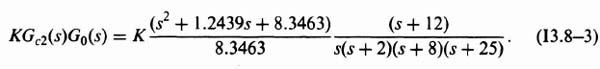
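The size of this approximation is easy to check by expanding the quadratic whose roots are the nominal closed-loop poles. The following is a small sketch: the function name is hypothetical, and the rounded form s² + 0.88s + 1 used for comparison is the one quoted later in this section for the zeros of the closed-loop transfer function.

```python
def quadratic_from_conjugate_roots(real_part, imag_part):
    """Return [1, a1, a0] for (s - (sigma + j*w))(s - (sigma - j*w))."""
    return [1.0, -2.0 * real_part, real_part ** 2 + imag_part ** 2]

# Nominal closed-loop poles for K = 142, as quoted in the text.
coeffs = quadratic_from_conjugate_roots(-0.4398, 0.8875)

# Equivalent second-order parameters of this pole pair.
natural_frequency = coeffs[2] ** 0.5
damping_ratio = coeffs[1] / (2.0 * natural_frequency)

print("exact quadratic coefficients:", coeffs)
print("wn =", natural_frequency, " zeta =", damping_ratio)
```

The exact constant term comes out slightly below one, so the rounded quadratic places the zeros near, rather than exactly at, the nominal poles, which is consistent with the "at (or near)" wording of the design approach.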

Therefore, the forward-path transfer function of this control system with KG0(s) given by Eq. (3.202) and Gc2(s) given by Eq. (3.206) is:

Figure 3.25 Root-locus plot for system of Figure 3.23 with KGc2(s)G0(s) given by Eq. (3.203) and Gc1(s) = 1.

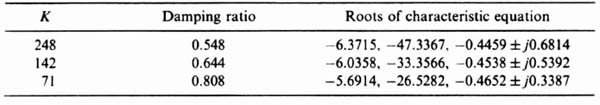

Table 3.2 lists the damping ratio and the characteristic equation roots of this control system, obtained using MATLAB, with the forward-loop transfer function given by Eq. (3.207). Observe that the range of damping ratios is much narrower (0.548 to 0.808) than it was before the addition of the robust controller Gc2(s), as shown in Table 3.1 (where the damping ratio varied from 0.277 to 0.666).

Figure 3.26 illustrates the root loci with the robust controller Gc2(s) added, and the location of the closed-loop, complex-conjugate roots for the three cases. Observe from this root locus that by locating the two zeros of the forward-loop controller Gc2(s) near the desired complex-conjugate roots of the characteristic equation for K = 142, the sensitivity of this control system to variations of K is greatly reduced.

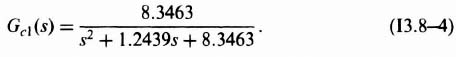

It was shown in Section 3.2, during the discussion of linear-state-variable feedback, that for the system illustrated in Figure 3.3 the zeros of C(s)/R(s) are the zeros of G(s), based on comparing Eqs. (3.17) and (3.24). Therefore, in the system of Figure 3.23 that we are currently analyzing, the zeros of the forward-path transfer function KGc2(s)G0(s) are identical to the zeros of the closed-loop transfer function. As a result, the zeros of Gc2(s) in Eq. (3.206) come close to canceling the effect of the complex-conjugate closed-loop poles. It is therefore necessary to also add the series controller Gc1(s), as illustrated in Figure 3.23, so that Gc1(s) contains poles which cancel the zeros, s² + 0.88s + 1, of the closed-loop transfer function. The transfer function of the series controller, Gc1(s), is given by:

Table 3.2. Characteristics of the Control System Illustrated in Figure 3.23 with the Forward-Loop Controller Gc2(s) added and where KGc2(s)G0(s) is given by Eq. (3.207)

Figure 3.26 Root-locus plot for system shown in Figure 3.23 with KGc2(s)G0(s) given by Eq. (3.207).

The unit step response of this control system with the forward-path transfer function given by Eq. (3.207), with K = 71, 142, and 248, and with the series controller Gc1(s) given by Eq. (3.208), is illustrated in Figure 3.27. Comparing these unit step responses with those in Figure 3.24, we conclude that this control system has been made much less sensitive to variations in K. For example, the maximum percent overshoot of the transient responses for the original system illustrated in Figure 3.24 ranged from 5.6% (for K = 71) to 39% (for K = 248). However, the control system designed to be robust has a transient response, as illustrated in Figure 3.27, in which the maximum percent overshoot varies from 1.4% (for K = 71) to only 13% (for K = 248). In addition, as we pointed out before in comparing Eqs. (3.198) and (3.201), which are identical, the robustness with respect to variations in K will also provide disturbance suppression using the same control-system techniques.

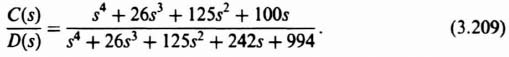

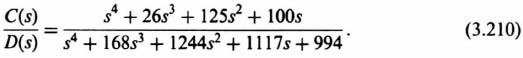

Since the disturbance suppression attributes are a function of frequency, let us examine the frequency characteristics of the C(s)/D(s) transfer function. Substituting Eq. (3.203) into Eq. (3.198), we obtain the following transfer function for C(s)/D(s) for the case where the forward-loop controller Gc2(s) has a gain equal to one:

Substituting Eq. (3.207) into Eq. (3.198), we obtain the following transfer function for C(s)/D(s) for the case where the forward-loop controller Gc2(s) has the transfer function given by Eq. (3.206)

Figure 3.27 Unit step response for system of Figure 3.23 with KGc2(s)G0(s) given by Eq. (3.207) and Gc1(s) given by Eq. (3.208).

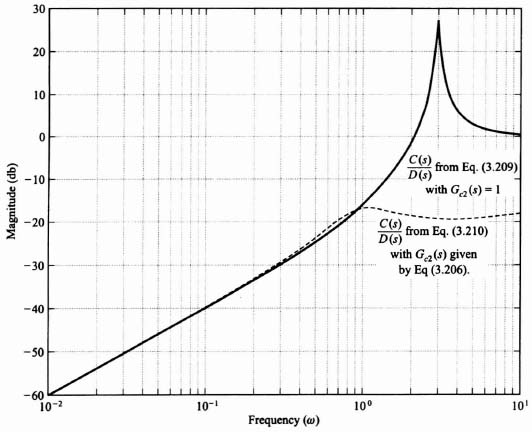

Figure 3.28 is a plot of the frequency response of the disturbance suppression transfer function C(s)/D(s) as defined in Eqs. (3.209) and (3.210). It shows that the disturbance suppression of the control system at low frequencies is approximately the same with and without Gc2(s) in the control system. However, the addition of Gc2(s), as defined by Eq. (3.206), greatly improves the disturbance suppression attributes of the control system at high frequencies. This is consistent with our expectations.

Robust control-system design principles are being applied to many modern, practical control systems. For example, the reader is referred to Reference 15, which presents the design of a robust control system for preventing car skidding. A complete case study for the design of a robust control system for controlling the flaps of a hydrofoil is presented in Section 7.7.

3.11. AN INTRODUCTION TO H∞ CONTROL CONCEPTS [16, 17]

In the presentation of this book, the classical frequency-domain approach and the modern state-variable time-domain approach have been presented in parallel. It has been shown that they complement each other. Prior to the 1960s, the frequency-domain approach predominated. With the advent of the space race, the availability of practical digital computers, modern optimal control theory, and the state-variable approach in the early 1960s, the pendulum swung to the time-domain approach. The 1960s and 1970s saw a great deal of work performed on applying modern optimal control theory, which is presented in Chapter 6 of this book. In the early 1980s, a new technique emerged known as H∞ control theory, which combines both the frequency- and time-domain approaches to provide a unified answer. Zames is given credit for its introduction with his paper in the IEEE Transactions on Automatic Control [16]. The H∞ approach has dominated the trend of control-system development in the 1980s and 1990s. A complete treatment of the subject of H∞ is complex and beyond the scope of this book. However, we can expand the concepts of robustness (introduced in Section 3.10) and sensitivity (introduced in Chapter 1), together with the frequency and state-variable domain techniques presented in this book, to introduce the basic concepts of H∞ control theory and apply them to some simple problems. This is the objective of this and the following sections.

Figure 3.28 Frequency response of the disturbance suppression transfer functions defined in Eqs. (3.209) and (3.210).

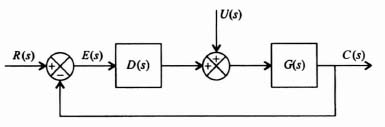

A. Sensitivity of Control Systems Containing Disturbances and Measurement Noise

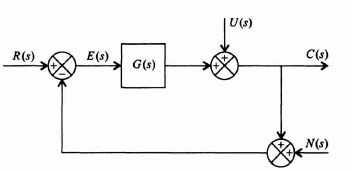

Let us extend our understanding of sensitivity developed in Chapter 1 to the control system illustrated in Figure 3.29, which contains a disturbance U(s) and feedback sensor measurement noise N(s). Although noise is a stochastic process, we will assume in this analysis that it can be represented as a deterministic process. This initial analysis will focus on the single-input single-output (SISO) system shown in Figure 3.29. We will then discuss the extension of these results to multiple-input multiple-output (MIMO) systems.

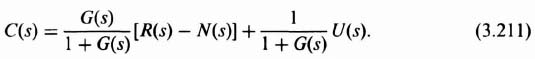

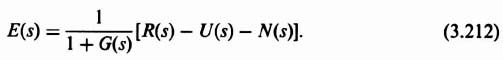

Using Mason's theorem, we can write the following relationship for the output C(s) by inspection of Figure 3.29:

Figure 3.29 Control system containing a disturbance U(s) and sensor measurement observation noise N(s).

We can also use Mason's theorem to write the following relationship for the control-system error E(s) by inspection of Figure 3.29:

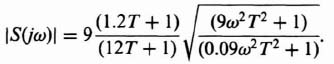

From the analysis in Section 1.7 on sensitivity, we know that the sensitivity of the transfer function C(s)/R(s) to changes in G(s) for the control system shown in Figure 3.29 is given by the following expression:

It is interesting to observe that this sensitivity is also the transfer function from U(s) to −E(s):

In the MIMO case, the sensitivity function in Eq. (3.213) is modified so that G(s) becomes the matrix of transfer functions between the inputs and the outputs, and the scalar 1 is replaced with the identity matrix I.

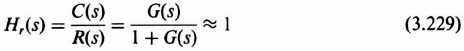

The transfer function C(s)/R(s) of the control system shown in Figure 3.29 is given by the following:

This expression is also known as the complementary sensitivity function which is defined as follows:

It is very important to recognize that the sum of the sensitivity function given by Eq. (3.213), and the complementary sensitivity function given by Eq. (3.216) equals one:

We can express the error function E(s) in Eq. (3.212) in terms of the sensitivity function. Let us assume that the feedback sensor measurement noise is zero. Therefore, substituting Eq. (3.213) into Eq. (3.212), we obtain the following:

Equation (3.218) is a very important relationship because it states that we should make the sensitivity function S small to make the control system error E(s) small. In addition, we know that since Eq. (3.213) represents the sensitivity function as well as the transfer function between U(s) and −E(s) [see Eq. (3.214)], we want the sensitivity function S(s) to be as small as possible for both disturbance rejection and for making the control-system error small.
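For reference, the relationships used in this subsection can be collected in one place. The following is a sketch that assumes Figure 3.29 is a unity-feedback loop with forward transfer function G(s), the disturbance U(s) added at the output, and the noise N(s) added to the measured output in the feedback path. These structural assumptions are inferred from the statements in the text (for example, that the sensitivity equals the transfer function from U(s) to −E(s), and that C(s)/N(s) is the negative of T(s)); the figure itself should be taken as authoritative.

```latex
\begin{align}
C(s) &= \frac{G(s)}{1+G(s)}\,R(s) + \frac{1}{1+G(s)}\,U(s) - \frac{G(s)}{1+G(s)}\,N(s) \\[4pt]
S(s) &= \frac{1}{1+G(s)}, \qquad T(s) = \frac{G(s)}{1+G(s)} \\[4pt]
S(s) + T(s) &= \frac{1 + G(s)}{1+G(s)} = 1 \\[4pt]
E(s)\Big|_{N(s)=0} &= R(s) - C(s) = S(s)\,\bigl[R(s) - U(s)\bigr]
\end{align}
```

Setting R(s) = 0 in the last line gives −E(s)/U(s) = S(s), which is the disturbance-rejection interpretation of the sensitivity function.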

The conclusion of this analysis is that we want to make S(s) as small as possible. However, is this possible over the entire range of frequencies? Let us analyze Eq. (3.213) to determine if this is feasible. Since G(s) approaches zero as s approaches infinity in practical systems, then

The result of Eq. (3.219) is that we can only make the sensitivity function S(s) small over low and mid-range frequencies, but not at high frequencies.

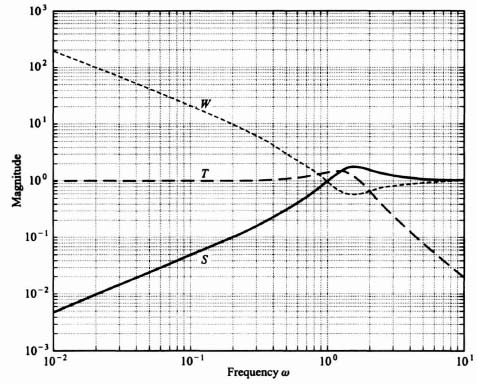

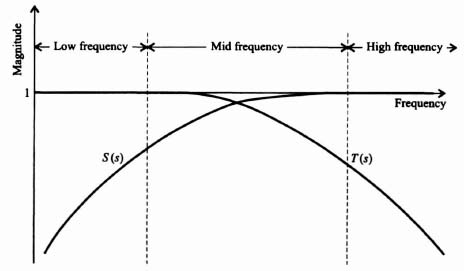

Ideally, what would we like to make the complementary sensitivity function? Analyzing Eq. (3.217), we would like to make the complementary sensitivity function T(s) equal to one because that would then result in the sensitivity function S(s) being equal to zero. However, we know from Eq. (3.216) that T(s) approaches zero as s approaches infinity, because G(s) approaches zero there. Therefore, we can design the complementary sensitivity function T(s) to approximate one at low and mid-range frequencies, but not at high frequencies. Figure 3.30 illustrates the representative frequency responses of the sensitivity function S(s) and the complementary sensitivity function T(s).
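The low- and high-frequency behavior described above can be checked numerically. The following is a minimal sketch, assuming a unity-feedback loop and a hypothetical forward transfer function G(s) = 10/[s(0.1s + 1)] that is chosen only for illustration and does not come from the text. The function names are likewise illustrative.

```python
# Hypothetical forward transfer function, chosen only for illustration.
def G(s: complex) -> complex:
    return 10.0 / (s * (0.1 * s + 1.0))


def sensitivity(s: complex) -> complex:
    """S(s) = 1 / (1 + G(s)) for a unity-feedback loop."""
    return 1.0 / (1.0 + G(s))


def complementary_sensitivity(s: complex) -> complex:
    """T(s) = G(s) / (1 + G(s)) for a unity-feedback loop."""
    return G(s) / (1.0 + G(s))


# Evaluate along s = j*omega from low to high frequency (omega in rad/s).
for omega in (0.01, 1.0, 100.0, 10000.0):
    s = 1j * omega
    S = sensitivity(s)
    T = complementary_sensitivity(s)
    print(f"omega={omega:>8}: |S|={abs(S):.4f}  |T|={abs(T):.4f}  |S+T|={abs(S + T):.4f}")
```

For this assumed G(s), |S| is close to zero and |T| is close to one at the lowest frequency, the two swap roles at the highest frequency, and S + T remains equal to one throughout.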

The transfer function relating the sensor measurement noise N(s) to the output C(s) can be obtained from Eq. (3.211) by setting R(s) = U(s) = 0:

Observe that Eq. (3.220) is the negative of the complementary sensitivity function T(s) defined in Eq. (3.216). Therefore,

Figure 3.30 Representative sensitivity and complementary sensitivity frequency responses.

This poses a problem for the control-system engineer because we want the transfer function C(s)/N(s) to be as small as possible, but the desirable T(s) is large at low and mid-range frequencies as illustrated in Figure 3.30. Equation (3.217) showed that we would want to make T(s) = 1 ideally in order to drive the sensitivity function S(s) = 0. Therefore, the control-system engineer must make a tradeoff here between allowable feedback sensor measurement noise and the complementary sensitivity function (which also affects the sensitivity function [see Eq. (3.217)]). The basic tradeoff is to determine allowable noise and permissible sensitivity.

B. Desirable Control-System Transfer-Function Characteristics

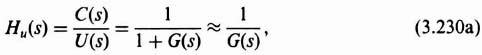

From Figure 3.29 and Eq. (3.211), we can state the relationship between the reference input R(s), the disturbance U(s), and the feedback sensor measurement noise N(s) with the output C(s) as follows:

where

Similarly, the error function E(s), as defined in Eq. (3.212), can be written as follows:

It is interesting to examine the effect of the feedback sensor noise on the control system. To do this, let us assume that all the other external inputs are zero:

Let us assume that n(t) has a Fourier transform although, in practice, noise usually does not have a Fourier transform. Using Parseval's theorem, we can determine the integral-squared error (ISE):

By focusing on the square of the error, the ISE penalizes both positive and negative values of the error. The function |N(jω)|² is defined as the energy density spectrum of n(t).
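Parseval's theorem states that the integral of e²(t) over time equals (1/2π) times the integral of |E(jω)|² over all frequencies. The following sketch checks this numerically for a hypothetical error signal e(t) = exp(−t) for t ≥ 0, whose transform is E(jω) = 1/(1 + jω). The signal, the integration limits, and the helper function are assumptions made for illustration only; both integrals should come out close to the exact value of 1/2.

```python
import math


def trapezoid(f, a: float, b: float, n: int) -> float:
    """Composite trapezoidal rule for the integral of f over [a, b] with n intervals."""
    h = (b - a) / n
    total = 0.5 * (f(a) + f(b))
    for k in range(1, n):
        total += f(a + k * h)
    return total * h


# Time-domain ISE: integral of e(t)^2 with e(t) = exp(-t), truncated at t = 20 s.
ise_time = trapezoid(lambda t: math.exp(-t) ** 2, 0.0, 20.0, 20_000)

# Frequency-domain ISE: (1/2pi) * integral of |E(jw)|^2 over (-inf, inf).
# |E(jw)|^2 is even in w, so this equals (1/pi) * integral from 0 upward;
# the upper limit is truncated at 1000 rad/s.
ise_freq = (1.0 / math.pi) * trapezoid(
    lambda w: abs(1.0 / (1.0 + 1j * w)) ** 2, 0.0, 1000.0, 100_000
)

print(f"ISE from time domain:      {ise_time:.4f}")
print(f"ISE from frequency domain: {ise_freq:.4f}")
```

The small difference between the two results is due to truncating the frequency integral at a finite upper limit.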

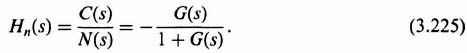

Let us try to define the desirable frequency characteristics from a practical viewpoint for Hr(jω), Hu(jω), and Hn(jω). The reference input R(s) and the disturbance U(s) are usually low-frequency signals. Therefore, it is desired to have Hr(jω) ≈ 1 and Hu(jω) ≈ 0 at low frequencies. In practice, Hr(jω) ≈ 0 and Hu(jω) and Hn(jω) ≈ 1 at high frequencies. In practice, we try to make Hr(jω) ≈ 1, and Hu(jω) and Hn(jω) ≈ 0 over the frequency range from 0 to the gain crossover frequency ωc. These are ideals and, in practice, we can only approximate these. For example, Figure I1.7-3 shows the closed-loop frequency response of Figure I1.7-1. This closed-loop frequency response corresponds to Hr(s) for the system shown in Figure I1.7-1.

In practice, G(s) is much greater than one at low frequencies. Therefore, Eq. (3.223) reduces to

and Eqs. (3.224) and (3.225) reduce to

at low frequencies. Therefore, a high loop gain is very desirable for frequencies in the passband (defined as frequencies between 0 and ωc) because it then approximates Hr(jω) ≈ 1 and Hu(jω) is very small. However, the sensor measurement observation noise remains a problem because Hn(s) is approximately −1. The control engineer has two choices regarding N(s): (a) design the sensor so that its measurement noise is very small; (b) trade off how high the loop gain is designed so that it minimizes the effect of the disturbance input U(s), while keeping the loop gain low enough that the effect of the noise is tolerable.
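The low-frequency approximations discussed above can be summarized as follows. This is a sketch that assumes the unity-feedback structure implied by the text, in which Hr(s), Hu(s), and Hn(s) are the transfer functions from R(s), U(s), and N(s) to the output C(s); the exact forms should be checked against Eqs. (3.223) through (3.225).

```latex
\begin{align}
H_r(s) &= \frac{G(s)}{1+G(s)} = T(s), &
H_u(s) &= \frac{1}{1+G(s)} = S(s), &
H_n(s) &= -\frac{G(s)}{1+G(s)} = -T(s) \\[6pt]
\intertext{and, for $|G(j\omega)| \gg 1$ (low frequencies),}
H_r(j\omega) &\approx 1, &
H_u(j\omega) &\approx \frac{1}{G(j\omega)} \approx 0, &
H_n(j\omega) &\approx -1
\end{align}
```

These limits show why a high loop gain helps reference tracking and disturbance rejection, but does nothing to attenuate sensor noise within the passband.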

C. Extension of Sensitivity and Complementary Sensitivity Concepts to Multivariable Control Systems

Many modern control systems contain several inputs and outputs. Therefore, it is important to extend our understanding of sensitivity and complementary sensitivity to multiple-input multiple-output (MIMO) control systems.

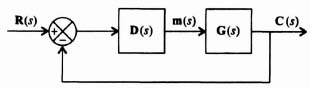

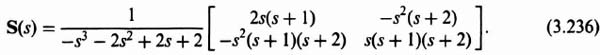

Figure 3.31 illustrates the block diagram of a MIMO control system where r(t) and c(t) are vectors, and D(s) and G(s) are matrices. The dimensions of D(s) are n × i, and the dimensions of G(s) are i × n, where n = dim[C(s)] and i = dim[m(s)]. By analogy to Eq. (3.213) for the SISO control system, the sensitivity function for the MIMO control system is given by:

Figure 3.31 Block diagram of a MIMO control system.

By analogy to Eq. (3.216) for the SISO control system, the complementary sensitivity function for the MIMO control system is given by

The sensitivity and complementary sensitivity functions are both n × n matrices. By analogy to Eq. (3.217) for the SISO case, the sum of the sensitivity and complementary sensitivity functions for the MIMO case is given by

where I represents the identity matrix.
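The matrix identity S(s) + T(s) = I can be verified numerically at a single frequency. The following is a minimal sketch for a two-input, two-output loop, assuming S = (I + L)⁻¹ and T = (I + L)⁻¹L, where L(s) denotes the loop transfer-function matrix G(s)D(s). The entries of L(s) below are hypothetical, chosen only for illustration, and are not the matrix used in the example of this section.

```python
IDENTITY = [[1.0, 0.0], [0.0, 1.0]]


def add(a, b):
    """Element-wise sum of two 2 x 2 matrices."""
    return [[a[i][j] + b[i][j] for j in range(2)] for i in range(2)]


def matmul(a, b):
    """Product of two 2 x 2 matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def inverse(a):
    """Inverse of a 2 x 2 matrix via the adjugate formula."""
    det = a[0][0] * a[1][1] - a[0][1] * a[1][0]
    return [[a[1][1] / det, -a[0][1] / det],
            [-a[1][0] / det, a[0][0] / det]]


def loop_matrix(s):
    """Hypothetical loop transfer-function matrix L(s) = G(s)D(s)."""
    return [[2.0 / (s + 1.0), 1.0 / (s + 2.0)],
            [0.5 / (s + 3.0), 3.0 / (s + 1.0)]]


def sensitivity(s):
    """S(s) = [I + L(s)]^-1."""
    return inverse(add(IDENTITY, loop_matrix(s)))


def complementary_sensitivity(s):
    """T(s) = [I + L(s)]^-1 L(s)."""
    return matmul(sensitivity(s), loop_matrix(s))


s = 1j * 1.0  # evaluate at omega = 1 rad/s
total = add(sensitivity(s), complementary_sensitivity(s))
for row in total:
    print([f"{abs(x):.6f}" for x in row])
```

The printed magnitudes should form the 2 × 2 identity matrix, to within rounding error.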

D. Example for Finding S(s) and T(s) for a MIMO Control System

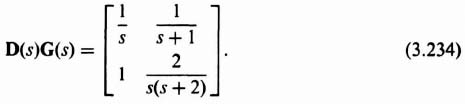

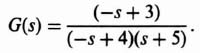

The open-loop transfer function matrix for a two-input, two-output control system to be analyzed is given by

Let us determine the sensitivity and the complementary sensitivity functions.

The sensitivity function for this MIMO control system is obtained from Eq. (3.231) as follows:

Therefore,

The complementary sensitivity function T(s) can be found from

Simplifying this expression, we obtain

3.12. FOUNDATIONS OF H∞ CONTROL THEORY

Based on the preceding results, the concepts of modern H∞ control theory will be presented. The basic problem that H∞ control theory addresses, as presented by Zames in Reference 16, is the sensitivity reduction of feedback control systems, posed as an optimization problem that is separated from the problem of stabilization. The technique is concerned with the effects of feedback on uncertainty, where the uncertainty may be in the form of an additive disturbance U(s) as illustrated in Figure 3.29. H∞ control theory approaches the problem from the point of view of classical sensitivity theory, which has been presented in Chapter 1 and subsections 3.11A–3.11D, with the difference that feedback will not only reduce but also optimize sensitivity in an appropriate sense.

H∞ control theory is a complex subject. The purpose of presenting it in this book, in a clear and cohesive manner, is to introduce it and to motivate the reader with an interest in this field to review some of the recent papers which have been written on this subject [18–21]. In its basic form, H∞ control theory attempts to minimize the supremum function over the entire frequency range

where S(jω) is the sensitivity function and W(jω) is a weighting function. We can view the magnitude of the product S(jω)W(jω) as the magnitude of the weighted sensitivity. The weighting function emphasizes that low sensitivity is more important at low frequencies than at higher frequencies. Therefore, by emphasizing the minimization of the magnitude of the product, the result is that the weighting function is greatest at those frequencies where the sensitivity is the smallest, namely at low frequencies.

The general solution to Eq. (3.239), obtained from functional analysis, has the following general form [21]:

where k is a constant and B(s) is known as the Blaschke product. For the problem being considered in this book, where the process G(s) is stable and minimum phase (i.e., all of its poles and zeros are in the left half of the s-plane), B(s) = 1 and the constant k is any desirable, small, real number. Therefore, Eq. (3.240) states that we want to make the weighting function large for those low frequencies where we want to make the sensitivity small. Conversely, we want to make the weighting function small for those high frequencies where the sensitivity is large.

Let us examine in detail the result when G(s) has all its poles in the left-half of the s-plane, and B(s) = 1. Therefore,

which implies that the shape of the magnitude of the sensitivity curve is the inverse of the weighting function. Therefore, the shape of the optimum |S(jω)| is independent of the process G(s), and only depends on the magnitudes of the constant k and the weighting function W(jω).
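The chain of relationships described in this section can be written compactly. The following is a sketch of the forms that the surrounding text implies for Eqs. (3.239) through (3.241); the notation is an assumption and should be checked against the original equations.

```latex
\begin{align}
&\text{minimize} \quad \sup_{\omega}\,\bigl|S(j\omega)\,W(j\omega)\bigr| \\[4pt]
&S(s)\,W(s) = k\,B(s) \\[4pt]
&B(s) = 1 \;\;\Longrightarrow\;\; \bigl|S(j\omega)\bigr| = \frac{k}{\bigl|W(j\omega)\bigr|}
\end{align}
```

The last line makes the inverse relationship explicit: wherever |W(jω)| is large, the optimum |S(jω)| is small, and vice versa.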

A. Application of the Theory to an Example

Let us try to pull all the concepts presented in this section together by giving an example. We will consider a simple SISO control system, and determine its sensitivity function, S, complementary sensitivity function, T, and the weighting function, W. We will also assume that the constant k in Eq. (3.240) is equal to one.

For the illustrative example, let us consider a unity-feedback control system whose forward transfer function G(s) is given by

Substituting Eq. (3.242) into Eqs. (3.213), (3.216), and (3.241), we can calculate the sensitivity S, the complementary sensitivity T, and the weighting function W, respectively. The result is shown in Figure 3.32. The result agrees with the theoretical results expected from the presentation of this section. The sensitivity S is very small at low frequencies, and approaches one at very high frequencies. The weighting function W is the inverse of the sensitivity function, and is very large at low frequencies and also approaches one at very high frequencies. The complementary sensitivity function equals one minus the sensitivity function (see Eq. (3.217)), and equals one at very low frequencies and is very small at very high frequencies.
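The trends described above can be reproduced numerically. The following is a minimal sketch that uses the forward transfer function G(s) = 2/[s(s + 1)] stated in the caption of Figure 3.32, with k = 1, and assumes the weighting function is taken as W(s) = k/S(s) so that S(s)W(s) = k. The function names and the sample frequencies are illustrative choices.

```python
K_CONSTANT = 1.0  # the constant k, assumed equal to one as in the example


def G(s: complex) -> complex:
    """Forward transfer function G(s) = 2 / [s (s + 1)]."""
    return 2.0 / (s * (s + 1.0))


def sensitivity(s: complex) -> complex:
    """S(s) = 1 / (1 + G(s))."""
    return 1.0 / (1.0 + G(s))


def complementary_sensitivity(s: complex) -> complex:
    """T(s) = G(s) / (1 + G(s)) = 1 - S(s)."""
    return G(s) / (1.0 + G(s))


def weighting(s: complex) -> complex:
    """W(s) = k / S(s), the assumed inverse relationship with B(s) = 1."""
    return K_CONSTANT / sensitivity(s)


# Sample the three functions along s = j*omega (omega in rad/s).
for omega in (0.01, 0.1, 1.0, 10.0, 1000.0):
    s = 1j * omega
    print(f"omega={omega:>7}: |S|={abs(sensitivity(s)):.4f}  "
          f"|T|={abs(complementary_sensitivity(s)):.4f}  "
          f"|W|={abs(weighting(s)):.2f}")
```

At the lowest frequency, |S| is small, |T| is near one, and |W| is large; at the highest frequency, |S| and |W| both approach one while |T| becomes small.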

B. The H∞ Process for the General SISO Case

Let us assume that in the general SISO case, the process G(s) has poles and zeros in the right half-plane. For this general case, the H∞ process would proceed in the following step-by-step manner:

1. The weighting function W(s) would be selected which would have the following characteristics:

- It must have no zeros in the right half-plane.

- It must contain any poles that G(s) has on the jω axis.

- It must not contain jω poles at zeros of G(s).

Figure 3.32 S, T and W for a unity-feedback system with G(s) = 2/[s(s + 1)].

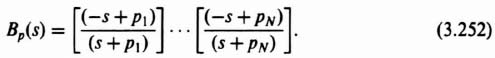

2. Determine the Blaschke product B(s) from knowledge of the poles of G(s). If G(s) has no poles in the right half-plane, as illustrated in the preceding example in subsection 3.12A, B(s) = 1. Let us next consider the general case where G(s) has poles in the right half-plane. Therefore, from Eq. (3.240),

where Pn are poles of G(s) in the right half-plane.

3. S(Pn) has to satisfy the following interpolation conditions for simple poles and zeros of G(s) in the right half-plane:

- S equals zero at right-half poles, and one at right-half zeros.

- T is one at right-half poles and zero at right-half zeros.

This can be shown as follows. Let us consider the control system in Figure 3.33. The sensitivity of C(s)/R(s) to D(s)G(s) is given by

Figure 3.33 General SISO control system containing a reference input, R(s), and a disturbance signal, U(s).

The complementary sensitivity function is given by

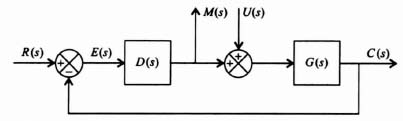

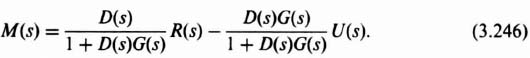

The transfer functions between the inputs R(s) and U(s), and the outputs M(s) and C(s) are given as follows:

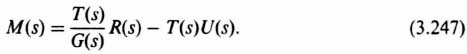

In terms of T(s) given by Eq. (3.245), Eq. (3.246) can be written as

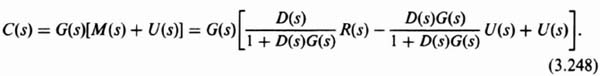

Therefore,

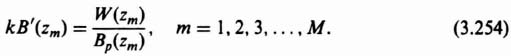

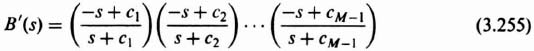

Equation (3.248) can be reduced to the following:

In terms of T(s) and S(s), Eq. (3.249) can be rewritten as follows:

Analysis of Eq. (3.247) reveals the following:

- If T(s)/G(s) is to be stable, then T(s) must cancel the right-half zero(s) of G(s).

Analysis of Eq. (3.250) reveals the following:

- If G(s)S(s) is to be stable, then S(s) must cancel the pole(s) in the right-half plane of G(s).
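The transfer functions behind these two conclusions can be sketched as follows. This assumes that Figure 3.33 is a unity-feedback loop with controller D(s) followed by process G(s), that M(s) is the controller output, and that the disturbance U(s) enters at the input of the process. These assumptions are inferred from the products T(s)/G(s) and G(s)S(s) named in the text and should be checked against the figure and Eqs. (3.244) through (3.250).

```latex
\begin{align}
S(s) &= \frac{1}{1 + D(s)G(s)}, \qquad T(s) = \frac{D(s)G(s)}{1 + D(s)G(s)} \\[4pt]
\frac{M(s)}{R(s)} &= \frac{D(s)}{1 + D(s)G(s)} = \frac{T(s)}{G(s)} \\[4pt]
\frac{C(s)}{U(s)} &= \frac{G(s)}{1 + D(s)G(s)} = G(s)\,S(s)
\end{align}
```

A right-half-plane zero of G(s) appears as a pole of T(s)/G(s) unless T(s) vanishes there, and a right-half-plane pole of G(s) appears as a pole of G(s)S(s) unless S(s) vanishes there.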

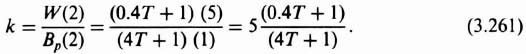

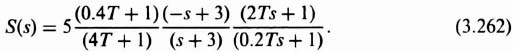

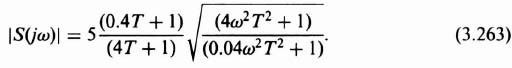

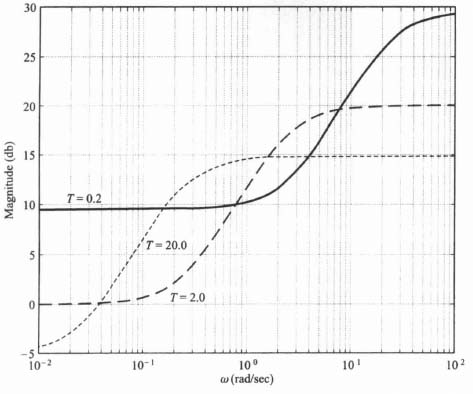

These two very important interpolation conditions imply that for simple zeros and poles which are in the right half-plane, S(s) equals one at the right-half-plane zeros, and S(s) equals zero at the right-half-plane poles. Similarly, T(s) equals zero at the right-half-plane zeros, and T(s) equals one at the right-half-plane poles in accordance with Eq. (3.217).
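The interpolation conditions can be checked numerically on a small example. The following is a minimal sketch with a hypothetical process G(s) = (s − 4)/[(s − 1)(s + 3)], which has a right-half-plane zero at s = 4 and a right-half-plane pole at s = 1, and a hypothetical constant controller D(s) = −1. With these choices the closed-loop characteristic polynomial is s² + s + 1, so the loop is stable. None of these values come from the text.

```python
CONTROLLER_GAIN = -1.0  # hypothetical D(s) = -1, chosen so the loop is stable


def numerator(s: complex) -> complex:
    """Numerator of the hypothetical G(s): zero at s = 4 (right half-plane)."""
    return s - 4.0


def denominator(s: complex) -> complex:
    """Denominator of the hypothetical G(s): poles at s = 1 (right half-plane) and s = -3."""
    return (s - 1.0) * (s + 3.0)


def characteristic(s: complex) -> complex:
    """Closed-loop characteristic polynomial: denominator + D * numerator."""
    return denominator(s) + CONTROLLER_GAIN * numerator(s)


def sensitivity(s: complex) -> complex:
    """S(s) = 1 / (1 + D G), written with polynomials to avoid dividing by zero at poles of G."""
    return denominator(s) / characteristic(s)


def complementary_sensitivity(s: complex) -> complex:
    """T(s) = D G / (1 + D G), written with the same polynomials."""
    return CONTROLLER_GAIN * numerator(s) / characteristic(s)


rhp_pole, rhp_zero = 1.0, 4.0
print("At the right-half-plane pole: S =", abs(sensitivity(rhp_pole)),
      " T =", abs(complementary_sensitivity(rhp_pole)))
print("At the right-half-plane zero: S =", abs(sensitivity(rhp_zero)),
      " T =", abs(complementary_sensitivity(rhp_zero)))
```

Writing S(s) and T(s) in terms of the numerator and denominator polynomials of G(s) makes the conditions visible by inspection: the denominator vanishes at a pole of G(s), forcing S = 0 and T = 1, and the numerator vanishes at a zero of G(s), forcing S = 1 and T = 0.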