5

NONLINEAR CONTROL-SYSTEM DESIGN

5.1. INTRODUCTION

The feedback control-system design methods presented in previous chapters were restricted to linear constant systems, that is, systems that can be represented by linear differential equations with constant coefficients. In practice, linear systems possess the property of linearity only over a certain range of operation; all physical systems are nonlinear to some degree. Therefore it is important that one acquire a facility for analyzing control systems with varying degrees of nonlinearity.

Any attempt to restrict attention strictly to linear systems can only result in severe complications in system design. To operate linearly over a wide range of variation of signal amplitude and frequency would require components of an extremely high quality; such a system would probably be impractical from the viewpoints of cost, space, and weight. In addition, the restriction of linearity severely limits the system characteristics that can be realized.

In practice, linear operation is required only for small deviations about a quiescent operating point. Saturation of amplifying devices for large deviations about the quiescent operating point is usually acceptable. Dead zones for small deviations about the quiescent operating point are also usually acceptable. In both cases one attempts to limit the effects of nonlinearities to acceptable tolerances, as it is impractical to eliminate the problem entirely.

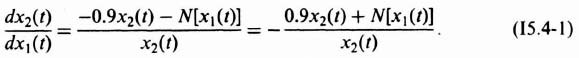

It is worth noting that nonlinearities may be intentionally introduced into a system in order to compensate for the effects of other undesirable nonlinearities, or to obtain better performance than could be achieved using linear elements only. A simple example of an intentional nonlinearity is the use of nonlinear damping (see Section 4.5‡) to optimize response as a function of the error [1]. The on-off contactor (relay) servo, where full torque is applied as soon as the error exceeds a specified value, is another case of an intentionally nonlinear system.

The purpose of this chapter is to examine the broad aspects of nonlinear systems. We first study the characteristics of nonlinearities and then present several methods for analysis and design of nonlinear control systems. We follow in Chapter 6 with several illustrations of synthesis of nonlinear systems having intentional nonlinearities utilizing optimal control theory. A case study of a positioning system containing nonlinearities is presented in Chapter 7.

We should emphasize here that methods of analyzing nonlinear systems have not progressed as rapidly as have techniques for analyzing linear systems. Comparatively speaking, at the present time we are still in the development stage. However, the various methods presented in this chapter will enable one to analyze and synthesize nonlinear control systems quantitatively.

5.2. NONLINEAR DIFFERENTIAL EQUATIONS

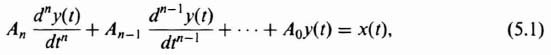

A linear differential equation of the nth order, with constant coefficients, is written

where x(t) represents the input to the system, t represents time and is the independent variable, y(t) represents the dependent variable, or the output of the system, and An, An–1,…, A0 are constants.

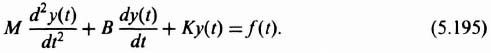

This equation is of the form derived for several representative mechanical and electrical systems in Chapter 3‡. For example, Eq. (3.19)‡, which is repeated below, gave the differential equation of motion for a mechanical system which consists of a force f(t) applied to a mass, damper, and spring:

The mass of the system is represented by the constant M, the damping factor by the constant B, and the spring constant by K.

Detailed solutions for the class of differential equations having the form shown in Eq. (5.1) are available. They have been studied extensively, and several powerful techniques, such as the Laplace transformation, exist for their solution. All the analytical methods discussed in Chapter 1 and Chapter 2 are based on systems that can be represented by simple differential equations having this general form.

If any of the coefficients An, An–1,…, A0 are functions of the independent variable time, then the linear differential equation is said to have variable coefficients. In this case, the differential equation takes the following form:

where An, An–1,…, A0 are all functions of time. Except in special cases (such as when the coefficients are polynomials), the solution of linear time-variable equations is quite complex.

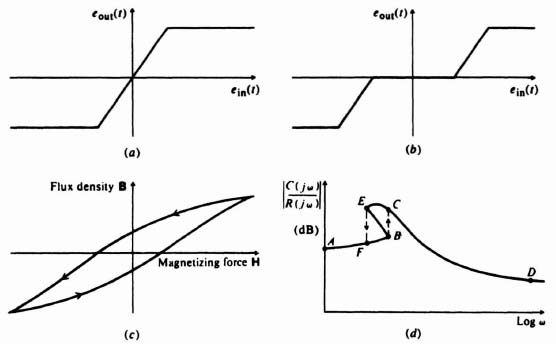

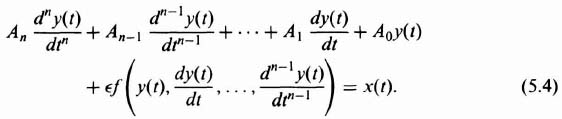

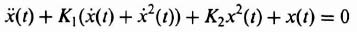

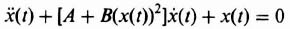

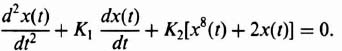

If the coefficients of the differential equation are functions of the dependent variable y(t), then a nonlinear differential equation results. Its general form is

where x(t) represents the input to the system, t represents time and is the independent variable, y(t) represents the dependent variable and the output of the system, An, An–1,…, A0 are constants, ![]() is a constant indicating the degree of nonlinearity present, and f(y(t), dy(t)/dt,…, dn–1 y(t)/dtn–1) is a nonlinear function.


Notice that if ![]() = 0, Eq. (5.3) reduces to Eq. (5.1), which represents a linear differential equation having constant coefficients. This leads us to the qualitative rule that a small amount of nonlinearity in a system means that ![]() is small in comparison with the coefficients An, An–1,…, A0. In addition, a large amount of nonlinearity means that ![]() is large compared with An, An–1,…, A0.

5.3. PROPERTIES OF LINEAR SYSTEMS THAT ARE NOT VALID FOR NONLINEAR SYSTEMS

Several inherent properties of linear systems, which greatly simplify the solution for this class of systems, are not valid for nonlinear systems. The fact that nonlinear systems do not have these properties further complicates their analysis.

Superposition is a fundamental property of linear systems. As a matter of fact, this property is the basis of the definition of a linear system. The principle of superposition states that if c1(t) is the response of a system to r1(t) and c2(t) is its response to r2(t), then the system's response to a1r1(t) + a2r2(t) is a1c1(t) + a2c2(t). Unfortunately, the superposition principle does not apply to nonlinear systems. Therefore, several mathematical procedures used in the design of linear systems cannot be used for nonlinear systems.
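The failure of superposition can be checked numerically. The following minimal sketch compares a linear gain with an ideal saturating element; the function names, the unity slope, and the clipping level of 1.0 are illustrative assumptions, not values taken from the text.

```python
def saturate(m, slope=1.0, limit=1.0):
    """Ideal saturation (assumed model): linear up to |m| = limit, clipped beyond it."""
    return slope * max(-limit, min(limit, m))


def linear_gain(m, slope=1.0):
    """Linear element used for comparison."""
    return slope * m


r1, r2 = 0.8, 0.7   # two inputs, applied separately and then together
a1, a2 = 1.0, 1.0   # weighting constants

# Linear element: response to the weighted sum equals the weighted sum of responses.
linear_together = linear_gain(a1 * r1 + a2 * r2)
linear_separate = a1 * linear_gain(r1) + a2 * linear_gain(r2)

# Saturating element: the combined input (1.5) is clipped, the separate inputs are not.
saturated_together = saturate(a1 * r1 + a2 * r2)
saturated_separate = a1 * saturate(r1) + a2 * saturate(r2)

print("linear:    ", linear_together, linear_separate)
print("saturation:", saturated_together, saturated_separate)
```

For the linear gain the two results agree; for the saturating element the response to the combined input is limited to 1.0 while the sum of the individual responses is 1.5.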

Stability of linear systems has been shown (in previous chapters) to depend only on the system's parameters. The stability of nonlinear systems, however, depends on the initial conditions and the nature of the input signal as well as the system parameters. One cannot expect a nonlinear system that exhibits a stable response to one type of input to have a stable response to other types of input. We shall shortly illustrate nonlinear systems that are stable for very small or very large signals, but not for both.
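The dependence of stability on initial conditions can be illustrated with a simple first-order example. The sketch below assumes the hypothetical system dx/dt = −x + x³, which is not taken from the text; it has a stable equilibrium at the origin and unstable equilibria at x = ±1. Forward-Euler integration is used only for brevity.

```python
def simulate(x0, dt=1e-3, t_end=10.0, bound=1e3):
    """Integrate dx/dt = -x + x**3 (an assumed example system) with forward Euler.

    Returns (final_x, diverged), where diverged is True once |x| exceeds bound.
    """
    x = x0
    for _ in range(round(t_end / dt)):
        x += dt * (-x + x ** 3)
        if abs(x) > bound:
            return x, True
    return x, False


small_final, small_diverged = simulate(0.5)   # starts inside |x| < 1
large_final, large_diverged = simulate(1.5)   # starts outside |x| < 1

print("x(0) = 0.5:", small_final, small_diverged)
print("x(0) = 1.5:", large_final, large_diverged)
```

The same system, with the same parameters, decays to zero from x(0) = 0.5 and diverges from x(0) = 1.5, behavior that has no counterpart in a linear constant system.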

We normally expect the output of a linear system, excited by a sinusoidal signal, to have the same frequency as the input, although its amplitude and phase may differ. However, the output of nonlinear systems usually contains additional frequency components and may, in fact, not contain the input frequency.

For linear systems, interchanging two elements in cascade does not affect behavior. This is not true if one of the elements is nonlinear.
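A short numerical check makes the point about cascaded elements concrete. The sketch below assumes a gain of 5 and an ideal saturation with a clipping level of 1.0; both values and the function names are illustrative only.

```python
def gain(m, k=5.0):
    """Linear gain element."""
    return k * m


def saturate(m, limit=1.0):
    """Ideal saturation with unity slope (assumed model)."""
    return max(-limit, min(limit, m))


m = 0.5

# Nonlinear case: the order of the two elements changes the result.
gain_then_saturation = saturate(gain(m))   # 0.5 -> 2.5 -> clipped to 1.0
saturation_then_gain = gain(saturate(m))   # 0.5 -> 0.5 -> 2.5

# Linear case: two gains in cascade commute.
gains_order_a = gain(gain(m, 2.0), 5.0)
gains_order_b = gain(gain(m, 5.0), 2.0)

print(gain_then_saturation, saturation_then_gain)
print(gains_order_a, gains_order_b)
```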

The question of stability is clearly defined for linear constant systems: A system is either stable or unstable. An unstable linear constant system has an output that grows without bound, either exponentially or in an oscillatory mode with the envelope of the oscillations increasing exponentially. In nonlinear systems, system instability may mean a constant-amplitude output having an arbitrary waveform. It is important to emphasize that an oscillator is stable according to Liapunov [2, 3]. The exponentially decaying system, which we have referred to in this book as being stable, is described by Liapunov as being “asymptotically stable.”*

5.4. UNIQUE CHARACTERISTICS OF NONLINEAR SYSTEMS

This section describes in detail some of the unusual characteristics that are peculiar to nonlinear systems. These phenomena, which do not occur in linear systems, may be desirable or undesirable depending on the application. We discuss specifically the following behavior: limit cycle, soft and hard self-excitation, hysteresis, jump resonance, and subharmonic generation.

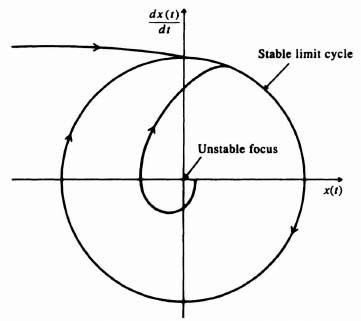

Limit cycles are oscillations of fixed amplitude and period that occur in nonlinear systems. Depending on whether the oscillation converges or diverges from the conditions represented, limit cycles can be either stable or unstable. It is possible that conditionally stable systems may contain both a stable and an unstable limit cycle. The occurrence of limit cycles in nonlinear systems makes it necessary to define instability† in terms of acceptable magnitudes of oscillation, because a very small nonlinear oscillation may not be detrimental to the performance of a system.
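A stable limit cycle can be observed by simulation. The sketch below uses the Van der Pol equation, d²x/dt² − μ(1 − x²) dx/dt + x = 0 with μ = 1, as an assumed example (it is not one of the systems analyzed in the text), and integrates it with a fourth-order Runge-Kutta step from one small and one large initial amplitude.

```python
def van_der_pol(state, mu=1.0):
    """State derivative of the Van der Pol oscillator (assumed example system)."""
    x, v = state
    return (v, mu * (1.0 - x * x) * v - x)


def rk4_step(f, state, dt):
    """One classical fourth-order Runge-Kutta step."""
    k1 = f(state)
    k2 = f(tuple(s + 0.5 * dt * k for s, k in zip(state, k1)))
    k3 = f(tuple(s + 0.5 * dt * k for s, k in zip(state, k2)))
    k4 = f(tuple(s + dt * k for s, k in zip(state, k3)))
    return tuple(
        s + dt / 6.0 * (a + 2.0 * b + 2.0 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def steady_amplitude(x0, dt=0.01, t_end=60.0, tail=15.0):
    """Peak |x| over the last `tail` seconds, after transients have died out."""
    state = (x0, 0.0)
    n_steps = round(t_end / dt)
    n_tail = round(tail / dt)
    peak = 0.0
    for i in range(n_steps):
        state = rk4_step(van_der_pol, state, dt)
        if i >= n_steps - n_tail:
            peak = max(peak, abs(state[0]))
    return peak


amp_from_small = steady_amplitude(0.1)   # starts inside the limit cycle
amp_from_large = steady_amplitude(4.0)   # starts outside the limit cycle

print(amp_from_small, amp_from_large)
```

Both runs settle to the same oscillation amplitude (close to 2 for this example), which is the signature of a stable limit cycle: the amplitude is fixed by the system and not by the initial conditions.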

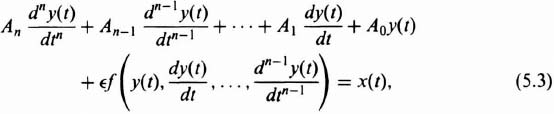

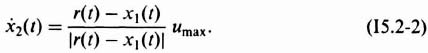

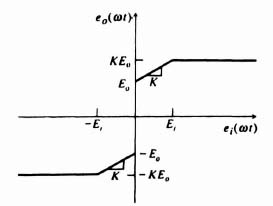

Self-excited oscillations occurring in systems that are unstable in the presence of very small signals are called soft self-excitation. Self-excited oscillations occurring in systems that are unstable in the presence of very large signals are called hard self-excitation. Because soft and hard types of oscillation can occur, the control engineer must specify the dynamic range of operation completely when designing a nonlinear system. A feedback control system containing an element having saturation characteristics, such as illustrated in Figure 5.1a, could exhibit soft self-excitation. A feedback control system containing an element having dead-zone characteristics, such as illustrated in Figure 5.1b, could exhibit hard self-excitation.

Hysteresis is a nonlinear phenomenon that is most usually associated with magnetization curves or backlash of gear trains. A conventional magnetization curve whose path depends on whether the magnetizing force H is increasing or decreasing is shown in Figure 5.1c.

Jump resonance [4], another form of hysteresis, is of considerable interest. It exhibits itself in the closed-loop frequency response of certain nonlinear systems, as illustrated in Figure 5.1d. As the frequency ω is increased and the input amplitude R is held constant, the response follows the curve AFB. At point B, a small change in frequency results in a discontinuous jump to point C. The response then follows the curve to point D upon further increase in frequency. As the frequency is decreased from point D, the response follows the curve to points C and E. At point E, a small change in frequency results in a discontinuous jump to point F. The response follows the curve to point A for further decreases in frequency. Observe from this description that the response never actually follows the segment BE. This portion of the curve represents a condition of unstable equilibrium. The system must be of second order or higher for the phenomenon of jump resonance to occur.

Figure 5.1 (a) Saturation characteristics. (b) Dead-zone characteristics. (c) Conventional hysteresis loop. (d) Closed-loop response of a system with jump resonance.

Subharmonic generation [5] refers to nonlinear systems whose output contains subharmonics of the input's sinusoidal excitation frequency. The transition from normal harmonic operation to subharmonic operation is usually quite sudden. Once the subharmonic operation is established, however, it is usually quite stable. In general, if sinusoidal signals of frequencies f1 and f2 are added and their sum is applied to a nonlinear device, the output contains frequency components af1 ± bf2, where a and b assume all possible integer values, including zero.
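The appearance of combination frequencies af1 ± bf2 can be demonstrated with a simple memoryless nonlinearity. The sketch below assumes a square-law device y = x + 0.5x² and input frequencies of 5 Hz and 7 Hz; these choices are illustrative, and a square-law device produces only the combinations up to second order. Component amplitudes are measured by correlating the sampled output with a sine and cosine at each frequency of interest.

```python
import math

FS = 1000           # samples per second (assumed)
N = 1000            # one-second record, so integer frequencies fit exactly
F1, F2 = 5.0, 7.0   # input frequencies in Hz (assumed)


def device(x):
    """Assumed square-law nonlinearity."""
    return x + 0.5 * x * x


def amplitude(samples, freq_hz):
    """Amplitude of the component at freq_hz (freq_hz > 0), by correlation."""
    c = sum(s * math.cos(2.0 * math.pi * freq_hz * k / FS) for k, s in enumerate(samples))
    q = sum(s * math.sin(2.0 * math.pi * freq_hz * k / FS) for k, s in enumerate(samples))
    return 2.0 * math.hypot(c, q) / len(samples)


output = [
    device(math.sin(2.0 * math.pi * F1 * k / FS) + math.sin(2.0 * math.pi * F2 * k / FS))
    for k in range(N)
]

amp_f1 = amplitude(output, F1)              # original component
amp_difference = amplitude(output, F2 - F1)  # f2 - f1 = 2 Hz
amp_sum = amplitude(output, F1 + F2)         # f1 + f2 = 12 Hz
amp_second = amplitude(output, 2.0 * F1)     # 2 f1 = 10 Hz
amp_absent = amplitude(output, 3.0)          # not a combination produced by this device

print(amp_f1, amp_difference, amp_sum, amp_second, amp_absent)
```

The output contains components at the sum and difference frequencies and at the second harmonics, none of which is present in the input.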

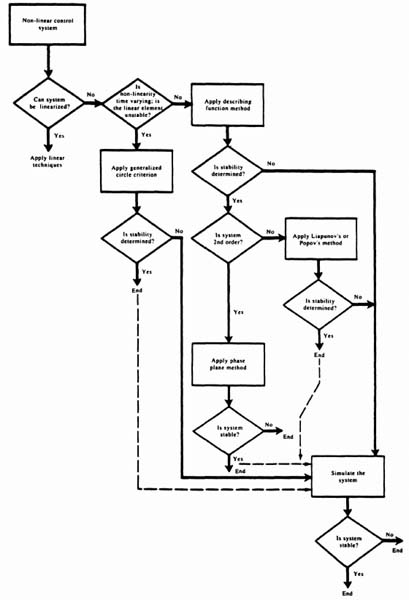

5.5. METHODS AVAILABLE FOR ANALYZING NONLINEAR SYSTEMS

Several tools are available for the analysis of nonlinear systems. All these techniques depend on the severity of the nonlinearity and/or the order of the system under consideration. We consider all of the useful and popular techniques in this chapter and illustrate their practical application. The chapter concludes, in Section 5.23, with guidelines for selecting the “best” nonlinear control-system method for the analysis and design of a particular problem.

The analysis of nonlinear systems is concerned with the existence and effects of limit cycles, soft and hard self-excitation, hysteresis, jump resonance, and subharmonic generation. In addition, the response to specific input functions must be determined. The major difficulty of analyzing nonlinear systems is that no single technique is generally applicable to all problems.

Quasilinear systems, where the deviation from linearity is not too large, permit the use of certain linearizing approximations [6]. The describing-function approach, which is applicable to nonlinear systems of any order and is concerned with discovering limit cycles, simplifies the problem by assuming that the input to the nonlinear system is sinusoidal and the only significant frequency component of the output is that component having the same frequency as the input [7–10].

Nonlinear systems can often be approximated by several linear regions: The piecewise-linear approach permits the segmented linearization of any nonlinearity for any order of system. The phase-plane method is a very useful technique for analyzing the response of a second-order nonlinear system [10–13]. Liapunov's stability methods are very powerful techniques for determining the steady-state stability of nonlinear systems based on generalizations of energy notions [2]. Popov's method is very useful for determining the stability of time-invariant, nonlinear systems. The generalized circle criterion is applicable to time-variable, nonlinear systems whose linear portion is not necessarily open-loop stable [16,17].

Systems of very high order having several nonlinearities have hardly been dealt with in general analytical terms. This problem usually requires the use of numerical methods utilizing digital computers for a solution. It is worth emphasizing at this point that any nonlinear differential equation can be solved by these techniques provided many small increments are used [21–24]. However, the resulting solution is valid only for the specific problem being considered. It is very difficult to extend the result and obtain a general solution which can be used for other problems.

As a final check on the stability of nonlinear control systems, I always recommend that the system be simulated [25,26]. Simulation helps to overcome uncertainty regarding the validity of assumptions and analytic difficulties caused by system complexity. This point is further emphasized in Section 5.23.

5.6. LINEARIZING APPROXIMATIONS

In quasilinear systems, where the deviation from linearity is not too great, linear approximations may permit the extension of ordinary linear concepts. This approach acknowledges that certain system characteristics change from operating point to operating point, but it assumes linearity in the neighborhood of a specific operating point. The technique of linearizing approximations is universally used by the engineer and may be more familiar to the reader under the names small-signal theory and/or theory of small perturbations.

Linearizing approximations were utilized when we discussed the two-phase ac servomotor in Section 3.4‡. For this device, Figure 3.16‡ illustrated the quasilinear characteristics relating developed torque and speed. However, by approximating the torque speed curves with straight lines, the linear differential equation (3.98)‡ was formulated. We then obtained the transfer function of the two-phase ac servomotor, assuming that it was a linear device. It is left as an exercise to the reader in Problem 5.1 to determine the effect of various linearizing approximations.

The effects of a small amount of nonlinearity can be studied analytically by considering small perturbations or changes in the variables about some average value of the variables. This can be represented analytically by [see Eq. (5.3)]

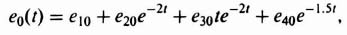

An expansion of the solution to this differential equation, for small nonlinearities, can be written as a power series in ![]() as


From this equation, y(t) may be interpreted as being composed of a linear component y(0)(t) and several deviation factors: ![](). Assuming that ![]() is very small, the nonlinear components will not seriously affect the system's behavior if a linear approximation is assumed. Therefore, within the realm of reasonable engineering approximations, the control engineer may be able to extend linear theory for certain feedback control systems which exhibit a small amount of nonlinearity. It is very interesting that this is just the reason linear theory has had such good results, even though practical systems are never purely linear.

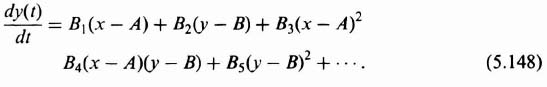

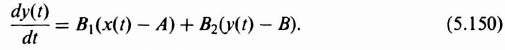

Linearization techniques can also be applied to those problems where it is desired to linearize nonlinear equations by limiting attention to small perturbations about a reference state [6]. This technique is often used in the design of space navigation and control systems where it is desired to maintain a space vehicle along a specified reference trajectory. It will now be shown how the corrective control forces required to keep the vehicle on the desired flight trajectory can be synthesized from a set of linear differential equations, although the basic differential equations describing the reference flight trajectory are nonlinear.

To illustrate this, let us assume that the equation of the system is given by

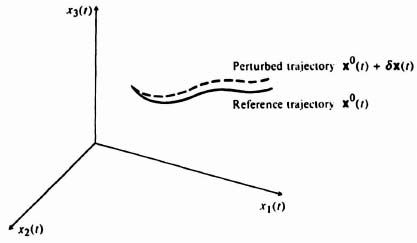

where the function f is nonlinear. Figure 5.2 illustrates the reference trajectory of the space vehicle (solid line) which satisfies the equation

where the superscript zero refers to parameters occurring along the reference trajectory. These reference parameters are related to the parameters of the actual trajectory (dashed line) as follows:

Figure 5.2 Reference and perturbed trajectories of a space vehicle [6].

Figure 5.2 illustrates the reference and actual trajectories, where the actual state x(t) is perturbed from the reference state x0(t) by δx(t). Physically, this means that the actual trajectory of the space vehicle is perturbed, or slightly different, from that of the desired reference trajectory. The vector δu(t) represents the deviation of the control input from the desired reference input u0(t) which would result in the desired system response x0(t).

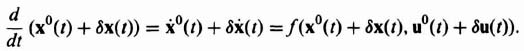

What kind of relationship can we derive between x0(t), δx(t), u0(t), and δu(t)? The basic nonlinear equation of the system,

![]()

can be expressed as

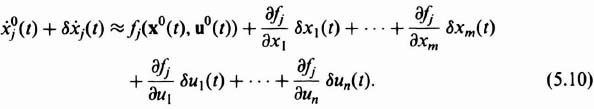

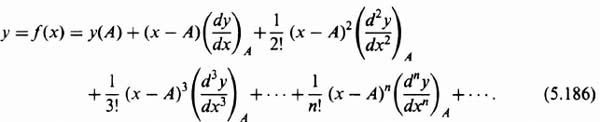

Because we are assuming that the actual perturbations of the system are small, we can expand the jth component of this equation in a Taylor series about the reference trajectory as follows:

Using Eq. (5.7), we can rewrite Eq. (5.10) as follows:

where j = 1, 2, 3,…, m.

Equation (5.11) can be simplified by utilizing the Jacobian matrices* that are defined as follows:

It is important to emphasize that all of the partial derivatives in the Jacobian matrices are evaluated along the reference trajectory of the space vehicle. Based on the Jacobian matrices, Eq. (5.11) can be rewritten in the following simplified form:

This resulting equation is very important. It states that the differential equations describing the perturbations about the reference trajectory are approximately linear, although the basic system differential equations describing the reference flight trajectory are nonlinear. Therefore, we have succeeded in linearizing the problem.
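The linearization procedure can be sketched numerically. The example below assumes a damped pendulum with a torque input as the nonlinear system (a hypothetical stand-in for the space-vehicle equations, which are not reproduced here) and, for simplicity, uses a single equilibrium point as the reference rather than a full trajectory. The Jacobian matrices are estimated by central differences, and the linear prediction of the perturbation dynamics is compared with the exact nonlinear change.

```python
import math

G_OVER_L = 9.81   # assumed pendulum parameter
DAMPING = 0.5     # assumed damping coefficient


def f(x, u):
    """Nonlinear state equation dx/dt = f(x, u) for the assumed pendulum."""
    theta, omega = x
    return [omega, -G_OVER_L * math.sin(theta) - DAMPING * omega + u[0]]


def jacobian(func, point, eps=1e-6):
    """Central-difference Jacobian: rows index outputs, columns index inputs."""
    columns = []
    for j in range(len(point)):
        up, down = list(point), list(point)
        up[j] += eps
        down[j] -= eps
        columns.append([(a - b) / (2.0 * eps) for a, b in zip(func(up), func(down))])
    return [[col[i] for col in columns] for i in range(len(columns[0]))]


# Reference state and the reference input that holds it (f(x0, u0) = 0).
x0 = [math.pi / 4.0, 0.0]
u0 = [G_OVER_L * math.sin(x0[0])]

A = jacobian(lambda x: f(x, u0), x0)   # partial derivatives with respect to the state
B = jacobian(lambda u: f(x0, u), u0)   # partial derivatives with respect to the input

# Small perturbations about the reference.
dx = [0.01, -0.02]
du = [0.005]

exact = [
    a - b
    for a, b in zip(f([p + q for p, q in zip(x0, dx)], [u0[0] + du[0]]), f(x0, u0))
]
linear = [
    sum(A[i][j] * dx[j] for j in range(2)) + B[i][0] * du[0]
    for i in range(2)
]
max_error = max(abs(e - l) for e, l in zip(exact, linear))

print("A =", A)
print("B =", B)
print("exact:", exact, "linear:", linear, "max error:", max_error)
```

For perturbations of this size the linear prediction differs from the exact nonlinear change only by second-order terms, which is the sense in which the perturbation equations are approximately linear.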

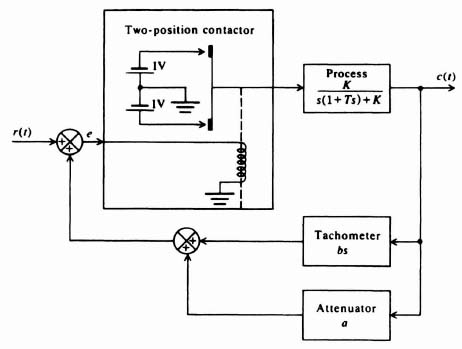

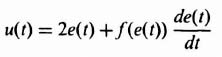

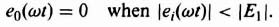

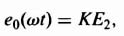

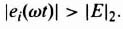

We can also linearize a system if we can adapt it to behave like a linear system. In order to demonstrate this, let us consider a two-position relay that controls the rotation of a motor in either direction. It is assumed that the control voltage applied by the relay to the motor, ec(t), is given by

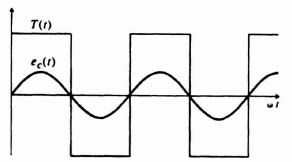

and the resulting motor torque developed, T(t), would be a square wave due to the switching action. Both ec(t) and T(t) are illustrated in Figure 5.3. Observe from this figure that the average, or mean, value of both functions is zero.

Figure 5.3 Relay-controlled motor characteristics—Case 1.

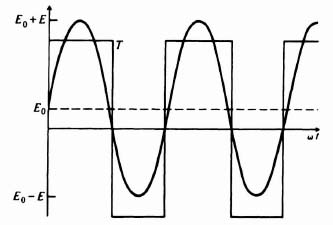

Let us next assume that the control voltage has a finite mean value E0, where

For this case, the torque is a periodic function whose mean value is some nonzero value T0, because the time intervals where ec(t) is positive or negative are not equal, as indicated in Figure 5.4. Note that E0 is also a function of time, but it is assumed that it varies very slowly compared with ω. Assuming, in addition, that E0 ≪ E, it can easily be shown that the mean torque T0 is given by the linear relationship

Therefore, the mean value of the torque T0 is proportional to the mean value of the controlling voltage.

This is a very important result. It shows that by means of a nonlinear element like a relay, a linear relationship can be obtained between the mean value of the controlling voltage and the mean value of the motor torque developed. The basic linearization technique utilized has taken the mean value of the applied voltage to the relay as the input, and superimposed on it a sinusoidal function of time whose amplitude and frequency are very high relative to that of the input.
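This linearizing effect can be verified numerically. The sketch below assumes an ideal relay that delivers a torque of +Tm or −Tm according to the sign of E0 + E sin θ; the names and the unit torque level are illustrative. Under that assumption the mean torque over one period works out to (2Tm/π) arcsin(E0/E), which is approximately 2TmE0/(πE) when E0 is much smaller than E. This closed form is derived for the assumed ideal relay and is not quoted from the text.

```python
import math


def mean_relay_torque(e0, e_amp, t_max=1.0, n=100000):
    """Mean output of an ideal relay (+/- t_max) driven by e0 + e_amp*sin(theta),
    averaged over one period using n equally spaced samples."""
    total = 0.0
    for k in range(n):
        theta = 2.0 * math.pi * (k + 0.5) / n
        total += t_max if e0 + e_amp * math.sin(theta) >= 0.0 else -t_max
    return total / n


E_AMP = 1.0
mean_at_005 = mean_relay_torque(0.05, E_AMP)
mean_at_010 = mean_relay_torque(0.10, E_AMP)

# Small-signal linear estimate for the assumed ideal relay: 2*Tm*E0 / (pi*E).
linear_at_005 = 2.0 * 0.05 / (math.pi * E_AMP)
linear_at_010 = 2.0 * 0.10 / (math.pi * E_AMP)

print(mean_at_005, linear_at_005)
print(mean_at_010, linear_at_010)
```

Doubling E0 very nearly doubles the mean torque, even though the relay itself has only two output levels.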

In the following section, we extend our linearization concepts and attempt to apply them to nonlinear systems. Although the notion of a transfer function is inapplicable for nonlinear systems, an equivalent approximate transfer characteristic for a nonlinear device can be derived which can be manipulated as a transfer function under certain circumstances. We define this approximate transfer characteristic as the describing function. It is a very useful notion and is frequently employed in practice.

5.7. DESCRIBING-FUNCTION CONCEPT

The use of describing functions is an attempt to extend the very powerful transfer-function approach of linear systems to nonlinear systems [7, 8, 10]. A describing function is defined as the ratio of the fundamental component of the output of a nonlinear device to the amplitude of a sinusoidal input signal. In general, the describing function depends on the input signal's amplitude and frequency and is complex because phase shift may occur between the input and the fundamental component of the output. We study the describing function method of analysis and compare it with the transfer-function concept for linear systems.

Figure 5.4 Relay-controlled motor characteristics—Case 2.

If the input to a nonlinear element is a sinusoidal signal, the describing-function analysis assumes that the output is a periodic signal having the same fundamental period as that of the input signal. Therefore, the analysis is concerned only with the fundamental component of the output waveform. All harmonics, subharmonics, and any dc component are neglected. This assumption is reasonable, because the harmonic terms are often small when compared with the fundamental term. In addition, a feedback control system usually provides additional attenuation of the harmonic terms because it inherently filters high frequencies in the region of the harmonic terms. Many nonlinear elements do not generate a dc term because they are symmetrical, nor do they generate any subharmonic terms [5]. Therefore, in many (but not all) situations the fundamental term is the only significant component of the output of the nonlinear element. In addition, it is assumed that there is only one nonlinear element in the feedback control system and that it is time-invariant. If a system contains more than one nonlinearity, we must lump all the nonlinearities together and obtain an overall describing function.

An examination of these limitations indicates that the describing function is based on a restricted mathematical foundation. The technique does give, however, reasonable results and does have the advantage that it can be used for systems of any order and is fairly simple to apply. It is recommended that the results always be verified with another technique or a computer simulation [25,26].

Given its limitations, the describing-function technique is still a very useful tool for analyzing and designing nonlinear systems. The describing function should be thought of as a generalized transfer function for nonlinear systems.

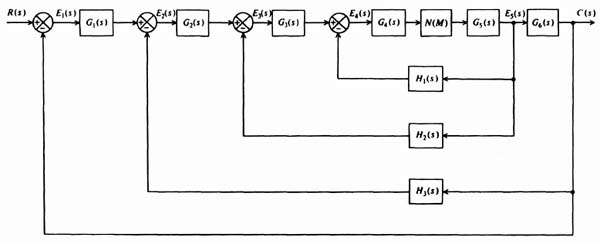

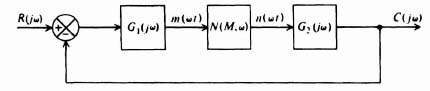

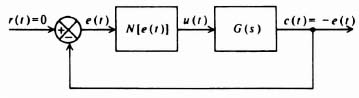

In order to derive a mathematical expression for the describing function, let us consider the general nonlinear system illustrated in Figure 5.5. In accordance with the definition of the describing function, let us assume that the input to the nonlinear element N(M, ω) is given by

In general, the steady-state output of the nonlinear device can be represented by the series

Figure 5.5 General nonlinear system.

By definition, the describing function is

Notice that the describing function depends on the amplitude and frequency of the input signal. The nonlinear element is thus considered to have a gain and phase shift varying with the amplitude and frequency of the input signal.

5.8. DERIVATION OF DESCRIBING FUNCTIONS FOR COMMON NONLINEARITIES

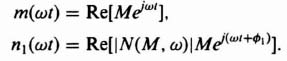

The describing functions for several common nonlinearities are derived in this section [8–10, 27]. The procedure most commonly used is to determine the Fourier series of the output waveshape from the nonlinear device and consider only the fundamental component. Let us consider the nonlinear element N(M, ω) in an overall feedback control system, as shown in Figure 5.5. Assuming that the input m(ωt) is given by a sinusoidal signal where

we can represent the output waveshape by a Fourier series given by the expression

where

In general, if n(ωt) = −n(−ωt), then the function is odd and AK = 0. In addition, if n(ωt) = n(−ωt), then the function is even and BK = 0.

Because we are only concerned with the fundamental component of the output, it is necessary to determine only A1 and B1. For m(ωt) = M sin ωt, the describing function can then be obtained from the expression
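The procedure can also be carried out numerically for any memoryless nonlinearity. The sketch below evaluates the fundamental Fourier coefficients A1 (cosine) and B1 (sine) by the midpoint rule and returns the complex ratio (B1 + jA1)/M, which is the usual form of the describing function for an input M sin ωt; it is assumed here that this matches the convention of the text. The two test nonlinearities, a cubic and an ideal relay, are illustrative choices with well-known results, 3M²/4 and 4T/(πM). Elements with memory, such as backlash, would need a different treatment.

```python
import math


def describing_function(nonlinearity, m_amp, n=20000):
    """Return (B1 + j*A1)/M for the input m = M*sin(theta).

    A1 and B1 are the cosine and sine coefficients of the fundamental of the
    output, computed by midpoint-rule integration over one period.
    """
    a1 = 0.0
    b1 = 0.0
    dtheta = 2.0 * math.pi / n
    for k in range(n):
        theta = (k + 0.5) * dtheta
        out = nonlinearity(m_amp * math.sin(theta))
        a1 += out * math.cos(theta) * dtheta
        b1 += out * math.sin(theta) * dtheta
    return complex(b1 / math.pi, a1 / math.pi) / m_amp


M = 2.0

# Cubic characteristic n = m**3: the expected describing function is 3*M**2/4.
n_cubic = describing_function(lambda m: m ** 3, M)
cubic_expected = 0.75 * M * M

# Ideal relay with output +/- 1: the expected describing function is 4/(pi*M).
n_relay = describing_function(lambda m: math.copysign(1.0, m), M)
relay_expected = 4.0 / (math.pi * M)

print(n_cubic, cubic_expected)
print(n_relay, relay_expected)
```

Both results are real, as expected for odd, single-valued characteristics: A1 vanishes and no phase shift is introduced.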

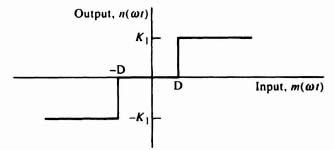

The control engineer is usually concerned with the nonlinearities due to dead zones, saturation, backlash, on-off relay-control systems, Coulomb friction, and stiction. We specifically derive and catalog their describing functions so that a handy reference for some common describing functions will be available. In addition, the procedure illustrated should enable one to develop the facility for calculating the describing function of any nonlinearity encountered.

A. Describing Function of a Dead Zone

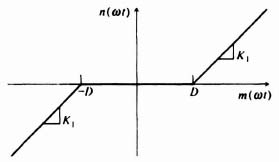

Figure 5.6 illustrates the dead-zone characteristics. The relationships between input and output of this nonlinearity can be expressed by the equations

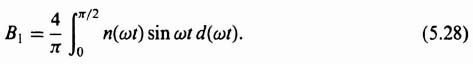

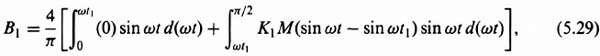

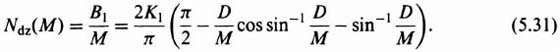

Figure 5.7 illustrates typical input and output waveshapes. Notice that the output is an odd function, and therefore AK = 0. The symmetry over the four quarters of the period allows us to evaluate the expression for the Fourier coefficient, B1, by taking four times the integral over one quarter of a cycle, as follows:

Figure 5.6 Nonlinear characteristics of a dead zone.

Figure 5.7 Input and output waveshape from a nonlinear device having the dead-zone characteristics shown in Figure 5.6.

Substituting Eqs. (5.25) and (5.26) into Eq. (5.28), we obtain

where

![]()

Evaluation of Eq. (5.29) results in the expression

From Eq. (5.30), because the describing function is the ratio of the amplitude of the fundamental component of the output B1 to M, it can be expressed as

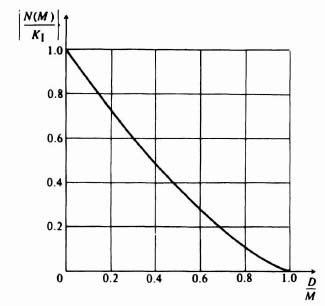

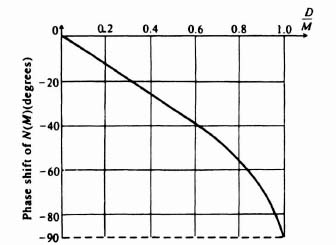

Notice that the describing function for a dead zone is only a function of the amplitude of the input and not of frequency. Figure 5.8, obtained from Eq. (5.31), is a sketch of the normalized value of the describing function N(M)/K1 as a function of the ratio D/M. For very small values of D/M the normalized describing function approaches unity. For values of D/M ≥ 1 it equals zero, which implies that the input must be greater than the dead-zone magnitude in order to obtain an output. Notice that the describing function for a dead zone does not have any phase shift.
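The behavior just described can be checked against a direct numerical evaluation. The sketch below assumes that D denotes the half-width of the dead zone and K1 the slope outside it, and uses the standard closed form N(M) = K1[1 − (2/π)(sin⁻¹(D/M) + (D/M)√(1 − (D/M)²))] for M > D, which is assumed here to correspond to Eq. (5.31). The closed form is compared with B1/M obtained by numerical integration of the output waveform.

```python
import math


def dead_zone(m, d, k1=1.0):
    """Dead-zone characteristic: zero for |m| <= d, slope k1 outside (assumed model)."""
    if m > d:
        return k1 * (m - d)
    if m < -d:
        return k1 * (m + d)
    return 0.0


def dead_zone_df(m_amp, d, k1=1.0):
    """Standard closed-form describing function of a dead zone."""
    if m_amp <= d:
        return 0.0
    r = d / m_amp
    return k1 * (1.0 - (2.0 / math.pi) * (math.asin(r) + r * math.sqrt(1.0 - r * r)))


def fundamental_gain(nonlinearity, m_amp, n=20000):
    """B1/M by midpoint-rule integration, for the input M*sin(theta)."""
    dtheta = 2.0 * math.pi / n
    b1 = sum(
        nonlinearity(m_amp * math.sin((k + 0.5) * dtheta)) * math.sin((k + 0.5) * dtheta)
        for k in range(n)
    ) * dtheta / math.pi
    return b1 / m_amp


D = 1.0
closed_form = dead_zone_df(2.0, D)                            # D/M = 0.5
numerical = fundamental_gain(lambda m: dead_zone(m, D), 2.0)
inside_zone = dead_zone_df(0.5, D)                            # D/M = 2, no output
nearly_linear = dead_zone_df(1000.0, D)                       # D/M = 0.001

print(closed_form, numerical, inside_zone, nearly_linear)
```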

Figure 5.8 Normalized describing function for a dead zone.

B. Describing Function of Saturation

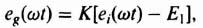

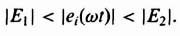

Figure 5.9 illustrates the nonlinear saturation characteristic. The relationship between input and output of this nonlinearity can be expressed by the following equations

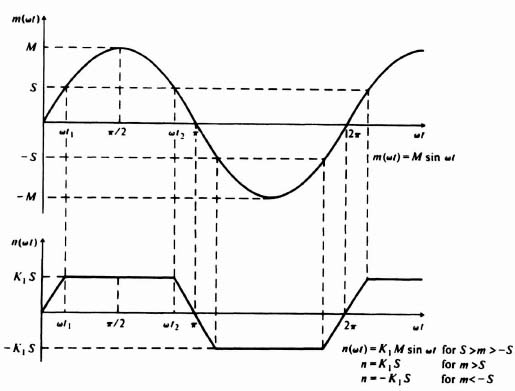

Figure 5.10 illustrates typical input and output waveshapes.

Figure 5.9 Nonlinear characteristics of saturation.

Figure 5.10 Input and output waveshape from a nonlinear device having saturation characteristics shown in Figure 5.9.

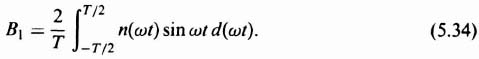

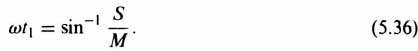

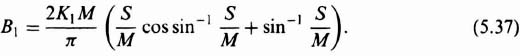

Notice that the output waveshape is an odd function for the case of saturation just as it was for the case of a dead zone. Therefore, the expression for the Fourier coefficient B1 of the output waveshape is

As was true for a dead zone, the expression for the Fourier coefficient B1 can be obtained by taking four times the integral over one quarter of a cycle because of the symmetry over the four quarters of the period. This results in the expression

Substituting Eqs. (5.32) and (5.33) into Eq. (5.35), we obtain

where

Evaluation of Eq. (5.36) results in the expression

Because the describing function is defined as the ratio of the amplitude of the fundamental component of the output, B1, to M, the describing function can be expressed as

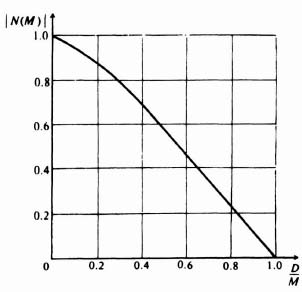

Notice that the describing function for saturation is only a function of the amplitude of the input and not of frequency. Figure 5.11, obtained from Eq. (5.38), is a sketch of the normalized value of the describing function N(M)/K1 as a function of the ratio S/M. For very small values of S/M, the normalized describing function approaches zero. For values of S/M ≥ 1 it equals unity, which implies that the output is unaffected by the saturation level if S ≥ M. Notice that the describing function for saturation does not introduce any phase shift.

It is important to emphasize that the describing functions for dead zone and saturation could have been obtained from one nonlinear characteristic containing both types of nonlinearities. Then the resulting describing function could be reduced to the describing function of dead zone [Eq. (5.31)] by letting the saturation level approach infinity, or it could be reduced to the describing function of saturation [Eq. (5.38)] by letting the dead-zone width approach zero. It is recommended that the reader derive Eqs. (5.31) and (5.38) using this approach (see Problem 5.2).
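The relationship between the two describing functions can be checked numerically. The following is a minimal sketch that assumes the standard closed forms for dead zone and saturation (taken here to correspond to Eqs. (5.31) and (5.38), which are not reproduced in this text), with D the dead-zone half-width, S the input level at which saturation begins, and K1 the slope of the linear region. The function names are illustrative only.

```python
import math


def saturation_df(s_over_m, k1=1.0):
    """Describing function of saturation for a given ratio S/M (assumed form)."""
    r = s_over_m
    if r >= 1.0:
        return k1  # the input never reaches the saturation level
    return k1 * (2.0 / math.pi) * (math.asin(r) + r * math.sqrt(1.0 - r * r))


def dead_zone_df(d_over_m, k1=1.0):
    """Describing function of a dead zone for a given ratio D/M (assumed form)."""
    r = d_over_m
    if r >= 1.0:
        return 0.0  # the input never leaves the dead zone
    return k1 * (1.0 - (2.0 / math.pi) * (math.asin(r) + r * math.sqrt(1.0 - r * r)))


# With the same breakpoint, a dead zone and a saturation add up to a pure gain K1.
for ratio in (0.1, 0.3, 0.5, 0.9):
    total = dead_zone_df(ratio) + saturation_df(ratio)
    print(ratio, round(saturation_df(ratio), 4), round(dead_zone_df(ratio), 4), round(total, 4))
```

Both functions are purely real, which agrees with the observation that neither nonlinearity introduces phase shift.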

Figure 5.11 Normalized describing function for saturation.

C. Describing Function of Backlash

Backlash or mechanical hysteresis is due to the difference in motion between an increasing and a decreasing output. Figure 5.12 illustrates a model of backlash, and Figure 5.13 illustrates its characteristics. The source of backlash that usually receives the most attention is the “looseness” inherent in mechanical gearing.

Figure 5.12 Physical model of backlash.

Figure 5.13 Characteristics of backlash.

From Figure 5.14, the relationship between input and output for this nonlinearity can be expressed by the following equations:

Figure 5.14 Backlash characteristics where D < M < 2D.

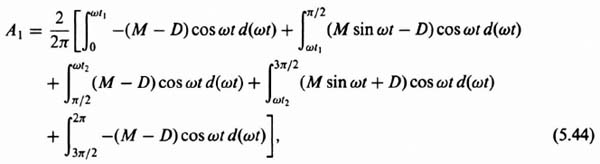

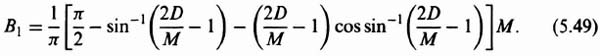

Notice that the output waveshape is neither an odd nor an even function. This means that the Fourier series of the output waveshape has both AK and BK components. Because the describing function is concerned only with the fundamental component of the output, however, we are interested only in A1 and B1. From Eqs. (5.39) through (5.43), we can express A1 as

where

and

and B1 can be expressed as

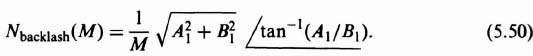

Integrating Eqs. (5.44) and (5.47), we obtain the following results:

Therefore, the describing function for backlash is given by

This expression is valid only when the positive slope of the backlash characteristic as shown in Figure 5.14 is unity. If it is any other value, such as K1, then Eq. (5.50) is modified, because the right-hand sides of the defining equations (5.40) and (5.42) would have to be modified.

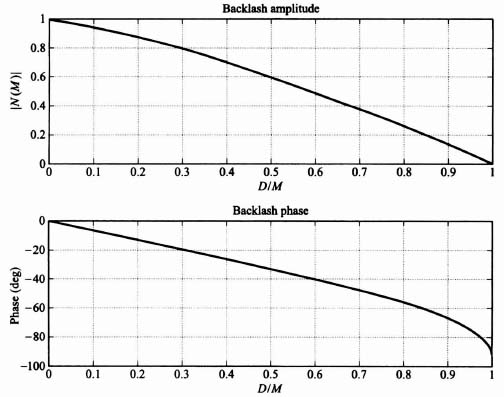

Notice the describing function for backlash is only a function of the amplitude of the input and not of the frequency. Figures 5.15 and 5.16, which have been obtained from Eqs. (5.48) through (5.50), are sketches of the amplitude and phase characteristics, respectively, of the describing function as a function of the ratio D/M. Notice that a phase lag occurs at low input amplitudes. This phase lag may introduce problems of stability in a feedback system.
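The amplitude and phase behavior described above can be reproduced with a few lines of code. The sketch below uses the unity-slope backlash expressions exactly as they are coded in the Basic program of Table 5.3 (normalized A and B terms as functions of D/M); the function name and the complex-number packaging are assumptions made for illustration.

```python
import cmath
import math


def backlash_df(d_over_m):
    """Complex describing function N(M) of unity-slope backlash, 0 <= D/M < 1."""
    x = d_over_m
    if not 0.0 <= x < 1.0:
        raise ValueError("D/M must lie in [0, 1)")
    c = 2.0 * x - 1.0
    a = (4.0 / math.pi) * x * (x - 1.0)  # quadrature (cosine) term, A1/M
    b = (math.pi / 2.0 - math.asin(c) - c * math.sqrt(1.0 - c * c)) / math.pi  # in-phase term, B1/M
    return complex(b, a)


for ratio in (0.0, 0.2, 0.5, 0.8, 0.95):
    n = backlash_df(ratio)
    print(ratio, round(abs(n), 4), round(math.degrees(cmath.phase(n)), 2))
```

The printed phase is zero at D/M = 0 and becomes increasingly negative as D/M grows, that is, as the input amplitude shrinks toward the backlash width.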

Figure 5.15 Amplitude characteristics for the describing function of backlash.

Figure 5.16 Phase characteristics for the describing function of backlash.

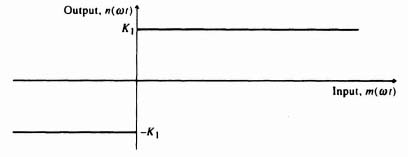

D. Describing Function of an On-Off Element Having Hysteresis

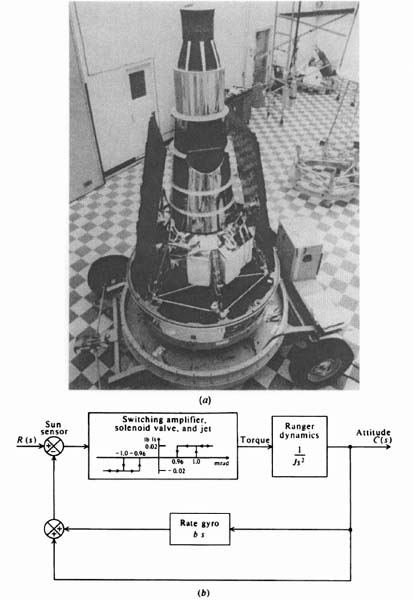

A class of systems of great practical importance is that of on-off control systems. In these systems, as soon as the error signal exceeds a certain level, a relay switches on full corrective torque having proper polarity. When the error falls below a certain level, all the corrective torque is removed. These simple and relatively inexpensive devices find many practical uses in thermostatic control of heat, in automobile voltage regulators, in aircraft and space-vehicle control applications where space and weight limitations are very critical, and so on.

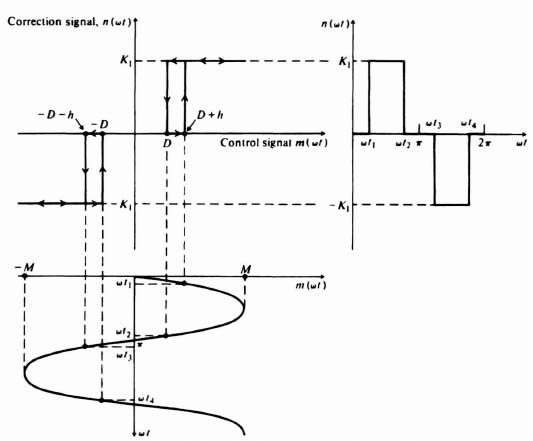

The heart of an on-off control system, the relay or contactor, has a variety of characteristics. For the purpose of deriving a describing function, we consider a three-position contactor exhibiting hysteresis characteristics; this includes the two-position contactor as a limiting case. Figure 5.17 illustrates the input-output characteristic and the waveshapes for such a device. The hysteresis effect occurs because of the different values of control signal required for corrective torque application and its removal. Torque is applied when the control signal reaches ±(D + h), but it is not removed until the control signal equals ±D. The relationship between input and output for this nonlinearity can be expressed by the following equations:

Figure 5.17 On-off characteristics for a three-position contactor having hysteresis.

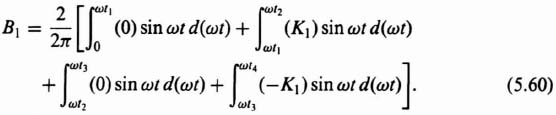

Notice that the output waveshape is neither an odd nor an even function. The Fourier series of the output waveshape, therefore, contains AK and BK components. From Eqs. (5.51)–(5.54), we can express A1 as

where

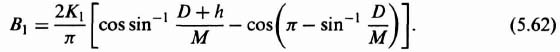

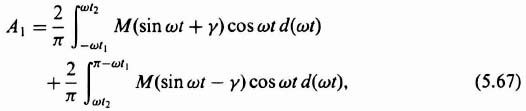

and B1 as

Integrating Eqs. (5.55) and (5.60), we obtain the expressions

The describing function is given by the expression

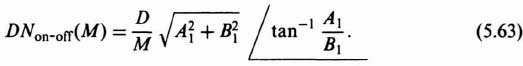

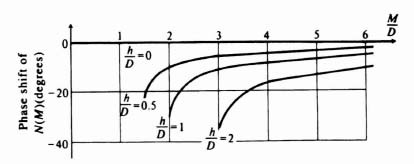

Notice that the describing function for this device is a function only of the amplitude of the input and not of frequency. Figures 5.18 and 5.19, which have been obtained from Eqs. (5.61) and (5.62), are sketches of the normalized amplitude and phase characteristics of the describing function as a function of the ratio M/D, respectively. Notice that the phase lag is zero when hysteresis is not present and grows progressively worse as the hysteresis increases.
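A short numerical sketch makes the effect of hysteresis on phase lag concrete. It assumes the switching behavior stated above (torque applied at ±(D + h), removed at ±D) and integrates the resulting rectangular output over its conduction interval; the output magnitude `t` is a hypothetical symbol introduced here, and the closed forms are a derivation under these assumptions rather than a transcription of the numbered equations.

```python
import cmath
import math


def relay_df(m, d, h, t=1.0):
    """Describing function of a three-position relay with hysteresis.

    m: input amplitude M, d: drop-out level D, h: hysteresis width,
    t: output magnitude while the relay is closed (hypothetical symbol).
    """
    if m <= d + h:
        return 0j  # the input never reaches the pull-in level
    alpha = math.asin((d + h) / m)  # pull-in angle: M sin(alpha) = D + h
    beta = math.asin(d / m)         # drop-out at pi - beta: M sin(beta) = D
    b1 = (2.0 * t / math.pi) * (math.cos(alpha) + math.cos(beta))  # in-phase term
    a1 = -(2.0 * t / math.pi) * (h / m)                            # quadrature term
    return complex(b1, a1) / m


for hyst in (0.0, 0.2, 0.4):
    n = relay_df(2.0, 0.5, hyst)
    print(hyst, round(abs(n), 4), round(math.degrees(cmath.phase(n)), 2))
```

With h = 0 the quadrature term vanishes and the phase is zero; with D = h = 0 the expression reduces to the familiar ideal-relay value 4t/(πM).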

Figure 5.18 Amplitude characteristics for the describing function of an on-off device having hysteresis.

Figure 5.19 Phase characteristics for the describing function of an on-off device having hysteresis.

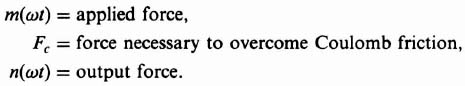

E. Describing Function of Coulomb Friction and Stiction

In Chapter 3‡ we considered the effect of damping in linear systems. Damping, a form of friction, is known as viscous friction. Its characteristic is that its magnitude is always proportional to velocity, as illustrated in Figure 5.20a. The damping factor B is the slope of this characteristic. Another type of frictional force commonly found in control systems is known as Coulomb friction. Unlike viscous friction, it is not proportional to velocity but is a constant force that always opposes the velocity. This nonlinear phenomenon is illustrated in Figure 5.20b, where the Coulomb friction force is denoted by ±Fc. Another nonlinear form of frictional force, known as static friction or stiction, is the value of the frictional force at zero velocity. It is usually denoted by ±Fs. Figure 5.20c illustrates the composite frictional-force characteristics generally encountered when controlling some load.

To determine the describing function of Coulomb friction, we can express the relationship between the input and output as

Figure 5.20 (a) Viscous friction characteristics. (b) Coulomb friction characteristics. (c) Composite friction characteristics illustrating viscous, Coulomb, and static friction (stiction).

where

The corresponding steady-state waveforms are given by Figure 5.21. It should be noted that the discontinuities of the output waveform correspond to zero velocity, because the force required to overcome Coulomb friction changes sign at these instants. The relationship between the input and output forces is

where


Figure 5.21 Coulomb friction characteristics.

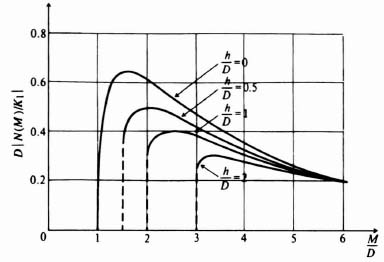

The fundamental components of the output A1 and B1 are

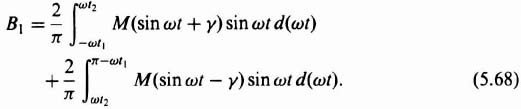

Integrating Eqs. (5.67) and (5.68), we obtain the following expressions:

The resulting expression for Coulomb friction is

Observe that the describing function for Coulomb friction depends only on the amplitude of the input and not on its frequency. The gain-phase relationship of the describing function for Coulomb friction is illustrated in Figure 5.22.

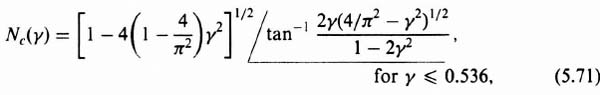

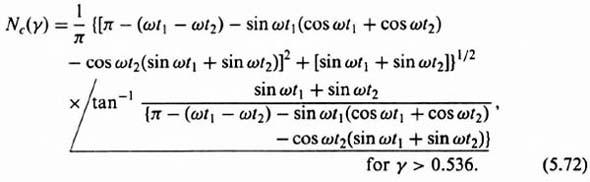

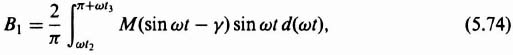

The describing function for the simultaneous occurrence of both Coulomb friction and stiction is considered next. Waveform relationships between the applied force m(ωt), the output force n(ωt), and the forces necessary to overcome Coulomb friction and stiction Fc and Fs are given in Figure 5.23. The expressions for the fundamental components of the Fourier coefficients are

Figure 5.22 Gain-phase characteristics for the describing function of Coulomb friction.

Figure 5.23 Coulomb friction and stiction characteristics.

where

The describing function for the combined case of Coulomb friction and stiction is obtained by integrating Eqs. (5.73) and (5.74). The resultant expression is

Observe from this expression that the describing function for the combined case of Coulomb friction and stiction is a function of the amplitude of the input and the relative magnitudes of friction but not of frequency. Figure 5.24 illustrates the gain-phase relationship of the describing function for the combined case of Coulomb friction and stiction.

Figure 5.24 Gain-phase characteristics for the describing function of Coulomb friction and stiction.

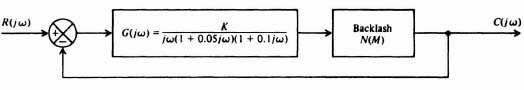

5.9. USE OF THE DESCRIBING FUNCTION TO PREDICT OSCILLATIONS

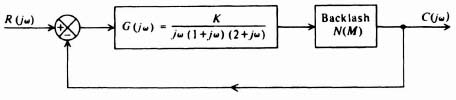

The describing function of a nonlinear element can be utilized to determine the existence of a limit cycle in a nonlinear control system in an approximating manner [28]. Let us reconsider the general nonlinear system illustrated in Figure 5.5. If we assume that the describing function of the nonlinearity is N(M, ω) and R(jω) = 0, let us determine the conditions under which an assumed oscillation m(ωt) can be sustained, where

The fundamental component of n(ωt) is given by

Expressing Eqs. (5.76) and (5.77) as the real part of a complex exponential, we obtain the following expressions:

The output of the linear elements is given by

where

Equation (5.78) must equal −m(ωt) if the initial assumption is to hold. Thus, dropping the real-part notation, we obtain the following expression:


This can be rewritten as

For a sustained oscillation, the bracketed term in Eq. (5.79) must vanish, since M ≠ 0. Therefore,

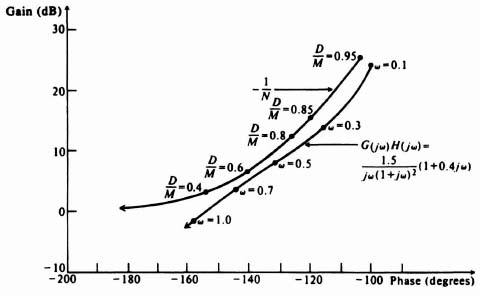

and any combination of values of input amplitude M and frequency ω which satisfy the equation

are capable of providing a limit cycle. If a combination of amplitude and frequency can be found which satisfies Eq. (5.81), the feedback control system can have a sustained oscillation.
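The chain of reasoning above can be summarized compactly in phasor form. The following is a sketch, assuming the unity-feedback loop of Figure 5.5 with R(jω) = 0 and an assumed oscillation m(ωt) = M sin ωt; imaginary parts are used because the assumed input is a sine, and the same condition results from the real-part notation of the text. The intermediate lines are a reconstruction and are not meant to reproduce the numbered equations verbatim.

```latex
\begin{aligned}
m(\omega t) &= M\sin\omega t = \operatorname{Im}\!\left[M e^{j\omega t}\right] \\
n_1(\omega t) &= \operatorname{Im}\!\left[N(M,\omega)\,M e^{j\omega t}\right] \\
c(\omega t) &= \operatorname{Im}\!\left[G(j\omega)\,N(M,\omega)\,M e^{j\omega t}\right] \\
c(\omega t) = -m(\omega t) \;&\Longrightarrow\; M\left[1 + G(j\omega)\,N(M,\omega)\right] e^{j\omega t} = 0 \\
&\Longrightarrow\; G(j\omega) = -\frac{1}{N(M,\omega)} \qquad (M \neq 0)
\end{aligned}
```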

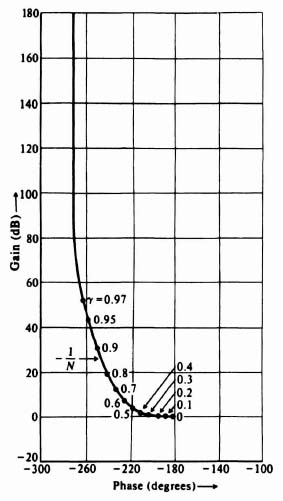

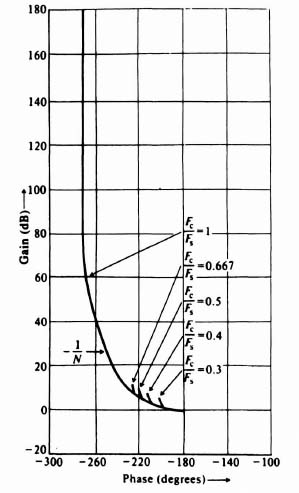

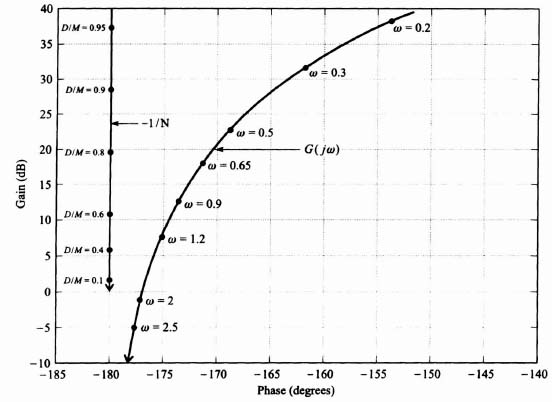

From experience, I have found the gain-phase plot to be the most revealing technique for stability analysis when utilizing the describing-function method. Two separate sets of loci, corresponding to G(jω) and −1/N(M, ω) of Eq. (5.81), are sketched on the gain-phase plot. Intersections of the two loci indicate possible solutions to Eq. (5.81) and yield information as to the magnitude and frequency ω of sustained oscillation. If no intersections result, an oscillation is unlikely. (Remember, the describing function is an approximation.) The distance to a possible intersection can be used as a criterion of closeness to oscillation. This is next illustrated on the gain-phase diagram for several representative systems.

A. Example 5.9-1

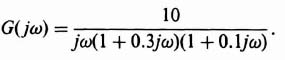

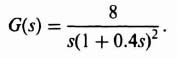

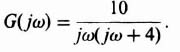

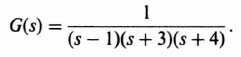

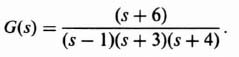

Figure 5.25a illustrates an analysis via the gain-phase diagram for a nonlinear system containing a dead zone where K1 = 1. The figure illustrates a stable system where a dead zone is present and

Figure 5.25b illustrates a system where a dead zone is present and

An intersection occurs at a frequency of approximately 4.4 rad/sec and a value of D/M of 0.09. This is to be interpreted as the frequency and amplitude which satisfy Eq. (5.81) and which result in a limit cycle. Notice that the system is unstable from a linear viewpoint, because the −1, 0 point is enclosed.

The interpretation of this situation is quite illuminating. Because the normalized describing function of a dead zone is less than one, its effect is to reduce the overall system gain. When multiplied by the transfer function given by Eq. (5.82), which would produce a stable feedback system by itself, its effect is to make the system even more stable. Therefore, in order to illustrate a limit cycle in this nonlinear system containing a dead zone, it is necessary to illustrate a system that produces an unstable control system from a linear viewpoint as well. The frequency function of Eq. (5.83) satisfies this requirement, as indicated in Figure 5.25b.

It is interesting to observe that this nonlinear control system can only be unstable if we also make it unstable from a linear viewpoint. The next question to answer is how will it behave in an unstable mode, from the linear or nonlinear aspect? A linear system that is unstable has an unbounded response to a bounded input. (Review the BIBO concept presented in Section 6.1.‡) A nonlinear system that is unstable can have a stable or unstable limit cycle. The limit cycle in this problem is a stable one, based on the rule presented in the following problem (see Figure 5.27). Therefore, nonlinear theory states that the system would oscillate at a fixed frequency ω and amplitude M defined by the intersection of the two curves in Figure 5.25b. However, linear theory states that the system would have an unbounded output to any bounded input (including noise), and the linear theory viewpoint would be dominant in this problem.

Figure 5.25 (a) Gain-phase diagram stability analysis of nonlinear system containing a dead zone, where

(b) Gain-phase diagram stability analysis of nonlinear system containing a dead zone, where


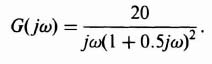

B. Example 5.9-2

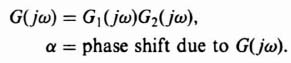

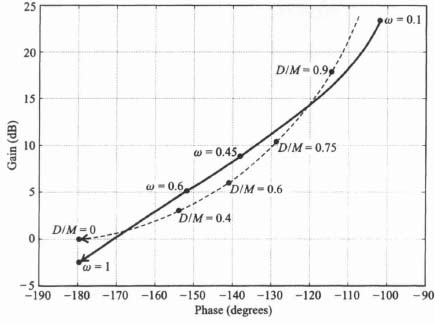

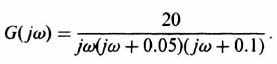

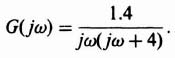

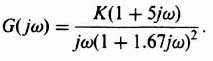

Figure 5.26 illustrates the gain-phase diagram analysis for a nonlinear system containing backlash, where

Figure 5.26 Gain-phase diagram analysis of nonlinear system containing backlash.

This gain-phase diagram was obtained using MATLAB [29–31], and the program is contained in the M-file that is part of my AMCSTD Toolbox disk, which can be retrieved free from The MathWorks, Inc. anonymous FTP server at ftp://ftp.mathworks.com/pub/books/advshinners. Notice that the system has two points of intersection corresponding to a pair of limit cycles. They occur at ω = 0.8139, D/M = 0.1742 and ω = 0.2786, D/M = 0.8398. These two limit cycles must now be examined to determine whether they are stable or unstable.

A generalized rule can be established for determining whether a limit cycle is stable or unstable [32]. If the two loci are assigned a sense of direction so that the linear locus G(jω) is pointing in the direction of increasing frequency and the nonlinear locus −1/N is pointing in the direction of increasing amplitude M (decreasing D/M in our example), then a stable limit cycle occurs when the nonlinear locus appears to an observer, stationed on the linear locus and facing in the direction of increasing frequency, to cross from left to right in the direction of increasing amplitude M. If the opposite occurs, then the limit cycle is unstable and the state of the system is divergent. As an example of applying this rule, consider the gain-phase plot of Figure 5.27. Here, we see that there are two unstable limit cycles (divergent states) and three stable limit cycles (convergent states). The unstable limit cycles cannot maintain themselves in the presence of minute disturbances; the stable limit cycles will always maintain themselves in the presence of disturbances.

Applying this generalized rule for determining whether the limit cycles in Figure 5.26 are stable or unstable, we find that ω = 0.8139, D/M = 0.1742 corresponds to a stable limit cycle and the other intersection corresponds to an unstable limit cycle. The stable limit cycle corresponds to a convergent point because disturbances at either side tend to converge to these conditions. This is contrasted with the unstable limit cycle at ω = 0.2786, D/M = 0.8398, which is a divergent point, because disturbances that are not large enough to reach this intersection will decay, and disturbances that are larger will result in oscillations that tend to increase in amplitude until the stable limit cycle is reached.
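The two intersections quoted for Figure 5.26 can be cross-checked numerically by solving G(jω)N(M) = −1 directly. The sketch below is an illustration only: it assumes the linear portion is G(jω) = 1.5/[jω(1 + jω)²], a form inferred from the quoted intersection values rather than read from Eq. (5.84), and it uses the backlash expressions coded in Table 5.3. All function names and the bracketing frequencies are hypothetical choices.

```python
import cmath
import math


def backlash_df(x):
    """N(M) for unity-slope backlash, x = D/M in [0, 1); formulas as in Table 5.3."""
    c = 2.0 * x - 1.0
    a = (4.0 / math.pi) * x * (x - 1.0)                      # quadrature part A1/M
    root = math.sqrt(max(0.0, 1.0 - c * c))
    b = (math.pi / 2.0 - math.asin(c) - c * root) / math.pi  # in-phase part B1/M
    return complex(b, a)


def g_lin(w, k=1.5):
    """ASSUMED linear part G(jw) = k / (jw (1 + jw)^2); inferred, not quoted."""
    s = 1j * w
    return k / (s * (1.0 + s) ** 2)


def dm_for_phase(target):
    """Bisect for the D/M at which arg N equals target (radians, -pi/2 < target < 0)."""
    lo, hi = 1e-9, 1.0 - 1e-9
    for _ in range(80):
        mid = 0.5 * (lo + hi)
        if cmath.phase(backlash_df(mid)) > target:
            lo = mid  # too little lag: move toward larger D/M
        else:
            hi = mid
    return 0.5 * (lo + hi)


def mismatch(w):
    """Return (|N| available - |N| required, D/M) at the D/M whose phase matches."""
    required = -1.0 / g_lin(w)  # G N = -1  =>  N = -1/G
    x = dm_for_phase(cmath.phase(required))
    return abs(backlash_df(x)) - abs(required), x


def find_limit_cycle(w_lo, w_hi):
    """Bisect on frequency between two values where the mismatch changes sign."""
    f_lo = mismatch(w_lo)[0]
    for _ in range(80):
        w_mid = 0.5 * (w_lo + w_hi)
        f_mid = mismatch(w_mid)[0]
        if (f_mid > 0.0) == (f_lo > 0.0):
            w_lo, f_lo = w_mid, f_mid
        else:
            w_hi = w_mid
    w = 0.5 * (w_lo + w_hi)
    return w, mismatch(w)[1]


upper = find_limit_cycle(0.5, 0.95)  # brackets chosen by inspection of the loci
lower = find_limit_cycle(0.1, 0.5)
print("omega = %.4f, D/M = %.4f" % upper)
print("omega = %.4f, D/M = %.4f" % lower)
```

The search works in two nested steps: for each trial frequency it first finds the D/M whose describing-function phase matches the phase required by −1/G(jω), and then adjusts the frequency until the magnitudes also agree.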

Figure 5.27 Illustration of stable and unstable limit cycles.

The describing function analysis presented in this book uses the following special functions, which are part of my AMCSTD Toolbox: relays, back_lsh.m, and dead_zn.m. They determine the following:

- back_lsh.m determines the value of N(M) in Eq. (5.50) for the describing function of backlash, and is then used for the gain-phase plot. Values of D/M and ω at the various limit-cycle intersections, if any, are then provided as an output after the values for the linear transfer function, G(jω), and an initial estimate of where D/M is at the intersection are provided as inputs to back_lsh.m.

- dead_zn.m determines the value of N(M) in Eq. (5.31) for the describing function of dead zone, and is then used for the gain-phase plot. Values of D/M and ω at the various limit-cycle intersections, if any, are then provided as an output after the values for the linear transfer function, G(jω), and an initial estimate of where D/M is at the intersection are provided as inputs to dead_zn.m.

- relays determines the value of N(M) in Eq. (5.63) for the describing function of an on-off element having hysteresis, which can be used for the gain-phase plot. Values at the various limit-cycle intersections, if any, can be determined after the values for the linear transfer function, G(jω), are added to the gain-phase plot.

The calculations performed in back_lsh.m and dead_zn.m are based on the describing function definitions, and are analytical and very accurate as opposed to those results obtained using graphical techniques. The determination of N(M) by relays for a nonlinear on-off element having hysteresis is based on this nonlinear device's describing-function definition, but the solution for limit cycles using relays is based on graphical techniques. These three functions could be used as illustrations by the student to implement functions for the other nonlinear characteristics presented (e.g., saturation). My AMCSTD Toolbox can be retrieved free from The MathWorks, Inc. anonymous FTP server at ftp://ftp.mathworks.com/pub/books/advshinners.

5.10. COMPENSATION AND DESIGN OF NONLINEAR CONTROL SYSTEMS USING DESCRIBING FUNCTIONS

The purpose of this section is to illustrate the procedure for compensating for undesirable nonlinearities in a system. As an example, we consider the nonlinearity to be backlash. The gain-phase plot will be used for analysis, although we could use the Nyquist diagram just as well.

Oscillating input signals, having frequencies very much greater in magnitude than the system bandwidth, can be used to maintain the output of a system containing backlash at its correct average value. This technique is known as dither. It is effective in reducing the influence of very small amplitude nonlinearities. However, the resultant increased wear on the system is a serious disadvantage of this simple approach to the problem. The effect of dither on the describing function is analyzed in References [33–37] where the “dual input describing function” is discussed.* For systems which are to operate continuously for long periods of time, it is necessary to utilize other approaches that will eliminate backlash. Reducing the system gain, adding phase-lead networks, and introducing rate feedback in parallel with position feedback are all relatively simple methods that can be used to minimize the effects of backlash. We next demonstrate the theoretical effects of these techniques on backlash [1,28].

Let us reconsider the nonlinear system analysis of Figure 5.26. This sketch illustrates that a nonlinear system having the form shown in Figure 5.5, where

and having a backlash element, was indeed oscillatory. We demonstrate how this system can be stabilized by each of the following electrical methods:

- Reducing the system gain.

- Adding a phase-lead network.

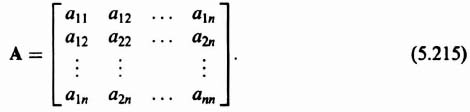

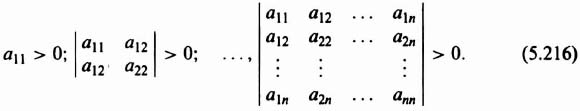

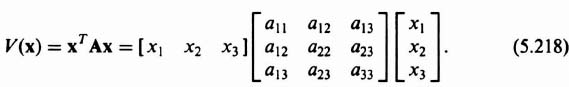

- Introducing rate feedback in parallel with position feedback.

A. Reducing the System Gain

In order to consider the effects of gain changes, let us rewrite G(jω) of Eq. (5.80) as

where K represents the system gain and G'(jω) represents only the poles and zeros of the linear part of the system. Therefore, Eq. (5.81) can be rewritten as

By reducing the gain K, the limit cycle is eliminated because the curve of −1/KN is moved upward. Figure 5.28 illustrates how the oscillation can be eliminated by reducing the system gain from 1.5 to unity. At a gain setting of approximately 1.3, the curves of G'(jω) and −1/KN just clear each other. A gain setting of unity was chosen in order to maintain some margin of safety.
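In symbols, the gain factoring described above can be sketched as follows, using the text's own K and G'(jω). The decibel form shows why a smaller K raises the nonlinear locus on the gain-phase plot.

```latex
G(j\omega) = K\,G'(j\omega), \qquad
G(j\omega) = -\frac{1}{N} \;\Longrightarrow\; G'(j\omega) = -\frac{1}{K N}, \qquad
20\log_{10}\left|\frac{1}{K N}\right| = 20\log_{10}\left|\frac{1}{N}\right| - 20\log_{10} K
```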

B. Addition of a Phase-Lead Network

A passive phase-lead network can also be used to eliminate the oscillation. The transfer function of this network is given by

where αT > T. (The attenuation 1/α is nullified by increasing the system gain by α.) The compensated value of G(jω), G(jω)comp, is given by

Figure 5.28 Compensation of a nonlinear system containing backlash, by reduction of the system gain.

The best approach for designing the phase-lead network is to use Eqs. (2.7) and (2.8), which are repeated here for solving for ωmax and φmax respectively:

To choose ωmax and φmax we analyze Figures 2.11 and 5.26. We will assume that the 1/α attenuation of the phase-lead network, shown in Figure 2.11, is compensated for by the control system's amplifier. In selecting ωmax and φmax we must take into account from Figure 2.11 that the gain of the phase-lead network increases with frequency, while the low-frequency portion (below 1/αT) is unaffected (because the 1/α attenuation is compensated for). Therefore, it is advantageous to place ωmax at a relatively high frequency, such as near the stable limit cycle at ω = 0.8139 and D/M = 0.1742, rather than at the frequency where the two curves have maximum phase overlap (at approximately ω = 0.7). Accordingly, we will add a phase lead of 29° at ωmax = 0.9. The result is αT = 1.9 and T = 0.65, and these values eliminate the limit cycles, as illustrated in Figure 5.29. It is important to recognize, however, that this is not a unique solution. It is only one of many possible solutions, as can be noted from studying the gain-phase diagram.
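The arithmetic behind these network values can be sketched in a few lines. The sketch assumes that Eqs. (2.7) and (2.8) are the standard lead-network relations ωmax = 1/(T√α) and sin φmax = (α − 1)/(α + 1) for a network of the form (1 + jαTω)/(1 + jTω); the function name is illustrative.

```python
import math


def lead_network(phi_max_deg, w_max):
    """Return (alpha, T) for a lead network (1 + j*alpha*T*w)/(1 + j*T*w)."""
    s = math.sin(math.radians(phi_max_deg))
    alpha = (1.0 + s) / (1.0 - s)         # from sin(phi_max) = (alpha - 1)/(alpha + 1)
    t = 1.0 / (w_max * math.sqrt(alpha))  # from w_max = 1/(T*sqrt(alpha))
    return alpha, t


alpha, t = lead_network(29.0, 0.9)
print("alpha*T =", round(alpha * t, 2), " T =", round(t, 2))
```

Under these assumptions the computed time constants agree with the quoted αT = 1.9 and T = 0.65 to the two significant figures given in the text.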

Figure 5.29 Compensation of a nonlinear system containing backlash, by addition of a phase-lead network.

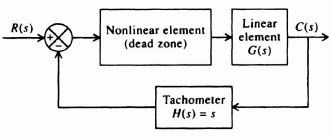

C. Introduction of Rate Feedback

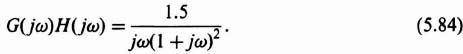

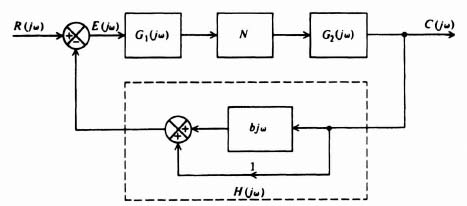

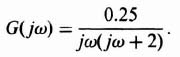

Addition of rate feedback in parallel with position feedback can also be a very effective method for eliminating the oscillation. For this configuration, which is illustrated in Figure 5.30, the value of the system feedback element H(jω) is 1 + bjω which represents a pure zero. Therefore, the oscillation criterion for this configuration must be modified from that given by Eq. (5.81) to the following expression:

With rate feedback, the value of G(jω)H(jω) for the system being considered is given by

The best approach for selecting b is to recognize from Figure 5.30 that the rate feedback in parallel with position feedback results in a pure zero term (1 + bjω) as shown in Eq. (5.93). Therefore, it contributes a phase lead of tan⁻¹(bω). Analysis of Figure 5.26 indicates that the maximum phase overlap is approximately 9° at ω = 0.7. We will design for an additional 7° for safety, and we can solve for b from

Therefore,

A value of b = 0.4 will eliminate the limit cycle, as is illustrated in Figure 5.31, and a relatively safe margin is achieved. At a value of b approximately equal to 0.25, the curves of G(jω)H(jω) and −1/N just clear each other.
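As a check on the arithmetic, assuming the design target is the 9° phase overlap plus the 7° safety allowance, that is, a total lead of 16° at ω = 0.7, the selection of b can be sketched as:

```latex
\tan^{-1}(b\,\omega)\Big|_{\omega = 0.7} = 9^\circ + 7^\circ = 16^\circ
\quad\Longrightarrow\quad
b = \frac{\tan 16^\circ}{0.7} \approx \frac{0.287}{0.7} \approx 0.41
```

This rounds to the value b = 0.4 used in Figure 5.31.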

Figure 5.30 Nonlinear system containing rate and position feedback.

Figure 5.31 Compensation of a nonlinear system containing backlash, by the introduction of rate feedback.

A complete case study for the design of the positioning system of a tracking radar that uses conventional linear techniques and the describing function is presented jointly in Section 7.3. In this problem we show the separate linear and nonlinear considerations for the design of an actual control system, and their joint relationships.

5.11. DESCRIBING-FUNCTION ANALYSIS AND DESIGN USING MATLAB [29]

The use of MATLAB to analyze linear control systems has been abundantly illustrated in previous chapters of this book. This section illustrates the use of MATLAB for describing-function analysis and design.

The AMCSTD Toolbox provides the following describing function utilities to supplement MATLAB and the Control System Toolbox:

- “deadzone” provides the normalized gain (N) for a given D/M and it also allows the refinement of a solution to the intersection of the dead-zone curve (−1/N) and a given transfer function for the linear portion of the control system. The AMCSTD Toolbox's command for this utility is

- “backlash” provides the gain (N) for any given D/M, and it also allows the refinement of a solution to the intersection of the backlash curve (−1/N) and a given transfer function for the linear portion of the control system. The AMCSTD Toolbox's command for this utility is

- “relays” is a hysteresis utility with relay being a subset of its actual capability. It provides the gain (N) for any given set(s) of M, D, and H or H/D. It also allows the refinement of a solution to the intersection of the hysteresis curve (−1/N) and a given transfer function for the linear portion of the control system. The AMCSTD Toolbox's command for this utility is

The MathWorks has available a Nonlinear Control Design Toolbox for nonlinear control system analysis, which is illustrated in the AMCSTD Toolbox. The DEMO section of the AMCSTD Toolbox has many illustrations of the analysis of nonlinear systems using MATLAB.

A. Example 5.11-1

Let us now demonstrate the MATLAB program which provides the describing-function analysis illustrated in Figure 5.26. This is also illustrated in the DEMO example 5.2 in the AMCSTD Toolbox.

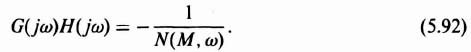

We will reconsider the nonlinear control system whose linear portion is represented by Eq. (5.84), which is repeated here,

and whose nonlinear portion is backlash. The MATLAB program that produces the backlash curves corresponding to Figures 5.15 and 5.16 is shown in Table 5.1, and the resulting curves are repeated in Figure 5.32. The MATLAB program that plots the describing-function analysis of Figure 5.26 is shown in Table 5.2, and the resulting plot is repeated in Figure 5.33.

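Table 5.1. MATLAB Program for Obtaining the Describing Function of Backlash

% D/M values from just below 1 down to 0

dm = linspace(.999,0);

% complex describing function N(M) from the AMCSTD Toolbox

n = back_lsh(dm);

% upper plot: amplitude of N(M) versus D/M

subplot(211);

plot(dm, abs(n));

grid;

title('Backlash Amplitude')

xlabel('D/M')

ylabel('|N(M)|')

% lower plot: phase of N(M) in degrees versus D/M

subplot(212);

plot(dm, angle(n)*180/pi);

grid;

title('Backlash Phase')

xlabel('D/M')

ylabel('Phase(Deg)')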

Figure 5.32 Describing-function for backlash.

Table 5.2. MATLAB Program for Obtaining the Describing Function Analysis for a Control System Containing Backlash and whose Linear Portion is Defined by Eq. (5.96)

num = [0 1.5];

den = [1 1];

w = logspace(-1,0);

[mag,ph] = bode(num,den,w);

% gain of the linear portion in dB

magdb = 20*log10(mag);

dm = linspace(.95,0);

n = back_lsh(dm);

% locus of -1/N

ninv = (0*n-1)./n;

xlabel('Phase(degrees)')

ylabel('Gain(dB)')

w = [.1 .45 .6 1];

dm = [.9 .75 .6 .4 0];

grid;

title('Nonlinear Analysis for System Containing Backlash')

5.12. DIGITAL COMPUTER PROGRAMS FOR PERFORMING THE DESCRIBING-FUNCTION ANALYSIS

For those who do not have access to MATLAB, which has now become an industry standard (more or less), this book also provides another digital computer program for performing the describing function analysis. This approach for providing both MATLAB and other digital computer programs which are not dependent on COTS (commercial-off-the-shelf software) is used throughout this book.

Figure 5.33 Describing-function analysis for a nonlinear control system containing backlash, whose linear portion is described by Eq. (5.84).

As shown in Sections 5.10 and 5.11 with the use of MATLAB, the digital computer is very useful for the construction of the describing function [25,38]. The computer can compute −1/N and, as indicated in Chapter 1, G(jω). This section illustrates the procedure used to analyze and compensate a practical nonlinear system containing backlash with the aid of a working digital computer program. It is based on the method illustrated in Sections 5.7 through 5.10. The Basic language will be used for this program [39,40].

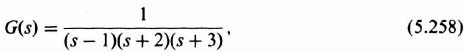

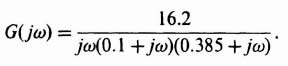

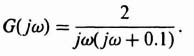

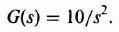

Let us consider the system illustrated in Figure 5.5, where

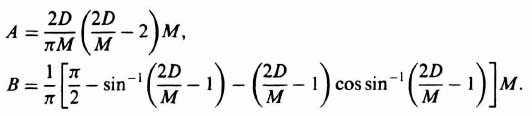

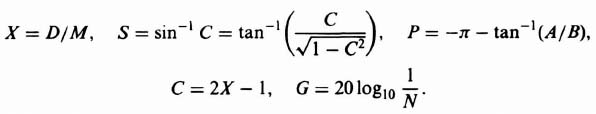

and N corresponds to backlash. We know from Eq. (5.50) that

where

Note that the subscripts have been dropped from the A and B terms for simplicity. The coding symbols used are as follows:

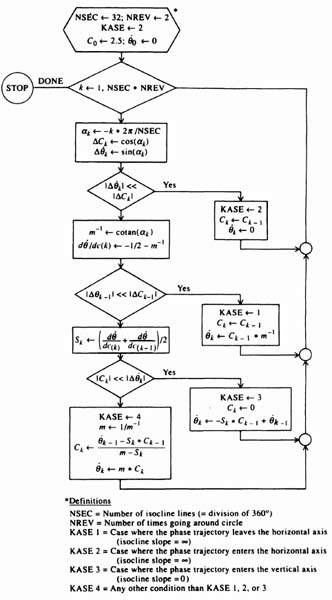

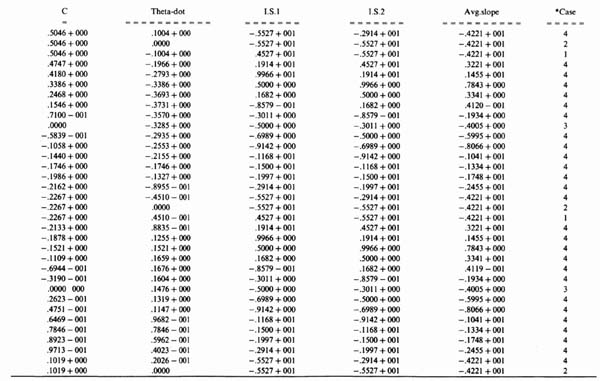

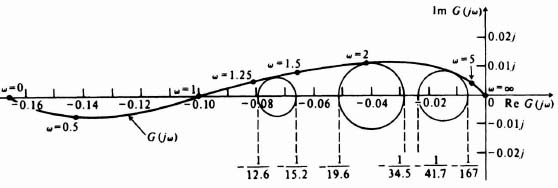

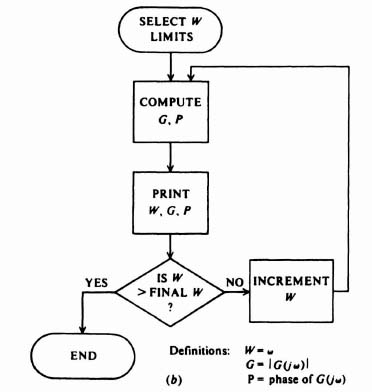

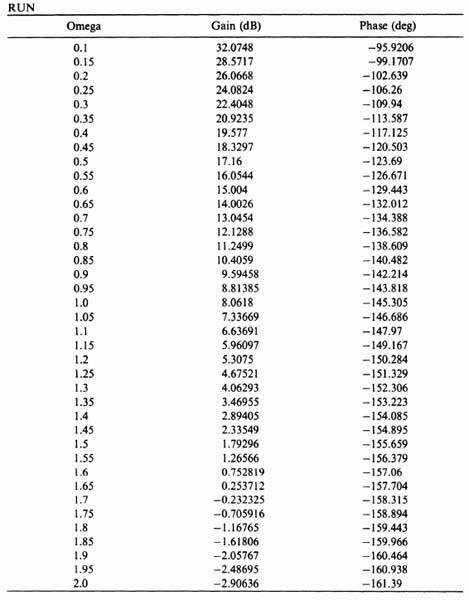

Figure 5.34a illustrates the logic flow diagram for developing the program for computing −1/N. Table 5.3 illustrates the actual program for computing −1/N. The reader should compare these two in order to understand the program fully. Table 5.4 illustrates the computer run for calculating −1/N. Notice that it was necessary to compute additional values of 0.9 < D/M < 0.99 at the end of the computer run since it was found that a limit cycle existed in this region. Figure 5.34b illustrates the logic flow diagram for determining G(jω). In the coding, G represents the gain of G(jω), P represents its phase, and W represents ω. Table 5.5 illustrates the actual program for computing G(jω) and Table 5.6 illustrates the computer run for calculating G(jω).
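For readers who do not have a Basic interpreter at hand, the logic of Tables 5.3 and 5.5 can be sketched in Python. This is a line-by-line rendering of the arithmetic in those two listings (with K = 4 taken from the DATA statement of Table 5.5); the function names are hypothetical, and the final lines simply place the two loci side by side near the stable limit cycle quoted for Figure 5.35.

```python
import math


def minus_inv_n_row(x):
    """Gain (dB), phase (deg), and |N| of -1/N for backlash at x = D/M (Table 5.3)."""
    c = 2.0 * x - 1.0
    s = math.asin(c)  # the Basic listing builds the arcsine from ATN
    a = (4.0 / math.pi) * x * (x - 1.0)
    b = (math.pi / 2.0 - s - c * math.cos(s)) / math.pi
    n = math.hypot(a, b)
    gain_db = 20.0 * math.log10(1.0 / n)
    phase_deg = -180.0 - math.degrees(math.atan(a / b))
    return gain_db, phase_deg, n


def g_row(w, k=4.0):
    """Gain (dB) and phase (deg) of G(jw) as coded in Table 5.5."""
    ratio = k * k * (1.0 + 9.0 * w * w) / (w * w * (1.0 + 4.0 * w * w) ** 2)
    gain_db = 10.0 * math.log10(ratio)
    phase_deg = -90.0 + math.degrees(math.atan(3.0 * w) - 2.0 * math.atan(2.0 * w))
    return gain_db, phase_deg


# Compare the two loci near the stable limit cycle read from the gain-phase plot.
print("-1/N at D/M = 0.41:", [round(v, 3) for v in minus_inv_n_row(0.41)[:2]])
print("G(jw) at w = 1.36: ", [round(v, 3) for v in g_row(1.36)])
```

The two printed gain-phase pairs lie close together, which is what an intersection read graphically from Figure 5.35 would lead one to expect.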

The values for −1/N and G(jω) are illustrated on the gain-phase diagram in Figure 5.35. It indicates limit cycles at ω = 1.36, D/M = 0.41 (stable) and ω = 0.27, D/M = 0.945 (unstable). As illustrated previously in Section 5.10, this nonlinear system can be compensated by lowering the gain, by the addition of a phase-lead network or rate feedback. A similar analysis indicates that at K = 1.65, a phase-lead network given by

Table 5.3. Computer Program for Computing −1/N (Basic Program)

1 REM DESCRIBING FUNCTION FOR BACKLASH

10 PRINT "D/M", "GAIN(DB)", "PHASE(DEGREES)", "N"

20 READ X1,X2,D

30 FOR X=X1 TO X2 STEP D

35 REM X IS D/M

40 LET C=2*X-1

45 REM S IS THE ARCSINE OF C, FORMED FROM ATN

50 LET S=ATN(C/SQR(1-C^2))

60 LET A=1.27324*X*(X-1)

70 LET B=0.31831*(1.570796-S-C*COS(S))

80 LET N=SQR(A^2+B^2)

85 REM G AND P ARE THE GAIN (DB) AND PHASE (DEG) OF -1/N

90 LET G=20*0.43429448*LOG(1/N)

100 LET P=-180-57.29578*ATN(A/B)

110 PRINT X,G,P,N

120 NEXT X

130 DATA 0.05, 0.95, 0.05

140 END

Figure 5.34 (a) Logic flow diagram for determination of −1/N.

Figure 5.34 (b) Logic flow diagram for determination of G(jω).

Table 5.4. Results of Computer Analysis for −1/N

and rate feedback having a constant of 0.47 result in a 3-dB margin of safety. The system compensated with a phase-lead network and rate feedback is illustrated in Figure 5.35.

Table 5.5. Computer Program for Computing G(jω) (Basic Program)

10 PRINT "OMEGA", "GAIN(DB)", "PHASE(DEG)"

20 READ K

30 READ W1,W2,D

40 FOR W=W1 TO W2 STEP D

50 LET G=4.3429448*LOG(K*K*(1+9*W*W)/(W*W*(1+4*W*W)^2))

60 LET P=-90+57.29578*(ATN(3*W)-2*ATN(2*W))

70 PRINT W,G,P

80 NEXT W

90 DATA 4,0.1,2.0,0.05

200 END

Figure 5.35 Describing-function analysis based on computer program results.

Table 5.6. Results of Computer Analysis for G(jω)

5.13. PIECEWISE-LINEAR APPROXIMATIONS

Approximating a nonlinearity by means of piecewise-linear segmentation is a very useful tool for analysis. Each segment leads to a relatively simple linear differential equation which can be solved by conventional linear techniques. This method, which is not limited to quasilinear systems, can be applied to nonlinearities of any order. It yields an exact solution if the nonlinearity is itself piecewise linear, and an approximate solution if the nonlinearity must first be approximated by piecewise-linear segments. We illustrate its application by means of an example.

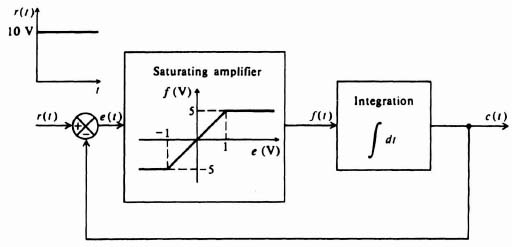

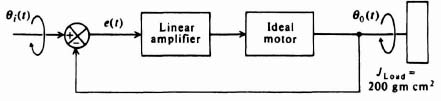

Let us consider saturation. Figure 5.36 illustrates a simple feedback control system containing an integrator and an amplifier which saturates. The amplifier gain is 5 over an input voltage range of ±1 V; for input voltages of greater magnitude, the amplifier saturates. It is quite evident that two distinct linear operating regions for the amplifier exist. Each of these linear regions can be considered separately in a piecewise-linear manner in order to obtain the composite response of the system.

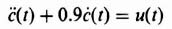

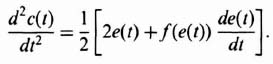

For the unsaturated region, the relationships depicting the system operation are

During saturation, Eqs. (5.100) and (5.102) are still valid. However, (5.101) changes to

Assuming zero initial conditions and a step input of 10V, the expression for the output during the saturated region of operation csat(t) is given by

The expression for the output during the unsaturated region is given by

Figure 5.36 Feedback control system containing saturation.

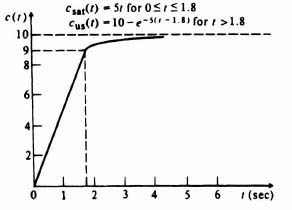

The time t1 is the time at which the amplifier becomes unsaturated. This occurs when c = 9, at which point e = 1, and t1 is 1.8 sec. Using conventional techniques, the solution to Eq. (5.106) is

The initial value for this region, cus(0), is the same as the final value of the saturated region, csat(1.8) = 9; this continuity of the output is imposed by the integrator. Therefore, the composite solution for this problem, obtained by a piecewise-linear analysis, is

The response of this system to a step input of 10V is sketched in Figure 5.37.
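The piecewise-linear solution can be checked numerically. The following sketch assumes a unity-gain integrator, 1/s, following the amplifier; this assumption is consistent with the stated values c = 9 at t1 = 1.8 sec, but it is not taken from the equations of the text. The closed-form expressions in `c_piecewise` are derived under that assumption, and all names are hypothetical.

```python
import math

GAIN = 5.0    # amplifier gain in the linear region (from the text)
E_SAT = 1.0   # amplifier saturates for |e| > 1 V (from the text)
R = 10.0      # amplitude of the step input, volts (from the text)

def amplifier(e):
    """Saturating amplifier: gain of 5 for |e| <= 1 V, output clamped beyond that."""
    return GAIN * max(-E_SAT, min(E_SAT, e))

def c_piecewise(t):
    """Composite output, assuming dc/dt equals the amplifier output and c(0) = 0."""
    t1 = (R - E_SAT) / (GAIN * E_SAT)       # time at which e falls to 1 V (1.8 sec)
    if t <= t1:
        return GAIN * E_SAT * t             # saturated region: constant slope
    return R - E_SAT * math.exp(-GAIN * (t - t1))   # unsaturated region

def c_euler(t_end, dt=1e-4):
    """Forward-Euler integration of the nonlinear loop, for comparison."""
    c = 0.0
    for _ in range(round(t_end / dt)):
        c += dt * amplifier(R - c)
    return c

if __name__ == "__main__":
    for t in (0.5, 1.8, 2.0, 3.0):
        print(t, c_piecewise(t), c_euler(t))
```

The two regions are joined by requiring the output to be continuous at t1, which is the boundary condition imposed by the integrator.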

The piecewise-linear approach illustrated in the preceding problem can be extended to very complex nonlinearities. It is important to emphasize that the boundary conditions between linear regions are continuous whenever the transfer function following the nonlinearity is a proper rational function. The resulting differential equation for each segmented region is linear and can be easily solved by conventional linear techniques.

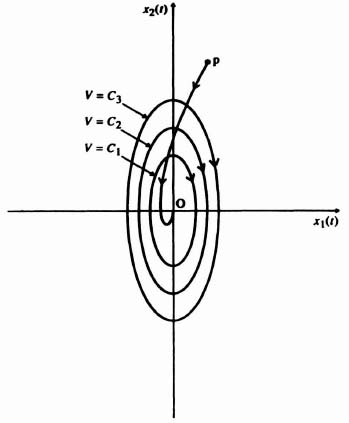

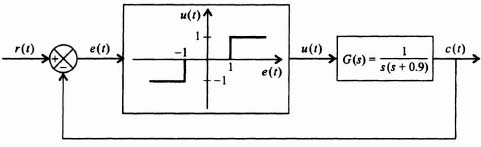

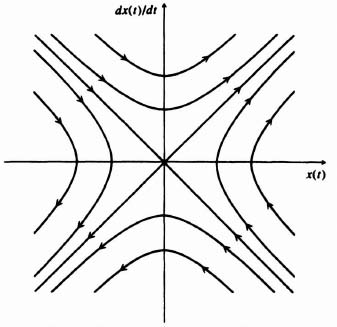

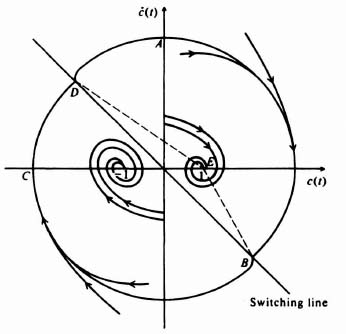

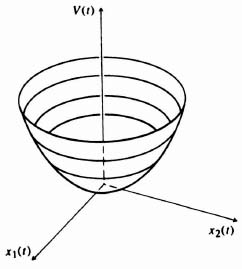

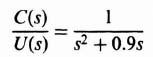

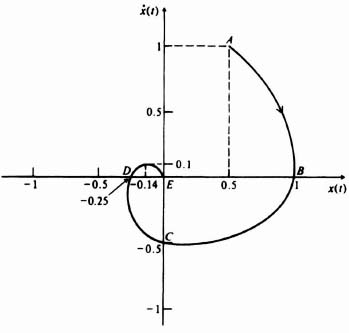

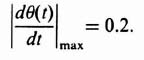

5.14. STATE-VARIABLE ANALYSIS: THE PHASE PLANE

A useful technique for applying the state-space approach to nonlinear systems is the phase-plane method [10, 11, 41]. It is a technique for analyzing the transient response of a nonlinear control system to an external input or for solving an initial-condition problem. The phase-plane method is limited to second-order systems. The velocity is plotted against the displacement on a graph known as the phase plane. A curve for a specific step input is known as a trajectory. A set of such curves for a specific system, obtained for several input values (or initial conditions) and plotted on the same phase plane, is called a phase portrait.

Figure 5.37 Step response of saturating system obtained from piecewise-linear analysis.

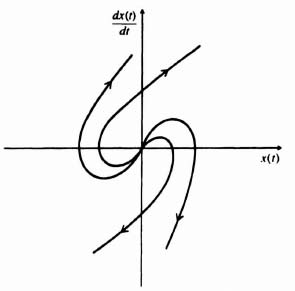

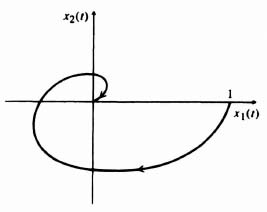

The starting point of a trajectory is the initial displacement, x(0), and velocity, (dx(0)/dt). The future path of the trajectory after it leaves its initial starting point represents the behavior of the control system for an input excitation. If the trajectory approaches infinity on the phase plane, the system is unstable. If the phase trajectory approaches the vicinity of the origin, however, the system is stable. If the trajectory circles the origin continuously in a closed curve after excitation, a sustained oscillation known as a limit cycle exists.

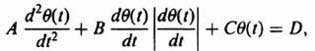

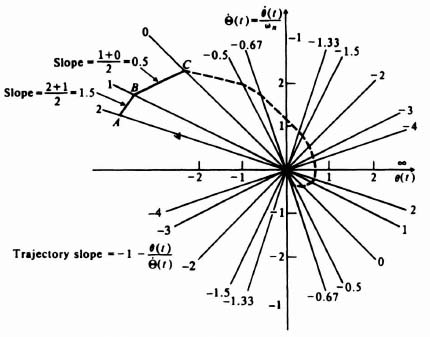

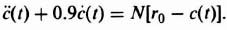

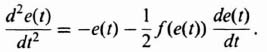

The phase-plane method is specifically concerned with the solution of second-order, nonlinear differential equations having the following general form:

For our initial analysis, we will assume that the forcing function, u(t), equals zero and that the initial conditions, x(0) and (dx(0)/dt), represent the input to the system. Equation (5.110) immediately emphasizes a serious limitation of the phase-plane method: it is useful only for analyzing second-order systems. It is interesting to compare this limitation with those of the describing-function analysis, where the response to sinusoidal inputs of systems having any order could be determined. The phase-plane method could be extended to higher-order systems, but doing so is impractical.*

The following section discusses the techniques which can be used for constructing the phase portrait from the differential equation of a system. Then we examine the properties and interpretation of the phase portrait. The procedure to be followed for applying the phase-plane approach to design problems, and a computer program for obtaining the phase plane are then presented.

5.15. CONSTRUCTION OF THE PHASE PORTRAIT

Four procedures that can be used to construct the phase portrait of a system are:

- direct solution of the differential equation;

- transformation of the second-order differential equation to a first-order equation;

- the method of isoclines;

- digital computer program.

The first two methods are analytical techniques; the third is graphical; the last is presented in Section 5.19. We shall describe these methods next, together with some illustrative examples.

A. Direct Solution of the Differential Equation

This is the most straightforward method for obtaining the phase portrait. It is usually the least useful method from a practical viewpoint, because we do not have to resort to the phase-plane representation for a solution if the differential equation is integrable. However, this method does provide an understanding of the situation.

The procedure is to solve the differential equation for the dependent variable x(t). The solution for x(t) is then differentiated in order to obtain the derivative of the dependent variable, dx(t)/dt. The independent variable, time, is then eliminated between the two resulting equations. A single equation that relates x(t) and dx(t)/dt results, and can be used to plot the phase portrait directly. However, the approach has the disadvantage that it requires the solution of a nonlinear, second-order, differential equation.

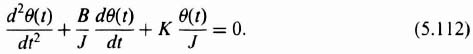

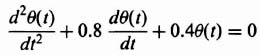

Let us illustrate the procedure in detail by first considering a simple linear system in which a torque T(t) is applied to a body having a moment of inertia J, a twisting shaft having a stiffness factor K, and a damper having a damping factor B. Applying Newton's second law of motion to this system results in the following relationship:

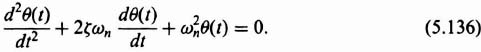

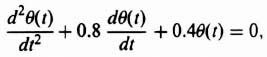

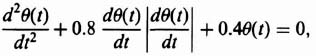

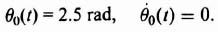

where θ(t) is the displacement. The differential equation is second order, and the phase-plane method is certainly applicable. Assuming that the system is unexcited, the resulting differential equation is given by

Equation (5.112) can be written in terms of damping ratio ζ and undamped natural frequency ωn, as follows.

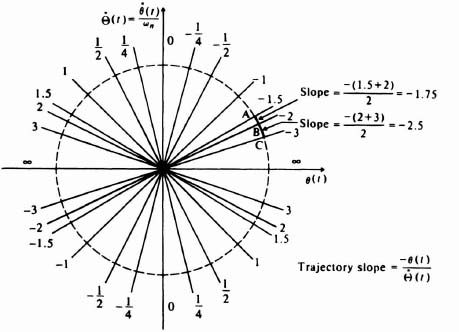

where

Before sketching the phase portrait, let us consider the simpler case of an undamped system where ζ = 0. For this situation, Eq. (5.113) reduces to

From elementary calculus, the solution to Eq. (5.114) is that of simple harmonic motion:

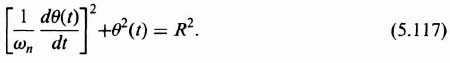

In order to obtain a relationship between θ(t) and dθ(t)/dt, we differentiate Eq. (5.115) and then eliminate time between the resulting equations, as follows:

Eliminating time between Eqs. (5.115) and (5.116), we obtain the expression

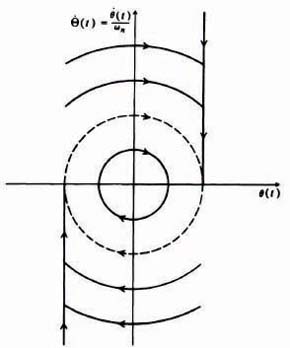

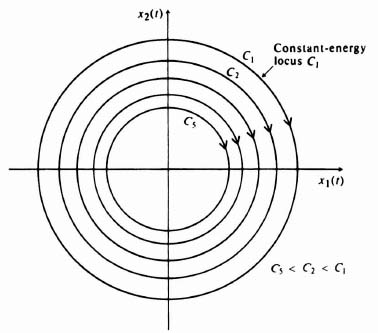

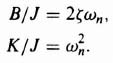

The phase portrait for this system can be drawn directly from Eq. (5.117). Observe that Eq. (5.117) describes a family of concentric circles in the (1/ωn)(dθ(t)/dt) versus θ(t) plane having a radius of R. Therefore, the phase portrait for this system (shown in Figure 5.38) is a family of concentric circles if a normalized ordinate axis is used. If the ordinate axis is not normalized, a family of ellipses results. Any set of initial conditions, such as points R1, R2, R3, and R4, specifies a particular circle. For t > 0, the motion is in the indicated direction. The origin is defined as a center, and is discussed further in Section 5.16.
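The elimination of time for the undamped case can be confirmed numerically. The sketch below assumes that the simple-harmonic solution has the form θ(t) = R cos(ωn t + φ); the exact form and phase convention of Eq. (5.115) may differ, and the function names are hypothetical.

```python
import math

def undamped_state(t, r, wn, phi=0.0):
    """Displacement and velocity for the assumed solution R*cos(wn*t + phi)."""
    theta = r * math.cos(wn * t + phi)
    theta_dot = -r * wn * math.sin(wn * t + phi)
    return theta, theta_dot

def normalized_radius(theta, theta_dot, wn):
    """Distance from the origin in the theta versus (1/wn)*dtheta/dt plane."""
    return math.hypot(theta, theta_dot / wn)

if __name__ == "__main__":
    r, wn = 2.0, 3.0
    for t in (0.0, 0.3, 1.7, 5.0):
        theta, theta_dot = undamped_state(t, r, wn, phi=0.4)
        # The normalized radius stays equal to R at every instant.
        print(t, normalized_radius(theta, theta_dot, wn))
```

Without the 1/ωn scaling of the ordinate, the same points lie on an ellipse whose semi-axes are R and ωn R.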

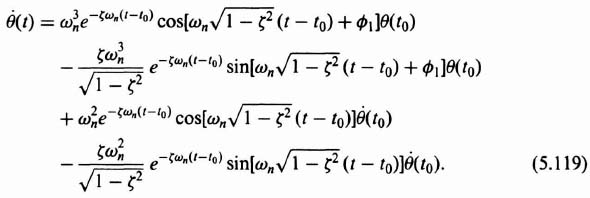

Let us next sketch the phase portrait for the case where the damping ratio of this system is finite. The general solution for Eq. (5.113), when it is excited by a step input and has a set of initial conditions, was derived in Chapter 4‡ [see Eq. (4.45)‡]. The form of the solution for this system when no step input is applied and only a set of initial conditions, θ(t0) and dθ(t0)/dt, is present is given by

Figure 5.38 Phase portrait for a second-order linear system having no damping. The origin is defined as a center.

Proceeding as in the previous example, the derivative of Eq. (5.118) is obtained:

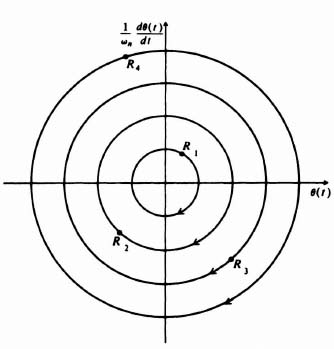

The complexity of Eqs. (5.118) and (5.119) makes it quite difficult to eliminate time between them. Therefore we use an alternative approach. Equations (5.118) and (5.119) will be evaluated for several values of time to obtain the corresponding coordinates in a normalized phase plane. The result is plotted in Figure 5.39 for a damping ratio of 0.7. Notice that the phase portrait is a collection of noncrossing paths describing system behavior for all possible initial conditions. Because all the trajectories approach the origin of this system where u(t) = 0, the system is stable, as we would expect it to be. Because the roots are complex for a damping ratio of 0.7, the trajectories spiral into the origin, which is defined as a stable focus and is discussed further in Section 5.16.

The projection of a specific trajectory onto the abscissa gives the variation of θ(t) with time, and its projection onto the ordinate gives the variation of dθ(t)/dt with time. Time appears in the portrait implicitly as a parameter along all phase trajectories. We discuss its computation later in Section 5.16.

Figure 5.39 Phase portrait of a second-order linear system having damping. The origin is defined as a stable focus.
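The evaluation of the solution and its derivative at several values of time, as used for Figure 5.39, can be sketched as follows. The closed-form expressions below are the standard underdamped free response written in terms of θ(0) and dθ(0)/dt; they are assumed here to be equivalent to Eqs. (5.118) and (5.119), whose exact form may differ. The function names are hypothetical.

```python
import math

def damped_state(t, theta0, theta_dot0, zeta, wn):
    """Free response of the unexcited second-order system for 0 <= zeta < 1."""
    if not 0.0 <= zeta < 1.0:
        raise ValueError("this sketch covers only the underdamped case")
    sigma = zeta * wn
    wd = wn * math.sqrt(1.0 - zeta * zeta)       # damped natural frequency
    decay = math.exp(-sigma * t)
    cos_t, sin_t = math.cos(wd * t), math.sin(wd * t)
    theta = decay * (theta0 * cos_t + (theta_dot0 + sigma * theta0) / wd * sin_t)
    theta_dot = decay * (theta_dot0 * cos_t
                         - (wn * wn * theta0 + sigma * theta_dot0) / wd * sin_t)
    return theta, theta_dot

def damped_trajectory(theta0, theta_dot0, zeta=0.7, wn=1.0, t_end=10.0, n=200):
    """Points (theta, theta_dot/wn) of one trajectory in the normalized phase plane."""
    points = []
    for i in range(n + 1):
        theta, theta_dot = damped_state(t_end * i / n, theta0, theta_dot0, zeta, wn)
        points.append((theta, theta_dot / wn))
    return points

if __name__ == "__main__":
    for point in damped_trajectory(1.0, 0.0)[::20]:
        print(point)
```

Repeating the call for several initial conditions and plotting the resulting point lists on common axes gives a phase portrait of the kind shown in Figure 5.39.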

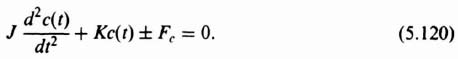

Let us next illustrate the application of this method to a relatively simple nonlinear differential equation. We consider the problem of nonlinear friction which was discussed previously in Section 5.8. We first determine the phase portrait for Coulomb friction and then extend it to the case of stiction. Consider a system containing Coulomb friction ±Fc, the moment of inertia of the system being J and the spring constant K. The differential equation of the motion of the system is given by

Defining

the normalized equation of motion is

The phase portrait for Eq. (5.124) in the α(t) versus dα(t)/dt plane is the same as that for the linear, second-order system considered previously (see Figure 5.38). However, in the dc(t)/dt versus c(t) plane, the phase portrait for Eq. (5.124) is quite different, and can be obtained by considering Eq. (5.124) in two parts: one for the upper half-plane, where dc(t)/dt > 0, and the other for the lower half-plane, where dc(t)/dt < 0. A little thought shows that the phase portrait in the upper half-plane is a family of semicircles, centered about c(t) = −γ, since c(t) is related to α(t) merely by a simple translation. In a similar manner, the phase portrait for the lower half-plane is a family of semicircles centered about c(t) = γ. Figure 5.40 illustrates the phase portrait.

It is interesting to observe from Figure 5.40 that as soon as the trajectory reaches the c(t) axis at a value within the interval given by

−γ ≤ c(t) ≤ γ

all motion stops. This gives rise to the possibility of large, steady-state errors. For example, if the initial conditions are at point 1, the trajectory will be 1–2–3–4 and the system will not have any steady-state error. If the initial conditions are at point 5, however, the trajectory followed will be 5–6–7–8 and the system will have a steady-state error equal to γ.
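Because each half of the phase plane contains semicircles about a fixed center, the successive points at which a trajectory meets the c(t) axis can be found by simple reflection about +γ or −γ. The sketch below assumes that the system starts from rest at c0 and that γ > 0; the function name `rest_points` and the numerical values are illustrative only and do not correspond to the numbered points of Figure 5.40.

```python
def rest_points(c0, gamma):
    """Successive crossings of the c(t) axis, starting from rest at c0.

    A semicircle centered at +gamma (lower half-plane) maps c to 2*gamma - c;
    one centered at -gamma (upper half-plane) maps c to -2*gamma - c.
    Motion stops once the crossing lies within -gamma <= c <= gamma.
    """
    if gamma <= 0:
        raise ValueError("gamma must be positive")
    points = [c0]
    c = c0
    while abs(c) > gamma:
        if c > 0:
            c = 2.0 * gamma - c     # spring pulls back: velocity negative
        else:
            c = -2.0 * gamma - c    # spring pushes forward: velocity positive
        points.append(c)
    return points

if __name__ == "__main__":
    print(rest_points(6.0, 1.0))    # [6.0, -4.0, 2.0, 0.0]: no steady-state error
    print(rest_points(5.0, 1.0))    # [5.0, -3.0, 1.0]: steady-state error of gamma
```

Each half-cycle reduces the amplitude by 2γ, so the final resting point depends only on the initial displacement and on γ.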

We have already seen that dither is useful for eliminating the steady-state error due to Coulomb friction. Its effect on the steady-state error can easily be understood by studying the phase plane. For example, let us assume that a finite steady-state error exists corresponding to point 9 on Figure 5.40. With dither, the effect of a negative disturbance, segment 9–10, results in the system returning to point 11 on the c(t) axis, while a positive disturbance, segment 9–12, results in the system returning to point 13 on the c(t) axis. Because the projection 11–9 is greater than the projection 9–13, the system will tend to move towards the origin.

Figure 5.40 Phase portrait for a system containing Coulomb friction.

Before leaving this system, let us consider the required modification to the phase portrait in Figure 5.40 for stiction. Because stiction occurs only for zero velocity and is greater than Coulomb friction, its effect is to extend the termination line from ±γ to ±γs. These extended terminations are illustrated in Figure 5.40.

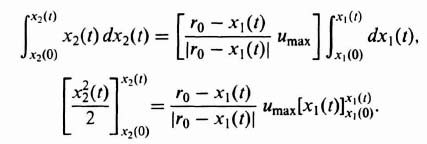

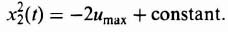

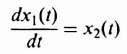

B. Transformation of the Second-Order Differential Equation to a First-Order Equation

If the differential equation of the system cannot be easily integrated, a new differential equation in terms of the phase variables may be formed, from which the phase portrait can be obtained directly. This method can best be illustrated by considering the linear, second-order, undamped system discussed previously, namely,

Using the dot notation, we have

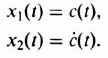

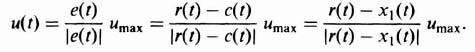

Defining the state variables as

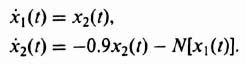

we have

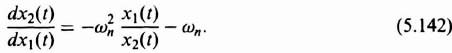

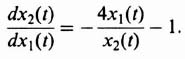

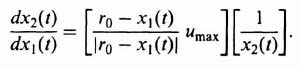

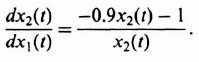

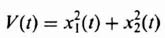

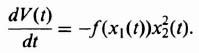

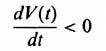

Dividing Eq. (5.131) by Eq. (5.130), the following is obtained:

Equation (5.132) can be rewritten as

![]()

Integrating, we obtain

![]()

where C is an arbitrary constant easily determined from the initial conditions. Solving for x2(t), we obtain

![]()

In terms of the phase variable, this equation can be written as

The result of sketching various phase trajectories from Eq. (5.133) will be the phase portrait illustrated in Figure 5.38, where a normalized ordinate axis is used. The initial conditions determine the particular trajectory followed.
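The steps of this derivation can be written out as follows. This is a reconstruction under stated assumptions: the state variables are taken to be x1 = θ and x2 = dθ/dt, the equation numbers are matched to the references in the surrounding prose, and the constant C is defined so as to absorb the factor of 2 produced by the integration, which may differ from the convention of the original equations.

```latex
\begin{align*}
\ddot{\theta}(t) + \omega_n^2\,\theta(t) &= 0 \\
x_1(t) = \theta(t), \qquad x_2(t) &= \dot{\theta}(t) \\
\dot{x}_1(t) &= x_2(t) && \text{(5.130)} \\
\dot{x}_2(t) &= -\omega_n^2\,x_1(t) && \text{(5.131)} \\
\frac{dx_2(t)}{dx_1(t)} &= -\omega_n^2\,\frac{x_1(t)}{x_2(t)} && \text{(5.132)} \\
x_2\,dx_2 &= -\omega_n^2\,x_1\,dx_1 \\
x_2^2(t) + \omega_n^2\,x_1^2(t) &= C \\
x_2(t) &= \pm\sqrt{C - \omega_n^2\,x_1^2(t)} \\
\dot{\theta}^2(t) + \omega_n^2\,\theta^2(t) &= C && \text{(5.133)}
\end{align*}
```

With C = ωn²R², dividing the last line by ωn² gives a circle of radius R in the normalized phase plane, in agreement with Eq. (5.117).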

This technique can be easily extended to the systems whose phase portraits are illustrated in Figures 5.39 and 5.40. This method is by far the most useful analytic technique for obtaining the phase portrait of a system.

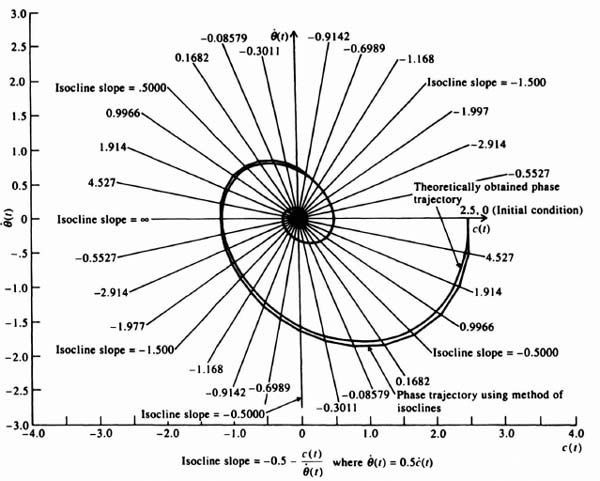

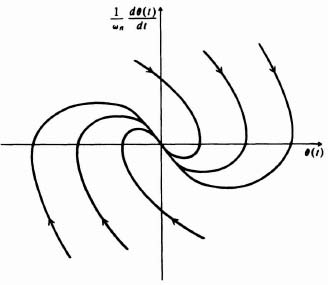

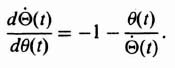

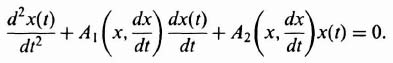

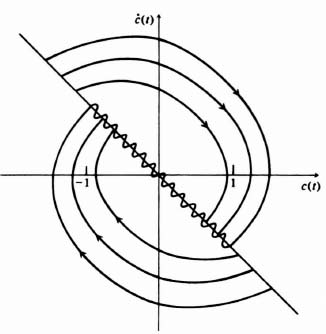

C. Method of Isoclines

The method of isoclines is a graphical procedure for determining the phase portrait. It can be used even if the differential equation cannot be solved analytically. In practice, it is a very powerful method to use.

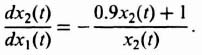

Isoclines are lines in the phase plane corresponding to constant slopes of the phase portrait. One starts with the differential equation in the form shown by Eq. (5.132). Here dx2(t)/dx1(t), or dθ̇(t)/dθ(t), corresponds to the slope of the trajectories that form the phase portrait. Numerical values are assigned for the slopes dθ̇(t)/dθ(t), and Eq. (5.132) is used to find the corresponding points in the phase plane having those slopes. Once a set of isoclines is drawn, a trajectory may be drawn by starting at some point on an isocline and then proceeding to the next isocline along a straight line whose slope is the average of the slopes corresponding to the two isoclines. Because the procedure is a numerical approximation, closer spacing of the isoclines increases the accuracy of the resulting trajectory.
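The graphical construction can be imitated numerically. The sketch below assumes that Eq. (5.132) has the undamped form dx2/dx1 = −ωn² x1/x2, so that every isocline is a straight line through the origin. The isoclines are specified here by their angle in the phase plane rather than by their slope value, purely for convenience, and the walk is confined to the first quadrant so that all slopes remain finite. The function names are hypothetical.

```python
import math

def trajectory_slope_on_isocline(phi, wn=1.0):
    """Trajectory slope dx2/dx1 on the isocline x2 = tan(phi)*x1 (assumed form)."""
    return -wn ** 2 * math.cos(phi) / math.sin(phi)

def isocline_trajectory(start=1.0, wn=1.0, step_deg=5.0, end_deg=15.0):
    """Step from the isocline at 90 degrees down to the one at end_deg."""
    phi = math.radians(90.0)
    x1, x2 = 0.0, start                     # starting point on the x2 axis
    points = [(x1, x2)]
    n_steps = round((90.0 - end_deg) / step_deg)
    for k in range(1, n_steps + 1):
        phi_next = math.radians(90.0 - k * step_deg)
        # Straight segment whose slope is the average of the two isocline slopes.
        s = 0.5 * (trajectory_slope_on_isocline(phi, wn)
                   + trajectory_slope_on_isocline(phi_next, wn))
        # Intersect that segment with the next isocline, x2 = tan(phi_next)*x1.
        t = math.tan(phi_next)
        x1_new = (x2 - s * x1) / (t - s)
        x1, x2 = x1_new, t * x1_new
        points.append((x1, x2))
        phi = phi_next
    return points

if __name__ == "__main__":
    for x1, x2 in isocline_trajectory():
        print(round(x1, 4), round(x2, 4), round(math.hypot(x1, x2), 4))
```

For ωn = 1 the constructed points should remain close to the unit circle; the small inward drift that accumulates is the approximation error of the method, and it shrinks as the isoclines are spaced more closely.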

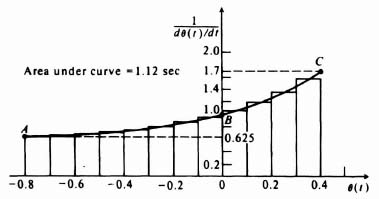

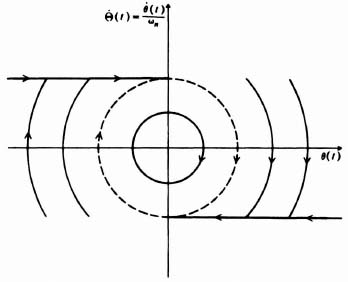

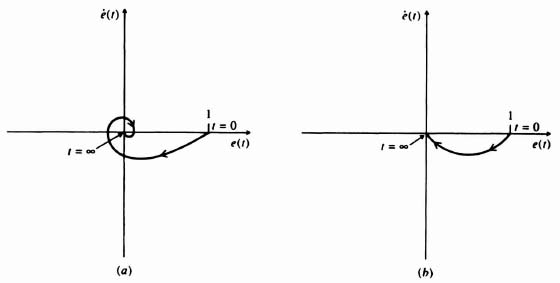

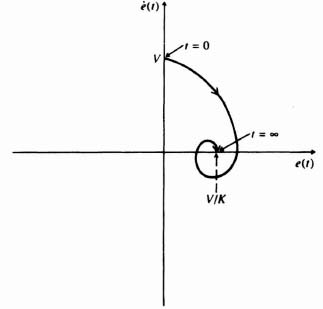

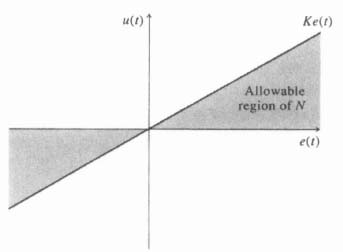

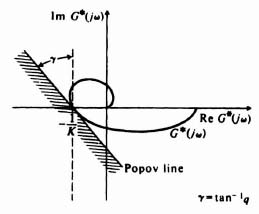

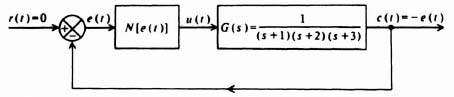

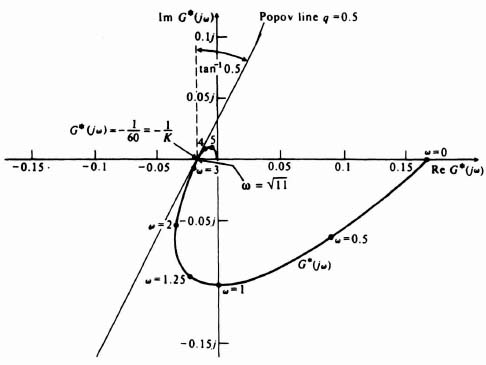

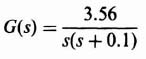
