Chapter 5. Automating deployment: CloudFormation, Elastic Beanstalk, and OpsWorks

- Running a script on server startup to deploy applications

- Deploying common web applications with the help of AWS Elastic Beanstalk

- Deploying multilayer applications with the help of AWS OpsWorks

- Comparing the different deployment services available on AWS

Whether you want to use software from in-house development, open source projects, or commercial vendors, you need to install, update, and configure the application and its dependencies. This process is called deployment. In this chapter, you’ll learn about three tools for deploying applications to virtual servers on AWS:

- Deploying a VPN solution with the help of AWS CloudFormation and a script started at the end of the boot process.

- Deploying a collaborative text editor with AWS Elastic Beanstalk. The text editor Etherpad is a simple web application and a perfect fit for AWS Elastic Beanstalk, because the Node.js platform is supported by default.

- Deploying an IRC web client and IRC server with AWS OpsWorks. The setup consists of two parts: a Node.js server that delivers the IRC web client and the IRC server itself. The example consists of multiple layers and is perfect for AWS OpsWorks.

We’ve chosen examples that don’t need a storage solution for this chapter, but all three deployment solutions would support delivering an application together with a storage solution. You’ll find examples using storage in the next part of the book.

The examples in this chapter are completely covered by the Free Tier. As long as you don’t run the examples for longer than a few days, you won’t pay anything. Keep in mind that this only applies if you created a fresh AWS account for this book and nothing else is going on in your AWS account. Try to complete the examples of the chapter within a few days; you’ll clean up your account at the end of each example.

Which steps are required to deploy a typical web application like WordPress—a widely used blogging platform—to a server?

1. Install an Apache HTTP server, a MySQL database, a PHP runtime environment, a MySQL library for PHP, and an SMTP mail server.

2. Download the WordPress application and unpack the archive on your server.

3. Configure the Apache web server to serve the PHP application.

4. Configure the PHP runtime environment to tweak performance and increase security.

5. Edit the wp-config.php file to configure the WordPress application.

6. Edit the configuration of the SMTP server, and make sure mail can only be sent from the virtual server to avoid misuse from spammers.

7. Start the MySQL, SMTP, and HTTP services.

Steps 1–2 handle installing and updating the executables. These executables are configured in steps 3–6. Step 7 starts the services.

System administrators often perform these steps manually by following how-tos. Deploying applications manually is no longer recommended in a flexible cloud environment. Instead your goal will be to automate these steps with the help of the tools you’ll discover next.

5.1. Deploying applications in a flexible cloud environment

If you want to use cloud advantages like scaling the number of servers depending on the current load or building a highly available infrastructure, you’ll need to start new virtual servers several times a day. On top of that, the number of virtual servers you’ll have to supply with updates will grow. The steps required to deploy an application don’t change, but as figure 5.1 shows, you need to perform them on multiple servers. Deploying software manually to a growing number of servers becomes impossible over time and has a high risk of human failure. This is why we recommend that you automate the deployment of applications.

Figure 5.1. Deployment must be automated in a flexible and scalable cloud environment.

The investment in an automated deployment process will pay off in the future by increasing efficiency and decreasing human failures.

5.2. Running a script on server startup using CloudFormation

A simple but powerful and flexible way of automating application deployment is to run a script on server startup. To go from a plain OS to a fully installed and configured server, you need to follow these three steps:

1. Start a plain virtual server containing just an OS.

2. Execute a script at the end of the boot process.

3. Install and configure your applications with the help of a script.

First you need to choose an AMI from which to start your virtual server. An AMI bundles the OS and preinstalled software for your virtual server. When you’re starting your server from an AMI containing a plain OS without any additional software installed, you need to provision the virtual server at the end of the boot process. Translating the necessary steps to install and configure your application into a script allows you to automate this task. But how do you execute this script automatically after booting your virtual server?

5.2.1. Using user data to run a script on server startup

You can inject a small amount—not more than 16 KB—of data called user data into every virtual server. You specify the user data during the creation of a new virtual server. A typical way of using the user data feature is built into most AMIs, such as the Amazon Linux Image and the Ubuntu AMI. Whenever you boot a virtual server based on these AMIs, user data is executed as a shell script at the end of the boot process. The script is executed as user root.

The user data is always accessible from the virtual server with a HTTP GET request to http://169.254.169.254/latest/user-data. The user data behind this URL is only accessible from the virtual server itself. As you’ll see in the following example, you can deploy applications of any kind with the help of user data executed as a script.

5.2.2. Deploying OpenSwan as a VPN server to a virtual server

If you’re working with a laptop from a coffee house over Wi-Fi, you may want to tunnel your traffic to the internet through a VPN. You’ll learn how to deploy a VPN server to a virtual server with the help of user data and a shell script. The VPN solution, called OpenSwan, offers an IPSec-based tunnel that’s easy to use with Windows, OS X, and Linux. Figure 5.2 shows the example setup.

Figure 5.2. Using OpenSwan on a virtual server to tunnel traffic from a personal computer

Open your command line and execute the commands shown in the next listing step by step to start a virtual server and deploy a VPN server on it. We’ve prepared a CloudFormation template that starts the virtual server and its dependencies.

Listing 5.1. Deploying a VPN server to a virtual server: CloudFormation and a shell script

You can avoid typing these commands manually at your command line by using the following command to download a bash script and execute it directly on your local machine. The bash script contains the same steps as shown in listing 5.1:

$ curl -s https://raw.githubusercontent.com/AWSinAction/ code/master/chapter5/ vpn-create-cloudformation-stack.sh | bash -ex

The output of the last command should print out the public IP address of the VPN server, a shared secret, the VPN username, and the VPN password. You can use this information to establish a VPN connection from your computer, if you like:

[...]

[

{

"Description": "Public IP address of the vpn server",

"OutputKey": "ServerIP",

"OutputValue": "52.4.68.225"

},

{

"Description": "The shared key for the VPN connection (IPSec)",

"OutputKey": "IPSecSharedSecret",

"OutputValue": "sqmvJll/13bD6YqpmsKkPSMs9RrPL8itpr7m5V8g"

},

{

"Description": "The username for the vpn connection",

"OutputKey": "VPNUser",

"OutputValue": "vpn"

},

{

"Description": "The password for the vpn connection",

"OutputKey": "VPNPassword",

"OutputValue": "aZQVFufFlUjJkesUfDmMj6DcHrWjuKShyFB/d0lE"

}

]

Let’s take a deeper look at the deployment process of the VPN server. You’ll dive into the following tasks, which you’ve used unnoticed so far:

- Starting a virtual server with custom user data and configuring a firewall for the virtual server with AWS CloudFormation

- Executing a shell script at the end of the boot process to install an application and its dependencies with the help of a package manager, and to edit configuration files

Using CloudFormation to start a virtual server with user data

You can use CloudFormation to start a virtual server and configure a firewall. The template for the VPN server includes a shell script packed into user data, as shown in listing 5.2.

The CloudFormation template includes two new functions: Fn::Join and Fn::Base64. With Fn::Join, you can join a set of values to make a single value with a specified delimiter:

{"Fn::Join": ["delimiter", ["value1", "value2", "value3"]]}

The function Fn::Base64 encodes the input with Base64. You’ll need this function because the user data must be encoded in Base64:

{"Fn::Base64": "value"}

Listing 5.2. Parts of a CloudFormation template to start a virtual server with user data

Basically, the user data contains a small script to fetch and execute the real script, vpn-setup.sh, which contains all the commands for installing the executables and configuring the services. Doing so frees you from inserting scripts in the unreadable format needed for the JSON CloudFormation template.

Installing and configuring a VPN server with a script

The vpn-setup.sh script shown in listing 5.3 installs packages with the help of the package manager yum and writes some configuration files. You don’t have to understand the details of the configuration of the VPN server; you just need to know that this shell script is executed during the boot process to install and configure a VPN server.

Listing 5.3. Installing packages and writing configuration files on server startup

That’s it. You’ve learned how to deploy a VPN server to a virtual server with the help of EC2 user data and a shell script. After you terminate your virtual server, you’ll be ready to learn how to deploy a common web application without writing a custom script.

You’ve reached the end of the VPN server example. Don’t forget to terminate your virtual server and clean up your environment. To do so, enter aws cloudformation delete-stack --stack-name vpn at your terminal.

5.2.3. Starting from scratch instead of updating

You’ve learned how to deploy an application with the help of user data in this section. The script from the user data is executed at the end of the boot process. But how do you update your application with this approach?

You’ve automated the installation and configuration of software during the boot process of your virtual server, so you can start a new virtual server without any extra effort. If you have to update your application or its dependencies, you can do so with the following steps:

1. Make sure the up-to-date version of your application or software is available through the package repository of your OS, or edit the user data script.

2. Start a new virtual server based on your CloudFormation template and user data script.

3. Test the application deployed to the new virtual server. Proceed with the next step if everything works as it should.

4. Switch your workload to the new virtual server (for example, by updating a DNS record).

5. Terminate the old virtual server, and throw away its unused dependencies.

5.3. Deploying a simple web application with Elastic Beanstalk

It isn’t necessary to reinvent the wheel if you have to deploy a common web application. AWS offers a service that can help you to deploy web applications based on PHP, Java, .NET, Ruby, Node.js, Python, Go, and Docker; it’s called AWS Elastic Beanstalk. With Elastic Beanstalk, you don’t have to worry about your OS or virtual servers because it adds another layer of abstraction on top of them.

Elastic Beanstalk lets you handle the following recurring problems:

- Providing a runtime environment for a web application (PHP, Java, and so on)

- Installing and updating a web application automatically

- Configuring a web application and its environment

- Scaling a web application to balance load

- Monitoring and debugging a web application

5.3.1. Components of Elastic Beanstalk

Getting to know the different components of Elastic Beanstalk will help you to understand its functionality. Figure 5.3 shows these elements:

Figure 5.3. An Elastic Beanstalk application consists of versions, configurations, and environments.

- An application is a logical container. It contains versions, environments, and configurations. If you start to use Elastic Beanstalk in a region, you have to create an application first.

- A version contains a specific version of your application. To create a new version, you have to upload your executables (packed into an archive) to the service Amazon S3, which stores static files. A version is basically a pointer to this archive of executables.

- A configuration template contains your default configuration. You can manage your application’s configuration (such as the port your application listens on) as well as the environment’s configuration (such as the size of the virtual server) with your custom configuration template.

- An environment is where Elastic Beanstalk executes your application. It consists of a version and the configuration. You can run multiple environments for one application using the versions and configurations multiple times.

Enough theory for the moment. Let’s proceed with deploying a simple web application.

5.3.2. Using Elastic Beanstalk to deploy Etherpad, a Node.js application

Editing a document collaboratively can be painful with the wrong tools. Etherpad is an open source online editor that lets you edit a document with many people in real time. You’ll deploy this Node.js-based application with the help of Elastic Beanstalk in three steps:

1. Create an application: the logical container.

2. Create a version: a pointer to a specific version of Etherpad.

3. Create an environment: the place where Etherpad will run.

Creating an application for AWS elastic beanstalk

Open your command line and execute the following command to create an application for the Elastic Beanstalk service:

$ aws elasticbeanstalk create-application --application-name etherpad

You’ve created a container for all the other components that are necessary to deploy Etherpad with the help of AWS Elastic Beanstalk.

Creating a version for AWS elastic beanstalk

You can create a new version of your Etherpad application with the following command:

$ aws elasticbeanstalk create-application-version --application-name etherpad --version-label 1.5.2 --source-bundle S3Bucket=awsinaction,S3Key=chapter5/etherpad.zip

For this example, we uploaded a zip archive containing version 1.5.2 of Etherpad. If you want to deploy another application, you can upload your own application to the AWS S3 service for static files.

Creating an environment to execute etherpad with elastic beanstalk

To deploy Etherpad with the help of Elastic Beanstalk, you have to create an environment for Node.js based on Amazon Linux and the version of Etherpad you just created. To get the latest Node.js environment version, called a solution stack name, run this command:

$ aws elasticbeanstalk list-available-solution-stacks --output text --query "SolutionStacks[?contains(@, 'running Node.js')] | [0]" 64bit Amazon Linux 2015.03 v1.4.6 running Node.js

The option EnvironmentType = SingleInstance launches a single virtual server without the ability to scale and load-balance automatically. Replace $SolutionStackName with the output from the previous command:

$ aws elasticbeanstalk create-environment --environment-name etherpad --application-name etherpad --option-settings Namespace=aws:elasticbeanstalk:environment, OptionName=EnvironmentType,Value=SingleInstance --solution-stack-name "$SolutionStackName" --version-label 1.5.2

Having fun with Etherpad

You’ve created an environment for Etherpad. It will take several minutes before you can point your browser to your Etherpad installation. The following command helps you track the state of your Etherpad environment:

$ aws elasticbeanstalk describe-environments --environment-names etherpad

If Status turns to Ready and Health turns to Green, you’re ready to create your first Etherpad document. The output of the describe command should look similar to the following example.

Listing 5.4. Describing the status of the Elastic Beanstalk environment

You’ve deployed a Node.js web application to AWS with three commands. Point your browser to the URL shown in CNAME and open a new document by typing in a name for it and clicking the OK button. Figure 5.4 shows an Etherpad document in action.

Figure 5.4. Online text editor Etherpad in action

Exploring elastic beanstalk with the management console

You’ve deployed Etherpad with the help of Elastic Beanstalk and the AWS command-line interface (CLI) by creating an application, a version, and an environment. You can also control the Elastic Beanstalk service with the help of the Management Console, a web-based user interface:

1. Open the AWS Management Console at https://console.aws.amazon.com.

2. Click Services in the navigation bar, and click the Elastic Beanstalk service.

3. Click the etherpad environment, represented by a green box. An overview of the Etherpad application is shown, as in figure 5.5.

Figure 5.5. Overview of AWS Elastic Beanstalk environment running Etherpad

You can also fetch the log messages from your application with the help of Elastic Beanstalk. Download the latest log messages with the following steps:

1. Choose Logs from the submenu. You’ll see a screen like that shown in figure 5.6.

Figure 5.6. Downloading logs from a Node.js application via AWS Elastic Beanstalk

2. Click Request Logs, and choose Last 100 Lines.

3. After a few seconds, a new entry will appear in the table. Click Download to download the log file to your computer.

Now that you’ve successfully deployed Etherpad with the help of AWS Elastic Beanstalk and learned about the service’s different components, it’s time to clean up. Run the following command to terminate the Etherpad environment:

$ aws elasticbeanstalk terminate-environment --environment-name etherpad

You can check the state of the environment by executing the following command:

$ aws elasticbeanstalk describe-environments --environment-names etherpad

Wait until Status has changed to Terminated, and then proceed with the following command:

$ aws elasticbeanstalk delete-application --application-name etherpad

That’s it. You’ve terminated the virtual server providing the environment for Etherpad and deleted all components of Elastic Beanstalk.

5.4. Deploying a multilayer application with OpsWorks

Deploying a basic web application with the help of Elastic Beanstalk is convenient. But if you have to deploy a more complex application consisting of different services—also called layers—you’ll reach the limits of Elastic Beanstalk. In this section, you’ll learn about AWS OpsWorks, a free service offered by AWS that can help you to deploy a multilayer application.

OpsWorks helps you control AWS resources like virtual servers, load balancers, and databases and lets you deploy applications. The service offers some standard layers with the following runtimes:

|

|

|

You can also add a custom layer to deploy anything you want. The deployment is controlled with the help of Chef, a configuration-management tool. Chef uses recipes organized in cookbooks to deploy applications to any kind of system. You can adopt the standard recipes or create your own.

Chef is a configuration-management tool like Puppet, SaltStack, and Ansible. Chef transforms templates (recipes) written in a domain-specific language (DSL) into actions, to configure and deploy applications. A recipe can include packages to install, services to run, or configuration files to write, for example. Related recipes can be combined into cookbooks. Chef analyzes the status quo and changes resources where necessary to reach the described state from the recipe.

You can reuse cookbooks and recipes from others with the help of Chef. The community publishes a variety of cookbooks and recipes at https://supermarket.chef.io under open source licenses.

Chef can be run in solo or client/server mode. It acts as a fleet-management tool in client/server mode. This can help if you have to manage a distributed system consisting of many virtual servers. In solo mode, you can execute recipes on a single virtual server. AWS OpsWorks uses solo mode integrated in its own fleet management without needing to configure and operate a setup in client/server mode.

In addition to letting you deploy applications, OpsWorks helps you to scale, monitor and update your virtual servers running beneath the different layers.

5.4.1. Components of OpsWorks

Getting to know the different components of OpsWorks will help you understand its functionality. Figure 5.7 shows these elements:

Figure 5.7. Stacks, layers, instances, and apps are the main components of OpsWorks.

- A stack is a container for all other components of OpsWorks. You can create one or more stacks and add one or more layers to each stack. You could use different stacks to separate the production environment from the testing environment, for example. Or you could use different stacks to separate different applications.

- A layer belongs to a stack. A layer represents an application; you can also call it a service. OpsWorks offers predefined layers for standard web applications like PHP and Java, but you’re free to use a custom stack for any application you can think of. A layer is responsible for configuring and deploying software to virtual servers. You can add one or multiple virtual servers to a layer. The virtual servers are called instances in this context.

- An instance is the representation for a virtual server. You can launch one or multiple instances for each layer. You can use different versions of Amazon Linux and Ubuntu or a custom AMI as a basis for the instances, and you can specify rules for launching and terminating instances based on load or timeframes for scaling.

- An app is the software you want to deploy. OpsWorks deploys your app to a suitable layer automatically. You can fetch apps from a Git or Subversion repository or as archives via HTTP. OpsWorks helps you to install and update your apps onto one or multiple instances.

Let’s look at how to deploy a multilayer application with the help of OpsWorks.

5.4.2. Using OpsWorks to deploy an IRC chat application

Internet Relay Chat (IRC) is still a popular means of communication. In this section, you’ll deploy kiwiIRC, a web-based IRC client, and your own IRC server. Figure 5.8 shows the setup of a distributed system consisting of a web application delivering the IRC client and an IRC server.

Figure 5.8. Building your own IRC infrastructure consisting of a web application and an IRC server

kiwiIRC is an open source web application written in JavaScript for Node.js. The following steps are necessary to deploy a two-layer application with the help of OpsWorks:

1. Create a stack, the container for all other components.

2. Create a Node.js layer for kiwiIRC.

3. Create a custom layer for the IRC server.

4. Create an app to deploy kiwiIRC to the Node.js layer.

5. Add an instance for each layer.

You’ll learn how to handle these steps with the Management Console. You can also control OpsWorks from the command line, as you did Elastic Beanstalk, or with CloudFormation.

Creating a new OpsWorks stack

Open the Management Console at https://console.aws.amazon.com/opsworks, and create a new stack. Figure 5.9 illustrates the necessary steps:

Figure 5.9. Creating a new stack with OpsWorks

1. Click Add Stack under Select Stack or Add Your First Stack.

2. For Name, type in irc.

3. For Region, choose US East (N. Virginia).

4. The default VPC is the only one available. Select it.

5. For Default Subnet, select us-east-1a.

6. For Default Operating System, choose Ubuntu 14.04 LTS.

7. For Default Root Device Type, select EBS Backed.

8. For IAM Role, choose New IAM Role. Doing so automatically creates the necessary dependency.

9. Select your SSH key, mykey, for Default SSH Key.

10. For Default IAM Instance Profile, choose New IAM Instance Profile. Doing so automatically creates the necessary dependency.

11. For Hostname Theme, choose Layer Dependent. Your virtual servers will be named depending on their layer.

12. Click Add Stack to create the stack.

You’re redirected to an overview of your irc stack. Everything is ready for you to create the first layer.

Creating a Node.js layer for an OpsWorks stack

kiwiIRC is a Node.js application, so you need to create a Node.js layer for the irc stack. Follow these steps to do so:

1. Select Layers from the submenu.

2. Click the Add Layer button.

3. For Layer Type, select Node.js App Server, as shown in figure 5.10.

Figure 5.10. Creating a layer with Node.js for kiwiIRC

4. Select the latest 0.10.x version of Node.js.

5. Click Add Layer.

You’ve created a Node.js layer. Now you need to repeat these steps to add another layer and deploy your own IRC server.

Creating a custom layer for an OpsWorks stack

An IRC server isn’t a typical web application, so the default layer types are out of the question. You’ll use a custom layer to deploy an IRC server. The Ubuntu package repository includes various IRC server implementations; you’ll use the ircd-ircu package. Follow these steps to create a custom stack for the IRC server:

1. Select Layers from the submenu.

2. Click Add Layer.

3. For Layer Type, select Custom, as shown in figure 5.11.

Figure 5.11. Creating a custom layer to deploy an IRC server

4. For Name and for Short Name, type in irc-server.

5. Click Add Layer.

You’ve created a custom layer.

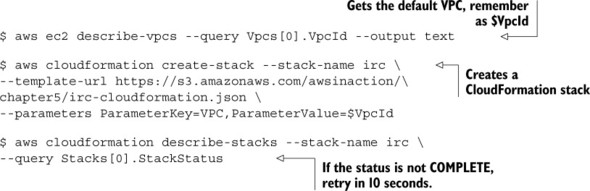

The IRC server needs to be reachable through port 6667. To allow access to this port, you need to define a custom firewall. Execute the commands shown in listing 5.5 to create a custom firewall for your IRC server.

You can avoid typing these commands manually to your command line by using the following command to download a bash script and execute it directly on your local machine. The bash script contains the same steps as shown in listing 5.5:

$ curl -s https://raw.githubusercontent.com/AWSinAction/ code/master/chapter5/irc-create-cloudformation-stack.sh | bash -ex

Listing 5.5. Creating a custom firewall with the help of CloudFormation

Next you need to attach this custom firewall configuration to the custom OpsWorks layer. Follow these steps:

1. Select Layers from the submenu.

2. Open the irc-server layer by clicking it.

3. Change to the Security tab and click Edit.

4. For Custom Security Groups, select the security group that starts with irc, as shown in figure 5.12.

Figure 5.12. Adding a custom firewall configuration to the IRC server layer

5. Click Save.

You need to configure one last thing for the IRC server layer: the layer recipes to deploy an IRC server. Follow these steps to do so:

1. Select Layers from the submenu.

2. Open the irc-server layer by clicking it.

3. Change to the Recipes tab and click Edit.

4. For OS Packages, add the package ircd-ircu, as shown in figure 5.13.

Figure 5.13. Adding an IRC package to a custom layer

5. Click the + button and then the Save button.

You’ve successfully created and configured a custom layer to deploy the IRC server. Next you’ll add the kiwiIRC web application as an app to OpsWorks.

Adding an app to the Node.js layer

OpsWorks can deploy apps to a default layer. You’ve already created a Node.js layer. With the following steps, you’ll add an app to this layer:

1. Select Apps from the submenu.

2. Click the Add an App button.

3. For Name, type in kiwiIRC.

4. For Type, select Node.js.

5. For Repository Type, select Git, and type in https://github.com/AWSinAction/KiwiIRC.git for Repository URL, as shown in figure 5.14.

Figure 5.14. Adding kiwiIRC, a Node.js app, to OpsWorks

6. Click the Add App button.

Your first OpsWorks stack is now fully configured. Only one thing is missing: you need to start some instances.

Adding instances to run the IRC client and server

Adding two instances will bring the kiwiIRC client and the IRC server into being. Adding a new instance to a layer is easy—follow these steps:

1. Select Instances from the submenu.

2. Click the Add an Instance button on the Node.js App Server layer.

3. For Size, select t2.micro, the smallest and cheapest virtual server, as shown in figure 5.15.

Figure 5.15. Adding a new instance to the Node.js layer

4. Click Add Instance.

You’ve added an instance to the Node.js App Server layer. Repeat these steps for the irc-server layer as well.

The overview of instances should be similar to figure 5.16. To start the instances, click Start for both.

Figure 5.16. Starting the instances for the IRC web client and server

It will take some time for the virtual servers to boot and the deployment to run. It’s a good time to get some coffee or tea.

Having fun with kiwiIRC

Be patient until the status of both instances changes to Online, as shown in figure 5.17. You can now open kiwiIRC in your browser by following these steps:

Figure 5.17. Waiting for deployment to open kiwiIRC in the browser

1. Keep in mind the public IP address of the instance irc-server1. You’ll need it to connect to your IRC server later.

2. Click the public IP address of the nodejs-app1 instance to open the kiwiIRC web application in a new tab of your browser.

The kiwiIRC application should load in your browser, and you should see a login screen like the one shown in figure 5.18. Follow these steps to log in to your IRC server with the kiwiIRC web client:

Figure 5.18. Using kiwiIRC to log in to your IRC server on channel #awsinaction

1. Type in a nickname.

2. For Channel, type in #awsinaction.

3. Open the details of the connection by clicking Server and Network.

4. Type the IP address of irc-server1 into the Server field.

5. For Port, type in 6667.

6. Disable SSL.

7. Click Start, and wait a few seconds.

Congratulations! You’ve deployed a web-based IRC client and an IRC server with the help of AWS OpsWorks.

It’s time to clean up. Follow these steps to avoid unintentional costs:

1. Open the OpsWorks service with the Management Console.

2. Select the irc stack by clicking it.

3. Select Instances from the submenu.

4. Stop both instances and wait until Status is Stopped for both.

5. Delete both instances, and wait until they disappear from the overview.

6. Select Apps from the submenu.

7. Delete the kiwiIRC app.

8. Select Stack from the submenu.

9. Click the Delete Stack button, and confirm the deletion.

10. Execute aws cloudformation delete-stack --stack-name irc from your terminal.

5.5. Comparing deployment tools

You have deployed applications in three ways in this chapter:

- Using AWS CloudFormation to run a script on server startup

- Using AWS Elastic Beanstalk to deploy a common web application

- Using AWS OpsWorks to deploy a multilayer application

In this section, we’ll discuss the differences between these solutions.

5.5.1. Classifying the deployment tools

Figure 5.19 classifies the three AWS deployment options. The effort required to deploy an application with the help of AWS Elastic Beanstalk is low. To benefit from this, your application has to fit into the conventions of Elastic Beanstalk. For example, the application must run in one of the standardized runtime environments. If you’re using OpsWorks, you’ll have more freedom to adapt the service to the needs of your application. For example, you can deploy different layers that depend on each other, or you can use a custom layer to deploy any application with the help of a Chef recipe; this takes extra effort but gives you additional freedom. On the other end of the spectrum, you’ll find CloudFormation and deploying applications with the help of a script running at the end of the boot process. You can deploy any application with the help of CloudFormation. The disadvantage of this approach is that you have to do more work because you don’t use standard tooling.

Figure 5.19. Comparing different ways to deploy applications on AWS

5.5.2. Comparing the deployment services

The previous classification can help you decide the best fit to deploy an application. The comparison in table 5.1 highlights other important considerations.

Table 5.1. Differences between using CloudFormation with a script on server startup, Elastic Beanstalk, and OpsWorks

|

CloudFormation with a script on server startup |

Elastic Beanstalk |

OpsWorks |

|

|---|---|---|---|

| Configuration-management tool | All available tools | Proprietary | Chef |

| Supported platforms | Any |

|

|

| Supported deployment artifacts | Any | Zip archive on Amazon S3 | Git, SVN, archive (such as Zip) |

| Common use case | Complex and nonstandard environments | Common web application | Micro-services environment |

| Update without downtime | Possible | Yes | Yes |

| Vendor lock-in effect | Medium | High | Medium |

Many other options are available for deploying applications on AWS, from open source software to third-party services. Our advice is to use one of the AWS deployment services because they’re well integrated into many other AWS services. We recommend that you use CloudFormation with user data to deploy applications because it’s a flexible approach. It is also possible to manage Elastic Beanstalk and Ops Works with the help of CloudFormation.

An automated deployment process will help you to iterate and innovate more quickly. You’ll deploy new versions of your applications more often. To avoid service interruptions, you need to think about testing changes to software and infrastructure in an automated way and being able to roll back to a previous version quickly if necessary.

5.6. Summary

- It isn’t advisable to deploy applications to virtual servers manually because virtual servers pop up more often in a dynamic cloud environment.

- AWS offers different tools that can help you deploy applications onto virtual servers. Using one of these tools prevents you from reinventing the wheel.

- You can throw away a server to update an application if you’ve automated your deployment process.

- Injecting Bash or PowerShell scripts into a virtual server during startup allows you to initialize servers individually—for example, for installing software or configuring services.

- OpsWorks is good for deploying multilayer applications with the help of Chef.

- Elastic Beanstalk is best suited for deploying common web applications.

- CloudFormation gives you the most control when you’re deploying more complex applications.