HOUR 2

The Benefits of Networking

What You’ll Learn in This Hour:

• Computing before the advent of computer networks

• The first computer networks

• How packet-switching transformed data networking

• The downsides of not networking computers

• The advantages of using computer networks

It is interesting to speculate how life would be if we humans did not have what Alexis de Tocqueville described as a natural propensity to organize things at the drop of a hat. But we do organize. This trait helps make us the dominant large-body species on earth. What’s more, our comfortable lot in life depends on our highly organized networks, from the postal service to the world’s electronic funds transfer system. Without networks, many of the luxuries we take for granted in our daily lives could not exist. In this hour, you will learn more about the extraordinary benefits of computer networking.

Computing Before Computer Networks

Assume that you have a time machine and can go back 40–50 years to examine the computers that existed during those years. Chances are you wouldn’t recognize much about them. The computers that businesses and governments used were huge water-cooled behemoths the size of rooms. In spite of their bulk, they weren’t powerful by today’s measures; they could process only small programs, and they usually lacked sufficient memory (the physical part of the computer where the computer stores the 1s and 0s of software and data) to hold a whole program at one time. That’s why these older machines are often pictured with huge reels of magnetic tape, which held the data the computer wasn’t using at that moment. This model of computing is antiquated, but only 40–50 years ago, it was state of the art.

In those days, computers offered little interaction between the user and the system. Interactive video display screens and keyboards were for the future. Instead of sitting at a terminal or PC typing characters and using a mouse, users submitted the work they needed the computer to do to a computer operator, who was the only person allowed to directly interact with the computer. Usually, the work was submitted on punched paper tape or punched cards.

A great deal of the time, computers were kept in climate-controlled rooms with glass walls—hence the slang name “glass house” for a data center. Users submitted their jobs on punch cards that were executed (run) in batches on the computer—one or two batches per shift—from which we derive the term batch processing. Batch processing was common in early environments in which many tasks were scheduled to run at a specific time late in the evening. The user never directly interacted with a batch-processing computer. Debugging (correcting) programs was much more difficult because a programmer had to wait for the machine to print the results of the program’s “run,” debug the code, and then resubmit the job for another overnight run.

Computers at that time couldn’t interact with each other. An IBM computer simply couldn’t “talk” to a Honeywell or Burroughs computer. Even if they had been able to connect, they could not have shared data—the computers used different data formats; the only standard at that time was ASCII. ASCII is the American Standard Code for Information Interchange, a way computers format 1s and 0s (binary code) into the alphabet, numerals, and other characters that humans can understand. The computers would have had to convert the data before they could use it, which, in those prestandard days, could have taken as long as reentering the data.
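To make the format problem concrete, here is a minimal sketch in Python. It encodes the same text twice: once in ASCII and once in cp037, an EBCDIC code page of the kind IBM mainframes used. The choice of EBCDIC as the "other" format is an assumption made for illustration; the point is only that the same characters become different 1s and 0s, so one side must convert before it can use the data.

```python
# Illustration only: the same text under two character encodings.
text = "HELLO"

ascii_bytes = text.encode("ascii")    # ASCII codes
ebcdic_bytes = text.encode("cp037")   # cp037: an EBCDIC code page bundled with Python


def to_bits(data: bytes) -> str:
    """Render bytes as space-separated groups of eight 1s and 0s."""
    return " ".join(f"{byte:08b}" for byte in data)


print("ASCII :", to_bits(ascii_bytes))
print("EBCDIC:", to_bits(ebcdic_bytes))

# The bit patterns differ, so the receiver must convert before using the data.
converted = ebcdic_bytes.decode("cp037").encode("ascii")
print("Converted back to ASCII matches:", converted == ascii_bytes)
```

The conversion step is trivial here because both encodings are well documented; in the prestandard days described above, no such shared tables could be taken for granted.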

Even if computers had been capable of understanding each other’s data formats, the data transfers would have been slow because of the inability to link computers directly together. Even between computers made by the same manufacturer, the only method to transfer data was to carry a tape or a large hard disk to the recipient of the data. This meant physical delivery of these storage devices to each location needing a copy of data—a snail’s pace when compared to modern networks.

Fortunately, the U.S. government’s Advanced Research Projects Agency (ARPA) funded several programs based on a set of memos written at MIT in 1962 about interconnecting computers. These ideas found support at ARPA, which then funded the creation of the ARPAnet: a network of interconnected computers communicating with each other with “packets.” In 1968, ARPA issued a Request for Quotation (RFQ) for the development of a packet switch called the Interface Message Processor (IMP). The contract was awarded to Bolt, Beranek, and Newman (BBN), the company that designed some of the first successful packet switches.

Networking’s Breakthrough: Packet-Switched Data

This section explains packet-switching, a technique used in virtually all modern computer networks to transport traffic between nodes. Regardless of its scope and size, a computer network almost certainly relies on packet-switching operations.

Packet-switching was invented to solve several problems pertaining to the methods used by emerging data networks to transmit data. In the past, a communications link used a technique called circuit switching to allot resources to traffic. For voice traffic, circuit switching was effective, because it dedicated a channel to a voice conversation for the duration of the conversation. Generally, the link was effectively utilized because the two people on the telephones talked most of the time.

This situation was not the case for data dialogues. Because of the stop-and-go nature of keying in data on a computer keyboard (keying in characters, backspacing to correct mistakes, thinking a bit more about the “transmission”), a circuit-switched network, like that of the telephone system, experienced frequent periods when a dedicated link was idle—waiting for the two correspondents to actually correspond. Packet-switching solves this expensive problem by providing these benefits:

• More than one user stream of data can be sent over a link during a given window of time.

• Packet-switching does not set up a connection through a network. Thus, it does not require dedicated end-to-end channels. If problems occur in one part of the network, user data can be dynamically rerouted to those switches that are operating satisfactorily. In the past, a failed circuit switch required the tedious and time-consuming job of reestablishing a dedicated end-to-end connection.

• Because many user sessions (such as email and text messaging) entail a slow introduction of data into the network, packet-switching “packages” this data into small bundles and sends it on its way to the destination. (By the way, even “faster” sessions, such as file transfer, do not fully utilize a high-capacity communications link.) While the packet-switching software is waiting for more data to spring forth from our cumbersome fingers and thumbs, it shifts its attention to an active user and, for a brief time, allots network resources to this user. Later, when we are keying in data, it turns its attention back to us.

• In other words, expensive network resources are used only when users need these resources. It’s an ideal arrangement for “bursty” data communications in which facilities are used intermittently.
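The utilization argument in the list above can be checked with a little arithmetic. The following sketch is a toy model, not a description of any real switch: time is divided into slots, each user either has one packet to send in a slot (1) or is idle (0), and the activity patterns are made up for illustration. It compares three dedicated circuits with one shared link that sends one packet per slot and queues the rest.

```python
def dedicated_utilization(activity):
    """Circuit switching: every user holds a private channel for every slot."""
    busy = sum(sum(user) for user in activity)
    capacity = sum(len(user) for user in activity)
    return busy / capacity


def shared_utilization(activity):
    """Packet switching: one link sends one queued packet per time slot."""
    slots = len(activity[0])
    backlog = 0   # packets waiting for the link
    busy = 0      # slots in which the link carried a packet
    for t in range(slots):
        backlog += sum(user[t] for user in activity)
        if backlog > 0:
            backlog -= 1
            busy += 1
    return busy / slots


# Hypothetical "bursty" users: mostly idle, occasionally sending.
activity = [
    [1, 0, 0, 0, 1, 0, 0, 0],
    [1, 0, 0, 0, 0, 0, 1, 0],
    [0, 0, 0, 1, 0, 0, 0, 1],
]

print(dedicated_utilization(activity))  # 0.25
print(shared_utilization(activity))     # 0.75
```

In this made-up case, the three dedicated channels sit idle three-quarters of the time, while the single shared link carries the same six packets and is busy three-quarters of the time. The price is a short queuing delay when two users send in the same slot.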

At first glance, packet-switching might be a bit difficult to understand. Nonetheless, to grasp the underpinnings of computer networks, we must come to grips with packet-switching. To that end, here’s a brief experiment that should help explain packet-switching networks. We will compare packet-switching networks to a postal network.

Assume you are an author writing a manuscript that must be delivered to your editor, who lives far from you. Also assume (for the purposes of this experiment) that the postal service limits the weight of packages it carries, and the entire manuscript is heavier than the limit. Clearly, you’re going to have to break up the manuscript in a way that ensures your editor can reassemble it in the correct order without difficulty. How are you going to accomplish this task?

First, you break up the manuscript into standard sizes. Let’s assume a 50-page section of the manuscript plus an envelope is the maximum weight the postal service will support. After assuring your manuscript pages are numbered, you divide the manuscript into 50-page chunks. It doesn’t matter whether the chunks break on chapter lines or even in the middle of a sentence—the pages are numbered, so they can be reassembled at the receiving node. If any pages are lost because of a torn envelope, the page numbers help determine what’s missing.

Dividing the large manuscript into small equal-sized chunks with a method of verifying the completeness of the data (through the use of the page numbers) is the first part of packetizing data. The editor can use the page numbers, which are a property of the data, to determine if all the data has arrived correctly. He can use other procedures to verify the correctness of the received data.

Next, you put the 50-page manuscript chunks into envelopes numbered sequentially—the first 50 pages go in envelope number 1, the second 50 pages go in envelope number 2, and so forth until you’ve reached the end of the manuscript. The sequence numbers are important because they help the destination node (your editor, or a computer) reassemble the data in proper order.

The number of pages in each envelope is also written on the outside of the envelope, which describes the data packet’s size. (In computer networks, the number of characters (bytes) is used, not the number of pages.) If the size is wrong when the packet arrives at the destination, the destination computer discards the packet and requests a retransmission. Another approach is for the sending and receiving parties to agree on the size of the packets before they are sent.

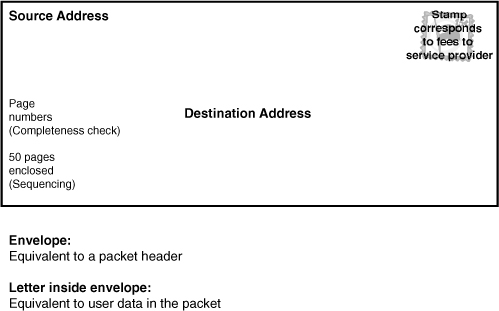

Last, you write your editor’s address as the destination and your address as the return address on the outside of the envelopes and send them using the postal service. Figure 2.1 illustrates the hypothetical envelope and the relationship each element has to a data packet in a computer network.

FIGURE 2.1 The various parts of the envelope and how they correspond to the parts of a data packet
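The envelope-stuffing steps described so far can be sketched in a few lines of Python. The `Packet` class, the `packetize` function, and the address strings are hypothetical names chosen to mirror the envelope analogy; real protocols define their own field layouts. As the text notes, sizes are counted in bytes rather than pages.

```python
from dataclasses import dataclass


@dataclass
class Packet:
    source: str        # return address
    destination: str   # editor's address
    sequence: int      # envelope number
    size: int          # number of bytes inside
    payload: bytes     # the manuscript chunk itself


def packetize(data: bytes, source: str, destination: str, max_payload: int = 50):
    """Split data into numbered chunks of at most max_payload bytes."""
    packets = []
    for seq, start in enumerate(range(0, len(data), max_payload), start=1):
        chunk = data[start:start + max_payload]
        packets.append(Packet(source, destination, seq, len(chunk), chunk))
    return packets


manuscript = b"x" * 120   # stand-in for a 120-"page" manuscript
envelopes = packetize(manuscript, "author", "editor")
for p in envelopes:
    print(p.sequence, p.size)
```

With a 120-byte manuscript and a 50-byte limit, the sketch produces three envelopes holding 50, 50, and 20 bytes. The last chunk is simply shorter, which is why each envelope records its own size.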

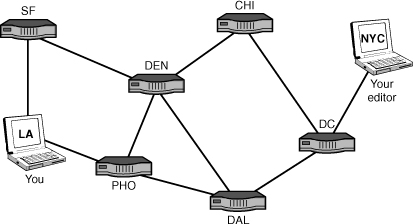

The route the envelopes take while in transit between your mailbox and your editor’s desk is not important to your editor and you. As shown in Figure 2.2, some of the envelopes might be routed through Chicago; others might be routed through Dallas—it’s not important as long as all the envelopes arrive at your editor’s desk. If the number of pages your editor receives does not match the number of pages written on the outside of the envelope, the editor knows that something is wrong—the envelope came unsealed and pages fell out, or someone tampered with the contents. If you had sent your editor this (electronic) manuscript over the Internet, the process would work the same way—the sections of the book (inside the packets) could have been routed through many machines (routers) before arriving at your editor’s computer.

FIGURE 2.2 Data packets can follow several paths across the Internet.
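The idea that envelopes can take different routes, and can avoid a location that is out of service, can be sketched as a search over a small map. The four-node map below is a hypothetical simplification of Figure 2.2, and breadth-first search is used only because it is simple; real routers use more elaborate routing protocols.

```python
from collections import deque

# Hypothetical map: each location lists the locations it can forward to.
LINKS = {
    "author": ["chicago", "dallas"],
    "chicago": ["author", "editor"],
    "dallas": ["author", "editor"],
    "editor": ["chicago", "dallas"],
}


def find_route(links, start, goal, failed=frozenset()):
    """Breadth-first search for a route that avoids failed locations."""
    queue = deque([[start]])
    visited = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            return path
        for neighbor in links[node]:
            if neighbor not in visited and neighbor not in failed:
                visited.add(neighbor)
                queue.append(path + [neighbor])
    return None   # no working route exists


print(find_route(LINKS, "author", "editor"))
print(find_route(LINKS, "author", "editor", failed={"chicago"}))
```

When every location is working, the search returns the route through Chicago. With Chicago marked as failed, the same call returns the route through Dallas, and neither the author nor the editor has to do anything differently.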

In networking terms, each complete envelope is a packet of data. The order in which your editor—or a computer—receives them doesn’t matter because the editor (or the computer) can reassemble the data from the sequence numbers on the outside of the envelopes.
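A short sketch shows why arrival order does not matter. The `reassemble` function is a hypothetical helper: it takes the envelopes in whatever order they arrived, as (sequence number, contents) pairs, and assumes the receiver knows how many envelopes to expect.

```python
def reassemble(arrived, total):
    """Rebuild the original data from (sequence, payload) pairs in any order."""
    by_sequence = dict(arrived)
    missing = [seq for seq in range(1, total + 1) if seq not in by_sequence]
    if missing:
        raise ValueError(f"missing sequence numbers: {missing}")
    return b"".join(by_sequence[seq] for seq in range(1, total + 1))


# Envelopes 3, 1, and 2 arrive in that order.
arrived = [(3, b"The End."), (1, b"Chapter 1. "), (2, b"Chapter 2. ")]
print(reassemble(arrived, total=3))
```

The sequence numbers do double duty: they restore the order and they reveal gaps, which is what lets the receiver notice that an envelope never arrived.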

For each correct envelope your editor receives, he sends you an acknowledgment. If an envelope fails to arrive or is compromised in some way, your editor won’t acknowledge receipt of that specific envelope. After a specified time, if you don’t receive acknowledgment for that packet, you must resend it so the editor has the entire manuscript. Packet-switched data does not correspond perfectly with this example, but it’s quite close, and it’s sufficient for us to proceed into more technical details.
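The acknowledge-and-resend loop can be simulated without a real network. In this sketch, `send_with_acks` is a hypothetical function, each pass through the outer loop stands in for one timeout period, and the set `lost_once` names the envelopes that go missing on their first trip. It is a simplified model of the idea, not the behavior of any particular protocol.

```python
def send_with_acks(packets, lost_once, max_attempts=3):
    """Send every packet, resending any that is not acknowledged in time."""
    received = {}                            # what the editor has so far
    attempts = {seq: 0 for seq in packets}   # sends per envelope
    losses = set(lost_once)                  # envelopes lost on the first try
    unacked = set(packets)
    while unacked:
        for seq in sorted(unacked):
            if attempts[seq] == max_attempts:
                raise RuntimeError(f"envelope {seq} was never acknowledged")
            attempts[seq] += 1
            if seq in losses:
                losses.discard(seq)          # lost in transit: no acknowledgment
                continue
            received[seq] = packets[seq]     # delivered: editor acknowledges
        unacked.difference_update(received)  # stop resending acknowledged ones
    return received, attempts


packets = {1: b"pages 1-50", 2: b"pages 51-100", 3: b"pages 101-150"}
received, attempts = send_with_acks(packets, lost_once={2})
print(attempts)
```

Only envelope 2 is sent twice. The sender never learns why the acknowledgment failed to arrive; the missing acknowledgment alone is enough to trigger the resend.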

Any data you send over a computer network is packetized, from the smallest text message to the largest file. The beauty of packet-switching networks is that more than one computer can share one communications link by interleaving its packets with those of other computers, a concept called statistical multiplexing (a demand-driven form of time-division multiplexing). Thousands of packets from multiple machines can be multiplexed onto a link without confusion because each packet (like the postal envelope) contains the following elements:

• A source address—The return address or origin of the packet

• A destination address—Where the packet is headed

• A sequence number—Where the packet fits in with the remainder of associated packets

• An error check—An assurance that the data is free of errors

Because each computer has a different address or set of addresses (as explained in Hours 3 and 15), transmitting data through computer networks is similar to sending mail through postal networks.
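The four elements listed above can be packed into a small binary header. The layout below (two 4-byte addresses, a sequence number, and a CRC-32 error check) is a hypothetical format invented for this sketch and does not match any real protocol; `build_packet` and `parse_packet` are likewise illustrative names. CRC-32 is used here as one common kind of error check.

```python
import struct
import zlib

# Hypothetical header: source (4 bytes), destination (4 bytes),
# sequence number (unsigned int), CRC-32 of the payload (unsigned int).
HEADER = struct.Struct("!4s4sII")


def build_packet(source: bytes, destination: bytes, sequence: int, payload: bytes) -> bytes:
    """Prefix the payload with addresses, a sequence number, and an error check."""
    checksum = zlib.crc32(payload)
    return HEADER.pack(source, destination, sequence, checksum) + payload


def parse_packet(packet: bytes):
    """Split a packet into its fields and verify the error check."""
    source, destination, sequence, checksum = HEADER.unpack(packet[:HEADER.size])
    payload = packet[HEADER.size:]
    if zlib.crc32(payload) != checksum:
        raise ValueError("error check failed: payload was damaged in transit")
    return source, destination, sequence, payload


packet = build_packet(b"\x0a\x00\x00\x01", b"\x0a\x00\x00\x02", 7, b"chapter one")
print(parse_packet(packet))
```

If even one byte of the payload changes in transit, the recomputed CRC-32 no longer matches the value in the header, and the receiver can discard the packet rather than accept damaged data.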

The importance of standards with respect to packet-switching specifically (and computer networks in general) cannot be overstated. The success of packet-switched networks depends on the widespread adoption of standards. Networking rewards cooperation. No matter how elegant and efficient a system is, if it does not adhere to a community-accepted standard, it will fail. Several organizations exist to create these standards. For packet-switching, the authoritative bodies are the International Telecommunication Union (ITU) and several Internet working groups and standards bodies.

Benefits of Networking

As explained at the beginning of this hour, before computer networks came about, transferring data between computers was a time-consuming and labor-intensive task. As local area networks (LANs) were coming into existence in offices, a person who wanted to exchange data with someone whose computer was located on another LAN copied the data onto a disk, walked to the other machine, and transferred the data file to the other computer. This technique earned the name Sneakernet.

File Management

Obviously, Sneakernet is not an efficient way to move or manage files. It’s time consuming and unreliable. Moreover, the data is decentralized. Each user can conceivably have a different version of a particular file stored on her standalone computer. The confusion that ensues when users need the same version of a file and don’t have it can create serious problems for an organization. With computers connected through a network, data can be shared among them. We take this capability for granted today, but it didn’t exist until LANs were connected in the late 1970s.

Sharing Software

Disconnected computers also suffer from another malady: They can’t share software applications. Every application must be installed on each computer if data passed by Sneakernet is to be useful. If a user doesn’t have the application that created a file stored on her computer, she can’t read the file. Of course, if we can’t share applications, no one can share, say, calendars or contact lists with other users, let alone send them email. Sharing software has just the opposite effect. For example, we need not load all the software on our computer to have our traffic routed from our LAN in Los Angeles to a LAN in New York. Our computer relies on software running on a local router to provide this service.

A groupware application (also called collaborative software) is an application that enables multiple users to work together by using a network to connect them. Such applications can work serially, where (for instance) a document is automatically routed from person A to person B after person A is done with it. Groupware might also enable real-time collaboration. IBM’s Lotus Notes software is an example of the former, and Microsoft’s Office has some real-time collaborative features. Another example is the help desk of software vendors. Often, when a customer calls for assistance, the technician connects to the user’s application with troubleshooting software routines to analyze the problem. The user’s computer is sharing the powerful investigative software, but the user’s computer doesn’t have to download it to use it.

Other examples of shared applications are group calendaring, which allows a staff to plan meetings and tasks using a centralized schedule instead of 20 different ones; and email, or electronic mail, which is often called the killer application (killer app) of networking. Email and other network applications are discussed in more depth in Hour 13, “Network Applications.”

Sharing Printers and Other Peripheral Devices

Printers and scanners are expensive. If they can’t be shared, they become an enormous capital expense to organizations and even a household. You can imagine the strain on a budget if each computer in a home or enterprise had to have a dedicated printer or scanner.

Centralized Configuration Management

As personal computers found their way into the mass marketplace, software manufacturers faced a major problem: The correction and improvement of their products, which resided on millions of machines. Before computer networks became commonplace (abetted by the Internet), a correction to, say, a bug in Microsoft’s DOS software required the sending of a disk to users, or the users having the means to dial up a Microsoft site to download the patch on a low-capacity telephone line. Many users didn’t keep their systems tuned to these updates, resulting in dissimilar versions of software throughout a product line. The Microsofts of the world faced a complex situation when trying to keep their changes compatible with customers’ software.

With high-capacity computer networks, vendors can automatically distribute their changes to millions of users in a short time. In today’s environment, with each logon to the Internet, it isn’t unusual for a user’s PC to have enhancements and corrections made to several of a machine’s thousands of software programs.

What is more, network administrators use their own networks to manage those networks. For example, in a large corporation, hundreds or even thousands of servers and routers are positioned across a country, a continent, or perhaps the globe. With a variety of software utilities, an administrator can diagnose and fix problems, as well as install and configure software. These utility suites allow a network administrator to collect and standardize computer configurations and to troubleshoot problems in the network.

Learning about network management and its initial setup requires a lot of work on the part of the administrator, but when the initial installation is finished, the administrator’s life becomes easier. Centralized management saves time and money (two things accountants appreciate). It also engenders the goodwill of the users and the credibility of the administrator (two things the users and administrators appreciate). To find out more about managing networks, look at the network administration hours in Part V of this book, “Network Administration.”

Speed and Economy

In a nutshell, computer networks allow us to perform our jobs more quickly and more efficiently and lead to greater productivity in the workforce. It’s fair to say they have been an important cog in increasing the wealth of a country, as well as its citizens.

And we shouldn’t omit the fact that these wonderful systems allow us to play Texas Hold’em and Scrabble online well into the hours when we should be sleeping.

Summary

When computer resources are shared through a network, its users reap a variety of benefits ranging from reduced costs, to ease of use, to simpler administration. The financial savings and worker productivity gains represented by networks will be appreciated by companies trying to economize. From the worker’s viewpoint, an employee does not have to chase down information anymore. If applications such as email, calendaring, and contact management are added to the mix, the network begins to establish synergistic relationships between users and data. A well-designed computer network allows us to accomplish a great deal more than we could do without it.

Q&A

Q. Name the technology that enhances the utilization of network resources.

A. Packet-switching is the technology that does this. It improves the utilization of communications links and provides a means for dynamic, adaptive routing through the network.

Q. What sorts of computer resources can be shared on a network?

A. Networks facilitate the sharing of data, software applications, printers, scanners, computers, and those vital tools for productivity and creativity: human minds.

Q. What are some of the reasons for using centralized management?

A. Centralized management of a network promotes more efficient administration of computing resources, effective (automated) installation of software on network users’ desktop computers, easier computer configuration management, and simpler troubleshooting and recovery from problems.