Chapter 5

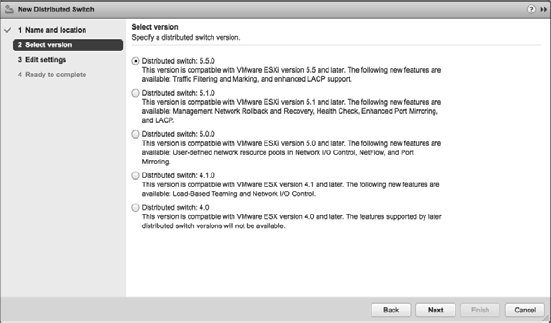

Creating and Configuring Virtual Networks

Eventually, it all comes back to the network. Having servers running VMware ESXi with VMs stored on a highly redundant Fibre Channel SAN is great, but they are ultimately useless if the VMs cannot communicate across the network. What good is the ability to run 10 production systems on a single host at less cost if those production systems aren't available? Clearly, virtual networking within ESXi is a key area for every vSphere administrator to understand fully.

In this chapter, you will learn to

- Identify the components of virtual networking

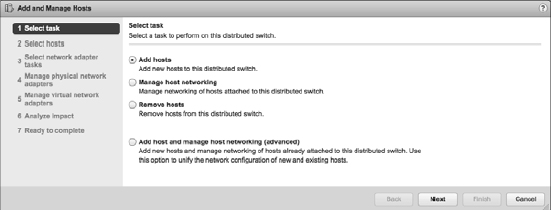

- Create virtual switches and distributed virtual switches

- Create and manage NIC teaming, VLANs, and private VLANs

- Examine the options for third-party virtual switches in your environment

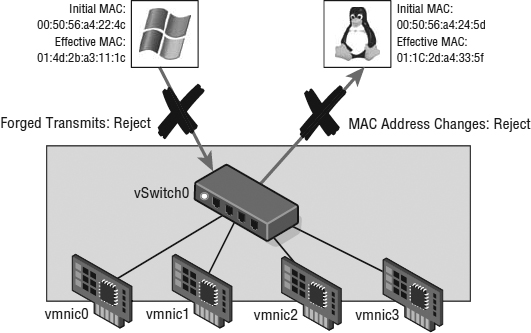

- Configure virtual switch security policies

Putting Together a Virtual Network

Designing and building virtual networks with ESXi and vCenter Server bears some similarities to designing and building physical networks, but there are enough significant differences that an overview of components and terminology is warranted. So, we'll take a moment here to define the various components involved in a virtual network, and then we'll discuss some of the factors that affect the design of a virtual network:

vSphere Standard Switch A software-based switch that resides in the VMkernel and provides traffic management for VMs. Users must manage vSphere Standard Switches independently on each ESXi host. You'll see us use the term vSwitch to refer to both a vSphere Standard Switch and a virtual switch in general.

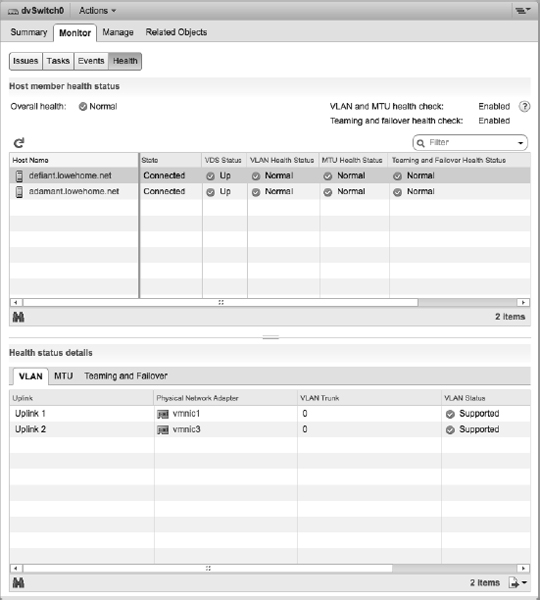

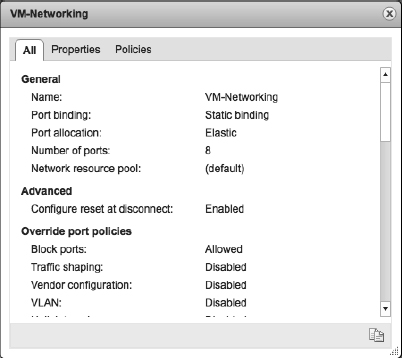

vSphere Distributed Switch A software-based switch that resides in the VMkernel and provides traffic management for VMs and the VMkernel. Distributed vSwitches are shared by and managed across entire clusters of ESXi hosts. You might see vSphere Distributed Switch abbreviated as VDS; we'll use VDS, vSphere Distributed Switch, or just distributed switch in this book.

Port/Port Group A logical object on a vSwitch that provides specialized services for the VMkernel or VMs. A virtual switch can contain a VMkernel port or a VM port group. On a vSphere Distributed Switch, these are called distributed port groups.

VMkernel Port A specialized virtual switch port type that is configured with an IP address to allow hypervisor management traffic, vMotion, iSCSI storage access, network attached storage (NAS) or Network File System (NFS) access, and vSphere Fault Tolerance (FT) logging. A VMkernel port is also referred to as a vmknic.

NO MORE SERVICE CONSOLE PORTS

Because vSphere 5.5, like vSphere 5.0 and 5.1 before it, does not include VMware ESX with a traditional Linux-based Service Console, pure vSphere 5.x environments will not use a Service Console port (or vswif). Instead, the functionality of a Service Console port in ESX 4.x and earlier is handled by a VMkernel port in vSphere 5.x.

VM Port Group A group of virtual switch ports that share a common configuration and allow VMs to access other VMs or the physical network.

Virtual LAN A logical LAN configured on a virtual or physical switch that provides efficient traffic segmentation, broadcast control, security, and efficient bandwidth utilization by providing traffic only to the ports configured for that particular virtual LAN (VLAN).

Trunk Port (Trunking) A port on a physical switch that listens for and knows how to pass traffic for multiple VLANs. It does this by maintaining the 802.1q VLAN tags for traffic moving through the trunk port to the connected device(s). Trunk ports are typically used for switch-to-switch connections to allow VLANs to pass freely between switches. Virtual switches support VLANs, and using VLAN trunks allows the VLANs to pass freely into the virtual switches.

TRUNKING VS. LINK AGGREGATION?

You might, depending on your networking vendor, also see use of the term trunk to describe an aggregation of multiple individual links into a single logical link. In this book, we use trunk only to describe a connection that passes multiple VLAN tags, and we'll use the term NIC teaming or link aggregation to refer to the practice of bonding multiple individual links together.

Access Port A port on a physical switch that passes traffic for only a single VLAN. Unlike a trunk port, which maintains the VLAN identification for traffic moving through the port, an access port strips away the VLAN information for traffic moving through the port.
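To make the difference between trunk ports and access ports concrete, here is a minimal Python sketch of how each port type treats the VLAN tag on a frame leaving the switch. The `Frame` class and both function names are hypothetical, introduced only for illustration, and the model deliberately ignores details such as the native (untagged) VLAN on a trunk.

```python
from dataclasses import dataclass
from typing import Optional, Set


@dataclass(frozen=True)
class Frame:
    """Hypothetical, simplified Ethernet frame used only for this sketch."""
    payload: str
    vlan_id: Optional[int] = None  # None models a frame without an 802.1q tag


def egress_trunk(frame: Frame, allowed_vlans: Set[int]) -> Optional[Frame]:
    """Trunk port: pass frames for any allowed VLAN and keep the VLAN tag."""
    if frame.vlan_id in allowed_vlans:
        return frame  # tag is preserved for the connected device
    return None  # VLAN is not carried on this trunk


def egress_access(frame: Frame, access_vlan: int) -> Optional[Frame]:
    """Access port: pass frames for one VLAN only and strip the VLAN tag."""
    if frame.vlan_id == access_vlan:
        return Frame(frame.payload, None)  # tag is removed on the way out
    return None  # frame belongs to a different VLAN


if __name__ == "__main__":
    tagged = Frame("hello", vlan_id=10)
    print(egress_trunk(tagged, {10, 20}))
    print(egress_access(tagged, 10))
```

The point to take away is that a device behind a trunk port (such as a vSwitch) still sees the VLAN ID and can act on it, whereas a device behind an access port never sees the tag at all.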

Network Interface Card Team The aggregation of physical network interface cards (NICs) to form a single logical communication channel. Different types of NIC teams provide varying levels of traffic load balancing and fault tolerance.

vmxnet Adapter A virtualized network adapter operating inside a guest operating system (guest OS). The vmxnet adapter is a high-performance, 1 Gbps virtual network adapter that operates only if VMware Tools have been installed. The vmxnet adapter is sometimes referred to as a paravirtualized driver. The vmxnet adapter is identified as Flexible in the VM properties.

vlance Adapter A virtualized network adapter operating inside a guest OS. The vlance adapter is a 10/100 Mbps network adapter that is widely compatible with a range of operating systems and is the default adapter used until the VMware Tools installation is completed.

e1000 Adapter A virtualized network adapter that emulates the Intel e1000 network adapter. The Intel e1000 is a 1 Gbps network adapter. The e1000 network adapter is the most common in 64-bit VMs.

Now that you have a better understanding of the components involved and the terminology that you'll see in this chapter, we'll discuss how these components work together to form a virtual network in support of VMs and ESXi hosts.

Your answers to the following questions will, in large part, determine the design of your virtual networking:

- Do you have or need a dedicated network for management traffic, such as for the management of physical switches?

- Do you have or need a dedicated network for vMotion traffic?

- Do you have an IP storage network? Is this IP storage network a dedicated network? Are you running iSCSI or NAS/NFS?

- How many NICs are standard in your ESXi host design?

- Do the NICs in your hosts run 1 Gb Ethernet or 10 Gb Ethernet?

- Do you need extremely high levels of fault tolerance for VMs?

- Is the existing physical network composed of VLANs?

- Do you want to extend the use of VLANs into the virtual switches?

As a precursor to setting up a virtual networking architecture, you need to identify and document the physical network components and the security needs of the network. It's also important to understand the architecture of the existing physical network, because that also greatly influences the design of the virtual network. If the physical network can't support the use of VLANs, for example, then the virtual network's design has to account for that limitation.

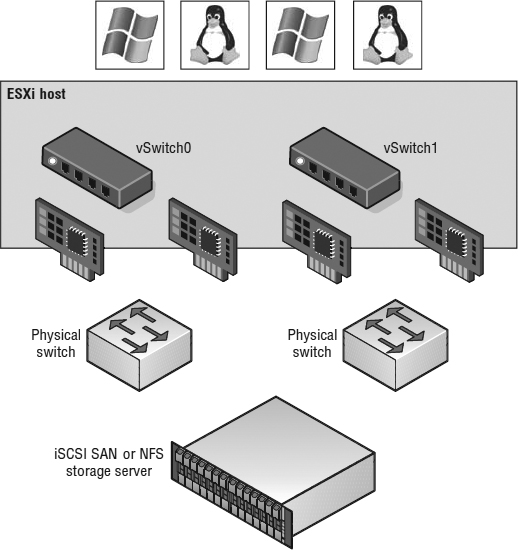

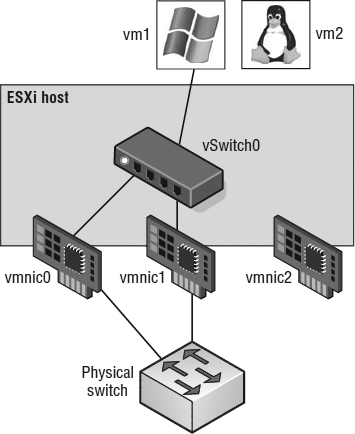

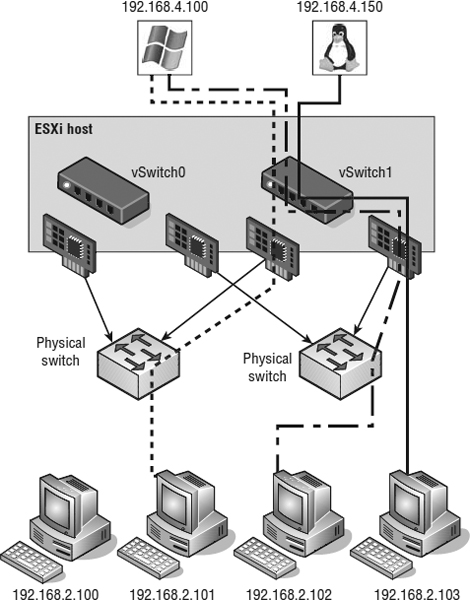

Throughout this chapter, as we discuss the various components of a virtual network in more detail, we'll also provide guidance on how the various components fit into an overall virtual network design. A successful virtual network combines the physical network, NICs, and vSwitches, as shown in Figure 5.1.

FIGURE 5.1 Successful virtual networking is a blend of virtual and physical network adapters and switches.

Because the virtual network implementation makes VMs accessible, it is essential that the virtual network be configured in a manner that supports reliable and efficient communication among the different network infrastructure components.

Working with vSphere Standard Switches

The networking architecture of ESXi revolves around creating and configuring virtual switches. These virtual switches are either vSphere Standard Switches or vSphere Distributed Switches. First we'll discuss vSphere Standard Switches, hereafter called vSwitches; we'll discuss vSphere Distributed Switches next.

You create and manage vSwitches through the vSphere Web Client or through the vSphere CLI using the esxcli command, but they operate within the VMkernel. Virtual switches provide connectivity for the following types of communication:

- Between VMs within an ESXi host

- Between VMs on different ESXi hosts

- Between VMs and physical machines on the network

- For VMkernel access to networks for vMotion, iSCSI, NFS, or Fault Tolerance logging (and management on ESXi)

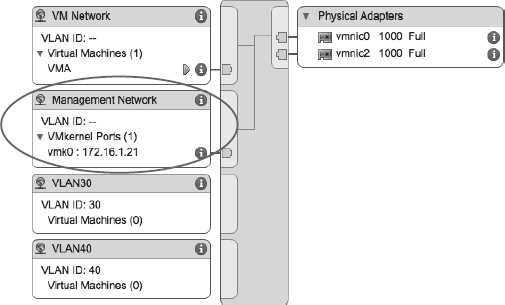

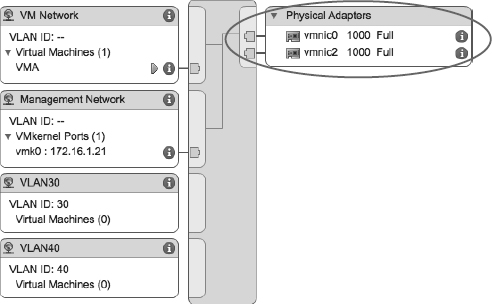

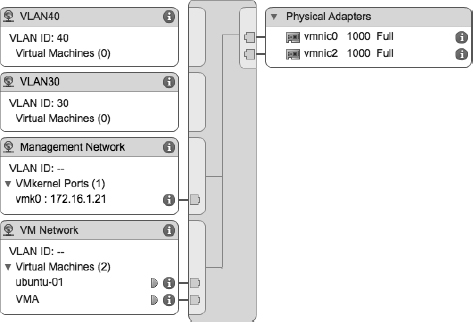

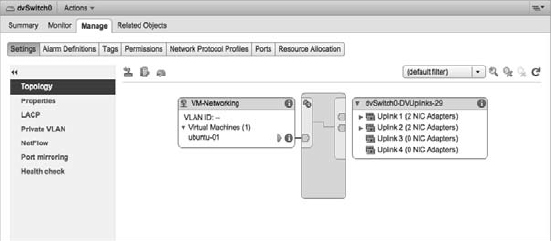

Take a look at Figure 5.2, which shows the vSphere Web Client depicting a vSwitch on an ESXi host.

FIGURE 5.2 Virtual switches alone can't provide connectivity; they need ports or port groups and uplinks.

In this figure, the vSwitch isn't depicted alone; it also requires ports or port groups and uplinks. Without uplinks, a virtual switch can't communicate with the upstream network; without ports or port groups, a vSwitch can't provide connectivity for the VMkernel or the VMs. It is for this reason that most of our discussion about virtual switches centers on ports, port groups, and uplinks.

First, though, let's take a closer look at vSwitches and how they are similar to yet different from physical switches in the network.

Comparing Virtual Switches and Physical Switches

Virtual switches in ESXi are constructed by and operate in the VMkernel. Virtual switches (referred to in the general sense as vSwitches) are not managed switches and do not provide all the advanced features that many new physical switches provide. You cannot, for example, telnet into a vSwitch to modify settings. There is no command-line interface (CLI) for a vSwitch, apart from the vSphere CLI commands such as esxcli. Even so, a vSwitch operates like a physical switch in some ways. Like its physical counterpart, a vSwitch functions at layer 2, maintains MAC address tables, forwards frames to other switch ports based on the MAC address, supports VLAN configurations, can trunk VLANs using IEEE 802.1q VLAN tags, and can establish port channels. Similar to physical switches, vSwitches are configured with a specific number of ports.

Despite these similarities, vSwitches do have some differences from physical switches. A vSwitch does not support the use of dynamic negotiation protocols for establishing 802.1q trunks or port channels, such as Dynamic Trunking Protocol (DTP) or Link Aggregation Control Protocol (LACP). A vSwitch cannot be connected to another vSwitch, thereby eliminating a potential loop configuration. Because there is no possibility of looping, the vSwitches do not run Spanning Tree Protocol (STP). Looping can be a common network problem, so this is a real benefit of vSwitches.

In physical switches, Spanning Tree Protocol (STP) offers redundancy for paths and prevents loops in the network topology by locking redundant paths in a standby state. Only when a path is no longer available will STP activate the standby path.

It is possible to link vSwitches together using a VM with layer 2 bridging software and multiple virtual NICs, but this is not an accidental configuration and would require some effort to establish.

vSwitches and physical switches have some other differences:

- A vSwitch authoritatively knows the MAC addresses of the VMs connected to it, so there is no need to learn MAC addresses from the network.

- Traffic received by a vSwitch on one uplink is never forwarded out another uplink. This is yet another reason why vSwitches do not run STP.

- A vSwitch does not need to perform Internet Group Management Protocol (IGMP) snooping because it knows the multicast interests of the VMs attached to it.

As you can see from this list of differences, you simply can't use virtual switches in the same way you can use physical switches. You can't use a virtual switch as a transit path between two physical switches, for example, because traffic received on one uplink won't be forwarded out another uplink.
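The forwarding behavior described in this list can be sketched as a small Python model. This is an illustration of the rules stated above, not VMware's implementation: the `VSwitchModel` class and its method names are hypothetical, only unicast traffic is modeled, and the choice of "first uplink" stands in for whatever NIC teaming policy is actually configured.

```python
from typing import Dict, List


class VSwitchModel:
    """Hypothetical model of the vSwitch forwarding rules described in the text."""

    def __init__(self, uplinks: List[str]):
        self.uplinks = list(uplinks)
        # The vSwitch knows these MAC addresses authoritatively because the
        # VMs are attached to it; nothing is learned from network traffic.
        self.mac_to_port: Dict[str, str] = {}

    def connect_vm(self, mac: str, port: str) -> None:
        """Register a VM's MAC address on a virtual port."""
        self.mac_to_port[mac] = port

    def forward(self, dst_mac: str, ingress_port: str) -> List[str]:
        """Return the list of ports a unicast frame is sent out of."""
        if dst_mac in self.mac_to_port:
            return [self.mac_to_port[dst_mac]]  # destination is a local VM
        if ingress_port in self.uplinks:
            return []  # traffic from an uplink is never sent out another uplink
        # Unknown destination from a VM: send it toward the physical network.
        # With no uplinks (an internal-only vSwitch) this is an empty list.
        return self.uplinks[:1]


if __name__ == "__main__":
    sw = VSwitchModel(["vmnic0", "vmnic1"])
    sw.connect_vm("00:50:56:aa:aa:aa", "port1")
    print(sw.forward("00:11:22:33:44:55", "vmnic0"))
```

Because a frame that arrives on an uplink for a non-local destination is simply dropped, the model can never act as a transit path between two physical switches, which is also why no loop can form through it.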

With this basic understanding of how vSwitches work, let's now take a closer look at ports and port groups.

Understanding Ports and Port Groups

As described previously in this chapter, a vSwitch allows several different types of communication, including communication to and from the VMkernel and between VMs. To help distinguish between these different types of communication, ESXi uses ports and port groups. A vSwitch without any ports or port groups is like a physical switch that has no physical ports; there is no way to connect anything to the switch, and it is, therefore, useless.

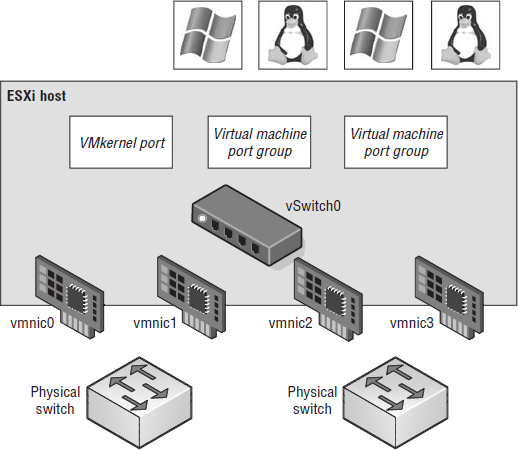

Port groups differentiate between the types of traffic passing through a vSwitch, and they also operate as a boundary for communication and/or security policy configuration. Figure 5.3 and Figure 5.4 show the two different types of ports and port groups that you can configure on a vSwitch:

- VMkernel port

- VM port group

FIGURE 5.3 Virtual switches can contain two connection types: VMkernel port and VM port group.

FIGURE 5.4 You can create virtual switches with both connection types on the same switch.

Because a vSwitch cannot be used in any way without at least one port or port group, you'll see that the vSphere Web Client combines the creation of new vSwitches with the creation of new ports or port groups.

As shown in Figure 5.2, though, ports and port groups are only part of the overall solution. The uplinks are the other part of the solution that you need to consider because they provide external network connectivity to the vSwitches.

Understanding Uplinks

Although a vSwitch allows communication between VMs connected to the vSwitch, it cannot communicate with the physical network without uplinks. Just as a physical switch must be connected to other switches to communicate across the network, vSwitches must be connected to the ESXi host's physical NICs as uplinks to communicate with the rest of the network.

Unlike ports and port groups, uplinks aren't required for a vSwitch to function. Physical systems connected to an isolated physical switch with no uplinks to other physical switches in the network can still communicate with each other—just not with any other systems that are not connected to the same isolated switch. Similarly, VMs connected to a vSwitch without any uplinks can communicate with each other but not with VMs on other vSwitches or physical systems.

This sort of configuration is known as an internal-only vSwitch. It can be useful to allow VMs to communicate only with each other. VMs that communicate through an internal-only vSwitch do not pass any traffic through a physical adapter on the ESXi host. As shown in Figure 5.5, communication between VMs connected to an internal-only vSwitch takes place entirely in the software and happens at the speed at which the VMkernel can perform the task, whatever that may be.

FIGURE 5.5 VMs communicating through an internal-only vSwitch do not pass any traffic through a physical adapter.

NO UPLINK, NO VMOTION

VMs connected to an internal-only vSwitch are not vMotion capable. However, if the VM is disconnected from the internal-only vSwitch, a warning will be provided, but vMotion will succeed if all other requirements have been met. The requirements for vMotion are covered in Chapter 12, “Balancing Resource Utilization.”

For VMs to communicate with resources beyond the VMs hosted on the local ESXi host, a vSwitch must be configured to use at least one physical network adapter, or uplink. A vSwitch can be bound to a single network adapter or bound to two or more network adapters.

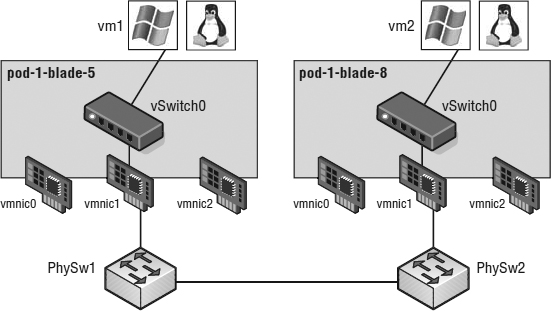

A vSwitch bound to at least one physical network adapter allows VMs to establish communication with physical servers on the network or with VMs on other ESXi hosts. That's assuming, of course, that the VMs on the other ESXi hosts are connected to a vSwitch that is bound to at least one physical network adapter. Just like a physical network, a virtual network requires connectivity from end to end. Figure 5.6 shows the communication path for VMs connected to a vSwitch bound to a physical network adapter. In the diagram, when vm1 on pod-1-blade-5 needs to communicate with vm2 on pod-1-blade-8, the traffic from the VM passes through vSwitch0 (via a VM port group) to the physical network adapter to which the vSwitch is bound. From the physical network adapter, the traffic will reach the physical switch (PhySw1). The physical switch (PhySw1) passes the traffic to the second physical switch (PhySw2), which will pass the traffic through the physical network adapter associated with the vSwitch on pod-1-blade-8. In the last stage of the communication, the vSwitch will pass the traffic to the destination virtual machine vm2.

FIGURE 5.6 A vSwitch with a single network adapter allows VMs to communicate with physical servers and other VMs on the network.

The vSwitch associated with a physical network adapter provides VMs with the amount of bandwidth the physical adapter is configured to support. All the VMs will share this bandwidth when communicating with physical machines or VMs on other ESXi hosts. In this way, a vSwitch is once again similar to a physical switch. For example, a vSwitch bound to a network adapter with a 1 Gbps maximum speed will provide up to 1 Gbps of bandwidth for the VMs connected to it; similarly, a physical switch with a 1 Gbps uplink to another physical switch provides up to 1 Gbps of bandwidth between the two switches for systems attached to the physical switches.

A vSwitch can also be bound to multiple physical network adapters. In this configuration, the vSwitch is sometimes referred to as a NIC team, but in this book we'll use the term NIC team or NIC teaming to refer specifically to the grouping of network connections, not to refer to a vSwitch with multiple uplinks.

Although a single vSwitch can be associated with multiple physical adapters as in a NIC team, a single physical adapter cannot be associated with multiple vSwitches. ESXi hosts can have up to 32 e1000 network adapters, 32 Broadcom TG3 Gigabit Ethernet network ports, or 16 Broadcom BNX2 Gigabit Ethernet network ports. ESXi hosts support up to eight 10 Gigabit Ethernet adapters.

Figure 5.7 and Figure 5.8 show a vSwitch bound to multiple physical network adapters. A vSwitch can have a maximum of 32 uplinks. In other words, a single vSwitch can use up to 32 physical network adapters to send and receive traffic from the physical switches. Binding multiple physical NICs to a vSwitch offers the advantage of redundancy and load distribution. In the section “Configuring NIC Teaming,” you'll dig deeper into the configuration and workings of this sort of vSwitch configuration.

FIGURE 5.7 A vSwitch using NIC teaming has multiple available adapters for data transfer. NIC teaming offers redundancy and load distribution.
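Two of the uplink rules discussed in this section lend themselves to a quick sketch: a physical adapter can belong to only one vSwitch, and a vSwitch can have at most 32 uplinks. The following Python model is purely illustrative; the `HostNetworkModel` class and its attribute names are hypothetical and are not part of any VMware API.

```python
from typing import Dict, List

MAX_UPLINKS_PER_VSWITCH = 32  # limit stated in the text


class HostNetworkModel:
    """Hypothetical bookkeeping for uplink assignments on one ESXi host."""

    def __init__(self) -> None:
        self.uplinks_by_vswitch: Dict[str, List[str]] = {}
        self.owner_by_nic: Dict[str, str] = {}

    def add_uplink(self, vswitch: str, nic: str) -> None:
        """Bind a physical NIC to a vSwitch, enforcing both rules."""
        owner = self.owner_by_nic.get(nic)
        if owner is not None and owner != vswitch:
            raise ValueError(f"{nic} is already an uplink of {owner}")
        uplinks = self.uplinks_by_vswitch.setdefault(vswitch, [])
        if nic in uplinks:
            return  # already bound to this vSwitch; nothing to do
        if len(uplinks) >= MAX_UPLINKS_PER_VSWITCH:
            raise ValueError(f"{vswitch} already has {MAX_UPLINKS_PER_VSWITCH} uplinks")
        uplinks.append(nic)
        self.owner_by_nic[nic] = vswitch


if __name__ == "__main__":
    host = HostNetworkModel()
    host.add_uplink("vSwitch0", "vmnic0")
    host.add_uplink("vSwitch0", "vmnic1")  # a two-adapter NIC team
    print(host.uplinks_by_vswitch)
```

Attempting to add vmnic0 to a second vSwitch in this model raises an error, mirroring the one-vSwitch-per-adapter restriction.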

So, we've examined vSwitches, ports and port groups, and uplinks, and you should have a basic understanding of how these pieces begin to fit together to build a virtual network. The next step is to delve deeper into the configuration of the various types of ports and port groups, because they are so essential to virtual networking. We'll start with a discussion on management networking.

Configuring Management Networking

Management traffic is a special type of network traffic that runs across a VMkernel port. VMkernel ports provide network access for the VMkernel's TCP/IP stack, which is separate and independent from the network traffic generated by VMs. The ESXi management network, however, is treated a bit differently than “regular” VMkernel traffic in two ways:

- First, the ESXi management network is automatically created when you install ESXi. In order for the ESXi host to be reachable across the network, it must have a management network configured and working. So, the ESXi installer automatically sets up an ESXi management network.

- Second, the Direct Console User Interface (DCUI)—the user interface that exists when you're working at the physical console of a server running ESXi—provides a mechanism for configuring or reconfiguring the management network but not any other forms of networking on that host.

FIGURE 5.8 Virtual switches using NIC teaming are identified by the multiple physical network adapters assigned to the vSwitch.

Although the vSphere Web Client offers an option to enable management traffic when configuring networking, as you can see in Figure 5.9, it's unlikely that you'll use this option very often. After all, for you to configure management networking from within the vSphere Web Client, the ESXi host must already have functional management networking in place (vCenter Server communicates with ESXi over the management network). You might use this option if you were creating additional management interfaces. To do this, you would use the procedure described later (in the section “Configuring VMkernel Networking”) to create VMkernel ports with the vSphere Web Client, simply enabling Management Traffic in the Enable Services section while creating the VMkernel port.

FIGURE 5.9 The vSphere Client offers a way to enable management networking when configuring networking.

In the event that the ESXi host is unreachable—and therefore cannot be configured using the vSphere Client—you'll need to use the DCUI to configure the management network.

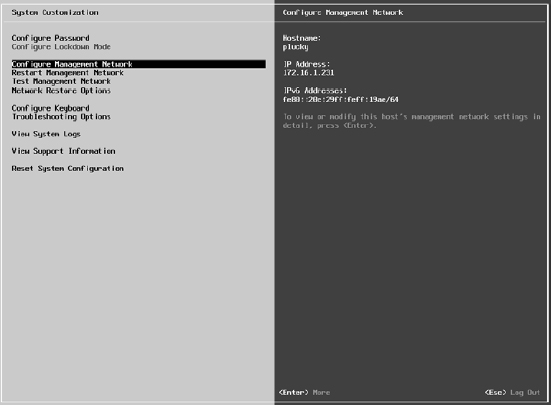

Perform the following steps to configure the ESXi management network using the DCUI:

- At the server's physical console or using a remote console utility such as the HP iLO, press F2 to enter the System Customization menu.

If prompted to log in, enter the appropriate credentials.

- Use the arrow keys to highlight the Configure Management Network option, as shown in Figure 5.10, and press Enter.

FIGURE 5.10 To configure ESXi's equivalent of the Service Console port, use the Configure Management Network option in the System Customization menu.

- From the Configure Management Network menu, select the appropriate option for configuring ESXi management networking, as shown in Figure 5.11.

You cannot create additional management network interfaces from here; you can only modify the existing management network interface.

- When finished, follow the screen prompts to exit the management networking configuration.

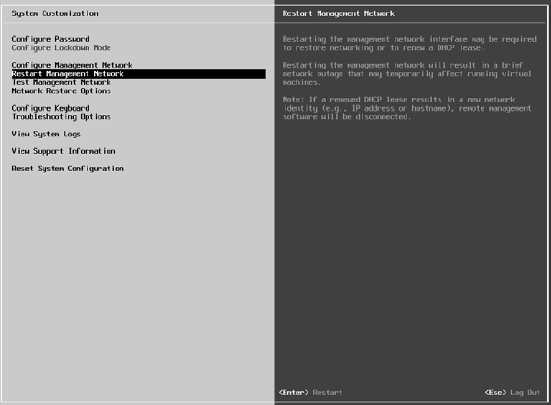

If prompted to restart the management networking, select Yes; otherwise, restart the management networking from the System Customization menu, as shown in Figure 5.12.

In looking at Figure 5.10 and Figure 5.12, you'll also see options for testing the management network, which lets you verify that the management network is configured correctly. This is invaluable if you are unsure of the VLAN ID or network adapters that you should use.

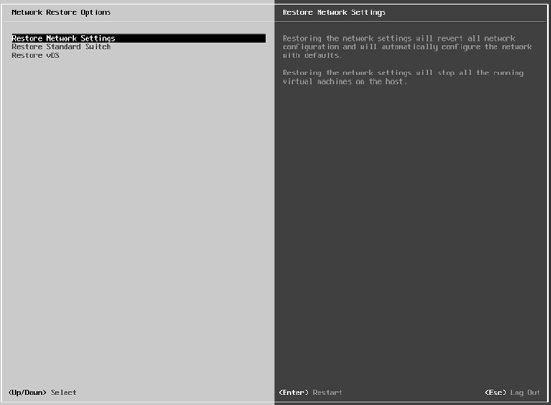

We also want to point out the Network Restore Options screen, shown in Figure 5.13. This screen lets you restore the network configuration to defaults, restore a vSphere Standard Switch, or even restore a vSphere Distributed Switch—all very handy options if you are troubleshooting management network connectivity to your ESXi host.

FIGURE 5.11 From the Configure Management Network menu, users can modify assigned network adapters, change the VLAN ID, or alter the IP configuration.

FIGURE 5.12 The Restart Management Network option restarts ESXi's management networking and applies any changes that were made.

FIGURE 5.13 Use the Network Restore Options screen to manage network connectivity to an ESXi host.

Let's move our discussion of VMkernel networking away from just management traffic and take a closer look at the other types of VMkernel traffic, as well as how to create and configure VMkernel ports.

Configuring VMkernel Networking

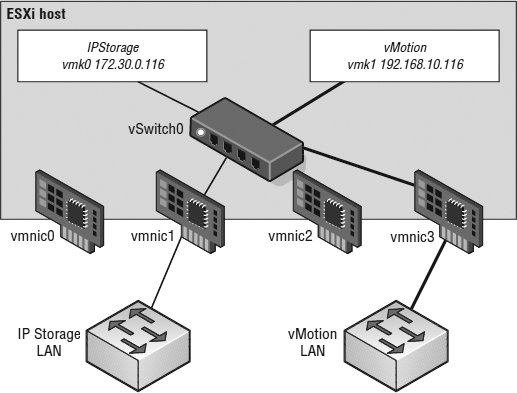

VMkernel networking carries management traffic, but it also carries all other forms of traffic that originate with the ESXi host itself (i.e., any traffic that isn't generated by VMs running on that ESXi host). As shown in Figure 5.14 and Figure 5.15, VMkernel ports are used for management, vMotion, iSCSI, NAS/NFS access, and vSphere FT; basically, all types of traffic that are generated by the hypervisor itself. In Chapter 6, “Creating and Configuring Storage Devices,” we detail the iSCSI and NAS/NFS configurations; in Chapter 12, we provide details of the vMotion process and how vSphere FT works. These discussions provide insight into the traffic flow between VMkernel and storage devices (iSCSI/NFS) or other ESXi hosts (for vMotion or vSphere FT). At this point, you should be concerned only with configuring VMkernel networking.

A VMkernel port actually comprises two different components: a port group on a vSwitch and a VMkernel network interface, also known as a vmknic. Creating a VMkernel port using the vSphere Web Client combines the task of creating the port group and the VMkernel NIC.

Perform the following steps to add a VMkernel port to an existing vSwitch using the vSphere Web Client:

- If not already connected, open a supported web browser and log in to a vCenter Server instance. For example, if your vCenter Server instance is called “vcenter,” then you'll connect to https://vcenter.domain.name:9443/vsphere-client and then log in with appropriate credentials.

- From the vSphere Web Client home page, select vCenter from the navigation list on the left.

- From the Inventory Lists area, select Hosts, then click the ESXi host on which you'd like to add the new VMkernel port.

FIGURE 5.14 A VMkernel port is associated with an interface and assigned an IP address for accessing iSCSI or NFS storage devices or for performing vMotion with other ESXi hosts.

FIGURE 5.15 The network labels for VMkernel ports should be as descriptive as possible.

- Select the Manage tab, and click the Networking button.

- Click Virtual Adapters.

- Click the Add Host Networking icon. This starts the Add Networking wizard.

- Select VMkernel Network Adapter, and then click Next.

- Because you're adding a VMkernel port to an existing vSwitch, make sure Select An Existing Standard Switch is selected, then click Browse to select the virtual switch to which the new VMkernel port should be added. Click OK in the Select Switch dialog box, and click Next to continue.

- Type the name of the port in the Network Label text box.

- If necessary, specify the VLAN ID for the VMkernel port.

- Select whether this VMkernel port will be enabled for IPv4, IPv6, or both.

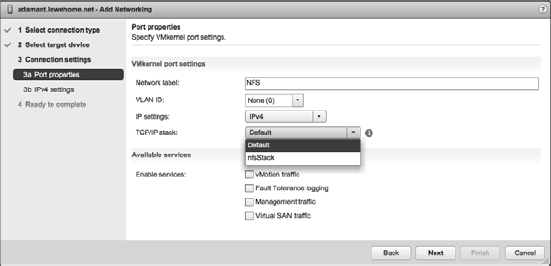

- Select the TCP/IP stack that this VMkernel port should use. Unless you have already created a custom TCP/IP stack, Default will be the only option listed here. We discuss IP stacks later in this chapter in the section titled “Configuring TCP/IP Stacks.”

- Select the various functions that will be enabled on this VMkernel port, and then click Next. For a VMkernel port that will be used only for iSCSI or NAS/NFS traffic, all the Enable Services check boxes should be deselected, as shown in Figure 5.16. For a VMkernel port that will act as an additional management interface, only Management Traffic should be selected.

FIGURE 5.16 VMkernel ports can carry IP-based storage traffic, vMotion traffic, Fault Tolerance logging traffic, management traffic, or Virtual SAN traffic.

- For IPv4 (applicable if you selected IPv4 or IPv4 And IPv6 for IP Settings in the previous step), you may elect to either obtain the configuration automatically (via DHCP) or supply a static configuration. If you opt to use a static configuration, ensure that the IP address is a valid IP address for the network to which the physical NIC is connected.

DEFAULT GATEWAY AND DNS SERVERS AREN'T EDITABLE

Note that the default gateway and DNS server addresses are controlled by the TCP/IP stack configuration and can't be changed here. To change these settings, you'll need to edit the TCP/IP stack settings, as described in the section titled “Configuring TCP/IP Stacks.”

- For IPv6 (applicable if you selected IPv6 or IPv4 And IPv6 for IP Settings earlier), you can choose to obtain configuration automatically via DHCPv6, obtain your configuration automatically via Router Advertisement, and/or assign one or more IPv6 addresses. Use the green plus symbol to add an IPv6 address that is appropriate for the network to which this VMkernel interface will be connected.

- Click Next to review the configuration summary, and then click Finish.

After you complete these steps, you can use the esxcli command—either from an instance of the vSphere Management Assistant or from a system with the vSphere CLI installed—to show the new VMkernel port and the new VMkernel NIC that was created:

esxcli --server=<vCenter hostname or IP> --vihost=<ESXi hostname or IP> --username=<vCenter admin user> network ip interface list

DIFFERENT COMMAND-LINE OPTIONS

vSphere 5.5 still provides the vicfg-* tools, such as vicfg-vswitch and vicfg-vmknic. However, most command-line functionality is being collapsed into esxcli moving forward, so it's a good idea to try to stick with esxcli wherever possible.

To help illustrate the different parts—the VMkernel port and the VMkernel NIC, or vmknic—that are created during this process, let's again walk through the steps for creating a VMkernel port using the vSphere Management Assistant.

Perform the following steps to create a VMkernel port on an existing vSwitch using the command line:

- Using PuTTY.exe (Windows) or a terminal window (Linux or Mac OS X), establish an SSH session to the vSphere Management Assistant.

- Enter the following command to add a port group named VMkernel to vSwitch0:

esxcli --server=<vCenter host name> --vihost=<ESXi host name> --username=<vCenter administrative user> network vswitch standard portgroup add --portgroup-name=VMkernel --vswitch-name=vSwitch0

- Use the esxcli command to list the port groups on vSwitch0. Note that the port group exists, but nothing has been connected to it (the Active Clients column shows 0).

esxcli --server=<vCenter host name> --vihost=<ESXi host name> --username=<vCenter administrative user> network vswitch standard portgroup list

- Enter the following command to create the VMkernel port and attach it to the port group created in step 2:

esxcli --server=<vCenter host name> --vihost=<ESXi host name> --username=<vCenter administrative user> network ip interface add --portgroup-name=VMkernel --interface-name=vmk4

- Use this command to assign an IP address and subnet mask to the VMkernel port created in the previous step:

esxcli --server=<vCenter host name> --vihost=<ESXi host name> --username=<vCenter administrative user> network ip interface ipv4 set --interface-name=vmk4 --ipv4=<IP address> --netmask=<subnet mask> --type=static

- Repeat the command from step 3 again, noting now how the Active Clients column has incremented to 1.

This indicates that a vmknic has been connected to a virtual port on the port group. Figure 5.17 shows the output of the esxcli command after completing step 5.

FIGURE 5.17 Using the CLI helps drive home the fact that the port group and the VMkernel port are separate objects.
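If you script this procedure, it can help to assemble the esxcli invocations in one place. The following Python sketch only builds and prints the command lines; it does not run anything against a host. The function names, the example host names, and the example IP address are hypothetical. The `network ip interface ipv4 set` invocation, with its `--ipv4`, `--netmask`, and `--type` options, is our assumption about how a static address would be assigned in the IP address step, so verify it against your vSphere CLI version before relying on it.

```python
import shlex
from typing import List


def esxcli_base(server: str, vihost: str, username: str) -> List[str]:
    """Connection options shared by every command in the procedure."""
    return ["esxcli", f"--server={server}", f"--vihost={vihost}",
            f"--username={username}"]


def vmkernel_port_commands(server: str, vihost: str, username: str,
                           vswitch: str, portgroup: str, vmk: str,
                           ip: str, netmask: str) -> List[List[str]]:
    """Return the argument lists for creating a VMkernel port on a vSwitch."""
    base = esxcli_base(server, vihost, username)
    return [
        # Create the port group on the existing vSwitch.
        base + ["network", "vswitch", "standard", "portgroup", "add",
                f"--portgroup-name={portgroup}", f"--vswitch-name={vswitch}"],
        # Create the VMkernel NIC (vmknic) and attach it to the port group.
        base + ["network", "ip", "interface", "add",
                f"--portgroup-name={portgroup}", f"--interface-name={vmk}"],
        # Assumed syntax: assign a static IPv4 address and subnet mask.
        base + ["network", "ip", "interface", "ipv4", "set",
                f"--interface-name={vmk}", f"--ipv4={ip}",
                f"--netmask={netmask}", "--type=static"],
        # List the port groups to confirm that Active Clients is now 1.
        base + ["network", "vswitch", "standard", "portgroup", "list"],
    ]


if __name__ == "__main__":
    # All values below are placeholders for illustration only.
    for cmd in vmkernel_port_commands("vcenter.example.com", "esxi01.example.com",
                                      "administrator", "vSwitch0", "VMkernel",
                                      "vmk4", "192.0.2.10", "255.255.255.0"):
        print(shlex.join(cmd))
```

Keeping the port group step and the vmknic step as separate entries mirrors the point made by the CLI walkthrough: the port group and the VMkernel NIC are two distinct objects.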

Aside from the default ports required for the management network, no VMkernel ports are created during the installation of ESXi, so you will need to create any nonmanagement VMkernel ports that your environment requires, using either the vSphere Web Client or the command line (through the vSphere CLI or the vSphere Management Assistant).

In addition to adding VMkernel ports, you might need to edit a VMkernel port, or even remove a VMkernel port. Both of these tasks can be done in the same place you added a VMkernel port: the Networking section of the Manage tab for an ESXi host.

To edit a VMkernel port, select the desired VMkernel port from the list and click the Edit Settings icon (it looks like a pencil). This will bring up the Edit Settings dialog box, where you can change the services for which this port is enabled, change the MTU, and modify the IPv4 and/or IPv6 settings. Of particular interest here is the Analyze Impact section, shown in Figure 5.18, which helps point out dependencies on the VMkernel port in order to prevent unwanted side effects that might result from modifying the VMkernel port's configuration.

To delete a VMkernel port, select the desired VMkernel port from the list and click the Remove Selected Virtual Network Adapter icon (it looks like a red X). In the resulting confirmation dialog box, you'll see the option to analyze the impact (same as with modifying a VMkernel port). Click OK to remove the VMkernel port.

Before we move on to discussing how to configure VM networking, let's look at one more area related to host networking. Next, we'll introduce a feature new to vSphere 5.5: multiple TCP/IP stacks.

FIGURE 5.18 The Analyze Impact section shows administrators dependencies on VMkernel ports.

Configuring TCP/IP Stacks

Prior to the release of vSphere 5.5, all VMkernel interfaces shared a single instance of a TCP/IP stack. As a result, they all shared the same routing table and the same DNS configuration. This created some interesting challenges in certain environments; for example, what if you needed a default gateway for your management network but you also needed a default gateway for your NFS traffic? The only workaround was to use a single default gateway and then populate the routing table with static routes. Clearly, this is not a very scalable solution.

With the release of vSphere 5.5, you can now create multiple TCP/IP stacks. Each stack has its own routing table and its own DNS configuration.
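To make the idea concrete, the following Python sketch models two stacks, each with its own default gateway and DNS servers. It illustrates the concept only and is not VMware code: the NetStack class, the interface-to-stack mapping, the stack names, and all addresses (taken from documentation ranges) are assumptions made up for the example.

```python
from dataclasses import dataclass, field


@dataclass
class NetStack:
    """Hypothetical model of one TCP/IP stack with its own gateway and DNS."""
    name: str
    default_gateway: str
    dns_servers: list = field(default_factory=list)


# Two independent stacks; names and addresses are illustrative only.
stacks = {
    "default": NetStack("default", "192.0.2.1", ["192.0.2.53"]),
    "nfsStack": NetStack("nfsStack", "198.51.100.1", ["198.51.100.53"]),
}

# Each VMkernel interface is bound to exactly one stack.
vmk_to_stack = {"vmk0": "default", "vmk4": "nfsStack"}


def gateway_for(vmk: str) -> str:
    """Return the default gateway used by traffic leaving this interface."""
    return stacks[vmk_to_stack[vmk]].default_gateway


print(gateway_for("vmk0"))  # gateway of the default stack
print(gateway_for("vmk4"))  # gateway of the hypothetical NFS stack
```

Because each interface resolves its gateway through its own stack, the management interface and the NFS interface no longer compete for a single default gateway.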

Let's take a look at how to create TCP/IP stacks. Once we have at least one additional TCP/IP stack created, we will show you how to assign a VMkernel interface to a specific TCP/IP stack.

CREATING A TCP/IP STACK

In this release, creating new TCP/IP stack instances can only be done from the command line using the esxcli command.

To create a new TCP/IP stack, use this command:

esxcli --server=<vCenter host name> --vihost=<ESXi host name> --username=<vCenter administrative user> network ip netstack add --netstack=<Name of new TCP/IP stack>

For example, if you wanted to create a separate TCP/IP stack for your NFS traffic, the command might look something like this:

esxcli --server=vcenter.v12nlab.net --vihost=esxi-01.v12nlab.net --username=root network ip netstack add --netstack=nfsStack

You can get a list of all the configured TCP/IP stacks with a very similar esxcli command:

esxcli --server=<vCenter host name> --vihost=<ESXi host name> --username=<vCenter administrative user> network ip netstack list

Once the new TCP/IP stack is created, you can, if you wish, continue to configure the stack using the esxcli command. However, you will probably find it easier to use the vSphere Web Client to do the actual configuration of the new TCP/IP stack, as we describe in the next section.

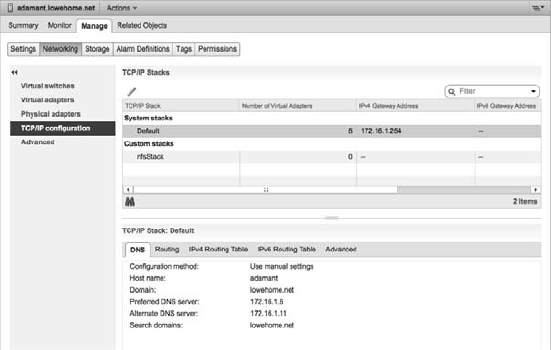

CONFIGURING TCP/IP STACK SETTINGS

You've actually seen references to the TCP/IP stacks already at least once (when creating a VMkernel interface), but the actual settings for the TCP/IP stacks are found in the same place where you create and configure other host networking settings: in the Networking section of the Manage tab for an ESXi host object, as shown in Figure 5.19.

FIGURE 5.19 TCP/IP stack settings are located with other host networking configuration options.

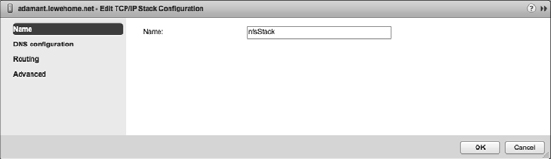

In Figure 5.19 you can see the new TCP/IP stack, named nfsStack, that we created in the previous section. To edit the settings for that stack, you'll simply select it from the list and click the Edit TCP/IP Stack Configuration icon (it looks like a pencil above the list of TCP/IP stacks). That brings up the Edit TCP/IP Stack Configuration dialog box, shown in Figure 5.20.

FIGURE 5.20 Each TCP/IP stack can have its own DNS configuration, routing information, and other advanced settings.

In the Edit TCP/IP Stack Configuration dialog box, make the changes you need to make to the name, DNS configuration, routing, or other advanced settings. Once you're finished, click OK.

One final task regarding TCP/IP stacks remains: assigning interfaces to a TCP/IP stack. Until you actually assign an interface—specifically referring to VMkernel interfaces here—to a TCP/IP stack you've created, the VMkernel interface will use the default system stack and won't be able to use any of the custom settings you've configured.

ASSIGNING PORTS TO A TCP/IP STACK

Unfortunately, you can assign VMkernel ports to a TCP/IP stack only at the time of creation. In other words, once a VMkernel port has been created, you can't change the TCP/IP stack to which it has been assigned. You must delete the VMkernel port and then re-create it, assigning it to the desired TCP/IP stack. We described how to create and delete VMkernel ports earlier, so we won't go through those tasks again here.

Step 12 of the procedure for creating a VMkernel port is where you have the option of selecting a specific TCP/IP stack to which to bind the VMkernel port. This is illustrated in Figure 5.21, which lists the system default stack as well as the custom nfsStack we created earlier.

FIGURE 5.21 VMkernel ports can be assigned to a TCP/IP stack only at the time of creation.

One very important thing to note: In this release, using custom TCP/IP stacks isn't supported for use with vMotion, Fault Tolerance logging, management traffic, or Virtual SAN traffic. When you select a custom TCP/IP stack, you'll see that the check boxes to enable these services automatically disable themselves. At this time, you'll only be able to use custom TCP/IP stacks for IP-based storage, like iSCSI and NFS.

It's now time to shift our focus from host networking to VM networking.

Configuring VM Networking

The second type of port group to discuss is the VM port group, which is responsible for all VM networking. The VM port group is quite different from a VMkernel port. With VMkernel networking, there is a one-to-one relationship with an interface: Each VMkernel NIC, or vmknic, requires a matching VMkernel port group on a vSwitch. In addition, these interfaces require IP addresses that are used for management or VMkernel network access.

A VM port group, on the other hand, does not have a one-to-one relationship, and it does not require an IP address. For a moment, forget about vSwitches and consider standard physical switches. When you install or add an unmanaged physical switch into your network environment, that physical switch does not require an IP address: You simply install the switch and plug in the appropriate uplinks that will connect it to the rest of the network.

A vSwitch created with a VM port group is really no different. A vSwitch with a VM port group acts just like an additional unmanaged physical switch. You need only plug in the appropriate uplinks—physical network adapters, in this case—that will connect that vSwitch to the rest of the network. As with an unmanaged physical switch, an IP address does not need to be configured for a VM port group to combine the ports of a vSwitch with those of a physical switch. Figure 5.22 shows the switch-to-switch connection between a vSwitch and a physical switch.

FIGURE 5.22 A vSwitch with a VM port group uses an associated physical network adapter to establish a switch-to-switch connection with a physical switch.

Perform the following steps to create a vSwitch with a VM port group using the vSphere Web Client:

- Use the vSphere Web Client to establish a connection to a vCenter Server instance.

- From the vSphere Web Client home page, click vCenter from the Inventories section, then select Hosts from the inventory lists on the left.

- Select the ESXi host on which you'd like to add a vSwitch, then click Manage, and finally, select the Networking section.

- Click the Add Host Networking icon (a small globe with a plus sign) to start the Add Networking wizard.

- Select the Virtual Machine Port Group For A Standard Switch radio button, and click Next.

- Because you are creating a new vSwitch, select the New Standard Switch radio button. Click Next.

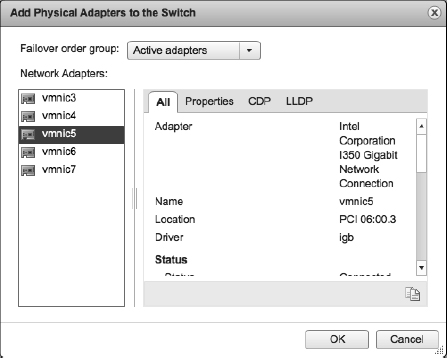

- Click the green plus icon to add physical network adapters to the new vSwitch you are creating. From the Add Physical Adapters To The Switch dialog box, select the NIC or NICs connected to the switch that can carry the appropriate traffic for your VMs.

- Click OK when you're done selecting physical network adapters. This returns you to the Create A Standard Switch screen, where you can click Next to continue.

- Type the name of the VM port group in the Network Label text box.

- Specify a VLAN ID, if necessary, and click Next.

- Click Next to review the virtual switch configuration, and then click Finish.

If you are a command-line junkie, you can create a VM port group from the vSphere CLI as well. You can probably guess the commands that are involved from the previous examples, but we'll walk you through the process anyway.

Perform the following steps to create a vSwitch with a VM port group using the command line:

- Using PuTTY.exe (Windows) or a terminal window (Linux or Mac OS X), establish an SSH session to a running instance of the vSphere Management Assistant.

- Enter the following command to add a virtual switch named vSwitch1:

esxcli --server=<vCenter host name> --vihost=<ESXi host name> --username=<vCenter administrative user> network vswitch standard add --vswitch-name=vSwitch1

- Enter the following command to bind the physical NIC vmnic1 to vSwitch1:

esxcli --server=<vCenter host name> --vihost=<ESXi host name> --username=<vCenter administrative user> network vswitch standard uplink add --vswitch-name=vSwitch1 --uplink-name=vmnic1

By binding a physical NIC to the vSwitch, you provide network connectivity to the rest of the network for VMs connected to this vSwitch. Again, remember that you can assign any given physical NIC to only one vSwitch at a time (but a vSwitch may have multiple physical NICs bound at the same time).

- Enter the following command to create a VM port group named ProductionLAN on vSwitch1:

esxcli --server=<vCenter host name> --vihost=<ESXi host name> --username=<vCenter administrative user> network vswitch standard portgroup add --vswitch-name=vSwitch1 --portgroup-name=ProductionLAN
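If you expect to repeat these steps on several hosts, you might assemble the command lines programmatically. The Python sketch below only builds the three command lines from the preceding steps as argument lists; it does not run them. The helper names are hypothetical, and the server, host, and user values are the sample values used earlier in this chapter.

```python
def esxcli_base(server: str, vihost: str, username: str) -> list:
    """Connection options shared by every command in the steps above."""
    return [
        "esxcli",
        f"--server={server}",
        f"--vihost={vihost}",
        f"--username={username}",
    ]


def vswitch_with_port_group(base: list, vswitch: str, uplink: str,
                            port_group: str) -> list:
    """Return the three commands in order: add switch, bind uplink, add port group."""
    std = base + ["network", "vswitch", "standard"]
    return [
        std + ["add", f"--vswitch-name={vswitch}"],
        std + ["uplink", "add", f"--vswitch-name={vswitch}",
               f"--uplink-name={uplink}"],
        std + ["portgroup", "add", f"--vswitch-name={vswitch}",
               f"--portgroup-name={port_group}"],
    ]


base = esxcli_base("vcenter.v12nlab.net", "esxi-01.v12nlab.net", "root")
commands = vswitch_with_port_group(base, "vSwitch1", "vmnic1", "ProductionLAN")
for command in commands:
    print(" ".join(command))
```

On a system where the vSphere CLI is installed, each list could be handed to subprocess.run; keeping the commands as lists avoids shell quoting problems with names that contain spaces.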

Of the different connection types—VMkernel ports and VM port groups—vSphere administrators will spend most of their time creating, modifying, managing, and removing VM port groups.

PORTS AND PORT GROUPS ON A VIRTUAL SWITCH

A vSwitch can consist of multiple connection types, or each connection type can be created in its own vSwitch.

Configuring VLANs

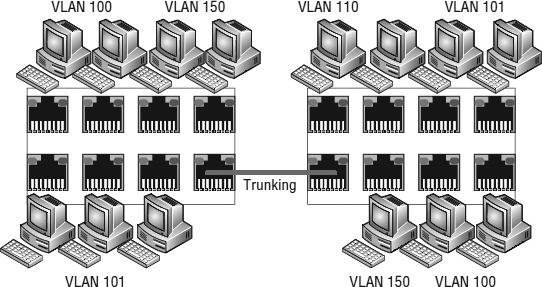

Several times so far we've referenced the use of the VLAN ID when configuring a VMkernel port and a VM port group. As defined previously in this chapter, a virtual LAN (VLAN) is a logical LAN that provides efficient segmentation, security, and broadcast control while allowing traffic to share the same physical LAN segments or same physical switches. Figure 5.23 shows a typical VLAN configuration across physical switches.

FIGURE 5.23 Virtual LANs provide secure traffic segmentation without the cost of additional hardware.

VLANs utilize the IEEE 802.1Q standard for tagging, or marking, traffic as belonging to a particular VLAN. The VLAN tag, also known as the VLAN ID, is a numeric value between 1 and 4094, and it uniquely identifies that VLAN across the network. Physical switches such as the ones depicted in Figure 5.23 must be configured with ports to trunk the VLANs across the switches. These ports are known as trunk (or trunking) ports. Ports not configured to trunk VLANs are known as access ports and can carry traffic only for a single VLAN at a time.

USING VLAN ID 4095

Normally the VLAN ID will range from 1 to 4094. In a vSphere environment, however, a VLAN ID of 4095 is also valid. Using this VLAN ID with ESXi causes the VLAN tagging information to be passed through the vSwitch all the way up to the guest OS. This is called virtual guest tagging (VGT) and is useful only for guest OSes that support and understand VLAN tags.
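The ranges described here can be summarized in a few lines of Python. This is a minimal sketch: the function name is hypothetical, and treating a value of 0 as "no VLAN" is an assumption that goes beyond what this sidebar states.

```python
def describe_vlan_id(vlan_id: int) -> str:
    """Classify a port group VLAN ID according to the ranges in the text."""
    if vlan_id == 0:
        # Assumption beyond the text: 0 means the port group uses no VLAN.
        return "no VLAN"
    if 1 <= vlan_id <= 4094:
        return "single VLAN handled by the vSwitch"
    if vlan_id == 4095:
        return "virtual guest tagging (VGT)"
    raise ValueError(f"VLAN ID out of range: {vlan_id}")


for candidate in (30, 4094, 4095):
    print(candidate, "->", describe_vlan_id(candidate))
```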

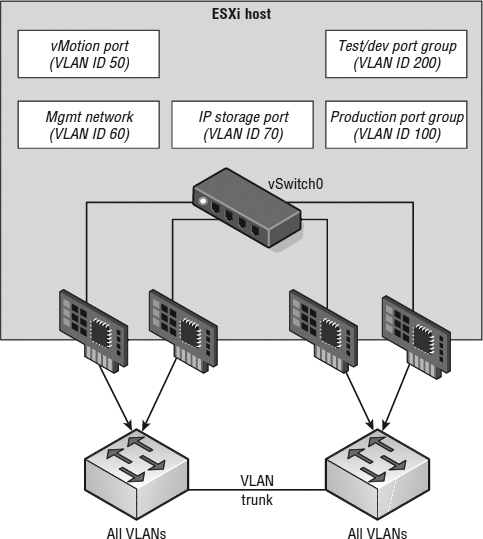

VLANs are an important part of ESXi networking because of the impact they have on the number of vSwitches and uplinks that are required. Consider this configuration:

- The management network needs access to the network segment carrying management traffic.

- Other VMkernel ports, depending upon their purpose, may need access to an isolated vMotion segment or the network segment carrying iSCSI and NAS/NFS traffic.

- VM port groups need access to whatever network segments are applicable for the VMs running on the ESXi hosts.

Without VLANs, this configuration would require three or more separate vSwitches, each bound to a different physical adapter, and each physical adapter would need to be physically connected to the correct network segment, as illustrated in Figure 5.24.

FIGURE 5.24 Supporting multiple networks without VLANs can increase the number of vSwitches, uplinks, and cabling that is required.

Add in an IP-based storage network and a few more VM networks that need to be supported and the number of required vSwitches and uplinks quickly grows. And this doesn't even take uplink redundancy, for example NIC teaming, into account!

VLANs are the answer to this dilemma. Figure 5.25 shows the same network as in Figure 5.24, but with VLANs this time.

While the reduction from Figure 5.24 to Figure 5.25 is only a single vSwitch and a single uplink, you can easily add more VM networks to the configuration in Figure 5.25 by simply adding another port group with another VLAN ID. Blade servers provide an excellent example of when VLANs offer tremendous benefit. Because of the small form factor of the blade casing, blade servers have historically offered limited expansion slots for physical network adapters. VLANs allow these blade servers to support more networks than they would be able to otherwise.

NO VLAN NEEDED

Virtual switches in the VMkernel do not need VLANs if an ESXi host has enough physical network adapters to connect to each of the different network segments. However, VLANs provide added flexibility in adapting to future network changes, so the use of VLANs where possible is recommended.

As shown in Figure 5.25, VLANs are handled by configuring different port groups within a vSwitch. The relationship between VLANs and port groups is not a one-to-one relationship; a port group can be associated with only one VLAN at a time, but multiple port groups can be associated with a single VLAN. Later in this chapter when we discuss security settings (in the section “Configuring Virtual Switch Security”), you'll see some examples of when you might have multiple port groups associated with a single VLAN.

FIGURE 5.25 VLANs can reduce the number of vSwitches, uplinks, and cabling required.

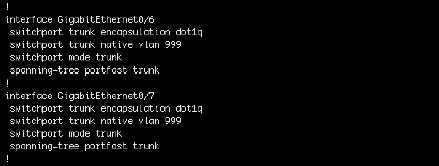

To make VLANs work properly with a port group, the uplinks for that vSwitch must be connected to a physical switch port configured as a trunk port. A trunk port understands how to pass traffic from multiple VLANs simultaneously while also preserving the VLAN IDs on the traffic. Figure 5.26 shows a snippet of configuration from a Cisco Catalyst 3560G switch for a couple of ports configured as trunk ports.

FIGURE 5.26 The physical switch ports must be configured as trunk ports in order to pass the VLAN information to the ESXi hosts for the port groups to use.

The configuration for switches from other manufacturers will vary, of course, so be sure to check with your particular switch manufacturer for specific details on how to configure a trunk port.

THE NATIVE VLAN

In Figure 5.26, you might notice the switchport trunk native vlan 999 command. The default native VLAN (also known as the untagged VLAN) on most switches is VLAN ID 1. If you need to pass traffic on VLAN 1 to the ESXi hosts, you should designate another VLAN as the native VLAN using this command (or its equivalent). We recommend creating a dummy VLAN, like 999, and setting that as the native VLAN. This ensures that all VLANs will be tagged with the VLAN ID as they pass into the ESXi hosts. Keep in mind this might affect behaviors like PXE booting, which generally requires untagged traffic.

When the physical switch ports are correctly configured as trunk ports, the physical switch passes the VLAN tags up to the ESXi server, where the vSwitch tries to direct the traffic to a port group with that VLAN ID assigned. If there is no port group configured with that VLAN ID, the traffic is discarded.
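The matching behavior just described can be sketched in Python as a simple lookup. This models the idea only, not the VMkernel implementation; the port group names and VLAN IDs are hypothetical.

```python
# Hypothetical port groups on one vSwitch, mapped to their VLAN IDs.
# Two port groups share VLAN 30, since the relationship is not one-to-one.
port_groups = {
    "VLAN030-Production": 30,
    "VLAN030-Production-Monitoring": 30,
    "VLAN040-Test": 40,
}


def port_groups_for(vlan_tag: int, groups: dict) -> list:
    """Return the port groups that accept traffic tagged with vlan_tag.

    An empty list means no port group matches and the traffic is discarded.
    """
    return [name for name, vlan in groups.items() if vlan == vlan_tag]


print(port_groups_for(30, port_groups))  # both VLAN 30 port groups
print(port_groups_for(50, port_groups))  # empty list: traffic is discarded
```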

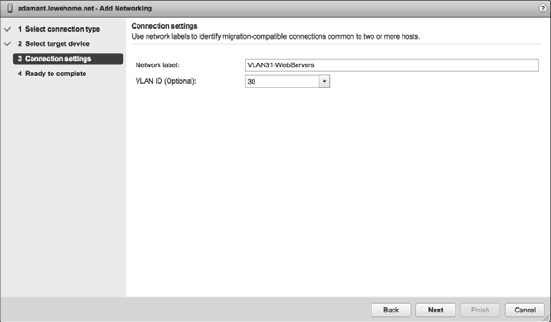

Perform the following steps to configure a VM port group using VLAN ID 30:

- Use the vSphere Web Client to establish a connection to a vCenter Server instance.

- Navigate to the ESXi host to which you want to add the VM port group, click the Manage tab, and then select Networking.

- Make sure Virtual Switches is selected on the left side, then select the vSwitch where the new port group should be created.

- Click the Add Host Networking icon (looks like a globe with a plus sign in the corner) to start the Add Networking wizard.

- Select the Virtual Machine Port Group For A Standard Switch radio button, then click Next.

- Make sure the Select An Existing Standard Switch radio button is selected and, if necessary, use the Browse button to choose which virtual switch will host the new VM port group. Click Next.

- Type the name of the VM port group in the Network Label text box.

Embedding the VLAN ID and a brief description into the name of the port group is strongly recommended, so typing something like VLANXXX-NetworkDescription would be appropriate, where XXX represents the VLAN ID.

- Type 30 in the VLAN ID (Optional) text box, as shown in Figure 5.27.

FIGURE 5.27 You must specify the correct VLAN ID in order for a port group to receive traffic intended for a particular VLAN.

You will want to substitute a value that is correct for your network here.

- Click Next to review the vSwitch configuration, and then click Finish.

As you've probably gathered by now, you can also use the esxcli command from the vSphere CLI to create or modify the VLAN settings for ports or port groups. We won't go through the steps here because the commands are extremely similar to what we've shown you already.

Although VLANs reduce the cost of constructing multiple logical subnets, keep in mind that they do not address traffic constraints. VLANs separate network segments logically, but all the traffic still runs on the same physical network underneath. For bandwidth-intensive network operations, the disadvantage of the shared physical network might outweigh the scalability and cost savings of a VLAN.

CONTROLLING THE VLANS PASSED ACROSS A VLAN TRUNK

You might see the switchport trunk allowed vlan command in some Cisco switch configurations as well. This command controls which VLANs are passed across the VLAN trunk to the device at the other end of the link (in this case, an ESXi host). Make sure that every VLAN defined on the vSwitches is also included in the switchport trunk allowed vlan command; traffic for any VLAN left out of the command will not reach the ESXi host.
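One quick way to catch this kind of mismatch is to compare the two lists. The Python sketch below assumes you have already collected the VLAN IDs used by your port groups and the IDs allowed on the trunk; the function name and the sample values are hypothetical.

```python
def vlans_missing_from_trunk(port_group_vlans, allowed_vlans) -> list:
    """Return the VLAN IDs used by port groups but not allowed on the trunk."""
    return sorted(set(port_group_vlans) - set(allowed_vlans))


# Hypothetical values: port groups use VLANs 30, 40, and 50,
# but the trunk allows only 30, 40, and the dummy native VLAN 999.
missing = vlans_missing_from_trunk([30, 40, 50], [30, 40, 999])
print(missing)
```

Any ID reported here identifies a port group that would be cut off from the physical network until the trunk configuration is updated.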

Configuring NIC Teaming

We know that in order for a vSwitch and its associated ports or port groups to communicate with other ESXi hosts or with physical systems, the vSwitch must have at least one uplink. An uplink is a physical network adapter that is bound to the vSwitch and connected to a physical network switch. With the uplink connected to the physical network, there is connectivity for the VMkernel and the VMs connected to that vSwitch. But what happens when that physical network adapter fails, when the cable connecting that uplink to the physical network fails, or the upstream physical switch to which that uplink is connected fails? With a single uplink, network connectivity to the entire vSwitch and all of its ports or port groups is lost. This is where NIC teaming comes in.

NIC teaming involves connecting multiple physical network adapters to a single vSwitch. NIC teaming provides redundancy and load balancing of network communications to the VMkernel and VMs.

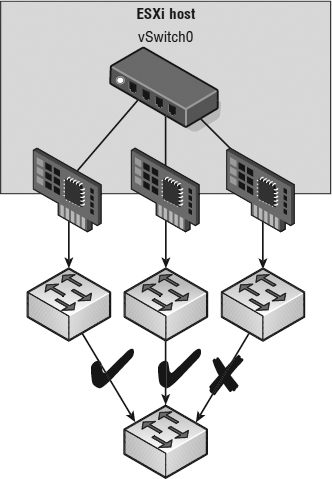

Figure 5.28 illustrates NIC teaming conceptually. Both of the vSwitches have two uplinks, and each of the uplinks connects to a different physical switch. Note that NIC teaming supports all the different connection types, so it can be used with ESXi management networking, VMkernel networking, and networking for VMs.

FIGURE 5.28 Virtual switches with multiple uplinks offer redundancy and load balancing.

Figure 5.29 shows what NIC teaming looks like from within the vSphere Web Client. In this example, the vSwitch is configured with an association to multiple physical network adapters (uplinks). As mentioned previously, the ESXi host can have a maximum of 32 uplinks; these uplinks can be spread across multiple vSwitches or all tossed into a NIC team on one vSwitch. Remember that you can connect a physical NIC to only one vSwitch at a time.

FIGURE 5.29 The vSphere Web Client shows when multiple physical network adapters are associated to a vSwitch using NIC teaming.

Building a functional NIC team requires that all uplinks be connected to physical switches in the same broadcast domain. If VLANs are used, then all the switches should be configured for VLAN trunking, and the appropriate subset of VLANs must be allowed across the VLAN trunk. In a Cisco switch, this is typically controlled with the switchport trunk allowed vlan statement.

In Figure 5.30, the NIC team for vSwitch0 will work, because both of the physical switches share VLAN 100 and are therefore in the same broadcast domain. The NIC team for vSwitch1, however, will not work because the physical network adapters do not share a common broadcast domain.

FIGURE 5.30 All the physical network adapters in a NIC team must belong to the same layer 2 broadcast domain.
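The requirement can be expressed as a simple set intersection. In this Python sketch, each uplink is described by the set of VLANs available on the physical switch port it connects to. Treating "shares at least one VLAN" as "same broadcast domain" is a simplification, and the VLAN IDs shown for vSwitch1 are hypothetical.

```python
def shares_broadcast_domain(uplink_vlans: dict) -> bool:
    """Return True if at least one VLAN is reachable through every uplink."""
    vlan_sets = [set(vlans) for vlans in uplink_vlans.values()]
    return bool(vlan_sets) and bool(set.intersection(*vlan_sets))


# vSwitch0: both uplinks reach VLAN 100, so the team works.
vswitch0 = {"vmnic0": {100}, "vmnic1": {100}}
# vSwitch1: the uplinks have no VLAN in common (hypothetical IDs).
vswitch1 = {"vmnic2": {200}, "vmnic3": {300}}

print(shares_broadcast_domain(vswitch0))
print(shares_broadcast_domain(vswitch1))
```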

NIC teams should be built on physical network adapters located on separate bus architectures. For example, if an ESXi host contains two onboard network adapters and a PCI Express–based quad-port network adapter, a NIC team should be constructed using one onboard network adapter and one network adapter on the PCI bus. This design eliminates a single point of failure.

Perform the following steps to create a NIC team with an existing vSwitch using the vSphere Web Client:

- Use the vSphere Web Client to establish a connection to a vCenter Server instance.

- Navigate to the Networking section of the Manage tab for the ESXi host where you want to create the NIC team. We prefer to use the inventory lists rather than the hierarchy tree, but either method is fine.

- Make sure Virtual Switches is selected on the left, then select the virtual switch that will be assigned a NIC team and click the Manage The Physical Adapters Connected To The Selected Virtual Switch icon (it looks like a NIC with a wrench).

- In the Manage Physical Network Adapters dialog box, click the green Add Adapters icon.

- From the Add Physical Adapters To The Switch dialog box, select the appropriate adapter (or adapters) from the list, as shown in Figure 5.31.

FIGURE 5.31 Create a NIC team by adding network adapters that belong to the same layer 2 broadcast domain as the original adapter.

PUTTING NEW ADAPTERS INTO A DIFFERENT FAILOVER GROUP

The Add Physical Adapters To The Switch dialog box shown in Figure 5.31 allows you to add adapters not only to the list of active adapters but also to the list of standby or unused adapters. Simply change the desired group using the Failover Order Group drop-down list.

- Click OK to return to the Manage Physical Network Adapters dialog box.

- Click OK to complete the process and return to the Networking section of the Manage tab for the selected ESXi host. Note that it might take a moment or two for the display to update with the new physical adapter.

After a NIC team is established for a vSwitch, ESXi can then perform load balancing for that vSwitch. The load-balancing feature of NIC teaming does not function like the load-balancing feature of advanced routing protocols. Load balancing across a NIC team is not a product of identifying the amount of traffic transmitted through a network adapter and shifting traffic to equalize data flow through all available adapters. The load-balancing algorithm for NIC teams in a vSwitch is a balance of the number of connections—not the amount of traffic. NIC teams on a vSwitch can be configured with one of the following four load-balancing policies:

- vSwitch port-based load balancing (default)

- Source MAC-based load balancing

- IP hash-based load balancing

- Explicit failover order

The last option, explicit failover order, isn't really a “load-balancing” policy; instead, it uses the administrator-assigned failover order whereby the highest order uplink from the list of active adapters that passes failover detection criteria is used. More information on the failover order is provided in the section “Configuring Failover Detection and Failover Policy.” Also, note that the list we've supplied here applies only to vSphere Standard Switches; vSphere Distributed Switches, which we cover later in this chapter in the section “Working with vSphere Distributed Switches,” have additional options for load balancing and failover.
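As a rough illustration of explicit failover order, the Python sketch below walks an administrator-defined list and returns the first uplink that currently passes failover detection. Falling back to the standby list once every active adapter has failed is an assumption of this sketch, and the function and variable names are hypothetical.

```python
def select_uplink(active_order, standby_order, link_ok):
    """Return the first healthy uplink, honoring the configured order.

    active_order and standby_order are lists of uplink names, highest
    priority first. link_ok maps an uplink name to True when the uplink
    passes failover detection.
    """
    for uplink in list(active_order) + list(standby_order):
        if link_ok.get(uplink, False):
            return uplink
    return None  # no usable uplink remains


active = ["vmnic0", "vmnic1"]
standby = ["vmnic2"]

print(select_uplink(active, standby, {"vmnic0": True, "vmnic1": True, "vmnic2": True}))
print(select_uplink(active, standby, {"vmnic0": False, "vmnic1": True, "vmnic2": True}))
```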

OUTBOUND LOAD BALANCING

The load-balancing feature of NIC teams on a vSwitch applies only to the outbound traffic.

REVIEWING VIRTUAL SWITCH PORT-BASED LOAD BALANCING

The vSwitch port-based load-balancing policy that is used by default uses an algorithm that ties (or pins) each virtual switch port to a specific uplink associated with the vSwitch. The algorithm attempts to maintain an equal number of port-to-uplink assignments across all uplinks to achieve load balancing. As shown in Figure 5.32, this policy setting ensures that traffic from a specific virtual network adapter connected to a virtual switch port will consistently use the same physical network adapter. In the event that one of the uplinks fails, the traffic from the failed uplink will fail over to another physical network adapter.

FIGURE 5.32 The vSwitch port-based load-balancing policy assigns each virtual switch port to a specific uplink. Failover to another uplink occurs when one of the physical network adapters experiences failure.

You can see how this policy does not provide dynamic load balancing but does provide redundancy. Because the port for a VM does not change, each VM is tied to a physical network adapter until failover occurs regardless of the amount of network traffic. Looking at Figure 5.32, imagine that the Linux VM and the Windows VM on the far left are the two most network-intensive VMs. In this case, the vSwitch port-based policy has assigned both ports for these VMs to the same physical network adapter. In this case, one physical network adapter could be much more heavily utilized than other network adapters in the NIC team.

The physical switch passing the traffic learns the port association and therefore sends replies back through the same physical network adapter from which the request initiated. The vSwitch port-based policy is best used when you have more virtual network adapters than physical network adapters. When there are fewer virtual network adapters than physical network adapters, some physical adapters will not be used. For example, if five VMs are connected to a vSwitch with six uplinks, the five vSwitch ports will be pinned to five of the uplinks, leaving one uplink with no traffic to process.
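The pinning behavior can be sketched in a few lines of Python. This is a simplified model and not the actual VMkernel algorithm: it assumes each new port is pinned to whichever uplink currently has the fewest ports, and the port and uplink names are hypothetical.

```python
def pin_ports(ports, uplinks) -> dict:
    """Pin each virtual switch port to the uplink with the fewest ports so far."""
    counts = {uplink: 0 for uplink in uplinks}
    pinning = {}
    for port in ports:
        # min() returns the first uplink with the lowest count.
        target = min(uplinks, key=lambda uplink: counts[uplink])
        pinning[port] = target
        counts[target] += 1
    return pinning


# Five VM ports on a vSwitch with six uplinks.
ports = [f"port{i}" for i in range(1, 6)]
uplinks = [f"vmnic{i}" for i in range(6)]

pinning = pin_ports(ports, uplinks)
unused = [uplink for uplink in uplinks if uplink not in pinning.values()]
print(pinning)
print(unused)
```

Note that the assignment depends only on the number of ports per uplink. Nothing in it looks at how much traffic each port generates, which is why two busy VMs can end up sharing one physical adapter.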

REVIEWING SOURCE MAC-BASED LOAD BALANCING

The second load-balancing policy available for a NIC team is the source MAC-based policy, shown in Figure 5.33. This policy is susceptible to the same pitfalls as the vSwitch port-based policy simply because the static nature of the source MAC address is the same as the static nature of a vSwitch port assignment. The source MAC-based policy is also best used when you have more virtual network adapters than physical network adapters. In addition, VMs still cannot use multiple physical adapters unless configured with multiple virtual network adapters. Multiple virtual network adapters inside the guest OS of a VM will provide multiple source MAC addresses and therefore allow the VM to use multiple physical network adapters.

FIGURE 5.33 The source MAC-based load-balancing policy, as the name suggests, ties a virtual network adapter to a physical network adapter based on the MAC address.

VIRTUAL SWITCH TO PHYSICAL SWITCH

To eliminate a single point of failure, you can connect the physical network adapters in NIC teams set to use the vSwitch port-based or source MAC-based load-balancing policies to different physical switches; however, the physical switches must belong to the same layer 2 broadcast domain. Link aggregation using 802.3ad teaming is not supported with either of these load-balancing policies.

REVIEWING IP HASH-BASED LOAD BALANCING

The third load-balancing policy available for NIC teams is the IP hash-based policy, also called the out-IP policy. This policy, shown in Figure 5.34, addresses the limitation of the other two policies. The IP hash-based policy uses the source and destination IP addresses to calculate a hash. The hash determines the physical network adapter to use for communication. Different combinations of source and destination IP addresses will, quite naturally, produce different hashes. Based on the hash, then, this algorithm could allow a single VM to communicate over different physical network adapters when communicating with different destinations, assuming that the calculated hashes select a different physical NIC.

FIGURE 5.34 The IP hash-based policy is a more scalable load-balancing policy that allows VMs to use more than one physical network adapter when communicating with multiple destination hosts.

BALANCING FOR LARGE DATA TRANSFERS

Although the IP hash-based load-balancing policy can more evenly spread the transfer traffic for a single VM, it does not provide a benefit for large data transfers occurring between the same source and destination systems. Because the source-destination hash will be the same for the duration of the data load, it will flow through only a single physical network adapter.
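A small Python sketch shows why this happens. It assumes a simplified form of the hash, in which the source and destination IPv4 addresses are XORed and the result is taken modulo the number of uplinks; treat that formula as an illustrative assumption rather than the exact VMkernel implementation. The addresses and uplink names are examples only.

```python
import ipaddress


def ip_hash_uplink(src: str, dst: str, uplinks: list) -> str:
    """Select an uplink from the XOR of source and destination IPv4 addresses."""
    xor = int(ipaddress.IPv4Address(src)) ^ int(ipaddress.IPv4Address(dst))
    return uplinks[xor % len(uplinks)]


uplinks = ["vmnic0", "vmnic1"]

# Same VM, two different destinations: the hash can select different uplinks.
print(ip_hash_uplink("10.0.0.10", "10.0.0.20", uplinks))
print(ip_hash_uplink("10.0.0.10", "10.0.0.21", uplinks))

# Same source and destination: the result never changes, so one large
# transfer between these two systems stays on a single uplink.
print(ip_hash_uplink("10.0.0.10", "10.0.0.20", uplinks))
```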

Unless the physical hardware supports it, a vSwitch with the NIC teaming load-balancing policy set to use the IP-based hash must have all physical network adapters connected to the same physical switch. Some newer switches support link aggregation across physical switches, but otherwise all the physical network adapters will need to connect to the same switch. In addition, the switch must be configured for link aggregation. ESXi configured to use a vSphere Standard Switch supports standard 802.3ad teaming in static (manual) mode—sometimes referred to as EtherChannel in Cisco networking environments—but does not support the Link Aggregation Control Protocol (LACP) or Port Aggregation Protocol (PAgP) commonly found on switch devices. Link aggregation will increase overall aggregate throughput by potentially combining the bandwidth of multiple physical network adapters for use by a single virtual network adapter of a VM.

Another consideration when using the IP hash-based load-balancing policy is that all physical NICs must be set to active, rather than some configured as active and some as standby. This is because of the way IP hash-based load balancing works between the virtual switch and the physical switch.

Figure 5.35 shows a snippet of the configuration of a Cisco switch configured for link aggregation. Keep in mind that other switch manufacturers will have their own ways of configuring link aggregation, so refer to your specific vendor's documentation.

FIGURE 5.35 The physical switches must be configured to support the IP hash-based load-balancing policy.

Perform the following steps to alter the NIC teaming load-balancing policy of a vSwitch:

- Use the vSphere Web Client to establish a connection to a vCenter Server instance.

- Using your method of choice, navigate to the specific ESXi host that has the vSwitch whose NIC teaming configuration you wish to modify.

- With an ESXi host selected, go to the Manage tab, select Networking, and then make sure that Virtual Switches is highlighted.

- Select the name of the virtual switch from the list of virtual switches, and then click the Edit icon (it looks like a pencil).

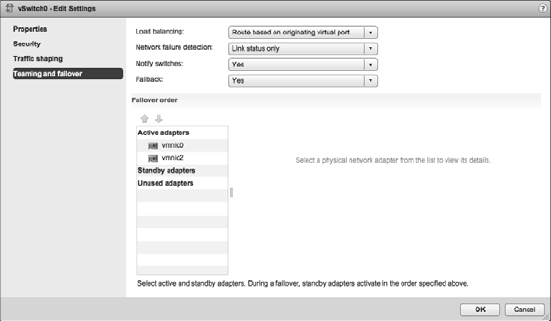

- In the Edit Settings dialog box, select Teaming And Failover, and then select the desired load-balancing strategy from the Load Balancing drop-down list, as shown in Figure 5.36.

- Click OK to save the changes.

Now that we've explained the load-balancing policies—and before we explain explicit failover order—let's take a deeper look at the failover and failback of uplinks in a NIC team. There are two parts to consider: failover detection and failover policy. We'll cover both of these in the next section.

FIGURE 5.36 Select the load-balancing policy for a vSwitch in the Teaming And Failover section.

CONFIGURING FAILOVER DETECTION AND FAILOVER POLICY

Failover detection with NIC teaming can be configured to use either a link status method or a beacon-probing method.

The link status failover-detection method works just as the name suggests: the link status of the physical network adapter identifies the failure of an uplink. In this case, failure is identified for events like removed cables or power failures on a physical switch. The downside of link status failover detection is its inability to identify misconfigurations or pulled cables that connect the switch to other networking devices (for example, a cable connecting one switch to an upstream switch).

OTHER WAYS OF DETECTING UPSTREAM FAILURES

Some network switch manufacturers have also added features into their network switches that assist in detecting upstream network failures. In the Cisco product line, for example, there is a feature known as link state tracking that enables the switch to detect when an upstream port has gone down and react accordingly. This feature can reduce or even eliminate the need for beacon probing.

The beacon-probing failover-detection setting, which includes link status as well, sends Ethernet broadcast frames across all physical network adapters in the NIC team. These broadcast frames allow the vSwitch to detect upstream network connection failures and will force failover when Spanning Tree Protocol blocks ports, when ports are configured with the wrong VLAN, or when a switch-to-switch connection has failed. When a beacon is not returned on a physical network adapter, the vSwitch triggers the failover notice and reroutes the traffic from the failed network adapter through another available network adapter based on the failover policy.

Consider a vSwitch with a NIC team consisting of three physical network adapters, where each adapter is connected to a different physical switch, and each of those switches is connected to a single common physical switch, which is in turn connected to an upstream switch, as shown in Figure 5.37. When the NIC team is set to the beacon-probing failover-detection method, a beacon will be sent out over all three uplinks.

FIGURE 5.37 The beacon-probing failover-detection policy sends beacons out across the physical network adapters of a NIC team to identify upstream network failures or switch misconfigurations.

After a failure is detected, either via link status or beacon probing, a failover will occur. Traffic from any VMs or VMkernel ports is rerouted to another member of the NIC team. Exactly which member that might be, though, depends primarily on the configured failover order.

Figure 5.38 shows the failover order configuration for a vSwitch with two adapters in a NIC team. In this configuration, both adapters are configured as active adapters, and either adapter or both adapters may be used at any given time to handle traffic for this vSwitch and all its associated ports or port groups.

Now look at Figure 5.39. This figure shows a vSwitch with three physical network adapters in a NIC team. In this configuration, one of the adapters is configured as a standby adapter. Any adapters listed as standby adapters will not be used until a failure occurs on one of the active adapters, at which time the standby adapters activate in the order listed.

It should go without saying, but adapters that are listed in the Unused Adapters section will not be used in the event of a failure.

Now take a quick look back at Figure 5.36. You'll see an option there labeled Use Explicit Failover Order. This is the explicit failover order policy that we mentioned toward the beginning of the section “Configuring NIC Teaming.” If you select that option instead of one of the other load-balancing options, then traffic will move to the next available uplink in the list of active adapters. If no active adapters are available, then traffic will move down the list to the standby adapters. Just as the name of the option implies, ESXi will use the order of the adapters in the failover order to determine how traffic will be placed on the physical network adapters. Because this option does not perform any sort of load balancing whatsoever, it's generally not recommended and one of the other options is used instead.
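The selection logic described above can be sketched in a few lines of Python. This is only an illustration of the ordering rule, not ESXi code; the function name `select_uplink` and the `vmnic` names are hypothetical.

```python
from typing import Optional, Sequence, Set


def select_uplink(active: Sequence[str],
                  standby: Sequence[str],
                  failed: Set[str]) -> Optional[str]:
    """Pick the adapter that carries traffic under explicit failover order.

    Walk the active list in order, then the standby list in order, and
    return the first adapter that has not failed. Unused adapters are
    never passed in, so they can never be chosen.
    """
    for nic in list(active) + list(standby):
        if nic not in failed:
            return nic
    return None  # every active and standby adapter is down


if __name__ == "__main__":
    active = ["vmnic0", "vmnic1"]   # hypothetical adapter names
    standby = ["vmnic2"]
    print(select_uplink(active, standby, failed=set()))
    print(select_uplink(active, standby, failed={"vmnic0", "vmnic1"}))
```

Note that only one adapter is ever returned, which is why this policy provides ordering but no load balancing.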

FIGURE 5.38 The failover order helps determine how adapters in a NIC team are used when a failover occurs.

FIGURE 5.39 Standby adapters automatically activate when an active adapter fails.

The Failback option controls how ESXi will handle a failed network adapter when it recovers from failure. The default setting, Yes, as shown in Figure 5.38 and Figure 5.39, indicates that the adapter will be returned to active duty immediately upon recovery, and it will replace any standby adapter that may have taken its place during the failure. Setting Failback to No means that the recovered adapter remains inactive until another adapter fails, triggering the replacement of the newly failed adapter.
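As a rough illustration of the two Failback settings, the following Python sketch models which adapter carries traffic once a failed adapter recovers. It is a simplified model of the behavior described above, not ESXi code; `uplink_after_recovery` and the adapter names are hypothetical.

```python
from typing import List


def uplink_after_recovery(current: str,
                          recovered: str,
                          failover_order: List[str],
                          failback: bool) -> str:
    """Return the adapter that carries traffic after `recovered` comes back.

    `failover_order` lists adapters from highest to lowest priority
    (active adapters first, then standby adapters).
    """
    if not failback:
        # Failback = No: the recovered adapter stays idle until
        # another adapter fails.
        return current
    # Failback = Yes: the recovered adapter takes over again if it
    # ranks ahead of the adapter that replaced it.
    if failover_order.index(recovered) < failover_order.index(current):
        return recovered
    return current


if __name__ == "__main__":
    order = ["vmnic0", "vmnic1"]  # vmnic0 active, vmnic1 standby
    # vmnic0 failed earlier, so vmnic1 is carrying traffic; vmnic0 recovers.
    print(uplink_after_recovery("vmnic1", "vmnic0", order, failback=True))
    print(uplink_after_recovery("vmnic1", "vmnic0", order, failback=False))
```

With Failback set to No, a link that repeatedly goes up and down cannot keep pulling traffic back onto itself, because recovery alone never changes the adapter in use.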

USING FAILBACK WITH VMKERNEL PORTS AND IP-BASED STORAGE

We recommend setting Failback to No for VMkernel ports you've configured for IP-based storage. Otherwise, in the event of a “port-flapping” issue—a situation in which a link may repeatedly go up and down quickly—performance is negatively impacted. Setting Failback to No in this case protects performance in the event of port flapping.

Perform the following steps to configure the Failover Order policy for a NIC team:

- Use the vSphere Web Client to establish a connection to a vCenter Server instance.

- Navigate to the ESXi host that has the vSwitch for which you'd like to change the failover order. With an ESXi host selected, select the Manage tab, then click Networking.

- With Virtual Switches highlighted on the left, select the virtual switch you want to edit, then click the Edit Settings icon.

- Select Teaming And Failover.

- Use the Move Up and Move Down buttons to adjust the order of the network adapters and their location within the Active Adapters, Standby Adapters, and Unused Adapters lists, as shown in Figure 5.40.

FIGURE 5.40 Failover order for a NIC team is determined by the order of network adapters as listed in the Active Adapters, Standby Adapters, and Unused Adapters lists.

- Click OK to save the changes.

When a failover event occurs on a vSwitch with a NIC team, the vSwitch is obviously aware of the event. The physical switch that the vSwitch is connected to, however, will not know immediately. As you can see in Figure 5.40, a vSwitch includes a Notify Switches configuration setting, which, when set to Yes, will allow the physical switch to immediately learn of any of the following changes:

- A VM is powered on (or any other time a client registers itself with the vSwitch).

- A vMotion occurs.

- A MAC address is changed.

- A NIC team failover or failback has occurred.

TURNING OFF NOTIFY SWITCHES

The Notify Switches option should be set to No when the port group has VMs using Microsoft Network Load Balancing (NLB) in Unicast mode.

In any of these events, the physical switch is notified of the change using the Reverse Address Resolution Protocol (RARP). RARP updates the lookup tables on the physical switches and offers the shortest latency when a failover event occurs.

Although the VMkernel works proactively to keep traffic flowing from the virtual networking components to the physical networking components, VMware recommends taking the following actions to minimize networking delays:

- Disable Port Aggregation Protocol (PAgP) and Link Aggregation Control Protocol (LACP) on the physical switches.

- Disable Dynamic Trunking Protocol (DTP) or trunk negotiation.

- Disable Spanning Tree Protocol (STP).

VIRTUAL SWITCHES WITH CISCO SWITCHES

VMware recommends configuring Cisco devices to use PortFast mode for access ports or PortFast trunk mode for trunk ports.

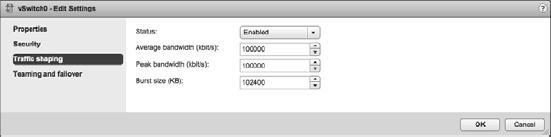

Using and Configuring Traffic Shaping

By default, all virtual network adapters connected to a vSwitch have access to the full amount of bandwidth on the physical network adapter with which the vSwitch is associated. In other words, if a vSwitch is assigned a 1 Gbps network adapter, then each VM configured to use the vSwitch has access to 1 Gbps of bandwidth. Naturally, if contention becomes a bottleneck hindering VM performance, NIC teaming will help. However, as a complement to NIC teaming, you can also enable and configure traffic shaping. Traffic shaping establishes hard-coded limits for peak bandwidth, average bandwidth, and burst size to reduce a VM's outbound bandwidth capability.

As shown in Figure 5.41, the Peak Bandwidth value and the Average Bandwidth value are specified in kilobits per second, and the Burst Size value is configured in units of kilobytes. The value entered for Average Bandwidth dictates the data transfer per second across the vSwitch. The Peak Bandwidth value identifies the maximum amount of bandwidth a vSwitch can pass without dropping packets. Finally, the Burst Size value defines the maximum amount of data included in a burst. The burst size is a calculation of bandwidth multiplied by time. During periods of high utilization, if a burst exceeds the configured value, packets are dropped in favor of other traffic; however, if the queue for network traffic processing is not full, the packets are retained for transmission at a later time.
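Because burst size is bandwidth multiplied by time, the relationship can be turned around to estimate how long a burst at peak rate can last. The following Python sketch shows that arithmetic under two simplifying assumptions: 1 kilobyte is treated as 8 kilobits, and the entire burst is sent at the peak rate. The function name and the sample values are hypothetical.

```python
def burst_duration_seconds(burst_size_kb: float,
                           peak_bandwidth_kbps: float) -> float:
    """Estimate how many seconds a burst can be sustained at peak rate.

    burst size (kilobytes) * 8 gives kilobits; dividing by the peak
    bandwidth in kilobits per second leaves seconds.
    """
    if peak_bandwidth_kbps <= 0:
        raise ValueError("peak bandwidth must be positive")
    return burst_size_kb * 8 / peak_bandwidth_kbps


if __name__ == "__main__":
    # Hypothetical settings: 102,400 KB burst size, 200,000 Kbps peak.
    print(burst_duration_seconds(102400, 200000))  # 4.096
```

With these sample values, a port could transmit at the peak rate for roughly four seconds before the burst allowance is exhausted.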

FIGURE 5.41 Traffic shaping reduces the outbound bandwidth available to a port group.

TRAFFIC SHAPING AS A LAST RESORT

Use the traffic-shaping feature sparingly. Traffic shaping should be reserved for situations where VMs are competing for bandwidth and the opportunity to add physical network adapters isn't available because you don't have enough expansion slots on the physical chassis. With the low cost of network adapters, it is more worthwhile to spend time building vSwitch devices with NIC teams as opposed to cutting the bandwidth available to a set of VMs.

Perform the following steps to configure traffic shaping:

- Use the vSphere Web Client to establish a connection to a vCenter Server instance.

- Navigate to the ESXi host on which you'd like to configure traffic shaping. With an ESXi host selected, go to the Networking section of the Manage tab.

- Make sure Virtual Switches is selected, click the virtual switch on which traffic shaping should be enabled, and then click the Edit Settings icon.

- Select Traffic Shaping.

- Select the Enabled option from the Status drop-down list.

- Adjust the Average Bandwidth value to the desired number of kilobits per second.

- Adjust the Peak Bandwidth value to the desired number of kilobits per second.

- Adjust the Burst Size value to the desired number of kilobytes.

Keep in mind that traffic shaping on a vSphere Standard Switch applies only to outbound traffic.

Bringing It All Together

By now you've seen how all the various components of ESXi virtual networking interact with each other—vSwitches, ports and port groups, uplinks and NIC teams, and VLANs. But how do you assemble all these pieces into a usable whole?

The number and the configuration of the vSwitches and port groups depend on several factors, including the number of network adapters in the ESXi host, the number of IP subnets, the existence of VLANs, and the number of physical networks. With respect to the configuration of the vSwitches and VM port groups, no single correct configuration will satisfy every scenario. However, the greater the number of physical network adapters in an ESXi host, the more flexibility you will have in your virtual networking architecture.

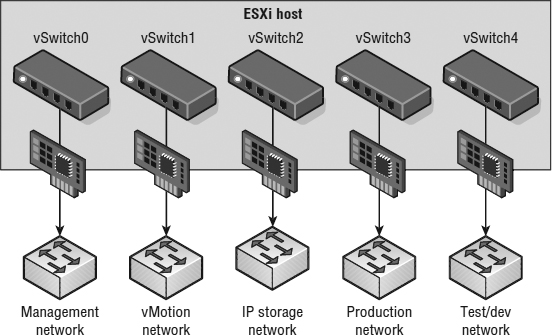

Later in the chapter we'll discuss some advanced design factors, but for now let's stick with some basic design considerations. If the vSwitches created in the VMkernel will not be configured with multiple port groups or VLANs, you will be required to create a separate vSwitch for every IP subnet or physical network to which you need to connect. This was illustrated previously in Figure 5.24 in our discussion about VLANs. To really understand this concept, let's look at two more examples.

Figure 5.42 shows a scenario in which there are five IP subnets that your virtual infrastructure components need to reach. The VMs in the production environment must reach the production LAN, the VMs in the test environment must reach the test LAN, the VMkernel needs to access the IP storage and vMotion LANs, and finally, the ESXi host must have access to the management LAN. In this scenario, without the use of VLANs and port groups, the ESXi host must have five different vSwitches and five different physical network adapters. (Of course, this doesn't account for redundancy or NIC teaming for the vSwitches.)

FIGURE 5.42 Without the use of port groups and VLANs in the vSwitches, each IP subnet will require a separate vSwitch with the appropriate connection type.

Real World Scenario

WHY DESIGN IT THAT WAY?

During the virtual network design process we are often asked questions such as why virtual switches should not be created with the largest number of ports to leave room to grow or why multiple vSwitches should be used instead of a single vSwitch (or vice versa). Some of these questions are easy to answer; the answers to others are a matter of experience and, to be honest, personal preference.