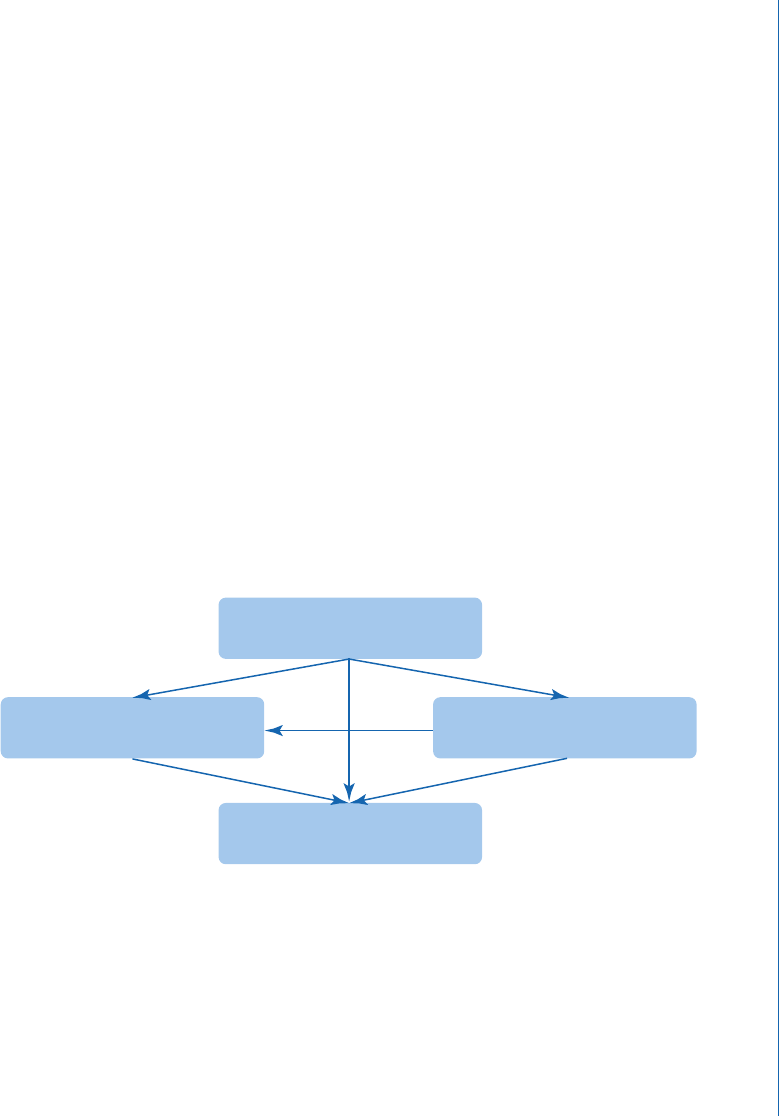

facets of a user study: research focus and variable; (2) main facets and factors to be manipulated: participant, task, and system feature; (3) study procedure facets: study procedure and experimental design; and (4) measurement and analysis facet: behavior and experience, data analysis, and results.

Among these four categories of main facets, research focus and variables are the root facets and default items of user study design and fundamentally determine the manipulation of the other main facets (e.g., participant, task type, system and interface features to be varied). Once the specific variables and associated factors are decided, the study procedure and experimental design (if applicable) can also be determined and unfolded accordingly. Then, based on the specific research problems, variables defined, and data collection techniques, researchers need to decide the specific models and methods for data analysis and consider: (1) whether the data analysis technique fits well with the whole research design and procedure; and (2) to what extent the adopted methods and models of analysis can (at least partially) address the limitations caused by the decisions made in study procedure design. For example, a user-centered study of search behavior may involve multiple independent variables that come from different levels (e.g., user level, task and session levels, query segment level). Under this circumstance, applying a multi-level or hierarchical linear model can help clarify the effects caused by the variables from different levels in causal inference and thereby mitigate the negative influence caused by the decisions and compromises in user study design. The connections among the main facets discussed above are illustrated in Figure 4.2. The main facets and associated subfacets will be explained in detail in the following subsections.
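To make the multi-level idea concrete, the following is a minimal sketch of a two-level random-intercept model. It is a simplification of the example in the text: it assumes only two levels (query segments nested within users), one hypothetical query-segment-level predictor $x_{ij}$, and one hypothetical user-level predictor $z_j$. The symbols are illustrative assumptions, not notation taken from the source.

```latex
\begin{aligned}
\text{Level 1 (query segment } i \text{ of user } j\text{):}\quad
  & y_{ij} = \beta_{0j} + \beta_{1} x_{ij} + \varepsilon_{ij},
  \qquad \varepsilon_{ij} \sim \mathcal{N}(0, \sigma^{2}) \\
\text{Level 2 (user } j\text{):}\quad
  & \beta_{0j} = \gamma_{00} + \gamma_{01} z_{j} + u_{j},
  \qquad u_{j} \sim \mathcal{N}(0, \tau^{2})
\end{aligned}
```

Here $y_{ij}$ is a behavioral measure for one query segment, $\beta_{1}$ captures the within-user effect of the segment-level variable, $\gamma_{01}$ captures the effect of the user-level variable, and $u_{j}$ absorbs unexplained differences between users. Separating $u_{j}$ from $\varepsilon_{ij}$ is what lets the model attribute variance to the appropriate level.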

Figure 4.2: Connections among different categories of user study facets. (Boxes in the diagram: Research Focus and Variables; Participant, Task, and System Features; Study Procedure and Experimental Design; Measures of Behavior and Experience, Data Analysis.)

4.1.1 RESEARCH FOCUS AND VARIABLES

Depending on their research focus, IIR user studies can be roughly classified into three categories: (1) understanding user behavior and experience; (2) system or interface features evaluation; and (3) meta-evaluation of evaluation metrics. Given the research focus and associated research questions, researchers have to define corresponding variables as a preparation for user study design, subsequent data analysis (e.g., statistical modeling), and result presentation. This is part of the basic, default phase of user study design and will fundamentally define a majority of facet values (e.g., task type, experimental system, participant features). For example, in Ong et al.’s (2017) exploration of the impacts of information scent levels and patterns on search behavior, SERP type can be considered the core independent variable, as it was intentionally manipulated to produce varying information scent levels and patterns. Search behavioral measures (e.g., query reformulation, dwell time on pages of different types, clicking behavior) were in this case treated as dependent variables and incorporated into statistical models. Then, to answer the proposed research questions, a user study was designed around these fundamental cornerstones and a series of design decisions (and compromises) were made.

Similarly, researchers focusing on system and interface evaluations often manipulate system and/or interface feature(s) and see if the variation(s) in these features lead to any statistically significant difference in search performance (e.g., higher precision or recall in search; improved performance in task completion) and search interaction experience (e.g., lower workload, higher level of search satisfaction). For instance, Ruotsalo et al. (2018) introduced an innovative assistant tool for search interaction called Interactive Intent Modeling, which can model a user’s evolving search intents and visualize them as keywords for search interaction. In their system evaluation, the researchers employed a variety of search performance measures and found that the intent modeling and visualization can significantly improve retrieval effectiveness, users’ task performance, breadth of information comprehension, and user experience. In this case, the presentation and adoption of the new intent modeling and visualization can be defined as an independent variable, as the researchers directly manipulated the interaction interface for different groups of participants and compared search performances and experiences (i.e., dependent variables) across those groups (i.e., baseline group vs. treatment group).
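A baseline-versus-treatment comparison of this kind can be sketched as a two-sided permutation test on the difference in group means. This is only an illustration of the general idea: the source does not state which statistical test Ruotsalo et al. (2018) used, and the function name, the ratings, and the 1-7 scale below are all hypothetical.

```python
import random


def permutation_test(baseline, treatment, n_permutations=10_000, seed=0):
    """Two-sided permutation test on the difference in group means.

    Returns (observed_difference, p_value), where the difference is
    mean(treatment) - mean(baseline).
    """
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    n_baseline = len(baseline)
    n_treatment = len(treatment)
    observed = sum(treatment) / n_treatment - sum(baseline) / n_baseline

    pooled = list(baseline) + list(treatment)
    extreme = 0
    for _ in range(n_permutations):
        # Randomly reassign participants to the two groups.
        rng.shuffle(pooled)
        diff = (sum(pooled[n_baseline:]) / n_treatment
                - sum(pooled[:n_baseline]) / n_baseline)
        if abs(diff) >= abs(observed):
            extreme += 1

    # Add-one correction keeps the estimated p-value strictly above zero.
    p_value = (extreme + 1) / (n_permutations + 1)
    return observed, p_value


# Hypothetical satisfaction ratings (1-7 scale), invented for illustration.
baseline_scores = [3, 4, 4, 5, 3, 4, 2, 4]
treatment_scores = [5, 6, 5, 4, 6, 5, 6, 5]

observed_diff, p = permutation_test(baseline_scores, treatment_scores)
print(f"difference in means: {observed_diff:.3f}, p = {p:.4f}")
```

The interface condition plays the role of the independent variable (which list a score belongs to), and the rating is the dependent variable. A permutation test is used here only because it needs no distributional assumptions and no third-party library; a t-test or a mixed model may be more appropriate for a real design.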

With respect to meta-evaluation of evaluation metrics, researchers often propose and evaluate a series of new evaluation measures (i.e., online search behavioral measures, such as querying behavior, browsing and result examination behavior, eye movement pattern and attention distribution; offline self-reported data, annotations, and usefulness judgments) that capture different aspects of search interactions. Once the core measures and ground truth are defined, researchers usually conduct the meta-evaluation in two main ways: (1) examining the correlations between these measures and the predefined ground truth (e.g., query-level satisfaction and session-level satisfaction; relevance judgment; search success) (e.g., Chen et al., 2017); and (2) investigating the predictive power of a model built upon the new evaluation metrics/features in predicting the predefined ground truth value (e.g., Liu et al., 2018).
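The first approach, correlating a candidate measure with ground truth, can be sketched as a Spearman rank correlation. The choice of Spearman is an assumption made for illustration (the source does not name a coefficient), and the per-session metric values and satisfaction ratings below are invented.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(var_x * var_y)


def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the run while the next sorted value ties with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared_rank = (start + end) / 2 + 1
        for k in range(start, end + 1):
            ranks[order[k]] = shared_rank
        start = end + 1
    return ranks


def spearman(xs, ys):
    """Spearman rank correlation: Pearson correlation of the average ranks."""
    return pearson(average_ranks(xs), average_ranks(ys))


# Hypothetical data: one candidate metric value and one self-reported
# satisfaction rating (1-5) per search session.
metric_values = [0.42, 0.55, 0.31, 0.78, 0.66, 0.20]
satisfaction = [3, 4, 2, 5, 4, 1]

rho = spearman(metric_values, satisfaction)
print(f"Spearman correlation with session-level satisfaction: {rho:.3f}")
```

A measure whose ranking of sessions agrees closely with the ground-truth ranking yields a coefficient near 1. Rank correlation is a common choice when the ground truth is an ordinal rating, as assumed here.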

In summary, clearly defining independent and dependent variables (i.e., the associated concepts, operational definitions, as well as the hypotheses, if applicable) is the default “step one” for user study design and is obviously crucial for most IIR research as well as information
