A

Exercise Solutions

CHAPTER 1: DATABASE DESIGN GOALS

Exercise 1 Solution

The following list summarizes how the book provides (or doesn't) database goals:

- CRUD—This book doesn't let you easily Create information. You could write in new information, but there isn't much room for that and that's not really its purpose. The book lets you Read information, although it's hard for you to find a particular piece of information unless it is listed in the table of contents or the index. You can Update information by crossing out the old information and entering the new. You can also highlight key ideas by underlining, by using a highlighter, and by putting bookmarks on key pages. Finally, you can Delete data by crossing it out.

- Retrieval—The book's mission in life is to let you retrieve its data, although it can be hard to find specific pieces of information unless you have bookmarked them or they are in the table of contents or the index.

- Consistency—I've tried hard to make the book's information consistent. If you start making changes, however, it will be extremely difficult to ensure that you make related changes to other parts of the book.

- Validity—The book provides no data validation. If you write in new information, the book cannot validate your data. (If you write, “Normalization rocks!” the book cannot verify that it indeed rocks.)

- Easy Error Correction—Correcting one error is easy; simply cross out the incorrect data and write in the new data. Correcting systematic errors (for example, if I've methodically misspelled “the” as “thue” and the editors didn't catch it) would be difficult and time-consuming.

- Speed—The book's structure will hopefully help you learn database design relatively efficiently, but a lot relies on your reading ability.

- Atomic Transactions—The book doesn't really support transactions of any kind, much less atomic ones.

- ACID—Because it doesn't support transactions, the book doesn't provide ACID.

- Persistence and Backups—The book's information is nonvolatile so you won't lose it if the book “crashes.” If you lose the book or your dog eats it, then you can buy another copy but you'll lose any updates that you have written into it. You can buy a second copy and back up your notes into that one, but the chances of a tornado destroying your book are low and the consequences aren't all that earth-shattering, so I'm guessing you'll just take your chances.

- Low Cost and Extensibility—Let's face it, books are pretty expensive these days, although not as expensive as even a cheap computer. You can easily buy more copies of the book, but that isn't really extending the amount of data. The closest thing you'll get to extensibility is buying a different database-related book or perhaps buying a notebook to improve your note-taking.

- Ease of Use—This book is fairly easy to use. You've probably been using books for years and are familiar with the basic user interface.

- Portability—It's a fairly large book, but you can reasonably carry it around. You can't read it remotely the way you can a computerized database, but you can carry it on a bus.

- Security—The book isn't password protected, but it doesn't contain any top-secret material, so if it is lost or stolen you probably won't be as upset by the loss of its data as by the loss of the concert tickets that you were using as a bookmark. It'll also cost you a bit to buy a new copy if you can't borrow someone else's.

- Sharing—After you lose your copy, you could read over the shoulder of a friend (although then you need to read at their pace) or you could borrow someone else's book. Sharing isn't as easy as it would be for a computerized database, however, so you might just want to splurge and get a new copy.

- Ability to Perform Complex Calculations—Sorry, that's only available in the more expensive artificially intelligent edition.

Overall, the book is a reasonably efficient read-only database with limited search and correction capabilities. As long as you don't need to make too many corrections, it's a pretty useful tool. The fact that instructional books have been around for a long time should indicate that they work pretty well.

Exercise 2 Solution

This book provides a table of contents to help you find information about general topics and an index to help you find more specific information if you know the name of the concept that you want to study. Note that the page numbers are critical for both kinds of lookup.

Features that help you find information in less obvious ways include the introductory chapter that describes each chapter's concepts in more detail than the table of contents does, and cross-references within the text.

Exercise 3 Solution

CRUD stands for the four fundamental database operations: Create (add new data), Read (retrieve data), Update (modify data), and Delete (remove data from the database).

Exercise 4 Solution

A chalkboard provides:

- Create—Use chalk to write on the board.

- Read—Look at the board.

- Update—Simply erase old data and write new data.

- Delete—Just erase the old data.

A chalkboard has the following advantages over a book:

- CRUD—It's easier to create, read, update, and delete data.

- Retrieval—Although a chalkboard doesn't provide an index, it usually contains much less data than a book so it's easier to find what you need.

- Consistency—Keeping the data consistent isn't trivial but again, because there's less data than in a book, you can find and correct any occurrences of a problem more easily.

- Easy Error Correction—Correcting one error is trivial; just erase it and write in the new data. Correcting systematic errors is harder, but a chalkboard contains a lot less data than a book, so fixing all of the mistakes is easier.

- Backups—You can easily back up a chalkboard by taking a picture of it with your cell phone. (This is actually more important than you might think in a research environment where chalkboard discussions can contain crucial data.)

- Ease of Use—A chalkboard is even easier to use than a book. Toddlers who can't read can still scribble on a chalkboard.

- Security—It's relatively hard to steal a chalkboard nailed to a wall, although a ne'er-do-well could take a cell-phone picture of the board and steal the data. (In some businesses and schools, cleaning staff are forbidden to erase chalkboards without approval to prevent accidental data loss.)

- Sharing—Usually everyone in the room can see what's on a chalkboard at the same time. This is one of the main purposes of chalkboards.

A book has the following advantages over a chalkboard:

- Persistence—A chalkboard is less persistent. For example, someone brushing against the chalkboard may erase data. (I once had a professor who did that regularly and always ended the lecture with a stomach covered in chalk.)

- Low Cost and Extensibility—Typically, books are cheaper than chalkboards, at least large chalkboards.

- Portability—Books typically aren't nailed to a wall (although the books in the Hogwarts library's restricted section are chained to the shelves).

The following database properties are roughly equivalent for books and chalkboards:

- Validity—Neither provides features for validating new or modified data against other data in the database.

- Speed—Both are limited by your reading (and writing) speed.

- Atomic Transactions—Neither provides transactions.

- ACID—Neither provides transactions, so neither provides ACID.

- Ability to Perform Complex Calculations—Neither can do this (unless you have some sort of fancy interactive computerized book or chalkboard).

In the final analysis, books contain a lot of information and are intended for use by one person at a time, whereas chalkboards hold less information and are tools for group interaction. Which you should use depends on which of these features you need.

Exercise 5 Solution

A recipe card file has the following advantages over a book:

- CRUD—It's easier to create, read, update, and delete data in a recipe file. Updating and deleting data is also more aesthetically pleasing. In a book, these changes require you to cross out old data and optionally write in new data in a place where it probably won't fit too well. In a recipe file, you can replace the card containing the old data with a completely new card.

- Consistency—Recipes tend to be self-contained, so this usually isn't an issue.

- Easy Error Correction—Correcting one error in the recipe file is trivial; just replace the card that holds the error with one that's correct. Correcting systematic errors is harder but less likely to be a problem. (What are the odds that you'll mistakenly confuse metric and English units and mix up liters and tablespoons? But you can go to

https://gizmodo.com/five-massive-screw-ups-that-wouldn’t-have-happened-if-we-1828746184to read about five disasters that were caused by that kind of mix-up.) - Backups—You could back up a recipe file fairly easily. In particular, it would be easy to make copies of any new or modified cards. I don't know if anyone (except perhaps Gordon Ramsay or Martha Stewart) does this.

- Low Cost and Extensibility—It's extremely cheap and easy to add a new card to a recipe file.

- Security—You could lose a recipe file, but it will probably stay in your kitchen most of the time, so losing it is unlikely. Someone could break into your house and steal your recipes, but you'd probably give copies to anyone who asked (except for your top-secret death-by-chocolate brownie recipe).

A book has the following advantages over a recipe file:

- Retrieval—A recipe file's cards are sorted, essentially giving it an index, but a book also provides a table of contents. With this kind of recipe file, it would be hard to simultaneously sort cards alphabetically and group them by type such as entrée, dessert, aperitif, or midnight snack.

- Persistence—The structure of a recipe file is slightly less persistent than that of a book. If you drop your card file down the stairs, the cards will be all mixed up (although that may be a useful way to pick a random recipe if you can't decide what to have for dinner).

The following database properties are roughly equivalent for books and recipe files:

- Validity—Neither provides features for validating new or modified data against other data in the database.

- Speed—Both are limited by your reading and writing speeds.

- Atomic Transactions—Neither provides transactions.

- ACID—Neither provides transactions, so neither provides ACID.

- Ease of Use—Many people are less experienced with using a recipe file than a book, but both are fairly simple. (Following the recipes will probably be harder than using the file, at least if you cook anything interesting.)

- Portability—Both books and recipe files are portable, although you may never need your recipes to leave the kitchen.

- Sharing—Neither is easy to share.

- Ability to Perform Complex Calculations—Neither can do this. (Some computerized recipe books can adjust measurements for a different number of servings, but index cards cannot.)

Instructional books usually contain tutorial information, and you are expected to read them in big chunks. A recipe file is intended for quick reference and you generally use specific recipes rather than reading many in one sitting. The recipe card file is more like a dictionary and has many of the same features.

Exercise 6 Solution

ACID stands for Atomicity, Consistency, Isolation, and Durability.

- Atomicity means transactions are atomic. The operations in a transaction either all happen or none of them happen.

- Consistency means the transaction ensures that the database is in a consistent state before and after the transaction.

- Isolation means the transaction isolates the details of the transaction from everyone except the person making the transaction.

- Durability means that once a transaction is committed, it will not disappear later.

Exercise 7 Solution

BASE stands for the distributed database features of Basically Available, Soft state, and Eventually consistent.

- Basically Available means that the data is available. These databases guarantee that any query will return some sort of result, even if it's a failure.

- Soft state means that the state of the data may change over time, so the database does not guarantee immediate consistency.

- Eventually consistent means these databases do eventually become consistent, just not necessarily before the next time that you read the data.

Exercise 8 Solution

The CAP theorem says that a distributed database can guarantee only two out of three of the following properties:

- Consistency—Every read receives the most recently written data (or an error).

- Availability—Every read receives a non-error response, if you don't guarantee that it receives the most recently written data.

- Partition tolerance—The data store continues to work even if messages are dropped or delayed by the network between the store's partitions.

This need not be a “pick two of three” situation if the database is not partitioned. In that case, you can have both consistency and availability, and partition tolerance is not an issue because there is only one partition.

Exercise 9 Solution

If transaction 1 occurs first, then Alice tries to transfer $150 to Bob and her balance drops below $0, which is prohibited.

If transaction 2 occurs first, then Bob tries to transfer $150 to Cindy and his balance drops below $0, which is prohibited.

So, transaction 3 must happen first: Cindy transfers $25 to Alice and $50 to Bob. Afterward Alice has $125, Bob has $150, and Cindy has $25.

At this point, Alice and Bob have enough money to perform either transaction 1 or transaction 2.

If transaction 1 comes second, then Alice, Bob, and Cindy have $0, $275, and $25, respectively. (If he can, Bob should walk away at this point and quit while he's ahead.) Transaction 2 follows and the three end up with $0, $125, and $175, respectively.

If transaction 2 comes second, then Alice, Bob, and Cindy have $125, $0, and $175, respectively. Transaction 1 follows and the three end up with $0, $125, and $175, respectively.

Therefore, the allowed transaction orders are 3 – 1 – 2 and 3 – 2 – 1. Note that the final balances are the same in either case.

Exercise 10 Solution

If the data is centralized, then it does not remain on your local computer. In particular, if your laptop is lost or stolen, you don't need to worry about your customers' credit card information because it is not on your laptop.

Be sure to use good security on the database so cyber-criminals can't break into it remotely. Also don't use the application on an unsecured network (such as in a coffee shop or shopping mall) where someone can electronically eavesdrop on you.

CHAPTER 2: RELATIONAL OVERVIEW

Exercise 1 Solution

This constraint means that all salespeople must have a salary or work on commission but they cannot have both a salary and receive commissions.

Exercise 2 Solution

In Figure A.1, lines connect the corresponding database terms.

Exercise 3 Solution

State/Abbr/Title is a superkey because no two rows in the table can have exactly the same values in those columns.

Exercise 4 Solution

Engraver/Year/Got is not a superkey because the table could hold two rows with the same values for those columns.

Exercise 5 Solution

The candidate keys are State, Abbrev, and Title. Each of these by itself guarantees uniqueness so it is a superkey. Each contains only one column so it is a minimal superkey, and therefore a candidate key.

All of the other fields contain duplicates and any combination that doesn't have duplicates in the data shown (such as Engraver/Year) is just a coincidence (someone could engrave two coins in the same year). That means any superkey must include at least one of State, Abbrev, or Title to guarantee uniqueness, so there can be no other candidate keys.

Exercise 6 Solution

The domains for the columns are:

- State—The names of the 50 U.S. states

- Abbrev—The abbreviations of the 50 U.S. states

- Title—Any text string that might describe a coin

- Engraver—People's names

- Year—A four-digit year—more precisely, 1999 through 2008

- Got—“Yes” or “No”

Exercise 7 Solution

Room/FirstName/LastName and FirstName/LastName/Phone/CellPhone are the possible candidate keys.

CellPhone can uniquely identify a row if it is not null. If CellPhone is null, then we know that Phone is not null because all students must have either a room phone or a cell phone. But roommates share the same Phone value, so we need FirstName and LastName to decide which is which. (Basically Phone/CellPhone gets you to the Room.)

Exercise 8 Solution

In this case, FirstName/LastName is not enough to distinguish between roommates. If their room has a phone, they might not have cell phones so there's no way to tell them apart in this table. In this case, the table has no candidate keys. That might be a good reason to add a unique column such as StudentId. (Or if the administration assigns rooms, just don't put two John Smiths in the same room. You don't have to tell them it's because of your poorly designed database!)

Exercise 9 Solution

The room numbers are even so you could use Room Is Even. (Don't worry about the syntax for checking that a value is even.) You could also use some simple range checks, such as (Room > = 100) AND (Room < 300), depending on what room numbers are actually allowed.

You might also notice that every Phone value has the same area code and exchange 202-237, so you could check for that.

Exercise 10 Solution

Every student must have a Phone or CellPhone value, so you could check that (Phone <> null) OR (CellPhone <> null).

CHAPTER 3: NOSQL OVERVIEW

Exercise 1 Solution

This data forms a tree, so you could store it in a graph database. If there is some inbreeding, then the data forms a network rather than a tree, but it will still fit easily into a graph database. (The fact that the data represents relationships among dogs is also a hint that you might want to use a graph database.)

A graph database would let you perform relationship-oriented queries such as finding all the ancestors on the source dog's father's side or determining the number of generations between this dog and TV celebrity Lassie. Most graph databases also allow you to query on node properties in case you want to find dogs with certain characteristics such as show winners and flyball champions.

If there is no inbreeding, then you could save the tree in an XML or JSON file, although that would reduce the kinds of queries you could perform.

You could also store the data in a relational database. That would let you search for dog characteristics but would make it harder to study the relationships in the tree or network.

Overall, the graph database will probably give you the largest assortment of query capabilities with the least effort.

Exercise 2 Solution

This may seem like a more complicated database than the one in Exercise 1, but it's still a tree with two main branches connected to the source dog: one leading to descendants and one leading to ancestors. (If there is inbreeding, then it's a network as before.)

For the same reasons described in the solution to Exercise 1, a graph database will likely give you the best query capabilities with the least work.

Exercise 3 Solution

Application settings are easy to store in almost any database.

If you use a flat file, then you need to write code to save and retrieve values.

If you use an XML or JSON file, then you may be able to use built-in programming language tools or libraries to save and retrieve values more easily. You could save the XML or JSON data in a document database.

A key-value database would allow applications to easily load and update settings as needed, but it might be overkill. If the settings are different for each user, then saving them all in a shared database will increase the total overhead somewhat.

Similarly, you could store settings in a relational database and, similarly, that might be overkill.

Any of the solution that stores settings in a centralized location (such as a document database, key-value database, or relational database) would allow administrators to fix settings if a user makes a window zero pixels wide or drags a window completely off the screen. Those approaches also mean that if you logged into the application from any computer, you found your personal settings ready and waiting for you.

Despite those advantages, I prefer the simplicity of storing settings in simple XML or JSON files stored in the program's executable directory unless a user typically logs in from several different computers.

Exercise 4 Solution

This sounds like a very simple database whose major requirement is graphing, so a spreadsheet can probably handle this. That would limit the application to tasks that spreadsheets can perform, however, and if the users later decide that they want to store more complex data and perform sophisticated queries on it, you might wish you'd chosen a relational database.

Exercise 5 Solution

A spreadsheet can also handle this requirement, but there's the same risk that the users will later decide they need more features than a spreadsheet can easily handle.

Exercise 6 Solution

A spreadsheet will still work, with the same caveat. At this point, however, I would notice that the users are starting to add more and more features. I would want to explore the requirements more fully and make sure this is really their final request before committing to a spreadsheet. It would be better to move to a more complicated database model now than to have to rebuild everything from scratch in six months (or just as likely, have users complain about how the spreadsheet doesn't do all of the things they didn't tell you it was supposed to do).

Exercise 7 Solution

This is a fairly simple tree so it will fit easily in a graph database. It's such a small tree (relatively speaking) that it seems unlikely that you'll need to perform complex ad hoc queries, so you could store it in an XML or a JSON file.

Exercise 8 Solution

This probably needs to be some sort of relational database. They are great at handling large amounts of interconnected data and performing complex ad hoc queries.

Which type of relational database you should pick (regular, object-oriented, or some other flavor) depends largely on your development philosophy and environment.

Exercise 9 Solution

As in Exercise 8, this problem cries out for some kind of relational database. To make the boss happy, you could use an object-oriented database. In several projects, I've used an object-relational mapping approach planted on top of a relational database and it has always worked quite well.

Exercise 10 Solution

If the recipe book will be fairly small, you could just put each recipe on a separate page in a Microsoft Word document and use Word's search capability to find recipe names, part of a meal, or an ingredient. (Fooled you, didn't I? That wasn't one of the main topics covered in this chapter! However, it would be a reasonable solution for such a simple application. Remember, the goal is to provide a useful solution with the minimum amount of work.)

Of the solutions that are described in this chapter, you could pick a relational database. It will provide better search capabilities than a simpler flat file, spreadsheet, XML file, or JSON file.

A truly object-oriented database would probably be serious overkill for this project. (I would only pick one of them if I wanted practice with a particular new tool—for example, one that I knew was going to be used on a future project.)

You could also store each recipe as a document in a document database. You could still query on the fields inside the recipes like you can with a relational database. The document database would allow different recipes to use different formats if necessary.

This is a pretty good example where a NoSQL database might provide some useful flexibility. It would be fairly easy to build this in a relational database, but a document database would let you easily use new formats later if you run across a strange recipe that didn't fit the usual pattern.

Exercise 11 Solution

This could require some serious sorting and searching, so a relational database is probably your best bet. (You would use a separate table or two to define power decks.) Which flavor you should pick (regular, object-oriented, etc.) depends largely on your development philosophy and environment.

Alternatively, you could store each card's data in a document inside a document database. You should probably be able to figure out which fields you will need in advance, however, so the flexibility of being able to give each document a different format seems less useful than it would be for the recipe database.

Exercise 12 Solution

This application would require some serious search capabilities, so you might think “relational database.” That would work, but the different media types have different characteristics, so you would need separate tables for each and that would make queries more complicated. This would still be possible, but it would be a fair amount of work.

Alternatively, you could use a column-oriented database. It would look like a single huge table, but the different media types would have different columns. For example, movies (such as The Pelican Brief and Shrek) would list Lithgow in the Actor column while books (such as The Remarkable Farkle McBride and Marsupial Sue) would list him in the Author column.

If you later decide to add new information to the database, such as RottenTomatoesRating for movies and Awards for all media, you could simply add those columns. In fact, you could even add new media such as audiobooks with little effort. You could add those features to a relational database, but it would be more work.

It might also be interesting to build a graph database to examine the relationships among different works. (For example, that would let you dominate the game “Six Degrees of Kevin Bacon.”) That would be a lot of work, however, and wouldn't be required for the original problem statement.

Exercise 13 Solution

This database will require some serious sorting, searching, and grouping, so a relational database may be in order. That would allow you to perform complex queries linking players and their teams.

Unfortunately different sports have different statistics, league structures, numbers of players, and other basic information, so it might be hard to build a single table to hold information for them all. Later, when you add dragon boating and quidditch, you may need to restructure the database.

As was the case with the media database in Exercise 12, a document-column-oriented database would allow you to perform queries while also giving you greater flexibility for later improvements.

Exercise 14 Solution

This data is so simple that it could conveniently be stored in just about any kind of database. If the application uses a database for some other purpose, you might consider adding this information to it because the database will be there anyway.

Otherwise, you should use the simplest solution that makes sense. A plain-old text file posted on a network-accessible server would work just fine. Alternatively, you could use a document database or key-value database. You could even squeeze the message of the day into a relational, column-oriented, or graph database, although that would not be a natural fit.

CHAPTER 4: UNDERSTANDING USER NEEDS

Exercise 1 Solution

In Figure A.2, lines connect the customer roles with their corresponding descriptions.

Exercise 2 Solution

A use case can cover any part of the customers' operation, including big or little pieces of the whole process. In fact, it's easier to test a big scenario if you break it into smaller pieces. The answer that doesn't describe a use case is:

- c. It should cover the customer's entire operation from start to finish.

Exercise 3 Solution

Brainstorming sessions should include everyone interested so the correct answer is:

- d. All of the above

(Although technically Customer Representatives and the Devil's Advocate are also Stakeholders, so “c. All interested Stakeholders” is also sort of correct. Let's not quibble.)

Exercise 4 Solution

The correct answer is:

- b. Ask the customer why they think that.

You never know if the customer knows more than they're admitting and if they might have very good reasons for suggesting that kind of database. Even if they're wrong, the reasons they give will tell you more about the situation and may lead to other important insights.

Answer “d. Study the problem to see if that kind of database makes sense,” almost works because it's good to see if that kind of database makes sense, but it's also important to know why the customer thinks it does.

Exercise 5 Solution

Whenever you don't understand something about the customers' operation you should ask someone, so the correct answer is:

- a. Ask someone what that's all about.

The answer you get may be as arbitrary as “that's just the way Mark likes to do it,” but in this fictitious scenario the customers use the first date stamp to record when the order was received and the second to indicate that the order entry operator looked at the back of the order to check for notes and comments.

If you didn't ask, you might have incorrectly placed two date fields in the Orders table. Once the process is online, however, you won't need the second date because there is no “other side” of the order to check. (Looking at the back of your computer monitor won't tell you much.) All of the notes and comments will be in a text box at the bottom of the online form.

Exercise 6 Solution

The following table summarizes the fields' data requirements:

| FIELD | REQUIRED? | DOMAIN |

|---|---|---|

| Address 1 | Yes | Valid street addresses or street names without numbers |

| Address 2 | No | Apartment, suite, floor, etc. |

| Company | No | Valid company names (which could be practically anything) |

| Street Address | Yes | Valid street addresses or street names without numbers |

| Apt/Suite/Other | No | Apartment, suite, floor, etc. |

| City | Yes | Valid cities |

| State | Yes | Valid states |

| ZIP Code | No | Five-digit or ZIP+4 codes as in 12345 and 12345-6789 |

The required fields are marked on the form with asterisks.

The fields on this form have one complex interdependency: you must include either the city and state, or the ZIP Code. (If you include the ZIP Code, then the form looks up the city and state.) This isn't obvious from those fields because none of them is marked with an asterisk, so the form includes text to explain this.

The form could use a foreign key validation for the city, checking against a table listing every city in the country. It would be a huge table and would probably contain errors, so in many applications this might not be worth the effort. However, this application needs the city to look up the ZIP Code, so if the city isn't legal the lookup will fail. (In fact, that may be the best way to validate the data: see if you can look up the ZIP Code.)

The form could also verify that the ZIP Code is valid for the city, if the user enters both. Again, the whole point is to look up a ZIP Code, so it would be easy to check it against any value that the user entered.

Exercise 7 Solution

Backup policy is a data reliability issue more than a security issue, so the correct answer is:

- c. The frequency with which you need to perform backups.

However, the two issues are often closely related. For example, in many applications backups must be stored securely so that sensitive data doesn't fall into the wrong hands. Backups are also useful if a hacker gets into your system and trashes the database.

Exercise 8 Solution

The correct answer is:

- d. It depends (you need more information).

This is probably a Priority 1 or 2 feature, depending on how serious Frank is and how soon he wants to add this feature. This doesn't sound too complicated (it would probably just require a few new fields in an inventory table or a new plant lookup table), so I would say if Frank is serious then he should make this a Priority 1 feature and add it to the database design. I would also make this data not required in case Frank doesn't have time to enter all of this information right away for every kind of plant.

Exercise 9 Solution

MOSCOW stands for Must, Should, Could, Won't.

Exercise 10 Solution

The answer to this one depends on the operating system that you're using. I'm currently sitting at a computer running Windows 11, so here's how my use case might read:

- Goals—Authorized users should be able to log in and unauthorized users should not.

- Summary—The user tries to enter a username and password or PIN. If they are correct, the user is allowed access to the system.

- Actors:

- The user—tries to log in.

- The operating system—validates the username and password and grants or denies access.

- Pre-Conditions—No one is currently logged in to the system. (The case when someone else is already logged in is a different use case.)

- Post-Conditions—If the user enters a valid username and password or PIN, the system is logged in and displays the user's desktop. If the user enters an invalid username/password combination, the system remains logged out and the user cannot see the desktop or any of the data in the computer.

- Normal Flow—The tester should try all the possible combinations of blank, valid, and invalid usernames, passwords, and PINs and click OK. The following table lists the combinations and their desired results. The tester should fill in the blank column with “Pass” or “Fail” to indicate whether each test gave the desired result.

USERNAME PASSWORD DESIRED RESULT PASS/FAIL Blank username Blank password No access Blank username Valid password No access Blank username Invalid password No access Blank username Valid PIN No access Blank username Invalid PIN No access Valid username Blank password No access Valid username Valid password Access Valid username Invalid password No access Valid username Valid PIN Access Valid username Invalid PIN No access Valid username Valid password for different account No access Valid username Valid PIN for different account No access Invalid username Blank password No access Invalid username Invalid password No access Invalid username Invalid PIN No access - Alternative Flow—Instead of clicking OK, the user could click Cancel. The system should reset the screen, clearing the username and password text boxes.

- Notes—In all cases that do not give the user access, the system should deny access in exactly the same way so that the user cannot learn, for example, that they have guessed a valid username but an invalid password. That would give a ne'er-do-well a valid username to attack and that would be bad.

Note that this use case specifies the user's actions with enough detail that a relatively inexperienced user could follow it.

Exercise 11 Solution

When a heavy-hitter like a vice president attacks, you need to call in your Executive Champion. Ideally, they can point to your requirements document and show that you did, in fact, consider farbulistic granilation, and that everyone agreed the allowance was sufficient. If you didn't consider this issue, then you may need to put in some extra study to give your Executive Champion ammunition to fend off the attack.

If your Executive Champion doesn't have enough clout to fight off the Supervillain, then you could be in trouble.

One project I worked on really did have Supervillains and Executive Champions at that level in a Fortune 500 company. I won't bore you with the details, but our Executive Champion and Customer Champion spent a huge amount of time fending off attacks for about two years before the project finished. (I don't think they enjoyed that part of the project.)

CHAPTER 5: TRANSLATING USER NEEDS INTO DATA MODELS

Exercise 1 Solution

Figure A.3 shows one possible solution.

- In the

STUDENTclass,COURSEandPROJECThave cardinality 0.N and 0.1, respectively. This doesn't capture the fact that at least one of these two attributes must include at least one value. - Similarly the

INSTRUCTORclass does not capture the fact that at least one of theCOURSEorPROJECTattributes must include at least one value.

Exercise 2 Solution

Figure A.4 shows an inheritance diagram for the Person, Student, and Instructor entities. It also shows the relationship between the Person and Phone entities.

The Phone entity doesn't have a primary key because it doesn't make sense to search for just a Phone entity by itself. Instead, you can find the Phone entities corresponding to a Person entity. That means Phone is a weak entity so it is surrounded by a thick rectangle and its identifying relationship is drawn with a thick arrow.

Figure A.5 shows one possible ER diagram for the college course data.

The diagram has the following constraints:

- It doesn't make sense to look for a particular

CourseResultso it doesn't have a primary key. Instead you can look forCourseResults associated with aStudentor with aCourse. That meansCourseResultis a weak entity, so it is drawn with a thick rectangle and it is connected to its identifying relationships with thick arrows. - Similarly

ProjectResultis a weak entity.

- A

Coursemust be involved in a relationship with aStudent(or else theCourseis canceled), so its line leading towardStudentis double (a participation constraint). - Similarly a

Projectmust be involved in a relationship with aStudent, so its line leading towardStudentis double (a participation constraint). - A

Coursemust be involved in a relationship with anInstructor(someone has to teach it), so its line leading towardInstructoris double (a participation constraint). ACoursecan have only oneInstructor, so the line is also an arrow (a key constraint). - Similarly, a

Projectmust be involved in a relationship with exactly oneInstructor, so its line leading towardInstructoris a double arrow (participation and key constraint). - A

Studentcan work on at most oneProjectat a time, so its line leading toProjectis an arrow (key constraint).

Special notes:

- The

Studententity's relationships withCourseandProjectdo not indicate that aStudentmust be involved with at least oneCourseor aProject. - Similarly, the

Instructorentity's relationships withCourseandProjectdo not indicate that anInstructormust be involved with at least oneCourseor aProject.

Exercise 3 Solution

Figure A.6 shows one possible solution.

Notice the way this model handles the fact that Student and Instructor inherit from Person. The Persons table holds the basic Person information and a PersonId. The Students and Instructors tables include PersonId foreign keys to link to the corresponding basic Person data.

Note also the different approach used for the Student/Course and Instructor/Course relationships. Because a course has exactly one instructor, the Instructors and Courses tables are connected with a simple one-to-many relationship. In contrast, a course has many students, so the relationship uses an intermediate StudentCourses table to connect the two to build a many-to-many relationship. (The same reasoning applies to the Student/Project and Instructor/Project relationships.)

Finally, notice the difference between the Student/Course and Student/Project relationships. A student can be enrolled in any number of courses but at most one project, so the first is a many-to-many relationship while the second is a one-to-one relationship.

Unfortunately, this solution doesn't capture every aspect of the system either. In particular, it doesn't indicate that a Student must be enrolled in at least one Course or a Project. Similarly, it doesn't show that an Instructor must teach at least one Course or supervise at least one Project. The model also doesn't include data type, required, and other domain data. All of this should be noted in separate documents.

Exercise 4 Solution

Figure A.7 shows one possible solution.

Special notes: the semantic object model actually does a pretty good job of capturing the Mike's Trikes data. About the only item that isn't described explicitly is the manager's role. In this model, you can deduce the manager at any given time by examining the manager's shift data. If Mike needed a more explicit record of who is managing during a salesperson's shift or when a contract was sold, the model would need to be modified.

Exercise 5 Solution

Figure A.8 shows an inheritance diagram for the Person, Customer, Salesperson, and Manager entities. It also shows the relationship between the Person and Phone entities.

The Phone entity doesn't have a primary key because it doesn't make sense to search for just a Phone entity by itself. Instead, you can find the Phone entities corresponding to a Person entity. That means Phone is a weak entity, so it is surrounded by a thick rectangle and its identifying relationship is drawn with a thick arrow.

Figure A.9 shows one possible ER diagram for Mike's Trikes.

The diagram's constraints are:

Paymentis a weak entity because you look up payments via theCustomerwho made them.Paymentis drawn with a thick rectangle and a thick arrow pointing toward its identifying relationship.Shiftis also is a weak entity because you look up shift data via theSalespersonwho works the shift.Shiftis drawn with a thick rectangle and a thick arrow pointing toward its identifying relationship.- A

Customermust be involved in at least oneContract(we don't make aCustomerrecord untilCustomer Purchases Contract), so its line leading towardContractis double (a participation constraint). - A

Contractmust have exactly oneCustomerand exactly oneSalesperson,so the lines leading out ofContracttoward those other entities are double (participation constraint) and arrows (key constraint).

Special notes:

- This diagram does not emphasize the fact that a

Manageris also aSalesperson, so a manager could play the role of theSalespersonin the diagram. You could add theManager Works Shiftrelationship but that would complicate the diagram.

Exercise 6 Solution

Figure A.10 shows one possible solution.

Notice how this model builds the inheritance hierarchy. The Customers and Salespersons tables use PersonId foreign key fields to link to their corresponding Persons records. The Managers table uses a SalespersonId foreign key field to link to Salespersons records.

As usual, the model doesn't capture all of the information available about the situation. In particular, it doesn't indicate that a Customers record must be associated with at least one Contracts record. You should write down this and other facts such as field data types and domain information in separate documents.

Exercise 7 Solution

Figure A.11 shows one possible solution.

Exercise 8 Solution

Figure A.12 shows one possible solution.

CHAPTER 6: EXTRACTING BUSINESS RULES

Exercise 1 Solution

The following chart describes the Phones table.

| FIELD | REQUIRED | DATA TYPE | DOMAIN | SANITY CHECKS |

|---|---|---|---|---|

| PersonId | Yes | ID | Persons.PersonId | |

| Type | Yes | String | List: Home, Work, Cell, Fax | |

| Number | Yes | String | Phone numbers |

The following chart describes the Persons table.

| FIELD | REQUIRED | DATA TYPE | DOMAIN | SANITY CHECKS |

|---|---|---|---|---|

| PersonId | Yes | ID | Any ID | |

| FirstName | Yes | String | Any string | |

| MiddleName | No | String | Any string | |

| LastName | Yes | String | Any string | |

| Street | Yes | String | Any string | |

| City | Yes | String | Any string | |

| State | Yes | String | List: (states) | |

| Zip | Yes | String | ZIP or ZIP+4 format | Verify ZIP or ZIP+4 format |

| EmailAddress | No | String | Valid email address | Contains one @ symbol |

| MedicalNotes | ? | String | Any string | |

| IceQualified? | ? | Yes/No | Yes or No | |

| RockQualified? | ? | Yes/No | Yes or No | |

| JumpQualified? | ? | Yes/No | Yes or No |

The following chart describes the Guides table.

| FIELD | REQUIRED | DATA TYPE | DOMAIN | SANITY CHECKS |

|---|---|---|---|---|

| PersonId | Yes | ID | Persons.PersonId | |

| GuideId | Yes | ID | Any ID | |

| IceInstructor? | Yes | Yes/No | Yes or No | |

| RockInstructor? | Yes | Yes/No | Yes or No | |

| JumpInstructor? | Yes | Yes/No | Yes or No |

The following chart describes the Explorers table.

| FIELD | REQUIRED | DATA TYPE | DOMAIN | SANITY CHECKS |

|---|---|---|---|---|

| PersonId | Yes | ID | Persons.PersonId | |

| ExplorerId | Yes | ID | Any ID |

The following chart describes the Organizers table.

| FIELD | REQUIRED | DATA TYPE | DOMAIN | SANITY CHECKS |

|---|---|---|---|---|

| PersonId | Yes | ID | Persons.PersonId | |

| OrganizerId | Yes | ID | Any ID |

The following chart describes the Adventures table.

| FIELD | REQUIRED | DATA TYPE | DOMAIN | SANITY CHECKS |

|---|---|---|---|---|

| AdventureId | Yes | ID | Any ID | |

| ExplorerId | Yes | ID | Explorers.ExplorerId | |

| EmergencyContact | Yes | ID | Persons.PersonId | |

| OrganizerId | Yes | ID | Organizers.OrganizerId | |

| TrekId | Yes | ID | Treks.TrekId | |

| DateSold | Yes | Date | Any date | Before the trek's start date. Between January 1, 2000 and December 31, 2050 (or some other very early and late dates). |

| IncludeAir? | Yes | Yes/No | Yes or No | |

| IncludeEquipment? | Yes | Yes/No | Yes or No | |

| TotalPrice | Yes | Currency | Monetary amount > $0 | Price > $250 (or some minimum sane value). |

| Notes | ? | Yes/No | Yes or No |

The following chart describes the Treks table.

| FIELD | REQUIRED | DATA TYPE | DOMAIN | SANITY CHECKS |

|---|---|---|---|---|

| TrekId | Yes | ID | Any ID | |

| GuideId | Yes | ID | Guides. GuideId | |

| Description | Yes | String | Any string | Length > 100 (anything shorter couldn't say enough). |

| Locations | Yes | String | List of locations | Length > 5. |

| StartLocation | Yes | String | A location | Length > 5. |

| EndLocation | Yes | String | A location | Length > 5. |

| StartDate | Yes | Date | Any date | StartDate is on or before EndDate. Between January 1, 2000 and December 31, 2050 (or some other very early and late dates). |

| EndDate | Yes | Date | Any date | EndDate is on or after StartDate. Between January 1, 2000 and December 31, 2050 (or some other very early and late dates). |

| Price | Yes | Currency | Monetary amount > $0 | Price > $250 (or some minimum sane value). Price > some minimum price per day times the number of days (EndDate–StartDate). |

| MaxExplorers | Yes | Number | Number > 0 | Number > 0. Number < 20 (or some maximum sane amount). |

| IceRequired? | Yes | Yes/No | Yes or No | |

| RockRequired? | Yes | Yes/No | Yes or No | |

| JumpRequired? | Yes | Yes/No | Yes or No |

Exercise 2 Solution

The following list describes business rules that can be implemented in field or table checks for the Phones table:

- Type—Verify that the type is one of Home, Work, Cell, or Fax. Alternatively, if you think this list might change in the future, you could put these values in a lookup table.

- Number—Verify that the value has a valid phone number format. In the United States, you would probably want to verify that it is a 10-digit number of the format ###-###-#### and you should allow for an extension.

The following list describes business rules that can be implemented in field or table checks for the Persons table:

- FirstName/MiddleName/LastName—Verify that this combination is unique. This will prevent you from adding the same person twice, perhaps as an explorer and as an emergency contact.

It would also be natural to try to validate the EmailAddress field in a field check. Unfortunately, valid email address formats are quite complicated so this probably doesn't belong in the simpler field and table checks.

Similarly, it might be nice to look up the explorer's City, State, and Zip values to make sure they are compatible. If you build a table listing all of the possible combinations, this wouldn't be a hard check, but it would be an enormous table and it's probably not worth all the extra effort. (For bonus points, though, you could probably use a web service to perform this check over the Internet. If you don't know what a web service is, don't worry about it.)

You could also look up the State value in a list built into a field check. Although it's unlikely that the list of allowed states will change often, this list is so long that it's easier to manage in a separate lookup table rather than in a very long field check. (And who knows, Canada may eventually be officially recognized as “The Maple Leaf State.”) (Just kidding! But this does bring up a whole series of questions about non-U.S. explorers. This model ignores those issues completely. Yes, I feel guilty.)

The Explorers, Organizers, and Guides tables should verify that their records are unique. That means checking uniqueness for the Explorers table's PersonId/ExplorerId fields, the Organizers table's PersonId/OrganizerId fields, and the Guides table's PersonId/GuideId fields.

The following list describes business rules that can be implemented in field or table checks for the Adventures table:

- Table—Verify that the trek has room for this explorer.

- Table—Verify that the explorer's IceQualified?, RockQualified?, and JumpQualified? values include those required for this trek.

- ExplorerId/TrekId—Verify that this combination is unique. An explorer should not buy the same trek twice. (We're assuming that the same trip on different dates gets a different record in the Treks table and thus a different TrekId. Some people may very well want to go to the same places again.)

- EmergencyContact—Verify that the EmergencyContact is not going on the same trek listed for this Adventures record.

- IncludeAir?/Notes—If IncludeAir? is Yes, then the Notes field should include flight information such as the explorer's starting airport and meal preferences. The database can probably not verify that the notes make sense (who knows if the low-sodium meal is available on that flight?), but it can verify that the Notes entry has some minimum length if IncludeAir? is Yes.

The Adventures table would be a natural place to try to deal with the discounts for purchasing airline tickets or renting equipment. You would set TotalPrice equal to the trek's cost minus any discounts. (Note that this model doesn't have room to hold information about the equipment rented. The full model would need more order-related information along those lines.)

In any case, the discount schedule seems likely to change so it's better handled later, not in a simple field or table check.

The following list describes business rules that can be implemented in field or table checks for the Treks table:

- Table—Verify that the guide's IceQualified?, RockQualified?, JumpQualified?, IceInstructor?, RockInstructor?, and JumpInstructor? values include those required for this trek.

Exercise 3 Solution

The following list summarizes business rules that should be extracted from the database's structure:

- If you really want to validate email addresses, it would be better to do so outside of the field and table checks. You could put this code in a stored procedure, code library, or middle tier.

- If you use a lookup table to validate phone number types (Home, Work, Cell, or Fax), do so here.

- If you're going to perform a complex City/State/Zip lookup, this is where to do it. You might use a huge table or you might call a web service over the Internet.

- If you use a lookup table to validate State values, do so here.

- This is where you would calculate an adventure's TotalPrice. You would look up discount information stored in a separate table and perform the calculation. You could put this code in a stored procedure, code library, or middle-tier layer.

- The fact that one of the company's owners asked which calculation would give the customer the biggest discount if they both purchase airline tickets and rent equipment (by the way, add the two discounts and take 15 percent off to give the biggest discount) further implies that they might someday change the way they perform this calculation. That gives you more reason to extract this rule from the database so it's easier to change later.

- If the adventure's IncludeAir? value is Yes, you could try to parse the Notes field to see if the flight and meal information is present. If you really need this check, you should move the flight and meal information into separate fields so they are easier to find and examine. (I've seen several systems that make these sorts of checks on notes fields, mostly because the requirements changed after the database was built and they couldn't easily modify the tables to include new fields. Scanning notes is always hard, so mistakes are common.)

Exercise 4 Solution

The PhoneTypes table would have only one field: Type. The records would initially include Home, Work, Cell, and Fax.

The States table would have only one field: State. The records would list all of the allowed State values: AL, AK, AS,…, WY.

The DiscountParameters table would have two fields: Type and Amount. Type would give the discount type (Air or Equipment) and Amount would be the discount amount (15 or 5 percent).

An additional Parameters table would have two fields: Name and Value. This table would hold parameters used in other calculations so that they would be easier to update than they would be if they were embedded in check constraints. The following table describes the initial values in this table.

| NAME | VALUE | PURPOSE |

|---|---|---|

| MinimumDate | January 1, 2000 | Sanity check date for DateSold, StartDate, and EndDate |

| MaximumDate | December 31, 2050 | Sanity check date for DateSold, StartDate, and EndDate |

| MinimumTotalPrice | $250 | Sanity check price for an Adventure's TotalPrice |

| MinimumTrekPrice | $250 | Sanity check price for a Trek's Price |

| MinimumPricePerDay | $100 | Sanity check minimum price per day for a Trek's Price |

| MaximumExplorers | 20 | Sanity check maximum number of explorers on a trek |

CHAPTER 7: NORMALIZING DATA

Exercise 1 Solution

- The list isn't in 1NF because it violates these 1NF rules:

- Each column must have a unique name. The two Email fields have the same name.

- The order of the rows and columns doesn't matter. The order of the Email columns represents the student's preferred email address.

- Each column must have a single data type. The MajorOrSchool field holds both majors and schools.

- Each column must contain a single value. The Name field contains the student's first and last names together.

Let's take these rules one at a time.

- Each column must have a unique name. The two Email fields have the same name. You could fix this problem by giving them different names. For example, you could name them Email1 and Email2. The numbers would indicate the student's preferred email address solving the problem with Rule 2. This is the approach taken by the Phone1 and Phone2 fields so it might work, right? Not really.

There's another equally important issue here. These two Email fields represent the same kind of data with only a minor difference: priority. Aside from the student's preference of which comes first, the two fields hold identical values. How do we know you won't want to add a third email address later? You've already got two, why not three or four? Simply renaming the fields solves the duplicate name issue, but locks you in to exactly two email addresses. Not only would that prevent you from adding more email addresses, but in many cases the second field would be empty.

This is also flirting with 1NF rule number 6: Columns cannot contain repeating groups. A better solution to the multiple Email field problem would be to pull those fields into a new StudentEmails table.

While we're thinking about multiple fields holding the same kind of data, let's take a closer look at the Phone1, PhoneType1, Phone2, and PhoneType2 fields. Although they have different names, they also represent the same kind of information and you're probably even more likely to want a third phone number than you are to want a third email address. Although these fields technically don't violate 1NF (aside from Rule 6), it's probably worthwhile moving them into a new StudentPhones table.

- The order of the rows and columns doesn't matter. The order of the Email columns represents the student's preferred email address. The new StudentEmails table should have a Priority column to capture the student's preference. Similarly, the new StudentPhones table should have a Priority column to indicate the student's preference.

- Each column must have a single data type. The MajorOrSchool field holds both majors and schools. It should be split into Major and School fields. Note that students have a school whether they have a major or not, so the School field should always contain a value while the Major field may contain null.

- Each column must contain a single value. The Name field contains the student's first and last names together. Here, you need to decide whether the name value is atomic. In other words, will you ever need to do something with just a first name or just a last name? Chances are good that you'll want to at least be able to search for last names (so you can easily look up students), so you should split this field into FirstName and LastName fields.

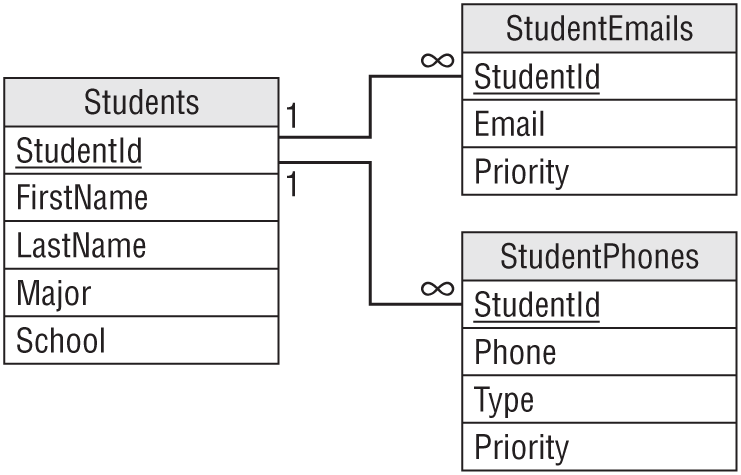

- Figure A.13 shows a relational diagram for this model.

Exercise 2 Solution

- The list isn't in 1NF because it violates these 1NF rules:

- The order of the rows and columns doesn't matter. The order of the rows represents the rows' priorities.

- Each column must contain a single value. The Items column contains a comma-separated list of values.

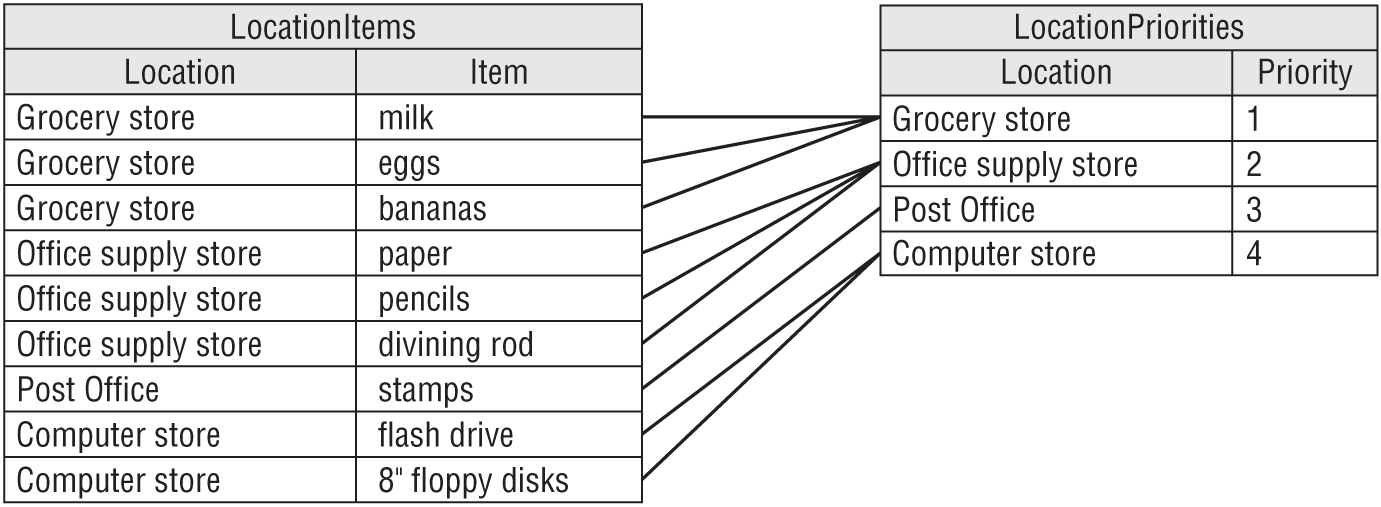

- The following table shows one way to convert the list into 1NF.

LOCATION ITEM PRIORITY Grocery store milk 1 Grocery store eggs 1 Grocery store bananas 1 Office supply store paper 2 Office supply store pencils 2 Office supply store divining rod 2 Post Office stamps 3 Computer store flash drive 4 Computer store 8” floppy disks 4 The primary key for this table is the combination Location/Item.

Exercise 3 Solution

- The list isn't in 2NF because it violates the 2NF rule:

- All of the non-key fields depend on all of the key fields. The Priority field depends on Location but not on Item. That's why its values are repeated so many times in the table.

- The solution is to pull the non-key field (Priority) out into a new table and use the key field that it depends on (Location) as the link to the original data. Figure A.14 shows the new relational design.

Figure A.15 shows the new tables holding the original data.

Exercise 4 Solution

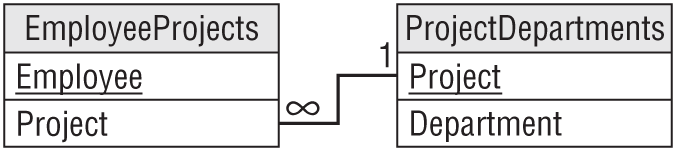

- The list isn't in 3NF because it violates the 3NF rule:

- It contains no transitive dependencies. In this table, the Department field depends on the Project. Because those fields are not key fields, this is a transitive dependency.

- The solution is to pull the dependent field (Department) out into a new table and use the field that it depends on (Project) as the link to the original data. Figure A.16 shows the new relational design.

Figure A.17 shows the new tables holding the original data.

Exercise 5 Solution

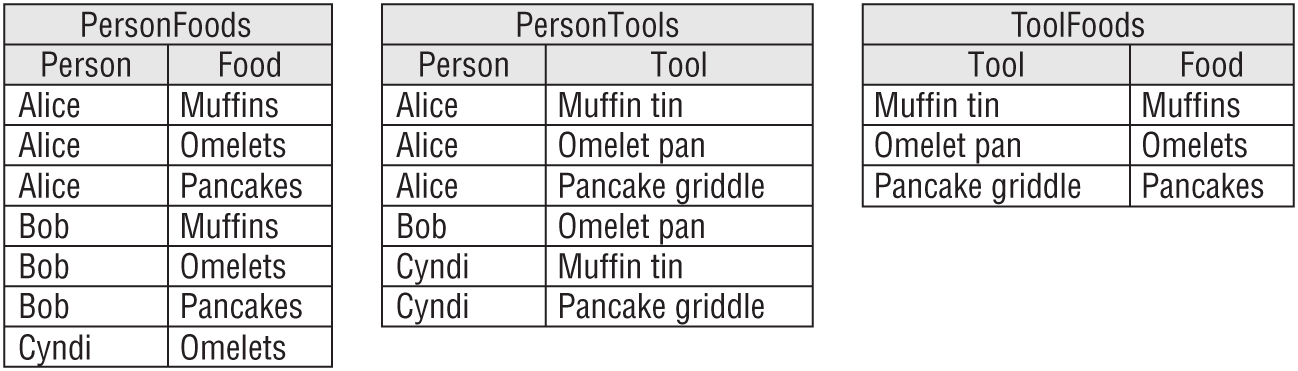

- The table isn't in 5NF because it violates the 5NF rule:

- It contains no related multivalued dependencies. In this table, Person determines Food (the type the person can make), Person determines Tools (those in the person's kitchen), and Tool partially determines Food (you can't make muffins without a muffin tin). This makes a related multivalued dependency. Figure A.18 shows an ER diagram for this model.

- It contains no related multivalued dependencies. In this table, Person determines Food (the type the person can make), Person determines Tools (those in the person's kitchen), and Tool partially determines Food (you can't make muffins without a muffin tin). This makes a related multivalued dependency. Figure A.18 shows an ER diagram for this model.

- The solution is to break the single table into three new tables that record the three different relationships: Person/Food, Person/Tool, and Tool/Food. Figure A.19 shows the new relational model.

Exercise 6 Solution

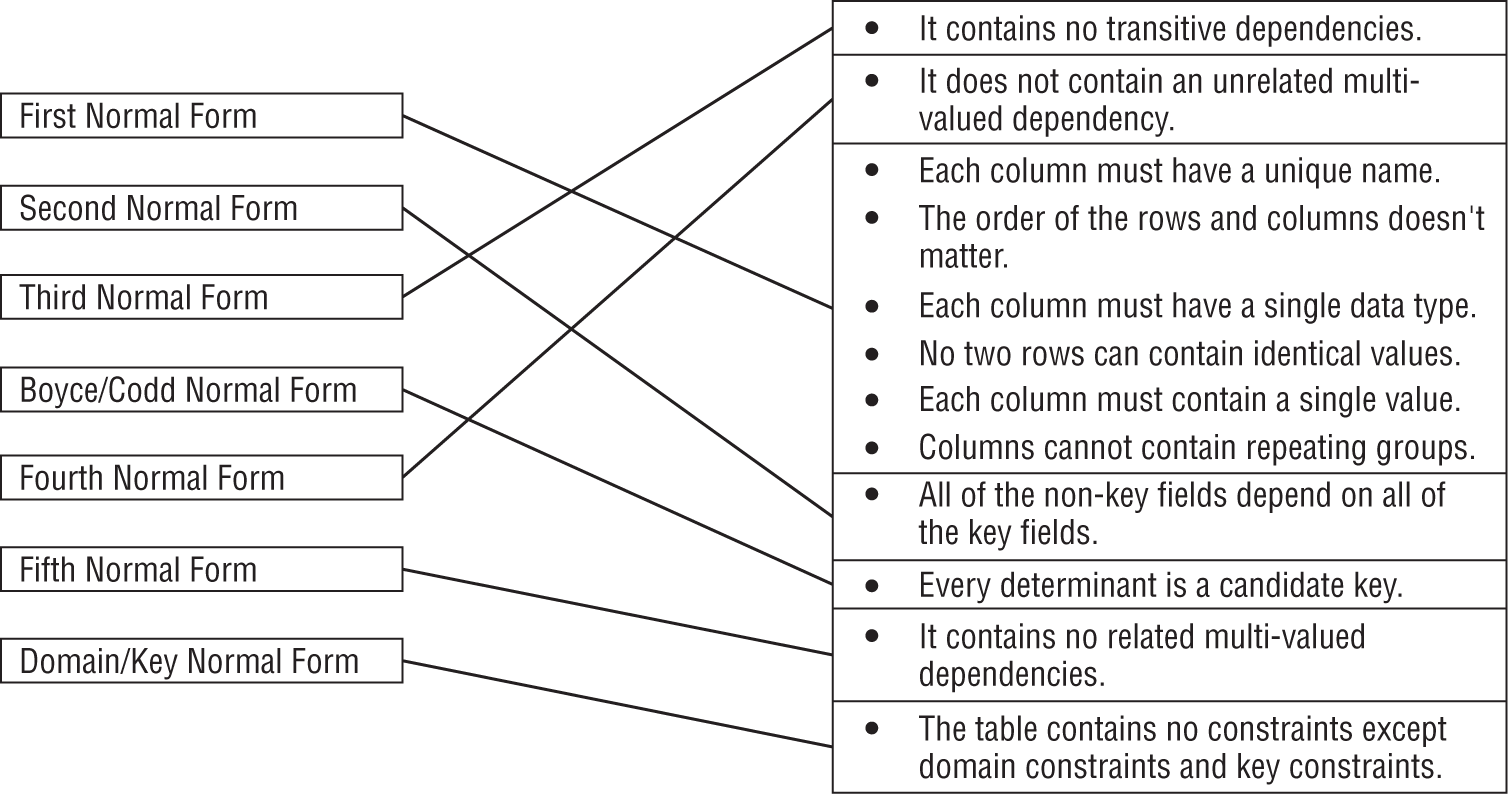

Figure A.20 shows the matching between normal forms and their rules.

CHAPTER 8: DESIGNING DATABASES TO SUPPORT SOFTWARE

Exercise 1 Solution

The following ShipClasses table contains the allowed combinations of Ship and Class.

| SHIP | CLASS |

|---|---|

| Luxury Liner | 1st Class |

| Luxury Liner | 2nd Class |

| Luxury Liner | 3rd Class |

| Luxury Liner | 4th Class |

| Luxury Liner | 5th Class |

| Schooner | 1st Class |

| Schooner | 2nd Class |

| Tuna Boat | 1st Class |

| Barge | None |

Because the validation involves two fields, this must be a two-field foreign key constraint. In the Trips table, the combination of fields Ship/Class will be a foreign key referencing the ShipClasses table's Ship/Class fields.

Exercise 2 Solution

The Students table holds information about students, so it is an object table. Similarly, the Departments table holds information about the school's departments and the Classes table holds information about classes, so they are also object tables.

The StudentClasses table links the Students and Classes tables, so it is a link table. Similarly, the DepartmentClasses table links the Departments and Classes tables, so it is also a link table.

Exercise 3 Solution

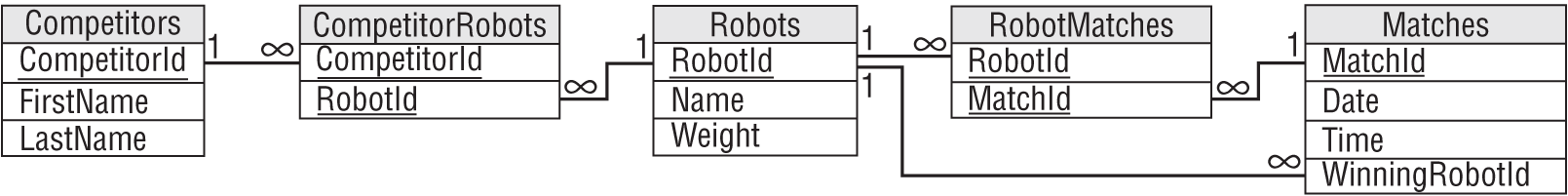

This table is trying to hold information about three different concepts: the first player, the second player, and the match they will play.

To fix it, create a Players table with fields PlayerId, Name, and Rank. Put all of the player information in this table for all of the Player1 and Player2 entries. This is an object table holding information about players.

Then create a Matches table that has fields PlayerId1, PlayerId2, and MatchTime. This is a link table that links the Players table to itself. It also holds extra information about the link: the times of the matches.

Exercise 4 Solution

The following list tells which daily values should be stored in a redundant variable and which should be calculated as needed.

- Average minutes late for an airline at a particular airport. This will require finding and averaging up to a few hundred values, so it should be possible to calculate as needed.

- Average minutes late for all airlines at a particular airport. This will require finding and averaging several hundred values. It might still be possible to perform this calculation as needed.

- Average minutes late for an airline across the country. This could require a huge number of calculations. If this is a common query (for example, if many people are asking for this information all over the country hundreds of times per day), then it might be better to store and update the information as planes take off and land rather than calculating it as needed.

- Average minutes late for all airlines across the entire country. This will require an enormous number of calculations. This could take quite a while even if the database isn't heavily used, so it might be best to store this value rather than calculating it as needed.

Of course, as long as you're going to store some of these values, you might want to just store them all so you can treat them uniformly.

CHAPTER 9: USING COMMON DESIGN PATTERNS

Exercise 1 Solution

Figure A.21 shows an ER diagram to represent Parcheesi matches.

Exercise 2 Solution

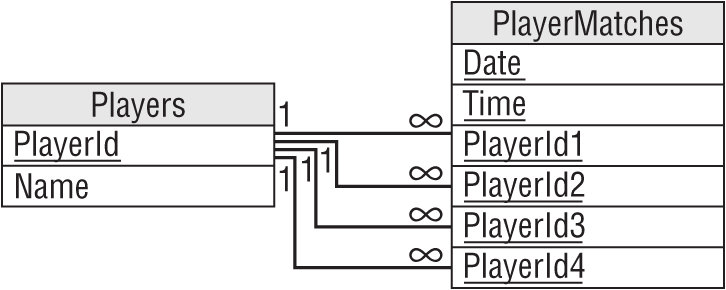

Figure A.22 shows a relational model for recording information about Parcheesi matches. PlayerId1 finished first, PlayerId2 finished second, PlayerId3 finished third, and PlayerId4 finished fourth.

Exercise 3 Solution

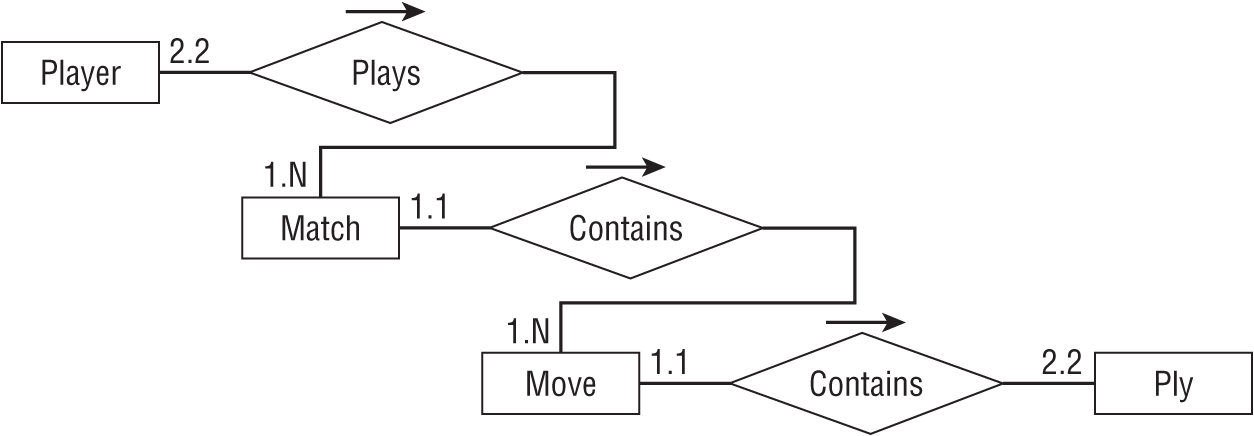

Figure A.23 shows an ER diagram that represents the relationships between Match, Move, and Ply.

Exercise 4 Solution

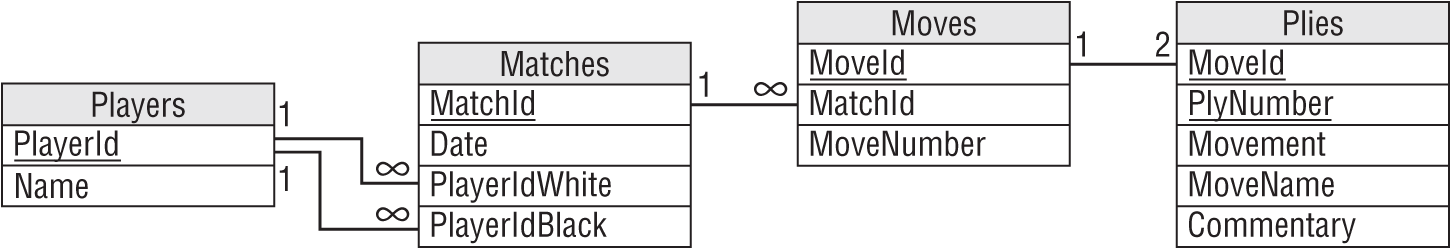

Figure A.24 shows a relational model for recording chess Match, Move, and Ply data.

You can model the one-to-two relationship between Moves and Plies by making the domain of the PlyNumber field include the values 1 and 2. You can implement that as a field-level check constraint on PlyNumber. There's no need to make this a foreign key constraint because the International Chess Federation will never change a move to include more than or fewer than two plies.

Note that the fact that MoveId/PlyNumber is the Plies table's primary key ensures that each move cannot contain two plies with the same PlyNumber.

Exercise 5 Solution

Figure A.25 shows the chess model without the Moves table.

The new diagram doesn't explicitly show that there should be exactly two plies per move. It has converted the old one-to-two relationship into a new one-to-many relationship.

The database still needs to verify that there are only two plies per move, however. You can still use a field-level check constraint to verify that the PlyNumber is either 1 or 2. The fact that MatchId/MoveNumber/PlyNumber is the Plies table's primary key ensures that any move in a given match cannot contain two plies with the same PlyNumber.

Exercise 6 Solution

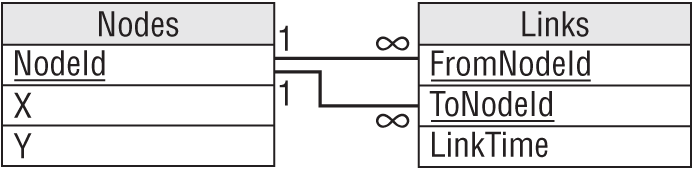

The network pattern described in the section “Network Data” earlier in this chapter uses the two tables shown in Figure A.26. The Nodes table holds node IDs and coordinates. The Links table holds link times and the IDs of the nodes that each link connects.

The pipe network exercise is slightly different because it is an undirected network. In other words, each link has the same “value” no matter which direction you cross it. The solution shown in Figure A.26 isn't perfect because the FromNodeId and ToNodeId fields imply a direction for the link. To use this design, you would either need to recognize that a Links record connecting node1 to node2 also represents a link connecting node2 with node1. Or you could insert two records for each link with the order of the node IDs switched, but that would double the number of records and all of that duplication screams out, “I'm not normalized!”

In normalization terms, FromNodeId and ToNodeId store the same kind of data. For a directed network, the two fields are not exactly the same thing, so there's some benefit to using two fields with different names to store their data and differentiate them.

Normalization purists would say that the link's node data should be moved into a new table with an extra field to tell you which was the “from” node and which was the “to” node. For a directed network, the extra layer of indirection seems like a lot of work for little benefit. In addition to making you follow extra links to find the data, you would also need to perform some new validations to ensure that every link corresponded to exactly two nodes.

However, this more normalized design works somewhat better for the undirected network that we have in this exercise because moving the link's nodes into a new table removes the implication that one is the “from” node and one is the “to” node.

You still need a way to ensure that each link has two nodes, however. One way to do that is to give the new table a NodeNumber field to indicate which node this is, make the domain of NodeNumber be the numbers 1 and 2, and make LinkId/NodeNumber the primary key. That ensures that any link can have only two nodes. This design is shown in Figure A.27.

This is the same as the normalized design for a directed network. The only difference is that in the undirected network you treat the NodeNumber field as a simple index to ensure that a link has two nodes whereas in a directed network you use that field to tell which node is “from” and which is “to.”

Exercise 7 Solution

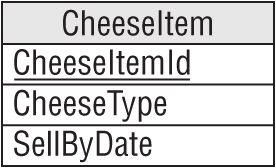

This is fairly straightforward temporal data. Figure A.28 shows a model to hold cheese item data. A CheeseItem record would probably hold other information such as the quantity of cheese purchased, the lot number, and so forth.

Exercise 8 Solution

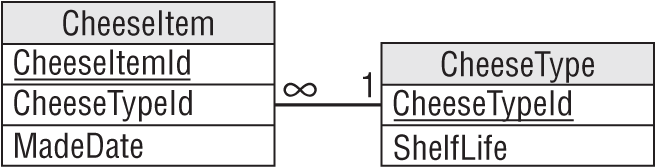

Figure A.29 shows the new model to hold cheese item data. Instead of a SellByDate, this version stores the date when the cheese was made and a link leading to the shelf life. The CheeseType record could also store other cheese data such as the type of milk used (cow, buffalo, goat, yak, horse, and so forth), the location where it was made, and a description (a firm, fruity and nutty cheese reminiscent of locusts and with a hint of lichen).

In the model, the CheeseItem table is the same size as the model for Exercise 7 and there's a new table, so you could ask if this is an improvement. In terms of looking up expiration data for a particular cheese item, it is not. It takes more space and requires an extra lookup plus a calculation (MadeDate + ShelfLife) to find the cheese item's sell-by date.

However, this model provides more consistency and avoids update anomalies because it ensures that each item of a particular kind of cheese uses the same shelf life.

Exercise 9 Solution

The following table shows the cost per month for the different plans.

| PLAN | STORAGE COST (PER MONTH) | RETRIEVAL COST (PER MONTH) | TOTAL COST (PER MONTH) |

|---|---|---|---|

| Standard | $0.0200 * 10,000 GB = $200 | $0.00 * 1,000 GB = $0 | $200 |

| Nearline | $0.0100 * 10,000 GB = $100 | $0.01 * 1,000 GB = $10 | $110 |

| Coldline | $0.0040 * 10,000 GB = $40 | $0.02 * 1,000 GB = $20 | $60 |

| Archive | $0.0012 * 10,000 GB = $12 | $0.05 * 1,000 GB = $50 | $62 |

In this example, the Coldline plan is the least expensive.

CHAPTER 10: AVOIDING COMMON DESIGN PITFALLS

Exercise 1 Solution

This table has a lot of problems. Specific problems include:

- The Name field includes two logical fields, FirstName and LastName, so the table isn't even in first normal form.

- Your client plans to look up the state from the Zip value. Why doesn't he also look up the city? The table should be changed to either also look up the city or have separate City, State, and Zip fields (the second option is a lot easier, although less normalized).

- The two phone number fields are not differentiated. In other words, how do you know which number is a home phone, cellphone, or work phone? Which is the daytime number and which is the evening number? These fields should be moved into a Phones table with an additional field indicating the type of the phone number.

- Having at most two phone numbers is an arbitrary limit. Someday, a customer will probably want to leave more than two numbers. When you create the Phones table, you should not restrict a customer to two entries.

- The Address field has a bad name because Address implies that the field contains an entire address when, in fact, it only contains the street information. This field's name should be something like Street or StreetAddress.

- The Stuff field has a terrible name because “Stuff” could mean just about anything! This field's name should be changed to something like Interests.

- The freshly renamed Interests field lists more than one value. (The fact that the name is plural is a hint.) This field's data should be moved into a new CustomerInterests table. You should also make an Interests lookup table to list the allowed values so CustomerInterests can use it as a foreign key constraint.

- Planning for future changes, you might also suggest adding an Email field.

Your client's assumption that you can just build Orders and other tables implies that the plan isn't very well-thought-out. This project definitely needs a lot more planning and a complete database design before you start slapping tables together. This kind of homegrown project also rarely includes documentation of any kind, so you'll need to do a lot of documentation work early in the project. (Though this type of project may provide many hours of lucrative consulting later for debugging, it's the frustrating kind of consulting.)

Exercise 2 Solution

Because this client is opening a new store, you should wonder if they will grow even more in the next few years. Flying cars are also a brand-new technology, and if it they become as popular as The Jetsons cartoon indicates, demand for rentals could skyrocket.

This database will need extra testing at very high loads to verify that the database design can meet ever-increasing performance demands.

In contrast, a well-established party rental store probably won't experience explosive growth in the near future because it's been around for a while and it isn't selling new technology. You still need to thoroughly test their application, but your load testing doesn't need to run at loads quite as far beyond the current level.

Exercise 3 Solution

This table is hyper-normalized. Although you can break a street address into name, number, prefix, and so forth, there are very few applications where that is necessary. If you will only ever need to use the address information to send mail to someone, then you can combine all of this information in a single Street field. You can even include the apartment or suite number.

Similarly, you can probably combine the Zip and PlusFour fields into a single Zip field. If you're only going to use the ZIP Code to write addresses, there's no need to use separate fields.

The Floor and Neighborhood information is also probably not useful. (Although if your business is renting apartments, you might want to be able to search for ground floor apartments or apartments within a certain neighborhood. In that case, these fields might make sense.)

Here's the new list of fields:

- CustomerId

- Street

- City

- State

- Zip

So much simpler!

Exercise 4 Solution

In this model, the Phones table is fairly unconstrained because it allows a person to have any number of any type of phone number. All the fields are required. Some other validations that you could build into this table include:

| FIELD | CONSTRAINT | IMPLEMENTATION |

|---|---|---|

| PersonId | Exists | Foreign key match to Persons.PersonId. |

| Type | Enumerated value | Foreign key match to new PhoneTypes table. |

| Number | Format | Let the database verify that the value has format ###-###-####. |

In the Persons table, every field except MiddleName should be required. The table can implement the following constraints:

| FIELD | CONSTRAINT | IMPLEMENTATION |

|---|---|---|

| State | Enumerated value | Foreign key match to new States table. |

| Zip | Format | Let the database verify that the value has format #### or ####-####. |

All of the fields in the Courses and Projects tables should be required, although you might want to allow a blank InstructorId and DaysAndTime so you can create a course before you're ready to schedule it. This table should also have a foreign key constraint requiring that the InstructorId exist in the Instructors table.

The Students and Instructors tables should require all fields. They should also have a foreign key constraint requiring that their PersonId fields have values that exist in the Persons table.

StudentCourses and StudentProjects are linking tables used to implement many-to-many relationships. Their fields should be required and foreign key constraints should verify that their values exist in the corresponding tables.

CourseResults and ProjectResults are also linking tables that implement many-to-many relationships. They should require that all fields and foreign key constraints should verify that their ID values exist in the corresponding tables.

CourseResults and ProjectResults should also use constraints to verify that the Grade fields contain acceptable values. If Grade is numeric, then a check constraint should verify that it is between 0 and 100 (or whatever scale the school uses). If the Grade value includes A+, A, A-, B+, and so forth, then the tables should use foreign key constraints to verify that the Grade exists in a new PossibleGrades table.

Finally, you could check that the Date fields in the CourseResults and ProjectResults tables come after the corresponding student's enrollment date.

CHAPTER 11: DEFINING USER NEEDS AND REQUIREMENTS

Exercise 1 Solution

The following table summarizes the Course entity's fields.

| FIELD | REQUIRED? | DATA TYPE | DOMAIN |

|---|---|---|---|

| Title | Yes | String | Any string |

| Description | Yes | String | Any string |

| MaximumParticipants | Yes | Integer | Greater than 0 |

| Price | Yes | Currency | Greater than 0 |

| AnimalType | Yes | String | One of: Cat, Dog, Bird, Bat, and so on |

| Dates | Yes | Dates | List of dates |

| Time | Yes | Time | Between 8 a.m. and 11 p.m. |

| Location | Yes | String | One of: Room 1, Room 2, yard, arena, and so on |

| Trainer | No | Reference | The Employee teaching the course |

| Students | No | Reference | Customers table |

Because the Dates and Time fields are required, we cannot create a course until it is scheduled.

A more complex validation for new records should verify that there are no other courses scheduled for the same location with overlapping dates and times.