Chapter 6

Domain Name System and Load Balancing

THE AWS CERTIFIED ADVANCED NETWORKING – SPECIALTY EXAM OBJECTIVES COVERED IN THIS CHAPTER MAY INCLUDE, BUT ARE NOT LIMITED TO, THE FOLLOWING:

- Domain 2.0: Design and Implement AWS Networks

2.4 Determine network requirements for a specialized workload

2.5 Derive an appropriate architecture based on customer and application requirements

- Domain 4.0: Configure Network Integration with Application Services

4.1 Leverage the capabilities of Amazon Route 53

4.2 Evaluate DNS solutions in a hybrid IT architecture

4.4 Given a scenario, determine an appropriate load balancing strategy within the AWS ecosystem

4.5 Determine a content distribution strategy to optimize for performance

Introduction to Domain Name System and Load Balancing

Amazon Route 53 is a highly available and scalable cloud Domain Name System (DNS) service. It is designed to give you the ability to route end users to Internet applications by translating domain names such as www.example.com into numeric IP addresses such as 192.0.2.122. Elastic Load Balancing gives you the capability to distribute incoming application traffic automatically across multiple targets, such as Amazon Elastic Compute Cloud (Amazon EC2) instances, containers, and IP addresses. Amazon Route 53 and Elastic Load Balancing can work together to provide you with a scalable and fault-tolerant architecture for your applications.

This chapter reviews the core components of DNS, comparing and contrasting Amazon EC2 DNS with Amazon Route 53. We review the components that make up Amazon Route 53, including the architectural features that make Amazon Route 53 so reliable. We then dive into Elastic Load Balancing and the three types of load balancers that are available in AWS.

The exercises at the end of this chapter reinforce how to use Amazon Route 53 and Elastic Load Balancing. The exercises help provide an understanding of the building blocks that make up Amazon Route 53 and Elastic Load Balancing, as well as their advanced use cases. You are expected to have a strong understanding of these concepts to pass the exam.

Domain Name System

To begin our discussion on Domain Name System (DNS), we’ll start with a simple analogy. The IP address of your website is like a phone number in the contacts on your mobile device. Without a name identifier such as Harry or Sue, it would be difficult to distinguish between phone numbers or even remember which number is Harry’s or Sue’s.

Similarly, when a visitor wants to access your website, their computer takes the domain name typed in (www.amazon.com, for example) and looks up the IP address for that domain using DNS.

DNS is a globally distributed service that is foundational to the way people use the Internet. It uses a hierarchical name structure, with each level separated with a dot (.). Consider the domain names www.amazon.com and aws.amazon.com. In both of these examples, com is the Top-Level Domain (TLD) and amazon is the Second-Level Domain (SLD). There can be any number of lower levels (for example, www or aws) below the SLD.
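The hierarchy described above can be illustrated with a minimal Python sketch. The function name `split_domain` is hypothetical, and the sketch naively assumes that the rightmost label is the TLD and the next label is the SLD, which does not account for multi-label suffixes used under some country-code TLDs.

```python
def split_domain(name: str) -> dict:
    """Split a dotted domain name into the levels of the DNS hierarchy."""
    # Drop an optional trailing root dot, then split on the level separator.
    labels = name.rstrip(".").lower().split(".")
    return {
        "tld": labels[-1],                               # rightmost label
        "sld": labels[-2] if len(labels) > 1 else None,  # label left of the TLD
        "lower_levels": labels[:-2],                     # anything below the SLD
    }


for name in ("www.amazon.com", "aws.amazon.com"):
    print(name, "->", split_domain(name))
```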

Computers use the DNS hierarchy to translate human-readable names (for example, www.amazon.com) into the IP addresses (such as 192.0.2.1) that computers use to connect to one another. Every time you use a domain name, a DNS service must translate the name into the corresponding IP address. In short, if you have used the Internet, you’ve used DNS.

Amazon Route 53 is an authoritative DNS system. An authoritative DNS system provides a direct update mechanism that developers use to manage their public DNS names. It then answers DNS queries, translating domain names into IP addresses so that computers can communicate with each other.

Domain Name System Concepts

This section of the chapter defines DNS terms, describes how DNS works, and explains commonly used record types.

Top-Level Domains

A Top-Level Domain (TLD) is the most general part of the domain. The TLD is the farthest portion to the right (as separated by a dot).

TLDs are at the top of the hierarchy in terms of domain names. Certain parties are given management control over TLDs by the Internet Corporation for Assigned Names and Numbers (ICANN). These parties can then distribute domain names under the TLD, usually through a domain registrar. These domains are registered with the Network Information Center (InterNIC), a service of ICANN that enforces the uniqueness of domain names across the Internet. Each domain name becomes registered in a central database, known as the WHOIS database.

There are two types of top-level domains:

- Generic TLDs are global in nature and recognized across the globe. Some of the more common are .com, .net, and .org. Generic TLDs also include specialty domains, such as .auction, .coffee, and .cloud. Note that not all generic TLDs support Internationalized Domain Names (IDNs). An IDN is a domain name that includes non-ASCII characters, such as accented Latin, Chinese, or Russian characters. If you need IDNs, check whether the TLD supports them before registering a domain with Amazon Route 53.

- Geographic TLDs are associated with areas such as countries or cities and include country-specific extensions, known as country code Top-Level Domains (ccTLDs). Examples include .be (Belgium), .in (India), and .mx (Mexico). The naming and registration rules vary for each ccTLD.

Domain Names, Subdomains, and Hosts

A domain name is the human-friendly name associated with an Internet resource. For instance, amazon.com is a domain name.

A subdomain is a domain name within a larger hierarchy. Every domain name except the root domain name is a subdomain: com is a subdomain of the root domain, amazon.com is a subdomain of com, and aws.amazon.com is a subdomain of amazon.com associated with systems operated by AWS.

The root domain does not have a name. Depending on the application or protocol, it is represented either as an empty string or a single dot (.). A host is a label within a subdomain that has one or more IP addresses associated with it.

IP Addresses

An IP address is a number that is assigned to a host. Each IP address must be unique within its network. For public websites, this network is the entire Internet. IP addresses will be in either an IPv4 format, such as 192.0.2.44, or IPv6 format, such as 2001:0db8:85a3:0000:0000:abcd:0001:2345. DNS provides IPv4 addresses in response to a type A query and IPv6 addresses in response to a type AAAA query. Amazon Route 53 supports both type A and type AAAA resource record sets.
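As a small illustration of the relationship between address formats and record types, the following sketch uses Python's standard `ipaddress` module to decide which record type would carry a given address. The helper name `record_type_for` is an assumption made for this example.

```python
import ipaddress


def record_type_for(address: str) -> str:
    """Return the DNS record type that carries this IP address."""
    ip = ipaddress.ip_address(address)  # raises ValueError for malformed input
    return "A" if ip.version == 4 else "AAAA"


print(record_type_for("192.0.2.44"))
print(record_type_for("2001:0db8:85a3:0000:0000:abcd:0001:2345"))
```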

Fully Qualified Domain Names

A Fully Qualified Domain Name (FQDN), also referred to as an absolute domain name, specifies a domain or host in relation to the absolute root of the DNS.

This means that the FQDN specifies each parent domain, including the TLD. A proper FQDN ends with a dot, indicating the root of the DNS hierarchy. For example, mail.amazon.com. is an FQDN. Sometimes, software that calls for an FQDN does not require the ending dot, but it is required to conform to ICANN standards.

In Figure 6.1, you can see that the entire string is the FQDN, which is composed of the domain name, subdomain, root, TLD, SLD, and host.

FIGURE 6.1 FQDN components
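The trailing-dot convention and the chain of parent domains can be sketched in a few lines of Python. Both helper names (`to_fqdn` and `parent_domains`) are hypothetical and exist only for illustration.

```python
def to_fqdn(name: str) -> str:
    """Return the name with exactly one trailing dot (the DNS root)."""
    return name.rstrip(".") + "."


def parent_domains(name: str) -> list:
    """List the name itself and every parent domain up to the root."""
    labels = to_fqdn(name)[:-1].split(".")
    chain = [".".join(labels[i:]) + "." for i in range(len(labels))]
    return chain + ["."]  # the unnamed root is written as a single dot


print(to_fqdn("mail.amazon.com"))
print(parent_domains("mail.amazon.com"))
```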

Name Servers

Name Servers are servers in the DNS that translate domain names into IP addresses in response to queries. Name Servers do most of the work in the DNS. Because the total volume of domain queries is too large for any one server, each server may redirect requests to other Name Servers or delegate responsibility for a subset of the subdomains for which it is responsible.

Name Servers can be authoritative or non-authoritative for a given domain. Authoritative servers provide answers to queries about domains under their control. Non-authoritative servers point to other servers or serve cached copies of other Name Servers’ data. In almost all cases, clients first connect to non-authoritative Name Servers, which keep cached copies of previous lookups to authoritative Name Servers. Thus the entire DNS is a globally distributed, cached database. This design makes it enormously scalable, but it can also cause problems when cached data becomes stale or is not properly refreshed.

Zones

A zone is a container that holds information about how you want to route traffic on the Internet for a domain (example.com) and its subdomains (apex.example.com and acme.example.com).

If you have an existing domain and zone and want to create subdomain records, you can do either of the following:

- Create the records you need inside the existing example.com zone.

- Create a new zone to hold the records associated with your subdomain, as well as a delegation set in the parent zone that refers clients to the subdomain hosted zone.

The first method requires fewer zones and fewer queries, while the second method offers more flexibility when managing zones (for example, restricting who may edit the different zones). In addition to containing hosts or subdomains (additional zones), zones themselves can also have IP addresses. These IP addresses are called “zone root” or “zone apex” addresses, and they enable scenarios such as the use of http://example.com in a user’s browser. Compared to host names (like www.example.com), they also create some special challenges; for example, the DNS standards do not allow a CNAME record at the zone apex.

Domain Name Registrars

Because all of the names in a given domain and domains themselves must be unique, there needs to be a way to organize them so that domains are not duplicated. This is where domain name registrars come in. A domain name registrar is an organization or commercial entity that manages the reservation of Internet domain names. A domain name registrar must be accredited by the Generic TLD registries and ccTLD registries for which it provides domain name registration services. The management is done in accordance with the guidelines of the designated domain name registries.

Steps Involved in DNS Resolution

When you type a domain name into your browser, your computer first checks its hosts file to see if it has that domain name stored locally. If it does not, it will check its DNS cache to see if you have visited the site before. If it still does not have a record of that domain name, it will contact a DNS server to resolve the domain name.

DNS is, at its core, a hierarchical system. At the top of this system are root servers. ICANN delegates the control of these servers to various organizations.

As of this writing, there are 13 root servers in operation. Root servers handle requests for information about TLDs. When a request comes in for a domain that a lower-level Name Server cannot resolve, a query is made to the root server for the authoritative Name Servers for that domain.

In order to handle the incredible volume of resolutions that happen every day, these root servers are mirrored and replicated. When requests are made to a certain root server, the request will be routed to the nearest mirror of that root server.

The root servers will not actually know where the domain is hosted. They will, however, be able to direct the requester to the Name Servers that handle the specifically-requested TLD.

For example, if a type A (IPv4 address) request for www.wikipedia.org is made to the root server, it will check its resource records for a listing that matches that domain name, but it will not find one in its records. It will instead find a record for the .org TLD and refer the requester to the Name Servers responsible for .org addresses, providing their domain names and IP addresses.

TLD Servers

After a root server returns the IP addresses of the servers responsible for the TLD of a request, the requester then sends a new request to one of those addresses.

To continue the example from the previous section, the requesting entity would send a request to the Name Server responsible for knowing about .org domains to see if it can locate www.wikipedia.org.

Once again, when the .org TLD Name Server searches its zone records for a www.wikipedia.org listing, it will not find one in its records. It will, however, find records for the Name Servers responsible for wikipedia.org and return their names and IP addresses.

Domain Level Name Servers

At this point, the requester has the IP address of a Name Server that is authoritative for the wikipedia.org domain. It sends a new request to one or more of the Name Servers asking, once again, if it can resolve www.wikipedia.org.

The Name Server knows that it is authoritative for the wikipedia.org zone and checks its records for a host or subdomain matching the www label within this zone. If found, the Name Server returns the actual IPv4 address or addresses to the requester.

Resolving Name Servers

In the previous scenario, we referred to a requester. What is the requester in this situation?

In almost all cases, the requester will be what is called a resolving Name Server, which is a server that is configured to ask other servers questions. Its primary function is to act as an intermediary for a user, caching previous query results to improve speed and providing the addresses of appropriate root servers to resolve new requests.

Users will usually have a few resolving Name Servers configured on their computer systems. The resolving Name Servers are typically provided by an Internet Service Provider (ISP) or other organization. There are also several public resolving DNS servers that you can query. These can be configured in your computer either automatically or manually.

When you type a URL in the address bar of your browser, your computer first looks to see if it can find the resource’s location locally. It checks the hosts file on the computer and any locally stored cache. If not found, it then sends the request to the resolving Name Server and waits to receive the IP address of the resource.

The resolving Name Server then checks its cache for the answer. If it does not find it, it goes through the steps outlined in the previous sections.

Resolving Name Servers take care of the requesting process for the end user transparently. The clients simply have to know to ask the resolving Name Servers where a resource is located, and the resolving Name Servers will do the work to investigate and return the final answer.
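The resolution steps described in the preceding sections can be sketched as a toy model in Python. This is not a real resolver: the servers are in-memory dictionaries, the server names (`tld-org`, `ns-wikipedia`) are made up, and the address 198.51.100.7 is a documentation placeholder rather than a real Wikipedia address.

```python
# Toy data standing in for the DNS hierarchy (all values are hypothetical).
ROOT = {"org": "tld-org"}  # the root refers .org queries to the TLD servers
SERVERS = {
    "tld-org": {"wikipedia.org": "ns-wikipedia"},           # TLD server: referral
    "ns-wikipedia": {"www.wikipedia.org": "198.51.100.7"},  # authoritative answer
}


def resolve(name: str, cache: dict):
    """Resolve a name the way a resolving Name Server would, step by step."""
    if name in cache:                           # answer from a previous lookup
        return cache[name], ["cache"]
    labels = name.split(".")
    path = ["root"]
    tld_server = ROOT[labels[-1]]               # step 1: ask a root server
    path.append(tld_server)
    domain = ".".join(labels[-2:])
    auth_server = SERVERS[tld_server][domain]   # step 2: ask the TLD server
    path.append(auth_server)
    address = SERVERS[auth_server][name]        # step 3: ask the authoritative server
    cache[name] = address                       # remember the answer
    return address, path


cache = {}
print(resolve("www.wikipedia.org", cache))  # walks root, TLD, authoritative
print(resolve("www.wikipedia.org", cache))  # second lookup is served from cache
```

A real resolving Name Server also honors the TTL of each cached record and handles failures and retries, which this sketch omits.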

Record Types

Each zone file contains resource records. In its simplest form, a resource record is a single mapping between a resource and a name. These can map a domain name to an IP address or define resources for the domain, such as Name Servers or mail servers. This section describes each record type.

Start of Authority Record

A Start of Authority (SOA) record is mandatory in all zones, and it identifies the base DNS information about the domain. Each zone contains a single SOA record.

The SOA record stores information about the following:

- The name of the DNS server for that zone

- The administrator of the zone

- The current version of the data file

- The number of seconds that a secondary Name Server should wait before checking for updates

- The number of seconds that a secondary Name Server should wait before retrying a failed zone transfer

- The maximum number of seconds that a secondary Name Server can use data before it must either be refreshed or expire

- The default Time To Live (TTL) value (in seconds) for resource records in the zone
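To make the fields listed above concrete, the following sketch parses the textual form of an SOA record into named fields. The field order (primary Name Server, administrator, serial, refresh, retry, expire, minimum TTL) follows the standard zone file presentation; the sample record values and the names `SOA` and `parse_soa` are hypothetical.

```python
from typing import NamedTuple


class SOA(NamedTuple):
    primary_ns: str   # name of the DNS server for the zone
    admin: str        # administrator mailbox, written with dots
    serial: int       # current version of the data file
    refresh: int      # seconds a secondary waits before checking for updates
    retry: int        # seconds a secondary waits before retrying a failed transfer
    expire: int       # seconds a secondary may use data before it expires
    minimum_ttl: int  # default TTL (in seconds) for records in the zone


def parse_soa(rdata: str) -> SOA:
    """Parse 'mname rname serial refresh retry expire minimum' into an SOA tuple."""
    primary_ns, admin, *numbers = rdata.split()
    return SOA(primary_ns, admin, *(int(n) for n in numbers))


soa = parse_soa("ns1.example.com. hostmaster.example.com. 2024010101 7200 900 1209600 86400")
print(soa)
```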

A and AAAA

Both types of address records map a host to an IP address. A records are used to map a host to an IPv4 IP address, while AAAA records are used to map a host to an IPv6 address.

Certificate Authority Authorization

A Certificate Authority Authorization (CAA) record lets you specify which Certificate Authorities (CAs) are allowed to issue certificates for a domain or subdomain. Creating a CAA record helps prevent the wrong CAs from issuing certificates for your domains.

Canonical Name

A Canonical Name (CNAME) record is a type of resource record in the DNS that defines an alias for a host. The CNAME record contains a domain name that must have an A or AAAA record.

Mail Exchange

Mail Exchange (MX) records are used to define the mail servers used for a domain and ensure that email messages are routed correctly. The MX record should point to a host defined by an A or AAAA record and not one defined by a CNAME. Pointing an MX record at a CNAME may be tolerated by some clients, but it is not allowed by the DNS standards.

Name Authority Pointer

A Name Authority Pointer (NAPTR) is a resource record set type that is used by Dynamic Delegation Discovery System (DDDS) applications to convert one value to another or to replace one value with another. For example, one common use is to convert phone numbers into Session Initiation Protocol (SIP) Uniform Resource Identifiers (URIs).

Name Server

Name Server (NS) records are used by TLD servers to direct traffic to the DNS servers that contain the authoritative DNS records.

Pointer

A Pointer (PTR) record is essentially the reverse of an A record. PTR records map an IP address to a DNS name, and they are mainly used to check if the server name is associated with the IP address from where the connection was initiated.
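As an illustration, the name that a PTR lookup queries can be derived from an IP address with Python's standard `ipaddress` module. The helper name `ptr_name` is an assumption for this sketch.

```python
import ipaddress


def ptr_name(address: str) -> str:
    """Return the reverse-lookup name queried for a PTR record."""
    # reverse_pointer yields e.g. '44.2.0.192.in-addr.arpa' for IPv4
    # and a nibble-by-nibble name under ip6.arpa for IPv6.
    return ipaddress.ip_address(address).reverse_pointer + "."


print(ptr_name("192.0.2.44"))
print(ptr_name("2001:db8::1"))
```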

Sender Policy Framework

Sender Policy Framework (SPF) records are used by mail servers to combat spam. An SPF record tells a mail server which IP addresses are authorized to send email from your domain name. For example, if you wanted to ensure that only your mail server sends emails from your company’s domain (example.com), you would create an SPF record with the IP address of your mail server. That way, an email sent from your domain (for example, from an address ending in @example.com) would need to have an originating IP address of your company mail server in order to be accepted. This prevents people from spoofing emails from your domain. (SPF records are actually not a distinct record type in the DNS specification and system, but rather a defined way of using TXT records; see next item.)

Text

Text (TXT) records are used to hold text information. This record provides the ability to associate some arbitrary and unformatted text with a host or other name, such as human readable information about a server, network, data center, and other accounting information.

This record is sometimes used to provide programmatic data about a name that is not covered by other record types. For example, domain control is sometimes “proven” to a third party by setting a TXT record to a challenge value provided by the third party.

Service

A Service (SRV) record is a specification of data defining the location (the hostname and port number) of servers for specified services. The idea behind SRV is that, given a domain name (example.com) and a service name (web HTTP) which runs on a protocol (Transmission Control Protocol [TCP]), a DNS query may be issued to find the hostname that provides such a service for the domain, which may or may not be within the domain.
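A brief sketch of how an SRV lookup is put together follows. It assumes the conventional `_service._protocol.domain` query name and the standard record data layout of priority, weight, port, and target; the helper names and the sample record are hypothetical.

```python
def srv_query_name(service: str, protocol: str, domain: str) -> str:
    """Build the name to query for an SRV record, e.g. _sip._tcp.example.com."""
    return f"_{service}._{protocol}.{domain.rstrip('.')}."


def parse_srv(rdata: str) -> dict:
    """Parse SRV record data of the form 'priority weight port target'."""
    priority, weight, port, target = rdata.split()
    return {
        "priority": int(priority),
        "weight": int(weight),
        "port": int(port),
        "target": target,  # hostname providing the service; may be outside the domain
    }


print(srv_query_name("sip", "tcp", "example.com"))
print(parse_srv("10 60 5060 sipserver.example.net."))
```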

Amazon EC2 DNS Service

When you launch an Amazon EC2 instance within an Amazon Virtual Private Cloud (Amazon VPC), the Amazon EC2 instance is provided with a private IP address and, optionally, a public IP address. The private IP address resides within the Classless Inter-Domain Routing (CIDR) address block of the VPC. The public IP address, if assigned, is from an Amazon-owned CIDR block and is associated with an instance via a static one-to-one Network Address Translation (NAT) at the Internet gateway. This is illustrated in Figure 6.2.

FIGURE 6.2 NAT at the VPC Internet gateway

DNS names are provided for both the private and public IP addresses. Internal DNS hostnames for Amazon EC2 are in the form ip-private-ipv4-address.ec2.internal for the us-east-1 region and ip-private-ipv4-address.region.compute.internal for all other regions (where private-ipv4-address is the private IPv4 address of the instance with dots replaced by dashes, and region is replaced by the API name of the AWS region, for example, “us-west-2”). The private DNS hostname of an Amazon EC2 instance can be used for communication between instances within the VPC. This DNS name is not, however, resolvable outside of the instance’s network.

External DNS hostnames for EC2 are in the form ec2-public-ipv4-address.compute-1.amazonaws.com for the us-east-1 region and ec2-public-ipv4-address.region.compute.amazonaws.com for other regions. Outside of the instance’s VPC, the external hostname resolves to the public IPv4 address of the instance. Within the VPC and on peered VPCs, the external hostname resolves to the private IPv4 address of the instance.
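The internal hostname pattern can be expressed as a short helper. The function name `private_dns_name` is hypothetical; the sketch only reproduces the naming pattern described above and does not query any AWS API.

```python
def private_dns_name(private_ip: str, region: str) -> str:
    """Build the internal EC2 DNS hostname for a private IPv4 address."""
    host = "ip-" + private_ip.replace(".", "-")  # dots become dashes
    if region == "us-east-1":
        return f"{host}.ec2.internal"            # us-east-1 uses a shorter suffix
    return f"{host}.{region}.compute.internal"


print(private_dns_name("10.0.0.5", "us-east-1"))
print(private_dns_name("10.0.0.5", "us-west-2"))
```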

Amazon VPCs have configurable attributes, as shown in Table 6.1, which control the behavior of the EC2 DNS service.

TABLE 6.1 Amazon VPC DNS Attributes

| Attribute | Description |
| --- | --- |
| enableDnsHostnames | Indicates whether the instances launched in the VPC will receive a public DNS hostname. If this attribute is true, instances in the VPC will receive public DNS hostnames, but only if the enableDnsSupport attribute is also set to true, and only if the instance has a public IP address assigned to it. |
| enableDnsSupport | Indicates whether the DNS resolution is supported for the VPC. If this attribute is false, the Amazon-provided Amazon EC2 DNS service in the VPC that resolves public DNS hostnames to IP addresses is not enabled. If this attribute is true, queries to the Amazon-provided DNS server at the 169.254.169.253 IP address, or the reserved IP address at the base of the VPC IPv4 network range plus two, will succeed. |
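The "base of the VPC IPv4 network range plus two" rule can be worked out with Python's standard `ipaddress` module. The function name `vpc_resolver_addresses` and the sample CIDR block are assumptions for illustration.

```python
import ipaddress

LINK_LOCAL_RESOLVER = "169.254.169.253"  # Amazon-provided DNS server address


def vpc_resolver_addresses(vpc_cidr: str) -> list:
    """Return the addresses at which the Amazon-provided DNS server answers."""
    network = ipaddress.ip_network(vpc_cidr)
    base_plus_two = network.network_address + 2  # reserved address: base + 2
    return [LINK_LOCAL_RESOLVER, str(base_plus_two)]


print(vpc_resolver_addresses("10.0.0.0/16"))
```

For a VPC with the CIDR block 10.0.0.0/16, the second address works out to 10.0.0.2.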

For most use cases, the Amazon-assigned DNS hostnames are sufficient. If customized DNS names are needed, you can use Amazon Route 53.

Amazon EC2 DNS vs. Amazon Route 53

As shown in Table 6.1, Amazon will auto-assign DNS hostnames to Amazon EC2 instances when the enableDnsHostnames attribute is set to true. Conversely, if customer-specified hostnames are required, Amazon Route 53 is the service that lets you specify DNS hostnames through either public hosted zones or private hosted zones. More information is provided in the Amazon Route 53 hosted zones section later in this chapter.

With Amazon DNS for Amazon EC2 instances, AWS takes care of the DNS name creation and configuration for EC2 instances, but customization capabilities are limited. Amazon Route 53 is a fully-featured DNS solution where you can control many more aspects of your DNS, including DNS CNAME creation and management.

Amazon EC2 DNS and VPC Peering

DNS resolution is supported over VPC peering connections. You can enable resolution of public DNS hostnames to private IP addresses when queried from the peered VPC. To enable a VPC to resolve public IPv4 DNS hostnames to private IPv4 addresses when queried from instances in the peer VPC, you must modify the peering connection configuration and enable Allow DNS Resolution from Accepter VPC (vpc-identifier) to Private IP.

Using DNS with Simple AD

Simple AD is a Microsoft Active Directory-compatible managed directory powered by Samba 4. Simple AD supports up to 500 users (approximately 2,000 objects, including users, groups, and computers).

Simple AD provides a subset of the features offered by Microsoft Active Directory, including the ability to manage user accounts and group memberships, create and apply group policies, securely connect to Amazon EC2 instances, and provide Kerberos-based Single Sign-On (SSO). Note that Simple AD does not support features such as trust relationships with other domains, Active Directory Administrative Center, PowerShell support, Active Directory recycle bin, group managed service accounts, and schema extensions for POSIX and Microsoft applications.

Simple AD forwards DNS requests to the IP address of the Amazon-provided DNS servers for your VPC. These DNS servers will resolve names configured in your Amazon Route 53 private hosted zones. By pointing your on-premises computers to Simple AD, you can resolve DNS requests to the private hosted zone. This solves the issue of building private applications within your VPC and not having the ability to resolve those private-only DNS hostnames from your on-premises environment.

Custom Amazon EC2 DNS Resolver

In some cases, you may want a custom DNS resolver running on an Amazon EC2 instance within your VPC. This lets you leverage DNS servers that are capable of both conditional forwarding for DNS queries and recursive DNS resolution. This is shown in Figure 6.3.

FIGURE 6.3 Amazon EC2 DNS instance acting as resolver and forwarder

As shown in Figure 6.3, DNS queries in this scenario work in the following way:

- DNS queries for public domains are recursively resolved by the custom DNS resolver using the latest root hints available from the Internet Assigned Numbers Authority (IANA). The names and IP addresses of the authoritative Name Servers for the root zone are provided in the cache hints file so that a recursive DNS server can initiate the DNS resolution process.

- DNS queries bound for on-premises servers are conditionally forwarded to on-premises DNS servers.

- All other queries are forwarded to the Amazon DNS server.
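The decision logic in the list above can be sketched as an ordered suffix match. The text does not say how the resolver tells the three cases apart, so this sketch assumes explicit suffix lists: a hypothetical on-premises zone (`corp.example.com`) and the VPC-internal suffixes are matched first, and any remaining name is treated as a public domain. A real deployment would express equivalent rules in the configuration of the DNS server software it runs.

```python
# Hypothetical suffix lists; adjust to the zones that actually exist.
ON_PREM_SUFFIXES = ("corp.example.com",)
VPC_SUFFIXES = ("ec2.internal", "compute.internal")


def _matches(qname: str, suffixes: tuple) -> bool:
    """True if qname equals a suffix or is a name underneath it."""
    return any(qname == s or qname.endswith("." + s) for s in suffixes)


def choose_upstream(qname: str) -> str:
    """Decide where the custom resolver sends a query."""
    qname = qname.rstrip(".").lower()
    if _matches(qname, ON_PREM_SUFFIXES):
        return "on-premises DNS"       # conditional forwarding
    if _matches(qname, VPC_SUFFIXES):
        return "Amazon DNS"            # forwarded to the Amazon DNS server
    return "recursive resolution"      # assumed to be a public domain


for name in ("db.corp.example.com", "ip-10-0-0-5.ec2.internal", "www.wikipedia.org"):
    print(name, "->", choose_upstream(name))
```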

Because the DNS server is running within a public subnet, exposing both forwarding and recursive DNS to the public Internet may not be desirable. In this case, it is possible to run recursion in the public subnet and a forwarder in the private subnet that handles all DNS queries from instances, as shown in Figure 6.4.

FIGURE 6.4 Amazon EC2 DNS instances with segregated resolver and forwarder

As shown in Figure 6.4, DNS queries in this scenario work in the following way:

- DNS queries for public domains from instances are conditionally forwarded by the DNS forwarder in the private subnet to the custom DNS resolver in the public subnet.

- DNS queries bound for on-premises servers are conditionally forwarded to on-premises DNS servers.

- All other queries are forwarded to the Amazon DNS server.

By having a customer-managed forwarder and resolver running within your VPC on Amazon EC2 instances, it is possible to resolve to Amazon DNS from on-premises in the same way that was achievable by using Simple AD for DNS.

Amazon Route 53

Now that we have reviewed the foundational components that make up DNS, the different DNS record types, and Amazon EC2 DNS, we can explore Amazon Route 53.

Amazon Route 53 is a highly available and scalable cloud DNS web service that is designed to give developers and organizations an extremely reliable and cost-effective way to route end users to Internet applications. It can also be used to provide much more control over private DNS resolution within customers’ VPCs using its private hosted zone feature.

Amazon Route 53 performs three main functions:

1. Domain registration: Amazon Route 53 lets you register domain names, such as example.com.

2. DNS service: Amazon Route 53 translates friendly domain names like www.example.com into IP addresses like 192.0.2.1. Amazon Route 53 responds to DNS queries using a global network of authoritative DNS servers, which reduces latency. To comply with DNS standards, responses sent over User Datagram Protocol (UDP) are limited to 512 bytes in size. Responses exceeding 512 bytes are truncated, and the resolver must re-issue the request over TCP.

3. Health checking: Amazon Route 53 sends automated requests over the Internet to your application to verify that it is reachable, available, and functional.

You can use the Domain registration and DNS service together or independently. For example, you can use Amazon Route 53 as both your registrar and your DNS service, or you can use Amazon Route 53 as the DNS service for a domain that you registered with another domain registrar.

In the following sections, we discuss the use of Route 53’s graphical user interface within the AWS console. Please note, however, that all functions of Route 53, including the end-to-end flow of domain registration, can also be accomplished using its API or accompanying CLI. That means that all these workflows can be automated and accomplished via software without any human intervention. Especially in the area of domain registration, this makes Route 53 uniquely powerful as compared to most other domain registration services.

If you want to create a website or any other public-facing service, you first need to register the domain name. If you already registered a domain name with another registrar, you have the option to transfer the domain registration to Amazon Route 53. Transfer is not required, however, to use Amazon Route 53 as your DNS service or to configure health checking for your resources. The following are the domain registration steps for Amazon Route 53:

Amazon Route 53 supports domain registration for a wide variety of generic TLDs (for example, .com and .org) and geographic TLDs (such as .be and .us). For a complete list of supported TLDs, refer to the Amazon Route 53 Developer Guide: https://docs.aws.amazon.com/Route53/latest/DeveloperGuide/.

You can transfer domain registration from another registrar to Amazon Route 53, from one AWS account to another, or from Amazon Route 53 to another registrar. When transferring a domain to Amazon Route 53, the following steps must be performed. If you skip any of these steps, your domain might become unavailable on the Internet.

Transferring your domain can also affect the current expiration date. When you transfer a domain between registrars, TLD registries may let you keep the same expiration date for your domain, add a year to the expiration date, or change the expiration date to one year after the transfer date. For most TLDs, you can extend the registration period for a domain by up to 10 years after you transfer it to Amazon Route 53.

Amazon Route 53 is an authoritative DNS service that routes Internet traffic to your website by translating friendly domain names into IP addresses. When someone enters your domain name in a browser or sends you an email, a DNS request is forwarded to the nearest Amazon Route 53 DNS server in a global network of authoritative DNS servers. Amazon Route 53 responds with the IP address that you specified.

If you register a new domain name with Amazon Route 53, Amazon Route 53 will be automatically configured as the DNS service for the domain and a hosted zone will be created for your domain. You add resource record sets to the hosted zone that define how you want Amazon Route 53 to respond to DNS queries for your domain. These responses can include, for example, the IP address of a web server, the IP address for the nearest Amazon CloudFront Edge location, or the IP address for an Elastic Load Balancing load balancer.
Amazon Route 53 charges a monthly fee for each hosted zone (separate from charges for domain registration). There is also a fee charged for the DNS queries that are received for your domain.

If you registered your domain with another domain registrar, that registrar is probably providing the DNS service for your domain. You are able to transfer the DNS service to Amazon Route 53, with or without transferring registration for the domain.

If you are using Amazon CloudFront, Amazon Simple Storage Service (Amazon S3), or Elastic Load Balancing, you can configure Amazon Route 53 to resolve the IP addresses of these resources directly by using aliases. Unlike the alias feature provided by CNAME records, which works by sending a referral message back to the requester, these aliases dynamically look up the current IP addresses in use by the AWS service and return A or AAAA records (IPv4 or IPv6 address records, respectively) directly to the client. This allows you to use these resources as the target of a zone apex record. Note that dynamic resolution of zone apex names to IP addresses won’t work with other DNS services, which significantly restricts the use of CloudFront, S3, or Elastic Load Balancing (ELB) in conjunction with a zone apex hosted by another DNS service. The exception is the Network Load Balancer mode of ELB, which provides fixed and unchanging IP addresses (one per Availability Zone) for the lifetime of the load balancer. These IP addresses can be added to the zone apex of a domain hosted in another DNS service.

A hosted zone is a collection of resource record sets hosted by Amazon Route 53. Like a traditional DNS zone file, a hosted zone represents resource record sets that are managed together under a single domain name. Each hosted zone has its own metadata and configuration information. Likewise, a resource record set is an object in a hosted zone that you use to define how you want to route traffic for the domain or subdomain.
There are two types of hosted zones: private and public. A private hosted zone is a container that holds information about how you want to route traffic for a domain and its subdomains within one or more VPCs. A public hosted zone is a container that holds information about how you want to route traffic on the Internet for a domain (such as example.com) and its subdomains (for example, apex.example.com and acme.example.com). The resource record sets contained in a hosted zone must share the same suffix. For example, the example.com hosted zone can contain resource record sets for the www.example.com and www.aws.example.com subdomains, but it cannot contain resource record sets for a www.example.ca subdomain. Amazon Route 53 supports the following DNS resource record types. When you access Amazon Route 53 using the Application Programming Interface (API), you will see examples of how to format the Value element for each record type. Additionally, Amazon Route 53 offers Alias records, which are Amazon Route 53-specific virtual records. Alias records are used to map resource record sets in your hosted zone to other AWS Cloud services such as Elastic Load Balancing load balancers, Amazon CloudFront distributions, AWS Elastic Beanstalk environments, or Amazon S3 buckets that are configured as websites. Alias records work like a CNAME record in that you can map one DNS name (example.com) to another target DNS name (elb1234.elb.amazonaws.com). Alias records differ from a CNAME record in that they are not visible to resolvers; the dynamic lookup of current IP addresses is handled transparently by Route 53. Resolvers only see the A record and the resulting IP address of the target record. When you create a resource record set, you set a routing policy that determines how Amazon Route 53 responds to queries. 
Routing policy options are simple, weighted, latency-based, failover, geolocation, multianswer value, and geoproximity (only through the Amazon Route 53 traffic flow feature). When specified, Amazon Route 53 evaluates a resource’s relative weight, the client’s network latency to the resource, or the client’s geographical location when deciding which resource to send back in a DNS response. Routing policies can also be associated with health checks. Unhealthy resources are removed from consideration before Amazon Route 53 decides which resource to return. A description of possible routing policies and health check options follows. This is the default routing policy when you create a new resource. Use a simple routing policy when you have a single logical resource that performs a given function for your domain (for example, the load balancer on front of the web server that serves content for the example.com website). In this case, Amazon Route 53 responds to DNS queries based only on the values in the resource record set (such as the CNAME of a load balancers DNS name, or the IP address of a single server in an A record). With weighted DNS, you can associate multiple resources (such as Amazon EC2 instances or Elastic Load Balancing load balancers) with a single DNS name. Use the weighted routing policy when you have multiple resources that perform the same function (such as web servers that serve the same website) and want Amazon Route 53 to route traffic to those resources in proportions that you specify. To configure weighted routing, you create resource record sets that have the same name and type for each of your resources. You then assign each record a relative weight that corresponds with how much traffic you want to send to each resource. When processing a DNS query, Amazon Route 53 searches for a resource record set or a group of resource record sets that have the same name and DNS record type (such as an A record). 
Amazon Route 53 then selects one record from the group. The probability of any resource record set being selected is governed by the Weighted DNS Formula:

For example, if you want to send a tiny portion of your traffic to a test resource and the rest to a control resource, you might specify weights of 1 and 99. The resource with a weight of 1 gets 1 percent of the traffic [1/(1+99)], and the other resource gets 99 percent [99/(1+99)]. You can gradually change the balance by changing the weights. If you want to stop sending traffic to a resource, you can change the weight for that record to 0. Latency-based routing allows you to route your traffic based on the lowest network latency for your end user. You can use a latency-based routing policy when you have resources that perform the same function in multiple Availability Zones or AWS Regions and you want Amazon Route 53 to respond to DNS queries using the resources that provide the lowest latency to the client. For example, suppose that you have Elastic Load Balancing load balancers in the US-West-2 (Oregon) region and in the AP-Southeast-2 (Singapore) region. You create a latency resource record set in Amazon Route 53 for each load balancer for your domain. A user in London enters the name of your domain in a browser, and DNS routes the request to the nearest Amazon Route 53 Name Server using a technology called Anycasting. Amazon Route 53 refers to its data on latency between London and the Singapore region and between London and the Oregon region. If latency is lower between London and the Oregon region, Amazon Route 53 responds to the user’s request with the IP address of your load balancer in Oregon. If latency is lower between London and the Singapore region, Amazon Route 53 responds with the IP address of your load balancer in Singapore. Latency over the Internet can change over time due to changes in network connectivity and the way packets are routed. Latency-based routing is based on latency measurements that are continually reevaluated by Amazon Route 53. 
As a result, a request from a given client might be routed to the Oregon region one week and the Ohio region the following week due to routing changes on the Internet. Use a failover routing policy to configure active-passive failover, in which one resource takes all of the traffic when it is available and the other resource takes all of the traffic when the first resource fails health checks. Failover resource record sets are only available for public hosted zones as of this writing. For example, you might want your primary resource record set to be in US-West-1 (N. California) and a secondary disaster recovery resource to be in US-East-1 (N. Virginia). Amazon Route 53 will monitor the health of your primary resource endpoints using a health check that you have configured. The health check configuration tells Amazon Route 53 how to send requests to the endpoint whose health you want to check: which protocol to use (HTTP, Hypertext Transfer Protocol Secure [HTTPS], or TCP), which IP address and port to use, and, for HTTP/HTTPS health checks, a domain name and path. After you have configured a health check, Amazon will monitor the health of your selected DNS endpoint. If your health check fails, then failover routing policies will be applied and your DNS will fail over to your disaster recovery site. To configure failover in a private hosted zone by checking the endpoint within a VPC by IP address, you must assign a public IP address to the instance in the VPC. An alternative could be to configure a health checker to check the health of an external resource upon which the instance relies, such as a database server. You could also create an Amazon CloudWatch metric, associate an alarm with the metric, and then create a health check that is based on the state of the alarm. Geolocation routing lets you choose where Amazon Route 53 will send your traffic based on the geographic location of your users (the location from which DNS queries originate). 
For example, you might want all queries from Europe to be routed to a fleet of Amazon EC2 instances that are specifically configured for your European customers, with local languages and pricing in Euros. You can also use geolocation routing to restrict distribution of content only to those locations in which you have distribution rights. Another possible use is for balancing load across endpoints in a predictable, easy-to-manage way so that each user location is consistently routed to the same endpoint. You can specify geographic locations by continent, by country, or by state in the United States. You can also create separate resource record sets for overlapping geographic regions, with conflicts resolved in favor of the smallest geographic region. This allows you to route some queries for a continent to one resource and to route queries for selected countries on that continent to a different resource. For example, if you have geolocation resource record sets for North America and Canada, users in Canada will be directed to the Canada-specific resources. Geolocation works by using a database to map IP addresses to locations. Exercise caution, however, because these results are not always accurate. Some IP addresses have no geolocation data associated with them, and an ISP may move an IP block across countries without notification. Even if you create geolocation resource record sets that cover all seven continents, Amazon Route 53 will receive some DNS queries from locations that it cannot identify. In this case, you can create a default resource record set that handles queries from both unmapped IP addresses and locations lacking geolocation resource record sets. If you omit a default resource record set, Amazon Route 53 returns a “no answer” response for queries from those locations. To improve the accuracy of geolocation routing, Amazon Route 53 supports the edns-client-subnet extension of EDNS0, which adds several optional extensions to the DNS protocol. 
Amazon Route 53 can use edns-client-subnet only when DNS resolvers support it, with the following behavior to be expected: Multivalue answer routing lets you configure Amazon Route 53 to return multiple values, such as IP addresses for your web servers, in response to DNS queries. Any DNS record type except for NS and CNAME is supported. You can specify multiple values for almost any record, but multivalue answer routing also lets you check the health of each resource, so Amazon Route 53 returns only values for healthy resources. While this is not a substitute for a load balancer, the ability to return multiple health-checkable IP addresses improves availability and load balancing efficiency. To route traffic in a reasonably random manner to multiple resources, such as web servers, you can create a single multivalue answer record for each resource and optionally associate an Amazon Route 53 health check with each record. Amazon Route 53 will then respond to DNS queries with up to eight healthy records and will give different answers to different requesting DNS resolvers. Managing resource record sets for complex Amazon Route 53 configurations can be challenging, especially when using combinations of Amazon Route 53 routing policies. Through the AWS Management Console, you get access to Amazon Route 53 traffic flow. This provides you with a visual editor that helps you create complex decision trees in a fraction of the time and effort. You can then save the configuration as a traffic policy and associate the traffic policy with one or more domain names in multiple hosted zones. Amazon Route 53 traffic flow then allows you to use the visual editor to find resources that you need to update and apply updates to one or more DNS names. You then have the ability to roll back updates if the new configuration is not performing as you intended. Figure 6.5 shows an example traffic policy using Amazon Route 53 traffic flow. 
FIGURE 6.5 Amazon Route 53 traffic flow—an example traffic policy Geoproximity routing lets Amazon Route 53 route traffic to your resources based on the geographic location of your resources. Additionally, geoproximity routing gives you the option to route more traffic or less traffic to a particular resource by specifying the value, bias, which will expand or shrink the size of a geographic region to where traffic is currently being routed. When creating a geoproximity rule for your AWS resources, you must specify the AWS Region in which you created the resource. If you’re using non-AWS resources, you must specify the latitude and longitude of the resources. You can then optionally expand the size of the geographic region from which Amazon Route 53 routes traffic to a resource by specifying a positive integer from 1 to 99 for the bias. When you specify a negative bias of -1 to -99, the opposite is true: Amazon Route 53 will shrink the size of the geographic region to which it routes traffic. The effect of changing the bias for your resources depends on a number of factors, including the number of resources that you have, how close the resources are to one another, and the number of users that you have near the border area between geographic regions. Amazon Route 53 health checks monitor the health of your resources such as web servers and email servers. You can configure Amazon CloudWatch alarms for your health checks so that you receive notification when a resource becomes unavailable. You can also configure Amazon Route 53 to route Internet traffic away from resources that are unavailable. Health checks and DNS failover are the primary tools in the Amazon Route 53 feature set that help make your application highly available and resilient to failures. 
If you deploy an application in multiple Availability Zones and multiple AWS Regions and attach Amazon Route 53 health checks to every endpoint, Amazon Route 53 will respond to queries with a list of healthy endpoints only. Health checks can automatically switch to a healthy endpoint with minimal disruption to your clients and without any configuration changes. You can use this automatic recovery scenario in active-active or active-passive setups, depending on whether your additional endpoints are always hit by live traffic or only after all primary endpoints have failed. Using health checks and automatic failovers, Amazon Route 53 improves your service uptime, especially when compared to the traditional monitor-alert-restart approach of addressing failures. Amazon Route 53 health checks are not triggered by DNS queries; they are run periodically by AWS, and the results are published to all DNS servers. This way, Name Servers can be aware of an unhealthy endpoint and route differently within approximately 30 seconds of a problem (after three failed tests in a row with a request interval of 10 seconds) and new DNS results will be known to clients a minute later (if your TTL is set to 60 seconds), bringing complete recovery time to about a minute and a half in total in this scenario. Beware, however, that DNS TTLs are not always honored by some intermediate Name Servers nor by some client software, so for some clients the ideal failover time might be much longer. Figure 6.6 shows an overview of how health checking works in the scenario where you want to be notified when resources become unavailable, with the following workflow:

FIGURE 6.6 Amazon Route 53 health checking You can create a health check and stipulate values that define how you want the health check to work, specifying the following attributes: You can additionally set how you want to be notified when Amazon Route 53 detects that an endpoint is unhealthy. When you configure notification, Amazon Route 53 will automatically set an Amazon CloudWatch alarm. Amazon CloudWatch uses Amazon SNS to notify users that an endpoint is unhealthy. It is important to note that Amazon Route 53 does not check the health of the resource specified in the resource record set, such as the A record for example.com. When you associate a health check with a resource record set, it will begin to check the health of the endpoint that you have specified in the health check.Domain Registration

Transferring Domains

Domain Name System Service

Hosted Zones

Supported Record Types

Routing Policies

Simple Routing Policy

Weighted Routing Policy

![]()

Latency-Based Routing Policy

Failover Routing Policy

Geolocation Routing Policy

Multivalue Answer Routing

Traffic Flow to Route DNS Traffic

Geoproximity Routing (Traffic Flow Only)

More on Health Checking
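
The recovery-time estimate for health-check-driven DNS failover (three failed checks at a 10-second interval, followed by a 60-second TTL) can be written out as a short calculation. The following is a minimal sketch only: the function name and parameters are hypothetical, and it assumes that every resolver and client honors the record TTL, which is not always the case.

```python
def estimated_failover_seconds(request_interval_s: int,
                               failure_threshold: int,
                               record_ttl_s: int) -> int:
    """Rough estimate of the time for clients to move to a healthy endpoint."""
    # Time until the endpoint is considered unhealthy:
    # consecutive failed checks multiplied by the interval between checks.
    detection_s = request_interval_s * failure_threshold
    # Time until cached DNS answers expire (assumes the TTL is honored).
    return detection_s + record_ttl_s


if __name__ == "__main__":
    # Values from the example: 10-second interval, 3 failures, 60-second TTL.
    print(estimated_failover_seconds(10, 3, 60))
```

Lowering the TTL shortens the second term at the cost of more DNS queries, which are billed per query.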

Elastic Load Balancing

An advantage of having access to a large number of servers in the cloud, such as Amazon EC2 instances on AWS, is the ability to provide a more consistent experience for the end user. One way to ensure consistency is to balance the request load across more than one server. A load balancer in AWS is a mechanism that automatically distributes traffic across multiple Amazon EC2 instances (or potentially other targets). You can either manage your own virtual load balancers on Amazon EC2 instances or leverage an AWS Cloud service called Elastic Load Balancing, which provides a managed load balancer for you. A combination of both virtual managed load balancers on Amazon EC2 instances and Elastic Load Balancing can also be used. This configuration is referred to as the ELB sandwich.

Using Elastic Load Balancing can provide advantages over building a load balancing service based on Amazon EC2 instances. Because Elastic Load Balancing is a managed service, it scales in and out automatically to meet the demands of increased application traffic and, as a service, is itself highly available within a region. Elastic Load Balancing provides high availability by helping you distribute traffic across healthy instances in multiple Availability Zones. Additionally, Elastic Load Balancing integrates seamlessly with the Auto Scaling service to automatically scale the Amazon EC2 instances behind the load balancer. Lastly, Elastic Load Balancing can offer additional security for your application architecture, working with Amazon VPC to route traffic internally between application tiers. This allows you to expose only the Internet-facing public IP addresses of the load balancer.

The Elastic Load Balancing service allows you to distribute traffic across a group of Amazon EC2 instances in one or more Availability Zones, enabling you to achieve high availability in your applications. Using multiple Availability Zones is always recommended when using services that do not automatically provide regional fault-tolerance, such as Amazon EC2.

Elastic Load Balancing supports routing and load balancing of Hypertext Transfer Protocol (HTTP), HTTPS, TCP, and Transport Layer Security (TLS) traffic to Amazon EC2 instances. Note that TLS and its predecessor, Secure Sockets Layer (SSL), are protocols used to encrypt confidential data over insecure networks such as the Internet. The TLS protocol is a newer version of the SSL protocol. In this chapter, we refer to both SSL and TLS protocols as the SSL protocol. Elastic Load Balancing supports TLS 1.2, TLS 1.1, TLS 1.0, and SSL 3.0.

Elastic Load Balancing provides a stable, single DNS name for DNS configuration and supports both Internet-facing and internal application-facing load balancers. Network Load Balancers also provide a stable set of IP addresses (one per Availability Zone) over their lifetimes. It is still a best practice, however, to use the DNS name of a load balancer as the mechanism for referencing it.

Elastic Load Balancing supports health checks for Amazon EC2 instances to ensure that traffic is not routed to unhealthy or failing instances. Elastic Load Balancing can automatically scale based on collected metrics recorded in Amazon CloudWatch.

Elastic Load Balancing also supports integrated certificate management and SSL termination.

Types of Load Balancers

Elastic Load Balancing provides three different types of load balancers at the time of this writing: Classic Load Balancer, Application Load Balancer, and Network Load Balancer. Each type of Amazon load balancer is suited to a particular use case. The features of each type are shown in Table 6.2. Each type of load balancer can be configured as either internal for use within the Amazon VPC or external for use on the public Internet. Elastic Load Balancing also supports features such as HTTPS and encrypted connections.

TABLE 6.2 Elastic Load Balancer Comparison

| Feature | Classic Load Balancer | Application Load Balancer | Network Load Balancer |
| --- | --- | --- | --- |
| Protocols | TCP, SSL, HTTP, HTTPS | HTTP, HTTPS | TCP |
| Platforms | EC2-Classic, VPC | VPC | VPC |
| Health checks | ✓ | ✓ | ✓ |
| Amazon CloudWatch metrics | ✓ | ✓ | ✓ |
| Logging | ✓ | ✓ | ✓ |
| Availability Zone failover | ✓ | ✓ | ✓ |
| Connection draining (deregistration delay) | ✓ | ✓ | ✓ |
| Load balancing to multiple ports on the same instance | | ✓ | ✓ |
| WebSockets | | ✓ | ✓ |
| IP addresses as targets | | ✓ | ✓ |
| Load balancer deletion protection | | ✓ | ✓ |
| Path-based routing | | ✓ | |
| Host-based routing | | ✓ | |
| Native HTTP/2 | | ✓ | |
| Configurable idle connection timeout | ✓ | ✓ | |
| Cross-zone load balancing | ✓ | ✓ | |
| SSL offloading | ✓ | ✓ | |
| Sticky sessions | ✓ | ✓ | |
| Back-end server encryption | ✓ | ✓ | |
| Static IP | | | ✓ |
| Elastic IP address | | | ✓ |
| Preserve source IP address | | | ✓ |

Classic Load Balancer

Classic Load Balancer is the original class of Elastic Load Balancing prior to the release of Application Load Balancer and Network Load Balancer. The Classic Load Balancer will distribute incoming application traffic across multiple Amazon EC2 instances in multiple Availability Zones, increasing the fault tolerance of your application. You can configure health checks to monitor the health of the registered targets so that the load balancer can send requests only to the healthy targets.

Classic Load Balancers can be configured to pass TCP traffic directly through (Layer 4 in the Open Systems Interconnect [OSI] model) or to handle HTTP/HTTPS requests (Layer 7) and perform SSL termination and insert HTTP proxy headers.

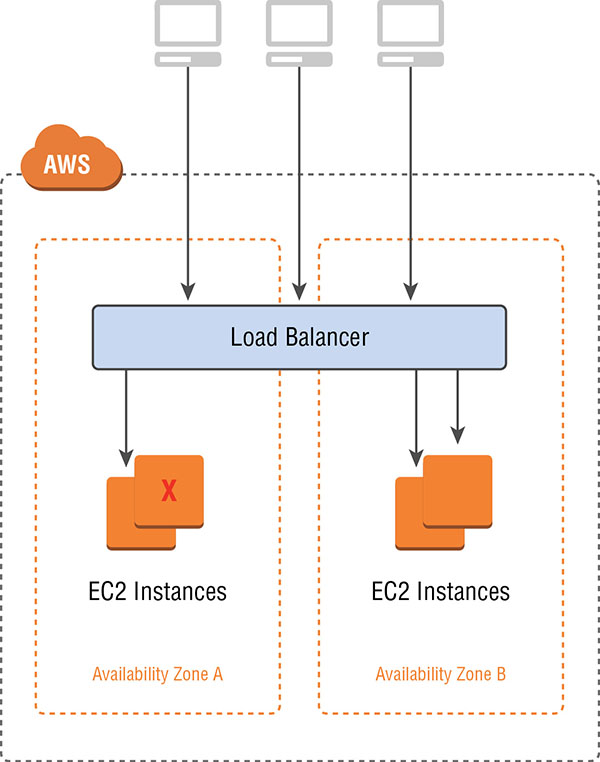

In most cases, an Application Load Balancer or Network Load Balancer will be the better choice when building your load balancing architecture. For load balancing across EC2-Classic targets, however, using Classic Load Balancers is required. The Classic Load Balancer architecture is shown in Figure 6.7.

FIGURE 6.7 Classic Load Balancer

Application Load Balancer

An Application Load Balancer operates at the application layer (Layer 7) of the OSI model. After receiving a request, the Application Load Balancer evaluates the listener rules in priority order to determine which rule to apply, and then selects a target from the target group for that rule using the round robin routing algorithm.
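
Round robin selection within a target group can be illustrated with a small sketch. This is not how the service is implemented internally; the class name, group names, and instance IDs below are hypothetical, and health checks are deliberately left out to keep the example focused on the rotation itself.

```python
from itertools import cycle
from typing import Dict, Iterator, List


class TargetGroupPicker:
    """Hands out targets in round robin order, one cursor per target group."""

    def __init__(self, groups: Dict[str, List[str]]) -> None:
        # cycle() repeats each group's target list indefinitely.
        # Note: a group with no targets would raise StopIteration on use.
        self._cursors: Dict[str, Iterator[str]] = {
            name: cycle(targets) for name, targets in groups.items()
        }

    def next_target(self, group: str) -> str:
        """Return the next target for the named group."""
        return next(self._cursors[group])


if __name__ == "__main__":
    picker = TargetGroupPicker({"web": ["i-web-1", "i-web-2"]})
    print([picker.next_target("web") for _ in range(3)])
```

Each target group keeps its own position, so requests routed to one group do not disturb the rotation of another.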

The Application Load Balancer serves as a single point of contact for clients, increasing the availability of your application. It also gives you the ability to route requests to different target groups based on the content of the application traffic. As with Classic Load Balancer, you can configure health checks to monitor the health of the registered targets so that the load balancer can send requests only to the healthy targets.

The Application Load Balancer is the ideal choice when you need support for:

- Path-based routing, with rules for your listener that forward requests based on the HTTP URL in the request.

- Routing requests to multiple services on a single EC2 instance by registering the same instance using multiple ports.

- Containerized applications by having the ability to select an unused port when scheduling a task and registering that task with a target group using this port.

- Monitoring the health of each service independently because health checks are defined at the target group level and many Amazon CloudWatch metrics are reported at the target group level. Attaching a target group to an Auto Scaling group enables you to scale each service dynamically based on demand.

- Improved load balancer performance over the Classic Load Balancer.

The Application Load Balancer architecture is shown in Figure 6.8.

FIGURE 6.8 Application Load Balancer

Targets and target groups are discussed later in this chapter.

Network Load Balancer

An Amazon Network Load Balancer operates at the transport layer (Layer 4) of the OSI model. Network Load Balancer works by receiving a connection request, selecting a target from the target group associated with the Network Load Balancer, and then attempting to open a TCP connection to the selected target on the port specified in the listener configuration.

The Network Load Balancer architecture is shown in Figure 6.9.

FIGURE 6.9 Network Load Balancer

Using a Network Load Balancer instead of a Classic Load Balancer or Application Load Balancer has the following benefits:

- Ability to handle volatile workloads and scale to millions of requests per second.

- Support for static IP addresses for the load balancer. You can also assign one Elastic IP address per Availability Zone enabled for the load balancer.

- Support for registering targets by IP address, including targets outside the VPC for the load balancer.

- Support for routing requests to multiple applications on a single EC2 instance. You can register each instance or IP address with the same target group using multiple ports.

- Support for containerized applications. Amazon EC2 Container Service (Amazon ECS) can select an unused port when scheduling a task and register the task with a target group using this port. This enables you to make efficient use of your clusters.

- Support for monitoring the health of each service independently, as health checks are defined at the target group level and many Amazon CloudWatch metrics are reported at the target group level. Attaching a target group to an Auto Scaling group enables you to scale each service dynamically based on demand.

It is also worth noting that with Network Load Balancers, the load balancer node that receives the connection selects a target from the target group using a flow hash algorithm. This flow hashing algorithm is based on the protocol, source IP address, source port, destination IP address, destination port, and TCP sequence number. As the TCP connections from a client have different source ports and sequence numbers, each connection can be routed to a different target. Each individual TCP connection is also routed to a single target for the life of the connection.
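
The idea behind flow hashing can be sketched in a few lines. The hash function that Network Load Balancer actually uses is not described here, so SHA-256 is an assumed stand-in, and the function name and sample addresses are hypothetical. The point of the sketch is only that the same set of connection fields always maps to the same target, while a change in any field can map to a different one.

```python
import hashlib
from typing import Sequence


def select_target(targets: Sequence[str], protocol: str,
                  src_ip: str, src_port: int,
                  dst_ip: str, dst_port: int, tcp_seq: int) -> str:
    """Pick a target deterministically from the fields that identify a flow."""
    # Combine the fields named in the text into a single key.
    key = f"{protocol}|{src_ip}|{src_port}|{dst_ip}|{dst_port}|{tcp_seq}"
    # SHA-256 is an illustrative choice, not the service's real algorithm.
    digest = hashlib.sha256(key.encode("ascii")).digest()
    index = int.from_bytes(digest[:8], "big") % len(targets)
    return targets[index]


if __name__ == "__main__":
    pool = ["10.0.1.10", "10.0.2.10", "10.0.3.10"]
    print(select_target(pool, "tcp", "198.51.100.7", 40001, "203.0.113.5", 443, 1000))
```

Because the selection depends only on the flow's fields, every packet of a given TCP connection resolves to the same target without the load balancer having to share per-connection state.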

Internet-Facing Load Balancers

An Internet-Facing Load Balancer is, as the name implies, a load balancer that takes requests from clients over the Internet and distributes them to Amazon EC2 instances or IP addresses that are registered with the load balancer. Each of the three types of Elastic Load Balancer can be configured as Internet-Facing Load Balancers, with publicly reachable endpoints through a public subnet within a VPC.

When you configure a load balancer, it receives a public DNS name that clients can use to send requests to your application. The DNS servers resolve the DNS name to your load balancer’s public IP address, which can be visible to client applications.

Because Elastic Load Balancing scales in and out to meet traffic demand, it is not recommended to bind an application to an IP address that may no longer be part of a load balancer’s pool of resources. Network Load Balancer has a static IP feature, where the load balancer will be represented by a single IP address per Availability Zone regardless of the scale of the Network Load Balancer. Classic Load Balancer and Application Load Balancer do not have this static IP functionality.

Both the Classic Load Balancer and Application Load Balancer support dual stack addressing (IPv4 and IPv6). At the time of this writing, Network Load Balancer only supports IPv4.

Internal Load Balancers

In a multi-tier application, it is often useful to load balance between the tiers of the application. For example, an Internet-Facing Load Balancer might receive and balance external traffic to the presentation or web tier composed of Amazon EC2 instances. These instances then send requests to a load balancer sitting in front of an application tier that should not receive traffic from the Internet. You can use internal load balancers to route traffic to your application-tier Amazon EC2 instances in VPCs with private subnets.

HTTPS Load Balancers

Both Application Load Balancer and Classic Load Balancer support HTTPS traffic. You can create an Application Load Balancer or Classic Load Balancer that uses the SSL/Transport Layer Security (TLS) protocol for encrypted connections (also known as SSL offload). This feature enables traffic encryption between your load balancer and the clients that initiate HTTPS sessions. It also enables encryption of the connection between your load balancer and your back-end instances. Elastic Load Balancing provides security policies that have predefined SSL negotiation configurations. These can be used to negotiate connections between clients and the load balancer. In order to use SSL, you must install an SSL certificate on the load balancer, which it uses to terminate the connection and then decrypt requests from clients before sending them to the back-end Amazon EC2 instances. You can optionally choose to enable authentication on your back-end instances. If you are performing SSL on your back-end instances, the Network Load Balancer may be a better choice.

Elastic Load Balancing Concepts

A load balancer serves as the single point of contact for clients. Clients can send a request to the load balancer, and the load balancer will send them to targets, such as Amazon EC2 instances, in one or more Availability Zones. Elastic Load Balancing load balancers are made up of the following concepts.

Listeners

Every load balancer must have one or more listeners configured. A listener is a process that waits for connection requests. Every listener is configured with a protocol and a port for the front-end connection (client to load balancer) and a protocol and a port for the back-end connection (load balancer to Amazon EC2 instance). Classic Load Balancer listeners support all of the following protocols, while Application Load Balancer listeners support only HTTP and HTTPS:

- HTTP

- HTTPS

- TCP

- SSL

Classic Load Balancer supports protocols operating at two different OSI layers. In the OSI model, Layer 4 is the transport layer that describes the TCP connection between the client and your back-end instance through the load balancer. Layer 4 is the lowest level that is configurable for your load balancer. Layer 7 is the application layer that describes the use of HTTP and HTTPS connections from clients to the load balancer and from the load balancer to your back-end instance.

The Network Load Balancer supports TCP (Layer 4 of the OSI model). Application Load Balancer supports HTTPS and HTTP (Layer 7 of the OSI model).
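
The protocol support described above lends itself to a simple lookup when you are deciding which load balancer types can serve a given listener. The sketch below only restates the protocols listed in this chapter for each type; the dictionary and function names are hypothetical and are not part of any AWS SDK.

```python
from typing import Dict, FrozenSet, List

# Listener protocols per load balancer type, as stated in this chapter.
SUPPORTED_LISTENER_PROTOCOLS: Dict[str, FrozenSet[str]] = {
    "classic": frozenset({"TCP", "SSL", "HTTP", "HTTPS"}),
    "application": frozenset({"HTTP", "HTTPS"}),
    "network": frozenset({"TCP"}),
}


def supports(lb_type: str, protocol: str) -> bool:
    """Return True if the given load balancer type accepts the protocol."""
    return protocol.upper() in SUPPORTED_LISTENER_PROTOCOLS[lb_type]


def candidate_types(protocol: str) -> List[str]:
    """List the load balancer types that can listen on the protocol."""
    return sorted(t for t in SUPPORTED_LISTENER_PROTOCOLS if supports(t, protocol))


if __name__ == "__main__":
    print(candidate_types("HTTPS"))
    print(candidate_types("TCP"))
```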

Listener Rules

A major advantage of Application Load Balancer is the ability to define listener rules. The rules that you define for your Application Load Balancer determine how the load balancer routes requests to the targets in one or more target groups. Listener rules are composed of the following:

A rule priority: Rules are evaluated in priority order, from the lowest value to the highest value.

One or more rule actions: Each rule action has a type and a target group. Currently, the only supported type is forward, which forwards requests to the target group.

Rule conditions: When the conditions for a rule are met, then its action is taken. You can use host conditions to define rules that forward requests to different target groups based on the hostname in the host header (also known as host-based routing). You can use path conditions to define rules that forward requests to different target groups based on the URL in the request (also known as path-based routing).
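
The way priority, conditions, and the forward action fit together can be sketched as follows. This is an illustrative model rather than the load balancer's implementation: the `Rule` class, the `default_group` fallback argument, and the sample hostnames and paths are assumptions, and Python's `fnmatchcase` is used as an approximation of wildcard matching in host and path patterns.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase
from typing import Iterable, Optional


@dataclass(frozen=True)
class Rule:
    priority: int                        # lower values are evaluated first
    target_group: str                    # where the forward action sends requests
    host_pattern: Optional[str] = None   # host condition, e.g. "api.example.com"
    path_pattern: Optional[str] = None   # path condition, e.g. "/images/*"

    def matches(self, host: str, path: str) -> bool:
        """A rule matches only when every condition it defines is met."""
        host_ok = (self.host_pattern is None
                   or fnmatchcase(host.lower(), self.host_pattern.lower()))
        path_ok = (self.path_pattern is None
                   or fnmatchcase(path, self.path_pattern))
        return host_ok and path_ok


def choose_target_group(rules: Iterable[Rule], host: str, path: str,
                        default_group: str) -> str:
    """Return the target group of the first matching rule in priority order."""
    for rule in sorted(rules, key=lambda r: r.priority):
        if rule.matches(host, path):
            return rule.target_group
    return default_group  # assumed fallback when no rule matches


if __name__ == "__main__":
    rules = [
        Rule(priority=10, target_group="images", path_pattern="/images/*"),
        Rule(priority=5, target_group="api", host_pattern="api.example.com"),
    ]
    print(choose_target_group(rules, "www.example.com", "/images/logo.png", "web"))
```

In this sketch, a request that satisfies both rules goes to the `api` group because that rule has the lower priority value and is therefore evaluated first.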

Targets

An Application Load Balancer or Network Load Balancer serves as a single point of contact for clients and distributes traffic across healthy registered targets. Targets are selected destinations to which the Application Load Balancer or Network Load Balancer sends traffic. Targets such as Amazon EC2 instances are associated with a target group and can be one of the following:

- An instance, where the targets are specified by instance ID.

- An IP, where the targets are specified by IP address.

When a target type is IP, you can specify addresses from one of the following CIDR blocks:

- The subnets of the VPC for the target group

- 10.0.0.0/8 (RFC 1918)

- 100.64.0.0/10 (RFC 6598)

- 172.16.0.0/12 (RFC 1918)

- 192.168.0.0/16 (RFC 1918)

These supported CIDR blocks enable you to register the following with a target group: ClassicLink instances, instances in a peered VPC, AWS resources addressable by IP address and port (for example, databases), and on-premises resources that are reachable by either AWS Direct Connect or a VPN connection.
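As a quick way to reason about which addresses qualify, the following sketch checks an address against the CIDR blocks listed above using Python's standard `ipaddress` module. The function name `is_registrable_ip` and the `vpc_subnets` parameter are hypothetical, and the check covers only the address range, not reachability or any other registration requirement.

```python
import ipaddress

# The fixed CIDR blocks listed in the text (RFC 1918 and RFC 6598 ranges).
ALLOWED_BLOCKS = [
    ipaddress.ip_network(cidr)
    for cidr in ("10.0.0.0/8", "100.64.0.0/10", "172.16.0.0/12", "192.168.0.0/16")
]


def is_registrable_ip(address, vpc_subnets=()):
    """Return True if the address falls in an allowed block or in a given VPC subnet."""
    ip = ipaddress.ip_address(address)
    networks = ALLOWED_BLOCKS + [ipaddress.ip_network(s) for s in vpc_subnets]
    return any(ip in network for network in networks)


print(is_registrable_ip("10.1.2.3"))      # True: inside 10.0.0.0/8
print(is_registrable_ip("100.64.0.1"))    # True: inside 100.64.0.0/10
print(is_registrable_ip("172.32.0.1"))    # False: just outside 172.16.0.0/12
print(is_registrable_ip("203.0.113.10"))  # False: not in any listed block
```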

If you specify targets using an instance ID, traffic is routed to instances using the primary private IP address specified in the primary network interface for the instance. If you specify targets using IP addresses, you can route traffic to an instance using any private IP address from one or more network interfaces. This enables multiple applications on an instance to use the same port. Each network interface can also have its own security group assigned.

If demand on your application increases, you can register additional targets with one or more of your target groups. The load balancer starts routing requests to a newly registered target as soon as the registration process completes and the target passes the initial health checks.

If you are registering targets by instance ID, you can use your load balancer with an Auto Scaling group. After you attach a target group to an Auto Scaling group, Auto Scaling registers your targets with the target group for you when it launches them.

Target Groups

Target groups allow you to group together targets, such as Amazon EC2 instances, for the Application Load Balancer and Network Load Balancer. The target group can be used in listener rules. This makes it easy to specify rules consistently across multiple targets.

You define health check settings for your load balancer on a per-target group basis. After you specify a target group in a rule for a listener, the load balancer continually monitors the health of all targets registered within the target group that are in an Availability Zone that is enabled for the load balancer. The load balancer will then route requests to registered targets within the group that are healthy.

Elastic Load Balancer Configuration

Elastic Load Balancing allows you to configure many aspects of the load balancer, including idle connection timeout, cross-zone load balancing, connection draining, proxy protocol, sticky sessions, and health checks. Configuration settings can be modified using either the AWS Management Console or a Command Line Interface (CLI). Most features are available for Classic Load Balancer, Application Load Balancer, and Network Load Balancer. A few features, like cross-zone load balancing, are only available for Classic Load Balancer and Application Load Balancer.

Idle Connection Timeout

The Idle Connection Timeout feature is applicable to the Application Load Balancer and Classic Load Balancer. For each request that a client makes through a load balancer, the load balancer maintains a front-end connection to the client and a back-end connection to the Amazon EC2 instance. For each connection, the load balancer manages an idle timeout that is triggered when no data is sent over the connection for a specified time period. After the idle timeout period has elapsed and no data has been sent or received, the load balancer closes the connection.

By default, Elastic Load Balancing sets the idle timeout to 60 seconds for both connections. If an HTTP request fails to complete within the idle timeout period, the load balancer closes the connection, even if the request is still being processed. You can change the idle timeout setting for the connections to ensure that lengthy operations, such as large file uploads, have time to complete.

If you use HTTP and HTTPS listeners, we recommend that you enable the TCP keep-alive option for your Amazon EC2 instances. This is configured either on the application or the operating system running on your Amazon EC2 instances. Enabling keep-alive allows the load balancer to reuse connections to your back-end instance, which reduces CPU utilization.

Cross-Zone Load Balancing

Cross-zone load balancing, available for Classic Load Balancer and Application Load Balancer, ensures that request traffic is routed evenly across all back-end instances for your load balancer, regardless of the Availability Zone in which they are located. Cross-zone load balancing reduces the need to maintain equivalent numbers of back-end instances in each Availability Zone and improves your application’s ability to handle the loss of one or more back-end instances. It is still recommended that you maintain approximately equivalent numbers of instances in each Availability Zone for higher fault tolerance.

For environments where clients cache DNS lookups, incoming requests might favor one of the Availability Zones. With cross-zone load balancing, the request load is spread across all available back-end instances in the region regardless of which Availability Zone received it, reducing the impact of misconfigured clients.
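A short worked calculation shows why cross-zone load balancing matters when zones hold unequal numbers of instances. The sketch below rests on simplifying assumptions that are not from the text: clients are spread evenly across the Availability Zones that contain instances, and traffic is then divided evenly among the instances that are eligible to receive it. The function name and zone names are hypothetical.

```python
def per_instance_share(instances_per_zone, cross_zone):
    """Return the fraction of total traffic each instance receives, keyed by zone.

    Assumes incoming traffic arrives evenly across zones that have instances.
    """
    zones = [zone for zone, count in instances_per_zone.items() if count > 0]
    total_instances = sum(instances_per_zone[zone] for zone in zones)
    shares = {}
    for zone in zones:
        if cross_zone:
            # Every instance in the region is eligible for every request.
            shares[zone] = 1 / total_instances
        else:
            # Each zone's slice of traffic is split only among that zone's instances.
            shares[zone] = 1 / len(zones) / instances_per_zone[zone]
    return shares


fleet = {"us-east-1a": 2, "us-east-1b": 8}
print(per_instance_share(fleet, cross_zone=False))  # {'us-east-1a': 0.25, 'us-east-1b': 0.0625}
print(per_instance_share(fleet, cross_zone=True))   # {'us-east-1a': 0.1, 'us-east-1b': 0.1}
```

Under these assumptions, each instance in the smaller zone carries four times the load of an instance in the larger zone until cross-zone load balancing is enabled, at which point every instance receives 10 percent.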

Connection Draining (Deregistration Delay)

Available for all load balancer types, connection draining ensures that the load balancer stops sending requests to instances that are deregistering or unhealthy while keeping the existing connections open. This enables the load balancer to complete in-flight requests made to these instances.

When you enable connection draining, you can specify a maximum time for the load balancer to keep connections alive before reporting the instance as deregistered. The maximum timeout value can be set between 1 and 3,600 seconds, with the default set to 300 seconds. When the maximum time limit is reached, the load balancer forcibly closes any remaining connections to the deregistering instance.

Proxy Protocol

This option is available for Classic Load Balancer. When you use TCP or SSL for both front-end and back-end connections, your load balancer forwards requests to the back-end instances without modifying the request headers. If you enable proxy protocol, connection information, such as the source IP address, destination IP address, and port numbers, is injected into the request before being sent to the back-end instance.

Before enabling proxy protocol, verify that your load balancer is not already behind a proxy server with proxy protocol enabled. If proxy protocol is enabled on both the proxy server and the load balancer, the load balancer injects additional configuration information into the request, interfering with the information from the proxy server. Depending on how your back-end instance is configured, this duplication might result in errors.

Proxy protocol is not needed when using an Application Load Balancer, because the Application Load Balancer already inserts HTTP X-Forwarded-For headers. Network Load Balancer provides client IP pass-through to Amazon EC2 instances (if you use the instance ID when configuring a target).
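To make the injected connection information concrete, the sketch below parses a human-readable Proxy Protocol version 1 header of the form `PROXY TCP4 <source IP> <destination IP> <source port> <destination port>` followed by a carriage return and line feed. It assumes the back-end application reads this line itself before handling the payload; the function name `parse_proxy_v1` and the sample addresses are hypothetical.

```python
def parse_proxy_v1(data: bytes):
    """Split a Proxy Protocol v1 header from the payload that follows it.

    Returns (info, rest), where info is a dict of connection details,
    or None when the header reports an UNKNOWN connection.
    """
    header, separator, rest = data.partition(b"\r\n")
    if not separator or not header.startswith(b"PROXY "):
        raise ValueError("no Proxy Protocol v1 header found")
    parts = header.decode("ascii").split(" ")
    if parts[1] == "UNKNOWN":
        return None, rest
    if len(parts) != 6 or parts[1] not in ("TCP4", "TCP6"):
        raise ValueError("malformed Proxy Protocol v1 header")
    _, family, src_ip, dst_ip, src_port, dst_port = parts
    info = {
        "family": family,
        "source_ip": src_ip,
        "destination_ip": dst_ip,
        "source_port": int(src_port),
        "destination_port": int(dst_port),
    }
    return info, rest


sample = b"PROXY TCP4 198.51.100.22 203.0.113.7 35646 80\r\nGET / HTTP/1.1\r\n\r\n"
info, payload = parse_proxy_v1(sample)
print(info["source_ip"], info["source_port"])  # 198.51.100.22 35646
print(payload)                                 # b'GET / HTTP/1.1\r\n\r\n'
```

A back end that receives two such headers, one from an upstream proxy and one from the load balancer, would see the second header as part of the payload, which is the kind of duplication error described above.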

Sticky Sessions

This feature is applicable to Classic Load Balancer and Application Load Balancer. By default, an Application Load Balancer or Classic Load Balancer routes each request independently to the registered instance with the smallest load. You can also use the sticky session feature (also known as session affinity), which enables the load balancer to bind a user’s session to a specific instance. This ensures that all requests from the user during the session are sent to the same instance.

The key to managing sticky sessions with the Classic Load Balancer is to determine how long your load balancer should consistently route the user’s request to the same instance. If your application has its own session cookie, you can configure the Classic Load Balancer so that stickiness follows the duration specified by the application’s session cookie. If your application does not have its own session cookie, you can configure Elastic Load Balancing to create a session cookie by specifying your own stickiness duration. Elastic Load Balancing creates a cookie named AWSELB that is used to map the session to the instance.

Sessions for the Network Load Balancer are inherently sticky due to the flow hashing algorithm used.
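The reason flow hashing produces sticky behavior can be seen in a toy model: if the target is chosen by hashing fields that stay constant for the life of a connection, every packet of that connection maps to the same target. The sketch below is not the Network Load Balancer's actual algorithm; the choice of fields (protocol, source and destination address and port), the use of SHA-256, and the name `pick_target` are assumptions made for illustration.

```python
import hashlib


def pick_target(targets, protocol, src_ip, src_port, dst_ip, dst_port):
    """Choose a target deterministically from the fields that identify a flow."""
    key = f"{protocol}|{src_ip}|{src_port}|{dst_ip}|{dst_port}".encode("ascii")
    digest = hashlib.sha256(key).digest()
    # Use the first 8 bytes of the digest as an integer index into the target list.
    index = int.from_bytes(digest[:8], "big") % len(targets)
    return targets[index]


targets = ["i-aaa", "i-bbb", "i-ccc"]  # hypothetical instance IDs
first = pick_target(targets, "tcp", "198.51.100.22", 35646, "203.0.113.7", 443)
again = pick_target(targets, "tcp", "198.51.100.22", 35646, "203.0.113.7", 443)
print(first == again)  # True: the same flow always maps to the same target
```

A new connection from the same client typically uses a different source port, so it forms a different flow and may land on a different target.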

Health Checks

Elastic Load Balancing supports health checks to test the status of the Amazon EC2 instances behind an Elastic Load Balancing load balancer. For the Classic Load Balancer, the status of the instances that are healthy at the time of the health check is InService, and any instances that are unhealthy at the time of the health check are labeled as OutOfService.

For Network Load Balancer and Application Load Balancer, before the load balancer will send a health check request to the target, you must register it with a target group, specify its target group in a listener rule, and ensure that the Availability Zone of the target is enabled for the load balancer. There are also five health check states for targets:

Initial: This state is where the load balancer is in the process of registering the target or performing the initial health checks on the target.

Healthy: This state is where the target is healthy.

Unhealthy: This state is where the target did not respond to a health check or failed the health check.

Unused: This state is where the target is not registered with a target group, the target group is not used in a listener rule for the load balancer, or the target is in an Availability Zone that is not enabled for the load balancer.

Draining: This state is where the target is deregistering and connection draining is in process.

In general, the load balancer performs health checks on all registered instances to determine whether each instance is in a healthy state or an unhealthy state. A health check is a ping, a connection attempt, or a page that is checked periodically. You can set the time interval between health checks and the amount of time to wait for a response, which is useful when the health check page involves some computation. Lastly, you can set a threshold for the number of consecutive health check failures before an instance is marked as unhealthy.
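The effect of a consecutive-failure threshold can be sketched as a small state tracker. This is a simplified model rather than the service's implementation: the class name `HealthTracker` is hypothetical, only three of the states are modeled, and the `healthy_threshold` for consecutive successes is an assumption added for symmetry with the failure threshold described above.

```python
class HealthTracker:
    """Track one target's state from a stream of health check results."""

    def __init__(self, unhealthy_threshold=2, healthy_threshold=2):
        self.unhealthy_threshold = unhealthy_threshold
        self.healthy_threshold = healthy_threshold  # assumption: not described in the text
        self.successes = 0
        self.failures = 0
        self.state = "initial"

    def record(self, passed: bool) -> str:
        """Record one health check result and return the resulting state."""
        if passed:
            self.failures = 0
            self.successes += 1
            if self.successes >= self.healthy_threshold:
                self.state = "healthy"
        else:
            self.successes = 0
            self.failures += 1
            if self.failures >= self.unhealthy_threshold:
                self.state = "unhealthy"
        return self.state


tracker = HealthTracker(unhealthy_threshold=2, healthy_threshold=2)
results = [True, True, False, True, False, False]
print([tracker.record(r) for r in results])
# ['initial', 'healthy', 'healthy', 'healthy', 'healthy', 'unhealthy']
```

The single failure in the third check does not change the state, because a passing check resets the failure count before the threshold of two consecutive failures is reached.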

ELB Sandwich

When you want to deploy virtual load balancers on Amazon EC2 instances, it is up to you to manage the redundancy of these instances. As the Elastic Load Balancing service has native redundancy that is managed by AWS, you can use a combination of Elastic Load Balancing load balancers and virtual load balancers on instances. Virtual load balancers may be used when you want access to vendor-specific features, such as F5 Big-IP iRules. An overview of the ELB sandwich is shown in Figure 6.10.

FIGURE 6.10 ELB sandwich

The ELB sandwich uses an Elastic Load Balancing load balancer to serve front-end traffic from users, such as web users wanting to reach a website. The first load balancing layer in the ELB sandwich is made of an Elastic Load Balancing load balancer and is represented by the ELB FQDN. The FQDN of the load balancer could also be referred to by an Amazon Route 53 domain name pointing to the load balancer via an Alias record. The first layer of an ELB sandwich, usually the Classic Load Balancer or Network Load Balancer, will then load balance across your virtual instances such as HAProxy, NGINX, or the F5 LTM Big-IP Virtual Edition. The virtual load balancer layer will then forward traffic on to your second layer of Elastic Load Balancing, which then load balances across your front-end application tier. The ELB sandwich is a method of using native high availability that is built into the Elastic Load Balancing product to provide higher availability for Amazon EC2 virtual instances.

Many virtual load balancers that run in AWS cannot natively refer to their next hop via an FQDN. For the traditional ELB sandwich using Classic Load Balancer, however, the virtual load balancers should reference the FQDN of the second-layer Elastic Load Balancing load balancer when forwarding traffic to it, because the instances within the Elastic Load Balancing load balancer may change at any point. Pointing directly to the IP addresses of the second tier of load balancers could cause traffic loss if the Classic Load Balancer IPs change, as happens during Classic Load Balancer scaling.

Virtual load balancing vendors have, in some cases, implemented solutions that will periodically look up the FQDN of a load balancer and store these IPs as the next hop for traffic. This, however, requires periodic lookups of the Classic Load Balancer’s FQDN. An alternate solution is to use the Network Load Balancer as the second tier of load balancers in front of the web tier. This is a better solution because the Network Load Balancer supports having a single static IP per Availability Zone, which the virtual layer of load balancers can point to rather than having to perform periodic lookups of the load balancer’s FQDN.
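The periodic lookup approach can be sketched as a function that re-resolves the load balancer's FQDN and swaps in the new next-hop list only when it changes. This is a minimal illustration, not any vendor's implementation: the function names, the injectable `resolver` parameter, and the FQDN shown are hypothetical, and a real deployment would call `refresh_next_hops` on a timer that respects the DNS record's TTL.

```python
import socket


def resolve_ipv4(fqdn):
    """Resolve an FQDN to a sorted list of unique IPv4 addresses."""
    infos = socket.getaddrinfo(fqdn, None, family=socket.AF_INET, type=socket.SOCK_STREAM)
    return sorted({info[4][0] for info in infos})


def refresh_next_hops(fqdn, current, resolver=resolve_ipv4):
    """Return (next_hops, changed) after one lookup of the load balancer's FQDN."""
    try:
        latest = resolver(fqdn)
    except OSError:
        # Lookup failed: keep forwarding to the last known addresses.
        return current, False
    if latest and latest != current:
        return latest, True
    return current, False


# Stub resolver standing in for DNS, so the example runs without network access.
def stub_resolver(fqdn):
    return ["10.0.1.25", "10.0.2.40"]


hops, changed = refresh_next_hops("internal-second-tier.example.com", ["10.0.1.25"], stub_resolver)
print(hops, changed)  # ['10.0.1.25', '10.0.2.40'] True
```

With a Network Load Balancer as the second tier, this loop becomes unnecessary, because the virtual load balancers can be configured once with the static IP address of each Availability Zone.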

Summary