Chapter 8

Facility Location Models

8.1 Introduction

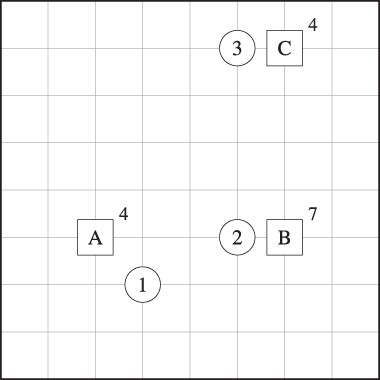

One of the major strategic decisions faced by firms is the number and locations of factories, warehouses, retailers, or other physical facilities. This is the purview of a large class of models known as facility location problems. The key trade‐off in most facility location problems is between the facility cost and customer service. If we open a lot of facilities (Figure 8.1(a)), we incur high facility costs (to build and maintain them), but we can provide good service since most customers are close to a facility. On the other hand, if we open few facilities (Figure 8.1(b)), we reduce our facility costs but must travel farther to reach our customers (or they to reach us).

Figure 8.1 Facility location configurations. Squares represent facilities; circles represent customers.

Most (but not all) location problems make two related sets of decisions: (1) where to locate, and (2) which customers are assigned or allocated to which facilities. Therefore, facility location problems are also sometimes known as location–allocation problems.

A huge range of approaches has been considered for modeling facility location decisions. These differ in terms of how they model facility costs (for example, some include the costs explicitly, while others impose a constraint on the number of facilities to be opened) and how they model customer service (for example, some include a transportation cost, while others require all or most customers to be covered, that is, served by a facility that is within some specified distance). Facility location problems come in a great variety of flavors based on what types of facilities are to be located, whether the facilities are capacitated, which (if any) elements of the problem are stochastic, what topology the facilities may be located on (e.g., on the plane, in a network, or at discrete points), how distances or transportation costs are measured, and so on. Several excellent textbooks provide additional material for the interested reader; for example, see Mirchandani and Francis (1990), Drezner (1995a), Drezner and Hamacher (2002), or Daskin (2013). For an annotated bibliography of papers on facility location problems, see ReVelle et al. (2008b). The book by Eiselt and Marianov (2011) contains chapters on a number of seminal papers in facility location, each describing the original contribution as well as later extensions.

In addition to supply chain facilities such as plants and warehouses, location models have been applied to public sector facilities such as bus depots and fire stations, as well as to telecommunications hubs, satellite orbits, bank accounts, and other items that are not really “facilities” at all. In addition, many operations research problems can be formulated as facility location problems or have subproblems that resemble them. Facility location problems are often easy to state and formulate but are difficult to solve; this makes them a popular testing ground for new optimization tools. For all of these reasons, facility location problems are an important topic in operations research, and in supply chain management in particular, in both theoretical and applied work.

In this chapter, we will begin by discussing a classical facility location model, the uncapacitated fixed‐charge location problem (UFLP), in Section 8.2. The UFLP and its descendants have been deployed more widely in supply chain management than perhaps any other location model. One reason for this is that the UFLP is very flexible and, although it is NP‐hard, lends itself to a variety of effective solution methods. Another reason is that the UFLP includes explicit costs for both key elements of the problem—facilities and customer service—and is therefore well suited to supply chain applications.

In Section 8.3, we discuss other so‐called minisum models (in particular, the p‐median problem and a capacitated version of the UFLP), and in Section 8.4, we discuss covering models (including the p‐center, set covering, and maximal covering problems). We briefly discuss a variety of other deterministic facility location problems in Section 8.5. In Section 8.6, we introduce stochastic and robust models for facility location under uncertainty. We then discuss models for network design—a close cousin of facility location—in Section 8.7.

8.2 The Uncapacitated Fixed‐Charge Location Problem

8.2.1 Problem Statement

The uncapacitated fixed‐charge location problem (UFLP) chooses facility locations in order to minimize the total cost of building the facilities and transporting goods from facilities to customers. The UFLP makes location decisions for a single echelon, and the facilities in that echelon are assumed to serve facilities in a downstream echelon, all of whose locations are fixed. We will tend to refer to the facilities in the upstream echelon as distribution centers (DCs) or warehouses and to those in the downstream echelon as customers. However, the model is generic, and the two echelons may instead contain other types of facilities (for example, factories and warehouses, regional and local DCs, or even fire stations and homes). Sometimes it's also useful to think of an upstream echelon, again with fixed location(s), that serves the DCs.

Each potential DC location has a fixed cost that represents building (or leasing) the facility; the fixed cost is independent of the volume that passes through the DC. There is a transportation cost per unit of product shipped from a DC to each customer. There is a single product. The DCs have no capacity restrictions—any amount of product can be handled by any DC. (We'll relax this assumption in Section 8.3.1.) The problem is to choose facility locations to minimize the fixed cost of building facilities plus the transportation cost to transport product from DCs to customers, subject to constraints requiring every customer to be served by some open DC.

As noted above, the key trade‐off in the UFLP is between fixed and transportation costs. If too few facilities are open, the fixed cost is small, but the transportation cost is large because many customers will be far from their assigned facility. On the other hand, if too many facilities are open, the fixed cost is large, but the transportation cost is small. The UFLP tries to find the right balance, and to optimize not only the number of facilities, but also their locations.

8.2.2 Formulation

Define the following notation:

| Sets | |
|---|---|
| $I$ | = set of customers |
| $J$ | = set of potential facility locations |
| **Parameters** | |
| $h_i$ | = annual demand of customer $i \in I$ |
| $c_{ij}$ | = cost to transport one unit of demand from facility $j \in J$ to customer $i \in I$ |
| $f_j$ | = fixed annual cost to open a facility at site $j \in J$ |
| **Decision Variables** | |
| $x_j$ | = 1 if facility $j$ is opened, 0 otherwise |
| $y_{ij}$ | = the fraction of customer $i$'s demand that is served by facility $j$ |

The transportation costs $c_{ij}$ might be of the form $k \times \text{distance}$ for some constant $k$ (if the shipping company charges $k$ per mile per unit) or may be more arbitrary (for example, based on airline ticket prices, which are not linearly related to distance). In the former case, distances may be computed in a number of ways:

- Euclidean distance: The distance between $(a_1, b_1)$ and $(a_2, b_2)$ is given by

  $$\sqrt{(a_1 - a_2)^2 + (b_1 - b_2)^2}.$$

  The Euclidean distance metric is also known as the $\ell_2$ norm. This is an intuitive measure of distance but is not usually applicable in supply chain contexts because Cartesian coordinates are not useful for describing real‐world locations.

- Manhattan or rectilinear metric: The distance is given by

  $$|a_1 - a_2| + |b_1 - b_2|.$$

  This metric assumes that travel is only possible parallel to one of the coordinate axes, e.g., travel along city streets. It is also known as the $\ell_1$ norm.

- Great circle: This method for calculating distances takes into account the curvature of the earth and, more importantly, takes latitudes and longitudes as inputs and returns distances in miles or kilometers. Great circle distances assume that travel occurs over a great circle, the shortest route over the surface of a sphere. Let $(\alpha_1, \beta_1)$ and $(\alpha_2, \beta_2)$ be the latitude and longitude of two points in radians, and let $\Delta\alpha = \alpha_1 - \alpha_2$ and $\Delta\beta = \beta_1 - \beta_2$ be the differences in the latitude and longitude (respectively). Then the great circle distance between the two points is given by

  $$2r \arcsin\left(\sqrt{\sin^2\left(\frac{\Delta\alpha}{2}\right) + \cos\alpha_1 \cos\alpha_2 \sin^2\left(\frac{\Delta\beta}{2}\right)}\right), \tag{8.1}$$

  where $r$ is the radius of the Earth, approximately 3958.76 miles or 6371.01 km (on average), and the trigonometric functions are assumed to use radians.

  A simpler formula, known as the spherical law of cosines, sets the distance equal to

  $$r \arccos\left(\sin\alpha_1 \sin\alpha_2 + \cos\alpha_1 \cos\alpha_2 \cos(\Delta\beta)\right) \tag{8.2}$$

  and is nearly as accurate as 8.1 except when the distance between the two points is very small. (See Problem 8.44.)

- Highway/network: The distance is computed as the shortest path within a network, for example, the US highway network. This is usually the most accurate method for calculating distances in a supply chain context. However, since network distances require data on the entire road network, they must be obtained from geographic information systems (GIS) or from online services such as MapQuest or Google Maps. (In contrast, the distance measures above can be calculated from simple formulas using only the coordinates of the facilities and customers.)

- Matrix: Sometimes a matrix containing the distance between every pair of points is given explicitly. This is the most general measure, since all others can be considered a special case. It is also the only possible measure when the cost structure exhibits no particular pattern, for example, when costs are based on airline ticket prices.
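The formula-based measures above can be sketched in a few lines of Python. This is an illustrative sketch only: the function names are hypothetical, the great circle functions assume inputs in degrees (converted to radians internally), and the two city coordinates are approximate values chosen for the example.

```python
import math

EARTH_RADIUS_MILES = 3958.76  # mean radius quoted in the text

def euclidean(p, q):
    """Straight-line distance between planar points p and q."""
    return math.hypot(p[0] - q[0], p[1] - q[1])

def manhattan(p, q):
    """Rectilinear distance: travel parallel to the coordinate axes only."""
    return abs(p[0] - q[0]) + abs(p[1] - q[1])

def great_circle(p, q, r=EARTH_RADIUS_MILES):
    """Great circle distance; p and q are (latitude, longitude) in degrees."""
    lat1, lon1 = map(math.radians, p)
    lat2, lon2 = map(math.radians, q)
    dlat, dlon = lat2 - lat1, lon2 - lon1
    a = (math.sin(dlat / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin(dlon / 2) ** 2)
    return 2 * r * math.asin(math.sqrt(a))

def law_of_cosines(p, q, r=EARTH_RADIUS_MILES):
    """Spherical law of cosines; same inputs as great_circle."""
    lat1, lon1 = map(math.radians, p)
    lat2, lon2 = map(math.radians, q)
    c = (math.sin(lat1) * math.sin(lat2)
         + math.cos(lat1) * math.cos(lat2) * math.cos(lon2 - lon1))
    # Clamp to [-1, 1] so rounding error cannot push acos out of its domain.
    return r * math.acos(max(-1.0, min(1.0, c)))

# Approximate coordinates, for illustration only.
new_york = (40.7128, -74.0060)
los_angeles = (34.0522, -118.2437)
print(round(great_circle(new_york, los_angeles), 1))
print(round(law_of_cosines(new_york, los_angeles), 1))
```

For points this far apart the two spherical formulas agree closely; the difference only becomes noticeable for points that are very near each other.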

In general, we won't be concerned with how transportation costs are computed; we'll assume they are given to us already as the parameters $c_{ij}$.

The UFLP is formulated as follows:

$$
\begin{aligned}
\text{(UFLP)} \quad \text{minimize} \quad & \sum_{j \in J} f_j x_j + \sum_{i \in I} \sum_{j \in J} h_i c_{ij} y_{ij} & & & \quad (8.3) \\
\text{subject to} \quad & \sum_{j \in J} y_{ij} = 1 & \quad & \forall i \in I & \quad (8.4) \\
& y_{ij} \le x_j & \quad & \forall i \in I,\ \forall j \in J & \quad (8.5) \\
& x_j \in \{0, 1\} & \quad & \forall j \in J & \quad (8.6) \\
& y_{ij} \ge 0 & \quad & \forall i \in I,\ \forall j \in J & \quad (8.7)
\end{aligned}
$$

Formulations very similar to this were originally proposed by Manne (1964) and Balinski (1965). The objective function 8.3 computes the total (fixed plus transportation) cost. In the discussion that follows, we'll use $z^*$ to denote the optimal objective value of (UFLP). Constraints 8.4 require the full amount of every customer's demand to be assigned to one or more facilities. These are often called assignment constraints. Constraints 8.5 prohibit a customer from being assigned to a facility that has not been opened. These are often called linking constraints. Constraints 8.6 require the location (x) variables to be binary, and constraints 8.7 require the assignment (y) variables to be nonnegative.

Constraints 8.4 and 8.7 together ensure that $0 \le y_{ij} \le 1$. In fact, it is always optimal to assign each customer solely to its nearest open facility. (Why?) Therefore, there always exists an optimal solution in which $y_{ij} \in \{0, 1\}$ for all $i \in I$, $j \in J$. It is therefore appropriate to think of the $y_{ij}$ as binary variables and to talk about “the facility to which customer i is assigned.”
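Because each customer can simply be assigned to its cheapest open facility, the cost of any candidate set of open sites is easy to evaluate. The sketch below uses this fact to solve a tiny, made-up instance by enumerating every nonempty subset of sites. The function names and data are hypothetical, and brute-force enumeration is shown only to make the model concrete; it is not one of the solution methods discussed in this chapter and is impractical beyond a handful of sites. Here `h`, `c`, and `f` play the roles of the demands, unit transportation costs, and fixed costs, with `c[i][j]` indexed by customer `i` and facility `j`.

```python
from itertools import combinations

def uflp_cost(open_sites, h, c, f):
    """Total cost when every customer uses its cheapest open facility."""
    fixed = sum(f[j] for j in open_sites)
    transport = sum(h[i] * min(c[i][j] for j in open_sites)
                    for i in range(len(h)))
    return fixed + transport

def solve_by_enumeration(h, c, f):
    """Try every nonempty subset of sites; return (best cost, best subset)."""
    best_cost, best_sites = None, None
    for k in range(1, len(f) + 1):
        for subset in combinations(range(len(f)), k):
            cost = uflp_cost(subset, h, c, f)
            if best_cost is None or cost < best_cost:
                best_cost, best_sites = cost, subset
    return best_cost, best_sites

# Hypothetical instance: 3 customers (rows of c), 3 candidate sites (columns).
h = [10, 20, 15]
c = [[1, 4, 6],
     [5, 1, 3],
     [6, 3, 1]]
f = [20, 50, 20]

print(solve_by_enumeration(h, c, f))  # → (125, (0, 2))
```

In this instance, opening sites 0 and 2 costs 40 in fixed charges and 85 in transportation, which beats both opening a single site and opening all three.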

Another way to write constraints 8.5 is

$$\sum_{i \in I} y_{ij} \le |I| x_j \quad \forall j \in J. \tag{8.8}$$

If $x_j = 1$, then $y_{ij}$ can be 1 for any or all $i \in I$, while if $x_j = 0$, then $y_{ij}$ must be 0 for all $i$. These constraints are equivalent to 8.5 for the IP. But the LP relaxation is weaker (i.e., it provides a weaker bound) if constraints 8.8 are used instead of 8.5. This is because there are solutions that are feasible for the LP relaxation with 8.8 that are not feasible for the LP relaxation with 8.5. To take a trivial example, suppose there are 2 facilities and 10 customers with equal demand, and suppose each facility serves 5 customers in a given solution. Then it is feasible to set $x_1 = x_2 = \frac{1}{2}$ for the problem with 8.8 but not with 8.5. Since the feasible region for the problem with 8.8 is larger than that for the problem with 8.5, its objective value is no greater. It is important to understand that the IPs have the same optimal objective value, but the LPs have different values: one provides a weaker LP bound than the other.
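The trivial example above can be checked directly. The following sketch (with hypothetical helper names) tests a fractional solution against both forms of the linking constraints: the disaggregated form $y_{ij} \le x_j$ and the aggregated form in which the assignments to facility $j$ sum to at most $|I| x_j$.

```python
def satisfies_disaggregated(x, y):
    """Linking constraints in the form y[i][j] <= x[j] for all i, j."""
    return all(y[i][j] <= x[j]
               for i in range(len(y)) for j in range(len(x)))

def satisfies_aggregated(x, y):
    """Aggregated form: sum_i y[i][j] <= |I| * x[j] for all j."""
    n = len(y)
    return all(sum(y[i][j] for i in range(n)) <= n * x[j]
               for j in range(len(x)))

# 10 customers, 2 facilities; each facility serves 5 customers.
y = [[1, 0]] * 5 + [[0, 1]] * 5
x_half = [0.5, 0.5]

print(satisfies_aggregated(x_half, y))     # → True
print(satisfies_disaggregated(x_half, y))  # → False
```

With both facilities only half open, the aggregated relaxation pays only half of each fixed cost, which is why its bound is weaker.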

The UFLP is NP‐hard (Garey and Johnson, 1979). A large number of solution methods have been proposed in the literature over the past several decades, both exact algorithms and heuristics. Some of the earliest exact algorithms involve simply solving the IP using branch‐and‐bound. Today, this would mean solving (UFLP) as‐is using CPLEX, Gurobi, or another off‐the‐shelf IP solver, although such general‐purpose solvers did not exist when the UFLP was first formulated. This approach works quite well using modern solvers, in part because the LP relaxation of (UFLP) is usually extremely tight, and in fact it often results in all‐integer solutions “for free” (Morris, 1978). (ReVelle and Swain (1970) discuss this property in the context of a related problem, the p‐median problem.) Current versions of CPLEX or Gurobi can solve instances of the UFLP with thousands of potential facility sites in a matter of minutes. However, when it was first proposed that branch‐and‐bound be used to solve the UFLP (by Efroymson and Ray (1966)), IP technology was much less advanced, and this approach could only be used to solve problems of modest size. Therefore, a number of other optimal approaches were developed. Two of these, Lagrangian relaxation and a dual‐ascent method called DUALOC, are discussed in Sections 8.2.3 and 8.2.4, respectively. Many other IP techniques, such as Dantzig–Wolfe or Benders decomposition, have also been successfully applied to the UFLP (e.g., Balinski (1965) and Swain (1974)). We discuss heuristic methods for the UFLP in Section 8.2.5.

8.2.3 Lagrangian Relaxation

8.2.3.1 Introduction

One of the methods that has proven to be most effective for the UFLP and other location problems is Lagrangian relaxation, a standard technique for integer programming (as well as other types of optimization problems). The basic idea behind Lagrangian relaxation is to remove a set of constraints to create a problem that's easier to solve than the original. But instead of just removing the constraints, we include them in the objective function by adding a term that penalizes solutions for violating the constraints. This process gives a lower bound on the optimal objective value of the UFLP, but it does not necessarily give a feasible solution. Feasible solutions must be found using some other method (to be described below); each feasible solution provides an upper bound on the optimal objective value. When the upper and lower bounds are close (say, within 1%), we know that the feasible solution we have found is close to optimal.

For more details on Lagrangian relaxation, see Appendix D.1. See also Fisher (1981, 1985) for excellent overviews. Lagrangian relaxation was proposed as a method for solving a UFLP‐like problem by Cornuejols et al. (1977).

We want to use Lagrangian relaxation on the UFLP formulation given in Section 8.2.2. The question is, which constraints should we relax? There are only two options: 8.4 and 8.5. (Constraints 8.6 and 8.7 can't be relaxed using Lagrangian relaxation.) Relaxing either 8.4 or 8.5 results in a problem that is quite easy to solve, and both relaxations produce the same bound (for reasons discussed below). But relaxing 8.4 involves relaxing fewer constraints, which is generally preferable (also for reasons that will be discussed below). Therefore, we will relax constraints 8.4, although in Section 8.2.3.8 we will briefly discuss what happens when constraints 8.5 are relaxed.

8.2.3.2 Relaxation

We relax constraints 8.4, removing them from the problem and adding a penalty term to the objective function:

$$\sum_{i \in I} \lambda_i \left(1 - \sum_{j \in J} y_{ij}\right).$$

The $\lambda_i$ are called Lagrange multipliers. There is one for each relaxed constraint. Their purpose is to ensure that violations in the constraints are penalized by just the right amount (more on this later). We'll use $\lambda$ to represent the vector of $\lambda_i$ values.

For now, assume $\lambda$ is fixed. Relaxing constraints 8.4 gives us the following problem, known as the Lagrangian subproblem:

$$
\begin{aligned}
\text{(UFLP-LR}_\lambda\text{)} \quad \text{minimize} \quad & \sum_{j \in J} f_j x_j + \sum_{i \in I} \sum_{j \in J} h_i c_{ij} y_{ij} + \sum_{i \in I} \lambda_i \left(1 - \sum_{j \in J} y_{ij}\right) & & & \\
= {} & \sum_{j \in J} f_j x_j + \sum_{i \in I} \sum_{j \in J} (h_i c_{ij} - \lambda_i) y_{ij} + \sum_{i \in I} \lambda_i & & & \quad (8.9) \\
\text{subject to} \quad & y_{ij} \le x_j & \quad & \forall i \in I,\ \forall j \in J & \quad (8.10) \\
& x_j \in \{0, 1\} & \quad & \forall j \in J & \quad (8.11) \\
& y_{ij} \ge 0 & \quad & \forall i \in I,\ \forall j \in J & \quad (8.12)
\end{aligned}
$$

(The subscript $\lambda$ on the problem name reminds us that this problem depends on $\lambda$ as a parameter.) Since the $\lambda_i$ are all constants, the last term of 8.9 can be ignored during the optimization.

How can we solve this problem? It turns out that the problem is quite easy to solve by inspection; we don't need to use an IP solver or any sort of complicated algorithm. Suppose that we set $x_j = 1$ for a given facility $j$. By constraints 8.10, setting $x_j = 1$ allows $y_{ij}$ to be set to 1 for any $i \in I$. For which $i$ would $y_{ij}$ be set to 1 in an optimal solution to the problem? Since this is a minimization problem, $y_{ij}$ would be set to 1 for all $i$ such that $h_i c_{ij} - \lambda_i < 0$. So if $x_j$ were set to 1, the benefit (or contribution to the objective function) would be

$$\beta_j = \sum_{i \in I} \min\{0, h_i c_{ij} - \lambda_i\}.$$

Now the question is, which $x_j$ should be set to 1? It's optimal to set $x_j = 1$ if and only if $\beta_j + f_j < 0$; that is, if the benefit of opening the facility outweighs its fixed cost. Theorem 8.1 summarizes these conclusions.

Notice that in optimal solutions to (UFLP‐LRλ), customers may be assigned to 0 or more than 1 facility since the constraints requiring exactly one facility per customer have been relaxed.

Why is this problem so much easier to solve than the original problem? The answer is that (UFLP‐LRλ) decomposes by j, in the sense that we can focus on each $j \in J$ individually since there are no constraints tying them together. In the original problem, constraints 8.4 tied the js together: we could not make a decision about $y_{ij}$ without also making a decision about $y_{ik}$, since i had to be assigned to exactly one facility.

The method for solving (UFLP‐LRλ) is summarized in Algorithm 8.1.
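A minimal sketch of this solve-by-inspection rule is given below. It follows the derivation above rather than any particular published pseudocode; the function name and the small data set are hypothetical. For each facility, it computes the benefit as the sum of the negative values of $h_i c_{ij} - \lambda_i$, opens the facility if the benefit plus the fixed cost is negative, and assigns every customer whose coefficient is negative.

```python
def solve_lagrangian_subproblem(lam, h, c, f):
    """Solve the subproblem for fixed multipliers lam; return (x, y, value)."""
    n_cust, n_fac = len(h), len(f)
    x = [0] * n_fac
    y = [[0] * n_fac for _ in range(n_cust)]
    value = sum(lam)  # constant term of the relaxed objective
    for j in range(n_fac):
        # Benefit of opening j: sum of the negative coefficients h_i*c_ij - lam_i.
        beta = sum(min(0, h[i] * c[i][j] - lam[i]) for i in range(n_cust))
        if beta + f[j] < 0:  # benefit outweighs the fixed cost
            x[j] = 1
            value += beta + f[j]
            for i in range(n_cust):
                if h[i] * c[i][j] - lam[i] < 0:
                    y[i][j] = 1
    return x, y, value

# Hypothetical instance: 3 customers (rows of c), 3 candidate sites (columns).
h = [10, 20, 15]
c = [[1, 4, 6],
     [5, 1, 3],
     [6, 3, 1]]
f = [20, 50, 20]

print(solve_lagrangian_subproblem([20, 30, 30], h, c, f)[2])  # → 80
print(solve_lagrangian_subproblem([40, 70, 45], h, c, f)[2])  # → 125
```

The work is one pass over the customer-facility pairs, since each facility is handled independently of the others.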

8.2.3.3 Lower Bound

We've now solved (UFLP‐LRλ) for given $\lambda$. How does this help us? Well, from Theorem D.1, we know that, for any $\lambda$, the optimal objective value of (UFLP‐LRλ), denoted $z_{LR}(\lambda)$, is a lower bound on the optimal objective value $z^*$ for the original problem:

$$z_{LR}(\lambda) \le z^*. \tag{8.16}$$

The point of Lagrangian relaxation is not to generate feasible solutions, since the solutions to (UFLP‐LRλ) will generally be infeasible for (UFLP). Instead, the point is to generate good (i.e., high) lower bounds in order to prove that a feasible solution we've found some other way is good. For example, if we've found a feasible solution for the UFLP (using any method at all) whose objective value is 1005 and we've also found a $\lambda$ so that $z_{LR}(\lambda) = 1000$, then we know our solution is no more than $(1005 - 1000)/1000 = 0.5\%$ away from optimal. (It may in fact be exactly optimal, but given these two bounds, we can only say it's within 0.5%.)

Now, if we pick $\lambda$ at random, we're not likely to get a particularly good bound; that is, $z_{LR}(\lambda)$ won't be close to $z^*$. We have to choose $\lambda$ cleverly so that we get the best possible bound, that is, so that $z_{LR}(\lambda)$ is as large as possible. In other words, we want to solve problem (LR) given in (D.8), which, for the UFLP, can be written as follows:

$$\text{(LR)} \quad \max_{\lambda} \; z_{LR}(\lambda). \tag{8.17}$$

We'll talk more later about how to solve this problem. For now, let's assume we know the optimal $\lambda$ and that the optimal objective value is $z_{LR}$. How large can $z_{LR}$ be? Theorem D.1 tells us it cannot be larger than $z^*$, but how close can it get? The answer turns out to be related to the LP relaxation of the problem. From Theorem D.2, we have

$$z_{LP} \le z_{LR}, \tag{8.18}$$

where $z_{LP}$ is the optimal objective value of the LP relaxation of (UFLP) and $z_{LR}$ is the optimal objective value of (LR).

Combining 8.16 and 8.18, we now know that

$$z_{LP} \le z_{LR} \le z^*. \tag{8.19}$$

For most problems, $z_{LP} < z^*$, so where in the gap does $z_{LR}$ fall? An IP is said to have the integrality property if its LP relaxation naturally has an all‐integer optimal solution. You should be able to convince yourself that (UFLP‐LRλ) has the integrality property for all $\lambda$ since it is never better to set x and y to fractional values. Therefore, the following is a corollary to Lemma D.3:

Corollary 8.1. For the UFLP, $z_{LR} = z_{LP}$.

Combining 8.19 and Corollary 8.1, we have

$$z_{LP} = z_{LR} \le z^*.$$

This means that if the LP relaxation bound from the UFLP is not very tight, the Lagrangian relaxation bound won't be very tight either. Fortunately, as noted in Section 8.2.2, the UFLP tends to have very tight LP relaxation bounds. This raises the question of why we'd want to use Lagrangian relaxation at all since the LP bound is just as tight.

There are several possible answers to this question. The first is that when Lagrangian relaxation was first applied to the UFLP, computer implementations of the simplex method were quite inefficient, and even the LP relaxation of the UFLP could take a long time to solve, whereas the Lagrangian subproblem could be solved quite quickly. Recent implementations of the simplex method, however (for example, recent versions of CPLEX), are much more efficient and are able to solve reasonably large instances of the UFLP—LP or IP—pretty quickly. Nevertheless, Lagrangian relaxation is still an important tool for solving the UFLP. One advantage of this method is that it can often be modified to solve extensions of the UFLP that IP solvers can't solve—for example, nonlinear, nonconvex problems like the location model with risk pooling (LMRP), which we discuss in Section 12.2.

It is important to distinguish between $z_{LR}$ (the best possible lower bound achievable by Lagrangian relaxation) and $z_{LR}(\lambda)$ (the lower bound achieved at a given iteration of the procedure). At any given iteration, we have

$$z_{LR}(\lambda) \le z_{LR} \le z^* \le z(x, y),$$

where $z_{LR}(\lambda)$ is the objective value of the Lagrangian subproblem for the particular $\lambda$ at the current iteration, and $z(x, y)$ is the objective value of the particular feasible solution $(x, y)$ found at the current iteration.

8.2.3.4 Upper Bound

Now that we've obtained a lower bound on the optimal objective of (UFLP) using (UFLP‐LRλ), we need to find an upper bound. Upper bounds come from feasible solutions to (UFLP). How can we build good feasible solutions? One way would be using construction and/or improvement heuristics like those described in Section 8.2.5. But we'd like to take advantage of the information contained in the solutions to (UFLP‐LRλ); that is, we'd like to convert a solution to (UFLP‐LRλ) into one for (UFLP). Remember that solutions to (UFLP‐LRλ) consist of a set of facility locations (identified by the x variables) and a set of assignments (identified by the y variables). It is the y variables that make the solution infeasible for (UFLP), since customers might be assigned to 0 or more than 1 facility. (If every customer happens to be assigned to exactly 1 facility, the solution is also feasible for (UFLP). In fact, it is optimal for (UFLP) since it has the same objective value for both (UFLP‐LRλ), which provides a lower bound, and (UFLP), which provides an upper bound. But we can't expect this to happen in general.)

Generating a feasible solution for (UFLP) is easy: We just open the facilities that are open in the solution to (UFLP‐LRλ) and then assign each customer to its nearest open facility. (See Algorithm 8.2.) The resulting solution is feasible and provides an upper bound on the optimal objective value of (UFLP). Sometimes an improvement heuristic (like the swap or neighborhood search heuristics discussed in Section 8.3.2.3) is applied to each feasible solution found, but this is optional.
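The conversion just described can be sketched as follows. The function name and data are hypothetical. The fallback for the case in which the subproblem opens no facilities is an assumption added here so that the sketch always returns a feasible solution; the text does not prescribe what to do in that case.

```python
def feasible_from_lagrangian(x, h, c, f):
    """Open the facilities with x[j] == 1 and assign customers greedily."""
    n_cust, n_fac = len(h), len(f)
    open_sites = [j for j in range(n_fac) if x[j] == 1]
    if not open_sites:
        # Assumption (not from the text): open the best single site instead.
        open_sites = [min(range(n_fac),
                          key=lambda j: f[j] + sum(h[i] * c[i][j]
                                                   for i in range(n_cust)))]
    # Each customer goes to its cheapest (nearest) open facility.
    assign = [min(open_sites, key=lambda j: c[i][j]) for i in range(n_cust)]
    cost = (sum(f[j] for j in open_sites)
            + sum(h[i] * c[i][assign[i]] for i in range(n_cust)))
    return open_sites, assign, cost

# Hypothetical instance: 3 customers (rows of c), 3 candidate sites (columns).
h = [10, 20, 15]
c = [[1, 4, 6],
     [5, 1, 3],
     [6, 3, 1]]
f = [20, 50, 20]

print(feasible_from_lagrangian([1, 0, 1], h, c, f))  # → ([0, 2], [0, 2, 2], 125)
```

The returned cost is an upper bound on the optimal objective value, whatever multipliers produced the vector `x`.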

8.2.3.5 Updating the Multipliers

Each $\lambda$ gives a single lower bound and (using the method in Section 8.2.3.4) a single upper bound. The Lagrangian relaxation process involves many iterations, each using a different value of $\lambda$, in the hopes of tightening the bounds. It would be impractical to try every possible value of $\lambda$; we want to choose $\lambda$ cleverly.

Using the logic of Section D.1.3, if $\lambda_i$ is too small, there's no real incentive to set the $y_{ij}$ variables to 1 since the penalty will be small. On the other hand, if $\lambda_i$ is too large, there will be an incentive to set lots of $y_{ij}$ variables to 1, making the term $1 - \sum_{j \in J} y_{ij}$ inside the parentheses of the penalty negative and the overall penalty large and negative. (Remember that (UFLP‐LRλ) is a minimization problem.) By changing $\lambda_i$, we'll encourage fewer or more $y_{ij}$ variables to be 1.

So:

- If $\sum_{j \in J} y_{ij} = 0$, then $\lambda_i$ is too small; it should be increased.
- If $\sum_{j \in J} y_{ij} > 1$, then $\lambda_i$ is too large; it should be decreased.
- If $\sum_{j \in J} y_{ij} = 1$, then $\lambda_i$ is just right; it should not be changed.

Here's another way to see the effect of changing $\lambda_i$. Remember that if $x_j = 1$ in the solution to (UFLP‐LRλ), $y_{ij}$ will be set to 1 if

$$h_i c_{ij} - \lambda_i < 0.$$

Increasing $\lambda_i$ makes this hold for more facilities j, while decreasing it makes it hold for fewer.

There are several ways to make these adjustments to $\lambda$. Perhaps the most common is subgradient optimization, discussed in Section D.1.3. For the UFLP, the step size at iteration t (denoted $\Delta^t$) is given by

$$\Delta^t = \frac{\alpha^t \left(\text{UB} - \mathcal{L}^t\right)}{\sum_{i \in I} \left(1 - \sum_{j \in J} y_{ij}\right)^2},$$

where $\mathcal{L}^t$ is the lower bound found at iteration t, UB is the best upper bound found (i.e., the objective value of the best feasible solution found so far), and $\alpha^t$ is a constant that is generally set to 2 at iteration 1 and divided by 2 after a given number (say 20) of consecutive iterations have passed during which the best known lower bound has not improved. The step direction for multiplier $\lambda_i$ is simply given by

$$1 - \sum_{j \in J} y_{ij}$$

(the violation in the constraint).

To obtain the new multipliers (call them $\lambda^{t+1}$) from the old ones ($\lambda^t$), we set

$$\lambda_i^{t+1} = \lambda_i^t + \Delta^t \left(1 - \sum_{j \in J} y_{ij}\right).$$

Note that since $\Delta^t > 0$, this follows the rules given above: If $\sum_{j \in J} y_{ij} = 0$, then $\lambda_i$ increases; if $\sum_{j \in J} y_{ij} > 1$, then $\lambda_i$ decreases; and if $\sum_{j \in J} y_{ij} = 1$, then $\lambda_i$ stays the same.

The process of solving (UFLP‐LRλ), finding a feasible solution, and updating $\lambda$ is continued until some stopping criteria are met. (See Section D.1.4.)

At the first iteration, $\lambda$ can be initialized in a variety of ways: For example, set $\lambda_i = 0$ for all i, set it to some random number, or set it according to some other ad‐hoc rule.

If the Lagrangian procedure stops before the upper and lower bounds are sufficiently close to each other, we can use branch‐and‐bound to close the optimality gap; see Section D.1.6. The Lagrangian procedure is summarized in Section D.1.7.

8.2.3.6 Summary

The Lagrangian relaxation method for the UFLP is summarized in the pseudocode in Algorithm 8.3. The pseudocode distinguishes three solutions: an optimal solution to (UFLP‐LRλ), a feasible solution to (UFLP), and the current best solution for (UFLP). Note that in step 29, other termination criteria can be used, instead or in addition.
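Since Algorithm 8.3 is not reproduced here, the following Python sketch shows one way the pieces of Section 8.2.3 might fit together. It is an illustration under stated assumptions rather than the algorithm itself: the function names, the zero initial multipliers, the tolerance, and the fallback used when no facility is open are all our own choices. The inputs are fixed costs `f[j]`, demands `h[i]`, and unit costs `c[i][j]`.

```python
def solve_lr_subproblem(f, h, c, lam):
    """Solve the subproblem with the assignment constraints relaxed."""
    n_cust, n_fac = len(h), len(f)
    x = [0] * n_fac
    y = [[0] * n_fac for _ in range(n_cust)]
    lower = sum(lam)  # constant term of the Lagrangian objective
    for j in range(n_fac):
        # Benefit of facility j: sum of the negative reduced costs.
        beta = sum(min(0.0, h[i] * c[i][j] - lam[i]) for i in range(n_cust))
        if beta + f[j] < 0:  # open j only if it lowers the objective
            x[j] = 1
            lower += beta + f[j]
            for i in range(n_cust):
                if h[i] * c[i][j] - lam[i] < 0:
                    y[i][j] = 1
    return x, y, lower


def feasible_from(x, f, h, c):
    """Upper bound: keep the open set, assign customers to nearest open facility."""
    n_cust, n_fac = len(h), len(f)
    open_j = [j for j in range(n_fac) if x[j] == 1]
    if not open_j:
        # Assumed fallback: open the single cheapest facility.
        open_j = [min(range(n_fac), key=lambda j: f[j]
                      + sum(h[i] * c[i][j] for i in range(n_cust)))]
    cost = sum(f[j] for j in open_j)
    cost += sum(h[i] * min(c[i][j] for j in open_j) for i in range(n_cust))
    return open_j, cost


def lagrangian_uflp(f, h, c, max_iter=500, tol=1e-6, patience=20):
    """Subgradient loop; returns (best open set, best UB, best LB)."""
    n_cust = len(h)
    lam = [0.0] * n_cust          # assumed initialization
    alpha, stall = 2.0, 0
    best_lb, best_ub, best_open = float("-inf"), float("inf"), None
    for _ in range(max_iter):
        x, y, lb = solve_lr_subproblem(f, h, c, lam)
        open_j, ub = feasible_from(x, f, h, c)
        if ub < best_ub:
            best_ub, best_open = ub, open_j
        if lb > best_lb + 1e-12:
            best_lb, stall = lb, 0
        else:
            stall += 1
            if stall >= patience:  # halve alpha after a run of non-improving iterations
                alpha, stall = alpha / 2.0, 0
        viol = [1 - sum(y[i]) for i in range(n_cust)]  # step direction
        norm = sum(d * d for d in viol)
        if norm == 0 or best_ub - best_lb <= tol * max(1.0, abs(best_ub)):
            break
        step = alpha * (best_ub - lb) / norm           # step size
        lam = [lam[i] + step * viol[i] for i in range(n_cust)]
    return best_open, best_ub, best_lb


# Two facilities, two customers: opening both is optimal at cost 8.
print(lagrangian_uflp([4, 4], [1, 1], [[0, 10], [10, 0]]))
```

On this tiny instance the bounds meet at 8 after three iterations. In general the procedure may stop with a gap, which is where branch‐and‐bound or variable fixing comes in.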

8.2.3.7 Variable Fixing

Sometimes the Lagrangian relaxation procedure terminates with the lower and upper bounds farther apart than we'd like. Before executing branch‐and‐bound to close the gap, we may be able to fix some of the $x_j$ variables to 0 or 1 based on the facility benefits and the current bounds. The variables can be fixed permanently, throughout the entire branch‐and‐bound tree. The more variables we can fix, the faster the branch‐and‐bound procedure is likely to run. Essentially, the method works by “peeking” down a branch of the tree and running a quick check to determine whether the next node down the branch would be fathomed.

Note that, in the second part of the theorem, if $x_j = 1$ then, by 8.14, $\beta_j + f_j < 0$, which is why the left‐hand side of 8.24 might be greater than UB.

This trick has been applied successfully to a variety of facility location problems; see, e.g., Daskin et al. (2002) and Snyder and Daskin (2005). Typically, the conditions in Theorem 8.3 are checked twice after processing has terminated at the root node, once using the most recent multipliers $\lambda$ and once using the multipliers that produced the best‐known lower bound. The time required to check these conditions for every j is negligible.

8.2.3.8 Alternate Relaxation

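As stated above, we could have chosen instead to relax constraints 8.5. In this case, using multipliers $\mu_{ij}$, the Lagrangian subproblem becomes

$$\begin{aligned}
\text{minimize} \quad & \sum_{j \in J} f_j x_j + \sum_{i \in I} \sum_{j \in J} h_i c_{ij} y_{ij} + \sum_{i \in I} \sum_{j \in J} \mu_{ij} (x_j - y_{ij}) \\
\text{subject to} \quad & \sum_{j \in J} y_{ij} = 1 && \forall i \in I \\
& x_j \in \{0, 1\} && \forall j \in J \\
& y_{ij} \ge 0 && \forall i \in I,\ \forall j \in J
\end{aligned}$$

Now every customer must be assigned to a single facility, but that facility need not be open. There are no constraints linking the x and y variables, so the problem can be written as two separate problems:

$$\begin{aligned}
\text{(x‐problem)} \quad \text{minimize} \quad & \sum_{j \in J} \left(f_j + \sum_{i \in I} \mu_{ij}\right) x_j \\
\text{subject to} \quad & x_j \in \{0, 1\} && \forall j \in J
\end{aligned}$$

$$\begin{aligned}
\text{(y‐problem)} \quad \text{minimize} \quad & \sum_{i \in I} \sum_{j \in J} \left(h_i c_{ij} - \mu_{ij}\right) y_{ij} \\
\text{subject to} \quad & \sum_{j \in J} y_{ij} = 1 && \forall i \in I \\
& y_{ij} \ge 0 && \forall i \in I,\ \forall j \in J
\end{aligned}$$

To solve the x‐problem, we simply set $x_j = 1$ for all j such that $f_j + \sum_{i \in I} \mu_{ij} < 0$. (Note that since the constraints relaxed are $\le$ constraints, $\mu \le 0$; see Section D.1.5.1.) To solve the y‐problem, for each i, we set $y_{ij} = 1$ for the j that minimizes $h_i c_{ij} - \mu_{ij}$. The rest of the procedure is similar, except that the step‐size calculation becomes

$$\Delta^t = \frac{\alpha^t \left(\text{UB} - \mathcal{L}^t\right)}{\sum_{i \in I} \sum_{j \in J} \left(x_j - y_{ij}\right)^2}$$

and the multiplier‐updating formula becomes

$$\mu_{ij}^{t+1} = \min\left\{0,\ \mu_{ij}^t + \Delta^t \left(x_j - y_{ij}\right)\right\}.$$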

In practice, relaxing the assignment constraints 8.4 tends to work better than relaxing the linking constraints 8.5. One reason for this is that the former relaxation involves relaxing fewer constraints, which generally makes it easier to find good multipliers using subgradient optimization. Another reason is that since $y_{ij}$ will be 0 for many j that are open, there will be many constraints such that $y_{ij} < x_j$. It is often difficult to get good results when relaxing inequality constraints if many of them have slack.
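To make the alternate relaxation concrete, here is a small Python sketch of the subproblem solution when the linking constraints are relaxed. It assumes the sign convention in which the penalty term is $\sum_i \sum_j \mu_{ij}(x_j - y_{ij})$ with $\mu_{ij} \le 0$; the function name and return values are hypothetical.

```python
def solve_linking_relaxation(f, h, c, mu):
    """Solve the x- and y-problems for given multipliers mu[i][j] <= 0."""
    n_cust, n_fac = len(h), len(f)
    # x-problem: open j exactly when its adjusted fixed cost is negative.
    adj = [f[j] + sum(mu[i][j] for i in range(n_cust)) for j in range(n_fac)]
    x = [1 if adj[j] < 0 else 0 for j in range(n_fac)]
    # y-problem: each customer picks the facility with the smallest adjusted
    # cost, whether or not that facility is open.
    assign = [min(range(n_fac), key=lambda j, i=i: h[i] * c[i][j] - mu[i][j])
              for i in range(n_cust)]
    bound = sum(adj[j] for j in range(n_fac) if x[j] == 1)
    bound += sum(h[i] * c[i][assign[i]] - mu[i][assign[i]] for i in range(n_cust))
    return x, assign, bound


f, h, c = [4, 4], [1, 1], [[0, 10], [10, 0]]
print(solve_linking_relaxation(f, h, c, [[0, 0], [0, 0]]))    # weak bound
print(solve_linking_relaxation(f, h, c, [[-5, 0], [0, -5]]))  # tighter bound
```

With all multipliers at zero, no facility opens and the bound ignores fixed costs entirely. Moving $\mu_{ij}$ away from zero on the pairs where customers are actually assigned is what brings the fixed costs back into the bound.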

8.2.4 The DUALOC Algorithm

The DUALOC algorithm was proposed by Erlenkotter (1978). It is a dual‐ascent or primal–dual algorithm that constructs good feasible solutions for the dual of the LP relaxation of (UFLP) and then uses these to develop good (often optimal) integer solutions for the primal, i.e., for (UFLP) itself.

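We form the LP relaxation of (UFLP), denoted (UFLP‐P), by replacing constraints 8.6 with

$$x_j \ge 0 \quad \forall j \in J.$$

Let $v_i$ and $w_{ij}$ be the dual variables for constraints 8.4 and 8.5, respectively. In addition, for notational convenience, let $\hat{c}_{ij} = h_i c_{ij}$. Then the LP dual is given by

$$\begin{aligned}
\text{(UFLP-D)} \quad \text{maximize} \quad & \sum_{i \in I} v_i && && \text{(8.37)} \\
\text{subject to} \quad & \sum_{i \in I} w_{ij} \le f_j && \forall j \in J && \text{(8.38)} \\
& v_i - w_{ij} \le \hat{c}_{ij} && \forall i \in I,\ \forall j \in J && \text{(8.39)} \\
& w_{ij} \ge 0 && \forall i \in I,\ \forall j \in J && \text{(8.40)}
\end{aligned}$$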

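The complementary slackness conditions for (UFLP‐P) and (UFLP‐D) are given by

$$\begin{aligned}
x_j \left(f_j - \sum_{i \in I} w_{ij}\right) &= 0 && \forall j \in J && \text{(8.41)} \\
y_{ij} \left(\hat{c}_{ij} - v_i + w_{ij}\right) &= 0 && \forall i \in I,\ \forall j \in J && \text{(8.42)} \\
v_i \left(\sum_{j \in J} y_{ij} - 1\right) &= 0 && \forall i \in I && \text{(8.43)} \\
w_{ij} \left(x_j - y_{ij}\right) &= 0 && \forall i \in I,\ \forall j \in J && \text{(8.44)}
\end{aligned}$$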

Suppose we are given arbitrary values of the variables $v_i$. Then either it is feasible to set $w_{ij}$ as small as possible, i.e.,

$$w_{ij} = \max\{0,\ v_i - \hat{c}_{ij}\} \qquad \text{(8.45)}$$

for all i and j, or there are no feasible $w_{ij}$ values (for the given $v_i$ values). Moreover, since $w_{ij}$ does not appear in the objective function, any feasible $w_{ij}$ (for fixed $v_i$) is acceptable. Thus, we assume that 8.45 holds and substitute this relationship into (UFLP‐D). Constraints 8.39 and 8.40 are automatically satisfied when 8.45 holds; therefore, we obtain the following condensed dual, which uses only $v_i$ and not $w_{ij}$:

$$\begin{aligned}
\text{(UFLP-CD)} \quad \text{maximize} \quad & \sum_{i \in I} v_i && && \text{(8.46)} \\
\text{subject to} \quad & \sum_{i \in I} \max\{0,\ v_i - \hat{c}_{ij}\} \le f_j && \forall j \in J && \text{(8.47)}
\end{aligned}$$

Substituting 8.45 into the complementary slackness conditions 8.41–8.44, we obtain

$$\begin{aligned}
x_j \left(f_j - \sum_{i \in I} \max\{0,\ v_i - \hat{c}_{ij}\}\right) &= 0 && \forall j \in J && \text{(8.48)} \\
y_{ij} \left(\hat{c}_{ij} - v_i + \max\{0,\ v_i - \hat{c}_{ij}\}\right) &= 0 && \forall i \in I,\ \forall j \in J && \text{(8.49)} \\
v_i \left(\sum_{j \in J} y_{ij} - 1\right) &= 0 && \forall i \in I && \text{(8.50)} \\
\max\{0,\ v_i - \hat{c}_{ij}\} \left(x_j - y_{ij}\right) &= 0 && \forall i \in I,\ \forall j \in J && \text{(8.51)}
\end{aligned}$$

Note that (UFLP‐CD) is not an LP, since the $\max\{\cdot\}$ function is nonlinear. One could develop a customized simplex‐type algorithm to solve it (an approach like this is proposed by Schrage (1978) for the p‐median problem, among others), but instead the DUALOC approach exploits the structure of (UFLP‐CD) to find near‐optimal solutions directly.

The DUALOC algorithm consists of two main procedures. The first is a dual‐ascent procedure that generates feasible dual solutions for (UFLP‐CD) and corresponding primal integer solutions for (UFLP). The second is a dual‐adjustment procedure that attempts to reduce complementary slackness violations (thereby improving the primal or dual solutions, or both) by adjusting the dual solution iteratively and calling the dual‐ascent procedure as a subroutine. If these procedures terminate without an optimal integer solution to (UFLP), branch‐and‐bound is used to close the optimality gap.

8.2.4.1 Primal–Dual Relationships

The dual‐ascent procedure generates both a dual solution $v^+$ for (UFLP‐CD) and a set $J^+$ of facility locations such that the following properties hold:

- Primal–Dual Property 1 (PDP1): $\sum_{i \in I} \max\{0,\ v_i^+ - \hat{c}_{ij}\} = f_j$ for all $j \in J^+$
- Primal–Dual Property 2 (PDP2): For each $i \in I$, there exists at least one $j \in J^+$ such that $\hat{c}_{ij} \le v_i^+$

Such a solution can easily be converted to an integer primal solution: The set $J^+$ provides the x variables for (UFLP), and, as in the Lagrangian relaxation procedure (Section 8.2.3.4), the y variables can be set by assigning each customer to its nearest open facility. That is, an integer primal solution for (UFLP) can be obtained from $J^+$ as follows:

$$x_j^+ = \begin{cases} 1, & \text{if } j \in J^+ \\ 0, & \text{otherwise} \end{cases} \qquad \text{(8.52)}$$

$$y_{ij}^+ = \begin{cases} 1, & \text{if } j = j^+(i) \\ 0, & \text{otherwise} \end{cases} \qquad \text{(8.53)}$$

where $j^+(i) = \operatorname{argmin}_{j \in J^+} \hat{c}_{ij}$.
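The conversion from a facility set to an integer primal solution, and the check of which customer–facility pairs violate the last complementary slackness condition, can be sketched in a few lines of Python. The function names are hypothetical, and `chat[i][j]` stands for the demand‐weighted cost of serving customer i from facility j.

```python
def primal_from_open_set(open_set, f, chat):
    """Assign each customer to its nearest facility in open_set."""
    n_cust = len(chat)
    assign = [min(open_set, key=lambda j, i=i: chat[i][j]) for i in range(n_cust)]
    cost = sum(f[j] for j in open_set)
    cost += sum(chat[i][assign[i]] for i in range(n_cust))
    return assign, cost


def slackness_violations(v, open_set, assign, chat):
    """Pairs (i, j) with j open, i not assigned to j, and v[i] > chat[i][j]."""
    return [(i, j) for i in range(len(chat)) for j in open_set
            if j != assign[i] and v[i] > chat[i][j]]


chat = [[2, 5, 9], [4, 1, 7], [6, 3, 2]]
f = [3, 4, 5]
assign, cost = primal_from_open_set([0, 1], f, chat)
print(assign, cost)                                        # primal solution and its cost
print(slackness_violations([5, 4, 4], [0, 1], assign, chat))  # empty list: no violations
```

When the list of violations is empty and the two primal–dual properties hold, the primal cost equals the dual objective $\sum_i v_i$, so both solutions are optimal.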

The primal–dual solution $(x^+, y^+, v^+)$ satisfies three of the four complementary slackness conditions: 8.48 is satisfied because of PDP1, and 8.50 is satisfied because each i is assigned to exactly one j in 8.53. To see why 8.49 is satisfied, suppose $y_{ij}^+ = 1$, i.e., $j = j^+(i)$. By PDP2, $\hat{c}_{ij'} \le v_i^+$ for some $j' \in J^+$ and $\hat{c}_{ij} \le \hat{c}_{ij'}$ by the definition of $j^+(i)$, so

$$\hat{c}_{ij} - v_i^+ + \max\{0,\ v_i^+ - \hat{c}_{ij}\} = \hat{c}_{ij} - v_i^+ + v_i^+ - \hat{c}_{ij} = 0.$$

Thus, $(x^+, y^+)$ and $v^+$ are optimal for (UFLP‐P) and (UFLP‐CD), respectively, if and only if 8.51 holds. Moreover, since $(x^+, y^+)$ is integer, if it is optimal for (UFLP‐P), then it is also optimal for (UFLP). (It may seem strange to hope that the integer solution $(x^+, y^+)$ is optimal for the LP relaxation (UFLP‐P). But remember that (UFLP‐P) often has all‐integer solutions “for free” (see page 16), and is usually very tight when it is not all‐integer, so that good integer solutions to (UFLP‐P) are likely to be good also for (UFLP).)

Condition 8.51 may be violated when $v_i^+ > \hat{c}_{ij}$ for some $j \in J^+ \setminus \{j^+(i)\}$, since in that case $\max\{0,\ v_i^+ - \hat{c}_{ij}\} > 0$ but $x_j^+ - y_{ij}^+ = 1$. This suggests that complementary slackness violations can be reduced by focusing on the $\max\{0,\ v_i^+ - \hat{c}_{ij}\}$ terms for $j \in J^+ \setminus \{j^+(i)\}$, and indeed those terms directly affect the duality gap, as the next lemma attests.

The dual‐ascent procedure (Section 8.2.4.2) generates $v^+$ and $J^+$. The dual‐adjustment procedure (Section 8.2.4.3) then attempts to improve the solutions by reducing the $\max\{0,\ v_i^+ - \hat{c}_{ij}\}$ terms for $j \in J^+ \setminus \{j^+(i)\}$.

8.2.4.2 The Dual‐Ascent Procedure

The dual‐ascent procedure constructs a dual solution $v^+$ and a facility set $J^+$ such that properties PDP1 and PDP2 hold for $v^+$ and $J^+$. The procedure begins by constructing an easy feasible solution in which the $v_i$ variables are small (in order to ensure feasibility with respect to 8.47) and then increasing the $v_i$ one by one (in order to improve the objective 8.46).

For each $i \in I$, sort the costs $\hat{c}_{ij}$ in nondecreasing order and let $\hat{c}_i^k$ be the kth of these costs, for $k = 1, \ldots, |J|$. Define $\hat{c}_i^{|J|+1} = \infty$. Then an initial solution can be generated by setting $v_i = \hat{c}_i^1$ for all $i \in I$; this solution is feasible for (UFLP‐CD). (Why?) Actually, any initial feasible solution will work, but this one is easy to obtain.

The dual‐ascent procedure is given in Algorithm 8.4. In line 1, we initialize $v_i$ to $\hat{c}_i^1$ and initialize the index $k_i$ to 2. Throughout the algorithm, $k_i$ equals the smallest k such that $v_i < \hat{c}_i^k$; as the $v_i$ increase in the algorithm, so do the $k_i$. In line 2, $s_j$ represents the slack in constraint 8.47 for facility j; since $v_i$ equals the smallest $\hat{c}_{ij}$, $s_j = f_j$. The algorithm loops through the customers; for each customer i, we would like to set $v_i$ to the next larger value of $\hat{c}_{ij}$, i.e., to $\hat{c}_i^{k_i}$. However, increasing $v_i$ increases the left‐hand side of 8.47 for all j such that $v_i \ge \hat{c}_{ij}$. (These j are the facilities whose costs are $\hat{c}_i^1, \ldots, \hat{c}_i^{k_i - 1}$.) Therefore, line 6 calculates the largest allowable increase in $v_i$ without violating 8.47 for any j. Note that we only consider j such that $v_i \ge \hat{c}_{ij}$; for the other j, the left‐hand sides of 8.47 will not increase because we will not increase $v_i$ past $\hat{c}_i^{k_i}$, as enforced by lines 7–8. Lines 9–10 update the IMPROVED flag and the index $k_i$. (The IMPROVED flag is only set to TRUE if we were able to increase $v_i$ all the way to $\hat{c}_i^{k_i}$ for some i, not for smaller increases.) Lines 12–14 adjust the slack for all facilities whose left‐hand sides of 8.47 will change, and line 15 performs the update to $v_i$. The process repeats until $v_i$ cannot be increased to $\hat{c}_i^{k_i}$ for any customer. Line 18 sets $v^+$ equal to the final value of v and builds the set $J^+$, and the algorithm returns both these values.
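Because Algorithm 8.4 itself is not reproduced here, the following Python sketch is reconstructed from the line‐by‐line description above and should be read as an approximation of it. The names are hypothetical, indices are 0‐based (so the index that starts at 2 in the text starts at 1 here), and the facility set is taken to be every j whose slack is zero.

```python
import math


def dual_ascent(f, chat):
    """Return (v, open_set, slack) for the condensed dual, starting from
    v[i] = smallest cost for customer i."""
    n_cust, n_fac = len(chat), len(f)
    # Sorted costs per customer, padded with infinity as the final level.
    levels = [sorted(row) + [math.inf] for row in chat]
    v = [lv[0] for lv in levels]
    k = [1] * n_cust            # index of the next cost level for each customer
    slack = list(f)             # all max{0, v_i - chat_ij} terms start at 0
    improved = True
    while improved:
        improved = False
        for i in range(n_cust):
            # Facilities whose constraint tightens when v[i] increases.
            active = [j for j in range(n_fac) if v[i] >= chat[i][j]]
            delta = min(slack[j] for j in active)
            gap_to_next = levels[i][k[i]] - v[i]
            if delta > gap_to_next:      # do not jump past the next cost level
                delta = gap_to_next
                improved = True
                k[i] += 1
            for j in active:
                slack[j] -= delta
            v[i] += delta
    open_set = [j for j in range(n_fac) if abs(slack[j]) <= 1e-9]
    return v, open_set, slack


chat = [[2, 5, 9], [4, 1, 7], [6, 3, 2]]
f = [3, 4, 5]
v, open_set, slack = dual_ascent(f, chat)
print(v, open_set, slack)
```

On this instance the dual objective is 13, and assigning each customer to its nearest facility in the returned set also costs 13, so no adjustment or branching is needed. The loop terminates because the flag is raised only when some index advances, and each index can advance at most $|J|$ times.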

If there is a strict subset of $J^+$ that still satisfies PDP1 and PDP2, it is better to use that subset. To see why, suppose there is a facility $j'$ with $s_{j'} = 0$ that is not included in $J^+$. PDP1 does not prohibit this situation; it prohibits the converse. Would it be better to add $j'$ to $J^+$? Lemma 8.4 suggests the answer is no: For each $i \in I$, either $\hat{c}_{ij'} < \hat{c}_{ij^+(i)}$ (so $j'$ becomes the new closest facility to i), in which case the duality gap increases by $v_i^+ - \hat{c}_{ij^+(i)}$; or $\hat{c}_{ij'} \ge \hat{c}_{ij^+(i)}$, in which case the duality gap increases by $\max\{0,\ v_i^+ - \hat{c}_{ij'}\}$. Therefore, we want $J^+$ to be minimal in the sense that no facility can be removed from it without violating PDP2. Of course, finding a minimal $J^+$ is itself a combinatorial problem. Erlenkotter (1978) suggests a simple heuristic for finding such a set, but to keep things simple, we'll just assume that $J^+$ contains all j for which $s_j = 0$.

You might be wondering why we limit the increase $\Delta$ to $\hat{c}_i^{k_i} - v_i$ in line 8, since we want $v_i$ to be as large as possible, and we can leave $\Delta$ at the value set in line 6 while maintaining feasibility. Recall that the complementary slackness condition 8.51 is violated when $v_i > \hat{c}_{ij}$ and j is open but i is not assigned to j. There tend to be fewer of these violations when we spread the $v_i$ among the customers i rather than having a few customers with very large $v_i$ values.

Once we have $v^+$ and $J^+$, we can generate an integer primal solution $(x^+, y^+)$ using 8.52 and 8.53. If $(x^+, y^+, v^+)$ satisfies 8.51 for all i and j, then the complementary slackness conditions are all satisfied and $(x^+, y^+)$ is optimal. If, instead, 8.51 is violated for some i and j, then we attempt to reduce these violations using the dual‐adjustment procedure, described in the next section.

8.2.4.3 The Dual‐Adjustment Procedure

The dual‐adjustment procedure identifies customers and facilities that violate the complementary slackness condition 8.51 and reduces these violations by decreasing the dual variable $v_i$ for some $i \in I$. Doing so frees up slack on some of the binding constraints 8.47, which allows us to increase $v_{i'}$ for other $i' \in I$. Each unit of decrease in $v_i$ allows one unit of increase in $v_{i'}$ (since the coefficients in 8.47 equal 1). The dual objective value will increase if more than one $v_{i'}$ can be increased in this way and will stay the same if only one can be increased. In either case, we may obtain a new (potentially better) primal solution since the set $J^+$ might change.

We face three questions: (1) Which dual variables $v_i$ are candidates for reduction? (2) Once we reduce $v_i$, adding slack to some of the constraints, which $v_{i'}$ are candidates for increase? (3) How much should we increase each of the candidate $v_{i'}$? We'll answer each of these questions in turn.

Which $v_i$ to decrease? A customer i is a candidate for reduction in $v_i$ if it violates the complementary slackness condition 8.51. The next lemma characterizes those customers in terms of v and $J^+$.

Therefore, a customer i is a candidate for reduction in $v_i$ if $v_i > \hat{c}_{ij}$ for at least two $j \in J^+$. The algorithm reduces it only as far as the next‐smaller $\hat{c}_{ij}$; that is, it reduces it to $\hat{c}_i^-$, where $\hat{c}_i^-$ is the largest $\hat{c}_{ij}$ (among all $j \in J$) that is strictly less than $v_i$:

$$\hat{c}_i^- = \max_{j \in J} \left\{\hat{c}_{ij} : \hat{c}_{ij} < v_i\right\}.$$

Which $v_{i'}$ to increase? Suppose $v_i > \hat{c}_{ij}$ for at least two $j \in J^+$ and so we reduce $v_i$. This adds slack to 8.47 for all $j \in J$ such that $v_i > \hat{c}_{ij}$. Lemma 8.2 implies that two of these constraints correspond to $j^+(i)$ and $j'(i)$, where $j'(i)$ is the second‐closest facility in $J^+$ to i. (Recall that $j^+(i)$ is the closest.) Suppose there is some $i' \ne i$ for which there is only one $j \in J^+$ such that $v_{i'} \ge \hat{c}_{i'j}$. We'll say that $i'$ is solely constrained by j in this case, because j is the only facility preventing an increase in $v_{i'}$. If $i'$ is solely constrained by $j^+(i)$ or $j'(i)$, then a decrease in $v_i$ can be matched by an increase in $v_{i'}$. The algorithm therefore focuses on such $i'$, attempting to increase their $v_{i'}$ values first. It also uses the “solely constrained” test to identify candidates for reduction in $v_i$: If there are no $i'$ that are solely constrained by $j^+(i)$ or $j'(i)$, the algorithm does not consider reducing $v_i$, even if i is a candidate for a decrease in $v_i$ as described above.
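The two tests described so far (which customers are candidates for reduction, and which customers are solely constrained by a given facility) are simple to state in code. The sketch below is an illustration with hypothetical function names; it assumes that "solely constrained by j" means that j is the only facility in the open set whose cost for that customer does not exceed the customer's dual value.

```python
def reduction_candidates(v, chat, open_set):
    """Customers whose dual value strictly exceeds the cost of
    at least two open facilities."""
    return [i for i in range(len(v))
            if sum(1 for j in open_set if v[i] > chat[i][j]) >= 2]


def solely_constrained(v, chat, open_set):
    """Map each open facility j to the customers constrained by j alone."""
    result = {j: [] for j in open_set}
    for i in range(len(v)):
        tight = [j for j in open_set if v[i] >= chat[i][j]]
        if len(tight) == 1:
            result[tight[0]].append(i)
    return result


chat = [[2, 5, 9], [4, 1, 7], [6, 3, 2]]
print(reduction_candidates([5, 4, 4], chat, [0, 1]))   # no candidates
print(solely_constrained([5, 4, 4], chat, [0, 1]))     # customer 2 depends only on facility 1
```

For this dual solution there are no candidates for reduction, which matches the fact that the corresponding primal and dual objective values already agree.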

How much to increase $v_{i'}$? Deciding which $v_{i'}$ to increase, and by how much, is precisely the intent of the dual‐ascent procedure! Therefore, the dual‐adjustment procedure uses the dual‐ascent procedure as a subroutine: first with the set of customers restricted to the candidates for increases in $v_{i'}$, then with i added, and then with the full customer set I.

The dual‐adjustment procedure is described in pseudocode in Algorithm 8.5. The algorithm loops through the customers to identify candidates for reducing $v_i$. A customer is a candidate if (1) it violates the complementary slackness condition 8.51 (this check occurs in line 3, making use of Lemma 8.2), and (2) there are at least two customers that are solely constrained by either $j^+(i)$ or $j'(i)$ (this check occurs in lines 4–5). Assuming customer i passes both checks, lines 6–8 increase the slack for all j for which $v_i > \hat{c}_{ij}$, and line 9 reduces $v_i$ to the next smallest $\hat{c}_{ij}$ value.

Next, the algorithm calls the dual‐ascent procedure to decide how to use up the newly created slack. Line 10 restricts I to the customers that are solely constrained by $j^+(i)$ or $j'(i)$; line 11 adds i itself to this set; and line 12 runs the dual‐ascent algorithm on the entire set I in order to ensure a valid solution $(v^+, J^+)$. If the dual objective $\sum_{i \in I} v_i$ has increased, the adjustment procedure repeats (for the same i), and this continues until there is no improvement or $v_i$ reaches its original value. At that point, we move on to the next customer. The algorithm terminates when all customers have been considered.

If the dual‐ascent and dual‐adjustment procedures result in primal and dual solutions $(x^+, y^+)$ and $v^+$ whose objective values are equal, then $v^+$ is optimal for the dual LP and $(x^+, y^+)$ is optimal for the primal LP and IP. If the objectives are unequal, then a straightforward implementation of branch‐and‐bound can be used to close the optimality gap. Erlenkotter (1978) reports excellent computational results for this method on several test problems, typically with little or no branching required.

Körkel (1989) proposes computational improvements that speed the DUALOC algorithm up considerably. DUALOC has been adapted to solve many other problems, such as the p‐median problem discussed in Section 8.3.2 (Galvão, 1980; Nauss and Markland, 1981), the stochastic UFLP discussed in Section 8.6.2 (Mirchandani et al., 1985), the Steiner tree problem (Wong, 1984), and general supply chain network design problems (Balakrishnan et al., 1989). Goemans and Williamson (1997) discuss DUALOC and other primal–dual algorithms.

8.2.5 Heuristics for the UFLP

Heuristics for combinatorial problems such as the UFLP fall into two categories: construction heuristics and improvement heuristics. Construction heuristics build a feasible solution from scratch, whereas improvement heuristics start with a feasible solution and attempt to improve it.

The most basic construction heuristics for the UFLP are greedy heuristics such as the “greedy‐add” procedure (Kuehn and Hamburger, 1963): Start with all facilities closed and open the single facility that can serve all customers with the smallest objective function value; then at each iteration open the facility that gives the largest decrease in the objective, stopping when no facility can be opened that will decrease the objective. (See Algorithm 8.6, in which the trial solution and its objective value are those obtained when facility k is (temporarily) opened.)
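The greedy‐add rule described above can be sketched compactly in Python. This is an illustration of the verbal description rather than a transcription of Algorithm 8.6; the function name and the tie‐breaking rule (lowest index first) are our own assumptions.

```python
def greedy_add(f, h, c):
    """Open facilities one at a time while the total cost strictly decreases."""
    n_cust, n_fac = len(h), len(f)

    def total_cost(open_set):
        # Fixed costs plus demand-weighted cost to the nearest open facility.
        fixed = sum(f[j] for j in open_set)
        transport = sum(h[i] * min(c[i][j] for j in open_set)
                        for i in range(n_cust))
        return fixed + transport

    open_set, best = [], float("inf")
    while len(open_set) < n_fac:
        closed = [k for k in range(n_fac) if k not in open_set]
        # Temporarily open each closed facility k and keep the best trial.
        k_best = min(closed, key=lambda k: total_cost(open_set + [k]))
        trial = total_cost(open_set + [k_best])
        if trial >= best:       # no facility decreases the objective
            break
        open_set.append(k_best)
        best = trial
    return open_set, best


print(greedy_add([4, 4], [1, 1], [[0, 10], [10, 0]]))
print(greedy_add([3, 4, 5], [1, 1, 1], [[2, 5, 9], [4, 1, 7], [6, 3, 2]]))
```

Each iteration evaluates every closed facility, so the sketch does on the order of $|J|^2 |I|$ work per iteration as written; caching each customer's current nearest‐facility cost would reduce this.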

By assuming that the next facility to open will be the last, the greedy‐add heuristic can easily fall into a trap. For example, if it is optimal to open two facilities, the greedy‐add heuristic may first open a facility in the center of the geographical region, which then must stay open for the second iteration, when in fact it is optimal to open one facility on each side of the region. A reverse approach is called the “greedy‐drop” heuristic, which starts with all facilities open and sequentially closes the facility that decreases the objective the most. It has similar advantages and disadvantages as greedy‐add. One important improvement heuristic is the swap or exchange heuristic (Teitz and Bart, 1968), which attempts to find a facility to open and a facility to close such that the new solution has a smaller objective function value. For more on the swap heuristic, see Section 8.3.2.3. Other procedures attempt to find closed facilities that can be opened to reduce the objective function, or open facilities that can be closed. The heuristics mentioned here have proven to perform well in practice, which means they return good solutions and execute quickly. Metaheuristics have also been widely applied to the UFLP. These include genetic algorithms (Jaramillo et al., 2002), tabu search (Al‐Sultan and Al‐Fawzan, 1999), and simulated annealing (Arostegui et al., 2006).

8.3 Other Minisum Models

The UFLP is an example of a minisum location problem. Minisum models are so called because their objective is to minimize a sum of the costs or distances between customers and their assigned facilities (as well as possibly other terms, such as fixed costs). In contrast, covering location problems are more concerned with the maximum distance, with the goal of ensuring that most or all customers are located close to their assigned facilities.

At the risk of over‐generalizing, it can be said that minisum models are more commonly applied in the private sector, in which profits and costs are the dominant concerns, and covering models are most commonly applied in the public sector, in which service, fairness, and equity are more important. For further discussion of this dichotomy, see ReVelle et al. (1970).

In this section, we discuss two other minisum models—the capacitated fixed‐charge location problem and the p‐median problem. In Section 8.4, we discuss covering models.

8.3.1 The Capacitated Fixed‐Charge Location Problem (CFLP)

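In the UFLP, we assumed that there are no capacity restrictions on the facilities. Obviously, this is an unrealistic assumption in many practical settings. The UFLP can be easily modified to account for capacity restrictions; the resulting problem (not surprisingly) is called the capacitated fixed‐charge location problem, or CFLP. Suppose $v_j$ is the maximum demand that can be served by facility j per year. The CFLP can be formulated as follows:

$$\begin{aligned}
\text{(CFLP)} \quad \text{minimize} \quad & \sum_{j \in J} f_j x_j + \sum_{i \in I} \sum_{j \in J} h_i c_{ij} y_{ij} && && \text{(8.54)} \\
\text{subject to} \quad & \sum_{j \in J} y_{ij} = 1 && \forall i \in I && \text{(8.55)} \\
& y_{ij} \le x_j && \forall i \in I,\ \forall j \in J && \text{(8.56)} \\
& \sum_{i \in I} h_i y_{ij} \le v_j x_j && \forall j \in J && \text{(8.57)} \\
& x_j \in \{0, 1\} && \forall j \in J && \text{(8.58)} \\
& y_{ij} \ge 0 && \forall i \in I,\ \forall j \in J && \text{(8.59)}
\end{aligned}$$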

This IP is identical to (UFLP) except for the new capacity constraints 8.57. Sometimes the following constraint is added, which says that the total capacity of the opened facilities is sufficient to meet the total demand:

$$\sum_{j \in J} v_j x_j \ge \sum_{i \in I} h_i.$$

This constraint is redundant in the IP formulation but tightens some relaxations.

Many approaches have been proposed to solve this problem. We briefly outline a method very similar to the method discussed for the UFLP. We relax the assignment constraints 8.55 to obtain the following Lagrangian subproblem:

$$
\begin{align*}
\text{minimize} \quad & \sum_{j \in J} f_j x_j + \sum_{i \in I} \sum_{j \in J} (h_i c_{ij} - \lambda_i) y_{ij} + \sum_{i \in I} \lambda_i \\
\text{subject to} \quad & y_{ij} \le x_j && \forall i \in I,\ \forall j \in J \\
& \sum_{i \in I} h_i y_{ij} \le v_j && \forall j \in J \\
& x_j \in \{0,1\} && \forall j \in J \\
& y_{ij} \ge 0 && \forall i \in I,\ \forall j \in J
\end{align*}
$$

As in the UFLP, this subproblem separates by j, but now computing the benefit $\beta_j$ is a little more complicated because of the capacity constraint. In particular, for each $j \in J$, we need to solve a problem of the form

$$
\begin{align*}
\beta_j = \text{minimize} \quad & \sum_{i \in I} a_i y_i \\
\text{subject to} \quad & \sum_{i \in I} b_i y_i \le b \\
& 0 \le y_i \le 1 && \forall i \in I
\end{align*}
$$

where $a_i = h_i c_{ij} - \lambda_i$, $b_i = h_i$, and $b = v_j$. This is a continuous knapsack problem, which can be solved efficiently by sorting the i's so that

$$
\frac{a_1}{b_1} \le \frac{a_2}{b_2} \le \cdots \le \frac{a_{|I|}}{b_{|I|}}.
$$

(This sort order favors large negative values of $a_i$ and small positive values of $b_i$.) We then set $y_i = 1$ for $i = 1, \ldots, r$, where r is the largest number such that $a_r < 0$ and

$$
\sum_{i=1}^{r} b_i \le b.
$$

If $r < |I|$ and $a_{r+1} < 0$, we set $y_{r+1} = \left(b - \sum_{i=1}^{r} b_i\right) / b_{r+1}$; all remaining $y_i$ are set to 0. Other aspects of the Lagrangian procedure (finding upper bounds, subgradient optimization, branch‐and‐bound) are similar to those discussed in Section 8.2.3, although the upper‐bounding procedure must take into account the capacity constraints.
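The sorting procedure for the continuous knapsack subproblem can be sketched in a few lines of Python. This is an illustrative sketch under stated assumptions rather than a reference implementation: the list `a` holds the objective coefficients (the reduced costs of assigning each customer to the facility in question), `b` holds the strictly positive capacity usage of each customer (its demand), and `cap` is the facility's capacity. These names are hypothetical.

```python
def continuous_knapsack(a, b, cap):
    """Minimize sum(a[i] * y[i]) subject to sum(b[i] * y[i]) <= cap and
    0 <= y[i] <= 1, assuming every b[i] > 0 and cap >= 0.
    Returns (optimal value, y)."""
    y = [0.0] * len(a)
    remaining = cap
    value = 0.0
    # Visit items in order of cost per unit of capacity, most negative first.
    for i in sorted(range(len(a)), key=lambda i: a[i] / b[i]):
        if a[i] >= 0 or remaining <= 0:
            break  # no remaining item lowers the objective, or capacity is used up
        y[i] = min(1.0, remaining / b[i])  # take the item fully, or fractionally
        value += a[i] * y[i]
        remaining -= b[i] * y[i]
    return value, y
```

At most one variable ends up fractional: the first item that no longer fits in full. The sort dominates the running time, so the subproblem for one facility takes O(n log n) time for n customers.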

Several other relaxations for the CFLP have been studied, often using slightly different formulations from (CFLP). Davis and Ray (1969) solve the LP relaxation of the CFLP in a branch‐and‐bound algorithm, as do Akinc and Khumawala (1977). Nauss (1978) and Christofides and Beasley (1983) use Lagrangian relaxation, relaxing constraints 8.55, similar to the method outlined above. Klincewicz and Luss (1986) relax the capacity constraints 8.57 to obtain a UFLP. Van Roy (1986) also relaxes 8.57 but rather than using standard Lagrangian relaxation, he uses cross‐decomposition, a hybrid of Lagrangian relaxation and Benders decomposition. Barcelo et al. (1991) use variable splitting (Guignard and Kim, 1987), also known as Lagrangian decomposition, a method in which some of the variables are doubled, the new variables are forced equal to the original ones via a constraint, and that constraint is then relaxed using Lagrangian relaxation. Also see Geoffrion and McBride (1978) and Cornuejols et al. (1991) for a discussion of the relative tightness of the theoretical bounds from the various relaxations of the CFLP.

Generally, the optimal solution to (CFLP) will not have $y_{ij} \in \{0,1\}$ as in (UFLP). (Why?) This means that some customers will receive product from more than one DC. Sometimes it is important to prohibit this from happening by requiring $y_{ij} \in \{0,1\}$; this is called a single‐sourcing constraint. The CFLP with single‐sourcing constraints is harder to solve because the Lagrangian subproblem for each facility becomes a 0–1 knapsack problem, which is NP‐hard. On the other hand, good algorithms exist for the knapsack problem, and since the knapsack problem does not have the integrality property, the Lagrangian bound is at least as tight as the LP bound and may be strictly tighter. This highlights the important trade‐off between the quality of the Lagrangian bound and the ease with which the subproblem can be solved.
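The effect of the single‐sourcing requirement on the subproblem can be seen on a tiny instance. The sketch below solves the 0–1 version by brute force, which is exponential and meant only as an illustration; practical codes would use dynamic programming or a specialized knapsack algorithm. The names `a`, `b`, and `cap` (objective coefficients, capacity usages, and capacity) are assumptions made for the example.

```python
from itertools import product


def knapsack_01_min(a, b, cap):
    """Brute-force 0-1 version: minimize sum(a[i] * y[i]) with y[i] in {0, 1}
    and sum(b[i] * y[i]) <= cap. Exponential time; for illustration only."""
    best_value, best_y = 0, tuple([0] * len(a))  # choosing nothing is always feasible
    for y in product((0, 1), repeat=len(a)):
        weight = sum(bi * yi for bi, yi in zip(b, y))
        value = sum(ai * yi for ai, yi in zip(a, y))
        if weight <= cap and value < best_value:
            best_value, best_y = value, y
    return best_value, best_y
```

For `a = [-6, -4, 3, -1]`, `b = [3, 4, 1, 2]`, and `cap = 5`, the 0–1 optimum is −7 (items 0 and 3), whereas the continuous version of the same problem attains −8 by taking item 0 in full and half of item 1. Since the subproblem is a minimization, the larger 0–1 value translates into a tighter lower bound.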

A closely related problem is the capacitated concentrator location problem (CCLP), in which the demands $h_i$ are ignored in the objective function (or, equivalently, the transportation costs $c_{ij}$ are divided by $h_i$) but not in the capacity constraints. The CCLP features prominently in the location‐based heuristic for the vehicle routing problem (Section 11.3.3). See Mirzaian (1985), Klincewicz and Luss (1986), and Gourdin et al. (2002).

8.3.2 The p‐Median Problem (pMP)

In the UFLP, the fixed costs in the objective function prevent the model from opening too many facilities. Another way to accomplish the same thing is simply to add a constraint that explicitly limits the number of open facilities. This is the approach taken by the p‐median problem (pMP), which was introduced by Hakimi (1965).

Hakimi focused on problems on networks, in which the distances among nodes are defined not by a geographical measure like Euclidean or great circle distances, but rather by shortest‐path distances along the edges of the network. (See Section 8.2.2.) His main result, which has come to be known as the Hakimi property, is that there is always an optimal solution consisting of nodes of the network rather than points along the edges. In particular, suppose the elements of $I$ are the nodes of the network. For any set X consisting of p points on the network (either at the nodes or along the edges) and for any $i \in I$, define $c(i, X)$ to be the shortest‐path distance from i to the nearest point in X. (This is a generalization of the notation $c_{ij}$ to consider distances to points that are not nodes.) Hakimi proved the following: for every set X of p points on the network, there exists a set $X^*$ of p nodes such that

$$
\sum_{i \in I} h_i \, c(i, X^*) \le \sum_{i \in I} h_i \, c(i, X).
$$

In other words, there exists an optimal set that consists only of nodes. This allows us to treat the problem as a discrete one consisting of a finite number of feasible solutions rather than a continuous one with an infinite number. Hakimi solved the pMP using complete enumeration of all subsets of p nodes, but of course this approach only works for small p and $|I|$. Many more efficient algorithms have been proposed since Hakimi's original work, several of which we discuss below.
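Complete enumeration is easy to express in code, which also makes its limitations plain. The sketch below is a hypothetical illustration, not Hakimi's original procedure: it assumes a demand list `h` and a distance matrix `c`, where `c[i][j]` is the shortest‐path distance from customer node i to candidate node j, and it simply evaluates every subset of p candidate nodes.

```python
from itertools import combinations


def p_median_enumerate(h, c, p):
    """Try every set of p candidate sites and return (best cost, best set).
    c[i][j] is the distance from customer i to site j; h[i] is the demand."""
    sites = range(len(c[0]))
    best = None
    for subset in combinations(sites, p):
        # Each customer is served by its nearest site in the subset.
        cost = sum(h[i] * min(c[i][j] for j in subset) for i in range(len(h)))
        if best is None or cost < best[0]:
            best = (cost, subset)
    return best
```

The loop visits every one of the "n choose p" subsets, so the work grows very quickly with the number of nodes n and with p, which is why this approach is limited to small instances.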

8.3.2.1 Formulation

The pMP uses the same notation as the UFLP (Section 8.2.2), plus the following:

| Parameter | Definition |
| --- | --- |
| p | number of facilities to locate |

The problem is formulated as follows:

$$
\begin{align}
\text{(pMP)} \quad \text{minimize} \quad & \sum_{i \in I} \sum_{j \in J} h_i c_{ij} y_{ij} \tag{8.69} \\
\text{subject to} \quad & \sum_{j \in J} y_{ij} = 1 && \forall i \in I \tag{8.70} \\
& y_{ij} \le x_j && \forall i \in I,\ \forall j \in J \tag{8.71} \\
& \sum_{j \in J} x_j = p \tag{8.72} \\
& x_j \in \{0,1\} && \forall j \in J \tag{8.73} \\
& y_{ij} \ge 0 && \forall i \in I,\ \forall j \in J \tag{8.74}
\end{align}
$$

The objective function 8.69 computes the transportation cost. (Often $c_{ij}$ is defined as distance, rather than per‐unit cost, in which case the objective function is interpreted as representing the demand‐weighted distance.) Constraint 8.72 requires exactly p facilities to be opened. This constraint could be written with a $\le$ instead of $=$, but since opening an additional facility never increases the objective function, there is always an optimal solution under such a constraint that opens exactly p facilities; therefore, the two forms of the constraint are equivalent. The other constraints function the same as the corresponding constraints in (UFLP).

8.3.2.2 Exact Algorithms for the pMP

The pMP is NP‐hard for arbitrary p but is polynomially solvable if p is fixed (Garey and Johnson, 1979). This means that, for any fixed p, there exist algorithms whose worst‐case running time is a polynomial function of the problem size ($|I|$, $|J|$), although the running time is not polynomial in p; for example, enumerating all subsets of p facilities requires evaluating $\binom{|J|}{p} = O(|J|^p)$ candidate solutions. The pMP can also be solved in polynomial time for arbitrary p when the underlying network is a tree (Kariv and Hakimi, 1979b), i.e., when the distance matrix is derived from shortest‐path distances on a tree network. Despite its NP‐hardness, however, the pMP, like the UFLP, can be solved relatively efficiently, partly due to the fact that its LP relaxation is typically quite tight (ReVelle and Swain, 1970).

The Lagrangian relaxation procedure discussed in Section 8.2.3 can be easily modified for the pMP (Cornuejols et al., 1977). Relaxing constraints 8.70, we obtain the following Lagrangian subproblem:

$$
\begin{align*}
\text{minimize} \quad & \sum_{i \in I} \sum_{j \in J} (h_i c_{ij} - \lambda_i) y_{ij} + \sum_{i \in I} \lambda_i \\
\text{subject to} \quad & y_{ij} \le x_j && \forall i \in I,\ \forall j \in J \\
& \sum_{j \in J} x_j = p \\
& x_j \in \{0,1\} && \forall j \in J \\
& y_{ij} \ge 0 && \forall i \in I,\ \forall j \in J
\end{align*}
$$

The benefit of opening facility j is given by 8.13, exactly as in the UFLP. Then, we set $x_j = 1$ for the p facilities with the smallest $\beta_j$ (negative or positive). (Recall that for the UFLP, we set $x_j = 1$ if and only if $\beta_j + f_j < 0$.) Finally, we set $y_{ij} = 1$ if $x_j = 1$ and $h_i c_{ij} - \lambda_i < 0$. The optimal objective function value of the subproblem,

$$
\sum_{j \in J} \beta_j x_j + \sum_{i \in I} \lambda_i,
$$

provides a lower bound on the optimal objective function value of (pMP). Feasible solutions can be found by assigning each customer to the nearest facility that is open in the solution to the subproblem, and the corresponding objective function value provides an upper bound. The Lagrange multipliers can be found using subgradient optimization (Section 8.2.3.5). Branch‐and‐bound can then be used to close any remaining optimality gap.
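One evaluation of the Lagrangian subproblem, together with the associated upper bound, might look like the following Python sketch. It assumes that the benefit of facility j takes the usual form, namely the sum over customers of min{0, h_i c_ij − λ_i}, and that the multipliers are supplied in a list `lam`; the subgradient updates that would adjust `lam` between iterations are omitted. All function and variable names are hypothetical.

```python
def pmp_lagrangian_bounds(h, c, p, lam):
    """For fixed multipliers lam, return (lower bound, upper bound, open sites)."""
    n_cust, n_sites = len(h), len(c[0])
    # Benefit of opening j: sum of the negative reduced costs h_i * c_ij - lam_i.
    beta = [sum(min(0.0, h[i] * c[i][j] - lam[i]) for i in range(n_cust))
            for j in range(n_sites)]
    # Open the p sites with the smallest benefit values (negative or positive).
    open_sites = sorted(range(n_sites), key=lambda j: beta[j])[:p]
    lower = sum(beta[j] for j in open_sites) + sum(lam)
    # Feasible solution: assign each customer to its nearest open site.
    upper = sum(h[i] * min(c[i][j] for j in open_sites) for i in range(n_cust))
    return lower, upper, sorted(open_sites)
```

The lower bound is valid for any choice of multipliers, while the upper bound is the cost of an actual feasible solution; the gap between them indicates how much work remains for subgradient optimization or branch‐and‐bound.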

Other exact methods include LP relaxation/branch‐and‐bound (ReVelle and Swain, 1970), decomposition methods (Garfinkel et al., 1974), row and column reduction (Rosing et al., 1979), and adaptations by Galvão (1980) and Nauss and Markland (1981) of the DUALOC algorithm (Erlenkotter, 1978) discussed in Section 8.2.4. Reese (2006) provides a thorough survey of the literature on the pMP, including both exact and heuristic algorithms.

8.3.2.3 Heuristics for the pMP

Most heuristics for the UFLP (Section 8.2.5) are easily adapted for the pMP. For instance, we can apply the greedy‐add and greedy‐drop heuristics, except that the procedure terminates when there are exactly p facilities open rather than when no objective‐reducing adds or drops can be found.
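As an illustration of this adaptation, here is a minimal Python sketch of greedy‐add for the pMP. It assumes a demand list `h`, a distance matrix `c` with `c[i][j]` the distance from customer i to site j, and 1 ≤ p ≤ number of sites; the function name is hypothetical, and ties are broken in favor of the lowest‐numbered site.

```python
def greedy_add_p_median(h, c, p):
    """Open one site at a time, always the one that yields the lowest
    demand-weighted distance, until exactly p sites are open."""
    n_cust, n_sites = len(h), len(c[0])
    open_sites = []

    def cost(sites):
        # Each customer is served by its nearest site in the given list.
        return sum(h[i] * min(c[i][j] for j in sites) for i in range(n_cust))

    while len(open_sites) < p:
        closed = [j for j in range(n_sites) if j not in open_sites]
        best_j = min(closed, key=lambda j: cost(open_sites + [j]))
        open_sites.append(best_j)
    return sorted(open_sites), cost(open_sites)
```

On a path of five equally weighted nodes with unit edge lengths and p = 2, this sketch first opens the middle node and ends with a cost of 4, whereas the optimal cost is 3. This is the same trap that greedy‐add can fall into for the UFLP.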

One of the earliest and most widely known heuristics for the pMP is the swap or exchange heuristic introduced by Teitz and Bart (1968). The swap heuristic attempts to find a pair j, k of facilities with j open and k closed such that if j were closed and k opened (and the customers reassigned as needed), the objective function value would decrease. If such a pair can be found, the swap is made and the procedure continues. Pseudocode for the swap heuristic is given in Algorithm 8.7. It takes as inputs the current solution variable x and its objective value z. The pseudocode also maintains a temporary solution and its cost.
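For readers who prefer running code, the following Python sketch follows the same idea as the swap heuristic. It is not a transcription of Algorithm 8.7: the data layout (`h` for demands, `c[i][j]` for distances) and the names `x_new` and `z_new` for the temporary solution and its cost are assumptions, and the sketch accepts the first improving swap it finds.

```python
def swap_heuristic(h, c, open_sites):
    """Exchange heuristic in the spirit of Teitz and Bart: repeatedly replace
    an open site j with a closed site k whenever that lowers the cost."""
    n_cust, n_sites = len(h), len(c[0])

    def cost(sites):
        return sum(h[i] * min(c[i][j] for j in sites) for i in range(n_cust))

    x = set(open_sites)  # current solution: the set of open sites
    z = cost(x)          # its objective value
    improved = True
    while improved:
        improved = False
        for j in sorted(x):
            for k in range(n_sites):
                if k in x:
                    continue
                x_new = (x - {j}) | {k}  # close j, open k
                z_new = cost(x_new)
                if z_new < z:
                    x, z = x_new, z_new
                    improved = True
                    break  # accept the first improving swap
            if improved:
                break  # restart the search from the new solution
    return sorted(x), z
```

Started from the sites `[0, 2]` on a path of five equally weighted nodes with unit edge lengths, the sketch swaps site 2 for site 3 and stops at cost 3, which is optimal for that instance. In general the result is only a local optimum with respect to single swaps.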

The swap heuristic can be modified in many ways. For example, at each iteration, we can either implement the first swap we find that reduces the objective (this is called a first‐improving strategy