Chapter 4

Stochastic Inventory Models: Periodic Review

4.1 Inventory Policies

In this chapter and the next, we will consider inventory models in which the demand is stochastic. A key concept in these chapters will be that of a policy. A policy is a simple rule that provides a solution to the inventory problem. For example, consider a periodic‐review model with fixed costs (such as the Wagner–Whitin model) but with stochastic demands. (We will examine such a model more closely in Section 4.4.) One could imagine several possible policies for this system. Here are a few:

1. Every R periods, place an order for Q units.

2. Whenever the inventory position falls to s, order Q units.

3. Whenever the inventory position falls to s, place an order of sufficient size to bring the inventory position to S.

4. Place an order whose size is equal to the first two digits of last night's lottery number. Then, wait a number of periods equal to the last two digits of the lottery number before placing another order.

Now, you probably suspect that some of these policies will perform better (in the sense of keeping costs small) than others. For example, policy 4 is probably a bad one. You probably also suspect that the performance of a policy depends on its parameters. For example, policy 1 sounds reasonable, but only if we choose good values for R and Q.

It is often possible (and always desirable) to prove that a certain policy is optimal for a given problem—that no other policy (even policies that no one has thought of yet) can outperform the optimal policy, provided that we set the parameters of that policy optimally. For example, policy 3 turns out to be optimal for the model in Section 4.4: If we choose the right s and S, then we are guaranteed to incur the smallest possible expected cost.

When using policies, then, inventory optimization really has two parts: Choosing the form of the optimal policy and choosing the optimal parameters for that policy. Sometimes we can't solve one of these parts optimally, so we use approximate methods. For example, although it's possible to find the optimal s and S for the model in Section 4.4, heuristics are commonly used to find approximately optimal values. Similarly, for some problems, no one even knows the form of the optimal policy, so we simply choose a policy that seems plausible.

We'll consider periodic‐review models in this chapter. We'll first consider problems with no fixed costs (in Section 4.3) and then problems with nonzero fixed costs (in Section 4.4). In both of these sections, we'll simply choose a policy to use and focus on optimizing the policy parameters (or, in the case of finite‐horizon models, not restrict ourselves to a policy at all). This is the approach taken in the seminal paper by Arrow et al. (1951). Then, in Section 4.5, we'll prove that the policies we chose for the periodic‐review models in Sections 4.3 and 4.4 are, in fact, optimal. (We won't prove policy optimality for the continuous‐review models in Chapter 5, but those policies, too, are optimal.)

We will continue to use the same notation introduced in Chapter 3. All of the costs we discussed in Section 3.1.3 are in play, including fixed cost K, purchase cost c, holding cost h, and stockout cost p. We'll assume that K and c are nonnegative, that h and p are strictly positive, and that $p > c$ (otherwise it costs more to buy the product from the supplier than it does to stock out, so we should never place an order). Now, however, we'll represent the demand as a random variable D with mean $\mu$, variance $\sigma^2$, pdf $f(\cdot)$, and cdf $F(\cdot)$. (D will represent demands over different time intervals in different models, but we'll make this clear in each section.) We'll usually assume that D is a continuous random variable, with a few exceptions.

Throughout most of this chapter, we will assume that unmet demands are backordered. In Section 4.6, we briefly discuss the lost‐sales assumption.

Before continuing, we introduce two important concepts in stochastic inventory theory: cycle stock and safety stock. Cycle stock (or working inventory) is the inventory that is intended to meet the expected demand. Safety stock is extra inventory that's kept on hand to buffer against uncertainty. The target inventory level or order quantity set by most stochastic inventory problems can be decomposed into cycle and safety stock components. We'll see later that the cycle stock depends on the mean of the demand distribution, while the safety stock depends on the standard deviation.

4.2 Demand Processes

In real life, customers tend to arrive at a retailer at random, discrete points in time. Similarly, (some) retailers place orders to wholesalers at random, discrete times, and so on up the supply chain. One way to model these demands is using a Poisson process, which describes random arrivals to a system over time. If each customer may demand more than one unit, we might use a compound Poisson process, in which arrivals are Poisson and the number of units demanded by each customer is governed by some other probability distribution.

It will often be convenient for us to work with continuous demand distributions (rather than discrete distributions such as Poisson), most commonly the normal distribution with mean $\mu$ and variance $\sigma^2$. Sometimes, the normal distribution is used as an approximation for the Poisson distribution, in which case $\sigma^2 = \mu$ since the Poisson variance equals its mean. (This approximation is especially accurate when the mean is large.)

In the continuous‐review case, normally distributed demands mean that the demand over any t time units is normally distributed, with a mean and standard deviation that depend on t. Although it's unusual to think of demands occurring “continuously” in this way, it's a useful way to model demands over time. In the periodic‐review case, we simply assume that the demand in each time period is normally distributed.

One drawback to using the normal distribution is that any normal random variable will sometimes have negative realizations, even though the demands that we aim to model are nonnegative. If the demand mean is much greater than its standard deviation, then the probability of negative demands is so small that we can simply ignore it. This suggests that the normal distribution is appropriate as a model for the demand only if $\mu \gg \sigma$ (say, if ![]()). If this condition fails to hold, then it is more appropriate to use a distribution whose support does not contain negative values, such as the lognormal distribution. (If the true demands are Poisson and we are using the normal distribution to approximate them, then another justification for the condition $\mu \gg \sigma$ is that the normal approximation for the Poisson distribution is most effective when the Poisson mean, $\mu$, is large, in which case $\mu \gg \sqrt{\mu}$, which is the standard deviation.)
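To get a feel for how quickly the probability of a negative demand vanishes as the mean grows relative to the standard deviation, the following minimal sketch evaluates $P(D < 0) = \Phi(-\mu/\sigma)$ for a normally distributed demand. The function name `prob_negative_demand` and the ratios printed are illustrative choices of ours, not part of the model.

```python
from statistics import NormalDist


def prob_negative_demand(mu: float, sigma: float) -> float:
    """Return P(D < 0) when D is modeled as N(mu, sigma^2)."""
    return NormalDist(mu, sigma).cdf(0.0)


if __name__ == "__main__":
    # Illustrative mean-to-standard-deviation ratios (sigma fixed at 1).
    for ratio in (1, 2, 3, 4, 6):
        prob = prob_negative_demand(float(ratio), 1.0)
        print(f"mu/sigma = {ratio}: P(D < 0) = {prob:.2e}")
```

For the newsvendor example later in the chapter (mean 50, standard deviation 8), the ratio is 6.25, so the normal model assigns essentially no probability to negative demands.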

4.3 Periodic Review with Zero Fixed Costs: Base‐Stock Policies

For the remainder of this chapter, we focus on periodic‐review models. The time horizon consists of T time periods; T can be finite or infinite. We will usually assume the lead time is zero, but in Sections 4.3.4.1 and 4.6.2, we'll discuss the implications of assuming a nonzero lead time in the case of backorders (which is easy) and lost sales (which is hard).

We'll first consider the important special case in which $K = 0$ (in this section), and then the more general case of $K \ge 0$ (in Section 4.4). We'll also assume that the costs h, p, c, and K are constant throughout the time horizon.

We will model the time value of money by discounting future periods using a discount factor $\gamma \in (0, 1]$. That is, one dollar spent (or received) in period $t+1$ is equivalent to $\gamma$ dollars in period t. If $\gamma = 1$, then there is no discounting. For the single‐period and finite‐horizon problems, our objective will be to minimize the total expected discounted cost over the horizon. However, the total cost over an infinite horizon will be infinite if $\gamma = 1$ and may still be infinite if $\gamma < 1$. Therefore, in the infinite‐horizon case, we will minimize the expected cost per period if $\gamma = 1$ and the total expected discounted cost over the horizon if $\gamma < 1$. (The solutions to the two problems turn out to be closely related.)

The sequence of events in each period t is as follows:

1. The inventory level is observed.

2. A replenishment order of size $Q_t$ is placed and is received instantly.

3. Demand $d_t$ occurs; as much as possible is satisfied from inventory, and the rest is backordered.

4. Holding and stockout costs are assessed based on the ending inventory level.

The ending inventory level in period t (step 4) is denoted $IL_t$. It is equal to the starting inventory level in period $t+1$ (step 1) and is given by $IL_t = IL_{t-1} + Q_t - d_t$.

4.3.1 Base‐Stock Policies

Throughout Section 4.3, we'll assume that the firm follows a base‐stock policy. A base‐stock policy works as follows: In each time period, we observe the current inventory position and then place an order whose size is sufficient to bring the inventory position up to S. (We sometimes say we “order up to S.”) S is a constant (it does not depend on the current state of the system) and is known as the base‐stock level.

Base‐stock policies are optimal when $K = 0$; we will prove this in Section 4.5.1. One of the earliest analyses of this type of policy is by Arrow et al. (1951).

In multiple‐period models, the base‐stock level may be different in different periods. If the base‐stock level is the same throughout the horizon, then in every period, we simply order $d_{t-1}$ items. (Why?)
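One way to convince yourself of this is to simulate the sequence of events described earlier. Under a constant base‐stock level S with zero lead time and backorders, each period ends at S minus that period's demand, so the next order simply replaces the demand just observed. The sketch below is a minimal illustration; the function name `simulate_base_stock`, the assumption that the system starts at S, and the sample demands are ours.

```python
def simulate_base_stock(S, demands):
    """Return the order placed in each period under a constant base-stock level S.

    Assumes zero lead time, full backordering, nonnegative demands, and a
    starting inventory level equal to S (an illustrative assumption).
    """
    level = S          # inventory level at the start of the first period
    orders = []
    for d in demands:
        q = S - level  # order up to S; q >= 0 because demands are nonnegative
        level += q     # the order is received instantly
        level -= d     # demand occurs; a negative level represents backorders
        orders.append(q)
    return orders


if __name__ == "__main__":
    # After the first period, each order equals the previous period's demand.
    print(simulate_base_stock(20, [5, 0, 12, 7]))
```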

We will divide this problem into three cases (with $T = 1$, $1 < T < \infty$, and $T = \infty$) and find the optimal base‐stock levels in each case.

4.3.2 Single Period: The Newsvendor Problem

4.3.2.1 Problem Statement

Consider a firm selling a single product during a single time period. Single‐period models are most often applied to perishable products, which include (as you might expect) products such as eggs and flowers that may spoil, but also products that lose their value after a certain date, such as newspapers, high‐tech devices, and fashion goods. The key element of the model is that the firm only has one opportunity to place an order—before the random demand is observed.

Even if the firm actually sells its products over multiple periods (as is typical), the operations in subsequent periods are not linked: Excess inventory cannot be held over until the next period, nor can excess demands (that is, unmet demands are lost, not backordered). Therefore, the firm's multiperiod model can be reduced to multiple independent copies of the single‐period model presented here.

This model is one of the most fundamental stochastic inventory models, and many of the models discussed subsequently in this book use it as a starting point. It is often referred to as the newsvendor (or newsboy) model. The story goes like this: Imagine a newsvendor who buys newspapers each day from the publisher for $0.30 each and sells them for $1.00. The daily demand for newspapers at his newsstand is normally distributed with a mean of 50 and a standard deviation of 8. If the newsvendor has unsold newspapers left at the end of the day, he cannot sell them the next day, but he can sell them back to the publisher for $0.12 (called the salvage value).

The question is: How many newspapers should he buy from the publisher each day? If he buys exactly 50, he has an equal probability of being understocked and overstocked. But it costs more to stock out than to have excess (since stocking out costs him 70 cents in lost profit while excess newspapers cost him 30 − 12 = 18 cents each). So he should order more than 50 newspapers each day, but how many more?

The inventory carried by the newsvendor can be decomposed into two components: cycle stock and safety stock. As noted in Section 4.1, cycle stock is the inventory that is intended to meet the expected demand—in our example, 50—whereas safety stock is extra inventory that's kept on hand to buffer against demand uncertainty—the amount over 50 ordered by the newsvendor. We will see later that the newsvendor's cycle stock depends on the mean of the demand distribution, while the safety stock depends on the standard deviation.

It is possible for the safety stock to be negative: If stocking out is less expensive than holding extra inventory, the newsvendor would want to order fewer than 50 papers. This can actually occur in practice—for example, for expensive and highly perishable products—but it is the exception rather than the rule.

The mathematical analysis of the newsvendor problem originated with Arrow et al. (1951), though some of the ideas are much older: Edgeworth (1888) uses newsvendor‐type logic to determine the amount of cash to keep on hand at a bank to satisfy random withdrawals by patrons. Morse and Kimball (1951) introduced the name “newsboy problem,” and Porteus (2008) cites Matt Sobel as proposing the gender‐neutral term “newsvendor problem.”

As previously noted, the newsvendor model applies to perishable goods. In particular, it applies to goods that perish before the next ordering opportunity. Many perishable goods have a shelf life that exceeds the order interval: For example, a supermarket might place replenishment orders every few days for milk, which has a shelf life of a few weeks. Cases like this are much more difficult to optimize; for a more detailed discussion, see Section 16.3.2.

4.3.2.2 Formulation

As usual, we will use h to represent the holding cost: the cost per unit of having too much inventory on hand. In the newsvendor problem, this typically consists of the purchase cost of the unit, minus any salvage value, but may include other costs, such as processing costs. (Since inventory cannot be carried to the next period, this cost is not technically a holding cost, though we will refer to it that way anyway.) Similarly, p represents the stockout cost: the cost per unit of having too little inventory, consisting of lost profit and loss‐of‐goodwill costs. The holding cost is the cost per unit of positive ending inventory, while the stockout cost is the cost per unit of negative ending inventory.

The costs h and p are sometimes referred to as overage and underage costs, respectively (and some authors denote them $c_o$ and $c_u$). We can assume that the purchase cost is included in h and that its negative is included in p, and therefore, we assume that $c = 0$. We'll also assume the firm starts the period with inventory level $IL_0 = 0$, but this, too, is easy to relax (see Section 4.3.2.6). Since there is only a single period, the discount factor $\gamma$ won't play a role in the analysis.

We will refer to the model discussed here as the implicit formulation of the newsvendor problem since the costs and revenues are not modeled explicitly but instead are accounted for in the holding and stockout costs h and p. (In contrast, see the explicit formulation in Section 4.3.2.4.)

Recall that D is a random variable that represents the demand per period. We'll assume, for now, that D has a continuous distribution. In Section 4.3.2.8, we'll modify the analysis to handle discrete demand distributions.

Our goal is to determine the base‐stock level S to minimize the expected cost in the single period. The strategy for solving this problem is first to develop an expression for the cost as a function of d (the observed demand) and S (call it $g(S,d)$); then to determine the expected cost $\mathbb{E}[g(S,D)]$ (call it $g(S)$); and then (in Section 4.3.2.3) to determine S to minimize $g(S)$.

Let $I(S,d)$ and $B(S,d)$ be the on‐hand inventory and backorders, respectively, at the end of the period if the firm orders up to S and sees a demand of d units. The cost for an observed demand of d is

$$g(S,d) = hI(S,d) + pB(S,d) = h(S-d)^+ + p(d-S)^+, \tag{4.1}$$

where $x^+ = \max\{x, 0\}$.

Since the demand is stochastic, however, we must take an expectation over D. Let $\bar{I}(S)$ and $\bar{B}(S)$ be the expected on‐hand inventory and backorders if the firm orders up to S. Then,

$$g(S) = \mathbb{E}[g(S,D)] = h\bar{I}(S) + p\bar{B}(S) \tag{4.2}$$

$$g(S) = h\int_0^S (S-d)f(d)\,dd + p\int_S^\infty (d-S)f(d)\,dd. \tag{4.3}$$

Let

$$n(x) = \mathbb{E}[(D-x)^+] = \int_x^\infty (d-x)f(d)\,dd \tag{4.4}$$

$$\bar{n}(x) = \mathbb{E}[(x-D)^+] = \int_0^x (x-d)f(d)\,dd. \tag{4.5}$$

These functions are known as the loss function and the complementary loss function, respectively. They can be defined for any probability distribution; here, we define them in terms of the demand distribution. (See Section C.3.1 for more information about these functions.) Then we can rewrite 4.3 as

$$g(S) = h\bar{n}(S) + pn(S). \tag{4.6}$$

This gives us three ways to write the expected cost function: using $\bar{I}(S)$ and $\bar{B}(S)$ 4.2, using integrals 4.3, and using loss functions 4.6. It is also common to use the following identities:

$$\bar{I}(S) = S - \mu + \bar{B}(S) \tag{4.7}$$

$$\int_0^S (S-d)f(d)\,dd = S - \mu + \int_S^\infty (d-S)f(d)\,dd \tag{4.8}$$

$$\bar{n}(x) = x - \mu + n(x), \tag{4.9}$$

all of which follow from the fact that $x = x^+ - x^-$ for all x, where $x^- = \max\{-x, 0\}$. These let us rewrite 4.2, 4.3, and 4.6 as

$$g(S) = h(S-\mu) + (h+p)\bar{B}(S) \tag{4.10}$$

$$g(S) = h(S-\mu) + (h+p)\int_S^\infty (d-S)f(d)\,dd \tag{4.11}$$

$$g(S) = h(S-\mu) + (h+p)n(S). \tag{4.12}$$
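As a numerical sanity check, the sketch below approximates the loss function $n(x) = \mathbb{E}[(D-x)^+]$ and its complement $\bar{n}(x) = \mathbb{E}[(x-D)^+]$ with a midpoint rule and confirms that the loss‐function form of the cost, $h\bar{n}(S) + pn(S)$, agrees with the form $h(S-\mu) + (h+p)n(S)$. The exponential demand distribution, the truncation point `upper`, and all function names are assumptions made purely for illustration.

```python
import math


def loss_functions(pdf, x, upper, steps=100_000):
    """Approximate n(x) = E[(D-x)^+] and nbar(x) = E[(x-D)^+] by the midpoint rule.

    Assumes the demand pdf has (numerically) all of its mass on [0, upper].
    """
    width = upper / steps
    n = nbar = 0.0
    for i in range(steps):
        d = (i + 0.5) * width          # midpoint of the i-th cell
        weight = pdf(d) * width
        if d > x:
            n += (d - x) * weight      # contribution to expected shortage
        else:
            nbar += (x - d) * weight   # contribution to expected excess
    return n, nbar


def cost_via_loss(h, p, n, nbar):
    """Expected cost written with both loss functions: h*nbar(S) + p*n(S)."""
    return h * nbar + p * n


def cost_via_identity(h, p, S, mu, n):
    """Expected cost written with n(S) only: h*(S - mu) + (h + p)*n(S)."""
    return h * (S - mu) + (h + p) * n


if __name__ == "__main__":
    mu = 10.0
    pdf = lambda d: math.exp(-d / mu) / mu   # exponential demand, mean 10 (illustrative)
    S = 12.0
    n, nbar = loss_functions(pdf, S, upper=200.0)
    print(cost_via_loss(0.18, 0.70, n, nbar), cost_via_identity(0.18, 0.70, S, mu, n))
```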

4.3.2.3 Optimal Solution

The derivatives of the loss function and its complement are given by

$$n'(x) = F(x) - 1 \tag{4.13}$$

$$\bar{n}'(x) = F(x). \tag{4.14}$$

(See Problem 4.23.) Moreover, $n''(x) = \bar{n}''(x) = f(x) \ge 0$, so $n(x)$ and $\bar{n}(x)$ are both convex, and therefore so is $g(S)$.

To minimize $g(S)$, therefore, we set its first derivative to 0. Using 4.6,

$$\frac{dg(S)}{dS} = h\bar{n}'(S) + pn'(S) = hF(S) - p(1 - F(S)) = (h+p)F(S) - p. \tag{4.15}$$

Setting this equal to 0 gives

$$F(S^*) = \frac{p}{h+p} \tag{4.16}$$

$$S^* = F^{-1}\left(\frac{p}{h+p}\right). \tag{4.17}$$

Alternately, we can differentiate 4.12 to get

$$\frac{dg(S)}{dS} = h + (h+p)n'(S) = h - (h+p)(1 - F(S)) = (h+p)F(S) - p, \tag{4.18}$$

which is identical to 4.15 and so gives the same optimal solution as 4.17.

The expression for $S^*$ in 4.17 is an important one, so we'll state it as a theorem (which we've now proven).

Theorem 4.1. The optimal base‐stock level for the newsvendor problem is $S^* = F^{-1}\left(\frac{p}{h+p}\right)$.

$p/(h+p)$ is known as the critical ratio (or critical fractile). It is implicit in a result by Arrow et al. (1951) but was first formulated explicitly by Whitin (1953). Since p and h are both positive,

$$0 < \frac{p}{h+p} < 1,$$

so $S^*$ always exists. Note that $F(S^*) = P(D \le S^*)$ is the probability of no stockouts. This is known as the type‐1 service level (see Section 4.3.4.2). Equation 4.17 then says that under the optimal solution, the type‐1 service level should be equal to the critical ratio. It is optimal to stock out in a fraction $h/(h+p)$ of periods. To put it another way, the probability of not having a stockout is equal to the shaded area in Figure 4.1, and Theorem 4.1 says that this area should equal $p/(h+p)$ and that the non‐shaded area should equal $h/(h+p)$. As p increases, the critical ratio increases, so $S^*$ and the type‐1 service level both increase: it is more costly to stock out, so we should do it less frequently. As h increases, the critical ratio decreases, as does $S^*$: it is more costly to have excess inventory, so we will order less. The type‐1 service level necessarily decreases as well.

Figure 4.1 Optimal solution to newsvendor problem plotted on demand distribution.
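Because the optimality condition only requires that the cdf at the base‐stock level equal the critical ratio, it can be applied to any continuous demand distribution by inverting the cdf numerically. The following sketch does this by bisection; the function names, the search interval, and the exponential demand used in the usage example are illustrative assumptions, not part of the model above.

```python
import math
from typing import Callable


def critical_ratio(h: float, p: float) -> float:
    """Critical ratio p / (h + p) for holding cost h and stockout cost p."""
    return p / (h + p)


def newsvendor_base_stock(cdf: Callable[[float], float], h: float, p: float,
                          lo: float = 0.0, hi: float = 1e6,
                          tol: float = 1e-9) -> float:
    """Find S with cdf(S) equal to the critical ratio by bisection.

    Assumes cdf is continuous and nondecreasing, with cdf(lo) below and
    cdf(hi) at or above the critical ratio.
    """
    target = critical_ratio(h, p)
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        if cdf(mid) >= target:
            hi = mid   # the solution lies at or below mid
        else:
            lo = mid   # the solution lies above mid
    return hi


if __name__ == "__main__":
    # Exponential demand with mean 10 (illustrative), with h = 0.18 and p = 0.70.
    exp_cdf = lambda s: 1.0 - math.exp(-s / 10.0)
    print(newsvendor_base_stock(exp_cdf, h=0.18, p=0.70))
```

For exponential demand the answer can be checked by hand, since inverting the cdf gives $S^* = \mu \ln((h+p)/h)$.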

Theorem 4.1 (or one very much like it) holds for a wide range of models, not just the single‐period newsvendor model formulated here. Perhaps most importantly, a variant of the theorem still holds for the multiperiod, infinite‐horizon version of the model; see Section 4.3.4.

4.3.2.4 Explicit Formulation

The formulation given in Sections 4.3.2.2–4.3.2.3 interprets h and p as the overage and underage costs, respectively—the cost per unit of having too much or too little inventory. The actual cost and revenue parameters are included implicitly through the overage and underage costs. For instance, in the example described in Section 4.3.2.1, there is a revenue of $1.00, a purchase cost of $0.30, and a salvage value of $0.12, but these don't appear explicitly in the expected cost function 4.2; rather, they are factored into h and p.

Instead, one can write the expected cost function explicitly using these cost parameters, and the resulting formulation is sometimes more intuitive. In particular, let r be the revenue earned per unit sold, let c be the cost per unit purchased, and let v be the salvage value earned for each unit of excess inventory. We assume $r > v$; otherwise, we earn more by salvaging a unit than by selling it, so we would never sell any items.

Let h and p be the holding and stockout costs, but reinterpret them to exclude the costs and revenues related to selling, buying, and salvaging the inventory. For example, h might represent a storage cost or a cost to dispose of the inventory; p might represent loss of goodwill or bookkeeping costs related to unmet demands.

As before, the objective is to minimize $g(S)$, which should now include revenues as negative costs. We have:

$$\begin{aligned} g(S) &= cS + h\,\mathbb{E}[(S-D)^+] + p\,\mathbb{E}[(D-S)^+] - r\,\mathbb{E}[\min\{S,D\}] - v\,\mathbb{E}[(S-D)^+] \\ &= cS + (h-v)\bar{n}(S) + (p+r)n(S) - r\mu. \end{aligned} \tag{4.19}$$

(The second line uses $\mathbb{E}[\min\{S,D\}] = \mu - n(S)$.) Sometimes, this is instead formulated as a profit maximization problem in which we maximize $-g(S)$.

Since $n(S)$ and $\bar{n}(S)$ are both convex, and since $r > v$, $g(S)$ is convex (and $-g(S)$ is concave), so it suffices to set the first derivative of 4.19 to 0:

$$\frac{dg(S)}{dS} = c + (h-v)F(S) - (p+r)(1 - F(S)) = 0 \implies S^* = F^{-1}\left(\frac{p + r - c}{h + p + r - v}\right). \tag{4.20}$$

We can translate this to the implicit version of the problem by determining the overage and underage costs (which we'll denote by $h'$ and $p'$, respectively, since they have a slightly different meaning than h and p in the explicit formulation). For each unit of excess inventory, we incur a holding cost of h, and we paid c for the extra unit but earn v as a salvage value; therefore, $h' = h + c - v$. Similarly, for each stockout, we incur a penalty of p in addition to the lost profit $r - c$, so $p' = p + r - c$. Therefore, applying 4.17, we get

$$S^* = F^{-1}\left(\frac{p'}{h' + p'}\right) = F^{-1}\left(\frac{p + r - c}{h + p + r - v}\right),$$

which matches 4.20. The expected cost functions 4.12 and 4.19 are not equal, but they differ only by an additive constant (see Problem 4.15).
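The translation from explicit parameters to overage and underage costs is simple arithmetic, and a small helper makes the accounting hard to get wrong. The sketch below applies it to the newspaper example (revenue $1.00, purchase cost $0.30, salvage value $0.12, and no other holding or stockout costs); the function names are hypothetical.

```python
def implicit_costs(r, c, v, h=0.0, p=0.0):
    """Translate explicit parameters into overage and underage costs.

    r: revenue per unit sold, c: purchase cost per unit, v: salvage value per
    unit of excess, h and p: holding and stockout costs excluding r, c, and v.
    """
    overage = h + c - v    # cost of each unit left over
    underage = p + r - c   # cost of each unit of unmet demand
    return overage, underage


def explicit_critical_ratio(r, c, v, h=0.0, p=0.0):
    """Critical ratio (p + r - c) / (h + p + r - v) of the explicit formulation."""
    overage, underage = implicit_costs(r, c, v, h, p)
    return underage / (overage + underage)


if __name__ == "__main__":
    print(implicit_costs(r=1.00, c=0.30, v=0.12))
    print(explicit_critical_ratio(r=1.00, c=0.30, v=0.12))
```

For the newspaper example this recovers the 18‐cent overage cost and 70‐cent underage cost quoted in Section 4.3.2.1.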

It is perfectly acceptable to set any of the cost or revenue parameters to 0 if they are negligible or should not be included in the model.

One word of caution: Avoid mixing the implicit and explicit approaches, since doing so can lead to incorrect accounting of the various costs and revenues. For example, it is a common mistake to use something like the objective function from the implicit formulation 4.3, but to add a term such as $cS$ or subtract a revenue term to reflect a purchase cost or sales revenue. If the holding and stockout costs in 4.3 are interpreted as overage and underage costs, then the purchase cost and sales revenue are already implicitly included in h and p (as they are in Example 4.1). To include them explicitly in the objective function would be to double‐count them.

For the remainder of this chapter and for most of the rest of this book, we will use the implicit formulation. An exception is Chapter 14, which uses something more like the explicit approach.

4.3.2.5 Normally Distributed Demands

In this section, we discuss results for the special case in which demands are normally distributed: $D \sim N(\mu, \sigma^2)$, with pdf f and cdf F. We use $\phi$ and $\Phi$ to denote the pdf and cdf, respectively, of the standard normal distribution:

$$\phi(z) = \frac{1}{\sqrt{2\pi}}e^{-z^2/2}$$

$$\Phi(z) = \int_{-\infty}^{z} \phi(t)\,dt.$$

We also use $z_\alpha$ to denote the $\alpha$th fractile of the standard normal distribution; that is, $z_\alpha = \Phi^{-1}(\alpha)$.

As discussed in Section 4.2, we will assume that $\mu \gg \sigma$ so that the probability of negative demands is negligible.

From 4.16, we have

$$F(S^*) = \Phi\left(\frac{S^* - \mu}{\sigma}\right) = \frac{p}{h+p} \implies S^* = \mu + \sigma\Phi^{-1}\left(\frac{p}{h+p}\right).$$

If we let $\alpha = p/(h+p)$, we have

$$S^* = \mu + z_\alpha\sigma. \tag{4.24}$$

The first term of 4.24 represents the cycle stock; it depends only on $\mu$. The second term represents the safety stock; it depends on $\sigma$. The newsvendor problem can be thought of as a problem of setting safety stock. The firm already knows that it will need $\mu$ units to satisfy the expected demand; the question is how much more to order to satisfy any demand in excess of the mean. This extra inventory is the safety stock. (See Figure 4.2.)

Figure 4.2 Optimal solution to newsvendor problem plotted on normal demand distribution.
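For normally distributed demand, the base‐stock level is the mean plus $z_\alpha$ standard deviations, with $\alpha = p/(h+p)$, which is straightforward to compute. The sketch below uses Python's standard‐library `statistics.NormalDist` to obtain $z_\alpha$ and applies it to the newspaper example (mean 50, standard deviation 8, h = 0.18, p = 0.70); the function name and return convention are ours.

```python
from statistics import NormalDist


def normal_newsvendor(mu, sigma, h, p):
    """Return (base_stock_level, safety_stock) for N(mu, sigma^2) demand."""
    alpha = p / (h + p)                    # critical ratio
    z_alpha = NormalDist().inv_cdf(alpha)  # alpha-th fractile of the standard normal
    safety_stock = z_alpha * sigma         # buffer against demand uncertainty
    cycle_stock = mu                       # covers the expected demand
    return cycle_stock + safety_stock, safety_stock


if __name__ == "__main__":
    S_star, safety = normal_newsvendor(mu=50, sigma=8, h=0.18, p=0.70)
    print(f"S* = {S_star:.2f}, safety stock = {safety:.2f}")
```

Swapping h and p in the call flips the sign of the safety stock, which illustrates the negative‐safety‐stock case mentioned in Section 4.3.2.1.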

Note that, as discussed in Section 4.3.2.1, the safety stock is negative if $p < h$ since, in that case, $\alpha < 0.5$ and $z_\alpha < 0$.

We next derive an expression for the expected cost under the optimal solution, as we did with the economic order quantity (EOQ) problem in Section 3.2.3. If X is a normally distributed random variable, then its loss and complementary loss functions are given by

$$n(x) = \mathcal{L}(z)\sigma \tag{4.25}$$

$$\bar{n}(x) = [z + \mathcal{L}(z)]\sigma, \tag{4.26}$$

where $z = (x - \mu)/\sigma$ and

$$\mathcal{L}(z) = \int_z^\infty (t - z)\phi(t)\,dt. \tag{4.27}$$

(See Problem 4.22.) 4.25 and 4.26 assume $X \ge 0$; this is true for actual demands, but it is only approximately true for the normal distribution we use to model demand. $\mathcal{L}(z)$ is called the standard normal loss function; it is equivalent to $n(x)$ in 4.4 if X has a standard normal distribution. $\mathcal{L}(z)$ is tabulated in many textbooks, or it can be computed explicitly as

$$\mathcal{L}(z) = \phi(z) - z(1 - \Phi(z)). \tag{4.28}$$

Equation 4.28 is convenient for calculating $\mathcal{L}(z)$ in, say, Excel, MATLAB, or Python, since these and many other environments have built‐in functions for $\phi(z)$ and $\Phi(z)$ but not for $\mathcal{L}(z)$.
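As a concrete illustration in Python, the standard normal loss function can be computed from the closed form $\phi(z) - z(1 - \Phi(z))$ using the standard library's `statistics.NormalDist`, and the loss and complementary loss functions of a general normal random variable then follow by scaling. The function names below are our own.

```python
import math
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # standard normal distribution: mean 0, std dev 1


def std_normal_loss(z):
    """Standard normal loss function: phi(z) - z * (1 - Phi(z))."""
    return _STD_NORMAL.pdf(z) - z * (1.0 - _STD_NORMAL.cdf(z))


def normal_loss(x, mu, sigma):
    """Loss function n(x) = E[(X - x)^+] for X ~ N(mu, sigma^2)."""
    z = (x - mu) / sigma
    return std_normal_loss(z) * sigma


def normal_comp_loss(x, mu, sigma):
    """Complementary loss function nbar(x) = E[(x - X)^+] for X ~ N(mu, sigma^2)."""
    z = (x - mu) / sigma
    return (z + std_normal_loss(z)) * sigma


if __name__ == "__main__":
    print(std_normal_loss(0.0), 1.0 / math.sqrt(2.0 * math.pi))
    print(normal_loss(60, 50, 8), normal_comp_loss(60, 50, 8))
```

At z = 0 the loss equals $\phi(0) = 1/\sqrt{2\pi}$, and the difference between the complementary loss and the loss at x is always $x - \mu$, which gives two quick checks on an implementation.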

Then, for our problem with normally distributed demands, the cost function 4.6 becomes

$$g(S) = h[z + \mathcal{L}(z)]\sigma + p\mathcal{L}(z)\sigma = [hz + (h+p)\mathcal{L}(z)]\sigma \tag{4.29}$$

for any $S$, where $z = (S - \mu)/\sigma$. From 4.24, $(S^* - \mu)/\sigma = z_\alpha$. Then from 4.29,

$$\begin{aligned} g(S^*) &= [hz_\alpha + (h+p)(\phi(z_\alpha) - z_\alpha(1 - \Phi(z_\alpha)))]\sigma \\ &= \left[hz_\alpha + (h+p)\phi(z_\alpha) - (h+p)z_\alpha\frac{h}{h+p}\right]\sigma \\ &= (h+p)\phi(z_\alpha)\sigma. \end{aligned} \tag{4.30}$$

It seems surprising at first that 4.30 depends only on $\sigma$, not on $\mu$. But with a little reflection, this makes sense: Since the problem comes down to setting safety stock levels, only $\sigma$ should figure into the objective function. Remember that the objective function includes only holding and stockout costs, that is, costs that result from the randomness in demand, not its magnitude.

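Again, let's summarize the optimal order quantity and its cost in a theorem:

Theorem. In the newsvendor problem with normally distributed demands, the optimal base‐stock level is $S^* = \mu + z_\alpha\sigma$, where $\alpha = p/(h+p)$, and the corresponding expected cost is $g(S^*) = (h+p)\phi(z_\alpha)\sigma$.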

4.3.2.6 Nonzero Starting Inventory Level

We assumed that the firm starts the period with $IL = 0$. In fact, this assumption is easy to relax (and it will be important to do so in the multiperiod versions of this model). If $IL < S^*$, then the firm should order up to $S^*$, as usual. But suppose $IL > S^*$. The firm can't order up to $S^*$ since it already has too much inventory. But should the firm order any units? By the convexity of $g(S)$, the answer is no: It would be better to leave the inventory level where it is. Therefore, the optimal order quantity at the start of the period is

$$Q^* = \max\{S^* - IL,\ 0\}.$$
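The rule for a nonzero starting inventory level is short enough to state directly in code. This is a minimal sketch with hypothetical function names: the firm orders up to the optimal base-stock level when it starts below it and orders nothing otherwise.

```python
def order_quantity(starting_il, s_star):
    """Order up to S* if the starting inventory level is below it; otherwise order nothing."""
    return max(s_star - starting_il, 0.0)


def level_after_ordering(starting_il, s_star):
    """Inventory level once the order is placed (zero lead time assumed)."""
    return starting_il + order_quantity(starting_il, s_star)
```

With `s_star = 56`, a starting level of 20 yields an order of 36, while a starting level of 70 yields no order and the level stays at 70.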

4.3.2.7 Forecasting and Standard Deviations

In most real-world settings, we do not know the demand process exactly. Instead, we generate a forecast or estimate of the demand parameters required to make inventory decisions. We'll assume the demand is normally distributed. If we knew $\mu$ and $\sigma$, we would simply use them in 4.24 to determine the optimal order quantity. But suppose we don't know them; instead, suppose we have observed the demand for a long time, and let $D_t$ be the observed demand in period $t$. In each period, we can generate an estimate of $\mu$ and $\sigma$ from the historical data. There are many ways to do this (see Chapter 2); one of the simplest is to use a moving average (Section 2.2.1) to estimate $\mu$ and what we might call a moving standard deviation to estimate $\sigma$ in period $t$:

$$\hat{\mu}_t = \frac{1}{N}\sum_{i=t-N}^{t-1} D_i, \qquad \hat{\sigma}_t = \sqrt{\frac{1}{N-1}\sum_{i=t-N}^{t-1}\left(D_i - \hat{\mu}_t\right)^2},$$

where $N$ is the number of periods in the moving-average window.

To choose an order quantity in period $t$, we replace $\mu$ with $\hat{\mu}_t$ in 4.24. However, it turns out that $\hat{\sigma}_t$ is not the right standard deviation to use in place of $\sigma$. Instead, the correct quantity to use is the standard deviation of the forecast error.

Returning to our historical data, $\hat{\mu}_t$ serves as a forecast for the demand in period $t$. The forecast error (the forecast minus the observed demand in a given period) is a random variable, and it has a mean, denoted $\mu_e$, and a standard deviation, denoted $\sigma_e$. The correct quantity to replace $\sigma$ with in 4.24 is $\sigma_e$. We'll omit a rigorous explanation of why this is the case (see, e.g., Nahmias (2005, Section 2.12)), but here is the intuition. The forecasting process introduces sampling error in addition to the randomness in demand, and it is this error that the firm really needs to protect itself against using safety stock.

Suppose that the demand is very variable ($\sigma$ is large), but we are extremely good at predicting it ($\mu_e$ and $\sigma_e$ are both small). We would need very little safety stock, because we can accurately predict how much inventory we will need. Now suppose that the demand is extremely steady ($\sigma$ is small) but that, for some reason, our forecast is always 100 units too large ($\mu_e$ is large, $\sigma_e$ is small). Here, too, we need very little safety stock, because (knowing our forecast is always too large) we can simply revise our forecast downward. Finally, suppose that the demand is steady ($\sigma$ is small) but our forecasts are all over the place, sometimes high and sometimes low ($\mu_e$ is small, $\sigma_e$ is large). In this case, we need a lot of safety stock to protect against the uncertainty arising from our inability to predict the demand. In all of these cases, it is the standard deviation of the forecast error that drives the inventory requirement.

Unfortunately, we don't know $\sigma_e$ any more than we know $\sigma$. Instead, we can observe the forecast error in period $t$,

$$e_t = \hat{\mu}_t - D_t,$$

and estimate the standard deviation of the forecast error over the most recent $N$ periods as

$$\hat{\sigma}_{e,t} = \sqrt{\frac{1}{N-1}\sum_{i=t-N}^{t-1}\left(e_i - \hat{\mu}_{e,t}\right)^2},$$

where

$$\hat{\mu}_{e,t} = \frac{1}{N}\sum_{i=t-N}^{t-1} e_i$$

is the estimate of the mean of the forecast error made in period $t$. (If we know for sure that our forecasts are unbiased, we can replace $\hat{\mu}_{e,t}$ with 0.) We then replace $\sigma$ with $\hat{\sigma}_{e,t}$ in 4.24 and in the analysis that follows. Of course, if the firm uses a forecasting technique other than moving average, we can simply replace the formulas above with the appropriate ones.
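The estimation procedure described above can be sketched as follows. This is an illustration under stated assumptions rather than a prescribed implementation: demands are stored in a zero-indexed list, the forecast for a period is the plain average of the $N$ demands before it, the forecast error is the forecast minus the observed demand, and the error standard deviation uses the sample form (dividing by $N-1$). The function names are hypothetical.

```python
from statistics import mean, stdev


def moving_average_forecasts(demands, N):
    """Return {t: forecast for period t}, averaging the N demands observed before t."""
    return {t: mean(demands[t - N:t]) for t in range(N, len(demands) + 1)}


def forecast_error_std(demands, N):
    """Return (forecast for the next period, estimated std of the forecast error)."""
    forecasts = moving_average_forecasts(demands, N)
    # e_t = forecast minus observed demand, for periods that have both
    errors = [forecasts[t] - demands[t] for t in range(N, len(demands))]
    if len(errors) < N:
        raise ValueError("need at least 2*N observed demands")
    recent = errors[-N:]
    # stdev() centers on the sample mean of the errors and divides by N - 1
    return forecasts[len(demands)], stdev(recent)
```

A steadily growing demand such as `[10 * t for t in range(12)]` with `N = 4` makes the point of this section concrete: the demand itself varies a great deal, but every forecast misses by the same amount, so the estimated standard deviation of the forecast error is zero and only the bias needs correcting.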

Now, in nearly all of the models in this book (one exception is Section 13.2.2), we assume that the demand parameters are known and stationary. In that case, the forecast $\hat{\mu}_t$ is always equal to the true demand mean $\mu$, and the forecast error is $e_t = \mu - D_t$ with mean 0 and estimated standard deviation (over the most recent $N$ periods)

$$\hat{\sigma}_{e,t} = \sqrt{\frac{1}{N-1}\sum_{i=t-N}^{t-1}\left(\mu - D_i\right)^2},$$

which converges to $\sigma$ in the long run. Therefore, $\mu$ and $\sigma$ are the appropriate parameters to use.

In general, one can show that $\sigma_e = c\sigma$ for some constant $c$ (at least for moving average and exponential smoothing forecasts; see, e.g., Hax and Candea (1984, p. 174), or Nahmias (2005, Appendix 2-A)), so in some sense the distinction between the standard deviation of the demand and that of the forecast error is academic, but it's still worth drawing.

This analysis assumes that $\mu_e = 0$, i.e., the forecast is unbiased. If it is not, we should also use $\hat{\mu}_t - \hat{\mu}_{e,t}$ in place of $\mu$ in 4.24: If our forecasts tend to be too high ($\mu_e > 0$), then we should reduce the estimate of the mean demand to account for this; and if our forecasts are low ($\mu_e < 0$), we should increase it.

4.3.2.8 Discrete Demand Distributions

Suppose now that $D$ is discrete. In this case, 4.3 becomes

$$g(S) = h\sum_{d=0}^{S}(S-d)f(d) + p\sum_{d=S}^{\infty}(d-S)f(d).$$

The expected cost can still be expressed in terms of loss functions, keeping 4.6 as is but replacing the definitions of $n(x)$ and $\bar{n}(x)$ in 4.4 and 4.5 with

$$n(x) = \sum_{d=x}^{\infty}(d-x)f(d), \qquad \bar{n}(x) = \sum_{d=0}^{x}(x-d)f(d).$$

(See Section C.3.4 for more on loss functions for discrete distributions.)

Figure 4.3

The expected cost function $g(S)$ is still convex but no longer differentiable; it is piecewise-linear, with breakpoints at each positive integer. (Why?) Therefore, we cannot use derivatives to minimize it. Instead, we can use finite differences. A finite difference is very similar to a derivative except that, instead of measuring the change in the function as the variable changes infinitesimally, it measures the change as the variable changes by one unit. Let

$$\Delta g(S) = g(S+1) - g(S).$$

Imagine starting at $S = 0$ and increasing $S$ one unit at a time. If $\Delta g(S) < 0$, i.e., $g(S+1) < g(S)$, then we would want to increase $S$ to $S+1$ to bring the cost down. Since $g$ is convex, $S^*$ is the smallest $S$ such that $\Delta g(S) \ge 0$. (See Figure 4.3.) Well,

$$\Delta g(S) = h\left[\bar{n}(S+1) - \bar{n}(S)\right] + p\left[n(S+1) - n(S)\right] = hF(S) - p\left(1 - F(S)\right) = (h+p)F(S) - p.$$

Therefore, $S^*$ is the smallest $S$ such that $(h+p)F(S) - p \ge 0$; that is:

$$S^* = \min\left\{S : F(S) \ge \frac{p}{h+p}\right\}.$$

Unless we get lucky, there is no $S$ such that $F(S)$ equals the critical ratio, as it does for continuous demands, so instead we “round up” to the next greater integer. That is, if $F(S) < p/(h+p) < F(S+1)$, there is no need to evaluate both $g(S)$ and $g(S+1)$; $g(S+1)$ will always be smaller. Note, however, that this does not hold when the demands are continuous but the order quantities must be discrete; see Problem 4.16.
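The "round up" rule for discrete demands can be sketched as a single pass over the cdf: return the smallest $S$ whose cumulative probability reaches the critical ratio $p/(h+p)$. The example below assumes nonnegative integer demand given as a list of probabilities, uses a truncated and renormalized Poisson distribution purely for illustration, and includes a direct cost evaluation so the result can be checked against brute-force enumeration. All function names are hypothetical.

```python
import math


def poisson_pmf(lam, d_max):
    """Truncated Poisson pmf on 0..d_max, renormalized so the probabilities sum to 1."""
    raw = [math.exp(-lam) * lam**d / math.factorial(d) for d in range(d_max + 1)]
    total = sum(raw)
    return [q / total for q in raw]


def discrete_base_stock(pmf, h, p):
    """Smallest S with F(S) >= p/(h+p), where pmf[d] = P(D = d)."""
    ratio = p / (h + p)
    cdf = 0.0
    for S, q in enumerate(pmf):
        cdf += q
        if cdf >= ratio:
            return S
    return len(pmf) - 1  # rounding left the accumulated cdf just below 1


def expected_cost(S, pmf, h, p):
    """g(S) = h*E[(S-D)^+] + p*E[(D-S)^+], by direct summation over the pmf."""
    return sum(q * (h * max(S - d, 0) + p * max(d - S, 0)) for d, q in enumerate(pmf))
```

With Poisson demand of mean 6, $h = 1$, and $p = 4$, the critical ratio is 0.8, which falls between $F(7)$ and $F(8)$, so the rule selects $S = 8$ without evaluating the cost at all; enumerating `expected_cost` over all candidate levels gives the same answer.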

4.3.3 Finite Horizon

Now consider a multiple-period problem consisting of a finite number of periods, $T$. Suppose we are at the beginning of period $t$. Do we need to know the history of the system (e.g., order quantities and demands through period $t-1$) in order to make an optimal inventory decision in period $t$? The answer is no: All of the information we need to make the inventory decision is contained in a single quantity, the starting inventory level, which equals the ending inventory level in the previous period, $IL_{t-1}$. Moreover, once we decide how much to order, we can easily calculate the probability distribution of the ending inventory level in period $t$ (as we'll see below). This suggests that the periods can be optimized recursively, in particular using dynamic programming (DP). Just as in the DP algorithm we used for the Wagner–Whitin problem (Section 3.7.3), this DP will make inventory decisions for period $t$, assuming that optimal decisions have already been made for periods $t+1, \ldots, T$ and using the cost of those optimal decisions to calculate the cost of the decisions in period $t$. However, in this DP, the optimal decisions in period $t$ will depend on a random state variable (in particular, $IL_{t-1}$), whereas the decisions in the Wagner–Whitin DP depended only on the period, $t$.

First consider what happens at the end of the time horizon. Presumably, on-hand units and backorders must be treated differently now that the horizon has ended than they would be during the horizon. The terminal cost function, denoted $\theta_{T+1}(x)$, represents the additional cost incurred at the end of the horizon if we end the horizon with inventory level $x$. For example, we may incur a terminal holding cost $h_T$ for on-hand units that must be disposed of, and a terminal stockout cost $p_T$ for backorders that must be satisfied through overtime or other expensive measures. Then $\theta_{T+1}(x) = h_T x^+ + p_T x^-$. Or, maybe we can salvage excess units at the end of the horizon for a revenue of $v$ per unit, in which case on-hand units contribute $-v x^+$ to $\theta_{T+1}(x)$.

Let $\theta_t(x)$ be the optimal expected cost in periods $t, \ldots, T$ if we begin period $t$ with an inventory level of $x$ (and act optimally thereafter). We can define $\theta_t(x)$ recursively in terms of $\theta_s(x)$ for later periods $s$. In each period $t$, we need to decide how much to order, but we will express this optimization problem not in terms of the order quantity $Q$, but the order-up-to level $y$, defined as $y = x + Q$.⁴ In particular:

$$\theta_t(x) = \min_{y \ge x}\left\{c(y-x) + g(y) + \gamma\,E_D\left[\theta_{t+1}(y-D)\right]\right\}, \tag{4.36}$$

where

$$g(y) = h\,E\left[(y-D)^+\right] + p\,E\left[(D-y)^+\right]$$

is the single-period expected cost function (see 4.3 and 4.6). The minimization considers all possible order-up-to levels $y \ge x$ (since $Q$ must be nonnegative) and, for each, calculates the sum of the cost to order $y - x$ units, the expected cost in period $t$, and the expected discounted future cost. Note that if we order up to $y$ in period $t$, then the starting inventory level in period $t+1$ will be $y - D$, where $D$ is the (random) demand in period $t$; therefore, the (random) cost in periods $t+1, \ldots, T$ equals $\theta_{t+1}(y - D)$.

The DP algorithm for the finite-horizon problem is given in Algorithm 4.1. The optimal expected cost for the entire horizon is given by $\theta_1(x)$, where $x$ is the inventory level that the system starts with at the beginning of period 1.

One way to think about this DP is as follows. Imagine a spreadsheet whose columns correspond to the periods $1, \ldots, T+1$ and whose rows correspond to the possible values of $x$. The value in cell $(x, t)$ equals $\theta_t(x)$. We start by filling in the $\theta_{T+1}(x)$ values in the last column, one for each value of $x$. Then, we calculate the cells in column $T$: For each $x$, we calculate $\theta_T(x)$ using 4.36 (which requires us to look in column $T+1$ for the $\theta_{T+1}(y-d)$ values) and write the result in cell $(x, T)$. Then we calculate the cells in column $T-1$, using the values in column $T$, and so on, until we solve period 1. Also imagine a second spreadsheet with identical structure but whose cells contain $y_t(x)$, the optimal order-up-to level, rather than $\theta_t(x)$.

The completed spreadsheets tell the firm everything it needs to know about optimally managing the inventory system. If it finds itself with an inventory level of $x$ at the start of period $t$, it simply looks in the second spreadsheet and orders up to the $y_t(x)$ value that is found in cell $(x, t)$. (The corresponding cell in the first spreadsheet tells the expected current and future cost of this action.)
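The two spreadsheets can be sketched as a backward recursion over a dictionary of states. The code below is an illustration under several assumptions that are not part of the model description: demand is a nonnegative integer with a finite pmf, the state space is truncated to the integers `x_min..x_max`, and any next-period state that would fall below `x_min` is clamped to `x_min`. Each period's cost is taken to be the purchase cost $c(y-x)$ plus the single-period cost $g(y)$ plus the discounted expected future cost, as in the recursion 4.36. The function and argument names are hypothetical.

```python
def solve_finite_horizon(T, pmf, h, p, c, gamma, terminal_cost, x_min, x_max):
    """Backward recursion on the truncated integer state space x_min..x_max.

    pmf[d] = P(D = d). Returns (theta_1, policy): theta_1[x] is the optimal expected
    cost from period 1 onward, and policy[t][x] is the order-up-to level in period t.
    """
    states = range(x_min, x_max + 1)

    def g(y):  # single-period expected holding and stockout cost
        return sum(q * (h * max(y - d, 0) + p * max(d - y, 0)) for d, q in enumerate(pmf))

    theta_next = {x: terminal_cost(x) for x in states}  # column T+1 of the first sheet
    policy = {}
    for t in range(T, 0, -1):
        # H[y] = c*y + g(y) + gamma * E[theta_{t+1}(y - D)]; states below x_min are
        # clamped to x_min (a truncation assumption, not part of the model)
        H = {
            y: c * y + g(y)
            + gamma * sum(q * theta_next[max(y - d, x_min)] for d, q in enumerate(pmf))
            for y in states
        }
        theta_t, y_t = {}, {}
        for x in states:
            best = min(range(x, x_max + 1), key=H.__getitem__)  # only y >= x is allowed
            y_t[x] = best
            theta_t[x] = H[best] - c * x  # c*(y - x) + g(y) + discounted future cost
        policy[t] = y_t          # column t of the second sheet
        theta_next = theta_t     # column t of the first sheet
    return theta_next, policy
```

Calling this with a small pmf such as `[0.1, 0.2, 0.3, 0.2, 0.1, 0.1]`, costs `h = 1`, `p = 5`, `c = 2`, `gamma = 0.95`, and terminal cost `lambda x: -2 * x` returns a policy in which every period orders up to the same level when the starting inventory is below it and orders nothing otherwise. The truncation bounds should be wide enough that the clamping never affects the states near the optimal order-up-to levels.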

Two problems with this approach bear mentioning. First, the DP calculates $\theta_t(x)$ “for all $x$.” But $x$ can potentially become arbitrarily large or small, depending on the values we choose for $y$ and on the random demands. For example, if the demand distribution has unbounded support, then for any order-up-to level $y$ it is possible (although extremely unlikely) that the next starting inventory level $y - D$ will fall far below any value we would normally expect, so in principle our spreadsheet should extend at least that far. Of course, this is neither practical nor essential (since the probability is so low), so we typically truncate the state space to consider only “reasonable” $x$ values. (The definition of “reasonable” depends on the specific problem at hand.)

Second, even if we consider only a reasonably narrow range of $x$ values, if $D$ has a continuous distribution, there are still an infinite number of possible inventory levels to consider. This problem is typically addressed by discretizing the demand distribution so we consider only a finite number of possible demand values. The granularity of the discretization (e.g., do we round demands to the nearest integer? to the nearest 0.001?) again depends on the specific problem. In general, larger ranges of $x$ values and smaller granularity result in more accurate solutions but longer run times.

Even after we resolve these two problems, this approach is still somewhat unsatisfying, at least from a managerial point of view. The spreadsheets described above will work, but they are fairly cumbersome. It would be nice if we could boil the information contained in the spreadsheets down into a simple policy. To that end, let's look more closely at the results of the DP.

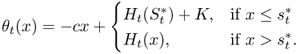

Figure 4.4 plots $y_t(x)$ for three different periods $t$ and for a range of $x$ values for a particular instance of the problem.⁵ Essentially, each curve contains the data from a column in the second spreadsheet. Notice that all three curves are flat for a while and then climb linearly along the line $y = x$. That is, for each $t$, there exists some value $S_t$ such that, for $x \le S_t$, we have $y_t(x) = S_t$, and for $x > S_t$, we have $y_t(x) = x$. In other words, these curves each depict a base-stock policy! In fact, we will prove in Section 4.5.1.2 that a base-stock policy is optimal in every period of the finite-horizon model presented here: the pattern suggested by Figure 4.4 always holds.

Figure 4.4

DP results.

Recognizing the optimality of a base-stock policy has simplified the results: We don't need the entire $y_t(x)$ spreadsheet to tell us how to act in each period; we just need a list of $S_t$ values, the optimal base-stock level for each period $t$. In general, these can be different for different periods, as suggested by Figure 4.4, although in some special cases, the same base-stock level is optimal in every period (see Section 4.5.1.2). However, base-stock optimality has not simplified the computation required to determine the optimal policy: we still need to solve the DP to find the optimal base-stock levels in each period. In particular, $S_t$ is equal to $\lim_{x \to -\infty} y_t(x)$, or, assuming we have truncated the range of possible $x$ values, $S_t$ equals $y_t(x)$ for the smallest $x$ value considered.

4.3.4 Infinite Horizon

Our third and final variety of periodic-review models with no fixed costs is the case in which $T = \infty$. This problem is sometimes referred to as the infinite-horizon newsvendor model.

If the number of periods is infinite, then the total expected cost across the horizon may be infinite, too. (It certainly will be if $\gamma = 1$.) An alternate objective is to minimize the expected cost per period. Minimizing the total discounted expected cost is known as the discounted-cost criterion, while minimizing the expected cost per period is known as the average-cost criterion. We'll consider the average-cost criterion first, then the discounted-cost criterion.

Under the average-cost criterion, we assume $\gamma = 1$. The expected cost in a given period if we use base-stock level $S$ is given by

$$g(S) = h\,E\left[(S-D)^+\right] + p\,E\left[(D-S)^+\right]. \tag{4.38}$$

This is exactly the same expected cost function as in the single-period model of Section 4.3.2. Therefore, the same base-stock level (given in Theorem 4.1) is optimal, in every period!

In formulating 4.38, we glossed over two potentially problematic issues. First, why didn't we account for the purchase cost $c$, and second, why didn't we account for the cost in future periods? Well, in the long run, the expected number of units ordered per period is the same, namely $\mu$, no matter what $S$ we choose. And since $\gamma = 1$, the timing of our orders does not affect the purchase cost. Therefore, the expected purchase cost per period is independent of $S$.

What about future periods? In 4.38, we didn't account for the impact of our choice of $S$ on future periods. Is this approach sound, or do we need to account for the future cost, as in the finite-horizon DP model of Section 4.3.3? For example, if we start period $t$ with $IL_{t-1} > S$, then the expected cost in period $t$ is $g(IL_{t-1})$ rather than $g(S)$. In this case, 4.38 would give an incomplete picture of the expected cost in period $t$ since it assumes we can always order up to $S$. This suggests that we cannot optimize the periods independently. However, as long as $S_t \le S_{t+1}$, we can be sure that the system starts period $t+1$ with $IL_t \le S_{t+1}$. (Why?) Therefore, no matter what value we choose for $S_t$, we know that we can always order up to $S_{t+1}$ in period $t+1$. And, as we will see in Section 4.5.1.3, the same base-stock level is optimal in every period. Therefore, $S_t = S_{t+1}$, so $IL_t \le S_{t+1}$ and we can optimize 4.38 to find the optimal base-stock level.

Now suppose $\gamma < 1$, i.e., consider the discounted-cost criterion. Since the timing of orders now affects the cost, 4.38 is no longer valid. However, the solution turns out to be nearly as simple: The optimal base-stock level is the same in every period, and it is given by

$$S^* = F^{-1}\left(\frac{p - (1-\gamma)c}{h+p}\right).$$

(We omit the proof.)

We summarize these conclusions in the following theorem:

Note that this theorem holds for both $\gamma = 1$ and $\gamma < 1$, i.e., for both the average- and discounted-cost criteria.

If demand is normally distributed, then the results from Section 4.3.2.5 still hold, after modifying to account for $\gamma$. In particular,

$$S^* = \mu + z_\alpha\sigma,$$

where $\alpha = \dfrac{p - (1-\gamma)c}{h+p}$. The comments on forecasting in Section 4.3.2.7 also apply here.
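For normally distributed demand, the infinite-horizon base-stock level can be computed in a few lines. The sketch below assumes that the critical ratio takes the form $(p - (1-\gamma)c)/(h+p)$, which reduces to the single-period ratio $p/(h+p)$ when $\gamma = 1$; the function name and default arguments are illustrative.

```python
from statistics import NormalDist


def infinite_horizon_base_stock(mu, sigma, h, p, c=0.0, gamma=1.0):
    """S* = mu + z_alpha * sigma, with alpha = (p - (1 - gamma) * c) / (h + p)."""
    alpha = (p - (1.0 - gamma) * c) / (h + p)
    if not 0.0 < alpha < 1.0:
        raise ValueError("critical ratio must lie strictly between 0 and 1")
    return mu + NormalDist().inv_cdf(alpha) * sigma
```

With `gamma=1.0` the purchase cost drops out, matching the average-cost discussion above. With `gamma < 1` and a positive purchase cost, the critical ratio and hence the base-stock level are lower, because discounting makes it attractive to postpone purchases.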

4.3.4.1 Lead Times and Reorder Intervals

So far, we have assumed that the lead time is 0 and that the reorder interval (the number of periods that elapse between orders) is 1. (The reorder interval is sometimes called the review period.)

In this section, we relax those assumptions to allow the lead time to be nonzero and the reorder interval to be greater than 1. In general, we define the lead-time demand as the cumulative demand in $L + R$ consecutive periods. In the newsvendor problem in Section 4.3.2, $L = 0$ and $R = 1$, so the lead-time demand is just the demand in a single period.

The sequence of events is the same as that on page 5. In the discussion that follows, we will use the following notation:

| Symbol | Definition |
|---|---|
| $IL_t$ | = ending inventory level (after step 4 of sequence of events) in period $t$ |
| $IP_t$ | = inventory position after order is placed but before demand is observed (after step 2 of sequence of events) in period $t$ |
| $D_t$ | = demand in period $t$ |
| $D_{[t_1, t_2]}$ | = cumulative demand in periods $t_1, \ldots, t_2$ |
| $D$ | = cumulative demand in $L + R$ consecutive periods (the lead-time demand) |
| $f$, $F$ | = pdf/pmf and cdf of $D$ |
| $n$, $\bar{n}$ | = loss function and complementary loss function of $D$ |

For the moment, assume that the reorder interval $R$ equals 1, but allow an $L$-period lead time, $L \ge 0$. That is, an order placed in period $t$ (in step 2 of the sequence of events) is received in step 2 of period $t + L$. From step 4 of the sequence of events, the holding and stockout costs are incurred based on the ending inventory level, $IL_t$, a random variable; therefore, to calculate the expected holding and stockout costs, we need to know the distribution of $IL_t$, which in turn depends on the inventory policy parameters (e.g., $S$). The distribution of $IL_t$ is not obvious, because $IL_t$ depends on both the random demand and the inventory actions governed by $S$. Worse still, there is a delayed reaction: Inventory decisions made in period $t$ do not have an effect on the inventory level until period $t + L$. In the intervening periods, other orders may have arrived (increasing the inventory level) and demands will have occurred (decreasing the inventory level).

The solution to this problem is to relate the inventory level at time $t + L$ to the inventory position at time $t$ (which we know, in the case of a base-stock policy) and to the demand during periods $t, \ldots, t+L$ (whose probability distribution we know). In particular, the ending inventory level in period $t + L$ is given by

$$IL_{t+L} = IP_t - D_{[t,\,t+L]}. \tag{4.41}$$

Why is 4.41 true? Well, all of the items included in $IP_t$, including items on hand and on order, will have arrived by period $t + L$. Moreover, no items ordered after period $t$ will have arrived by period $t + L$. Therefore, all items that are on hand or on order in period $t$ will be included in the ending inventory level in period $t + L$, except for the $D_{[t,\,t+L]}$ items that have since been demanded. (See Figure 4.5.) Another way to think of this is that if the inventory position in period $t$ is $IP_t$ and there is no demand during $t, \ldots, t+L$, then the inventory level in period $t + L$ will be $IP_t$; and if some demand does occur, then $IL_{t+L}$ will be $IP_t$ minus that demand.

Figure 4.5 Inventory dynamics. All items on order or on hand in period t have arrived by period $t+L$. Items ordered before $t-L$ arrive before t, and items ordered after t arrive after $t+L$. In the figure, ![]().

Equation 4.41 is a very important equation. It applies to the periodic‐review models in this chapter and—in modified form—to the continuous‐review models in Chapter 5. The idea dates back to Scarf (1960); Zipkin (2000) refers to it as a conservation of flow equation.

Note that 4.41 only holds for the lead time L; that is, in general,

$$IL_{t+k} \ne IP_t - \sum_{i=t}^{t+k} D_i \quad \text{for } k \ne L. \tag{4.42}$$

This is because some of the units included in $IP_t$ may not be delivered by period $t+k$ (if $k < L$), or some units ordered after period t may have been delivered by period $t+k$ (if $k > L$).
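The conservation‐of‐flow relation, and the fact that it holds only at an offset of exactly L periods, can be checked with a small simulation. The following is a minimal sketch rather than a definitive implementation: the function names, the starting inventory level of S, and the assumed sequence of events (order, then receive, then demand) are illustrative assumptions, and demands are integers so that the comparison is exact.

```python
def simulate_base_stock(S, L, demands):
    """Simulate a base-stock policy with lead time L, ordering every period.

    Assumed sequence of events in each period: (1) order up to S,
    (2) receive the order placed L periods earlier, (3) observe demand
    (unmet demand is backordered).

    Returns (IP, IL), where IP[t] is the inventory position just after
    ordering in period t and IL[t] is the ending inventory level in period t.
    """
    inventory_level = S      # on hand minus backorders; starting at S is an assumption
    on_order = 0
    arrivals = {}            # arrival period -> order quantity
    IP, IL = [], []
    for t, demand in enumerate(demands):
        # (1) Raise the inventory position to S.
        order = S - (inventory_level + on_order)
        arrivals[t + L] = order
        on_order += order
        IP.append(inventory_level + on_order)

        # (2) Receive whatever is scheduled to arrive in this period.
        received = arrivals.pop(t, 0)
        inventory_level += received
        on_order -= received

        # (3) Demand occurs; a negative level represents backorders.
        inventory_level -= demand
        IL.append(inventory_level)
    return IP, IL


def flow_gaps(S, L, demands, k):
    """IL[t+k] - (IP[t] - demand in periods t..t+k) for every valid t."""
    IP, IL = simulate_base_stock(S, L, demands)
    return [IL[t + k] - (IP[t] - sum(demands[t:t + k + 1]))
            for t in range(len(demands) - k)]


demands = [3, 1, 4, 1, 5, 9, 2, 6, 5, 3]
print(flow_gaps(20, 2, demands, k=2))  # every gap is zero when k = L
print(flow_gaps(20, 2, demands, k=1))  # gaps appear when k != L
```

With $k < L$ the gaps are negative (some pipeline inventory has not yet arrived), and with $k > L$ they are positive (later orders have already been delivered), matching the two cases described above.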

In steady state, we can drop the time indices from 4.41 and write

$$IL = IP - D, \tag{4.43}$$

where $D$ is a random variable representing the lead‐time demand (the total demand over $L+1$ consecutive periods). (We're omitting some of the probabilistic arguments necessary to justify the move from 4.41 to 4.43. See, for example, Galliher et al. (1959) and Zipkin (1986b).) If $L = 0$, then 4.41 simply says that the ending inventory in period t equals the inventory position after the order minus the demand in the period.

Let us apply this insight to the infinite‐horizon base‐stock problem under the average‐cost criterion in Section 4.3.4. (Continue to assume that $R = 1$.) Since this is a base‐stock policy, $IP_t = S$ in every period t. Therefore,

$$IL_{t+L} = S - \sum_{i=t}^{t+L} D_i, \tag{4.44}$$

or in steady state,

$$IL = S - D. \tag{4.45}$$

In other words, the pdf of $IL$ is

$$f_{IL}(x) = f(S - x),$$

where $f$ is the pdf of the lead‐time demand $D$.

The expected cost is still given by 4.38, and Theorem 4.4 still gives the optimal base‐stock level, with the parameters and distribution of the single‐period demand replaced by those of the lead‐time demand. In essence, we have shifted the accounting so that actions taken in period t do not incur costs until period $t+L$, though all of that logic is buried in the expectations in 4.38.

For normally distributed demands, Theorem 4.4 says that

$$S^* = \mu(L+1) + z_\alpha \sigma \sqrt{L+1}, \tag{4.46}$$

where $\mu$ and $\sigma$ refer to the demand per period (and so $\mu(L+1)$ is the mean and $\sigma\sqrt{L+1}$ is the standard deviation of lead‐time demand). In 4.46, $\mu(L+1)$ is the cycle stock and $z_\alpha \sigma \sqrt{L+1}$ is the safety stock. The safety stock is held to protect against fluctuations in lead‐time demand, which is why the safety stock component uses the standard deviation of lead‐time demand. The reason the cycle stock level depends on the lead time, too, is that the base‐stock level refers to the inventory position: if the lead time is 4 weeks, we always want 4 weeks' worth of cycle stock in the pipeline plus 1 week's worth on hand.
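The split of the base‐stock level into cycle stock and safety stock is easy to compute for normally distributed demand. The sketch below is illustrative only: the function name is hypothetical, h and p are assumed to be the per‐unit holding and stockout costs, and the safety factor is assumed to be the standard normal quantile at the critical ratio p/(h + p), as in the usual newsvendor result. Per‐period demands are assumed independent.

```python
from math import sqrt
from statistics import NormalDist


def base_stock_level_normal(mu, sigma, L, h, p):
    """Return (S, cycle_stock, safety_stock) for lead time L and review period 1.

    mu, sigma: mean and standard deviation of demand per period.
    h, p: assumed holding and stockout cost per unit per period.
    """
    critical_ratio = p / (h + p)               # assumption: newsvendor critical ratio
    z = NormalDist().inv_cdf(critical_ratio)   # standard normal quantile
    cycle_stock = mu * (L + 1)                 # mean of demand over L + 1 periods
    safety_stock = z * sigma * sqrt(L + 1)     # z times its standard deviation
    return cycle_stock + safety_stock, cycle_stock, safety_stock


# Example: weekly demand N(100, 15^2), a 4-week lead time, h = 1, p = 9.
S, cycle, safety = base_stock_level_normal(mu=100, sigma=15, L=4, h=1, p=9)
print(round(S, 2), cycle, round(safety, 2))
```

In this example the cycle stock is 5 weeks of mean demand (4 in the pipeline plus 1 on hand), and the safety stock grows with the square root of $L+1$ rather than linearly.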

Now let's generalize this logic to allow a reorder interval of $R \ge 1$, so that orders are placed every R periods. Continue to assume that the lead time is $L \ge 0$. The conservation‐of‐flow argument now goes as follows: Assume that period t is an order period and that $0 \le k \le R-1$. All items included in $IP_t$ will have arrived by period $t+L$, and therefore by period $t+L+k$. No items ordered after period t will have arrived by period $t+L+R-1$ (because any such items would have been ordered in period $t+R$ at the earliest), or therefore by period $t+L+k$. Therefore, the ending inventory level in period $t+L+k$ equals $IP_t$ minus the demand in periods $t, \ldots, t+L+k$:

$$IL_{t+L+k} = IP_t - \sum_{i=t}^{t+L+k} D_i. \tag{4.47}$$

For a base‐stock policy, $IP_t = S$, so we have

$$IL_{t+L+k} = S - \sum_{i=t}^{t+L+k} D_i \tag{4.48}$$

if t is an order period. Therefore, the expected cost is

$$\frac{1}{R} \sum_{k=0}^{R-1} g_k(S), \tag{4.49}$$

where $g_k(S)$ is the newsvendor cost function 4.3 with $D$ replaced by $\sum_{i=t}^{t+L+k} D_i$. In general, this cost function must be optimized numerically to find the optimal base‐stock level, $S^*$.

Note that if $R = 1$, then 4.47 and 4.48 reduce to 4.41 and 4.44, respectively, and 4.49 reduces to 4.3.
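Because the cost under a reorder interval R generally has no closed‐form minimizer, a short numerical routine is useful. The sketch below rests on stated assumptions rather than on the text's exact formulation: it takes the expected cost per period to be the average, over the R periods of an order cycle, of newsvendor costs in which the demand covers $L+1, L+2, \ldots, L+R$ periods; per‐period demands are independent and normal; h and p are holding and stockout costs; and all function names are hypothetical. Since this cost is convex in S, bisection on its derivative locates the minimizer.

```python
from math import sqrt
from statistics import NormalDist

STD_NORMAL = NormalDist()


def newsvendor_cost_normal(S, mean, sd, h, p):
    """h*E[(S - D)^+] + p*E[(D - S)^+] for D ~ Normal(mean, sd^2)."""
    z = (S - mean) / sd
    expected_short = sd * (STD_NORMAL.pdf(z) - z * (1 - STD_NORMAL.cdf(z)))
    expected_over = S - mean + expected_short   # since (S-D)^+ - (D-S)^+ = S - D
    return h * expected_over + p * expected_short


def expected_cost(S, mu, sigma, L, R, h, p):
    """Average newsvendor cost over the R periods of an order cycle (assumed form)."""
    total = 0.0
    for k in range(R):
        n = L + k + 1                           # periods of demand covered
        total += newsvendor_cost_normal(S, mu * n, sigma * sqrt(n), h, p)
    return total / R


def optimal_base_stock(mu, sigma, L, R, h, p, tol=1e-8):
    """Minimize expected_cost over S by bisection on its (increasing) derivative."""
    def slope(S):
        return sum(
            (h + p) * STD_NORMAL.cdf((S - mu * (L + k + 1)) / (sigma * sqrt(L + k + 1))) - p
            for k in range(R)
        ) / R

    lo = mu * (L + 1) - 10 * sigma * sqrt(L + R)   # slope is negative here
    hi = mu * (L + R) + 10 * sigma * sqrt(L + R)   # slope is positive here
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if slope(mid) < 0:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


S_every_period = optimal_base_stock(mu=100, sigma=15, L=4, R=1, h=1, p=9)
S_every_third = optimal_base_stock(mu=100, sigma=15, L=4, R=3, h=1, p=9)
print(round(S_every_period, 2), round(S_every_third, 2))
```

With R = 1 the routine recovers the closed‐form level for normal demand, and a longer reorder interval pushes the base‐stock level up because each order must cover more periods.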

4.3.4.2 Service Levels

The service level measures how successful an inventory policy is at satisfying the demand. There are many definitions of service level. The two most common are as follows:

- Type‐1 service level: the percentage of order cycles during which no stockout occurs, sometimes called the cycle service level, denoted A.

- Type‐2 service level: the percentage of demand that is met from stock, sometimes called the fill rate, denoted B.

(An order cycle is the interval between two consecutive orders, or order arrivals. For base‐stock policies, the duration of each order cycle is equal to the reorder interval, R. For $(s, S)$ policies, and for the continuous‐review models in Chapter 5, the length of an order cycle is stochastic.)

For example, suppose there are 3 periods with the demands and stockouts given in Table 4.1. Then the type‐1 service level A is 67% (because we stocked out in 1 of 3 periods), while the type‐2 service level B is 90% (because we filled 450 out of 500 demands). In theory, the type‐1 service level can be greater than the type‐2 service level, but this rarely happens since the type‐1 service level is a more stringent measure—any cycle during which a stockout occurs is counted as a “failure,” rather than just counting the individual stockouts as failures. (The type‐1 service level would be greater than the type‐2 service level if, for example, stockouts occur very rarely, but when they do, the number of stockouts is very large.)

Table 4.1 Sample demands and stockouts.

| Period | Demand | Stockouts |
|---|---|---|
| 1 | 150 | 0 |
| 2 | 100 | 0 |
| 3 | 250 | 50 |
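The two service levels quoted for Table 4.1 can be reproduced directly from the data. This is a small illustrative sketch (the function names are hypothetical) that treats each period as one order cycle, as the example in the text does.

```python
demands = [150, 100, 250]    # Table 4.1, periods 1-3
stockouts = [0, 0, 50]


def type1_service_level(stockouts):
    """Fraction of cycles (here, periods) in which no stockout occurs."""
    return sum(1 for s in stockouts if s == 0) / len(stockouts)


def type2_service_level(demands, stockouts):
    """Fraction of total demand that is met from stock (fill rate)."""
    return (sum(demands) - sum(stockouts)) / sum(demands)


print(f"type-1: {type1_service_level(stockouts):.0%}")
print(f"type-2: {type2_service_level(demands, stockouts):.0%}")
```

The type‐1 measure counts period 3 as a complete failure even though only 50 of its 250 units were short, which is why it comes out lower than the fill rate here.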

Focusing now on base‐stock policies, assume that the lead time is $L \ge 0$ periods. If the review period is $R = 1$ (see Section 4.3.4.1), the type‐1 service level is easy to calculate: By 4.45, no stockout will occur in a given period if and only if the lead‐time demand for the interval ending at that period is less than or equal to S, i.e., $D \le S$. If $R > 1$, the type‐1 service level is the probability that there are no stockouts in an order cycle, i.e., over the R periods between two order arrivals. No stockout occurs in a cycle if and only if the inventory level at the end of the cycle (just before the next order arrival) is nonnegative. By 4.48, this inventory level is nonnegative if and only if $\sum_{i=t}^{t+L+R-1} D_i \le S$, which occurs with probability $F_{L+R}(S)$, where $F_{L+R}$ denotes the cdf of the demand over $L+R$ consecutive periods. To summarize:

$$A = F_{L+R}(S) = P\left(\sum_{i=t}^{t+L+R-1} D_i \le S\right),$$

which reduces to $P(D \le S)$ when $R = 1$.
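For normally distributed demand, the type‐1 service level of a base‐stock policy is a single cdf evaluation. The sketch below assumes independent per‐period demands with mean mu and standard deviation sigma, so that the demand over $L+R$ periods is normal with mean $\mu(L+R)$ and standard deviation $\sigma\sqrt{L+R}$; the function name is hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def type1_service_level_normal(S, mu, sigma, L, R=1):
    """P(demand over L + R periods <= S) under independent normal demands."""
    n = L + R                                   # periods an order must cover
    return NormalDist(mu * n, sigma * sqrt(n)).cdf(S)


# Same item as before: weekly demand N(100, 15^2), lead time 4 weeks.
print(round(type1_service_level_normal(543, mu=100, sigma=15, L=4, R=1), 3))
print(round(type1_service_level_normal(543, mu=100, sigma=15, L=4, R=3), 3))
```

Holding S fixed while lengthening the reorder interval lowers the cycle service level, since the same stock must now last through more periods of demand.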

The type‐2 service level is a bit trickier. The type‐2 service level is

$$B = 1 - E\left[\frac{\text{number of stockouts in a cycle}}{\text{demand in a cycle}}\right].$$

We will start by making two simplifying assumptions to derive an approximate expression for the type‐2 service level, then relax one and then finally both assumptions to obtain another approximation and an exact expression.

- Simplifying Assumption 1 (SA1): Backorders never last for more than one order cycle. That is, each arriving order is large enough to clear all existing backorders.

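- Simplifying Assumption 2 (SA2): $E[X/Y] = E[X]/E[Y]$, where $X$ is the number of stockouts in a cycle and $Y$ is the demand in that cycle.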

SA1 is reasonable when S is sufficiently high, as it usually is in practice. SA2 is not true, of course, since $E[X/Y] \ne E[X]/E[Y]$ in general for random variables X and Y; we will explore the loss of accuracy caused by this assumption later in this section. We will use $B_1$ to denote the type‐2 service level under SA1 and SA2, $B_2$ to denote that under SA2 only, and B to denote the exact type‐2 service level that assumes neither.

Under SA1 and SA2, we have

$$B_1 = 1 - \frac{E[\text{number of stockouts per cycle}]}{E[\text{demand per cycle}]} = 1 - \frac{E[\text{number of stockouts per cycle}]}{R\mu},$$

where the second equality follows from the fact that each cycle lasts exactly R periods. Assume that an order is placed in period t and consider the cycle that begins in period $t+L$ and ends in period $t+L+R-1$. After the order arrives in period $t+L$, the inventory level is positive (by SA1), so the number of stockouts in the cycle equals $IL^-_{t+L+R-1}$, using the notation in Section 4.3.4.1. Therefore,

$$E[\text{number of stockouts per cycle}] = E\left[IL^-_{t+L+R-1}\right] = E\left[\left(\sum_{i=t}^{t+L+R-1} D_i - S\right)^+\right],$$

where the second equality follows from 4.48. Therefore,

$$B_1 = 1 - \frac{1}{R\mu} E\left[\left(\sum_{i=t}^{t+L+R-1} D_i - S\right)^+\right].$$

Johnson et al. (1995) and subsequent authors refer to this as the “traditional approach.”
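Under SA1 and SA2, the fill rate depends only on the expected shortfall at the end of a cycle, which has a closed form for normal demand. The following sketch is an illustration under stated assumptions, not the cited authors' code: it takes the approximation to be one minus the expected end‐of‐cycle shortfall divided by the mean demand per cycle, $R\mu$, with independent normal per‐period demands; the function name is hypothetical.

```python
from math import sqrt
from statistics import NormalDist

STD_NORMAL = NormalDist()


def fill_rate_traditional(S, mu, sigma, L, R=1):
    """Approximate type-2 service level under SA1 and SA2 (normal demand)."""
    n = L + R                                   # periods of demand before the cycle ends
    mean, sd = mu * n, sigma * sqrt(n)
    z = (S - mean) / sd
    # E[(D - S)^+] for D ~ Normal(mean, sd^2): the standard normal loss function.
    expected_short = sd * (STD_NORMAL.pdf(z) - z * (1 - STD_NORMAL.cdf(z)))
    return 1 - expected_short / (R * mu)


for S in (450, 500, 543, 600):
    print(S, round(fill_rate_traditional(S, mu=100, sigma=15, L=4, R=1), 4))
```

For small S the expected shortfall can exceed $R\mu$, in which case this approximation drops below zero. That is one symptom of SA1 failing, because backorders carried over from earlier cycles are counted again.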

Now relax SA1. We can no longer calculate the expected number of stockouts in a cycle using the inventory level at the end of the cycle because not all of the “negative” items in $IL_{t+L+R-1}$ are stockouts from the current cycle; some may be left over from the previous cycle. Therefore, we must account for these items more carefully.

Suppose period t is an order period. Let's focus on the cycle that begins in period $t+L$ and ends in period $t+L+R-1$. After the order arrives in $t+L$, no additional orders arrive in this cycle. Therefore, the number of demands met from stock during this cycle equals the difference between the on‐hand inventory immediately after the order arrival in period