Chapter 17

Creating a Data Flow

This lesson covers the basics of the Data Flow Task. Section 3 comprises lessons covering the sources, destinations, and transformations in detail. However, this lesson gives you the tools to get started creating a Data Flow and understanding its purpose.

The Data Flow Task is used to transfer data from a source to a destination and can transform the data as needed. The Data Flow Task is capable of handling large amounts of data. The source and destination can be any of several different types, such as a flat file or database, and can contain millions of rows of data. The destination can also be of several types.

You can use the Data Flow Task to extract data from a database and write to a flat file location or to move the data from a flat file to a database. This capability enables you to receive files from outside sources and write this data to your database. You can also use the Data Flow Task to move data from one database to another.

The transforms that exist in the Data Flow enable you to make changes to the data as you move it from a source to a destination. For example, if you are receiving flat files from a vendor and the data is not formatted correctly (say, the Social Security numbers need to have dashes) you can fix that before writing it to a database. Fixing things like that prior to writing to your database prevents you from having to run updates on your database later.

Additionally, these transforms are faster in SSIS. SSIS performs the transforms in memory, which is why it is much faster than reading and writing the data to a drive. This speed is especially evident in the case of running updates to a table. SQL update commands read data from a database and write data back to the same database. This reading and writing to the same location makes the process very slow compared to SSIS.

The Data Flow enables you to save data to multiple locations simultaneously, which also improves performance when you are saving data. You can receive a flat file from a vendor, open it with an SSIS package, parse through the data, make decisions on where data needs to be written, and write the data to multiple locations, all in one Data Flow.

You have two ways to create a Data Flow in a package. You can drag out the Data Flow Task from the Toolbox, or you can click the Data Flow tab at the top and click the blue link in the middle of the screen. This link states, as shown in Figure 17-1, “No Data Flow tasks have been added to this package. Click here to add a new Data Flow task.”

If there is already a Data Flow in the package, clicking the Data Flow tab shows that Data Flow. If multiple Data Flows are in a package, you see a drop-down menu at the top of the Data Flow screen showing the list of all the Data Flows in the package. It is a best practice to give the Data Flows a name that is descriptive. With descriptive names, you can then easily select the correct Data Flow you are trying to alter. Descriptive naming is a major help when your package contains a large number of Data Flows.

After you enter the Data Flow tab by either method previously mentioned, the Toolbox will contain a new set of tools. The top section contains the Favorites, the middle contains the common transforms, and the bottom contains the source and destinations. These tasks can be used only in the Data Flow Task and cannot be used in the Control Flow screen. You can move any item in the Toolbox to the Favorites section by right-clicking on it and clicking Move to Favorites.

Several sources have the same type as a destination. For example, there is an Excel Source and an Excel Destination. These tasks are not interchangeable. A source can only read data and a destination can only write data. Keep in mind that any connections you add to the Connection Managers in a package can be reused in other Data Flows or even in the Control Flow of the package (connection managers are explained in Lesson 6). So, a source in a Data Flow can connect to an Excel file, and an Excel Destination can connect to the same Excel file. The connection exists just once in the connection manager but can be used multiple times in a package.

Once you drag a source into a Data Flow, two lines appear from the bottom of the task. The blue line is the good data. The red line is the bad data. Sources and destinations, including how to use these blue and red lines, are explained in detail in the lessons following this one. Double-clicking the source opens the source editor for that source type. In the editor, you can select the location of the source.

After your source is established, you can connect it to a transform from the transformation section of the Toolbox. This transform can manipulate the data to be in the form you want for your destination. The transform can also make complex decisions to send data to different destinations.

Once the sources and transforms are created, you can drag out a destination and connect the last step of the transforms to the destination. If the Data Flow does not contain any transforms, the source can be connected directly to the destination, which simply moves data from one location to another without changing the data. This arrangement is the simplest form of a Data Flow.

Try It

In this Try It, you create a package with a Data Flow Task. The Data Flow is going to move data from a SQL database table to a flat file. After this lesson, you will have an understanding of how to create a Data Flow Task in the Control Flow of a package.

Lesson Requirements

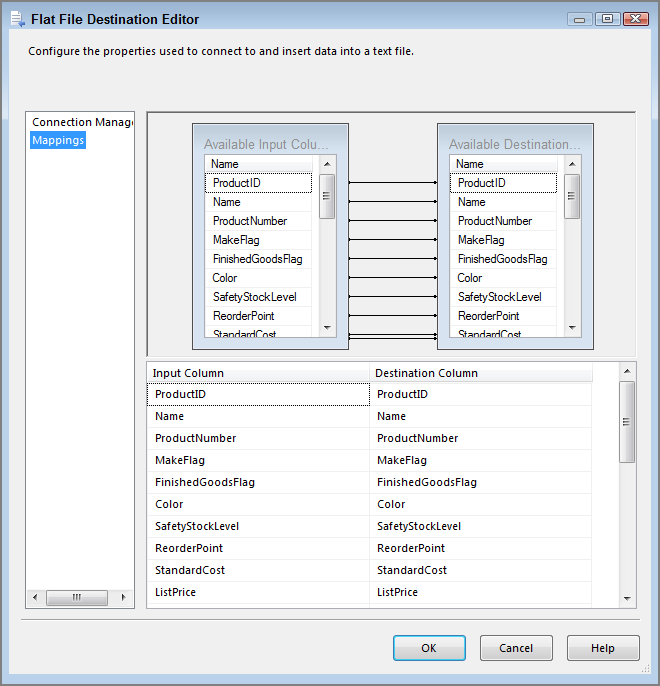

You need to create a Data Flow in a package and create a source and a destination. The source is going to be an OLE DB connection to the AdventureWorks2012 database to the Products table. The destination is going to be a flat file you create.

You can download the completed Lesson17.dtsx from www.wrox.com.

Hints

- You need only one Data Flow Task for this example.

- The Data Flow needs a source and a destination.

- The package needs two connections in the connection manager.